GPU Concurrency: Weak Behaviours and Programming Assumptions

Jade Alglave (1, 2), Mark Batty (3), Alastair F. Donaldson (4), Ganesh Gopalakrishnan (5), Jeroen Ketema (4), Daniel Poetzl (6), Tyler Sorensen (1, 5), John Wickerson (4)

(1) University College London, (2) Microsoft Research, (3) University of Cambridge, (4) Imperial College London, (5) University of Utah, (6) University of Oxford

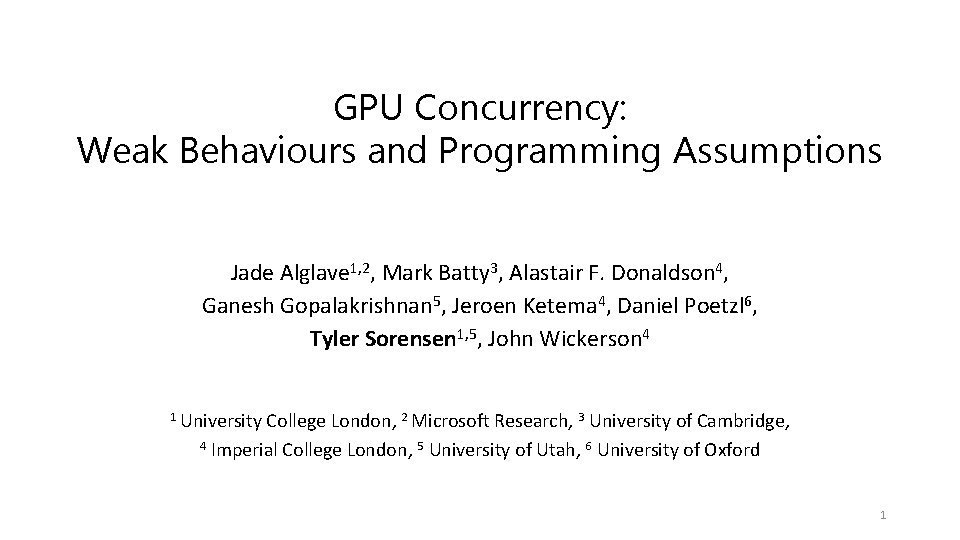

Intel Core i7 4500 CPU


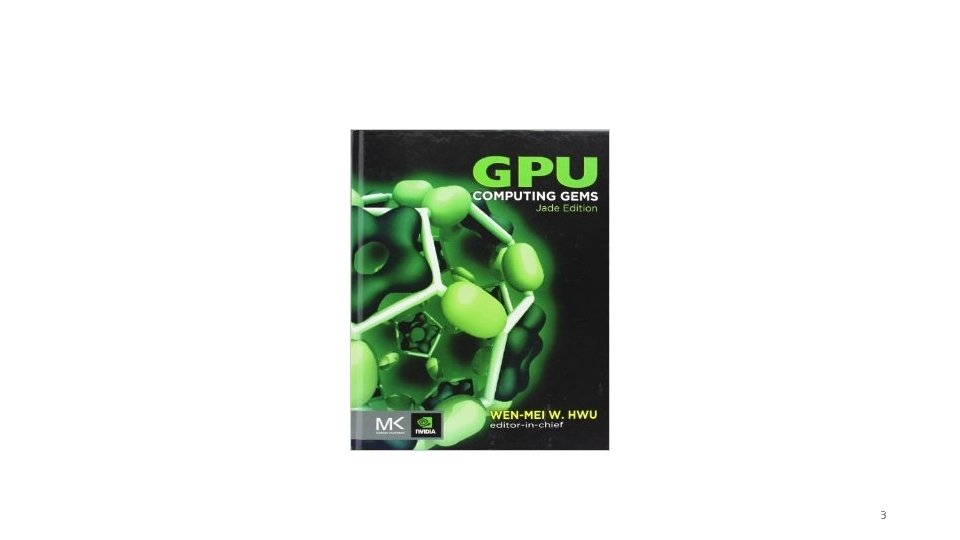

Nvidia Tesla C2075 GPU

Roadmap
• what happened to the pony (background)
• how we found the bug (methodology)
• how we are able to fix the pony (contribution)

What happened to the pony?
• the visualization bugs are due to weak memory behaviours on GPUs

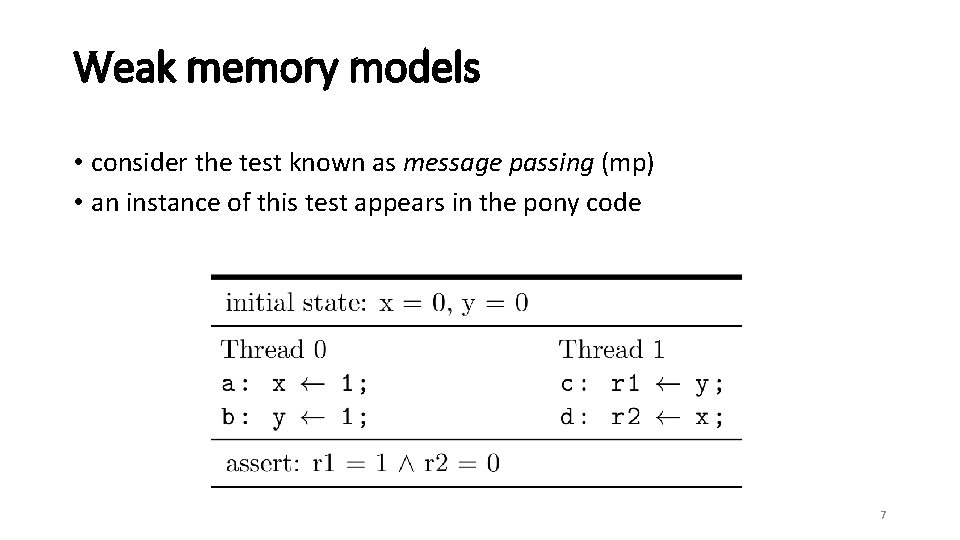

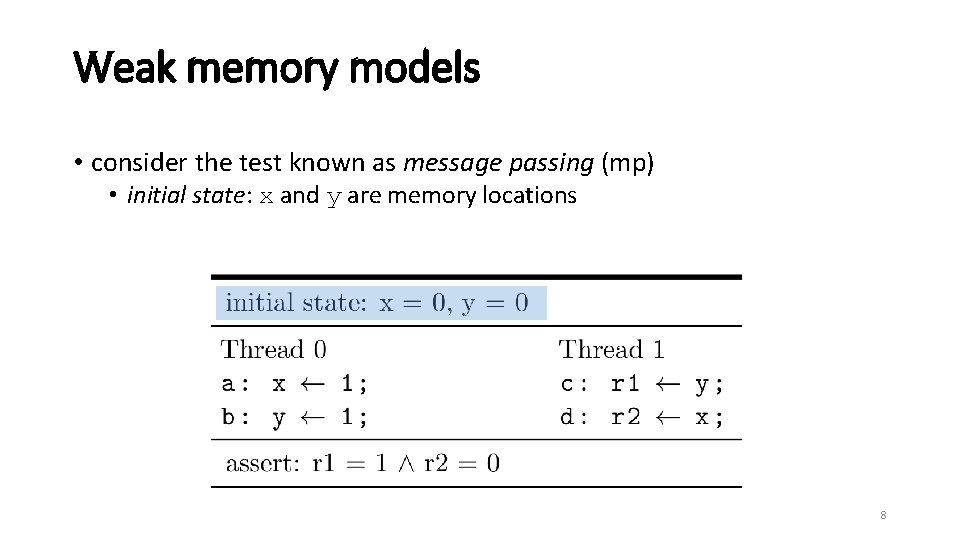

Weak memory models
• consider the test known as message passing (mp)
• an instance of this test appears in the pony code
• initial state: x and y are memory locations

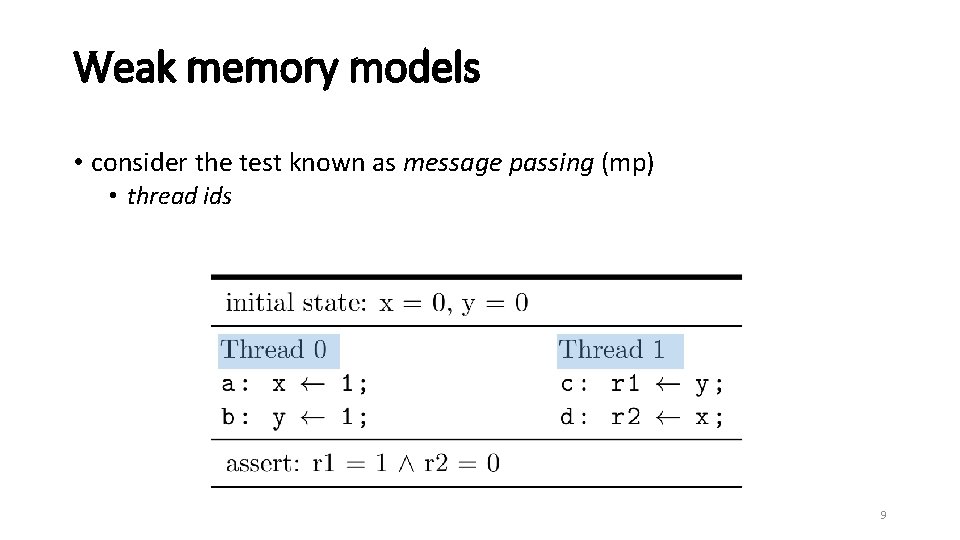

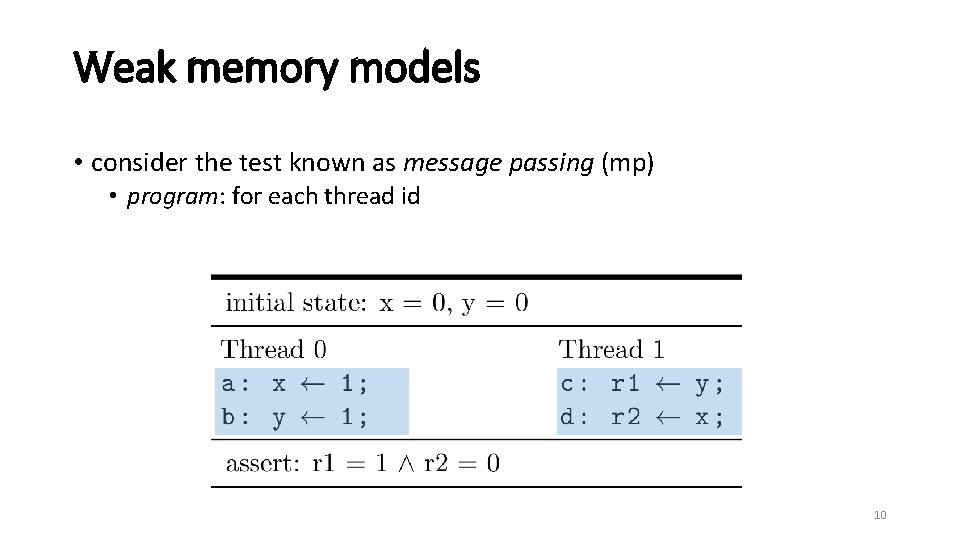

Weak memory models
• consider the test known as message passing (mp)
• thread ids
• program: for each thread id

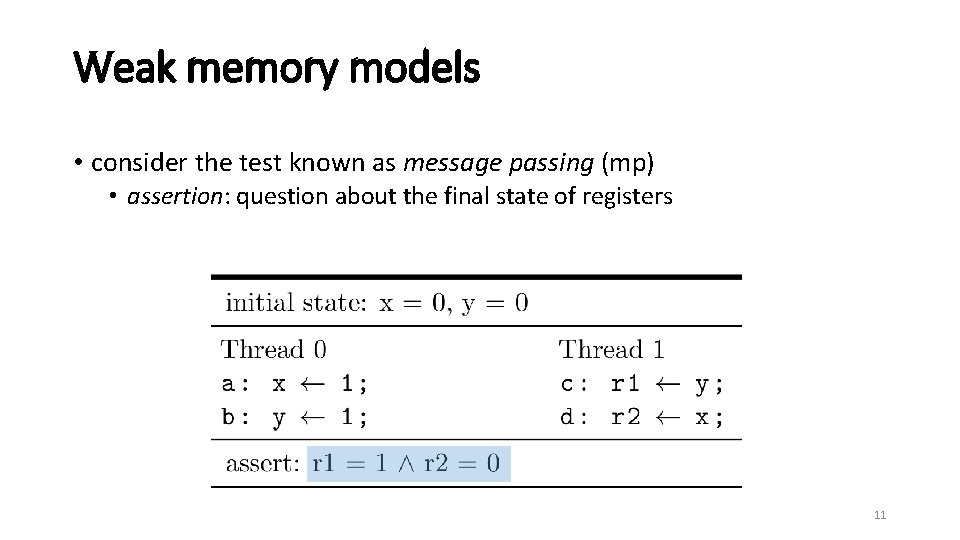

Weak memory models
• consider the test known as message passing (mp)
• assertion: question about the final state of registers

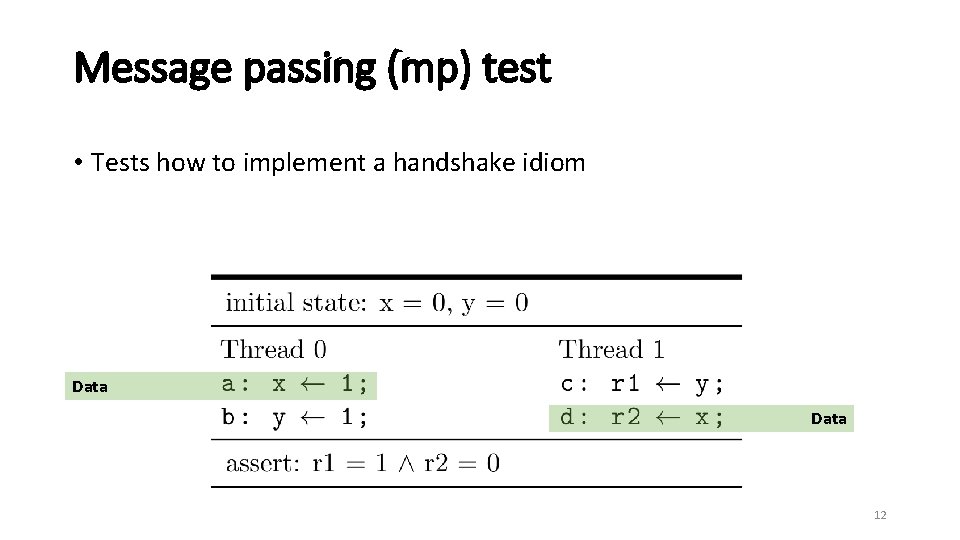

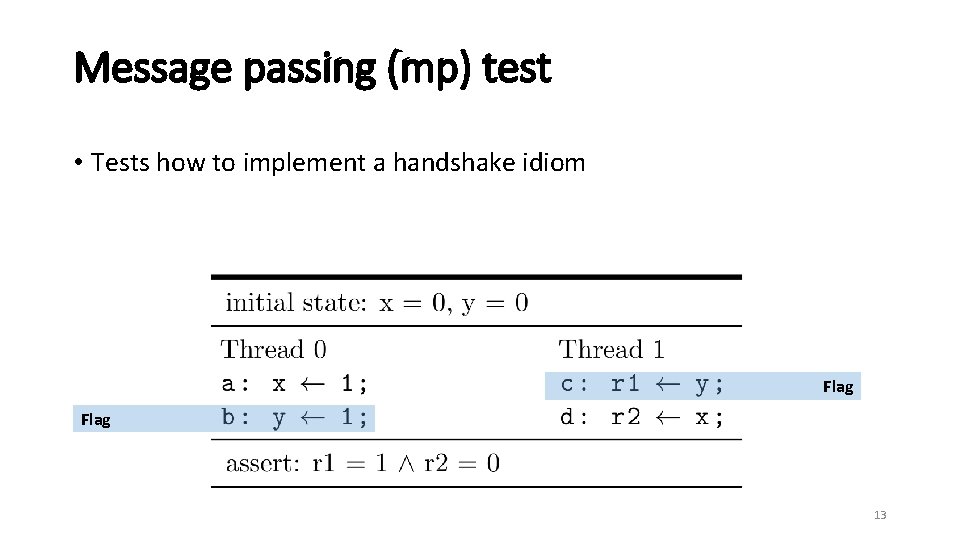

Message passing (mp) test
• tests how to implement a handshake idiom
[diagram labels: Data, Flag]

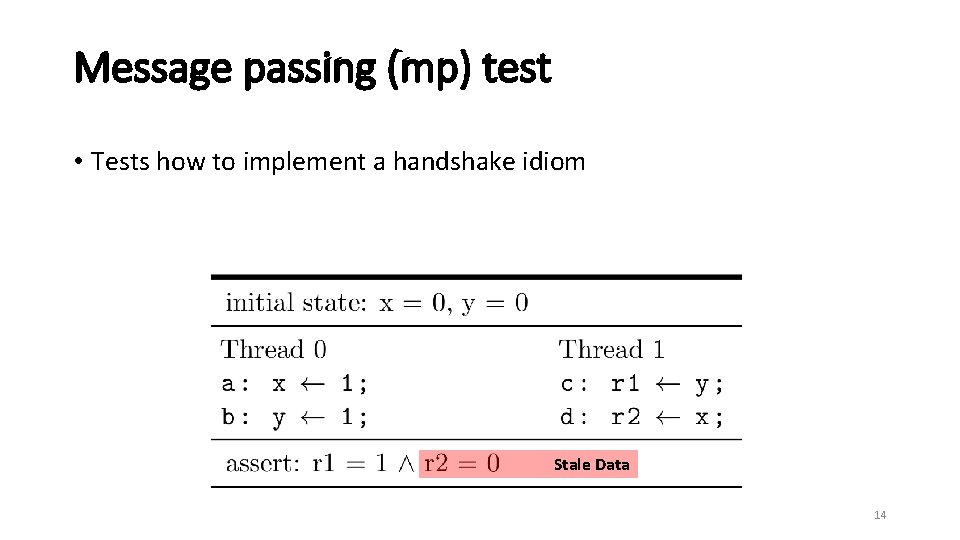

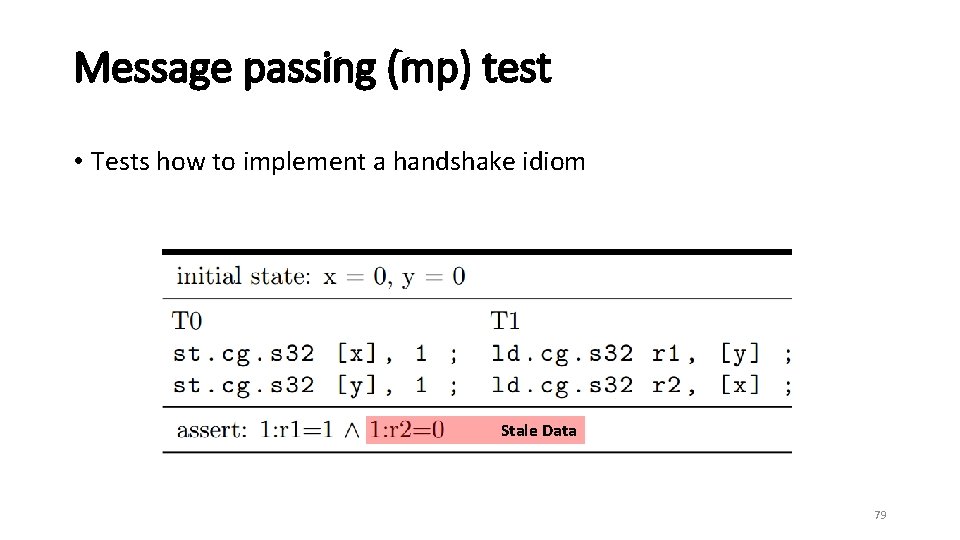

Message passing (mp) test
• tests how to implement a handshake idiom
[diagram label: Stale Data]

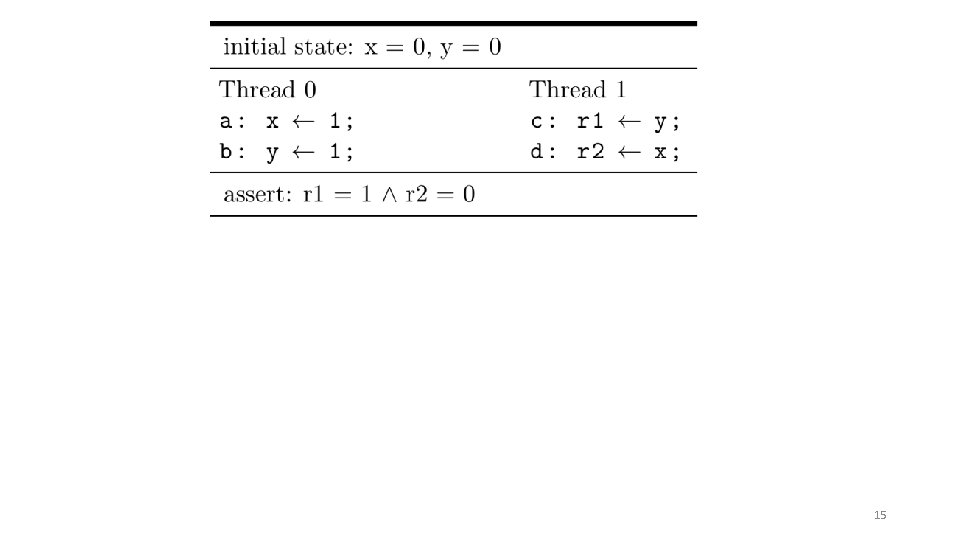


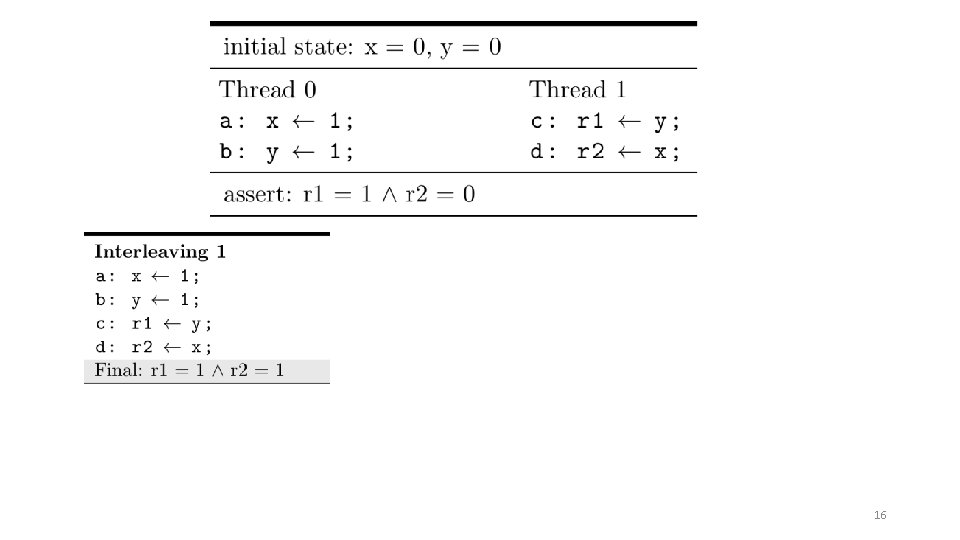


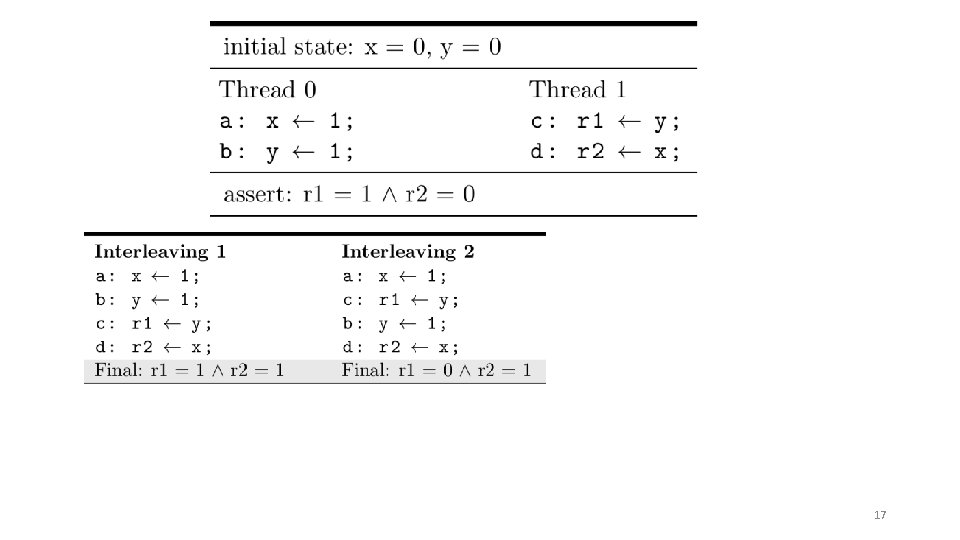

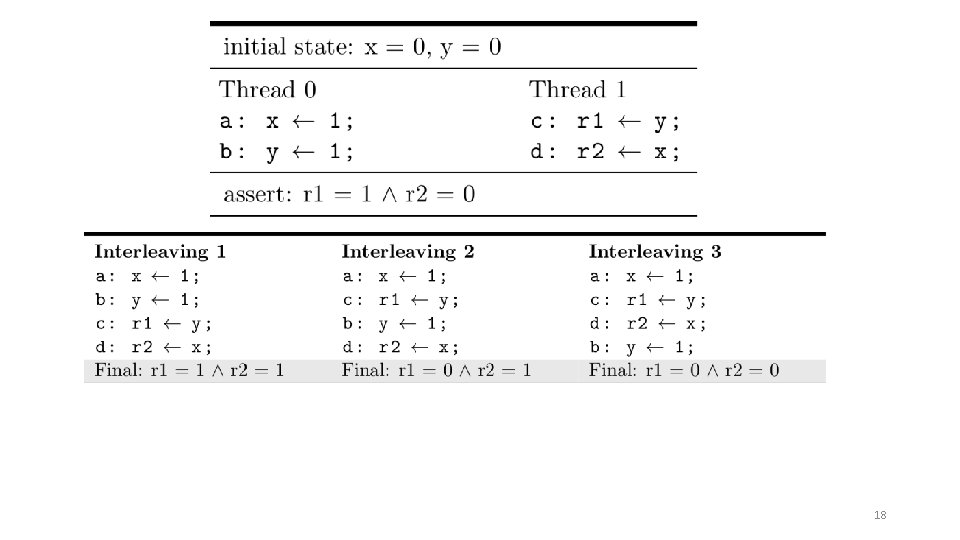

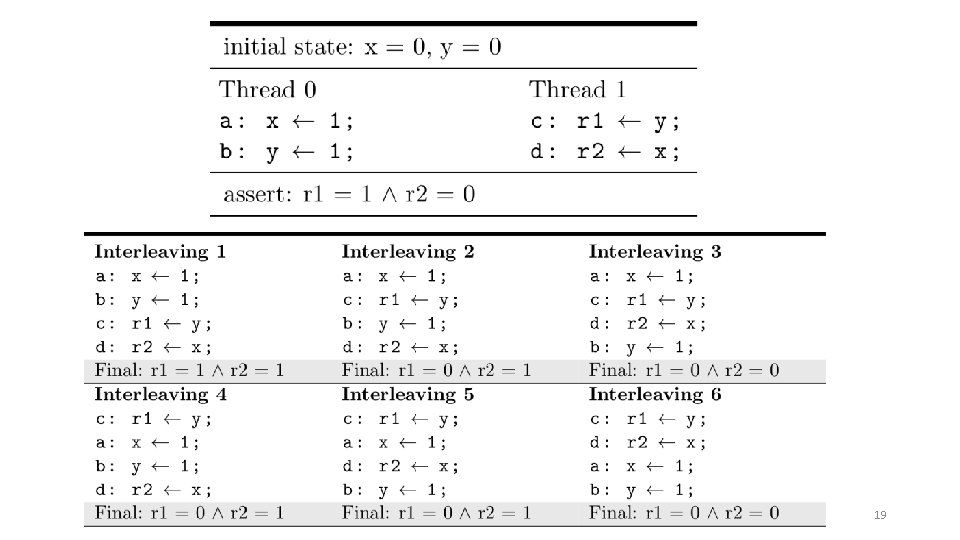


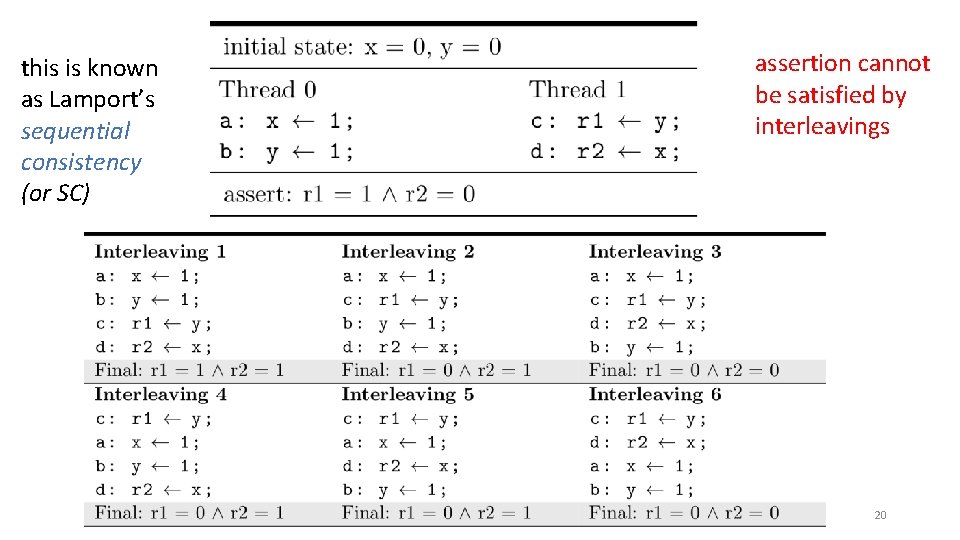

• the assertion cannot be satisfied by any interleaving of the two threads' instructions
• this execution model, in which every run is some interleaving, is known as Lamport’s sequential consistency (or SC)
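The claim about interleavings can be checked by brute force. The sketch below is an illustration added here, not part of the talk or its tools. It encodes the mp test with x as the data and y as the flag, as in the concrete test shown later in the deck, and enumerates every interleaving that respects program order. The tuple encoding and the helper names `interleavings` and `run` are assumptions made for this example.

```python
from itertools import combinations

# Message passing (mp): T0 writes the data (x) and then the flag (y);
# T1 reads the flag into r1 and then the data into r2.
T0 = [("store", "x", 1), ("store", "y", 1)]
T1 = [("load", "y", "r1"), ("load", "x", "r2")]


def interleavings(a, b):
    """Yield every merge of a and b that keeps each thread's program order."""
    n = len(a) + len(b)
    for slots in combinations(range(n), len(a)):
        ia, ib = iter(a), iter(b)
        yield [next(ia) if i in slots else next(ib) for i in range(n)]


def run(schedule):
    """Execute one interleaving on a single shared memory; return (r1, r2)."""
    memory = {"x": 0, "y": 0}
    registers = {}
    for op, loc, arg in schedule:
        if op == "store":
            memory[loc] = arg
        else:
            registers[arg] = memory[loc]
    return registers["r1"], registers["r2"]


sc_outcomes = {run(s) for s in interleavings(T0, T1)}
print(sorted(sc_outcomes))    # [(0, 0), (0, 1), (1, 1)]
print((1, 0) in sc_outcomes)  # False: flag seen, stale data read, never happens under SC
```

The outcome r1=1 and r2=0 is exactly the assertion of the mp test, and none of the six interleavings produces it.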

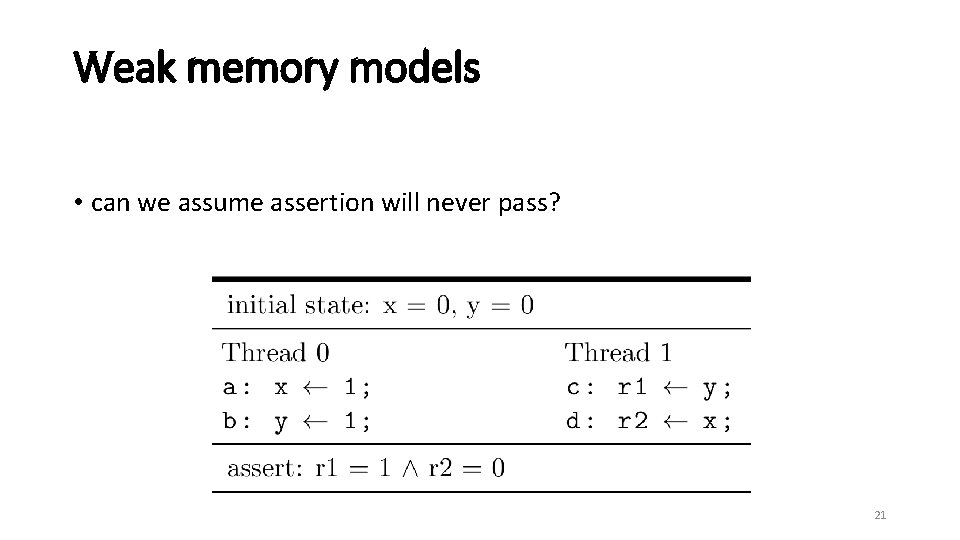

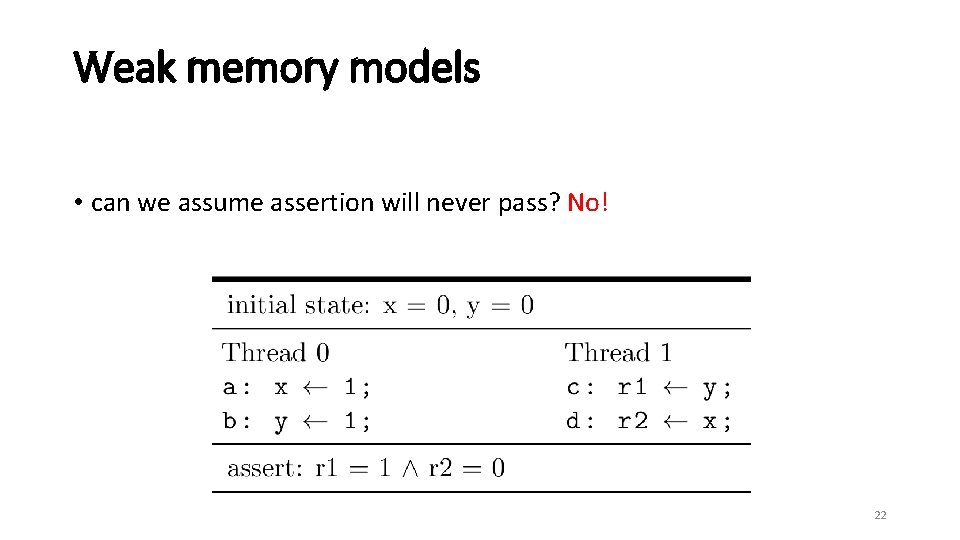

Weak memory models
• can we assume the assertion will never pass? No!

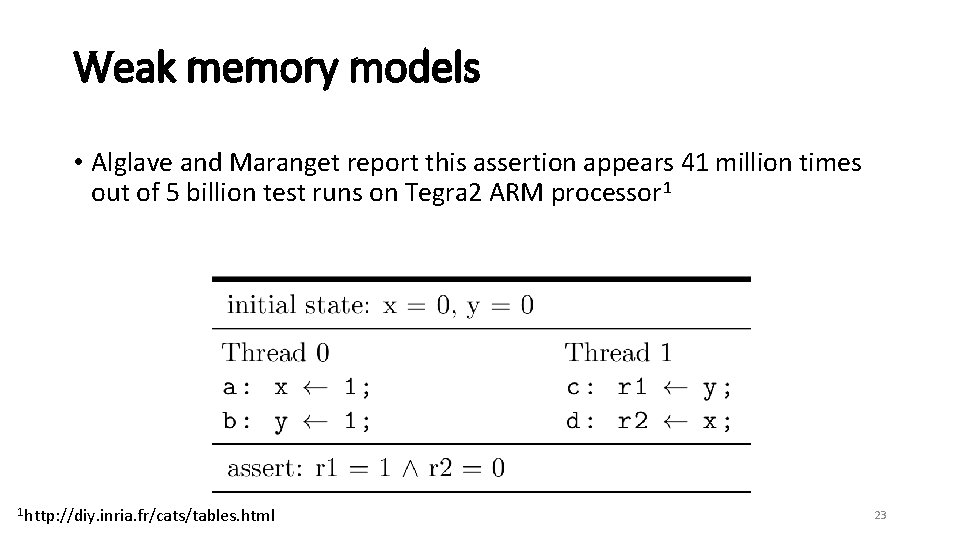

Weak memory models
• Alglave and Maranget report that this assertion is satisfied 41 million times out of 5 billion test runs on a Tegra 2 ARM processor (see http://diy.inria.fr/cats/tables.html)

Weak memory models
• what happened?
• architectures implement weak memory models where the hardware is allowed to re-order certain memory instructions
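To see why re-ordering matters for the mp test, here is a deliberately coarse toy model, added for illustration only. It lets each thread's two accesses (which go to different locations) execute in either order and then interleaves them as before. This is an assumption made for the example: it is not the axiomatic model from the talk, and real hardware re-orderings are more subtle.

```python
from itertools import combinations, permutations

T0 = [("store", "x", 1), ("store", "y", 1)]      # data, then flag
T1 = [("load", "y", "r1"), ("load", "x", "r2")]  # flag, then data


def merges(a, b):
    """Yield every merge of a and b that keeps the given order within each list."""
    n = len(a) + len(b)
    for slots in combinations(range(n), len(a)):
        ia, ib = iter(a), iter(b)
        yield [next(ia) if i in slots else next(ib) for i in range(n)]


def run(schedule):
    """Execute a schedule on one shared memory; return (r1, r2)."""
    memory, registers = {"x": 0, "y": 0}, {}
    for op, loc, arg in schedule:
        if op == "store":
            memory[loc] = arg
        else:
            registers[arg] = memory[loc]
    return registers["r1"], registers["r2"]


def outcomes(allow_reordering):
    """Collect final register values, optionally letting each thread re-order its accesses."""
    orders0 = [list(p) for p in permutations(T0)] if allow_reordering else [T0]
    orders1 = [list(p) for p in permutations(T1)] if allow_reordering else [T1]
    return {run(s) for a in orders0 for b in orders1 for s in merges(a, b)}


print((1, 0) in outcomes(allow_reordering=False))  # False
print((1, 0) in outcomes(allow_reordering=True))   # True
```

Once either thread may re-order its accesses, the stale-data outcome r1=1, r2=0 becomes reachable, for example when the flag store is performed before the data store.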

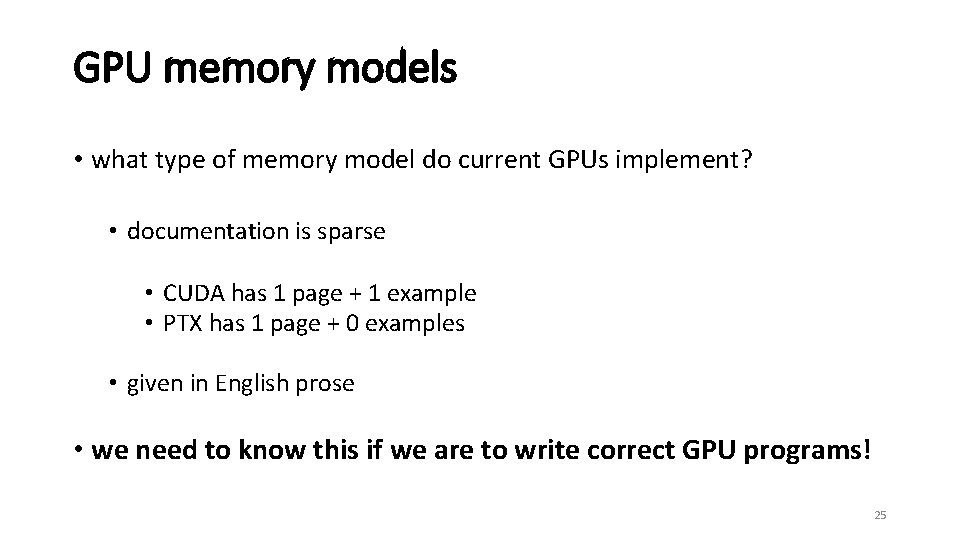

GPU memory models
• what type of memory model do current GPUs implement?
• documentation is sparse
  • CUDA has 1 page + 1 example
  • PTX has 1 page + 0 examples
  • given in English prose
• we need to know this if we are to write correct GPU programs!

GPU programming

Roadmap
• what happened to the pony (background)
• how we found the bug (methodology)
• how we are able to fix the pony (contribution)

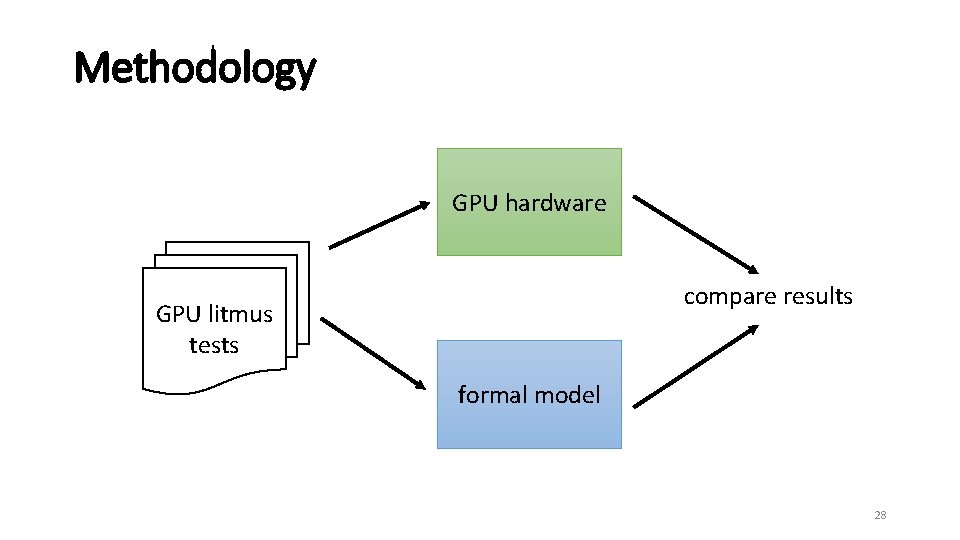

Methodology
[diagram labels: GPU litmus tests, GPU hardware, formal model, compare results]

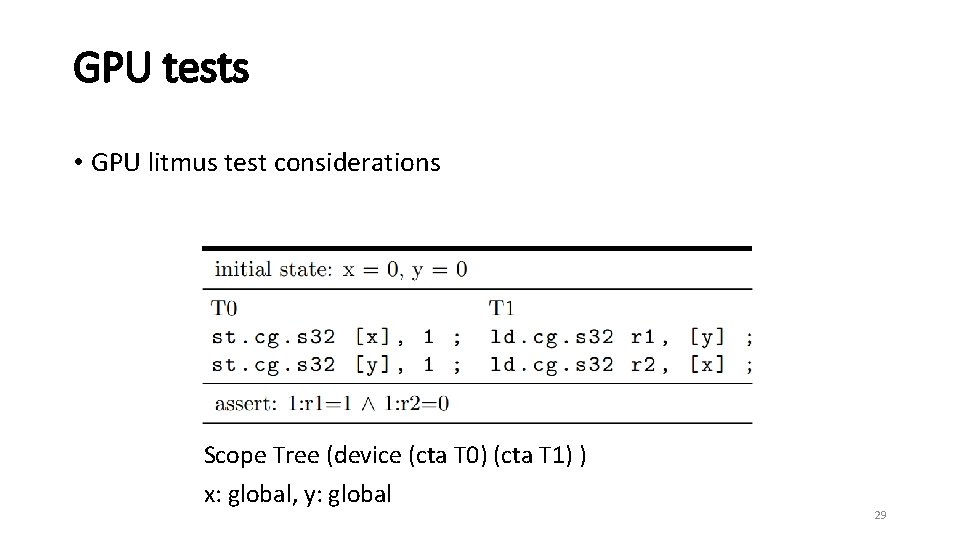

GPU tests
• GPU litmus test considerations
Scope Tree: (device (cta T0) (cta T1))
x: global, y: global

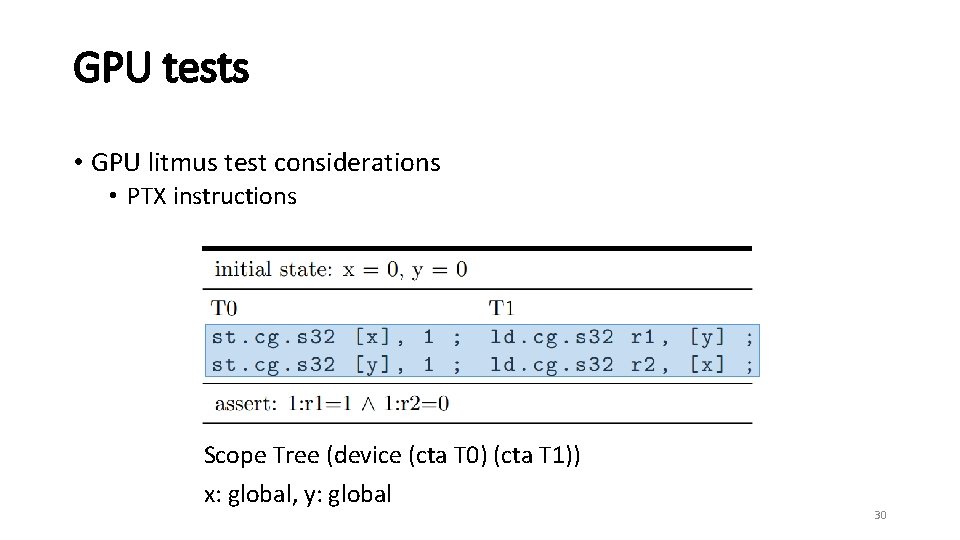

GPU tests
• GPU litmus test considerations
• PTX instructions
Scope Tree: (device (cta T0) (cta T1))
x: global, y: global

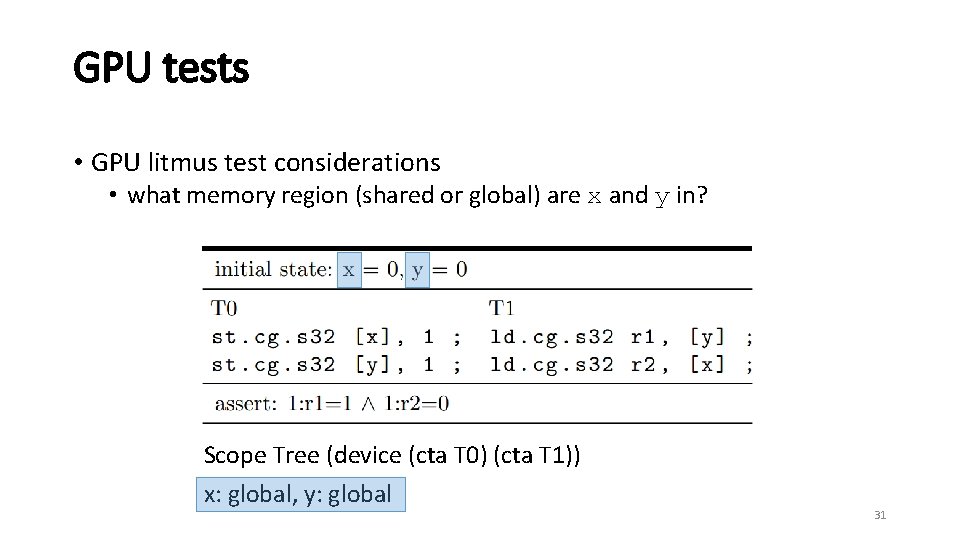

GPU tests
• GPU litmus test considerations
• what memory region (shared or global) are x and y in?
Scope Tree: (device (cta T0) (cta T1))
x: global, y: global

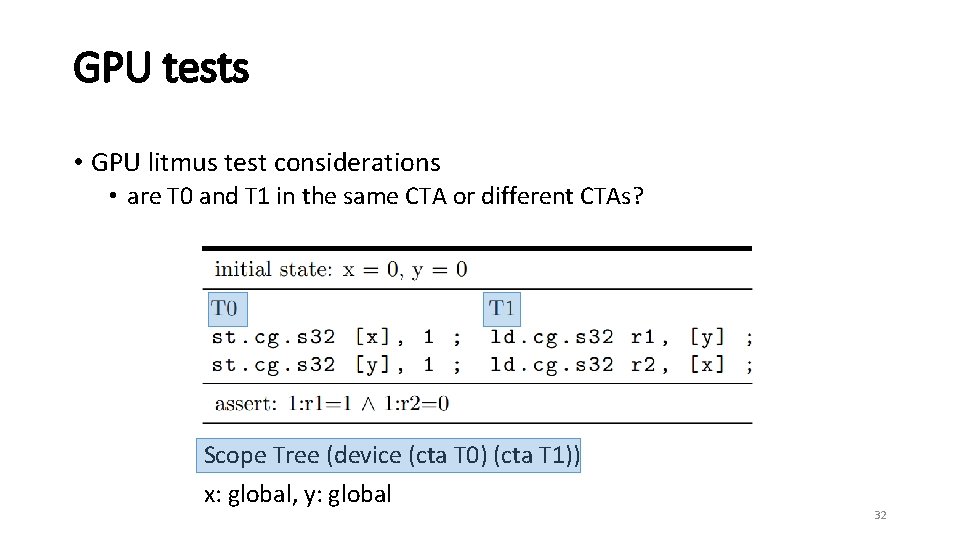

GPU tests
• GPU litmus test considerations
• are T0 and T1 in the same CTA or different CTAs?
Scope Tree: (device (cta T0) (cta T1))
x: global, y: global

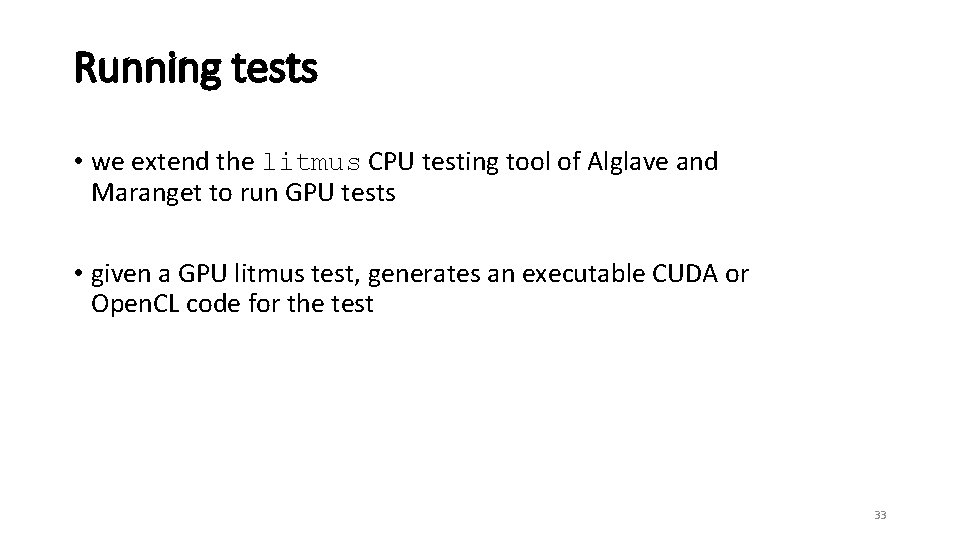

Running tests
• we extend the litmus CPU testing tool of Alglave and Maranget to run GPU tests
• given a GPU litmus test, it generates executable CUDA or OpenCL code for the test

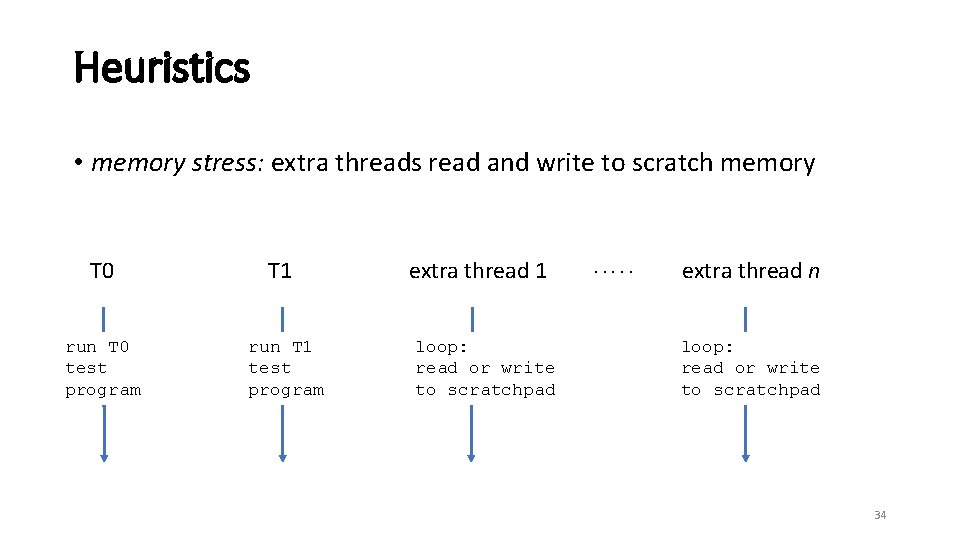

Heuristics
• memory stress: extra threads read and write to scratch memory
T0: run T0 test program
T1: run T1 test program
extra thread 1: loop: read or write to scratchpad
...
extra thread n: loop: read or write to scratchpad

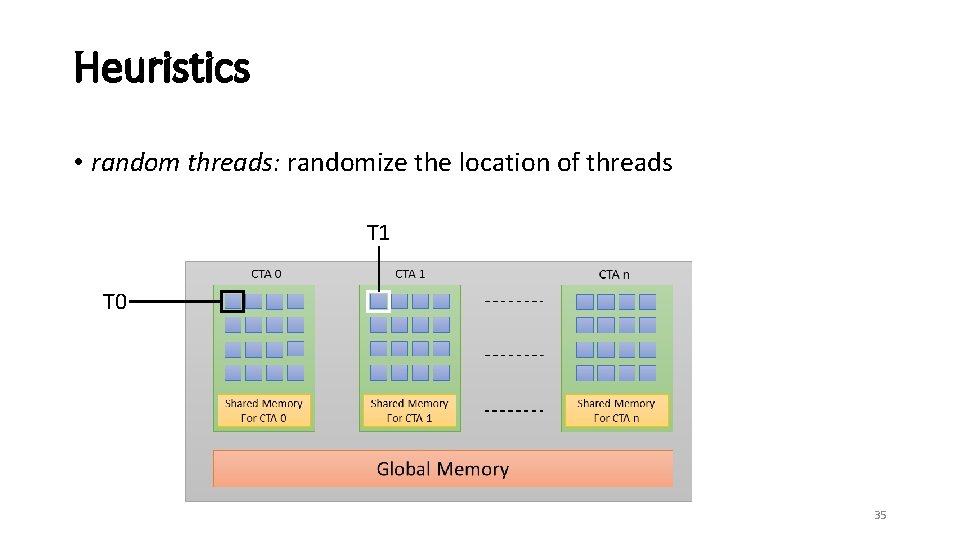

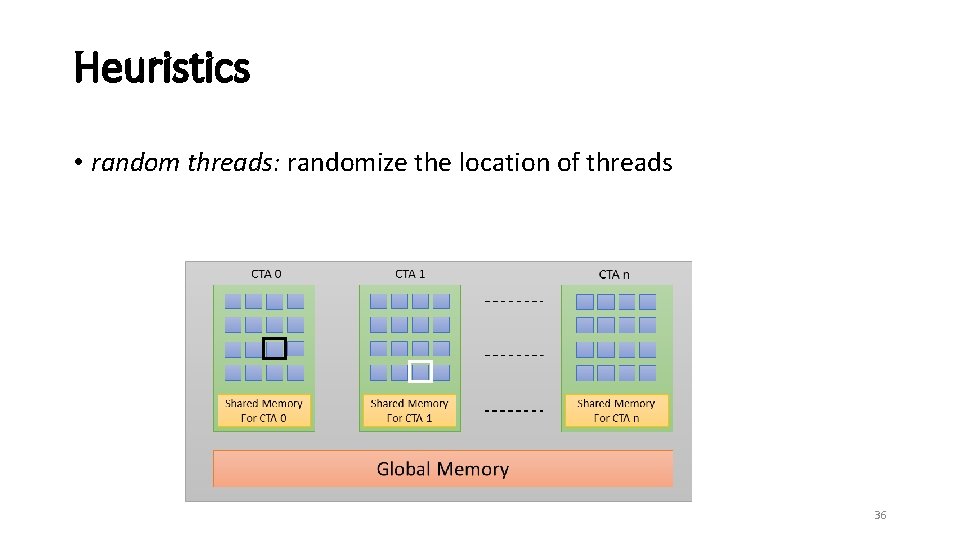

Heuristics
• random threads: randomize the location of threads
[diagram: positions of T0 and T1 among the GPU threads]

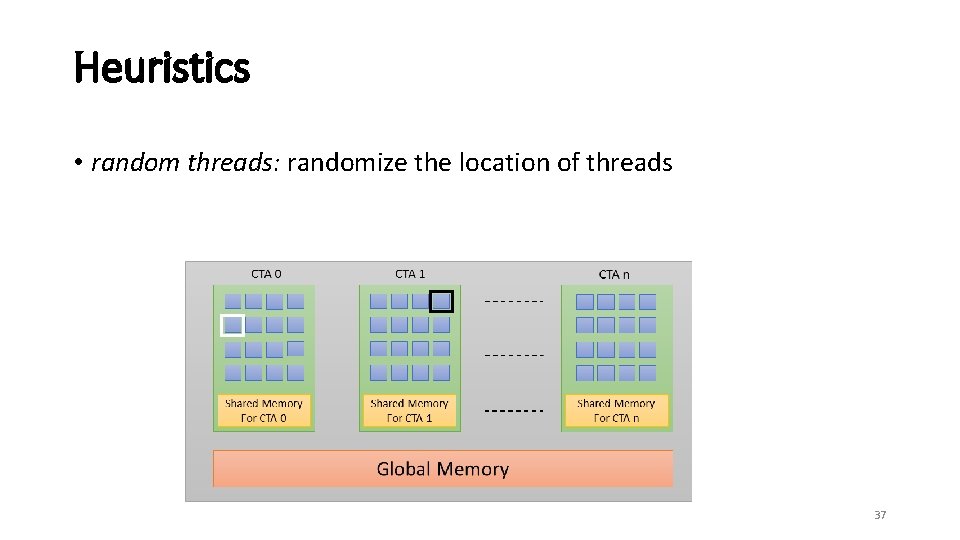

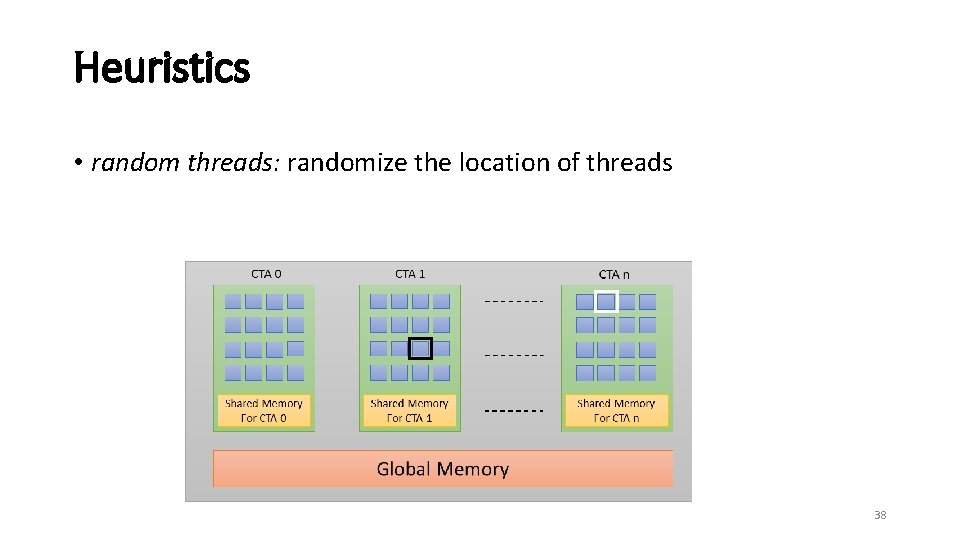


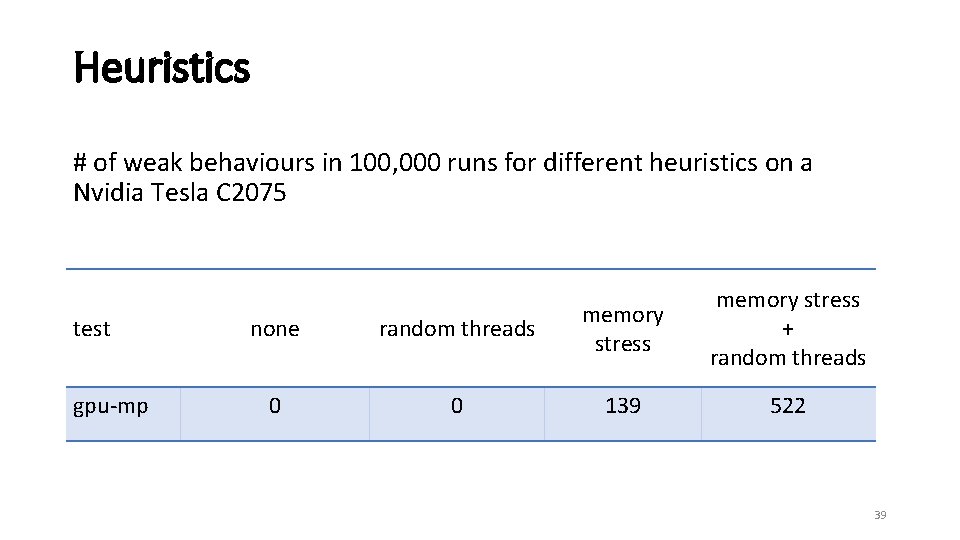

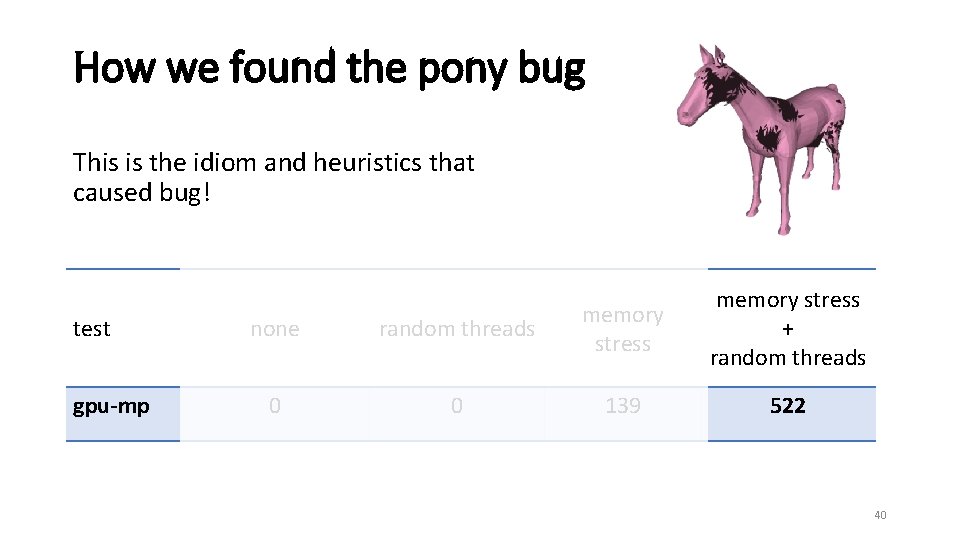
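The two heuristics can be pictured as a role assignment over the launched threads. The sketch below is a host-side illustration under stated assumptions. The slides do not say how the tool implements the heuristics, so the function `place_threads`, the grid dimensions, and the rule that T0 and T1 land in different CTAs (matching the scope tree used in the tests above) are all hypothetical.

```python
import random


def place_threads(num_ctas, threads_per_cta, rng):
    """Pick random (cta, thread) slots for T0 and T1 in different CTAs.

    Every remaining slot is given the "stress" role, standing in for the
    extra threads that loop over scratch memory.
    """
    cta0, cta1 = rng.sample(range(num_ctas), 2)  # two distinct CTAs
    t0 = (cta0, rng.randrange(threads_per_cta))
    t1 = (cta1, rng.randrange(threads_per_cta))
    roles = {
        (cta, tid): "stress"
        for cta in range(num_ctas)
        for tid in range(threads_per_cta)
    }
    roles[t0] = "T0"
    roles[t1] = "T1"
    return roles


# Hypothetical launch shape: 4 CTAs of 8 threads each.
roles = place_threads(num_ctas=4, threads_per_cta=8, rng=random.Random(0))
testers = {role: slot for slot, role in roles.items() if role != "stress"}
print(testers)
```

Calling `place_threads` again with a fresh random state gives a new placement for each run, which is the idea behind the random threads heuristic.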

Heuristics
# of weak behaviours in 100,000 runs for different heuristics on an Nvidia Tesla C2075

| test | none | random threads | memory stress | memory stress + random threads |
|---|---|---|---|---|
| gpu-mp | 0 | 0 | 139 | 522 |

How we found the pony bug
• this idiom (gpu-mp) and these heuristics (memory stress + random threads: 522 weak behaviours in 100,000 runs) are what exposed the bug!

Roadmap
• what happened to the pony (background)
• how we found the bug (methodology)
• how we are able to fix the pony (contribution)

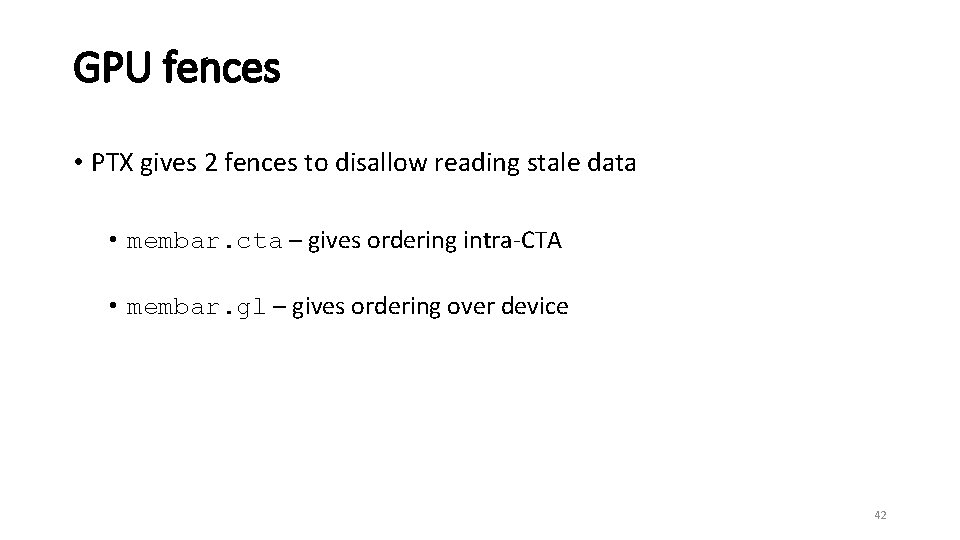

GPU fences
• PTX gives 2 fences to disallow reading stale data
  • membar.cta: gives ordering intra-CTA
  • membar.gl: gives ordering over the device

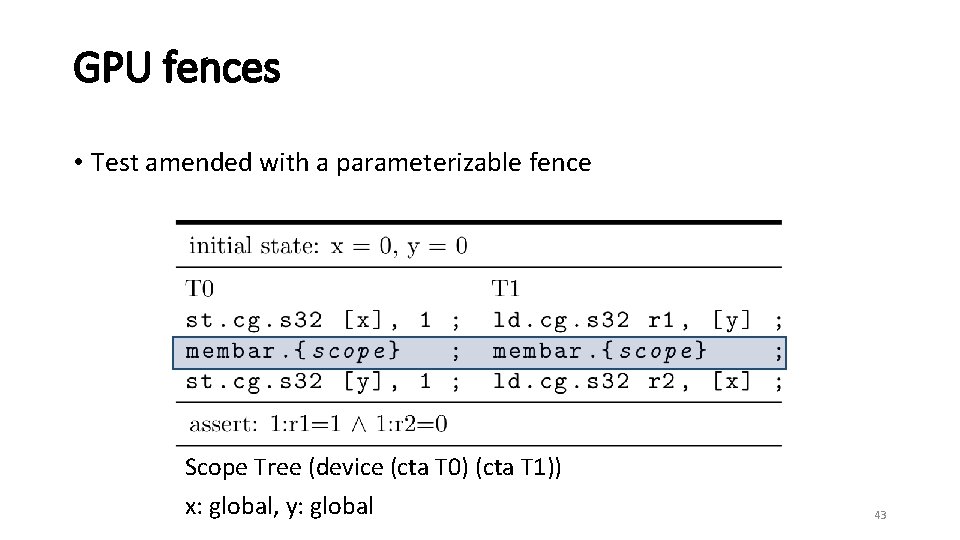

GPU fences
• test amended with a parameterizable fence
Scope Tree: (device (cta T0) (cta T1))
x: global, y: global

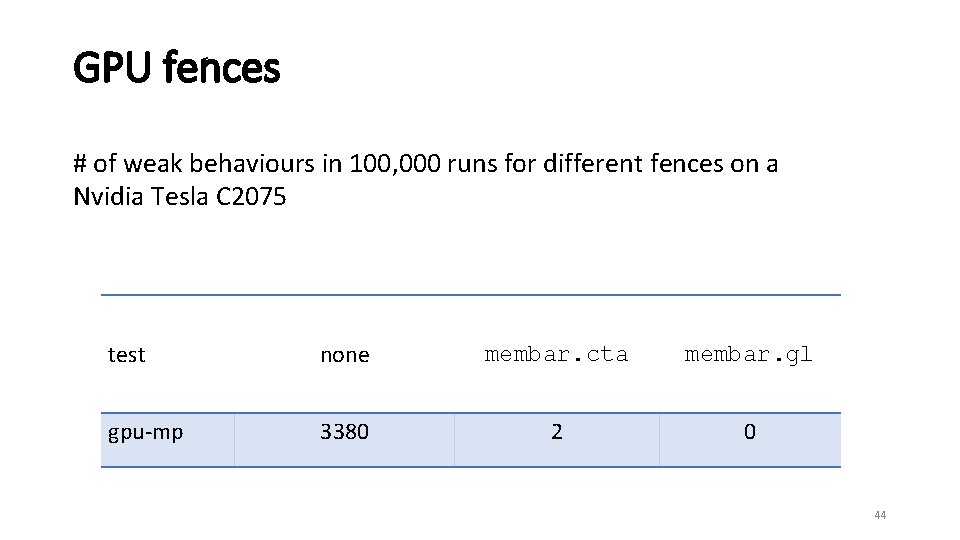

GPU fences
# of weak behaviours in 100,000 runs for different fences on an Nvidia Tesla C2075

| test | none | membar.cta | membar.gl |
|---|---|---|---|
| gpu-mp | 3380 | 2 | 0 |
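One way to read these numbers is with a toy scoping rule, sketched below for illustration. It assumes that a fence restores program order only when its scope covers both testing threads: membar.gl always, membar.cta only when T0 and T1 share a CTA. The rule and the function names are assumptions made for this example. The sketch is not the PTX model developed in this work and says nothing about how often a weak outcome occurs.

```python
from itertools import combinations, permutations

T0 = [("store", "x", 1), ("store", "y", 1)]      # the fence would sit between the stores
T1 = [("load", "y", "r1"), ("load", "x", "r2")]  # and between the loads


def fence_orders(fence, same_cta):
    """Toy rule: membar.gl always orders; membar.cta only inside one CTA."""
    return fence == "membar.gl" or (fence == "membar.cta" and same_cta)


def merges(a, b):
    """Yield every merge of a and b that keeps the given order within each list."""
    n = len(a) + len(b)
    for slots in combinations(range(n), len(a)):
        ia, ib = iter(a), iter(b)
        yield [next(ia) if i in slots else next(ib) for i in range(n)]


def run(schedule):
    """Execute a schedule on one shared memory; return (r1, r2)."""
    memory, registers = {"x": 0, "y": 0}, {}
    for op, loc, arg in schedule:
        if op == "store":
            memory[loc] = arg
        else:
            registers[arg] = memory[loc]
    return registers["r1"], registers["r2"]


def stale_read_possible(fence, same_cta):
    """True if the toy model can reach r1=1, r2=0 (flag seen, stale data read)."""
    if fence_orders(fence, same_cta):
        orders0, orders1 = [T0], [T1]
    else:
        orders0 = [list(p) for p in permutations(T0)]
        orders1 = [list(p) for p in permutations(T1)]
    return any(
        run(s) == (1, 0) for a in orders0 for b in orders1 for s in merges(a, b)
    )


for fence in (None, "membar.cta", "membar.gl"):
    print(fence, stale_read_possible(fence, same_cta=False))
```

With the threads in different CTAs, as in the scope tree used for this test, the toy rule still permits the stale read under membar.cta and forbids it under membar.gl, in line with the 2 and 0 counts in the table.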

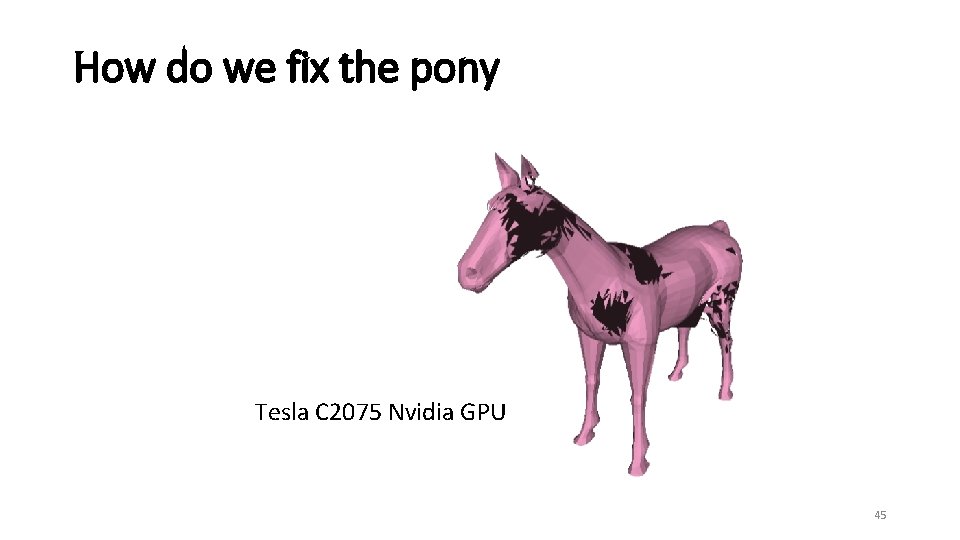

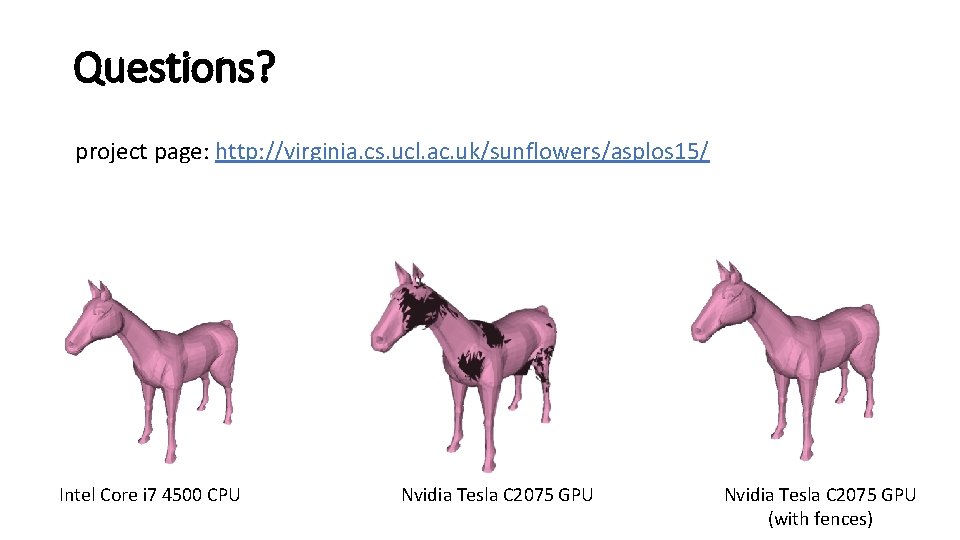

How do we fix the pony?
Nvidia Tesla C2075 GPU

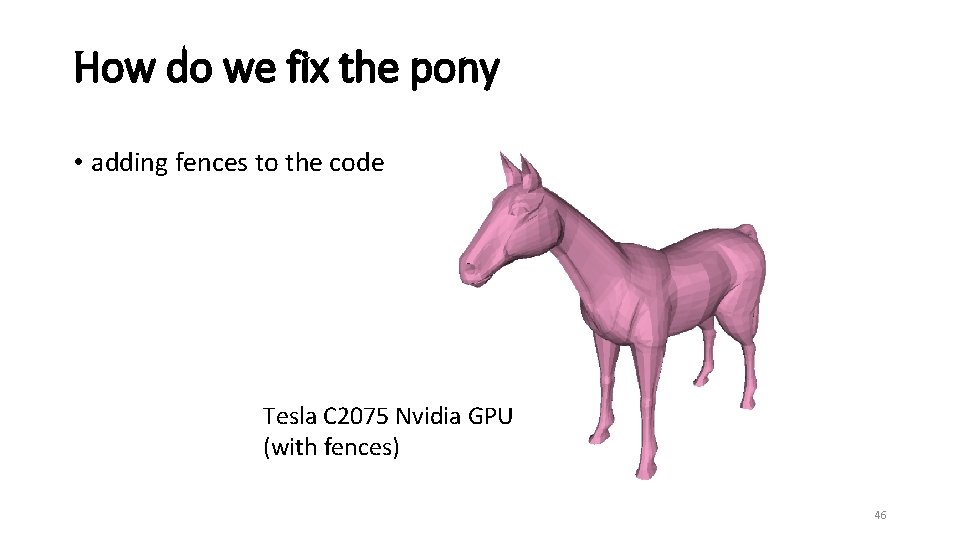

How do we fix the pony?
• adding fences to the code
Nvidia Tesla C2075 GPU (with fences)

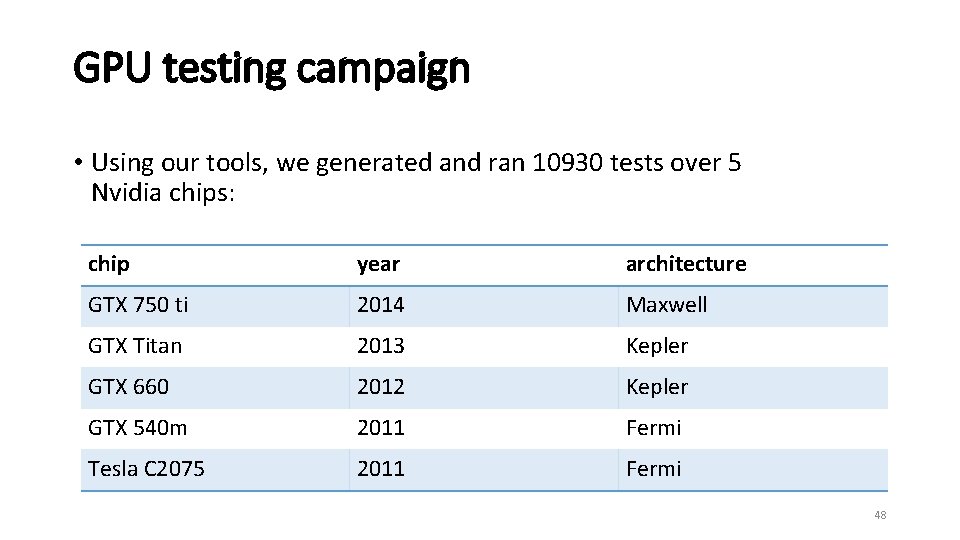

GPU testing campaign
• we extend the diy CPU litmus test generation tool of Alglave and Maranget to generate GPU tests
• generates litmus tests based on cycles
• enumerates the tests over the GPU thread and memory hierarchy
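Enumerating a test over the hierarchy can be pictured as a filtered Cartesian product. The sketch below is an illustration only, not the diy extension itself. It covers just the two questions raised on the earlier slides (same or different CTA, shared or global memory), and the filter encodes the CUDA rule that shared memory is private to a CTA. The names `PLACEMENTS`, `REGIONS`, and `valid` are assumptions made for this example.

```python
from itertools import product

PLACEMENTS = ["same CTA", "different CTAs"]  # where T0 and T1 sit
REGIONS = ["shared", "global"]               # memory region of a location


def valid(placement, region_x, region_y):
    """Threads in different CTAs can only communicate through global memory."""
    if placement == "different CTAs":
        return region_x == "global" and region_y == "global"
    return True


variants = [
    (placement, rx, ry)
    for placement, rx, ry in product(PLACEMENTS, REGIONS, REGIONS)
    if valid(placement, rx, ry)
]

for placement, rx, ry in variants:
    print(f"mp: {placement}, x: {rx}, y: {ry}")
print(len(variants))  # 5
```

Each surviving combination would become one concrete variant of the same abstract test; the real tool presumably covers further levels of the hierarchy, such as warps.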

GPU testing campaign
• using our tools, we generated and ran 10930 tests over 5 Nvidia chips:

| chip | year | architecture |
|---|---|---|
| GTX 750 Ti | 2014 | Maxwell |
| GTX Titan | 2013 | Kepler |
| GTX 660 | 2012 | Kepler |
| GTX 540m | 2011 | Fermi |
| Tesla C2075 | 2011 | Fermi |

GPU testing campaign
• results are hosted at: http://virginia.cs.ucl.ac.uk/sunflowers/asplos15/flat.html

Modeling
• we extended herd, the CPU axiomatic memory modeling tool of Alglave and Maranget, to GPUs
• we developed an axiomatic memory model for PTX which is able to simulate all of our tests
• our model is sound with respect to all of our hardware observations

Modeling
• demo of web interface

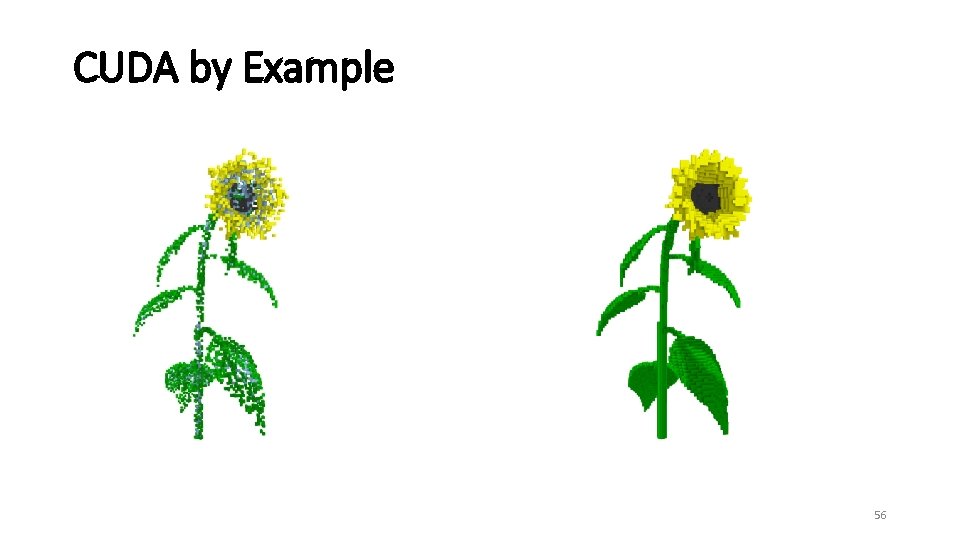

More results
• surprising and buggy behaviours observed:
  • GPU mutex implementations allow stale data to be read (found in the CUDA by Example book and in other academic papers (1, 2)); this led to an erratum issued by Nvidia
  • hardware re-orders loads from the same address on Nvidia Fermi and Kepler
• some testing on AMD GPUs

(1) J. A. Stuart and J. D. Owens, "Efficient synchronization primitives for GPUs", CoRR, 2011, http://arxiv.org/pdf/1110.4623.pdf
(2) B. He and J. X. Yu, "High-throughput transaction executions on graphics processors", PVLDB 2011

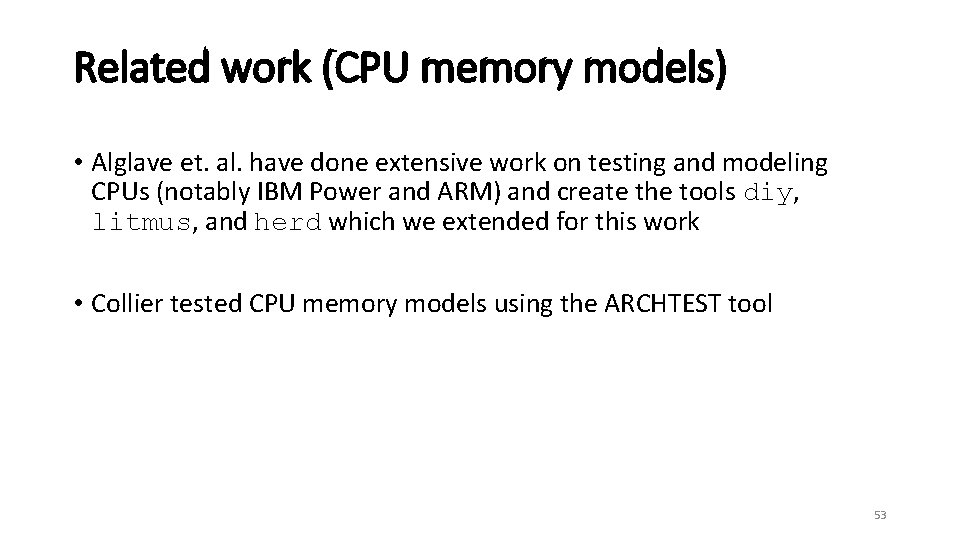

Related work (CPU memory models)
• Alglave et al. have done extensive work on testing and modeling CPUs (notably IBM Power and ARM) and created the tools diy, litmus, and herd, which we extended for this work
• Collier tested CPU memory models using the ARCHTEST tool

Related work (GPU memory models)
• Hower et al. have proposed several SC-for-race-free language-level memory models for GPUs

Questions?
project page: http://virginia.cs.ucl.ac.uk/sunflowers/asplos15/
Intel Core i7 4500 CPU
Nvidia Tesla C2075 GPU (with fences)

CUDA by Example

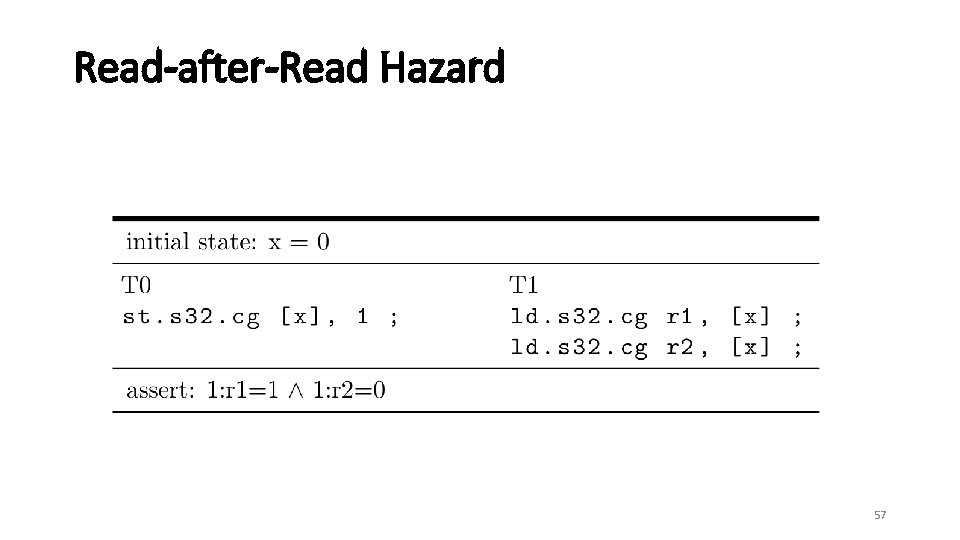

Read-after-Read Hazard

Ignore after this

Results
• surprising and buggy behaviours observed:
  • SC-per-location violations on NVIDIA Fermi and Kepler architectures (todo: add CORR test)

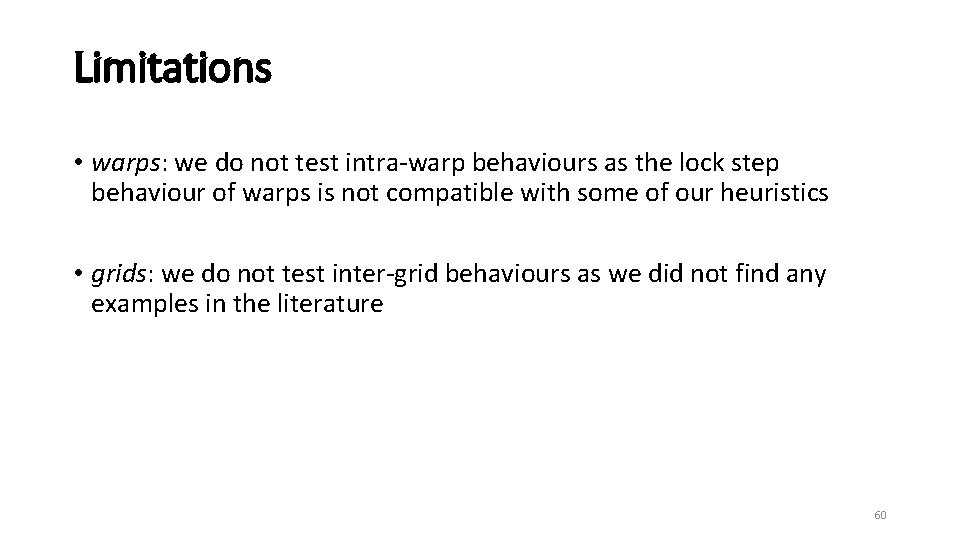

Limitations
• warps: we do not test intra-warp behaviours, as the lock-step behaviour of warps is not compatible with some of our heuristics
• grids: we do not test inter-grid behaviours, as we did not find any examples in the literature

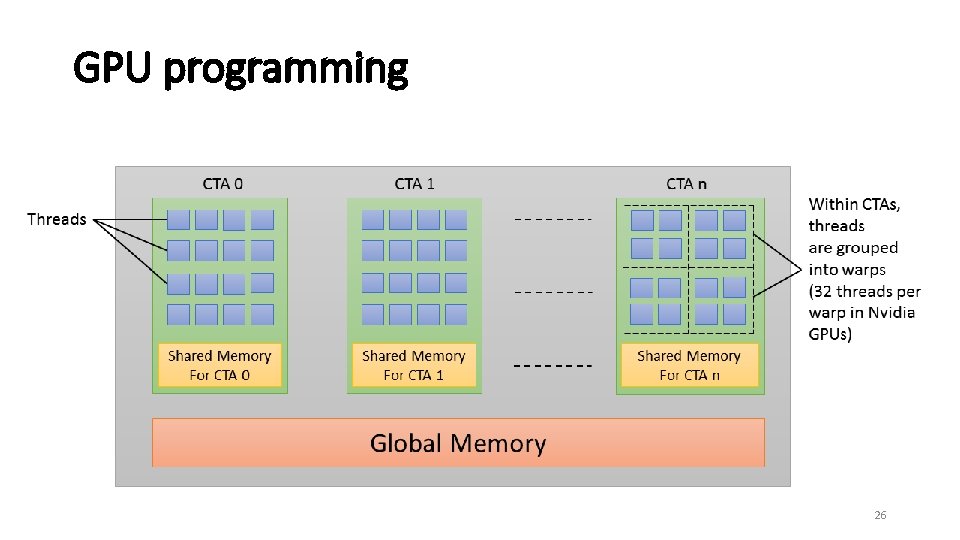

GPU programming
• GPUs are SIMT (Single Instruction, Multiple Thread)
• Nvidia GPUs may be programmed using CUDA or OpenCL

Roadmap
• background and motivation
• approach
• GPU tests
• running tests
• modeling

Heuristics
• two additional heuristics:
  • synchronization: testing threads synchronize immediately before running the test program
  • general bank conflicts: generate memory accesses that conflict with the accesses in the memory stress heuristic

Challenges
• the PTX optimizing assembler may reorder or remove instructions
• we developed a tool, optcheck, which compares the litmus test with the binary and checks for optimizations
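The kind of comparison described above can be sketched as follows. This is not the optcheck tool: the slides do not describe how it extracts instructions from the binary, so the function `check_thread` and its inputs (one thread's memory instructions as lists of strings, already recovered from the test and from the binary) are hypothetical.

```python
def check_thread(expected, actual):
    """Compare one thread's memory instructions in the litmus test (expected)
    with those recovered from the compiled binary (actual).

    Returns a (verdict, details) pair; verdict is "removed", "reordered" or "ok".
    """
    missing = [ins for ins in expected if ins not in actual]
    if missing:
        return ("removed", missing)
    # Ignore unrelated instructions in the binary; keep only the test's own.
    kept = [ins for ins in actual if ins in expected]
    if kept != expected:
        return ("reordered", kept)
    return ("ok", [])


test_t0 = ["st.cg.s32 [x], 1", "st.cg.s32 [y], 1"]

print(check_thread(test_t0, ["st.cg.s32 [x], 1", "st.cg.s32 [y], 1"]))
print(check_thread(test_t0, ["st.cg.s32 [y], 1", "st.cg.s32 [x], 1"]))
print(check_thread(test_t0, ["st.cg.s32 [y], 1"]))
```

A test whose threads do not all come back "ok" no longer exercises the intended instruction sequence, so its results should not be counted as hardware observations.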

Roadmap
• background and motivation
• approach
• GPU tests
• running tests
• modeling


GPU tests
• concrete GPU test

    T0               | T1                ;
    st.cg.s32 [x], 1 | ld.cg.s32 r1, [y] ;
    st.cg.s32 [y], 1 | ld.cg.s32 r2, [x] ;
    ScopeTree (grid (cta (warp T0) (warp T1)))
    x: shared, y: global
    exists (1:r1=1 /\ 1:r2=0)


GPU programming
explicit hierarchical concurrency model
• thread hierarchy:
  • thread
  • warp
  • CTA (Cooperative Thread Array)
  • grid
• memory hierarchy:
  • shared memory
  • global memory

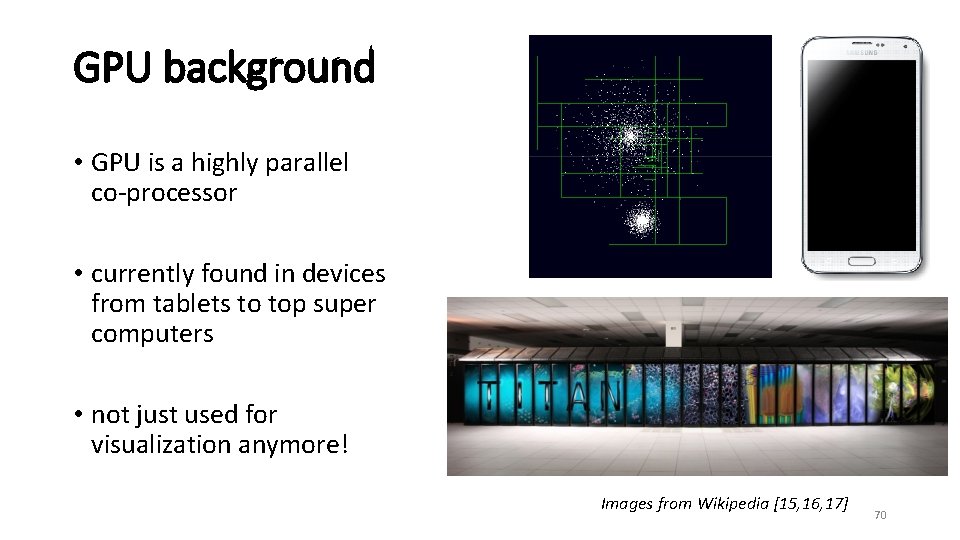

GPU background
• a GPU is a highly parallel co-processor
• currently found in devices from tablets to top supercomputers
• not just used for visualization anymore!
Images from Wikipedia [15, 16, 17]

References
[1] L. Lamport, "How to make a multiprocessor computer that correctly executes multi-process programs", Trans. Comput., 1979.
[2] J. Alglave, L. Maranget, S. Sarkar, and P. Sewell, "Litmus: Running tests against hardware", TACAS 2011.
[3] J. Alglave, L. Maranget, and M. Tautschnig, "Herding cats: modelling, simulation, testing, and data-mining for weak memory", TOPLAS 2014.
[4] NVIDIA, "CUDA C programming guide, version 6 (July 2014)", http://docs.nvidia.com/cuda/pdf/CUDA_C_Programming_Guide.pdf
[5] NVIDIA, "Parallel Thread Execution ISA: Version 4.0 (Feb. 2014)", http://docs.nvidia.com/cuda/parallel-thread-execution
[6] J. Alglave, L. Maranget, S. Sarkar, and P. Sewell, "Fences in weak memory models (extended version)", FMSD 2012.
[7] J. Sanders and E. Kandrot, "CUDA by Example: An Introduction to General-Purpose GPU Programming", Addison-Wesley Professional, 2010.
[8] J. A. Stuart and J. D. Owens, "Efficient synchronization primitives for GPUs", CoRR, 2011, http://arxiv.org/pdf/1110.4623.pdf
[9] B. He and J. X. Yu, "High-throughput transaction executions on graphics processors", PVLDB 2011.
[10] W. W. Collier, "Reasoning About Parallel Architectures", Prentice-Hall, Inc., 1992.
[11] D. R. Hower, B. M. Beckmann, B. R. Gaster, B. A. Hechtman, M. D. Hill, S. K. Reinhardt, and D. A. Wood, "Sequential consistency for heterogeneous-race-free", MSPC 2013.
[12] D. R. Hower, B. A. Hechtman, B. M. Beckmann, B. R. Gaster, M. D. Hill, S. K. Reinhardt, and D. A. Wood, "Heterogeneous-race-free memory models", ASPLOS 2014.
[13] T. Sorensen, G. Gopalakrishnan, and V. Grover, "Towards shared memory consistency models for GPUs", ICS 2013.
[14] W.-m. W. Hwu, "GPU Computing Gems Jade Edition", Morgan Kaufmann Publishers Inc., 2011.
[15] http://en.wikipedia.org/wiki/Samsung_Galaxy_S5
[16] http://en.wikipedia.org/wiki/Titan_(supercomputer)
[17] http://en.wikipedia.org/wiki/Barnes_Hut_simulation

Roadmap
• what happened to the pony (background)
• how we found the bug (methodology)
• how we are able to fix the pony (contribution)

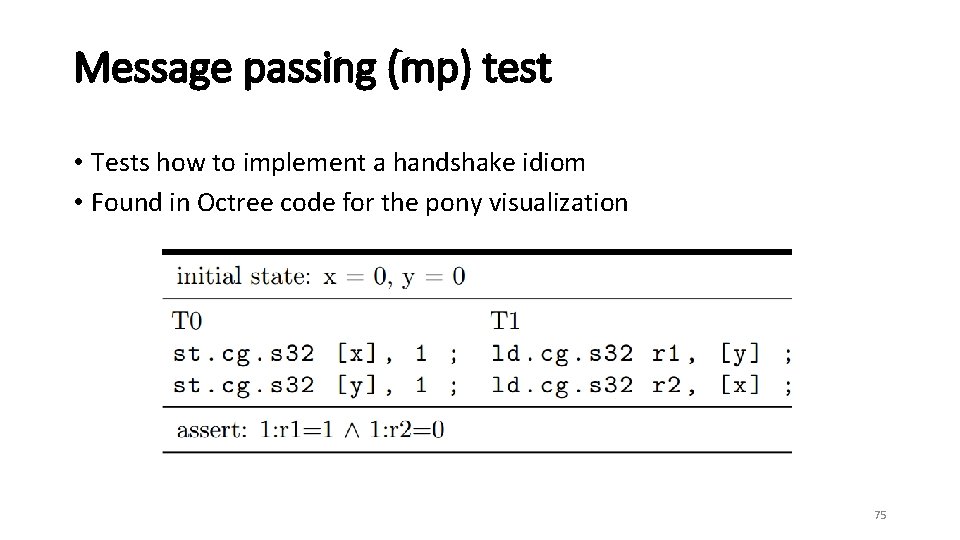

Message passing (mp) test
• tests how to implement a handshake idiom
• found in the Octree code for the pony visualization
[diagram label: Data]

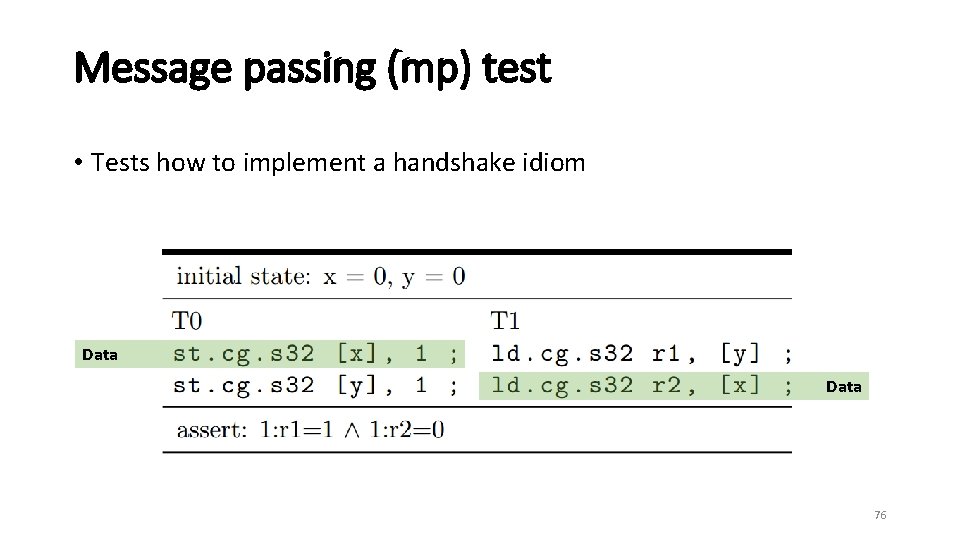

Message passing (mp) test
• tests how to implement a handshake idiom
[diagram label: Flag]

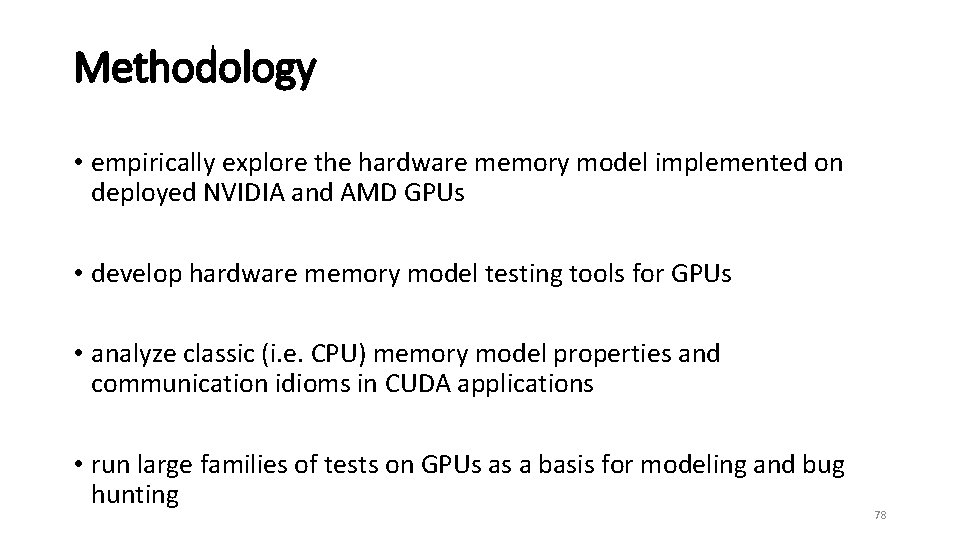

Methodology
• empirically explore the hardware memory model implemented on deployed NVIDIA and AMD GPUs
• develop hardware memory model testing tools for GPUs
• analyze classic (i.e. CPU) memory model properties and communication idioms in CUDA applications
• run large families of tests on GPUs as a basis for modeling and bug hunting

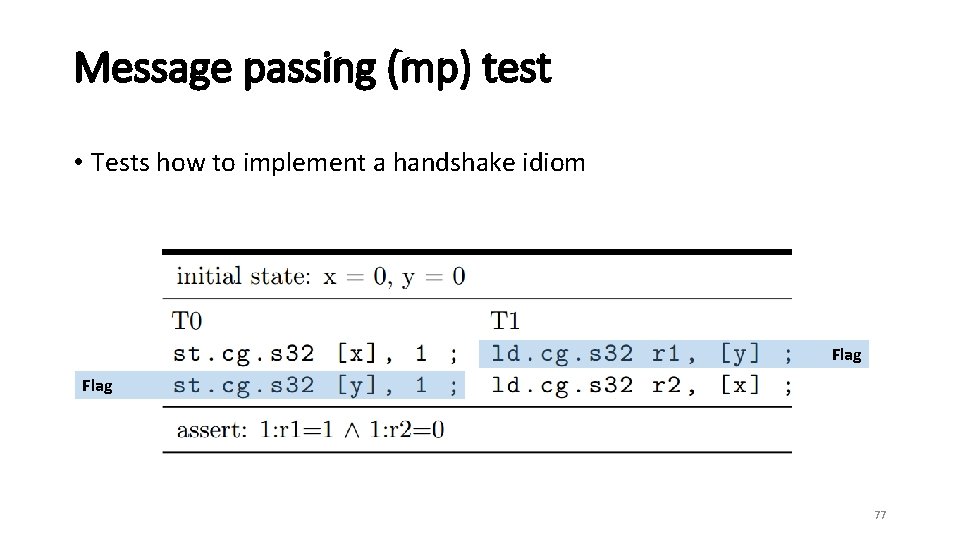

Message passing (mp) test
• tests how to implement a handshake idiom
[diagram label: Stale Data]

Running tests
• however, unlike on CPUs, simply running the tests did not yield any weak memory behaviours on Nvidia chips!
• we developed heuristics to run tests under a variety of stress to expose weak behaviours
