GPU Architecture Implications David Luebke NVIDIA Research GPU

GPU Architecture & Implications David Luebke NVIDIA Research

GPU Architecture CUDA provides a parallel programming model The Tesla GPU architecture implements this This talk will describe the characteristics, goals, and implications of that architecture © NVIDIA Corporation 2007

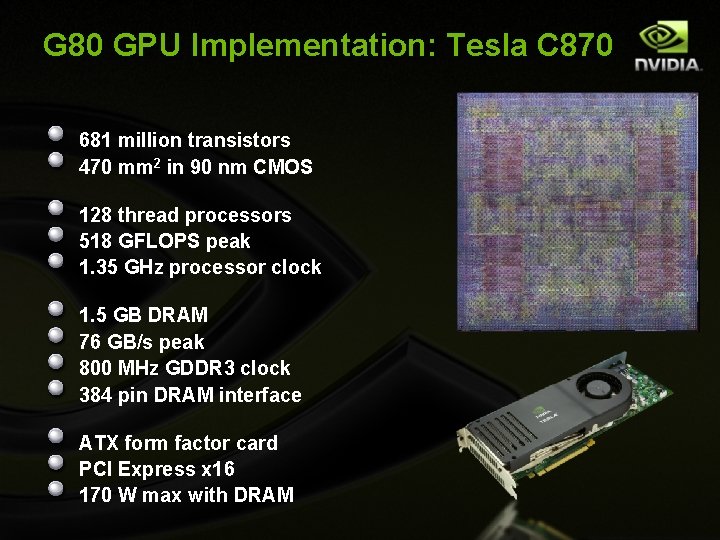

G 80 GPU Implementation: Tesla C 870 681 million transistors 470 mm 2 in 90 nm CMOS 128 thread processors 518 GFLOPS peak 1. 35 GHz processor clock 1. 5 GB DRAM 76 GB/s peak 800 MHz GDDR 3 clock 384 pin DRAM interface ATX form factor card PCI Express x 16 170 W max with DRAM

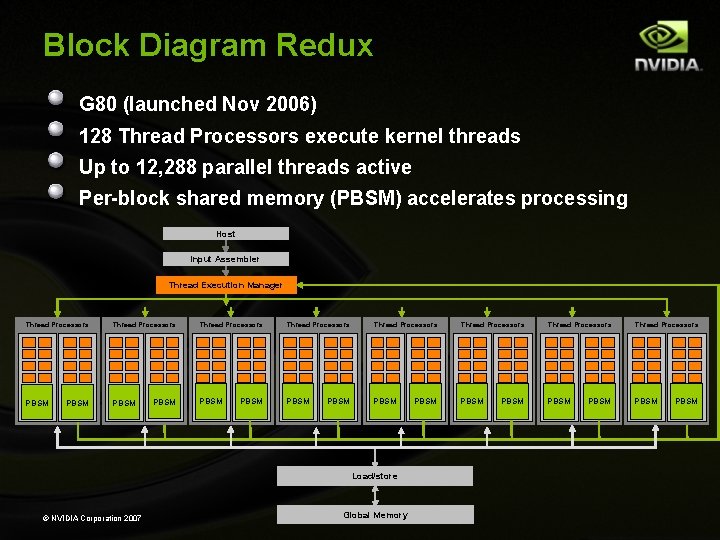

Block Diagram Redux G 80 (launched Nov 2006) 128 Thread Processors execute kernel threads Up to 12, 288 parallel threads active Per-block shared memory (PBSM) accelerates processing Host Input Assembler Thread Execution Manager Thread Processors PBSM Thread Processors Thread Processors PBSM PBSM Load/store © NVIDIA Corporation 2007 Global Memory PBSM

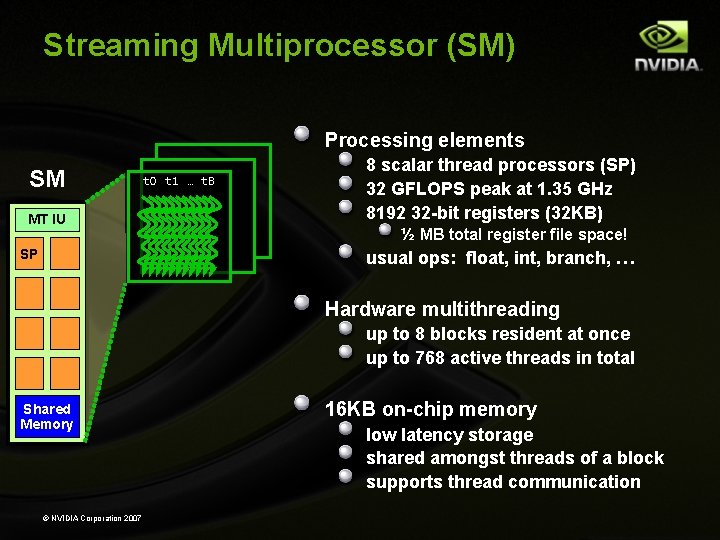

Streaming Multiprocessor (SM) Processing elements SM MT IU SP t 0 t 1 … t. B 8 scalar thread processors (SP) 32 GFLOPS peak at 1. 35 GHz 8192 32 -bit registers (32 KB) ½ MB total register file space! usual ops: float, int, branch, … Hardware multithreading up to 8 blocks resident at once up to 768 active threads in total Shared Memory © NVIDIA Corporation 2007 16 KB on-chip memory low latency storage shared amongst threads of a block supports thread communication

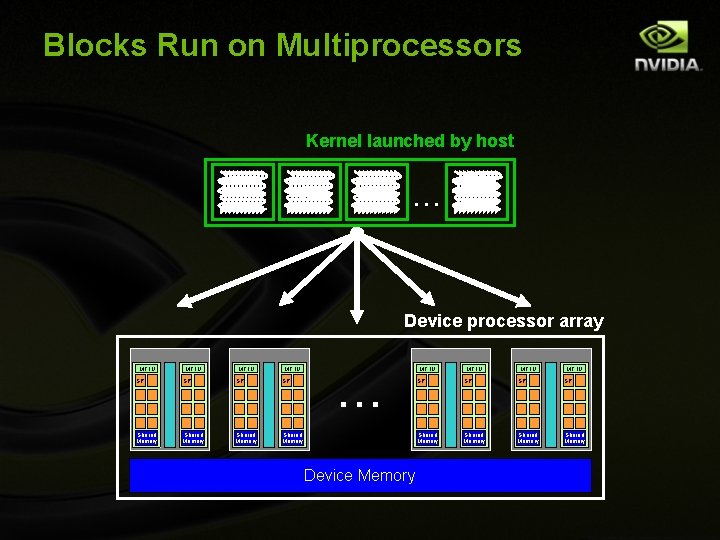

Goal: Scalability Scalable execution Program must be insensitive to the number of cores Write one program for any number of SM cores Program runs on any size GPU without recompiling Hierarchical execution model Decompose problem into sequential steps (kernels) Decompose kernel into computing parallel blocks Decompose block into computing parallel threads Hardware distributes independent blocks to SMs as available

Blocks Run on Multiprocessors Kernel launched by host. . . Device processor array MT IU SP SP Shared Memory MT IU . . . Device Memory MT IU SP SP Shared Memory

Goal: easy to program Strategies: Familiar programming language mechanics C/C++ with small extension Simple parallel abstractions Simple barrier synchronization Shared memory semantics Hardware-managed hierarchy of threads

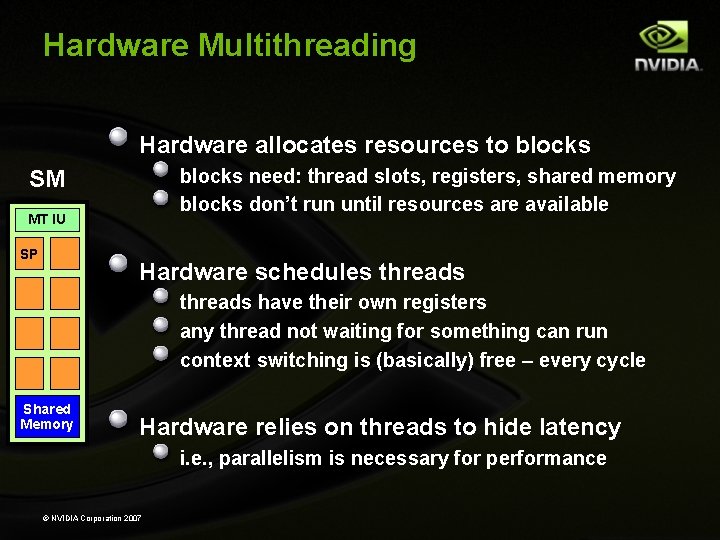

Hardware Multithreading Hardware allocates resources to blocks need: thread slots, registers, shared memory blocks don’t run until resources are available SM MT IU SP Hardware schedules threads have their own registers any thread not waiting for something can run context switching is (basically) free – every cycle Shared Memory Hardware relies on threads to hide latency i. e. , parallelism is necessary for performance © NVIDIA Corporation 2007

Goal: Performance per millimeter For GPUs, perfomance == throughput Strategy: hide latency with computation not cache Heavy multithreading – already discussed by Kevin Implication: need many threads to hide latency Occupancy – typically need 128 threads/SM minimum Multiple thread blocks/SM good to minimize effect of barriers Strategy: Single Instruction Multiple Thread (SIMT) Balances performance with ease of programming

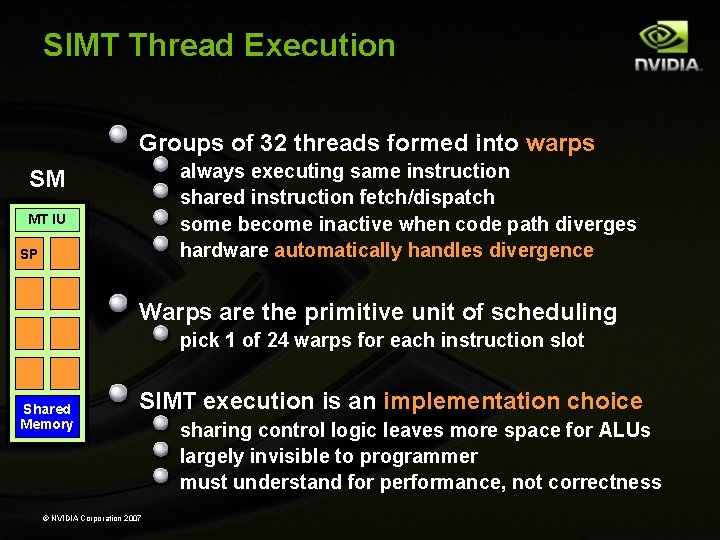

SIMT Thread Execution Groups of 32 threads formed into warps always executing same instruction shared instruction fetch/dispatch some become inactive when code path diverges hardware automatically handles divergence SM MT IU SP Warps are the primitive unit of scheduling pick 1 of 24 warps for each instruction slot Shared Memory SIMT execution is an implementation choice © NVIDIA Corporation 2007 sharing control logic leaves more space for ALUs largely invisible to programmer must understand for performance, not correctness

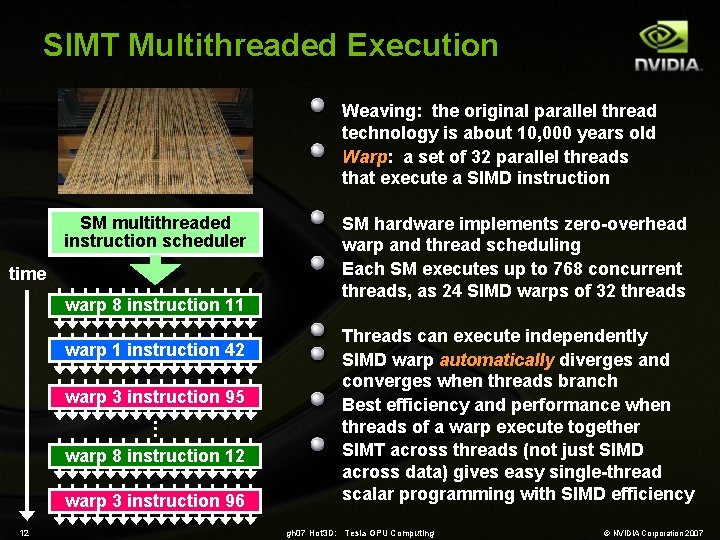

SIMT Multithreaded Execution Weaving: the original parallel thread technology is about 10, 000 years old Warp: a set of 32 parallel threads that execute a SIMD instruction SM multithreaded instruction scheduler time warp 8 instruction 11 warp 1 instruction 42 warp 3 instruction 95. . . warp 8 instruction 12 warp 3 instruction 96 12 SM hardware implements zero-overhead warp and thread scheduling Each SM executes up to 768 concurrent threads, as 24 SIMD warps of 32 threads Threads can execute independently SIMD warp automatically diverges and converges when threads branch Best efficiency and performance when threads of a warp execute together SIMT across threads (not just SIMD across data) gives easy single-thread scalar programming with SIMD efficiency gh 07 Hot 3 D: Tesla GPU Computing © NVIDIA Corporation 2007

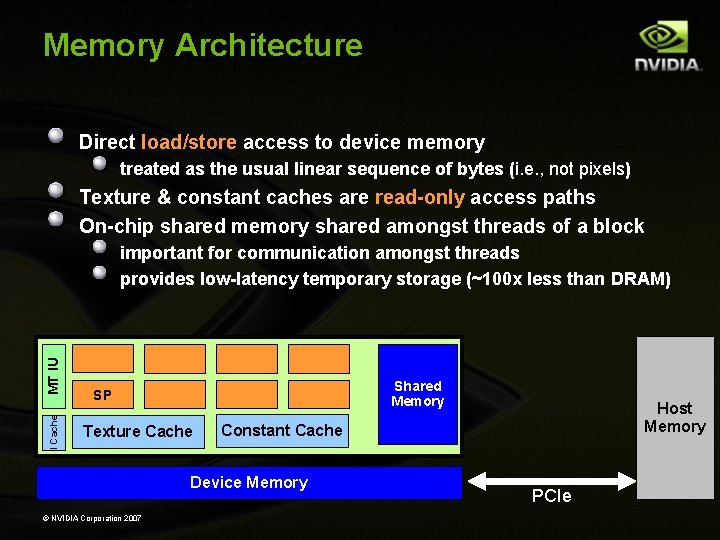

Memory Architecture Direct load/store access to device memory treated as the usual linear sequence of bytes (i. e. , not pixels) Texture & constant caches are read-only access paths On-chip shared memory shared amongst threads of a block I Cache MT IU important for communication amongst threads provides low-latency temporary storage (~100 x less than DRAM) Shared Memory SP Texture Cache Constant Cache Device Memory © NVIDIA Corporation 2007 Host Memory PCIe

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics NO: CUDA compiles directly to the hardware GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… NO: warps are 32 -wide …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… NOPE …with 4 -wide vector registers. GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. NO: scalar thread processors GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient: No – 4 -10 x perf/W advantage, up to 89 x reported for some studies GPUs don’t do real floating point

Myths of GPU Computing GPUs layer normal programs on top of graphics GPUs architectures are: Very wide (1000 s) SIMD machines… …on which branching is impossible or prohibitive… …with 4 -wide vector registers. GPUs are power-inefficient: GPUs don’t do real floating point

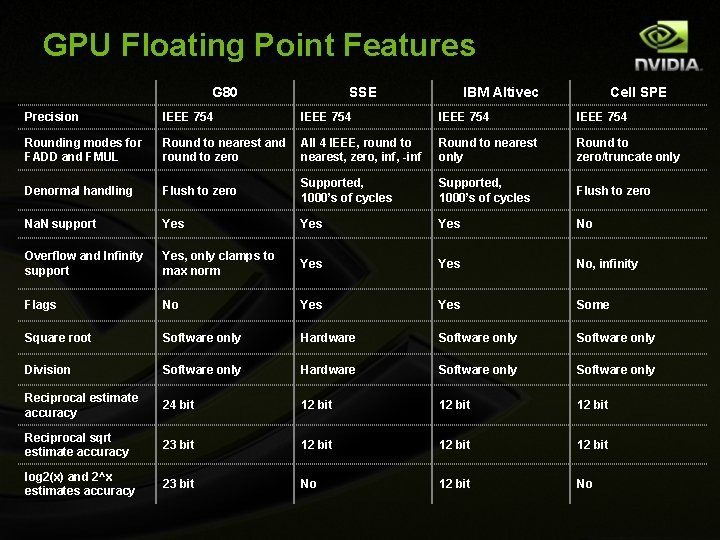

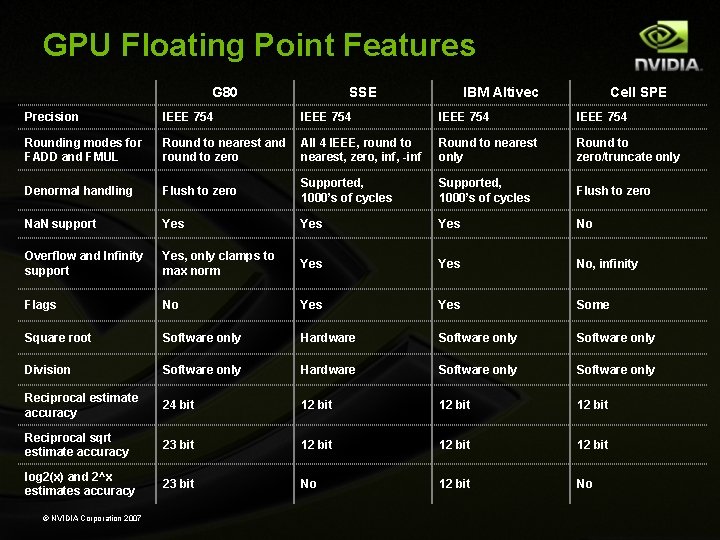

GPU Floating Point Features G 80 SSE IBM Altivec Cell SPE Precision IEEE 754 Rounding modes for FADD and FMUL Round to nearest and round to zero All 4 IEEE, round to nearest, zero, inf, -inf Round to nearest only Round to zero/truncate only Denormal handling Flush to zero Supported, 1000’s of cycles Flush to zero Na. N support Yes Yes No Overflow and Infinity support Yes, only clamps to max norm Yes No, infinity Flags No Yes Some Square root Software only Hardware Software only Division Software only Hardware Software only Reciprocal estimate accuracy 24 bit 12 bit Reciprocal sqrt estimate accuracy 23 bit 12 bit log 2(x) and 2^x estimates accuracy 23 bit No 12 bit No

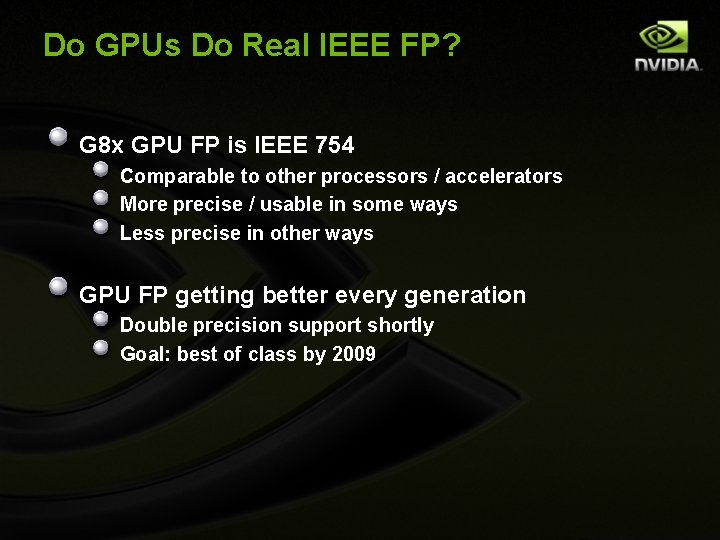

Do GPUs Do Real IEEE FP? G 8 x GPU FP is IEEE 754 Comparable to other processors / accelerators More precise / usable in some ways Less precise in other ways GPU FP getting better every generation Double precision support shortly Goal: best of class by 2009

Questions? David Luebke dluebke@nvidia. com

Applications & Sweet Spots

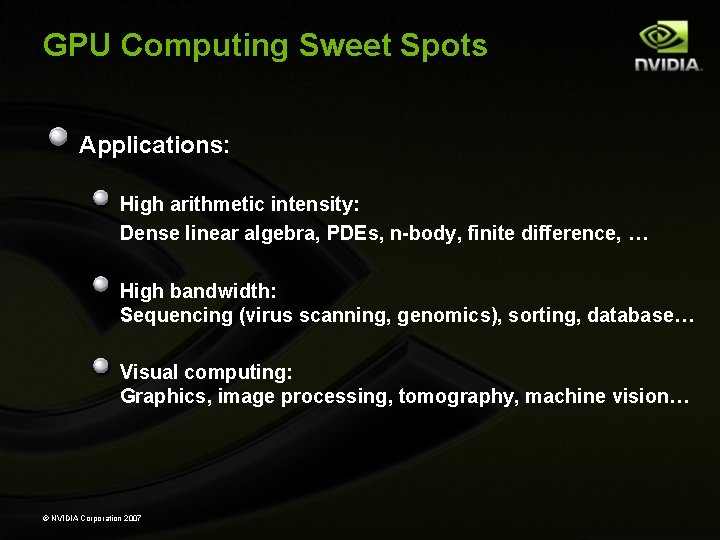

GPU Computing Sweet Spots Applications: High arithmetic intensity: Dense linear algebra, PDEs, n-body, finite difference, … High bandwidth: Sequencing (virus scanning, genomics), sorting, database… Visual computing: Graphics, image processing, tomography, machine vision… © NVIDIA Corporation 2007

GPU Computing Example Markets Computational Geoscience Computational Chemistry Computational Medicine Computational Modeling Computational Science Computational Biology Computational Finance © NVIDIA Corporation 2007 Image Processing

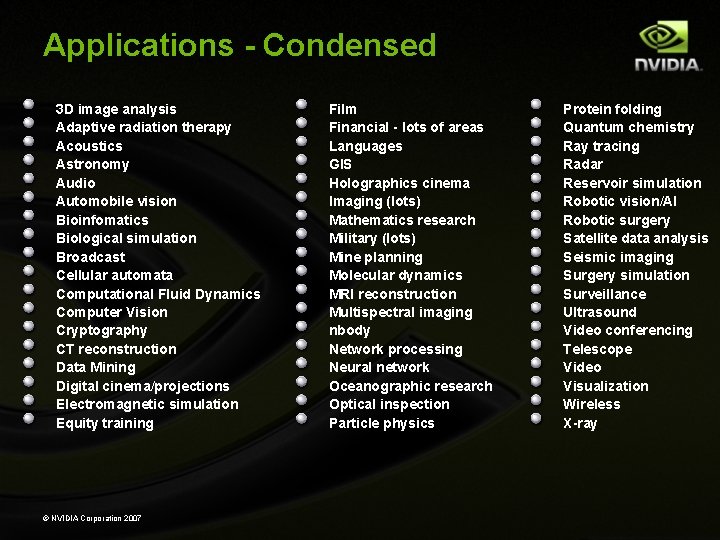

Applications - Condensed 3 D image analysis Adaptive radiation therapy Acoustics Astronomy Audio Automobile vision Bioinfomatics Biological simulation Broadcast Cellular automata Computational Fluid Dynamics Computer Vision Cryptography CT reconstruction Data Mining Digital cinema/projections Electromagnetic simulation Equity training © NVIDIA Corporation 2007 Film Financial - lots of areas Languages GIS Holographics cinema Imaging (lots) Mathematics research Military (lots) Mine planning Molecular dynamics MRI reconstruction Multispectral imaging nbody Network processing Neural network Oceanographic research Optical inspection Particle physics Protein folding Quantum chemistry Ray tracing Radar Reservoir simulation Robotic vision/AI Robotic surgery Satellite data analysis Seismic imaging Surgery simulation Surveillance Ultrasound Video conferencing Telescope Video Visualization Wireless X-ray

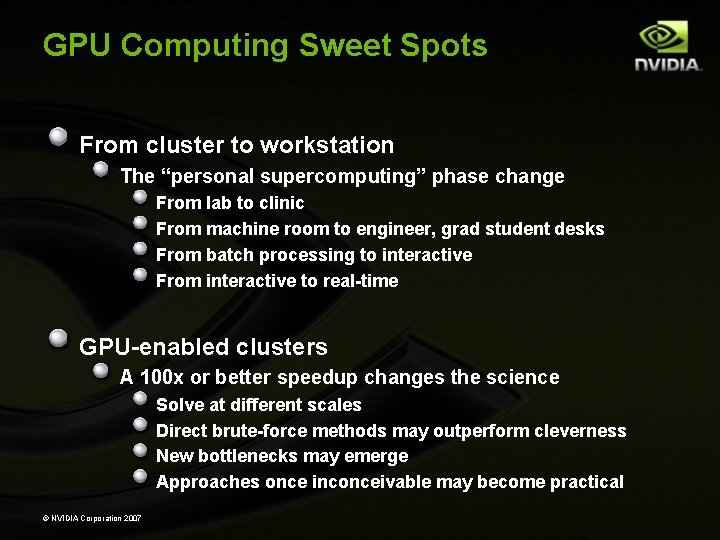

GPU Computing Sweet Spots From cluster to workstation The “personal supercomputing” phase change From lab to clinic From machine room to engineer, grad student desks From batch processing to interactive From interactive to real-time GPU-enabled clusters A 100 x or better speedup changes the science Solve at different scales Direct brute-force methods may outperform cleverness New bottlenecks may emerge Approaches once inconceivable may become practical © NVIDIA Corporation 2007

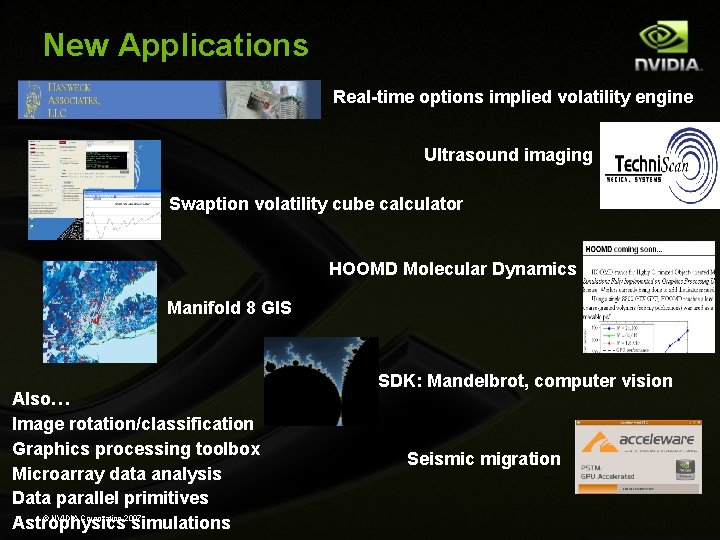

New Applications Real-time options implied volatility engine Ultrasound imaging Swaption volatility cube calculator HOOMD Molecular Dynamics Manifold 8 GIS Also… Image rotation/classification Graphics processing toolbox Microarray data analysis Data parallel primitives © NVIDIA Corporation 2007 Astrophysics simulations SDK: Mandelbrot, computer vision Seismic migration

The Future of GPUs GPU Computing drives new applications Reducing “Time to Discovery” 100 x Speedup changes science and research methods New applications drive the future of GPUs and GPU Computing Drives new GPU capabilities Drives hunger for more performance Some exciting new domains: Vision, acoustic, and embedded applications Large-scale simulation & physics © NVIDIA Corporation 2007

Accuracy & Performance

GPU Floating Point Features G 80 SSE IBM Altivec Cell SPE Precision IEEE 754 Rounding modes for FADD and FMUL Round to nearest and round to zero All 4 IEEE, round to nearest, zero, inf, -inf Round to nearest only Round to zero/truncate only Denormal handling Flush to zero Supported, 1000’s of cycles Flush to zero Na. N support Yes Yes No Overflow and Infinity support Yes, only clamps to max norm Yes No, infinity Flags No Yes Some Square root Software only Hardware Software only Division Software only Hardware Software only Reciprocal estimate accuracy 24 bit 12 bit Reciprocal sqrt estimate accuracy 23 bit 12 bit log 2(x) and 2^x estimates accuracy 23 bit No 12 bit No © NVIDIA Corporation 2007

Do GPUs Do Real IEEE FP? G 8 x GPU FP is IEEE 754 Comparable to other processors / accelerators More precise / usable in some ways Less precise in other ways GPU FP getting better every generation Double precision support shortly Goal: best of class by 2009 © NVIDIA Corporation 2007

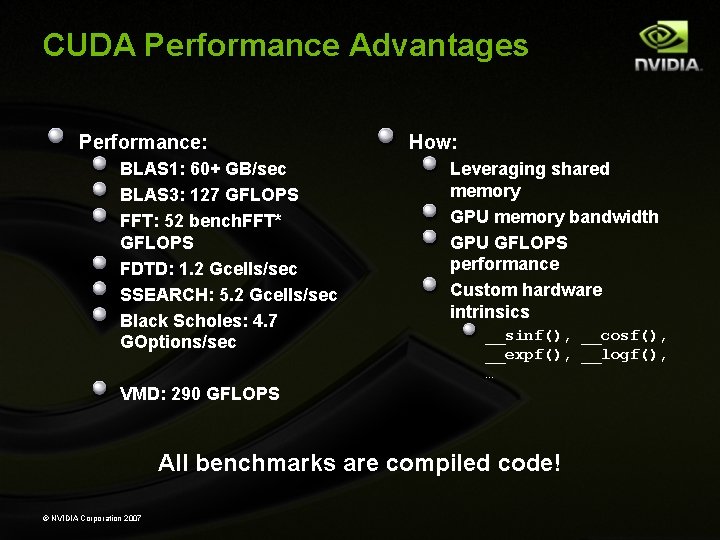

CUDA Performance Advantages Performance: BLAS 1: 60+ GB/sec BLAS 3: 127 GFLOPS FFT: 52 bench. FFT* GFLOPS FDTD: 1. 2 Gcells/sec SSEARCH: 5. 2 Gcells/sec Black Scholes: 4. 7 GOptions/sec How: Leveraging shared memory GPU memory bandwidth GPU GFLOPS performance Custom hardware intrinsics __sinf(), __cosf(), __expf(), __logf(), … VMD: 290 GFLOPS All benchmarks are compiled code! © NVIDIA Corporation 2007

GPGPU vs. GPU Computing

Problem: GPGPU OLD: GPGPU – trick the GPU into general-purpose computing by casting problem as graphics Turn data into images (“texture maps”) Turn algorithms into image synthesis (“rendering passes”) Promising results, but: Tough learning curve, particularly for non-graphics experts Potentially high overhead of graphics API Highly constrained memory layout & access model Need for many passes drives up bandwidth consumption © NVIDIA Corporation 2007

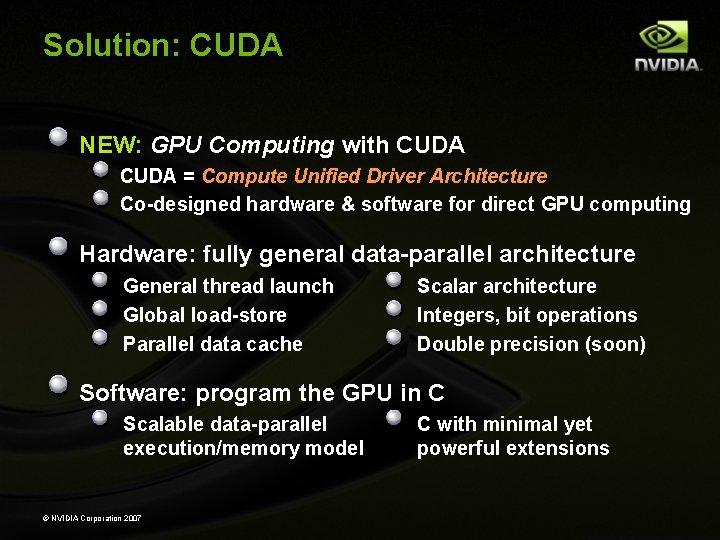

Solution: CUDA NEW: GPU Computing with CUDA = Compute Unified Driver Architecture Co-designed hardware & software for direct GPU computing Hardware: fully general data-parallel architecture General thread launch Global load-store Parallel data cache Scalar architecture Integers, bit operations Double precision (soon) Software: program the GPU in C Scalable data-parallel execution/memory model © NVIDIA Corporation 2007 C with minimal yet powerful extensions

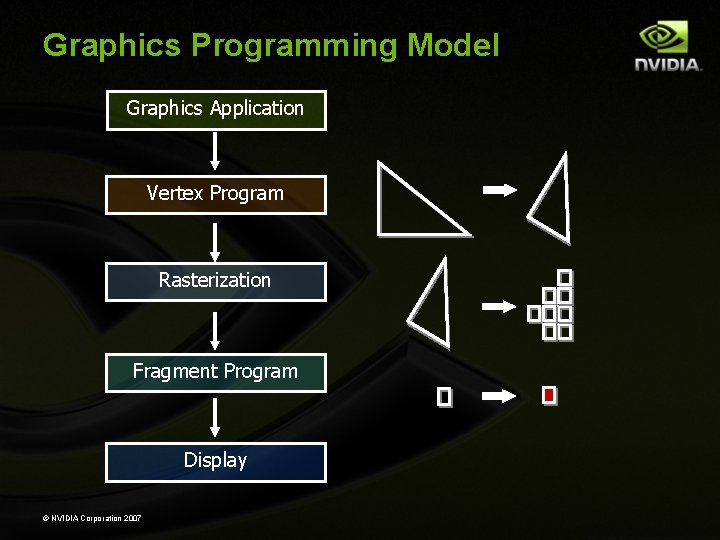

Graphics Programming Model Graphics Application Vertex Program Rasterization Fragment Program Display © NVIDIA Corporation 2007

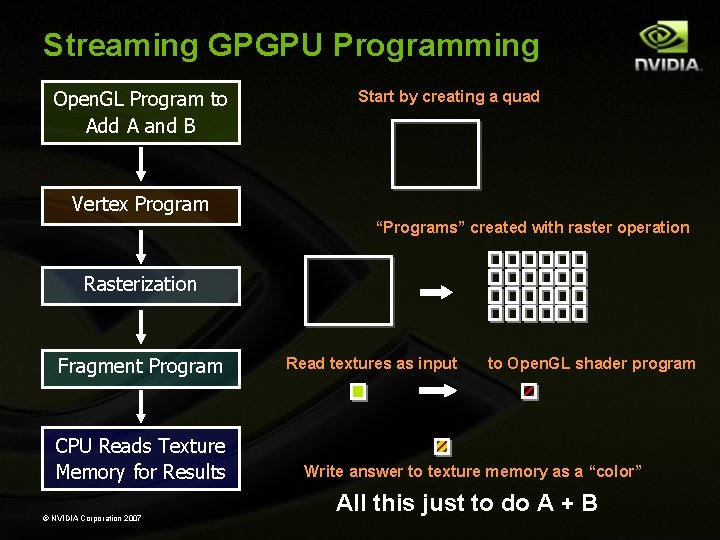

Streaming GPGPU Programming Open. GL Program to Add A and B Start by creating a quad Vertex Program “Programs” created with raster operation Rasterization Fragment Program CPU Reads Texture Memory for Results © NVIDIA Corporation 2007 Read textures as input to Open. GL shader program Write answer to texture memory as a “color” All this just to do A + B

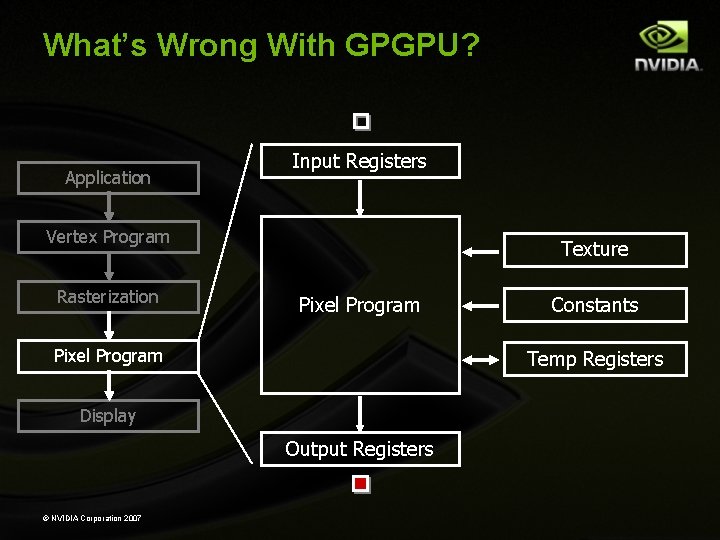

What’s Wrong With GPGPU? Application Input Registers Vertex Program Rasterization Texture Pixel Program Temp Registers Display Output Registers © NVIDIA Corporation 2007 Constants

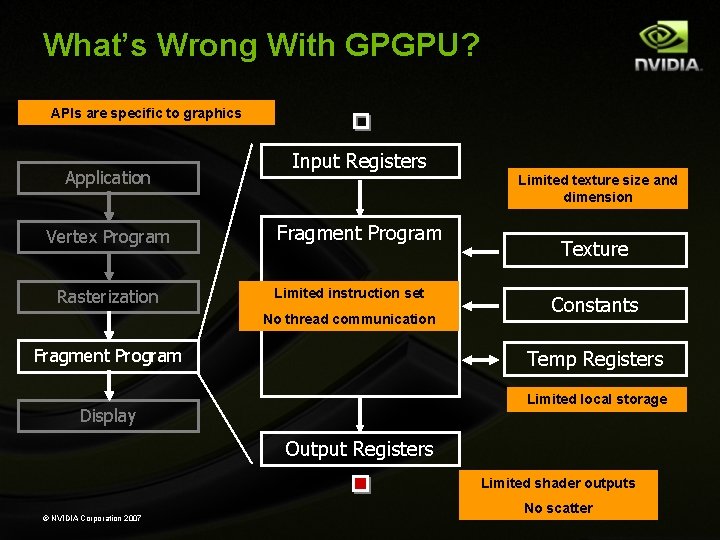

What’s Wrong With GPGPU? APIs are specific to graphics Application Vertex Program Rasterization Input Registers Limited texture size and dimension Fragment Program Limited instruction set No thread communication Fragment Program Texture Constants Temp Registers Limited local storage Display Output Registers Limited shader outputs © NVIDIA Corporation 2007 No scatter

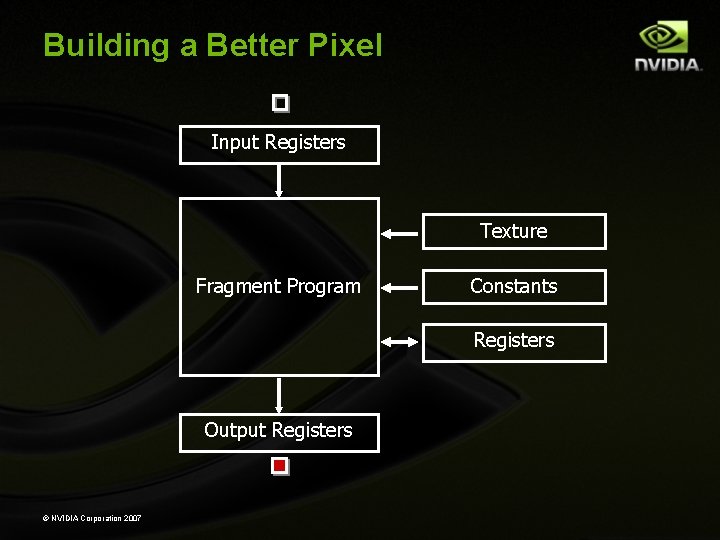

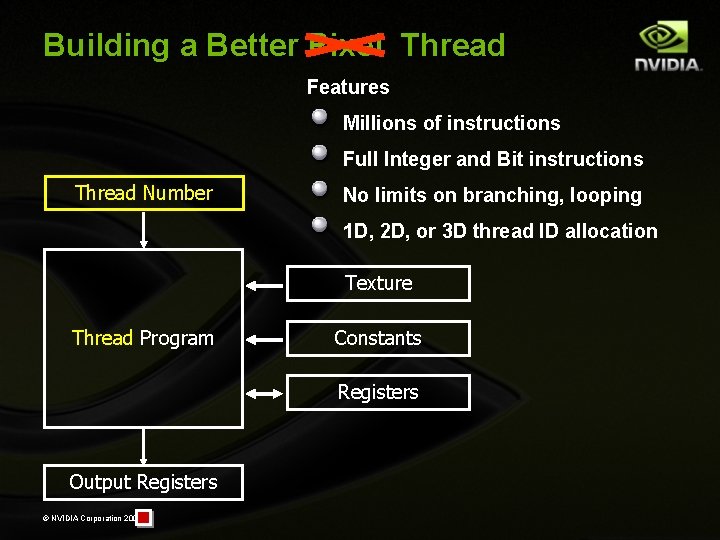

Building a Better Pixel Input Registers Texture Fragment Program Constants Registers Output Registers © NVIDIA Corporation 2007

Building a Better Pixel Thread Features Millions of instructions Full Integer and Bit instructions Thread Number No limits on branching, looping 1 D, 2 D, or 3 D thread ID allocation Texture Thread Program Constants Registers Output Registers © NVIDIA Corporation 2007

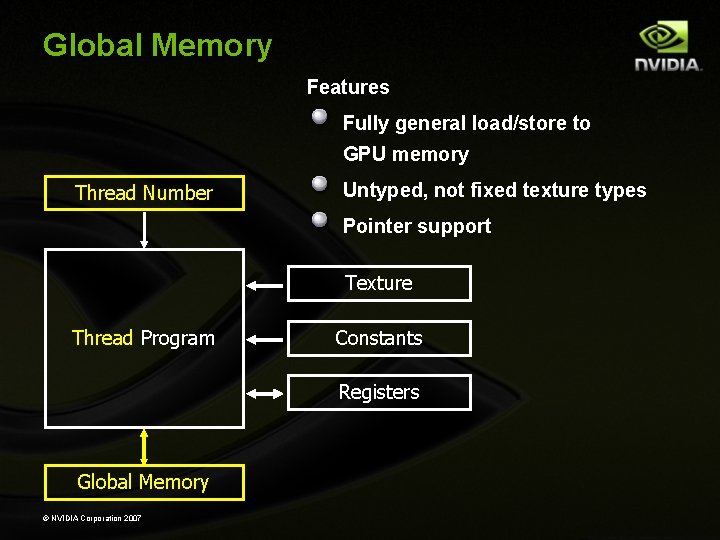

Global Memory Features Fully general load/store to GPU memory Thread Number Untyped, not fixed texture types Pointer support Texture Thread Program Constants Registers Global Memory © NVIDIA Corporation 2007

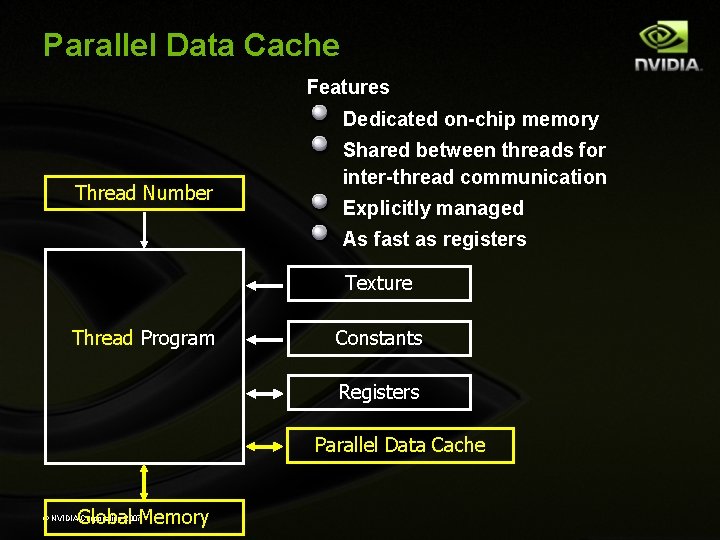

Parallel Data Cache Features Dedicated on-chip memory Thread Number Shared between threads for inter-thread communication Explicitly managed As fast as registers Texture Thread Program Constants Registers Parallel Data Cache Global Memory © NVIDIA Corporation 2007

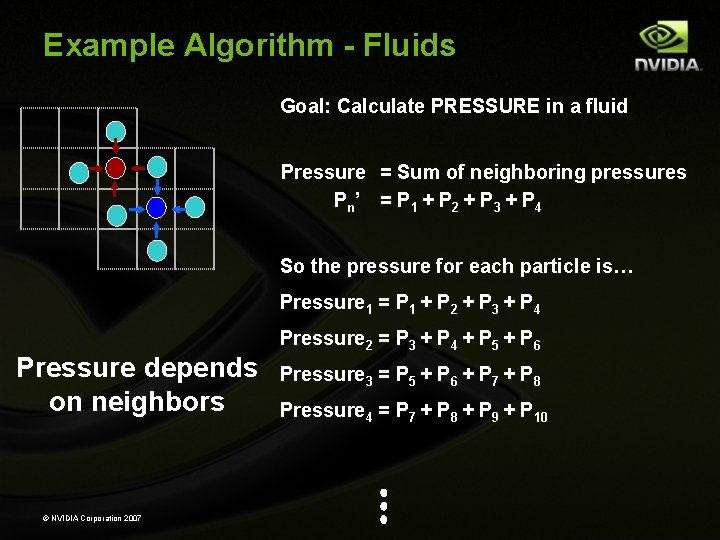

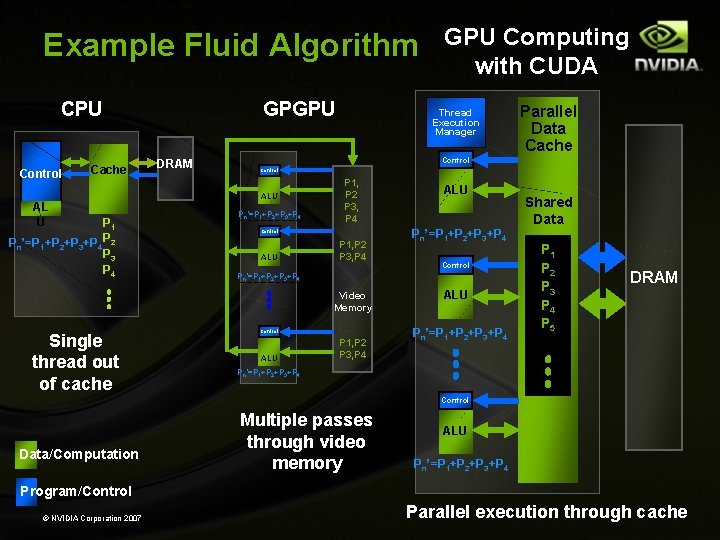

Example Algorithm - Fluids Goal: Calculate PRESSURE in a fluid Pressure = Sum of neighboring pressures Pn ’ = P 1 + P 2 + P 3 + P 4 So the pressure for each particle is… Pressure 1 = P 1 + P 2 + P 3 + P 4 Pressure depends on neighbors © NVIDIA Corporation 2007 Pressure 2 = P 3 + P 4 + P 5 + P 6 Pressure 3 = P 5 + P 6 + P 7 + P 8 Pressure 4 = P 7 + P 8 + P 9 + P 10

Example Fluid Algorithm CPU Control Cache AL U P 1 Pn’=P 1+P 2+P 3+P 4 P 2 P 3 P 4 GPGPU DRAM Thread Execution Manager Parallel Data Cache Control ALU Pn’=P 1+P 2+P 3+P 4 P 1, P 2 P 3, P 4 Control ALU P 1, P 2 P 3, P 4 ALU Pn’=P 1+P 2+P 3+P 4 Control Pn’=P 1+P 2+P 3+P 4 Video Memory Single thread out of cache GPU Computing with CUDA Control ALU P 1, P 2 P 3, P 4 ALU Pn’=P 1+P 2+P 3+P 4 Shared Data P 1 P 2 P 3 P 4 P 5 DRAM Pn’=P 1+P 2+P 3+P 4 Control Data/Computation Multiple passes through video memory ALU Pn’=P 1+P 2+P 3+P 4 Program/Control © NVIDIA Corporation 2007 Parallel execution through cache

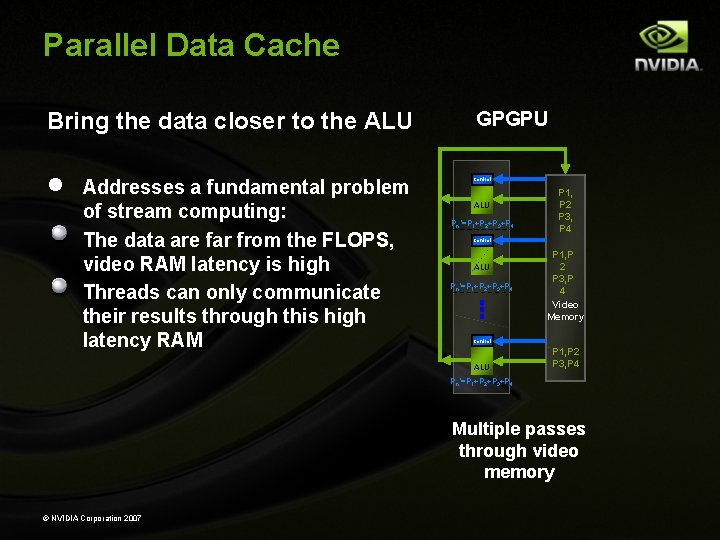

Parallel Data Cache Bring the data closer to the ALU · Addresses a fundamental problem of stream computing: The data are far from the FLOPS, video RAM latency is high Threads can only communicate their results through this high latency RAM GPGPU Control ALU Pn’=P 1+P 2+P 3+P 4 P 1, P 2 P 3, P 4 Video Memory Control ALU P 1, P 2 P 3, P 4 Pn’=P 1+P 2+P 3+P 4 Multiple passes through video memory © NVIDIA Corporation 2007

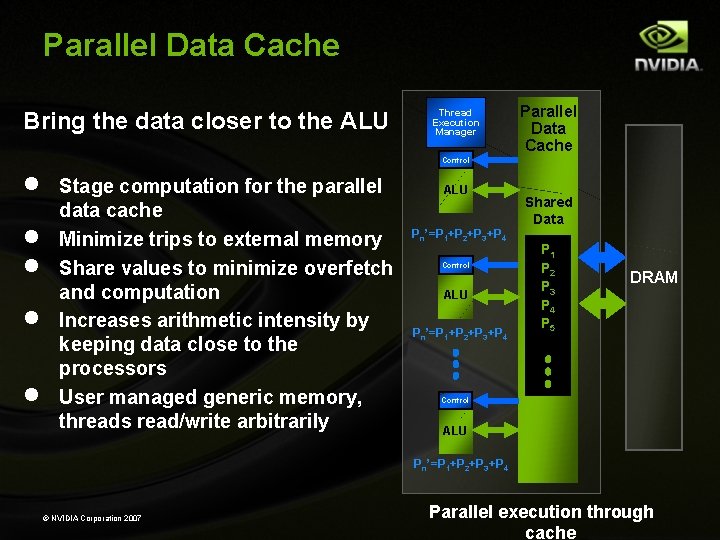

Parallel Data Cache Bring the data closer to the ALU · · · Thread Execution Manager Parallel Data Cache Control Stage computation for the parallel data cache Minimize trips to external memory Share values to minimize overfetch and computation Increases arithmetic intensity by keeping data close to the processors User managed generic memory, threads read/write arbitrarily ALU Pn’=P 1+P 2+P 3+P 4 Control ALU Pn’=P 1+P 2+P 3+P 4 Shared Data P 1 P 2 P 3 P 4 P 5 DRAM Control ALU Pn’=P 1+P 2+P 3+P 4 © NVIDIA Corporation 2007 Parallel execution through cache

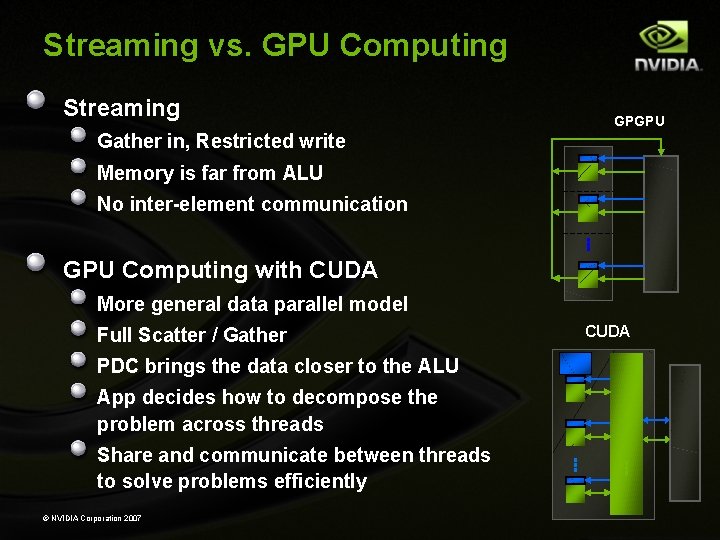

Streaming vs. GPU Computing Streaming GPGPU Gather in, Restricted write Memory is far from ALU No inter-element communication GPU Computing with CUDA ALU More general data parallel model CUDA Full Scatter / Gather PDC brings the data closer to the ALU App decides how to decompose the problem across threads Share and communicate between threads to solve problems efficiently ALU © NVIDIA Corporation 2007

GPU Design

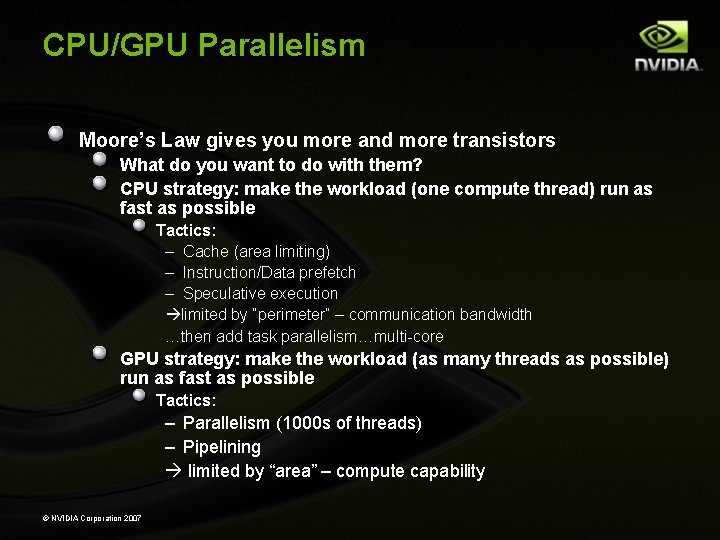

CPU/GPU Parallelism Moore’s Law gives you more and more transistors What do you want to do with them? CPU strategy: make the workload (one compute thread) run as fast as possible Tactics: – Cache (area limiting) – Instruction/Data prefetch – Speculative execution limited by “perimeter” – communication bandwidth …then add task parallelism…multi-core GPU strategy: make the workload (as many threads as possible) run as fast as possible Tactics: – Parallelism (1000 s of threads) – Pipelining limited by “area” – compute capability © NVIDIA Corporation 2007

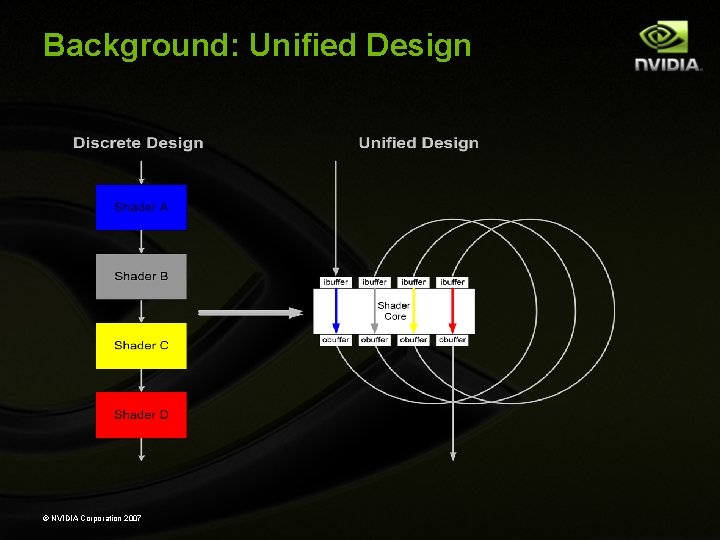

Background: Unified Design © NVIDIA Corporation 2007

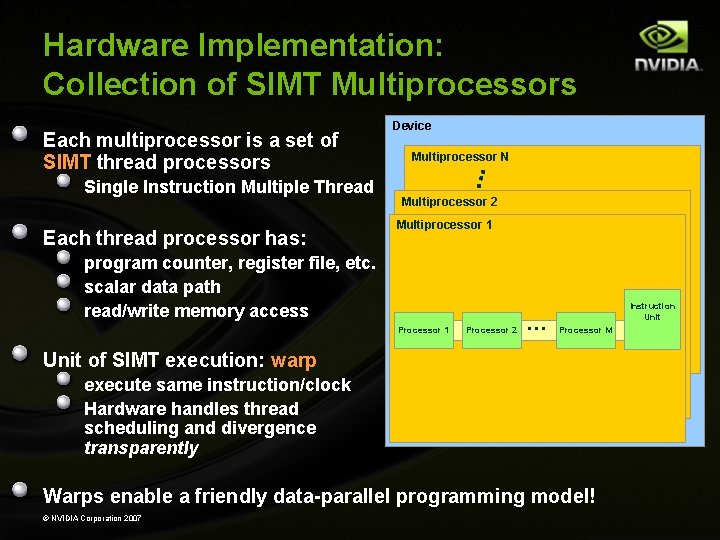

Hardware Implementation: Collection of SIMT Multiprocessors Each multiprocessor is a set of SIMT thread processors Single Instruction Multiple Thread Each thread processor has: Device Multiprocessor N Multiprocessor 2 Multiprocessor 1 program counter, register file, etc. scalar data path read/write memory access Processor 1 Processor 2 … Instruction Unit Processor M Unit of SIMT execution: warp execute same instruction/clock Hardware handles thread scheduling and divergence transparently Warps enable a friendly data-parallel programming model! © NVIDIA Corporation 2007

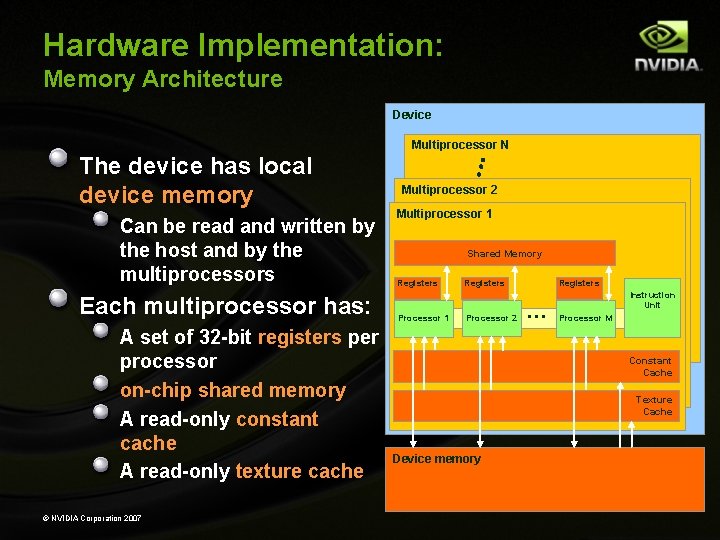

Hardware Implementation: Memory Architecture Device Multiprocessor N The device has local device memory Can be read and written by the host and by the multiprocessors Each multiprocessor has: A set of 32 -bit registers per processor on-chip shared memory A read-only constant cache A read-only texture cache © NVIDIA Corporation 2007 Multiprocessor 2 Multiprocessor 1 Shared Memory Registers Processor 1 Registers Processor 2 Registers … Instruction Unit Processor M Constant Cache Texture Cache Device memory

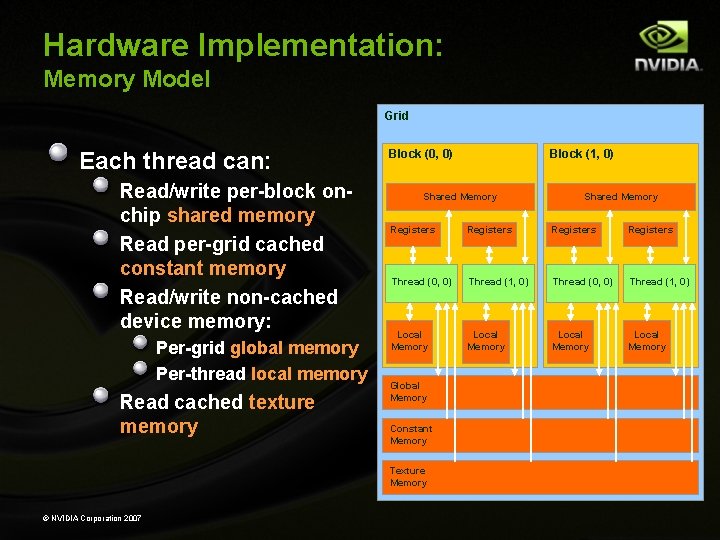

Hardware Implementation: Memory Model Grid Each thread can: Read/write per-block onchip shared memory Read per-grid cached constant memory Read/write non-cached device memory: Per-grid global memory Per-thread local memory Read cached texture memory Block (0, 0) Shared Memory Registers Thread (0, 0) Thread (1, 0) Local Memory Global Memory Constant Memory Texture Memory © NVIDIA Corporation 2007 Block (1, 0)

CUDA Programming

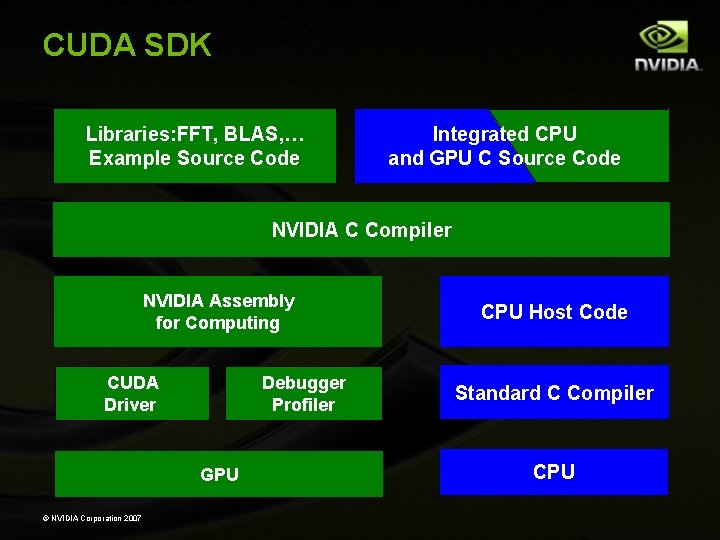

CUDA SDK Libraries: FFT, BLAS, … Example Source Code Integrated CPU and GPU C Source Code NVIDIA C Compiler NVIDIA Assembly for Computing CUDA Driver Debugger Profiler GPU © NVIDIA Corporation 2007 CPU Host Code Standard C Compiler CPU

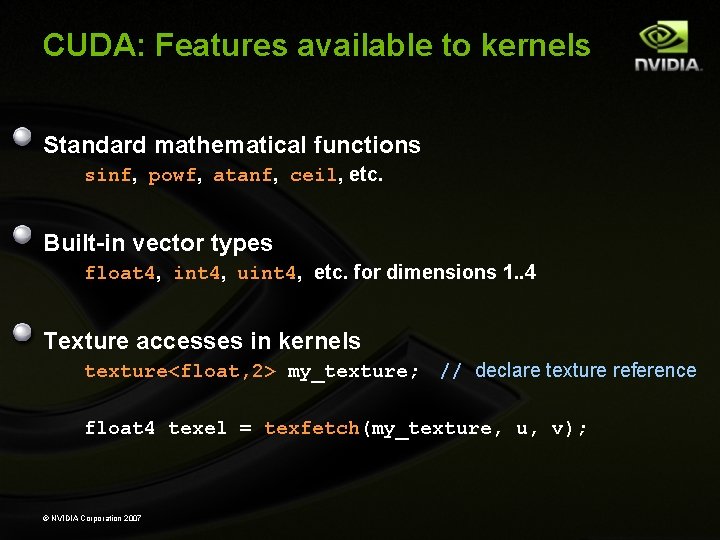

CUDA: Features available to kernels Standard mathematical functions sinf, powf, atanf, ceil, etc. Built-in vector types float 4, int 4, uint 4, etc. for dimensions 1. . 4 Texture accesses in kernels texture<float, 2> my_texture; // declare texture reference float 4 texel = texfetch(my_texture, u, v); © NVIDIA Corporation 2007

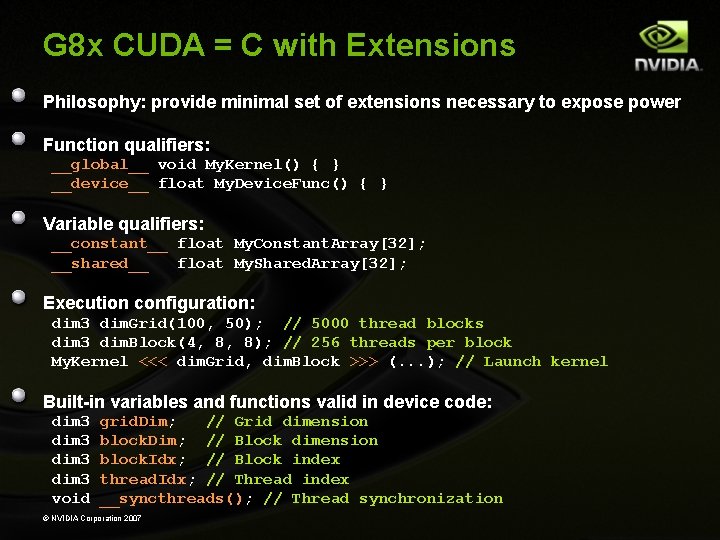

G 8 x CUDA = C with Extensions Philosophy: provide minimal set of extensions necessary to expose power Function qualifiers: __global__ void My. Kernel() { } __device__ float My. Device. Func() { } Variable qualifiers: __constant__ float My. Constant. Array[32]; __shared__ float My. Shared. Array[32]; Execution configuration: dim 3 dim. Grid(100, 50); // 5000 thread blocks dim 3 dim. Block(4, 8, 8); // 256 threads per block My. Kernel <<< dim. Grid, dim. Block >>> (. . . ); // Launch kernel Built-in variables and functions valid in device code: dim 3 void grid. Dim; // Grid dimension block. Dim; // Block dimension block. Idx; // Block index thread. Idx; // Thread index __syncthreads(); // Thread synchronization © NVIDIA Corporation 2007

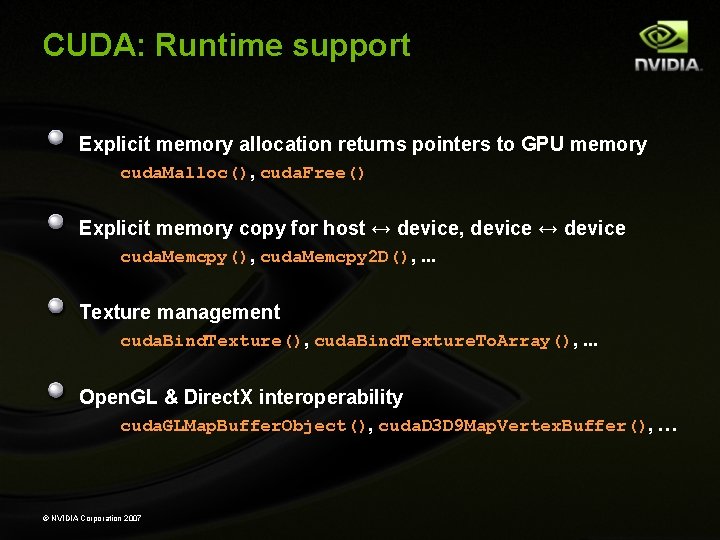

CUDA: Runtime support Explicit memory allocation returns pointers to GPU memory cuda. Malloc(), cuda. Free() Explicit memory copy for host ↔ device, device ↔ device cuda. Memcpy(), cuda. Memcpy 2 D(), . . . Texture management cuda. Bind. Texture(), cuda. Bind. Texture. To. Array(), . . . Open. GL & Direct. X interoperability cuda. GLMap. Buffer. Object(), cuda. D 3 D 9 Map. Vertex. Buffer(), … © NVIDIA Corporation 2007

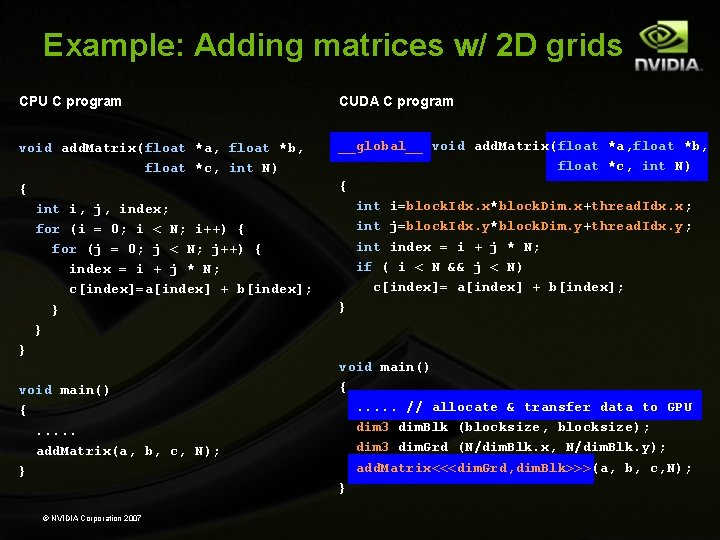

Example: Adding matrices w/ 2 D grids CPU C program CUDA C program void add. Matrix(float *a, float *b, float *c, int N) { int i, j, index; for (i = 0; i < N; i++) { for (j = 0; j < N; j++) { index = i + j * N; c[index]=a[index] + b[index]; } } } __global__ void add. Matrix(float *a, float *b, float *c, int N) { int i=block. Idx. x*block. Dim. x+thread. Idx. x; int j=block. Idx. y*block. Dim. y+thread. Idx. y; int index = i + j * N; if ( i < N && j < N) c[index]= a[index] + b[index]; } void main() {. . . add. Matrix(a, b, c, N); } © NVIDIA Corporation 2007 void main() {. . . // allocate & transfer data to GPU dim 3 dim. Blk (blocksize, blocksize); dim 3 dim. Grd (N/dim. Blk. x, N/dim. Blk. y); add. Matrix<<<dim. Grd, dim. Blk>>>(a, b, c, N); }

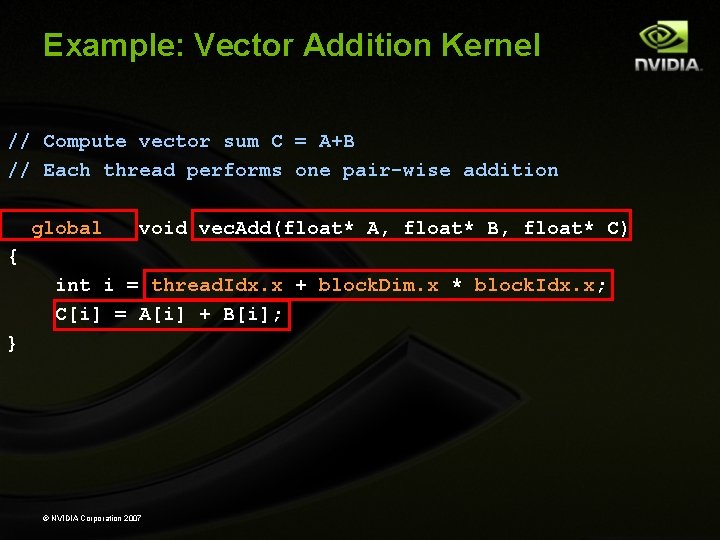

Example: Vector Addition Kernel // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; } © NVIDIA Corporation 2007

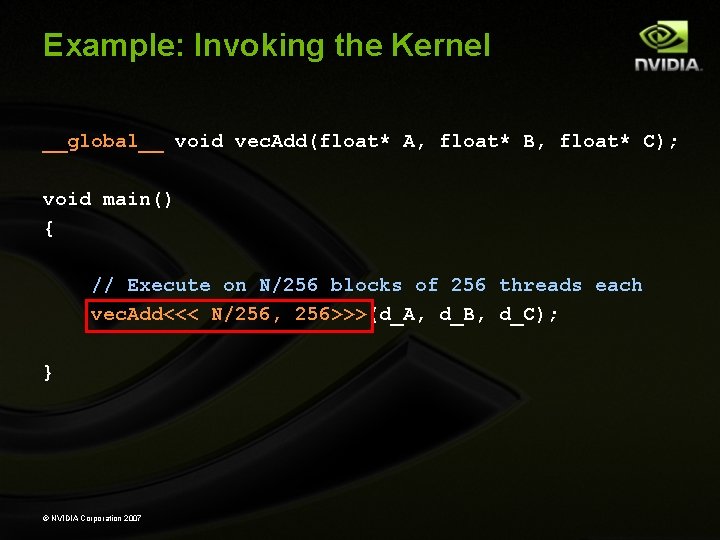

Example: Invoking the Kernel __global__ void vec. Add(float* A, float* B, float* C); void main() { // Execute on N/256 blocks of 256 threads each vec. Add<<< N/256, 256>>>(d_A, d_B, d_C); } © NVIDIA Corporation 2007

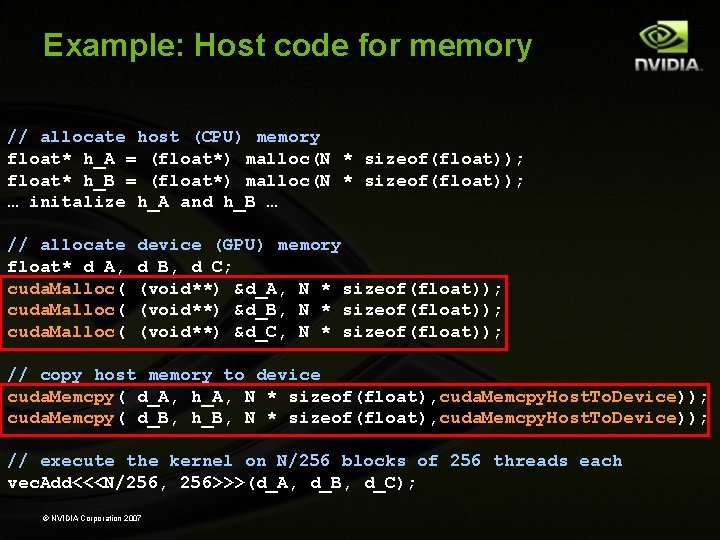

Example: Host code for memory // allocate host (CPU) memory float* h_A = (float*) malloc(N * sizeof(float)); float* h_B = (float*) malloc(N * sizeof(float)); … initalize h_A and h_B … // allocate float* d_A, cuda. Malloc( device (GPU) memory d_B, d_C; (void**) &d_A, N * sizeof(float)); (void**) &d_B, N * sizeof(float)); (void**) &d_C, N * sizeof(float)); // copy host memory to device cuda. Memcpy( d_A, h_A, N * sizeof(float), cuda. Memcpy. Host. To. Device)); cuda. Memcpy( d_B, h_B, N * sizeof(float), cuda. Memcpy. Host. To. Device)); // execute the kernel on N/256 blocks of 256 threads each vec. Add<<<N/256, 256>>>(d_A, d_B, d_C); © NVIDIA Corporation 2007

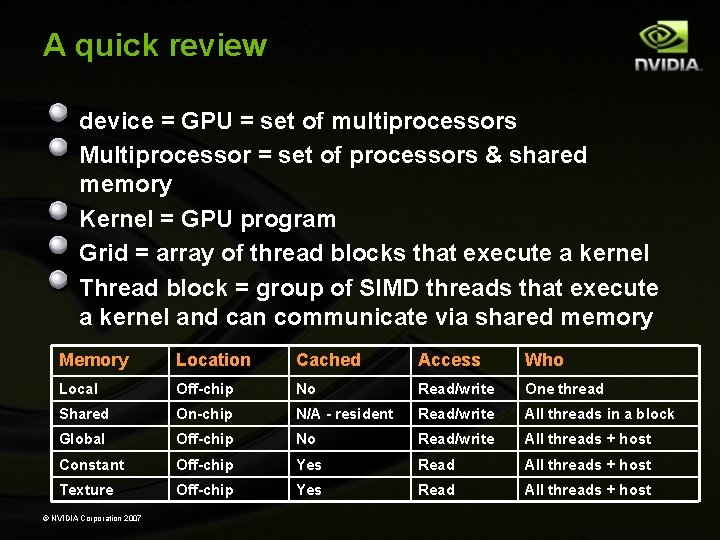

A quick review device = GPU = set of multiprocessors Multiprocessor = set of processors & shared memory Kernel = GPU program Grid = array of thread blocks that execute a kernel Thread block = group of SIMD threads that execute a kernel and can communicate via shared memory Memory Location Cached Access Who Local Off-chip No Read/write One thread Shared On-chip N/A - resident Read/write All threads in a block Global Off-chip No Read/write All threads + host Constant Off-chip Yes Read All threads + host Texture Off-chip Yes Read All threads + host © NVIDIA Corporation 2007

Data-Parallel Programming

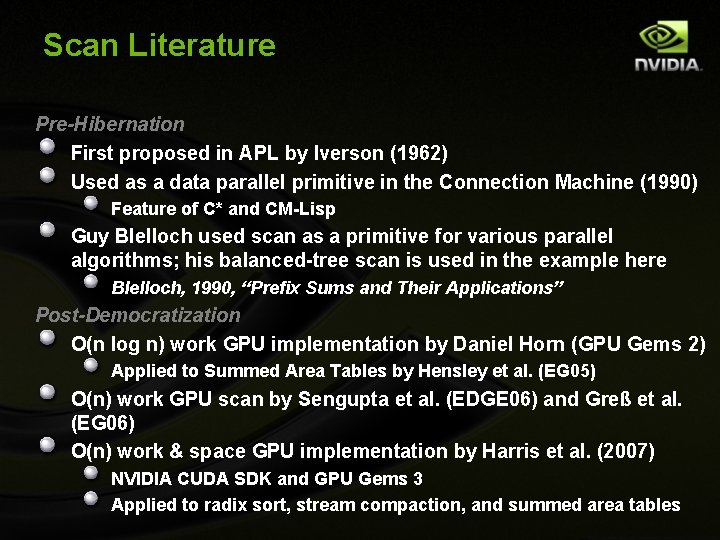

Scan Literature Pre-Hibernation First proposed in APL by Iverson (1962) Used as a data parallel primitive in the Connection Machine (1990) Feature of C* and CM-Lisp Guy Blelloch used scan as a primitive for various parallel algorithms; his balanced-tree scan is used in the example here Blelloch, 1990, “Prefix Sums and Their Applications” Post-Democratization O(n log n) work GPU implementation by Daniel Horn (GPU Gems 2) Applied to Summed Area Tables by Hensley et al. (EG 05) O(n) work GPU scan by Sengupta et al. (EDGE 06) and Greß et al. (EG 06) O(n) work & space GPU implementation by Harris et al. (2007) NVIDIA CUDA SDK and GPU Gems 3 Applied to radix sort, stream compaction, and summed area tables

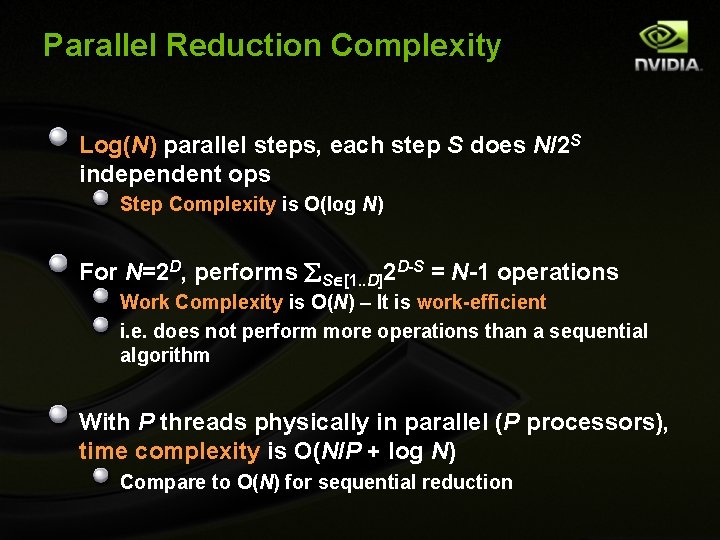

Parallel Reduction Complexity Log(N) parallel steps, each step S does N/2 S independent ops Step Complexity is O(log N) For N=2 D, performs S [1. . D]2 D-S = N-1 operations Work Complexity is O(N) – It is work-efficient i. e. does not perform more operations than a sequential algorithm With P threads physically in parallel (P processors), time complexity is O(N/P + log N) Compare to O(N) for sequential reduction

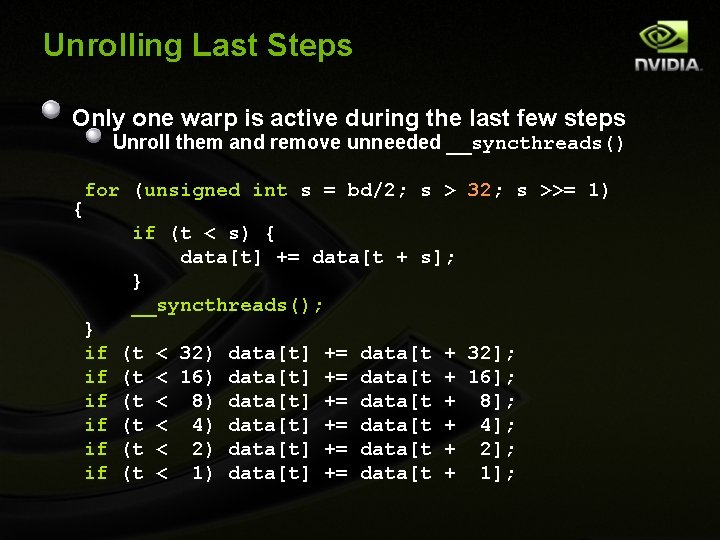

Unrolling Last Steps Only one warp is active during the last few steps Unroll them and remove unneeded __syncthreads() { for (unsigned int s = bd/2; s > 32; s >>= 1) if (t < s) { data[t] += data[t + s]; } __syncthreads(); } if if if (t (t (t < 32) data[t] += data[t + 32]; < 16) data[t] += data[t + 16]; < 8) data[t] += data[t + 8]; < 4) data[t] += data[t + 4]; < 2) data[t] += data[t + 2]; < 1) data[t] += data[t + 1];

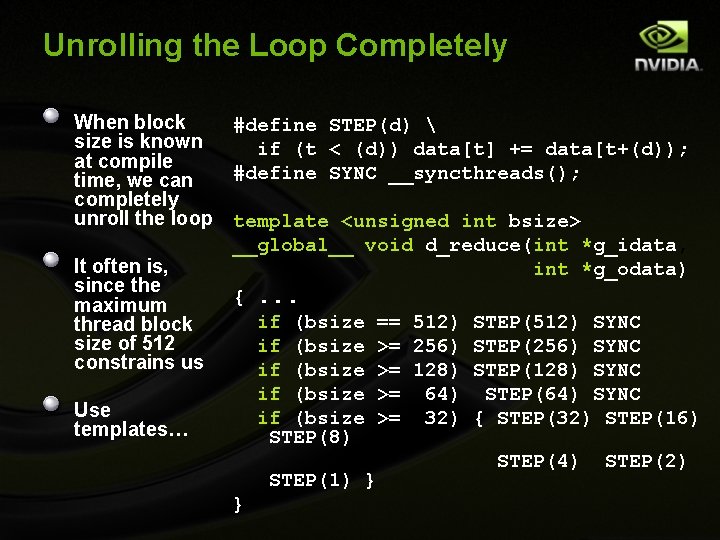

Unrolling the Loop Completely When block #define STEP(d) size is known if (t < (d)) data[t] += data[t+(d)); at compile #define SYNC __syncthreads(); time, we can completely unroll the loop template <unsigned int bsize> __global__ void d_reduce(int *g_idata, It often is, int *g_odata) since the {. . . maximum if (bsize == 512) STEP(512) SYNC thread block size of 512 if (bsize >= 256) STEP(256) SYNC constrains us if (bsize >= 128) STEP(128) SYNC if (bsize >= 64) STEP(64) SYNC Use if (bsize >= 32) { STEP(32) STEP(16) templates… STEP(8) STEP(4) STEP(2) STEP(1) } }

GPU Computing Motivation

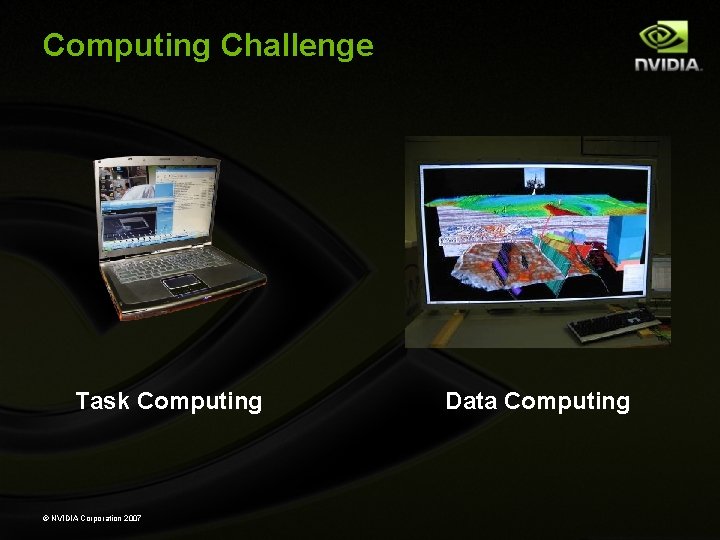

Computing Challenge graphic Task Computing © NVIDIA Corporation 2007 Data Computing

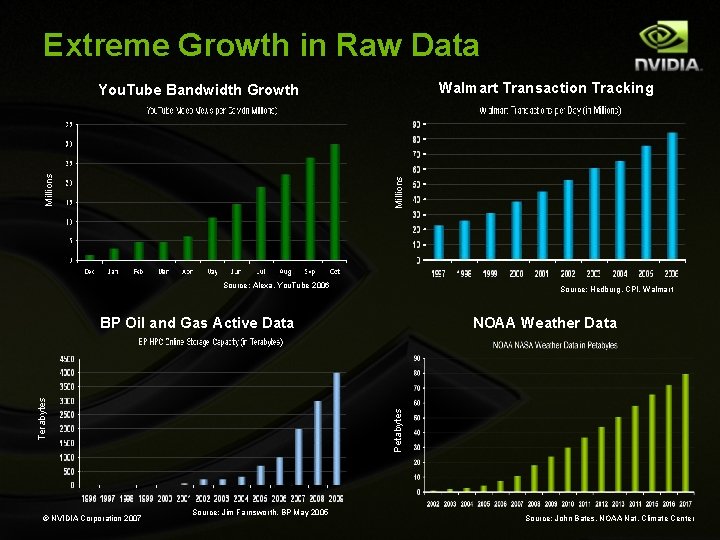

Extreme Growth in Raw Data Walmart Transaction Tracking Millions You. Tube Bandwidth Growth Source: Alexa, You. Tube 2006 Source: Hedburg, CPI, Walmart © NVIDIA Corporation 2007 NOAA Weather Data Petabytes Terabytes BP Oil and Gas Active Data Source: Jim Farnsworth, BP May 2005 Source: John Bates, NOAA Nat. Climate Center

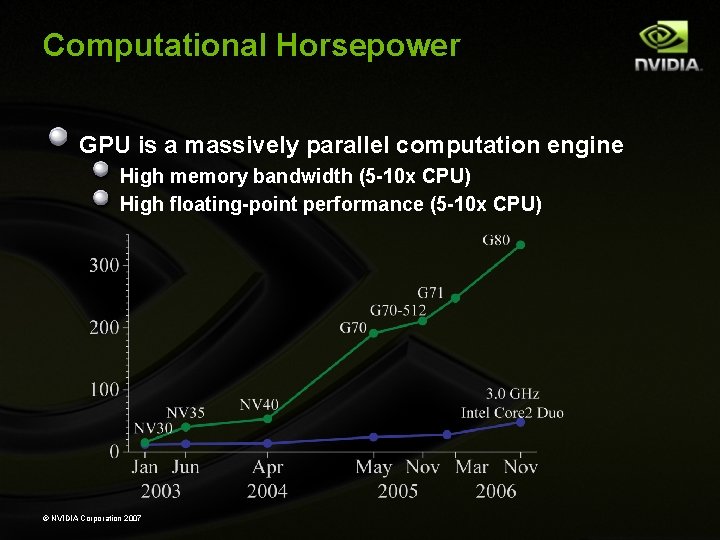

Computational Horsepower GPU is a massively parallel computation engine High memory bandwidth (5 -10 x CPU) High floating-point performance (5 -10 x CPU) © NVIDIA Corporation 2007

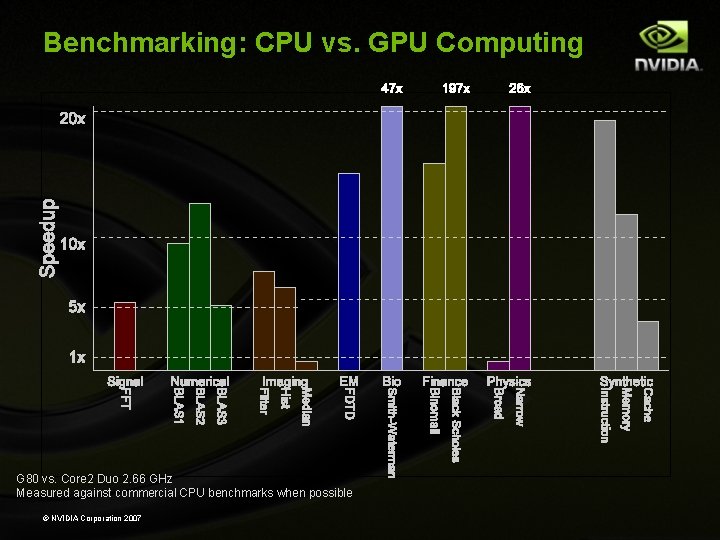

Benchmarking: CPU vs. GPU Computing G 80 vs. Core 2 Duo 2. 66 GHz Measured against commercial CPU benchmarks when possible © NVIDIA Corporation 2007

“Free” Massively Parallel Processors It’s not science fiction, it’s just funded by them Asst Master Chief Harvard

Success Stories

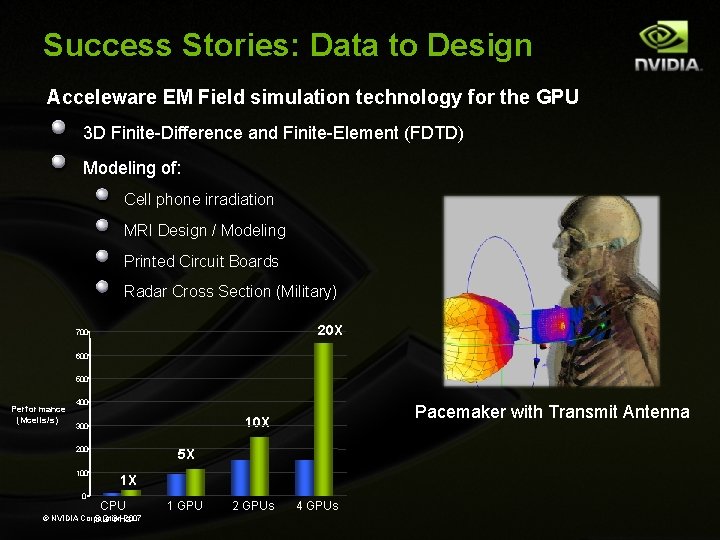

Success Stories: Data to Design Acceleware EM Field simulation technology for the GPU 3 D Finite-Difference and Finite-Element (FDTD) Modeling of: Cell phone irradiation MRI Design / Modeling Printed Circuit Boards Radar Cross Section (Military) 20 X 700 600 500 Performance (Mcells/s) 400 200 100 0 Pacemaker with Transmit Antenna 10 X 300 5 X 1 X CPU © NVIDIA Corporation 2007 3. 2 GHz 1 GPU 2 GPUs 4 GPUs

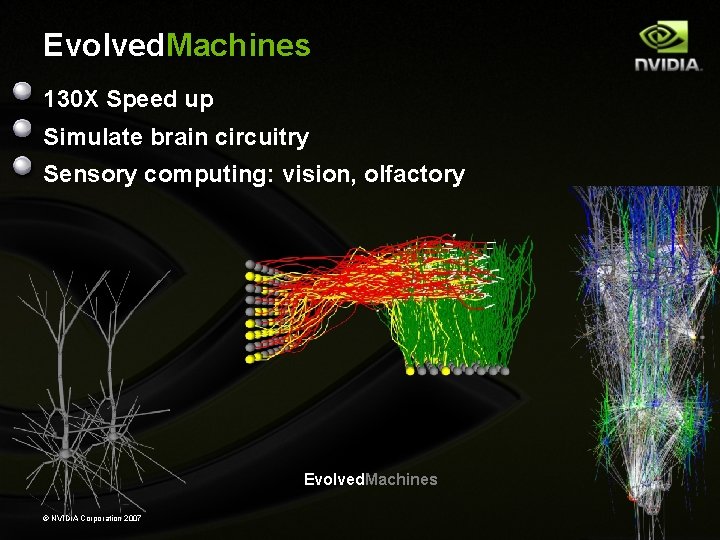

Evolved. Machines 130 X Speed up Simulate brain circuitry Sensory computing: vision, olfactory Evolved. Machines © NVIDIA Corporation 2007

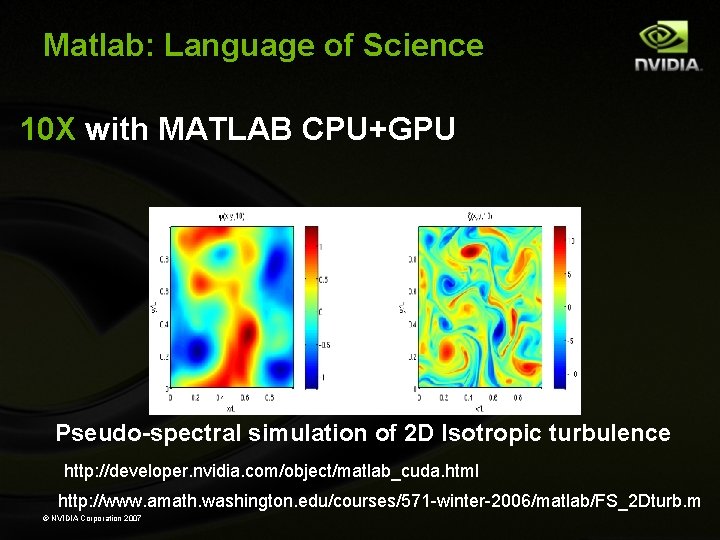

Matlab: Language of Science 10 X with MATLAB CPU+GPU Pseudo-spectral simulation of 2 D Isotropic turbulence http: //developer. nvidia. com/object/matlab_cuda. html http: //www. amath. washington. edu/courses/571 -winter-2006/matlab/FS_2 Dturb. m © NVIDIA Corporation 2007

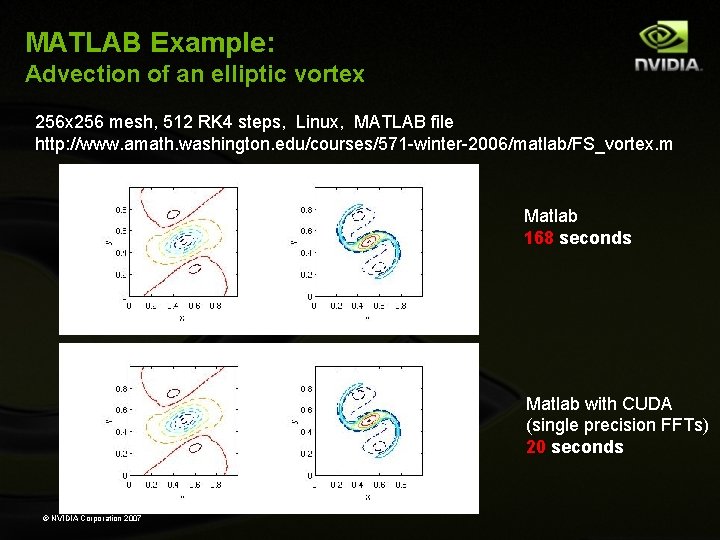

MATLAB Example: Advection of an elliptic vortex 256 mesh, 512 RK 4 steps, Linux, MATLAB file http: //www. amath. washington. edu/courses/571 -winter-2006/matlab/FS_vortex. m Matlab 168 seconds Matlab with CUDA (single precision FFTs) 20 seconds © NVIDIA Corporation 2007

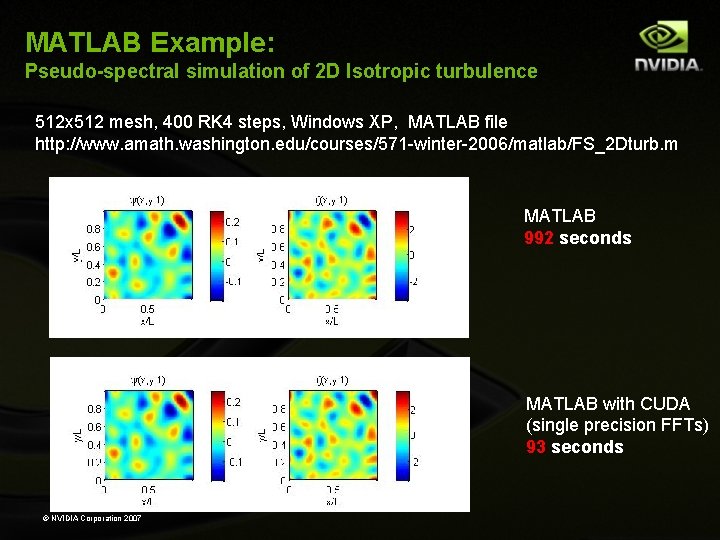

MATLAB Example: Pseudo-spectral simulation of 2 D Isotropic turbulence 512 x 512 mesh, 400 RK 4 steps, Windows XP, MATLAB file http: //www. amath. washington. edu/courses/571 -winter-2006/matlab/FS_2 Dturb. m MATLAB 992 seconds MATLAB with CUDA (single precision FFTs) 93 seconds © NVIDIA Corporation 2007

NAMD/VMD Molecular Dynamics 240 X speedup Computational biology © NVIDIA Corporation 2007 http: //www. ks. uiuc. edu/Research/vmd/projects/ece 498/lecture/

Molecular Dynamics Example Case study: molecular dynamics research at U. Illinois Urbana-Champaign (Scientist-sponsored) course project for CS 498 AL: Programming Massively Parallel Multiprocessors (Kirk/Hwu) Next slides stolen from a nice description of problem, algorithms, and iterative optimization process available at: http: //www. ks. uiuc. edu/Research/vmd/projects/ece 498/lecture/ © NVIDIA Corporation 2007

© NVIDIA Corporation 2007

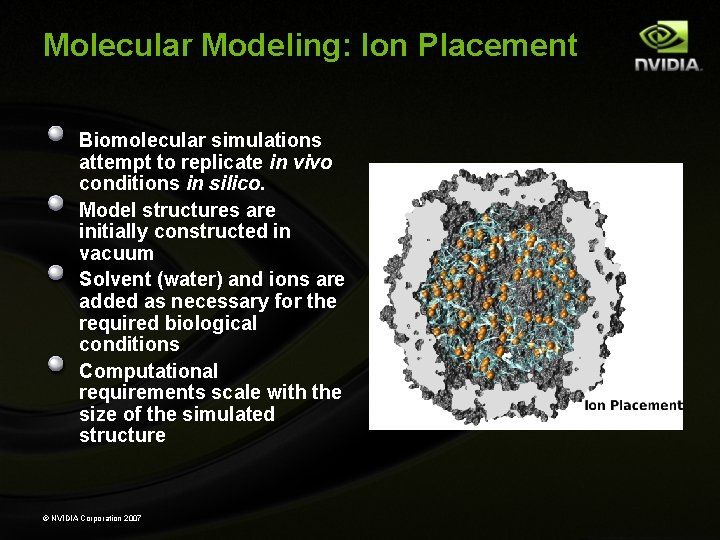

Molecular Modeling: Ion Placement Biomolecular simulations attempt to replicate in vivo conditions in silico. Model structures are initially constructed in vacuum Solvent (water) and ions are added as necessary for the required biological conditions Computational requirements scale with the size of the simulated structure © NVIDIA Corporation 2007

Evolution of Ion Placement Code First implementation was sequential Virus structure with 10^6 atoms would require 10 CPU days Tuned for Intel C/C++ vectorization+SSE, ~20 x speedup Parallelized /w pthreads: high data parallelism = linear speedup Parallelized GPU accelerated implementation: 3 Ge. Force 8800 GTX cards outrun ~300 Itanium 2 CPUs! Virus structure now runs in 25 seconds on 3 GPUs! Further speedups should still be possible… © NVIDIA Corporation 2007

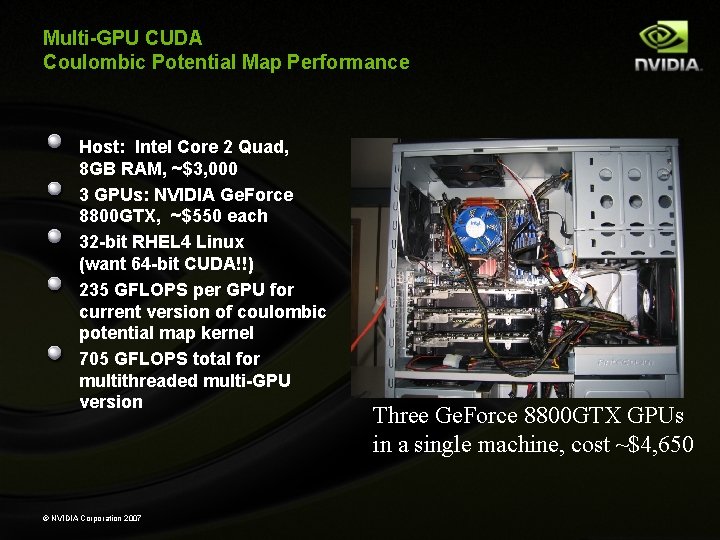

Multi-GPU CUDA Coulombic Potential Map Performance Host: Intel Core 2 Quad, 8 GB RAM, ~$3, 000 3 GPUs: NVIDIA Ge. Force 8800 GTX, ~$550 each 32 -bit RHEL 4 Linux (want 64 -bit CUDA!!) 235 GFLOPS per GPU for current version of coulombic potential map kernel 705 GFLOPS total for multithreaded multi-GPU version © NVIDIA Corporation 2007 Three Ge. Force 8800 GTX GPUs in a single machine, cost ~$4, 650

Professor Partnership

NVIDIA Professor Partnership Support faculty research & teaching efforts Small equipment gifts (1 -2 GPUs) Significant discounts on GPU purchases Especially Quadro, Tesla equipment Useful for cost matching Research contracts Small cash grants (typically ~$25 K gifts) Medium-scale equipment donations (10 -30 GPUs) Easy Competitive Informal proposals, reviewed quarterly Focus areas: GPU computing, especially with an educational mission or component http: //www. nvidia. com/page/professor_partnership. html © NVIDIA Corporation 2007

- Slides: 94