
Google’s Map Reduce

Commodity Clusters

- Web data sets can be very large
  - Tens to hundreds of terabytes
- Cannot mine on a single server
- Standard architecture emerging:
  - Cluster of commodity Linux nodes
  - Gigabit Ethernet interconnect
- How to organize computations on this architecture?

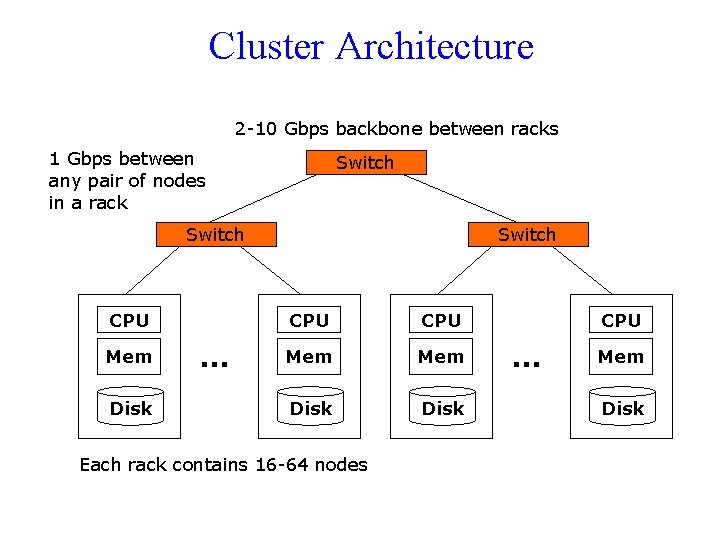

Cluster Architecture

- 2-10 Gbps backbone between racks
- 1 Gbps between any pair of nodes in a rack
- Each rack contains 16-64 nodes
- Each node has its own CPU, memory, and disk; the nodes in a rack are connected through a switch

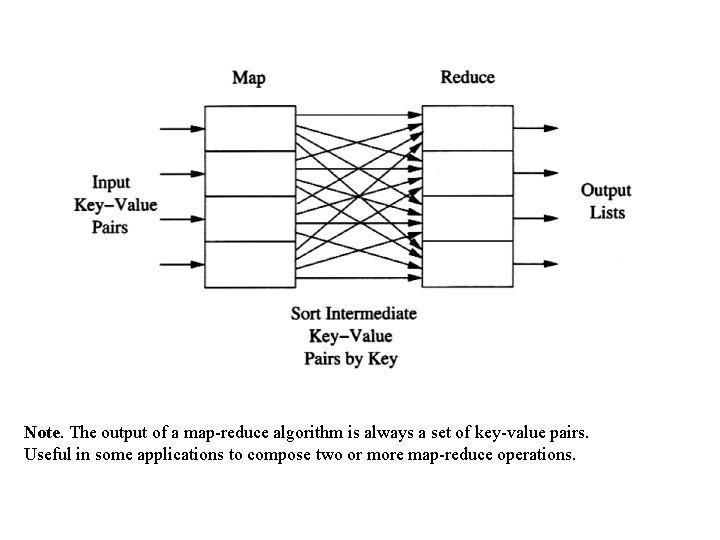

Map Reduce

- Map-reduce is a high-level programming system that allows database processes to be written simply.
- The user writes code for two functions, map and reduce.
- A master controller divides the input data into chunks and assigns different processors to execute the map function on each chunk.
- Other processors, perhaps the same ones, are then assigned to perform the reduce function on pieces of the output from the map function.
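The division of labor described above can be sketched in a few lines of Python. This is a single-process illustration only, not how Google's system is implemented: the names `map_reduce`, `parity_map`, `sum_reduce`, and the `chunk_size` parameter are hypothetical, and the "processors" are simulated by an ordinary loop over chunks.

```python
from collections import defaultdict


def map_reduce(records, map_fn, reduce_fn, chunk_size=2):
    """Toy driver playing the role of the master controller (assumed design)."""
    # Divide the input into chunks; a real system would hand each chunk
    # to a different processor.
    chunks = [records[i:i + chunk_size] for i in range(0, len(records), chunk_size)]

    # Map phase: apply the user's map function to every key-value pair.
    intermediate = []
    for chunk in chunks:
        for key, value in chunk:
            intermediate.extend(map_fn(key, value))

    # Collect all values that share a key, so reduce sees (key, [values]).
    groups = defaultdict(list)
    for key, value in intermediate:
        groups[key].append(value)

    # Reduce phase: one call per distinct key.
    return [reduce_fn(key, values) for key, values in sorted(groups.items())]


def parity_map(record_id, number):
    # Emit the number under the key "even" or "odd".
    label = "even" if number % 2 == 0 else "odd"
    return [(label, number)]


def sum_reduce(label, numbers):
    # Combine all values for one key into a single value.
    return (label, sum(numbers))


records = [(0, 3), (1, 4), (2, 5), (3, 6), (4, 7)]
result = map_reduce(records, parity_map, sum_reduce)
print(result)
```

The user supplies only `parity_map` and `sum_reduce`; chunking, grouping by key, and scheduling stay inside the driver.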

Data Organization

- Data is assumed stored in files.
  - Typically, the files are very large compared with the files found in conventional systems.
    - For example, one file might be all the tuples of a very large relation.
    - Or, the file might be a terabyte of "market baskets."
    - Or, the file might be the "transition matrix of the Web," which is a representation of the graph with all Web pages as nodes and hyperlinks as edges.
- Files are divided into chunks, which might be complete cylinders of a disk, and are typically many megabytes.

The Map Function

- Input is thought of as a set of key-value records.
- Executed by one or more processes, located at any number of processors.
  - Each map process is given a chunk of the entire input data on which to work.
- Designed to take one key-value pair as input and to produce a list of key-value pairs as output.
  - The types of keys and values for the output of the map function need not be the same as the types of input keys and values.
  - The "keys" that are output from the map function are not true keys in the database sense.
    - That is, there can be many pairs with the same key value.
- The result of executing all the map processes is a collection of key-value pairs called the intermediate result.
  - Each pair appears at the processor that generated it.

Map Example

Constructing an Inverted Index

- Input is a collection of documents.
- Final output (not the output of map) is, for each word, a list of the documents that contain that word at least once.

Map Function

- Input is a set of (i, d) pairs
  - i is the document ID
  - d is the corresponding document.
- The map function scans d, and for each word w it finds, it emits the pair (w, i).
  - Notice that in the output, the word is the key and the document ID is the associated value.
- Output of map is a list of word-ID pairs.
  - Not necessary to catch duplicate words in the document; the elimination of duplicates can be done later, at the reduce phase.
  - The intermediate result is the collection of all word-ID pairs created from all the documents in the input database.
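A minimal sketch of this map function in Python follows. The name `map_inverted_index` is hypothetical, and treating a "word" as a lowercased, whitespace-separated token is an assumption made only for illustration; the slides do not say how words are tokenized.

```python
def map_inverted_index(doc_id, document):
    """Emit one (word, doc_id) pair per word occurrence in the document."""
    # Assumption: words are whitespace-separated and compared case-insensitively.
    return [(word, doc_id) for word in document.lower().split()]


docs = [(1, "the cat saw the dog"), (2, "The dog sat")]

# The intermediate result is the collection of pairs from all documents.
intermediate = [pair for i, d in docs for pair in map_inverted_index(i, d)]
print(intermediate)
```

Note that `("the", 1)` appears twice in `intermediate`: the map function makes no attempt to remove duplicates, which is left to the reduce phase.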

Note. The output of a map-reduce algorithm is always a set of key-value pairs. Useful in some applications to compose two or more map-reduce operations.
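Because each map-reduce operation produces key-value pairs, its output can serve directly as the input of another. The sketch below chains two passes, assuming a toy in-memory driver; `map_reduce` and the four user functions are hypothetical names chosen for this illustration. The first pass counts words, and the second groups words by their count.

```python
from collections import defaultdict


def map_reduce(records, map_fn, reduce_fn):
    """Toy single-process driver: map every pair, group by key, reduce."""
    groups = defaultdict(list)
    for key, value in records:
        for out_key, out_value in map_fn(key, value):
            groups[out_key].append(out_value)
    return [reduce_fn(k, vs) for k, vs in groups.items()]


# Pass 1: (doc_id, text) -> (word, count)
def count_map(doc_id, text):
    return [(word, 1) for word in text.split()]


def count_reduce(word, ones):
    return (word, sum(ones))


# Pass 2: (word, count) -> (count, sorted list of words with that count)
def invert_map(word, count):
    return [(count, word)]


def invert_reduce(count, words):
    return (count, sorted(words))


docs = [(1, "a b a"), (2, "b c d")]
word_counts = map_reduce(docs, count_map, count_reduce)             # output of pass 1
words_by_count = dict(map_reduce(word_counts, invert_map, invert_reduce))  # pass 2
print(words_by_count)
```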

The Reduce Function

- The second user-defined function, reduce, is also executed by one or more processes, located at any number of processors.
- Input to reduce is a single key value from the intermediate result, together with the list of all values that appear with this key in the intermediate result.
- The reduce function itself combines the list of values associated with a given key k.
- The result is k paired with a value of some type.

Reduce Example

Constructing an Inverted Index

- Input is a collection of documents.
- Final output (not the output of map) is, for each word, a list of the documents that contain that word at least once.

Reduce Function

- The intermediate result, grouped by key, consists of pairs of the form (w, [i_1, i_2, …, i_n]),
  - where the i's are a list of document IDs, one for each occurrence of word w.
- The reduce function takes a list of IDs, eliminates duplicates, and sorts the list of unique IDs.
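The reduce step for the inverted index might look like the following Python sketch. The grouping loop stands in for work that the map-reduce system itself would do before calling reduce; `reduce_inverted_index` and the sample `intermediate` list are hypothetical.

```python
from collections import defaultdict


def reduce_inverted_index(word, doc_ids):
    """Eliminate duplicate document IDs and sort the remaining ones."""
    return (word, sorted(set(doc_ids)))


# Word-ID pairs as they might come out of the map phase, duplicates included.
intermediate = [("the", 2), ("cat", 1), ("the", 1), ("the", 1), ("dog", 2)]

# Group values by key: this yields the (w, [i_1, ..., i_n]) pairs.
grouped = defaultdict(list)
for word, doc_id in intermediate:
    grouped[word].append(doc_id)

index = dict(reduce_inverted_index(w, ids) for w, ids in grouped.items())
print(index)
```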

Parallelism

- This organization of the computation makes excellent use of whatever parallelism is available.
- The map function works on a single document, so we could have as many processes and processors as there are documents in the database.
- The reduce function works on a single word, so we could have as many processes and processors as there are words in the database.
- Of course, it is unlikely that we would use so many processors in practice.
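The independence of map calls (one per document) and reduce calls (one per word) can be illustrated with Python's standard `concurrent.futures` module. This is only a structural sketch: a thread pool on one machine stands in for a cluster, `max_workers=3` is an arbitrary choice, and `map_doc` and `reduce_word` are hypothetical names.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def map_doc(record):
    # One independent task per document.
    doc_id, text = record
    return [(word, doc_id) for word in text.split()]


def reduce_word(item):
    # One independent task per word.
    word, doc_ids = item
    return word, sorted(set(doc_ids))


docs = [(1, "a b"), (2, "b c"), (3, "c a")]

with ThreadPoolExecutor(max_workers=3) as pool:
    # Map tasks share no state, so they can run in any order or concurrently.
    mapped = list(pool.map(map_doc, docs))

    # Grouping by key is the only step that needs all map output.
    groups = defaultdict(list)
    for pairs in mapped:
        for word, doc_id in pairs:
            groups[word].append(doc_id)

    # Reduce tasks are likewise independent of one another.
    reduced = dict(pool.map(reduce_word, groups.items()))

print(reduced)
```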

Another Example: Word Count

Construct a word count.

- For each word w that appears at least once in our database of documents, output pair (w, c), where c is the number of times w appears among all the documents.

The map function

- Input is a document.
- Goes through the document, and each time it encounters another word w, it emits the pair (w, 1).
- Intermediate result is a list of pairs (w_1, 1), (w_2, 1), ….

The reduce function

- Input is a pair (w, [1, 1, …, 1]), with a 1 for each occurrence of word w.
- Sums the 1's, producing the count.
- Output is word-count pairs (w, c).
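A minimal Python sketch of the word count follows. The function names and sample documents are hypothetical, words are assumed to be whitespace-separated, and the grouping loop again stands in for what the map-reduce system would do between the two phases.

```python
from collections import defaultdict


def map_word_count(document):
    """Emit (w, 1) for every word occurrence."""
    return [(word, 1) for word in document.split()]


def reduce_word_count(word, ones):
    """Sum the 1's to produce the count for one word."""
    return (word, sum(ones))


documents = ["to be or not to be", "to do"]

# Group the intermediate (w, 1) pairs by word.
grouped = defaultdict(list)
for doc in documents:
    for word, one in map_word_count(doc):
        grouped[word].append(one)

counts = dict(reduce_word_count(w, ones) for w, ones in grouped.items())
print(counts)
```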

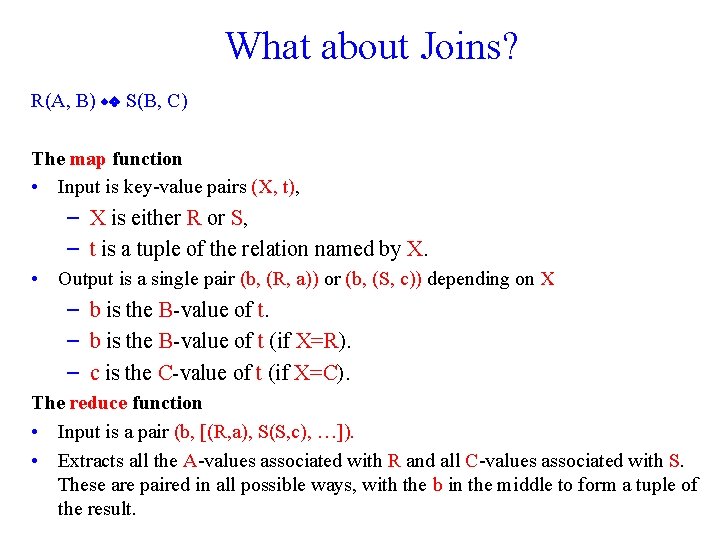

What about Joins?

Join R(A, B) with S(B, C).

The map function

- Input is key-value pairs (X, t),
  - X is either R or S,
  - t is a tuple of the relation named by X.
- Output is a single pair (b, (R, a)) or (b, (S, c)) depending on X
  - b is the B-value of t,
  - a is the A-value of t (if X = R),
  - c is the C-value of t (if X = S).

The reduce function

- Input is a pair (b, [(R, a), (S, c), …]).
- Extracts all the A-values associated with R and all C-values associated with S. These are paired in all possible ways, with the b in the middle, to form tuples of the result.
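The join can be sketched in Python as below, assuming tuples of R are (a, b) pairs and tuples of S are (b, c) pairs. The names `map_join` and `reduce_join` and the sample relations are hypothetical, and the grouping loop stands in for the system's own grouping by key.

```python
from collections import defaultdict


def map_join(relation_name, t):
    """Key each tuple by its B-value and tag it with its relation name."""
    if relation_name == "R":
        a, b = t
        return [(b, ("R", a))]
    b, c = t
    return [(b, ("S", c))]


def reduce_join(b, tagged_values):
    """Pair every A-value from R with every C-value from S for this b."""
    a_values = [v for name, v in tagged_values if name == "R"]
    c_values = [v for name, v in tagged_values if name == "S"]
    return [(a, b, c) for a in a_values for c in c_values]


R = [(1, "x"), (2, "x"), (3, "y")]
S = [("x", 10), ("y", 20), ("z", 30)]
records = [("R", t) for t in R] + [("S", t) for t in S]

# Group the tagged values by their B-value.
grouped = defaultdict(list)
for name, t in records:
    for key, value in map_join(name, t):
        grouped[key].append(value)

joined = sorted(row for b, tagged in grouped.items() for row in reduce_join(b, tagged))
print(joined)
```

The S tuple with B-value "z" has no partner in R, so its reduce call returns an empty list and it contributes nothing to the join.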

Reading

- Jeffrey Dean and Sanjay Ghemawat, MapReduce: Simplified Data Processing on Large Clusters
  http://labs.google.com/papers/mapreduce.html
- Hadoop (Apache): open source implementation of MapReduce
  http://hadoop.apache.org/core
