Goal-Driven Autonomy in Strategy Simulations

- Slides: 22

Goal-Driven Autonomy in Strategy Simulations Matthew Klenk AI Center, Naval Research Laboratory

Intelligent Behavior • Goal-directed action – (Newell & Simon 1972) • AI planning techniques – Selecting actions to reach a goal state • Reinforcement learning – Policy • Diagram labels: S0, A0 … An, Sg

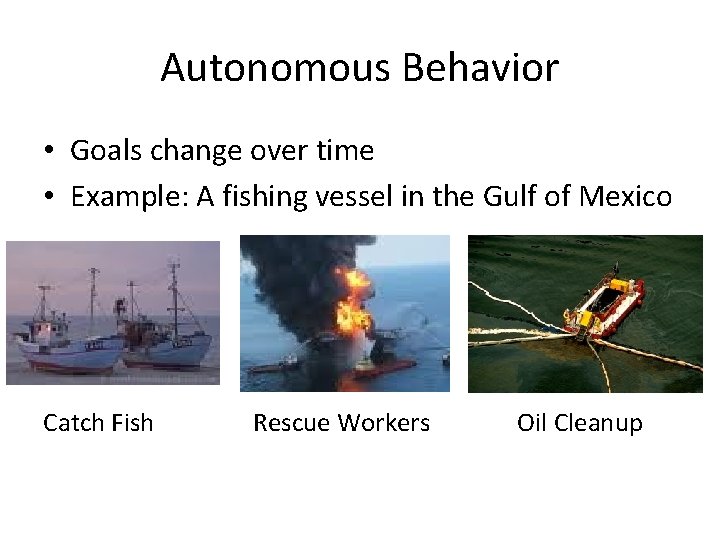

Autonomous Behavior • Goals change over time • Example: A fishing vessel in the Gulf of Mexico – Goals: Catch Fish, Rescue Workers, Oil Cleanup

Outline • Planning in Strategy Simulations • Goal-Driven Autonomy – Discrepancy detection, explanation generation, goal formulation, and goal management • Autonomous Response to Unexpected Events • Evaluation • Related and Future Work

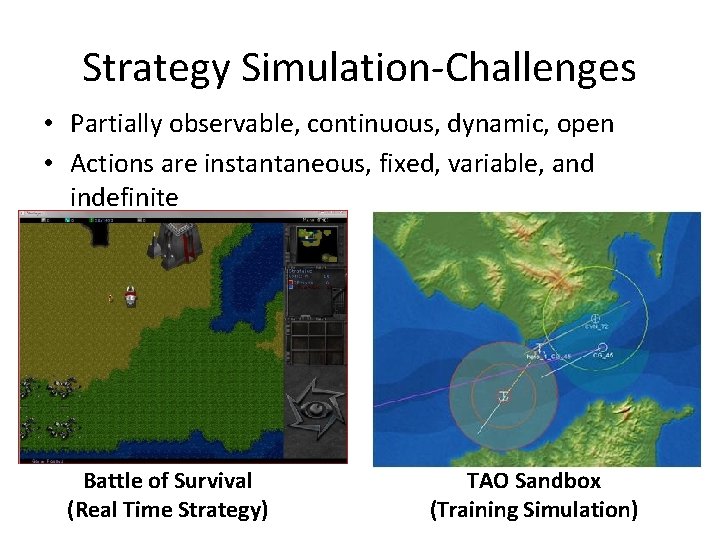

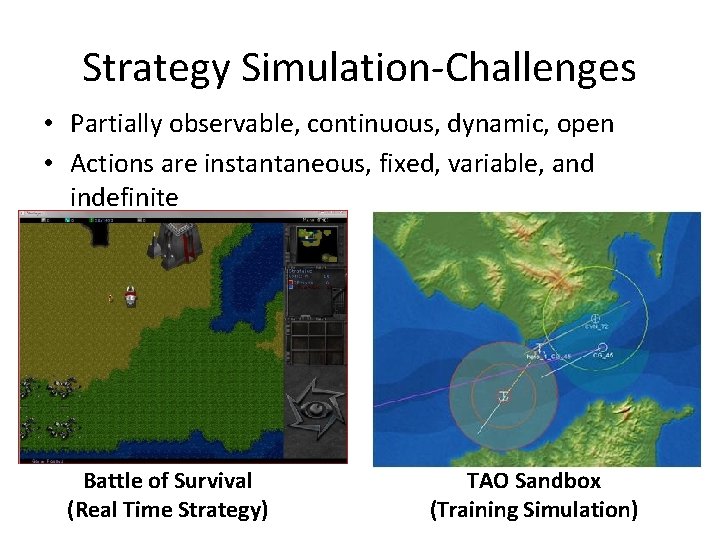

Strategy Simulation-Challenges • Partially observable, continuous, dynamic, open • Action durations may be instantaneous, fixed, variable, or indefinite • Simulations: Battle of Survival (Real Time Strategy), TAO Sandbox (Training Simulation)

Strategy Simulation-Approach • PDDL+ planning representation language – Processes and Events capture dynamic and continuous changes in the environment • Hierarchical Task Network planning – Goals are tasks – Given an initial set of observations and a task – Formulate a sequence of actions – Predict future states

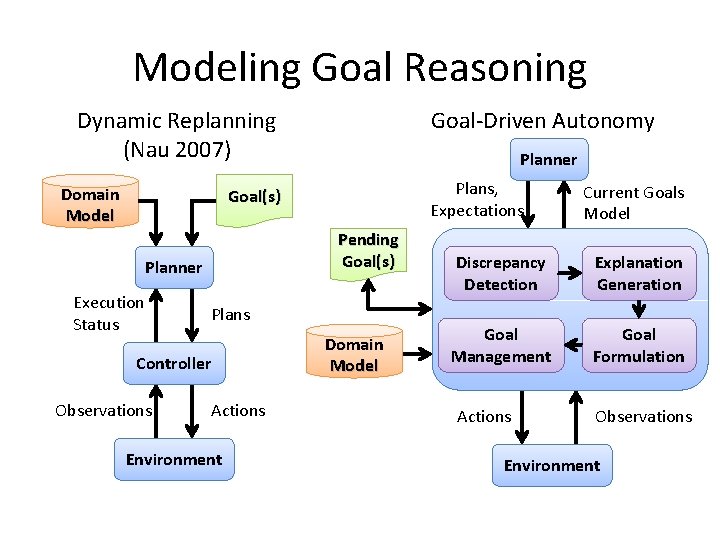

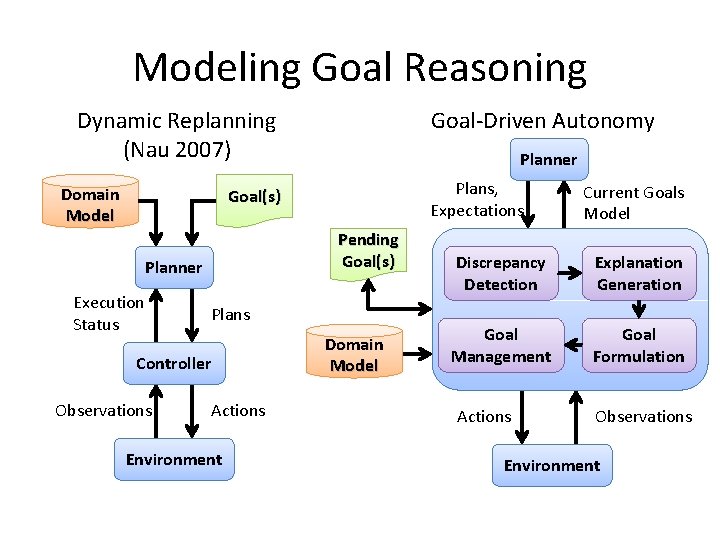

Modeling Goal Reasoning
• Dynamic Replanning (Nau 2007) – Diagram components: Domain Model, Goal(s), Planner, Plans, Execution Status, Controller, Actions, Observations, Environment
• Goal-Driven Autonomy – Diagram components: Domain Model, Planner, Plans and Expectations, Pending Goal(s), Current Goals, Model, Discrepancy Detection, Explanation Generation, Goal Formulation, Goal Management, Actions, Observations, Environment

ARTUE • Autonomous Response to Unexpected Events • Discrepancy Detection – Differences between expected and observed facts • Explanation Generation – Assumptions about hidden previous state • Goal Formulation and Management – Principle schemas for generating goals and utilities
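The four steps listed above can be read as one control cycle. The following is a minimal sketch of such a cycle, not ARTUE's actual implementation: the function `gda_step`, the tuple encoding of facts, the numeric intensities, and the three stand-in callables are all hypothetical and exist only to show how the steps hand results to one another.

```python
from typing import Callable, List, Optional, Set, Tuple

Fact = Tuple[str, ...]
Goal = Tuple[Fact, int]  # (goal form, numeric intensity); an assumed encoding


def gda_step(
    expected: Set[Fact],
    observed: Set[Fact],
    pending: List[Goal],
    explain: Callable[[Set[Fact], Set[Fact]], Set[Fact]],
    formulate: Callable[[Set[Fact]], List[Goal]],
    select: Callable[[List[Goal]], Fact],
) -> Optional[Fact]:
    """Run one cycle; return the goal to pursue, or None if nothing changed."""
    # 1. Discrepancy detection: facts that differ between the two states.
    discrepancies = expected ^ observed
    if not discrepancies:
        return None  # keep executing the current plan
    # 2. Explanation generation: assumptions about hidden state.
    assumptions = explain(discrepancies, observed)
    # 3. Goal formulation: new goals from the updated beliefs.
    pending.extend(formulate(observed | assumptions))
    # 4. Goal management: choose which pending goal to pursue.
    return select(pending)


# Hypothetical stand-ins for the three pluggable steps.
def explain(discrepancies, observed):
    return {("enemyProducingRegion", "Region5")}


def formulate(beliefs):
    if ("enemyProducingRegion", "Region5") in beliefs:
        return [(("reinforceBottleneck", "Region4"), 3)]
    return []


def select(goals):
    return max(goals, key=lambda g: g[1])[0]


expected = {("inRegion", "Turret19", "Region4")}
observed = expected | {("inRegion", "Buggy20", "Region5")}
pending = [(("moveUnitToUnit", "MCU17", "Base27"), 2)]

next_goal = gda_step(expected, observed, pending, explain, formulate, select)
unchanged = gda_step(expected, set(expected), [], explain, formulate, select)
print(next_goal)
```

Passing the steps in as callables keeps the cycle independent of any particular technique for each step, which matches the slide's framing of them as separate tasks.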

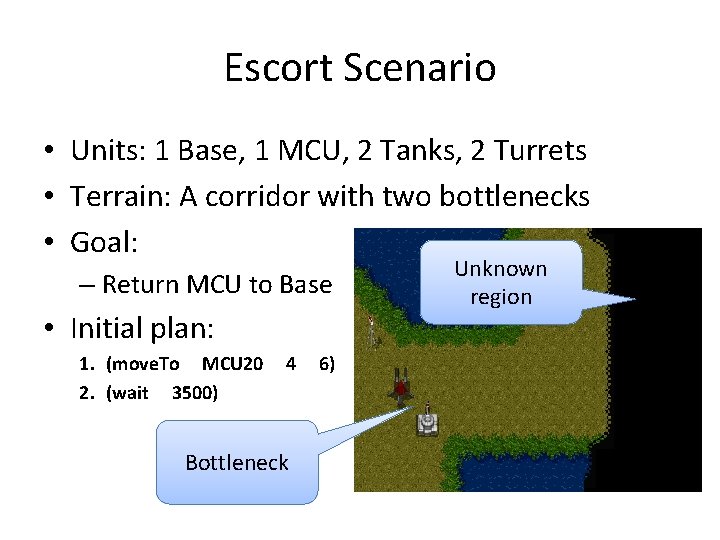

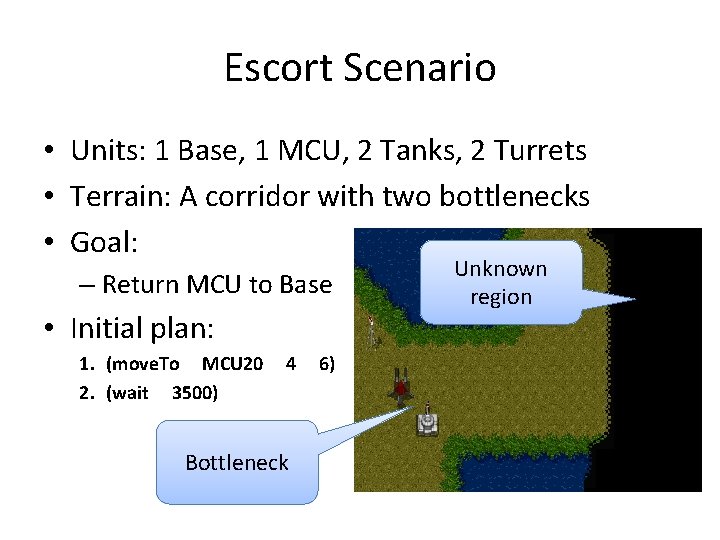

Escort Scenario
• Units: 1 Base, 1 MCU, 2 Tanks, 2 Turrets
• Terrain: A corridor with two bottlenecks
• Goal: Return MCU to Base
• Initial plan: 1. (moveTo MCU20 4 6) 2. (wait 3500)
• Map labels: Bottleneck, Unknown region

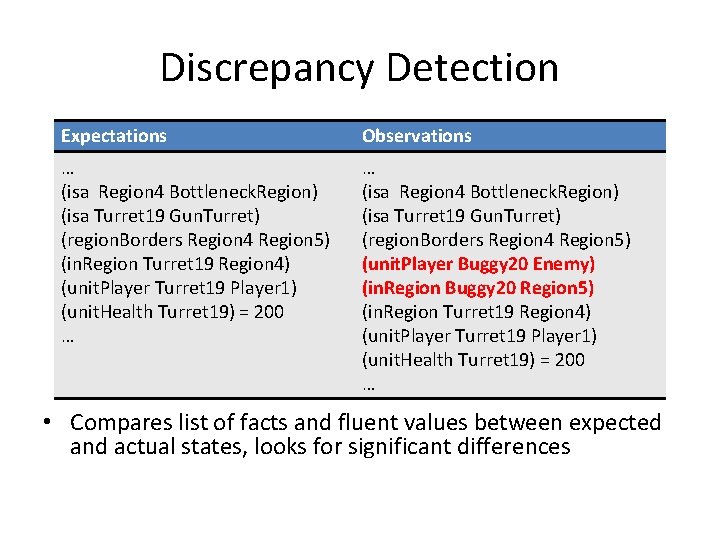

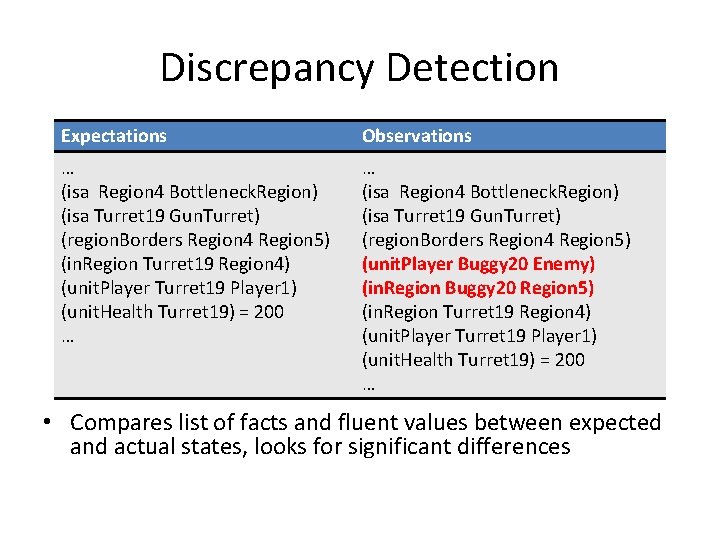

Discrepancy Detection
• Compares the lists of facts and fluent values in the expected and observed states and looks for significant differences
Expectations:
…
(isa Region4 BottleneckRegion)
(isa Turret19 GunTurret)
(regionBorders Region4 Region5)
(inRegion Turret19 Region4)
(unitPlayer Turret19 Player1)
(unitHealth Turret19) = 200
…
Observations:
…
(isa Region4 BottleneckRegion)
(isa Turret19 GunTurret)
(regionBorders Region4 Region5)
(unitPlayer Buggy20 Enemy)
(inRegion Buggy20 Region5)
(inRegion Turret19 Region4)
(unitPlayer Turret19 Player1)
(unitHealth Turret19) = 200
…
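The comparison on this slide can be sketched as a set difference over facts plus a tolerance check over fluents. This is an illustration only: the function name `detect_discrepancies`, the tuple encoding, the joined entity names such as `Region4`, and the `tolerance` parameter (standing in for "significant") are assumptions, not part of ARTUE.

```python
from typing import Dict, Set, Tuple

Fact = Tuple[str, ...]


def detect_discrepancies(
    expected_facts: Set[Fact],
    observed_facts: Set[Fact],
    expected_fluents: Dict[Fact, float],
    observed_fluents: Dict[Fact, float],
    tolerance: float = 0.0,
) -> dict:
    """Return facts and fluents that differ between the two states."""
    changed = {}
    # Only fluents present in both states are compared here.
    for key in expected_fluents.keys() & observed_fluents.keys():
        exp, obs = expected_fluents[key], observed_fluents[key]
        if abs(exp - obs) > tolerance:  # assumed meaning of "significant"
            changed[key] = (exp, obs)
    return {
        "unexpected": observed_facts - expected_facts,  # seen, not predicted
        "missing": expected_facts - observed_facts,     # predicted, not seen
        "changed": changed,
    }


expected_facts = {
    ("isa", "Region4", "BottleneckRegion"),
    ("isa", "Turret19", "GunTurret"),
    ("regionBorders", "Region4", "Region5"),
    ("inRegion", "Turret19", "Region4"),
    ("unitPlayer", "Turret19", "Player1"),
}
observed_facts = expected_facts | {
    ("unitPlayer", "Buggy20", "Enemy"),
    ("inRegion", "Buggy20", "Region5"),
}
expected_fluents = {("unitHealth", "Turret19"): 200.0}
observed_fluents = {("unitHealth", "Turret19"): 200.0}

result = detect_discrepancies(
    expected_facts, observed_facts, expected_fluents, observed_fluents
)
print(sorted(result["unexpected"]))
```

On the slide's data the only discrepancies are the two unexpected facts about Buggy20; the turret's health fluent matches its expected value.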

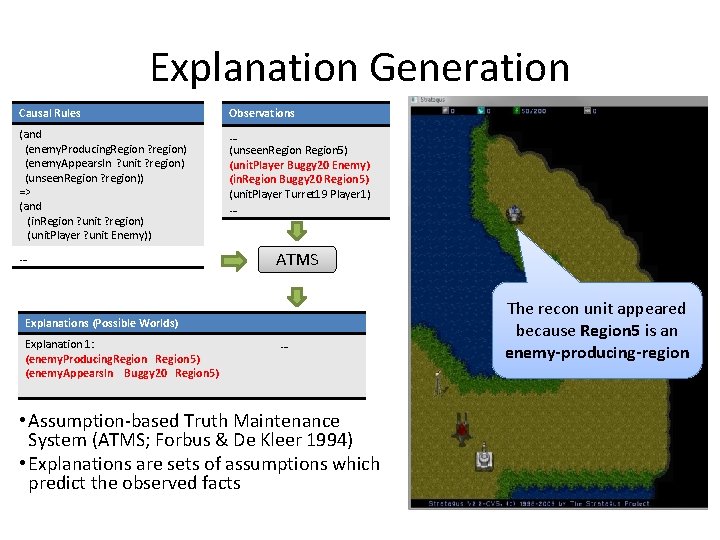

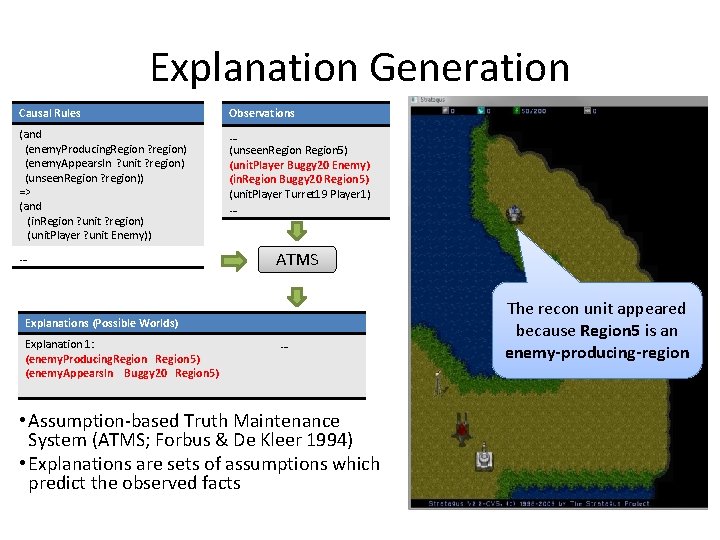

Explanation Generation
• Assumption-based Truth Maintenance System (ATMS; Forbus & De Kleer 1994)
• Explanations are sets of assumptions that predict the observed facts
Causal Rules:
(and (enemyProducingRegion ?region) (enemyAppearsIn ?unit ?region) (unseenRegion ?region)) => (and (inRegion ?unit ?region) (unitPlayer ?unit Enemy))
…
Observations:
…
(unseenRegion Region5)
(unitPlayer Buggy20 Enemy)
(inRegion Buggy20 Region5)
(unitPlayer Turret19 Player1)
…
ATMS Explanations (Possible Worlds):
Explanation 1: (enemyProducingRegion Region5) (enemyAppearsIn Buggy20 Region5)
…
Example: The recon unit appeared because Region5 is an enemy-producing region
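To make the idea of "assumptions that predict the observed facts" concrete, here is a small abductive sketch over the slide's single causal rule. It is deliberately not an ATMS: it handles one rule at a time, requires the discrepancy to bind every variable, and returns at most one explanation. The helper names, the tuple encoding, the `ASSUMABLE` set, and the reading of the garbled facts as `(unseenRegion Region5)` are assumptions.

```python
from typing import Dict, Optional, Set, Tuple

Fact = Tuple[str, ...]
Rule = Tuple[Tuple[Fact, ...], Tuple[Fact, ...]]  # (antecedents, consequents)


def unify(pattern: Fact, fact: Fact, bindings: Dict[str, str]) -> Optional[Dict[str, str]]:
    """Match one pattern against one ground fact, extending the bindings."""
    if len(pattern) != len(fact):
        return None
    new = dict(bindings)
    for p, f in zip(pattern, fact):
        if p.startswith("?"):
            if new.setdefault(p, f) != f:
                return None
        elif p != f:
            return None
    return new


def substitute(pattern: Fact, bindings: Dict[str, str]) -> Fact:
    return tuple(bindings.get(t, t) for t in pattern)


def explain(discrepancy: Fact, rule: Rule, observations: Set[Fact],
            assumable: Set[str]) -> Optional[Set[Fact]]:
    """Return assumptions under which the rule predicts the discrepancy."""
    antecedents, consequents = rule
    for consequent in consequents:
        bindings = unify(consequent, discrepancy, {})
        if bindings is None:
            continue
        ground = [substitute(a, bindings) for a in antecedents]
        predicted = [substitute(c, bindings) for c in consequents]
        if any(t.startswith("?") for f in ground + predicted for t in f):
            continue  # simplification: skip partially bound matches
        if not all(c in observations for c in predicted):
            continue  # the rule would predict something that was not seen
        assumptions = set()
        for fact in ground:
            if fact in observations:
                continue
            if fact[0] not in assumable:
                break  # needs an unobserved fact we may not assume
            assumptions.add(fact)
        else:
            return assumptions
    return None


RULE: Rule = (
    (("enemyProducingRegion", "?region"),
     ("enemyAppearsIn", "?unit", "?region"),
     ("unseenRegion", "?region")),
    (("inRegion", "?unit", "?region"),
     ("unitPlayer", "?unit", "Enemy")),
)
ASSUMABLE = {"enemyProducingRegion", "enemyAppearsIn"}
observations = {
    ("unseenRegion", "Region5"),
    ("unitPlayer", "Buggy20", "Enemy"),
    ("inRegion", "Buggy20", "Region5"),
    ("unitPlayer", "Turret19", "Player1"),
}

explanation = explain(("inRegion", "Buggy20", "Region5"), RULE, observations, ASSUMABLE)
no_explanation = explain(("unitPlayer", "Turret19", "Player1"), RULE, observations, ASSUMABLE)
print(explanation)
```

A real ATMS would instead track every consistent assumption set (the "possible worlds" on the slide) across many rules; this sketch only shows how one such set arises.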

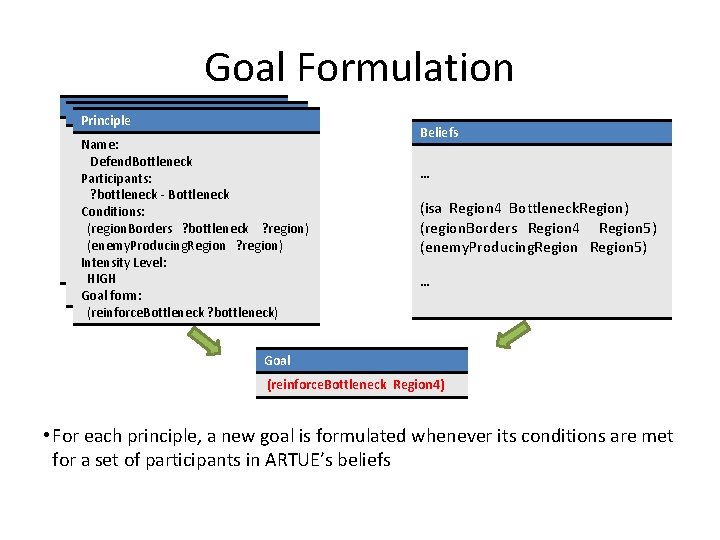

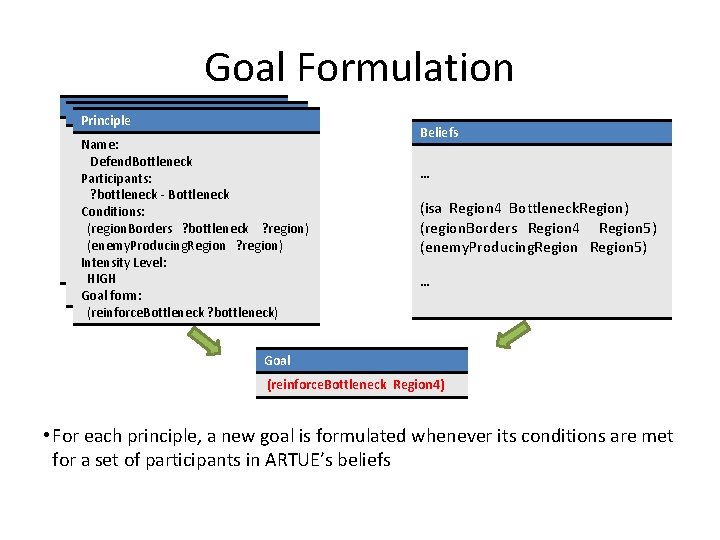

Goal Formulation
• For each principle, a new goal is formulated whenever its conditions are met for a set of participants in ARTUE’s beliefs
Principle Name: DetainAttackers
Participants: ?vessel - VESSEL
Conditions: (UNDERWATER ?vessel) (TORPEDOES ?vessel ?anyShip)
Intensity Level: HIGH
Goal form: (STOP ?vessel)
Principle Name: DefendBottleneck
Participants: ?bottleneck - Bottleneck
Conditions: (regionBorders ?bottleneck ?region) (enemyProducingRegion ?region)
Intensity Level: HIGH
Goal form: (reinforceBottleneck ?bottleneck)
Beliefs: … (isa Region4 BottleneckRegion) (regionBorders Region4 Region5) (enemyProducingRegion Region5) …
Goal: (reinforceBottleneck Region4)
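Matching a principle against beliefs amounts to finding variable bindings that satisfy every condition at once. The sketch below does this with a small backtracking matcher, under stated assumptions: the `Principle` dataclass, the helper names, and the tuple encoding are hypothetical, and the participant type `Bottleneck` is encoded as an `isa ... BottleneckRegion` condition, which is a guess at how types map onto beliefs.

```python
from dataclasses import dataclass
from typing import Dict, Iterator, List, Optional, Set, Tuple

Fact = Tuple[str, ...]
Bindings = Dict[str, str]


@dataclass(frozen=True)
class Principle:
    name: str
    conditions: Tuple[Fact, ...]
    intensity: str
    goal_form: Fact


def unify(pattern: Fact, fact: Fact, bindings: Bindings) -> Optional[Bindings]:
    """Match one pattern against one ground fact, extending the bindings."""
    if len(pattern) != len(fact):
        return None
    new = dict(bindings)
    for p, f in zip(pattern, fact):
        if p.startswith("?"):
            if new.setdefault(p, f) != f:
                return None
        elif p != f:
            return None
    return new


def match_all(conditions: Tuple[Fact, ...], beliefs: Set[Fact],
              bindings: Bindings) -> Iterator[Bindings]:
    """Yield every binding that satisfies all conditions (backtracking)."""
    if not conditions:
        yield bindings
        return
    first, rest = conditions[0], conditions[1:]
    for fact in beliefs:
        extended = unify(first, fact, bindings)
        if extended is not None:
            yield from match_all(rest, beliefs, extended)


def formulate_goals(principles: List[Principle],
                    beliefs: Set[Fact]) -> List[Tuple[Fact, str]]:
    goals: List[Tuple[Fact, str]] = []
    for principle in principles:
        for bindings in match_all(principle.conditions, beliefs, {}):
            goal = tuple(bindings.get(t, t) for t in principle.goal_form)
            if (goal, principle.intensity) not in goals:  # avoid duplicates
                goals.append((goal, principle.intensity))
    return goals


defend_bottleneck = Principle(
    name="DefendBottleneck",
    conditions=(
        ("isa", "?bottleneck", "BottleneckRegion"),  # assumed type encoding
        ("regionBorders", "?bottleneck", "?region"),
        ("enemyProducingRegion", "?region"),
    ),
    intensity="HIGH",
    goal_form=("reinforceBottleneck", "?bottleneck"),
)
beliefs = {
    ("isa", "Region4", "BottleneckRegion"),
    ("regionBorders", "Region4", "Region5"),
    ("enemyProducingRegion", "Region5"),
}

goals = formulate_goals([defend_bottleneck], beliefs)
print(goals)
```

Without the assumed `enemyProducingRegion` belief the third condition fails and no goal is formulated, which is why explanation generation has to run first.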

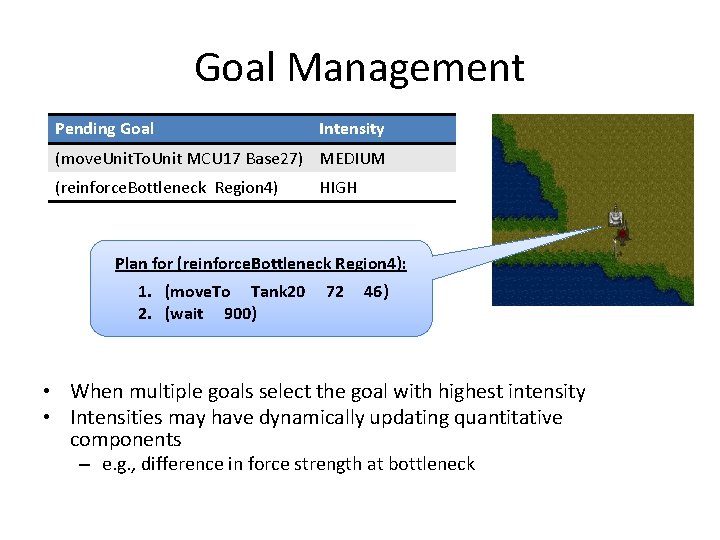

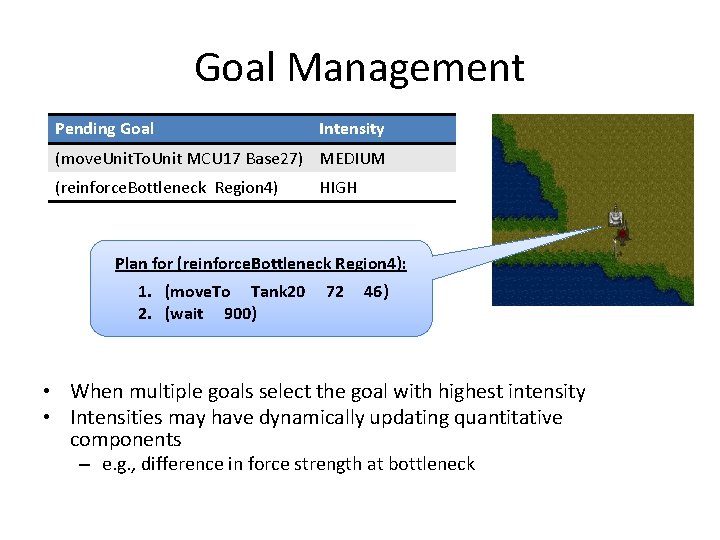

Goal Management
• When multiple goals are pending, select the goal with the highest intensity
• Intensities may have dynamically updating quantitative components – e.g., difference in force strength at bottleneck

| Pending Goal | Intensity |
| --- | --- |
| (moveUnitToUnit MCU17 Base27) | MEDIUM |
| (reinforceBottleneck Region4) | HIGH |

Plan for (reinforceBottleneck Region4): 1. (moveTo Tank20 72 46) 2. (wait 900)
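Selecting among pending goals can be sketched as a maximum over intensities. The names below are hypothetical; the `LOW` level and the `quantitative` tie-breaker field are assumptions added for illustration (the slide shows only MEDIUM and HIGH, and mentions quantitative components without defining how they combine with the level).

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Tuple


class Intensity(IntEnum):
    LOW = 1      # assumed level; not shown on the slide
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class PendingGoal:
    goal: Tuple[str, ...]
    level: Intensity
    quantitative: float = 0.0  # assumed tie-breaker, e.g. force difference


def select_goal(pending: List[PendingGoal]) -> PendingGoal:
    """Pick the pending goal with the highest intensity."""
    # Compare by qualitative level first, then by the quantitative part.
    return max(pending, key=lambda g: (g.level, g.quantitative))


pending = [
    PendingGoal(("moveUnitToUnit", "MCU17", "Base27"), Intensity.MEDIUM),
    PendingGoal(("reinforceBottleneck", "Region4"), Intensity.HIGH),
]

selected = select_goal(pending)
print(selected.goal)
```

Because the original goal stays in the pending list, it can be selected again once the higher-intensity goal has been handled.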

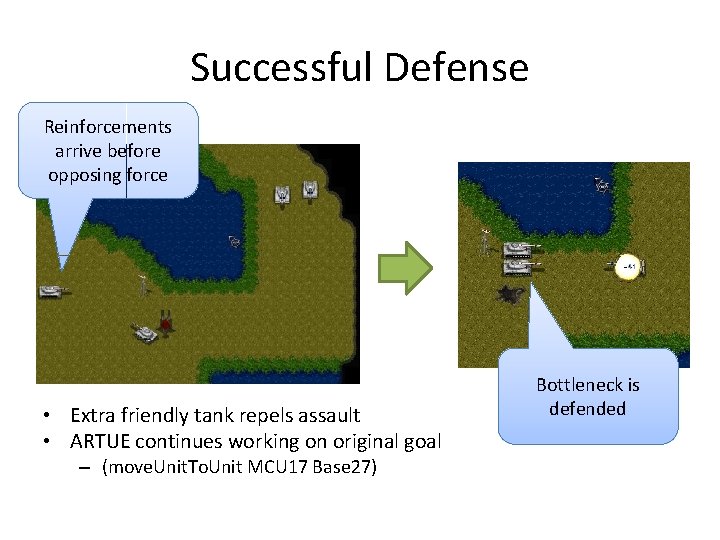

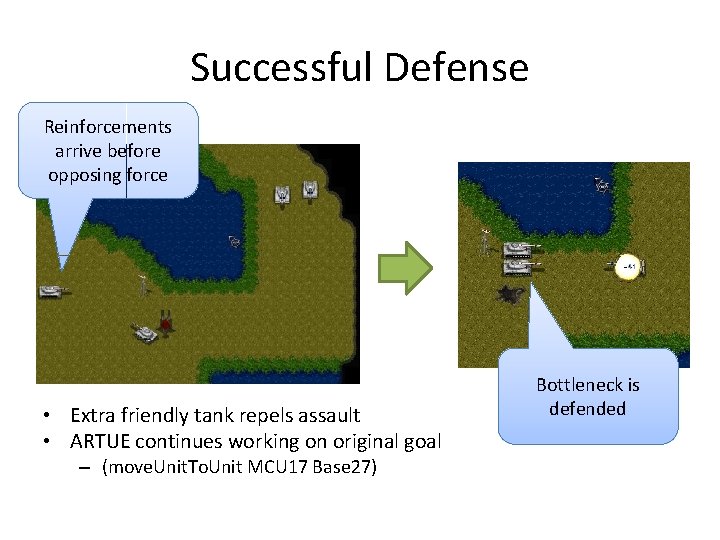

Successful Defense • Reinforcements arrive before opposing force • Extra friendly tank repels assault • Bottleneck is defended • ARTUE continues working on original goal – (moveUnitToUnit MCU17 Base27)

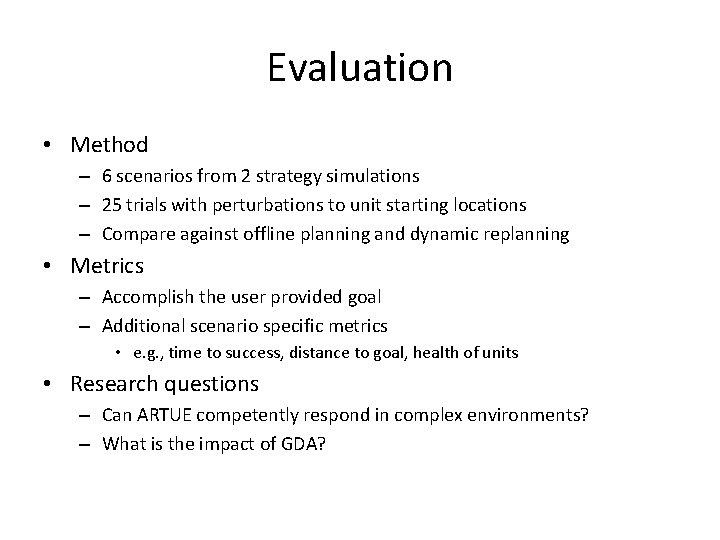

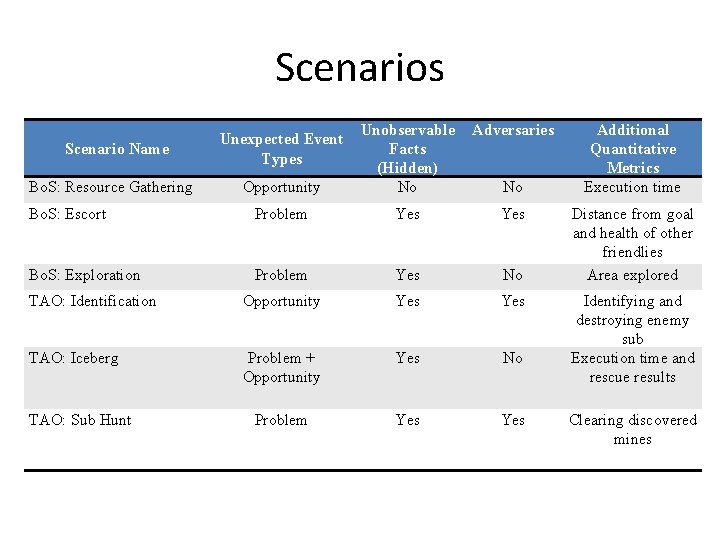

Evaluation • Method – 6 scenarios from 2 strategy simulations – 25 trials with perturbations to unit starting locations – Compare against offline planning and dynamic replanning • Metrics – Accomplish the user-provided goal – Additional scenario-specific metrics (e.g., time to success, distance to goal, health of units) • Research questions – Can ARTUE competently respond in complex environments? – What is the impact of GDA?

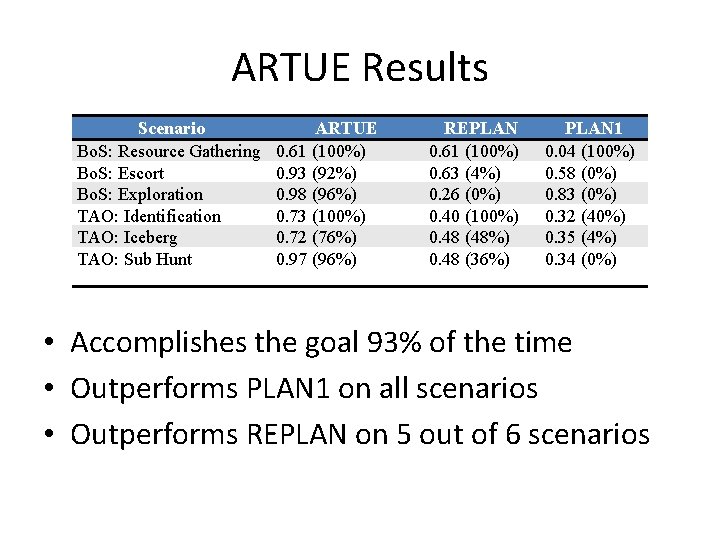

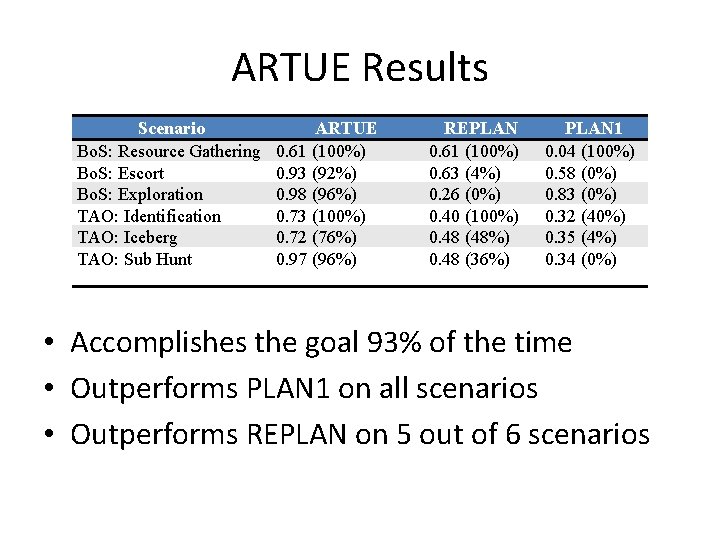

ARTUE Results

| Scenario | ARTUE | REPLAN | PLAN1 |
| --- | --- | --- | --- |
| BoS: Resource Gathering | 0.61 (100%) | 0.61 (100%) | 0.04 (100%) |
| BoS: Escort | 0.93 (92%) | 0.63 (4%) | 0.58 (0%) |
| BoS: Exploration | 0.98 (96%) | 0.26 (0%) | 0.83 (0%) |
| TAO: Identification | 0.73 (100%) | 0.40 (100%) | 0.32 (40%) |
| TAO: Iceberg | 0.72 (76%) | 0.48 (48%) | 0.35 (4%) |
| TAO: Sub Hunt | 0.97 (96%) | 0.48 (36%) | 0.34 (0%) |

• ARTUE accomplishes the goal 93% of the time
• ARTUE outperforms PLAN1 on all scenarios
• ARTUE outperforms REPLAN on 5 out of 6 scenarios
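The three summary claims can be checked against the per-scenario numbers. The sketch below assumes that the parenthesized percentage is the goal-completion rate and that the leading number is the score used for "outperforms"; the slide does not label either value, so both readings are assumptions.

```python
# (score, goal-completion %) per system; values copied from the results slide.
results = {
    "BoS: Resource Gathering": {"ARTUE": (0.61, 100), "REPLAN": (0.61, 100), "PLAN1": (0.04, 100)},
    "BoS: Escort":             {"ARTUE": (0.93, 92),  "REPLAN": (0.63, 4),   "PLAN1": (0.58, 0)},
    "BoS: Exploration":        {"ARTUE": (0.98, 96),  "REPLAN": (0.26, 0),   "PLAN1": (0.83, 0)},
    "TAO: Identification":     {"ARTUE": (0.73, 100), "REPLAN": (0.40, 100), "PLAN1": (0.32, 40)},
    "TAO: Iceberg":            {"ARTUE": (0.72, 76),  "REPLAN": (0.48, 48),  "PLAN1": (0.35, 4)},
    "TAO: Sub Hunt":           {"ARTUE": (0.97, 96),  "REPLAN": (0.48, 36),  "PLAN1": (0.34, 0)},
}

# Mean goal-completion rate for ARTUE across the six scenarios.
artue_success = sum(r["ARTUE"][1] for r in results.values()) / len(results)

# Number of scenarios where ARTUE's score is strictly higher.
wins_vs_plan1 = sum(r["ARTUE"][0] > r["PLAN1"][0] for r in results.values())
wins_vs_replan = sum(r["ARTUE"][0] > r["REPLAN"][0] for r in results.values())

print(round(artue_success), wins_vs_plan1, wins_vs_replan)  # prints: 93 6 5
```

Under these readings the mean completion rate is 560 / 6 ≈ 93.3%, and the one scenario where ARTUE does not beat REPLAN is the Resource Gathering tie at 0.61.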

Discussion • ARTUE outperforms REPLAN when – The scenarios involve reasoning about unobservable facts and entities (e.g., the enemy-producing region) – Unexpected situations present goals outside the scope of the current mission (e.g., rescuing nearby passengers) • PLAN1 outperforms REPLAN when REPLAN repeatedly attempts a failed action (e.g., attempting to explore an inaccessible area)

Related Work • Continual planning – CPEF (desJardins et al. 1999) • Extending the goal specification – Goal generators (Hanheide et al. 2010) – Open world quantified goals (Talamadupula et al. 2010) • Cognitive architectures – SOAR, appraisal mechanisms for subgoaling (Marinier et al. 2010) – ICARUS, long-term goals (Choi 2010)

Conclusions • Goal-Driven Autonomy allows ARTUE to respond in strategy simulations – Partially observable, dynamic, continuous, open • Future work – Explore different AI techniques for GDA tasks – How do learning and experience enter this story? • Active learning for goal formulation • Analogical learning for explanation

Thank You (감사합니다) Collaborators: • Matthew Molineaux (Knexus) • David Aha (NRL) Acknowledgments: • Mike Cox (DARPA IPTO) • National Research Council (NRC)

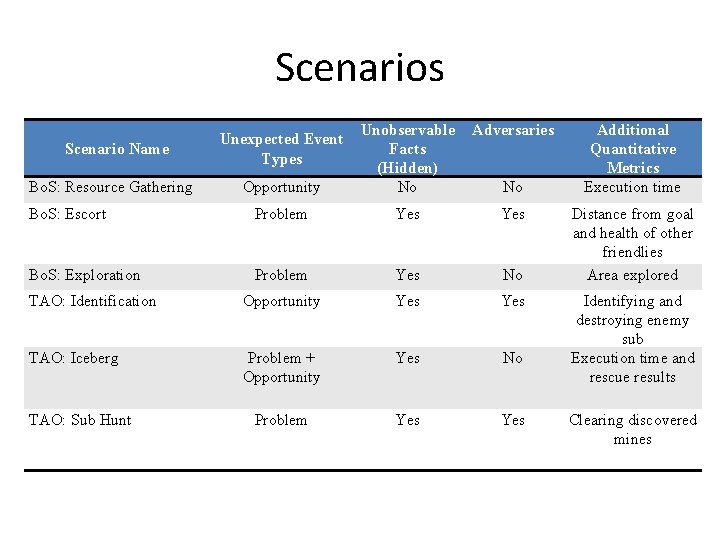

Scenarios

| Scenario Name | Unexpected Event Types | Additional Quantitative Metrics |
| --- | --- | --- |
| BoS: Resource Gathering | Opportunity | Execution time |
| BoS: Escort | Problem | Distance from goal and health of other friendlies |
| BoS: Exploration | Problem | Area explored |
| TAO: Identification | Opportunity | Identifying and destroying enemy sub |
| TAO: Iceberg | Problem + Opportunity | Execution time and rescue results |
| TAO: Sub Hunt | Problem | Clearing discovered mines |

Additional columns on the slide: Adversaries; Unobservable Facts (Hidden)

References
• Klenk, M., Molineaux, M., and Aha, D. (under review). Goal-Driven Autonomy: Exploring a conceptual model of goal reasoning. Knowledge Engineering Review. Cambridge University Press.
• Molineaux, M., Klenk, M., and Aha, D. (2010). Planning in dynamic environments: Extending HTNs with nonlinear continuous effects. In Proceedings of the Twenty-Fourth AAAI Conference on Artificial Intelligence (AAAI-10). Atlanta, GA. 26% acceptance rate.
• Molineaux, M., Klenk, M., and Aha, D. (2010). Goal-driven autonomy in a navy training simulation. In Proceedings of the Twenty-Fourth AAAI Conference on Artificial Intelligence (AAAI-10). Atlanta, GA. 29% acceptance rate (special track on Integrated Intelligence).