Gnutella Searching Algorithms in Unstructured PeertoPeer Networks CS

![Iterative Deepening • To implement more efficiently, [3] proposed the following – Assume message Iterative Deepening • To implement more efficiently, [3] proposed the following – Assume message](https://slidetodoc.com/presentation_image/058769a1f19137026a9682dd9998ca63/image-39.jpg)

![References [1] Gnutella RFC (http: //rfc-gnutella. sourceforge. net/src/rfc-0_6 draft. html) [2] Yang and Garcia-Molina, References [1] Gnutella RFC (http: //rfc-gnutella. sourceforge. net/src/rfc-0_6 draft. html) [2] Yang and Garcia-Molina,](https://slidetodoc.com/presentation_image/058769a1f19137026a9682dd9998ca63/image-65.jpg)

- Slides: 65

Gnutella & Searching Algorithms in Unstructured Peer-to-Peer Networks CS 780 -3 Lecture Notes In Courtesy of David Bryan

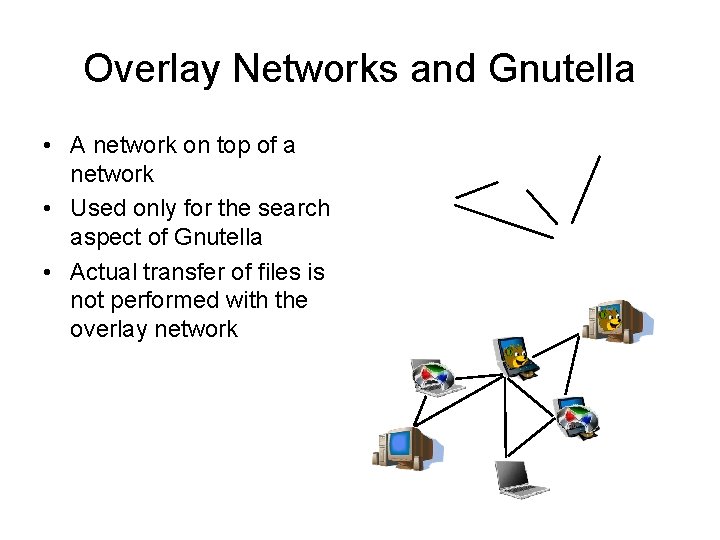

What is Gnutella? • Gnutella is a fully decentralized peer-to-peer protocol for locating resources • Standard, not a program. There are many implementations of Gnutella (Bear. Share, Lime. Wire, Morpheus) • Each member of the network may act as a client, looking for data, or a server, sharing that data Server Serv Client

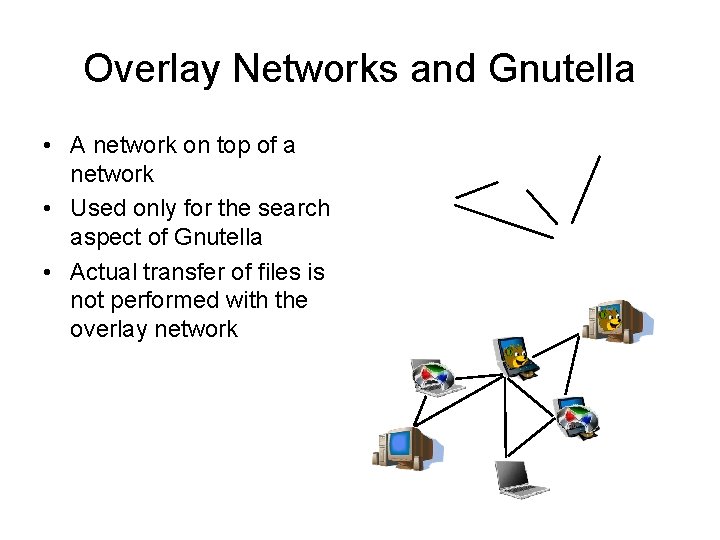

Overlay Networks and Gnutella • A network on top of a network • Used only for the search aspect of Gnutella • Actual transfer of files is not performed with the overlay network

Connecting to the Network • When you start the application, need to first connect to a node - bootstrapping • No set way to do this. Protocol is silent on the issue – Node Cache – contact a well known node that maintains a list of nodes – Node Web Page – lists locations of nodes to bootstrap and get started

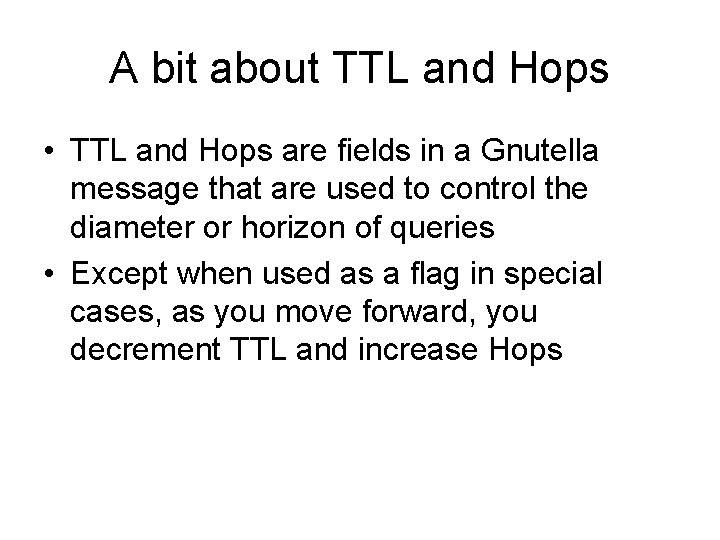

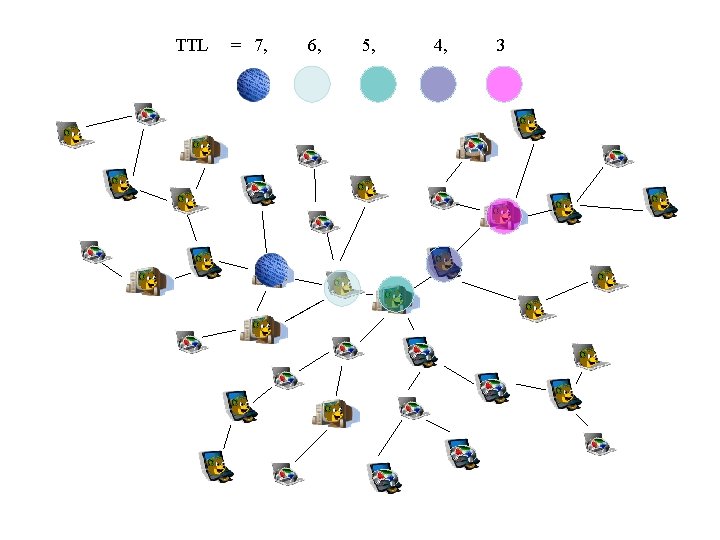

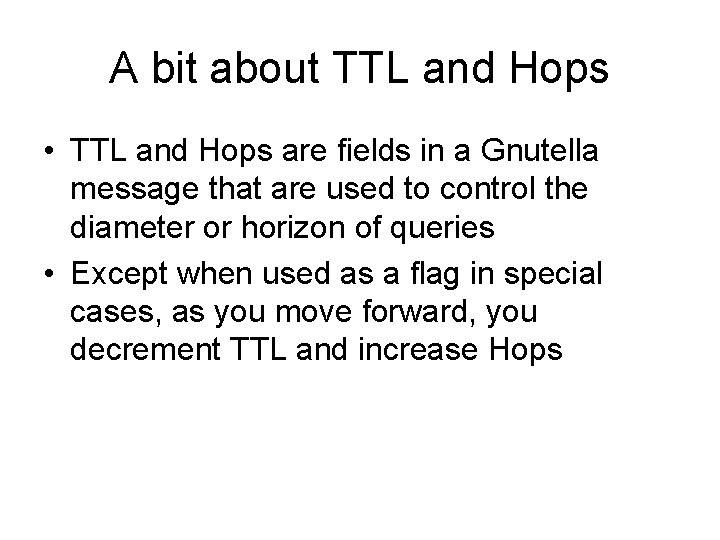

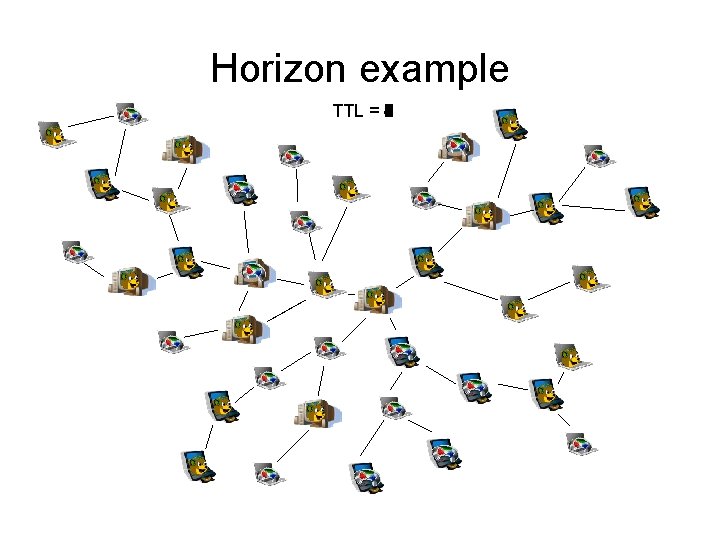

A bit about TTL and Hops • TTL and Hops are fields in a Gnutella message that are used to control the diameter or horizon of queries • Except when used as a flag in special cases, as you move forward, you decrement TTL and increase Hops

TTL/Hops Example TTL = 7 Hops = 0 TTL = 6 Hops = 1 TTL = 4 Hops = 3 TTL = 5 Hops = 2 TTL = 3 Hops = 4

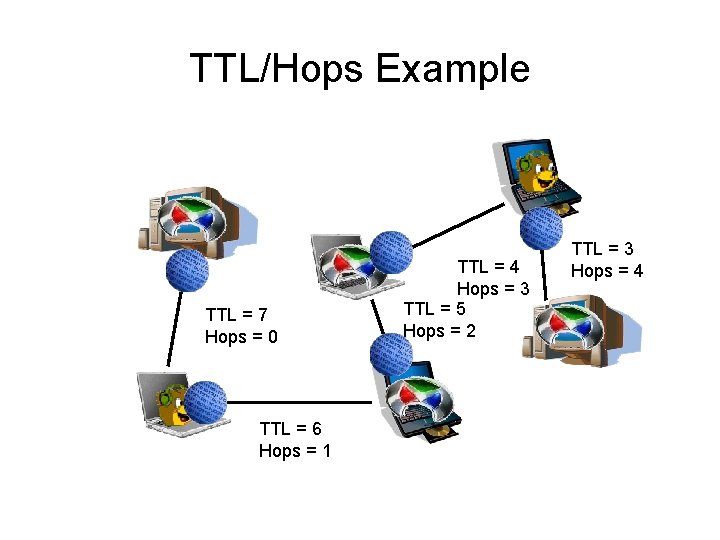

Horizon example TTL = 7 1 2 4 3 5 6

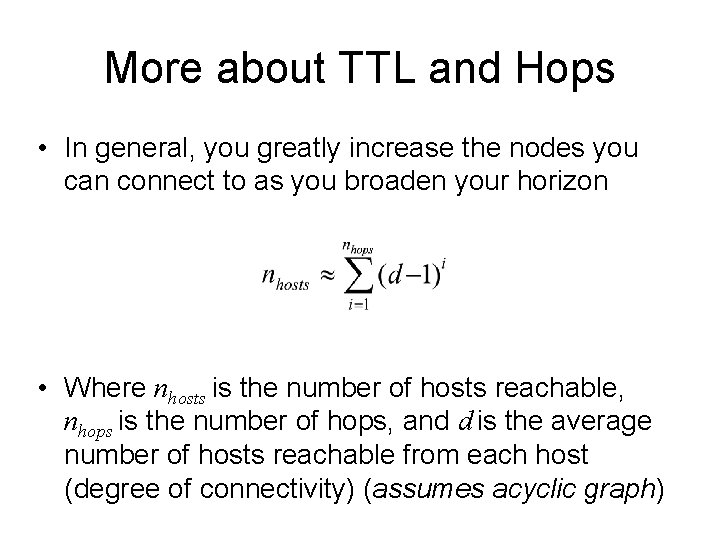

More about TTL and Hops • In general, you greatly increase the nodes you can connect to as you broaden your horizon • Where nhosts is the number of hosts reachable, nhops is the number of hops, and d is the average number of hosts reachable from each host (degree of connectivity) (assumes acyclic graph)

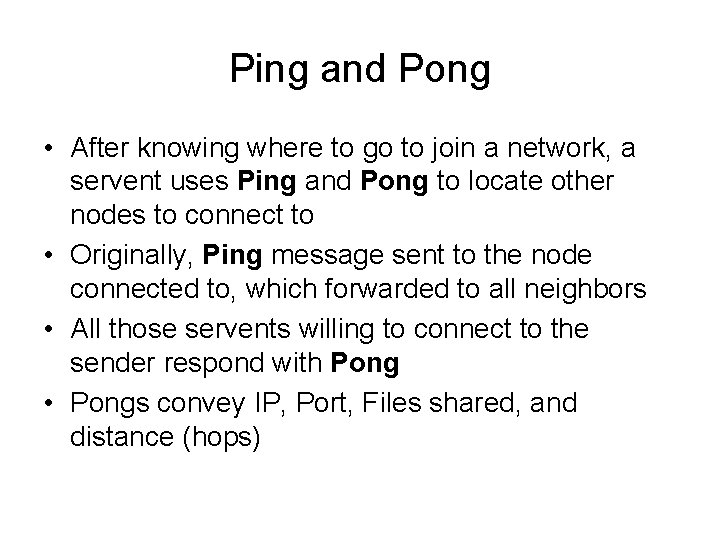

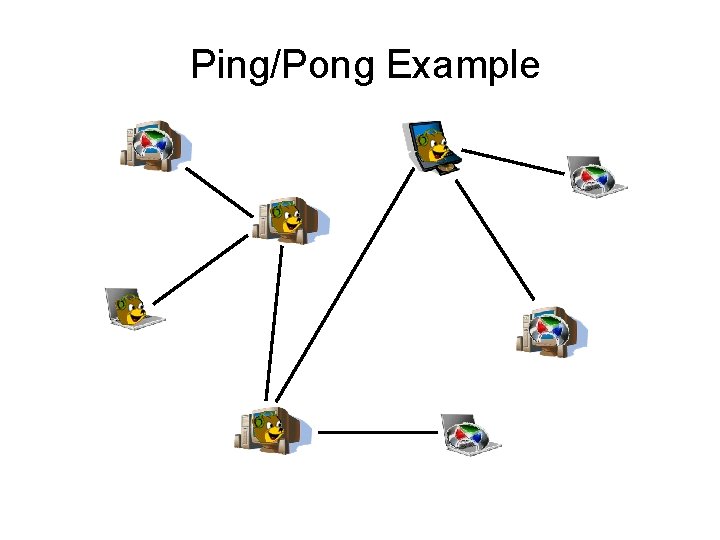

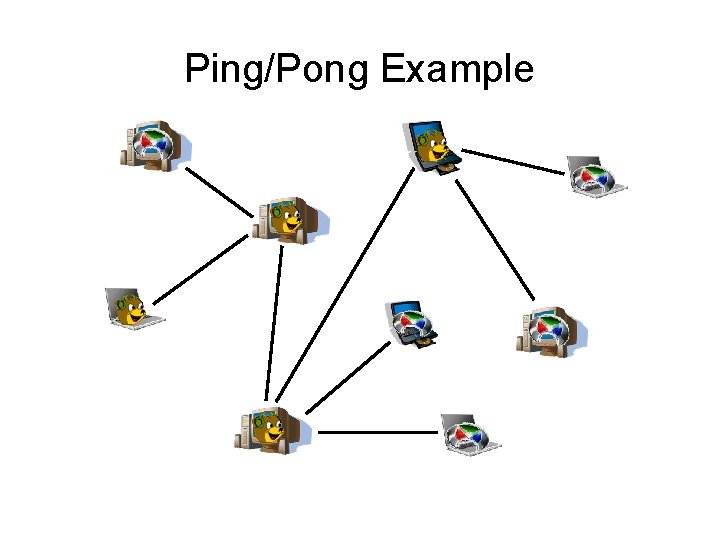

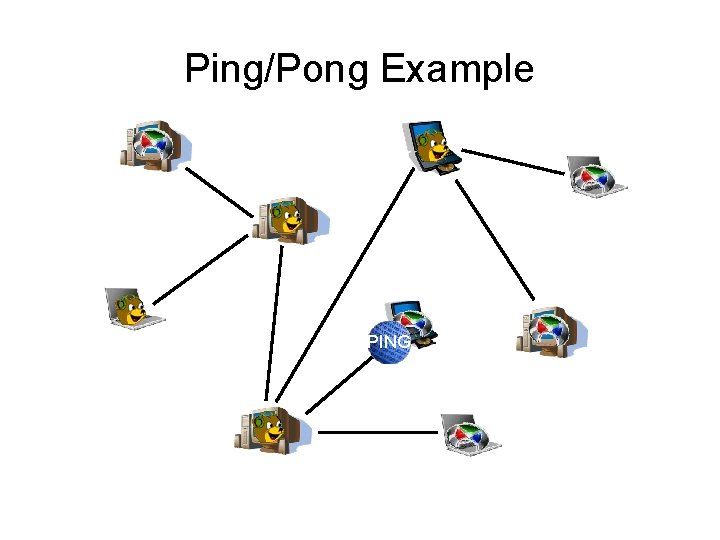

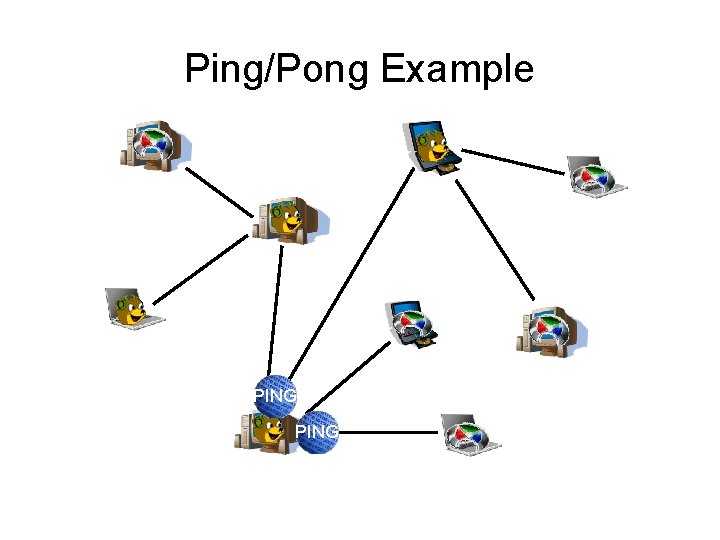

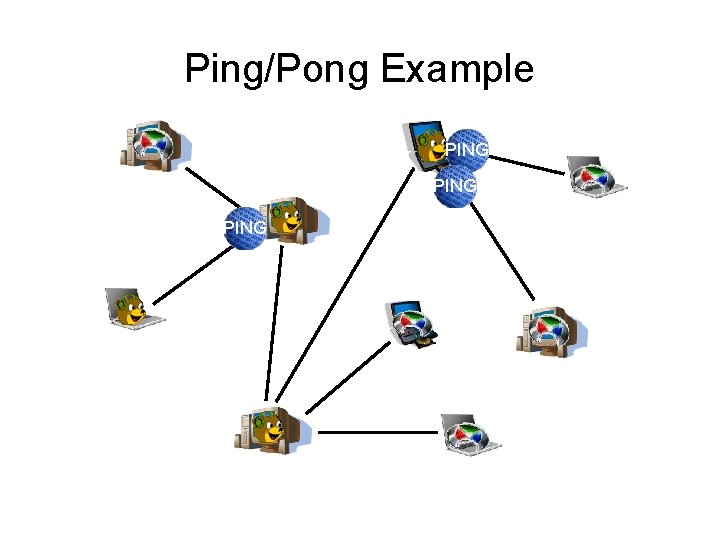

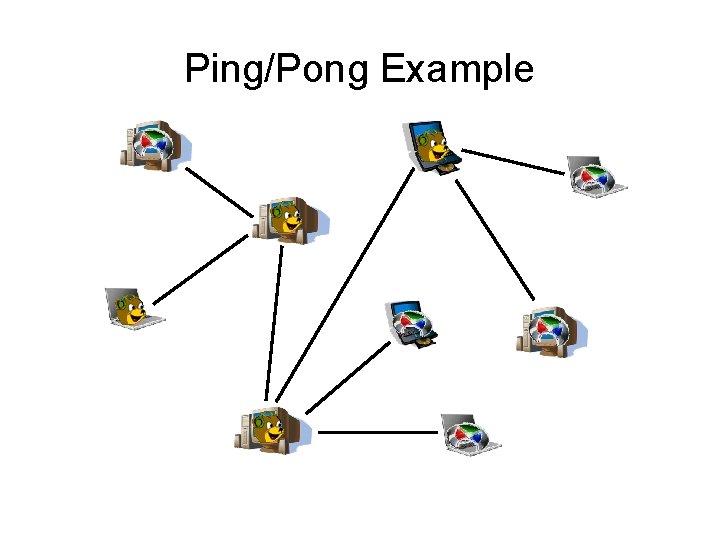

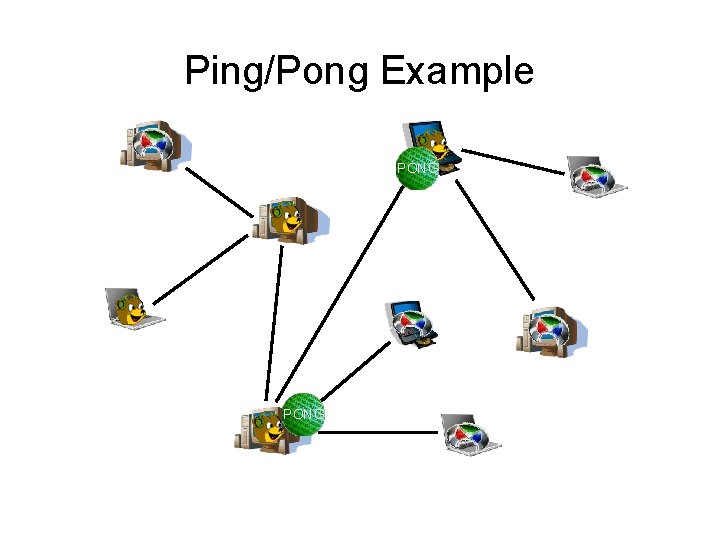

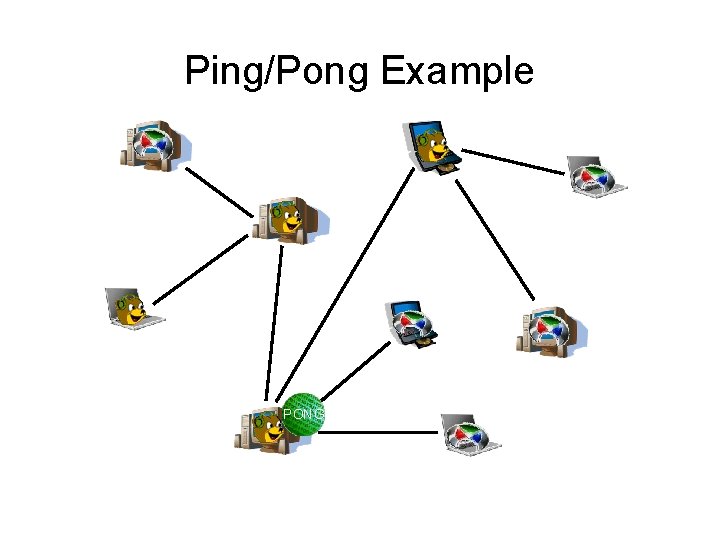

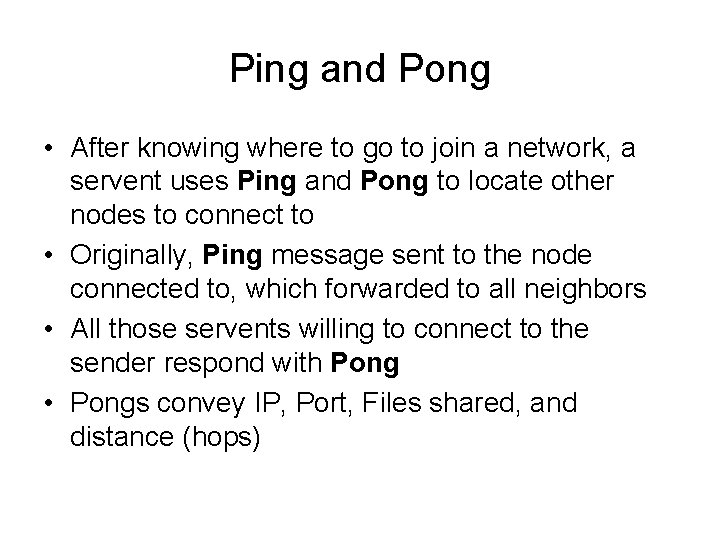

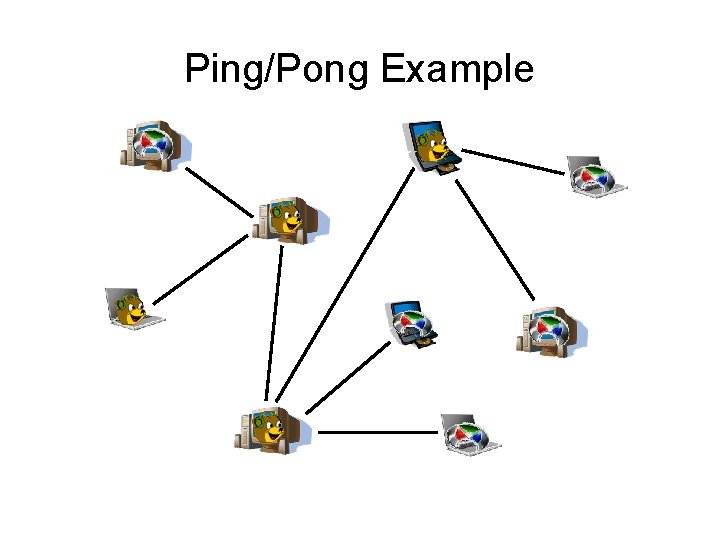

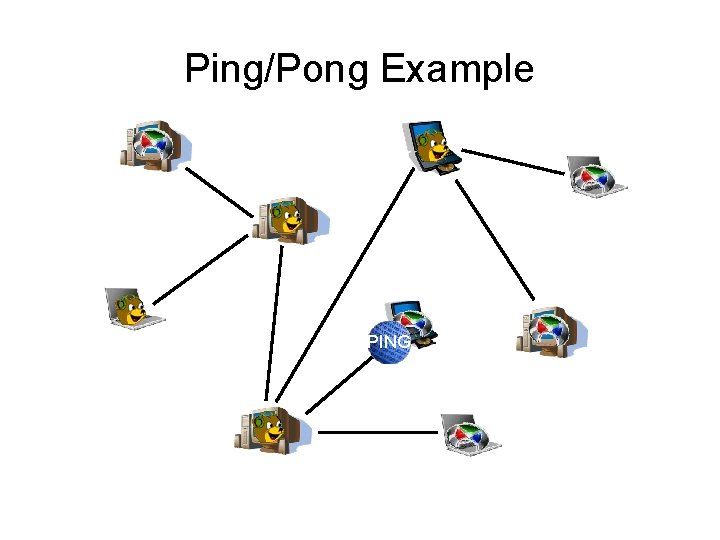

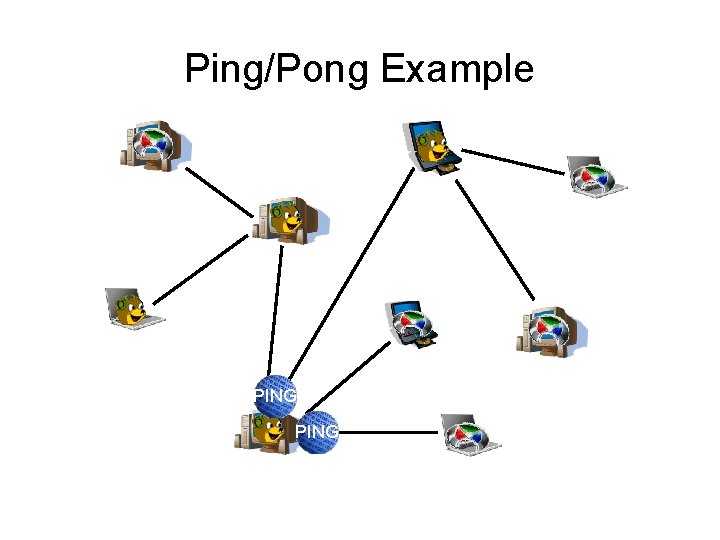

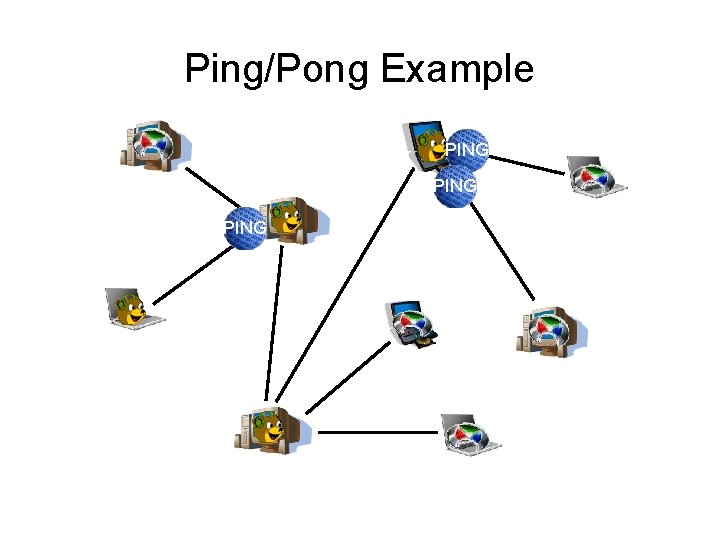

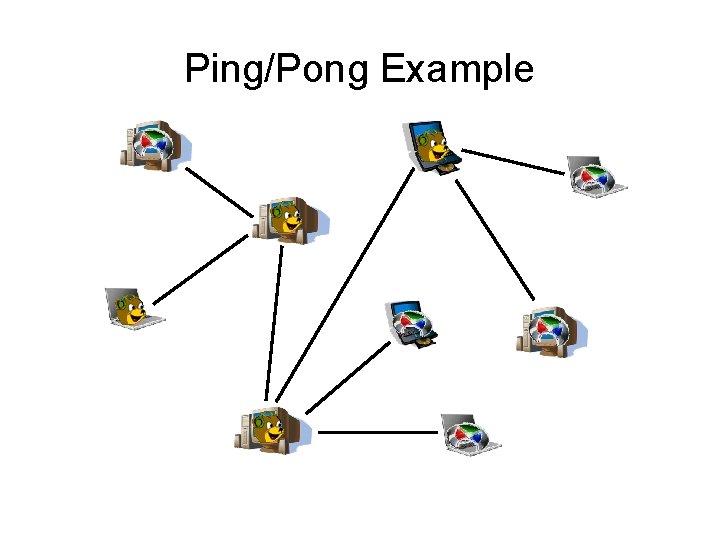

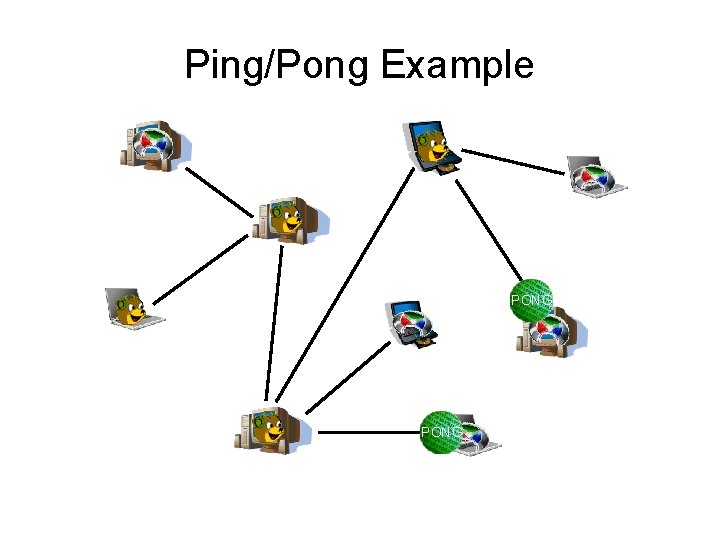

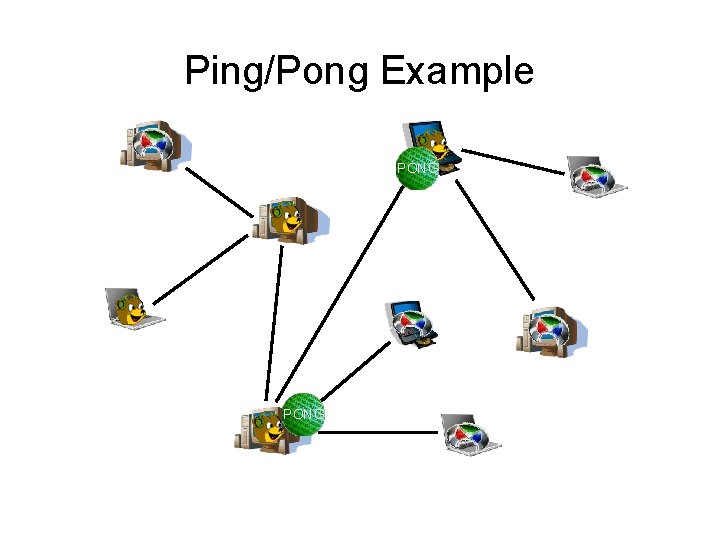

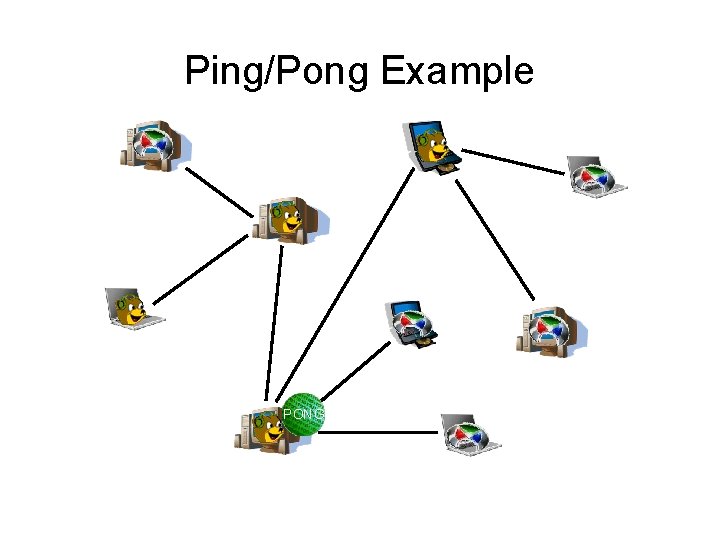

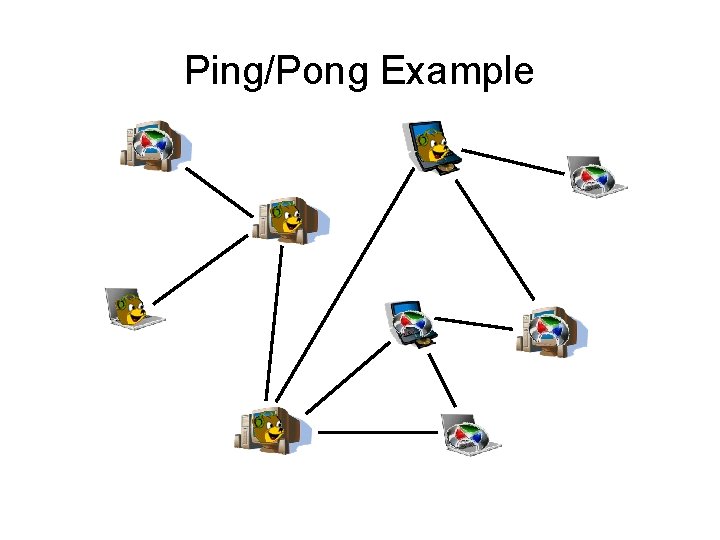

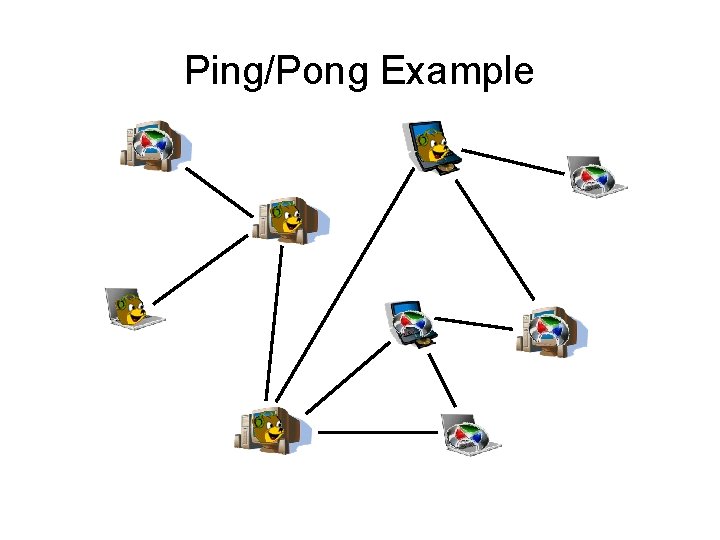

Ping and Pong • After knowing where to go to join a network, a servent uses Ping and Pong to locate other nodes to connect to • Originally, Ping message sent to the node connected to, which forwarded to all neighbors • All those servents willing to connect to the sender respond with Pong • Pongs convey IP, Port, Files shared, and distance (hops)

Ping/Pong Example

Ping/Pong Example

Ping/Pong Example PING

Ping/Pong Example PING

Ping/Pong Example PING

Ping/Pong Example

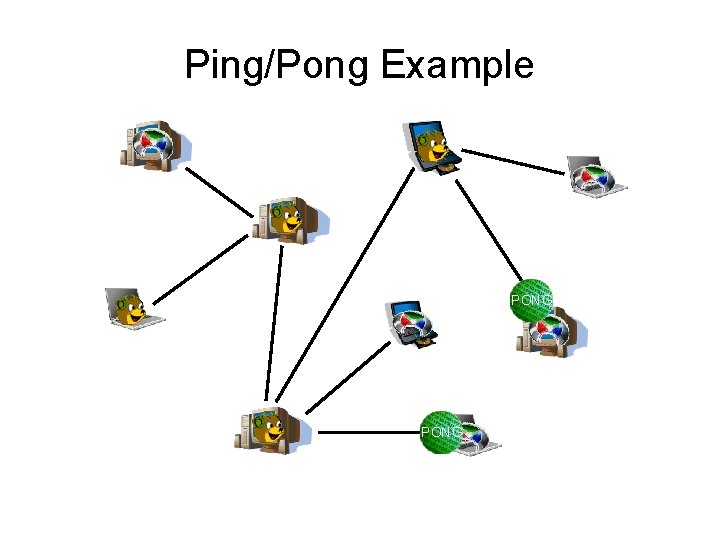

Ping/Pong Example PONG

Ping/Pong Example PONG

Ping/Pong Example PONG

Ping/Pong Example

Ping/Pong Example

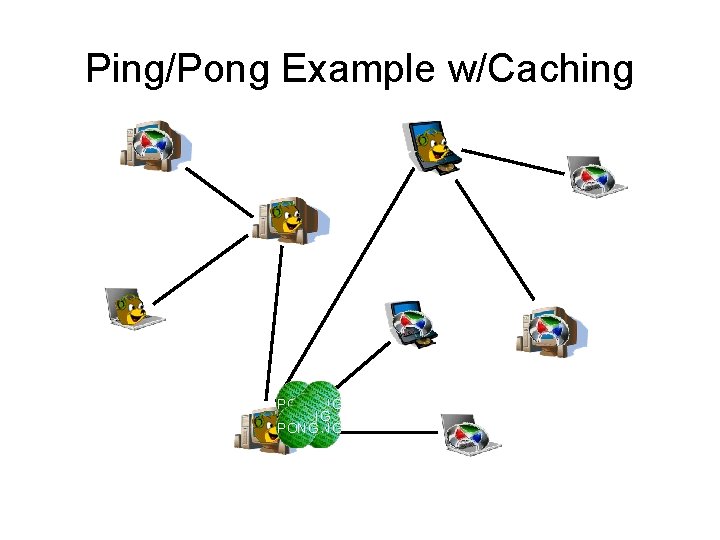

Pong Caching • Problem with this approach was traffic – broadcasting causes too much traffic • Newer standard uses “Pong Caching”. Servents keep information about nearby nodes, and send this when Pinged • Protocol suggests one method for how to maintain the cache, but doesn’t require that it be used

Pong Caching, Continued • Use modified TTL and Hops – TTL=1, Hops=0 is a probe – servent only responds about itself – TTL=2, Hops=0 is a crawler – servent responds with pongs from immediate neighbors only

Pong Caching, Continued – TTL=7, Hops=0 is a probe – servent replies about any nearby nodes • Protocol suggests 10 pongs should be returned – those 10 that have most recently been received • Must be good pongs (recent responses, well behaved servents) • Servents should send every 3 seconds, but responses come from other’s cache, so bandwidth is claimed to be low

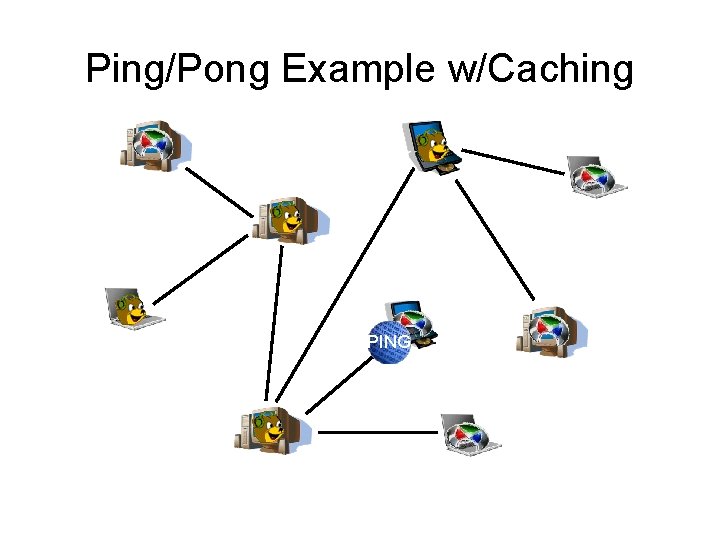

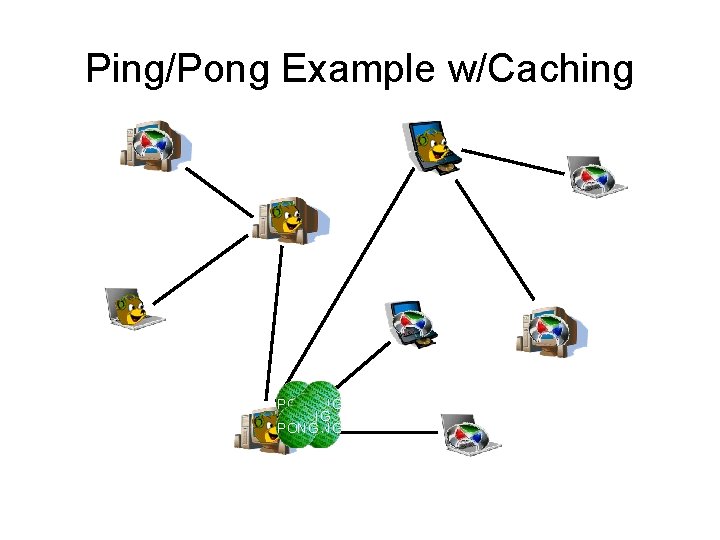

Ping/Pong Example w/Caching PING

Ping/Pong Example w/Caching PONG PONG

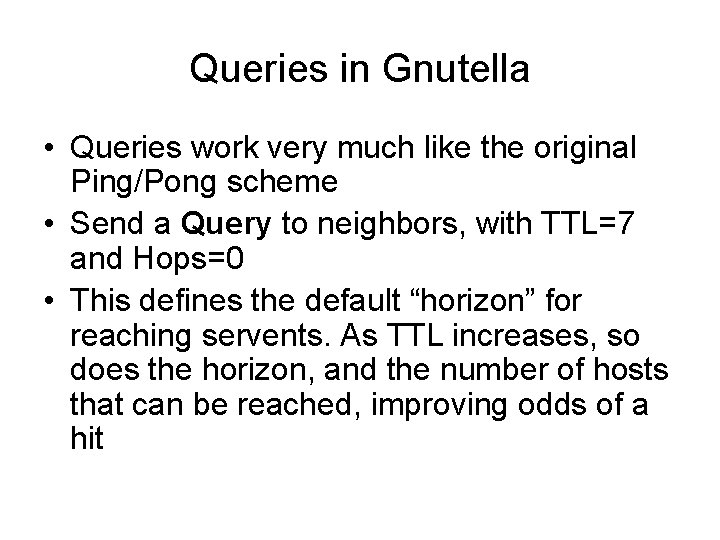

Queries in Gnutella • Queries work very much like the original Ping/Pong scheme • Send a Query to neighbors, with TTL=7 and Hops=0 • This defines the default “horizon” for reaching servents. As TTL increases, so does the horizon, and the number of hosts that can be reached, improving odds of a hit

Queries in Gnutella, Continued • Send Query to neighbors, who send to their neighbors • Decrement TTL, increase Hops when you receive • Continue forwarding while TTL != 0 • If you have the requested file, return a Query Hit message • Generates lots of messages. Called “flood” search

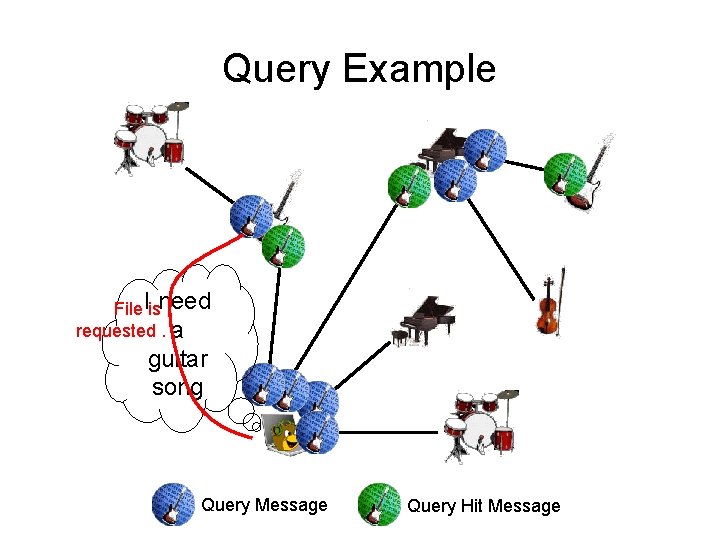

Query Example File Iisneed requested. a guitar song Query Message Query Hit Message

Transferring Data • When a file is located using the Gnutella protocol, the Query Hit message returns a host/port to contact to obtain the file – The connection to get data is made using HTTP – Usually (but not always) same host/port as used for Gnutella traffic • Problems occur when servent is behind firewall

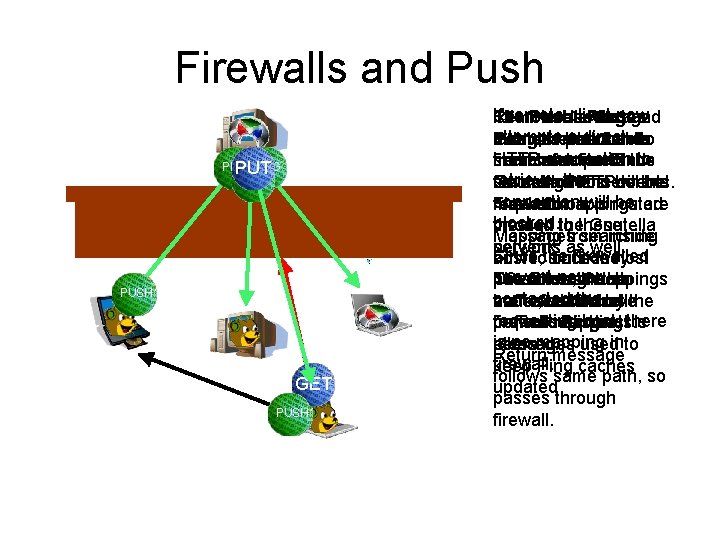

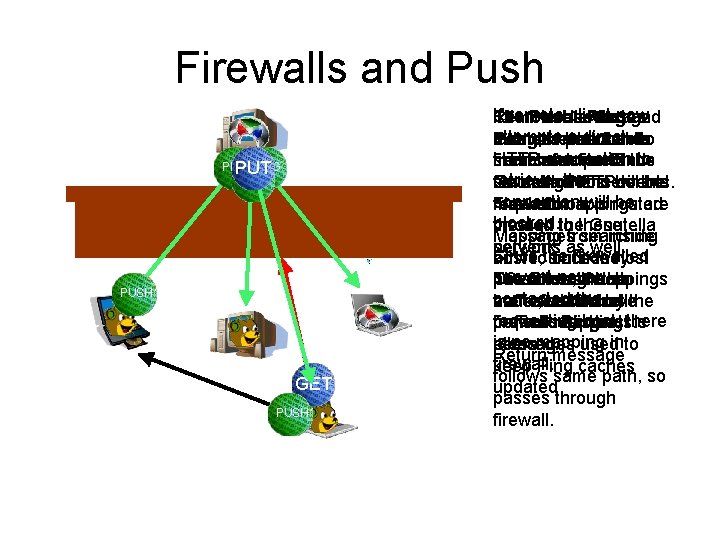

Firewalls and Push • Gnutella protocol can usually pass through firewalls if servents send/receive on same ports – Ping/Pong mechanism will keep pinholes open • Problem comes with direct connections from remote host

Firewalls and Push PING PUT PING QUERY PUSH GET QUERY PUSH If remote client now The Client Push connects message toand Remote uses client Ping uses attempts a direct outsidemechanism instructs host. the remote Sends Gnutella Pong protocol toto HTTP connection fromand machine same tofile. port send the search find for connect Finds toto retrieve, the Gnutella file using traffic HTTP will to the be several on the other hostservents. behind connection be are receivedmappings requestor. on. Itwill is routed firewall. Firewall blocked. through thethese Gnutella created to Mapping from inside Messages searching network. servents as well. Since firewalled host tothe outside host arrive, since they servent never now allows pass through the Of course, ifreturn both The firewall mappings contacted thehave traffic, so Gnutella nodes which ends are behind are refreshed by the requesting host, traffic tomappings. this host is firewalls and not there periodic Ping is no mapping into allowed. peers… messages used Return message firewall. keep Ping caches follows same path, so updated passes through firewall.

Flow Control • In order to control network traffic, Gnutella suggests a very simple flow control method – Servents create a FIFO output queue – When half full, enter flow control mode, stay as long as more than one quarter full • Drop all input • Sort queue to send responses before requests • Long traveling responses gain priority, Long traveling requests lose priority

Bye • Message to tell other clients you have terminated • Not required, but recommended • Should include a reason code. Loosely defined in the protocol

Format and Style of Gnutella Messages • Connections are made via TCP • Classic HTTP style text triple handshake – CONNECT, replied to with 200 • After this, Gnutella messages are exchanged – Gnutella messages are binary • Each requests uses a semi-unique ID number

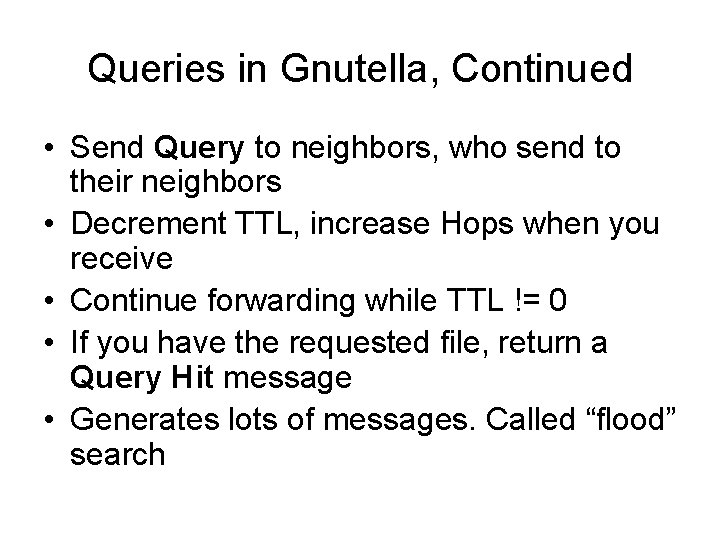

Problems with Flood Query • Traditional Gnutella flood query has a number of problems – Very large number of packets generated to fulfill queries • Study [2] indicates that most searches on Gnutella can be satisfied with a search that visits fewer nodes – Essentially, just a Breadth First Search (BFS) – Some proposals [3, 4] attempt to address this with alternate schemes for searching

Alternatives to Flood Query • • Iterative Deepening Directed BFS Local Indices Random Walkers

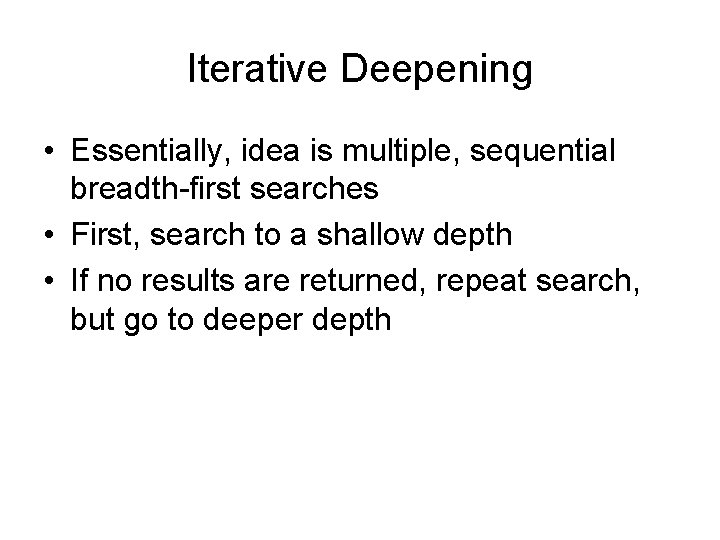

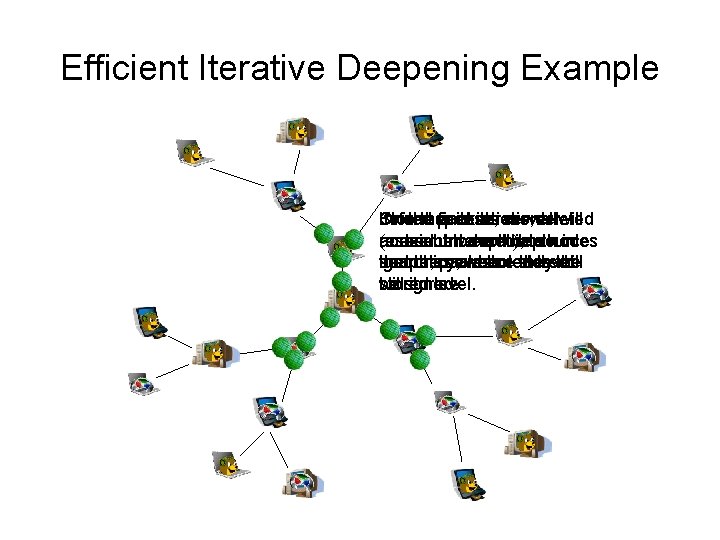

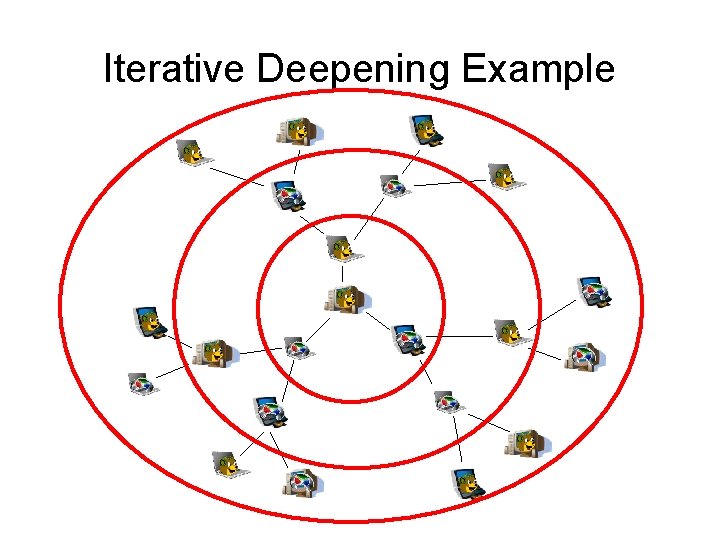

Iterative Deepening • Essentially, idea is multiple, sequential breadth-first searches • First, search to a shallow depth • If no results are returned, repeat search, but go to deeper depth

Iterative Deepening Example

![Iterative Deepening To implement more efficiently 3 proposed the following Assume message Iterative Deepening • To implement more efficiently, [3] proposed the following – Assume message](https://slidetodoc.com/presentation_image/058769a1f19137026a9682dd9998ca63/image-39.jpg)

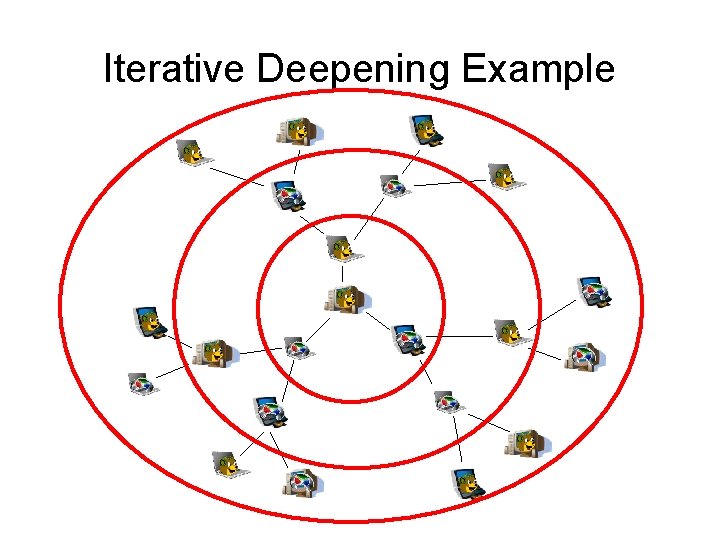

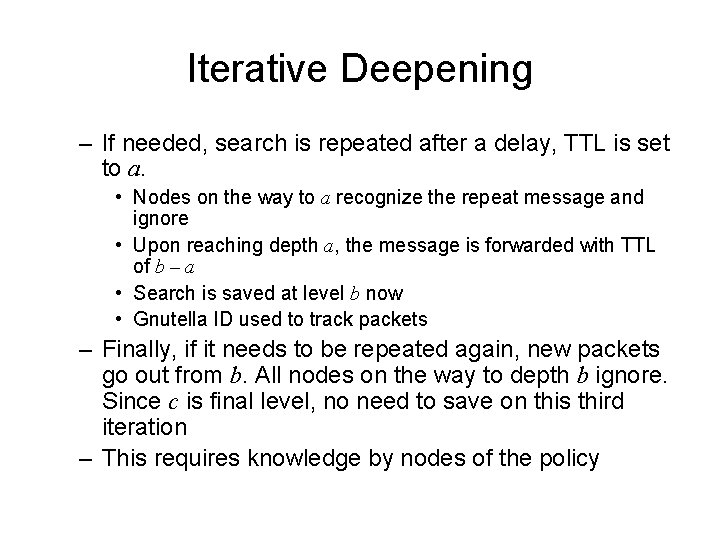

Iterative Deepening • To implement more efficiently, [3] proposed the following – Assume message depth tried first is a, followed by b, then finally c if needed – Nodes that receive search at depth a store the search temporarily – If originating node is satisfied, it doesn’t resend, nodes at depth a will delete the message after a time

Iterative Deepening – If needed, search is repeated after a delay, TTL is set to a. • Nodes on the way to a recognize the repeat message and ignore • Upon reaching depth a, the message is forwarded with TTL of b – a • Search is saved at level b now • Gnutella ID used to track packets – Finally, if it needs to be repeated again, new packets go out from b. All nodes on the way to depth b ignore. Since c is final level, no need to save on this third iteration – This requires knowledge by nodes of the policy

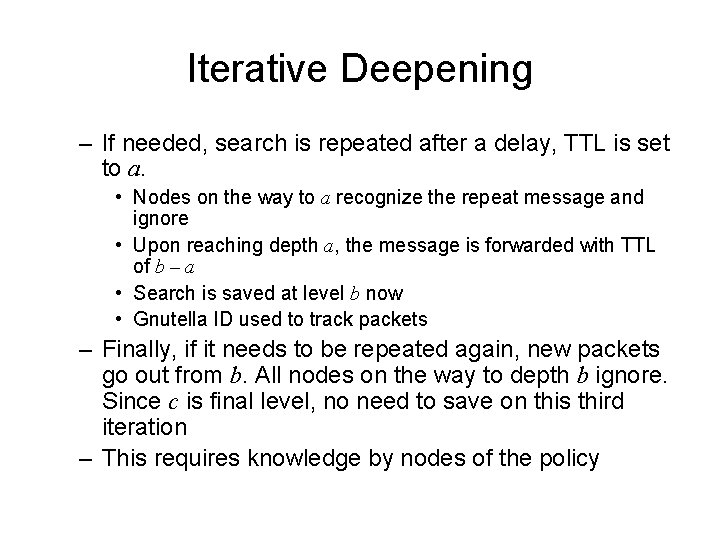

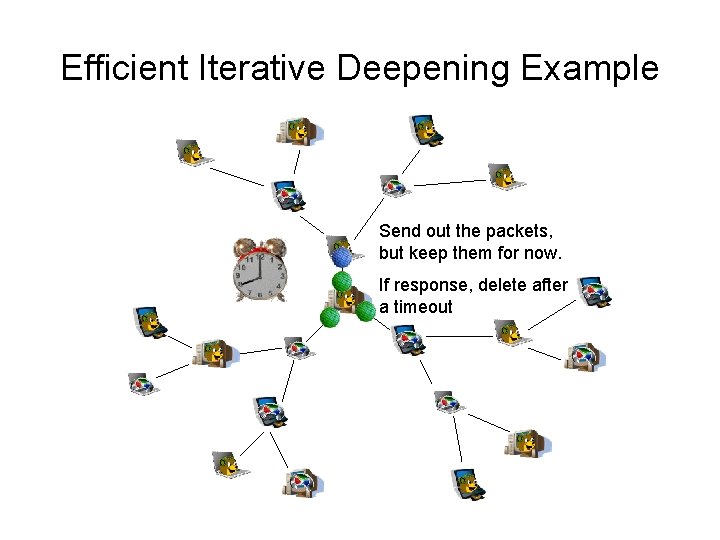

Efficient Iterative Deepening Example Send out the packets, but keep them for now. If response, delete after a timeout

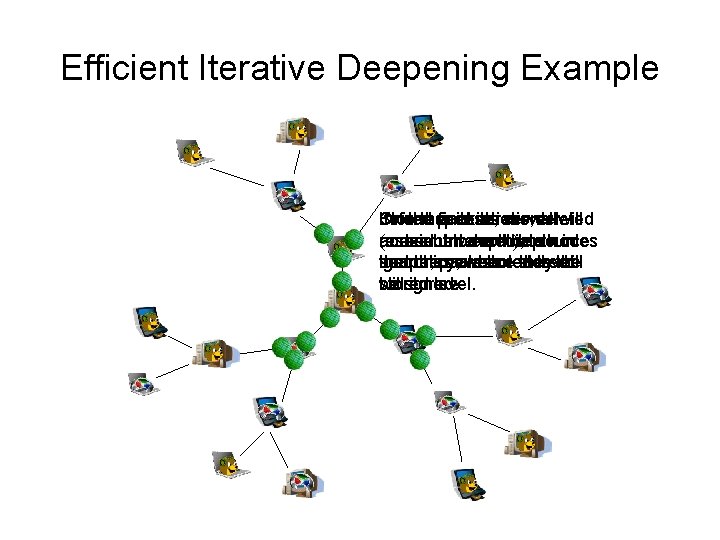

Efficient Iterative Deepening Example Inno On If Stored further the response, packets final iterations, iteration server are deleted all will (maximum resend. and nodes sent between Intermediate todepth), nextthe depth source nodes in searches ignore, the and policy, thepackets new are where stored notreach stored. they level the will stored be will stored. ignore. level.

Directed BFS • Directed BFS works by selecting a subset of the neighbors, and sending to these • You are trying to guess who is most likely to respond • That neighbor may pass on using standard BFS, or the neighbor may use Directed BFS to further limit its messages • Trick is finding a good way to select neighbors

Selection Strategies in BFS • • Random? Highest # results for recent queries Results w/low hop counts High message count Short message queue (flow control) Returns results quickly Has most neighbors Shortest latency

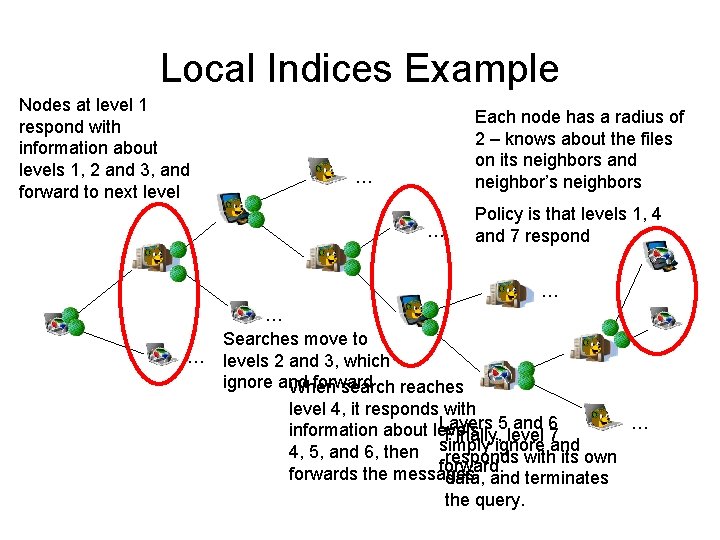

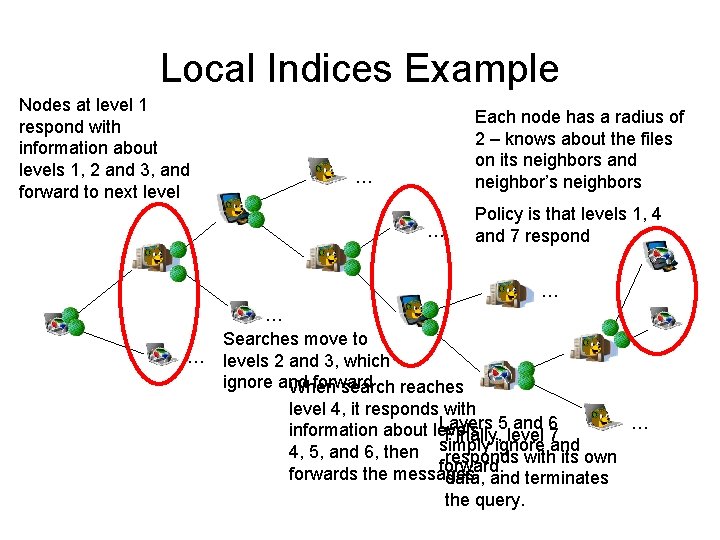

Local Indices • Idea is that a node maintains information about what files neighbors, and possibly neighbor’s neighbors store. “radius of knowledge” • All nodes know about radius, and know about policy – Policy lists which levels will respond and which will ignore messages – Servents look at TTL/Hops to determine if they process or ignore

Local Indices Example Nodes at level 1 respond with information about levels 1, 2 and 3, and forward to next level Each node has a radius of 2 – knows about the files on its neighbors and neighbor’s neighbors … … Policy is that levels 1, 4 and 7 respond … … … Searches move to levels 2 and 3, which ignore and forward When search reaches level 4, it responds with Layers 5 and 6 … information about levels Finally, level 7 ignore and 4, 5, and 6, then simply responds with its own forwards the messages. data, and terminates the query.

Local Indices • Memory issue of maintaining lists – Size is far below a megabyte for radius < 5 • Network issue of building list – Hit from extra packets

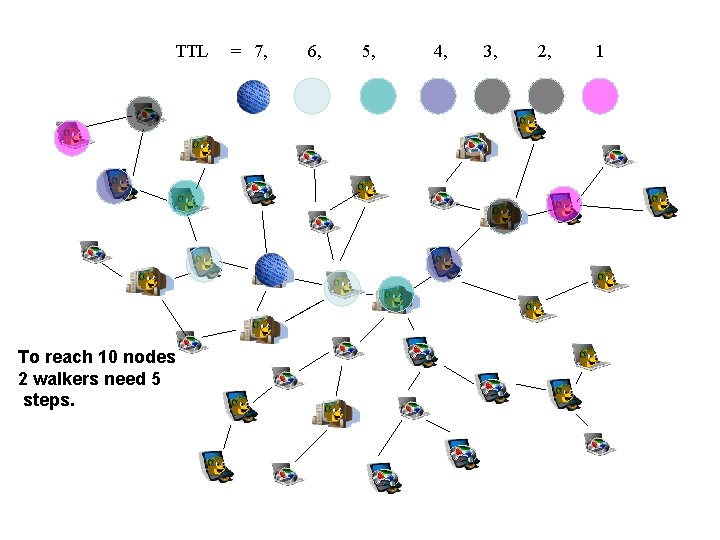

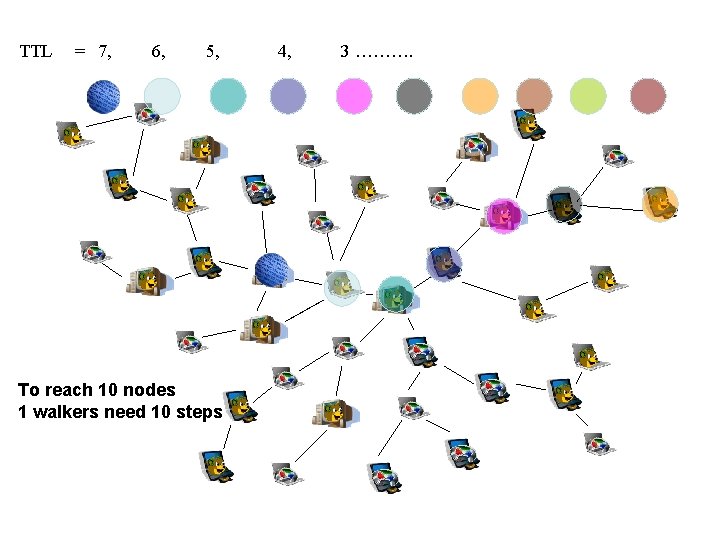

Random Walkers • Quite a different approach – Doesn't use TTLs in same way • One or a few messages are sent out a random link, if the object is found, return a hit, otherwise send to a different node • Choice of where to go next is essentially random • Periodically, when a node receives a query, it checks with source to see if query has been satisfied

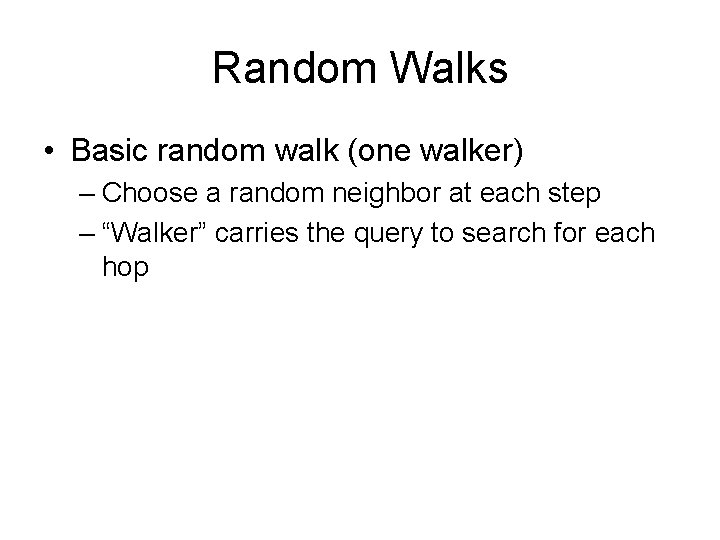

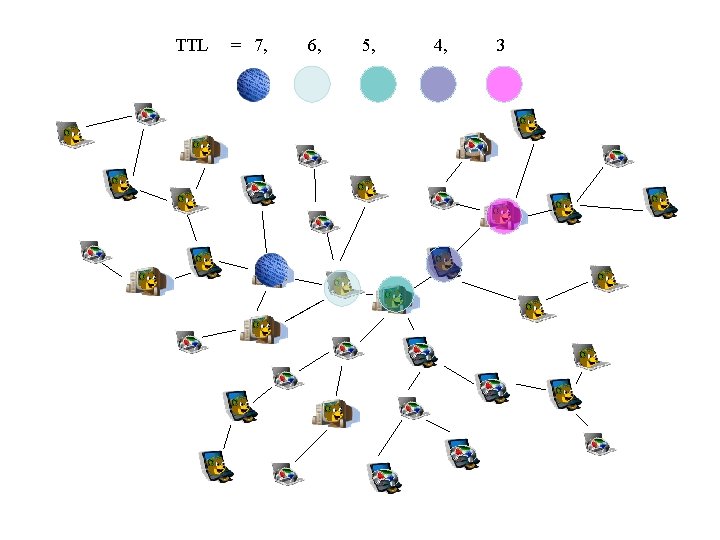

Random Walks • Basic random walk (one walker) – Choose a random neighbor at each step – “Walker” carries the query to search for each hop

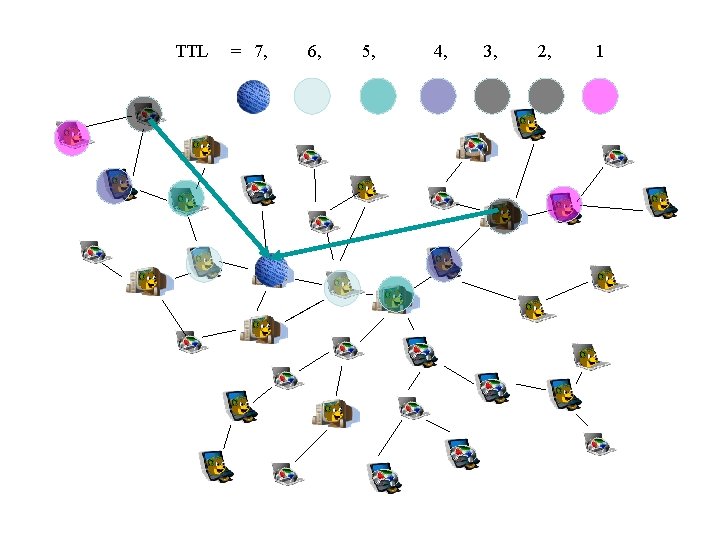

TTL = 7, 6, 5, 4, 3

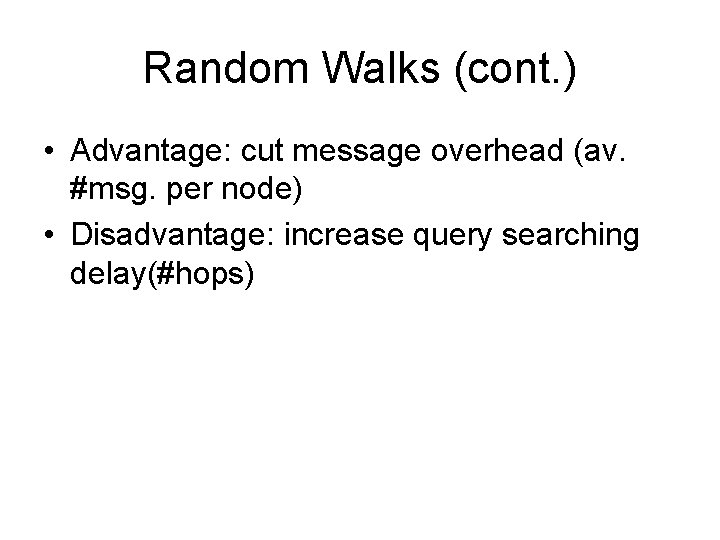

Random Walks (cont. ) • Advantage: cut message overhead (av. #msg. per node) • Disadvantage: increase query searching delay(#hops)

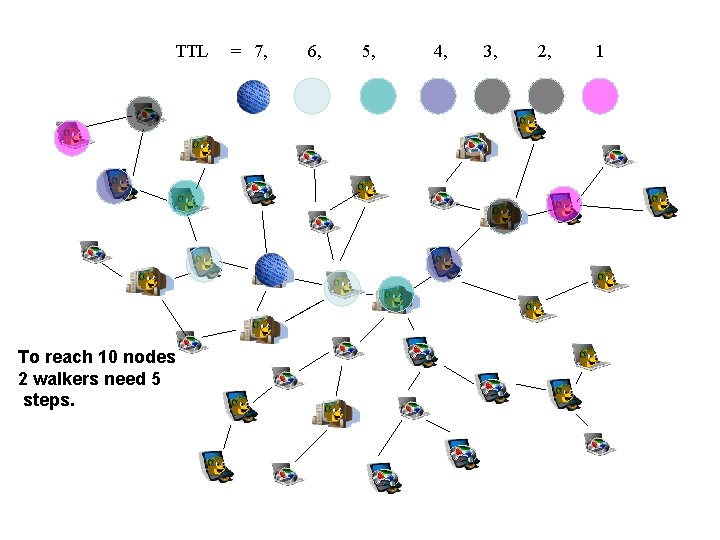

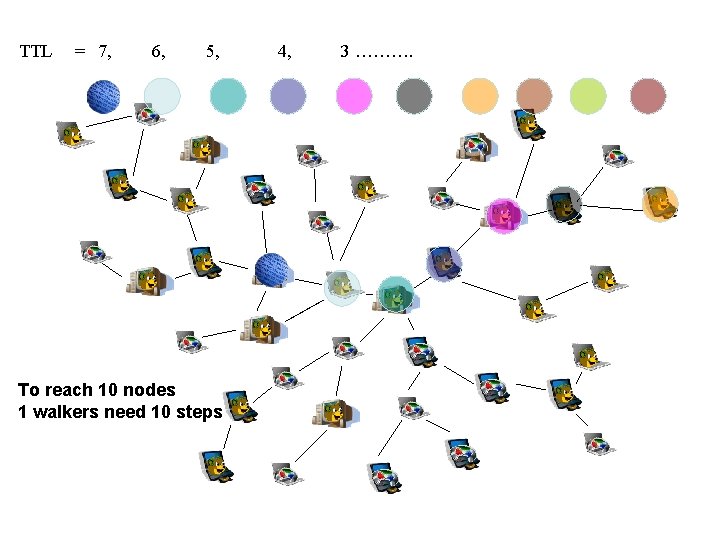

Multiple Parallel Random Walks (K Walker) • To decrease delay, increase walkers • Multiple Walkers – K walkers, T steps = 1 walker, k*T steps therefore cut the delay by factor of k.

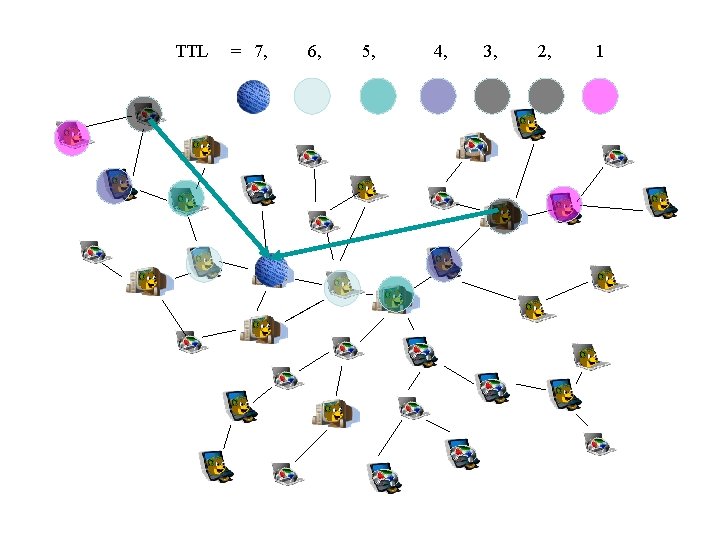

TTL To reach 10 nodes 2 walkers need 5 steps. = 7, 6, 5, 4, 3, 2, 1

TTL = 7, 6, 5, To reach 10 nodes 1 walkers need 10 steps 4, 3 ……….

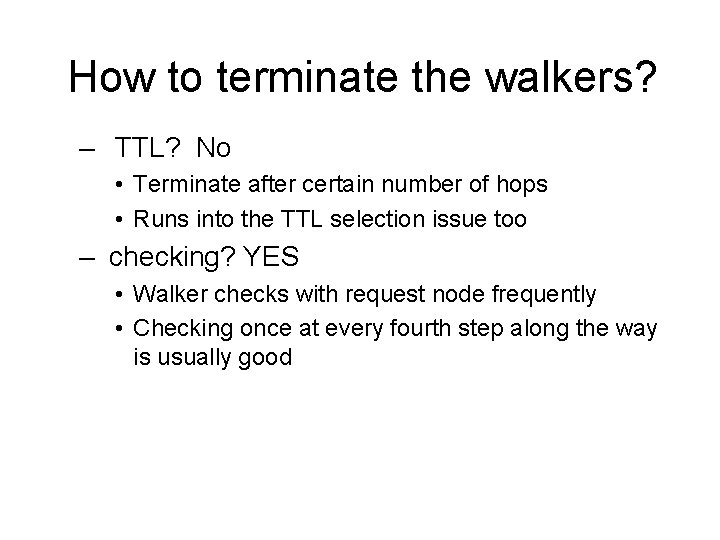

How to terminate the walkers? – TTL? No • Terminate after certain number of hops • Runs into the TTL selection issue too – checking? YES • Walker checks with request node frequently • Checking once at every fourth step along the way is usually good

TTL = 7, 6, 5, 4, 3, 2, 1

One improvement: State Keeping • Each query has a unique ID and All its K walkers are tagged with that ID • For each ID, a node only forwards the query to a neighbor who is new to this query

Issues • Several alternatives (Local Indices, Iterative Deepening) require a global policy to be understood by all nodes • Sharing information about file index (Local Indices) or even statistics (Directed BFS) leads to possible security risks • Most, require at least some modification to the servents

Evaluating Techniques • Do the results suffer? – Most of these assume a request to be satisfied with a number of hits – typically 50 -100. – Time for those results? • • What sort of network traffic does this add? What sort of processing is needed? What sort of memory is needed? Local state and increased client complexity?

Iterative Deepening • Savings depend on how long to wait between iterations, depth of each search – 80% bandwidth, 60% processing for wait of 8 seconds between iterations • A policy with depths of 5, 6, 7 and 6 second delays optimal – Saves 70% bandwidth, 50% processing – Adds only ~30% to time to satisfy • Some state needed for knowing policy, storing packets

Directed BFS • Using neighbors with greatest number of results and lowest avg. response time worked best – Past performance is an indicator of future performance • Both had probability of satisfying >85% of a BFS • About 70% less bandwidth and processing • Higher cost than iterative deepening, but slightly faster

Local Indices • Same results claimed as BFS • No timing results, since simulated on existing data • Traffic is reduced by 60%, processing by 50% for a radius of 1 • Index size is a factor – radius of 1 71 KB, but 7 is 21 MB! • Bandwidth actually increases for large r – too much traffic to maintain indices

Random Walkers • Can have large gain in efficiency if – State to prevent duplicates – Good policy to terminate once satisfied (not TTL based) – Very good results obtained for low number of hops (as low as 8, which is similar to depth used for floods) – Looks like a promising area of research, perhaps combined with some metrics used in Directed BFS to select nodes

Conclusion • Almost all improve, but trade-offs – Most need to maintain state, increased coding complexity – Usually less results, slower performance – Security implications of more information sharing • Good deal of research left to be done in this area, although most work appears to be a year or more old

![References 1 Gnutella RFC http rfcgnutella sourceforge netsrcrfc06 draft html 2 Yang and GarciaMolina References [1] Gnutella RFC (http: //rfc-gnutella. sourceforge. net/src/rfc-0_6 draft. html) [2] Yang and Garcia-Molina,](https://slidetodoc.com/presentation_image/058769a1f19137026a9682dd9998ca63/image-65.jpg)

References [1] Gnutella RFC (http: //rfc-gnutella. sourceforge. net/src/rfc-0_6 draft. html) [2] Yang and Garcia-Molina, Comparing Hybrid Peer-to-Peer Systems, Technical Report (http: //dbpubs. stanford. edu: 8090/pub/2000 -35) [3] Yang and Garcia-Molina, Improving Search in Peer-to-Peer Networks, in Proc. of the 22 nd International Conference on Distributed Computing Systems (ICDCS’ 02), June 2002 (http: //dbpubs. stanford. edu: 8090/pub/2002 -28) [4] Q. Lv, P. Cao, E. Cohen, K. Li and S. Shenker, Search and Replication in Unstructured Peer-to-Peer Networks, in Proc. Of the ACM ICS, 2002 (http: //parapet. ee. princeton. edu/~sigm 2002/papers/p 258 -lv. pdf) [5] Yang and Garcia-Molina, Improving Search in Peer-to-Peer Networks, Technical Report Version of [3] (http: //dbpubs. stanford. edu: 8090/pub/2001 -47)