Globus Trends & Future Directions

Dr. Dan Fraser
Director, Community Driven Improvement of Globus Software

Proposed Globus BOF Outline

- What is Globus?
  - Relationship to other programs: UNICORE, gLite, OMII, …
- Where is Globus going?
  - Community building via Incubator Projects
  - Emphasis on benefits to HEP computing
    - GRAM (job management)
    - GridFTP (fast file transfers): NorduGrid, FNAL
    - Data Placement Service
    - Workspaces: STAR, Alice
    - Service creation (Service Oriented Science)
- How can we work together?

The Grid

Enable “coordinated resource sharing & problem solving in dynamic, multi-institutional virtual organizations.” (Source: “The Anatomy of the Grid”)

- Access to shared resources: virtualization, allocation, management
- With predictable behaviors: provisioning, quality of service
- In dynamic, heterogeneous environments: standards-based interfaces and protocols

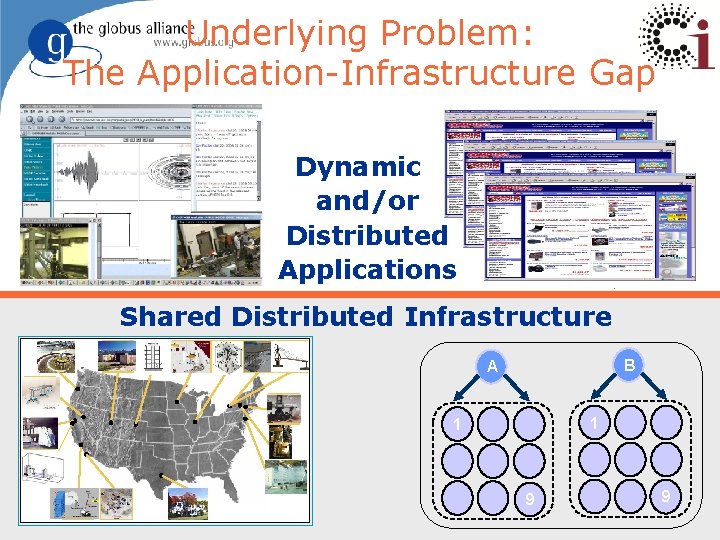

Underlying Problem: The Application-Infrastructure Gap

Diagram labels: Dynamic and/or Distributed Applications; Shared Distributed Infrastructure

More Specifically, I May Want To …

- Create a service for use by my colleagues
- Manage who is allowed to access my service (or my experimental data or …)
- Ensure reliable & secure distribution of data from my lab to my partners
- Run 10,000 jobs on whatever computers I can get hold of
- Monitor the status of the different resources to which I have access

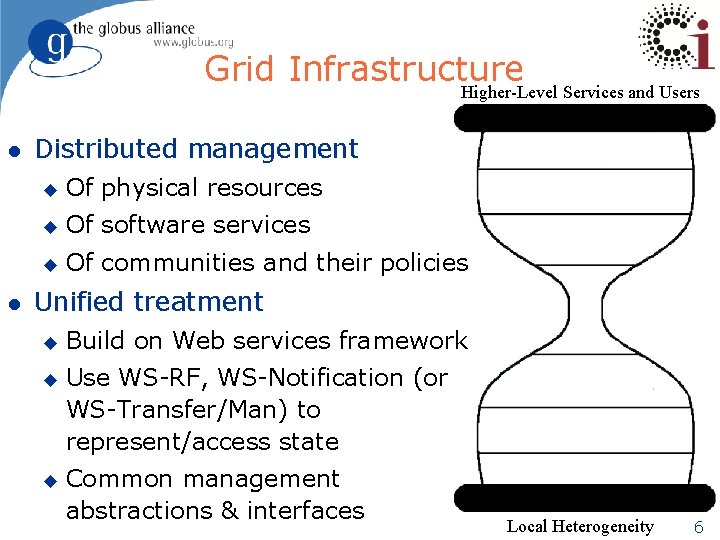

Grid Infrastructure

- Distributed management
  - Of physical resources
  - Of software services
  - Of communities and their policies
- Unified treatment
  - Build on Web services framework
  - Use WS-RF, WS-Notification (or WS-Transfer/Man) to represent/access state
  - Common management abstractions & interfaces

Diagram labels: Higher-Level Services and Users; Local Heterogeneity

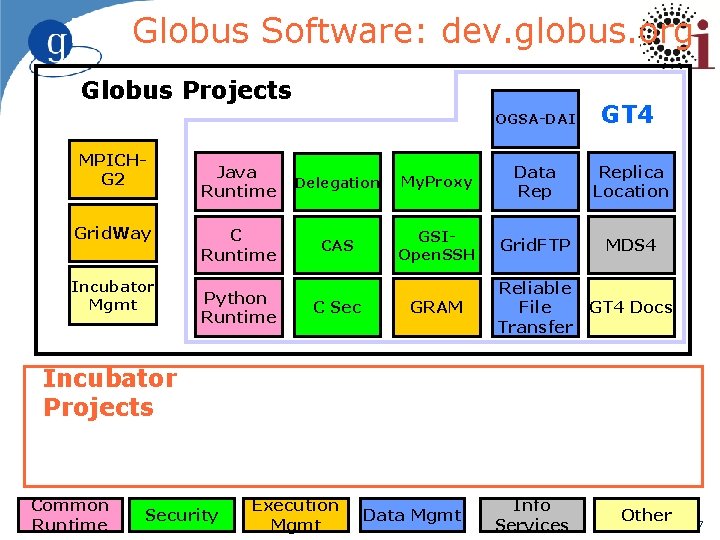

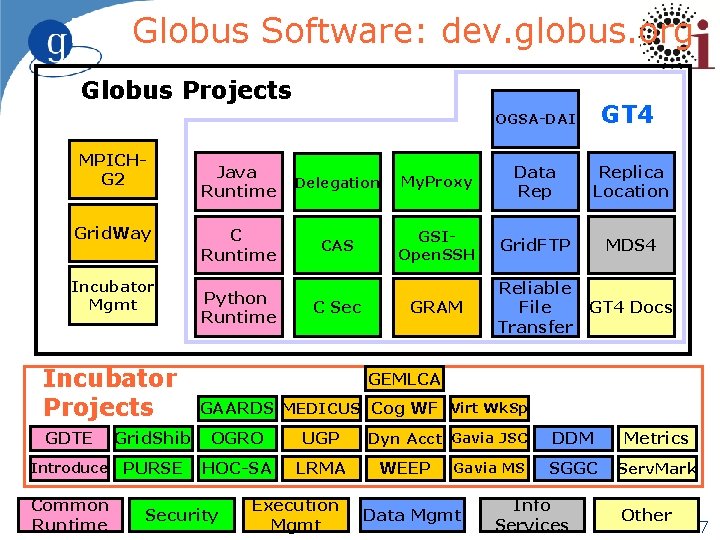

Globus Software: dev.globus.org

- Globus Projects: MPICH-G2, GridWay, Incubator Mgmt, OGSA-DAI, GT4, Java Runtime, Delegation, MyProxy, Data Replica Location, C Runtime, CAS, GSI-OpenSSH, GridFTP, MDS4, GRAM, Reliable File Transfer, GT4 Docs, Python Runtime, C Sec
- Incubator Projects
- Categories: Common Runtime, Security, Execution Mgmt, Data Mgmt, Info Services, Other

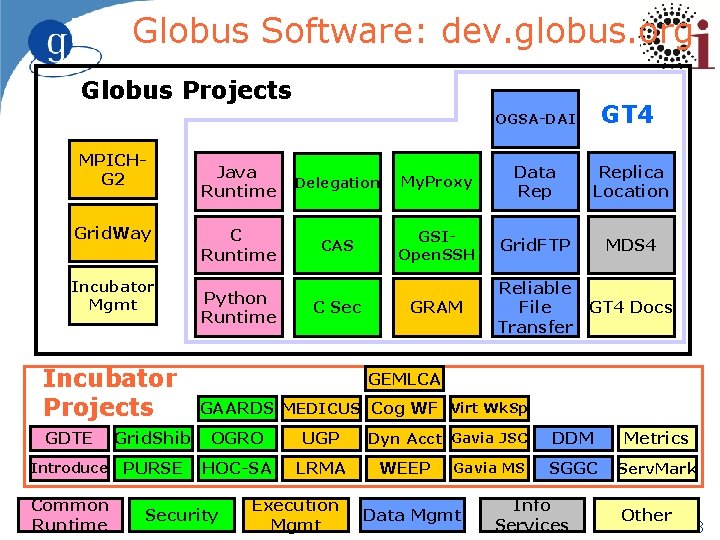

Globus Software: dev.globus.org

- Globus Projects: MPICH-G2, GridWay, Incubator Mgmt, OGSA-DAI, GT4, Java Runtime, Delegation, MyProxy, Data Replica Location, C Runtime, CAS, GSI-OpenSSH, GridFTP, MDS4, GRAM, Reliable File Transfer, GT4 Docs, Python Runtime, C Sec
- Incubator Projects: GEMLCA, GAARDS, MEDICUS, CoG WF, Virt WkSp, GDTE, GridShib, OGRO, UGP, Introduce, PURSE, HOC-SA, LRMA, Dyn Acct, Gavia JSC, WEEP, Gavia MS, DDM, Metrics, SGGC, ServMark
- Categories: Common Runtime, Security, Execution Mgmt, Data Mgmt, Info Services, Other

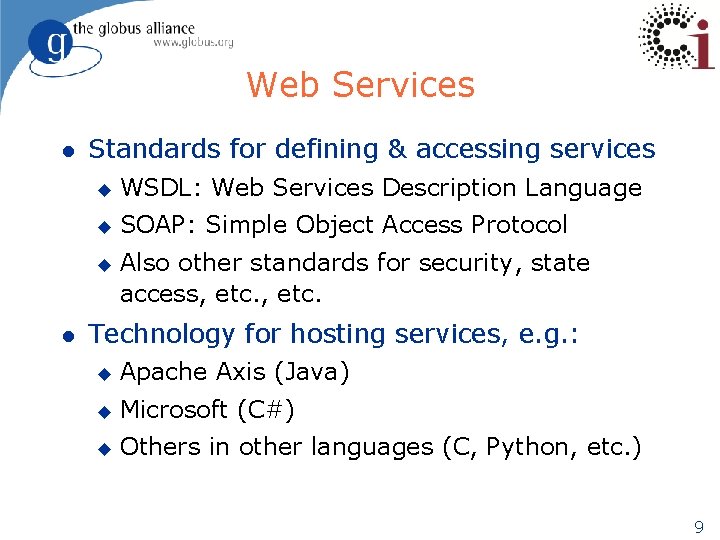

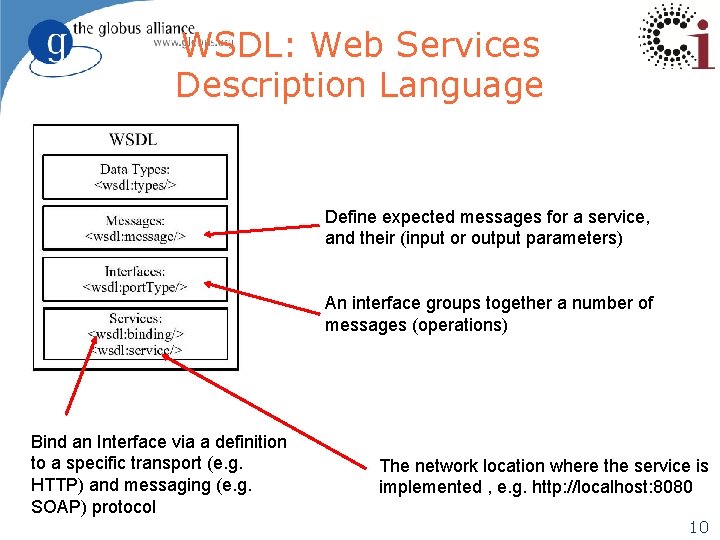

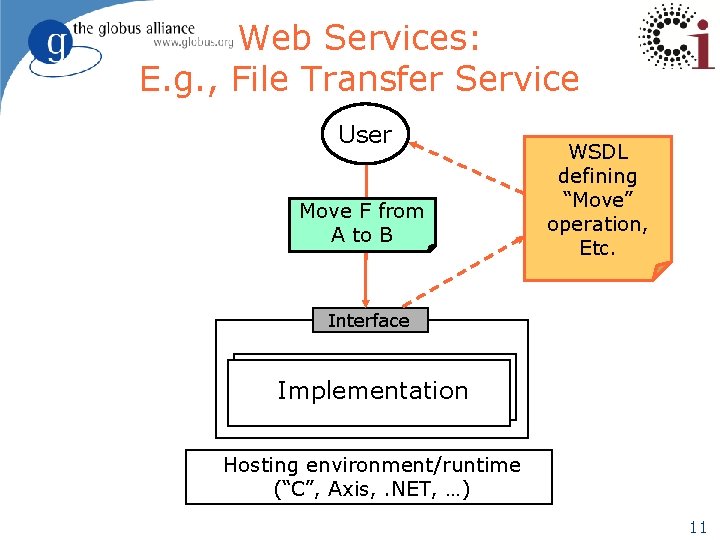

Web Services

- Standards for defining & accessing services
  - WSDL: Web Services Description Language
  - SOAP: Simple Object Access Protocol
  - Also other standards for security, state access, etc.
- Technology for hosting services, e.g.:
  - Apache Axis (Java)
  - Microsoft (C#)
  - Others in other languages (C, Python, etc.)

WSDL: Web Services Description Language

- Define the expected messages for a service and their input or output parameters
- An interface groups together a number of messages (operations)
- Bind an interface, via a definition, to a specific transport (e.g., HTTP) and messaging (e.g., SOAP) protocol
- The network location where the service is implemented, e.g., http://localhost:8080

Web Services: E.g., File Transfer Service

- User request: Move F from A to B
- Interface: WSDL defining the “Move” operation, etc.
- Implementation
- Hosting environment/runtime (“C”, Axis, .NET, …)

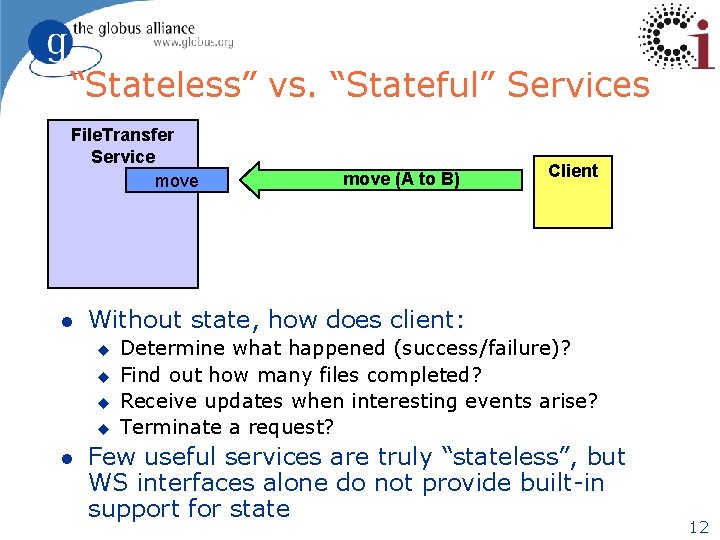

“Stateless” vs. “Stateful” Services

Diagram: the Client calls move (A to B) on the FileTransferService.

- Without state, how does the client:
  - Determine what happened (success/failure)?
  - Find out how many files completed?
  - Receive updates when interesting events arise?
  - Terminate a request?
- Few useful services are truly “stateless”, but WS interfaces alone do not provide built-in support for state

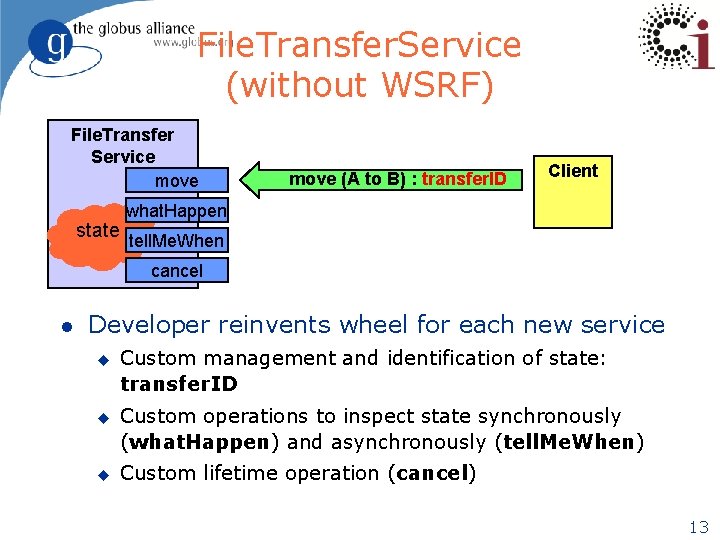

FileTransferService (without WSRF)

Diagram: the Client calls move (A to B), which returns a transferID; the custom operations whatHappen, tellMeWhen, and cancel act on the service's state.

- Developer reinvents the wheel for each new service
  - Custom management and identification of state: transferID
  - Custom operations to inspect state synchronously (whatHappen) and asynchronously (tellMeWhen)
  - Custom lifetime operation (cancel)

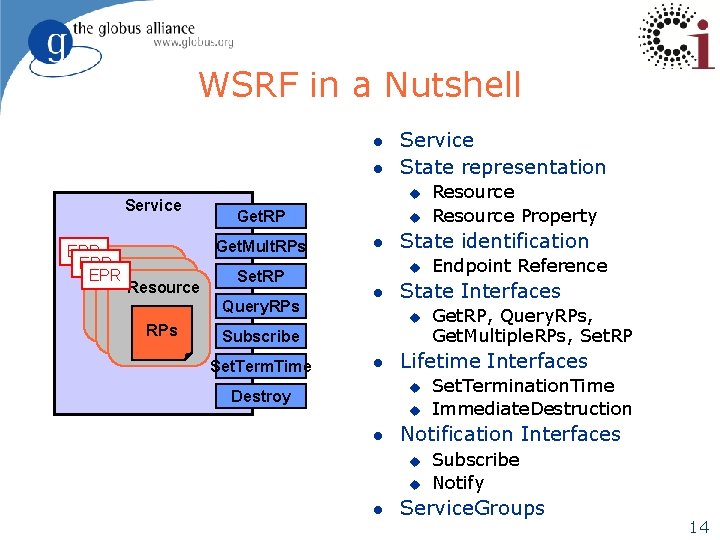

WSRF in a Nutshell

- Service
- State representation
  - Resource
  - Resource Property
- State identification
  - Endpoint Reference
- State interfaces
  - GetRP, QueryRPs, GetMultipleRPs, SetRP
- Lifetime interfaces
  - SetTerminationTime
  - ImmediateDestruction
- Notification interfaces
  - Subscribe
  - Notify
- ServiceGroups

Diagram labels: Service, EPR, Resource, RPs, GetRP, GetMultRPs, SetRP, QueryRPs, Subscribe, SetTermTime, Destroy

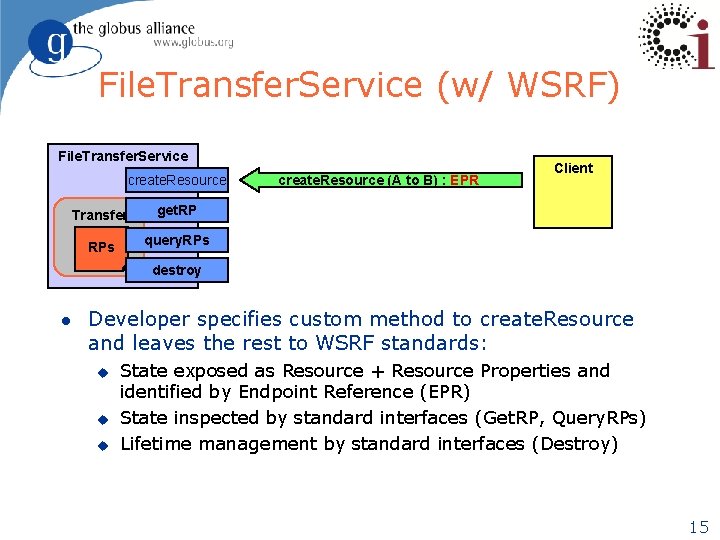

FileTransferService (w/ WSRF)

Diagram: the Client calls createResource (A to B), which returns an EPR; the Transfer resource and its RPs are then reached through getRP, queryRPs, and destroy.

- Developer specifies a custom method, createResource, and leaves the rest to WSRF standards:
  - State exposed as Resource + Resource Properties and identified by Endpoint Reference (EPR)
  - State inspected by standard interfaces (GetRP, QueryRPs)
  - Lifetime management by standard interfaces (Destroy)
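A minimal Python sketch of the pattern on this slide, assuming an in-memory toy service. The class name, method names, property names, and the `(address, key)` tuple standing in for an EPR are all hypothetical illustrations; this is not the Globus Toolkit API or a WSRF implementation.

```python
import uuid


class FileTransferService:
    """Toy in-memory service following the WSRF pattern (not the Globus API)."""

    def __init__(self, address):
        self.address = address   # hypothetical service URL
        self._resources = {}     # resource key -> resource properties

    def create_resource(self, source, destination):
        """The one custom operation: create a transfer and return its EPR."""
        key = uuid.uuid4().hex
        self._resources[key] = {
            "source": source,
            "destination": destination,
            "status": "pending",
            "filesCompleted": 0,
        }
        return (self.address, key)  # EPR modeled as (address, key)

    def _properties(self, epr):
        address, key = epr
        if address != self.address or key not in self._resources:
            raise KeyError(f"unknown resource: {epr!r}")
        return self._resources[key]

    def get_rp(self, epr, name):
        """Standard-style inspection of a single resource property."""
        return self._properties(epr)[name]

    def query_rps(self, epr, predicate):
        """Standard-style query: properties for which predicate(name, value) holds."""
        return {n: v for n, v in self._properties(epr).items() if predicate(n, v)}

    def destroy(self, epr):
        """Standard-style lifetime management: remove the resource."""
        self._properties(epr)  # validates the EPR first
        del self._resources[epr[1]]
```

The client keeps only the EPR returned by `create_resource`; inspection and destruction go through the same generic operations for any resource type, which is the point the slide makes about not reinventing state handling per service.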

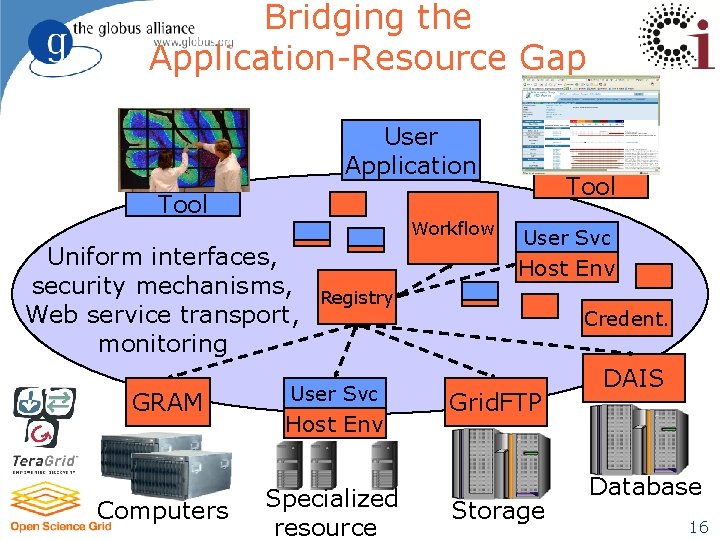

Bridging the Application-Resource Gap

Diagram labels: User, Application, Tool, Workflow; uniform interfaces, security mechanisms, Web service transport, monitoring; GRAM (computers), GridFTP (storage), DAIS (database), user services in hosting environments (specialized resource), Registry, Credent.

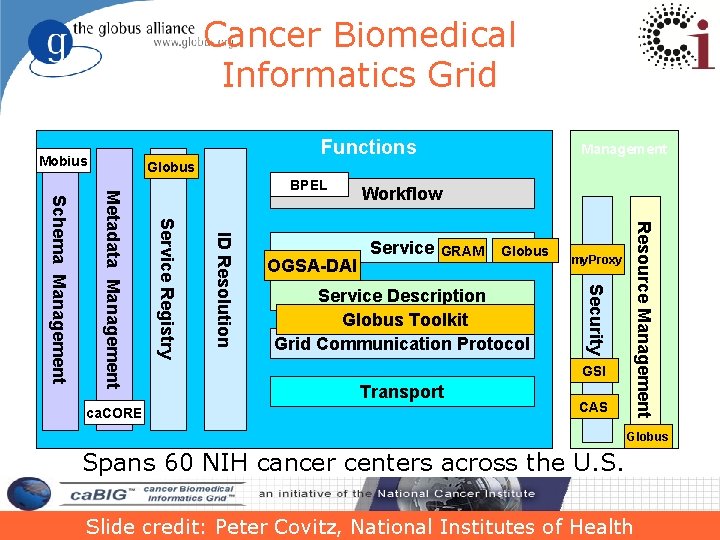

Cancer Biomedical Informatics Grid

Diagram labels: Functions Mobius Management Globus Service GRAM Globus Service Description Globus Toolkit Grid Communication Protocol Resource Management OGSA-DAI Workflow myProxy Security ID Resolution Service Registry Metadata Management Schema Management caCORE BPEL GSI Transport CAS Globus

Spans 60 NIH cancer centers across the U.S.

Slide credit: Peter Covitz, National Institutes of Health

Relationship to Other Programs

- UNICORE
- OMII
- gLite
- Supporting common standards
- Additional ways of working together?

Where is Globus today?

- http://incubator.globus.org/metrics
  - > 75,000 GT4 downloads
  - > 95% are production downloads
- Maintaining production quality code
- Supporting the most important OGF standards
- Innovating with new features
- Incorporating community involvement

The Globus Toolkit: “Standard Plumbing” for the Grid

- Not turnkey solutions, but building blocks & tools for application developers & system integrators
  - Some components (e.g., file transfer) go farther than others (e.g., remote job submission) toward end-user relevance
- Easier to reuse than to reinvent
  - Compatibility with other Grid systems comes for free
- Today the majority of the GT public interfaces are usable by application developers and system integrators
  - Relatively few end-user interfaces
  - In general, not intended for direct use by end users (scientists, engineers, marketing specialists)

Where is Globus Going?

- Service Oriented Science (SOS)
- Community involvement
  - dev.globus.org
    - Participate in the discussions
  - Incubators
    - Add your innovative contributions

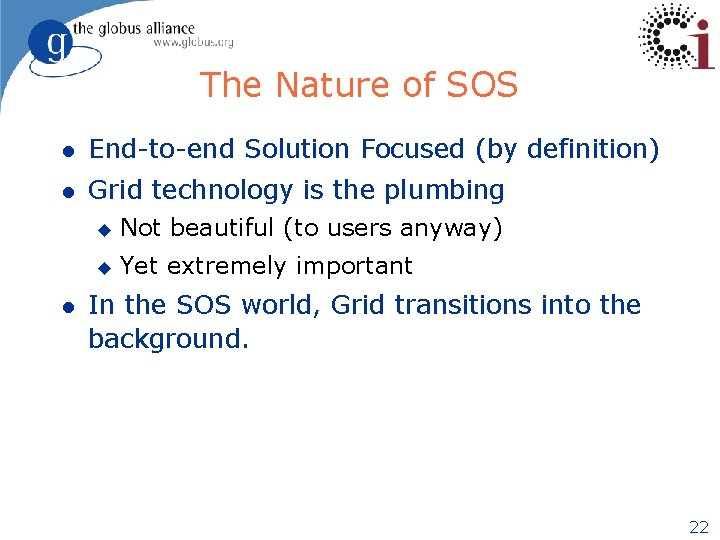

The Nature of SOS

- End-to-end solution focused (by definition)
- Grid technology is the plumbing
  - Not beautiful (to users anyway)
  - Yet extremely important
- In the SOS world, Grid transitions into the background.

How are we getting there?

- Our community is helping us!
- Also through our ongoing internal development, of course.

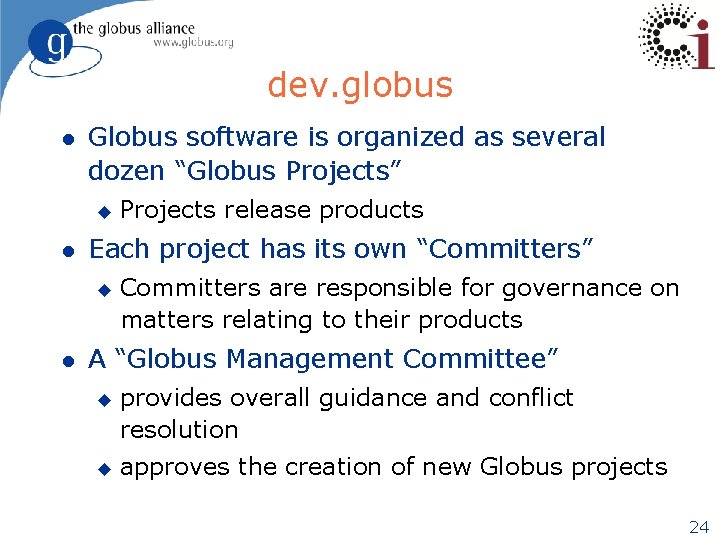

dev.globus

- Globus software is organized as several dozen “Globus Projects”
  - Projects release products
- Each project has its own “Committers”
  - Committers are responsible for governance on matters relating to their products
- A “Globus Management Committee”
  - provides overall guidance and conflict resolution
  - approves the creation of new Globus projects

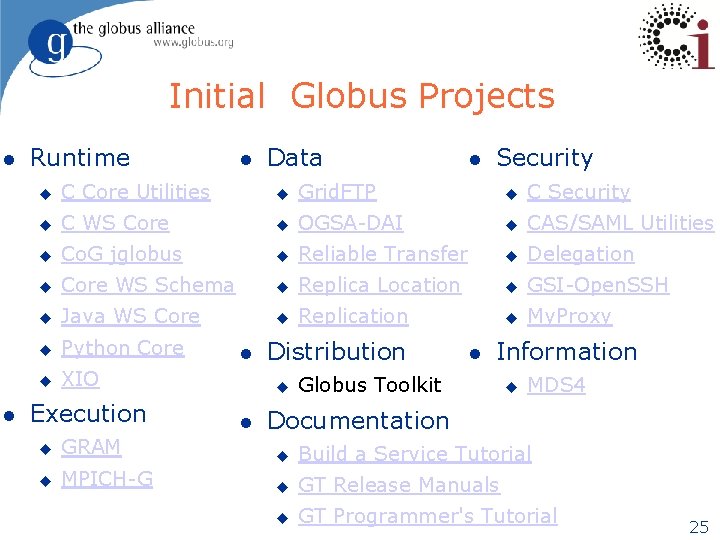

Initial Globus Projects

- Runtime
  - C Core Utilities
  - C WS Core
  - CoG jglobus
  - Core WS Schema
  - Java WS Core
  - Python Core
  - XIO
- Data
  - GridFTP
  - OGSA-DAI
  - Reliable Transfer
  - Replica Location
  - Replication
- Security
  - C Security
  - CAS/SAML Utilities
  - Delegation
  - GSI-OpenSSH
  - MyProxy
- Execution
  - GRAM
  - MPICH-G
- Information
  - MDS4
- Distribution
  - Globus Toolkit
- Documentation
  - Build a Service Tutorial
  - GT Release Manuals
  - GT Programmer's Tutorial

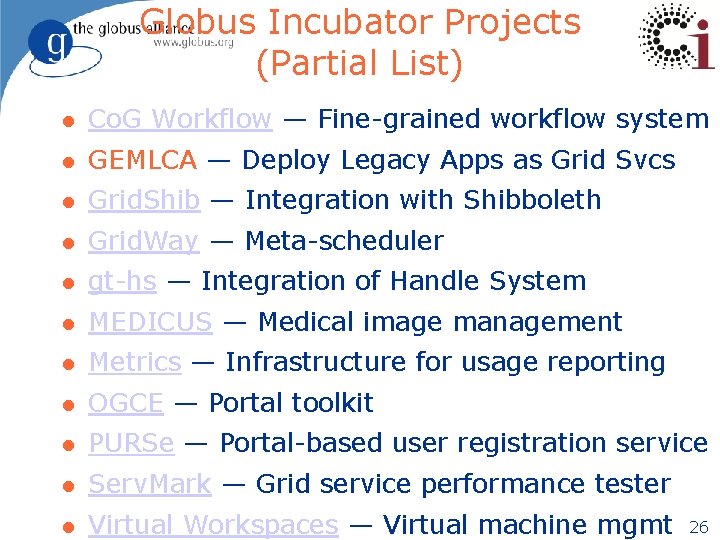

Globus Incubator Projects (Partial List)

- CoG Workflow: fine-grained workflow system
- GEMLCA: deploy legacy apps as Grid services
- GridShib: integration with Shibboleth
- GridWay: meta-scheduler
- gt-hs: integration of Handle System
- MEDICUS: medical image management
- Metrics: infrastructure for usage reporting
- OGCE: portal toolkit
- PURSe: portal-based user registration service
- ServMark: Grid service performance tester
- Virtual Workspaces: virtual machine management

http://dev.globus.org/wiki/Incubator/Introduce

Shannon Hastings, hastings@bmi.osu.edu
Multiscale Computing Laboratory, Department of Biomedical Informatics, The Ohio State University

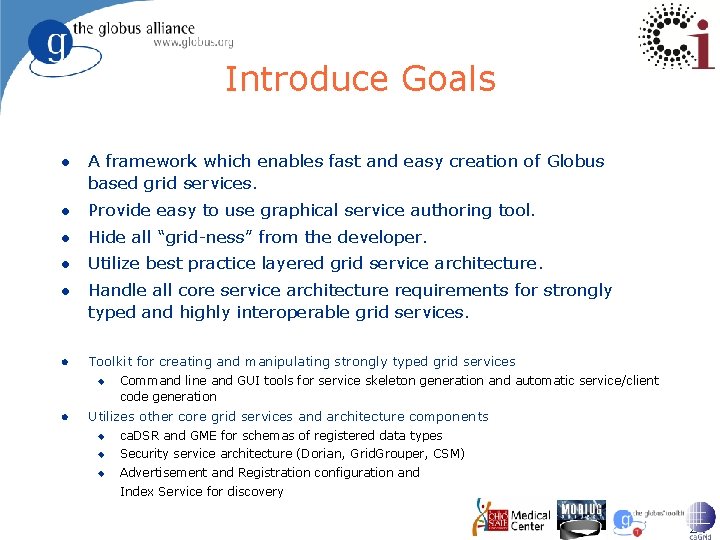

Introduce Goals

- A framework which enables fast and easy creation of Globus-based grid services.
- Provide an easy-to-use graphical service authoring tool.
- Hide all “grid-ness” from the developer.
- Utilize best-practice layered grid service architecture.
- Handle all core service architecture requirements for strongly typed and highly interoperable grid services.
- Toolkit for creating and manipulating strongly typed grid services
  - Command line and GUI tools for service skeleton generation and automatic service/client code generation
- Utilizes other core grid services and architecture components
  - caDSR and GME for schemas of registered data types
  - Security service architecture (Dorian, GridGrouper, CSM)
  - Advertisement and Registration configuration and Index Service for discovery

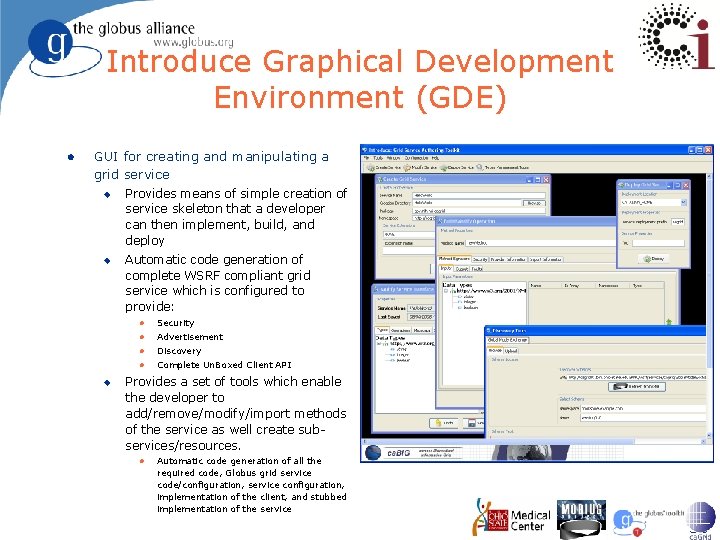

Introduce Graphical Development Environment (GDE)

- GUI for creating and manipulating a grid service
  - Provides a simple way to create a service skeleton that a developer can then implement, build, and deploy
  - Automatic code generation of a complete WSRF-compliant grid service which is configured to provide:
    - Security
    - Advertisement
    - Discovery
    - Complete unboxed client API
  - Provides a set of tools which enable the developer to add/remove/modify/import methods of the service as well as create subservices/resources.
    - Automatic code generation of all the required code, Globus grid service code/configuration, service configuration, implementation of the client, and stubbed implementation of the service

Invitation to add your Incubator

- http://dev.globus.org
- Leverage the Globus infrastructure for your project.

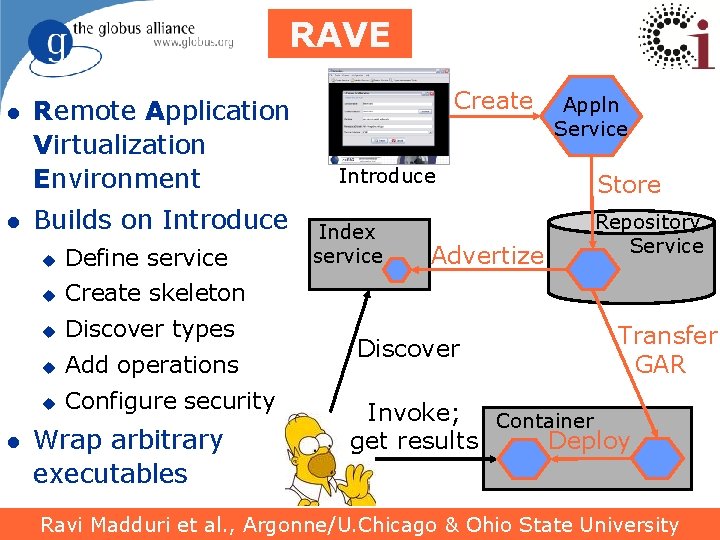

RAVE

- Remote Application Virtualization Environment
- Builds on Introduce
  - Define service
  - Create skeleton
  - Discover types
  - Add operations
  - Configure security
- Wrap arbitrary executables

Diagram labels: Create (Introduce), Index service, Advertise, Discover, Appln Service, Store, Repository Service, Transfer GAR, Container, Deploy, Invoke; get results

Ravi Madduri et al., Argonne/U. Chicago & Ohio State University

RAVE Collaboration

- We are interested in collaborating…
- If you have an application you want to expose as a Grid service, let us know
- Questions?

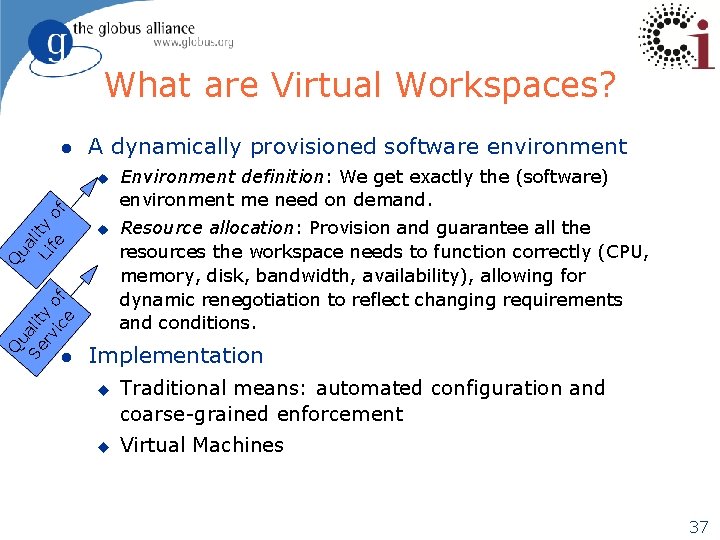

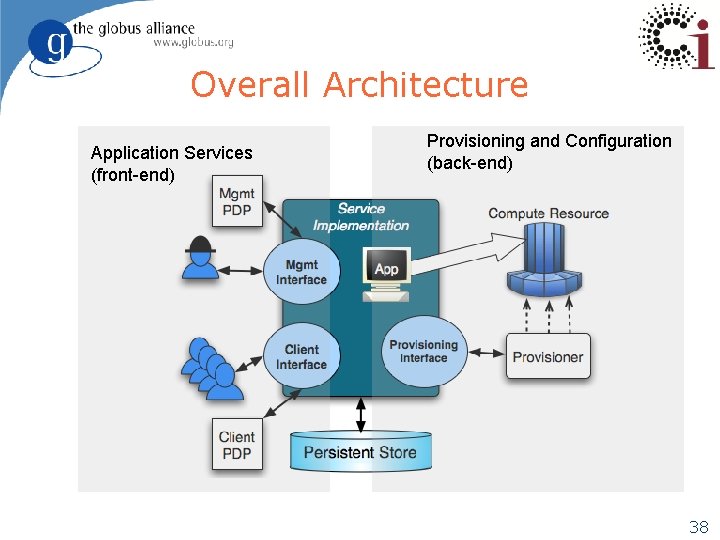

What are Virtual Workspaces?

- A dynamically provisioned software environment
  - Environment definition (quality of life): we get exactly the (software) environment we need on demand.
  - Resource allocation (quality of service): provision and guarantee all the resources the workspace needs to function correctly (CPU, memory, disk, bandwidth, availability), allowing for dynamic renegotiation to reflect changing requirements and conditions.
- Implementation
  - Traditional means: automated configuration and coarse-grained enforcement
  - Virtual machines

Overall Architecture

Diagram labels: Application Services (front-end); Provisioning and Configuration (back-end)

Challenges

- How can we automate providing applications as services?
  - Integrate code into a services framework
  - Sharing across community members
  - Composition of analysis capability in workflows
- How can we provision platforms for application execution in response to time-varying demand?
  - Isolating users from details concerning resource availability
  - Configuring and maintaining application environments
  - On-demand provisioning of application platforms

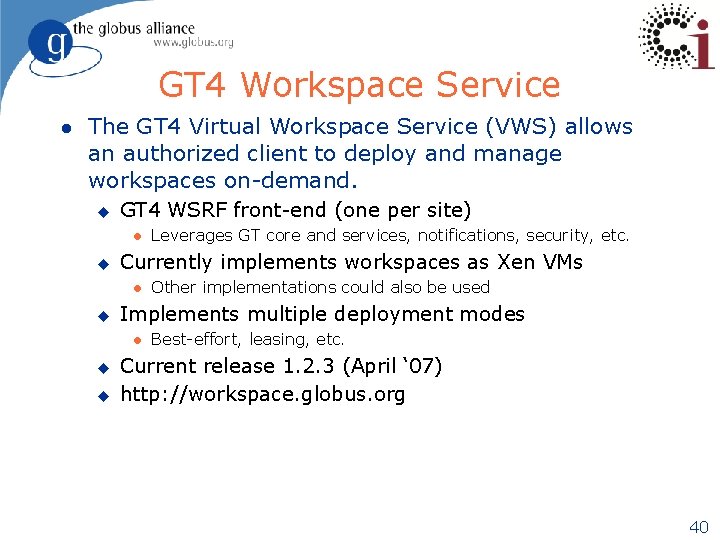

GT4 Workspace Service

- The GT4 Virtual Workspace Service (VWS) allows an authorized client to deploy and manage workspaces on demand.
  - GT4 WSRF front-end (one per site)
    - Leverages GT core and services, notifications, security, etc.
  - Currently implements workspaces as Xen VMs
    - Other implementations could also be used
  - Implements multiple deployment modes
    - Best-effort, leasing, etc.
  - Current release 1.2.3 (April '07)
  - http://workspace.globus.org

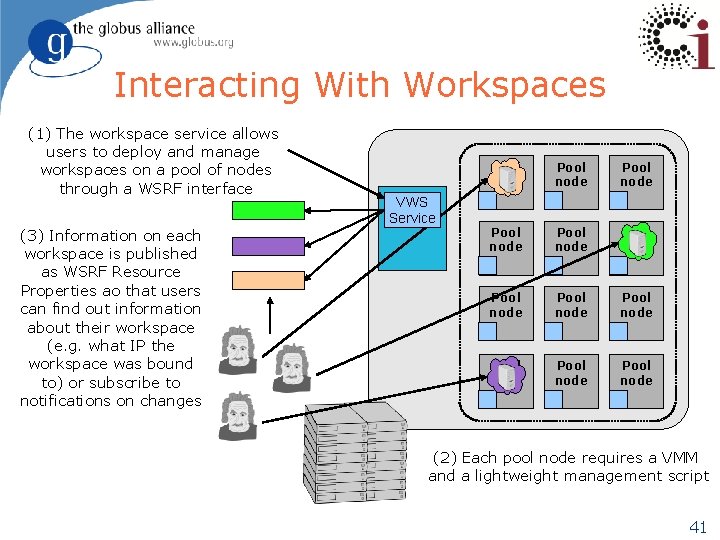

Interacting With Workspaces

1. The workspace service allows users to deploy and manage workspaces on a pool of nodes through a WSRF interface.
2. Each pool node requires a VMM and a lightweight management script.
3. Information on each workspace is published as WSRF Resource Properties so that users can find out information about their workspace (e.g., what IP the workspace was bound to) or subscribe to notifications on changes.

Diagram labels: VWS Service; three pool nodes

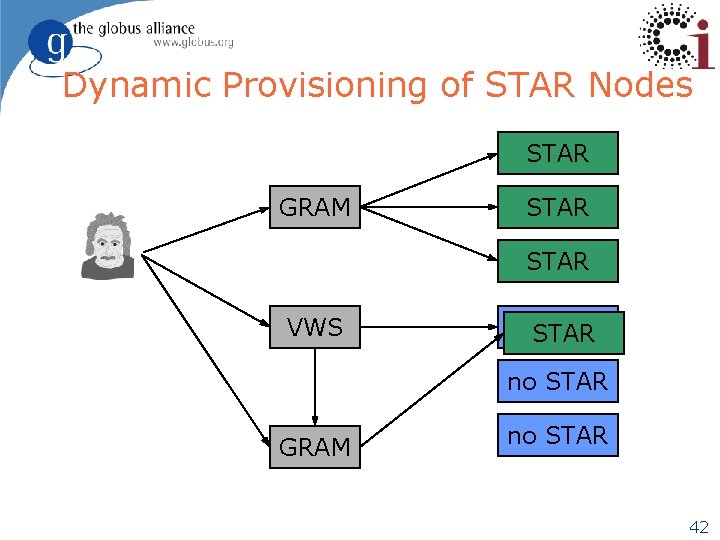

Dynamic Provisioning of STAR Nodes

Diagram labels: VWS; GRAM; nodes marked STAR or no STAR

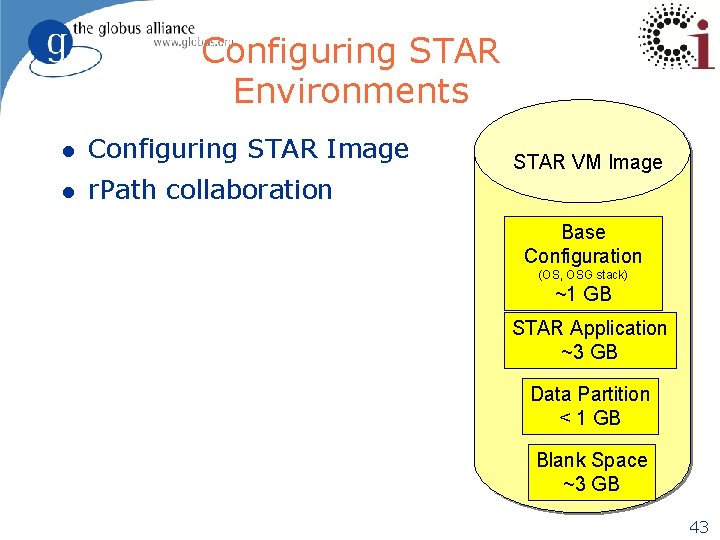

Configuring STAR Environments

- Configuring STAR image
- rPath collaboration

STAR VM image layout:

- Base configuration (OS, OSG stack): ~1 GB
- STAR application: ~3 GB
- Data partition: < 1 GB
- Blank space: ~3 GB

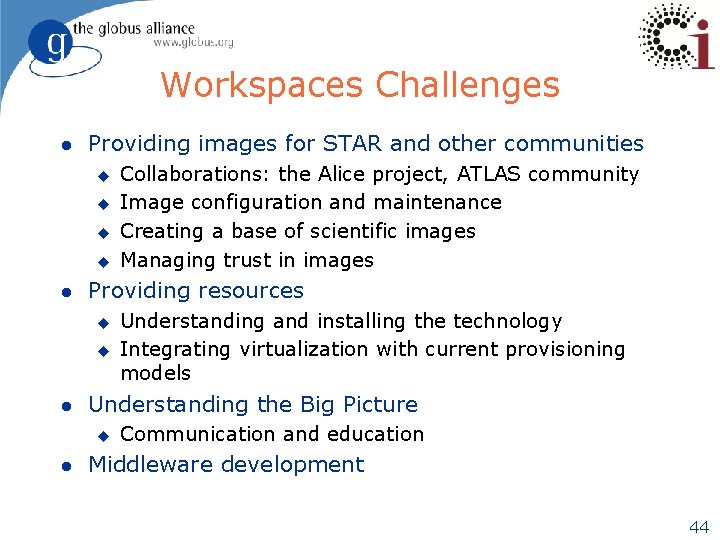

Workspaces Challenges

- Providing images for STAR and other communities
  - Collaborations: the Alice project, ATLAS community
  - Image configuration and maintenance
  - Creating a base of scientific images
  - Managing trust in images
- Providing resources
  - Understanding and installing the technology
  - Integrating virtualization with current provisioning models
- Understanding the Big Picture
  - Communication and education
  - Middleware development

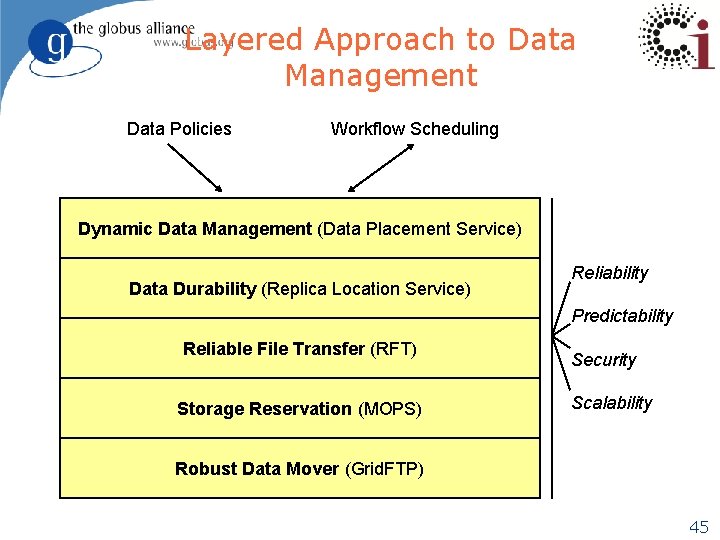

Layered Approach to Data Management

Layers, top to bottom:

- Data policies, workflow scheduling
- Dynamic data management (Data Placement Service)
- Data durability (Replica Location Service)
- Reliable File Transfer (RFT); storage reservation (MOPS)
- Robust data mover (GridFTP)

Cross-cutting labels: Reliability, Predictability, Security, Scalability

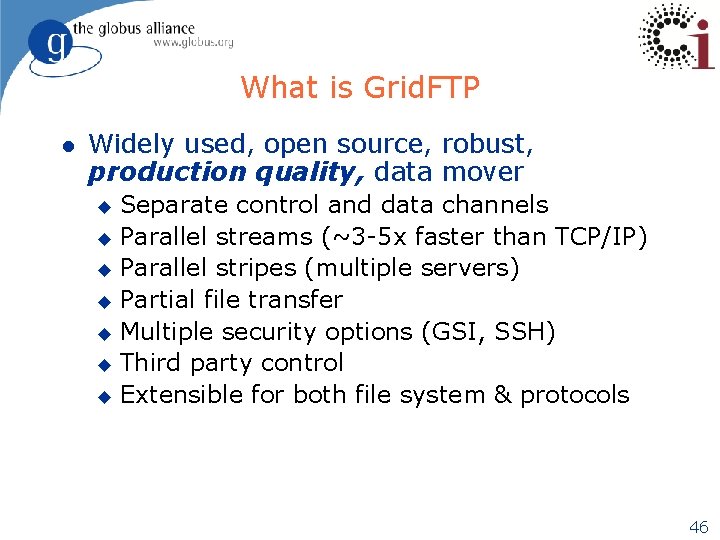

What is GridFTP

- Widely used, open source, robust, production quality data mover
  - Separate control and data channels
  - Parallel streams (~3-5x faster than TCP/IP)
  - Parallel stripes (multiple servers)
  - Partial file transfer
  - Multiple security options (GSI, SSH)
  - Third party control
  - Extensible for both file system & protocols
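To make the ideas of parallel streams and partial file transfer concrete, here is a small Python sketch that divides a file into contiguous byte ranges, one per stream. The function name and the even-split policy are assumptions made for illustration; the slides do not describe how GridFTP actually assigns data to streams, and this is not its scheduling algorithm.

```python
def split_ranges(file_size, n_streams):
    """Split [0, file_size) into up to n_streams contiguous (offset, length) ranges.

    Hypothetical helper: an even split, with any remainder spread over the
    first ranges. Zero-length ranges are dropped.
    """
    if file_size < 0 or n_streams < 1:
        raise ValueError("file_size must be >= 0 and n_streams >= 1")
    base, extra = divmod(file_size, n_streams)
    ranges = []
    offset = 0
    for i in range(n_streams):
        length = base + (1 if i < extra else 0)
        if length > 0:
            ranges.append((offset, length))
        offset += length
    return ranges
```

Each `(offset, length)` pair is itself a partial file transfer, so the same description covers both features: several ranges moved concurrently over separate data connections, or a single range fetched on its own.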

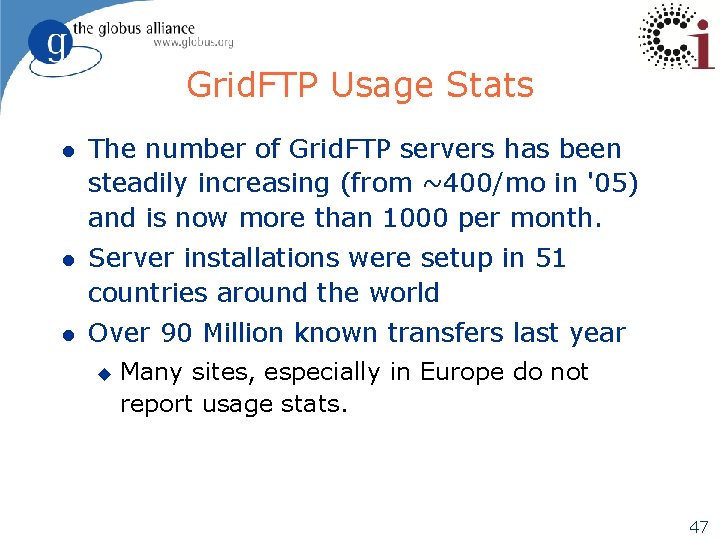

GridFTP Usage Stats

- The number of GridFTP servers has been steadily increasing (from ~400/mo in '05) and is now more than 1000 per month.
- Server installations were set up in 51 countries around the world
- Over 90 million known transfers last year
  - Many sites, especially in Europe, do not report usage stats.

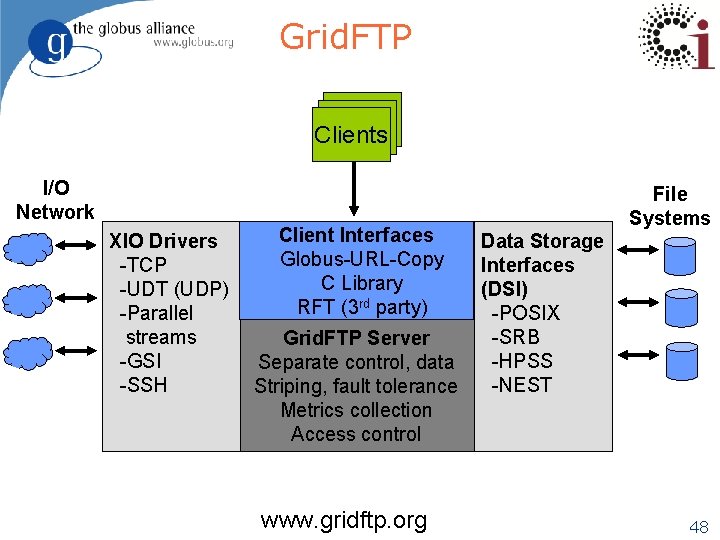

GridFTP

- Clients (client interfaces)
  - globus-url-copy
  - C library
  - RFT (3rd party)
- I/O network (XIO drivers)
  - TCP
  - UDT (UDP)
  - Parallel streams
  - GSI
  - SSH
- GridFTP server
  - Separate control, data
  - Striping, fault tolerance
  - Metrics collection
  - Access control
- File systems (Data Storage Interfaces, DSI)
  - POSIX
  - SRB
  - HPSS
  - NEST

www.gridftp.org

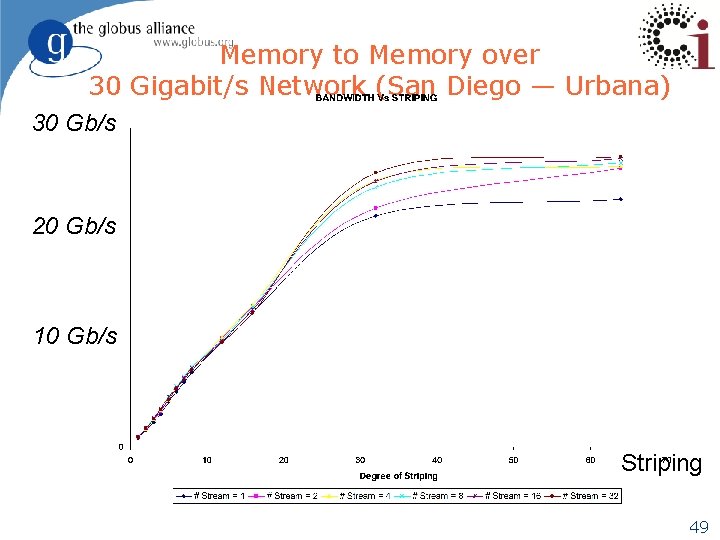

Memory to Memory over 30 Gigabit/s Network (San Diego to Urbana)

Chart: throughput with striping; axis marks at 10, 20, and 30 Gb/s

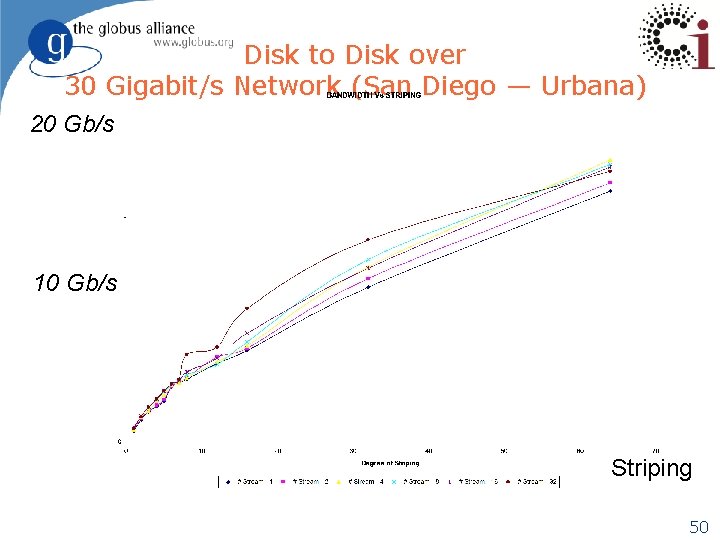

Disk to Disk over 30 Gigabit/s Network (San Diego to Urbana)

Chart: throughput with striping; axis marks at 10 and 20 Gb/s

Small Files Transfer Improvements

- Pipelining
  - Many transfer requests outstanding at once
  - Client sends second request before the first completes
  - Latency of request is hidden in data transfer time
- Cached data channel connections
  - Reuse established data channels (Mode E)
  - No additional TCP or GSI connect overhead

Now in 4.1.2!
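The effect of pipelining can be sketched with a back-of-the-envelope model in Python. The model is an assumption made for illustration, not a measurement: each request costs one round trip of latency, data moves at a fixed bandwidth, and with pipelining only the first request's latency is exposed. The function and parameter names are hypothetical.

```python
def sequential_time(n_files, file_bytes, bandwidth_bps, rtt_s):
    """Each request waits one round trip, then the file is sent; repeat per file."""
    per_file = rtt_s + file_bytes * 8 / bandwidth_bps
    return n_files * per_file


def pipelined_time(n_files, file_bytes, bandwidth_bps, rtt_s):
    """Requests are kept outstanding, so only the first round trip is exposed."""
    return rtt_s + n_files * file_bytes * 8 / bandwidth_bps


def pipelining_speedup(n_files, file_bytes, bandwidth_bps, rtt_s):
    """Ratio of sequential to pipelined time under this simplified model."""
    return (sequential_time(n_files, file_bytes, bandwidth_bps, rtt_s)
            / pipelined_time(n_files, file_bytes, bandwidth_bps, rtt_s))
```

Under these assumptions, 1000 files of 100 KB over a 1 Gb/s link with a 50 ms round trip take about 50.8 s sequentially and about 0.85 s pipelined; the smaller the files, the more the per-request latency dominates and the more pipelining helps.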

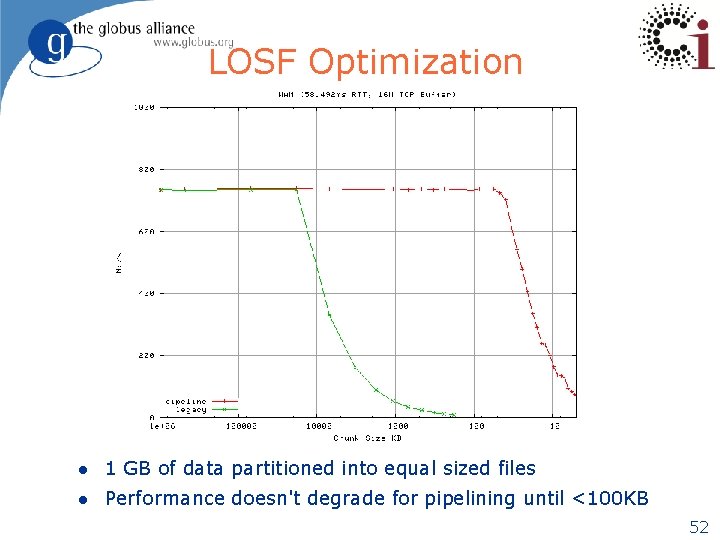

LOSF Optimization

- 1 GB of data partitioned into equal-sized files
- Performance doesn't degrade for pipelining until <100 KB

What Else?

- Dynamic backends (using GFork), now in 4.1.2
  - Stability in the event of backend failure
  - Growing resource pools for peak demands
- Dynamic protocol selection TCP/UDT
  - Early tests very promising, especially over long, busy networks
    - Preliminary tests show ~5x speedup vs parallel streaming
  - Requires further side-effect testing & analysis
- Flexibility in monitoring
  - Enable individual grids to track user information
    - UID, DN, IP address
- Transfer analysis
  - Detect bottlenecks (storage vs network)
- Other improvements
  - GridFTP best practices (striping parameter settings)
  - Performance improvements
- Managed Object Placement Service (MOPS)

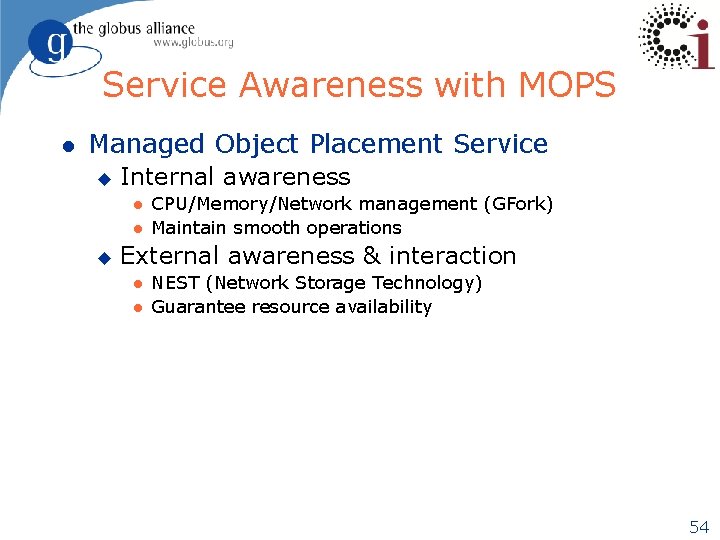

Service Awareness with MOPS

- Managed Object Placement Service
  - Internal awareness
    - CPU/Memory/Network management (GFork)
    - Maintain smooth operations
  - External awareness & interaction
    - NEST (Network Storage Technology)
    - Guarantee resource availability

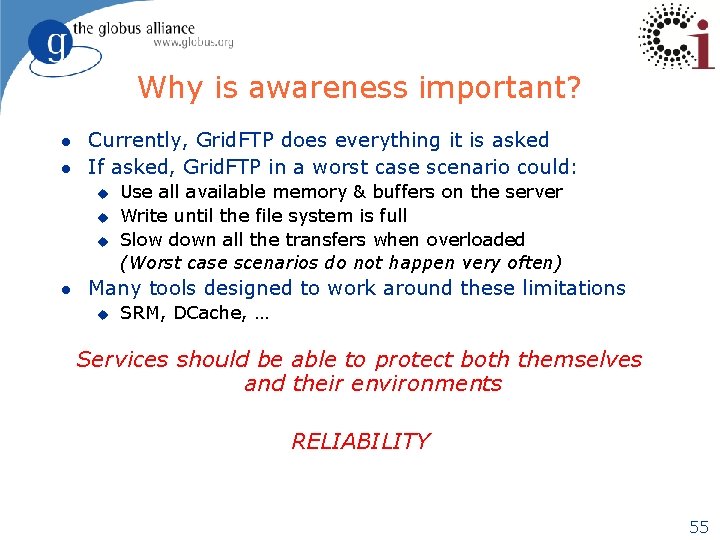

Why is awareness important?

- Currently, GridFTP does everything it is asked
- If asked, GridFTP in a worst-case scenario could:
  - Use all available memory & buffers on the server
  - Write until the file system is full
  - Slow down all the transfers when overloaded
  - (Worst-case scenarios do not happen very often)
- Many tools designed to work around these limitations
  - SRM, dCache, …
- Services should be able to protect both themselves and their environments

RELIABILITY
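One way to picture a service that protects itself and its environment is an admission check in front of each transfer. The Python sketch below is purely illustrative: the class, its fields, and the accept/reject policy are assumptions, not a description of how GFork or MOPS are implemented.

```python
class TransferGuard:
    """Hypothetical admission control: refuse work that would exhaust resources."""

    def __init__(self, memory_budget_bytes, disk_free_bytes):
        self.memory_free = memory_budget_bytes  # buffer memory still available
        self.disk_free = disk_free_bytes        # storage not yet promised to a transfer

    def admit(self, buffer_bytes, file_bytes):
        """Reserve resources for a transfer, or reject it without side effects."""
        if buffer_bytes > self.memory_free or file_bytes > self.disk_free:
            return False
        self.memory_free -= buffer_bytes
        self.disk_free -= file_bytes
        return True

    def finish(self, buffer_bytes):
        """Return buffer memory when a transfer ends; written data stays on disk."""
        self.memory_free += buffer_bytes
```

A server using such a guard would decline or queue a request instead of filling the file system or consuming every buffer, which addresses the first two worst cases listed on the slide.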

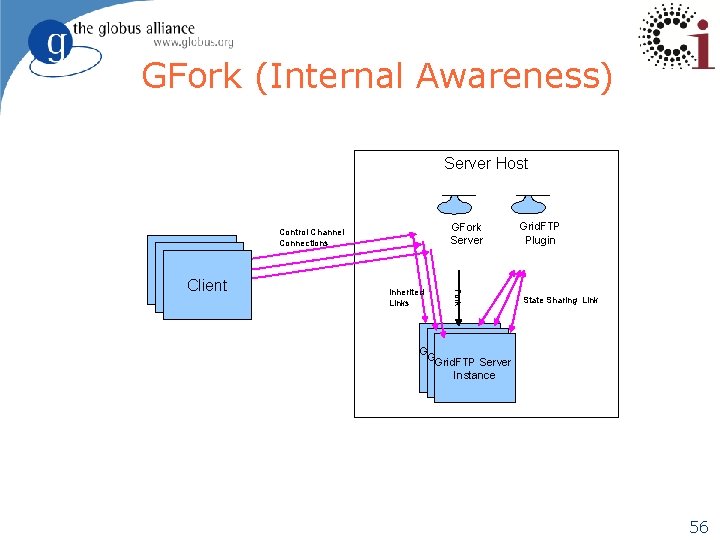

GFork (Internal Awareness)

Diagram labels: Client; Control Channel Connections; Server Host; GFork Server; GridFTP Plugin; Fork; Inherited Links; State Sharing Link; GridFTP Server Instance

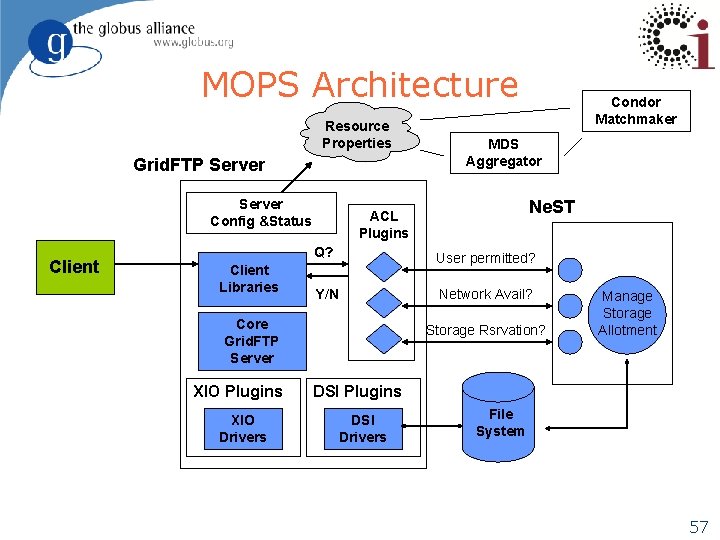

MOPS Architecture

Diagram labels: Client Libraries; XIO Drivers; Core GridFTP Server; GridFTP Server Config & Status; Resource Properties; MDS Aggregator; ACL Plugins (user permitted? Y/N); XIO Plugins (network available?); DSI Plugins (storage reservation? manage storage allotment); NeST; Condor Matchmaker; DSI Drivers; File System

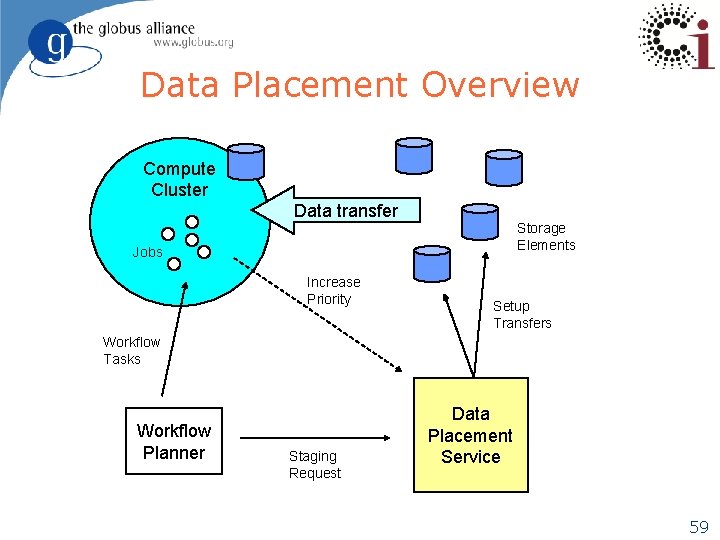

The Data Placement Service (Ann Chervenak et al.)

- The Data Placement Service (DPS) was designed to support policy-based placement of data for use in the following (and other) scenarios:
  - N-copy replication
  - Staging datasets from/to Storage Elements
    - Often in support of workflows
  - Managing hierarchically tiered distribution (HEP)
- Current capabilities include:
  - Locating originals & replicas of desired data
  - Monitoring data transfers
  - Correcting errors when & where possible
  - Registering staged data in local replica catalogs
- New effort includes:
  - Scheduling transfers between multiple storage elements & compute clusters
  - Enabling workflow feedback to increase priorities

Data Placement Overview

Diagram labels: Workflow Planner; Workflow Tasks; Staging Request; Data Placement Service; Setup Transfers; Increase Priority; Storage Elements; Data transfer; Compute Cluster; Jobs

The Data Replication Service

- Included in the GT 4.0.2 release
- Design based on publication component of the LIGO Lightweight Data Replicator system
  - Developed by Scott Koranda
- Client specifies (via DRS interface) which files are required at local site
- DRS uses:
  - Globus Delegation Service to delegate proxy credentials
  - RLS to discover where replicas exist in the Grid
  - Selection algorithm to choose among available source replicas (provides a callout; default is random selection)
  - Reliable File Transfer (RFT) service to copy data to site
    - Via GridFTP data transport protocol
  - RLS to register new replicas
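The sequence of steps DRS performs (discover, select, transfer, register) can be sketched in a few lines of Python. Everything here is a stand-in chosen for illustration: the replica catalog is a plain dictionary rather than RLS, `transfer` is any callable rather than RFT/GridFTP, credential delegation is omitted, and the function and parameter names are hypothetical.

```python
import random


def replicate(lfn, local_base_url, catalog, transfer, select=random.choice):
    """Copy one logical file to the local site and register the new replica.

    catalog:  dict mapping logical file name -> list of replica URLs (stand-in for RLS)
    transfer: callable(source_url, target_url) that moves the data (stand-in for RFT)
    select:   callout that picks a source replica; random by default, as on the slide
    """
    sources = catalog.get(lfn, [])
    if not sources:
        raise LookupError(f"no replicas registered for {lfn!r}")
    source = select(sources)                          # choose among available replicas
    target = f"{local_base_url.rstrip('/')}/{lfn}"    # hypothetical local naming scheme
    transfer(source, target)                          # copy the data to the local site
    catalog[lfn].append(target)                       # register the new replica
    return target
```

Passing a different `select` function mirrors the callout mentioned on the slide, for example one that prefers the nearest site instead of choosing at random.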

Users & Targeted Users

- High Energy Physics community
  - LHC, CMS
- Open Science Grid (OSG)
  - Many diverse communities
- Earth Systems Grid (ESG)
  - Heavy users of GridFTP
  - New infrastructure being architected for Placement Services
- TeraGrid
  - Already using GridFTP as the data backbone
  - Improvement requests coming in (PSC)
- Many smaller communities
  - APS (Argonne)
  - SNS (ORNL)

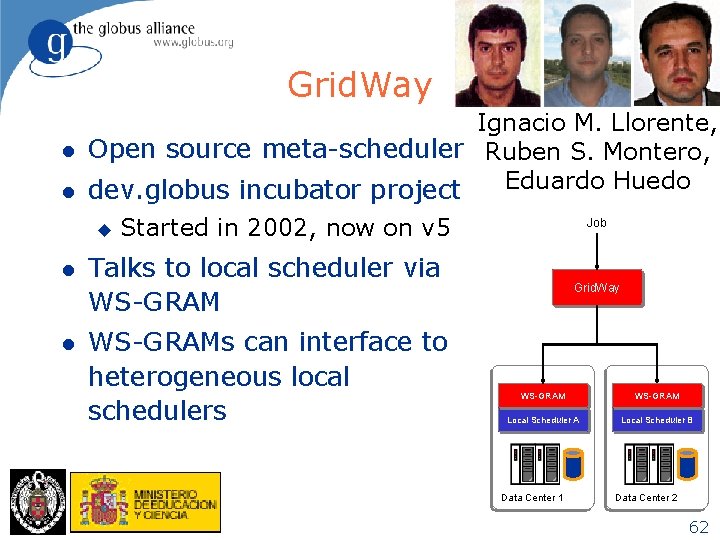

GridWay

Ignacio M. Llorente, Ruben S. Montero, Eduardo Huedo

- Open source meta-scheduler
- dev.globus incubator project
  - Started in 2002, now on v5
- Talks to local scheduler via WS-GRAMs
- Can interface to heterogeneous local schedulers

Diagram labels: Job; GridWay; WS-GRAM; Local Scheduler A (Data Center 1); Local Scheduler B (Data Center 2)

GRAM Priorities (CMS & others)

- Worked with the OSG-CMS group to define their testing scenario and make improvements to GRAM to meet their needs
  - Submit and (reliably) complete 2000 jobs from Condor-G to a GRAM4 service with Condor as the local resource manager
    - This included file staging directives as well as creation and removal of a unique job directory
  - We created an automated testing framework
  - Once reliability was solved, we addressed performance concerns
    - We instrumented our code and testing framework and found that performance for jobs with file staging needed to be improved
    - We prioritized two campaigns to address the issue:
      - Implement “local” method calls between GRAM4 and RFT (included in 4.0.5)
      - Implement RFT caching of GridFTP service connections (coming soon in 4.0.x)
  - CMS will soon (Sept 07) evaluate GRAM4 in OSG 0.8.0
- Standards participation: JSDL (prototype complete), BES

Globus & OSG

- Security
  - In conjunction with FNAL & EGEE
  - Building an XACML interface to support authorization interoperability of common middleware (gLExec, dCache, ...) by registering multiple obligation handlers at the PEP; e.g., gLExec will know obligation "Username" for GUMS and "UID+GID" for LCMAPS.
- GRAM
  - Extended testing and inclusion in VDT 0.8
- GridFTP
  - Add capability for extending metrics collection on specific Grid infrastructures like OSG & TeraGrid. Examples are DNs & UIDs.

Conclusions

- Production quality software is a must
- Trend is toward solutions
- Service Oriented Science
- Community driven architectures
- Incubator projects advancing the technology
- Invitation to join the community
  - Add your Incubator
  - Join in the discussions

- Slides: 62