GHC 14 Testing in Production Key to Data

- Slides: 19

#GHC 14 Testing in Production: Key to Data Driven Quality

Jyoti Jacob, Senior Software Engineer, Microsoft

10/9/2014

Agenda

- Why and what is Testing in Production?
- Types: Analytics vs. Synthetics
- Flighting and Experimentation
- TiP in other companies
- My Key Takeaways

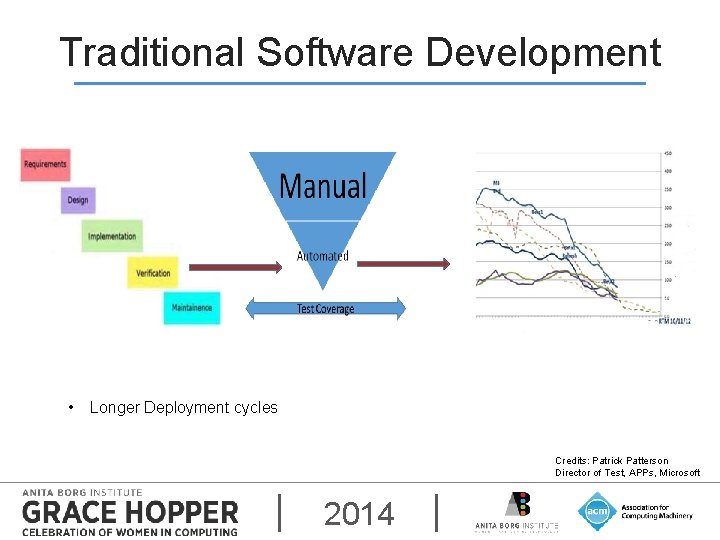

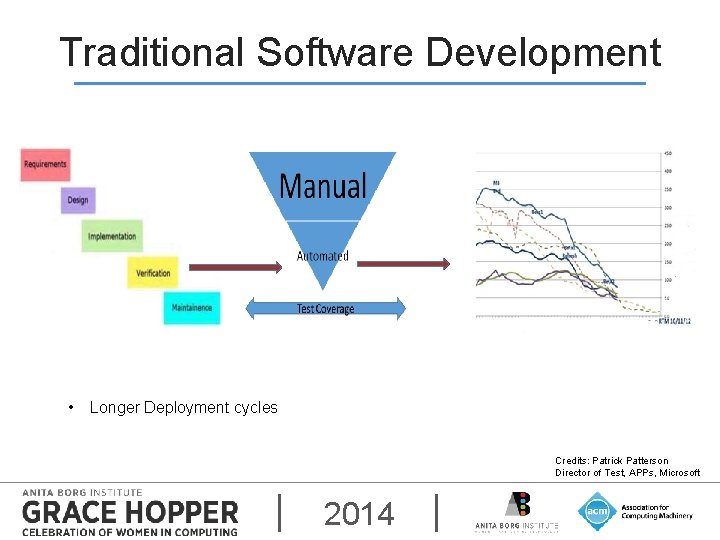

Traditional Software Development

- Longer deployment cycles

Credits: Patrick Patterson, Director of Test, APPs, Microsoft

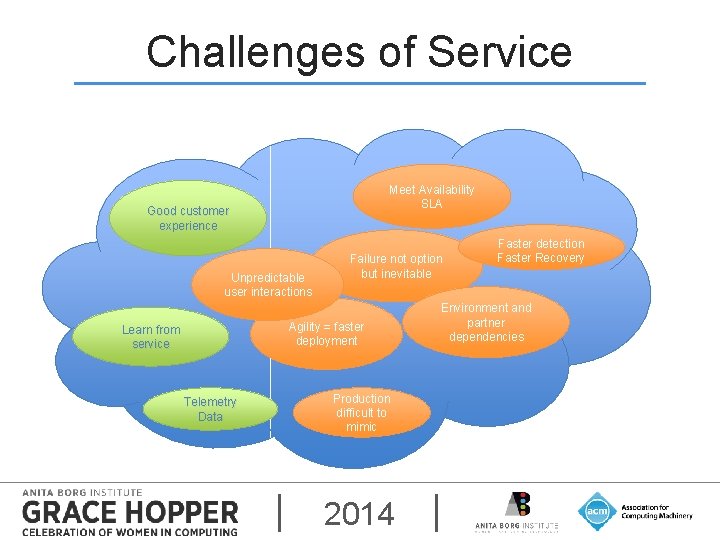

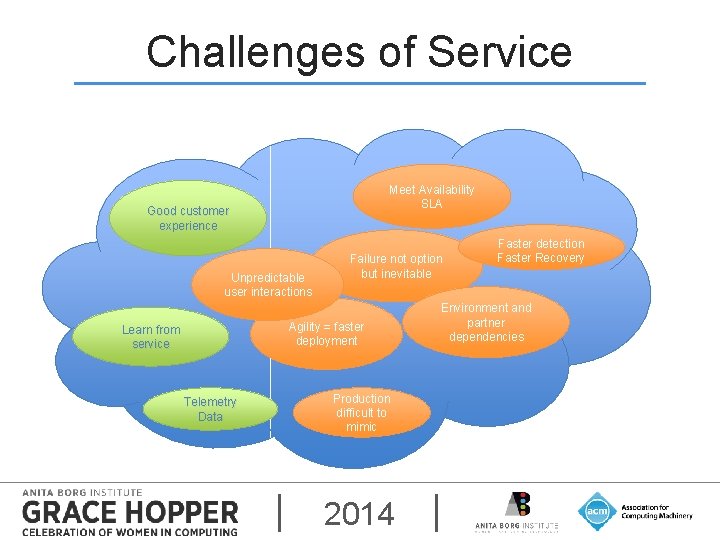

Challenges of Service

- Meet availability SLA
- Good customer experience
- Unpredictable user interactions
- Failure is not an option, but it is inevitable
- Agility = faster deployment
- Learn from service telemetry data
- Production is difficult to mimic
- Faster detection
- Faster recovery
- Environment and partner dependencies

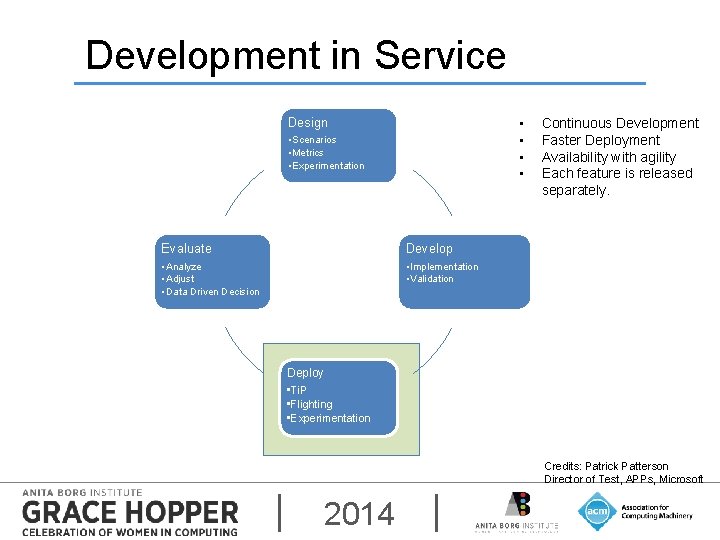

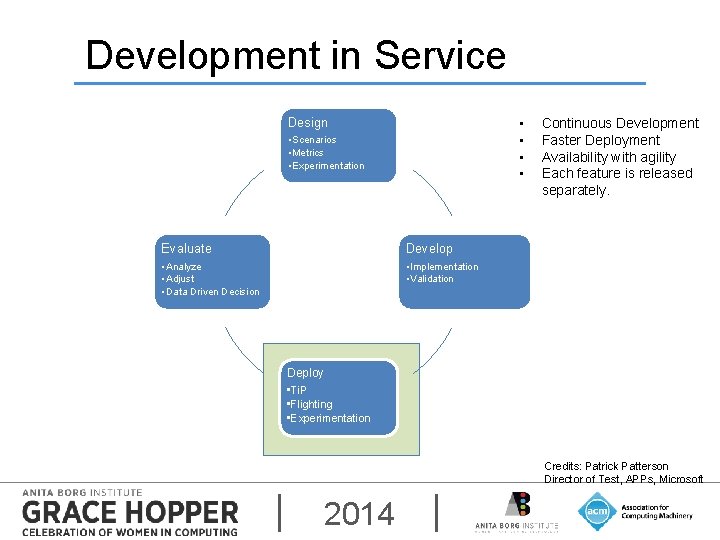

Development in Service

- Design
  - Scenarios
  - Metrics
  - Experimentation
- Evaluate
  - Analyze
  - Adjust
  - Data driven decision
- Develop
  - Implementation
  - Validation
- Deploy
  - TiP
  - Flighting
  - Experimentation

Continuous development. Faster deployment. Availability with agility. Each feature is released separately.

Credits: Patrick Patterson, Director of Test, APPs, Microsoft

Testing in Production (TiP)

Testing in production (TiP) is a set of software methodologies that derive quality assessments not from test results run in a lab but from where your services actually run – in production.

Seth Eliot, Principal Knowledge Engineer, Microsoft

TYPES OF TESTING

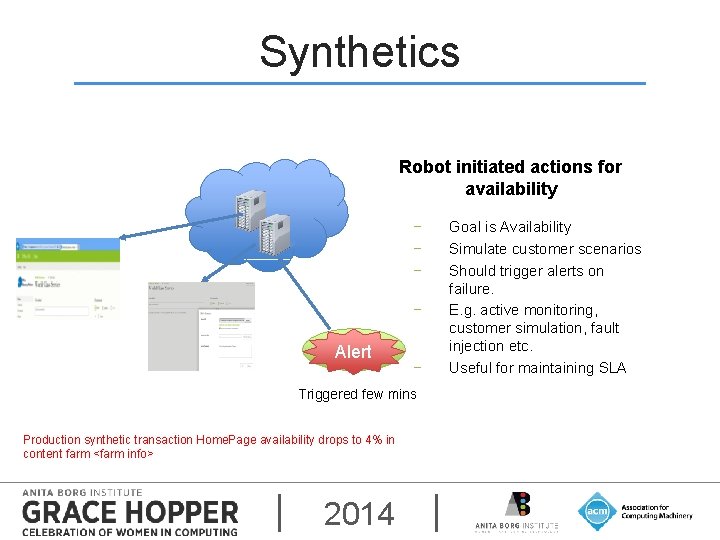

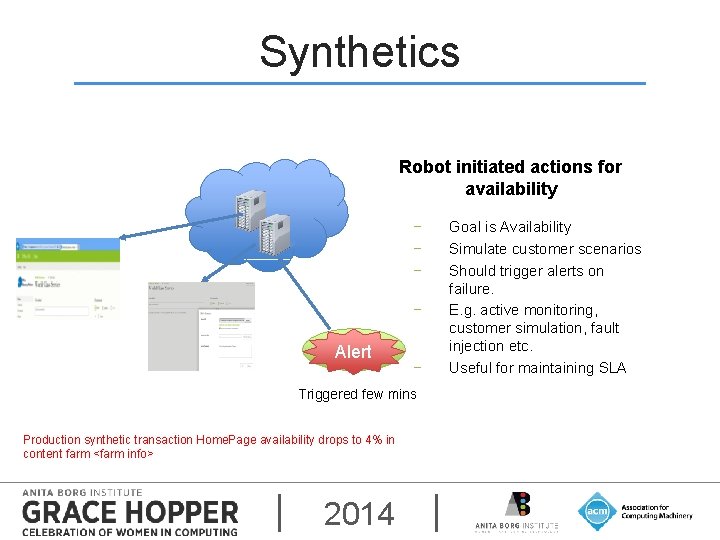

Synthetics

Robot-initiated actions for availability

- Probe: production synthetic transaction, triggered every few minutes
- Alert, e.g. "HomePage availability drops to 4% in content farm <farm info>"
- Goal is availability
- Simulate customer scenarios
- Should trigger alerts on failure
- E.g. active monitoring, customer simulation, fault injection, etc.
- Useful for maintaining SLA
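The probe-and-alert loop on this slide can be sketched roughly as follows. This is a minimal illustration, not the speaker's tooling: `ProbeResult`, `run_probe`, `availability`, `check_alert`, and the 99% threshold are all hypothetical names and values, and the "transaction" is any callable that returns a truthy value on success.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ProbeResult:
    scenario: str      # hypothetical scenario name, e.g. "HomePage"
    succeeded: bool


def run_probe(scenario: str, transaction: Callable[[], bool]) -> ProbeResult:
    """Run one synthetic transaction; any exception counts as a failure."""
    try:
        return ProbeResult(scenario, bool(transaction()))
    except Exception:
        return ProbeResult(scenario, False)


def availability(results: List[ProbeResult]) -> float:
    """Fraction of probe runs that succeeded (1.0 when there are no runs)."""
    if not results:
        return 1.0
    return sum(r.succeeded for r in results) / len(results)


def check_alert(results: List[ProbeResult], threshold: float = 0.99) -> Optional[str]:
    """Return an alert message when availability is below the assumed threshold."""
    if not results:
        return None
    rate = availability(results)
    if rate < threshold:
        return f"{results[0].scenario} availability dropped to {rate:.0%}"
    return None


if __name__ == "__main__":
    # Three passing runs and one failing run of a stand-in transaction.
    runs = [run_probe("HomePage", lambda: True) for _ in range(3)]
    runs.append(run_probe("HomePage", lambda: 1 / 0))
    print(check_alert(runs))
```

In a real service the transaction would exercise a customer scenario against production on a schedule, and the alert would go to an on-call channel rather than being returned as a string.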

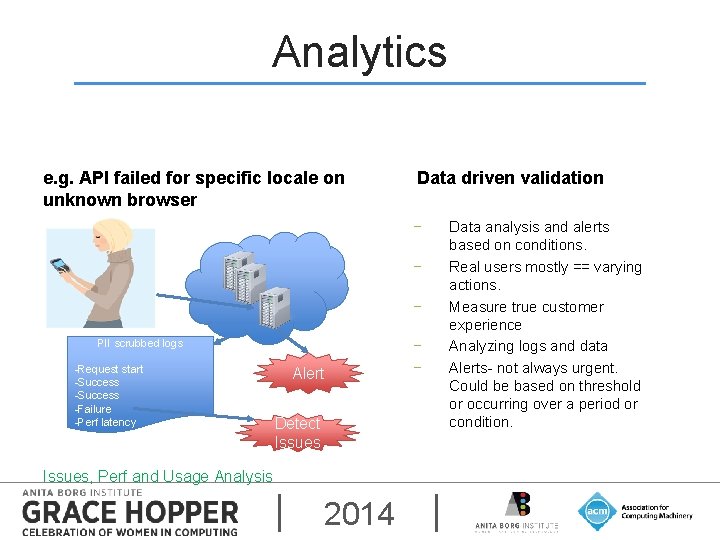

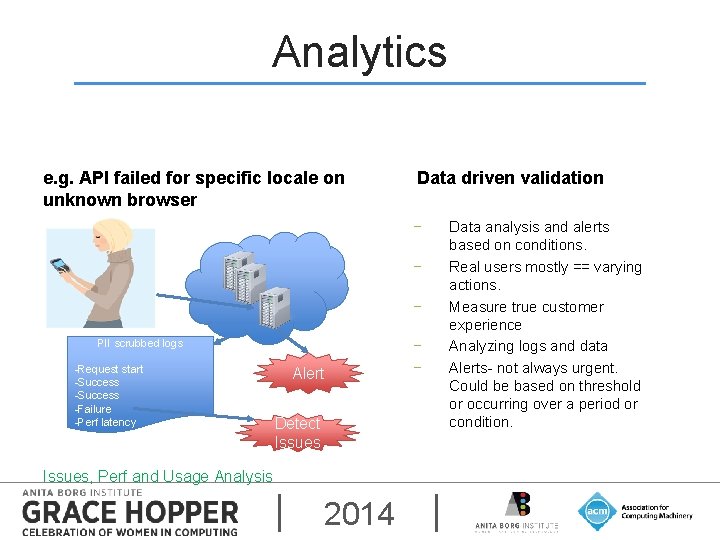

Analytics

Data driven validation

- PII-scrubbed logs: request start, success, failure, perf latency
- Alert, e.g. API failed for a specific locale on an unknown browser
- Detect issues; perf and usage analysis
- Data analysis and alerts based on conditions
- Mostly real users, which means varying actions
- Measure true customer experience
- Analyzing logs and data
- Alerts are not always urgent; they could be based on a threshold, on occurrences over a period, or on a condition
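As a rough sketch of threshold-based alerting over logs, the example below groups already-scrubbed request records by locale and browser and reports segments whose failure rate crosses a threshold. The record fields (`locale`, `browser`, `status`), the function name `find_failing_segments`, and the default threshold values are assumptions made for illustration only.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Segment = Tuple[str, str]  # (locale, browser)


def find_failing_segments(
    records: Iterable[Dict[str, str]],
    threshold: float = 0.05,
    min_requests: int = 3,
) -> List[Tuple[Segment, float]]:
    """Return (segment, failure rate) pairs whose rate is at or above threshold.

    Segments with fewer than min_requests records are skipped so that a
    single failed request does not raise an alert on its own.
    """
    totals: Dict[Segment, int] = defaultdict(int)
    failures: Dict[Segment, int] = defaultdict(int)
    for rec in records:
        key = (rec["locale"], rec["browser"])
        totals[key] += 1
        if rec["status"] == "failure":
            failures[key] += 1

    flagged = []
    for key, total in totals.items():
        rate = failures[key] / total
        if total >= min_requests and rate >= threshold:
            flagged.append((key, rate))
    # Worst segments first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    logs = (
        [{"locale": "fr-FR", "browser": "unknown", "status": "failure"}] * 3
        + [{"locale": "fr-FR", "browser": "unknown", "status": "success"}]
        + [{"locale": "en-US", "browser": "IE", "status": "success"}] * 4
    )
    print(find_failing_segments(logs, threshold=0.5))
```

The minimum request count reflects the slide's point that analytics alerts need not be urgent: they can wait until enough traffic has accumulated to make the rate meaningful.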

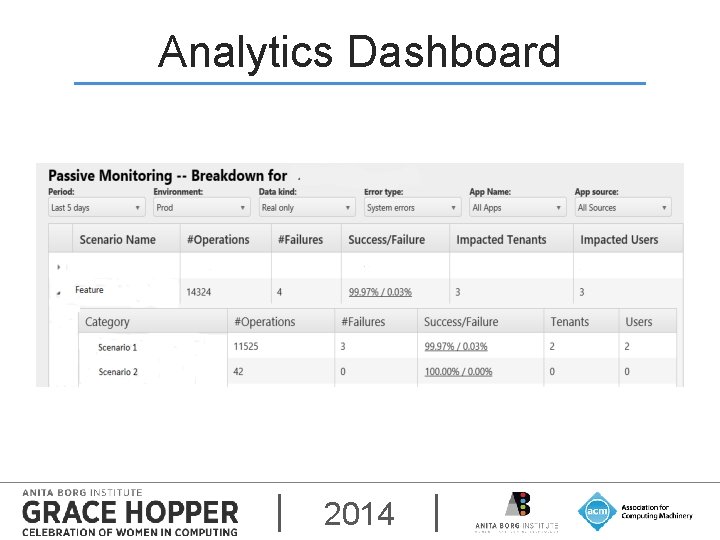

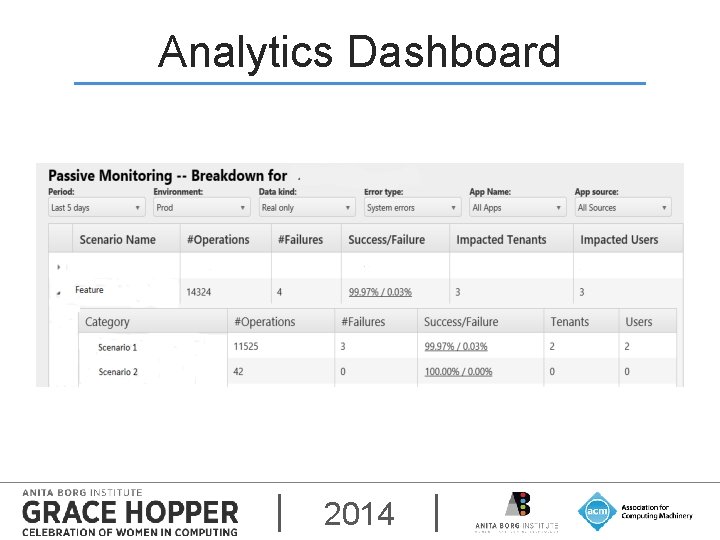

Analytics Dashboard

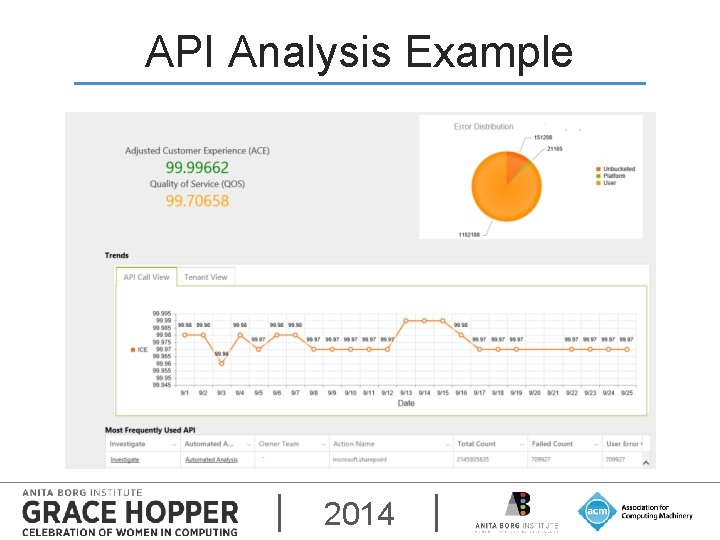

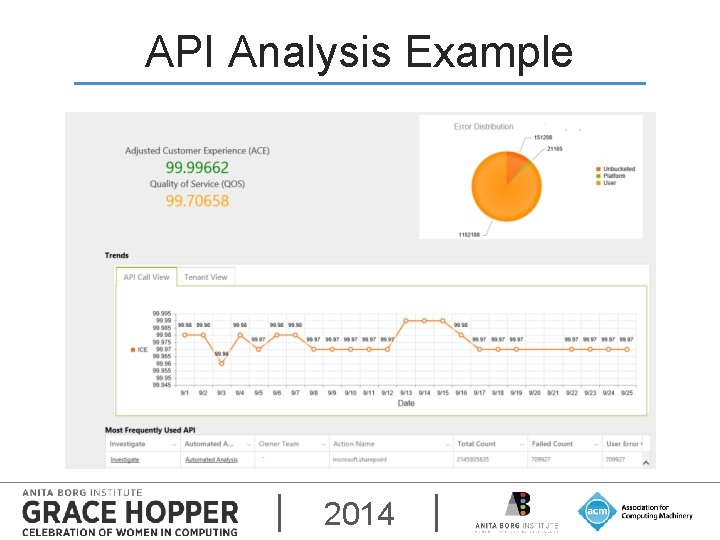

API Analysis Example

FEATURE FLIGHTING Deployment

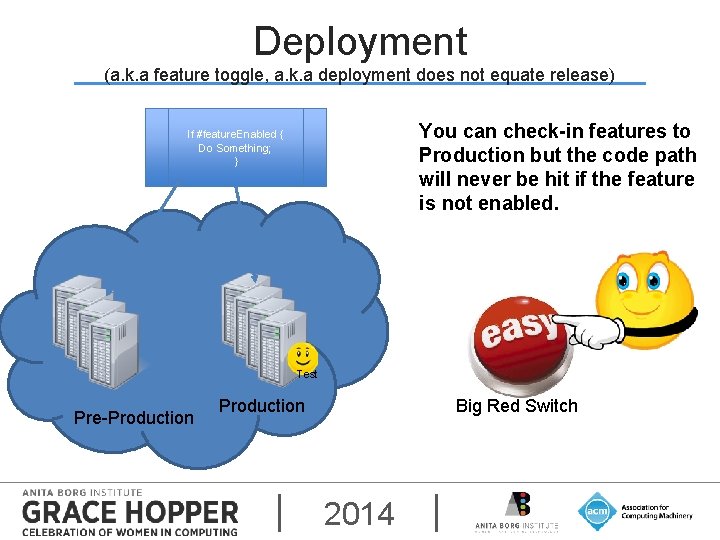

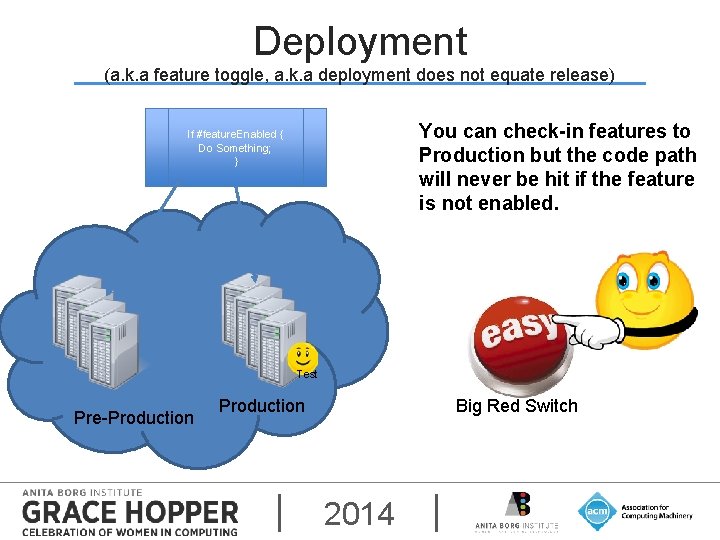

Deployment (a.k.a. feature toggle, a.k.a. deployment does not equal release)

You can check in features to production, but the code path will never be hit if the feature is not enabled.

`if (feature.Enabled) { DoSomething(); }`

- Test
- Pre-Production
- Big Red Switch
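A feature toggle of the kind described here might look like the sketch below. The `FeatureFlags` class, the flag name `new_banner`, and `render_home_page` are hypothetical; a production system would typically read flags from a configuration service instead of an in-memory set.

```python
from typing import Iterable, Optional


class FeatureFlags:
    """In-memory feature toggles; a stand-in for a real configuration store."""

    def __init__(self, enabled: Optional[Iterable[str]] = None) -> None:
        self._enabled = set(enabled or [])

    def is_enabled(self, name: str) -> bool:
        return name in self._enabled

    def enable(self, name: str) -> None:
        self._enabled.add(name)

    def disable(self, name: str) -> None:
        # Acts as the "big red switch": turns the feature off without a redeploy.
        self._enabled.discard(name)


def render_home_page(flags: FeatureFlags) -> str:
    # The new code path ships to production but runs only when the flag is on.
    if flags.is_enabled("new_banner"):
        return "home page with new banner"
    return "home page"


if __name__ == "__main__":
    flags = FeatureFlags()
    print(render_home_page(flags))   # feature deployed but not released
    flags.enable("new_banner")
    print(render_home_page(flags))   # feature released
    flags.disable("new_banner")
    print(render_home_page(flags))   # switched off again
```

Because the check happens at run time, deploying the code and releasing the feature become separate decisions, which is the point of "deployment does not equal release".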

FEATURE FEEDBACK Even during Design

A/B Testing (a.k.a. Online Controlled Experimentation)

- Most of the users get the original experience (control)
- Some users are offered the new experiences or features (experiment)

For success:

- The least number of variables should change.
- Remove random noise or assumptions.
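One common way to split users into control and experiment groups is deterministic hashing of the user ID, sketched below. This is an assumed approach for illustration; the slide does not say how assignment was done. The function name `assign_variant`, the experiment name, and the 10% default are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, experiment_fraction: float = 0.1) -> str:
    """Deterministically assign a user to "control" or "experiment".

    Hashing the experiment name together with the user ID keeps a user's
    assignment stable across requests while keeping different experiments
    independent of each other.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "experiment" if bucket < experiment_fraction else "control"


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10000)]
    in_experiment = sum(
        assign_variant(u, "new_home_page") == "experiment" for u in users
    )
    print(f"{in_experiment / len(users):.1%} of users see the new experience")
```

Stable assignment helps with the slide's success criteria: each user sees only one experience, so differences in metrics are less likely to come from users switching between variants.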

TiP in Other Companies

- Data driven quality
  - “Netflix is a log generating company that also happens to stream movies” - Adrian Cockcroft
- A/B and multivariate testing for experimentation
- Have adopted some form of dark or ramped deployment
- Shadowing, e.g. Google

My Key Takeaways

- What not to do
  - Expose PII
  - Too many synthetic transactions
  - Expose test data to customers
- Key learnings
  - “It is a capital mistake to theorize before one has data” – Sherlock Holmes
  - Engineers need easy access to data and debug boxes, but with security considerations.
  - Synthetics + Analytics + measurements against key performance indicators (KPI) = Quality Assessments

Questions @ end of session.

Got Feedback? Rate and review the session using the GHC Mobile App. To download, visit www.gracehopper.org