
Gesture Recognition Challenge

Isabelle Guyon, Vassilis Athitsos, Jitendra Malik, Ivan Laptev

http://clopinet.com/gesture@clopinet.com

- Video surveillance
- Image or video indexing/retrieval
- Recognition of sign languages
- Emotion recognition
- Virtual reality, games
- Ambient intelligence
- Robotics

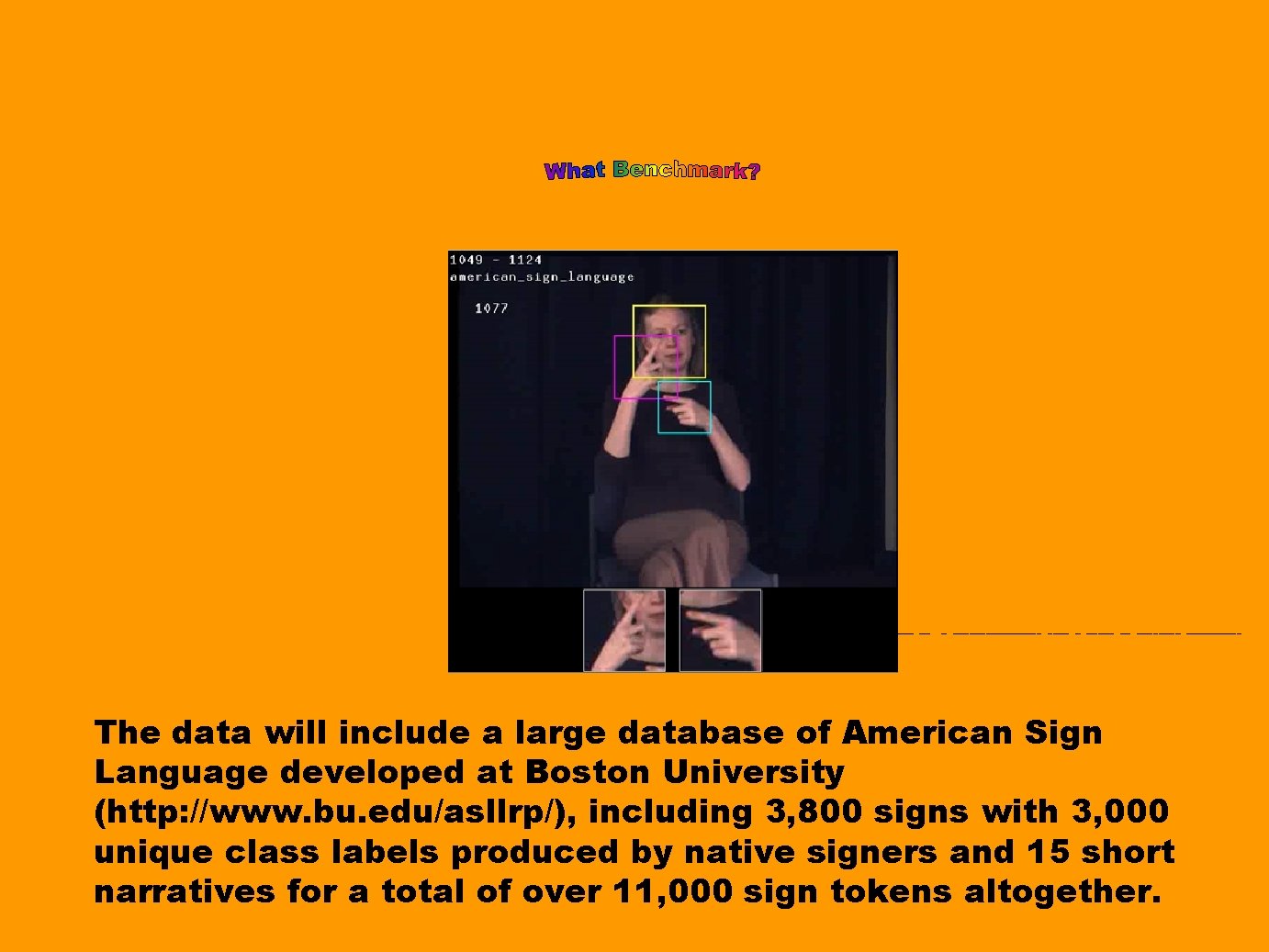

The data will include a large database of American Sign Language developed at Boston University (http://www.bu.edu/asllrp/), including 3,800 signs with 3,000 unique class labels produced by native signers and 15 short narratives, for a total of over 11,000 sign tokens.

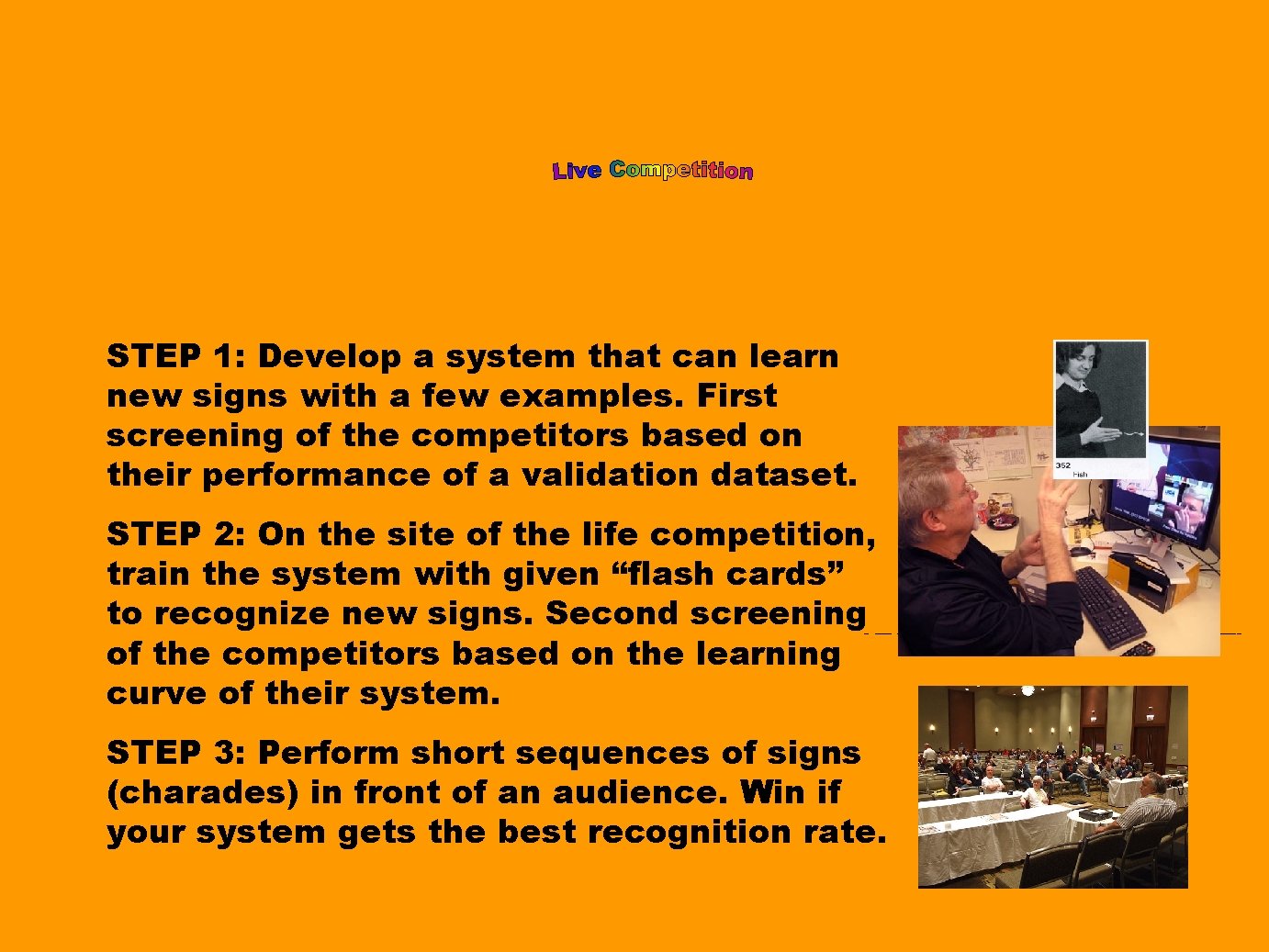

STEP 1: Develop a system that can learn new signs from a few examples. First screening of the competitors based on their performance on a validation dataset.

STEP 2: On the site of the live competition, train the system with given “flash cards” to recognize new signs. Second screening of the competitors based on the learning curve of their system.

STEP 3: Perform short sequences of signs (charades) in front of an audience. Win if your system gets the best recognition rate.
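The screening criteria above (a learning curve in Step 2, a recognition rate in Step 3) can be illustrated with a minimal sketch. Everything here is an assumption made for illustration, not the challenge's actual protocol or baseline: gestures are represented by made-up feature vectors drawn from one Gaussian cluster per sign, the learner is a plain nearest-neighbor classifier, and the function names (`nearest_label`, `recognition_rate`, `learning_curve`, `sample`) are hypothetical.

```python
import random


def nearest_label(train, x):
    """Return the label of the training example closest to x (squared Euclidean distance)."""
    best = min(train, key=lambda ex: sum((a - b) ** 2 for a, b in zip(ex[0], x)))
    return best[1]


def recognition_rate(train, test):
    """Fraction of test examples whose predicted label equals the true label."""
    hits = sum(1 for x, y in test if nearest_label(train, x) == y)
    return hits / len(test)


def learning_curve(pool, test, shots):
    """Recognition rate as a function of the number of 'flash cards' kept per sign."""
    curve = []
    for k in shots:
        seen = {}
        train = []
        for x, y in pool:
            # Keep only the first k examples of each sign.
            if seen.get(y, 0) < k:
                train.append((x, y))
                seen[y] = seen.get(y, 0) + 1
        curve.append((k, recognition_rate(train, test)))
    return curve


def sample(centers, per_sign, noise, rng):
    """Toy stand-in for gesture features (assumption): Gaussian noise around one center per sign."""
    data = []
    for label, center in enumerate(centers):
        for _ in range(per_sign):
            data.append(([v + rng.gauss(0, noise) for v in center], label))
    return data


rng = random.Random(0)
centers = [[rng.uniform(-5, 5) for _ in range(8)] for _ in range(10)]  # 10 toy signs
pool = sample(centers, per_sign=5, noise=2.0, rng=rng)    # flash cards available for training
test = sample(centers, per_sign=20, noise=2.0, rng=rng)   # held-out performances

curve = learning_curve(pool, test, shots=[1, 2, 5])
for k, rate in curve:
    print(f"{k} example(s) per sign: recognition rate {rate:.2f}")
```

A real entry would replace the toy features with descriptors computed from video and would likely use a stronger learner; the sketch only shows how a learning curve over the number of examples per sign might be computed.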

Launching (data release): CVPR 2011, Colorado Springs, USA, June 2011.

Live competition: ICCV 2011, Barcelona, Spain, November 2011.

Ongoing UNSUPERVISED LEARNING and TRANSFER LEARNING challenge

Recent research has focused on making use of the vast amounts of unlabeled data available at low cost, or of data labeled with “cheap” labels loosely related to the task at hand, with techniques including space transformations, dimensionality reduction, and hierarchical feature representations ("deep learning"). However, these advances tend to be ignored by practitioners, who continue to use a handful of popular algorithms such as PCA, ICA, k-means, and hierarchical clustering. This challenge performs an evaluation of unsupervised and transfer learning algorithms, free of inventor bias, to identify and popularize algorithms that have advanced the state of the art.

Free registration, cash prizes, 2 workshops (IJCNN 2011, ICML 2011), proceedings in JMLR W&CP, much fun! http://clopinet.com/ul

CREDITS

This project is supported by the DARPA Deep Learning project.

Coordinator: Isabelle Guyon, Clopinet, Berkeley, California, USA.

Web platform: Server made available by Prof. Joachim Buhmann, ETH Zurich, Switzerland. Computer admin.: Thomas Fuchs, ETH Zurich. Webmaster: Olivier Guyon, MisterP.net, France.

Protocol review and advising:

- David W. Aha, Naval Research Laboratory, USA.
- Abe Schneider, Knexus Research, USA.
- Graham Taylor, NYU, New York, USA.
- Andrew Ng, Stanford Univ., Palo Alto, California, USA.
- Vassilis Athitsos, University of Texas at Arlington, Texas, USA.
- Ivan Laptev, INRIA, France.
- Jitendra Malik, UC Berkeley, California, USA.
- Christian Vogler, ILSP Athens, Greece.
- Sudeep Sarkar, University of South Florida, USA.
- Philippe Dreuw, RWTH Aachen University, Germany.
- Richard Bowden, Univ. Surrey, UK.
- Greg Mori, Simon Fraser University, Canada.

Data collection and preparation:

- Vassilis Athitsos, University of Texas at Arlington, Texas, USA.
- Isabelle Guyon, Clopinet, California, USA.
- Graham Taylor, NYU, New York, USA.
- Ivan Laptev, INRIA, France.
- Jitendra Malik, UC Berkeley, California, USA.

Baseline methods and beta testing:

The following researchers, experienced in the domain, will provide baseline results:

- Vassilis Athitsos, University of Texas at Arlington, Texas, USA.
- Graham Taylor, NYU, New York, USA.
- Andrew Ng, Stanford Univ., Palo Alto, California, USA.
- Yann LeCun, NYU, New York, USA.
- Ivan Laptev, INRIA, France.

The following researchers, who were top-ranking participants in past challenges but are not experienced in the domain, will also give it a try:

- Alexander Borisov (Intel, USA)
- Hugo-Jair Escalante (INAOE, México)
- Amir Saffari (Graz Univ., Austria)
- Alexander Statnikov (NYU, USA)

- Slides: 7