Generative Networks in Reinforcement Learning

Bharadwaj Ravichandran, bzr49@psu.edu

Overview
• Background
• Introduction
• Technical approaches: outline
• Technical approaches: details
• Summary
• Discussion

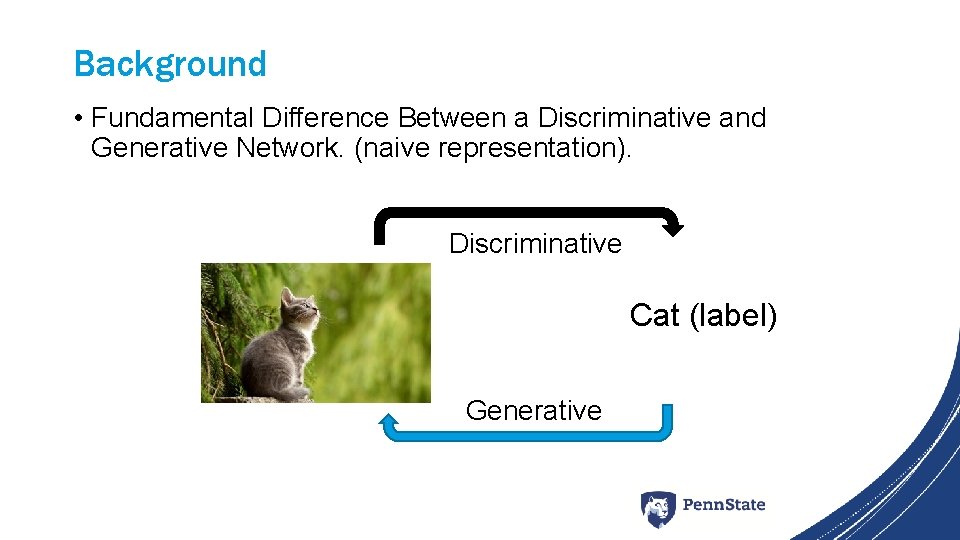

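Background
• Fundamental difference between a discriminative and a generative network (naive representation).
(Figure: a discriminative network maps an input to a label such as "Cat"; a generative network goes the other way and produces a sample.)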

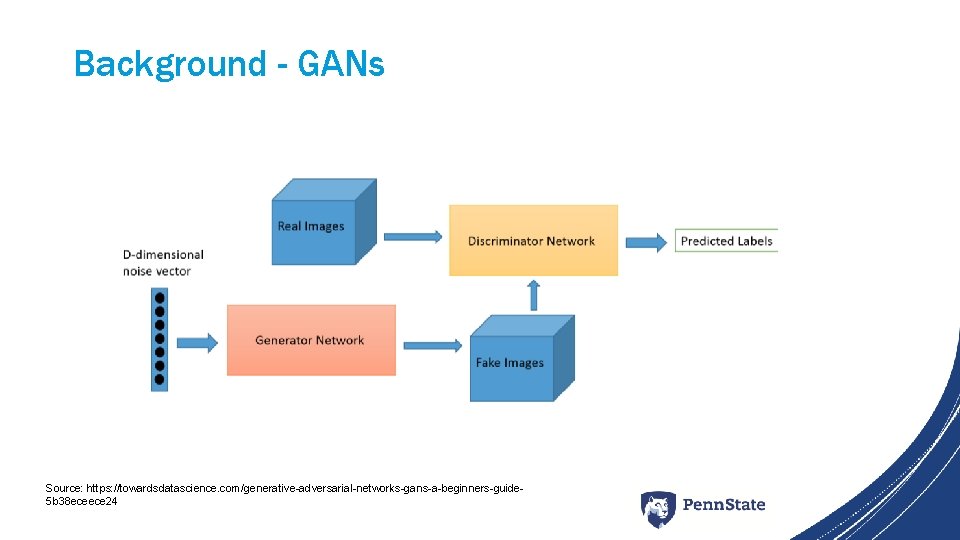
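The following minimal Python sketch makes the distinction concrete on hypothetical 1-D toy data that is not from the slides. The generative side models p(x | y) with one Gaussian per class, so it can sample new "cat-like" inputs. The discriminative side, logistic regression, only models p(y | x), so it can label inputs but cannot create them. The class names, the data, and the helpers `generate` and `classify` are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical 1-D "image features" for two classes: 0 = "dog", 1 = "cat".
x_dog = rng.normal(-2.0, 1.0, size=500)
x_cat = rng.normal(2.0, 1.0, size=500)
x = np.concatenate([x_dog, x_cat])
y = np.concatenate([np.zeros(500), np.ones(500)])

# Generative model: one Gaussian p(x | y) per class, fitted by maximum likelihood.
means = np.array([x_dog.mean(), x_cat.mean()])
stds = np.array([x_dog.std(), x_cat.std()])

def generate(label, n):
    """Sample n new feature values for the given class label (label -> data)."""
    return rng.normal(means[label], stds[label], size=n)

# Discriminative model: logistic regression for p(y = 1 | x), fitted by
# gradient ascent on the average log-likelihood.
w, b = 0.0, 0.0
for _ in range(2000):
    p = 1.0 / (1.0 + np.exp(-(w * x + b)))
    w += 0.1 * np.mean((y - p) * x)
    b += 0.1 * np.mean(y - p)

def classify(value):
    """Return p(y = cat | x = value) (data -> label)."""
    return 1.0 / (1.0 + np.exp(-(w * value + b)))

print(generate(1, 3))                 # three new "cat-like" feature values
print(classify(3.0), classify(-3.0))  # close to 1 and close to 0
```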

Background - GANs
Source: https://towardsdatascience.com/generative-adversarial-networks-gans-a-beginners-guide-5b38eceece24

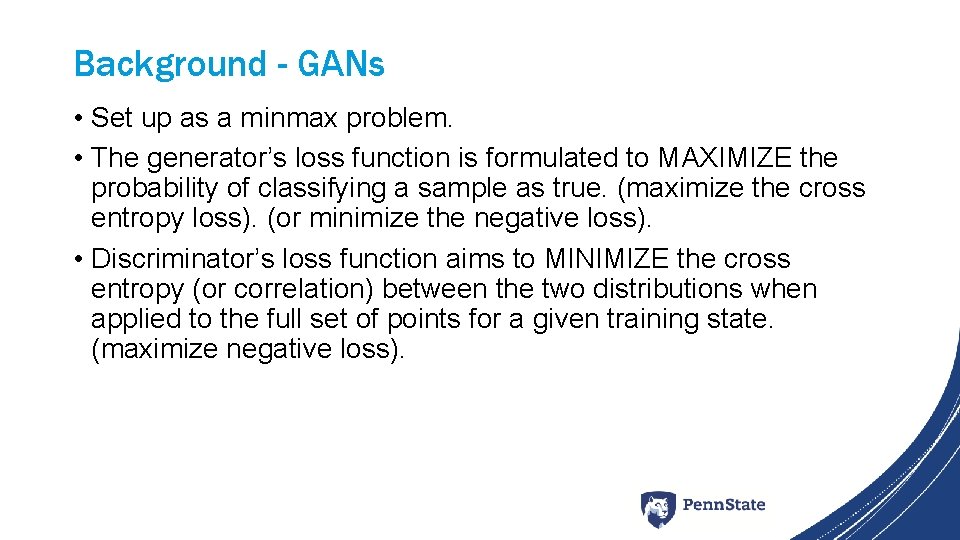

Background - GANs
• Set up as a minimax problem between a generator G and a discriminator D.
• The generator's loss function is formulated to MAXIMIZE the probability that the discriminator classifies a generated sample as real. This is equivalent to maximizing the discriminator's cross-entropy loss, or minimizing its negative.
• The discriminator's loss function aims to MINIMIZE the binary cross-entropy of classifying real samples as real and generated samples as fake. This loss is computed over the full set of real and generated points at a given training state, and minimizing it is equivalent to maximizing the negative loss.

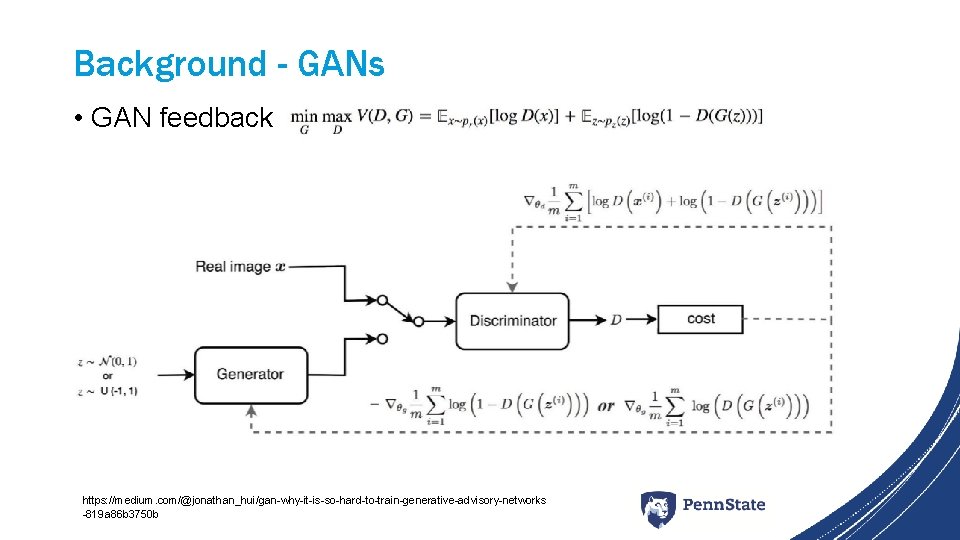
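The minimax game described above is the standard GAN objective of Goodfellow et al. (2014):

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}[\log D(x)] + \mathbb{E}_{z \sim p_z}[\log(1 - D(G(z)))]$$

The per-batch losses can be computed as shown below. This is a hedged illustration in plain NumPy that uses hypothetical discriminator outputs in place of real networks. The non-saturating generator loss is a widely used practical variant and is not stated on the slides.

```python
import numpy as np

EPS = 1e-12  # keeps log() finite when D outputs exactly 0 or 1

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for D: real samples labelled 1, generated samples labelled 0.
    Minimizing it is the same as maximizing V(D, G) with respect to D."""
    d_real = np.asarray(d_real, dtype=float)
    d_fake = np.asarray(d_fake, dtype=float)
    return -np.mean(np.log(d_real + EPS)) - np.mean(np.log(1.0 - d_fake + EPS))

def generator_loss_minimax(d_fake):
    """Generator term of the original minimax game: minimize E_z[log(1 - D(G(z)))]."""
    d_fake = np.asarray(d_fake, dtype=float)
    return np.mean(np.log(1.0 - d_fake + EPS))

def generator_loss_nonsaturating(d_fake):
    """Common practical variant: minimize -E_z[log D(G(z))], pushing D(G(z)) toward 1."""
    d_fake = np.asarray(d_fake, dtype=float)
    return -np.mean(np.log(d_fake + EPS))

# D outputs for two real and two generated samples (hypothetical numbers).
d_real, d_fake = [0.9, 0.8], [0.1, 0.2]
print(discriminator_loss(d_real, d_fake))    # small: D separates real from fake well
print(generator_loss_nonsaturating(d_fake))  # large: G is not yet fooling D
```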

Background - GANs
• GAN feedback (source: https://medium.com/@jonathan_hui/gan-why-it-is-so-hard-to-train-generative-advisory-networks-819a86b3750b)

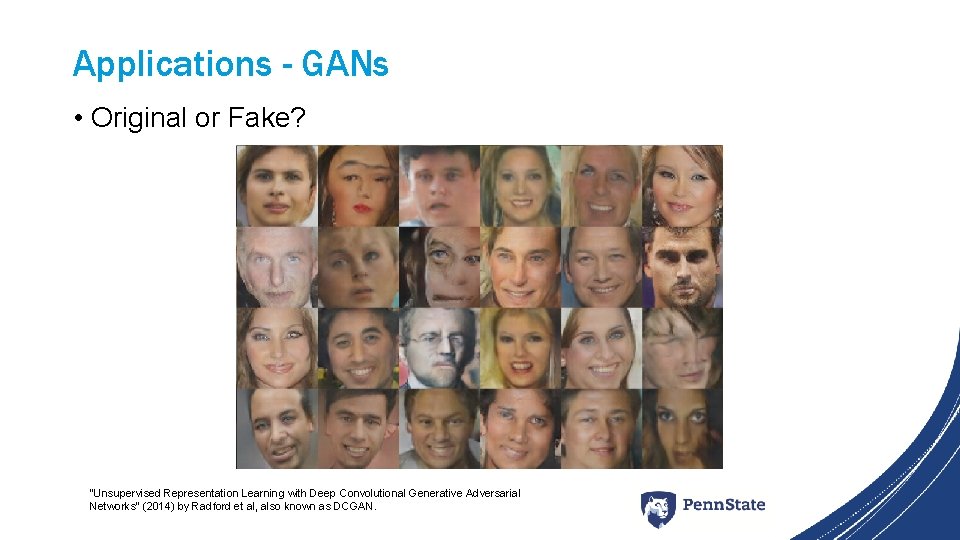

Applications - GANs
• Original or fake? "Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks" (2015) by Radford et al., also known as DCGAN.

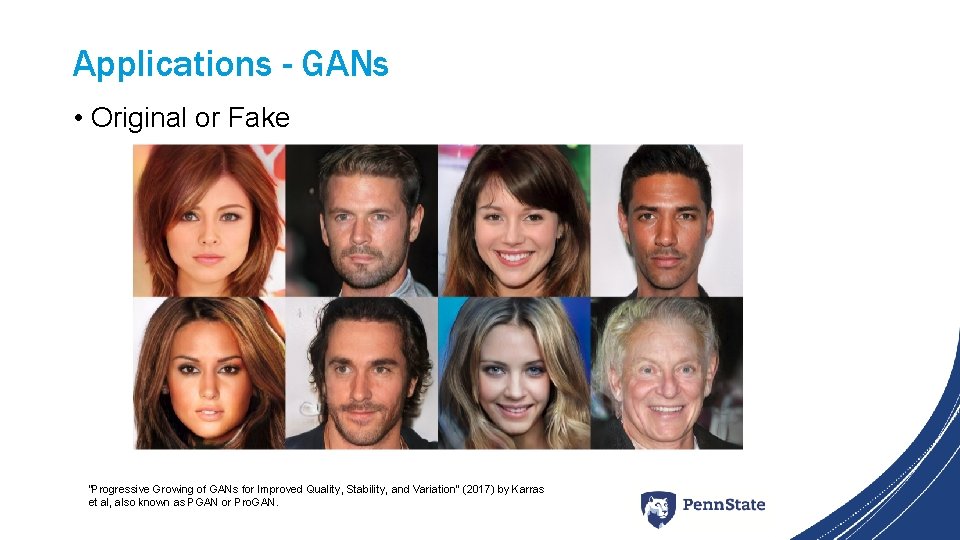

Applications - GANs
• Original or fake? "Progressive Growing of GANs for Improved Quality, Stability, and Variation" (2017) by Karras et al., also known as PGAN or ProGAN.

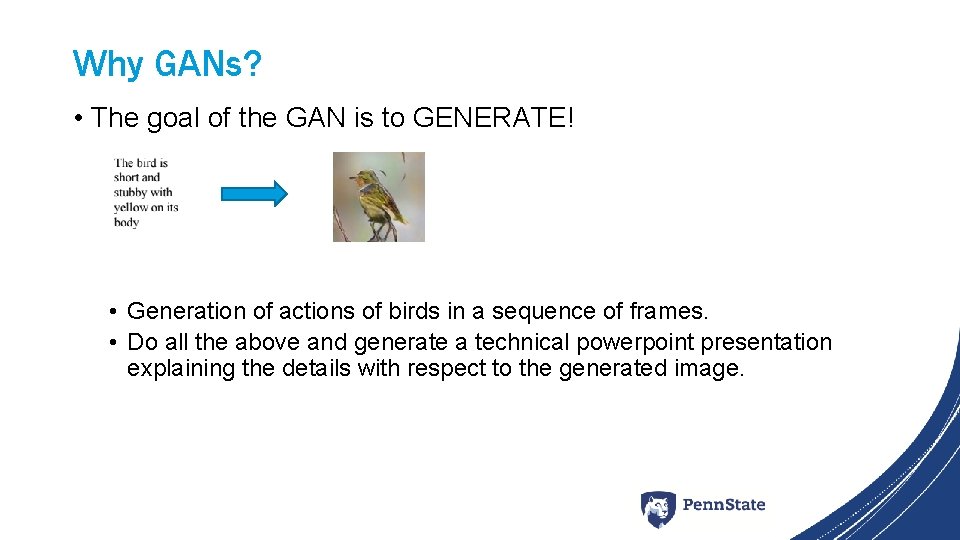

Why GANs?
• The goal of a GAN is to GENERATE!
• For example, generating the actions of birds across a sequence of frames.
• Ultimately: do all of the above, and also generate a technical PowerPoint presentation explaining the details of the generated image.

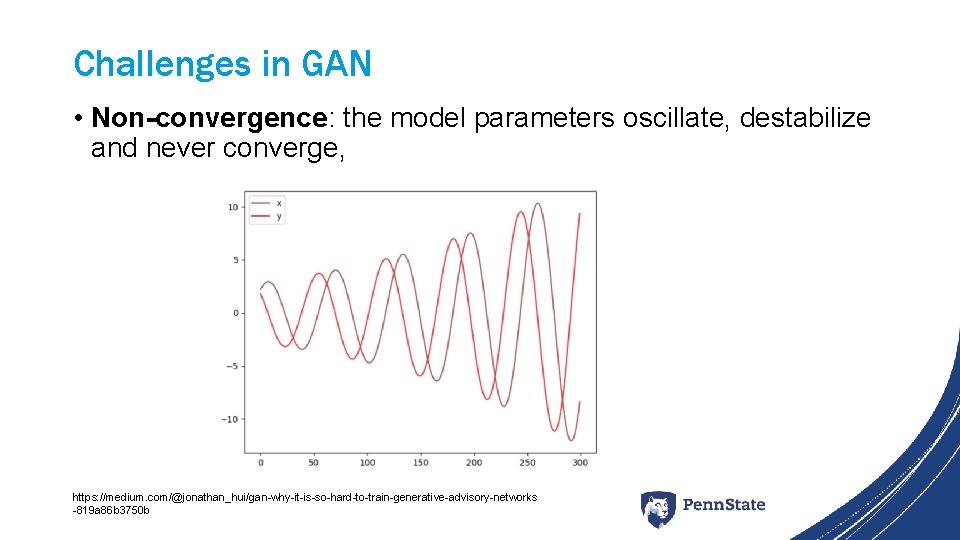

Challenges in GANs
• Non-convergence: the model parameters oscillate, destabilize, and never converge (source: https://medium.com/@jonathan_hui/gan-why-it-is-so-hard-to-train-generative-advisory-networks-819a86b3750b).

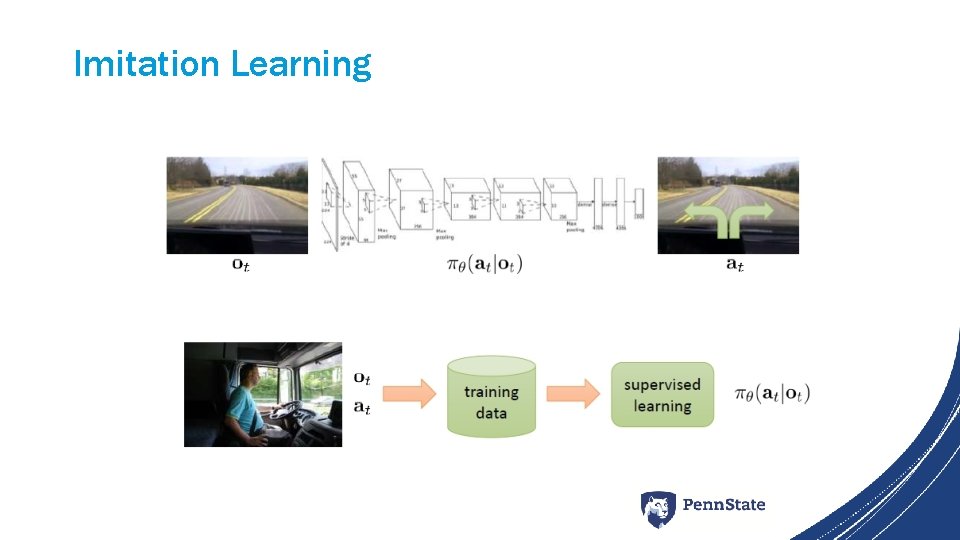
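A classic toy case shows how the parameters can oscillate and drift instead of converging. The case is the bilinear game $\min_x \max_y f(x, y) = xy$, and it is not taken from the slides. Both players take a simultaneous gradient step with learning rate $\eta$. Each step multiplies the squared distance to the equilibrium $(0, 0)$ by exactly $1 + \eta^2$, so the iterates spiral outward. This is a sketch under those assumptions, not a model of any specific GAN.

```python
import numpy as np

def simultaneous_gda(x0, y0, lr, steps):
    """Simultaneous gradient descent on x and ascent on y for min_x max_y f(x, y) = x * y.

    The unique equilibrium is (0, 0). Returns the trajectory, shape (steps + 1, 2).
    """
    x, y = x0, y0
    trajectory = [(x, y)]
    for _ in range(steps):
        grad_x, grad_y = y, x                    # df/dx = y, df/dy = x
        x, y = x - lr * grad_x, y + lr * grad_y  # both players update at the same time
        trajectory.append((x, y))
    return np.array(trajectory)

traj = simultaneous_gda(1.0, 1.0, lr=0.1, steps=100)
radius = np.linalg.norm(traj, axis=1)  # distance from the equilibrium at each step
print(radius[0], radius[-1])           # the distance grows: the iterates spiral outward
```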

Imitation Learning

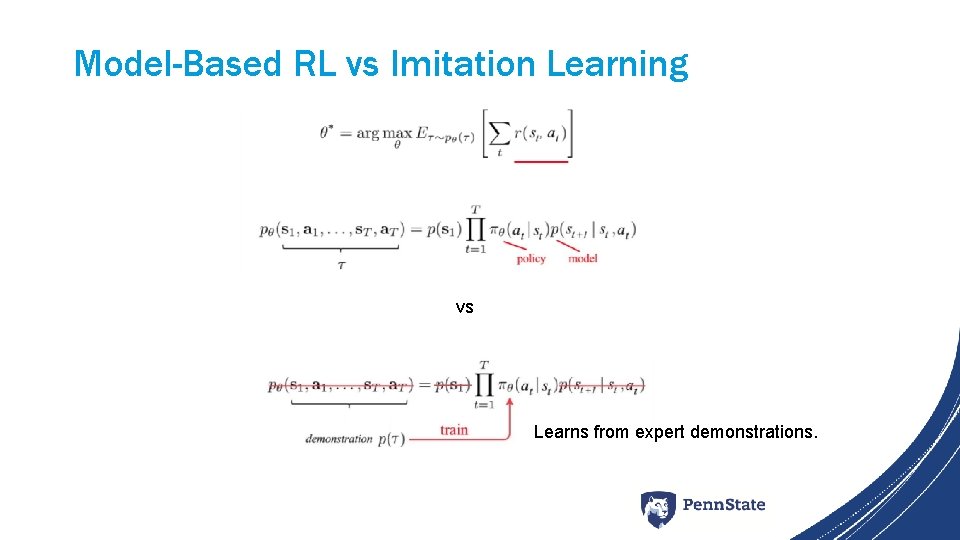

Model-Based RL vs Imitation Learning
• Imitation learning learns from expert demonstrations.

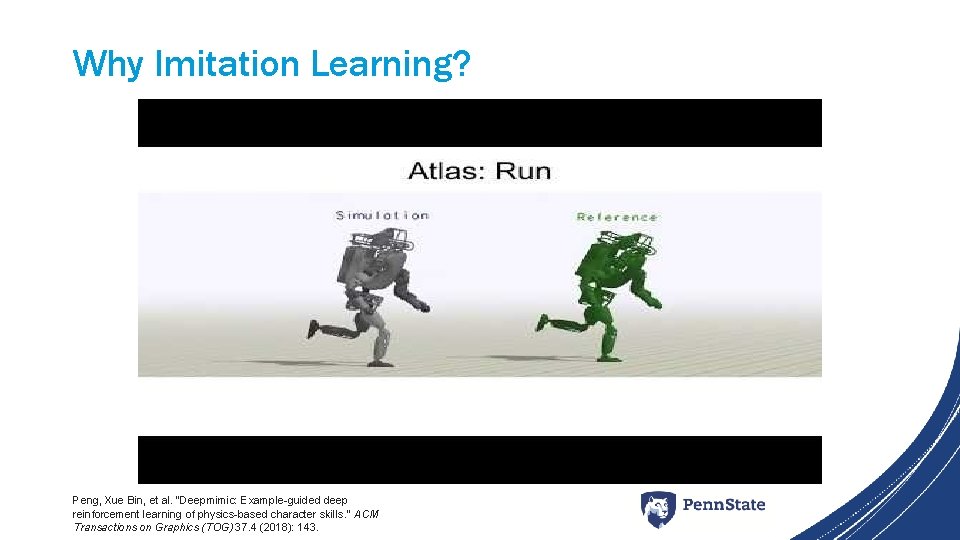

Why Imitation Learning?
Peng, Xue Bin, et al. "DeepMimic: Example-guided deep reinforcement learning of physics-based character skills." ACM Transactions on Graphics (TOG) 37.4 (2018): 143.

Challenges in Imitation Learning • How to collect expert demonstrations?

Challenges in Imitation Learning • How should the policy act in off-course situations, i.e., states that the expert never visited?

Introduction
• Applications of generative models in RL (source: https://www.eurekalert.org/pub_releases/2018-05/imi-cga050918.php).
• After training, the Generator can be used to propose a candidate drug for a previously incurable disease. The Discriminator can then judge whether the sampled candidate is likely to treat that disease.
• A reward function named internal Diversity Clustering (IDC) is introduced to generate more diverse molecules.

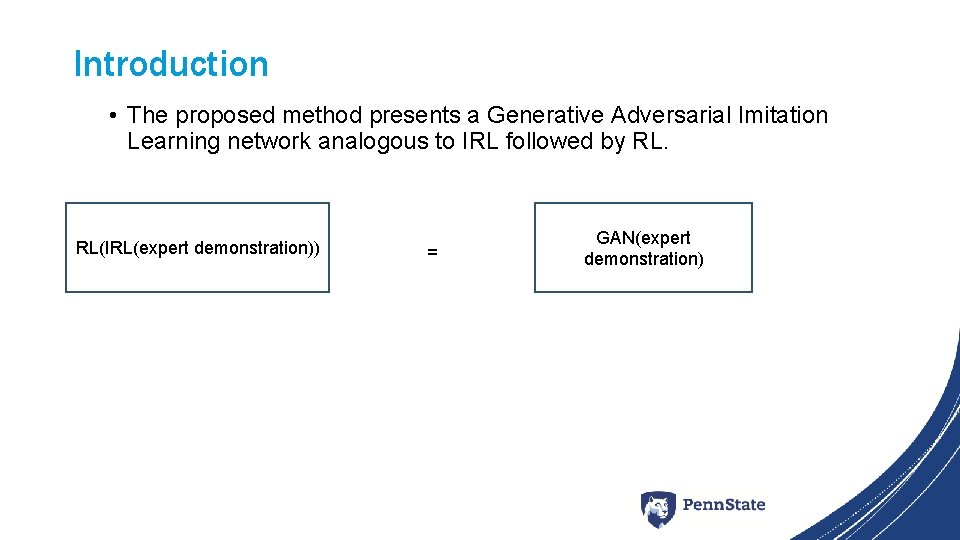

Introduction
• The proposed method, Generative Adversarial Imitation Learning (GAIL), uses a GAN-style network to obtain in a single step what IRL followed by RL obtains in two:
RL(IRL(expert demonstrations)) = GAN(expert demonstrations)

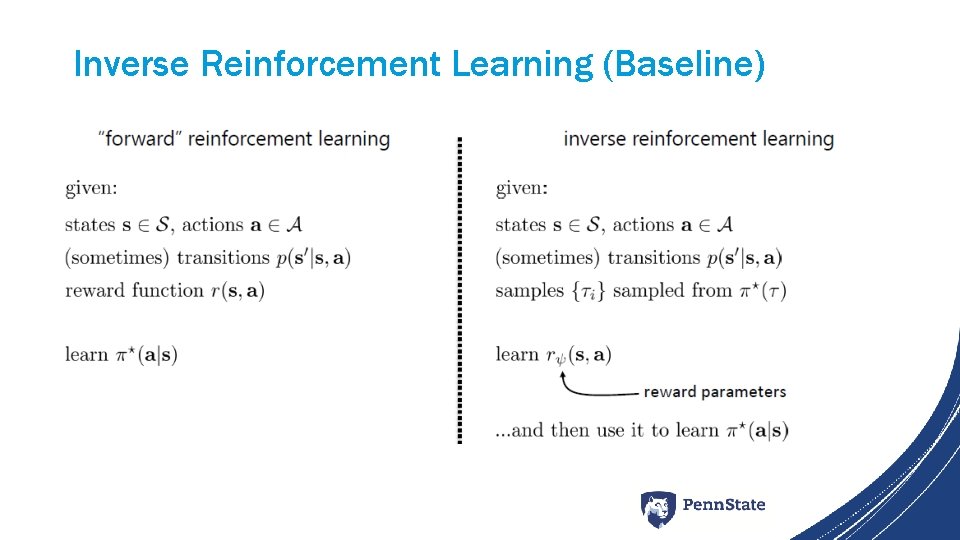

Inverse Reinforcement Learning (Baseline)

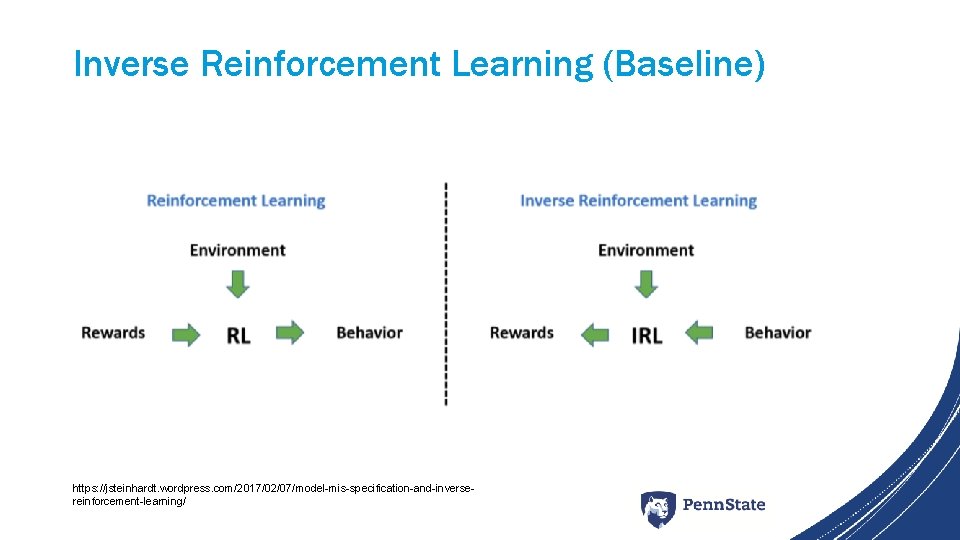

Inverse Reinforcement Learning (Baseline)
Source: https://jsteinhardt.wordpress.com/2017/02/07/model-mis-specification-and-inverse-reinforcement-learning/

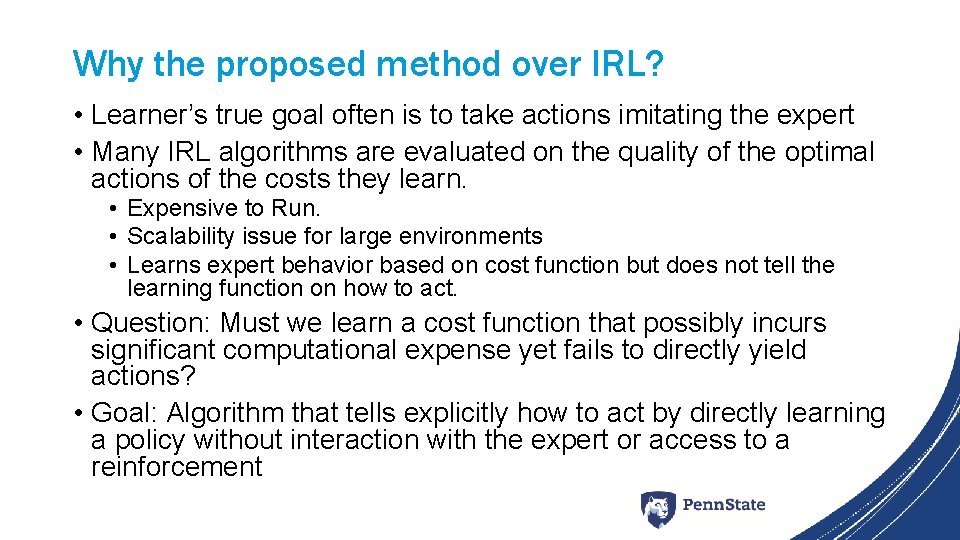

Why the proposed method over IRL?
• The learner's true goal is often to take actions that imitate the expert.
• Many IRL algorithms are evaluated on the quality of the optimal actions under the costs they learn.
• Expensive to run, because IRL needs to solve an RL problem in its inner loop.
• Scalability issues in large environments.
• IRL learns a cost function that explains expert behavior, but it does not tell the learner how to act.
• Question: must we learn a cost function that may incur significant computational expense yet fails to directly yield actions?
• Goal: an algorithm that tells the learner explicitly how to act by directly learning a policy, without interacting with the expert or accessing a reinforcement signal.

Technical Approaches Overview
• Characterization of the policy (baseline)
• Occupancy measure matching (baseline)
• Generative Adversarial Imitation Learning (GAIL): fits the distributions of states and actions that define expert behavior
• InfoGAIL (a variant of GAIL)

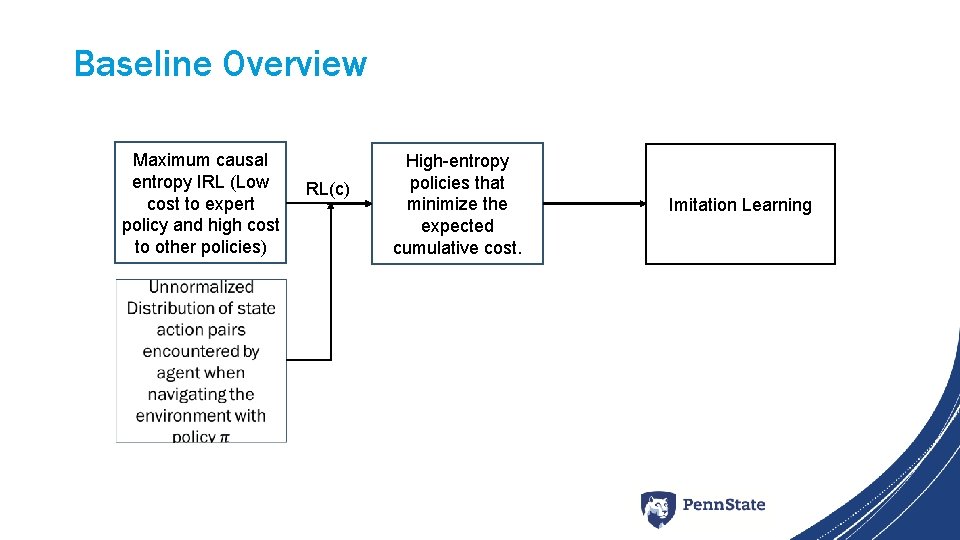

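Baseline Overview
• Maximum causal entropy IRL: learns a cost function that assigns low cost to the expert policy and high cost to other policies.
• RL(c): finds high-entropy policies that minimize the expected cumulative cost.
• Running IRL and then RL on the learned cost gives an imitation learning algorithm.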

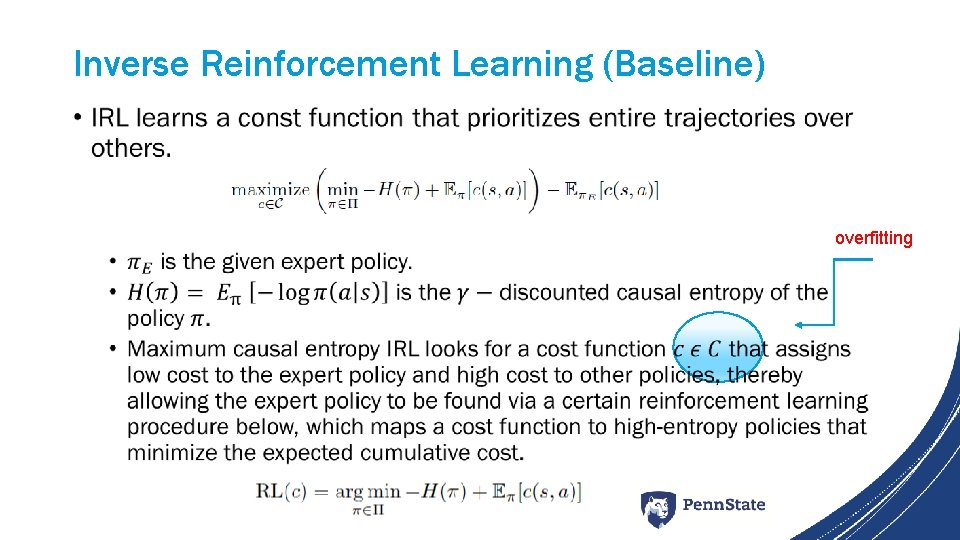
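In Ho & Ermon's notation, $\mathrm{RL}(c) = \arg\min_{\pi} -H(\pi) + \mathbb{E}_{\pi}[c(s, a)]$, where $H(\pi)$ is the $\gamma$-discounted causal entropy. On a small tabular MDP this can be solved with soft (entropy-regularized) value iteration. The sketch below assumes that standard technique for illustration only; in large environments the GAIL paper uses policy-gradient methods such as TRPO. The function name, array layout, and the two-state example are hypothetical.

```python
import numpy as np

def soft_value_iteration(cost, P, gamma=0.9, iters=500):
    """Entropy-regularized RL(c) on a small tabular MDP (illustrative sketch).

    Minimizes E_pi[sum_t gamma^t (c(s_t, a_t) + log pi(a_t | s_t))], i.e. expected
    discounted cost minus discounted causal entropy.
    cost: (S, A) array with c(s, a);  P: (S, A, S) array with P(s' | s, a).
    Returns the stochastic policy pi as an (S, A) array whose rows sum to 1.
    """
    S = cost.shape[0]
    V = np.zeros(S)
    for _ in range(iters):
        Q = cost + gamma * (P @ V)        # (S, A) soft cost-to-go
        m = Q.min(axis=1, keepdims=True)  # shift for numerical stability
        # soft-min over actions: V(s) = -log sum_a exp(-Q(s, a))
        V = (m - np.log(np.exp(-(Q - m)).sum(axis=1, keepdims=True))).ravel()
    Q = cost + gamma * (P @ V)
    pi = np.exp(-(Q - Q.min(axis=1, keepdims=True)))  # pi(a | s) proportional to exp(-Q(s, a))
    return pi / pi.sum(axis=1, keepdims=True)

# Two states, two actions; action a moves deterministically to state a.
# Action 0 costs 0 and action 1 costs 1 in every state (hypothetical numbers).
P = np.zeros((2, 2, 2))
P[:, 0, 0] = 1.0
P[:, 1, 1] = 1.0
cost = np.array([[0.0, 1.0], [0.0, 1.0]])
pi = soft_value_iteration(cost, P)
print(pi)  # the cheaper action is preferred, but the costlier one keeps nonzero probability
```

In this example both next states have the same value, so the two actions differ in soft cost by exactly 1. The policy therefore puts probability $1/(1 + e^{-1})$ on the cheaper action instead of choosing it deterministically, which is the "high-entropy" behavior the slide refers to.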

Inverse Reinforcement Learning (Baseline) • Prone to overfitting when given a finite set of expert demonstrations.

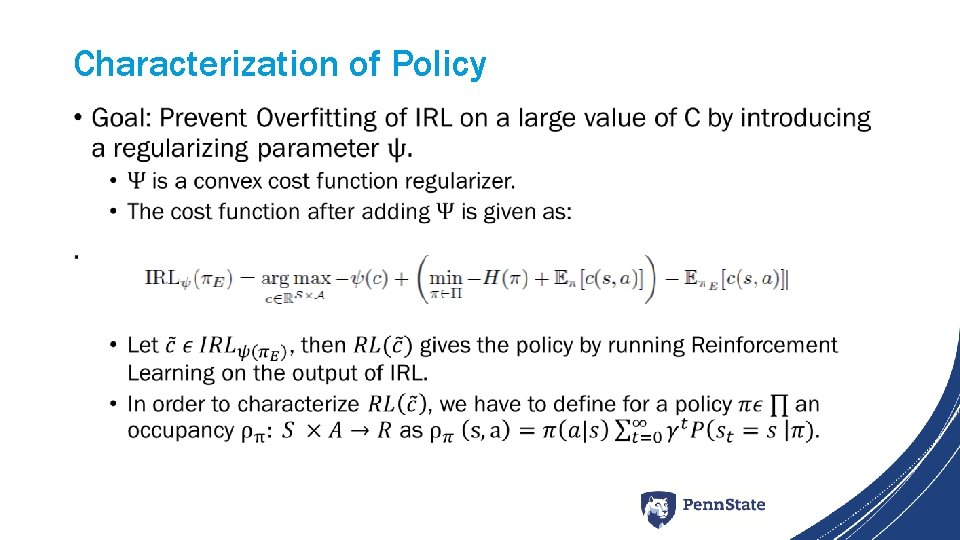

Characterization of the Policy

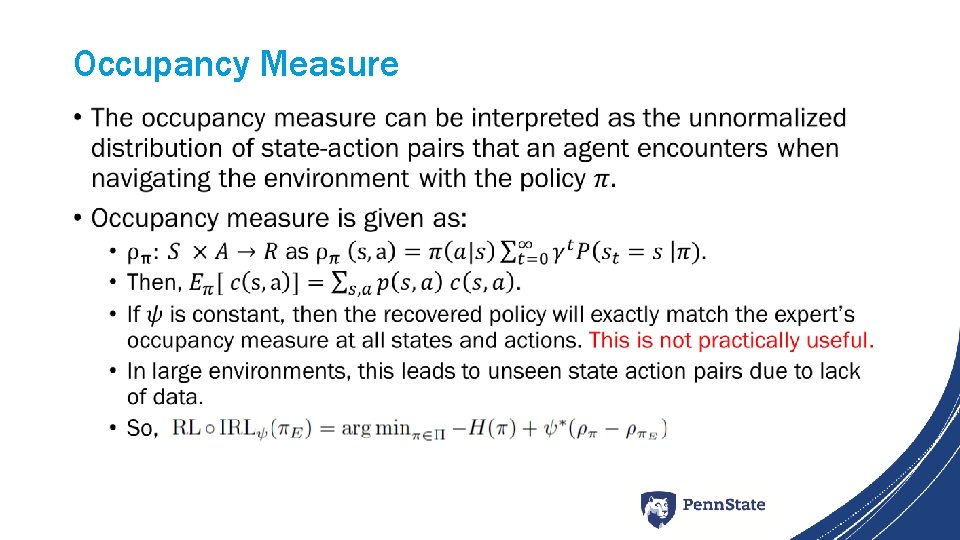
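For reference, the characterization in Ho & Ermon (2016) can be written as follows. Here $\psi$ is a convex cost regularizer, $H(\pi)$ the $\gamma$-discounted causal entropy, $\rho_\pi$ the occupancy measure of $\pi$, and $\psi^*$ the convex conjugate of $\psi$. This is our own transcription of the paper's definitions, so the notation should be checked against the original.

$$
\mathrm{IRL}_\psi(\pi_E) = \arg\max_{c \in \mathbb{R}^{\mathcal{S}\times\mathcal{A}}} \; -\psi(c) + \Big( \min_{\pi \in \Pi} -H(\pi) + \mathbb{E}_{\pi}[c(s,a)] \Big) - \mathbb{E}_{\pi_E}[c(s,a)]
$$

$$
\mathrm{RL}(c) = \arg\min_{\pi \in \Pi} \; -H(\pi) + \mathbb{E}_{\pi}[c(s,a)]
$$

$$
\mathrm{RL} \circ \mathrm{IRL}_\psi(\pi_E) = \arg\min_{\pi \in \Pi} \; -H(\pi) + \psi^*(\rho_\pi - \rho_{\pi_E}),
\qquad \psi^*(x) = \sup_{c \in \mathbb{R}^{\mathcal{S}\times\mathcal{A}}} x^\top c - \psi(c)
$$

Read this way, running RL on the cost recovered by IRL returns the maximum-entropy policy whose occupancy measure is closest to the expert's, with closeness measured by $\psi^*$.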

Occupancy Measure

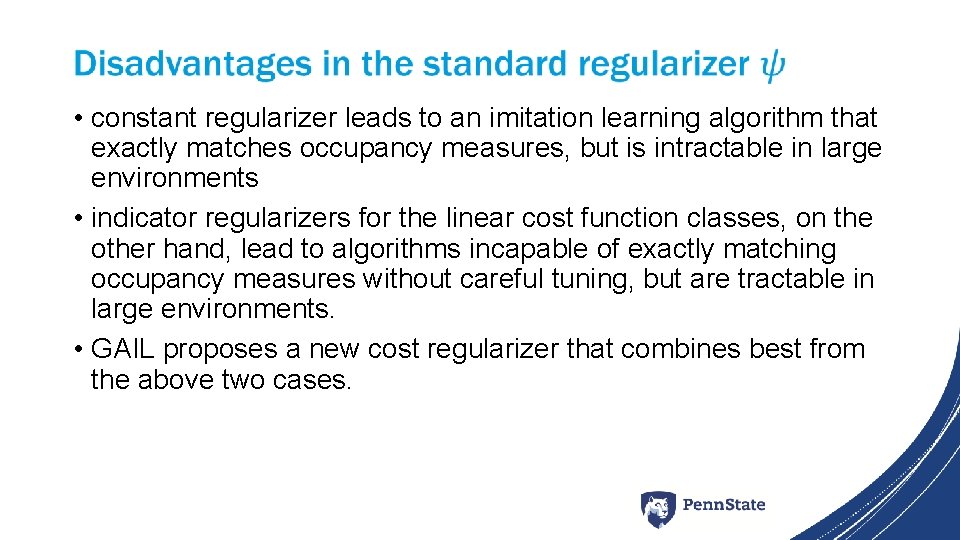

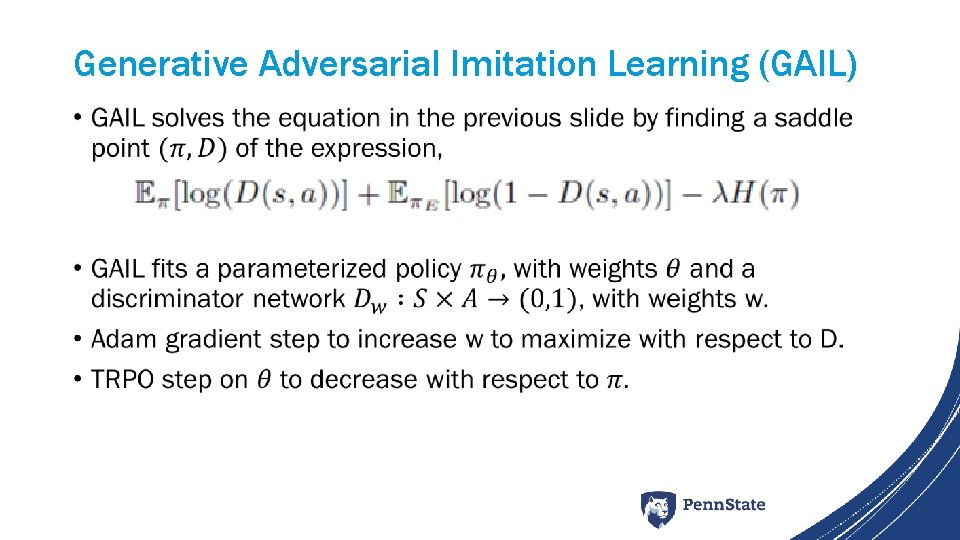
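Ho & Ermon define the occupancy measure as $\rho_\pi(s, a) = \pi(a \mid s) \sum_{t=0}^{\infty} \gamma^t P(s_t = s \mid \pi)$. They use the fact that policies and valid occupancy measures are in one-to-one correspondence, with $\pi_\rho(a \mid s) = \rho(s, a) / \sum_{a'} \rho(s, a')$. The NumPy sketch below computes $\rho_\pi$ for a small tabular MDP by solving a linear system and then recovers the policy from it. The random MDP, the uniform start distribution, and the function names are hypothetical.

```python
import numpy as np

def occupancy_measure(pi, P, p0, gamma=0.9):
    """rho_pi(s, a) = pi(a | s) * sum_t gamma^t P(s_t = s | pi) for a tabular MDP.

    pi: (S, A) policy, P: (S, A, S) transitions P(s' | s, a), p0: (S,) start distribution.
    """
    # State-to-state transition matrix under pi: P_pi[s, t] = sum_a pi(a | s) P(t | s, a)
    P_pi = np.einsum("sa,sat->st", pi, P)
    # Discounted state visitation d satisfies d = p0 + gamma * P_pi^T d
    d = np.linalg.solve(np.eye(len(p0)) - gamma * P_pi.T, p0)
    return d[:, None] * pi

def policy_from_occupancy(rho):
    """Invert the correspondence: pi_rho(a | s) = rho(s, a) / sum_a' rho(s, a')."""
    return rho / rho.sum(axis=1, keepdims=True)

# Random 4-state, 3-action MDP and policy (hypothetical).
rng = np.random.default_rng(0)
S, A, gamma = 4, 3, 0.9
P = rng.random((S, A, S))
P /= P.sum(axis=2, keepdims=True)
pi = rng.random((S, A))
pi /= pi.sum(axis=1, keepdims=True)
p0 = np.full(S, 1.0 / S)

rho = occupancy_measure(pi, P, p0, gamma)
print(rho.sum())                                    # total mass is 1 / (1 - gamma)
print(np.allclose(policy_from_occupancy(rho), pi))  # the policy is recovered exactly
```

Every state has positive visitation here because the start distribution is uniform. Without that, the division in `policy_from_occupancy` would be undefined for unvisited states.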

• A constant regularizer leads to an imitation learning algorithm that exactly matches occupancy measures, but it is intractable in large environments.
• Indicator regularizers for linear cost function classes, on the other hand, lead to algorithms that cannot exactly match occupancy measures without careful tuning, but they are tractable in large environments.
• GAIL proposes a new cost regularizer that combines the best of both cases.

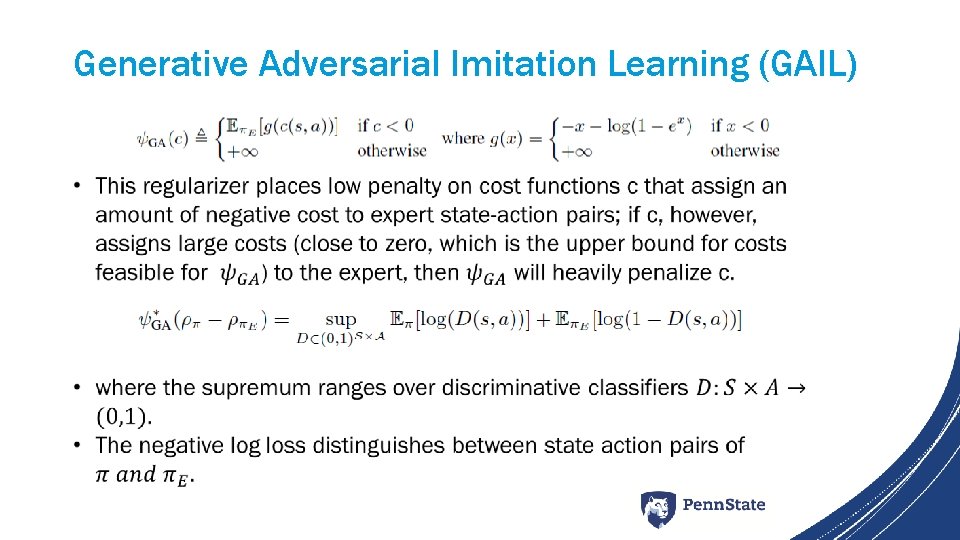

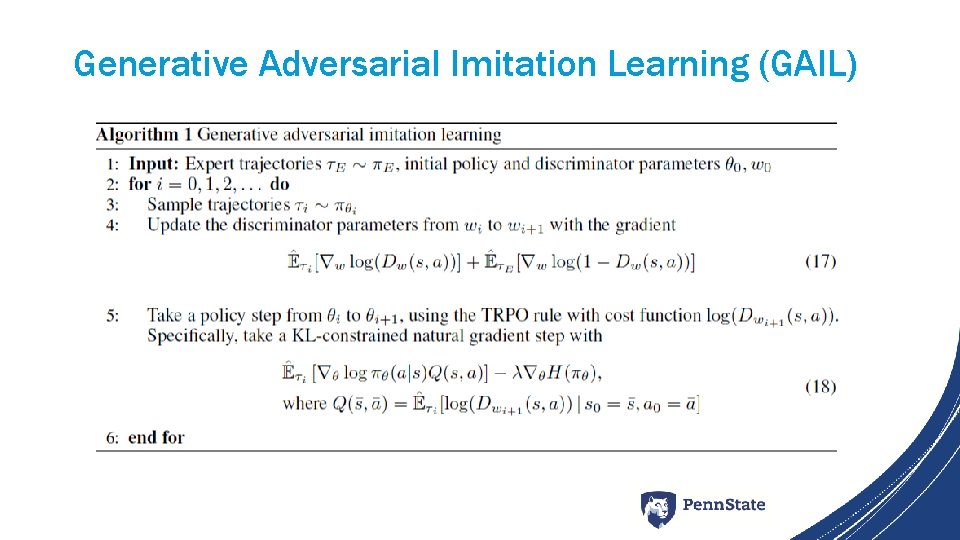
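The three regularizer choices contrasted above can be sketched in the notation of Ho & Ermon (2016) as follows. This is our own transcription: $\mathcal{C}$ denotes a linear cost class, $\delta_{\mathcal{C}}$ its indicator (0 on $\mathcal{C}$, $+\infty$ elsewhere), $D$ a discriminator with values in $(0, 1)$, and $\lambda \ge 0$ an entropy weight.

$$
\psi = \text{const} \;\Rightarrow\; \mathrm{RL}\circ\mathrm{IRL}_\psi(\pi_E) = \arg\min_{\pi} \; -H(\pi) \quad \text{s.t.} \quad \rho_\pi = \rho_{\pi_E}
$$

$$
\psi = \delta_{\mathcal{C}} \;\Rightarrow\; \psi^*(\rho_\pi - \rho_{\pi_E}) = \max_{c \in \mathcal{C}} \; \mathbb{E}_{\pi}[c(s,a)] - \mathbb{E}_{\pi_E}[c(s,a)]
$$

$$
\psi^*_{GA}(\rho_\pi - \rho_{\pi_E}) = \max_{D \in (0,1)^{\mathcal{S}\times\mathcal{A}}} \; \mathbb{E}_{\pi}[\log D(s,a)] + \mathbb{E}_{\pi_E}[\log(1 - D(s,a))],
\qquad
\text{GAIL:} \;\; \min_{\pi} \; \psi^*_{GA}(\rho_\pi - \rho_{\pi_E}) - \lambda H(\pi)
$$

The last line shows why GAIL is "GAN-like". The inner maximization is a discriminator separating policy state-action pairs from expert ones, and the outer minimization trains the policy to make that separation hard.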

Generative Adversarial Imitation Learning (GAIL)

Generative Adversarial Imitation Learning (GAIL)

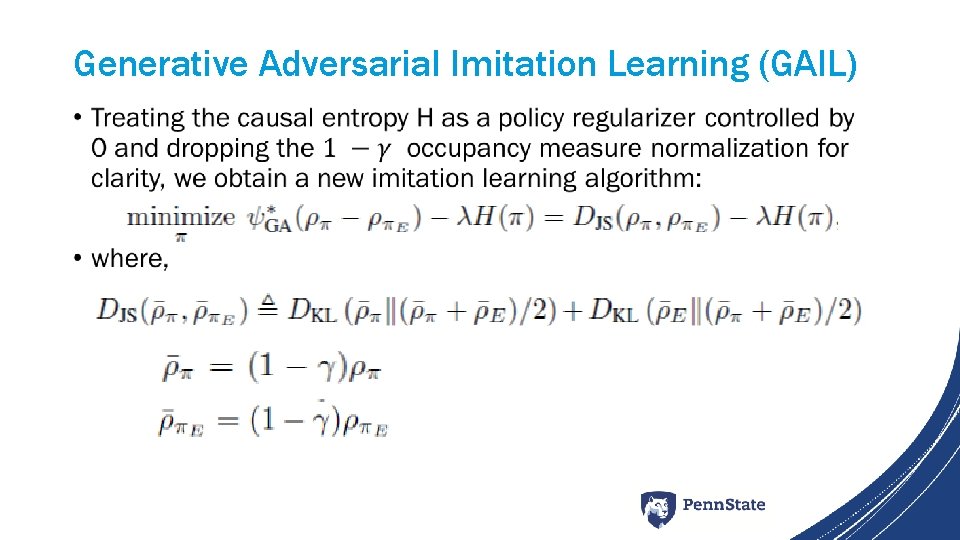

Generative Adversarial Imitation Learning (GAIL)

Generative Adversarial Imitation Learning (GAIL)

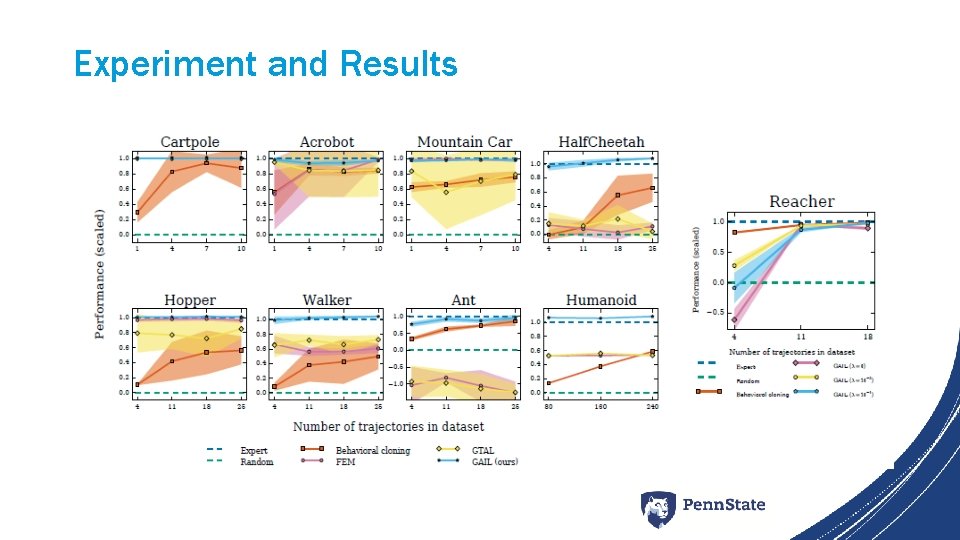
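GAIL training alternates two steps. The first is a discriminator update that ascends $\mathbb{E}_{\pi}[\log D(s, a)] + \mathbb{E}_{\pi_E}[\log(1 - D(s, a))]$. The second is a policy update that treats $\log D(s, a)$ as a cost; the paper uses TRPO for this step. Below is a minimal NumPy sketch of only the discriminator half, and it rests on several assumptions. The discriminator is linear-logistic rather than the paper's neural network, it operates on hypothetical hand-made feature vectors $\phi(s, a)$, and it is trained with plain full-batch gradient ascent. The function names are illustrative.

```python
import numpy as np

def sigmoid(z):
    """Numerically stable logistic function."""
    return np.exp(-np.logaddexp(0.0, -z))

def train_gail_discriminator(feat_policy, feat_expert, lr=0.1, steps=2000):
    """Logistic discriminator D(s, a) = sigmoid(w . phi(s, a) + b).

    Gradient ascent on E_pi[log D] + E_piE[log(1 - D)], following the paper's sign
    convention: D -> 1 on policy samples and D -> 0 on expert samples.
    feat_policy, feat_expert: (N, k) arrays of hypothetical features phi(s, a).
    """
    w, b = np.zeros(feat_policy.shape[1]), 0.0
    for _ in range(steps):
        d_pi = sigmoid(feat_policy @ w + b)
        d_ex = sigmoid(feat_expert @ w + b)
        # d/dz log sigmoid(z) = 1 - sigmoid(z);  d/dz log(1 - sigmoid(z)) = -sigmoid(z)
        grad_w = feat_policy.T @ (1.0 - d_pi) / len(d_pi) - feat_expert.T @ d_ex / len(d_ex)
        grad_b = np.mean(1.0 - d_pi) - np.mean(d_ex)
        w = w + lr * grad_w
        b = b + lr * grad_b
    return w, b

def gail_cost(features, w, b):
    """Surrogate cost for the policy step: c(s, a) = log D(s, a) = -log(1 + exp(-z))."""
    return -np.logaddexp(0.0, -(features @ w + b))

# Hypothetical 2-D features: expert pairs cluster near +1, current-policy pairs near -1.
rng = np.random.default_rng(0)
expert = rng.normal(1.0, 1.0, size=(500, 2))
policy = rng.normal(-1.0, 1.0, size=(500, 2))
w, b = train_gail_discriminator(policy, expert)
print(gail_cost(expert, w, b).mean(), gail_cost(policy, w, b).mean())
```

Expert-like state-action pairs end up with a lower cost, so a policy that minimizes this cost is pushed toward the expert's occupancy measure. In a full implementation the cost would be passed to a policy-optimization step, new policy samples would be collected, and both steps would repeat; that loop is omitted here.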

Experiment and Results

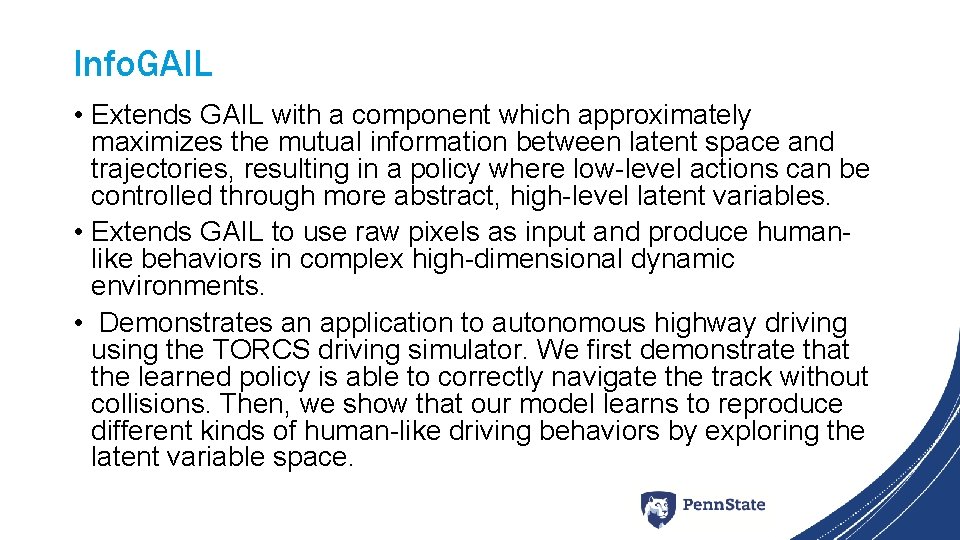

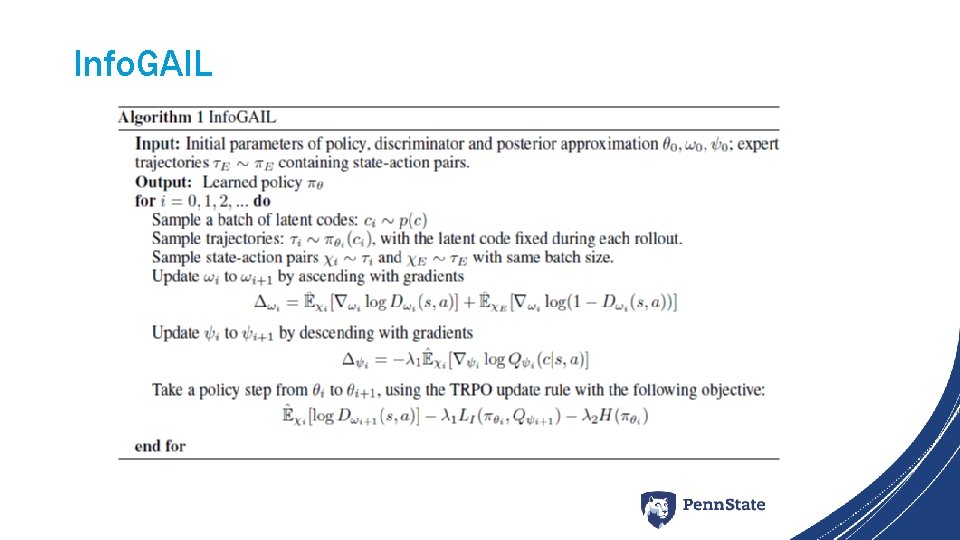

InfoGAIL
• Extends GAIL with a component that approximately maximizes the mutual information between the latent space and trajectories. The result is a policy whose low-level actions can be controlled through more abstract, high-level latent variables.
• Extends GAIL to use raw pixels as input and to produce human-like behaviors in complex, high-dimensional, dynamic environments.
• Demonstrates an application to autonomous highway driving using the TORCS driving simulator. First, the learned policy correctly navigates the track without collisions. Then, exploring the latent variable space shows that the model reproduces different kinds of human-like driving behaviors.
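InfoGAIL's mutual-information term can be sketched through a variational lower bound. As we understand the InfoGAIL paper, a latent code $c$ and a trajectory $\tau$ are generated by $\pi(\cdot \mid s, c)$, and an approximate posterior $Q(c \mid \tau)$ gives

$$L_I(\pi, Q) = \mathbb{E}_{c \sim p(c),\, a \sim \pi(\cdot \mid s, c)}[\log Q(c \mid \tau)] + H(c) \le I(c; \tau).$$

This term enters the objective as $\min_{\pi, Q} \max_D \; \mathbb{E}_{\pi}[\log D(s,a)] + \mathbb{E}_{\pi_E}[\log(1 - D(s,a))] - \lambda_1 L_I(\pi, Q) - \lambda_2 H(\pi)$. The NumPy function below is a hypothetical Monte Carlo estimator of $L_I$ for discrete codes. It takes the posterior probabilities that some network $Q$ would output; the network itself and the policy are not modeled.

```python
import numpy as np

def mutual_information_lower_bound(codes, q_probs, prior):
    """Monte Carlo estimate of L_I = E[log Q(c | tau)] + H(c), a lower bound on I(c; tau).

    codes:   (N,) integer latent codes c_i, sampled from the prior and given to the policy
    q_probs: (N, K) posterior probabilities Q(c | tau_i) for each generated trajectory
    prior:   (K,) prior distribution p(c) over the K discrete codes
    """
    codes = np.asarray(codes)
    log_q = np.log(q_probs[np.arange(len(codes)), codes])  # log Q(c_i | tau_i)
    entropy_c = -np.sum(prior * np.log(prior))              # H(c)
    return log_q.mean() + entropy_c

prior = np.array([0.5, 0.5])
codes = np.array([0, 1, 1, 0])
informative = np.eye(2)[codes]        # Q recovers each code with certainty
uninformative = np.full((4, 2), 0.5)  # Q ignores the trajectory
print(mutual_information_lower_bound(codes, informative, prior))    # log 2, the maximum
print(mutual_information_lower_bound(codes, uninformative, prior))  # 0 (up to rounding)
```

The bound reaches $H(c)$ only when the trajectories reveal the code. Maximizing it therefore encourages the policy to behave distinguishably for different latent values, and this is what makes the latent variables usable as high-level controls.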

InfoGAIL

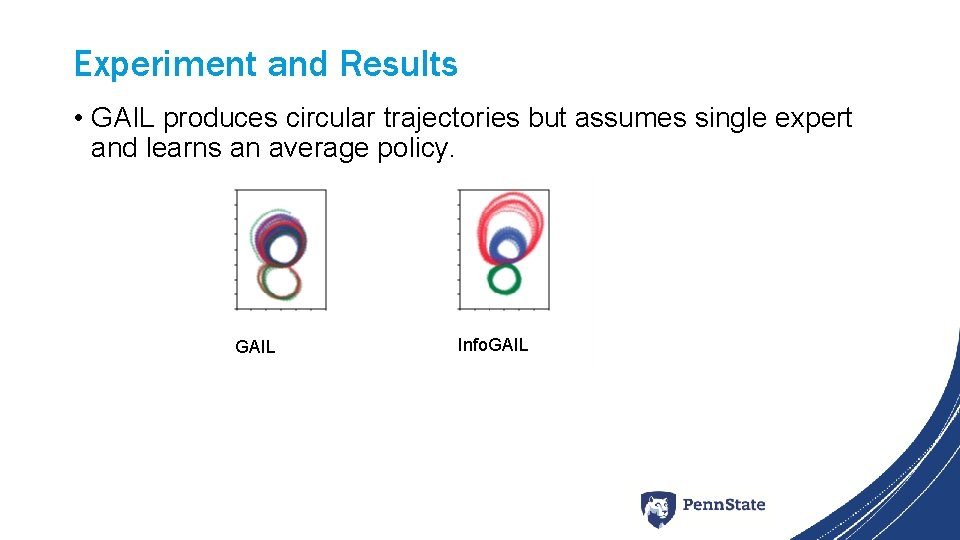

Experiment and Results
• GAIL produces circular trajectories, but it assumes a single expert and therefore learns an average policy.
(Figure panels: GAIL, InfoGAIL.)

Summary
• Combines IRL followed by RL into a single step.
• Model-free method, so it typically requires more environment interaction than model-based methods.
• Sample efficient in terms of expert data.
• Not particularly sample efficient in terms of environment interaction during training.
• InfoGAIL accommodates demonstrations from multiple experts, whereas GAIL assumes a single expert.

Future Improvements
• Improve generalizability across problems (environments).
• The method predicts actions directly from the policy without a separate IRL step, but convergence of the parameters is not guaranteed. Better tuning is needed to ensure convergence.
• Extend the approach to more complex scenarios.

References
• Ho, Jonathan, and Stefano Ermon. "Generative adversarial imitation learning." Advances in Neural Information Processing Systems. 2016.
• Li, Yunzhu, Jiaming Song, and Stefano Ermon. "InfoGAIL: Interpretable imitation learning from visual demonstrations." Advances in Neural Information Processing Systems. 2017.

Discussion • Questions?
