
Gaze-Centered Updating of Remembered Visual Space During Active Whole-Body Translations

Stan Van Pelt and W. Pieter Medendorp

J. Neurophysiol 97: 1209-1220, 2007

Journal Club, January 17, 2008

§ Research on rotational (eye) movements suggests that various cortical and subcortical structures update the gaze-centred coordinates of remembered visual stimuli, both to maintain an accurate representation of visual space and to produce motor plans
§ Translation is a challenge for the visual system: motion parallax shifts objects in front of and behind the fixation point (FP) in opposite directions on the retina

§ The authors aim to investigate which reference frame serves as the computational basis for spatial updating during translations
§ They propose two models whose predictions distinguish between the reference frames
  – Gaze-dependent model
  – Gaze-independent model

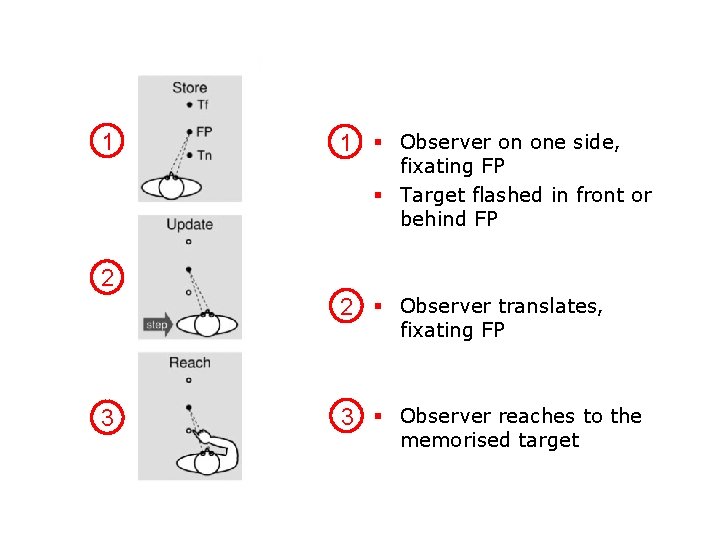

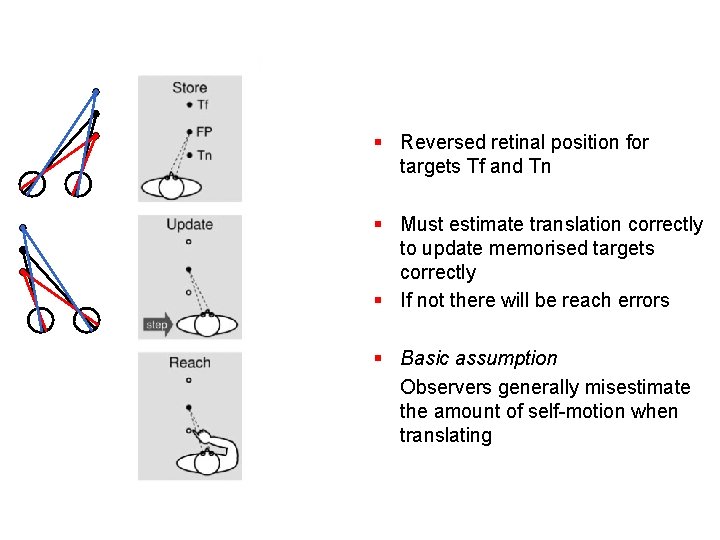

1. Observer on one side, fixating FP; target flashed in front of or behind FP
2. Observer translates while fixating FP
3. Observer reaches to the memorised target

§ Retinal position reverses for targets Tf and Tn
§ The observer must estimate the translation correctly to update the memorised targets correctly
§ If not, there will be reach errors
§ Basic assumption: observers generally misestimate the amount of self-motion when translating
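The reversal of retinal position can be checked with a little trigonometry. The sketch below is illustrative only: the depths, the lateral positions, and the function name `retinal_angle_deg` are assumptions, not values from the paper. It computes the horizontal angle of a midline target relative to the line of gaze before and after a sideways step while the observer keeps fixating FP.

```python
import math

def retinal_angle_deg(observer_x, target_depth, fixation_depth):
    """Horizontal angle (deg) of a midline target relative to the line of gaze.

    Positive means the target lies to the right of the fixation point (FP).
    The observer stands at lateral position observer_x (m) and fixates an FP
    on the midline; the target is also on the midline, nearer or farther.
    """
    target_direction = math.atan2(-observer_x, target_depth)
    gaze_direction = math.atan2(-observer_x, fixation_depth)
    return math.degrees(target_direction - gaze_direction)

# Hypothetical geometry (metres), not taken from the paper.
FIXATION_DEPTH = 0.5
TARGET_DEPTHS = {"in front of FP": 0.3, "behind FP": 0.7}
START_X, END_X = -0.1, 0.1  # observer steps from left to right

for label, depth in TARGET_DEPTHS.items():
    before = retinal_angle_deg(START_X, depth, FIXATION_DEPTH)
    after = retinal_angle_deg(END_X, depth, FIXATION_DEPTH)
    print(f"{label}: {before:+.1f} deg before, {after:+.1f} deg after")
```

With this geometry the target in front of FP starts to the right of fixation and ends to the left, while the target behind FP does the opposite.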

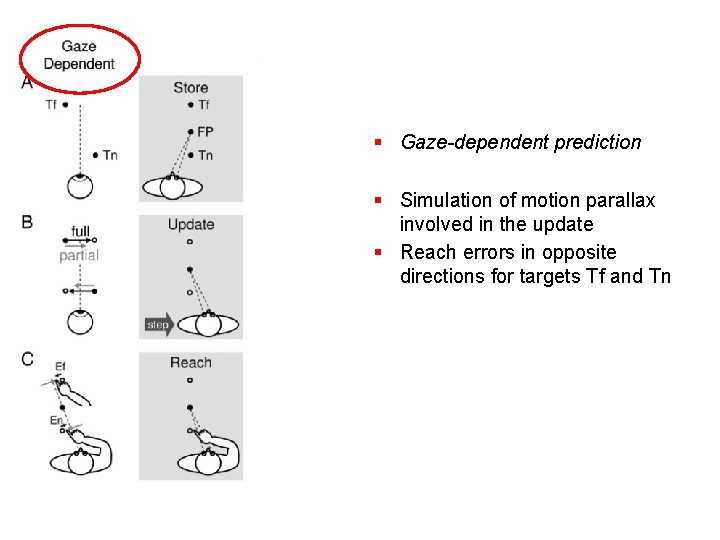

§ Gaze-dependent prediction
§ Updating involves a simulation of motion parallax
§ Reach errors in opposite directions for targets Tf and Tn

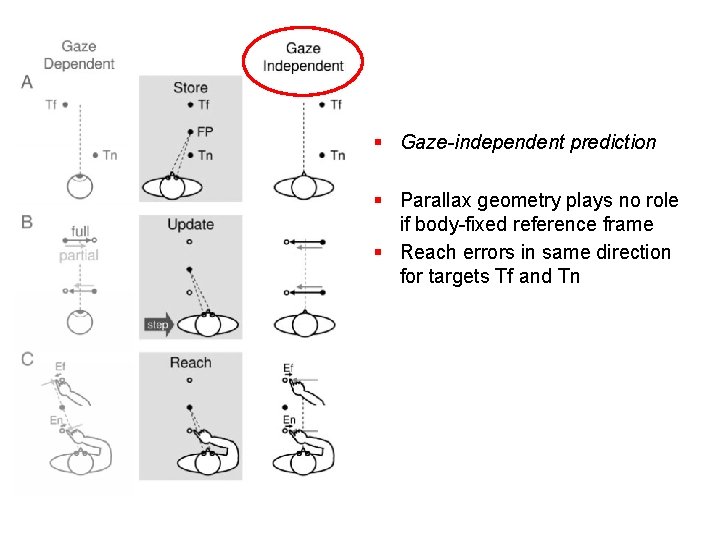

§ Gaze-independent prediction
§ Parallax geometry plays no role in a body-fixed reference frame
§ Reach errors in the same direction for targets Tf and Tn
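The two predictions can be made concrete with a toy simulation. This is one possible formalisation, assumed here for illustration and not the authors' actual model: the observer believes they moved only `gain` times the true translation, the gaze-independent model shifts a body-fixed target vector by the believed translation, and the gaze-dependent model stores the target relative to the believed line of gaze and reads it out along the actual line of gaze. All names and numbers are hypothetical.

```python
import math

def rotate(vec, angle):
    """Rotate a 2-D vector (x = lateral, y = depth) counter-clockwise by angle (rad)."""
    x, y = vec
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y)

def gaze_angle(observer_x, fixation):
    """Direction of the line of gaze from the observer (at depth 0) to the fixation point."""
    return math.atan2(fixation[1], fixation[0] - observer_x)

def lateral_reach_error(model, target, fixation, start_x, end_x, gain):
    """Lateral reach error (m) when translation is misestimated by `gain`."""
    believed_x = start_x + gain * (end_x - start_x)
    if model == "gaze_independent":
        # Body-fixed target vector, updated with the believed translation
        # and executed from the true end position.
        reach_x = end_x + (target[0] - believed_x)
    elif model == "gaze_dependent":
        # Target expressed relative to the believed line of gaze ...
        relative = (target[0] - believed_x, target[1])
        gaze_centred = rotate(relative, -gaze_angle(believed_x, fixation))
        # ... and read out relative to the actual line of gaze.
        world = rotate(gaze_centred, gaze_angle(end_x, fixation))
        reach_x = end_x + world[0]
    else:
        raise ValueError(f"unknown model: {model}")
    return reach_x - target[0]

# Hypothetical geometry (metres): FP and targets on the midline.
FIXATION = (0.0, 0.5)
NEAR, FAR = (0.0, 0.3), (0.0, 0.7)

for model in ("gaze_dependent", "gaze_independent"):
    near_err = lateral_reach_error(model, NEAR, FIXATION, -0.1, 0.1, gain=0.5)
    far_err = lateral_reach_error(model, FAR, FIXATION, -0.1, 0.1, gain=0.5)
    print(f"{model}: near {near_err:+.3f} m, far {far_err:+.3f} m")
```

Under these assumptions the gaze-dependent errors have opposite signs for the near and far targets, whereas the gaze-independent error is the same for both and does not depend on target depth.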

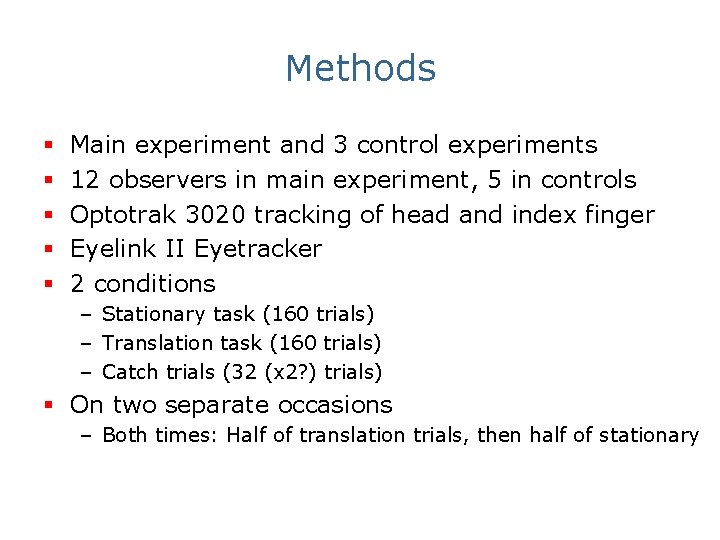

Methods
§ Main experiment and 3 control experiments
§ 12 observers in main experiment, 5 in controls
§ Optotrak 3020 tracking of head and index finger
§ Eyelink II eye tracker
§ 2 conditions
  – Stationary task (160 trials)
  – Translation task (160 trials)
  – Catch trials (32 (x 2?) trials)
§ On two separate occasions
  – Both times: half of the translation trials, then half of the stationary trials

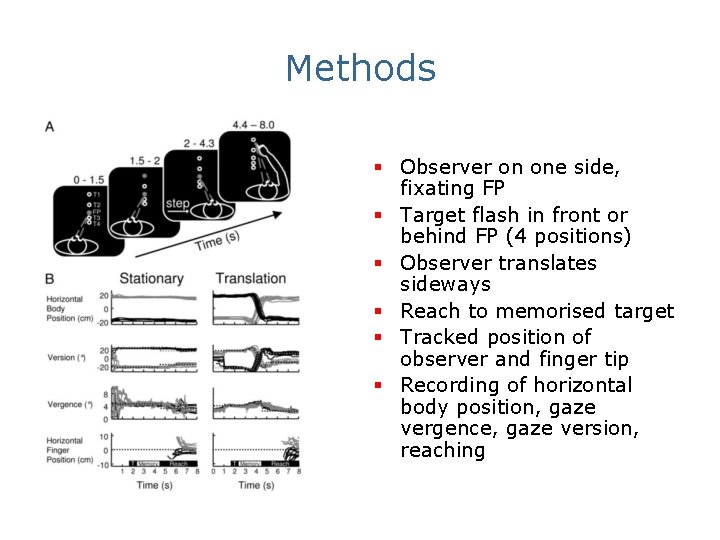

Methods
§ Observer on one side, fixating FP
§ Target flashed in front of or behind FP (4 positions)
§ Observer translates sideways
§ Reach to memorised target
§ Tracked position of observer and fingertip
§ Recording of horizontal body position, gaze vergence, gaze version, reaching

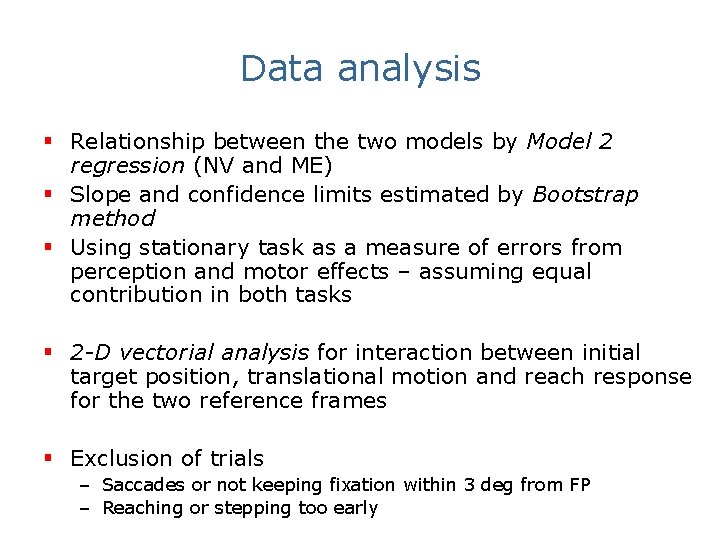

Data analysis
§ Relationship between the two models by Model II regression (NV and ME)
§ Slope and confidence limits estimated by bootstrap method
§ Stationary task used as a measure of errors from perceptual and motor effects
  – assuming equal contribution in both tasks
§ 2-D vectorial analysis of the interaction between initial target position, translational motion and reach response for the two reference frames
§ Exclusion of trials
  – Saccades, or not keeping fixation within 3 deg of FP
  – Reaching or stepping too early
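As a rough illustration of the regression step, the sketch below fits a Model II line and bootstraps its slope. The slides do not say which Model II variant was used; major-axis (orthogonal) regression and a percentile bootstrap are assumed here, and the data are synthetic. The function names are hypothetical.

```python
import numpy as np

def major_axis_slope(x, y):
    """Slope of the major-axis (orthogonal) regression line through (x, y).

    Assumes the line is not vertical (the principal axis has a non-zero
    x component).
    """
    covariance = np.cov(x, y)
    eigenvalues, eigenvectors = np.linalg.eigh(covariance)
    principal = eigenvectors[:, np.argmax(eigenvalues)]
    return principal[1] / principal[0]

def bootstrap_slope_ci(x, y, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence limits for the major-axis slope."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    slopes = np.empty(n_boot)
    for i in range(n_boot):
        # Resample (x, y) pairs with replacement.
        idx = rng.integers(0, len(x), size=len(x))
        slopes[i] = major_axis_slope(x[idx], y[idx])
    lower, upper = np.percentile(slopes, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return lower, upper

# Synthetic example: errors for two targets that are negatively related.
rng = np.random.default_rng(1)
errors_a = np.linspace(-1.0, 1.0, 40)
errors_b = -errors_a + rng.normal(0.0, 0.05, size=40)

slope = major_axis_slope(errors_a, errors_b)
ci_low, ci_high = bootstrap_slope_ci(errors_a, errors_b)
print(f"slope = {slope:.2f}, 95% CI = [{ci_low:.2f}, {ci_high:.2f}]")
```

Unlike ordinary least squares, a Model II fit treats both variables as measured with error, which suits a comparison of two sets of reach errors.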

Main findings
§ Reach responses showed parallax-sensitive updating errors
§ Errors reversed in lateral direction for targets presented at opposite depths from FP
§ Errors increased with larger depth from FP

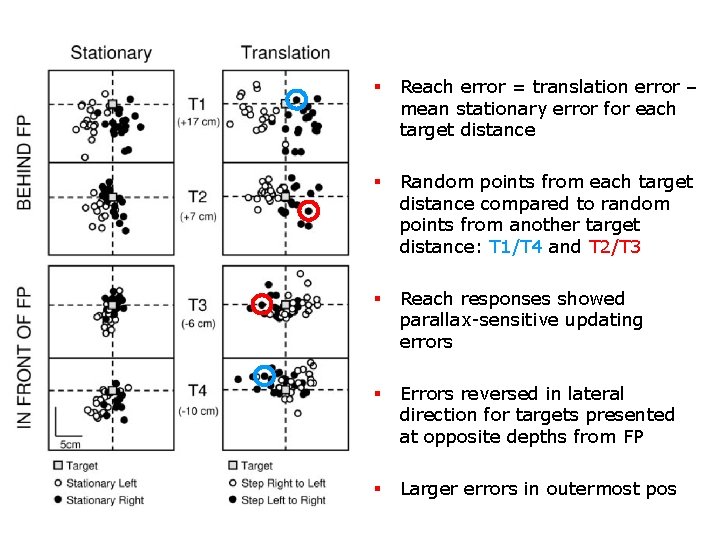

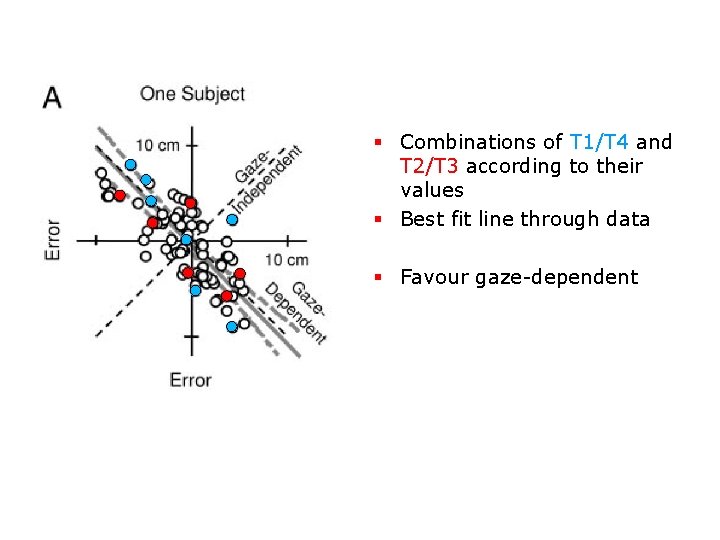

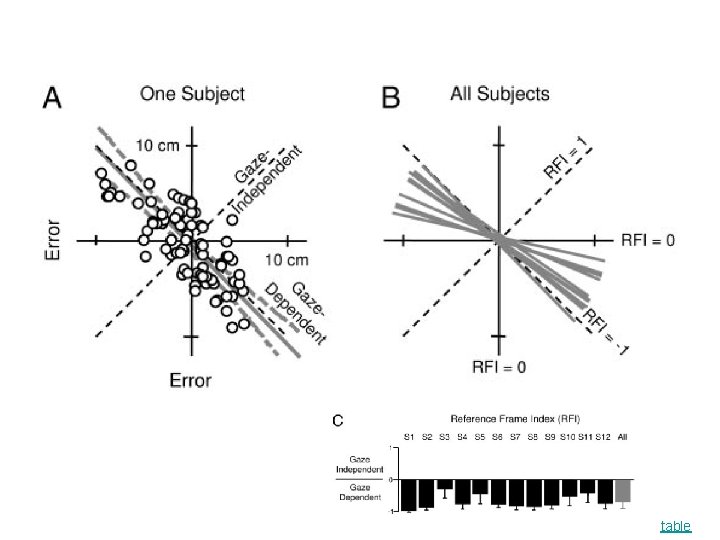

§ Reach error = translation error – mean stationary error for each target distance
§ Random points from each target distance compared with random points from another target distance: T1/T4 and T2/T3
§ Reach responses showed parallax-sensitive updating errors
§ Errors reversed in lateral direction for targets presented at opposite depths from FP
§ Larger errors in outermost positions
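The correction in the first bullet amounts to subtracting, per target, the mean error measured in the stationary task. A minimal sketch, with hypothetical target labels and made-up numbers:

```python
from statistics import mean

def corrected_reach_errors(translation_errors, stationary_errors):
    """Subtract each target's mean stationary error from its translation errors.

    Both arguments map a target label to a list of horizontal errors.
    """
    return {
        target: [error - mean(stationary_errors[target]) for error in errors]
        for target, errors in translation_errors.items()
    }

# Made-up horizontal errors (cm) for two hypothetical targets.
stationary = {"T1": [1.0, 3.0], "T4": [-1.0, -3.0]}
translation = {"T1": [5.0, 4.0], "T4": [-6.0, -4.0]}

corrected = corrected_reach_errors(translation, stationary)
print(corrected)
```

The remaining error is then attributed to updating, on the assumption stated in the data analysis that perceptual and motor effects contribute equally in both tasks.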

§ Combinations of T1/T4 and T2/T3 according to their values
§ Best-fit line through data
§ Favours the gaze-dependent model

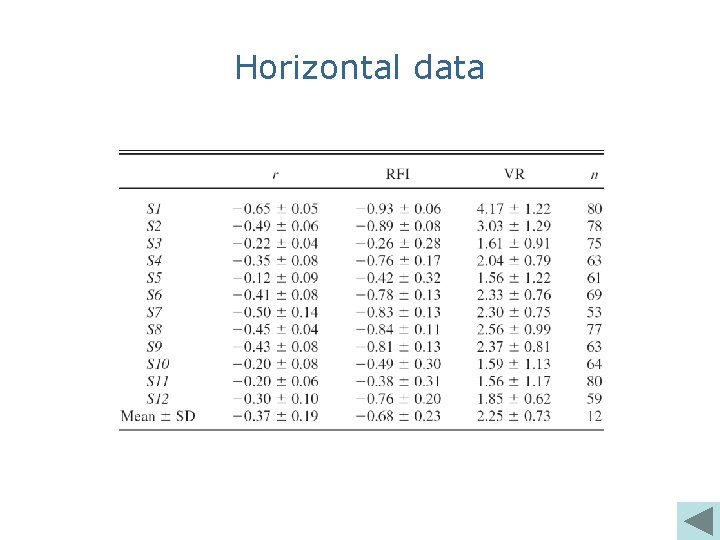


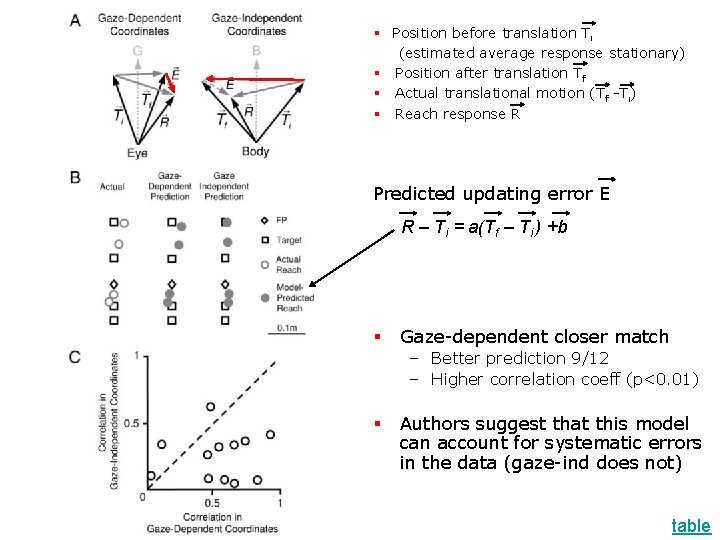

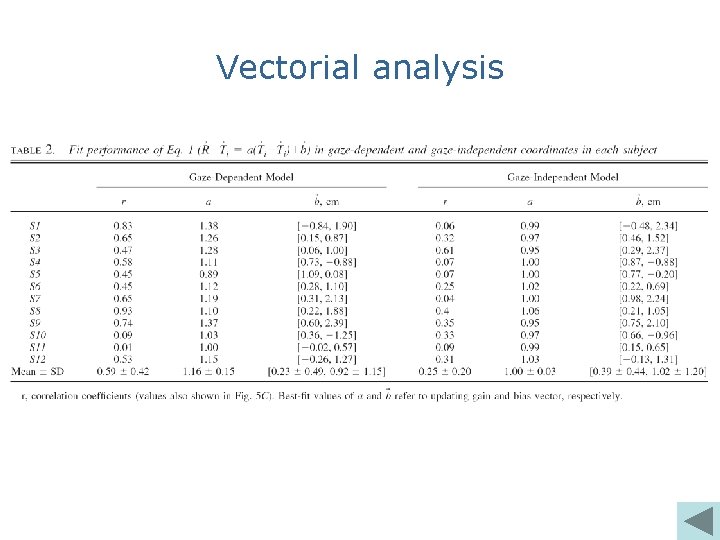

§ Position before translation Ti (estimated as the average response in the stationary task)
§ Position after translation Tf
§ Actual translational motion (Tf – Ti)
§ Reach response R
§ Predicted updating error E
  R – Ti = a(Tf – Ti) + b
§ Gaze-dependent model is the closer match
  – Better prediction in 9/12 observers
  – Higher correlation coefficient (p < 0.01)
§ Authors suggest that this model can account for systematic errors in the data (the gaze-independent model does not)
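The relation R – Ti = a(Tf – Ti) + b can be fitted by ordinary least squares. The sketch below assumes scalar (horizontal) values and uses synthetic data, so it shows only the mechanics of the fit; the slides do not specify how the authors fitted a and b or whether they treated the quantities as vectors.

```python
import numpy as np

# Synthetic horizontal positions (cm) within one reference frame.
initial_position = np.array([-4.0, -2.0, 2.0, 4.0])    # Ti
final_position = np.array([6.0, 3.0, -3.0, -6.0])      # Tf
actual_motion = final_position - initial_position      # Tf - Ti

# Fake reach responses generated with a = 0.8 and b = 0.5.
reach_response = initial_position + 0.8 * actual_motion + 0.5  # R

# Fit R - Ti = a * (Tf - Ti) + b.
a, b = np.polyfit(actual_motion, reach_response - initial_position, deg=1)
print(f"a = {a:.2f}, b = {b:.2f}")
```

In this reading, a = 1 and b = 0 would mean that the reach follows the target's full displacement in that frame, and a value of a below 1 would mean the displacement is underestimated.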

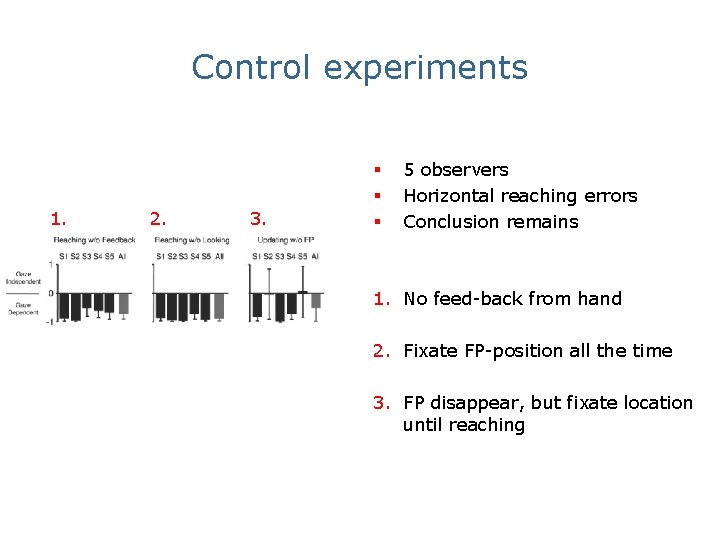

Control experiments
§ 5 observers
§ Horizontal reaching errors
§ Conclusion remains
1. No feedback from hand
2. Fixate FP position all the time
3. FP disappears, but fixate its location until reaching

Authors’ conclusions
§ Based on their quantitative geometrical analyses, the authors claim that translational updating errors are better described in gaze-centred than in gaze-independent coordinates
§ They conclude that spatial updating for translational motion operates in gaze-centred coordinates

Considerations from authors
§ 3/12 observers did not favour the gaze-dependent model
§ The authors admit that their models rest on very simple geometry, and that the brain might have a far more complex representation of visual space
§ They focus on the central representation of body translation as the underlying mechanism for errors, but there may be many other sources of error in updating

And now… US!
§ Are the models valid?
§ Are there fundamental problems in the experimental setup?
§ Is this a sensible way of processing these data?
§ Do we believe in the results?

Horizontal data

Vectorial analysis
