Garbage Collection Compiler Baojian Hua bjhuaustc edu cn

Garbage Collection Compiler Baojian Hua bjhua@ustc. edu. cn

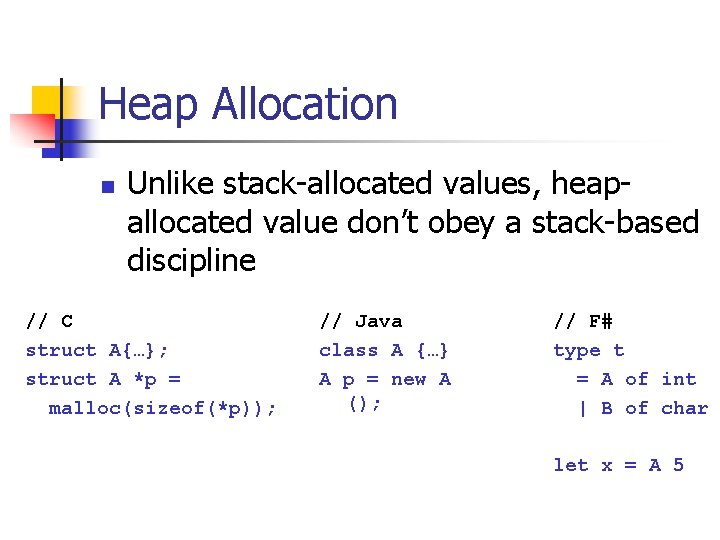

Heap Allocation n Unlike stack-allocated values, heapallocated value don’t obey a stack-based discipline // C struct A{…}; struct A *p = malloc(sizeof(*p)); // Java class A {…} A p = new A (); // F# type t = A of int | B of char let x = A 5

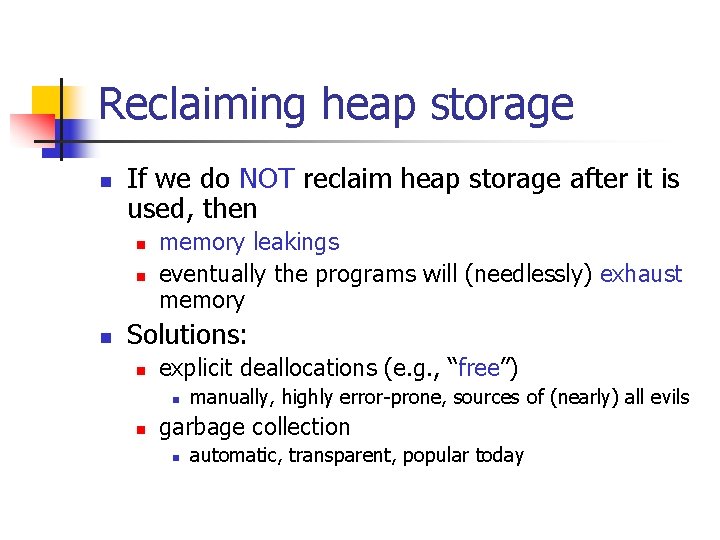

Reclaiming heap storage n If we do NOT reclaim heap storage after it is used, then n memory leakings eventually the programs will (needlessly) exhaust memory Solutions: n explicit deallocations (e. g. , “free”) n n manually, highly error-prone, sources of (nearly) all evils garbage collection n automatic, transparent, popular today

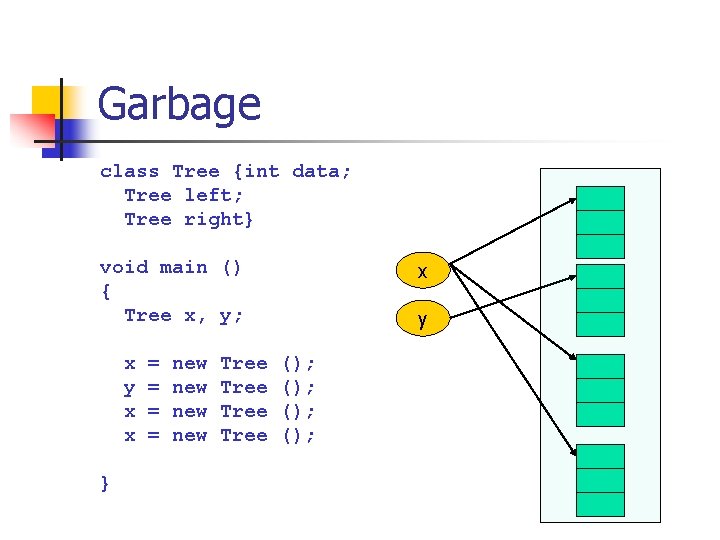

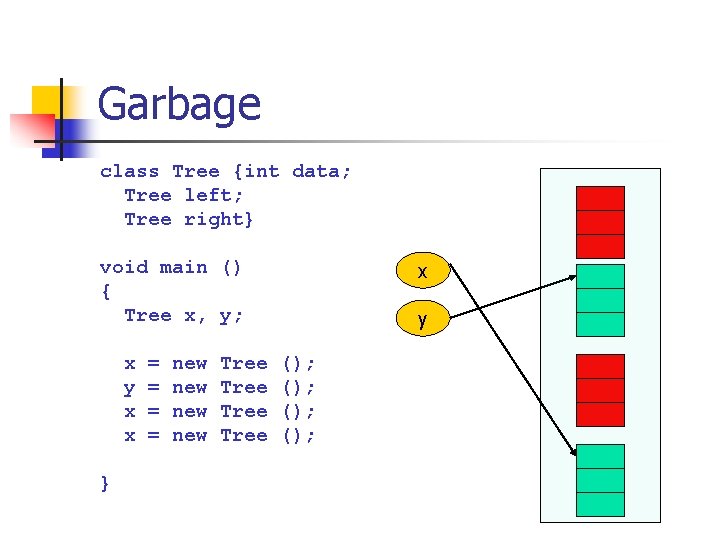

Garbage class Tree {int data; Tree left; Tree right} void main () { Tree x, y; x y x x } = = new new Tree x y (); ();

Garbage class Tree {int data; Tree left; Tree right} void main () { Tree x, y; x y x x } = = new new Tree x y (); ();

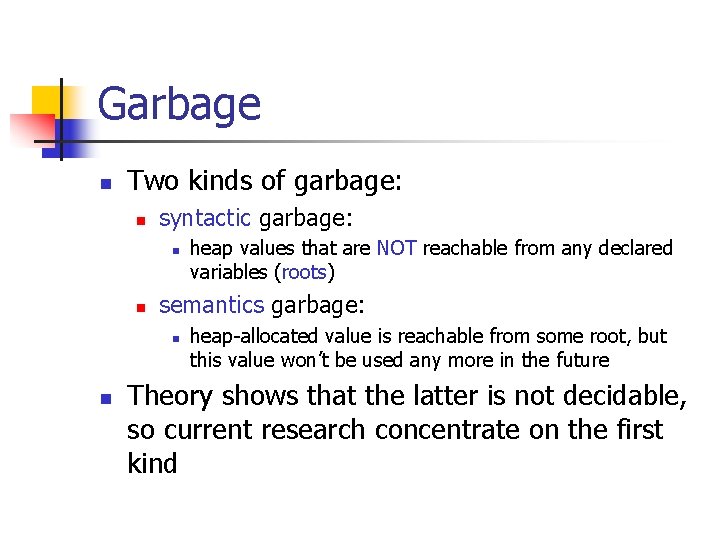

Garbage n Two kinds of garbage: n syntactic garbage: n n semantics garbage: n n heap values that are NOT reachable from any declared variables (roots) heap-allocated value is reachable from some root, but this value won’t be used any more in the future Theory shows that the latter is not decidable, so current research concentrate on the first kind

Reference Counting

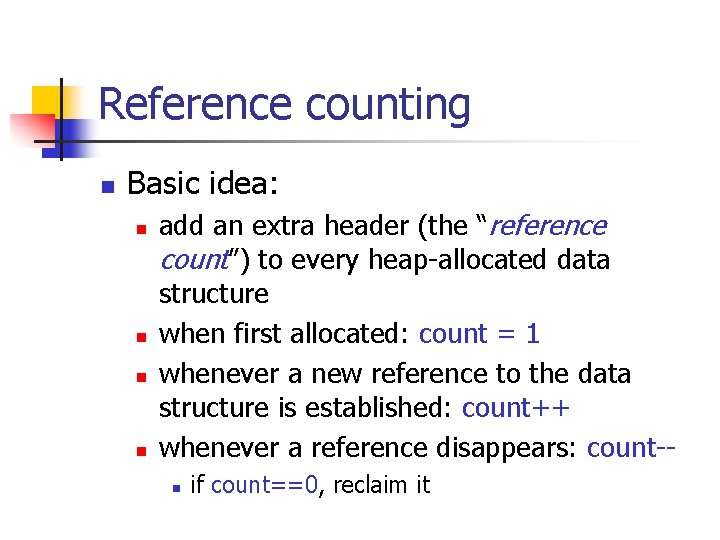

Reference counting n Basic idea: n n add an extra header (the “reference count”) to every heap-allocated data structure when first allocated: count = 1 whenever a new reference to the data structure is established: count++ whenever a reference disappears: count-n if count==0, reclaim it

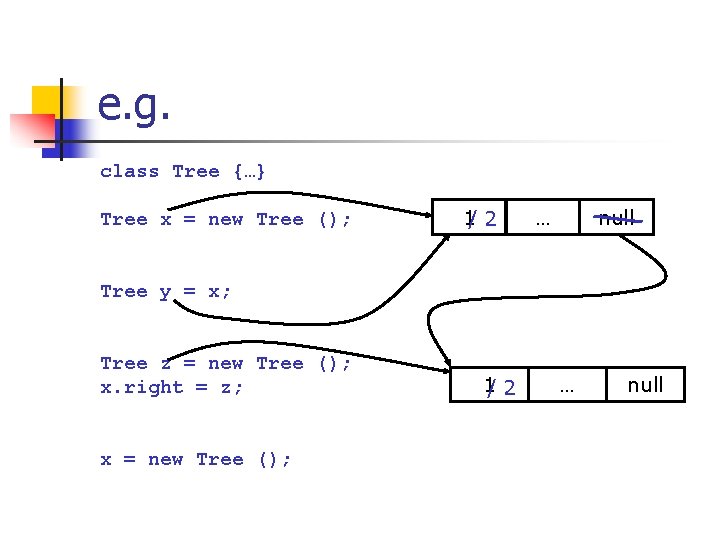

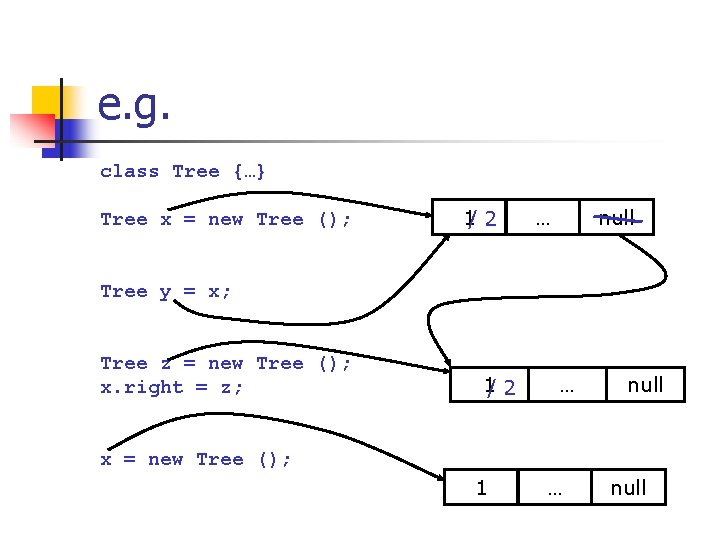

e. g. class Tree {…} Tree x = new Tree (); 1/ 2 … null Tree y = x; Tree z = new Tree (); x. right = z; x = new Tree (); 1/ 2 … null

e. g. class Tree {…} Tree x = new Tree (); 1/ 2 … null Tree y = x; Tree z = new Tree (); x. right = z; 1/ 2 … null x = new Tree (); 1 … null

Moral n RC is simple, and is used in many other areas: n n e. g. , hard disks or page tables, etc. But RC suffers from several serious problems: n space usage: n n inefficient: n n n one word per object? (depends on what? ) increment and decrement on every object assignment tightly-coupled with applications (mutator) ineffective n for circular data structures

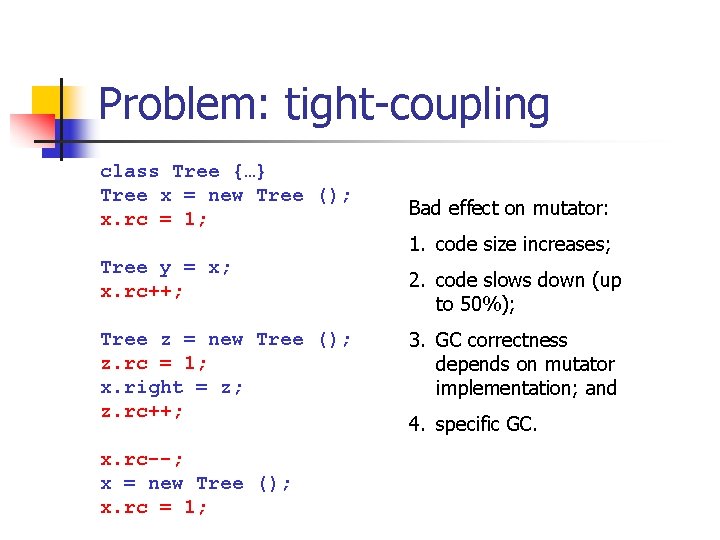

Problem: tight-coupling class Tree {…} Tree x = new Tree (); x. rc = 1; Bad effect on mutator: 1. code size increases; Tree y = x; x. rc++; Tree z = new Tree (); z. rc = 1; x. right = z; z. rc++; x. rc--; x = new Tree (); x. rc = 1; 2. code slows down (up to 50%); 3. GC correctness depends on mutator implementation; and 4. specific GC.

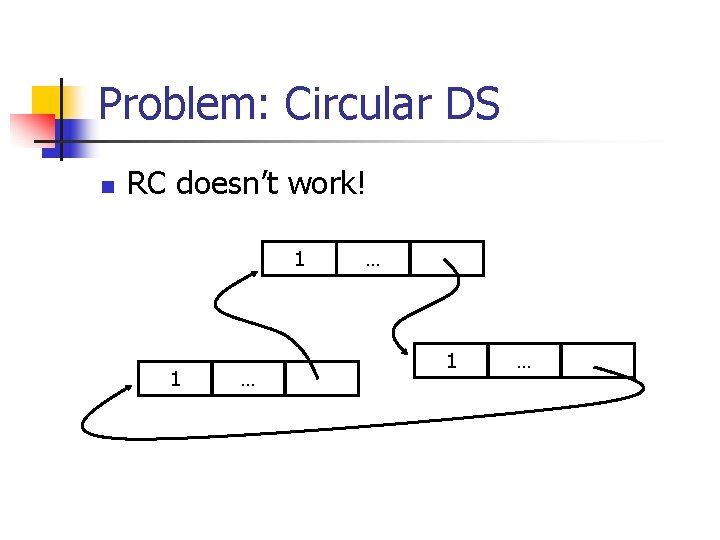

Problem: Circular DS n RC doesn’t work! 1 1 … … 1 …

Mark and Sweep

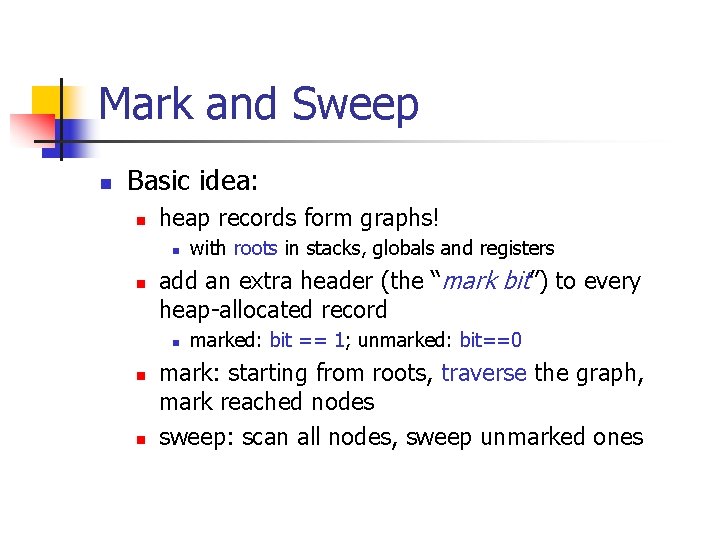

Mark and Sweep n Basic idea: n heap records form graphs! n n add an extra header (the “mark bit”) to every heap-allocated record n n n with roots in stacks, globals and registers marked: bit == 1; unmarked: bit==0 mark: starting from roots, traverse the graph, mark reached nodes sweep: scan all nodes, sweep unmarked ones

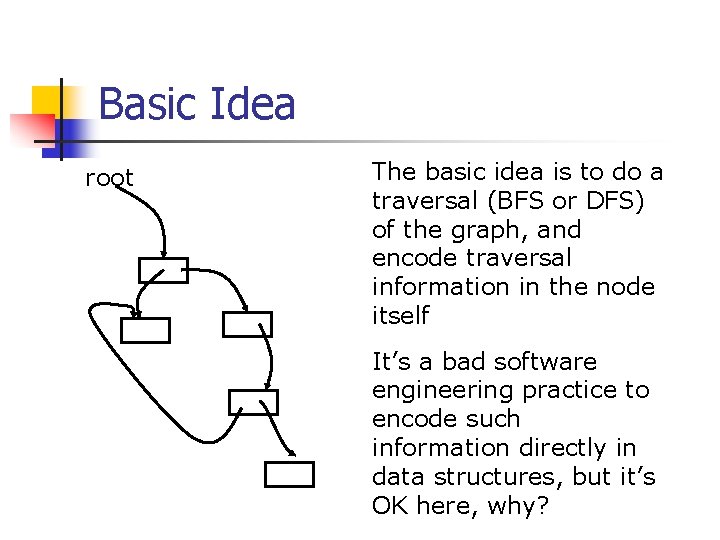

Basic Idea root The basic idea is to do a traversal (BFS or DFS) of the graph, and encode traversal information in the node itself It’s a bad software engineering practice to encode such information directly in data structures, but it’s OK here, why?

Finding roots? n When a collection starts, which stack slots and registers contain heappointers? n n Not easy Essentially, finding roots involves deciding whether or not a machine word is a pointer n need help from compilers (if any)

Many approaches n Mask every word value: n e. g. , a word’s least significant bit == 0 means a pointer, ==1 means an integer n n Bit-vector masks: n n n so must change the representation of integers compiler generates a bit-string at every GC site, for registers and stack slots you do this in lab 4 Allocate roots (live across function calls) in separate stacks, but not registers n even simpler

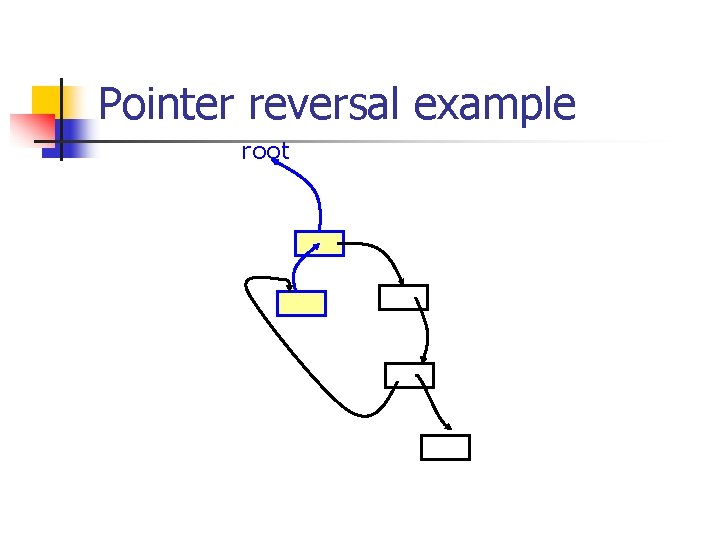

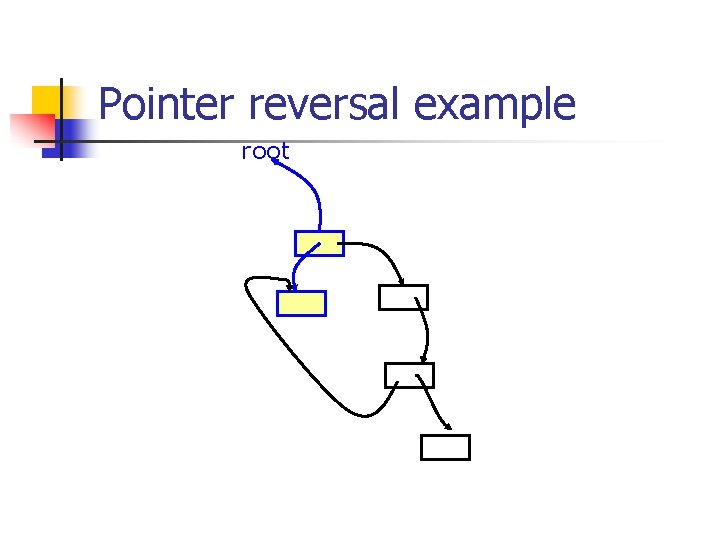

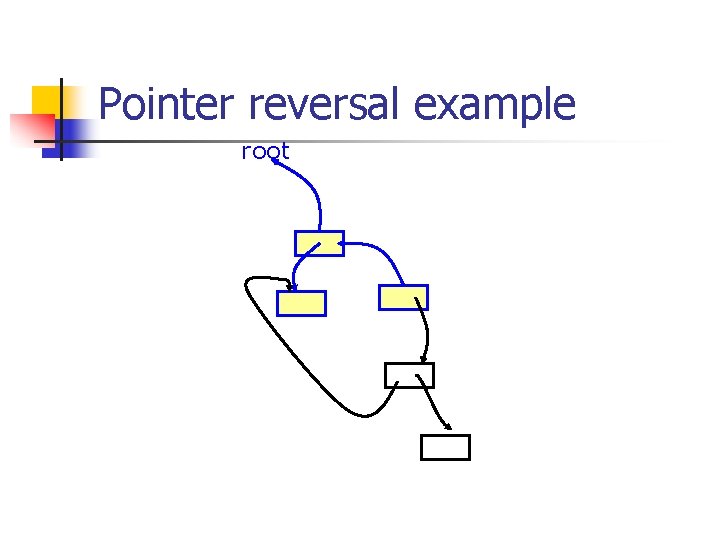

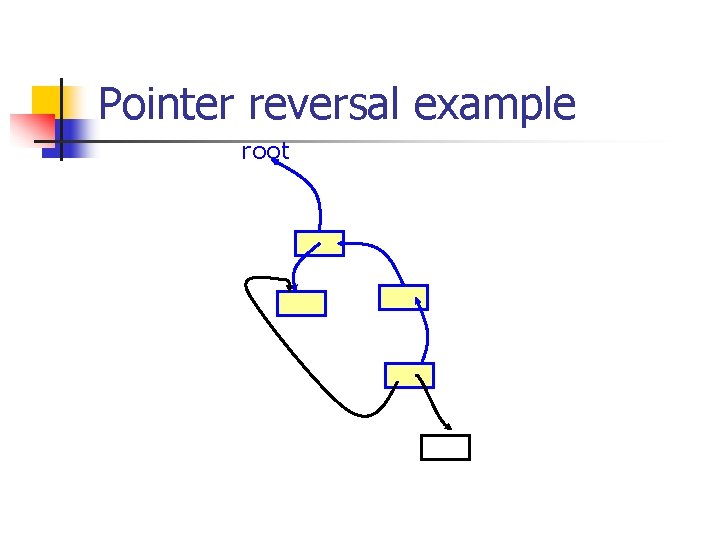

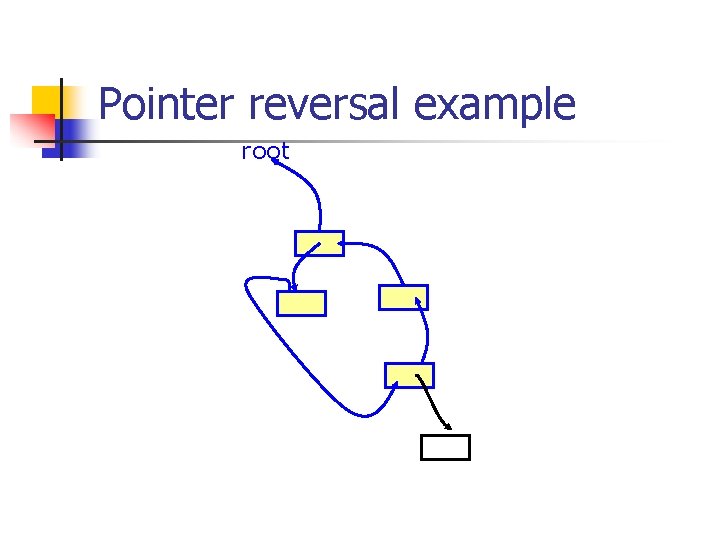

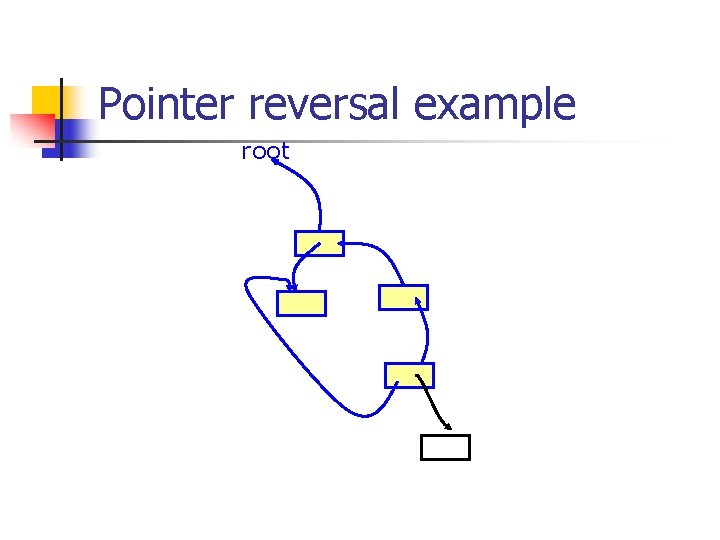

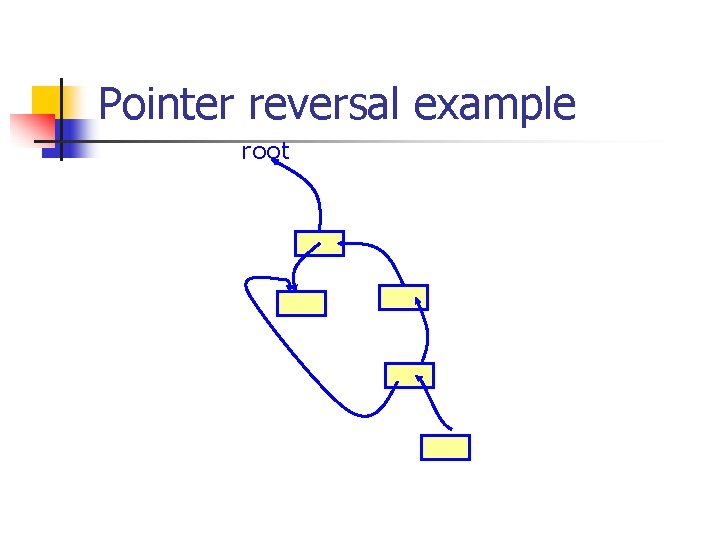

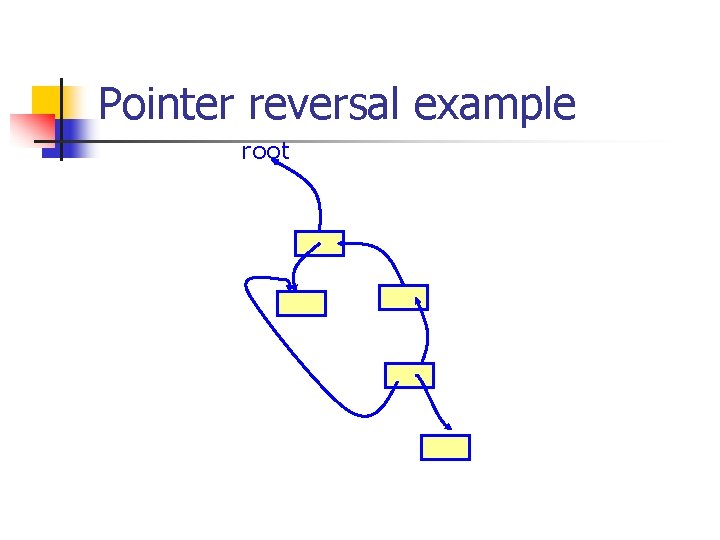

Traversal n How to traversal the pointer graph? n n depth-first or breadth-first search But must do with care: n the traversal itself takes space n n e. g. , the DFS stack is of size O(N) some strategies: n e. g. , explicit stack or pointer reversal, read Tiger book

![Explicit Stack // x is an unmarked root stack = []; DFS (x) push(stack, Explicit Stack // x is an unmarked root stack = []; DFS (x) push(stack,](http://slidetodoc.com/presentation_image_h/1e7297ed8a6bf403c969b10df0573cdd/image-20.jpg)

Explicit Stack // x is an unmarked root stack = []; DFS (x) push(stack, x); while (stack not empty) y = pop (stack); mark (y); for(pointer field y. f in y and y. f not marked) push (stack, y. f) // Key observation: explicit stack is tight than // a call-stack!

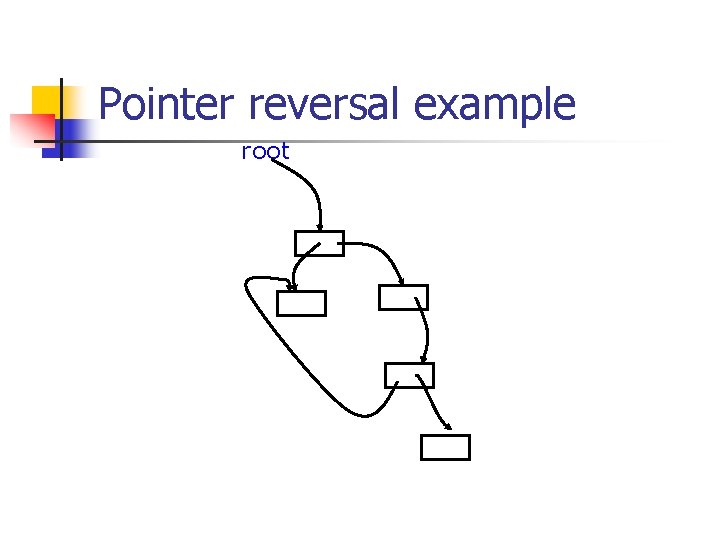

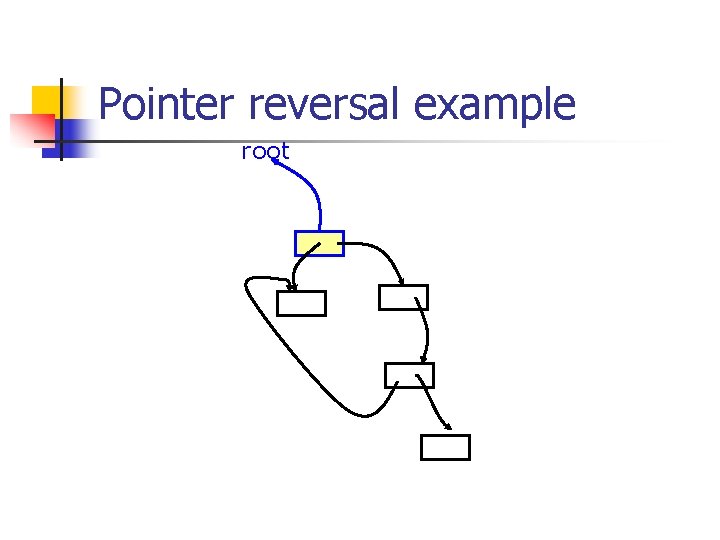

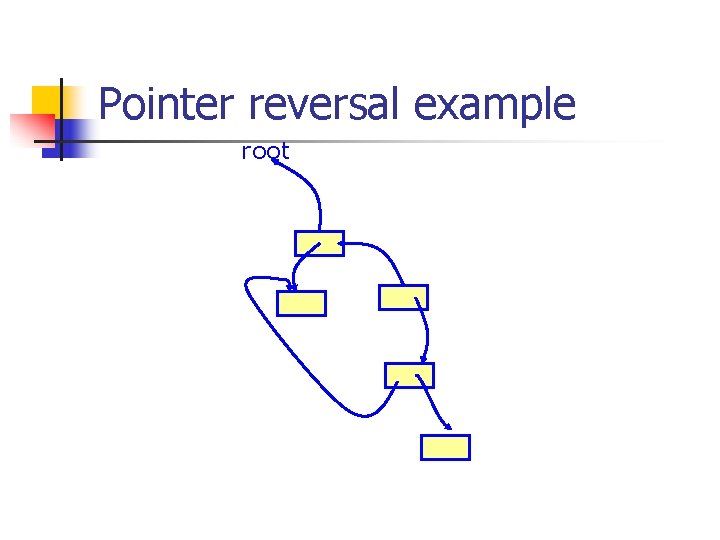

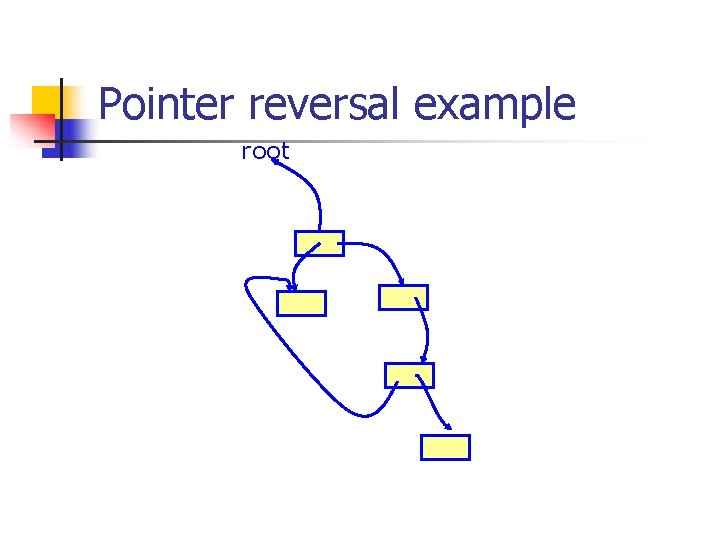

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Pointer reversal example root

Copying Collection

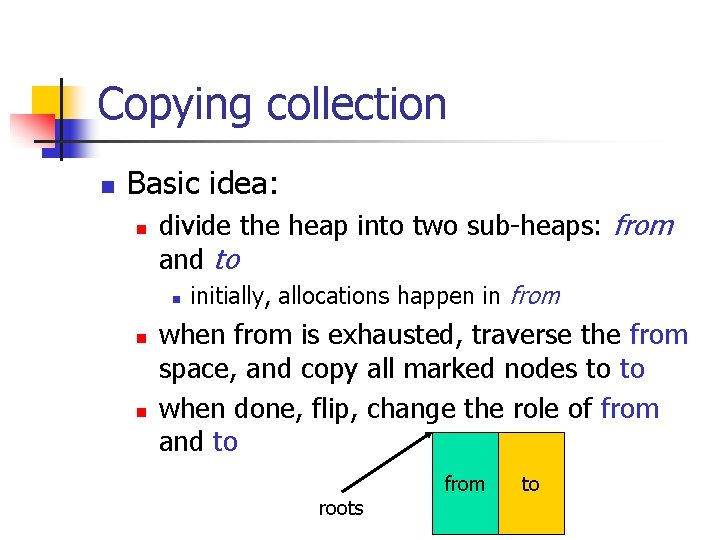

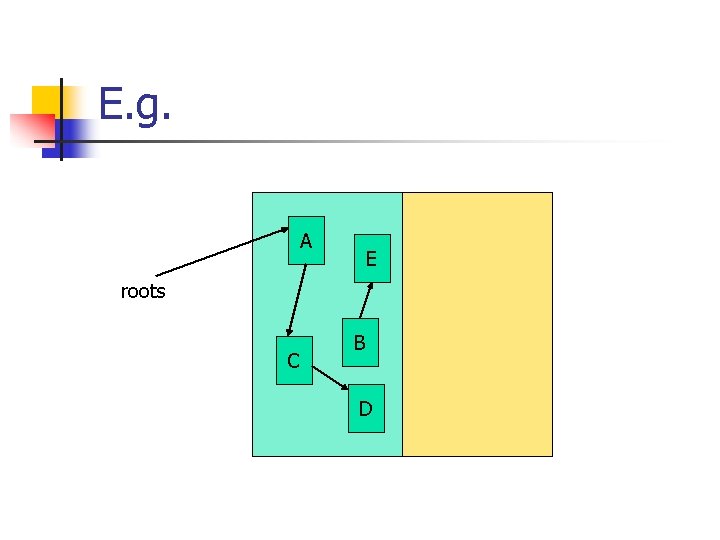

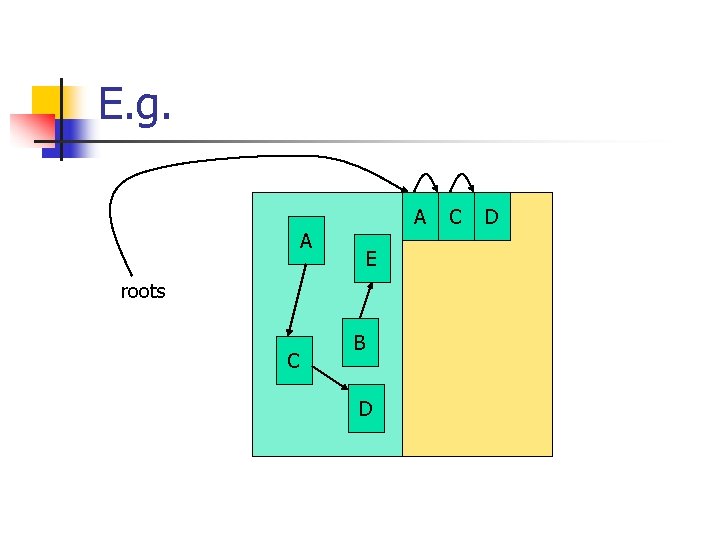

Copying collection n Basic idea: n divide the heap into two sub-heaps: from and to n n n initially, allocations happen in from when from is exhausted, traverse the from space, and copy all marked nodes to to when done, flip, change the role of from and to from roots to

E. g. A E roots C B D

E. g. A A E roots C B D C D

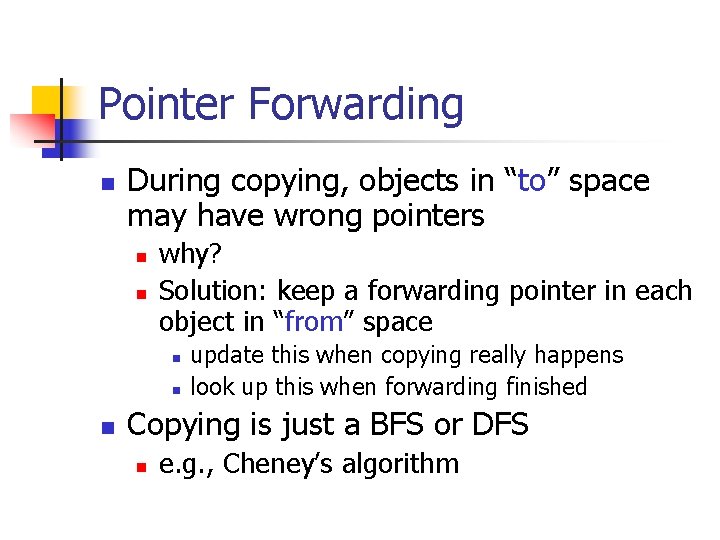

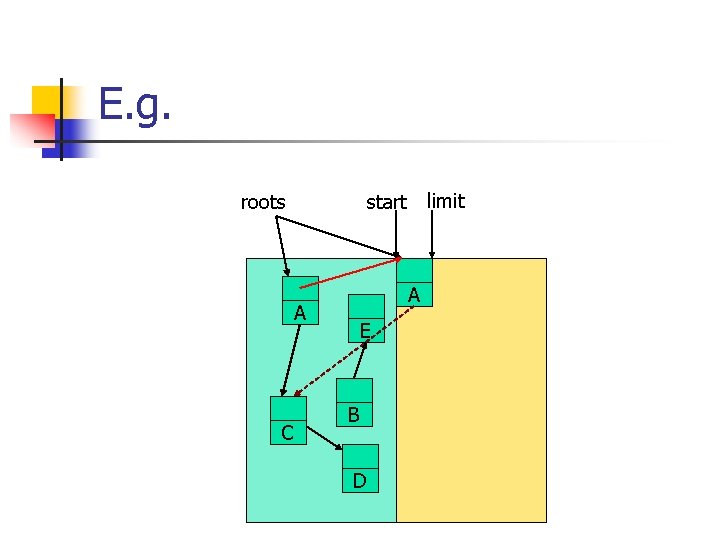

Pointer Forwarding n During copying, objects in “to” space may have wrong pointers n n why? Solution: keep a forwarding pointer in each object in “from” space n n n update this when copying really happens look up this when forwarding finished Copying is just a BFS or DFS n e. g. , Cheney’s algorithm

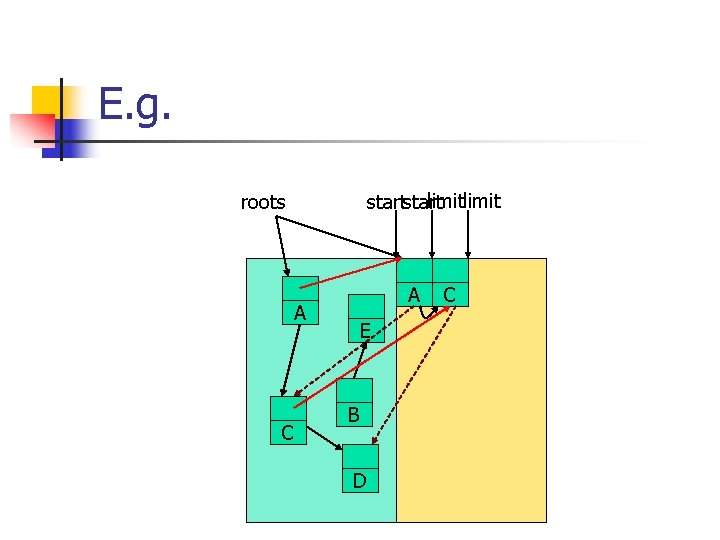

E. g. roots A A C limit start E B D

E. g. limit start roots A A C E B D C

Moral n Simple data structures: n n n Fast allocation n n compaction comes free after copying! Space overhead n n allocation == a pointer bumping No fragmentation n n linear allocation only forwarding pointers in headers half space wasted! Slow, if ratio of live objects is high n why?

Generational Collection

Generational GC n Two observations by Hewitt in 1987: n n n most heap-allocated objects die younger objects usually point to older ones, but not vice verse So put objects in several sub-heaps according to their generations n younger generations are collected more frequently

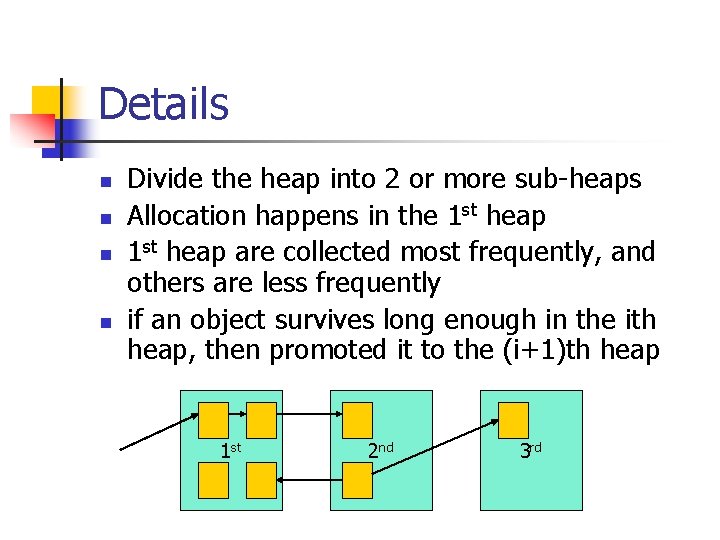

Details n n Divide the heap into 2 or more sub-heaps Allocation happens in the 1 st heap are collected most frequently, and others are less frequently if an object survives long enough in the ith heap, then promoted it to the (i+1)th heap 1 st 2 nd 3 rd

Number of Generations n Design choice in different GCs n n n some has a up-to 7 generations some has a dynamic changing numbers Most have 2 generations: n n n the younger one called nursery the older one called tenured nursery is relatively small (<1 M) n the GC on it is fast!

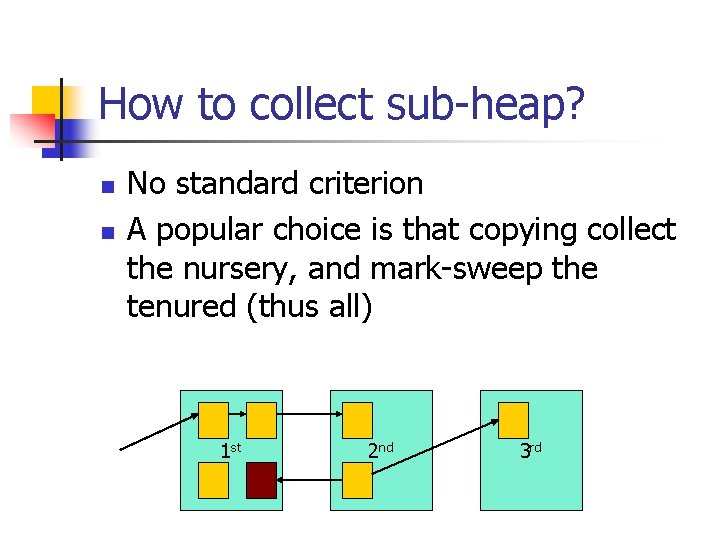

How to collect sub-heap? n n No standard criterion A popular choice is that copying collect the nursery, and mark-sweep the tenured (thus all) 1 st 2 nd 3 rd

Two issues n How to find roots? n normal roots + pointers from older generation to younger ones n n must detect these pointers (conservatively) When and how to promote objects? n after objects live long enough, they should be promoted from nursery to tenured

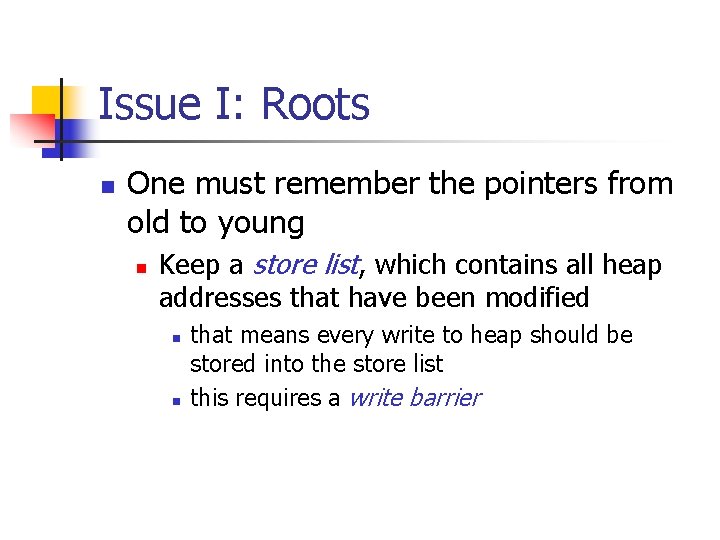

Issue I: Roots n One must remember the pointers from old to young n Keep a store list, which contains all heap addresses that have been modified n n that means every write to heap should be stored into the store list this requires a write barrier

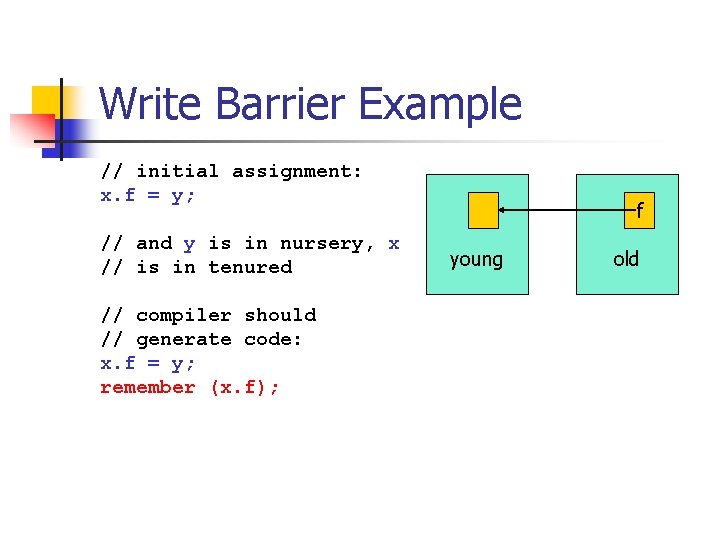

Write Barrier Example // initial assignment: x. f = y; // and y is in nursery, x // is in tenured // compiler should // generate code: x. f = y; remember (x. f); f young old

How to implement write barrier? n Several widely-used techniques: n Remembered list n n Remembered set n n mark each object Card marking n n n store addresses in a list mark cards, not objects coarse version of remembered set Page marking n with OS support

Issue II: Promotion n Must record in each object the number of collections it has survived n n promote it, when N>threshold But it’s hard to tune threshold n n must leave enough space in nursery must keep few garbage in tenured n n whole collection is slow! A typical choice is 80%, but should be dynamic

Moral n Fast and short-pause n n n for the nursery space is small Locality is good Widely used (most popular one)

Incremental GC

Concurrent GC n Basic idea: n further reduce the GC pause, by allowing the mutator (the user program) to continue its work, before the collector finishes n n n the collector may stop (incremental) or work concurrently so the collection time is amortized

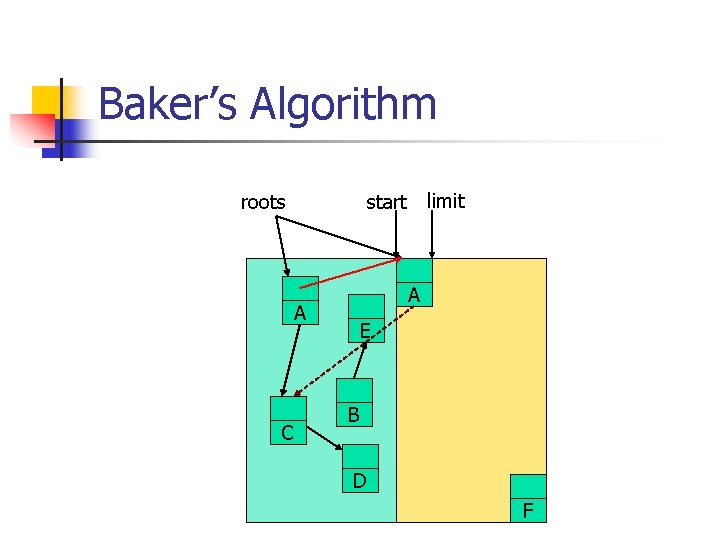

Baker’s Algorithm roots A A C limit start E B D F

Read barrier n Baker’s algorithm requires extra work before every read of a heap pointer n n so one maintain the invariant that mutator always points into “to” space This may be more expensive than write barrier n n read is more frequent than write? and the overall execution time of mutator decreases when allocation is high

Conservative GC

Conservative GC n GC C/C++ is more difficult n n n language designed with no GC in head Every value is an integer (pointer) Basic idea: n n be more “conservative” in guessing roots and pointers, and don’t try to do an accurate trace of the pointer graph maybe more leakings

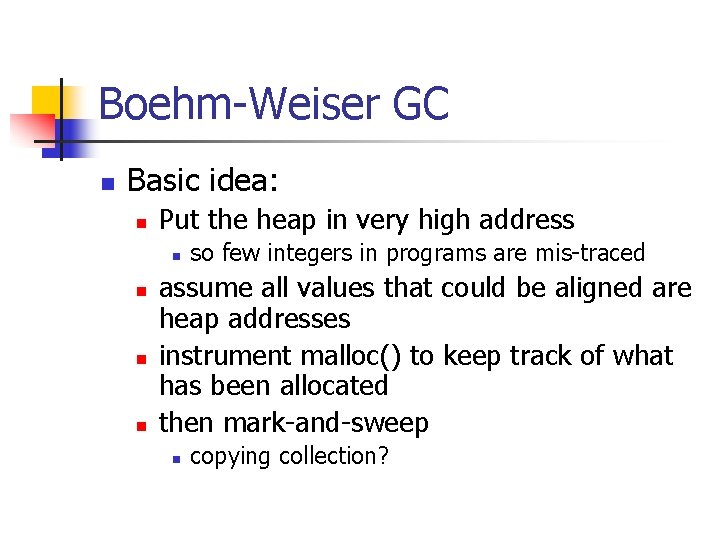

Boehm-Weiser GC n Basic idea: n Put the heap in very high address n n so few integers in programs are mis-traced assume all values that could be aligned are heap addresses instrument malloc() to keep track of what has been allocated then mark-and-sweep n copying collection?

- Slides: 58