Games, Times, and Probabilities: Value Iteration in Verification and Control

Krishnendu Chatterjee, Tom Henzinger

Graph Models of Systems: vertices = states; edges = transitions; paths = behaviors

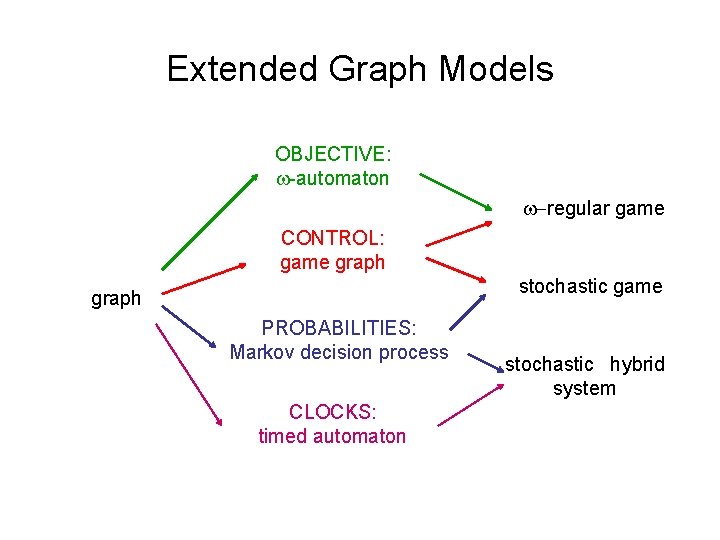

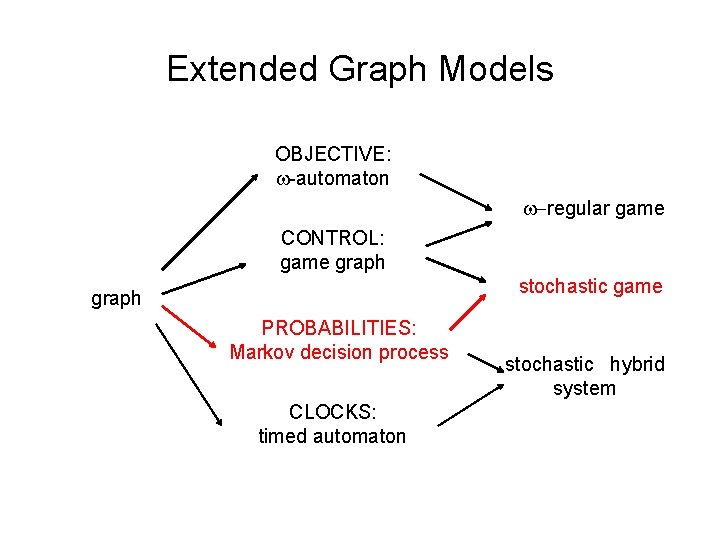

Extended Graph Models. OBJECTIVE: $\omega$-automaton, $\omega$-regular game. CONTROL: game graph, stochastic game graph. PROBABILITIES: Markov decision process. CLOCKS: timed automaton, stochastic hybrid system.

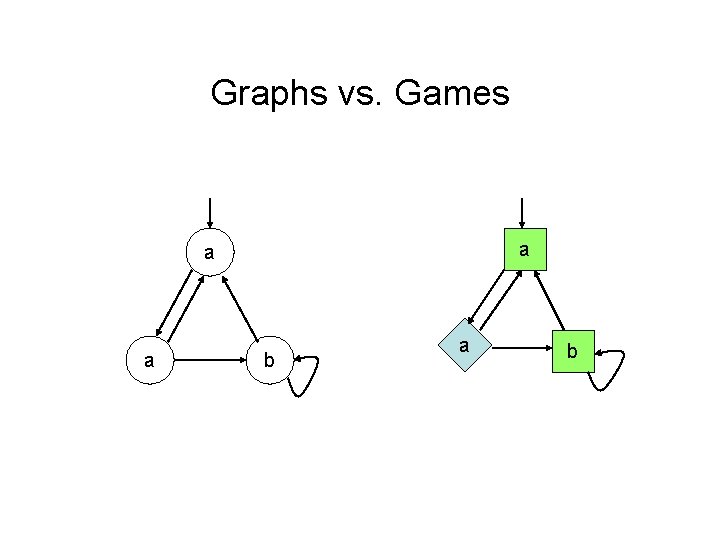

Graphs vs. Games

Games model Open Systems. Two players: environment / controller / input vs. system / plant / output. Multiple players: processes / components / agents. Stochastic players: nature / randomized algorithms.

Example

P1: init x := 0; loop choice | x := x+1 mod 2 | x := 0 end choice end loop. Objective $\varphi_1$: $(x = y)$

P2: init y := 0; loop choice | y := x+1 mod 2 end choice end loop. Objective $\varphi_2$: $(y = 0)$

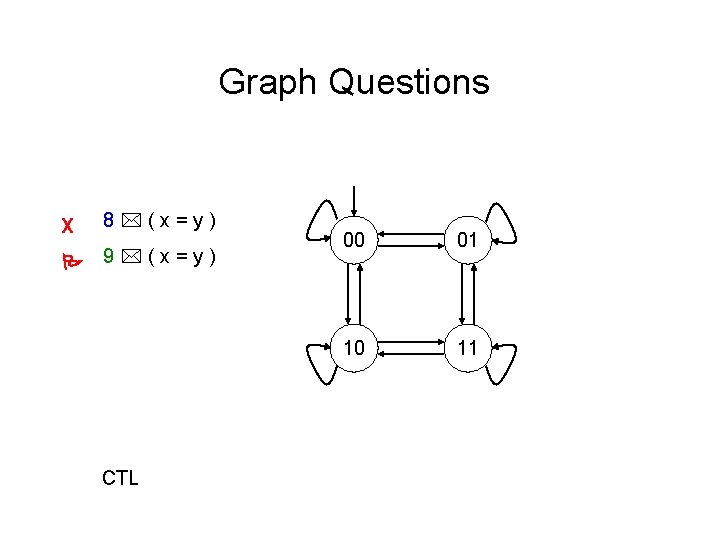

Graph Questions (CTL): $\forall\,(x = y)$ and $\exists\,(x = y)$, asked on the state graph with states 00, 01, 10, 11.

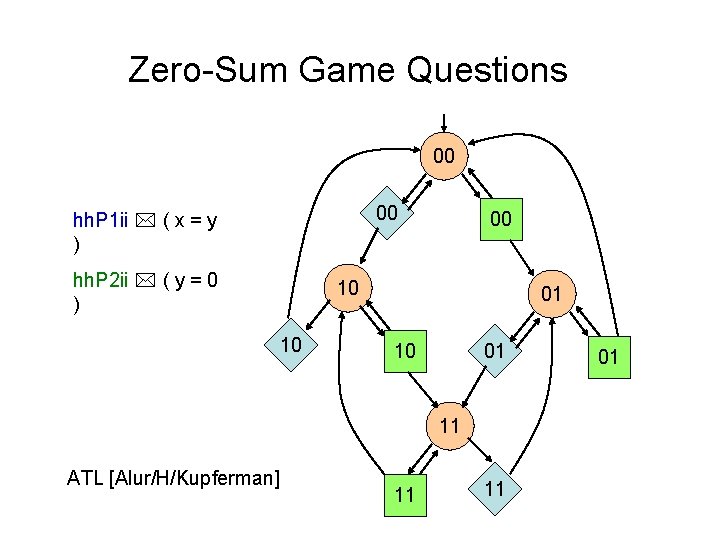

Zero-Sum Game Questions (ATL [Alur/H/Kupferman]): $\langle\langle P_1 \rangle\rangle\,(x = y)$ and $\langle\langle P_2 \rangle\rangle\,(y = 0)$


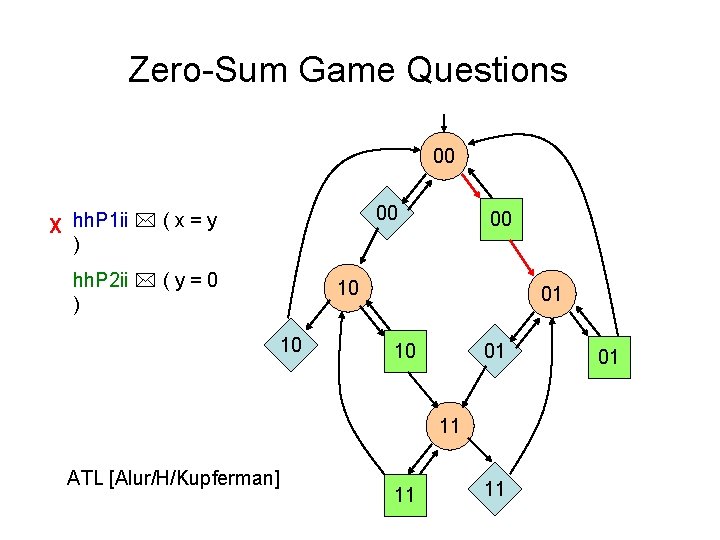

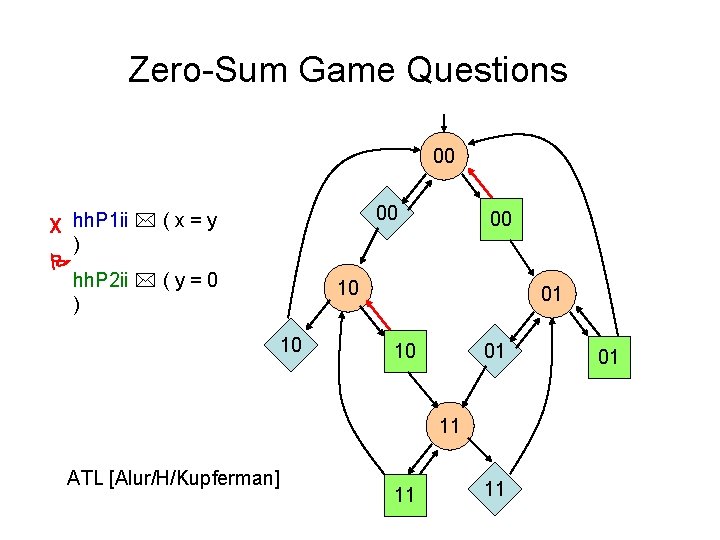


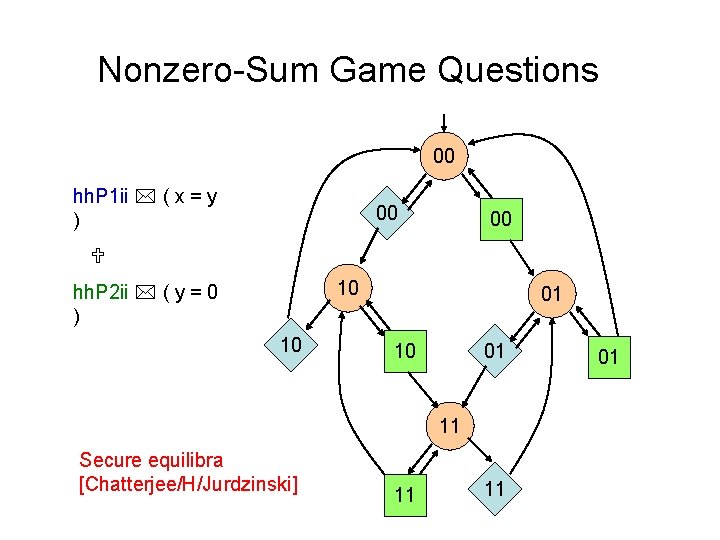

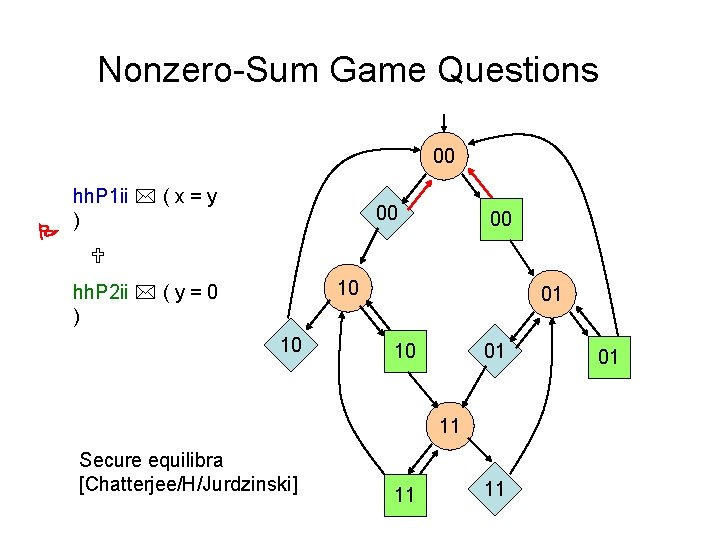

Nonzero-Sum Game Questions (secure equilibria [Chatterjee/H/Jurdzinski]): $\langle\langle P_1 \rangle\rangle\,(x = y)$ together with $\langle\langle P_2 \rangle\rangle\,(y = 0)$


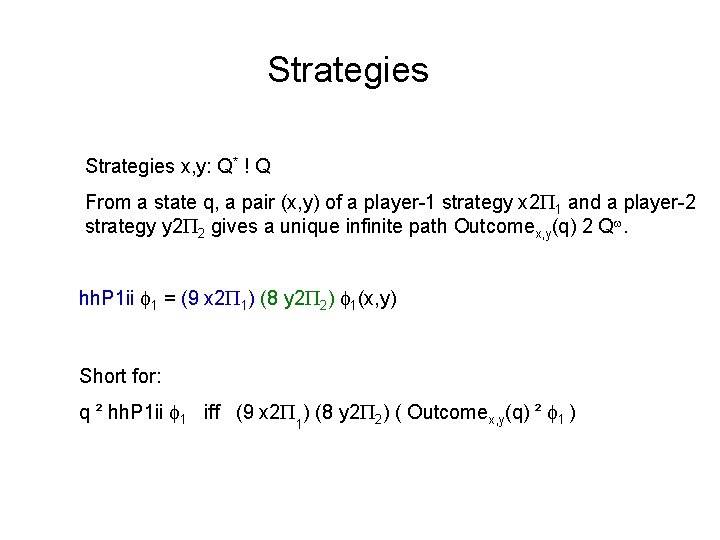

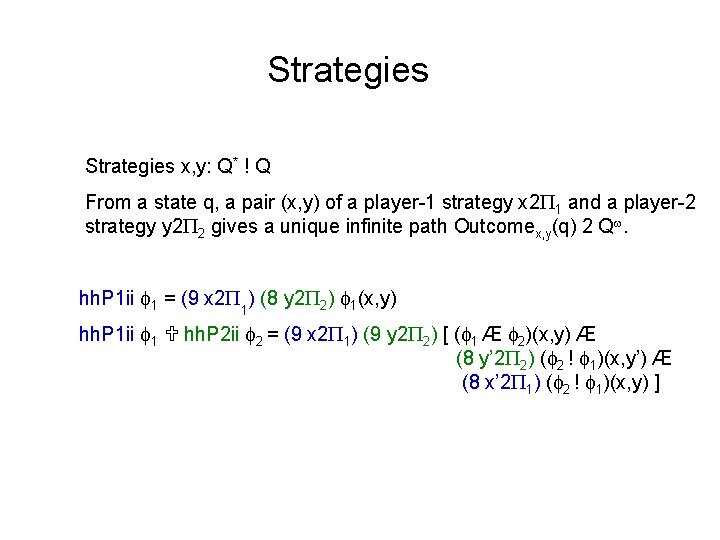

Strategies $x, y: Q^* \to Q$. From a state $q$, a pair $(x, y)$ of a player-1 strategy $x \in \Pi_1$ and a player-2 strategy $y \in \Pi_2$ gives a unique infinite path $\mathrm{Outcome}_{x,y}(q) \in Q^\omega$.

Zero-sum: $\langle\langle P_1\rangle\rangle\varphi_1 = (\exists x \in \Pi_1)(\forall y \in \Pi_2)\ \varphi_1(x, y)$. This is short for: $q \models \langle\langle P_1\rangle\rangle\varphi_1$ iff $(\exists x \in \Pi_1)(\forall y \in \Pi_2)\ (\mathrm{Outcome}_{x,y}(q) \models \varphi_1)$.

Secure equilibrium: $\langle\langle P_1\rangle\rangle\varphi_1\ \langle\langle P_2\rangle\rangle\varphi_2 = (\exists x \in \Pi_1)(\exists y \in \Pi_2)\ [\,(\varphi_1 \wedge \varphi_2)(x, y) \wedge (\forall y' \in \Pi_2)\,(\varphi_2 \to \varphi_1)(x, y') \wedge (\forall x' \in \Pi_1)\,(\varphi_1 \to \varphi_2)(x', y)\,]$

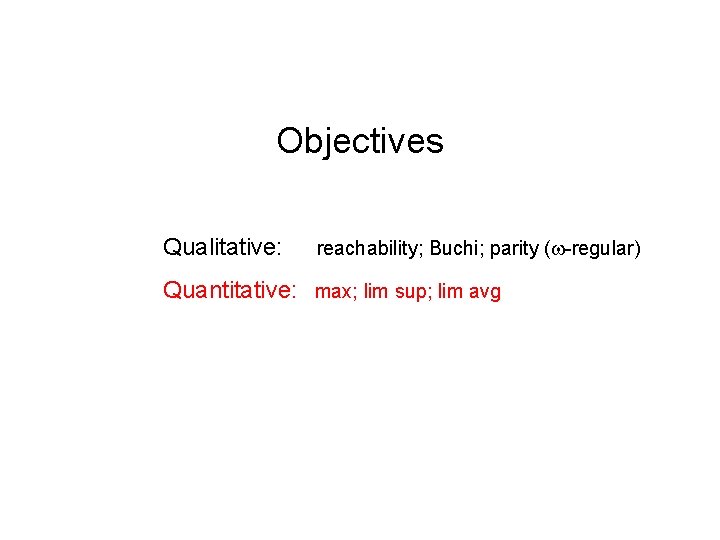

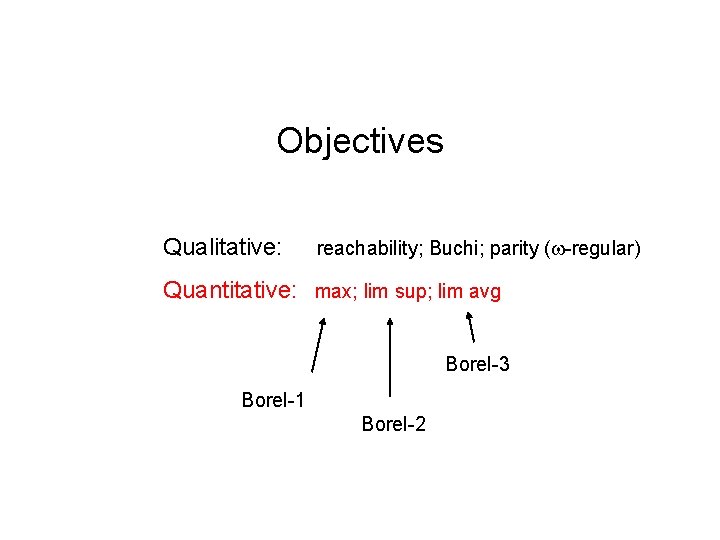

Objectives $\varphi_1$ and $\varphi_2$. Qualitative: reachability; Buechi; parity ($\omega$-regular). Quantitative: max; lim sup; lim avg.

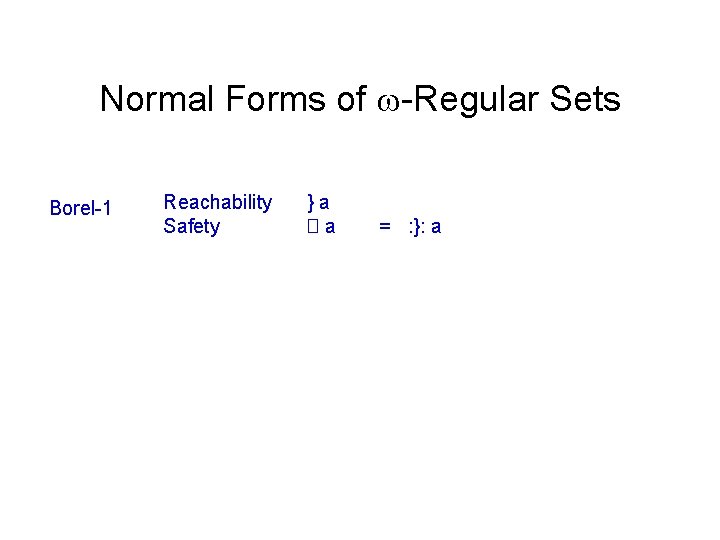

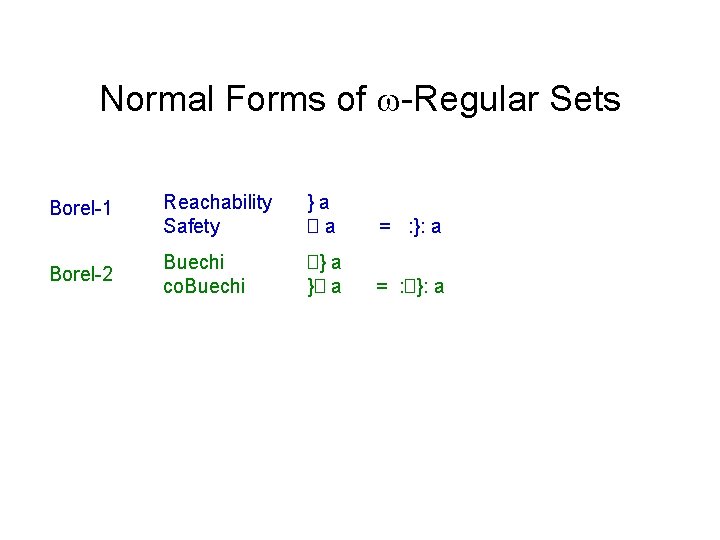

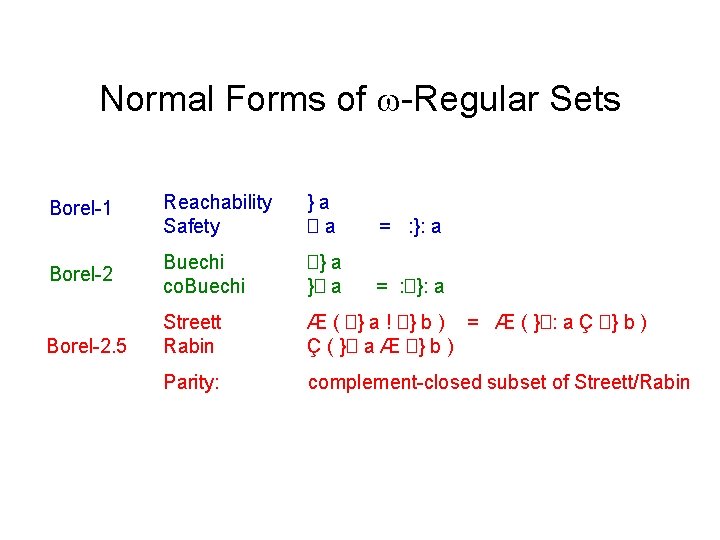

Normal Forms of $\omega$-Regular Sets

Borel-1. Reachability: $\Diamond a$. Safety: $\Box a = \neg\Diamond\neg a$.

Borel-2. Buechi: $\Box\Diamond a$. co-Buechi: $\Diamond\Box a = \neg\Box\Diamond\neg a$.

Borel-2.5. Streett: $\bigwedge (\Box\Diamond a \to \Box\Diamond b) = \bigwedge (\Diamond\Box\neg a \vee \Box\Diamond b)$. Rabin: $\bigvee (\Diamond\Box a \wedge \Box\Diamond b)$. Parity: complement-closed subset of Streett/Rabin.

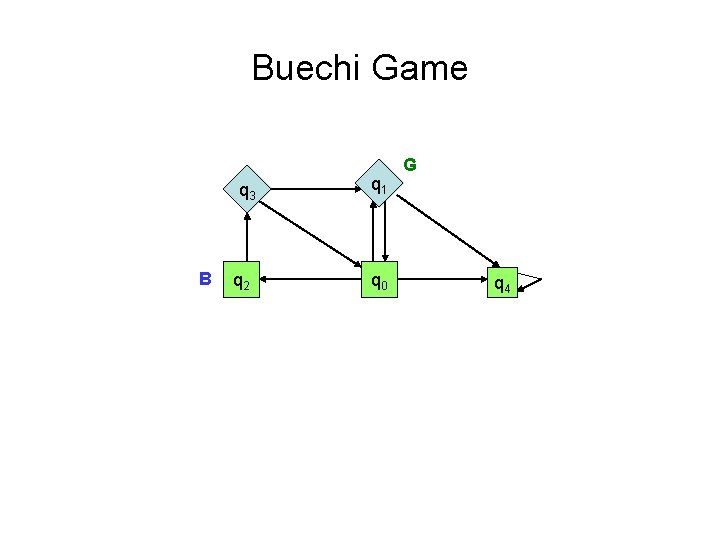


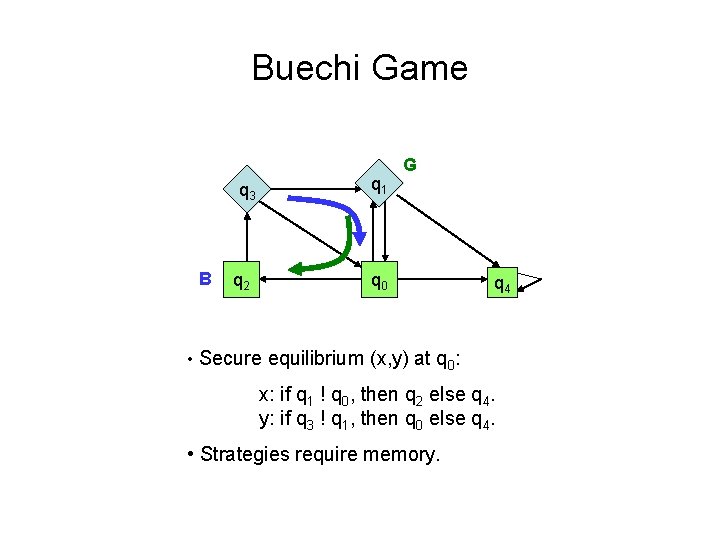

Buechi Game (figure: states q0, q1, q2, q3, q4 with labels B and G)

• Secure equilibrium (x, y) at q0: x: if q1 → q0, then q2 else q4. y: if q3 → q1, then q0 else q4.

• Strategies require memory.

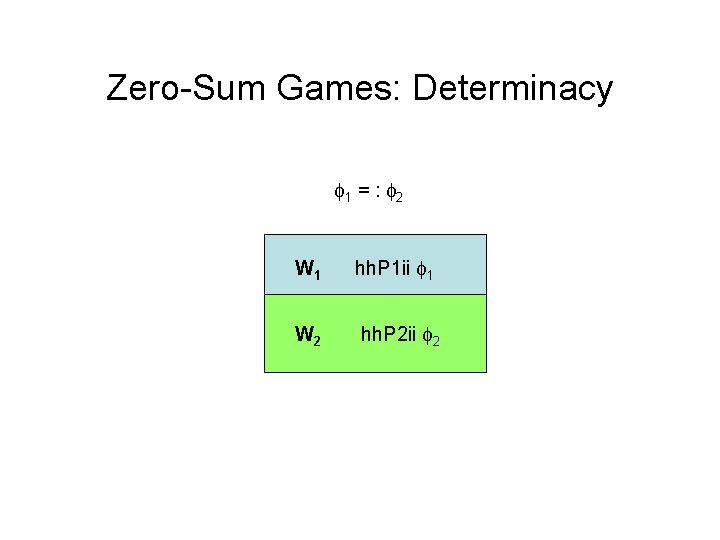

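Zero-Sum Games: Determinacy. $\varphi_1 = \neg\varphi_2$. Winning regions: $W_1$: $\langle\langle P_1\rangle\rangle\varphi_1$; $W_2$: $\langle\langle P_2\rangle\rangle\varphi_2$.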

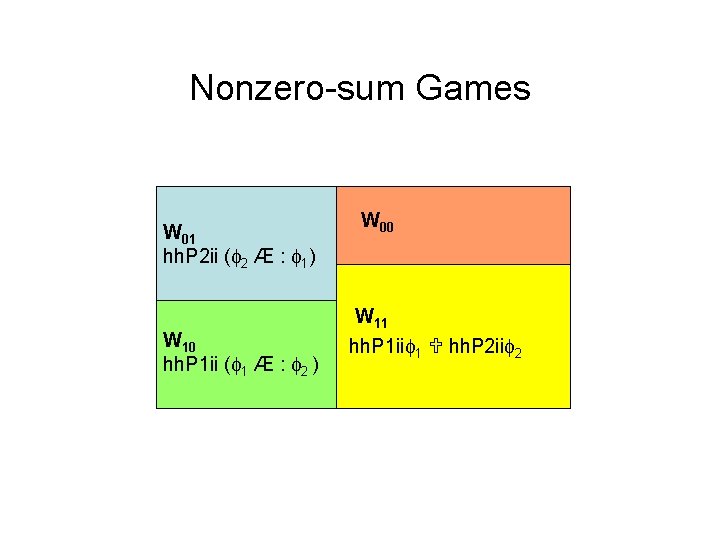

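Nonzero-sum Games. Regions: $W_{01}$: $\langle\langle P_2\rangle\rangle(\varphi_2 \wedge \neg\varphi_1)$; $W_{10}$: $\langle\langle P_1\rangle\rangle(\varphi_1 \wedge \neg\varphi_2)$; $W_{00}$; $W_{11}$: $\langle\langle P_1\rangle\rangle\varphi_1$ together with $\langle\langle P_2\rangle\rangle\varphi_2$.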

Objectives. Qualitative: reachability; Buechi; parity ($\omega$-regular). Quantitative: max; lim sup; lim avg. Borel levels marked on the slide: Borel-1, Borel-2, Borel-3.

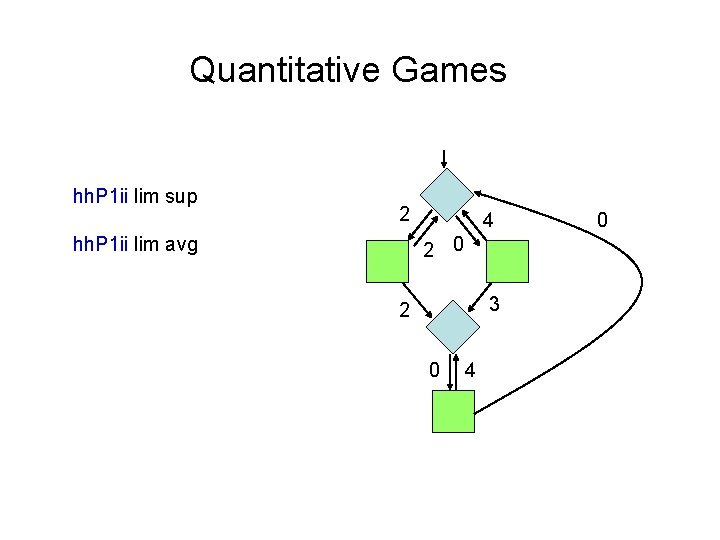

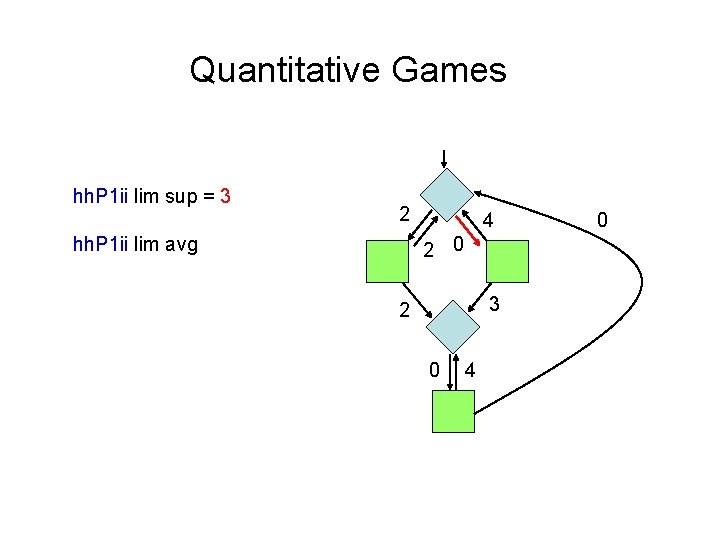

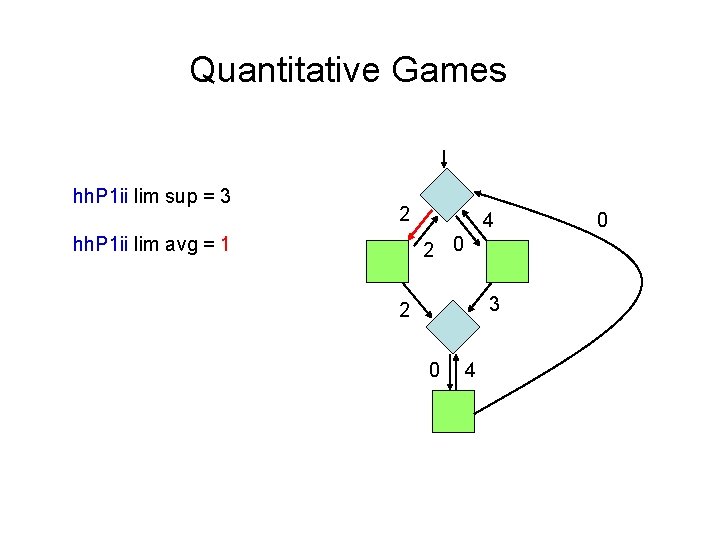

Quantitative Games (figure: example game graph with numeric edge weights): $\langle\langle P_1\rangle\rangle \limsup = 3$ and $\langle\langle P_1\rangle\rangle \lim\mathrm{avg} = 1$.

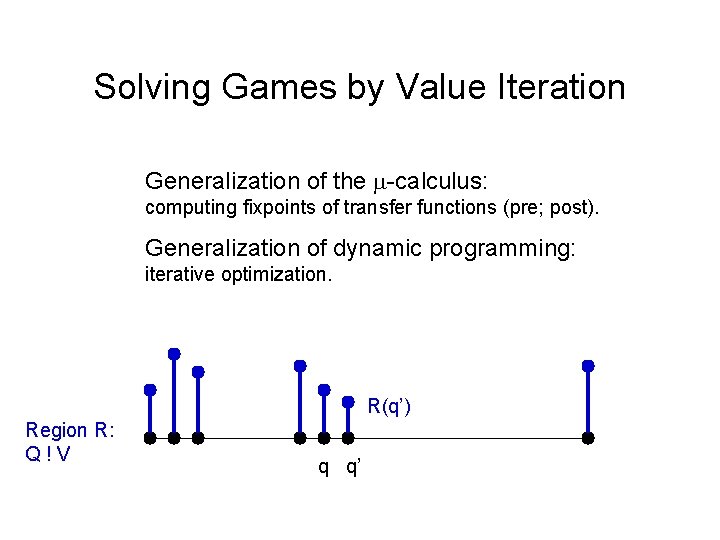

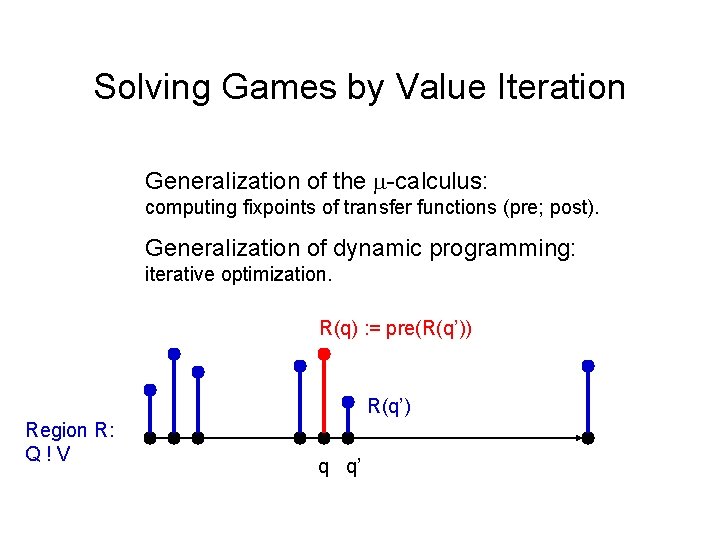

Solving Games by Value Iteration. Generalization of the $\mu$-calculus: computing fixpoints of transfer functions (pre; post). Generalization of dynamic programming: iterative optimization. A region is a function $R: Q \to V$. For an edge from $q$ to $q'$, the local update is $R(q) := \mathrm{pre}(R(q'))$.

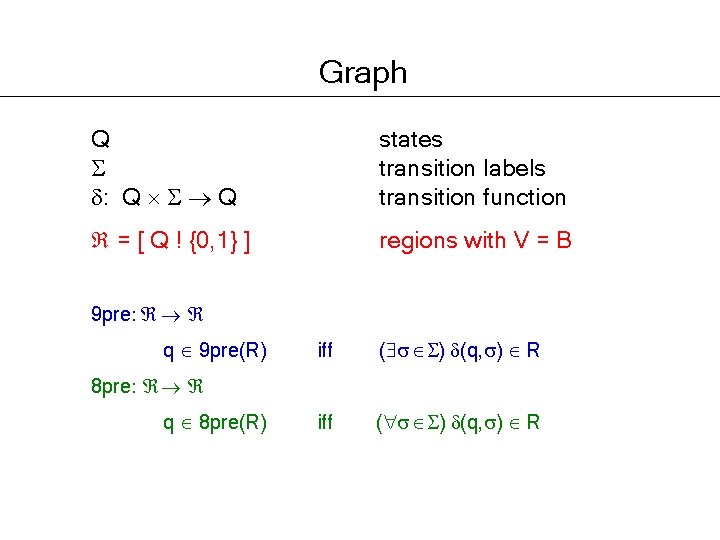

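Graph: $Q$ states; $\Sigma$ transition labels; $\delta: Q \times \Sigma \to Q$ transition function. $\mathcal{R} = [\,Q \to \{0, 1\}\,]$ regions with $V = \mathbb{B}$.

$\exists\mathrm{pre}$: $q \in \exists\mathrm{pre}(R)$ iff $(\exists \sigma \in \Sigma)\ \delta(q, \sigma) \in R$

$\forall\mathrm{pre}$: $q \in \forall\mathrm{pre}(R)$ iff $(\forall \sigma \in \Sigma)\ \delta(q, \sigma) \in R$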

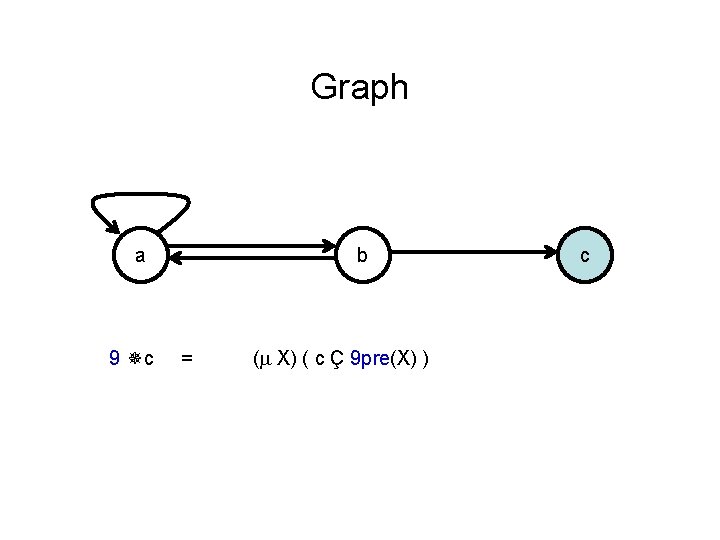

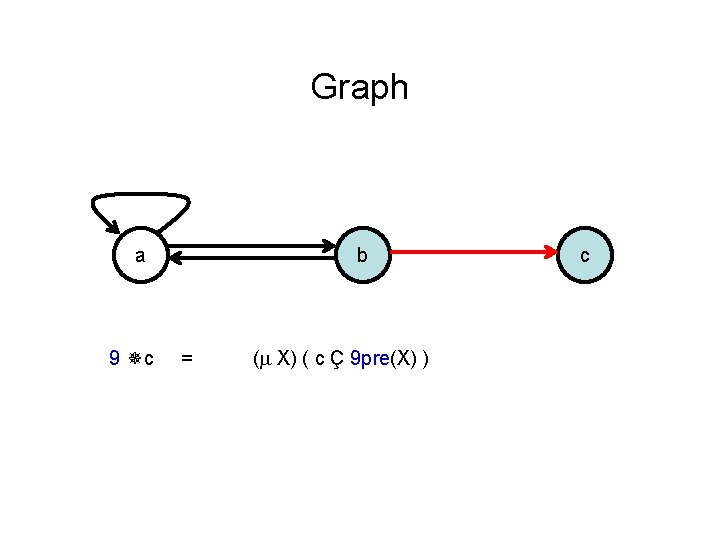

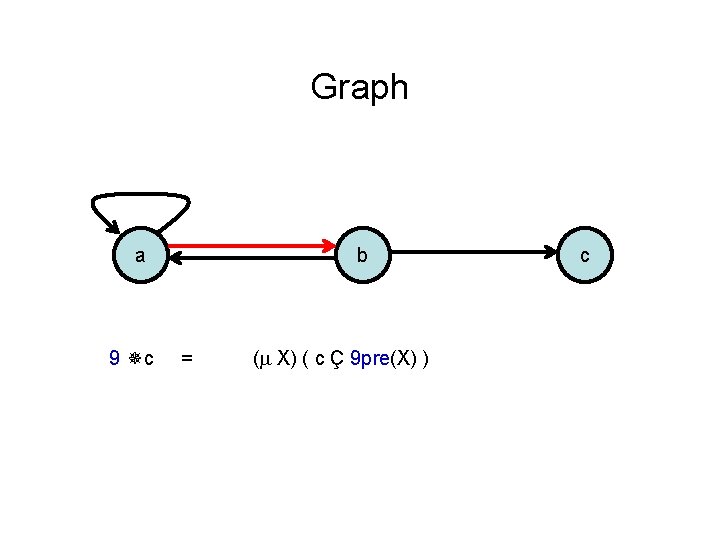

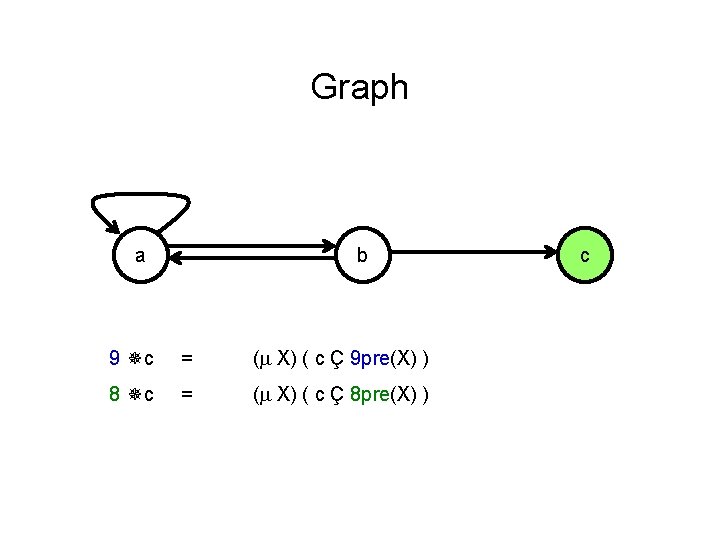

Graph (example with states a, b, c):

$\exists\Diamond c = (\mu X)\,(c \vee \exists\mathrm{pre}(X))$

$\forall\Diamond c = (\mu X)\,(c \vee \forall\mathrm{pre}(X))$

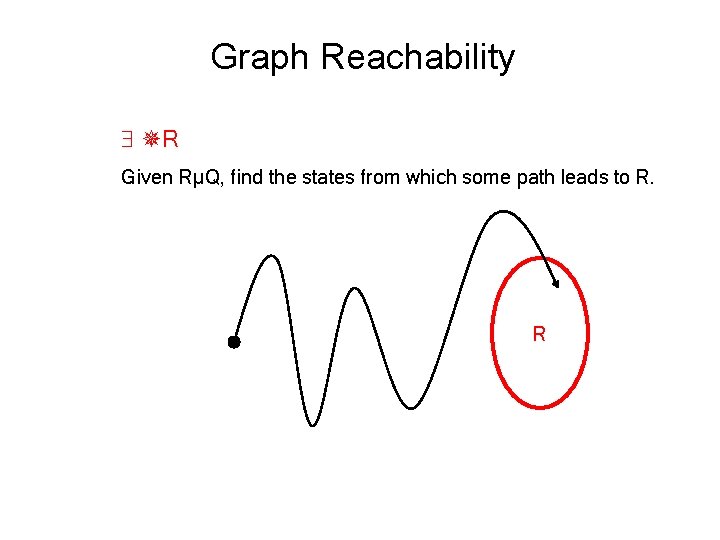

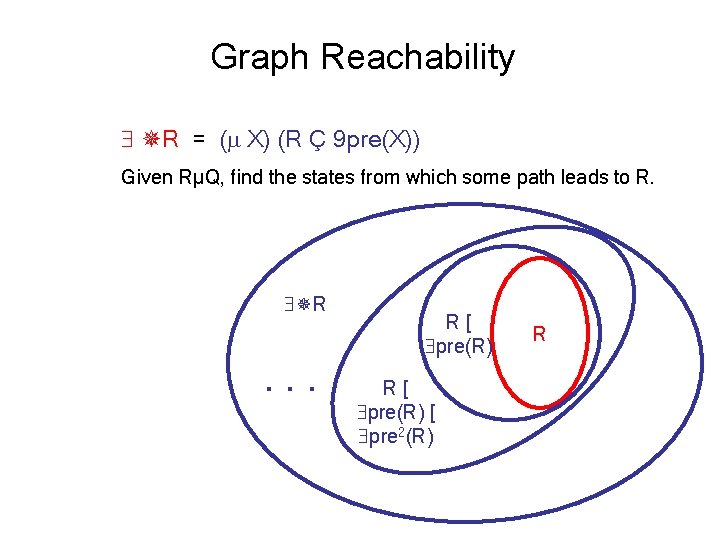


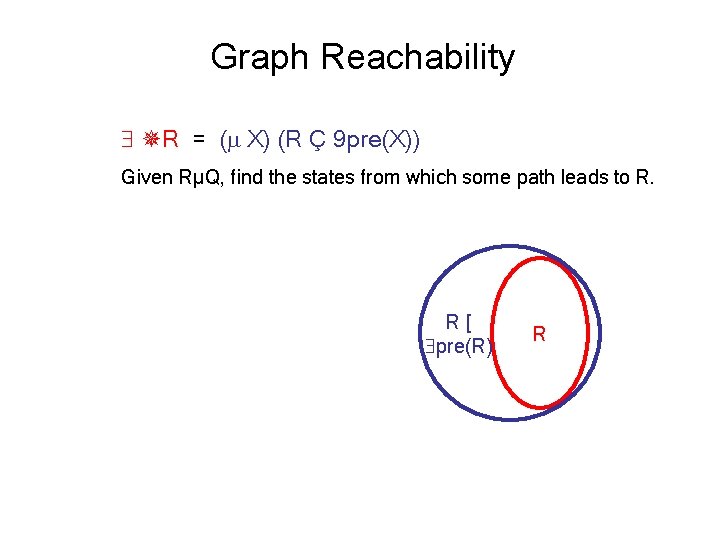


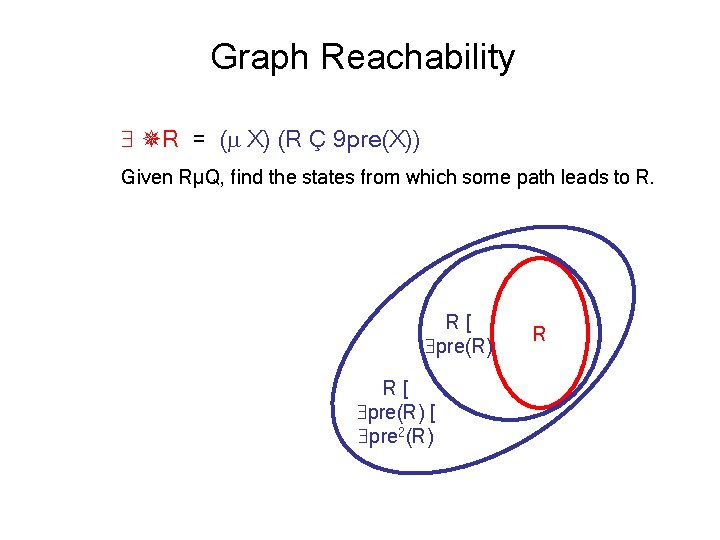

Graph Reachability: $\exists\Diamond R = (\mu X)\,(R \vee \exists\mathrm{pre}(X))$. Given $R \subseteq Q$, find the states from which some path leads to $R$. Iteration: $R$, $R \cup \mathrm{pre}(R)$, $R \cup \mathrm{pre}(R) \cup \mathrm{pre}^2(R)$, ..., $\exists\Diamond R$.

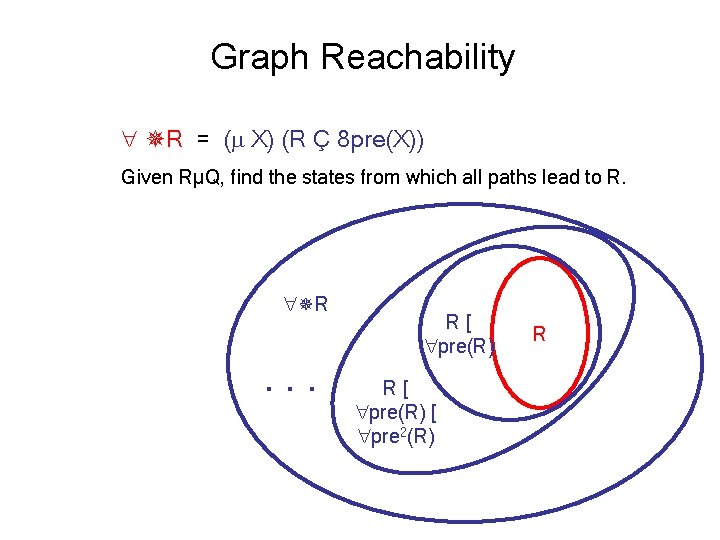

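Graph Reachability: $\forall\Diamond R = (\mu X)\,(R \vee \forall\mathrm{pre}(X))$. Given $R \subseteq Q$, find the states from which all paths lead to $R$. Iteration: $R$, $R \cup \mathrm{pre}(R)$, $R \cup \mathrm{pre}(R) \cup \mathrm{pre}^2(R)$, ..., $\forall\Diamond R$.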
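The two reachability fixpoints can be sketched in a few lines of Python. This is only an illustration: the three-state graph `EXAMPLE`, the function names, and the assumption that every state has at least one successor are all hypothetical and not taken from the slides.

```python
from typing import Callable, Dict, List, Set

# state -> successor states (assumption: every list is non-empty)
Graph = Dict[str, List[str]]

def exists_pre(graph: Graph, region: Set[str]) -> Set[str]:
    """States with SOME successor in region."""
    return {q for q, succs in graph.items() if any(s in region for s in succs)}

def forall_pre(graph: Graph, region: Set[str]) -> Set[str]:
    """States with ALL successors in region."""
    return {q for q, succs in graph.items() if all(s in region for s in succs)}

def reach(graph: Graph, target: Set[str],
          pre: Callable[[Graph, Set[str]], Set[str]]) -> Set[str]:
    """Least fixpoint (mu X)(target or pre(X)), iterated from the empty region."""
    x: Set[str] = set()
    while True:
        nxt = set(target) | pre(graph, x)
        if nxt == x:
            return x
        x = nxt

# Hypothetical example: a can loop forever or move to b; b must move to c.
EXAMPLE: Graph = {"a": ["a", "b"], "b": ["c"], "c": ["c"]}
some_path = reach(EXAMPLE, {"c"}, exists_pre)   # states with some path to c
all_paths = reach(EXAMPLE, {"c"}, forall_pre)   # states where all paths reach c
```

Only the local `pre` operator differs between the two calls; the global fixpoint loop is shared, which mirrors the local/global split of value iteration.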

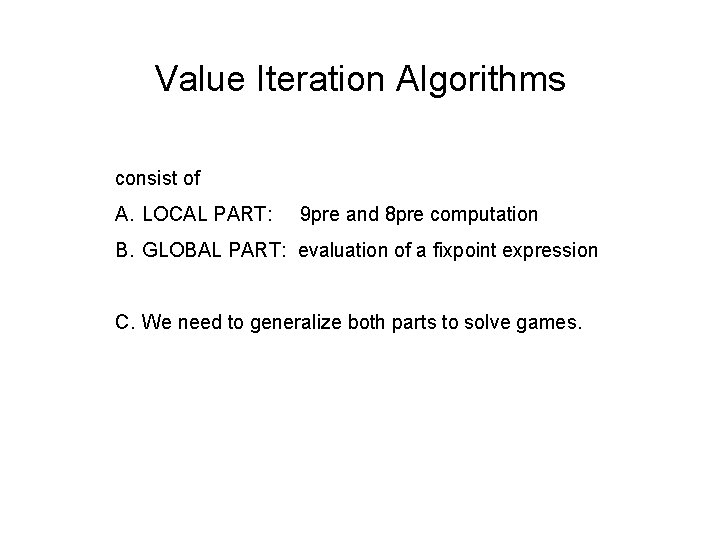

Value Iteration Algorithms consist of A. LOCAL PART: $\exists\mathrm{pre}$ and $\forall\mathrm{pre}$ computation; B. GLOBAL PART: evaluation of a fixpoint expression. We need to generalize both parts to solve games.

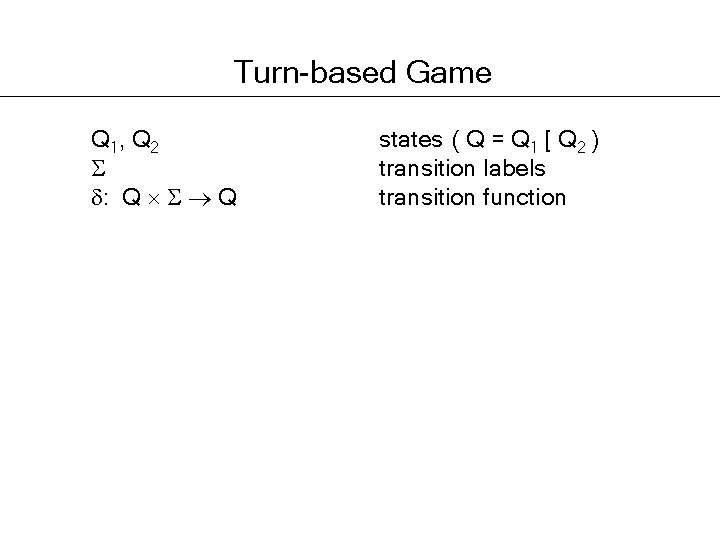

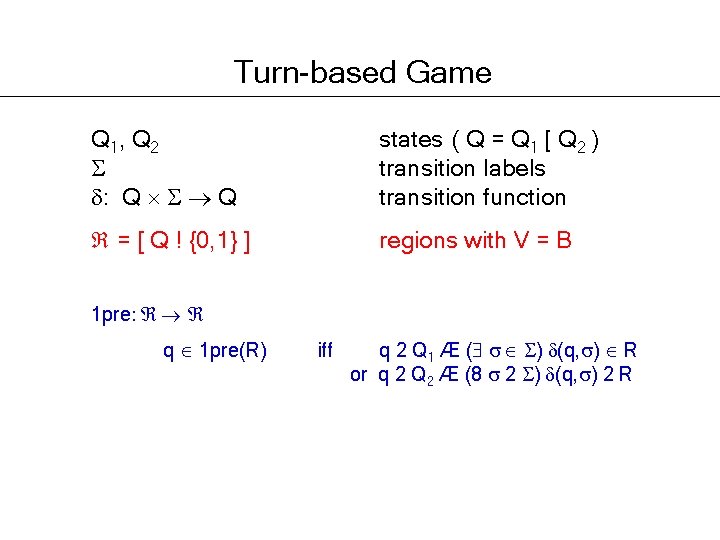


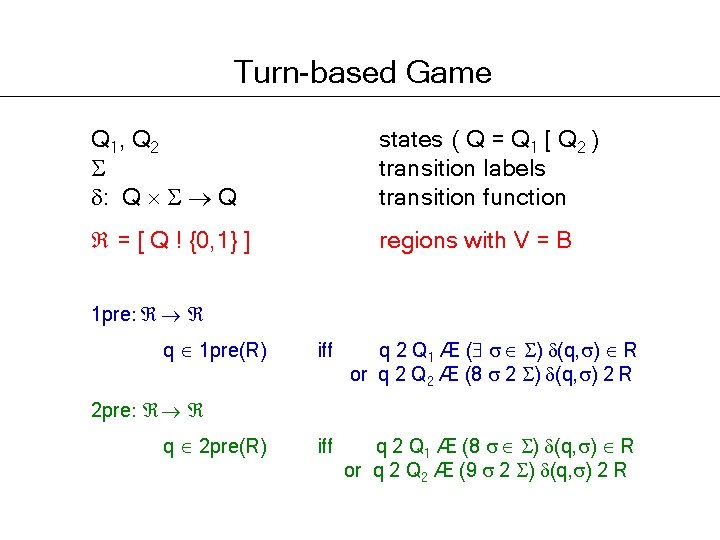

Turn-based Game: $Q_1, Q_2$ states ($Q = Q_1 \cup Q_2$); $\Sigma$ transition labels; $\delta: Q \times \Sigma \to Q$ transition function. $\mathcal{R} = [\,Q \to \{0, 1\}\,]$ regions with $V = \mathbb{B}$.

$1\mathrm{pre}$: $q \in 1\mathrm{pre}(R)$ iff $q \in Q_1 \wedge (\exists \sigma \in \Sigma)\ \delta(q, \sigma) \in R$, or $q \in Q_2 \wedge (\forall \sigma \in \Sigma)\ \delta(q, \sigma) \in R$

$2\mathrm{pre}$: $q \in 2\mathrm{pre}(R)$ iff $q \in Q_1 \wedge (\forall \sigma \in \Sigma)\ \delta(q, \sigma) \in R$, or $q \in Q_2 \wedge (\exists \sigma \in \Sigma)\ \delta(q, \sigma) \in R$

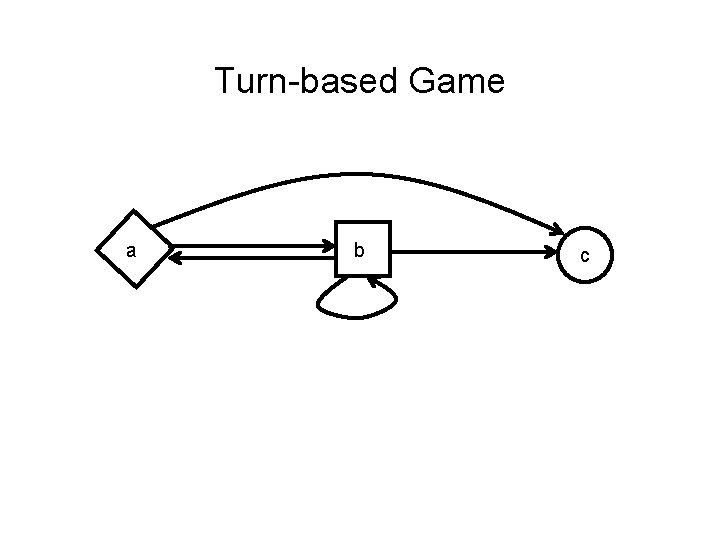

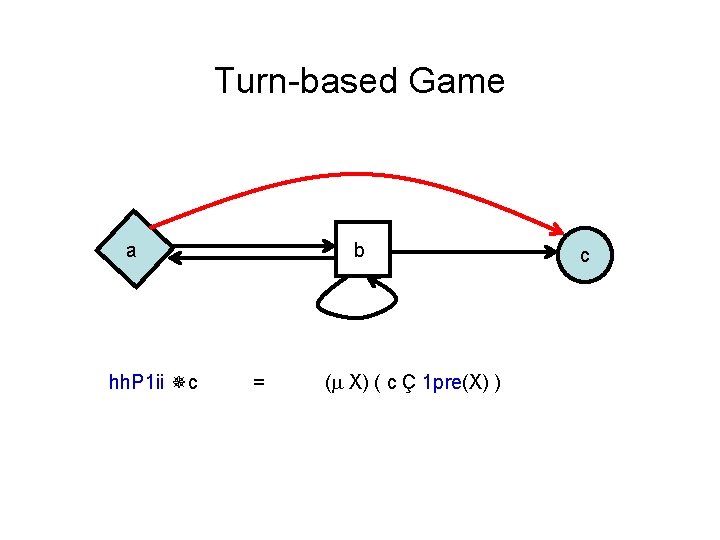

Turn-based Game (example with states a, b, c)

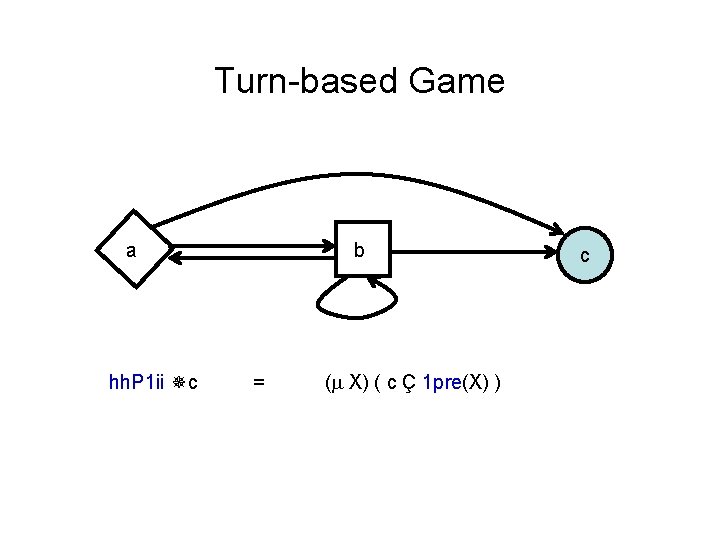

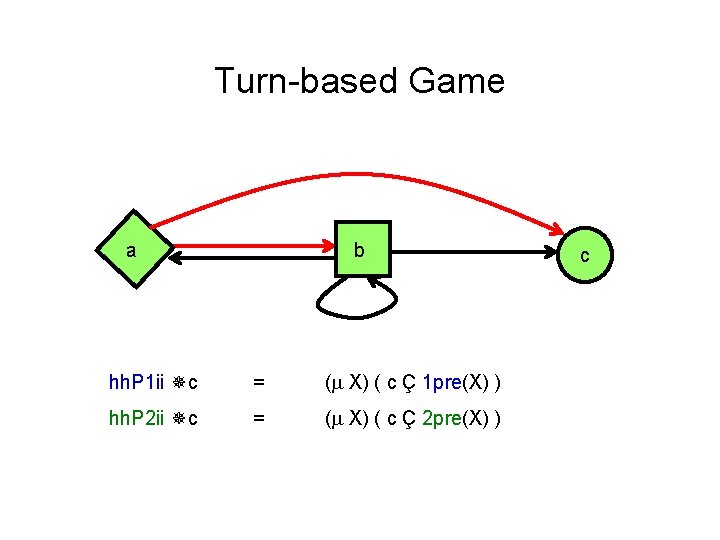

Turn-based Game (example with states a, b, c): $\langle\langle P_1\rangle\rangle\Diamond c = (\mu X)\,(c \vee 1\mathrm{pre}(X))$


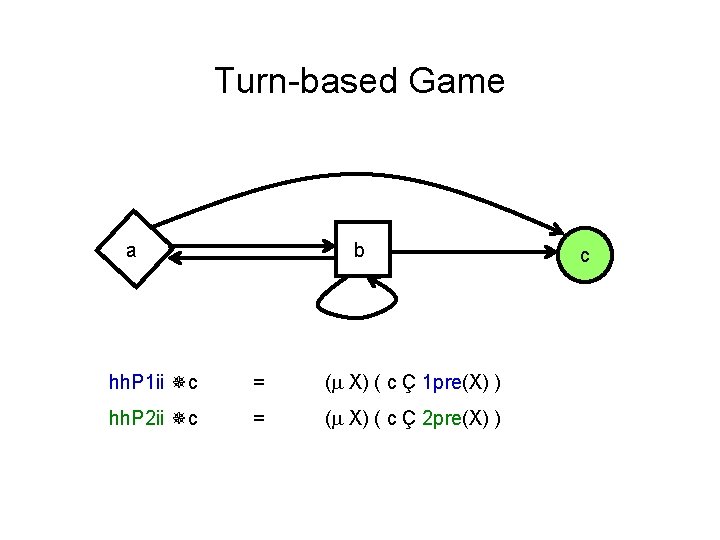

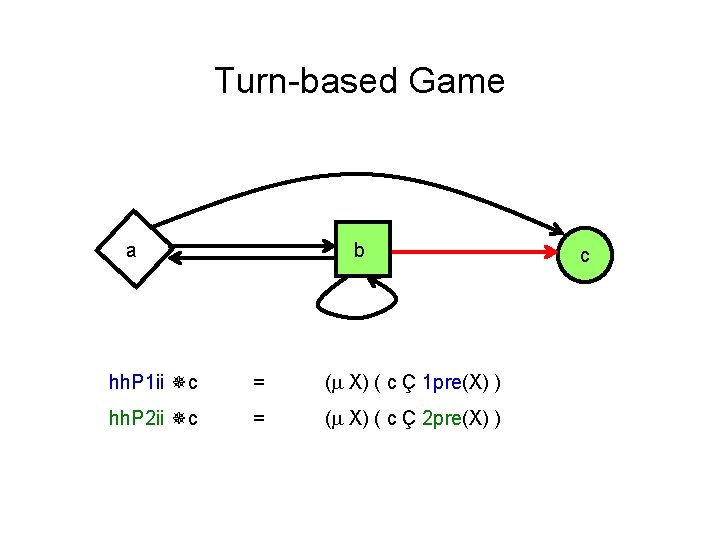

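Turn-based Game (example with states a, b, c): $\langle\langle P_1\rangle\rangle\Diamond c = (\mu X)\,(c \vee 1\mathrm{pre}(X))$ and $\langle\langle P_2\rangle\rangle\Diamond c = (\mu X)\,(c \vee 2\mathrm{pre}(X))$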


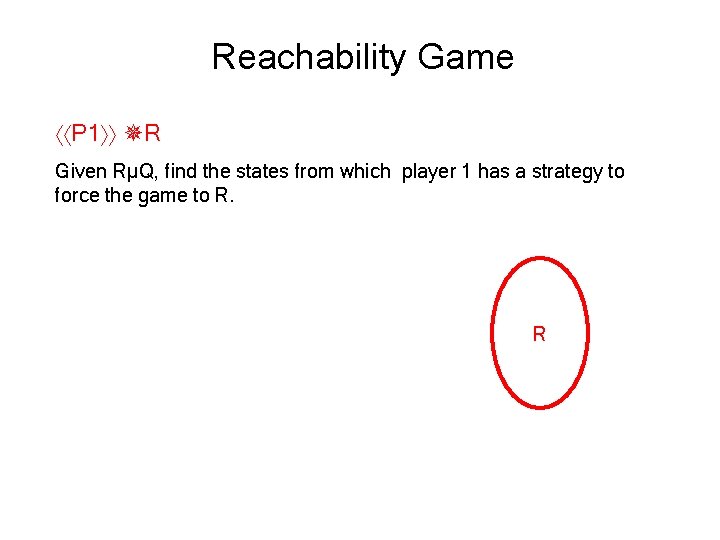

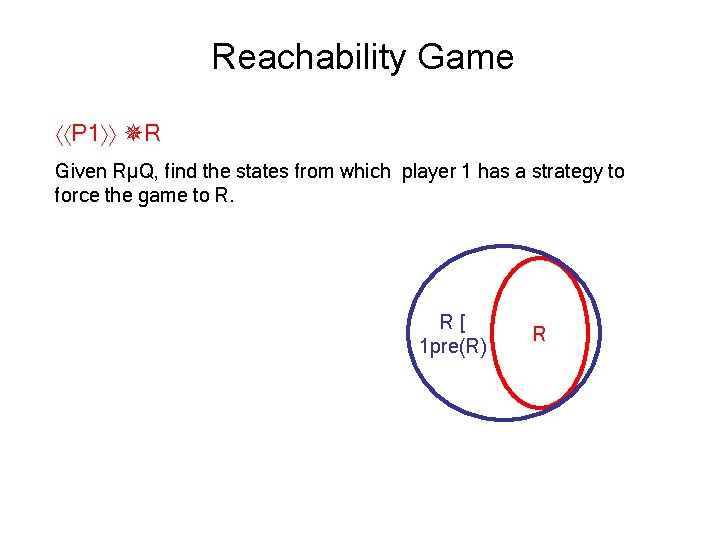

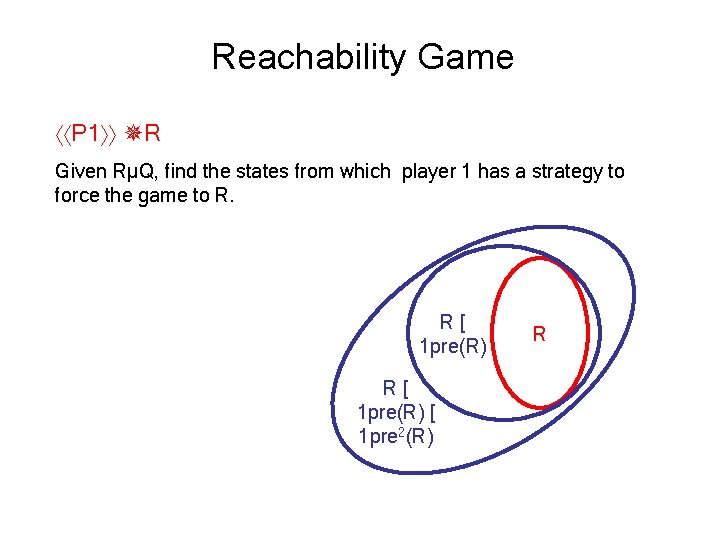

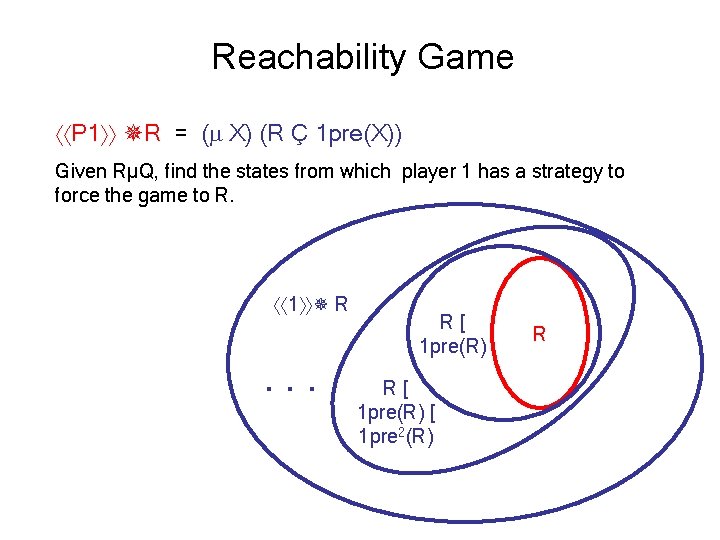

Reachability Game: $\langle\langle P_1\rangle\rangle\Diamond R = (\mu X)\,(R \vee 1\mathrm{pre}(X))$. Given $R \subseteq Q$, find the states from which player 1 has a strategy to force the game to $R$. Iteration: $R$, $R \cup 1\mathrm{pre}(R)$, $R \cup 1\mathrm{pre}(R) \cup 1\mathrm{pre}^2(R)$, ..., $\langle\langle P_1\rangle\rangle\Diamond R$.
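A minimal sketch of the reachability-game iteration, assuming a turn-based game given as a successor map plus an owner map. The example game `GAME`, the names `pre` and `force_reach`, and the encoding of players as the integers 1 and 2 are assumptions made for illustration.

```python
from typing import Dict, List, Set

# Hypothetical turn-based game: state -> successors, state -> owning player.
GAME: Dict[str, List[str]] = {"a": ["a", "b"], "b": ["a", "c"], "c": ["c"]}
OWNER: Dict[str, int] = {"a": 1, "b": 2, "c": 1}

def pre(game: Dict[str, List[str]], owner: Dict[str, int],
        region: Set[str], player: int) -> Set[str]:
    """Controllable predecessor for `player`: at own states some successor
    must lie in region, at opponent states all successors must."""
    result = set()
    for q, succs in game.items():
        if owner[q] == player:
            ok = any(s in region for s in succs)
        else:
            ok = all(s in region for s in succs)
        if ok:
            result.add(q)
    return result

def force_reach(game: Dict[str, List[str]], owner: Dict[str, int],
                target: Set[str], player: int) -> Set[str]:
    """Least fixpoint (mu X)(target or pre(X)) for the given player."""
    x: Set[str] = set()
    while True:
        nxt = set(target) | pre(game, owner, x, player)
        if nxt == x:
            return x
        x = nxt

p1_wins = force_reach(GAME, OWNER, {"c"}, player=1)
p2_wins = force_reach(GAME, OWNER, {"c"}, player=2)
```

In this example player 1 cannot force a visit to c from a or b, because player 2 can always return from b to a, while player 2 can force c from b.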

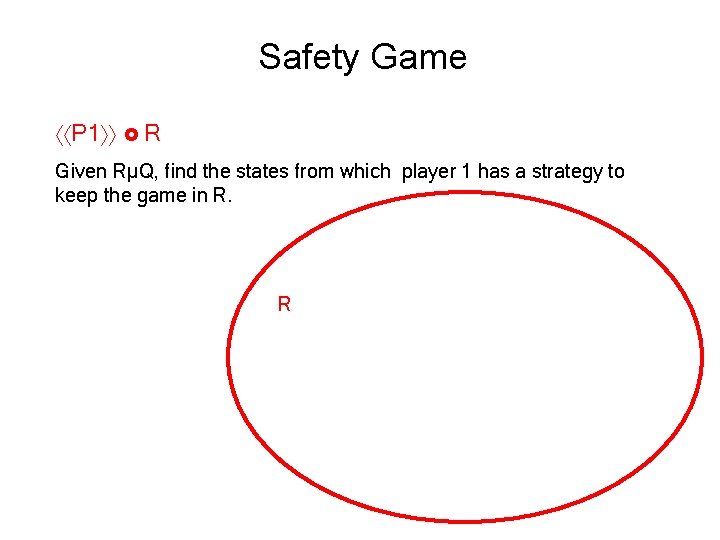

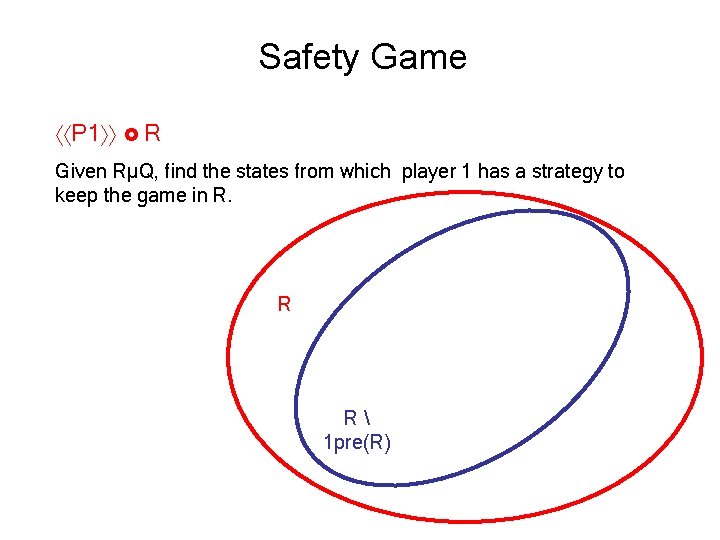

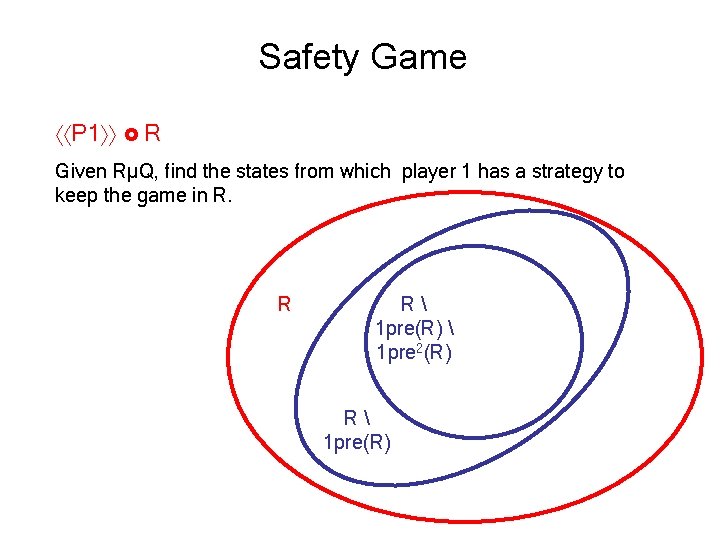

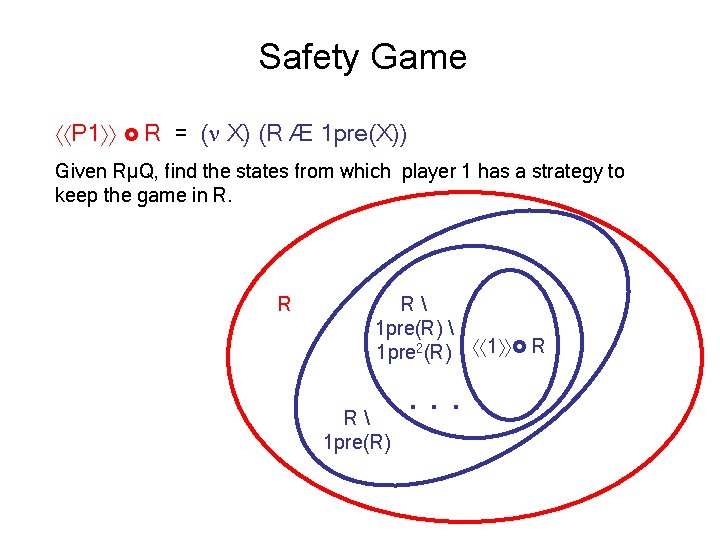

Safety Game: $\langle\langle P_1\rangle\rangle\Box R = (\nu X)\,(R \wedge 1\mathrm{pre}(X))$. Given $R \subseteq Q$, find the states from which player 1 has a strategy to keep the game in $R$. Iteration: $R$, $R \cap 1\mathrm{pre}(R)$, $R \cap 1\mathrm{pre}(R) \cap 1\mathrm{pre}^2(R)$, ..., $\langle\langle P_1\rangle\rangle\Box R$.
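The safety iteration is the dual of reachability: it starts from the full state set and shrinks. The sketch below assumes a hypothetical three-state game (`GAME`, `OWNER`) and illustrative function names; none of these come from the slides.

```python
from typing import Dict, List, Set

# Hypothetical turn-based game: state -> successors, state -> owning player.
GAME: Dict[str, List[str]] = {"a": ["a", "b"], "b": ["a", "c"], "c": ["c"]}
OWNER: Dict[str, int] = {"a": 1, "b": 2, "c": 1}

def pre1(game: Dict[str, List[str]], owner: Dict[str, int],
         region: Set[str]) -> Set[str]:
    """Player-1 controllable predecessor of region."""
    return {
        q for q, succs in game.items()
        if (any(s in region for s in succs) if owner[q] == 1
            else all(s in region for s in succs))
    }

def keep_safe(game: Dict[str, List[str]], owner: Dict[str, int],
              safe: Set[str]) -> Set[str]:
    """Greatest fixpoint (nu X)(safe and 1pre(X)), iterated from all states."""
    x: Set[str] = set(game)
    while True:
        nxt = set(safe) & pre1(game, owner, x)
        if nxt == x:
            return x
        x = nxt

safe_region = keep_safe(GAME, OWNER, {"a", "b"})
```

Here player 1 can stay inside {a, b} only from a, by looping at a forever; from b, player 2 can move to c. The complement {b, c} is exactly the set from which player 2 can force c, as determinacy of turn-based games predicts.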

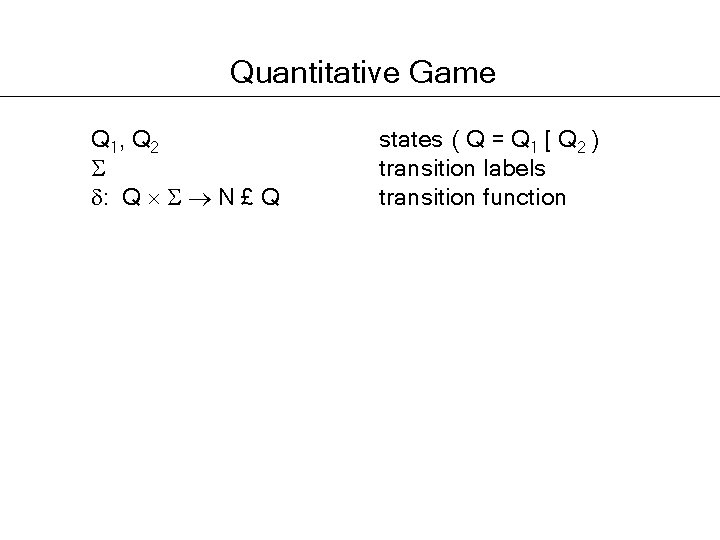


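Quantitative Game: $Q_1, Q_2$ states ($Q = Q_1 \cup Q_2$); $\Sigma$ transition labels; $\delta: Q \times \Sigma \to \mathbb{N} \times Q$ transition function, with components $\delta_1$ (reward) and $\delta_2$ (successor). $\mathcal{R} = [\,Q \to \mathbb{N}\,]$ regions with $V = \mathbb{N}$.

$1\mathrm{pre}(R)(q) = (\max_{\sigma \in \Sigma})\ \max(\delta_1(q, \sigma), R(\delta_2(q, \sigma)))$ if $q \in Q_1$, and $(\min_{\sigma \in \Sigma})\ \max(\delta_1(q, \sigma), R(\delta_2(q, \sigma)))$ if $q \in Q_2$.

$2\mathrm{pre}(R)(q) = (\min_{\sigma \in \Sigma})\ \max(\delta_1(q, \sigma), R(\delta_2(q, \sigma)))$ if $q \in Q_1$, and $(\max_{\sigma \in \Sigma})\ \max(\delta_1(q, \sigma), R(\delta_2(q, \sigma)))$ if $q \in Q_2$.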

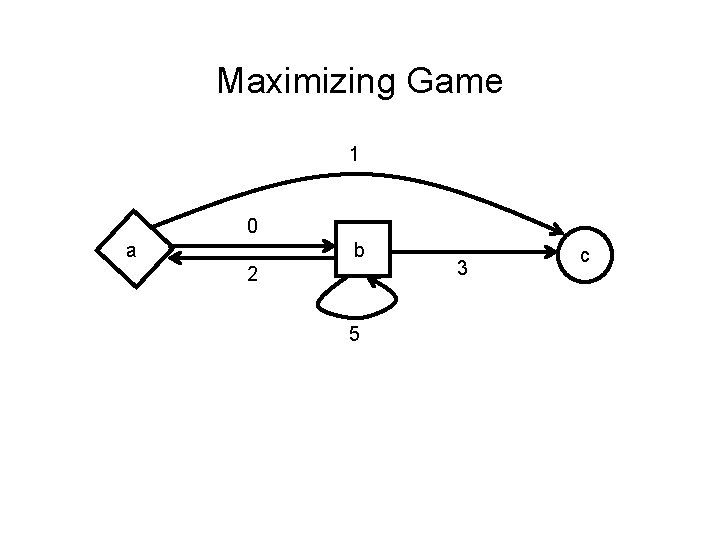

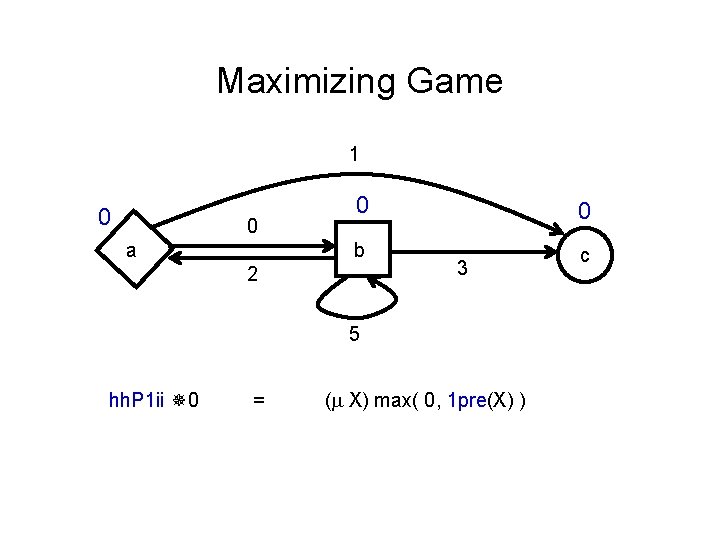

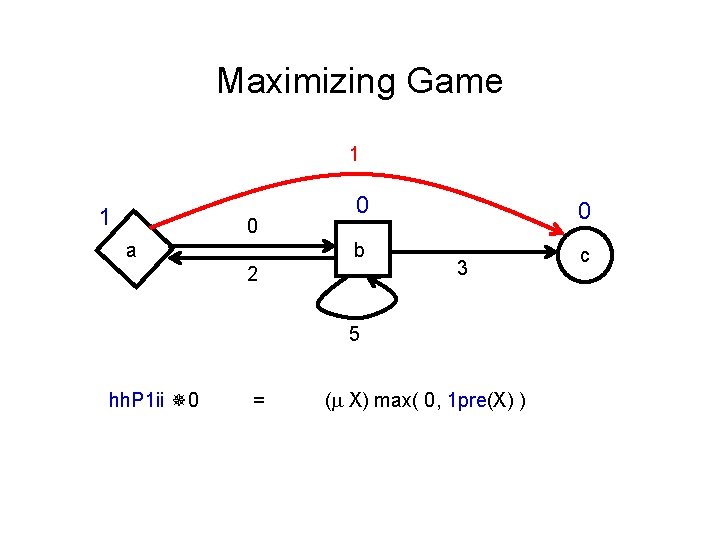

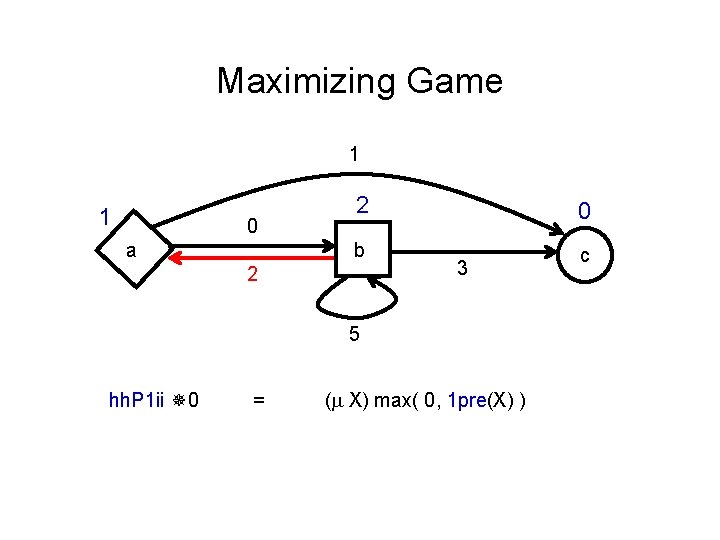

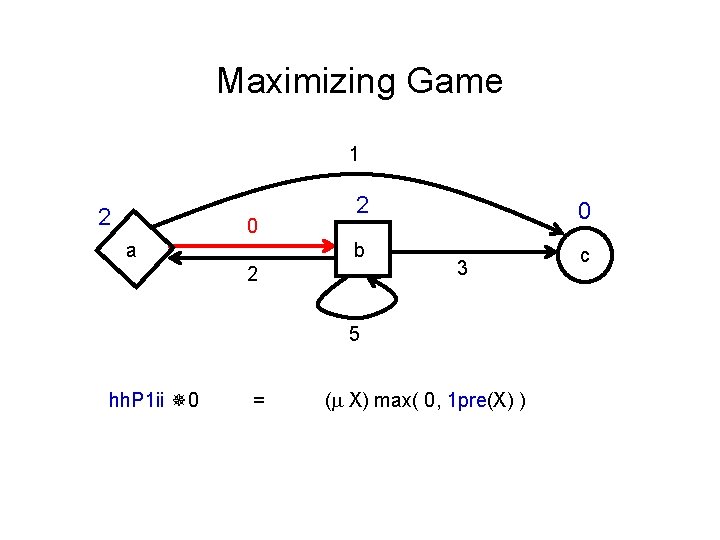

Maximizing Game (example with states a, b, c and numeric edge rewards): $\langle\langle P_1\rangle\rangle\,0 = (\mu X)\max(0, 1\mathrm{pre}(X))$. Value iteration starts from the all-zero region; over the successive steps shown on the slides, two of the state values evolve as 0, 1, 1, 2 and 0, 0, 2, 2.
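The quantitative iteration can be sketched as follows. The edge rewards of the slide figure are not recoverable from the text, so the game below (`GAME`, `OWNER`) is a hypothetical stand-in, and the function names are illustrative.

```python
from typing import Dict, List, Tuple

# Hypothetical game: state -> list of (reward, successor); owner of each state.
GAME: Dict[str, List[Tuple[int, str]]] = {
    "a": [(1, "a"), (0, "b")],
    "b": [(2, "a"), (5, "c")],
    "c": [(3, "c")],
}
OWNER: Dict[str, int] = {"a": 1, "b": 2, "c": 1}

def pre1(game, owner, region: Dict[str, int]) -> Dict[str, int]:
    """Quantitative 1pre: each edge is worth max(reward, value of successor);
    player 1 maximizes over edges at its states, player 2 minimizes."""
    out = {}
    for q, edges in game.items():
        vals = [max(reward, region[succ]) for reward, succ in edges]
        out[q] = max(vals) if owner[q] == 1 else min(vals)
    return out

def max_value(game, owner) -> Dict[str, int]:
    """Least fixpoint (mu X) max(0, 1pre(X)), iterated from the zero region."""
    x = {q: 0 for q in game}
    while True:
        p = pre1(game, owner, x)
        nxt = {q: max(0, p[q]) for q in game}
        if nxt == x:
            return x
        x = nxt

values = max_value(GAME, OWNER)
```

The iterates are monotonically non-decreasing and bounded by the largest reward, so the loop terminates. In this hypothetical game player 2 at b avoids the reward-5 edge, which caps the values at a and b.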

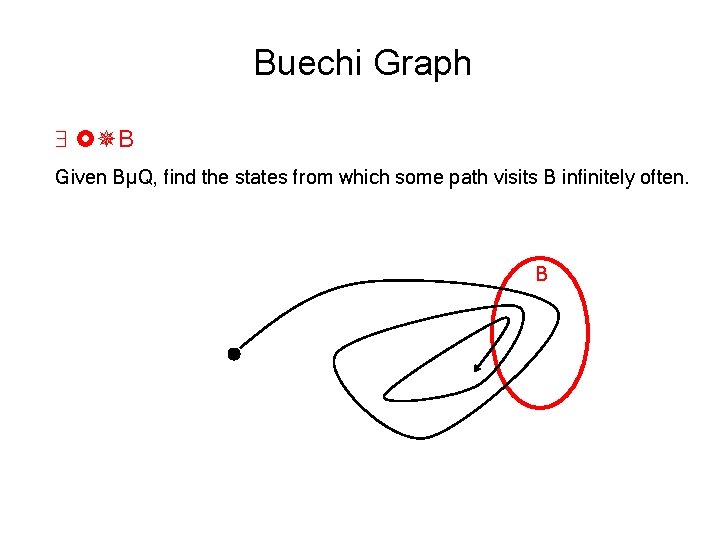

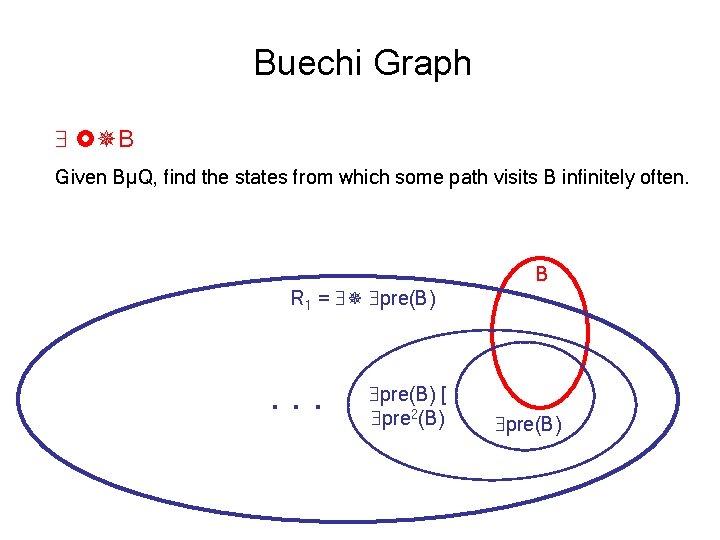

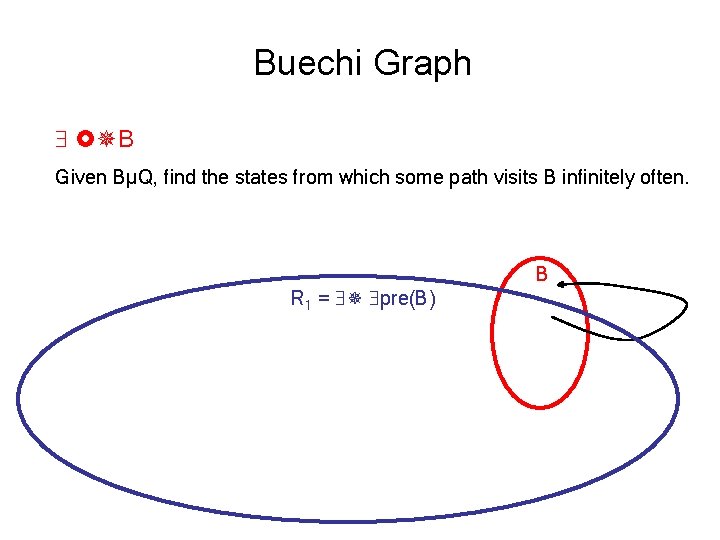

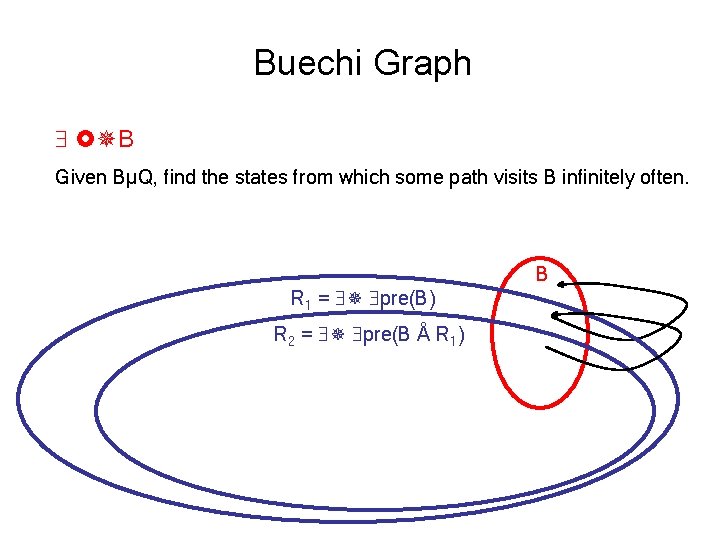

Buechi Graph: Given $B \subseteq Q$, find the states from which some path visits $B$ infinitely often. $R_1 = \exists\Diamond\,\mathrm{pre}(B)$, computed as $\mathrm{pre}(B)$, $\mathrm{pre}(B) \cup \mathrm{pre}^2(B)$, ...; $R_2 = \exists\Diamond\,\mathrm{pre}(B \cap R_1)$; and so on.

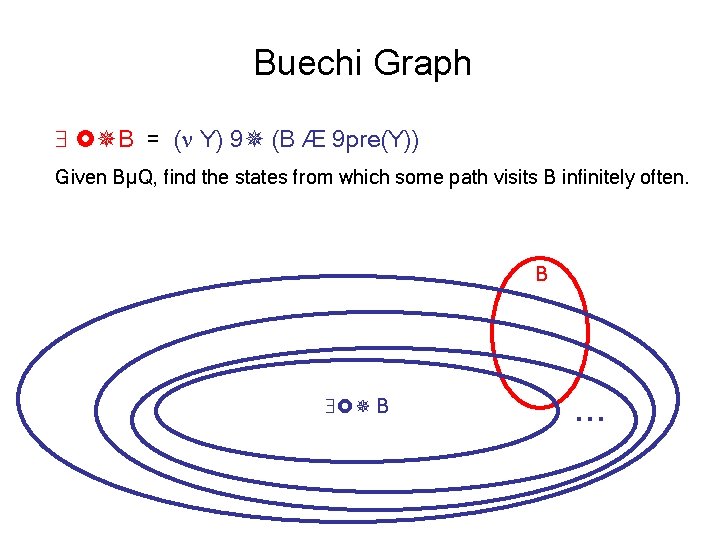

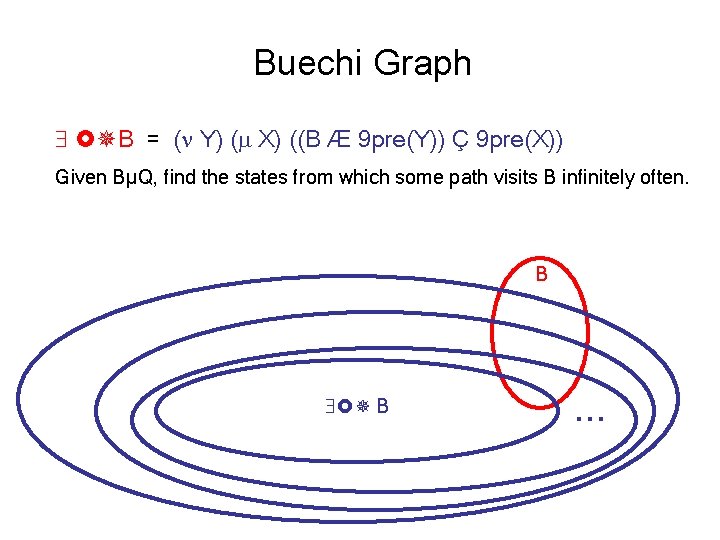

Buechi Graph: $\exists\Box\Diamond B = (\nu Y)\ \exists\Diamond\,(B \wedge \exists\mathrm{pre}(Y)) = (\nu Y)(\mu X)\,((B \wedge \exists\mathrm{pre}(Y)) \vee \exists\mathrm{pre}(X))$. Given $B \subseteq Q$, find the states from which some path visits $B$ infinitely often.

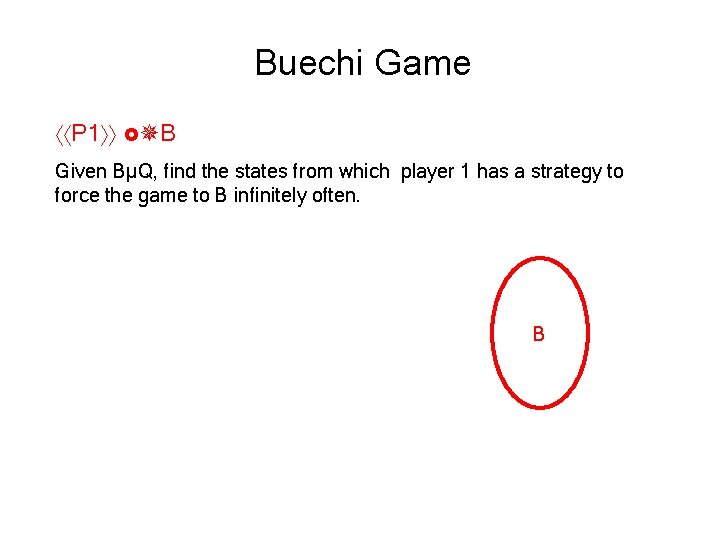


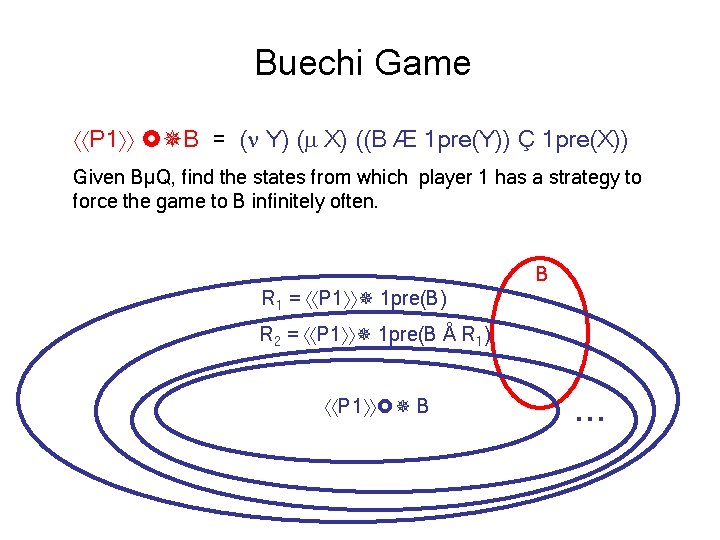

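Buechi Game: $\langle\langle P_1\rangle\rangle\Box\Diamond B = (\nu Y)(\mu X)\,((B \wedge 1\mathrm{pre}(Y)) \vee 1\mathrm{pre}(X))$. Given $B \subseteq Q$, find the states from which player 1 has a strategy to force the game to $B$ infinitely often. $R_1 = \langle\langle P_1\rangle\rangle\Diamond\,1\mathrm{pre}(B)$; $R_2 = \langle\langle P_1\rangle\rangle\Diamond\,1\mathrm{pre}(B \cap R_1)$; ...; $\langle\langle P_1\rangle\rangle\Box\Diamond B$.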
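The nested fixpoint can be evaluated by two loops, an outer greatest fixpoint over Y and an inner least fixpoint over X. The following is a sketch under assumptions: the games `GAME` and `GAME2`, the owner map, and the function names are hypothetical examples, not the slide figure.

```python
from typing import Dict, List, Set

# Hypothetical turn-based games; GAME2 removes player 2's escape edge b -> c.
GAME: Dict[str, List[str]] = {"a": ["a", "b"], "b": ["a", "c"], "c": ["c"]}
GAME2: Dict[str, List[str]] = {"a": ["a", "b"], "b": ["a"], "c": ["c"]}
OWNER: Dict[str, int] = {"a": 1, "b": 2, "c": 1}

def pre1(game, owner, region: Set[str]) -> Set[str]:
    """Player-1 controllable predecessor of region."""
    return {
        q for q, succs in game.items()
        if (any(s in region for s in succs) if owner[q] == 1
            else all(s in region for s in succs))
    }

def buechi(game, owner, b: Set[str]) -> Set[str]:
    """(nu Y)(mu X)((B and 1pre(Y)) or 1pre(X))."""
    y: Set[str] = set(game)              # outer greatest fixpoint starts full
    while True:
        goal = set(b) & pre1(game, owner, y)
        x: Set[str] = set()              # inner least fixpoint starts empty
        while True:
            nxt = goal | pre1(game, owner, x)
            if nxt == x:
                break
            x = nxt
        if x == y:
            return y
        y = x

win_b = buechi(GAME, OWNER, {"b"})
win_a = buechi(GAME, OWNER, {"a"})
win_b2 = buechi(GAME2, OWNER, {"b"})
```

The nested loops run at most a quadratic number of `pre1` evaluations in the number of states, since the outer region can shrink at most once per state and each inner loop grows at most once per state.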

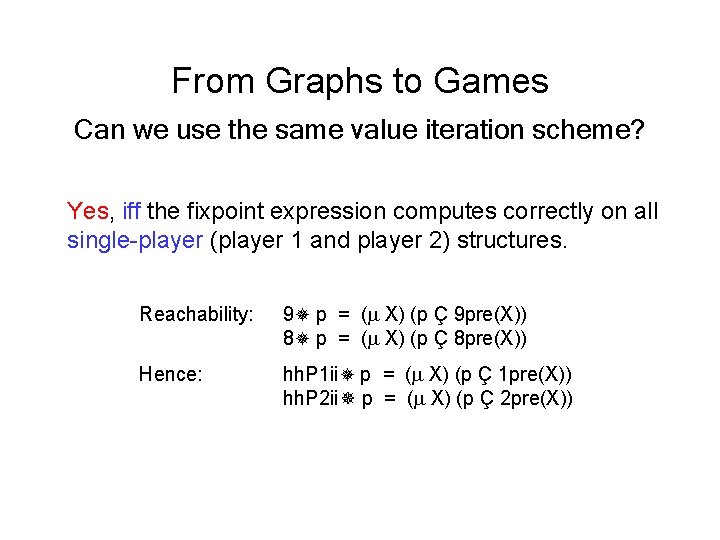

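From Graphs to Games. Can we use the same value iteration scheme? Yes, iff the fixpoint expression computes correctly on all single-player (player 1 and player 2) structures.

Reachability: $\exists\Diamond p = (\mu X)\,(p \vee \exists\mathrm{pre}(X))$ and $\forall\Diamond p = (\mu X)\,(p \vee \forall\mathrm{pre}(X))$.

Hence: $\langle\langle P_1\rangle\rangle\Diamond p = (\mu X)\,(p \vee 1\mathrm{pre}(X))$ and $\langle\langle P_2\rangle\rangle\Diamond p = (\mu X)\,(p \vee 2\mathrm{pre}(X))$.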

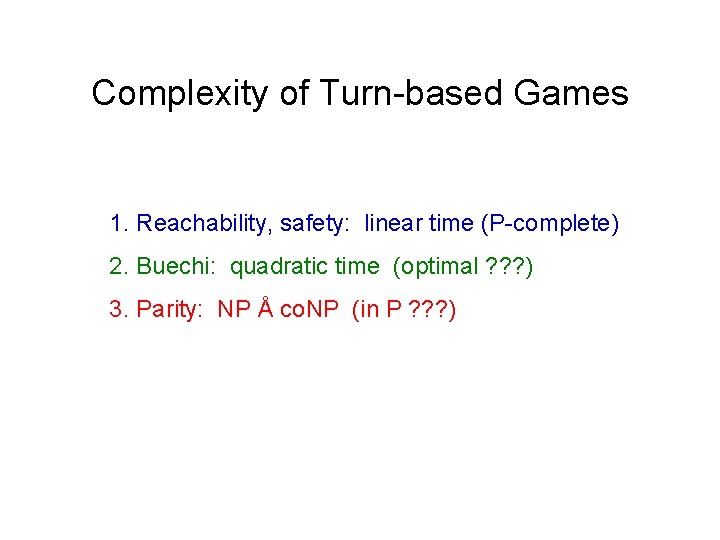

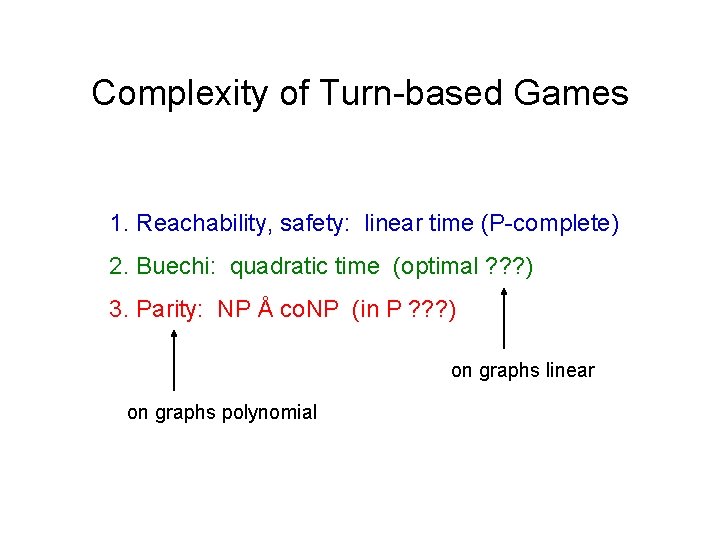

Complexity of Turn-based Games
1. Reachability, safety: linear time (P-complete)
2. Buechi: quadratic time (optimal???); on graphs linear
3. Parity: NP $\cap$ coNP (in P???); on graphs polynomial

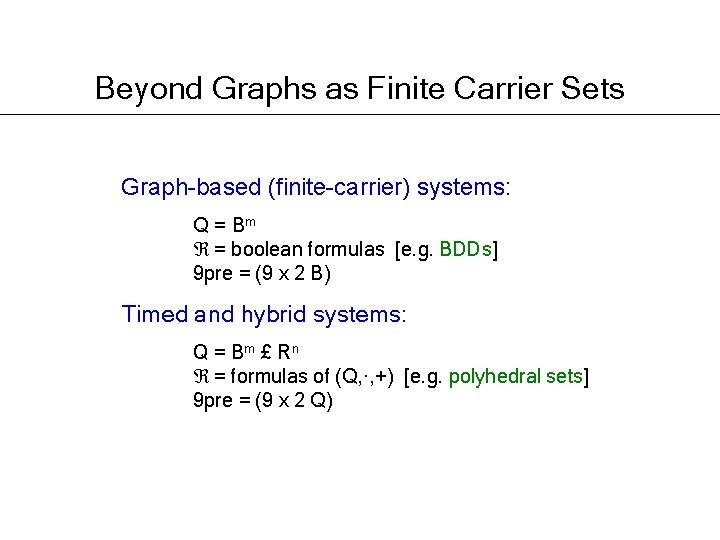

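Beyond Graphs as Finite Carrier Sets. Graph-based (finite-carrier) systems: $Q = \mathbb{B}^m$; $\mathcal{R}$ = boolean formulas [e.g. BDDs]; $\exists\mathrm{pre} = (\exists x \in \mathbb{B})$. Timed and hybrid systems: $Q = \mathbb{B}^m \times \mathbb{R}^n$; $\mathcal{R}$ = formulas of $(\mathbb{Q}, \le, +)$ [e.g. polyhedral sets]; $\exists\mathrm{pre} = (\exists x \in \mathbb{Q})$.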

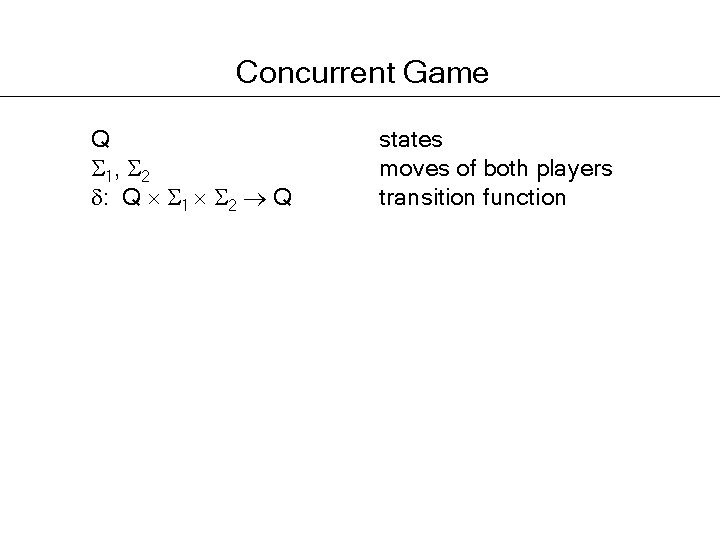

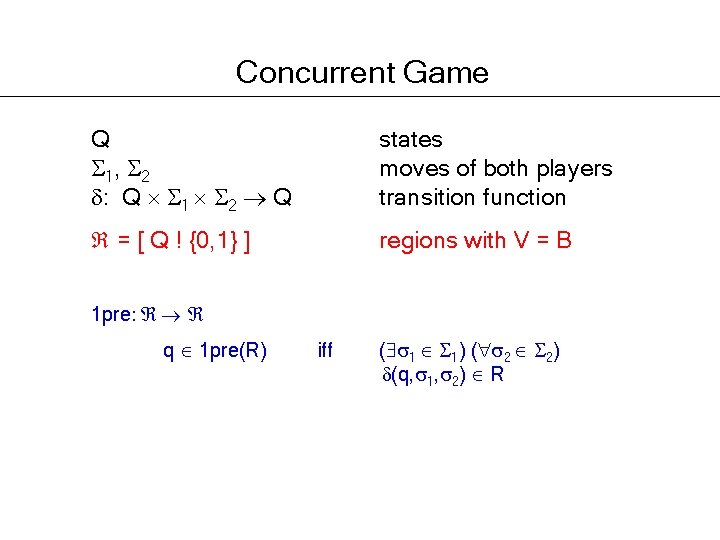

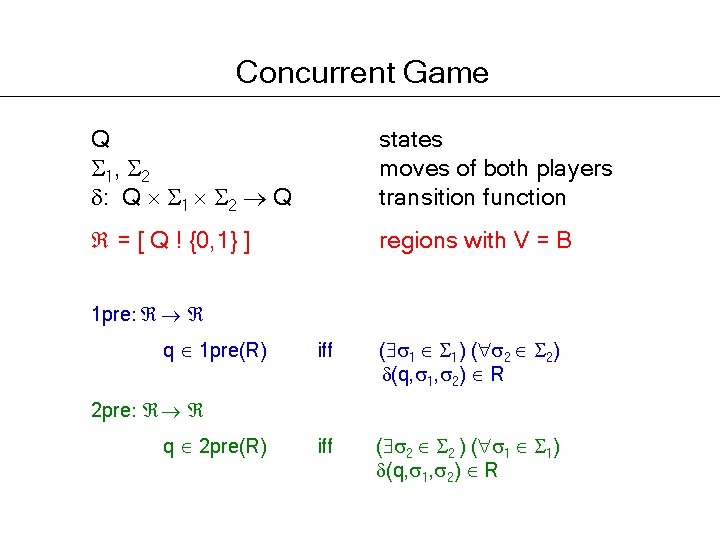

Concurrent Game: $Q$ states; $\Gamma_1, \Gamma_2$ moves of both players; $\delta: Q \times \Gamma_1 \times \Gamma_2 \to Q$ transition function. $\mathcal{R} = [\,Q \to \{0, 1\}\,]$ regions with $V = \mathbb{B}$.

$1\mathrm{pre}$: $q \in 1\mathrm{pre}(R)$ iff $(\exists \gamma_1 \in \Gamma_1)(\forall \gamma_2 \in \Gamma_2)\ \delta(q, \gamma_1, \gamma_2) \in R$

$2\mathrm{pre}$: $q \in 2\mathrm{pre}(R)$ iff $(\exists \gamma_2 \in \Gamma_2)(\forall \gamma_1 \in \Gamma_1)\ \delta(q, \gamma_1, \gamma_2) \in R$
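The concurrent predecessor operators with pure moves can be sketched directly from these definitions. The game below is a hypothetical matching-pennies style example (state names `s`, `a`, `b` and all function names are assumptions): at `s`, equal moves lead to `a` and different moves lead to `b`.

```python
from itertools import product
from typing import Dict, Set, Tuple

MOVES = (1, 2)                      # both players choose move 1 or 2
STATES = {"s", "a", "b"}

def build_delta() -> Dict[Tuple[str, int, int], str]:
    """Hypothetical transition function: a and b are absorbing."""
    delta = {}
    for m1, m2 in product(MOVES, MOVES):
        delta[("s", m1, m2)] = "a" if m1 == m2 else "b"
        delta[("a", m1, m2)] = "a"
        delta[("b", m1, m2)] = "b"
    return delta

DELTA = build_delta()

def pre1(region: Set[str]) -> Set[str]:
    """Some player-1 move works against all player-2 moves."""
    return {q for q in STATES
            if any(all(DELTA[(q, m1, m2)] in region for m2 in MOVES)
                   for m1 in MOVES)}

def pre2(region: Set[str]) -> Set[str]:
    """Some player-2 move works against all player-1 moves."""
    return {q for q in STATES
            if any(all(DELTA[(q, m1, m2)] in region for m1 in MOVES)
                   for m2 in MOVES)}

p1_step = pre1({"a"})
p2_step = pre2({"b"})
```

At `s` neither player has a pure move that guarantees its target, so `s` belongs to neither region. This is the kind of situation in which pure strategies are not determined and randomized moves become necessary.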

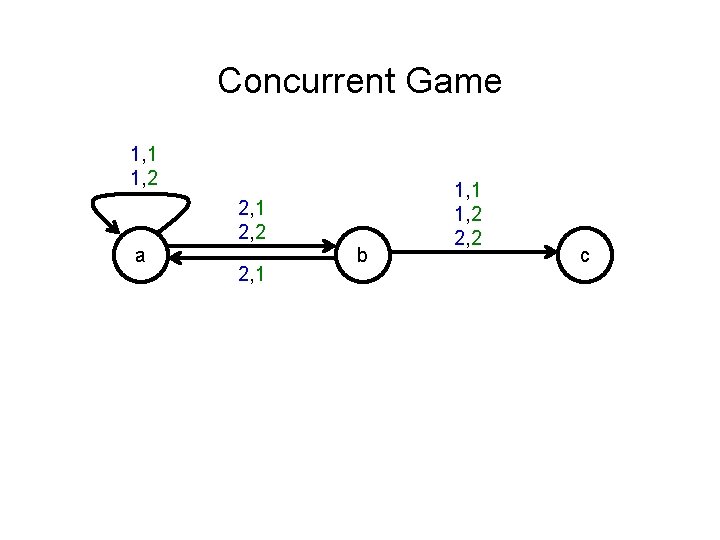

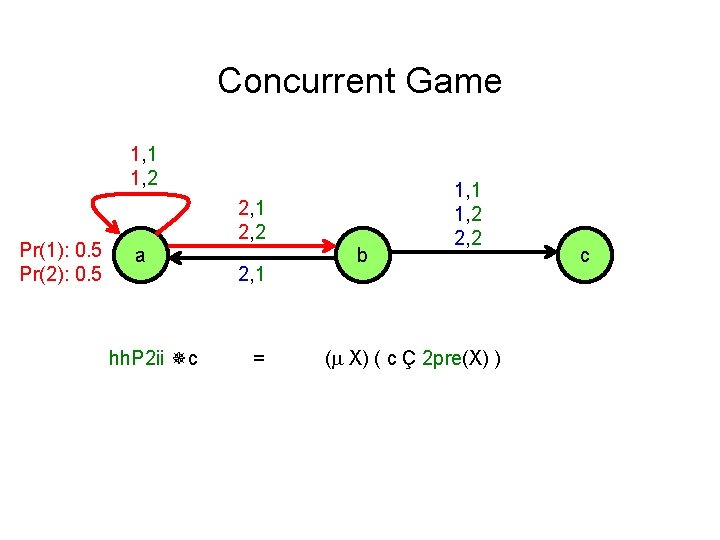

Concurrent Game (example with states a, b, c; edges labeled by move pairs 1,1; 1,2; 2,1; 2,2)

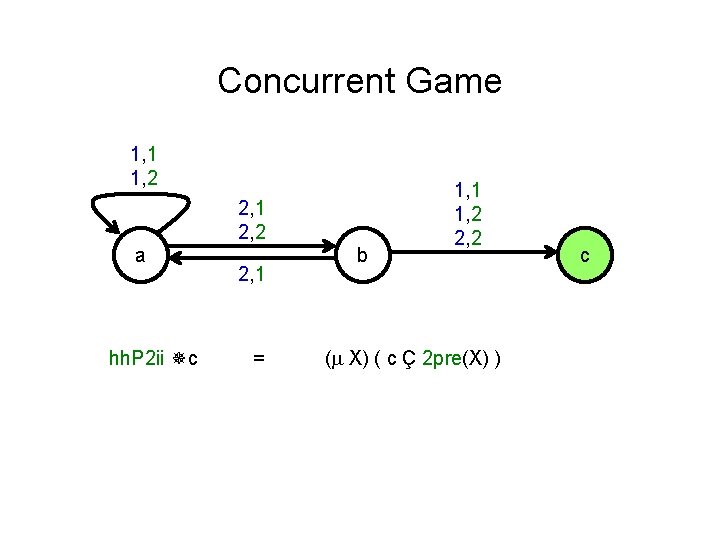

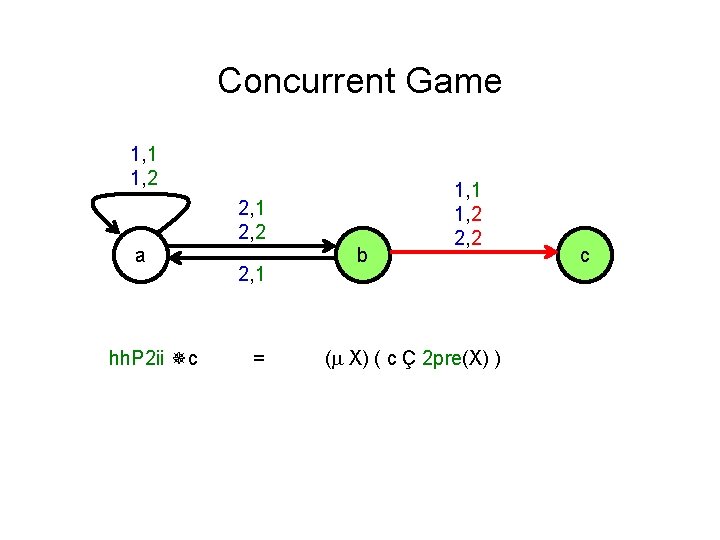

Concurrent Game (example with states a, b, c; edges labeled by move pairs 1,1; 1,2; 2,1; 2,2): $\langle\langle P_2\rangle\rangle\Diamond c = (\mu X)\,(c \vee 2\mathrm{pre}(X))$. Randomized move: Pr(1): 0.5, Pr(2): 0.5.


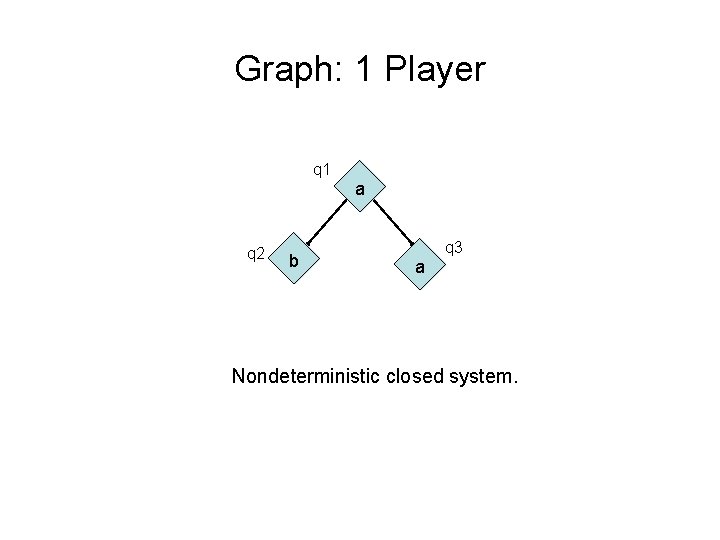

Graph: 1 Player. Nondeterministic closed system. (Figure: states q1, q2, q3 labeled a, b, a.)

MDP: 1.5 Players. Probabilistic closed system. (Figure: states q1 to q5 labeled a, b, a, c, a; a probabilistic branch leads to q4 with probability 0.4 and to q5 with probability 0.6.)

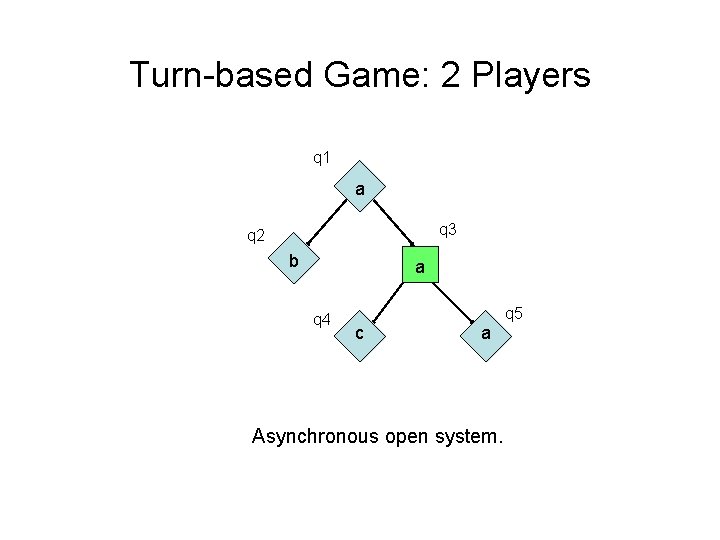

Turn-based Game: 2 Players. Asynchronous open system. (Figure: states q1 to q5 labeled a, b, a, c, a.)

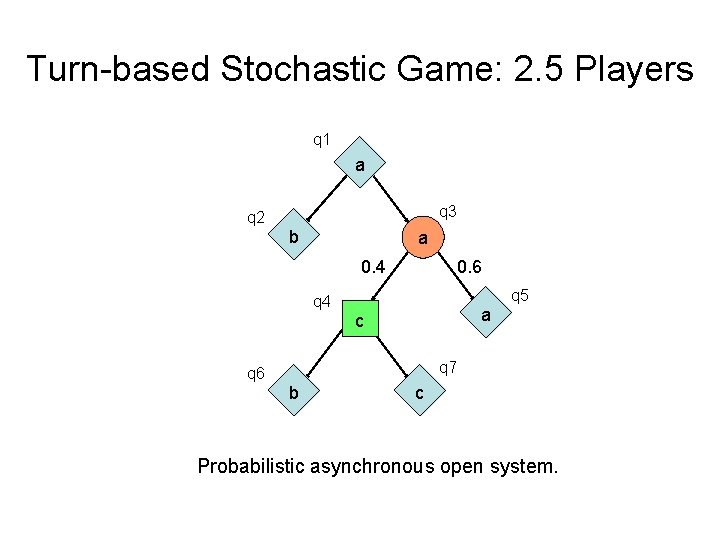

Turn-based Stochastic Game: 2.5 Players. Probabilistic asynchronous open system. (Figure: states q1 to q7 with labels a, b, c and a probabilistic branch with probabilities 0.4 and 0.6.)

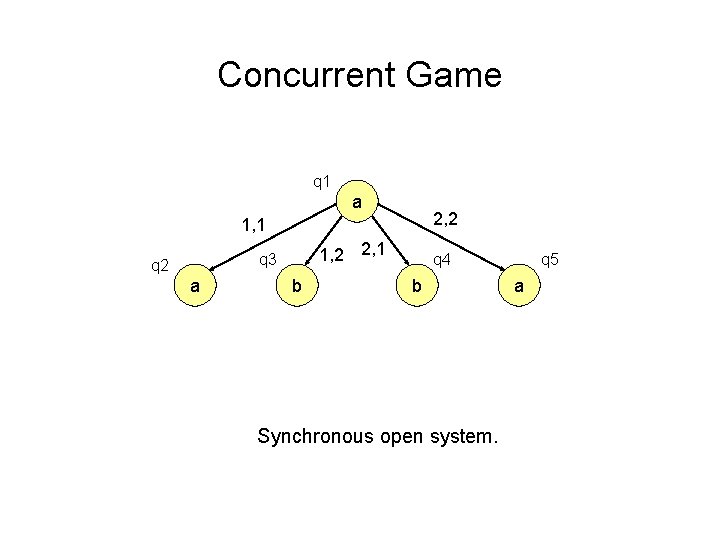

Concurrent Game. Synchronous open system. (Figure: states q1 to q5 with labels a, b; edges from q1 labeled by move pairs 1,1; 1,2; 2,1; 2,2.)

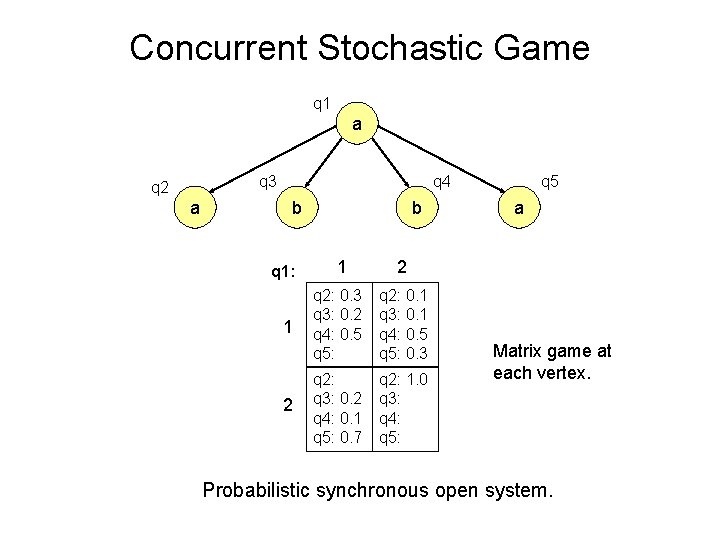

Concurrent Stochastic Game. Matrix game at each vertex. Probabilistic synchronous open system. (Figure: states q1 to q5 with labels a, b.) At q1, the four move pairs lead to the distributions {q2: 0.3, q3: 0.2, q4: 0.5}, {q2: 0.1, q3: 0.1, q4: 0.5, q5: 0.3}, {q3: 0.2, q4: 0.1, q5: 0.7}, and {q2: 1.0}.

Graph: nondeterministic generator of behaviors (possibly stochastic). Strategy: deterministic selector of behaviors (possibly randomized). Graph + Strategies for both players → Behavior

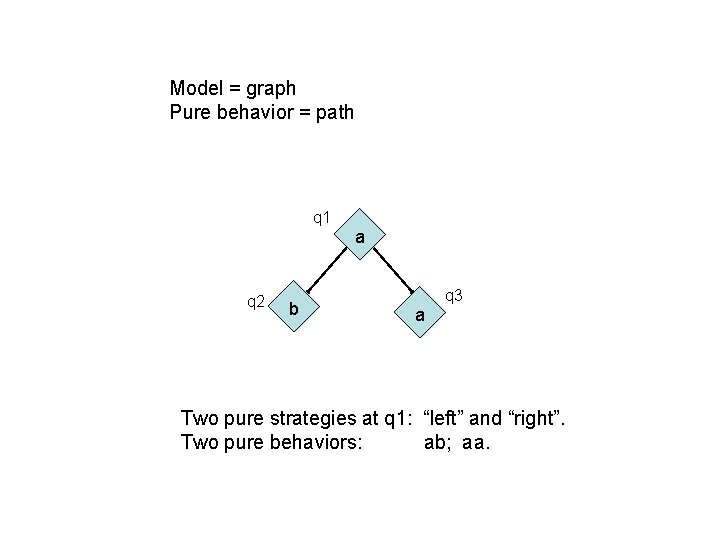

Model = graph. Pure behavior = path. (Figure: states q1, q2, q3 labeled a, b, a.) Two pure strategies at q1: “left” and “right”. Two pure behaviors: ab; aa.

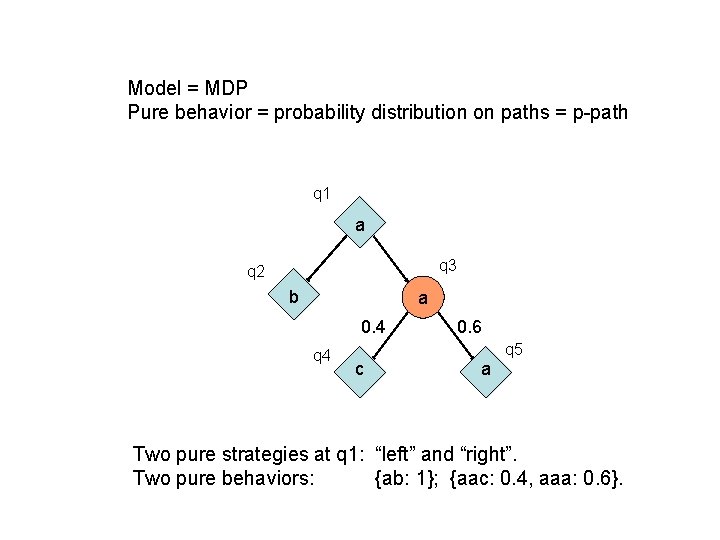

Model = MDP. Pure behavior = probability distribution on paths = p-path. (Figure: states q1 to q5 labeled a, b, a, c, a; probabilities 0.4 to q4 and 0.6 to q5.) Two pure strategies at q1: “left” and “right”. Two pure behaviors: {ab: 1}; {aac: 0.4, aaa: 0.6}.

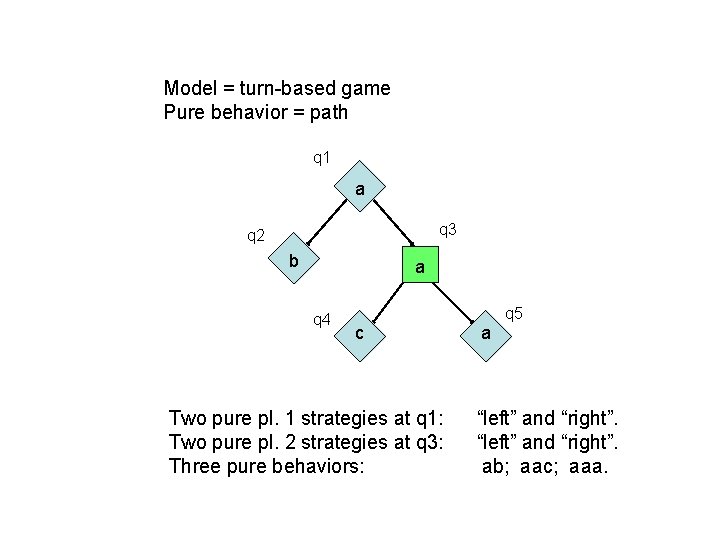

Model = turn-based game. Pure behavior = path. (Figure: states q1 to q5 labeled a, b, a, c, a.) Two pure pl. 1 strategies at q1: “left” and “right”. Two pure pl. 2 strategies at q3: “left” and “right”. Three pure behaviors: ab; aac; aaa.

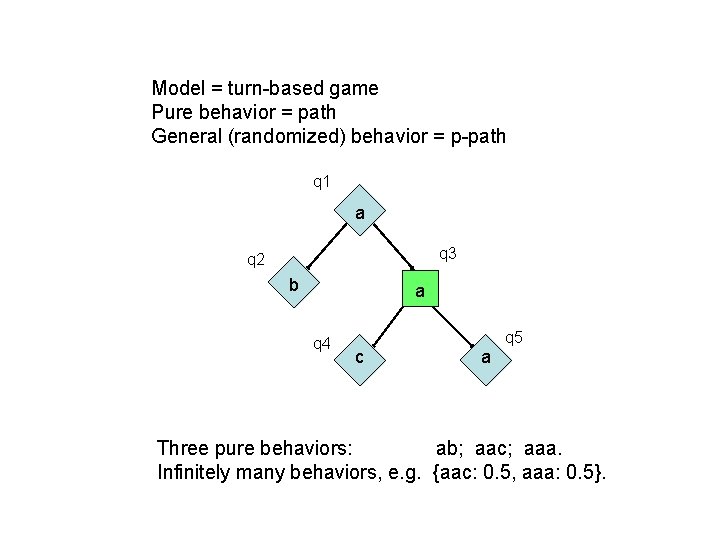

Model = turn-based game. Pure behavior = path. General (randomized) behavior = p-path. Three pure behaviors: ab; aac; aaa. Infinitely many behaviors, e.g. {aac: 0.5, aaa: 0.5}.

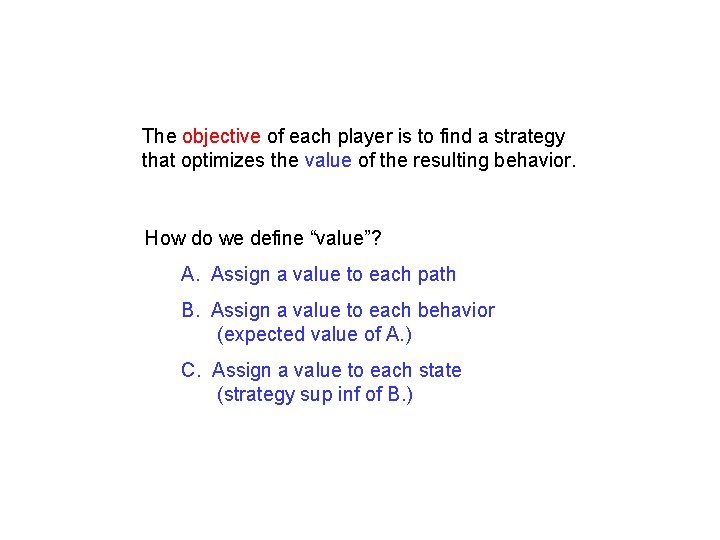

The objective of each player is to find a strategy that optimizes the value of the resulting behavior. How do we define “value”? A. Assign a value to each path B. Assign a value to each behavior (expected value of A. ) C. Assign a value to each state (strategy sup inf of B. )

A. Value of Paths. Qualitative value function: $\varphi: Q^\omega \to \{0, 1\}$, e.g. $\omega$-regular subsets of $Q^\omega$.

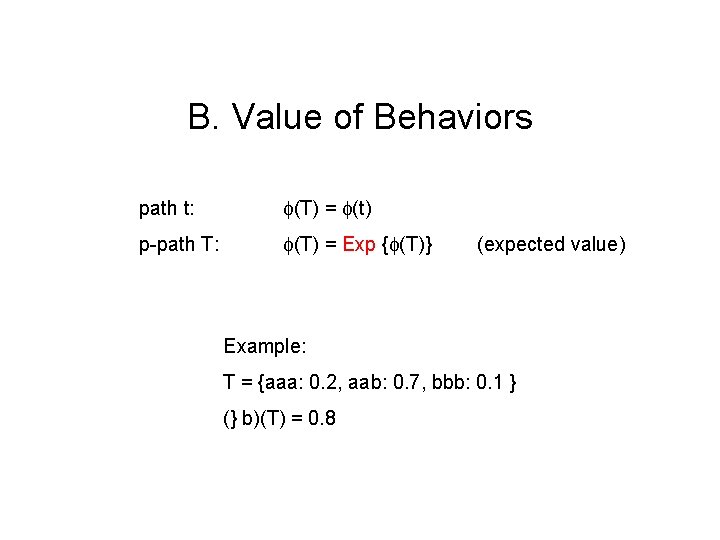

B. Value of Behaviors. For a path $t$, the value is $\varphi(t)$. For a p-path $T$: $\varphi(T) = \mathrm{Exp}\{\varphi(t)\}$ (expected value over the paths $t$ of $T$). Example: $T$ = {aaa: 0.2, aab: 0.7, bbb: 0.1}; $(\Diamond b)(T) = 0.8$.
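The example value can be checked with a few lines of Python. This is only a sketch: paths are represented as short strings of state labels (finite stand-ins for infinite paths), and the names `eventually` and `value` are illustrative.

```python
from typing import Callable, Dict

def eventually(label: str) -> Callable[[str], float]:
    """Qualitative path value for 'eventually label': 1 if the label occurs."""
    return lambda path: 1.0 if label in path else 0.0

def value(p_path: Dict[str, float], phi: Callable[[str], float]) -> float:
    """Expected value of phi over a probability distribution on paths."""
    return sum(prob * phi(path) for path, prob in p_path.items())

T = {"aaa": 0.2, "aab": 0.7, "bbb": 0.1}
v = value(T, eventually("b"))   # paths aab and bbb contain b: 0.7 + 0.1
```

Only the paths aab and bbb visit b, so the expectation is 0.7 + 0.1 = 0.8, matching the slide.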

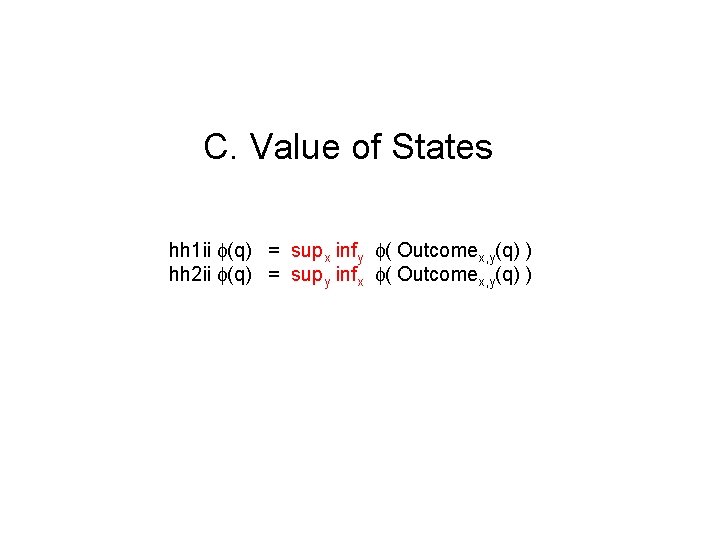

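C. Value of States. $\langle\langle 1\rangle\rangle\varphi(q) = \sup_x \inf_y \varphi(\mathrm{Outcome}_{x,y}(q))$ and $\langle\langle 2\rangle\rangle\varphi(q) = \sup_y \inf_x \varphi(\mathrm{Outcome}_{x,y}(q))$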

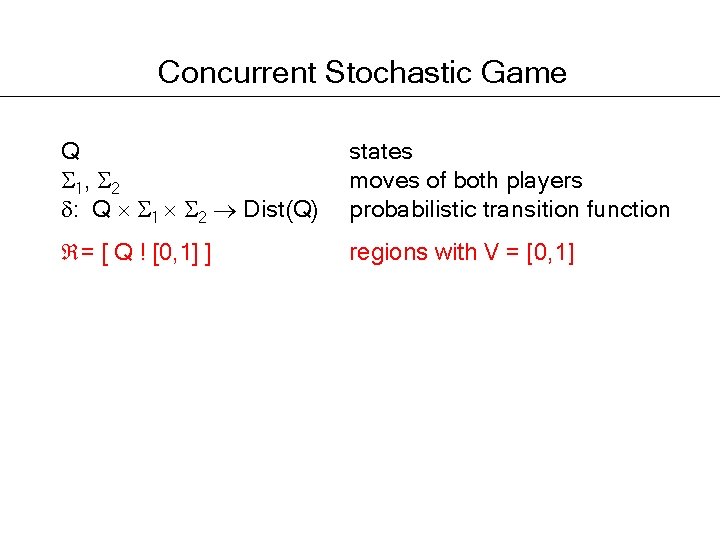

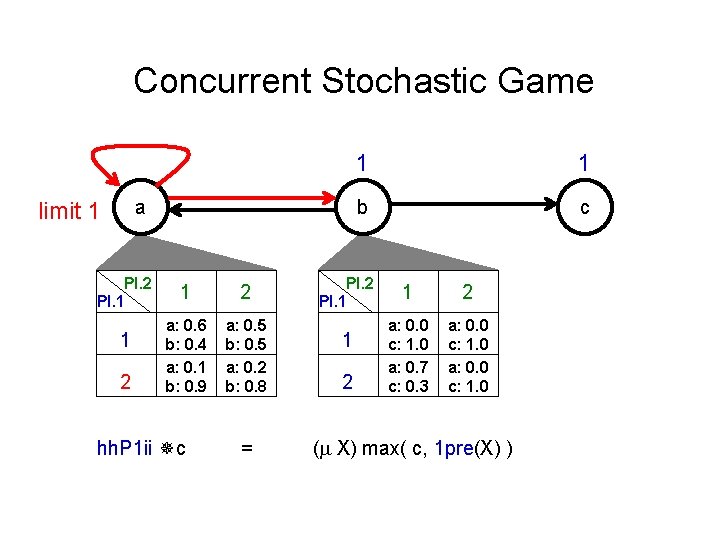


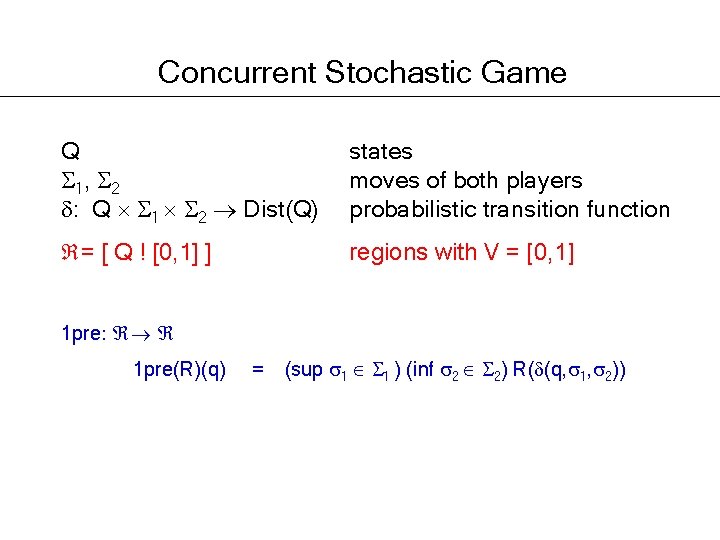


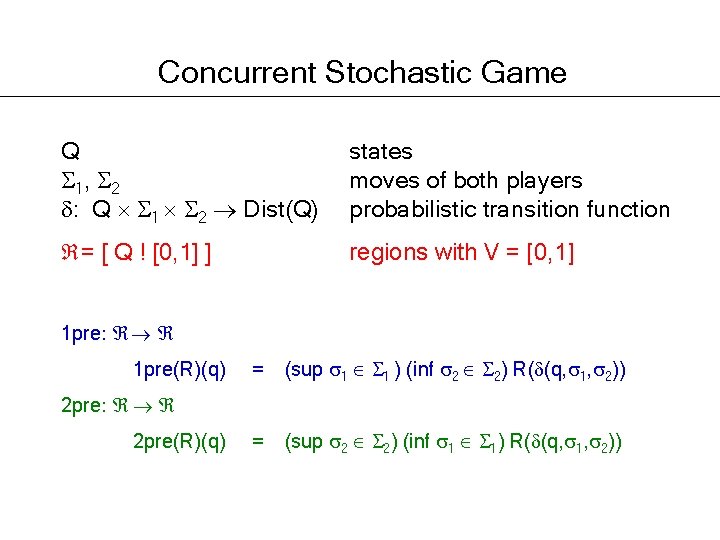

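Concurrent Stochastic Game: $Q$ states; $\Gamma_1, \Gamma_2$ moves of both players; $\delta: Q \times \Gamma_1 \times \Gamma_2 \to \mathrm{Dist}(Q)$ probabilistic transition function. $\mathcal{R} = [\,Q \to [0, 1]\,]$ regions with $V = [0, 1]$.

$1\mathrm{pre}(R)(q) = (\sup \gamma_1 \in \Gamma_1)(\inf \gamma_2 \in \Gamma_2)\ R(\delta(q, \gamma_1, \gamma_2))$

$2\mathrm{pre}(R)(q) = (\sup \gamma_2 \in \Gamma_2)(\inf \gamma_1 \in \Gamma_1)\ R(\delta(q, \gamma_1, \gamma_2))$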

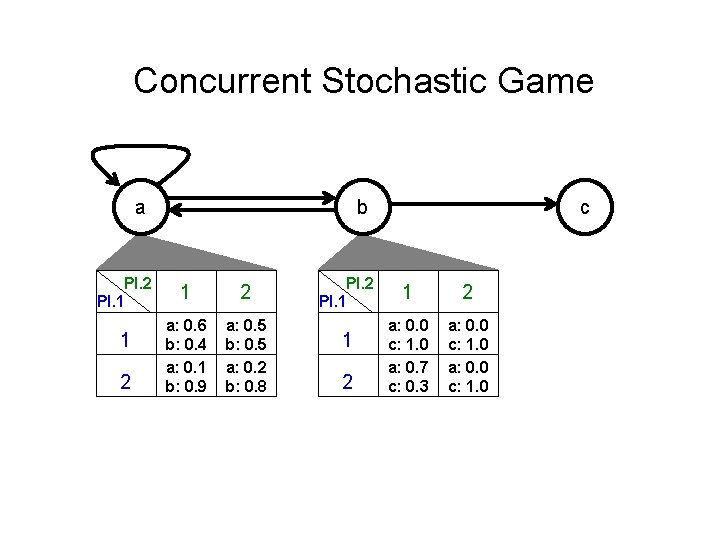

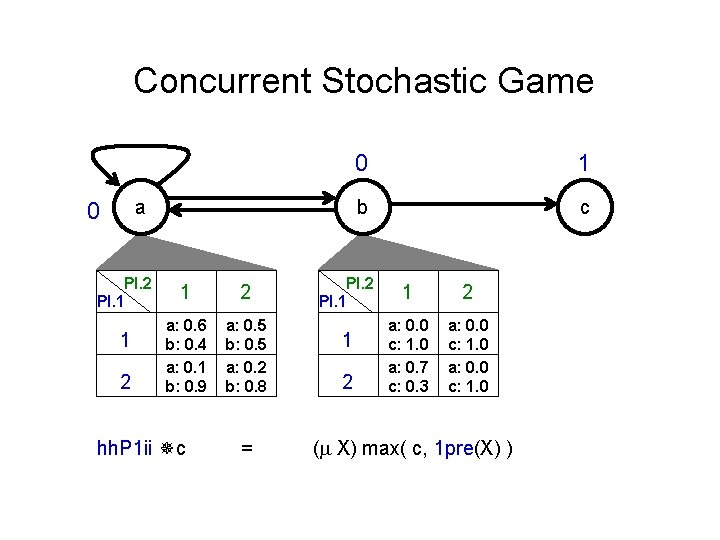

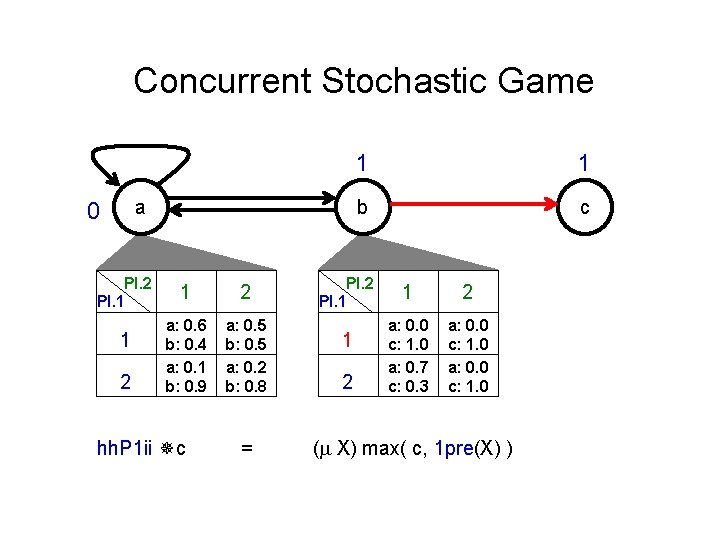

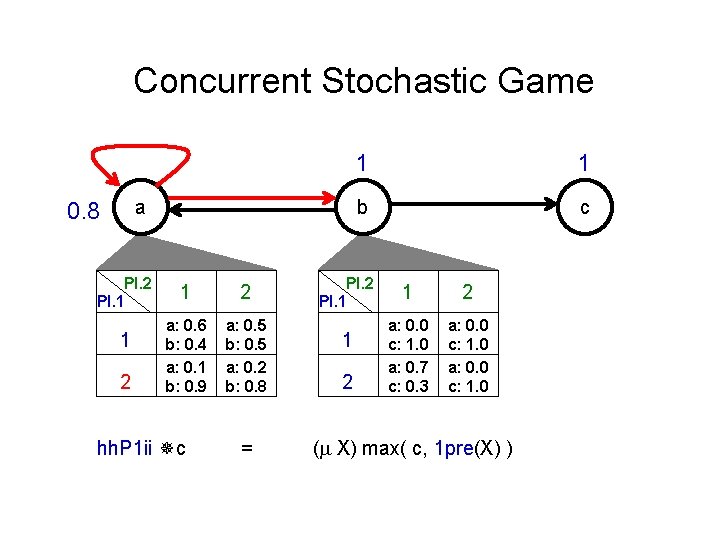

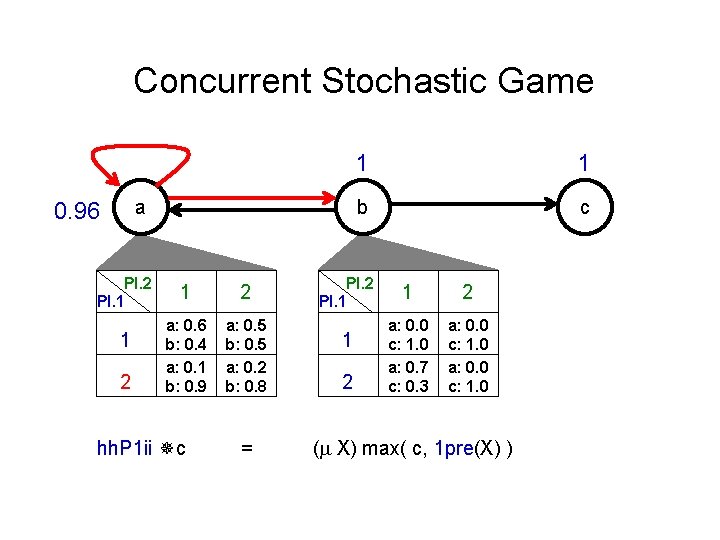

Concurrent Stochastic Game (example with states a, b, c; at each state both players choose move 1 or 2 simultaneously).

At a, the four move pairs lead to the distributions {a: 0.6, b: 0.4}, {a: 0.1, b: 0.9}, {a: 0.5, b: 0.5}, {a: 0.2, b: 0.8}. At b, the listed entries are {a: 0.0, c: 1.0}, {a: 0.7, c: 0.3}, {a: 0.0, c: 1.0}.

$\langle\langle P_1\rangle\rangle\Diamond c = (\mu X)\max(c, 1\mathrm{pre}(X))$

Value iteration: c has value 1 throughout; b goes from 0 to 1 after one step; a takes the values 0, 0, 0.8, 0.96, ... with limit 1.
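The iterates 0, 0, 0.8, 0.96 at state a can be reproduced with a small sketch, under several assumptions that are not confirmed by the slide text: the assignment of distributions to matrix cells (rows are player-1 moves, columns are player-2 moves) is a guess chosen to match the listed values, the fourth entry at b is a hypothetical placeholder, and 1pre is computed as the value of a 2x2 matrix game (pure saddle point if one exists, otherwise the standard closed form for mixed strategies).

```python
from typing import Dict, List

Dist = Dict[str, float]

def matrix_game_value(m: List[List[float]]) -> float:
    """Value of a 2x2 zero-sum matrix game; the row player maximizes."""
    (a, b), (c, d) = m
    maximin = max(min(a, b), min(c, d))
    minimax = min(max(a, c), max(b, d))
    if maximin == minimax:                  # pure saddle point
        return maximin
    return (a * d - b * c) / (a + d - b - c)  # mixed-strategy value

# DELTA[q][i][j]: distribution for player-1 move i+1 and player-2 move j+1.
# ASSUMED cell layout; the last entry at "b" is a hypothetical placeholder.
DELTA: Dict[str, List[List[Dist]]] = {
    "a": [[{"a": 0.6, "b": 0.4}, {"a": 0.5, "b": 0.5}],
          [{"a": 0.1, "b": 0.9}, {"a": 0.2, "b": 0.8}]],
    "b": [[{"a": 0.0, "c": 1.0}, {"a": 0.0, "c": 1.0}],
          [{"a": 0.7, "c": 0.3}, {"a": 0.7, "c": 0.3}]],
    "c": [[{"c": 1.0}, {"c": 1.0}], [{"c": 1.0}, {"c": 1.0}]],
}
TARGET = {"a": 0.0, "b": 0.0, "c": 1.0}     # indicator of the target state c

def pre1(region: Dict[str, float]) -> Dict[str, float]:
    """Quantitative 1pre: matrix-game value of the expected region values."""
    return {
        q: matrix_game_value(
            [[sum(p * region[s] for s, p in cell.items()) for cell in row]
             for row in rows])
        for q, rows in DELTA.items()
    }

def iterate(steps: int) -> Dict[str, float]:
    """Apply X := max(c, 1pre(X)) the given number of times, starting at 0."""
    x = {q: 0.0 for q in DELTA}
    for _ in range(steps):
        p = pre1(x)
        x = {q: max(TARGET[q], p[q]) for q in DELTA}
    return x
```

Under these assumptions the value at a satisfies the update 0.8 + 0.2 times the previous value, so it approaches 1 but never reaches it in finitely many steps, which illustrates why convergence rates of value iteration matter.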

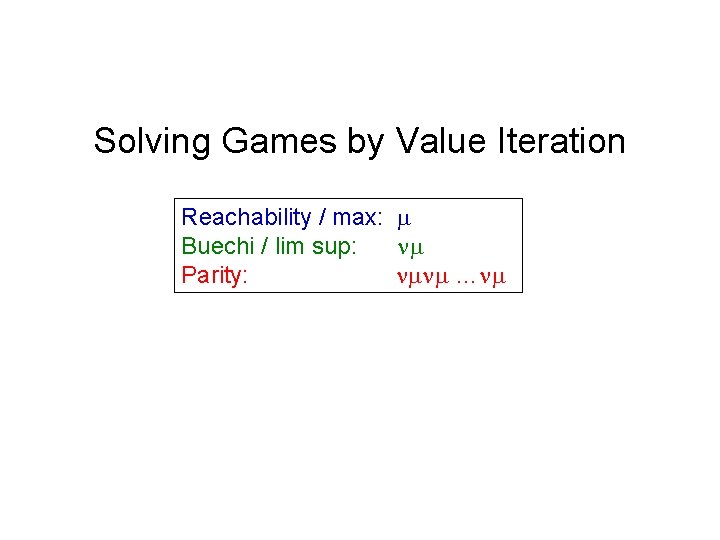

Solving Games by Value Iteration. Reachability / max: … Buechi / lim sup: … Parity: …

Many open questions: How do different evaluation orders compare? How fast do these algorithms converge? When are they optimal?

Summary: Classification of Games
1. Number of players: 1, 1.5, 2, 2.5
2. Alternation: turn-based or concurrent
3. Strategies: pure or randomized
4. Value of a path: qualitative (boolean) or quantitative (real)
5. Objective: Borel 1, 2, 3
6. Zero-sum vs. nonzero-sum

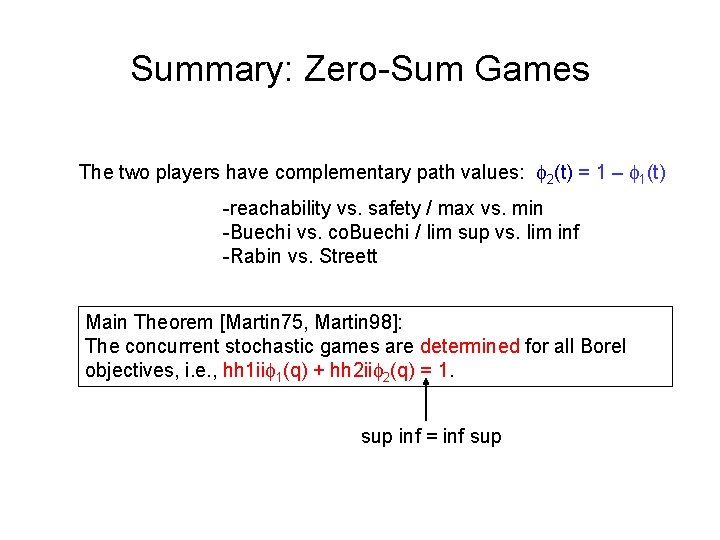

Summary: Zero-Sum Games. The two players have complementary path values: $\varphi_2(t) = 1 - \varphi_1(t)$

- reachability vs. safety / max vs. min
- Buechi vs. co-Buechi / lim sup vs. lim inf
- Rabin vs. Streett

Main Theorem [Martin 75, Martin 98]: The concurrent stochastic games are determined for all Borel objectives, i.e., $\langle\langle 1\rangle\rangle\varphi_1(q) + \langle\langle 2\rangle\rangle\varphi_2(q) = 1$ (sup inf = inf sup).

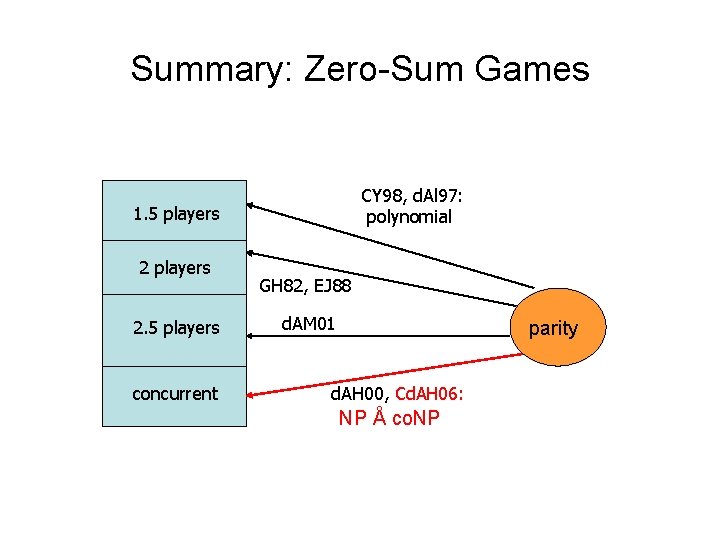

Summary: Zero-Sum Games. CY98, dAl97: polynomial. 1.5 players; 2.5 players; concurrent. GH82, EJ88; dAM01. Parity: dAH00, CdAH06: NP $\cap$ coNP.

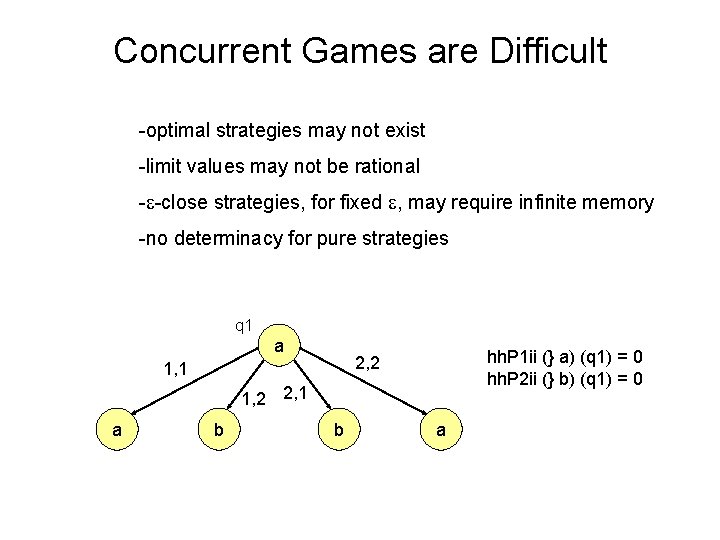

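Concurrent Games are Difficult

- optimal strategies may not exist
- limit values may not be rational
- $\varepsilon$-close strategies, for fixed $\varepsilon$, may require infinite memory
- no determinacy for pure strategies

Example (state q1 with move pairs 1,1; 1,2; 2,1; 2,2 leading to states labeled a and b): $\langle\langle P_1\rangle\rangle(\Diamond a)(q_1) = 0$ and $\langle\langle P_2\rangle\rangle(\Diamond b)(q_1) = 0$.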


Turn-based Games are More Pleasant

- optimal strategies always exist [McIver/Morgan]
- in the non-stochastic case, pure finite-memory optimal strategies exist for $\omega$-regular objectives [Gurevich/Harrington]
- for parity objectives, pure memoryless optimal strategies exist [Emerson/Jutla: non-stochastic Rabin; Condon: stochastic reachability; Chatterjee/de Alfaro/H: stochastic Rabin], hence NP $\cap$ coNP

Whether they are solvable in P is open for non-stochastic parity games and for stochastic reachability games.

Summary Verification and control are very special (boolean) cases of graph-based optimization problems. They can be generalized to solve questions that involve multiple players, quantitative resources, probabilistic transitions, and continuous state spaces. The theory and practice of this is still wide open …
