
Gaining Insights into Multi-Core Cache Partitioning: Bridging the Gap between Simulation and Real Systems

Jiang Lin¹, Qingda Lu², Xiaoning Ding², Zhao Zhang¹, Xiaodong Zhang², and P. Sadayappan²

¹ Department of ECE, Iowa State University

² Department of CSE, The Ohio State University

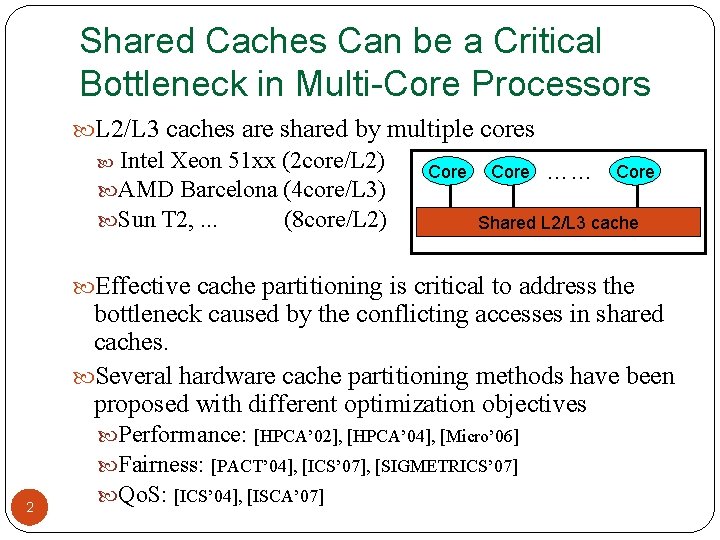

Shared Caches Can Be a Critical Bottleneck in Multi-Core Processors

- L2/L3 caches are shared by multiple cores
  - Intel Xeon 51xx (2 cores/L2)
  - AMD Barcelona (4 cores/L3)
  - Sun T2, ... (8 cores/L2)
- (Diagram: multiple cores on top of a shared L2/L3 cache)
- Effective cache partitioning is critical to address the bottleneck caused by conflicting accesses in shared caches.
- Several hardware cache partitioning methods have been proposed with different optimization objectives
  - Performance: [HPCA’02], [HPCA’04], [Micro’06]
  - Fairness: [PACT’04], [ICS’07], [SIGMETRICS’07]
  - QoS: [ICS’04], [ISCA’07]

Limitations of Simulation-Based Studies

- Excessive simulation time
  - Whole programs cannot be evaluated: it would take several weeks or months to complete a single SPEC CPU2006 benchmark
  - As the number of cores continues to increase, simulation ability becomes even more limited
- Absence of long-term OS activities
  - Interactions between processor and OS affect performance significantly
- Proneness to simulation inaccuracy
  - Bugs in the simulator
  - Impossible to model many dynamics and details of the system

Our Approach to Address the Issues

- Design and implement OS-based cache partitioning
  - Embed the cache partitioning mechanism in the OS by enhancing the page coloring technique
  - Support both static and dynamic cache partitioning
- Evaluate cache partitioning policies on commodity processors
  - Execution- and measurement-based
  - Run applications to completion
  - Measure performance with hardware counters

Four Questions to Answer

- Can we confirm the conclusions made by the simulation-based studies?
- Can we provide new insights and findings that simulation cannot?
- Can we make a case for our OS-based approach as an effective option to evaluate multi-core cache partitioning designs?
- What are the advantages and disadvantages of OS-based cache partitioning?

Outline

- Introduction
- Design and implementation of OS-based cache partitioning mechanisms
- Evaluation environment and workload construction
- Cache partitioning policies and their results
- Conclusion

OS-Based Cache Partitioning Mechanisms

- Static cache partitioning
  - Predetermines the number of cache blocks allocated to each program at the beginning of its execution
  - Page coloring enhancement: divides the shared cache into multiple regions and partitions the cache regions through OS page address mapping
- Dynamic cache partitioning
  - Adjusts cache quotas among processes dynamically
  - Page re-coloring: dynamically changes processes’ cache usage through OS page address re-mapping

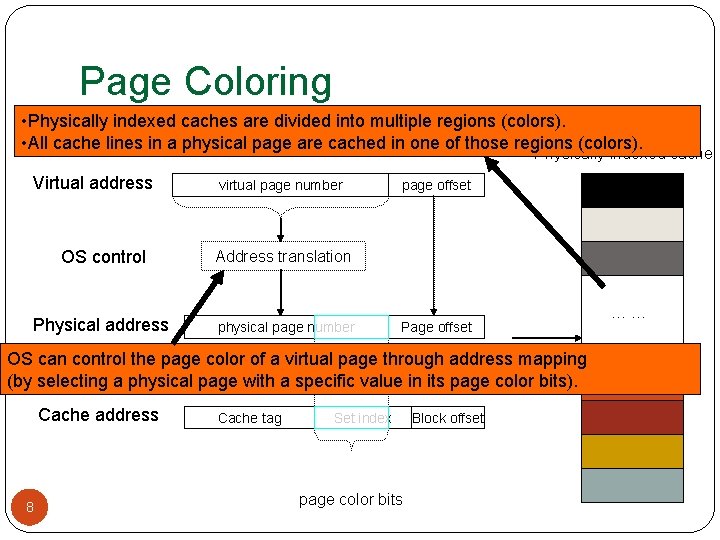

Page Coloring

- Physically indexed caches are divided into multiple regions (colors).
- All cache lines in a physical page are cached in one of those regions (colors).
- The OS can control the page color of a virtual page through address mapping (by selecting a physical page with a specific value in its page color bits).
- (Diagram: a virtual address consists of a virtual page number and a page offset. Address translation, under OS control, produces a physical address consisting of a physical page number and the same page offset. The physically indexed cache splits that address into cache tag, set index, and block offset; the page color bits are the set index bits that fall inside the physical page number.)
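To make the address arithmetic concrete, here is a minimal sketch of how a page color can be derived from a physical address. The function names, the 64-byte line size, and the 4 KB page size are assumptions for illustration and do not come from the slides. Under those assumptions a 4 MB, 16-way cache has 64 hardware colors; the experiments in this deck use 16 colors, and how the colors are grouped is not stated here.

```python
def cache_geometry(cache_bytes, ways, line_bytes, page_bytes):
    """Return (number of sets, number of page colors) for a physically indexed cache."""
    num_sets = cache_bytes // (ways * line_bytes)
    # Size of one way: addresses this far apart map to the same set.
    way_bytes = num_sets * line_bytes
    # Each page-sized slice of a way is one color.
    num_colors = max(1, way_bytes // page_bytes)
    return num_sets, num_colors


def page_color(phys_addr, page_bytes, num_colors):
    """Color of the physical page that contains phys_addr.

    The color is given by the low bits of the physical page number,
    which are the set-index bits above the page offset.
    """
    return (phys_addr // page_bytes) % num_colors


# Assumed example values: 4 MB, 16-way, 64-byte lines, 4 KB pages.
sets, colors = cache_geometry(4 * 1024 * 1024, 16, 64, 4096)
print(sets, colors)
print(page_color(0x12345000, 4096, colors))
```

Because the color depends only on the physical page number, the OS can steer a virtual page into a chosen cache region simply by picking which physical page backs it.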

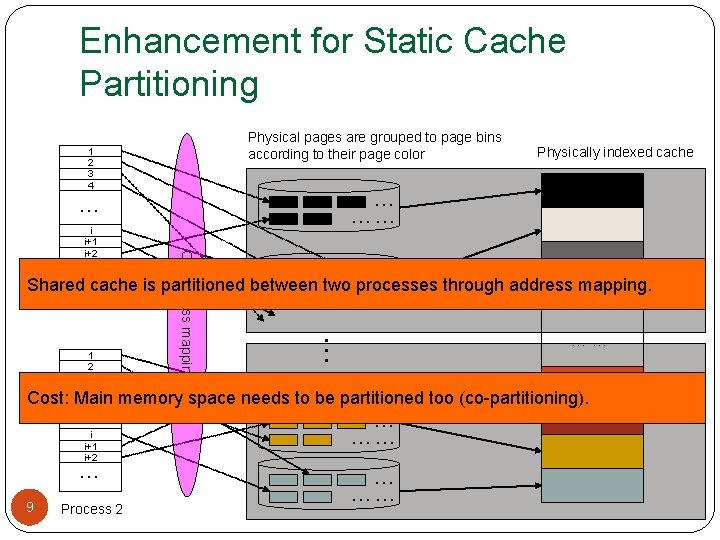

Enhancement for Static Cache Partitioning

- Physical pages are grouped into page bins according to their page color.
- The shared cache is partitioned between two processes through OS address mapping.
- Cost: main memory space needs to be partitioned too (co-partitioning).
- (Diagram: page bins 1, 2, 3, 4, ..., i, i+1, i+2, ... are mapped by the OS onto regions of the physically indexed cache, with separate groups of bins for Process 1 and Process 2.)
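The page-bin idea can be sketched as a toy allocator. This is an illustration under stated assumptions, not the authors' kernel code: `ColoredAllocator`, its method names, and the round-robin choice among a process's colors are all hypothetical.

```python
from collections import defaultdict


class ColoredAllocator:
    """Toy physical page allocator that keeps one free-page bin per color."""

    def __init__(self, num_pages, num_colors):
        self.num_colors = num_colors
        self.bins = defaultdict(list)
        for pfn in range(num_pages):
            # Assumption: the color is the low bits of the page frame number.
            self.bins[pfn % num_colors].append(pfn)
        self.colors_of = {}   # process id -> list of colors it may use
        self.next_idx = {}    # process id -> round-robin cursor

    def assign(self, pid, colors):
        """Statically give a process a set of colors (its cache partition)."""
        self.colors_of[pid] = list(colors)
        self.next_idx[pid] = 0

    def alloc_page(self, pid):
        """Return a free page frame whose color belongs to the process."""
        colors = self.colors_of[pid]
        for _ in range(len(colors)):
            color = colors[self.next_idx[pid] % len(colors)]
            self.next_idx[pid] += 1
            if self.bins[color]:
                return self.bins[color].pop()
        # Co-partitioning cost: memory in other colors cannot be used.
        raise MemoryError("no free page in the colors assigned to this process")


alloc = ColoredAllocator(num_pages=64, num_colors=16)
alloc.assign("p1", range(0, 4))    # 4 of 16 colors
alloc.assign("p2", range(4, 16))   # remaining 12 colors
print([alloc.alloc_page("p1") % 16 for _ in range(4)])
```

The `MemoryError` path shows the co-partitioning cost: a process can run out of memory in its own colors even while pages of other colors are still free.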

Dynamic Cache Partitioning

- Why?
  - Programs have dynamic behaviors
  - Most proposed schemes are dynamic
- How?
  - Page re-coloring
- How to handle overhead?
  - Measure the overhead with performance counters
  - Remove the overhead from the results (emulating hardware schemes)

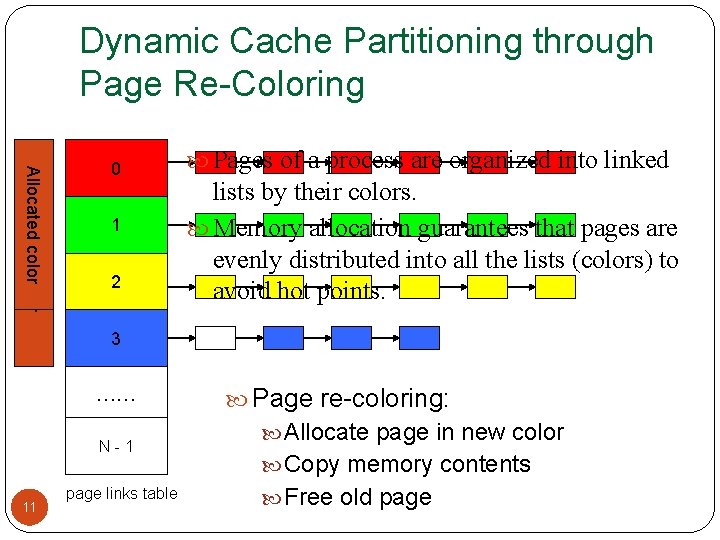

Dynamic Cache Partitioning through Page Re-Coloring

- Pages of a process are organized into linked lists by their colors.
- Memory allocation guarantees that pages are evenly distributed across all the lists (colors) to avoid hot spots.
- Page re-coloring:
  - Allocate a page in the new color
  - Copy the memory contents
  - Free the old page
- (Diagram: a page links table indexed by color 0, 1, 2, 3, ..., N-1, with the allocated colors marked.)
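The three re-coloring steps can be sketched on toy data structures. Everything here is hypothetical scaffolding for illustration: the dictionaries stand in for the page table, physical memory, and per-color free lists, and the color is assumed to be the page frame number modulo the number of colors.

```python
def recolor_page(page_table, memory, free_bins, vpn, new_color, num_colors):
    """Move one virtual page to a physical page of a different color.

    page_table: dict mapping virtual page number -> page frame number
    memory:     dict mapping page frame number -> page contents (bytes)
    free_bins:  dict mapping color -> list of free page frame numbers
    """
    old_pfn = page_table[vpn]
    if old_pfn % num_colors == new_color:
        return old_pfn                            # already in the right color
    new_pfn = free_bins[new_color].pop()          # 1. allocate a page in the new color
    memory[new_pfn] = bytes(memory[old_pfn])      # 2. copy the memory contents
    page_table[vpn] = new_pfn                     #    and remap the virtual page
    del memory[old_pfn]
    free_bins[old_pfn % num_colors].append(old_pfn)   # 3. free the old page
    return new_pfn


# Toy setup with 4 colors; frame 0 (color 0) backs virtual page 0.
free_bins = {c: [c + 4 * k for k in range(1, 3)] for c in range(4)}
page_table = {0: 0}
memory = {0: b"data"}
print(recolor_page(page_table, memory, free_bins, vpn=0, new_color=3, num_colors=4))
```

The copy in step 2 is the source of the migration overhead that the deck goes on to measure and control.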

Control the Page Migration Overhead

- Control the frequency of page migration
  - Frequent enough to capture application phase changes
  - Not so frequent that it introduces large page migration overhead
- Lazy migration: avoid unnecessary page migration
  - Observation: not all pages are accessed between two consecutive migrations.
  - Optimization: do not migrate a page until it is accessed
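A minimal sketch of the lazy migration idea follows. The class and method names are hypothetical. A real kernel would presumably trap the first access to a page, for example through a page fault; this sketch simply calls `access()` explicitly.

```python
class LazyRecolorer:
    """Defer page migration until the page is actually accessed."""

    def __init__(self):
        self.page_color = {}   # virtual page -> color it currently lives in
        self.pending = {}      # virtual page -> target color, not yet migrated
        self.migrations = 0    # number of pages actually copied

    def map_page(self, vpn, color):
        self.page_color[vpn] = color

    def request_recolor(self, vpn, new_color):
        """Record the new target color without copying anything yet."""
        if self.page_color[vpn] == new_color:
            self.pending.pop(vpn, None)    # back to its current color: nothing to do
        else:
            self.pending[vpn] = new_color  # overwrites any older pending target

    def access(self, vpn):
        """On access, perform the deferred migration if one is pending."""
        target = self.pending.pop(vpn, None)
        if target is not None:
            self.page_color[vpn] = target
            self.migrations += 1
        return self.page_color[vpn]


r = LazyRecolorer()
r.map_page(0, color=1)
r.map_page(1, color=1)
r.request_recolor(0, 2)
r.request_recolor(0, 3)   # second request before any access
r.request_recolor(1, 2)   # page 1 is never accessed
print(r.access(0), r.migrations)
```

Page 0 is copied once even though it was re-colored twice, and page 1 is never copied at all, which is the saving the observation on this slide points to.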

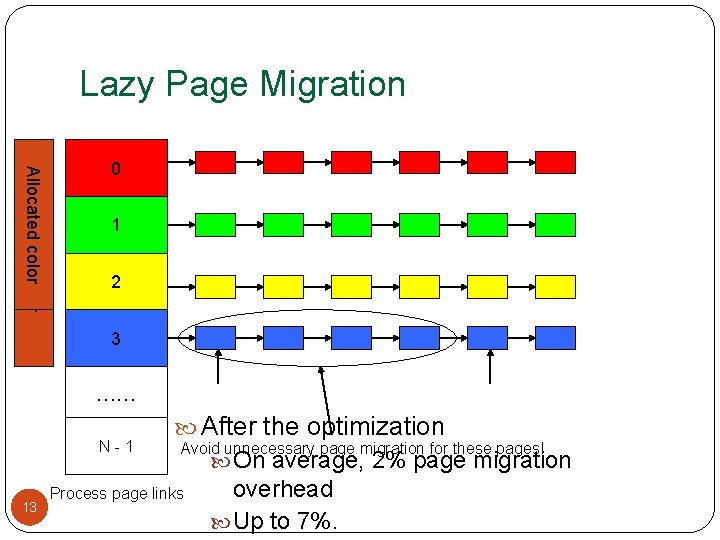

Lazy Page Migration

- After the optimization: avoid unnecessary page migration for the pages marked in the diagram.
- Page migration overhead is 2% on average and up to 7%.
- (Diagram: process page links indexed by color 0, 1, 2, 3, ..., N-1, with the allocated colors marked.)

Outline

- Introduction
- Design and implementation of OS-based cache partitioning mechanisms
- Evaluation environment and workload construction
- Cache partitioning policies and their results
- Conclusion

Experimental Environment

- Dell PowerEdge 1950
  - Two-way SMP, Intel dual-core Xeon 5160
  - Shared 4 MB L2 cache, 16-way
  - 8 GB Fully Buffered DIMM
- Red Hat Enterprise Linux 4.0
  - 2.6.20.3 kernel
  - Performance counter tools from HP (Pfmon)
  - Divide the L2 cache into 16 colors

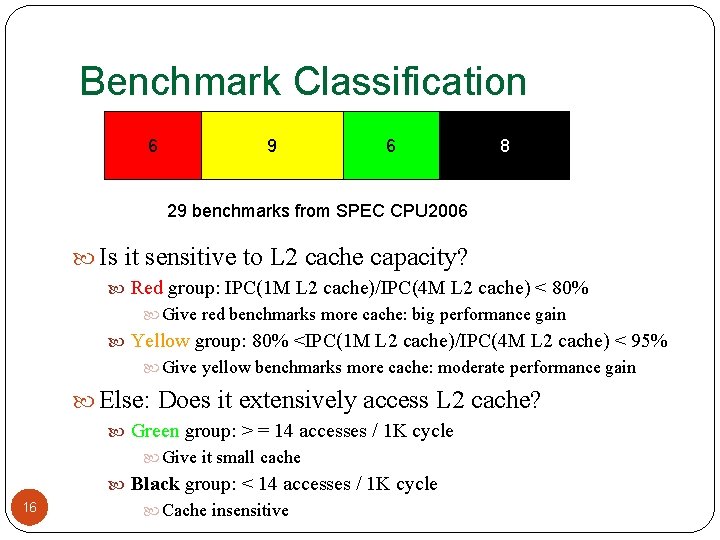

Benchmark Classification

- 29 benchmarks from SPEC CPU2006
- Is it sensitive to L2 cache capacity?
  - Red group: IPC(1 MB L2 cache) / IPC(4 MB L2 cache) < 80%. Giving red benchmarks more cache yields a big performance gain.
  - Yellow group: 80% < IPC(1 MB L2 cache) / IPC(4 MB L2 cache) < 95%. Giving yellow benchmarks more cache yields a moderate performance gain.
- Otherwise: does it extensively access the L2 cache?
  - Green group: >= 14 accesses per 1K cycles. Give it a small cache.
  - Black group: < 14 accesses per 1K cycles. Cache insensitive.
- (Figure: the 29 benchmarks split into groups of 6, 9, 6, and 8.)
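The classification rules above can be written as a small function. The function name and argument names are hypothetical, and the slide does not say which group a ratio of exactly 80% falls into; this sketch assumes it falls into the yellow group.

```python
def classify(ipc_1mb, ipc_4mb, l2_accesses_per_kcycle):
    """Classify a benchmark as red, yellow, green, or black."""
    ratio = ipc_1mb / ipc_4mb
    if ratio < 0.80:
        return "red"       # large gain from more cache
    if ratio < 0.95:
        return "yellow"    # moderate gain (boundary handling is an assumption)
    if l2_accesses_per_kcycle >= 14:
        return "green"     # accesses L2 heavily but is not capacity sensitive
    return "black"         # cache insensitive


# Made-up numbers, only to exercise each branch.
print(classify(0.5, 1.0, 30))
print(classify(0.9, 1.0, 30))
print(classify(0.99, 1.0, 20))
print(classify(0.99, 1.0, 5))
```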

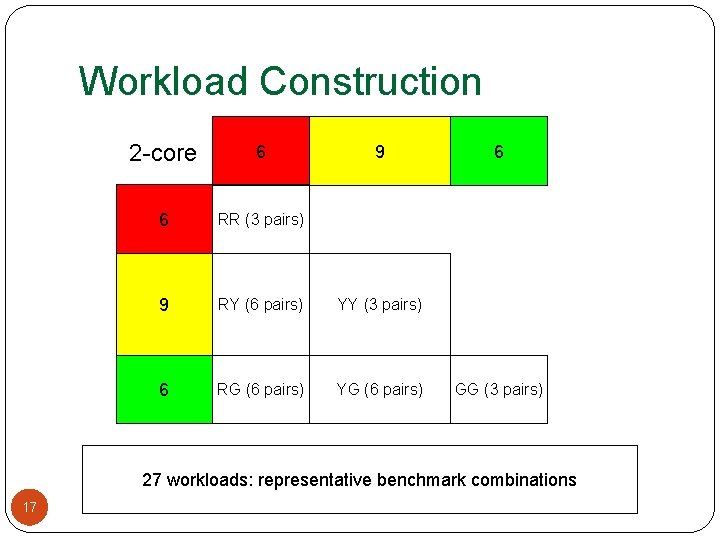

Workload Construction

- 2-core workloads, built as pairs of benchmarks from the red (R), yellow (Y), and green (G) groups
  - RR (3 pairs)
  - RY (6 pairs)
  - YY (3 pairs)
  - RG (6 pairs)
  - YG (6 pairs)
  - GG (3 pairs)
- 27 workloads: representative benchmark combinations

Outline

- Introduction
- OS-based cache partitioning mechanism
- Evaluation environment and workload construction
- Cache partitioning policies and their results
  - Performance
  - Fairness
- Conclusion

![Performance – Metrics](http://slidetodoc.com/presentation_image_h2/e5c5b5c905d035be6247d17809ee7629/image-19.jpg)

Performance – Metrics

- Divide metrics into evaluation metrics and policy metrics [PACT’06]
- Evaluation metrics: optimization objectives, not always available at run time
- Policy metrics: used to drive dynamic partitioning policies, available at run time
  - Sum of IPC, combined cache miss rate, combined cache misses

Static Partitioning

- Total number of cache colors: 16
- Give at least two colors to each program
  - Make sure that each program gets 1 GB of memory to avoid swapping (because of co-partitioning)
- Try all possible partitionings for all workloads
  - (2:14), (3:13), (4:12), ..., (8:8), ..., (13:3), (14:2)
  - Get the value of the evaluation metrics
  - Compare the performance of all partitionings with the performance of the shared cache
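The exhaustive search over static partitionings can be sketched as follows. The function names are hypothetical, and `measure` is a placeholder for running a workload to completion under a given partitioning and returning an evaluation metric where higher is better.

```python
def static_partitionings(total_colors=16, min_colors=2):
    """All (colors for program 0, colors for program 1) splits of the cache."""
    return [(a, total_colors - a)
            for a in range(min_colors, total_colors - min_colors + 1)]


def best_partitioning(measure, total_colors=16, min_colors=2):
    """Pick the split with the highest value of a higher-is-better metric."""
    return max(static_partitionings(total_colors, min_colors), key=measure)


parts = static_partitionings()
print(len(parts), parts[0], parts[-1])

# Made-up metric that peaks when program 0 gets 11 colors.
print(best_partitioning(lambda p: -abs(p[0] - 11)))
```

With 16 colors and a minimum of two per program, there are 13 partitionings to run for each workload.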

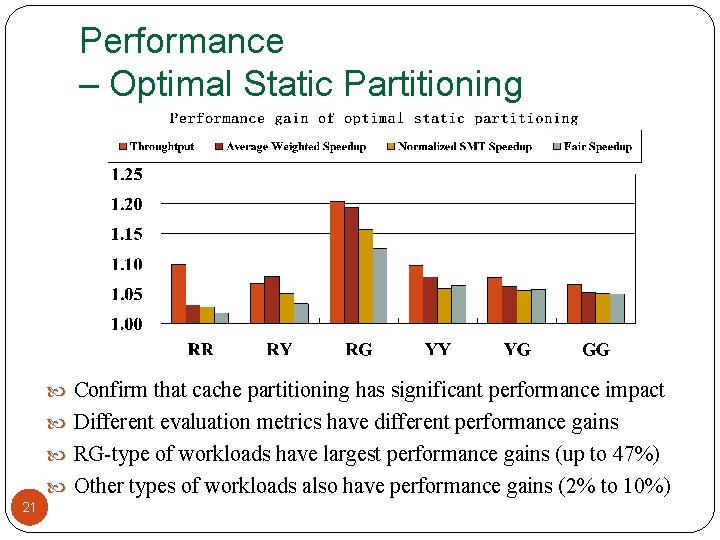

Performance – Optimal Static Partitioning

- Confirms that cache partitioning has a significant performance impact
- Different evaluation metrics have different performance gains
- RG-type workloads have the largest performance gains (up to 47%)
- Other types of workloads also have performance gains (2% to 10%)

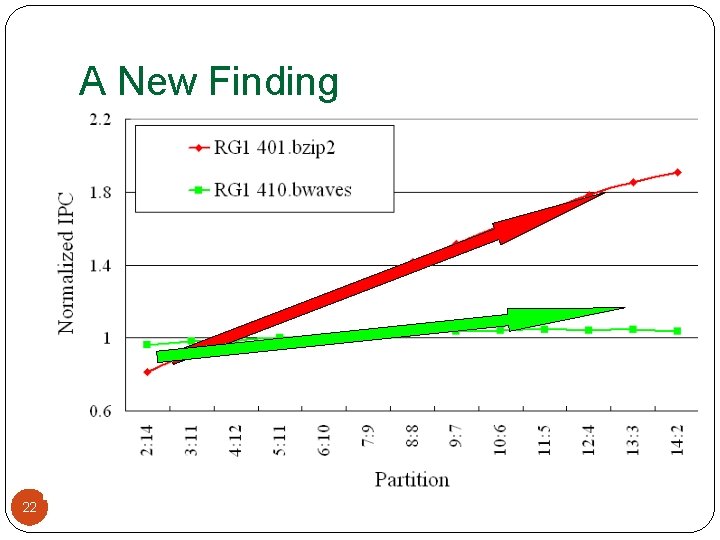

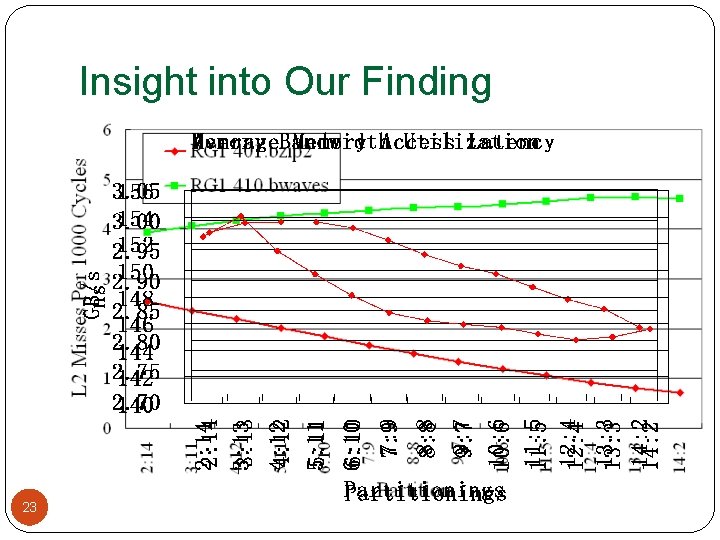

A New Finding

- Workload RG1: 401.bzip2 (Red) + 410.bwaves (Green)
- Intuitively, giving more cache space to 401.bzip2 (Red)
  - Increases the performance of 401.bzip2 (Red) substantially
  - Decreases the performance of 410.bwaves (Green) slightly
- However, we observe that

Insight into Our Finding

Insight into Our Finding

- We have the same observation in RG4, RG5, and YG5
- This is not observed by simulation
  - Did not model the main memory sub-system in detail
  - Assumed fixed memory access latency
- Shows the advantages of our execution- and measurement-based study

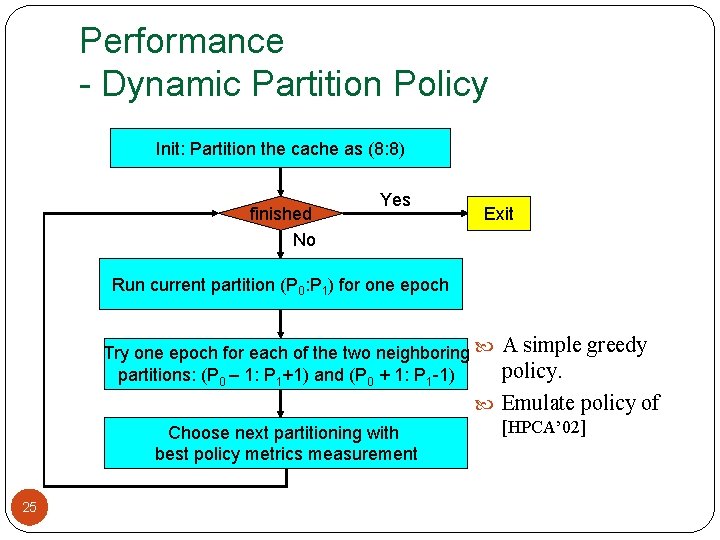

Performance - Dynamic Partition Policy

- A simple greedy policy that emulates the policy of [HPCA’02]
- Init: partition the cache as (8:8)
- Repeat until the workload has finished, then exit:
  - Run the current partition (P0:P1) for one epoch
  - Try one epoch for each of the two neighboring partitions: (P0 - 1 : P1 + 1) and (P0 + 1 : P1 - 1)
  - Choose the next partitioning with the best policy metrics measurement
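The greedy loop above can be sketched as follows. This is an illustration under assumptions, not the authors' implementation: `measure_epoch` is a hypothetical callback that runs one epoch under a partition and returns a policy metric where lower is better (such as a combined miss rate), the loop runs for a fixed number of rounds instead of until the workload finishes, and the two-color minimum is borrowed from the static setup.

```python
def greedy_dynamic_partition(measure_epoch, rounds, total_colors=16, min_colors=2):
    """Greedy hill climbing over neighboring cache partitions.

    Each round costs up to three epochs: the current partition plus
    its two neighbors. Returns the partition chosen after every round.
    """
    p0 = total_colors // 2                       # init: (8:8) for 16 colors
    history = []
    for _ in range(rounds):
        candidates = [p0]                        # current partition first
        if p0 - 1 >= min_colors:
            candidates.append(p0 - 1)            # (P0 - 1 : P1 + 1)
        if total_colors - (p0 + 1) >= min_colors:
            candidates.append(p0 + 1)            # (P0 + 1 : P1 - 1)
        scores = {c: measure_epoch(c, total_colors - c) for c in candidates}
        # min() keeps the earliest candidate on ties, so the current
        # partition is retained unless a neighbor is strictly better.
        p0 = min(candidates, key=lambda c: scores[c])
        history.append((p0, total_colors - p0))
    return history


# Made-up metric whose minimum is at (11:5).
print(greedy_dynamic_partition(lambda a, b: abs(a - 11), rounds=5))
```

Because the policy moves by at most one color per round, it takes several rounds to reach a distant optimum, which is one reason the epoch length and migration frequency matter.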

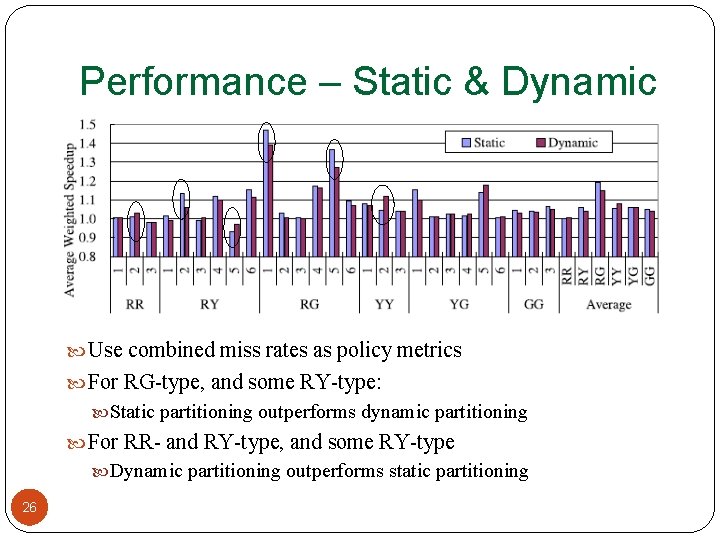

Performance – Static & Dynamic

- Use combined miss rates as policy metrics
- For RG-type, and some RY-type:
  - Static partitioning outperforms dynamic partitioning
- For RR- and RY-type, and some RY-type:
  - Dynamic partitioning outperforms static partitioning

![Fairness – Metrics and Policy](http://slidetodoc.com/presentation_image_h2/e5c5b5c905d035be6247d17809ee7629/image-27.jpg)

Fairness – Metrics and Policy [PACT’04]

- Metrics
  - Evaluation metrics: FM0, the difference in slowdown; smaller is better
  - Policy metrics
- Policy
  - Repartitioning and rollback
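As a rough illustration of FM0, the sketch below computes the difference in slowdown between two co-running programs. The slide only says that FM0 is the difference in slowdown; the baseline used here (execution time when running alone) and the function names are assumptions.

```python
def slowdown(time_shared, time_alone):
    """Slowdown of a program when co-running, relative to running alone (assumed baseline)."""
    return time_shared / time_alone


def fm0(shared_a, alone_a, shared_b, alone_b):
    """Difference in slowdown between two co-running programs; smaller is fairer."""
    return abs(slowdown(shared_a, alone_a) - slowdown(shared_b, alone_b))


# Made-up timings: program A slows down 1.2x, program B slows down 1.5x.
print(fm0(12.0, 10.0, 15.0, 10.0))
```

A value of 0 means both programs are slowed down by the same factor.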

Fairness - Result

- Dynamic partitioning can achieve better fairness if we use FM0 as both evaluation metrics and policy metrics
- None of the policy metrics (FM1 to FM5) is good enough to drive the partitioning policy to fairness comparable with static partitioning
  - Strong correlation was reported in the simulation-based study [PACT’04]
  - None of the policy metrics has a consistently strong correlation with FM0
- Setup of this study vs. the simulation-based study:
  - Benchmarks: SPEC CPU2006 (ref input) vs. SPEC CPU2000 (test input)
  - Instructions executed: run to completion, trillions of instructions vs. less than one billion instructions
  - L2 cache: 4 MB vs. 512 KB

Conclusion

- Confirmed some conclusions made by simulations
- Provided new insights and findings
  - Moving cache space from one program to another can increase the performance of both
  - Poor correlation between evaluation and policy metrics for fairness
- Made a case for our OS-based approach as an effective option for evaluating multi-core cache partitioning
- Advantages of OS-based cache partitioning
  - Works on commodity processors for an execution- and measurement-based study
- Disadvantages of OS-based cache partitioning
  - Co-partitioning (may underutilize memory), migration overhead

Ongoing Work

- Reduce migration overhead on commodity processors
- Cache partitioning at the compiler level
  - Partition the cache at object level
- Hybrid cache partitioning method
  - Remove the cost of co-partitioning
  - Avoid page migration overhead

Thanks!

Backup Slides

![Fairness - Correlation between Evaluation Metrics and Policy Metrics (Reported by [PACT’04])](http://slidetodoc.com/presentation_image_h2/e5c5b5c905d035be6247d17809ee7629/image-33.jpg)

Fairness - Correlation between Evaluation Metrics and Policy Metrics (Reported by [PACT’04])

- Strong correlation was reported in the simulation study [PACT’04]

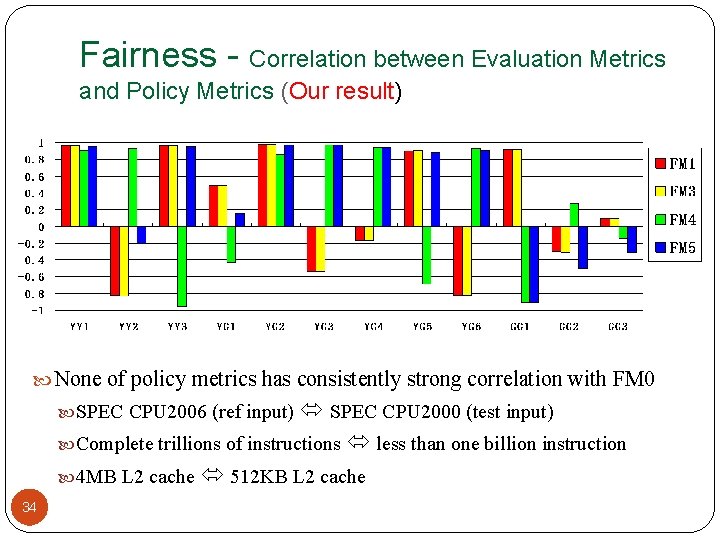

Fairness - Correlation between Evaluation Metrics and Policy Metrics (Our result)

- None of the policy metrics has a consistently strong correlation with FM0
- Setup of this study vs. the simulation-based study:
  - Benchmarks: SPEC CPU2006 (ref input) vs. SPEC CPU2000 (test input)
  - Instructions executed: run to completion, trillions of instructions vs. less than one billion instructions
  - L2 cache: 4 MB vs. 512 KB
