NCLEFDC: Filtering noisy continuous labeled examples

IBERAMIA 2002
José Ramón Quevedo, María Dolores García, Elena Montañés
Artificial Intelligence Centre, Oviedo University (Spain)

Index
1. Introduction
2. The Principle
3. The Algorithm
4. Divide and Conquer
5. Experimentation
6. Conclusions

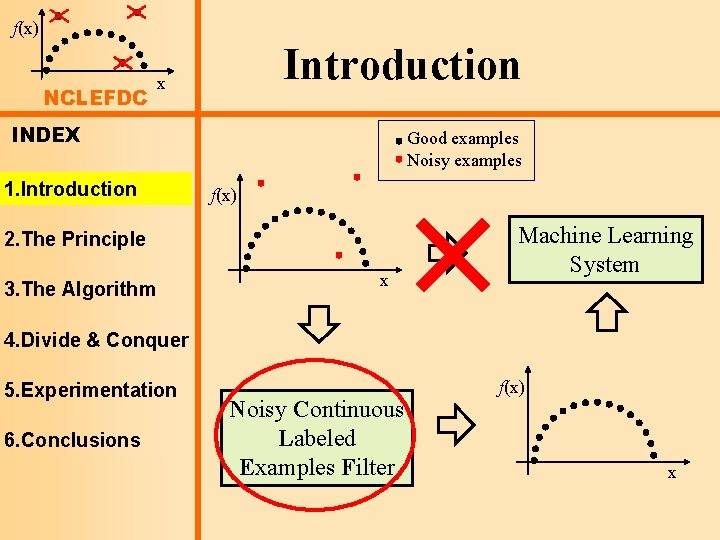

Introduction
[Figure labels: good examples and noisy examples plotted as f(x) against x; Noisy Continuous Labeled Examples Filter; Machine Learning System]

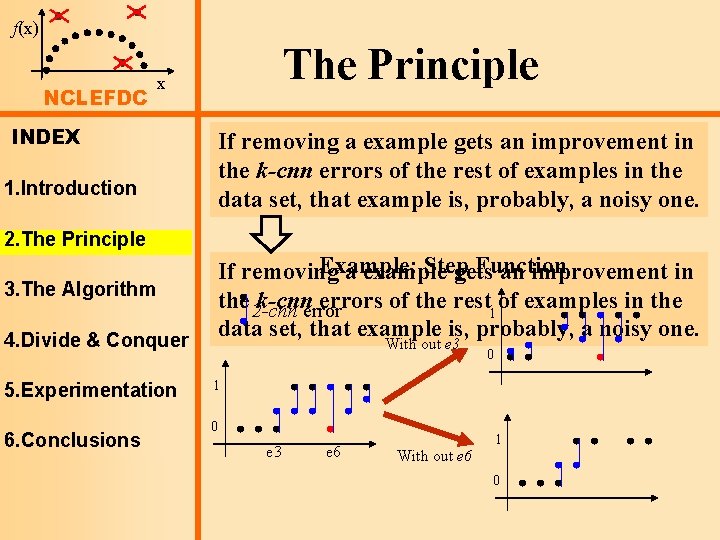

The Principle
• If removing an example improves the k-cnn errors of the rest of the examples in the data set, that example is probably a noisy one.
• The examples whose neighbour is a noisy one would improve their k-cnn errors if the noisy example were removed.
Example: step function with 2-cnn. [Figure: the data set shown without e3 and without e6]
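The principle can be illustrated with a small sketch. It assumes a plain k-nearest-neighbour mean predictor on one attribute as a stand-in for k-cnn, whose exact definition is not given on the slides; the function names and the toy step-function data are hypothetical and do not reproduce the e3/e6 figure.

```python
def knn_predict(xs, ys, query, k, skip):
    """Mean label of the k nearest neighbours of `query`, ignoring indices in `skip`.
    Assumption: a plain k-NN mean stands in for the slides' k-cnn."""
    candidates = [i for i in range(len(xs)) if i not in skip]
    nearest = sorted(candidates, key=lambda i: abs(xs[i] - query))[:k]
    return sum(ys[i] for i in nearest) / len(nearest)


def loo_errors(xs, ys, k, removed=frozenset()):
    """Leave-one-out absolute error of every example that has not been removed."""
    errors = {}
    for i in range(len(xs)):
        if i in removed:
            continue
        pred = knn_predict(xs, ys, xs[i], k, skip=removed | {i})
        errors[i] = abs(pred - ys[i])
    return errors


def improvement_without(xs, ys, k, e):
    """Drop in the summed error of the *other* examples when example e is removed."""
    before = loo_errors(xs, ys, k)
    after = loo_errors(xs, ys, k, removed=frozenset({e}))
    return sum(before[i] for i in after) - sum(after.values())


# Hypothetical step function (0 for x < 3.5, 1 otherwise); index 2 has a flipped label.
xs = [0, 1, 2, 3, 4, 5, 6, 7]
ys = [0, 0, 1, 0, 1, 1, 1, 1]

if __name__ == "__main__":
    print("removing the flipped example:", improvement_without(xs, ys, 2, 2))
    print("removing a clean example:    ", improvement_without(xs, ys, 2, 5))
```

On this toy data, removing the flipped example lowers the error of its neighbours, while removing a clean example brings no improvement.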

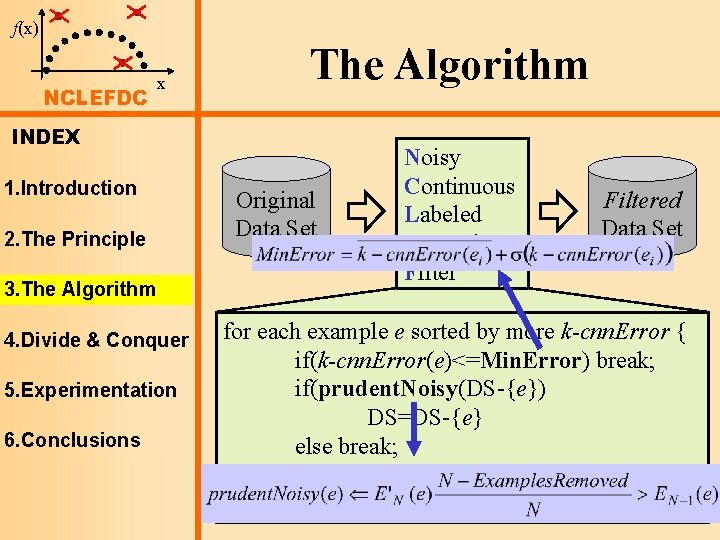

The Algorithm
[Diagram: Original Data Set → Noisy Continuous Labeled Examples Filter → Filtered Data Set]

for each example e, sorted by decreasing k-cnnError {
    if (k-cnnError(e) <= MinError) break;
    if (prudentNoisy(DS - {e})) DS = DS - {e};
    else break;
}
return DS;
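The loop above can be sketched in Python under several assumptions that the slide does not confirm: a plain k-NN mean predictor stands in for k-cnn, the ranking is computed once before the loop, `min_error` defaults to 0, and `prudent_noisy` is a hypothetical criterion that accepts a removal only when it lowers the summed leave-one-out error of the remaining examples. Examples are `(x, y)` pairs with distinct values.

```python
def knn_predict(data, query, k):
    """Mean label of the k examples nearest to `query` (stand-in for k-cnn)."""
    nearest = sorted(data, key=lambda p: abs(p[0] - query))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def loo_error(data, i, k):
    """Leave-one-out absolute error of example i within `data`."""
    rest = data[:i] + data[i + 1:]
    return abs(knn_predict(rest, data[i][0], k) - data[i][1])


def total_loo_error(data, k):
    return sum(loo_error(data, i, k) for i in range(len(data)))


def prudent_noisy(data, e, k):
    """Hypothetical test: does removing e lower the error of the other examples?"""
    i = data.index(e)
    without = data[:i] + data[i + 1:]
    others_before = total_loo_error(data, k) - loo_error(data, i, k)
    return total_loo_error(without, k) < others_before


def nclef(data, k=2, min_error=0.0):
    """Visit examples from highest to lowest error; stop at the first one kept."""
    ds = list(data)
    # Assumption: the ranking is computed once, on the original data set.
    ranked = sorted(ds, key=lambda e: loo_error(ds, ds.index(e), k), reverse=True)
    for e in ranked:
        if len(ds) <= k + 1:          # keep enough neighbours to predict with
            break
        if loo_error(ds, ds.index(e), k) <= min_error:
            break
        if prudent_noisy(ds, e, k):
            ds.remove(e)
        else:
            break
    return ds


# Hypothetical step function with one flipped label at x = 2.
data = [(0, 0), (1, 0), (2, 1), (3, 0), (4, 1), (5, 1), (6, 1), (7, 1)]

if __name__ == "__main__":
    print(nclef(data))
```

Breaking at the first example that is not judged noisy follows the `else break` branch of the pseudocode: once the highest-error candidate survives, lower-ranked examples are not tested.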

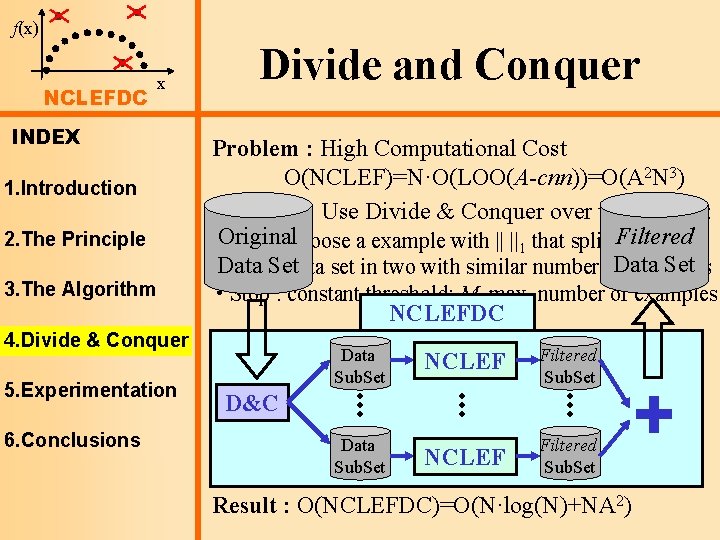

Divide and Conquer
Problem: high computational cost
$O(\mathrm{NCLEF}) = N \cdot O(\mathrm{LOO}(A\text{-cnn})) = O(A^2 N^3)$
Solution: use Divide & Conquer over the data set
• Split: choose an example with $\|\cdot\|_1$ that splits the data set in two with a similar number of examples
• Stop: constant threshold M, the maximum number of examples
Result: $O(\mathrm{NCLEFDC}) = O(N \log(N) + N A^2)$
[Diagram: Original Data Set → D&C → Data SubSets → NCLEF → Filtered SubSets → Filtered Data Set (NCLEFDC)]
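A minimal sketch of the divide-and-conquer wrapper follows. It takes one possible reading of the split rule, namely ordering the examples by the 1-norm of their attribute vector and cutting at the median; the slide does not spell the rule out. The names `filter_dc`, `leaf_filter` and `max_size` (the threshold M) are hypothetical, and the leaf filter is passed in as a parameter so that any NCLEF-like function can be plugged in.

```python
def l1_norm(attributes):
    """1-norm of an attribute vector."""
    return sum(abs(v) for v in attributes)


def filter_dc(data, leaf_filter, max_size):
    """Recursively halve `data` until each subset has at most `max_size` examples,
    filter every subset with `leaf_filter`, and concatenate the filtered subsets.

    data: list of (attributes, label) pairs, attributes being a tuple of numbers.
    Assumption: the split orders examples by 1-norm and cuts at the median.
    """
    if max_size < 1:
        raise ValueError("max_size must be at least 1")
    if len(data) <= max_size:
        return leaf_filter(data)
    ordered = sorted(data, key=lambda e: l1_norm(e[0]))
    mid = len(ordered) // 2
    return (filter_dc(ordered[:mid], leaf_filter, max_size)
            + filter_dc(ordered[mid:], leaf_filter, max_size))


if __name__ == "__main__":
    # Identity filter: shows only how the data set is partitioned.
    toy = [((float(i), -float(i)), float(i)) for i in range(10)]
    leaves = []
    filter_dc(toy, lambda subset: leaves.append(len(subset)) or list(subset), 3)
    print("subset sizes:", leaves)
```

With M held constant, each subset costs a bounded amount of filtering work in N and there are about N/M subsets, which is in line with the $N A^2$ term on the slide; the $N \log(N)$ term matches the cost of the repeated ordering in this reading.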

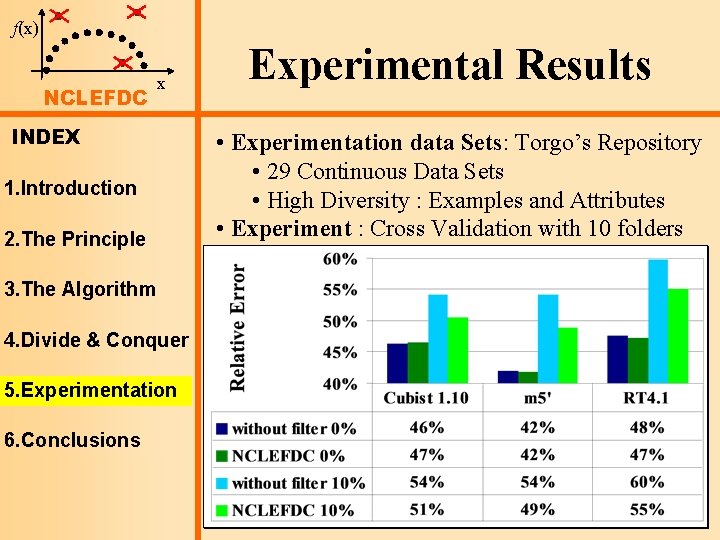

Experimental Results
• Experimentation data sets: Torgo’s Repository
• 29 continuous data sets
• High diversity in examples and attributes
• Experiment: 10-fold cross-validation
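For readers unfamiliar with the protocol, a 10-fold cross-validation loop can be sketched as below. This is a generic illustration, not the authors' experimental code: `k_fold_indices`, `cross_validate` and the `train_and_score` callback are hypothetical names, and whether the filter was applied only to the training part of each fold is an assumption the slides do not settle.

```python
import random


def k_fold_indices(n, folds=10, seed=0):
    """Shuffle the indices 0..n-1 and deal them into `folds` nearly equal parts."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::folds] for i in range(folds)]


def cross_validate(data, train_and_score, folds=10, seed=0):
    """Average the score returned by `train_and_score(train, test)` over all folds.

    A filter such as NCLEFDC would be applied inside `train_and_score`,
    presumably to the training part only (assumption).
    """
    scores = []
    for held_out in k_fold_indices(len(data), folds, seed):
        held = set(held_out)
        train = [data[i] for i in range(len(data)) if i not in held]
        test = [data[i] for i in held_out]
        scores.append(train_and_score(train, test))
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Dummy scorer: reports the size of each held-out fold.
    print(cross_validate(list(range(29)), lambda train, test: len(test)))
```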

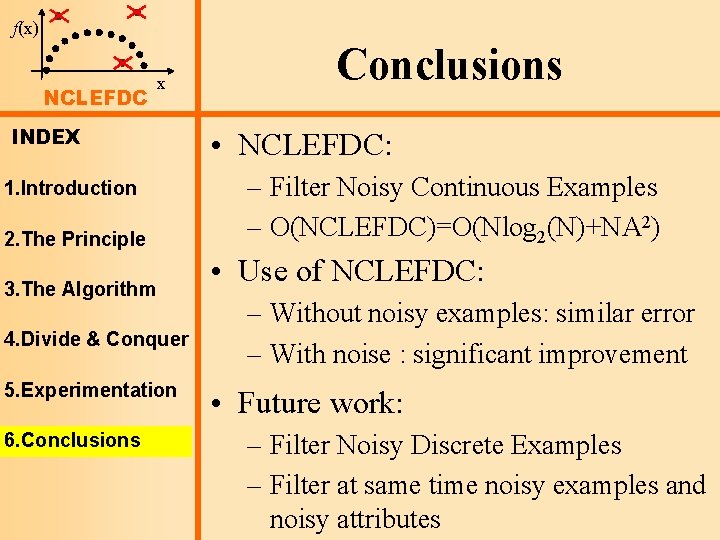

Conclusions
• NCLEFDC:
  – Filters noisy continuous examples
  – $O(\mathrm{NCLEFDC}) = O(N \log_2(N) + N A^2)$
• Use of NCLEFDC:
  – Without noisy examples: similar error
  – With noise: significant improvement
• Future work:
  – Filter noisy discrete examples
  – Filter noisy examples and noisy attributes at the same time

