FutureGrid Overview, Bloomington, Indiana, January 17 2010

FutureGrid Collaboration. Presented by Geoffrey Fox (gcf@indiana.edu, http://www.infomall.org), Director, Digital Science Center, Pervasive Technology Institute; Associate Dean for Research and Graduate Studies, School of Informatics and Computing; Indiana University Bloomington.

https://portal.futuregrid.org

Topics to Discuss

- Overview: 60 minutes
- Management and Budget: 40 minutes
- Hardware: 20 minutes
- Software: 160 minutes (overview before lunch)
- Uses of FutureGrid: 45 minutes
- User Support: 15 minutes
- Training, Education and Outreach: 45 minutes

Times include questions; total 385 minutes.

FutureGrid Key Concepts I

- FutureGrid is an international testbed modeled on Grid'5000
- It supports international Computer Science and Computational Science research in cloud, grid and parallel computing (HPC)
  - Industry and academia
- The FutureGrid testbed provides to its users:
  - A flexible development and testing platform for middleware and application users looking at interoperability, functionality, performance or evaluation
  - Each use of FutureGrid is an experiment that is reproducible
  - A rich education and teaching platform for advanced cyberinfrastructure (computer science) classes

FutureGrid Key Concepts II

- FutureGrid has a focus complementary to both the Open Science Grid and the other parts of TeraGrid.
  - FutureGrid is user-customizable, accessed interactively, and supports Grid, Cloud and HPC software with and without virtualization.
  - FutureGrid is an experimental platform where computer science applications can explore many facets of distributed systems, and where domain sciences can explore various deployment scenarios and tuning parameters and, in the future, possibly migrate to the large-scale national cyberinfrastructure.
  - FutureGrid supports interoperability testbeds (OGF really needed these!)
- Note: much current use is in education, computer science systems, and biology/bioinformatics.

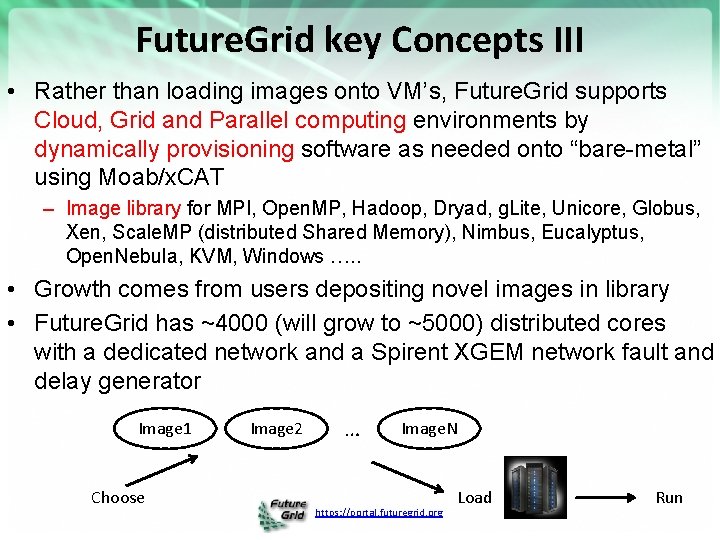

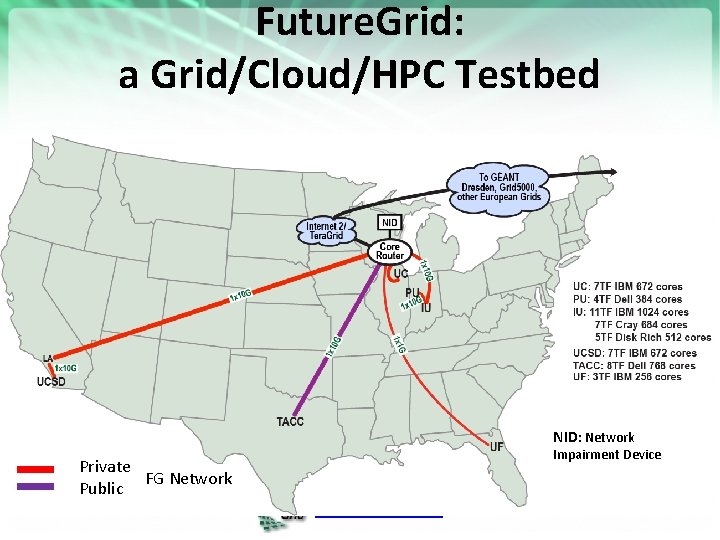

FutureGrid Key Concepts III

- Rather than loading images onto VMs, FutureGrid supports Cloud, Grid and Parallel computing environments by dynamically provisioning software as needed onto "bare metal" using Moab/xCAT
  - Image library for MPI, OpenMP, Hadoop, Dryad, gLite, Unicore, Globus, Xen, ScaleMP (distributed shared memory), Nimbus, Eucalyptus, OpenNebula, KVM, Windows, …
- Growth comes from users depositing novel images in the library
- FutureGrid has ~4000 (will grow to ~5000) distributed cores with a dedicated network and a Spirent XGEM network fault and delay generator

Figure: choose an image (Image 1, Image 2, …, Image N), then load and run it.

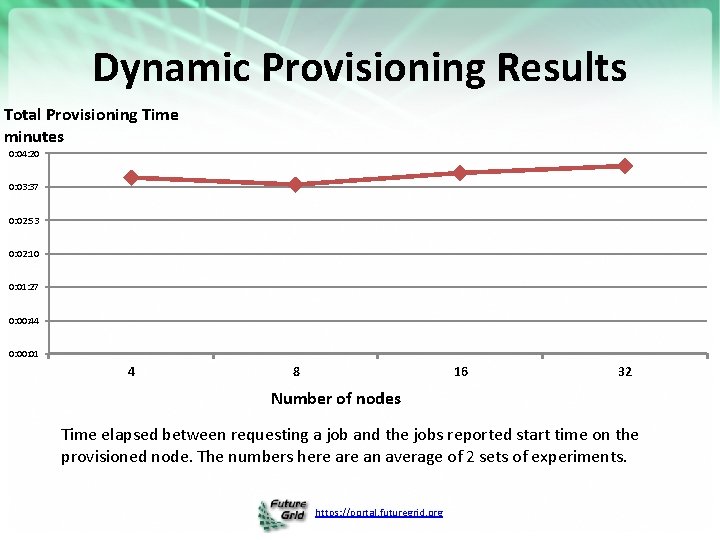

Dynamic Provisioning Results

Figure: total provisioning time (minutes) versus number of nodes (up to 32). Provisioning time is the time elapsed between requesting a job and the job's reported start time on the provisioned node; the numbers are an average of 2 sets of experiments.

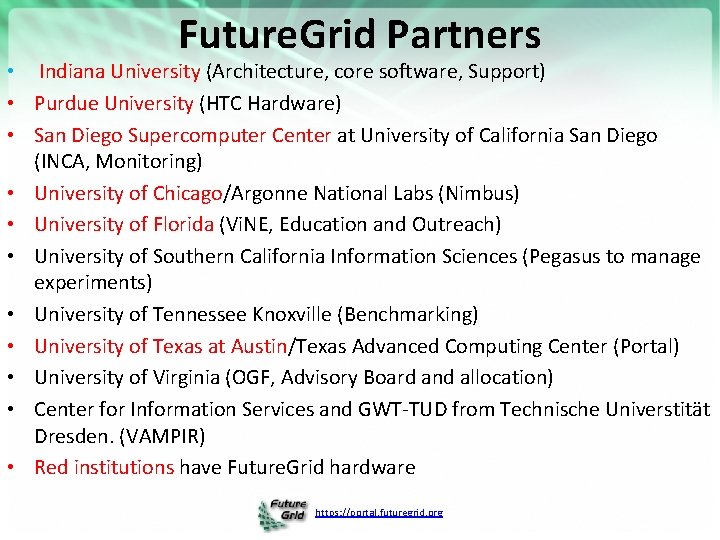

FutureGrid Partners

- Indiana University (Architecture, core software, Support)
- Purdue University (HTC Hardware)
- San Diego Supercomputer Center at University of California San Diego (INCA, Monitoring)
- University of Chicago/Argonne National Labs (Nimbus)
- University of Florida (ViNe, Education and Outreach)
- University of Southern California Information Sciences Institute (Pegasus to manage experiments)
- University of Tennessee Knoxville (Benchmarking)
- University of Texas at Austin/Texas Advanced Computing Center (Portal)
- University of Virginia (OGF, Advisory Board and allocation)
- Center for Information Services and GWT-TUD from Technische Universität Dresden (VAMPIR)

Red institutions have FutureGrid hardware.

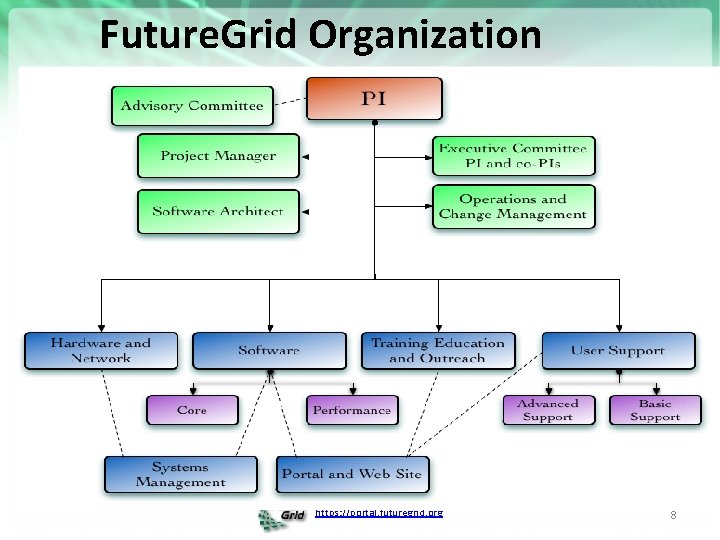

FutureGrid Organization

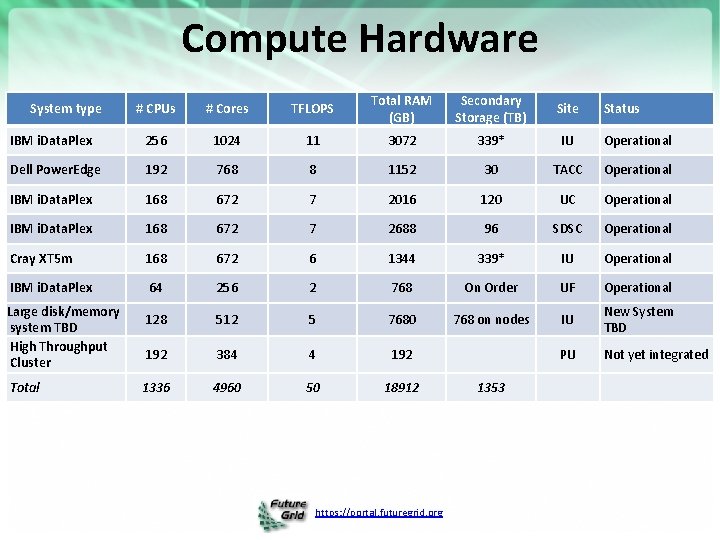

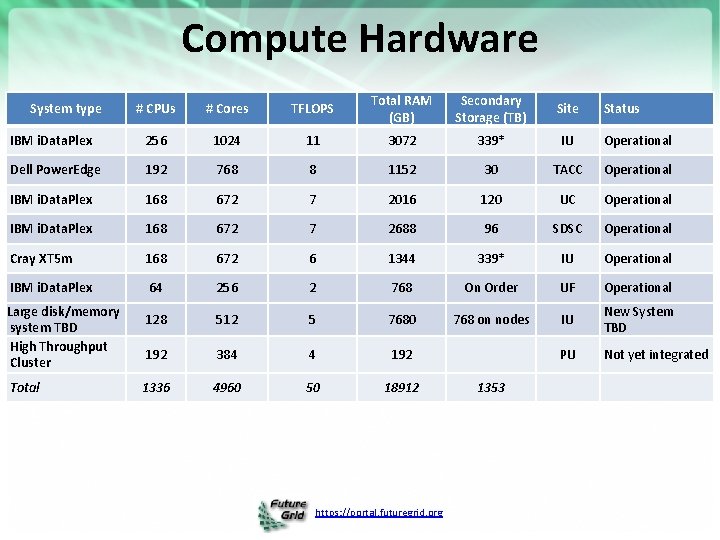

Compute Hardware

| System type | # CPUs | # Cores | TFLOPS | Total RAM (GB) | Secondary Storage (TB) | Site | Status |
|---|---|---|---|---|---|---|---|
| IBM iDataPlex | 256 | 1024 | 11 | 3072 | 339* | IU | Operational |
| Dell PowerEdge | 192 | 768 | 8 | 1152 | 30 | TACC | Operational |
| IBM iDataPlex | 168 | 672 | 7 | 2016 | 120 | UC | Operational |
| IBM iDataPlex | 168 | 672 | 7 | 2688 | 96 | SDSC | Operational |
| Cray XT5m | 168 | 672 | 6 | 1344 | 339* | IU | Operational |
| IBM iDataPlex | 64 | 256 | 2 | 768 | On Order | UF | Operational |
| Large disk/memory system TBD | 128 | 512 | 5 | 7680 | 768 on nodes | IU | New System |
| High Throughput Cluster | 192 | 384 | 4 | 192 | | PU | Not yet integrated |
| Total | 1336 | 4960 | 50 | 18912 | 1353 | | |
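The totals row can be checked against the individual systems. The minimal Python check below rests on one assumption not stated on the slide: the two IU entries marked 339* share the single 339 TB Data Capacitor from the storage table, so that figure is counted once. Rows with "On Order" or no storage figure contribute nothing to the storage sum; the `System` type and `totals` function are illustrative names only.

```python
from typing import NamedTuple, Optional


class System(NamedTuple):
    """One row of the Compute Hardware table."""
    name: str
    site: str
    cpus: int
    cores: int
    tflops: int
    ram_gb: int
    storage_tb: Optional[int]  # None where the table gives no number
    shared: bool = False       # assumption: True for the 339* (shared) entries


SYSTEMS = [
    System("IBM iDataPlex", "IU", 256, 1024, 11, 3072, 339, shared=True),
    System("Dell PowerEdge", "TACC", 192, 768, 8, 1152, 30),
    System("IBM iDataPlex", "UC", 168, 672, 7, 2016, 120),
    System("IBM iDataPlex", "SDSC", 168, 672, 7, 2688, 96),
    System("Cray XT5m", "IU", 168, 672, 6, 1344, 339, shared=True),
    System("IBM iDataPlex", "UF", 64, 256, 2, 768, None),  # storage "On Order"
    System("Large disk/memory system TBD", "IU", 128, 512, 5, 7680, 768),
    System("High Throughput Cluster", "PU", 192, 384, 4, 192, None),
]


def totals(systems):
    """Return (cpus, cores, tflops, ram_gb, storage_tb) summed over all rows."""
    own = sum(s.storage_tb for s in systems
              if s.storage_tb is not None and not s.shared)
    # Shared stores appear on several rows; de-duplicating them by size
    # is enough for this table, where there is only one shared store.
    shared = {s.storage_tb for s in systems if s.shared}
    return (
        sum(s.cpus for s in systems),
        sum(s.cores for s in systems),
        sum(s.tflops for s in systems),
        sum(s.ram_gb for s in systems),
        own + sum(shared),
    )


print(totals(SYSTEMS))  # (1336, 4960, 50, 18912, 1353)
```

Every column total, including the 1353 TB of secondary storage, matches the slide once the shared store is counted a single time.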

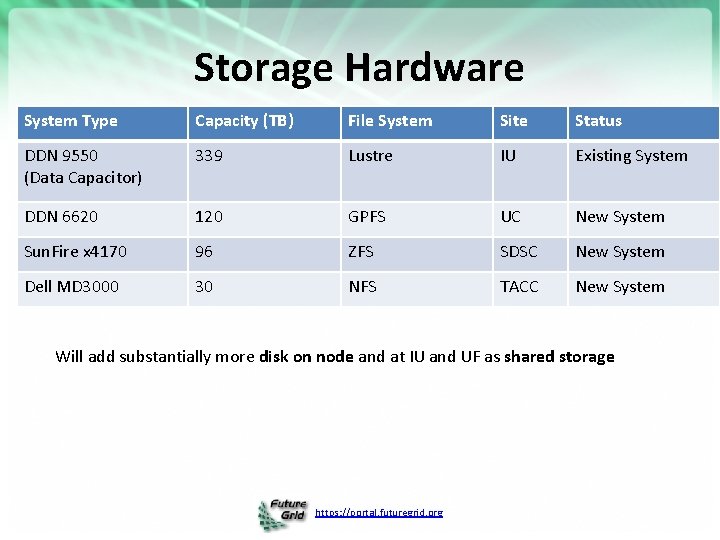

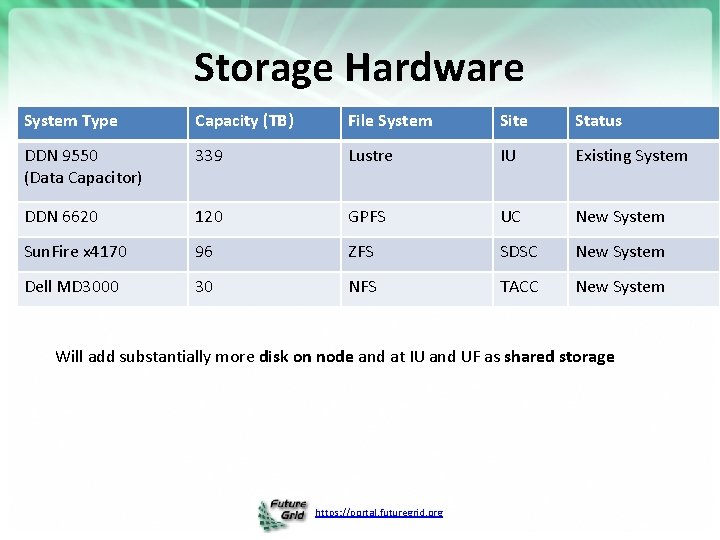

Storage Hardware

| System Type | Capacity (TB) | File System | Site | Status |
|---|---|---|---|---|
| DDN 9550 (Data Capacitor) | 339 | Lustre | IU | Existing System |
| DDN 6620 | 120 | GPFS | UC | New System |
| Sun Fire X4170 | 96 | ZFS | SDSC | New System |
| Dell MD3000 | 30 | NFS | TACC | New System |

Will add substantially more disk on node and at IU and UF as shared storage.

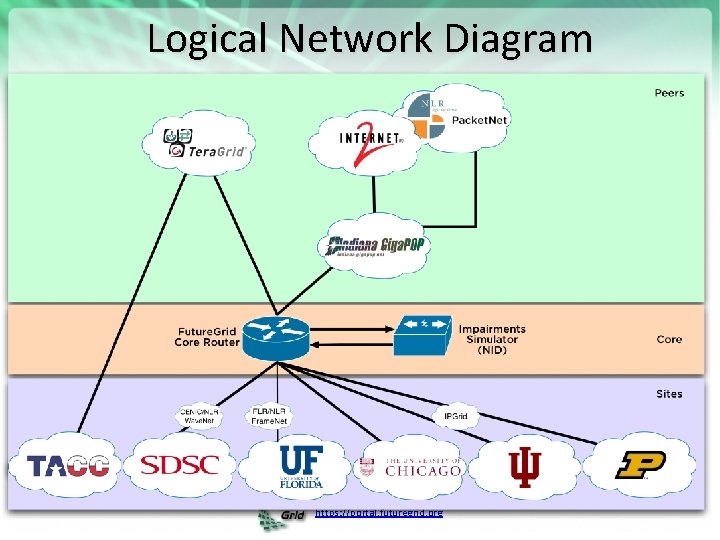

FutureGrid: a Grid/Cloud/HPC Testbed

Figure labels: NID (Network Impairment Device), Private FG Network, Public.

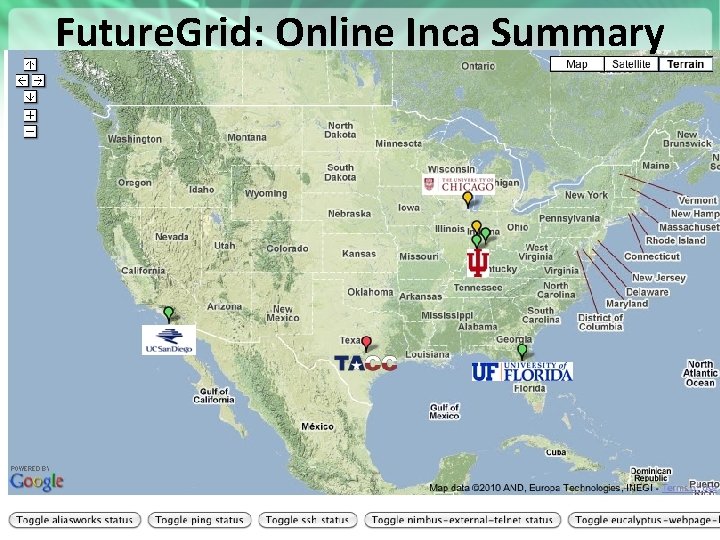

FutureGrid: Online Inca Summary

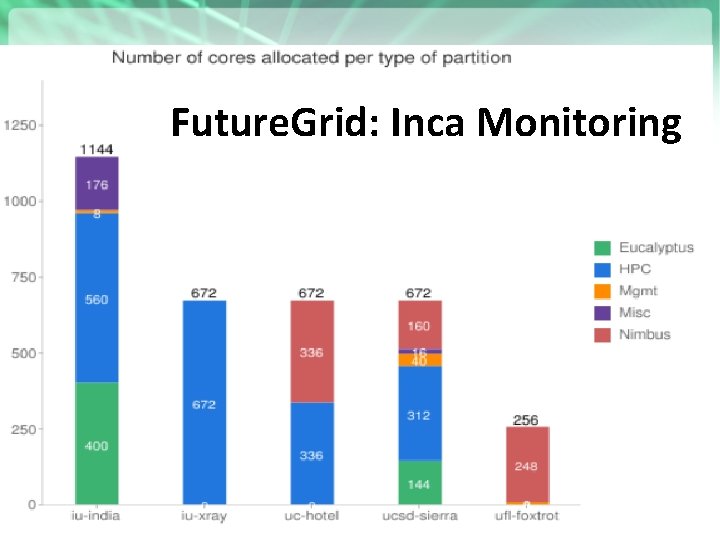

FutureGrid: Inca Monitoring

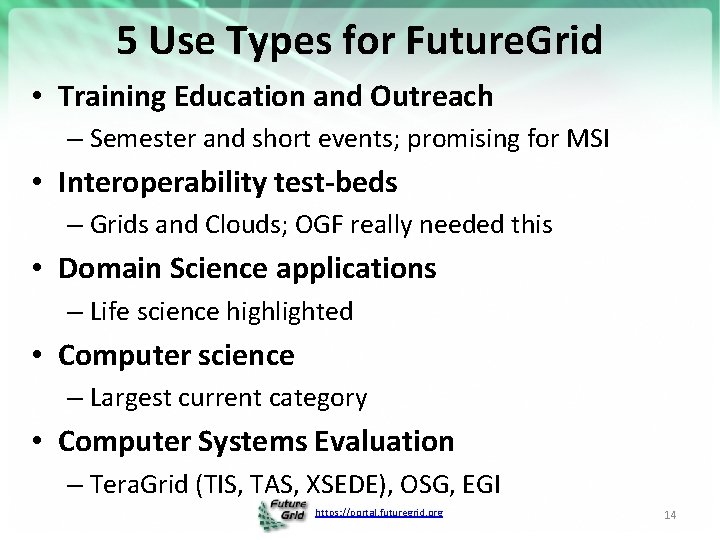

5 Use Types for FutureGrid

- Training, Education and Outreach
  - Semester and short events; promising for MSI
- Interoperability testbeds
  - Grids and Clouds; OGF really needed this
- Domain Science applications
  - Life science highlighted
- Computer science
  - Largest current category
- Computer Systems Evaluation
  - TeraGrid (TIS, TAS, XSEDE), OSG, EGI

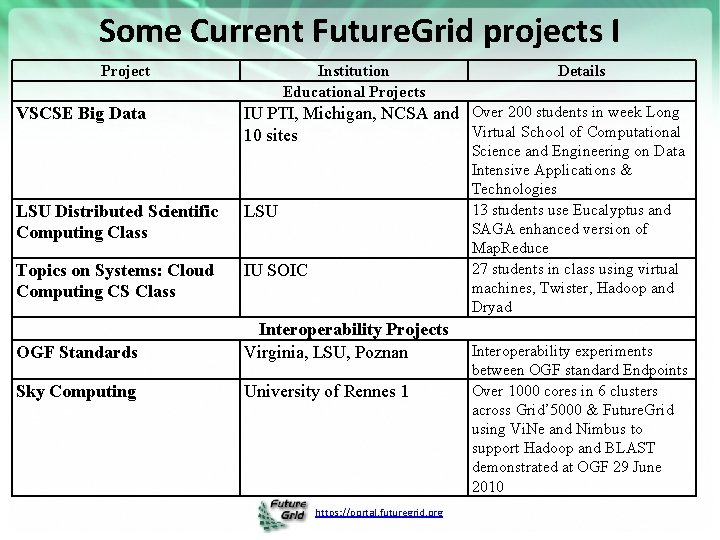

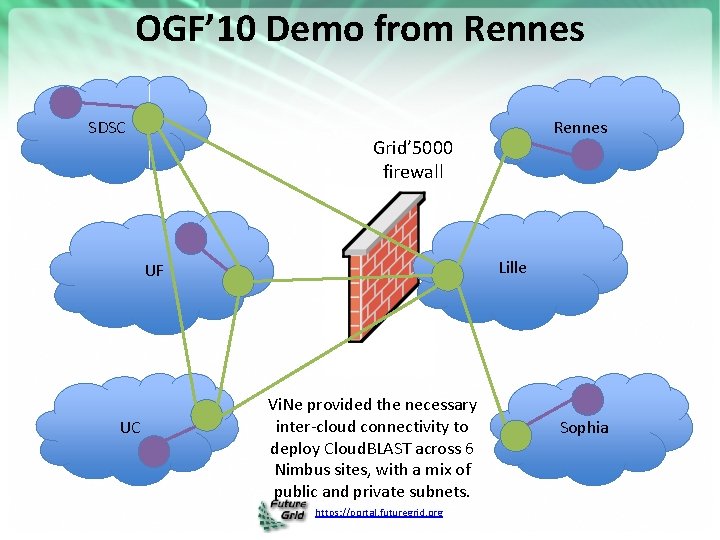

Some Current FutureGrid Projects I

| Project | Institution | Details |
|---|---|---|
| Educational Projects | | |
| VSCSE Big Data | IU PTI, Michigan, NCSA and 10 sites | Over 200 students in week-long Virtual School of Computational Science and Engineering on Data Intensive Applications & Technologies |
| LSU Distributed Scientific Computing Class | LSU | 13 students use Eucalyptus and SAGA-enhanced version of MapReduce |
| Topics on Systems: Cloud Computing CS Class | IU SOIC | 27 students in class using virtual machines, Twister, Hadoop and Dryad |
| Interoperability Projects | | |
| OGF Standards | Virginia, LSU, Poznan | Interoperability experiments between OGF standard endpoints |
| Sky Computing | University of Rennes 1 | Over 1000 cores in 6 clusters across Grid'5000 & FutureGrid using ViNe and Nimbus to support Hadoop and BLAST, demonstrated at OGF 29, June 2010 |

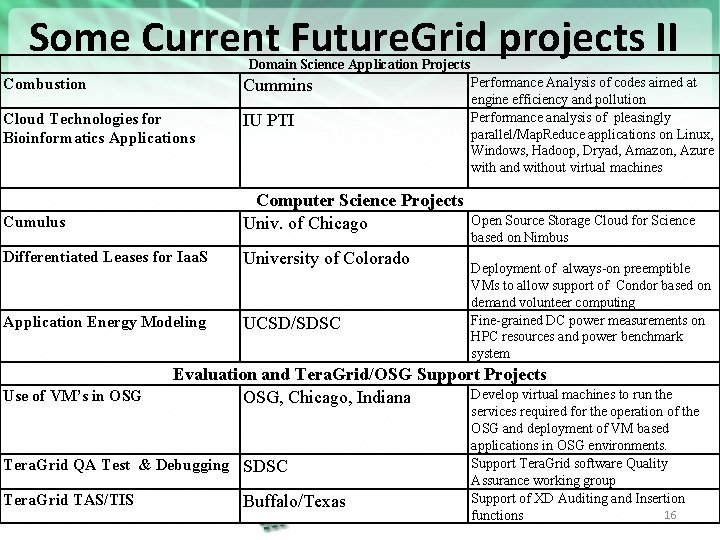

Some Current FutureGrid Projects II

| Project | Institution | Details |
|---|---|---|
| Domain Science Application Projects | | |
| Combustion | Cummins | Performance analysis of codes aimed at engine efficiency and pollution |
| Cloud Technologies for Bioinformatics Applications | IU PTI | Performance analysis of pleasingly parallel/MapReduce applications on Linux, Windows, Hadoop, Dryad, Amazon, Azure with and without virtual machines |
| Computer Science Projects | | |
| Cumulus | Univ. of Chicago | Open Source Storage Cloud for Science based on Nimbus |
| Differentiated Leases for IaaS | University of Colorado | Deployment of always-on preemptible VMs to allow support of Condor-based on-demand volunteer computing |
| Application Energy Modeling | UCSD/SDSC | Fine-grained DC power measurements on HPC resources and power benchmark system |
| Evaluation and TeraGrid/OSG Support Projects | | |
| Use of VMs in OSG | OSG, Chicago, Indiana | Develop virtual machines to run the services required for the operation of the OSG and deployment of VM-based applications in OSG environments |
| TeraGrid QA Test & Debugging | SDSC | Support TeraGrid software Quality Assurance working group |
| TeraGrid TAS/TIS | Buffalo/Texas | Support of XD Auditing and Insertion functions |

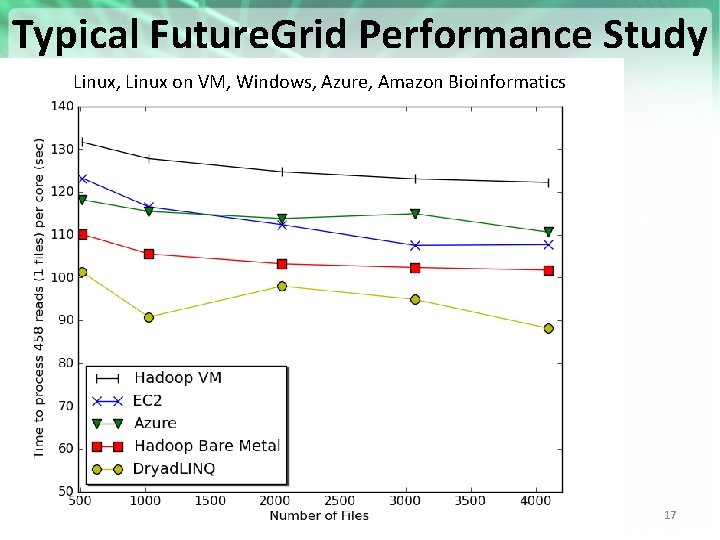

Typical FutureGrid Performance Study

Figure: bioinformatics performance on Linux, Linux on VM, Windows, Azure and Amazon.

OGF'10 Demo from Rennes

Figure: sites SDSC, UF, UC, Lille, Rennes and Sophia, with the Grid'5000 firewall. ViNe provided the necessary inter-cloud connectivity to deploy CloudBLAST across 6 Nimbus sites, with a mix of public and private subnets.

One User Report (Jha) I

- The design and development of distributed scientific applications presents a challenging research agenda at the intersection of cyberinfrastructure and computational science.
- It is no exaggeration to say that the US academic community has lagged in its ability to design and implement novel distributed scientific applications, tools and run-time systems that are broadly used, extensible, interoperable and simple to use/adapt/deploy.
- The reasons are many and resist oversimplification. But one critical reason has been the absence of infrastructure where abstractions, run-time systems and applications can be developed, tested and hardened at the scales and with a degree of distribution (and the concomitant heterogeneity, dynamism and faults) required to facilitate the transition from "toy solutions" to "production grade", i.e., the intermediate infrastructure.

One User Report (Jha) II

- For the SAGA project, which is concerned with all of the above elements, FutureGrid has proven to be that *panacea*, the hitherto missing element preventing progress towards scalable distributed applications.
- In a nutshell, FG has provided a persistent, production-grade experimental infrastructure with the ability to perform controlled experiments, without violating production policies or disrupting production infrastructure priorities.
- These attributes, coupled with excellent technical support (the bedrock upon which all these capabilities depend), have resulted in the following specific advances in the short period of under a year:
  - Standards-based development and interoperability tests
  - Analyzing & Comparing Programming Models and Run-time tools for Computation and Data-Intensive Science
  - Developing Hybrid Cloud-Grid Scientific Applications and Tools (Autonomic Schedulers) [Work in Conjunction with Manish Parashar's group]

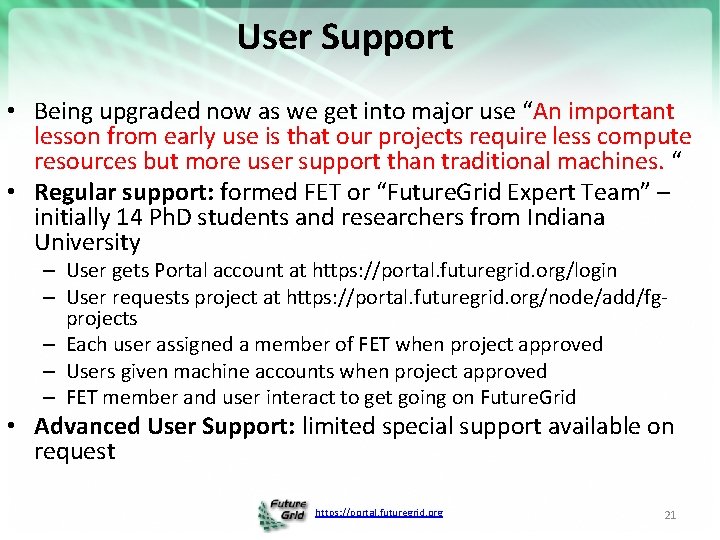

User Support

- Being upgraded now as we get into major use: "An important lesson from early use is that our projects require less compute resources but more user support than traditional machines."
- Regular support: formed FET, the "FutureGrid Expert Team"
  - Initially 14 PhD students and researchers from Indiana University
  - User gets a Portal account at https://portal.futuregrid.org/login
  - User requests a project at https://portal.futuregrid.org/node/add/fgprojects
  - Each user is assigned a member of FET when the project is approved
  - Users are given machine accounts when the project is approved
  - FET member and user interact to get going on FutureGrid
- Advanced User Support: limited special support available on request

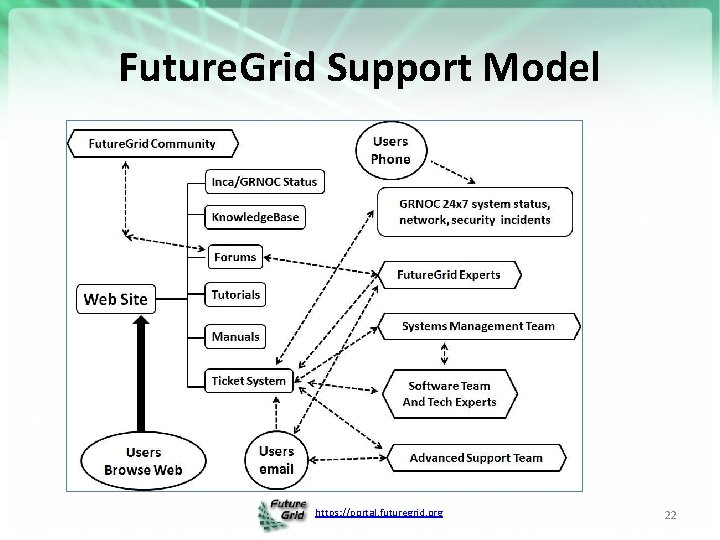

FutureGrid Support Model

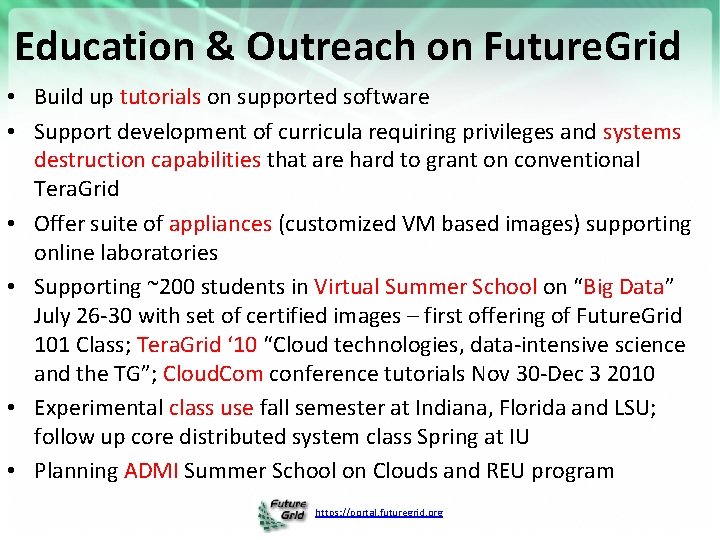

Education & Outreach on FutureGrid

- Build up tutorials on supported software
- Support development of curricula requiring privileges and systems-destruction capabilities that are hard to grant on conventional TeraGrid
- Offer a suite of appliances (customized VM-based images) supporting online laboratories
- Supporting ~200 students in the Virtual Summer School on "Big Data", July 26-30, with a set of certified images: first offering of the FutureGrid 101 Class; TeraGrid '10 "Cloud technologies, data-intensive science and the TG"; CloudCom conference tutorials Nov 30-Dec 3 2010
- Experimental class use in fall semester at Indiana, Florida and LSU; follow-up core distributed systems class in Spring at IU
- Planning ADMI Summer School on Clouds and REU program

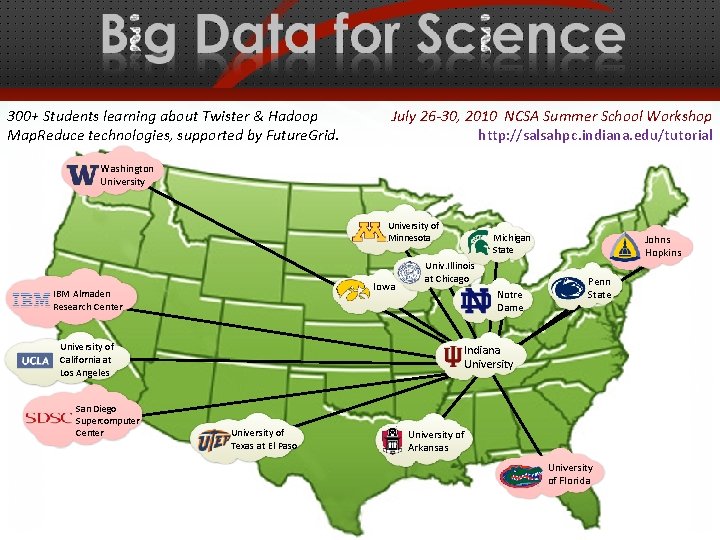

300+ students learning about Twister & Hadoop MapReduce technologies, supported by FutureGrid: NCSA Summer School Workshop, July 26-30, 2010 (http://salsahpc.indiana.edu/tutorial).

Participating institutions: Washington, University of Minnesota, Iowa, IBM Almaden Research Center, Univ. Illinois at Chicago, Notre Dame, University of California at Los Angeles, San Diego Supercomputer Center, Michigan State, Johns Hopkins, Penn State, Indiana University, University of Texas at El Paso, University of Arkansas, University of Florida.

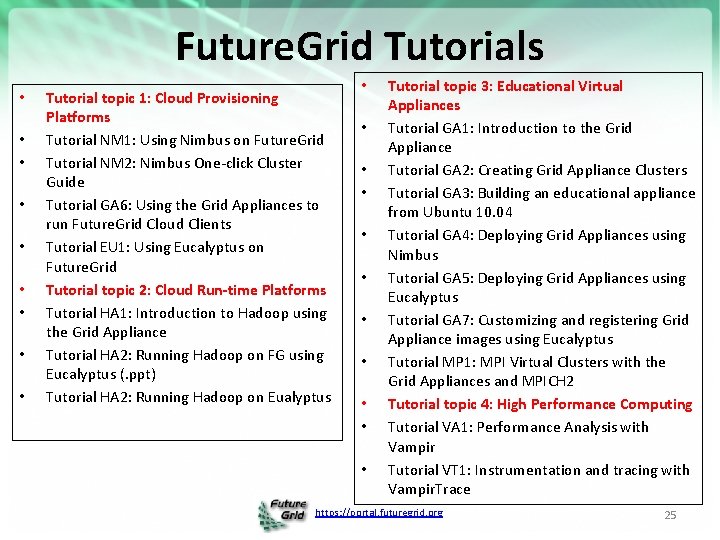

FutureGrid Tutorials

- Tutorial topic 1: Cloud Provisioning Platforms
  - Tutorial NM1: Using Nimbus on FutureGrid
  - Tutorial NM2: Nimbus One-click Cluster Guide
  - Tutorial GA6: Using the Grid Appliances to run FutureGrid Cloud Clients
  - Tutorial EU1: Using Eucalyptus on FutureGrid
- Tutorial topic 2: Cloud Run-time Platforms
  - Tutorial HA1: Introduction to Hadoop using the Grid Appliance
  - Tutorial HA2: Running Hadoop on FG using Eucalyptus (.ppt)
  - Tutorial HA2: Running Hadoop on Eucalyptus
- Tutorial topic 3: Educational Virtual Appliances
  - Tutorial GA1: Introduction to the Grid Appliance
  - Tutorial GA2: Creating Grid Appliance Clusters
  - Tutorial GA3: Building an educational appliance from Ubuntu 10.04
  - Tutorial GA4: Deploying Grid Appliances using Nimbus
  - Tutorial GA5: Deploying Grid Appliances using Eucalyptus
  - Tutorial GA7: Customizing and registering Grid Appliance images using Eucalyptus
  - Tutorial MP1: MPI Virtual Clusters with the Grid Appliances and MPICH2
- Tutorial topic 4: High Performance Computing
  - Tutorial VA1: Performance Analysis with Vampir
  - Tutorial VT1: Instrumentation and tracing with VampirTrace

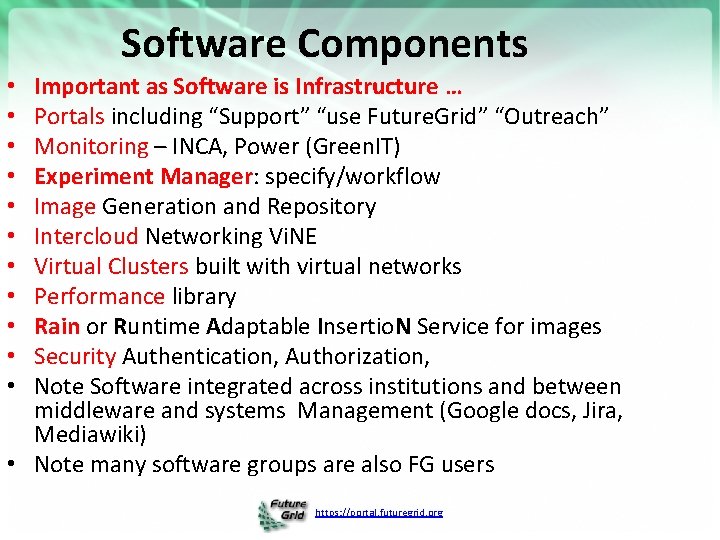

Software Components

- Important, as Software is Infrastructure …
- Portals including "Support", "use FutureGrid", "Outreach"
- Monitoring: INCA, Power (GreenIT)
- Experiment Manager: specify/workflow
- Image Generation and Repository
- Intercloud Networking ViNe
- Virtual Clusters built with virtual networks
- Performance library
- Rain or Runtime Adaptable InsertioN Service for images
- Security: Authentication, Authorization
- Note: software is integrated across institutions and between middleware and systems
- Management (Google docs, Jira, Mediawiki)
- Note: many software groups are also FG users

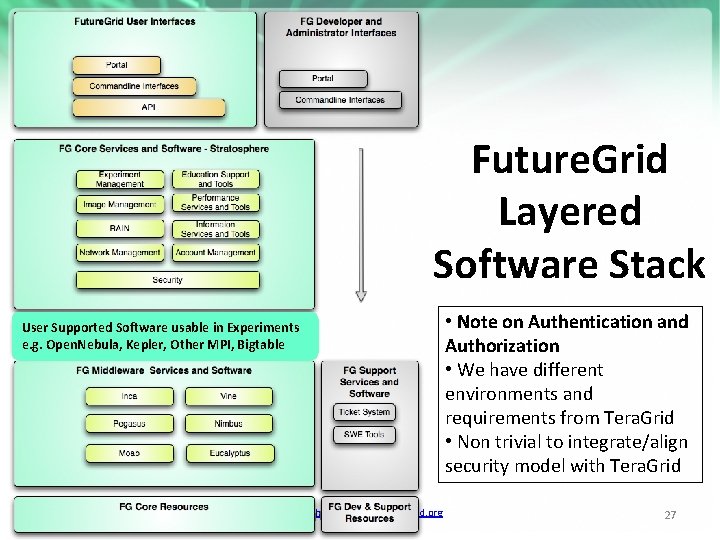

FutureGrid Layered Software Stack

Figure: the layered software stack, including user-supported software usable in experiments, e.g. OpenNebula, Kepler, other MPI, Bigtable.

- Note on Authentication and Authorization
  - We have different environments and requirements from TeraGrid
  - It is non-trivial to integrate/align our security model with TeraGrid

http://futuregrid.org

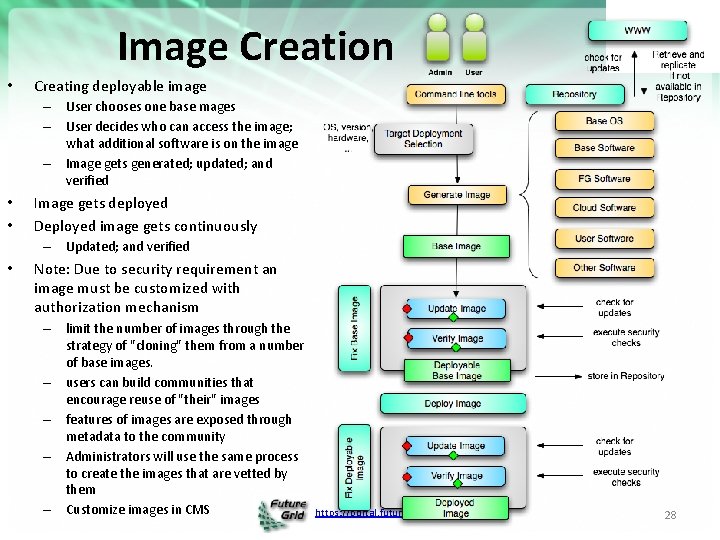

Image Creation

- Creating a deployable image
  - User chooses one of the base images
  - User decides who can access the image and what additional software is on the image
  - Image gets generated, updated, and verified
- Image gets deployed
- Deployed image gets continuously updated and verified
- Note: due to security requirements, an image must be customized with an authorization mechanism
  - Limit the number of images through the strategy of "cloning" them from a number of base images
  - Users can build communities that encourage reuse of "their" images
  - Features of images are exposed through metadata to the community
  - Administrators will use the same process to create the images that are vetted by them
  - Customize images in CMS
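The image lifecycle above (clone from a base image, decide access and software, generate, verify, deploy, then keep updating and re-verifying) can be modeled as a small data structure. This is a minimal Python sketch, not FutureGrid's image generator: the base-image names, the `Image` fields and every function name are hypothetical, and the authorization mechanism is reduced to a flag that verification checks.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical set of vetted base images; the real FutureGrid list is not given here.
BASE_IMAGES = {"ubuntu-10.04", "centos-5"}


@dataclass
class Image:
    name: str
    base: str                      # base image this image was cloned from
    owner: str
    allowed_users: Set[str]        # who, besides the owner, may use the image
    packages: List[str]            # additional software on the image
    metadata: Dict[str, str] = field(default_factory=dict)
    auth_configured: bool = False  # security requirement: authorization mechanism
    verified: bool = False
    deployed: bool = False


def generate(base, name, owner, allowed_users, packages, metadata):
    """Clone a new image from a base image instead of building from scratch."""
    if base not in BASE_IMAGES:
        raise ValueError(f"unknown base image: {base}")
    img = Image(name, base, owner, set(allowed_users), list(packages), dict(metadata))
    img.metadata["base"] = base  # expose lineage to the community via metadata
    return img


def add_authorization(img):
    """Customize the image with an authorization mechanism (placeholder)."""
    img.auth_configured = True


def verify(img):
    """Pass only images derived from a base image that carry authorization."""
    img.verified = img.auth_configured and img.base in BASE_IMAGES
    return img.verified


def deploy(img, user):
    """Deploy for an owner or permitted user, re-verifying first."""
    if user != img.owner and user not in img.allowed_users:
        raise PermissionError(f"{user} may not use image {img.name}")
    if not verify(img):
        raise RuntimeError(f"image {img.name} failed verification")
    img.deployed = True


def update(img, new_packages):
    """Deployed images are continuously updated and then re-verified."""
    img.packages.extend(new_packages)
    return verify(img)


img = generate("ubuntu-10.04", "hadoop-class", "alice", {"bob"},
               ["hadoop"], {"purpose": "teaching"})
add_authorization(img)
deploy(img, "bob")
print(img.deployed, img.metadata["base"])  # True ubuntu-10.04
```

Cloning from a small, fixed set of bases keeps the number of images that need vetting low, which is the stated reason for the strategy; the metadata entry is how a community would discover an image's lineage.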

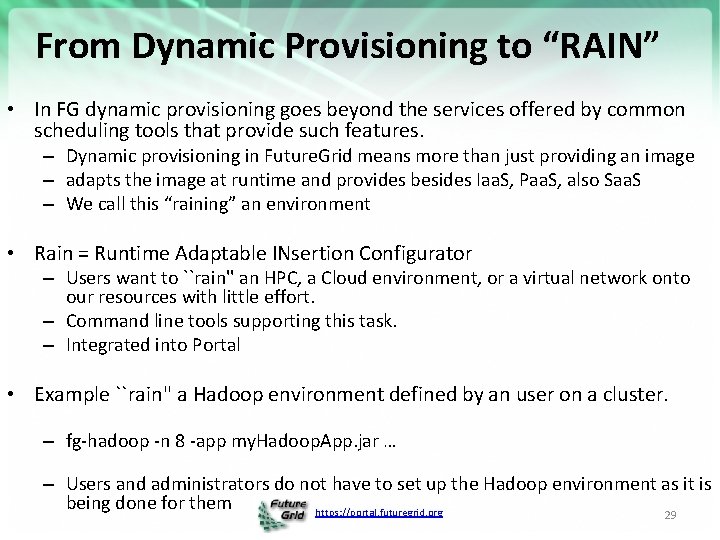

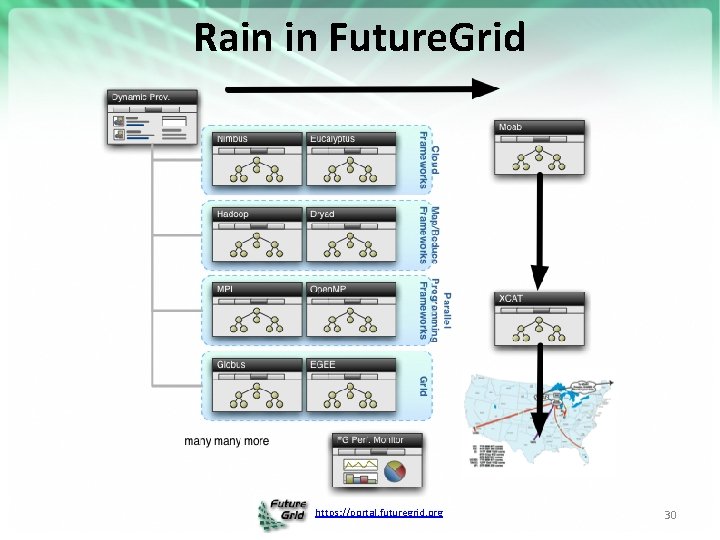

From Dynamic Provisioning to "RAIN"

- In FG, dynamic provisioning goes beyond the services offered by common scheduling tools that provide such features.
  - Dynamic provisioning in FutureGrid means more than just providing an image: it adapts the image at runtime and provides not only IaaS but also PaaS and SaaS
  - We call this "raining" an environment
- Rain = Runtime Adaptable INsertion Configurator
  - Users want to "rain" an HPC environment, a Cloud environment, or a virtual network onto our resources with little effort.
  - Command-line tools support this task.
  - Integrated into the Portal
- Example: "rain" a Hadoop environment defined by a user on a cluster.
  - `fg-hadoop -n 8 -app myHadoopApp.jar …`
  - Users and administrators do not have to set up the Hadoop environment, as it is done for them
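Part of what raining a Hadoop environment takes off the user's hands is deciding which allocated nodes play which role. The Python sketch below is hypothetical: we do not know fg-hadoop's internals, and the hostfile format (one host per line, `#` comments), the reading of `-n` as a node count, the "first node is the master" rule and all function names are assumptions. The worker list it emits uses the one-hostname-per-line layout of Hadoop 1.x's `conf/slaves` file.

```python
def read_hostfile(text):
    """Parse a hostfile: one host per line; blank lines and '#' comments ignored."""
    hosts = []
    for line in text.splitlines():
        entry = line.split("#", 1)[0].strip()
        if entry:
            hosts.append(entry)
    return hosts


def plan_hadoop(hosts, n):
    """Pick n hosts: the first acts as master, the rest as workers (assumption)."""
    if n < 2:
        raise ValueError("need at least one master and one worker")
    if n > len(hosts):
        raise ValueError(f"requested {n} nodes but only {len(hosts)} available")
    chosen = hosts[:n]
    return {"master": chosen[0], "workers": chosen[1:]}


def workers_file(plan):
    """Worker list, one hostname per line (layout of Hadoop 1.x conf/slaves)."""
    return "".join(h + "\n" for h in plan["workers"])


HOSTFILE = """# hypothetical nodes
node01
node02
node03   # trailing comment
"""
plan = plan_hadoop(read_hostfile(HOSTFILE), 3)
print(plan)  # {'master': 'node01', 'workers': ['node02', 'node03']}
```

With `-n 8` the same logic would yield one master and seven workers; a real tool would also write the remaining configuration and start the daemons.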

Rain in FutureGrid

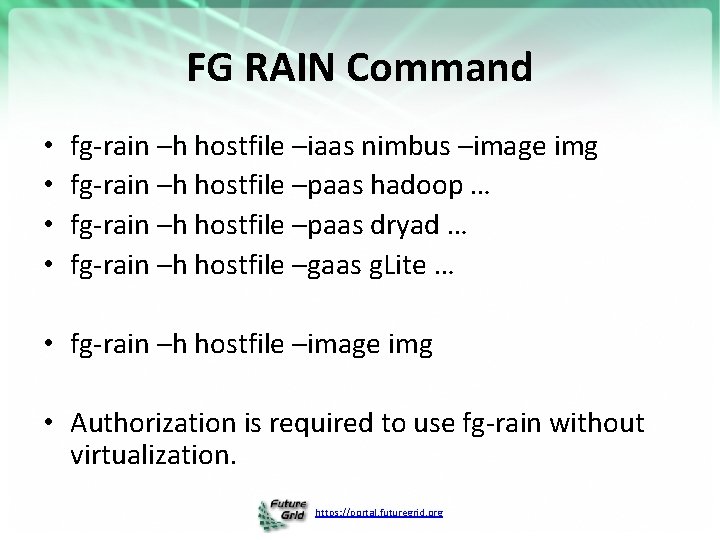

FG RAIN Command

- `fg-rain -h hostfile -iaas nimbus -image img`
- `fg-rain -h hostfile -paas hadoop …`
- `fg-rain -h hostfile -paas dryad …`
- `fg-rain -h hostfile -gaas gLite …`
- `fg-rain -h hostfile -image img`
- Authorization is required to use fg-rain without virtualization.
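The command forms listed for fg-rain follow one pattern: a hostfile, an optional layer flag (`-iaas`, `-paas` or `-gaas`) with its target, and an optional image. The Python sketch below assembles such command lines using only the flags shown on the slide; the helper names are hypothetical, the single-dash spelling is assumed from the slide, and any further options (the slide's "…") are passed through untouched rather than guessed.

```python
import shlex
from typing import List, Optional, Sequence

# Layer flags that appear on the slide; other fg-rain options are not shown there.
LAYER_FLAGS = ("iaas", "paas", "gaas")


def rain_argv(hostfile: str, layer: Optional[str] = None,
              target: Optional[str] = None, image: Optional[str] = None,
              extra: Sequence[str] = ()) -> List[str]:
    """Assemble an fg-rain command line from the forms listed on the slide."""
    if layer is None and image is None:
        raise ValueError("specify a layer (iaas/paas/gaas), an image, or both")
    argv = ["fg-rain", "-h", hostfile]
    if layer is not None:
        if layer not in LAYER_FLAGS or not target:
            raise ValueError(f"expected one of {LAYER_FLAGS} with a target")
        argv += [f"-{layer}", target]
    if image is not None:
        argv += ["-image", image]
    argv += list(extra)  # stands in for the options elided on the slide
    return argv


def is_bare_metal(argv: Sequence[str]) -> bool:
    """No iaas/paas/gaas layer means the image goes onto bare metal,
    which the slide says requires additional authorization."""
    return not any(a in ("-iaas", "-paas", "-gaas") for a in argv)


cmd = rain_argv("hostfile", "iaas", "nimbus", image="img")
print(shlex.join(cmd))  # fg-rain -h hostfile -iaas nimbus -image img
```

On a machine where fg-rain is installed, the list could be handed to `subprocess.run(cmd, check=True)`; building an argument list rather than a shell string avoids quoting problems with hostfile or image names.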

FutureGrid Viral Growth Model

- Users apply for a project
- Users improve/develop some software in the project
- This project leads to new images which are placed in the FutureGrid repository
- Project report and other web pages document use of the new images
- Images are used by other users
- And so on ad infinitum …

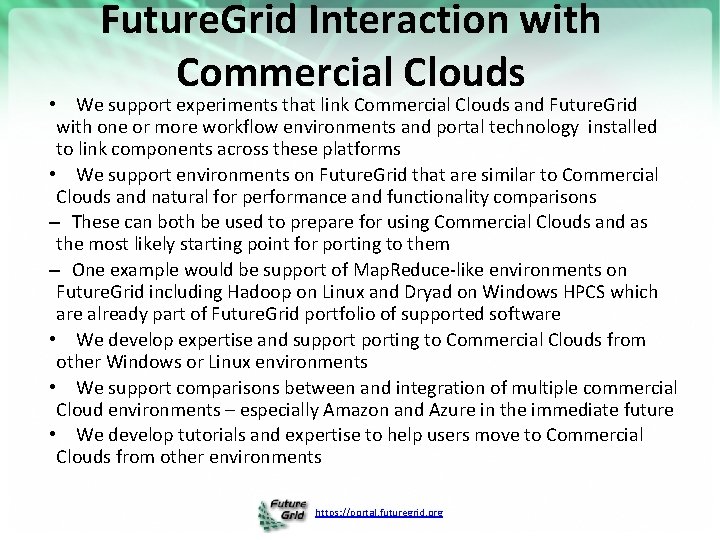

FutureGrid Interaction with Commercial Clouds

- We support experiments that link Commercial Clouds and FutureGrid, with one or more workflow environments and portal technology installed to link components across these platforms
- We support environments on FutureGrid that are similar to Commercial Clouds and natural for performance and functionality comparisons
  - These can be used both to prepare for using Commercial Clouds and as the most likely starting point for porting to them
  - One example would be support of MapReduce-like environments on FutureGrid, including Hadoop on Linux and Dryad on Windows HPCS, which are already part of the FutureGrid portfolio of supported software
- We develop expertise and support for moving to Commercial Clouds from other Windows or Linux environments
- We support comparisons between, and integration of, multiple commercial Cloud environments, especially Amazon and Azure in the immediate future
- We develop tutorials and expertise to help users move to Commercial Clouds from other environments

Management

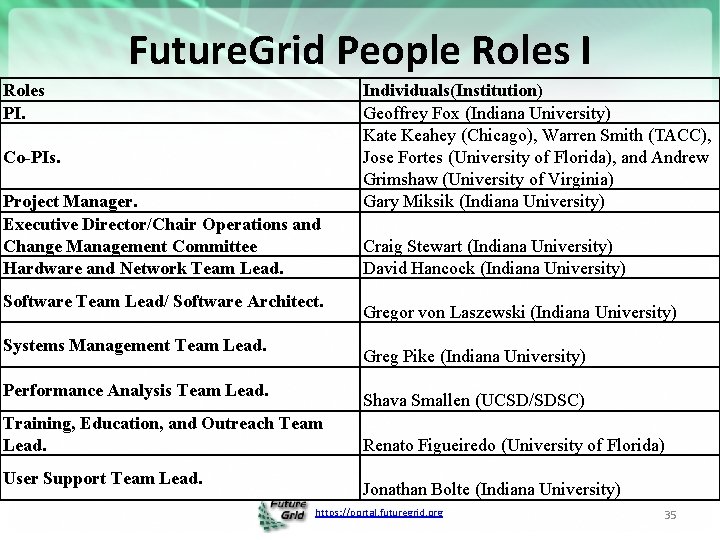

FutureGrid People Roles I

| Role | Individual (Institution) |
|---|---|
| PI | Geoffrey Fox (Indiana University) |
| Co-PIs | Kate Keahey (Chicago), Warren Smith (TACC), Jose Fortes (University of Florida), and Andrew Grimshaw (University of Virginia) |
| Project Manager | Gary Miksik (Indiana University) |
| Executive Director/Chair Operations and Change Management Committee | Craig Stewart (Indiana University) |
| Hardware and Network Team Lead | David Hancock (Indiana University) |
| Software Team Lead/Software Architect | Gregor von Laszewski (Indiana University) |
| Systems Management Team Lead | Greg Pike (Indiana University) |
| Performance Analysis Team Lead | Shava Smallen (UCSD/SDSC) |
| Training, Education, and Outreach Team Lead | Renato Figueiredo (University of Florida) |
| User Support Team Lead | Jonathan Bolte (Indiana University) |

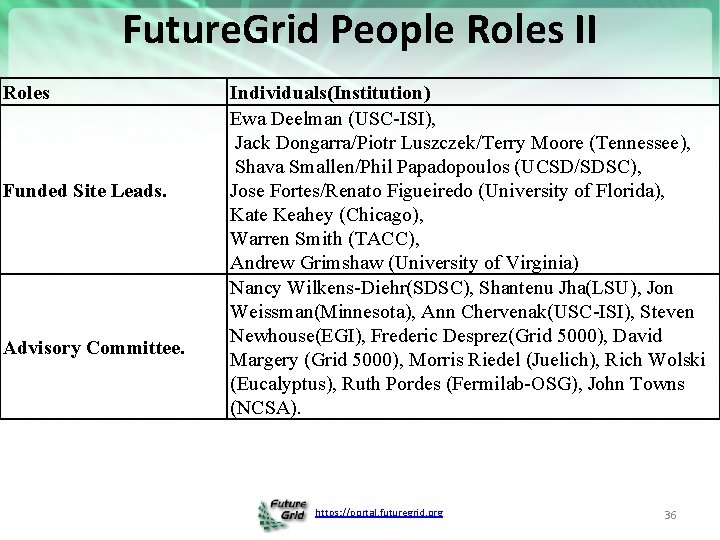

FutureGrid People Roles II

| Role | Individuals (Institution) |
|---|---|
| Funded Site Leads | Ewa Deelman (USC-ISI), Jack Dongarra/Piotr Luszczek/Terry Moore (Tennessee), Shava Smallen/Phil Papadopoulos (UCSD/SDSC), Jose Fortes/Renato Figueiredo (University of Florida), Kate Keahey (Chicago), Warren Smith (TACC), Andrew Grimshaw (University of Virginia) |
| Advisory Committee | Nancy Wilkins-Diehr (SDSC), Shantenu Jha (LSU), Jon Weissman (Minnesota), Ann Chervenak (USC-ISI), Steven Newhouse (EGI), Frederic Desprez (Grid'5000), David Margery (Grid'5000), Morris Riedel (Juelich), Rich Wolski (Eucalyptus), Ruth Pordes (Fermilab-OSG), John Towns (NCSA) |

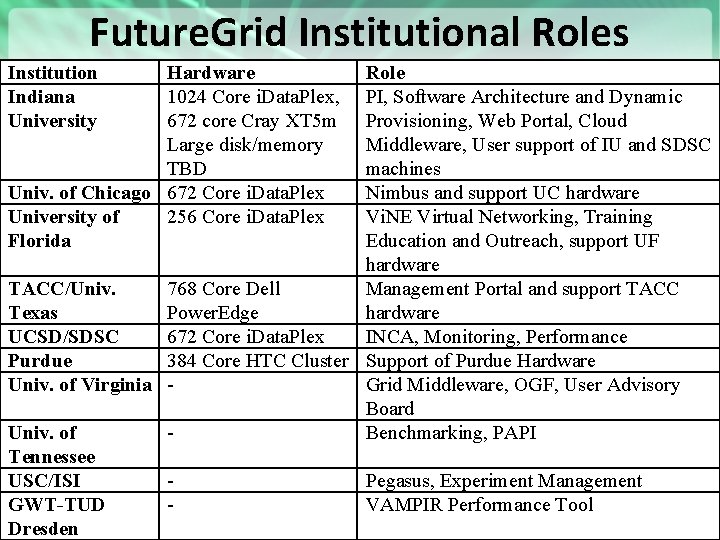

FutureGrid Institutional Roles

| Institution | Hardware | Role |
|---|---|---|
| Indiana University | 1024 Core iDataPlex, 672 Core Cray XT5m, Large disk/memory TBD | PI, Software Architecture and Dynamic Provisioning, Web Portal, Cloud Middleware, User support of IU and SDSC machines |
| Univ. of Chicago | 672 Core iDataPlex | Nimbus and support UC hardware |
| University of Florida | 256 Core iDataPlex | ViNe Virtual Networking, Training Education and Outreach, support UF hardware |
| TACC/Univ. Texas | 768 Core Dell PowerEdge | Management Portal and support TACC hardware |
| UCSD/SDSC | 672 Core iDataPlex | INCA, Monitoring, Performance |
| Purdue | 384 Core HTC Cluster | Support of Purdue Hardware |
| Univ. of Virginia | | Grid Middleware, OGF, User Advisory Board |
| Univ. of Tennessee | | Benchmarking, PAPI |
| USC/ISI | | Pegasus, Experiment Management |
| GWT-TUD Dresden | | VAMPIR Performance Tool |

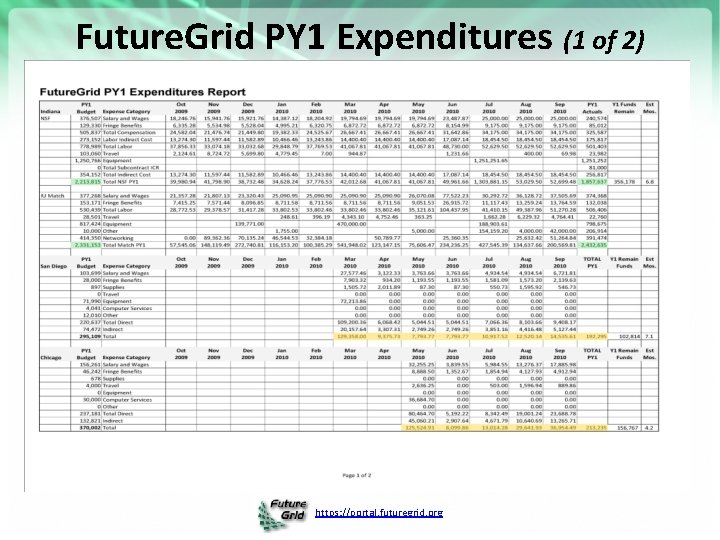

FutureGrid PY1 Expenditures (1 of 2)

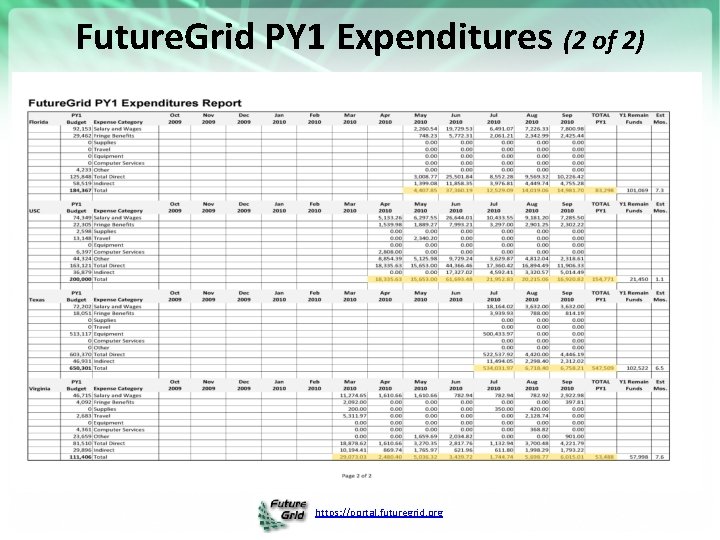

FutureGrid PY1 Expenditures (2 of 2)

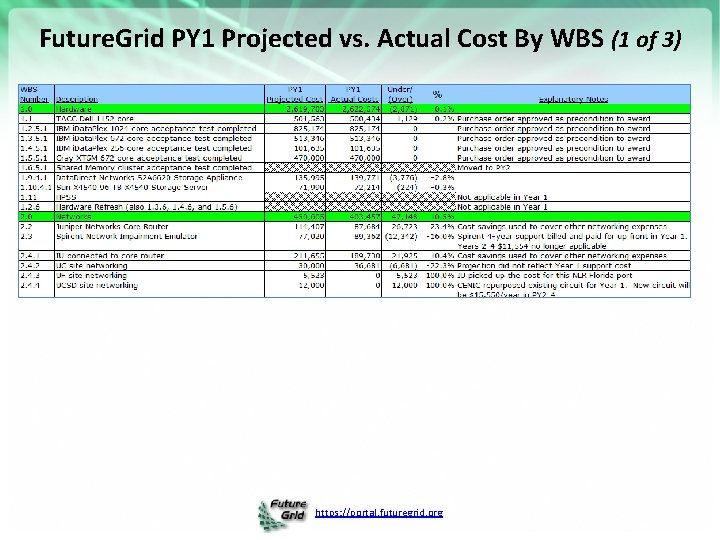

FutureGrid PY1 Projected vs. Actual Cost by WBS (1 of 3)

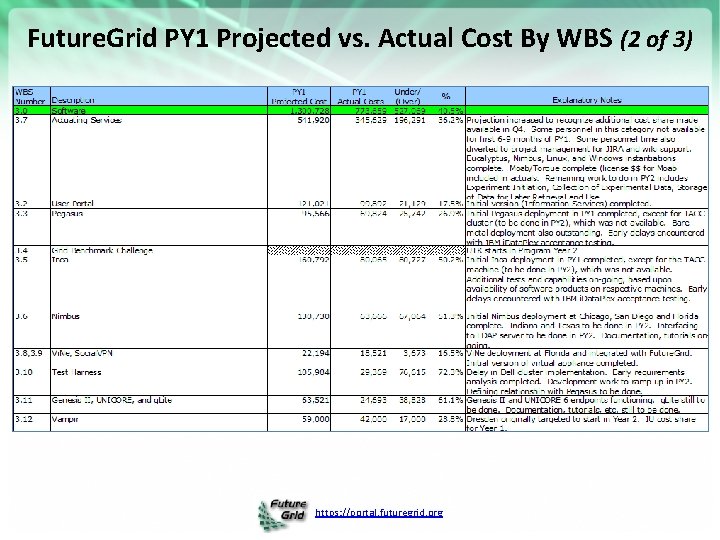

FutureGrid PY1 Projected vs. Actual Cost by WBS (2 of 3)

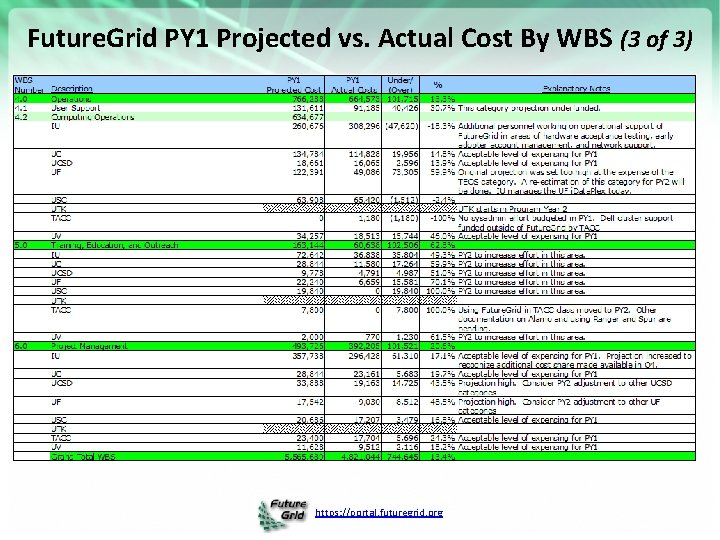

FutureGrid PY1 Projected vs. Actual Cost by WBS (3 of 3)

Grid'5000 and FutureGrid Collaboration, presented by Kate Keahey

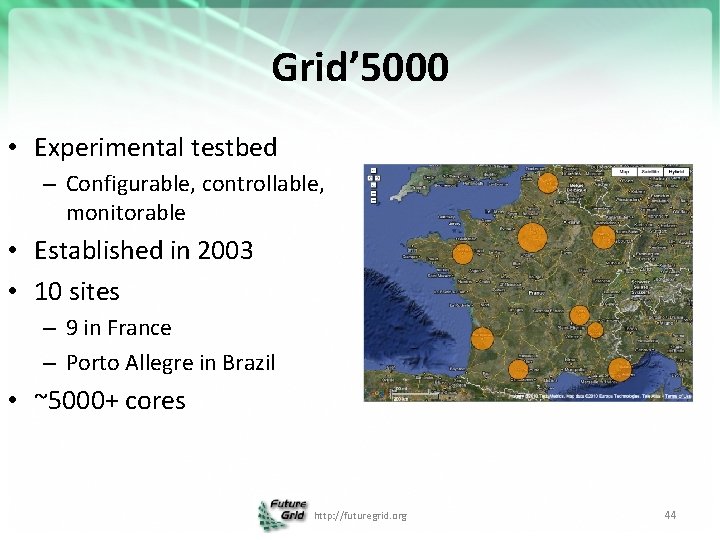

Grid'5000

- Experimental testbed
  - Configurable, controllable, monitorable
- Established in 2003
- 10 sites
  - 9 in France
  - Porto Alegre in Brazil
- ~5000+ cores

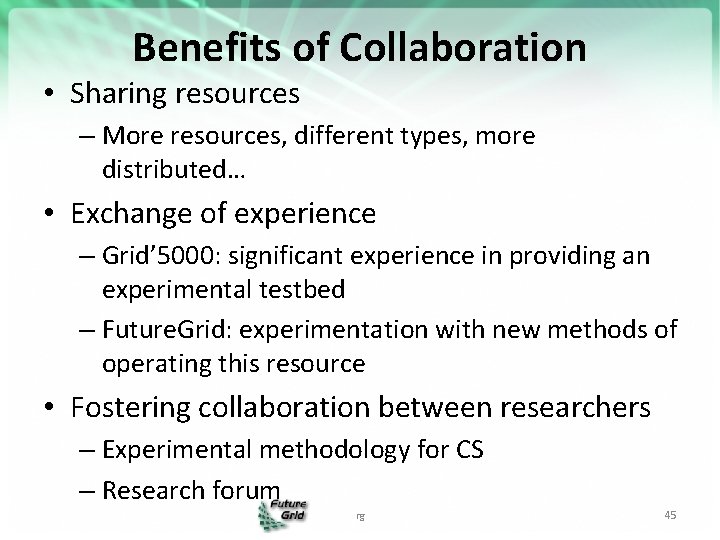

Benefits of Collaboration

- Sharing resources
  - More resources, different types, more distributed…
- Exchange of experience
  - Grid'5000: significant experience in providing an experimental testbed
  - FutureGrid: experimentation with new methods of operating this resource
- Fostering collaboration between researchers
  - Experimental methodology for CS
  - Research forum

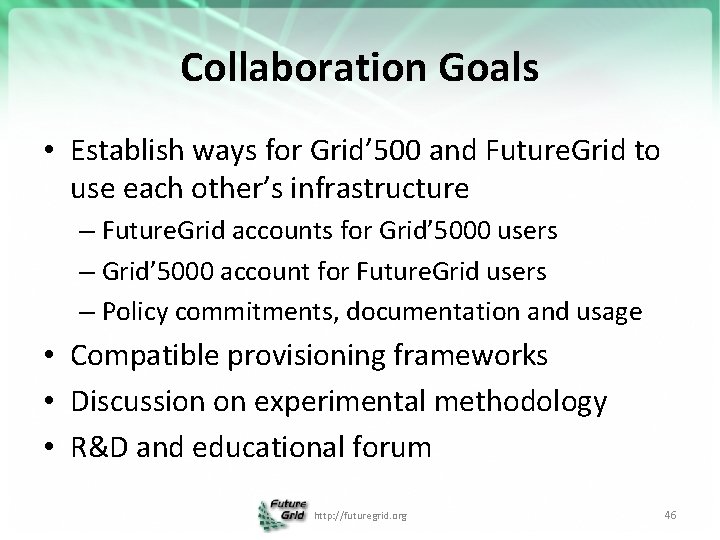

Collaboration Goals

- Establish ways for Grid'5000 and FutureGrid to use each other's infrastructure
  - FutureGrid accounts for Grid'5000 users
  - Grid'5000 accounts for FutureGrid users
  - Policy commitments, documentation and usage
- Compatible provisioning frameworks
- Discussion on experimental methodology
- R&D and educational forum

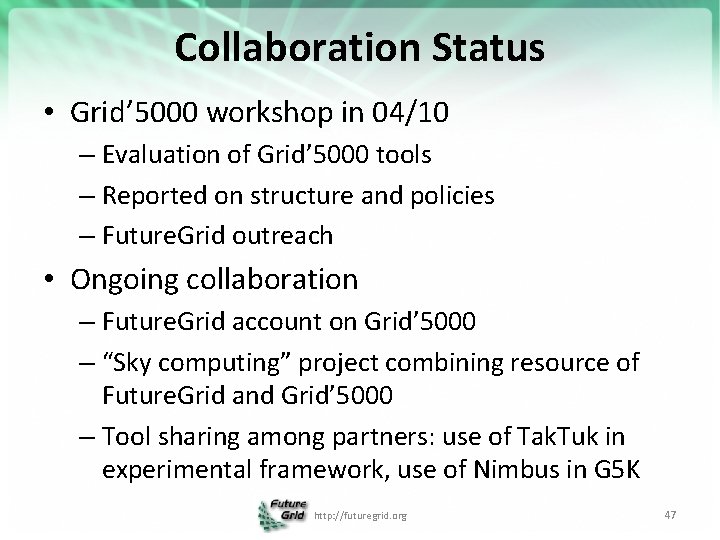

Collaboration Status

- Grid'5000 workshop in 04/10
  - Evaluation of Grid'5000 tools
  - Reported on structure and policies
  - FutureGrid outreach
- Ongoing collaboration
  - FutureGrid account on Grid'5000
  - "Sky computing" project combining resources of FutureGrid and Grid'5000
  - Tool sharing among partners: use of TakTuk in experimental framework, use of Nimbus in G5K

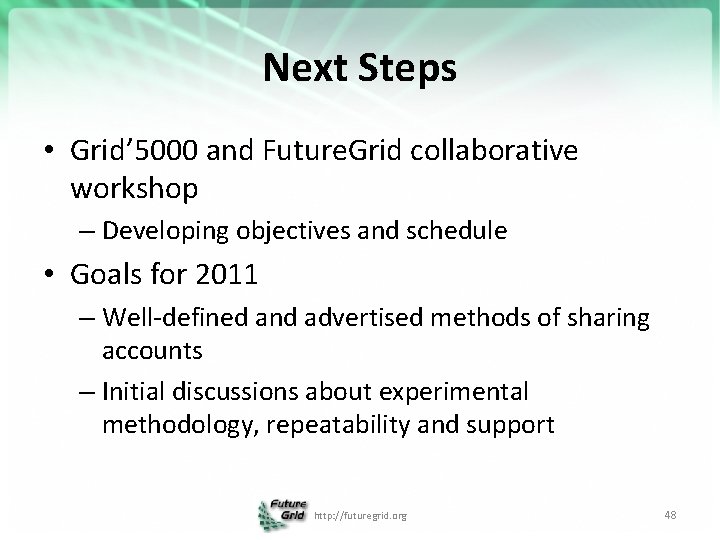

Next Steps

- Grid'5000 and FutureGrid collaborative workshop
  - Developing objectives and schedule
- Goals for 2011
  - Well-defined and advertised methods of sharing accounts
  - Initial discussions about experimental methodology, repeatability and support

Hardware



Logical Network Diagram

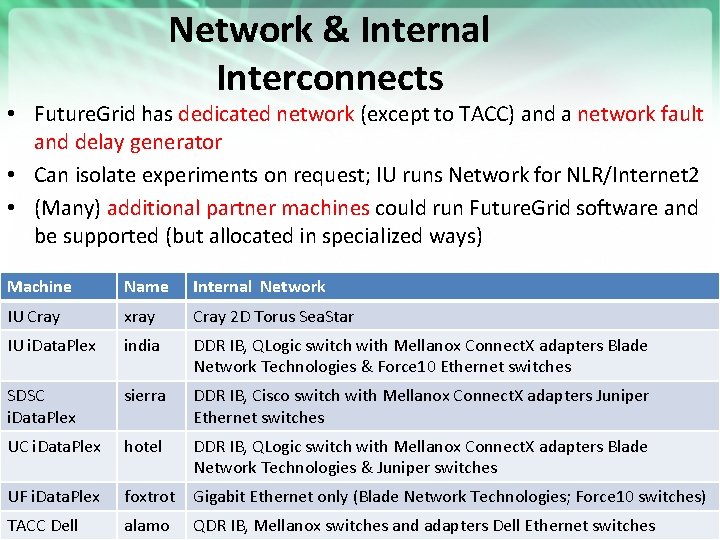

Network & Internal Interconnects

- FutureGrid has a dedicated network (except to TACC) and a network fault and delay generator
- Can isolate experiments on request; IU runs the network for NLR/Internet2
- (Many) additional partner machines could run FutureGrid software and be supported (but allocated in specialized ways)

| Machine | Name | Internal Network |
|---|---|---|
| IU Cray | xray | Cray 2D Torus SeaStar |
| IU iDataPlex | india | DDR IB, QLogic switch with Mellanox ConnectX adapters; Blade Network Technologies & Force10 Ethernet switches |
| SDSC iDataPlex | sierra | DDR IB, Cisco switch with Mellanox ConnectX adapters; Juniper Ethernet switches |
| UC iDataPlex | hotel | DDR IB, QLogic switch with Mellanox ConnectX adapters; Blade Network Technologies & Juniper switches |
| UF iDataPlex | foxtrot | Gigabit Ethernet only (Blade Network Technologies; Force10 switches) |
| TACC Dell | alamo | QDR IB, Mellanox switches and adapters; Dell Ethernet switches |

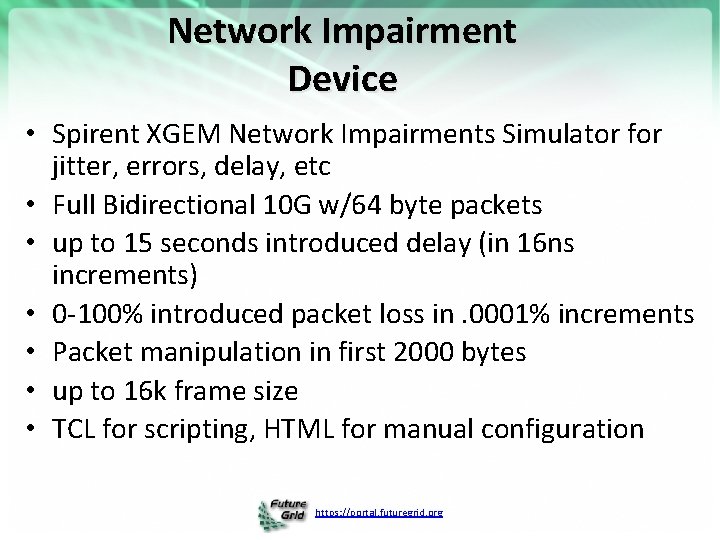

Network Impairment Device

- Spirent XGEM Network Impairments Simulator for jitter, errors, delay, etc.
- Full bidirectional 10G with 64-byte packets
- Up to 15 seconds introduced delay (in 16 ns increments)
- 0-100% introduced packet loss in 0.0001% increments
- Packet manipulation in first 2000 bytes
- Up to 16k frame size
- TCL for scripting, HTML for manual configuration
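The resolution figures translate directly into the settings the device can represent: 15 s in 16 ns steps is 937,500,000 delay steps, and 0.0001% loss steps give 1,000,000 steps up to 100%. The Python sketch below rounds a requested delay and loss rate onto those grids. It is plain arithmetic, not the Spirent TCL or HTML interface, and it assumes the device rounds to the nearest step and clamps out-of-range values; the function names are illustrative.

```python
from decimal import Decimal, ROUND_HALF_UP

DELAY_STEP_NS = 16              # delay resolution from the slide
MAX_DELAY_NS = 15 * 10**9       # up to 15 seconds of introduced delay
LOSS_STEP = Decimal("0.0001")   # packet-loss resolution, in percent


def quantize_delay_ns(requested_ns: int) -> int:
    """Round a requested delay to the nearest 16 ns step, clamped to 0..15 s."""
    requested_ns = max(requested_ns, 0)
    steps = (requested_ns + DELAY_STEP_NS // 2) // DELAY_STEP_NS  # round half up
    return min(steps * DELAY_STEP_NS, MAX_DELAY_NS)


def quantize_loss_percent(percent: str) -> Decimal:
    """Round a requested loss rate to 0.0001% steps, clamped to 0..100%."""
    q = Decimal(percent).quantize(LOSS_STEP, rounding=ROUND_HALF_UP)
    return min(max(q, Decimal(0)), Decimal(100))


print(MAX_DELAY_NS // DELAY_STEP_NS)      # 937500000
print(int(Decimal(100) / LOSS_STEP))      # 1000000
print(quantize_delay_ns(25))              # 32
print(quantize_loss_percent("12.34567"))  # 12.3457
```

Integer nanoseconds and `Decimal` percentages keep the rounding exact; binary floats would misrepresent steps such as 0.0001%.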

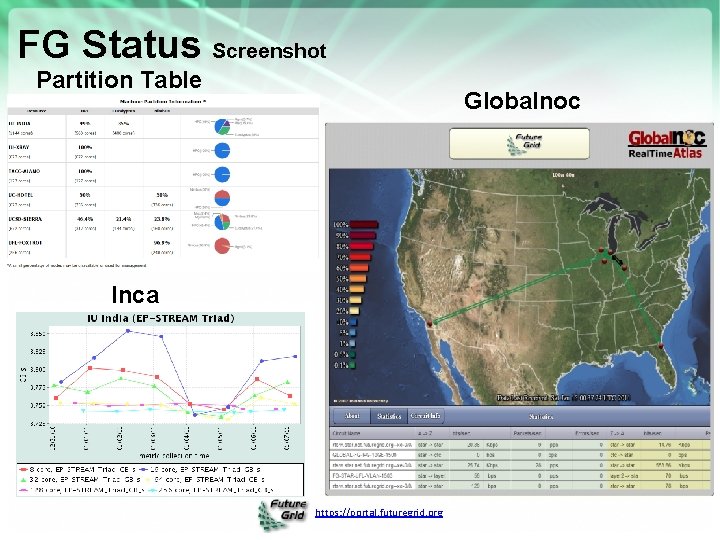

FG Status Screenshot

Figure panels: Partition Table, GlobalNOC, Inca.

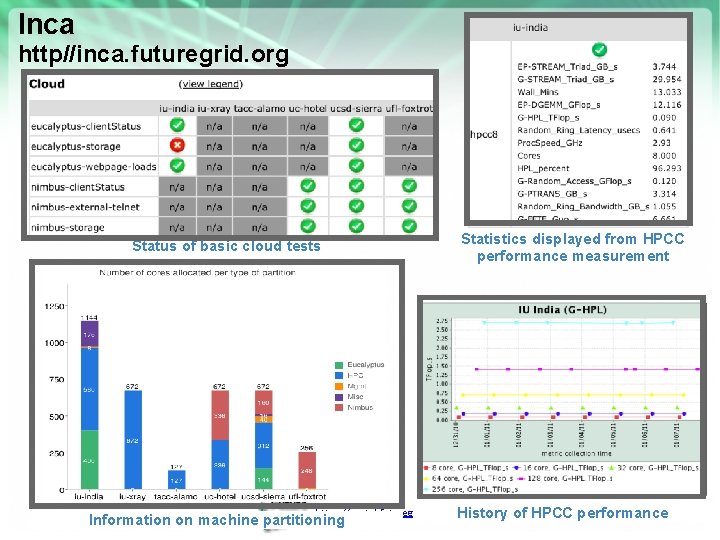

Inca (http://inca.futuregrid.org)

Figure panels: status of basic cloud tests; information on machine partitioning; statistics displayed from HPCC performance measurement; history of HPCC performance.

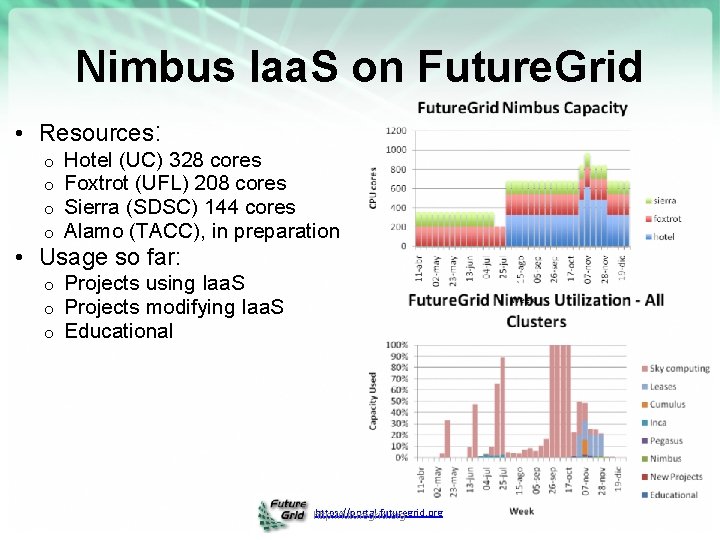

Nimbus IaaS on FutureGrid

- Resources:
  - Hotel (UC): 328 cores
  - Foxtrot (UFL): 208 cores
  - Sierra (SDSC): 144 cores
  - Alamo (TACC): in preparation
- Usage so far (figure): projects using IaaS, projects modifying IaaS, educational

Hardware Upgrades

- Funding available for a refresh in PY3: $400K
- Funding for a core system that was originally a shared memory system (mimicking Pittsburgh Track II): $448K
  - Current suggestion is a data-intensive cluster with each node having 8 cores, 192 GB memory, 12 TB disk and InfiniBand interconnect
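To see why the suggested node counts as "data intensive", its memory per core can be compared with the operational systems in the Compute Hardware table. This is plain arithmetic on the slide figures; pairing each system with its machine name follows the interconnect table, and the per-core framing is ours rather than the slide's.

```python
# (total RAM in GB, cores) for the operational systems in the Compute Hardware
# table; machine names come from the internal interconnect table.
SYSTEMS = {
    "india (IU iDataPlex)": (3072, 1024),
    "alamo (TACC Dell PowerEdge)": (1152, 768),
    "hotel (UC iDataPlex)": (2016, 672),
    "sierra (SDSC iDataPlex)": (2688, 672),
    "xray (IU Cray XT5m)": (1344, 672),
    "foxtrot (UF iDataPlex)": (768, 256),
}

# Proposed data-intensive node from the Hardware Upgrades slide.
NODE_CORES, NODE_RAM_GB, NODE_DISK_TB = 8, 192, 12


def gb_per_core(ram_gb, cores):
    """Average memory available to each core."""
    return ram_gb / cores


for name, (ram, cores) in SYSTEMS.items():
    print(f"{name}: {gb_per_core(ram, cores):.1f} GB/core")

proposed = gb_per_core(NODE_RAM_GB, NODE_CORES)
best_existing = max(gb_per_core(r, c) for r, c in SYSTEMS.values())
print(f"proposed node: {proposed:.1f} GB/core, "
      f"{NODE_DISK_TB / NODE_CORES:.1f} TB disk/core")
print(f"{proposed / best_existing:.0f}x the best existing system")  # 6x
```

The proposed node offers 24 GB per core against at most 4 GB per core (sierra) on the current systems, plus 1.5 TB of local disk per core.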

Strengths, Weaknesses, Opportunities and Threats to FutureGrid

FutureGrid SWOT

- Difference from TeraGrid/XD/DEISA/EGI implies a need to develop processes and software from scratch
- Newness implies a need to explain why it's useful!
- High "user support" load
- Software is Infrastructure and must be approached as such
- Rich skill base from distributed team
- Lots of new education and outreach opportunities
- 5 interesting use categories: TEO, Interoperability, Domain applications, CS Middleware, System Evaluation
- Tremendous student interest in all parts of FutureGrid can be tapped to help support & software development
