FutureGrid Computing Testbed as a Service Details

July 3 2013
Geoffrey Fox for the FutureGrid Team
gcf@indiana.edu
http://www.infomall.org
http://www.futuregrid.org
School of Informatics and Computing, Digital Science Center, Indiana University Bloomington
https://portal.futuregrid.org

Topics Covered
• Current Status
• Recap Overview
• Details of Hardware
• More sample FutureGrid Projects
• ScaleMP
• High Performance Cloud Infrastructure?
• Details – XSEDE Testing and FutureGrid
• Relation of FutureGrid to other Projects
• MOOCs
• Services
• FutureGrid Futures
• Cloudmesh TestbedaaS Tool
• Security in FutureGrid
• Details of Image Generation on FutureGrid
• Details of Monitoring on FutureGrid
• Appliances available on FutureGrid

Current Status

Basic Status
• FutureGrid has been running for 3 years
  – 339 projects and 2009 users as of September 13 2013
• Funding available through September 30, 2014 with a No Cost Extension, which can be submitted in mid August (45 days prior to the formal expiration of the grant)
• Participated in Computer Science activities (call for white papers and presentation to CISE director)
• Participated in OCI solicitations
• Pursuing GENI collaborations

Technology
• OpenStack is becoming the best open source virtual machine management environment
  – Also more reliable than previous versions of OpenStack and Eucalyptus
  – Nimbus is switching to an OpenStack core, with projects like Phantom
  – In the past, Nimbus was essential as the only reliable open source VM manager
• XSEDE integration has made major progress; 80% complete
• These improvements will allow much greater focus on TestbedaaS software
• Solicitations motivated adding “On-ramp” capabilities: develop code on FutureGrid
  – Then burst or shift to other cloud or HPC systems (Cloudmesh)

Assumptions
• “Democratic” support of Clouds and HPC is likely to be important
• As a testbed, offer bare metal or clouds on a given node
• Run HPC systems with tools similar to those for clouds, enabling HPC bursting as well as Cloud bursting
• Define images by templates that can be built for different HPC and cloud environments
• Education integration is important (MOOCs)

Recap Overview

FutureGrid Testbed as a Service
• FutureGrid is part of XSEDE, set up as a testbed with a cloud focus
• Operational since Summer 2010 (i.e. coming to the end of its third year of use)
• The FutureGrid testbed provides to its users:
  – Support of Computer Science and Computational Science research
  – A flexible development and testing platform for middleware and application users looking at interoperability, functionality, performance or evaluation
  – FutureGrid is user-customizable, accessed interactively, and supports Grid, Cloud and HPC software with and without VMs
  – A rich education and teaching platform for classes
• Offers OpenStack, Eucalyptus, Nimbus, OpenNebula, HPC (MPI) on the same hardware, moving to software defined systems; supports both classic HPC and Cloud storage

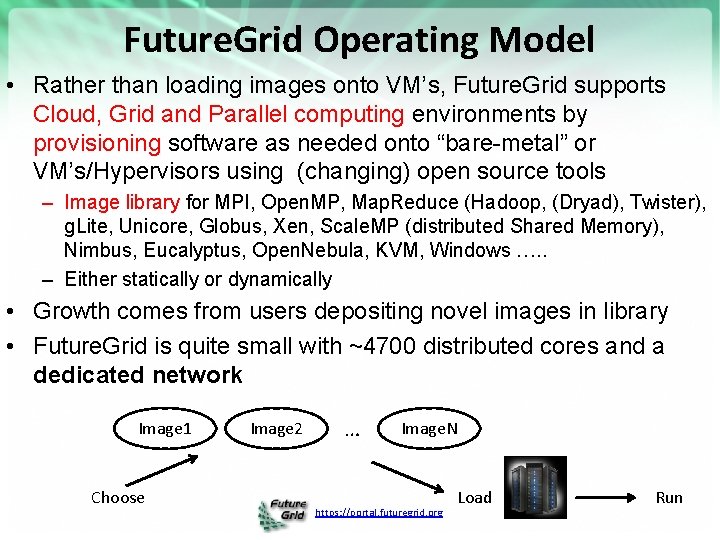

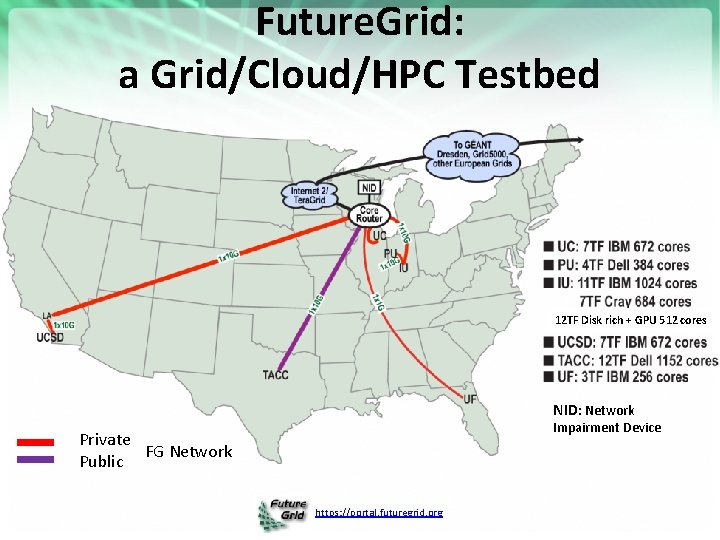

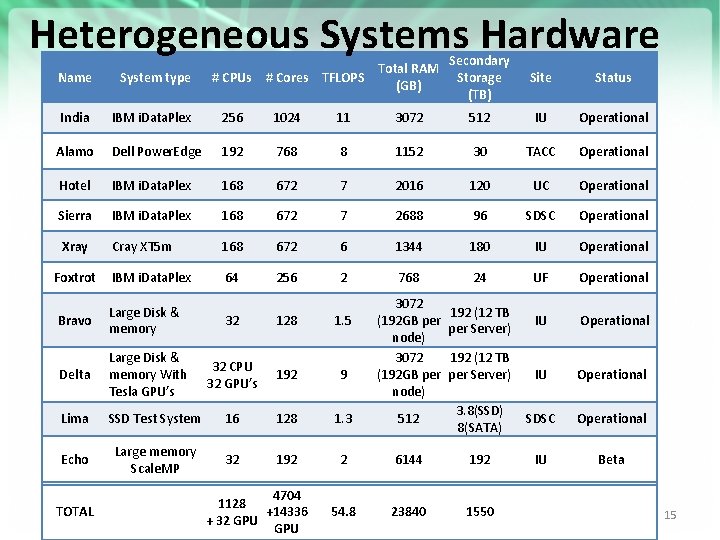

FutureGrid Operating Model
• Rather than loading images onto VMs, FutureGrid supports Cloud, Grid and Parallel computing environments by provisioning software as needed onto “bare-metal” or VMs/Hypervisors using (changing) open source tools
  – Image library for MPI, OpenMP, MapReduce (Hadoop, (Dryad), Twister), gLite, Unicore, Globus, Xen, ScaleMP (distributed Shared Memory), Nimbus, Eucalyptus, OpenNebula, KVM, Windows …
  – Either statically or dynamically
• Growth comes from users depositing novel images in the library
• FutureGrid is quite small, with ~4700 distributed cores and a dedicated network
[Figure: Image1, Image2 … ImageN → Choose → Load → Run]
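The operating model (users deposit images in a library; an image is then chosen, loaded onto bare metal or a hypervisor, and run) can be illustrated with a small registry. This is a minimal Python sketch under stated assumptions, not FutureGrid's implementation: `Image`, `ImageLibrary`, the node name `i1` and the target labels are all hypothetical, and `load` only returns a description rather than provisioning anything.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Image:
    """Hypothetical library entry: a software stack plus where it may be loaded."""
    name: str
    stack: tuple          # e.g. ("MPI", "OpenMP")
    targets: frozenset    # e.g. {"bare-metal", "kvm", "xen"}


@dataclass
class ImageLibrary:
    """Hypothetical image library; it grows as users deposit images."""
    images: dict = field(default_factory=dict)

    def deposit(self, image: Image) -> None:
        if image.name in self.images:
            raise ValueError(f"image {image.name!r} already exists")
        self.images[image.name] = image

    def choose(self, name: str) -> Image:
        return self.images[name]

    def load(self, name: str, node: str, target: str) -> str:
        """'Load' an image onto a node; returns a description instead of provisioning."""
        image = self.choose(name)
        if target not in image.targets:
            raise ValueError(f"{name!r} cannot be loaded onto {target!r}")
        return f"{node}: {target} running {'+'.join(image.stack)} ({name})"


library = ImageLibrary()
library.deposit(Image("hpc-mpi", ("MPI", "OpenMP"), frozenset({"bare-metal", "kvm"})))
library.deposit(Image("hadoop", ("Hadoop", "HDFS"), frozenset({"kvm", "xen"})))
print(library.load("hpc-mpi", "i1", "bare-metal"))
```

Keeping the allowed targets on each image lets the same library serve both bare-metal and VM requests while rejecting combinations the depositor never built for.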

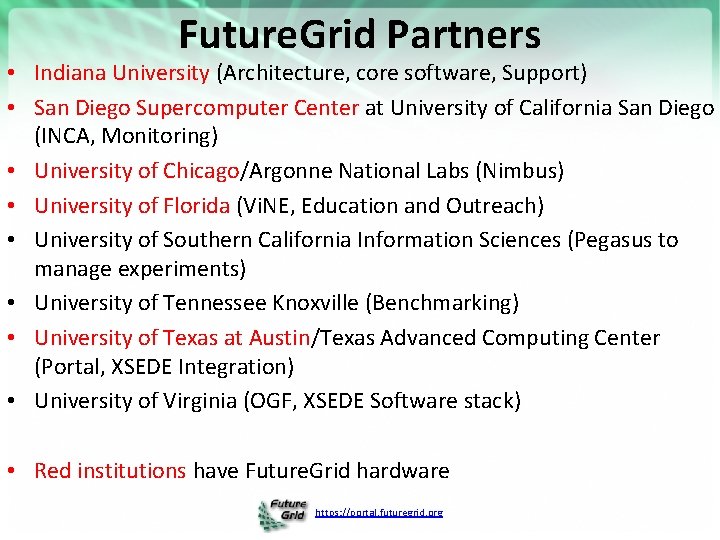

FutureGrid Partners
• Indiana University (Architecture, core software, Support)
• San Diego Supercomputer Center at University of California San Diego (INCA, Monitoring)
• University of Chicago/Argonne National Labs (Nimbus)
• University of Florida (ViNe, Education and Outreach)
• University of Southern California Information Sciences (Pegasus to manage experiments)
• University of Tennessee Knoxville (Benchmarking)
• University of Texas at Austin/Texas Advanced Computing Center (Portal, XSEDE Integration)
• University of Virginia (OGF, XSEDE Software stack)
• Red institutions have FutureGrid hardware

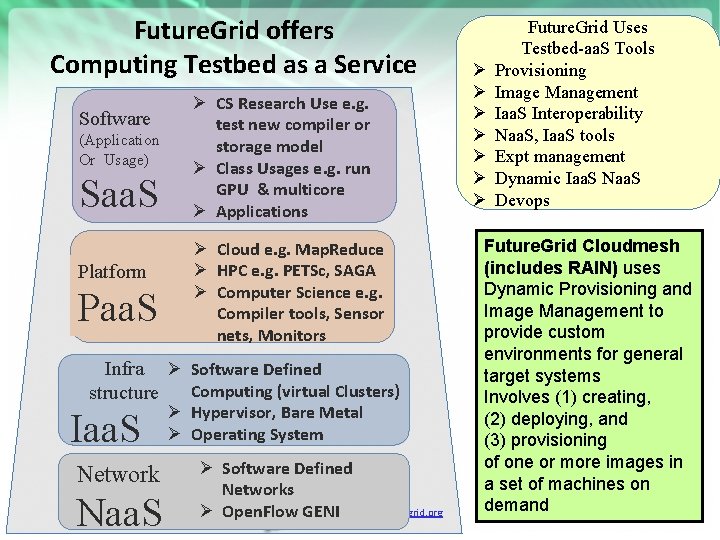

FutureGrid offers Computing Testbed as a Service
Software (Application or Usage): SaaS
  – CS Research Use e.g. test new compiler or storage model
  – Class Usages e.g. run GPU & multicore
  – Applications
Platform: PaaS
  – Cloud e.g. MapReduce
  – HPC e.g. PETSc, SAGA
  – Computer Science e.g. Compiler tools, Sensor nets, Monitors
Infrastructure: IaaS
  – Software Defined Computing (virtual Clusters)
  – Hypervisor, Bare Metal
  – Operating System
Network: NaaS
  – Software Defined Networks
  – OpenFlow, GENI
FutureGrid Uses Testbed-aaS Tools
  – Provisioning
  – Image Management
  – IaaS Interoperability
  – NaaS, IaaS tools
  – Expt management
  – Dynamic IaaS NaaS
  – Devops
FutureGrid Cloudmesh (includes RAIN) uses Dynamic Provisioning and Image Management to provide custom environments for general target systems. This involves (1) creating, (2) deploying, and (3) provisioning of one or more images in a set of machines on demand.
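The three RAIN steps, (1) create, (2) deploy and (3) provision, can be sketched as three functions applied to a generic template. This assumes, purely for illustration, that a template is a base OS plus a package list and that targets differ only in image format; `Template`, `TARGET_FORMATS`, `create`, `deploy` and `provision` are hypothetical names, not the RAIN or Cloudmesh API.

```python
from dataclasses import dataclass

# Hypothetical: the image format each target environment expects.
TARGET_FORMATS = {"openstack": "qcow2", "eucalyptus": "emi", "bare-metal": "netboot"}


@dataclass(frozen=True)
class Template:
    """Hypothetical generic image template: a base OS plus packages."""
    os: str
    packages: tuple


def create(template: Template, target: str) -> dict:
    """Step 1: build a target-specific image from the generic template."""
    fmt = TARGET_FORMATS[target]
    image_id = f"{template.os}-{'-'.join(template.packages)}.{fmt}"
    return {"id": image_id, "target": target}


def deploy(image: dict, repository: dict) -> str:
    """Step 2: register the image so the target environment can find it."""
    repository[image["id"]] = image
    return image["id"]


def provision(image_id: str, repository: dict, machines: list) -> dict:
    """Step 3: assign the deployed image to each requested machine, on demand."""
    if image_id not in repository:
        raise KeyError(f"{image_id} has not been deployed")
    return {machine: image_id for machine in machines}


repo = {}
template = Template("centos6", ("openmpi", "hadoop"))
image_id = deploy(create(template, "openstack"), repo)
print(provision(image_id, repo, ["vm1", "vm2"]))
```

Because only `create` knows about targets, retargeting the same template (for example to `"bare-metal"`) changes one argument and leaves the deploy and provision steps untouched.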

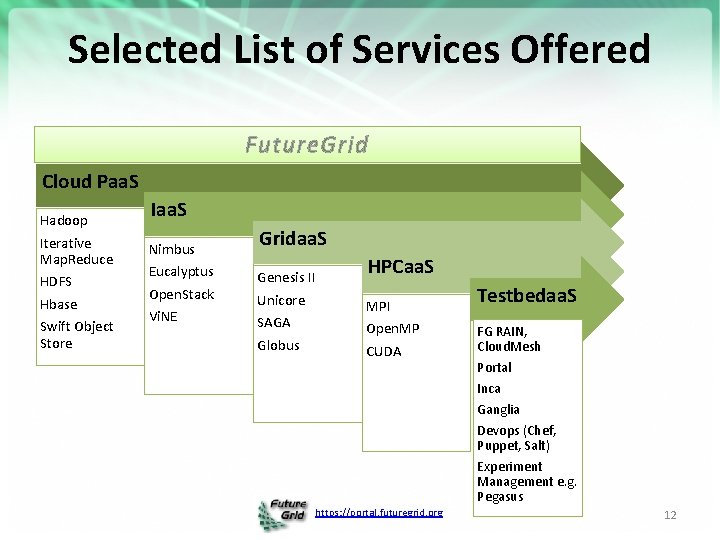

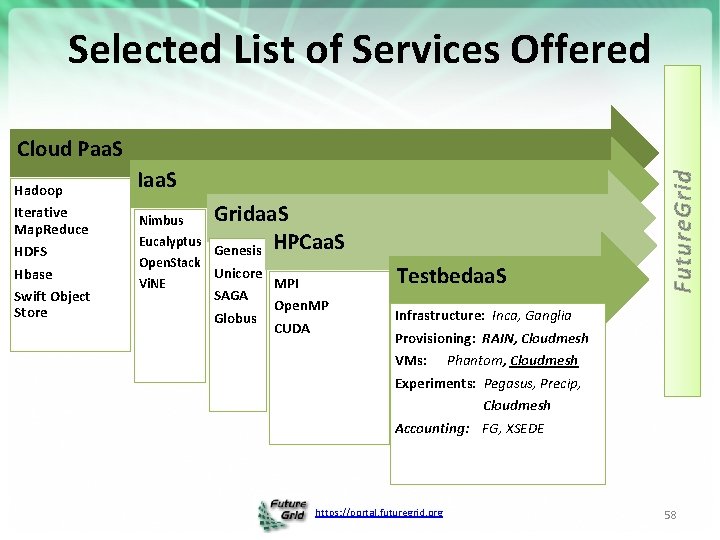

Selected List of Services Offered
FutureGrid
• Cloud PaaS: Hadoop, Iterative MapReduce, HDFS, HBase, Swift Object Store
• IaaS: Nimbus, Eucalyptus, OpenStack, ViNe
• GridaaS: Genesis II, Unicore, SAGA, Globus
• HPCaaS: MPI, OpenMP, CUDA
• TestbedaaS: FG RAIN, CloudMesh, Portal, Inca, Ganglia, Devops (Chef, Puppet, Salt), Experiment Management e.g. Pegasus

Hardware (Systems) Details

FutureGrid: a Grid/Cloud/HPC Testbed
[Figure: FutureGrid sites and networks; labels include “12 TF Disk rich + GPU 512 cores”, “NID: Network Impairment Device”, and the Private FG Network vs the Public network]

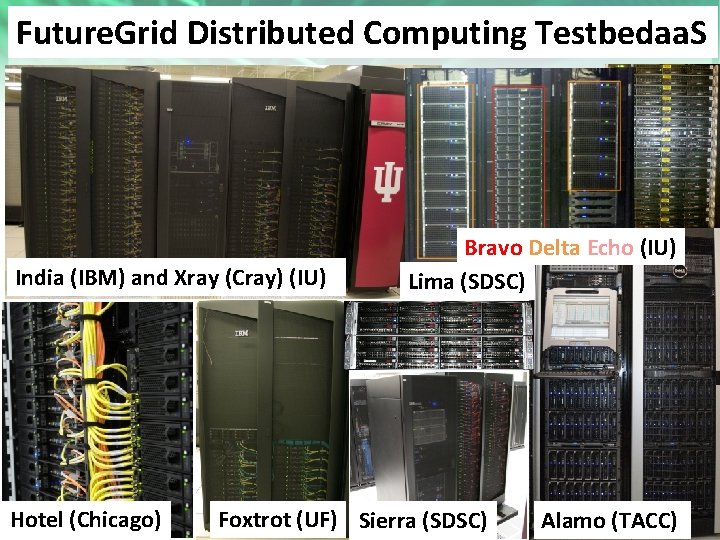

Heterogeneous Systems Hardware

| Name | System type | # CPUs | # Cores | TFLOPS | Total RAM (GB) | Secondary Storage (TB) | Site | Status |
|---|---|---|---|---|---|---|---|---|
| India | IBM iDataPlex | 256 | 1024 | 11 | 3072 | 512 | IU | Operational |
| Alamo | Dell PowerEdge | 192 | 768 | 8 | 1152 | 30 | TACC | Operational |
| Hotel | IBM iDataPlex | 168 | 672 | 7 | 2016 | 120 | UC | Operational |
| Sierra | IBM iDataPlex | 168 | 672 | 7 | 2688 | 96 | SDSC | Operational |
| Xray | Cray XT5m | 168 | 672 | 6 | 1344 | 180 | IU | Operational |
| Foxtrot | IBM iDataPlex | 64 | 256 | 2 | 768 | 24 | UF | Operational |
| Bravo | Large Disk & memory | 32 | 128 | 1.5 | 3072 (192 GB per node) | 192 (12 TB per Server) | IU | Operational |
| Delta | Large Disk & memory with Tesla GPUs | 32 CPUs + 32 GPUs | 192 | 9 | 3072 (192 GB per node) | 192 (12 TB per Server) | IU | Operational |
| Lima | SSD Test System | 16 | 128 | 1.3 | 512 | 3.8 (SSD) + 8 (SATA) | SDSC | Operational |
| Echo | Large memory ScaleMP | 32 | 192 | 2 | 6144 | 192 | IU | Beta |
| TOTAL | | 1128 + 32 GPU | 4704 + 14336 GPU cores | 54.8 | 23840 | 1550 | | |
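As a consistency check on the hardware table, the per-system figures can be summed and compared with the TOTAL row. The only outside assumption is 448 CUDA cores per Tesla C2075, which is what makes Delta's 32 GPUs come to the 14336 GPU cores listed; Lima's storage is counted as 3.8 TB SSD plus 8 TB SATA.

```python
# (CPUs, cores, TFLOPS, RAM in GB, secondary storage in TB) per system, from the table.
SYSTEMS = {
    "India":   (256, 1024, 11.0, 3072, 512),
    "Alamo":   (192,  768,  8.0, 1152,  30),
    "Hotel":   (168,  672,  7.0, 2016, 120),
    "Sierra":  (168,  672,  7.0, 2688,  96),
    "Xray":    (168,  672,  6.0, 1344, 180),
    "Foxtrot": ( 64,  256,  2.0,  768,  24),
    "Bravo":   ( 32,  128,  1.5, 3072, 192),
    "Delta":   ( 32,  192,  9.0, 3072, 192),
    "Lima":    ( 16,  128,  1.3,  512, 3.8 + 8),  # SSD + SATA
    "Echo":    ( 32,  192,  2.0, 6144, 192),
}

# Sum each column across all systems.
cpus, cores, tflops, ram_gb, storage_tb = (sum(col) for col in zip(*SYSTEMS.values()))

# Assumption: 448 CUDA cores per Tesla C2075 GPU, 32 GPUs in Delta.
gpu_cores = 32 * 448

print(cpus, cores, round(tflops, 1), ram_gb, round(storage_tb), gpu_cores)
```

Every CPU-side total in the table is reproduced exactly, with storage only off by rounding (1549.8 TB reported as 1550).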

FutureGrid Distributed Computing TestbedaaS
[Figure: map of FutureGrid sites: India (IBM) and Xray (Cray) (IU); Bravo, Delta, Echo (IU); Hotel (Chicago); Lima (SDSC); Sierra (SDSC); Foxtrot (UF); Alamo (TACC)]

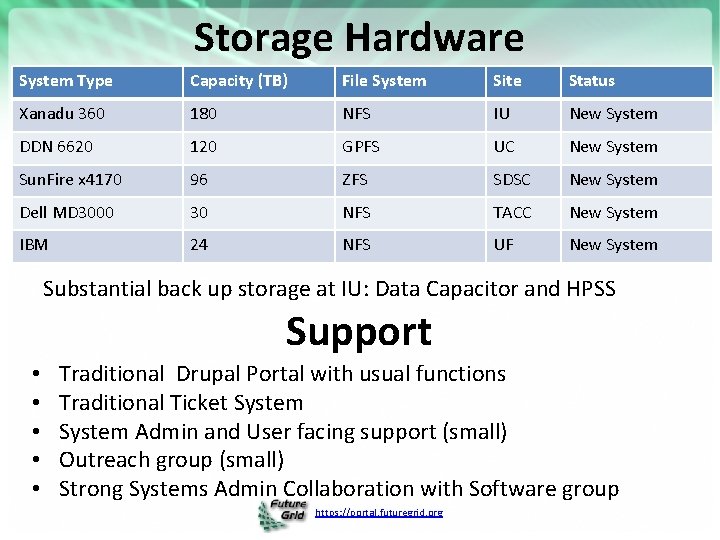

Storage Hardware

| System Type | Capacity (TB) | File System | Site | Status |
|---|---|---|---|---|
| Xanadu 360 | 180 | NFS | IU | New System |
| DDN 6620 | 120 | GPFS | UC | New System |
| SunFire x4170 | 96 | ZFS | SDSC | New System |
| Dell MD3000 | 30 | NFS | TACC | New System |
| IBM | 24 | NFS | UF | New System |

Substantial backup storage at IU: Data Capacitor and HPSS

Support
• Traditional Drupal Portal with usual functions
• Traditional Ticket System
• Admin and User facing support (small)
• Outreach group (small)
• Strong Systems Admin Collaboration with Software group

More Example Projects

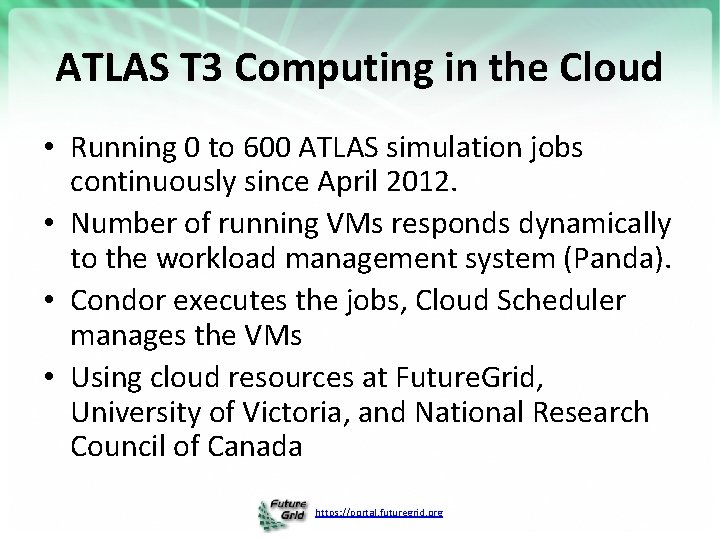

ATLAS T3 Computing in the Cloud
• Running 0 to 600 ATLAS simulation jobs continuously since April 2012
• Number of running VMs responds dynamically to the workload management system (Panda)
• Condor executes the jobs; Cloud Scheduler manages the VMs
• Using cloud resources at FutureGrid, University of Victoria, and National Research Council of Canada
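The dynamic behaviour described above, where the number of running VMs follows the workload, can be sketched as a sizing rule. This is a hypothetical policy assuming one job per core, a fixed number of cores per VM and a cap on VMs; it is not the actual algorithm used by Cloud Scheduler or Panda.

```python
import math


def target_vm_count(queued_jobs: int, running_jobs: int,
                    cores_per_vm: int, max_vms: int) -> int:
    """Return how many VMs to keep booted for the current workload.

    Assumes one job per core, so demand in cores equals the job count.
    """
    demand = queued_jobs + running_jobs
    needed = math.ceil(demand / cores_per_vm)
    return min(needed, max_vms)   # never exceed the cloud allocation


def scaling_action(current_vms: int, target: int) -> str:
    """Translate the target into a boot/retire decision for the VM manager."""
    if target > current_vms:
        return f"boot {target - current_vms}"
    if target < current_vms:
        return f"retire {current_vms - target}"
    return "hold"


print(scaling_action(20, target_vm_count(150, 50, cores_per_vm=8, max_vms=100)))
```

With an empty queue the rule drops to zero VMs, which mirrors the "0 to 600 jobs" range: capacity is released when the workload management system has nothing to run.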

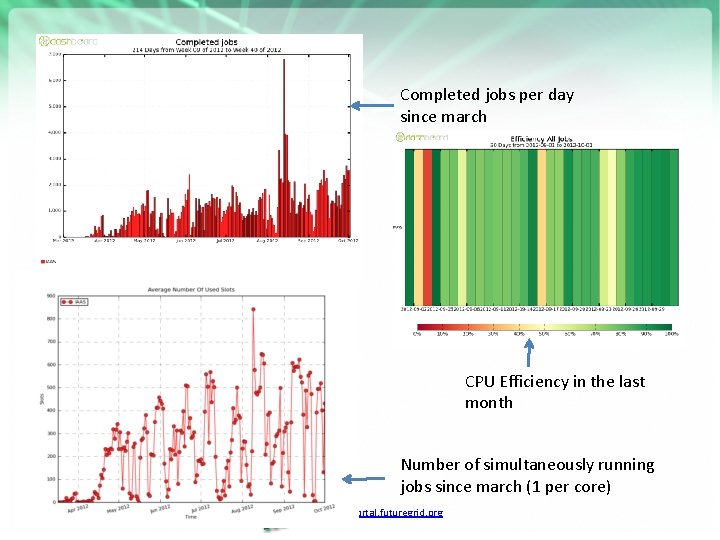

[Figure panels: Completed jobs per day since March; CPU efficiency in the last month; Number of simultaneously running jobs since March (1 per core)]

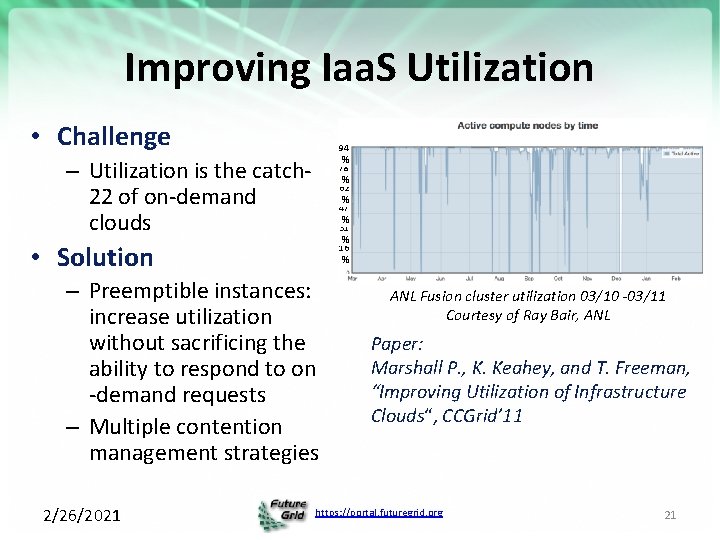

Improving IaaS Utilization
• Challenge
  – Utilization is the catch-22 of on-demand clouds
• Solution
  – Preemptible instances: increase utilization without sacrificing the ability to respond to on-demand requests
  – Multiple contention management strategies
[Figure: ANL Fusion cluster utilization 03/10-03/11 (utilization axis from 16% to 94%). Courtesy of Ray Bair, ANL]
Paper: P. Marshall, K. Keahey, and T. Freeman, “Improving Utilization of Infrastructure Clouds”, CCGrid’11
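A toy discrete-time model shows why preemptible instances raise utilization: on-demand requests always get cores first, and whatever is left idle is backfilled from a queue of preemptible work. The trace, capacity and backlog below are invented, and this simple priority rule stands in for, rather than reproduces, the contention management strategies in the CCGrid’11 paper.

```python
def simulate(capacity: int, on_demand: list, preemptible_backlog: int,
             preemption: bool) -> float:
    """Return average utilization (0..1) over an on-demand load trace.

    on_demand[t] is the number of cores requested on demand at step t;
    preemptible_backlog is the number of preemptible core-steps waiting.
    """
    used = 0
    backlog = preemptible_backlog
    for demand in on_demand:
        served = min(demand, capacity)      # on-demand requests take priority
        spare = capacity - served
        # Preemptible work only fills cores left idle in this step, so an
        # on-demand burst in the next step implicitly preempts it.
        backfill = min(spare, backlog) if preemption else 0
        backlog -= backfill
        used += served + backfill
    return used / (capacity * len(on_demand))


trace = [4, 8, 2, 6]   # hypothetical on-demand cores per time step
print(simulate(16, trace, 100, preemption=False), simulate(16, trace, 100, preemption=True))
```

With enough preemptible work waiting, every idle core is filled; with a small backlog the gain shrinks accordingly, which is why the benefit depends on having a supply of interruptible jobs.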

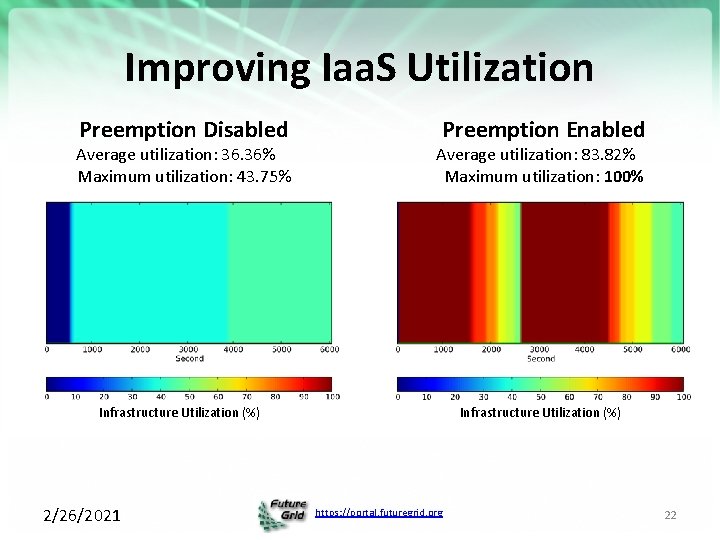

Improving IaaS Utilization
[Figure: Infrastructure utilization (%) over time, with and without preemption]
• Preemption disabled: average utilization 36.36%; maximum utilization 43.75%
• Preemption enabled: average utilization 83.82%; maximum utilization 100%

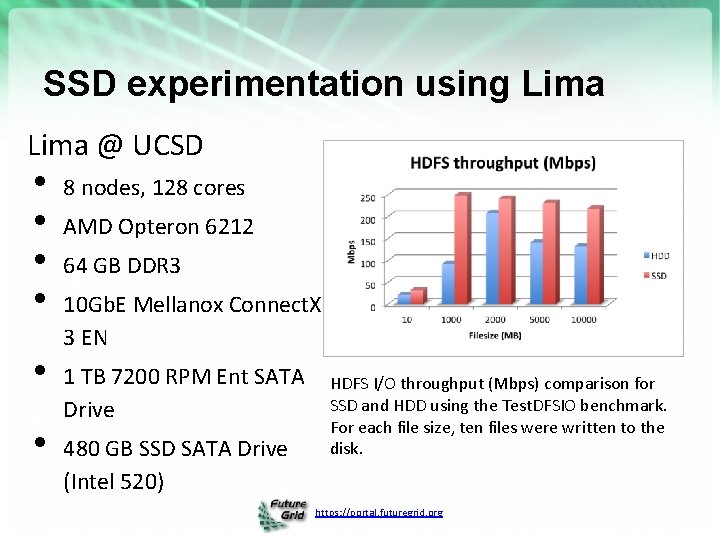

SSD experimentation using Lima @ UCSD
• 8 nodes, 128 cores
• AMD Opteron 6212
• 64 GB DDR3
• 10 GbE Mellanox ConnectX-3 EN
• 1 TB 7200 RPM Enterprise SATA drive
• 480 GB SSD SATA drive (Intel 520)
[Figure: HDFS I/O throughput (Mbps) comparison for SSD and HDD using the TestDFSIO benchmark. For each file size, ten files were written to the disk.]
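TestDFSIO reports two aggregate figures that are easy to confuse: throughput, which divides the total data moved by the total task time, and average I/O rate, which averages the per-file rates. A minimal sketch of both, following our understanding of TestDFSIO's definitions and assuming sizes in MB and times in seconds (TestDFSIO itself reports MB/s); the sample timings are hypothetical, not Lima results.

```python
def dfsio_summary(files):
    """Return (throughput, average I/O rate) in MB/s.

    files: one (size_mb, seconds) pair per file written or read.
    """
    total_mb = sum(size for size, _ in files)
    total_s = sum(seconds for _, seconds in files)
    throughput = total_mb / total_s                     # aggregate data / aggregate time
    avg_rate = sum(size / seconds for size, seconds in files) / len(files)  # mean per-file rate
    return throughput, avg_rate


# Hypothetical timings for two 100 MB files (not Lima measurements).
print(dfsio_summary([(100, 2.0), (100, 4.0)]))
```

The two figures agree when every file takes the same time and diverge when some files are slower, so it matters which one a plot reports.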

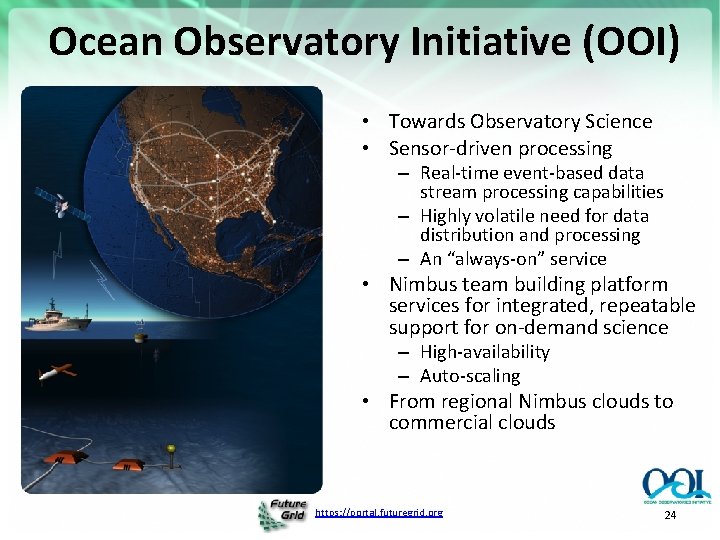

Ocean Observatory Initiative (OOI)
• Towards Observatory Science
• Sensor-driven processing
  – Real-time event-based data stream processing capabilities
  – Highly volatile need for data distribution and processing
  – An “always-on” service
• Nimbus team building platform services for integrated, repeatable support for on-demand science
  – High-availability
  – Auto-scaling
• From regional Nimbus clouds to commercial clouds

ScaleMP

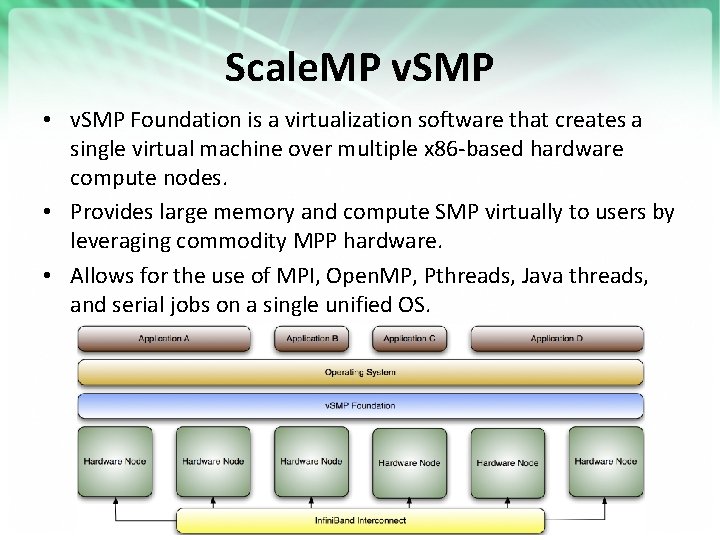

ScaleMP vSMP
• vSMP Foundation is virtualization software that creates a single virtual machine over multiple x86-based hardware compute nodes
• Provides large memory and compute SMP virtually to users by leveraging commodity MPP hardware
• Allows the use of MPI, OpenMP, Pthreads, Java threads, and serial jobs on a single unified OS

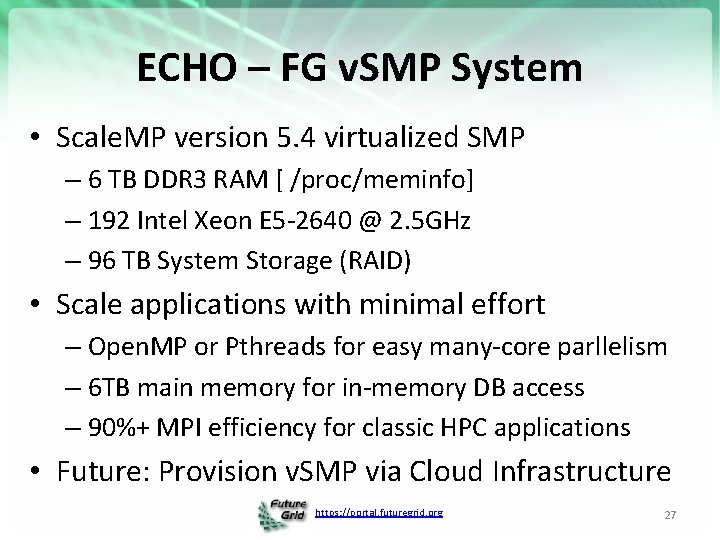

ECHO – FG vSMP System
• ScaleMP version 5.4 virtualized SMP
  – 6 TB DDR3 RAM [/proc/meminfo]
  – 192 Intel Xeon E5-2640 cores @ 2.5 GHz
  – 96 TB System Storage (RAID)
• Scale applications with minimal effort
  – OpenMP or Pthreads for easy many-core parallelism
  – 6 TB main memory for in-memory DB access
  – 90%+ MPI efficiency for classic HPC applications
• Future: Provision vSMP via Cloud Infrastructure
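The 6 TB figure was read from /proc/meminfo, which reports `MemTotal` in kB (units of 1024 bytes). A minimal sketch that extracts that value and converts it to TiB; the sample text used to check it is hypothetical, and the live read at the end only runs where /proc/meminfo exists.

```python
import os


def mem_total_kib(meminfo_text: str) -> int:
    """Return the MemTotal value (kB, i.e. KiB) from /proc/meminfo contents."""
    for line in meminfo_text.splitlines():
        key, _, value = line.partition(":")
        if key == "MemTotal":
            amount, unit = value.split()
            if unit != "kB":
                raise ValueError(f"unexpected unit {unit!r}")
            return int(amount)
    raise ValueError("MemTotal not found")


def kib_to_tib(kib: int) -> float:
    return kib / 1024**3


# On a Linux node (e.g. inside the vSMP image), report the visible memory.
if os.path.exists("/proc/meminfo"):
    with open("/proc/meminfo") as f:
        print(f"MemTotal: {kib_to_tib(mem_total_kib(f.read())):.2f} TiB")
```

On a vSMP system this single number covers the aggregated memory of all the underlying nodes, which is what the slide's 6 TB refers to.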

High Performance Cloud Infrastructure?

Virtualized GPUs in a Cloud
• Need for GPUs on Clouds
  – GPUs are becoming commonplace in scientific computing
  – Great performance-per-watt
• Different competing methods for virtualizing GPUs
  – Remote API for CUDA calls: rCUDA, vCUDA, gVirtus
  – Direct GPU usage within VM: our method
• Also need InfiniBand in Cloud Infrastructure
  – High speed, low latency interconnect
  – RDMA & IPoIB to VMs; support many traditional HPC applications via MPI
• Supercomputing today uses high performance interconnects and advanced accelerators (GPUs)
  – Goal: provide the same hardware at minimal overhead to build the first ever HPC Cloud

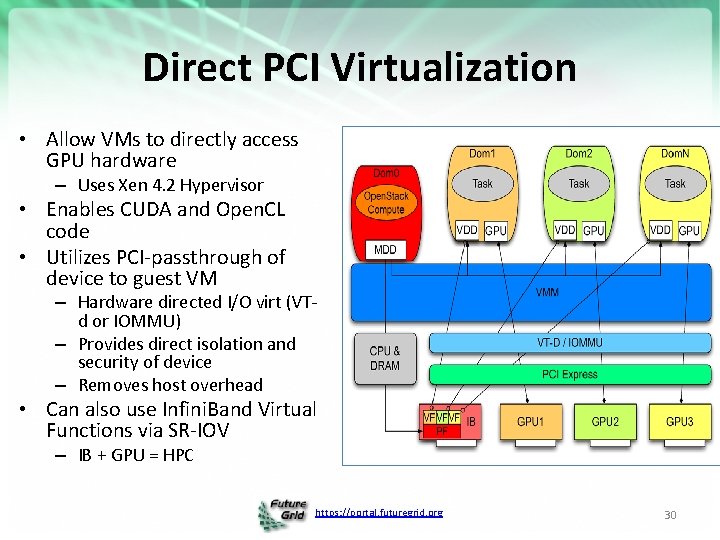

Direct PCI Virtualization
• Allow VMs to directly access GPU hardware
  – Uses Xen 4.2 Hypervisor
• Enables CUDA and OpenCL code
• Utilizes PCI passthrough of device to guest VM
  – Hardware directed I/O virtualization (VT-d or IOMMU)
  – Provides direct isolation and security of device
  – Removes host overhead
• Can also use InfiniBand Virtual Functions via SR-IOV
  – IB + GPU = HPC
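In libvirt, PCI passthrough of a device such as a GPU is expressed as a `<hostdev mode='subsystem' type='pci' managed='yes'>` element in the domain XML. The sketch below builds that element with the standard library from a host PCI address; the address `0000:04:00.0` is hypothetical, and this is not the custom libvirt driver used in the FutureGrid work.

```python
import xml.etree.ElementTree as ET


def hostdev_xml(bdf: str) -> str:
    """Build a libvirt <hostdev> element for host PCI device 'DDDD:BB:SS.F'."""
    domain, bus, slot_fn = bdf.split(":")
    slot, function = slot_fn.split(".")
    # managed='yes' lets libvirt detach the device from the host driver itself.
    hostdev = ET.Element("hostdev", mode="subsystem", type="pci", managed="yes")
    source = ET.SubElement(hostdev, "source")
    ET.SubElement(source, "address",
                  domain=f"0x{domain}", bus=f"0x{bus}",
                  slot=f"0x{slot}", function=f"0x{function}")
    return ET.tostring(hostdev, encoding="unicode")


print(hostdev_xml("0000:04:00.0"))   # hypothetical host address of a GPU
```

The element goes inside the `<devices>` section of the guest definition; the IOMMU (VT-d) must be enabled on the host for the assignment to succeed.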

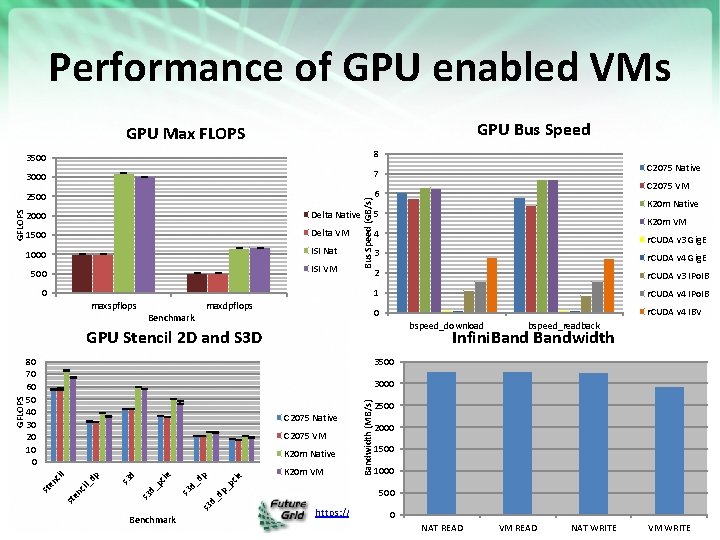

Performance of GPU enabled VMs
[Figure panels: GPU Max FLOPS (GFLOPS; maxspflops, maxdpflops) for Delta and ISI, native vs VM; GPU Bus Speed (GB/s; bspeed_download, bspeed_readback) for K20m native, K20m VM, and rCUDA v3/v4 over GigE, IPoIB and IBV; GPU Stencil2D and S3D (GFLOPS) for C2075 and K20m, native vs VM; InfiniBand bandwidth (MB/s) for native and VM reads and writes]

Results
• Xen performs relatively well for virtualizing GPUs
  – Best: near-native; ~2/3 of benchmarks within 1% on Fermi, ~1/3 within 1% on Kepler
  – Average: -2.8% Fermi, -4.9% Kepler (-2% without FFT)
  – Worst: Kepler FFT (-15%)
  – Xen VM performs near-native for memory transfer
• Xen works for enabling InfiniBand in VMs
  – R/W bandwidth and latency perform at near-native
  – Support for SR-IOV Virtual Functions soon to come
• Can we now build a High Performance Cloud Infrastructure?

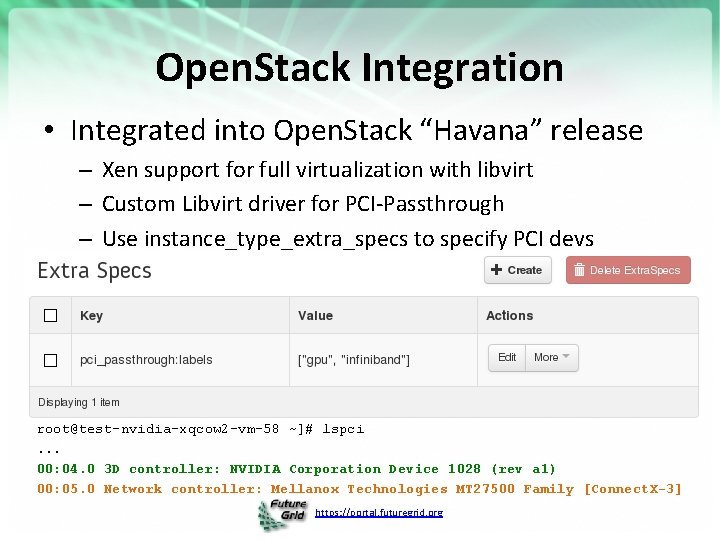

OpenStack Integration
• Integrated into OpenStack “Havana” release
  – Xen support for full virtualization with libvirt
  – Custom libvirt driver for PCI passthrough
  – Use instance_type_extra_specs to specify PCI devices
[root@test-nvidia-xqcow2-vm-58 ~]# lspci
...
00:04.0 3D controller: NVIDIA Corporation Device 1028 (rev a1)
00:05.0 Network controller: Mellanox Technologies MT27500 Family [ConnectX-3]
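A quick way to confirm passthrough from inside the guest is to look for the expected vendors in `lspci` output such as the listing above. A minimal sketch; the vendor list and the function name `passthrough_devices` are our own choices, not part of the OpenStack integration.

```python
def passthrough_devices(lspci_output: str, vendors=("NVIDIA", "Mellanox")) -> dict:
    """Map each vendor to the guest PCI slots of matching devices in `lspci` output."""
    found = {vendor: [] for vendor in vendors}
    for line in lspci_output.splitlines():
        slot, sep, description = line.partition(" ")
        if not sep:               # skip lines such as "..." with no description
            continue
        for vendor in vendors:
            if vendor in description:
                found[vendor].append(slot)
    return found


sample = """00:04.0 3D controller: NVIDIA Corporation Device 1028 (rev a1)
00:05.0 Network controller: Mellanox Technologies MT27500 Family [ConnectX-3]"""
print(passthrough_devices(sample))
```

An empty list for a vendor is a cheap signal that the flavor's PCI request was not honoured when the instance was scheduled.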

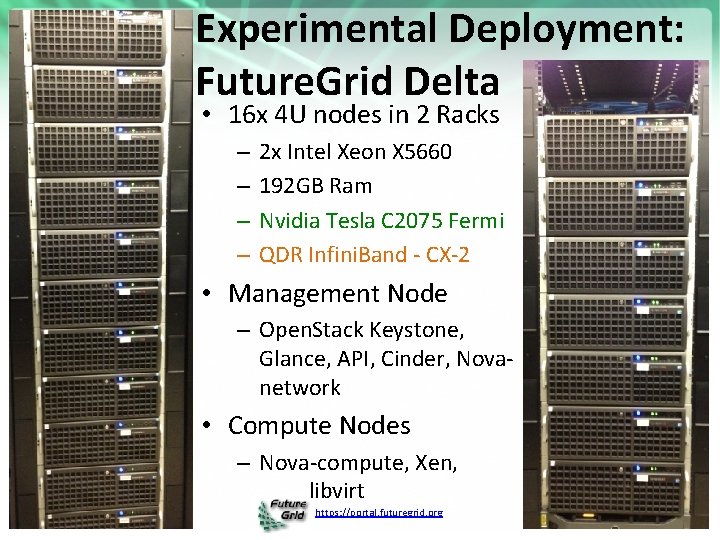

Experimental Deployment: FutureGrid Delta
• 16 × 4U nodes in 2 racks
  – 2 × Intel Xeon X5660
  – 192 GB RAM
  – Nvidia Tesla C2075 (Fermi)
  – QDR InfiniBand (CX-2)
• Management node
  – OpenStack Keystone, Glance, API, Cinder, nova-network
• Compute nodes
  – nova-compute, Xen, libvirt

Details – XSEDE Testing and FutureGrid

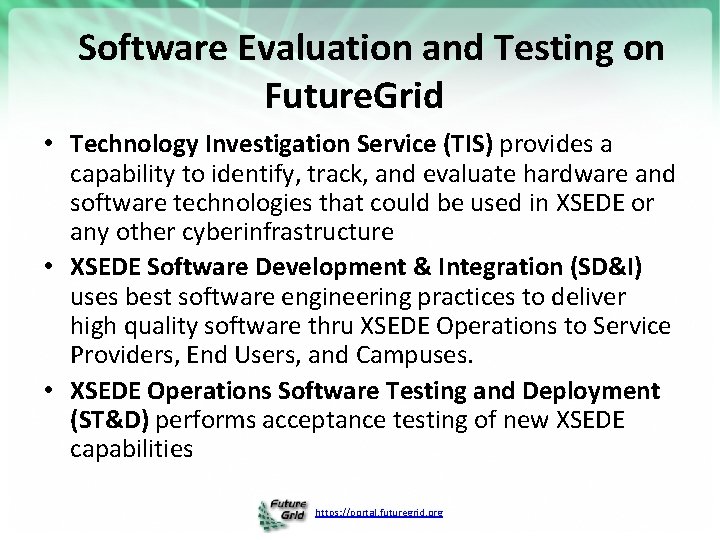

Software Evaluation and Testing on FutureGrid
• Technology Investigation Service (TIS) provides a capability to identify, track, and evaluate hardware and software technologies that could be used in XSEDE or any other cyberinfrastructure
• XSEDE Software Development & Integration (SD&I) uses best software engineering practices to deliver high quality software through XSEDE Operations to Service Providers, End Users, and Campuses
• XSEDE Operations Software Testing and Deployment (ST&D) performs acceptance testing of new XSEDE capabilities

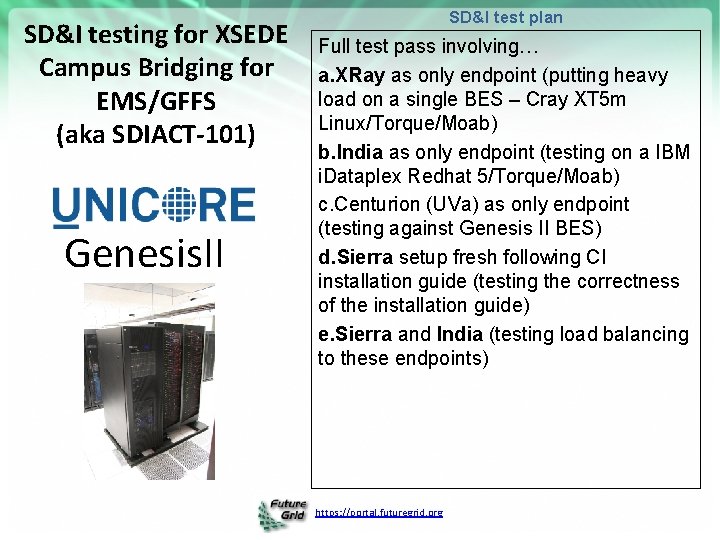

SD&I testing for XSEDE Campus Bridging for EMS/GFFS (aka SDIACT-101)
Genesis II SD&I test plan. Full test pass involving:
a. Xray as only endpoint (putting heavy load on a single BES – Cray XT5m Linux/Torque/Moab)
b. India as only endpoint (testing on an IBM iDataPlex Red Hat 5/Torque/Moab)
c. Centurion (UVa) as only endpoint (testing against Genesis II BES)
d. Sierra set up fresh following the CI installation guide (testing the correctness of the installation guide)
e. Sierra and India (testing load balancing to these endpoints)

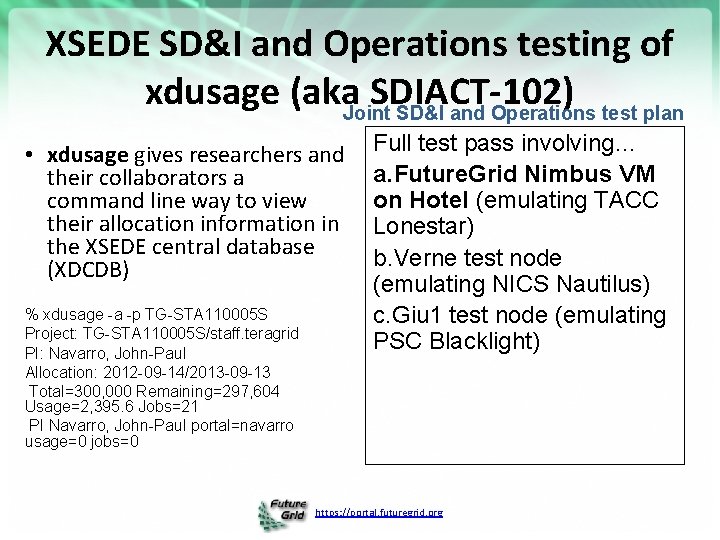

XSEDE SD&I and Operations testing of xdusage (aka SDIACT-102)
Joint SD&I and Operations test plan
• xdusage gives researchers and their collaborators a command line way to view their allocation information in the XSEDE central database (XDCDB)
% xdusage -a -p TG-STA110005S
Project: TG-STA110005S/staff.teragrid
PI: Navarro, John-Paul
Allocation: 2012-09-14/2013-09-13
Total=300,000 Remaining=297,604 Usage=2,395.6 Jobs=21
PI Navarro, John-Paul portal=navarro usage=0 jobs=0
Full test pass involving:
a. FutureGrid Nimbus VM on Hotel (emulating TACC Lonestar)
b. Verne test node (emulating NICS Nautilus)
c. Giu1 test node (emulating PSC Blacklight)
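The allocation summary can be checked mechanically: Remaining should equal Total minus Usage up to rounding (300,000 minus 2,395.6 is 297,604.4). A minimal sketch that pulls the `key=value` fields out of the summary line, assuming the comma-grouped number format shown; it is a hypothetical test helper, not part of xdusage.

```python
import re


def parse_fields(line: str) -> dict:
    """Extract key=value pairs such as 'Total=300,000' into floats."""
    fields = {}
    for key, value in re.findall(r"(\w+)=([\d,.]+)", line):
        fields[key] = float(value.replace(",", ""))   # drop thousands separators
    return fields


summary = "Total=300,000 Remaining=297,604 Usage=2,395.6 Jobs=21"
fields = parse_fields(summary)
print(fields["Total"] - fields["Usage"], fields["Remaining"])
```

A check like this can be run against each emulated endpoint in the test pass to confirm that every site reports the same allocation state from XDCDB.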

Comparison with EGI

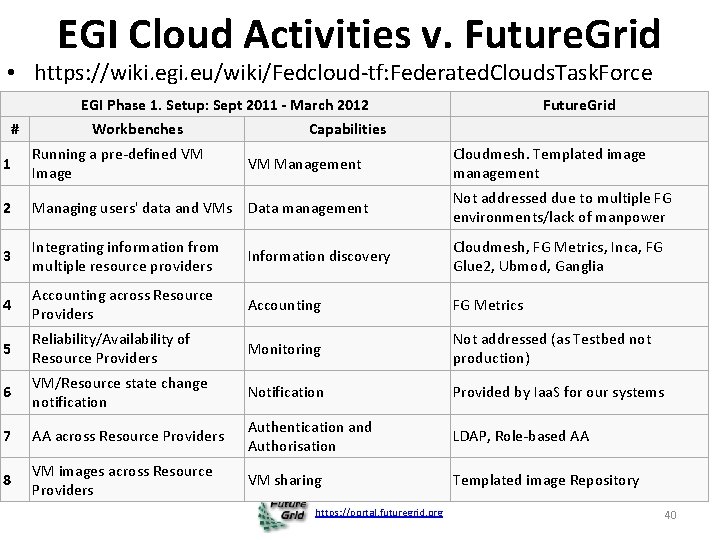

EGI Cloud Activities v. FutureGrid
• https://wiki.egi.eu/wiki/Fedcloud-tf:FederatedCloudsTaskForce
EGI Phase 1 setup: Sept 2011 - March 2012

| # | Workbenches | Area | FutureGrid Capabilities |
|---|---|---|---|
| 1 | Running a pre-defined VM Image | VM Management | Cloudmesh, Templated image management |
| 2 | Managing users' data and VMs | Data management | Not addressed due to multiple FG environments/lack of manpower |
| 3 | Integrating information from multiple resource providers | Information discovery | Cloudmesh, FG Metrics, Inca, FG Glue2, UBMoD, Ganglia |
| 4 | Accounting across Resource Providers | Accounting | FG Metrics |
| 5 | Reliability/Availability of Resource Providers | Monitoring | Not addressed (as Testbed not production) |
| 6 | VM/Resource state change notification | Notification | Provided by IaaS for our systems |
| 7 | AA across Resource Providers | Authentication and Authorisation | LDAP, Role-based AA |
| 8 | VM images across Resource Providers | VM sharing | Templated image Repository |

Activities Related to FutureGrid

Essential and Different features of FutureGrid in the Cloud area
• Unlike many clouds such as Amazon and Azure, FutureGrid allows robust, reproducible (in performance and functionality) research (you can request the same node with and without a VM)
  – Open Transparent Technology Environment
• FutureGrid is more than a Cloud; it is a general distributed Sandbox: a cloud, grid and HPC testbed
• Supports 3 different IaaS environments (Nimbus, Eucalyptus, OpenStack), and projects involve 5 (also CloudStack, OpenNebula)
• Supports research on cloud tools, cloud middleware and cloud-based systems
• FutureGrid has itself developed middleware and interfaces to support FutureGrid’s mission, e.g. Phantom (cloud user interface), ViNe (virtual network), RAIN/Cloudmesh (deploy systems) and security/metric integration
• FutureGrid has experience in running cloud systems

Related Projects
• Grid5000 (Europe) and OpenCirrus, with managed flexible environments, are closest to FutureGrid and are collaborators
• PlanetLab has a networking focus with a less managed system
• Several GENI related activities, including network-centric Emulab, PRObE (Parallel Reconfigurable Observational Environment), ProtoGENI, ExoGENI, InstaGENI and GENICloud
• BonFire (Europe) cloud supporting OCCI
• Recent EGI Federated Cloud with OpenStack and OpenNebula, aimed at EU Grid/Cloud federation
• Private Clouds: Red Cloud (XSEDE), Wispy (XSEDE), Open Science Data Cloud and the Open Cloud Consortium are typically aimed at computational science
• Public Clouds such as AWS do not allow reproducible experiments and bare-metal/VM comparison, and do not support experiments on low level cloud technology

Related Projects in Detail I
• EGI Federated Cloud (see https://wiki.egi.eu/wiki/Fedcloud-tf:UserCommunities and https://wiki.egi.eu/wiki/Fedcloud-tf:Testbed#Resource_Providers_inventory), with about 4910 documented cores according to these pages; mostly OpenNebula and OpenStack
• Grid5000 is a scientific instrument designed to support experiment-driven research in all areas of computer science related to parallel, large-scale, or distributed computing and networking. Experience from Grid5000 is a motivating factor for FG. However, the management of the various Cloud and PaaS frameworks is not addressed.
• Emulab provides the software and a hardware specification for a Network Testbed. Emulab is a long-running project and, through its integration into GENI and its deployment at a number of sites, has produced a number of tools that we will try to leverage. These tools have evolved from a network-centric view and allow users to emulate network environments to further their research goals. Additionally, some attempts have been made to run IaaS frameworks such as OpenStack and Eucalyptus on Emulab.

Related Projects in Detail II
• PRObE (Parallel Reconfigurable Observational Environment), using Emulab, targets scalability experiments at the supercomputing level while providing a large-scale, low-level systems research facility. It consists of recycled supercomputing servers from Los Alamos National Laboratory.
• PlanetLab consists of a few hundred machines spread over the world, mainly designed to support wide-area networking and distributed systems research
• ExoGENI links GENI to two advances in virtual infrastructure services outside of GENI: open cloud computing (OpenStack) and dynamic circuit fabrics. ExoGENI orchestrates a federation of independent cloud sites and circuit providers through their native IaaS interfaces and links them to other GENI tools and resources. ExoGENI uses OpenFlow to connect the sites and ORCA as control software. ORCA plugins for OpenStack and Eucalyptus are available.
• ProtoGENI is a prototype implementation and deployment of GENI largely based on Emulab software. ProtoGENI is the Control Framework for GENI Cluster C, the largest set of integrated projects in GENI.

Related Projects in Detail III
• BonFire from the EU is developing a testbed for an internet-as-a-service environment. It provides offerings similar to Emulab: a software stack that simplifies experiment execution while allowing a broker to assist in test orchestration based on test specifications provided by users.
• OpenCirrus is a cloud computing testbed for the research community that federates heterogeneous distributed data centers. It has partners from at least 6 sites. Although federation is one of its main research focuses, the testbed does not yet employ generalized federated access to its resources, according to discussions at the last OpenCirrus Summit.
• Amazon Web Services (AWS) provides the de facto standard for clouds. Recently, projects have integrated their software services with resources offered by Amazon, for example to use cloud bursting in the case of resource starvation as part of batch queuing systems. Others (MIT) have automated and simplified the process of building, configuring, and managing clusters of virtual machines on Amazon’s EC2 cloud.

Related Projects in Detail IV
• InstaGENI and GENICloud build two complementary elements for providing a federation architecture that takes its inspiration from the Web. Their goals are to make it easy, safe, and cheap for people to build small Clouds and run Cloud jobs at many different sites. For this purpose, GENICloud/TransCloud provides a common API across Cloud Systems and access control without identity. InstaGENI provides an out-of-the-box small cloud. The main focus of this effort is to provide a federated cloud infrastructure.
• Cloud testbeds and deployments: in addition, a number of testbeds exist providing access to a variety of cloud software. These testbeds include Red Cloud, Wispy, the Open Science Data Cloud, and the Open Cloud Consortium resources.
• XSEDE is a single virtual system that scientists can use to share computing resources, data, and expertise interactively. People around the world use these resources and services, including supercomputers, collections of data, and new tools. XSEDE is devoted to delivering a production-level facility to its user community. It is currently exploring clouds, but has not yet committed to them. XSEDE does not allow the provisioning of the software stack in the way FG allows.

Link FutureGrid and GENI
• Identify how to use the ORCA federation framework to integrate FutureGrid (and more of XSEDE?) into ExoGENI
• Allow FG (XSEDE) users to access the GENI resources and vice versa
• Enable PaaS level services (such as a distributed HBase or Hadoop) to be deployed across FG and GENI resources
• Leverage the image generation capabilities of FG and the bare metal deployment strategies of FG within the GENI context
  – Software defined networks plus cloud/bare metal dynamic provisioning gives software defined systems

Typical FutureGrid/GENI Project
• Bringing computing to data is often unrealistic, as repositories are distinct from computing resources and/or data is distributed
• So one can build and measure the performance of virtual distributed data stores where software defined networks bring the computing to distributed data repositories
• Example applications already on FutureGrid include Network Science (analysis of Twitter data), “Deep Learning” (large scale clustering of social images), Earthquake and Polar Science, Sensor nets as seen in Smart Power Grids, Pathology images, and Genomics
• Compare different data models: HDFS, HBase, Object Stores, Lustre, Databases

MOOCs

Integrate MOOC Technology
• We are building MOOC lessons to describe core FutureGrid capabilities
• Will especially help educational uses
  – 36 semester-long classes: over 650 students
  – Cloud Computing, Distributed Systems, Scientific Computing and Data Analytics
  – 3 one-week summer schools: 390+ students
  – Big Data, Cloudy View of Computing (for HBCUs), Science Clouds
  – 7 one- to three-day workshops/tutorials: 238 students
• Science Cloud Summer School available in MOOC format
• First high level Software IP-over-P2P (IPOP)
• Overview and Details of FutureGrid
• How to get a project, use HPC and use OpenStack

Online MOOCs
• Science Cloud MOOC repository
  – http://iucloudsummerschool.appspot.com/preview
• FutureGrid MOOCs
  – https://fgmoocs.appspot.com/explorer
• A MOOC that will use FutureGrid for class laboratories (for advanced students in the IU Online Data Science masters degree)
  – https://x-informatics.appspot.com/course
• MOOC Introduction to FutureGrid can be used by all classes and tutorials on FutureGrid
• Currently use Google Course Builder: Google Apps + YouTube
  – Built as a collection of modular ~10 minute lessons
• Develop techniques to allow Clouds to efficiently support MOOCs

• Twelve ~10 minute lesson objects in this lecture
• IU wants us to close caption if used in a real course

FutureGrid hosts many classes per semester
How to use FutureGrid is a shared MOOC

Services

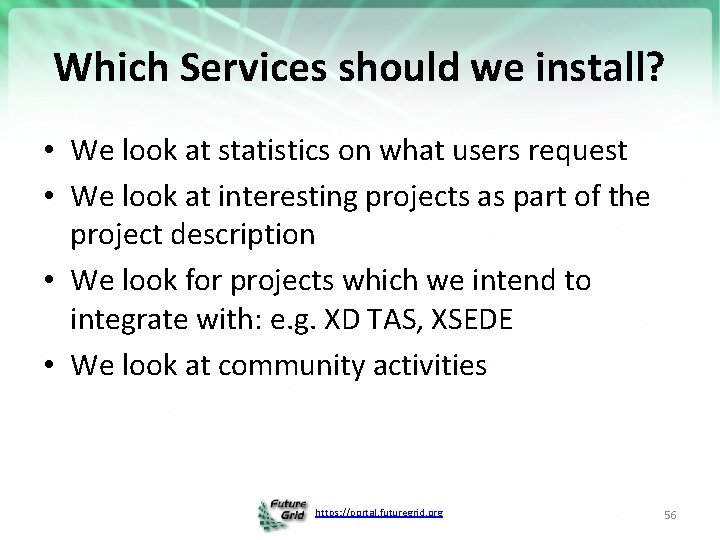

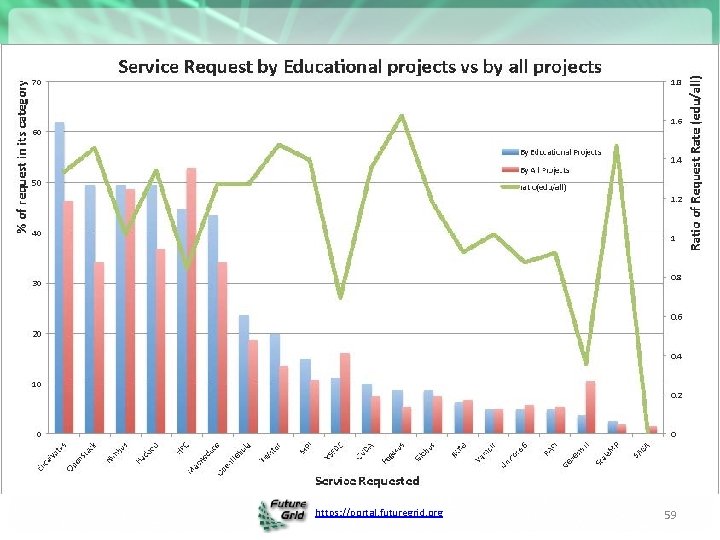

Which Services should we install?
• We look at statistics on what users request
• We look at interesting projects as part of the project description
• We look for projects which we intend to integrate with: e.g. XD TAS, XSEDE
• We look at community activities

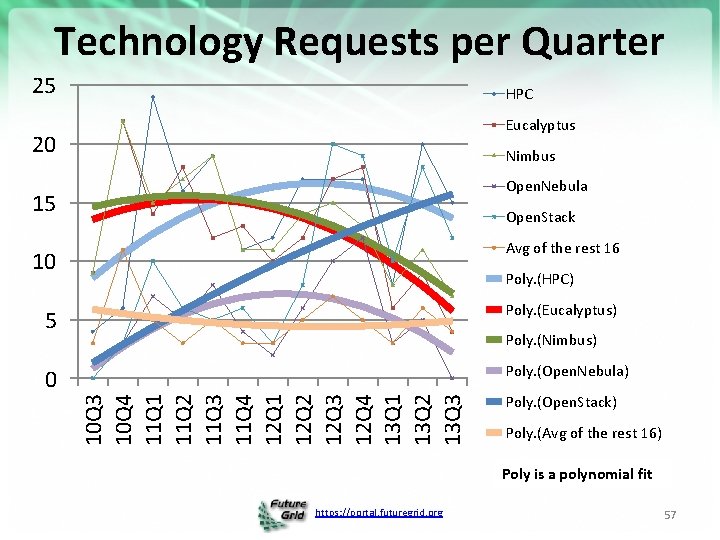

Technology Requests per Quarter
[Figure: number of technology requests per quarter from 10Q3 to 13Q3 (axis 0 to 25) for HPC, Eucalyptus, Nimbus, OpenNebula, OpenStack and the average of the remaining 16 technologies, each with a polynomial trend line (“Poly.” is a polynomial fit)]

Selected List of Services Offered
FutureGrid
• Cloud PaaS: Hadoop, Iterative MapReduce, HDFS, HBase, Swift Object Store
• IaaS: Nimbus, Eucalyptus, OpenStack, ViNe
• GridaaS: Genesis II, Unicore, SAGA, Globus
• HPCaaS: MPI, OpenMP, CUDA
• TestbedaaS
  – Infrastructure: Inca, Ganglia
  – Provisioning: RAIN, Cloudmesh
  – VMs: Phantom, Cloudmesh
  – Experiments: Pegasus, Precip, Cloudmesh
  – Accounting: FG, XSEDE


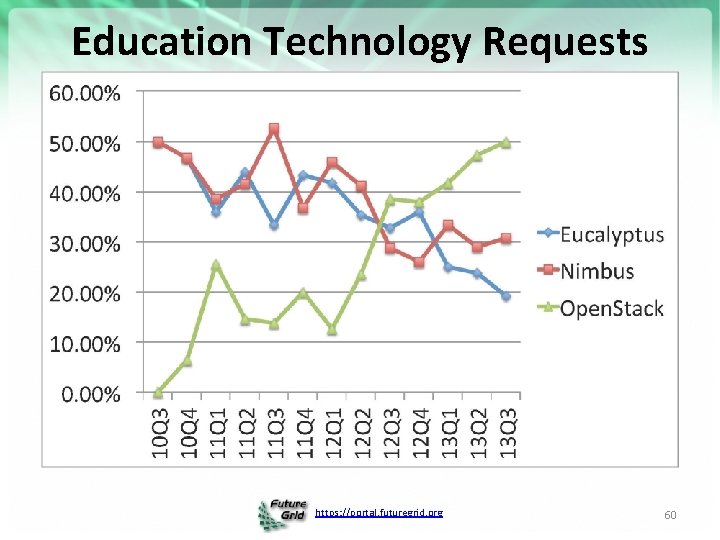

Education Technology Requests

Details – FutureGrid Futures

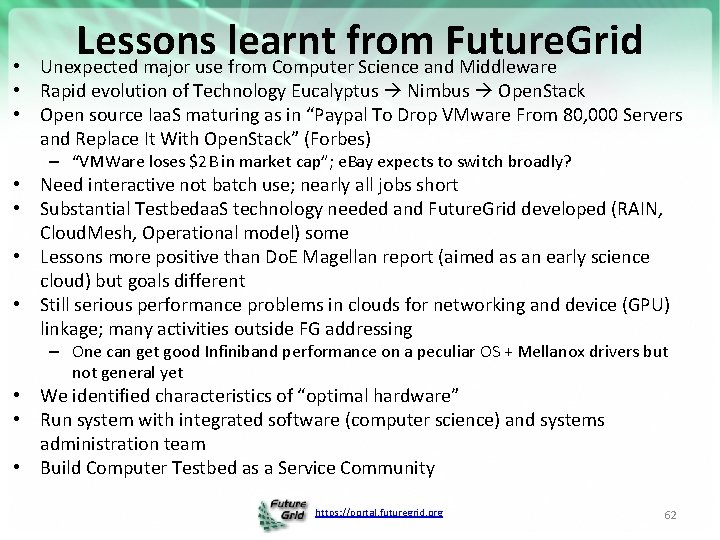

Lessons learnt from FutureGrid
• Unexpected major use from Computer Science and Middleware
• Rapid evolution of Technology: Eucalyptus, Nimbus, OpenStack
• Open source IaaS maturing, as in “PayPal To Drop VMware From 80,000 Servers and Replace It With OpenStack” (Forbes)
  – “VMware loses $2B in market cap”; eBay expects to switch broadly?
• Need interactive not batch use; nearly all jobs short
• Substantial TestbedaaS technology needed, and FutureGrid developed some (RAIN, CloudMesh, Operational model)
• Lessons more positive than the DoE Magellan report (aimed as an early science cloud), but goals different
• Still serious performance problems in clouds for networking and device (GPU) linkage; many activities outside FG addressing this
  – One can get good InfiniBand performance on a peculiar OS + Mellanox drivers, but not generally yet
• We identified characteristics of “optimal hardware”
• Run system with integrated software (computer science) and systems administration team
• Build Computer Testbed as a Service Community

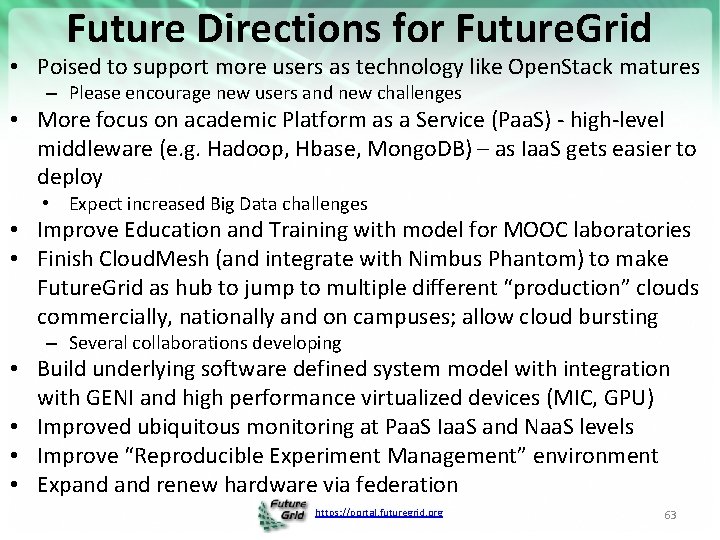

Future Directions for FutureGrid
• Poised to support more users as technology like OpenStack matures
  – Please encourage new users and new challenges
• More focus on academic Platform as a Service (PaaS): high-level middleware (e.g. Hadoop, HBase, MongoDB) as IaaS gets easier to deploy
• Expect increased Big Data challenges
• Improve Education and Training with a model for MOOC laboratories
• Finish CloudMesh (and integrate with Nimbus Phantom) to make FutureGrid a hub to jump to multiple different “production” clouds commercially, nationally and on campuses; allow cloud bursting
  – Several collaborations developing
• Build underlying software defined system model with integration with GENI and high performance virtualized devices (MIC, GPU)
• Improved ubiquitous monitoring at PaaS, IaaS and NaaS levels
• Improve “Reproducible Experiment Management” environment
• Expand and renew hardware via federation

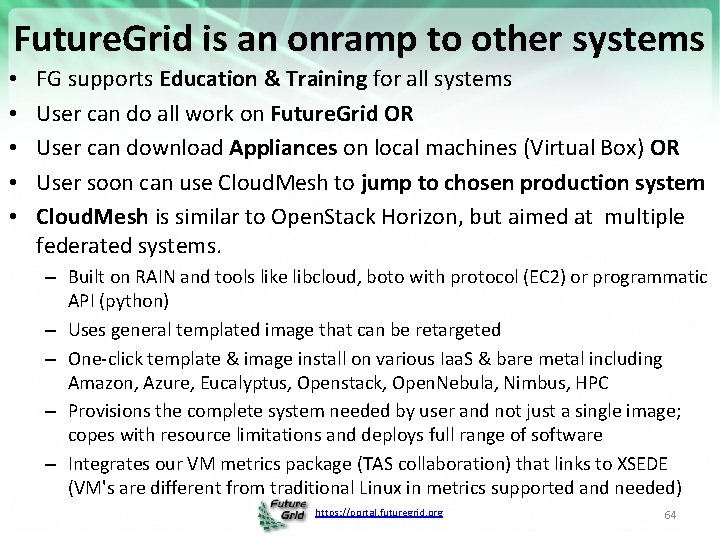

FutureGrid is an onramp to other systems
• FG supports Education & Training for all systems
• User can do all work on FutureGrid OR
• User can download Appliances on local machines (VirtualBox) OR
• User soon can use CloudMesh to jump to chosen production system
CloudMesh is similar to OpenStack Horizon, but aimed at multiple federated systems.
  – Built on RAIN and tools like libcloud and boto, with protocol (EC2) or programmatic API (Python)
  – Uses a general templated image that can be retargeted
  – One-click template & image install on various IaaS & bare metal including Amazon, Azure, Eucalyptus, OpenStack, OpenNebula, Nimbus, HPC
  – Provisions the complete system needed by the user and not just a single image; copes with resource limitations and deploys the full range of software
  – Integrates our VM metrics package (TAS collaboration) that links to XSEDE (VMs are different from traditional Linux in metrics supported and needed)
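CloudMesh's idea of one interface over many clouds follows the same driver pattern libcloud uses, where each provider implements a common interface (in libcloud, drivers are obtained via `libcloud.compute.providers.get_driver`). The pure-Python sketch below shows the pattern with fake in-memory drivers so it runs without credentials; `CloudDriver`, `register`, `FakeOpenStack`, `FakeEC2` and `burst` are hypothetical and are neither the CloudMesh nor the libcloud API.

```python
from abc import ABC, abstractmethod

DRIVERS = {}   # provider name -> driver class (hypothetical registry)


def register(name):
    """Class decorator that records a driver under a provider name."""
    def wrap(cls):
        DRIVERS[name] = cls
        return cls
    return wrap


class CloudDriver(ABC):
    @abstractmethod
    def boot(self, image: str) -> str:
        """Start one instance of `image` and return its identifier."""


@register("openstack")
class FakeOpenStack(CloudDriver):
    def __init__(self):
        self.count = 0

    def boot(self, image):
        self.count += 1
        return f"os-{self.count}:{image}"


@register("ec2")
class FakeEC2(CloudDriver):
    def __init__(self):
        self.count = 0

    def boot(self, image):
        self.count += 1
        return f"i-{self.count:04d}:{image}"


def burst(image: str, n: int, clouds: list) -> list:
    """Spread n instances round-robin across the named clouds."""
    drivers = [DRIVERS[name]() for name in clouds]
    return [drivers[i % len(drivers)].boot(image) for i in range(n)]


print(burst("fg-template", 3, ["openstack", "ec2"]))
```

Adding a new target means registering one more driver class; callers such as `burst` never change, which is the property that makes jumping between production clouds cheap.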

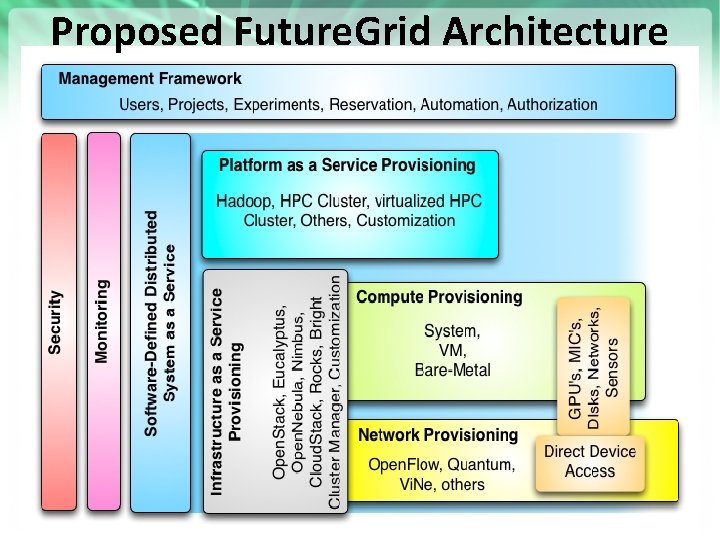

Proposed FutureGrid Architecture

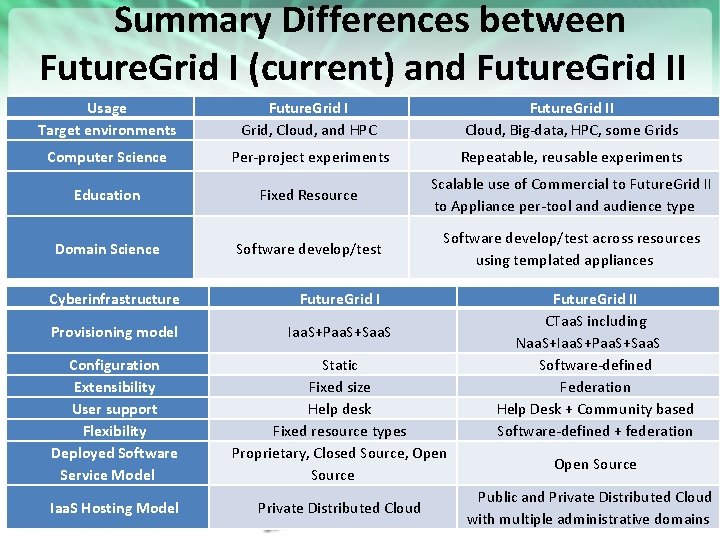

Summary Differences between FutureGrid I (current) and FutureGrid II

| Usage | FutureGrid I | FutureGrid II |
|---|---|---|
| Target environments | Grid, Cloud, and HPC | Cloud, Big-data, HPC, some Grids |
| Computer Science | Per-project experiments | Repeatable, reusable experiments |
| Education | Fixed Resource | Scalable use of Commercial to FutureGrid II to Appliance per-tool and audience type |
| Domain Science | Software develop/test | Across resources using templated appliances |

| Cyberinfrastructure | FutureGrid I | FutureGrid II |
|---|---|---|
| Provisioning model | IaaS+PaaS+SaaS | CTaaS including NaaS+IaaS+PaaS+SaaS |
| Configuration | Static | Software-defined |
| Extensibility | Fixed size | Federation |
| User support | Help desk | Help Desk + Community based |
| Flexibility | Fixed resource types | Software-defined + federation |
| Deployed Software Service Model | Proprietary, Closed Source, Open Source | Open Source |
| IaaS Hosting Model | Private Distributed Cloud | Public and Private Distributed Cloud with multiple administrative domains |

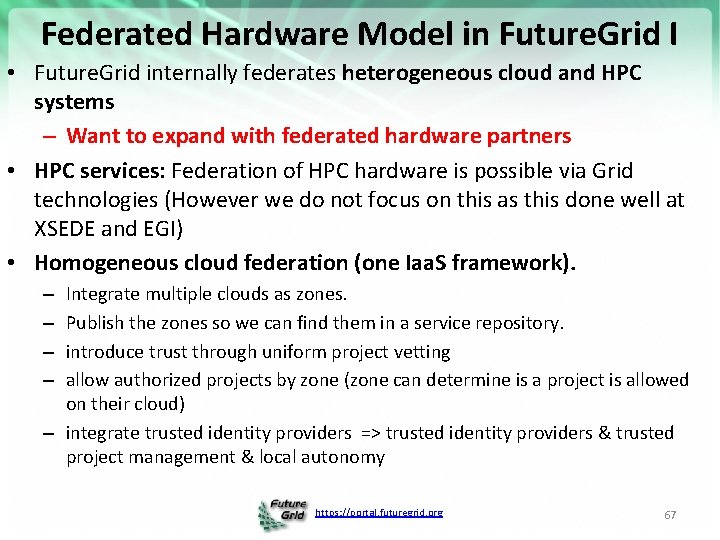

Federated Hardware Model in FutureGrid I
• FutureGrid internally federates heterogeneous cloud and HPC systems
– Want to expand with federated hardware partners
• HPC services: federation of HPC hardware is possible via Grid technologies (however, we do not focus on this, as it is done well at XSEDE and EGI)
• Homogeneous cloud federation (one IaaS framework)
– Integrate multiple clouds as zones
– Publish the zones so we can find them in a service repository
– Introduce trust through uniform project vetting
– Allow authorized projects by zone (a zone can determine if a project is allowed on its cloud)
– Integrate trusted identity providers => trusted identity providers & trusted project management & local autonomy
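The homogeneous federation model above combines two checks: uniform, federation-wide project vetting and per-zone admission (local autonomy). A minimal sketch of that combination, assuming zones are published in an in-memory registry standing in for the service repository; `Zone`, `ZoneRegistry`, the zone names, and the example URLs are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Zone:
    name: str
    endpoint: str  # illustrative URL only
    allowed_projects: set[str] = field(default_factory=set)


class ZoneRegistry:
    """Stand-in for the service repository in which zones are published."""

    def __init__(self, vetted_projects: set[str]):
        # Projects vetted once, uniformly, for the whole federation.
        self.vetted_projects = set(vetted_projects)
        self.zones: dict[str, Zone] = {}

    def publish(self, zone: Zone) -> None:
        self.zones[zone.name] = zone

    def zones_for(self, project: str) -> list[str]:
        """Zones a project may use: it must be vetted federation-wide AND
        admitted by the zone itself (local autonomy)."""
        if project not in self.vetted_projects:
            return []
        return sorted(name for name, z in self.zones.items()
                      if project in z.allowed_projects)


if __name__ == "__main__":
    registry = ZoneRegistry({"fg-101", "fg-202"})
    registry.publish(Zone("zone-a", "https://zone-a.example.org", {"fg-101", "fg-202"}))
    registry.publish(Zone("zone-b", "https://zone-b.example.org", {"fg-101", "fg-303"}))
    print(registry.zones_for("fg-202"))  # ['zone-a']
```

Note that `fg-303` gets no zones at all: zone-b admits it, but it has not passed the federation-wide vetting.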

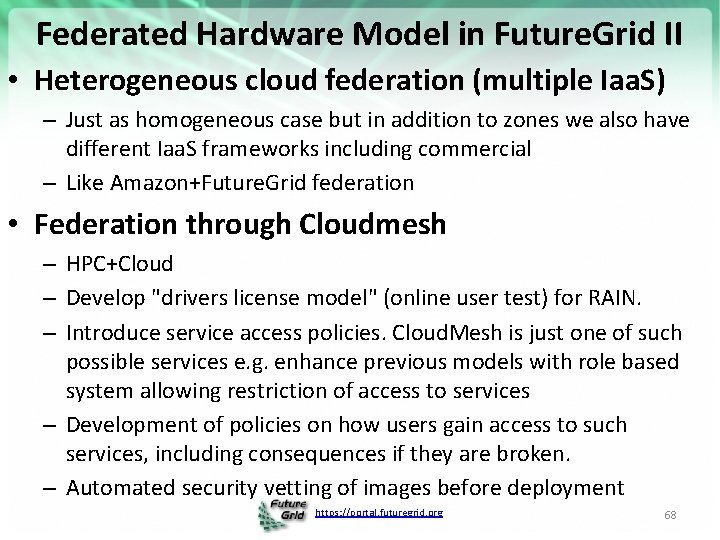

Federated Hardware Model in FutureGrid II
• Heterogeneous cloud federation (multiple IaaS)
– Just as in the homogeneous case, but in addition to zones we also have different IaaS frameworks, including commercial ones
– Like Amazon+FutureGrid federation
• Federation through Cloudmesh
– HPC+Cloud
– Develop a "drivers license model" (online user test) for RAIN
– Introduce service access policies. CloudMesh is just one such possible service, e.g. enhance previous models with a role-based system allowing restriction of access to services
– Development of policies on how users gain access to such services, including consequences if they are broken
– Automated security vetting of images before deployment
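The role-based service access policy and the "drivers license model" can be combined in one check. A minimal sketch, assuming roles map to sets of services and that RAIN additionally requires a passed online test; the role names, service names, and `may_access` are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical mapping from roles to the services they grant.
ROLE_SERVICES = {
    "user": {"vm-management"},
    "rain-user": {"vm-management", "rain"},
    "admin": {"vm-management", "rain", "cloud-shifting"},
}

# Services that additionally require the online user test ("drivers license").
LICENSED_SERVICES = {"rain"}


@dataclass
class User:
    name: str
    roles: set[str] = field(default_factory=set)
    passed_license_test: bool = False


def may_access(user: User, service: str) -> bool:
    # Union of all services granted by any of the user's roles.
    granted = set().union(*(ROLE_SERVICES.get(r, set()) for r in user.roles))
    if service not in granted:
        return False
    if service in LICENSED_SERVICES and not user.passed_license_test:
        return False
    return True


if __name__ == "__main__":
    alice = User("alice", {"rain-user"})
    print(may_access(alice, "rain"))  # False: role is fine, test not yet passed
    alice.passed_license_test = True
    print(may_access(alice, "rain"))  # True
```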

Cloudmesh TestbedaaS Tool: RAIN

Avoid Confusion
• To avoid confusion with the overloaded term "Dynamic Provisioning", we will use the term RAIN

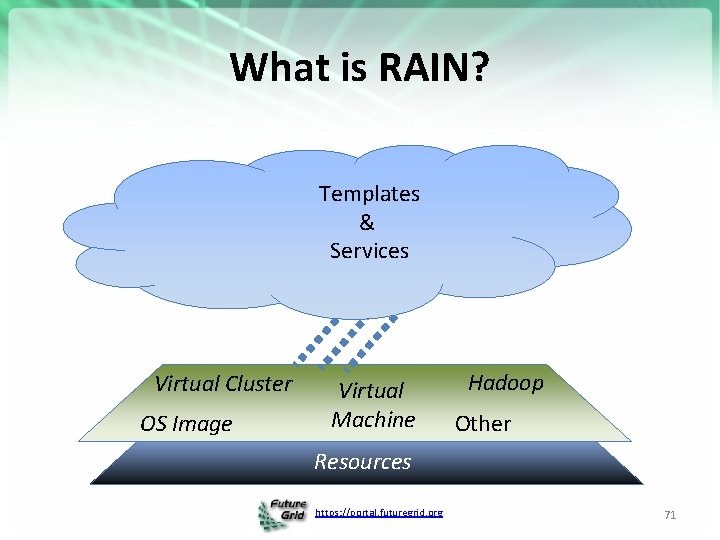

What is RAIN?
• Templates & Services: Virtual Cluster, OS Image, Virtual Machine, Hadoop, Other Resources

RAIN/RAINING is a Concept
• Cloudmesh is a toolkit implementing RAIN and also supporting general target environments
• It includes a component called Rain that is used to build and interface with a testbed so that users can conduct advanced reproducible experiments

Cloudmesh TestbedaaS Tool
• An evolving toolkit and service to build and interface with a testbed so that users can conduct advanced reproducible experiments

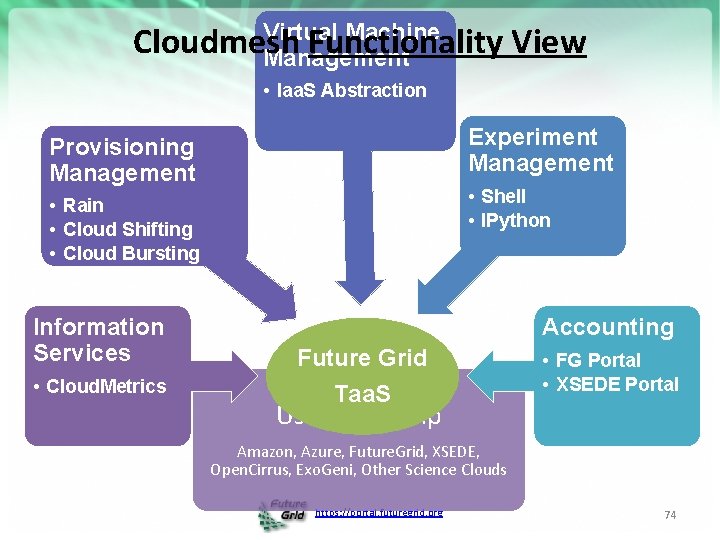

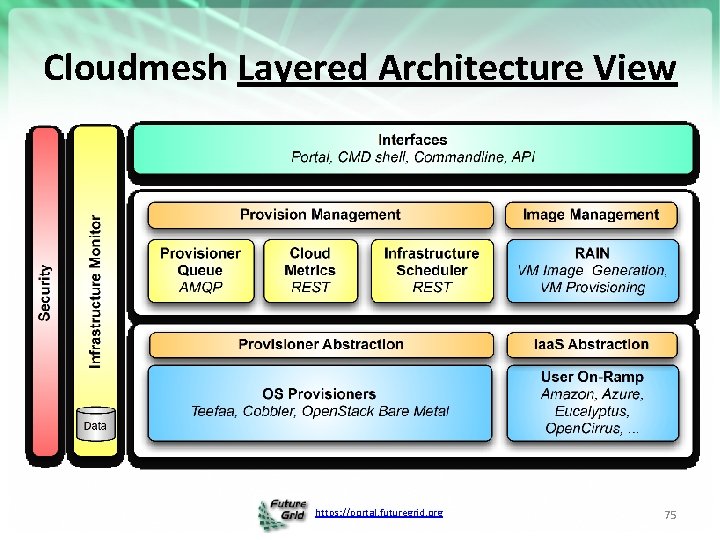

Cloudmesh Functionality View
• Virtual Machine Management
– IaaS Abstraction
• Experiment Management
– Shell
– IPython
• Provisioning Management
– Rain
– Cloud Shifting
– Cloud Bursting
• Information Services
– CloudMetrics
• Accounting
• FutureGrid TaaS
– FG Portal
– XSEDE Portal
• User On-Ramp: Amazon, Azure, FutureGrid, XSEDE, OpenCirrus, ExoGeni, Other Science Clouds

Cloudmesh Layered Architecture View

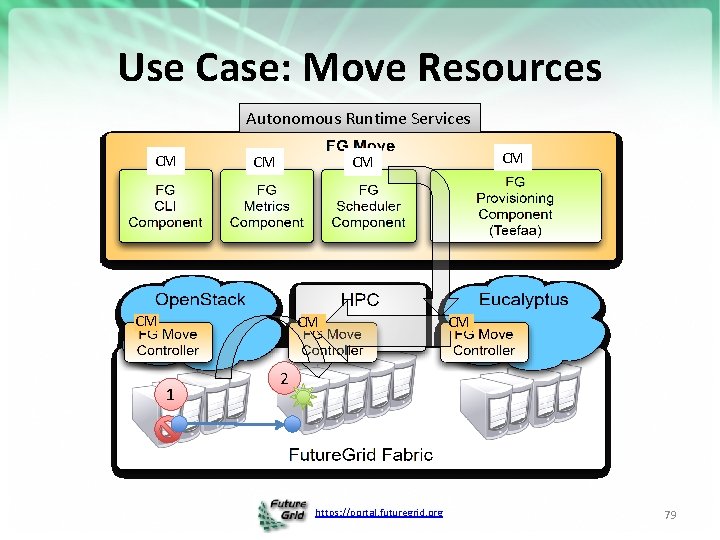

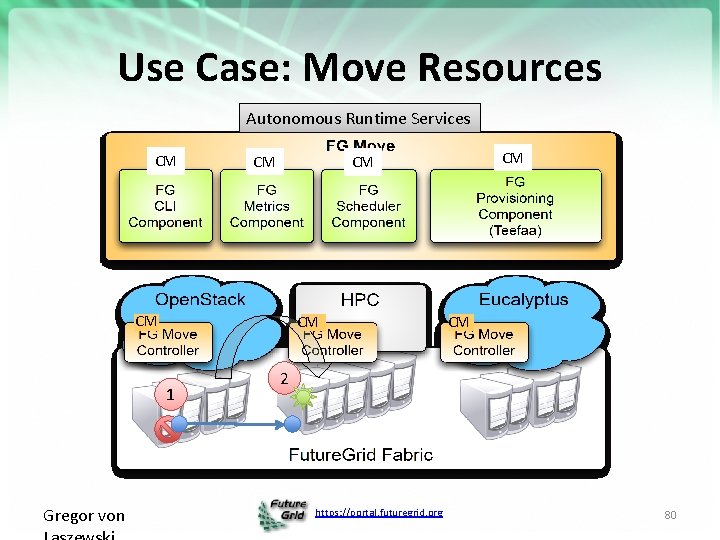

Cloudmesh RAIN Move
• Orchestrates resource re-allocation among different infrastructures
• Command line interface to ease access to this service
• Exclusive access to the service to prevent conflicts
• Keeps status information about the resources assigned to each infrastructure, as well as their history, to be able to predict future needs
• Scheduler that can dynamically re-allocate resources and supports manually planning future re-allocations
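Three of the RAIN Move properties above (re-allocation between infrastructures, exclusive access, and a kept history) fit in a small sketch. It assumes nodes are tracked as names per infrastructure and uses a `threading.Lock` for exclusivity; `RainMove`, `MoveRecord`, and the infrastructure names are hypothetical, and the actual bare metal re-provisioning step is only marked by a comment.

```python
from __future__ import annotations

import threading
import time
from dataclasses import dataclass


@dataclass
class MoveRecord:
    timestamp: float
    node: str
    source: str
    target: str


class RainMove:
    def __init__(self, assignment: dict[str, set[str]]):
        # infrastructure name -> set of node names currently assigned to it
        self.assignment = {k: set(v) for k, v in assignment.items()}
        self.history: list[MoveRecord] = []
        self._lock = threading.Lock()  # exclusive access prevents conflicting moves

    def move(self, node: str, source: str, target: str) -> None:
        with self._lock:
            if node not in self.assignment.get(source, set()):
                raise ValueError(f"{node} is not assigned to {source}")
            # A real system would re-provision the node (bare metal) at this point.
            self.assignment[source].discard(node)
            self.assignment.setdefault(target, set()).add(node)
            self.history.append(MoveRecord(time.time(), node, source, target))

    def moves_into(self, target: str) -> int:
        """Simple statistic over the history, e.g. input for future planning."""
        return sum(1 for r in self.history if r.target == target)


if __name__ == "__main__":
    rm = RainMove({"openstack": {"n1", "n2"}, "hpc": {"n3"}})
    rm.move("n1", "openstack", "hpc")
    print(sorted(rm.assignment["hpc"]))  # ['n1', 'n3']
```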

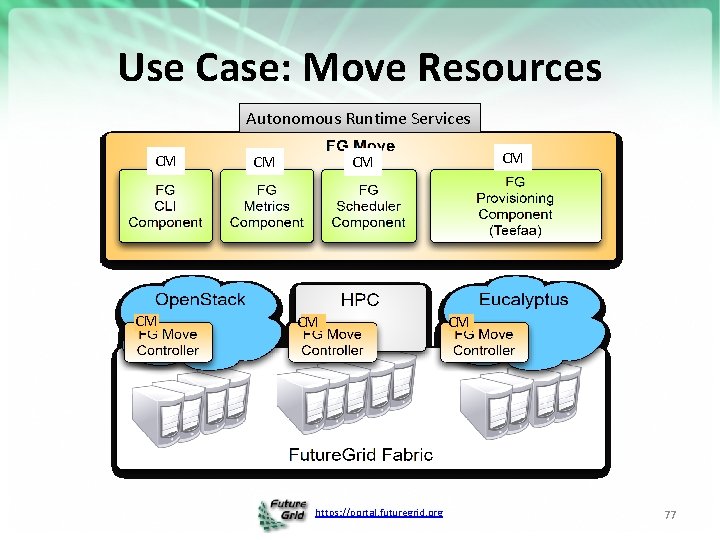

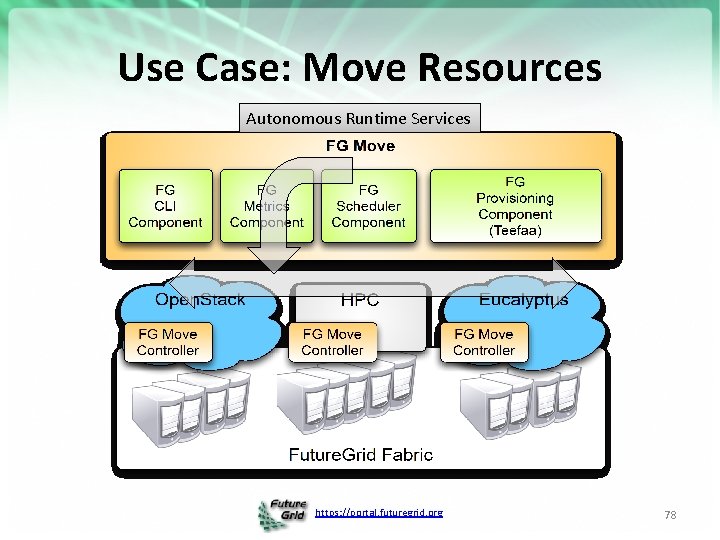

Use Case: Move Resources (Autonomous Runtime Services)

Use Case: Move Resources (Autonomous Runtime Services)

Use Case: Move Resources (Autonomous Runtime Services)

Use Case: Move Resources (Autonomous Runtime Services), Gregor von Laszewski

CloudMesh Feature Summary
• Provisioning
– RAIN Bare Metal
– RAIN of VMs
– RAIN of Platforms
• Templated Image Management
• Resource Inventory
• Experiment Management with IPython
• Integration of external clouds
• Integration of HPC resources
• Project, role, and user based authorization framework

Cloudmesh Federation Aspects
• Federate HPC services
– Covered by Grid technology
– Covered by Genesis II (often used)
• Thus: this should not be the focus of our activities, as it is addressed by others
– We provide users the ability to access HPC resources via key management
– This is logical, as each HPC resource in FG is independent

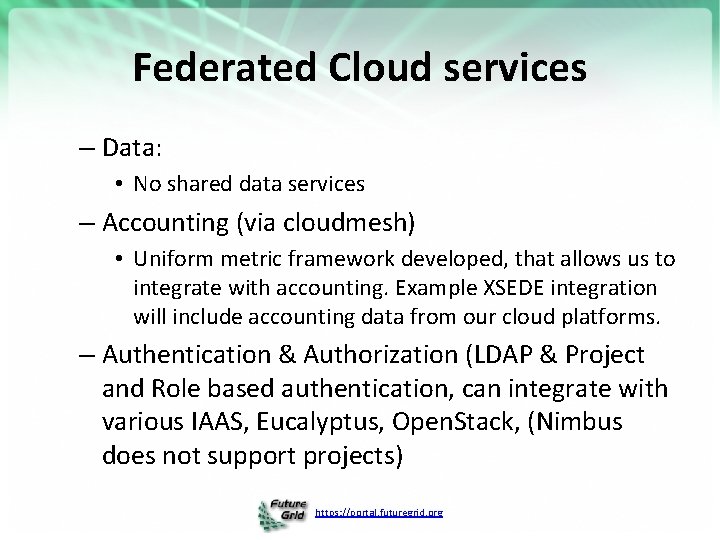

Federated Cloud Services
– Data:
• No shared data services
– Accounting (via Cloudmesh):
• A uniform metric framework has been developed that allows us to integrate with accounting. For example, XSEDE integration will include accounting data from our cloud platforms.
– Authentication & Authorization:
• LDAP & project- and role-based authentication; can integrate with various IaaS such as Eucalyptus and OpenStack (Nimbus does not support projects)
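A uniform metric framework essentially maps each platform's usage data onto one record type before accounting sees it. A minimal sketch of that idea; `UsageRecord`, the converter functions, and especially the raw field names (`tenant`, `seconds`, `account`, `minutes`, ...) are invented for illustration and are not the real OpenStack or Eucalyptus formats.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    platform: str
    project: str
    instance_id: str
    wall_hours: float
    cores: int

    @property
    def core_hours(self) -> float:
        return self.wall_hours * self.cores


# Hypothetical raw formats: each platform reports usage differently.
def from_openstack_like(raw: dict) -> UsageRecord:
    return UsageRecord("openstack", raw["tenant"], raw["uuid"],
                       raw["seconds"] / 3600.0, raw["vcpus"])


def from_eucalyptus_like(raw: dict) -> UsageRecord:
    return UsageRecord("eucalyptus", raw["account"], raw["instance"],
                       raw["minutes"] / 60.0, raw["cpus"])


def core_hours_by_project(records) -> dict[str, float]:
    """Aggregate uniform records, e.g. before handing them to accounting."""
    totals: dict[str, float] = {}
    for r in records:
        totals[r.project] = totals.get(r.project, 0.0) + r.core_hours
    return totals


if __name__ == "__main__":
    records = [
        from_openstack_like({"tenant": "fg-1", "uuid": "a", "seconds": 7200, "vcpus": 2}),
        from_eucalyptus_like({"account": "fg-1", "instance": "i-1", "minutes": 30, "cpus": 4}),
    ]
    print(core_hours_by_project(records))  # {'fg-1': 6.0}
```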

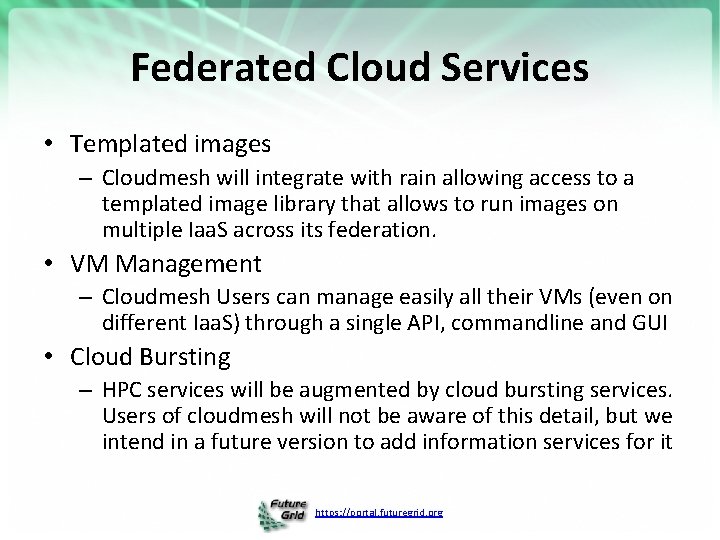

Federated Cloud Services
• Templated images
– Cloudmesh will integrate with Rain, allowing access to a templated image library from which images can be run on multiple IaaS across its federation
• VM Management
– Cloudmesh users can easily manage all their VMs (even on different IaaS) through a single API, command line, and GUI
• Cloud Bursting
– HPC services will be augmented by cloud bursting services. Users of Cloudmesh will not be aware of this detail, but we intend to add information services for it in a future version
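Managing VMs on different IaaS "through a single API" is commonly done with one adapter per platform behind a shared interface. A minimal sketch of that pattern; `CloudAdapter`, `InMemoryAdapter`, and `VMManager` are hypothetical, and the in-memory adapter stands in for real drivers (the libcloud and boto APIs are not reproduced here).

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from itertools import count


class CloudAdapter(ABC):
    """Common interface every IaaS adapter implements."""
    name: str

    @abstractmethod
    def start_vm(self, image: str) -> str: ...

    @abstractmethod
    def list_vms(self) -> list[str]: ...

    @abstractmethod
    def stop_vm(self, vm_id: str) -> None: ...


class InMemoryAdapter(CloudAdapter):
    """Fake cloud used for illustration and testing."""

    def __init__(self, name: str):
        self.name = name
        self._ids = count(1)
        self._vms: dict[str, str] = {}  # vm_id -> image

    def start_vm(self, image: str) -> str:
        vm_id = f"{self.name}-{next(self._ids)}"
        self._vms[vm_id] = image
        return vm_id

    def list_vms(self) -> list[str]:
        return sorted(self._vms)

    def stop_vm(self, vm_id: str) -> None:
        self._vms.pop(vm_id, None)


class VMManager:
    """Single entry point over all registered clouds."""

    def __init__(self, adapters: list[CloudAdapter]):
        self.adapters = {a.name: a for a in adapters}

    def start(self, cloud: str, image: str) -> str:
        return self.adapters[cloud].start_vm(image)

    def stop(self, cloud: str, vm_id: str) -> None:
        self.adapters[cloud].stop_vm(vm_id)

    def all_vms(self) -> dict[str, list[str]]:
        return {name: a.list_vms() for name, a in self.adapters.items()}
```

Usage: `VMManager([InMemoryAdapter("openstack"), InMemoryAdapter("ec2")])` lets the same `start`, `stop`, and `all_vms` calls work regardless of which cloud a VM runs on; adding a platform means writing one more adapter.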

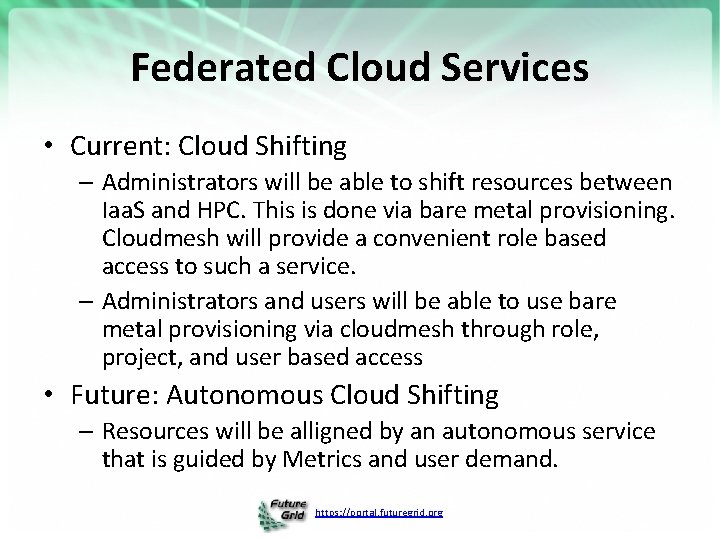

Federated Cloud Services
• Current: Cloud Shifting
– Administrators will be able to shift resources between IaaS and HPC. This is done via bare metal provisioning. Cloudmesh will provide convenient role-based access to such a service.
– Administrators and users will be able to use bare metal provisioning via Cloudmesh through role-, project-, and user-based access
• Future: Autonomous Cloud Shifting
– Resources will be aligned by an autonomous service that is guided by metrics and user demand
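An autonomous shifting service needs some policy that turns metrics and demand into a decision. A minimal sketch of one possible policy, assuming demand is measured as queued requests (in nodes) on each side and that only idle nodes may be moved, at most `max_step` per decision; the function name, inputs, and thresholds are all assumptions.

```python
def nodes_to_shift(hpc_queue: int, cloud_queue: int,
                   hpc_idle: int, cloud_idle: int,
                   max_step: int = 4) -> int:
    """Decide how many bare metal nodes to shift between IaaS and HPC.

    Positive result: shift that many nodes from IaaS to HPC.
    Negative result: shift that many nodes from HPC to IaaS.
    Zero: leave the allocation unchanged.

    Only idle nodes are candidates, and at most max_step nodes move per
    decision so that the allocation changes gradually.
    """
    if hpc_queue > cloud_queue and cloud_idle > 0:
        return min(cloud_idle, hpc_queue - cloud_queue, max_step)
    if cloud_queue > hpc_queue and hpc_idle > 0:
        return -min(hpc_idle, cloud_queue - hpc_queue, max_step)
    return 0


if __name__ == "__main__":
    # HPC has more queued demand and the cloud side has 3 idle nodes.
    print(nodes_to_shift(hpc_queue=10, cloud_queue=2, hpc_idle=0, cloud_idle=3))  # 3
```

Limiting each step is a design choice: bare metal re-provisioning is slow, so oscillating back and forth on noisy metrics would waste more capacity than it gains.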

Cloudmesh TestbedaaS Tool Screenshots

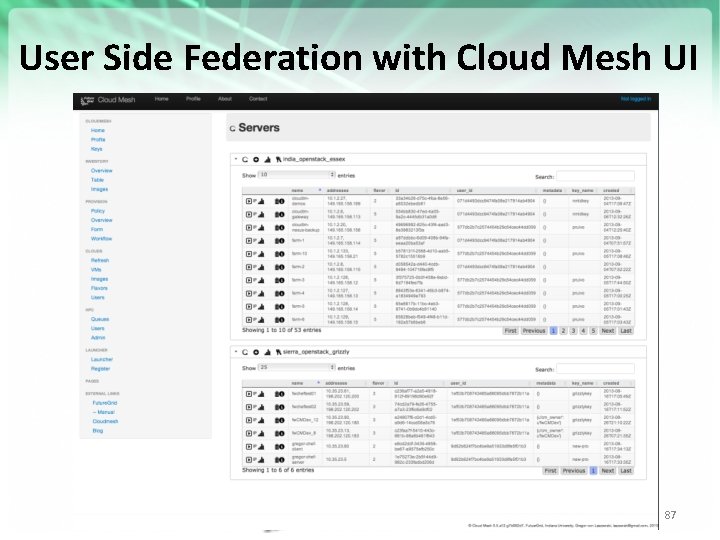

User Side Federation with CloudMesh UI

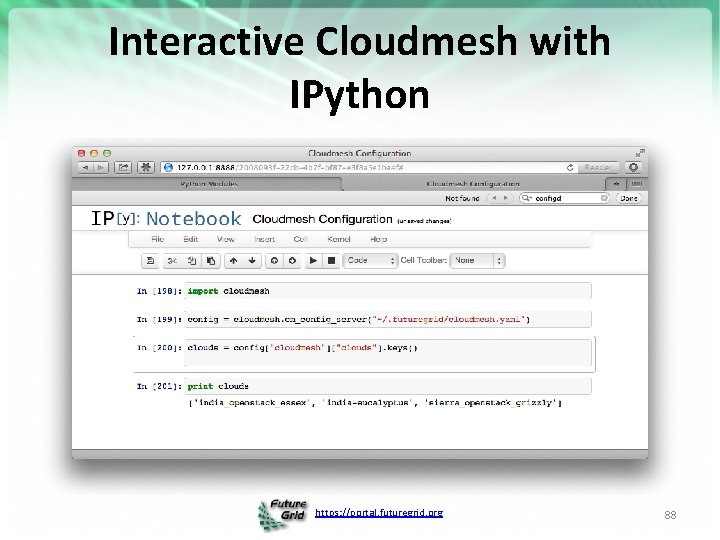

Interactive Cloudmesh with IPython

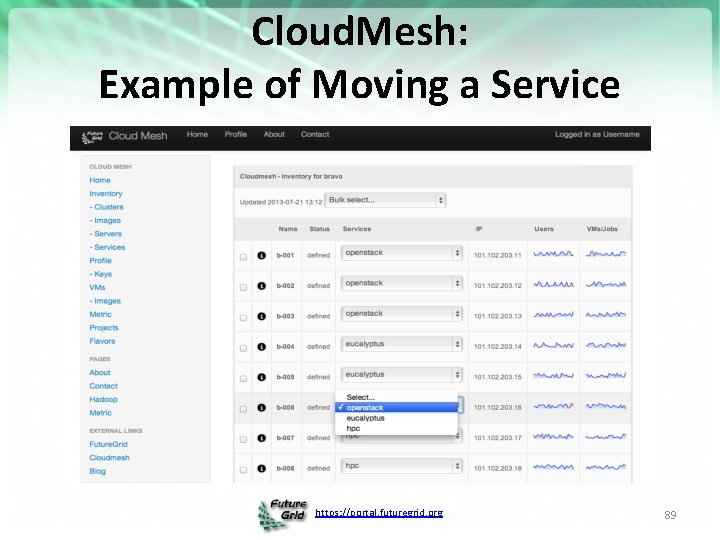

CloudMesh: Example of Moving a Service

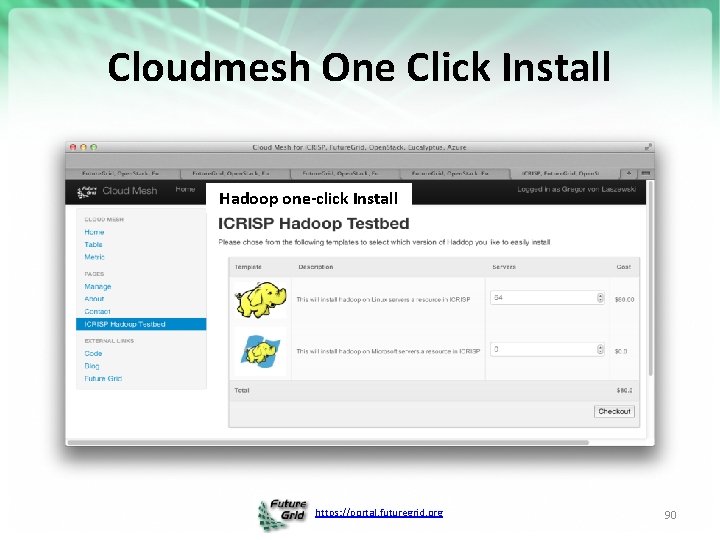

Cloudmesh One-Click Install: Hadoop One-Click Install

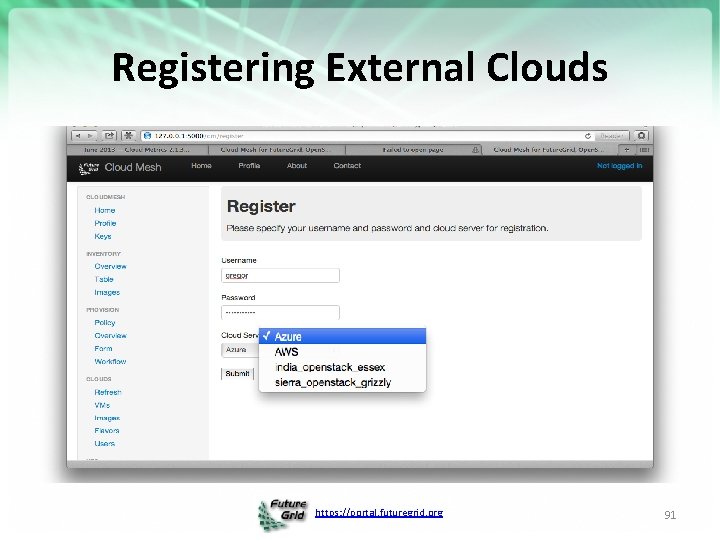

Registering External Clouds

Details – Security

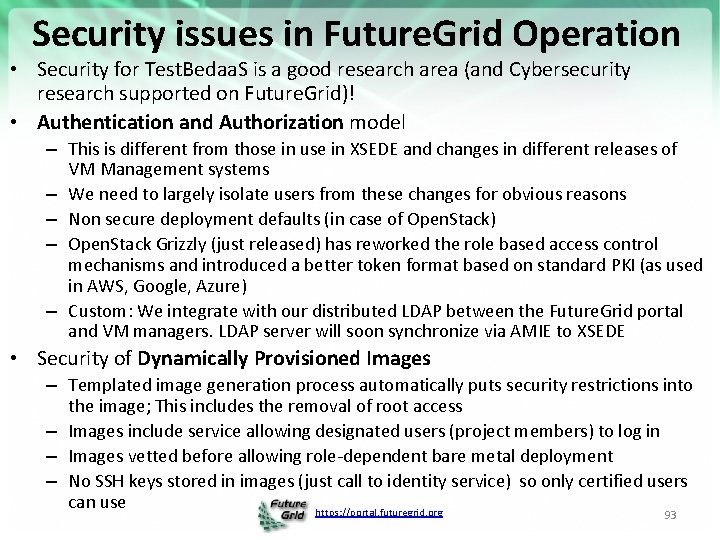

Security Issues in FutureGrid Operation
• Security for TestbedaaS is a good research area (and cybersecurity research is supported on FutureGrid)!
• Authentication and Authorization model
– This is different from the models used in XSEDE and changes between releases of VM management systems
– We need to largely isolate users from these changes, for obvious reasons
– Non-secure deployment defaults (in the case of OpenStack)
– OpenStack Grizzly (just released) has reworked the role-based access control mechanisms and introduced a better token format based on standard PKI (as used in AWS, Google, Azure)
– Custom: we integrate our distributed LDAP between the FutureGrid portal and the VM managers. The LDAP server will soon synchronize with XSEDE via AMIE
• Security of Dynamically Provisioned Images
– The templated image generation process automatically puts security restrictions into the image; this includes the removal of root access
– Images include a service allowing designated users (project members) to log in
– Images are vetted before role-dependent bare metal deployment is allowed
– No SSH keys are stored in images (just a call to the identity service), so only certified users can use them
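Image vetting before deployment can be pictured as a set of automated checks over the image's files. A minimal sketch covering two of the restrictions listed above (no stored SSH keys, no root login), assuming the image is modelled as a mapping from path to file content; a real vetting step would inspect the mounted image, and `vet_image` and the path patterns are hypothetical.

```python
from __future__ import annotations

import fnmatch

# Locations where user SSH keys must not be baked into an image.
FORBIDDEN_KEY_PATTERNS = [
    "/root/.ssh/authorized_keys",
    "/home/*/.ssh/authorized_keys",
]


def vet_image(files: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the image passes."""
    problems = []
    for path in sorted(files):
        if any(fnmatch.fnmatch(path, pat) for pat in FORBIDDEN_KEY_PATTERNS):
            problems.append(f"stored key material: {path}")
    # sshd_config keywords are case-insensitive. An absent PermitRootLogin
    # falls back to the sshd default, which this sketch does not check.
    sshd = files.get("/etc/ssh/sshd_config", "")
    for line in sshd.splitlines():
        parts = line.split()
        if (len(parts) >= 2 and parts[0].lower() == "permitrootlogin"
                and parts[1].lower() != "no"):
            problems.append(f"root login allowed: {line.strip()}")
    return problems


if __name__ == "__main__":
    image = {
        "/etc/ssh/sshd_config": "PermitRootLogin yes\n",
        "/home/alice/.ssh/authorized_keys": "ssh-rsa AAAA...",
    }
    for problem in vet_image(image):
        print(problem)
```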

Some Security Aspects in FG
• User Management
– Users are vetted twice:
• (a) when they come to the portal, all users are checked to see if they are technical people who could potentially benefit from a project
• (b) when a project is proposed, the proposer is checked again
– Surprisingly, so far vetting of most users is simple
– Many portals do not do (a)
• therefore they have many spammers and people not actually interested in the technology
• As we have wiki and forum functionality in the portal, we need (a) so we can avoid vetting every change in the portal, which is too time-consuming

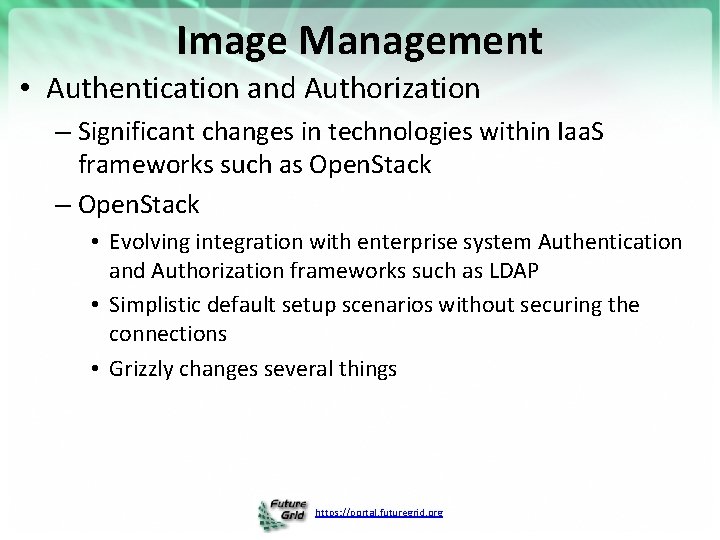

Image Management
• Authentication and Authorization
– Significant changes in technologies within IaaS frameworks such as OpenStack
– OpenStack
• Evolving integration with enterprise authentication and authorization frameworks such as LDAP
• Simplistic default setup scenarios without securing the connections
• Grizzly changes several things

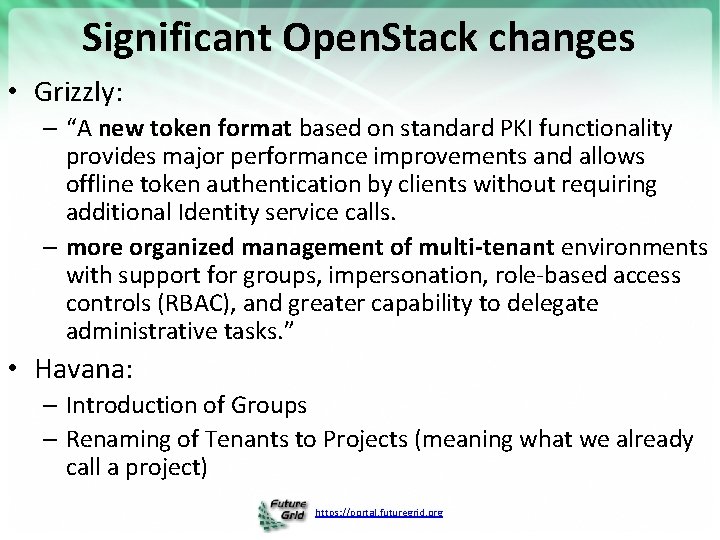

Significant OpenStack Changes
• Grizzly:
– “A new token format based on standard PKI functionality provides major performance improvements and allows offline token authentication by clients without requiring additional Identity service calls.
– more organized management of multi-tenant environments with support for groups, impersonation, role-based access controls (RBAC), and greater capability to delegate administrative tasks.”
• Havana:
– Introduction of Groups
– Renaming of Tenants to Projects (meaning what we already call a project)

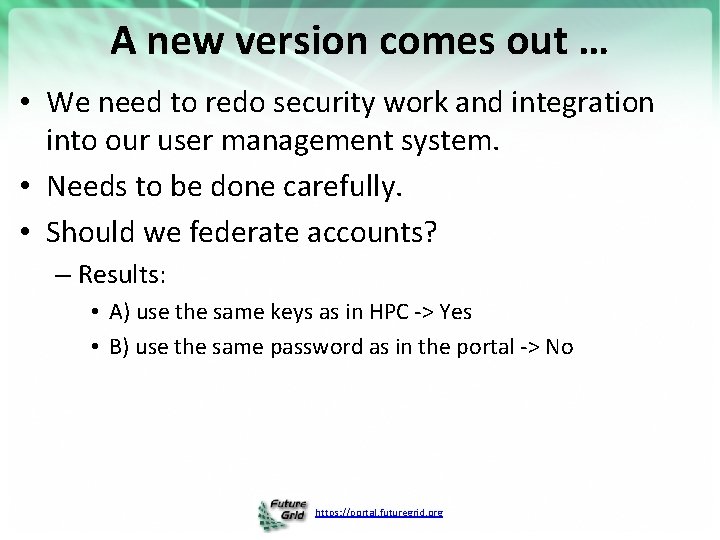

A New Version Comes Out …
• We need to redo the security work and its integration into our user management system
• This needs to be done carefully
• Should we federate accounts?
– Results:
• A) Use the same keys as in HPC -> Yes
• B) Use the same password as in the portal -> No

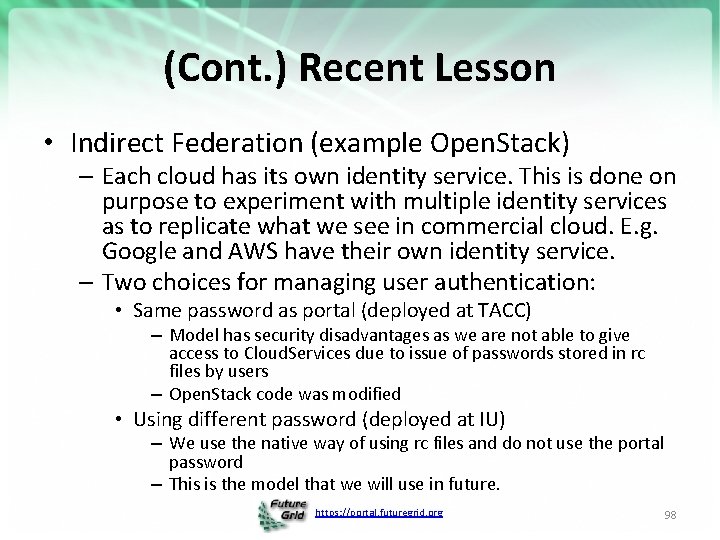

(Cont.) Recent Lesson
• Indirect Federation (example: OpenStack)
– Each cloud has its own identity service. This is done on purpose, to experiment with multiple identity services and to replicate what we see in commercial clouds; e.g. Google and AWS each have their own identity service.
– Two choices for managing user authentication:
• Same password as the portal (deployed at TACC)
– This model has security disadvantages: we are not able to give access to cloud services because users store their passwords in rc files
– OpenStack code was modified
• Using a different password (deployed at IU)
– We use the native way of using rc files and do not use the portal password
– This is the model that we will use in the future

Federation with XSEDE
• We can receive new user requests from XSEDE and create accounts for such users
• How do we approach SSO?
– The Grid community has made this a major task
– However, we are not just about XSEDE resources; what about EGI, GENI, …, Azure, Google, AWS?
– Two models: (a) VOs with federated authentication and authorization; (b) user-based federation, where the user manages multiple logins to various services through a key-ring with multiple keys
• We believe that for the majority of our users (b) was sufficient. However, we use the same keys on HPC as we do on clouds.

Details – Image Generation

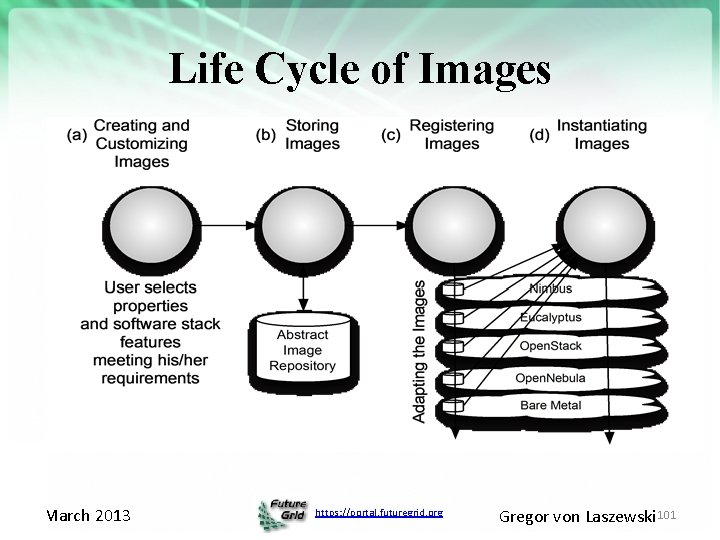

Life Cycle of Images (March 2013, Gregor von Laszewski)

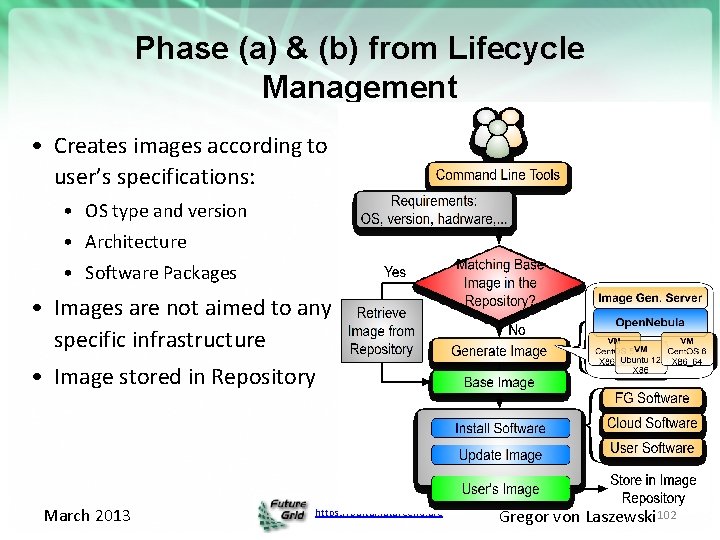

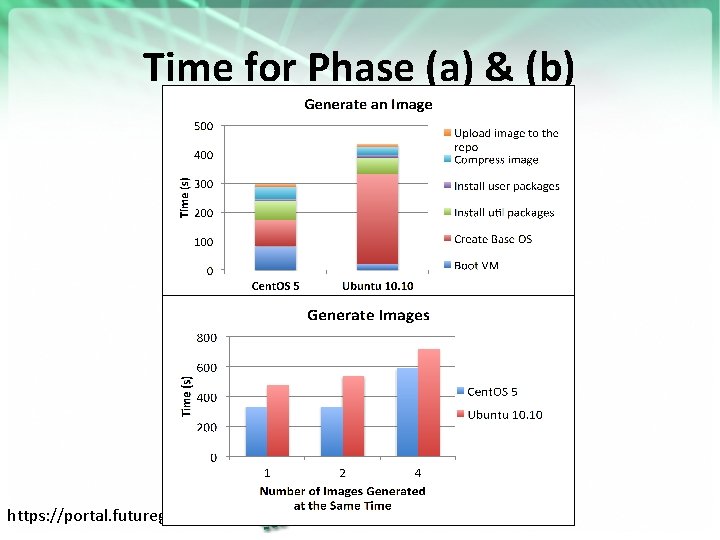

Phase (a) & (b) from Lifecycle Management
• Creates images according to the user's specifications:
– OS type and version
– Architecture
– Software packages
• Images are not aimed at any specific infrastructure
• Image is stored in the repository
(March 2013, Gregor von Laszewski)
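An infrastructure-neutral image specification can be made concrete as a small, hashable description stored in a repository. A minimal sketch, assuming the spec holds only the fields listed above and that the repository deduplicates identical specs by a content hash; `ImageSpec`, `ImageRepository`, and the hashing scheme are hypothetical.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ImageSpec:
    os: str
    version: str
    arch: str
    packages: tuple[str, ...]
    # Deliberately no field naming an IaaS: the spec is infrastructure-neutral.

    def key(self) -> str:
        # Sort packages so that ordering differences do not create new images.
        canonical = dict(asdict(self), packages=sorted(self.packages))
        blob = json.dumps(canonical, sort_keys=True).encode()
        return hashlib.sha256(blob).hexdigest()[:12]


class ImageRepository:
    """In-memory stand-in for the image repository."""

    def __init__(self):
        self._store: dict[str, ImageSpec] = {}

    def put(self, spec: ImageSpec) -> str:
        key = spec.key()
        self._store.setdefault(key, spec)  # identical specs share one entry
        return key

    def get(self, key: str) -> ImageSpec:
        return self._store[key]

    def __len__(self) -> int:
        return len(self._store)


if __name__ == "__main__":
    repo = ImageRepository()
    k1 = repo.put(ImageSpec("centos", "6.3", "x86_64", ("hadoop", "openmpi")))
    k2 = repo.put(ImageSpec("centos", "6.3", "x86_64", ("openmpi", "hadoop")))
    print(k1 == k2, len(repo))  # True 1
```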

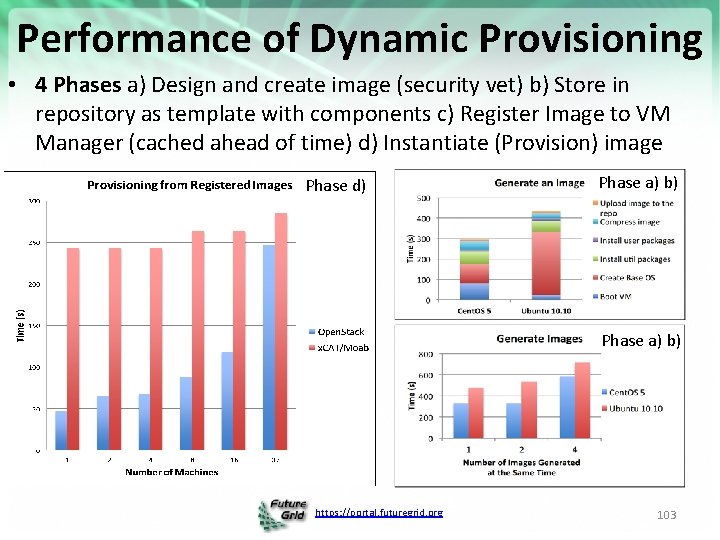

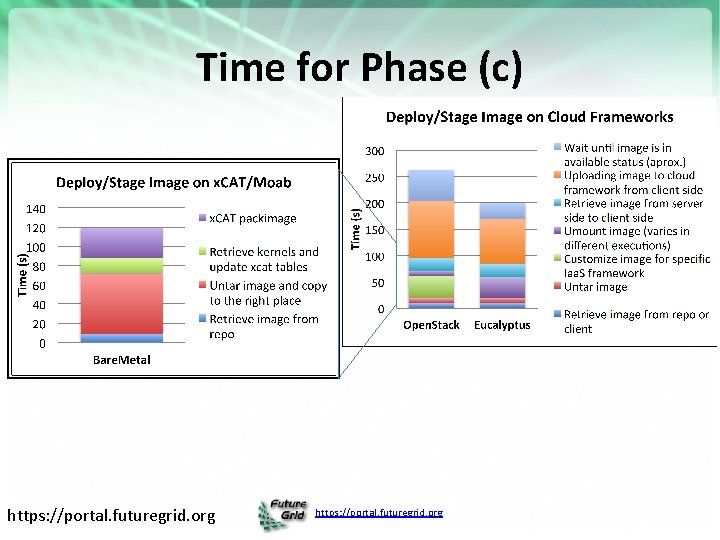

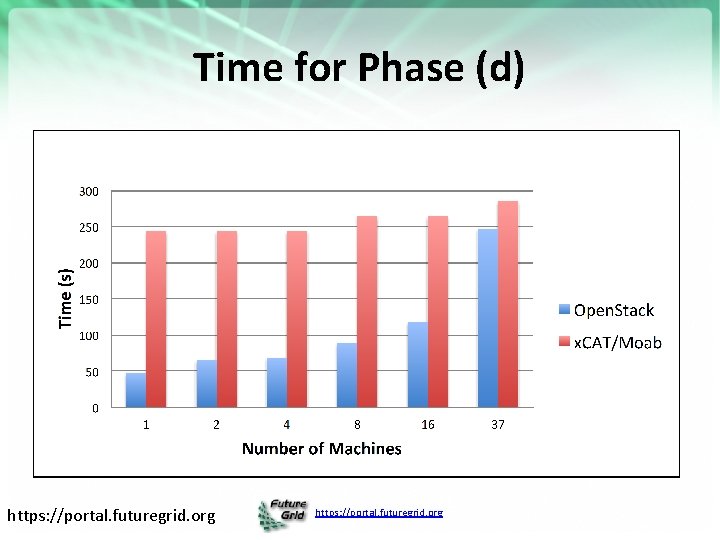

Performance of Dynamic Provisioning
• 4 Phases:
a) Design and create image (security vet)
b) Store in repository as template with components
c) Register image to VM manager (cached ahead of time)
d) Instantiate (provision) image
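Because phases (a) and (b) are reused through the repository and phase (c) is cached ahead of time, the time a user actually waits depends on which phases are already done. A minimal timing model of that reasoning; `provisioning_time` is hypothetical and the per-phase durations in the usage example are placeholders, not FutureGrid measurements.

```python
from __future__ import annotations


def provisioning_time(phase_seconds: dict[str, float],
                      image_in_repo: bool,
                      registered: bool) -> float:
    """Total provisioning time given which phases are already done.

    phase_seconds holds durations for phases "a", "b", "c", and "d".
    """
    if registered and not image_in_repo:
        raise ValueError("an image cannot be registered before it exists")
    total = 0.0
    if not image_in_repo:
        total += phase_seconds["a"] + phase_seconds["b"]  # create + store template
    if not registered:
        total += phase_seconds["c"]                       # register with VM manager
    total += phase_seconds["d"]                           # instantiation always runs
    return total


if __name__ == "__main__":
    placeholder = {"a": 300.0, "b": 20.0, "c": 60.0, "d": 90.0}
    print(provisioning_time(placeholder, image_in_repo=False, registered=False))  # 470.0
    print(provisioning_time(placeholder, image_in_repo=True, registered=True))    # 90.0
```

The model makes the point of caching explicit: once (a) to (c) are done ahead of time, only phase (d) remains on the user's critical path.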

Time for Phase (a) & (b)

Time for Phase (c)

Time for Phase (d)

Why is bare metal slower?
• HPC bare metal is slower, as the time is dominated by the last phase, which includes a bare metal boot
• In clouds we do lots of things in memory and avoid a bare metal boot by using an in-memory boot
• We intend to repeat the experiments with Grizzly and will then have more servers

Details – Monitoring on FutureGrid
• Monitoring and metrics are critical for a testbed

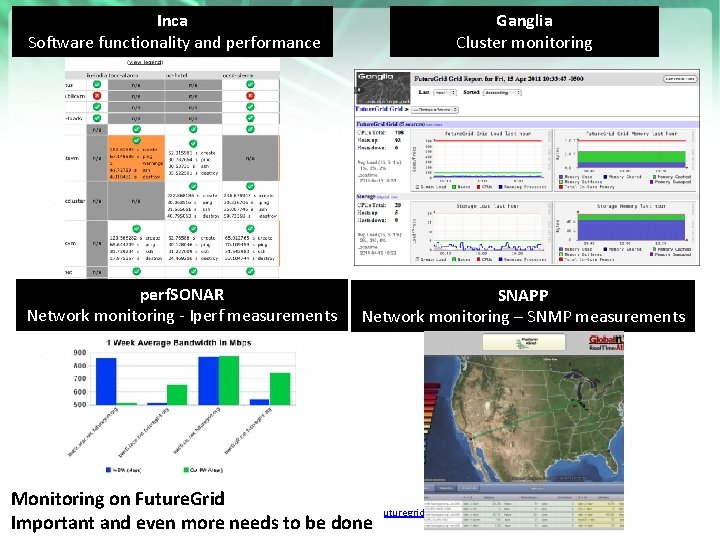

Monitoring on FutureGrid
• Inca: software functionality and performance
• perfSONAR: network monitoring, Iperf measurements
• Ganglia: cluster monitoring
• SNAPP: network monitoring, SNMP measurements
• Important, and even more needs to be done

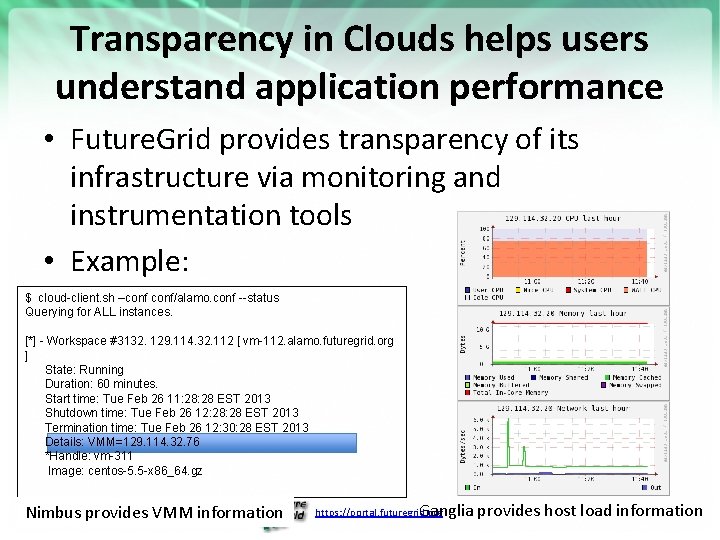

Transparency in Clouds helps users understand application performance
• FutureGrid provides transparency of its infrastructure via monitoring and instrumentation tools
• Example:
$ cloud-client.sh --conf/alamo.conf --status
Querying for ALL instances.
[*] - Workspace #3132. 129.114.32.112 [ vm-112.alamo.futuregrid.org ]
State: Running
Duration: 60 minutes.
Start time: Tue Feb 26 11:28 EST 2013
Shutdown time: Tue Feb 26 12:28 EST 2013
Termination time: Tue Feb 26 12:30:28 EST 2013
Details: VMM=129.114.32.76
*Handle: vm-311
Image: centos-5.5-x86_64.gz
• Nimbus provides VMM information
• Ganglia provides host load information
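The value of this transparency comes from joining the two sources: Nimbus says which VMM hosts a VM, and Ganglia says how loaded that host is. A minimal sketch that parses the status text from the example and looks the VMM up in a load table; the load values are invented, in practice they would come from Ganglia rather than a literal dict, and `parse_status` and `vmm_load` are hypothetical helpers.

```python
from __future__ import annotations

import re

# Abridged copy of the cloud-client.sh status output from the example.
STATUS = """\
[*] - Workspace #3132. 129.114.32.112 [ vm-112.alamo.futuregrid.org ]
State: Running
Details: VMM=129.114.32.76
*Handle: vm-311
"""

# Field name -> regex capturing it from the status text.
PATTERNS = {
    "hostname": r"\[\s+(\S+)\s+\]",  # requires spaces, so "[*]" is skipped
    "state": r"State:\s*(\S+)",
    "vmm": r"VMM=(\S+)",
    "handle": r"Handle:\s*(\S+)",
}


def parse_status(text: str) -> dict[str, str]:
    """Extract the fields listed in PATTERNS from status text."""
    info = {}
    for key, pattern in PATTERNS.items():
        match = re.search(pattern, text)
        if match:
            info[key] = match.group(1)
    return info


def vmm_load(text: str, host_load: dict[str, float]) -> float | None:
    """Load of the VMM hosting this VM, or None if it is unknown."""
    return host_load.get(parse_status(text).get("vmm", ""))


if __name__ == "__main__":
    ganglia_load = {"129.114.32.76": 0.85}  # invented value for illustration
    print(parse_status(STATUS)["hostname"])  # vm-112.alamo.futuregrid.org
    print(vmm_load(STATUS, ganglia_load))    # 0.85
```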

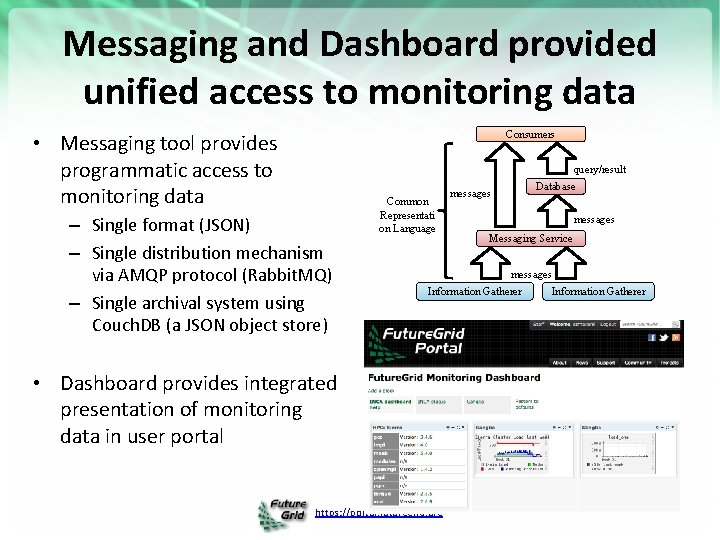

Messaging and Dashboard provide unified access to monitoring data
• Messaging tool provides programmatic access to monitoring data
– Single format (JSON)
– Single distribution mechanism via the AMQP protocol (RabbitMQ)
– Single archival system using CouchDB (a JSON object store)
• Dashboard provides integrated presentation of monitoring data in the user portal
(Diagram components: Information Gatherers, Messaging Service, Database, Consumers (query/result), Common Representation Language)
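The "single format, single distribution mechanism, single archive" design can be sketched without external services. In this minimal sketch `queue.Queue` stands in for AMQP/RabbitMQ and a Python list stands in for CouchDB; the message fields, `make_message`, and `InMemoryBroker` are assumptions, not the FutureGrid message schema.

```python
from __future__ import annotations

import json
import queue
import time


def make_message(source: str, host: str, metric: str, value: float) -> str:
    """Every information gatherer emits the same JSON shape."""
    return json.dumps({"source": source, "host": host, "metric": metric,
                       "value": value, "time": time.time()}, sort_keys=True)


class InMemoryBroker:
    """Stand-in for the messaging service plus archive."""

    def __init__(self):
        self.subscribers: list[queue.Queue] = []
        self.archive: list[dict] = []  # CouchDB plays this role in FutureGrid

    def subscribe(self) -> queue.Queue:
        q: queue.Queue = queue.Queue()
        self.subscribers.append(q)
        return q

    def publish(self, message: str) -> None:
        doc = json.loads(message)  # rejects anything that is not valid JSON
        self.archive.append(doc)   # archive every message
        for q in self.subscribers:
            q.put(doc)             # fan out to all consumers


if __name__ == "__main__":
    broker = InMemoryBroker()
    dashboard = broker.subscribe()
    broker.publish(make_message("ganglia", "c001", "load_one", 0.5))
    print(dashboard.get_nowait()["metric"])  # load_one
```

Because gatherers such as Inca, Ganglia, or perfSONAR wrappers would all emit the same shape, consumers like the dashboard need only one parser.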

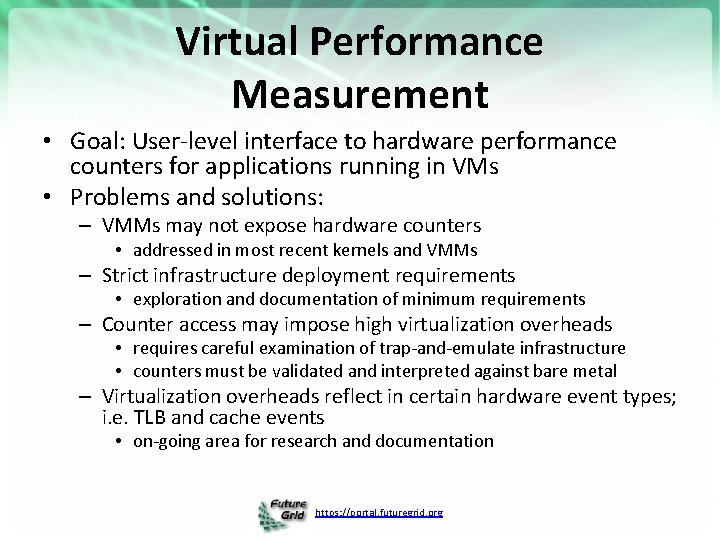

Virtual Performance Measurement
• Goal: user-level interface to hardware performance counters for applications running in VMs
• Problems and solutions:
– VMMs may not expose hardware counters
• Addressed in the most recent kernels and VMMs
– Strict infrastructure deployment requirements
• Exploration and documentation of minimum requirements
– Counter access may impose high virtualization overheads
• Requires careful examination of the trap-and-emulate infrastructure
• Counters must be validated and interpreted against bare metal
– Virtualization overheads are reflected in certain hardware event types, e.g. TLB and cache events
• Ongoing area for research and documentation

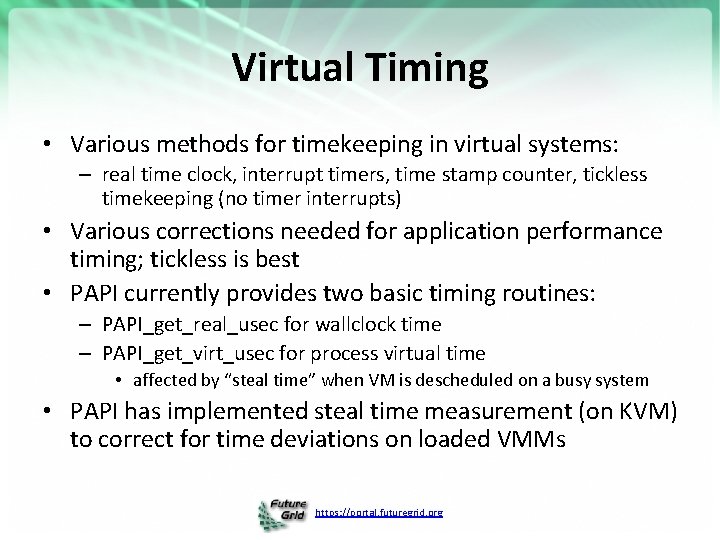

Virtual Timing
• Various methods for timekeeping in virtual systems:
– real time clock, interrupt timers, time stamp counter, tickless timekeeping (no timer interrupts)
• Various corrections are needed for application performance timing; tickless is best
• PAPI currently provides two basic timing routines:
– PAPI_get_real_usec for wallclock time
– PAPI_get_virt_usec for process virtual time
• affected by "steal time" when the VM is descheduled on a busy system
• PAPI has implemented steal time measurement (on KVM) to correct for time deviations on loaded VMMs
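The idea of steal-time correction can be tried from inside a Linux guest with the Python standard library; this is an analogy, not PAPI. `time.perf_counter` plays the role of wallclock time and `time.process_time` of process virtual time. The aggregate `cpu` line of `/proc/stat` reports steal as its 8th value. The correction below assumes steal is spread evenly over all CPUs and scales wall time by the non-stolen fraction; that simplification, and all function names, are assumptions.

```python
from __future__ import annotations

import time


def cpu_times(proc_stat_text: str) -> tuple[int, int]:
    """Return (steal_ticks, total_ticks) from the aggregate "cpu" line.

    Fields: cpu user nice system idle iowait irq softirq steal [guest ...].
    guest/guest_nice are already counted in user/nice, so only the first
    eight values are summed.
    """
    for line in proc_stat_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "cpu":
            values = [int(v) for v in fields[1:]]
            steal = values[7] if len(values) > 7 else 0
            return steal, sum(values[:8])
    return 0, 0


def read_cpu_times() -> tuple[int, int]:
    try:
        with open("/proc/stat") as f:
            return cpu_times(f.read())
    except OSError:  # not Linux, or /proc unavailable
        return 0, 0


def corrected_wall(wall: float, before: tuple[int, int],
                   after: tuple[int, int]) -> float:
    """Scale wall time by the fraction of CPU time that was not stolen."""
    d_steal, d_total = after[0] - before[0], after[1] - before[1]
    if d_total <= 0:
        return wall
    return wall * (1.0 - d_steal / d_total)


def timed(fn) -> tuple[float, float, float]:
    """Return (wall_seconds, cpu_seconds, steal_corrected_wall_seconds)."""
    s0, w0, c0 = read_cpu_times(), time.perf_counter(), time.process_time()
    fn()
    w1, c1, s1 = time.perf_counter(), time.process_time(), read_cpu_times()
    wall = w1 - w0
    return wall, c1 - c0, corrected_wall(wall, s0, s1)
```

Usage: `timed(lambda: sum(range(10**6)))`; on an idle host the corrected and raw wall times coincide, while on a busy VMM the corrected value shrinks by the share of time the VM was descheduled.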

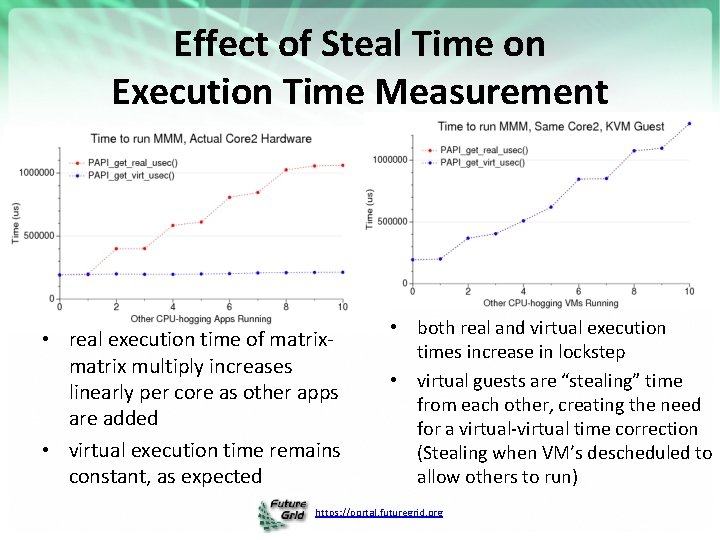

Effect of Steal Time on Execution Time Measurement
• Real execution time of matrix multiply increases linearly per core as other apps are added
• Virtual execution time remains constant, as expected
• Both real and virtual execution times increase in lockstep
• Virtual guests are "stealing" time from each other, creating the need for a virtual-virtual time correction (stealing occurs when VMs are descheduled to allow others to run)

Details – FutureGrid Appliances

Education and Training Use of FutureGrid
• 28 semester-long classes: 563+ students
– Cloud Computing, Distributed Systems, Scientific Computing and Data Analytics
• 3 one-week summer schools: 390+ students
– Big Data, Cloudy View of Computing (for HBCUs), Science Clouds
• 7 one- to three-day workshops/tutorials: 238 students
• Several undergraduate research REU (outreach) projects
• From 20 institutions
• Developing 2 MOOCs (Google Course Builder) on Cloud Computing and use of FutureGrid, supported by either FutureGrid or downloadable appliances (custom images)
– See http://iucloudsummerschool.appspot.com/preview and http://fgmoocs.appspot.com/preview
• FutureGrid appliances support Condor/MPI/Hadoop/Iterative MapReduce virtual clusters

Educational appliances in FutureGrid
• A flexible, extensible platform for hands-on, lab-oriented education on FutureGrid
• Executable modules: virtual appliances
– Deployable on FutureGrid resources
– Deployable on other cloud platforms, as well as virtualized desktops
• Community sharing
– Web 2.0 portal, appliance image repositories
– An aggregation hub for executable modules and documentation

Grid appliances on FutureGrid
• Virtual appliances
– Encapsulate software environment in image
• Virtual disk, virtual hardware configuration
• The Grid appliance
– Encapsulates cluster software environments
• Condor, MPI, Hadoop
– Homogeneous images at each node
– Virtual network forms a cluster
– Deploy within or across sites
• Same environment on a variety of platforms
– FutureGrid clouds; student desktop; private cloud; Amazon EC2; …

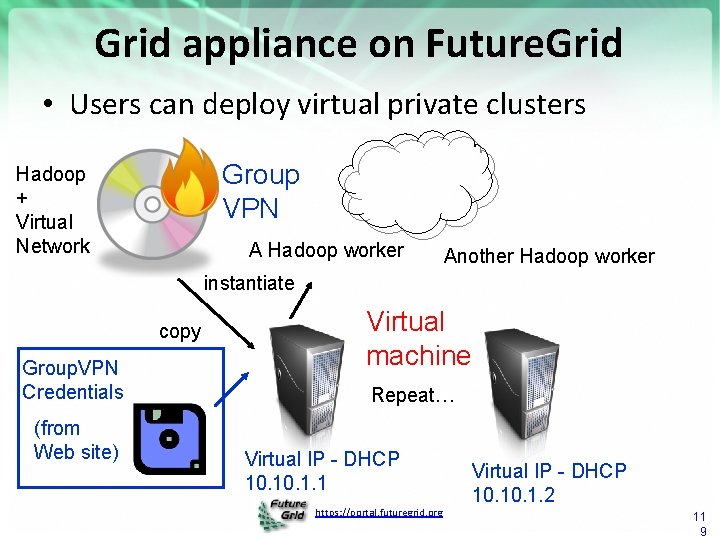

Grid appliance on FutureGrid
• Users can deploy virtual private clusters
(Diagram: a virtual machine is instantiated with GroupVPN credentials from the web site and joins the Group VPN (Hadoop + Virtual Network) as a Hadoop worker; copying and repeating adds another Hadoop worker. Each worker obtains a virtual IP via DHCP: 10.1.1, 10.1.2)
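Each worker that joins the virtual private cluster needs its own virtual IP, which the slide attributes to DHCP. A minimal sketch of DHCP-style lease handling for joining workers, using the standard `ipaddress` module; the subnet `10.10.0.0/24`, `VirtualIPPool`, and the worker names are assumptions, not the Grid appliance's actual GroupVPN implementation.

```python
from __future__ import annotations

import ipaddress


class VirtualIPPool:
    """Hands out addresses from a private virtual subnet to joining workers."""

    def __init__(self, cidr: str = "10.10.0.0/24"):
        # hosts() excludes the network and broadcast addresses.
        self._free = list(ipaddress.ip_network(cidr).hosts())
        self.leases: dict[str, ipaddress.IPv4Address] = {}

    def join(self, worker: str) -> ipaddress.IPv4Address:
        """Give a worker an address; re-joining returns the same lease."""
        if worker in self.leases:
            return self.leases[worker]
        if not self._free:
            raise RuntimeError("virtual subnet exhausted")
        addr = self._free.pop(0)
        self.leases[worker] = addr
        return addr

    def leave(self, worker: str) -> None:
        """Release a worker's lease so the address can be reused."""
        addr = self.leases.pop(worker, None)
        if addr is not None:
            self._free.insert(0, addr)


if __name__ == "__main__":
    pool = VirtualIPPool()
    print(pool.join("hadoop-worker-1"))  # 10.10.0.1
    print(pool.join("hadoop-worker-2"))  # 10.10.0.2
```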
