Functions of Random Variables

Methods for determining the distribution of functions of random variables

1. Distribution function method
2. Moment generating function method
3. Transformation method

Distribution function method

Let X, Y, Z, … have joint density f(x, y, z, …), and let W = h(X, Y, Z, …).

First step: find the distribution function of W,
G(w) = P[W ≤ w] = P[h(X, Y, Z, …) ≤ w].

Second step: find the density function of W,
g(w) = G'(w).
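As a worked illustration (not taken from the slides), take X uniform on (0, 1) and W = X². The first step gives G(w) = P[X² ≤ w] = P[X ≤ √w] = √w for 0 < w < 1, and the second step gives g(w) = G'(w) = 1/(2√w). The sketch below checks that G against a seeded simulation; the function names, sample size and seed are arbitrary choices for the example.

```python
import math
import random

def G(w):
    """Distribution function of W = X**2 for X ~ Uniform(0, 1)."""
    if w <= 0:
        return 0.0
    if w >= 1:
        return 1.0
    return math.sqrt(w)

def g(w):
    """Density of W: the derivative G'(w) = 1 / (2 * sqrt(w)) on (0, 1)."""
    return 1.0 / (2.0 * math.sqrt(w)) if 0 < w < 1 else 0.0

def empirical_cdf(w, n_samples=200_000, seed=0):
    """Monte Carlo estimate of P[X**2 <= w] (illustrative check only)."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n_samples) if rng.random() ** 2 <= w)
    return hits / n_samples

if __name__ == "__main__":
    for w in (0.1, 0.25, 0.8):
        print(w, G(w), empirical_cdf(w))
```

The simulated and exact values should agree to about two decimal places at this sample size.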

Use of moment generating functions

1. Using the moment generating functions of X, Y, Z, …, determine the moment generating function of W = h(X, Y, Z, …).
2. Identify the distribution of W from its moment generating function.

This procedure works well for sums, linear combinations, etc.

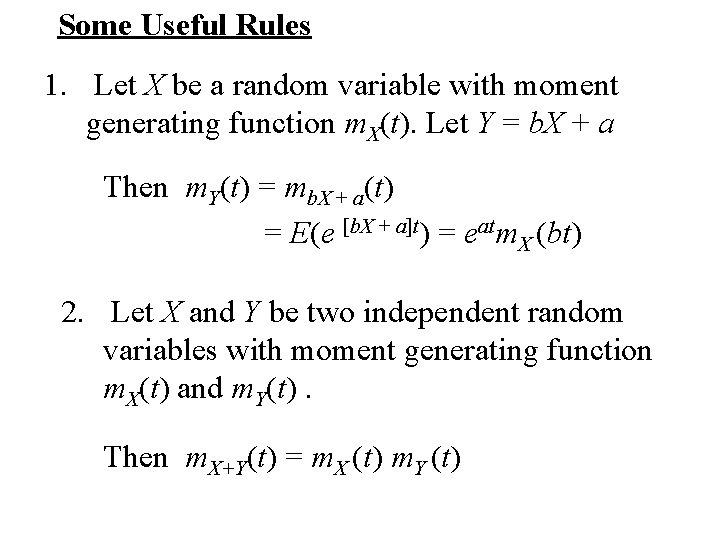

Some Useful Rules

1. Let X be a random variable with moment generating function $m_X(t)$, and let $Y = bX + a$. Then
   $m_Y(t) = m_{bX+a}(t) = E\left(e^{(bX+a)t}\right) = e^{at}\, m_X(bt)$.
2. Let X and Y be two independent random variables with moment generating functions $m_X(t)$ and $m_Y(t)$. Then
   $m_{X+Y}(t) = m_X(t)\, m_Y(t)$.
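Both rules can be checked numerically on small discrete distributions, where the moment generating function is a finite sum. The sketch below is an illustration only: the fair die, the fair coin, and the values of t, a and b are arbitrary choices, and the helper names are hypothetical.

```python
import math
from itertools import product

def mgf(pmf, t):
    """m_X(t) = E[e^{tX}] for a finite pmf given as {value: probability}."""
    return sum(p * math.exp(t * x) for x, p in pmf.items())

def affine(pmf, b, a):
    """pmf of Y = b*X + a."""
    out = {}
    for x, p in pmf.items():
        y = b * x + a
        out[y] = out.get(y, 0.0) + p
    return out

def independent_sum(pmf_x, pmf_y):
    """pmf of X + Y when X and Y are independent."""
    out = {}
    for (x, px), (y, py) in product(pmf_x.items(), pmf_y.items()):
        out[x + y] = out.get(x + y, 0.0) + px * py
    return out

die = {k: 1 / 6 for k in range(1, 7)}   # fair six-sided die
coin = {0: 0.5, 1: 0.5}                 # fair coin
t, a, b = 0.3, 2.0, -1.5

# Rule 1: m_{bX+a}(t) = e^{at} m_X(bt)
rule1_lhs = mgf(affine(die, b, a), t)
rule1_rhs = math.exp(a * t) * mgf(die, b * t)

# Rule 2: m_{X+Y}(t) = m_X(t) m_Y(t) for independent X and Y
rule2_lhs = mgf(independent_sum(die, coin), t)
rule2_rhs = mgf(die, t) * mgf(coin, t)

if __name__ == "__main__":
    print(rule1_lhs, rule1_rhs)
    print(rule2_lhs, rule2_rhs)
```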

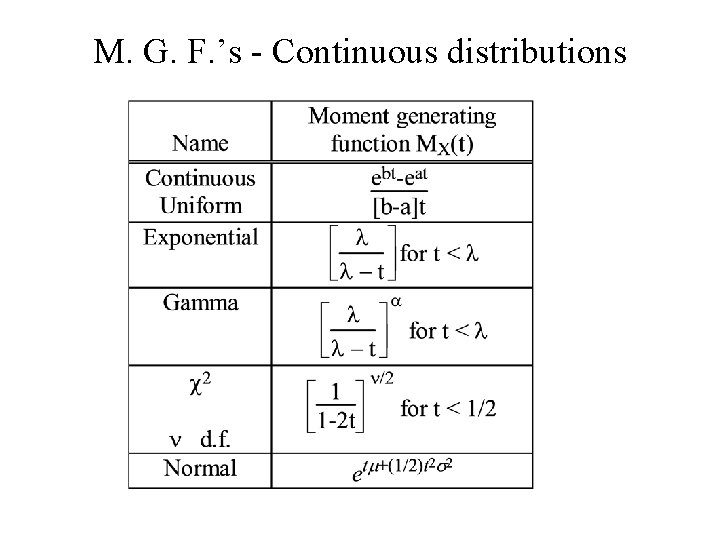

M.G.F.'s - Continuous distributions

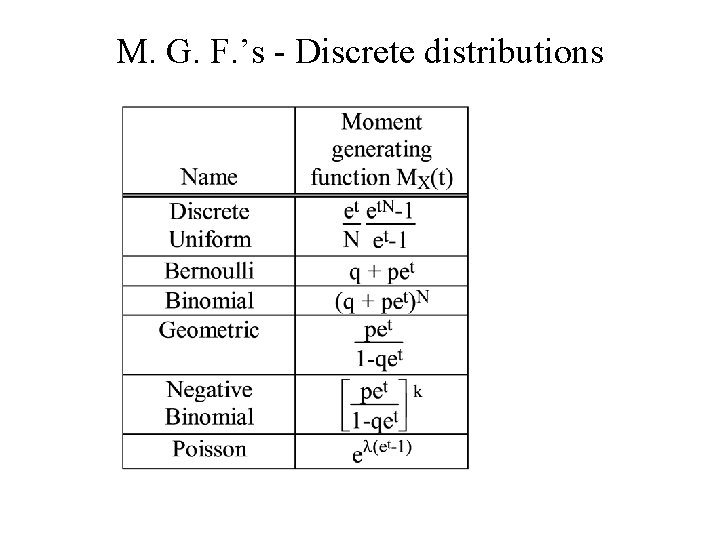

M.G.F.'s - Discrete distributions

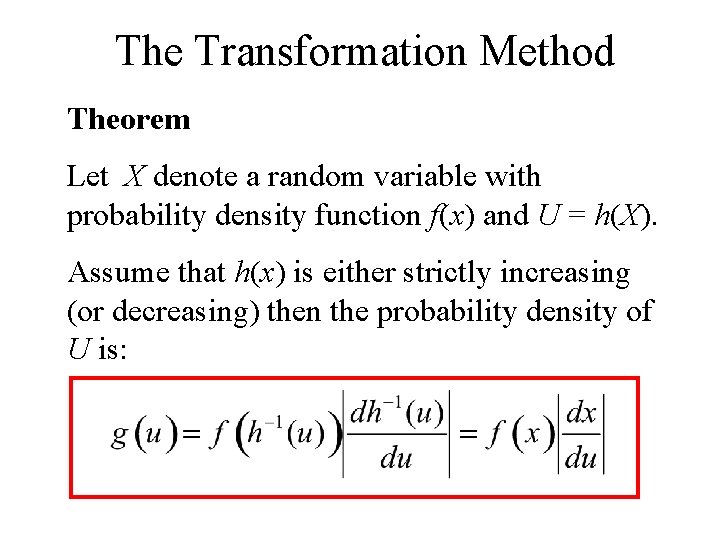

The Transformation Method

Theorem. Let X denote a random variable with probability density function f(x), and let U = h(X). Assume that h(x) is either strictly increasing or strictly decreasing. Then the probability density of U is:

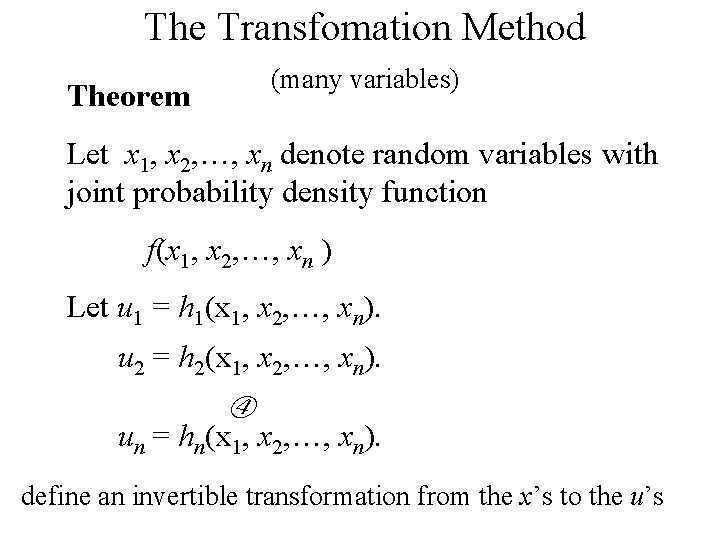

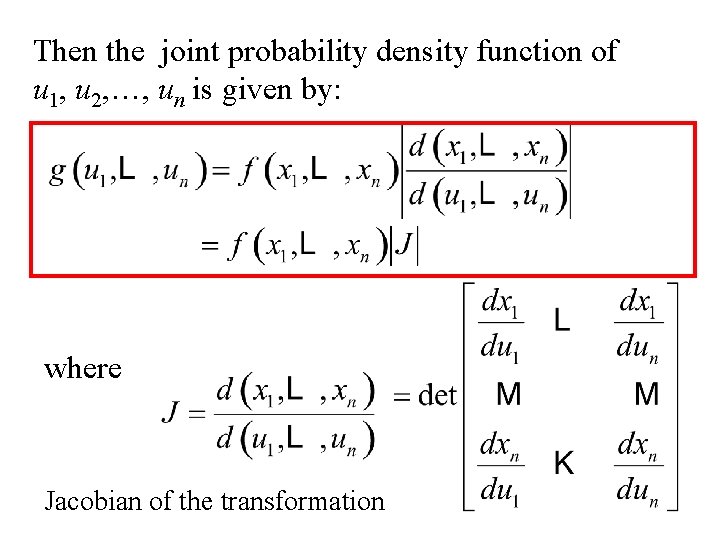
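A small numerical sketch of the one-variable theorem, assuming it takes its standard form $g(u) = f(h^{-1}(u))\,\left|\frac{d}{du}h^{-1}(u)\right|$. The example is not from the slides: X is exponential with rate 1 and U = √X, so $h^{-1}(u) = u^2$ and $g(u) = 2u\,e^{-u^2}$ for u > 0. Integrating g up to a point c should reproduce P[U ≤ c] = P[X ≤ c²] = 1 − e^{−c²}.

```python
import math

def f(x):
    """Density of X ~ Exponential(1)."""
    return math.exp(-x) if x > 0 else 0.0

def h_inv(u):
    """Inverse of the strictly increasing map h(x) = sqrt(x)."""
    return u * u

def h_inv_prime(u):
    """Derivative of the inverse map."""
    return 2.0 * u

def g(u):
    """Density of U = sqrt(X) via the transformation formula."""
    return f(h_inv(u)) * abs(h_inv_prime(u)) if u > 0 else 0.0

def integrate(func, lo, hi, steps=10_000):
    """Midpoint-rule approximation of the integral of func over [lo, hi]."""
    width = (hi - lo) / steps
    return width * sum(func(lo + (k + 0.5) * width) for k in range(steps))

c = 1.2
via_density = integrate(g, 0.0, c)      # integral of g from 0 to c
via_cdf = 1.0 - math.exp(-c * c)        # P[X <= c**2], computed directly

if __name__ == "__main__":
    print(via_density, via_cdf)
```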

The Transformation Method (many variables)

Theorem. Let $x_1, x_2, \dots, x_n$ denote random variables with joint probability density function $f(x_1, x_2, \dots, x_n)$. Let

$u_1 = h_1(x_1, x_2, \dots, x_n)$
$u_2 = h_2(x_1, x_2, \dots, x_n)$
$\vdots$
$u_n = h_n(x_1, x_2, \dots, x_n)$

define an invertible transformation from the x's to the u's.

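Then the joint probability density function of $u_1, u_2, \dots, u_n$ is given by

$g(u_1, \dots, u_n) = f(x_1, \dots, x_n)\,|J|$,

where each $x_i$ is written in terms of $u_1, \dots, u_n$ through the inverse transformation, and

$J = \det\left[\dfrac{\partial x_i}{\partial u_j}\right]_{i,j = 1, \dots, n}$

is the Jacobian of the transformation.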
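To make the Jacobian concrete, here is a sketch for an example that is not in the slides: $x_1, x_2$ independent exponential(1) variables with $u_1 = x_1 + x_2$ and $u_2 = x_1/(x_1 + x_2)$. The inverse is $x_1 = u_1 u_2$, $x_2 = u_1(1 - u_2)$, so by hand $J = -u_1$ and the joint density of $(u_1, u_2)$ is $u_1 e^{-u_1}$ on $u_1 > 0$, $0 < u_2 < 1$. The code approximates the Jacobian by central differences (a choice made for the illustration) and compares it with the hand computation.

```python
import math

def inverse(u1, u2):
    """The x's as functions of the u's for u1 = x1 + x2, u2 = x1 / (x1 + x2)."""
    return u1 * u2, u1 * (1.0 - u2)

def jacobian_det(u1, u2, eps=1e-6):
    """Determinant of the matrix [dx_i/du_j], by central differences."""
    a1, a2 = inverse(u1 + eps, u2)
    b1, b2 = inverse(u1 - eps, u2)
    c1, c2 = inverse(u1, u2 + eps)
    d1, d2 = inverse(u1, u2 - eps)
    dx1_du1 = (a1 - b1) / (2 * eps)
    dx2_du1 = (a2 - b2) / (2 * eps)
    dx1_du2 = (c1 - d1) / (2 * eps)
    dx2_du2 = (c2 - d2) / (2 * eps)
    return dx1_du1 * dx2_du2 - dx1_du2 * dx2_du1

def f(x1, x2):
    """Joint density of two independent Exponential(1) variables."""
    return math.exp(-(x1 + x2)) if x1 > 0 and x2 > 0 else 0.0

def g(u1, u2):
    """Joint density of (u1, u2): f at the inverse point times |J|."""
    if u1 <= 0 or not 0 < u2 < 1:
        return 0.0
    x1, x2 = inverse(u1, u2)
    return f(x1, x2) * abs(jacobian_det(u1, u2))

if __name__ == "__main__":
    print(jacobian_det(2.0, 0.3))            # hand computation gives -u1
    print(g(2.0, 0.3), 2.0 * math.exp(-2.0))
```

Because the resulting density factors into a function of $u_1$ alone, the example also shows $u_1$ and $u_2$ are independent, with $u_2$ uniform on (0, 1).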

The probability of a gambler's ruin

• Suppose a gambler is playing a game in which he wins 1 dollar with probability p and loses 1 dollar with probability q = 1 - p.
• Note that the game is fair if p = q = ½.
• Suppose also that he starts with an initial fortune of i dollars and plays until he either reaches a fortune of n dollars or loses all his money (his fortune reaches 0).
• What is the probability that he achieves his goal? What is the probability that he loses his fortune?

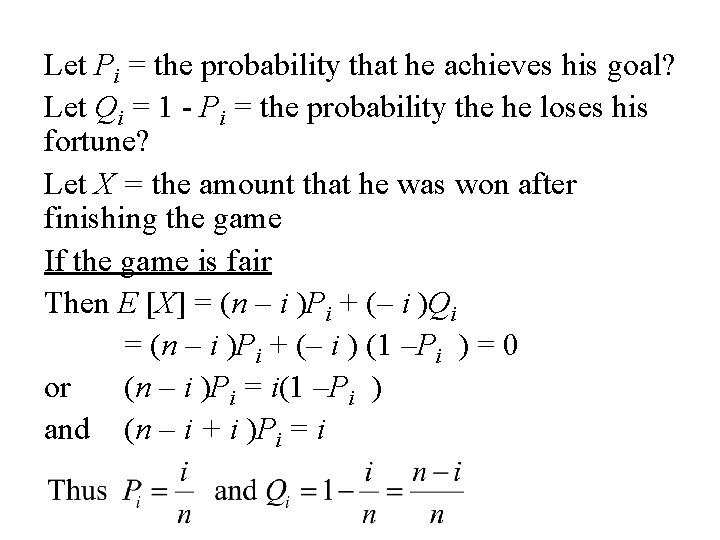

Let $P_i$ = the probability that he achieves his goal.
Let $Q_i = 1 - P_i$ = the probability that he loses his fortune.
Let X = the amount that he has won after finishing the game.

If the game is fair, then

$E[X] = (n - i)P_i + (-i)Q_i = (n - i)P_i + (-i)(1 - P_i) = 0$

or $(n - i)P_i = i(1 - P_i)$, and $(n - i + i)P_i = i$, so that $P_i = i/n$.

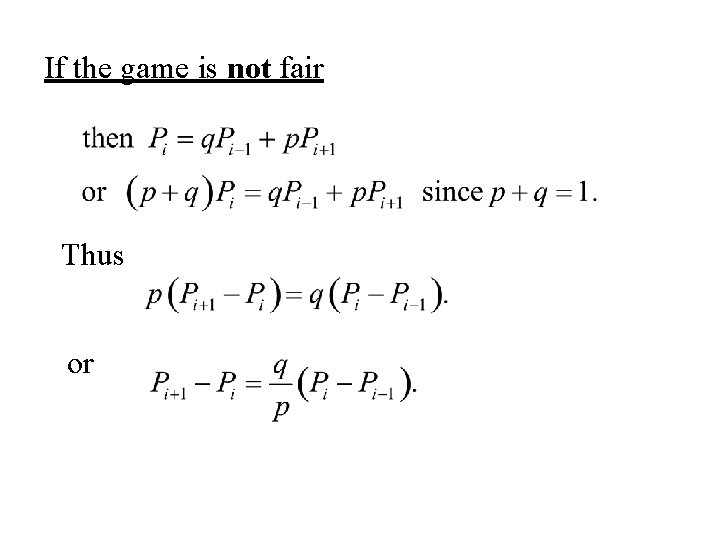

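If the game is not fair, condition on the outcome of the first play:

$P_i = p\,P_{i+1} + q\,P_{i-1}$.

Thus, since $p + q = 1$,

$(p + q)\,P_i = p\,P_{i+1} + q\,P_{i-1}$

or

$P_{i+1} - P_i = \dfrac{q}{p}\,(P_i - P_{i-1})$.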

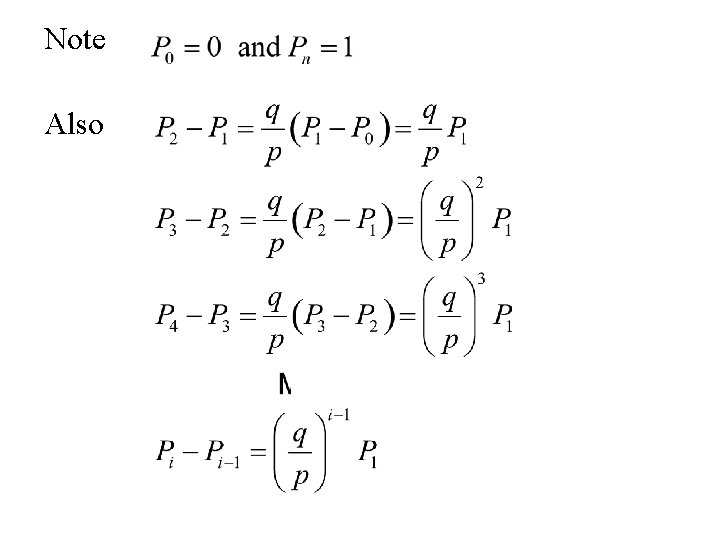

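Note that $P_0 = 0$ and $P_n = 1$. Also, writing $r = q/p$ and applying $P_{i+1} - P_i = r\,(P_i - P_{i-1})$ repeatedly gives $P_{i+1} - P_i = r^i\,(P_1 - P_0) = r^i P_1$.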

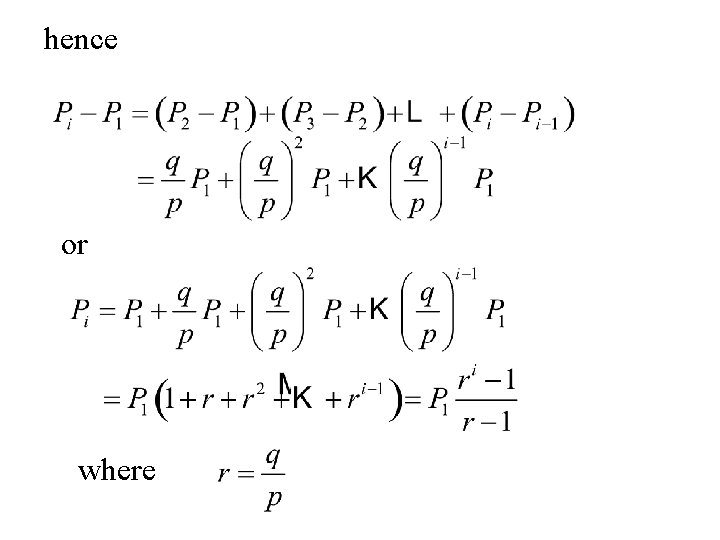

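Hence, summing the successive differences, $P_i = P_1\,(1 + r + \dots + r^{i-1})$, or $P_i = \dfrac{1 - r^i}{1 - r}\,P_1$, where $r = q/p \neq 1$.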

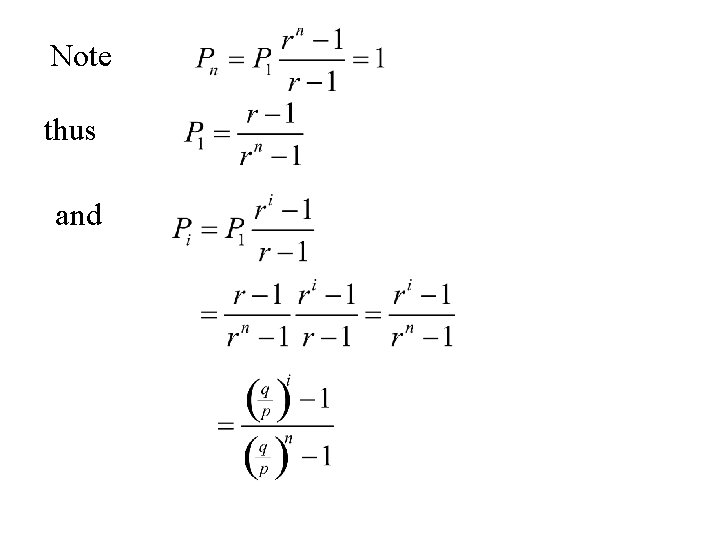

Note that $P_n = 1$; thus $P_1 = \dfrac{1 - r}{1 - r^n}$ and $P_i = \dfrac{1 - r^i}{1 - r^n}$, where $r = q/p$.

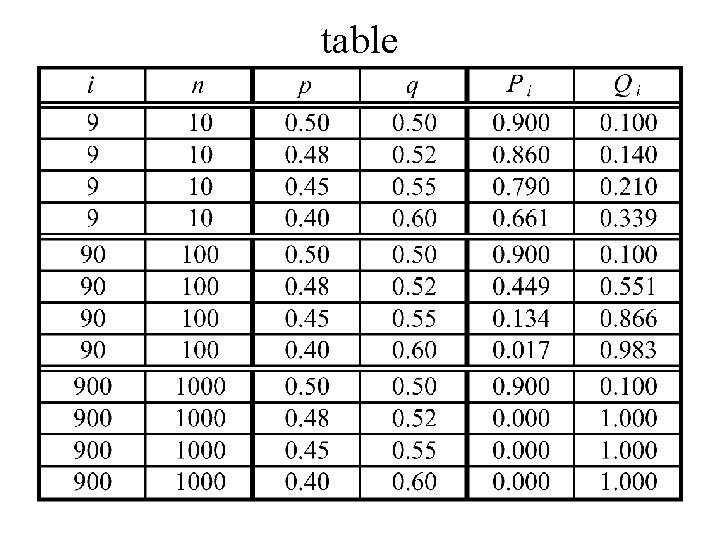
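The sketch below evaluates the gambler's probability of reaching n before 0, assuming the standard closed form that this derivation leads to: $P_i = i/n$ for a fair game and $P_i = (1 - r^i)/(1 - r^n)$ with $r = q/p$ otherwise. It also includes a seeded simulation as a sanity check. The function names, trial count and seed are hypothetical choices for the illustration, and p is assumed to lie strictly between 0 and 1.

```python
import random

def win_probability(i, n, p):
    """P_i: probability of reaching fortune n before 0, starting from i (0 < p < 1)."""
    q = 1.0 - p
    if abs(p - q) < 1e-12:          # fair game
        return i / n
    r = q / p
    return (1.0 - r ** i) / (1.0 - r ** n)

def simulate(i, n, p, trials=20_000, seed=1):
    """Monte Carlo estimate of P_i by playing the game repeatedly."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(trials):
        fortune = i
        while 0 < fortune < n:
            fortune += 1 if rng.random() < p else -1
        wins += fortune == n
    return wins / trials

if __name__ == "__main__":
    for p in (0.5, 0.45):
        print(p, win_probability(3, 10, p), simulate(3, 10, p))
```

Even a small disadvantage per play (p = 0.45 instead of 0.5) lowers the chance of reaching the goal noticeably, which is what the closed form predicts.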

A waiting time paradox

• Suppose that each person in a restaurant is being served in an "equal" time.
• That is, in a group of n people the probability that one person took the longest time is the same for each person, namely 1/n.
• Suppose that a person starts asking people as they leave, "How long did it take you to be served?"
• He continues until he finds someone who took longer than himself.

Let X = the number of people that he has to ask. Then E[X] = ∞.

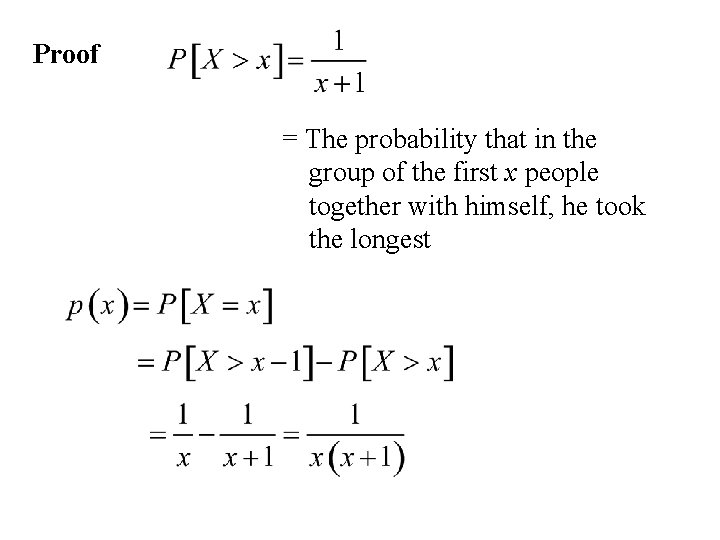

Proof. $P[X > x]$ = the probability that in the group of the first x people together with himself, he took the longest $= \dfrac{1}{x + 1}$.

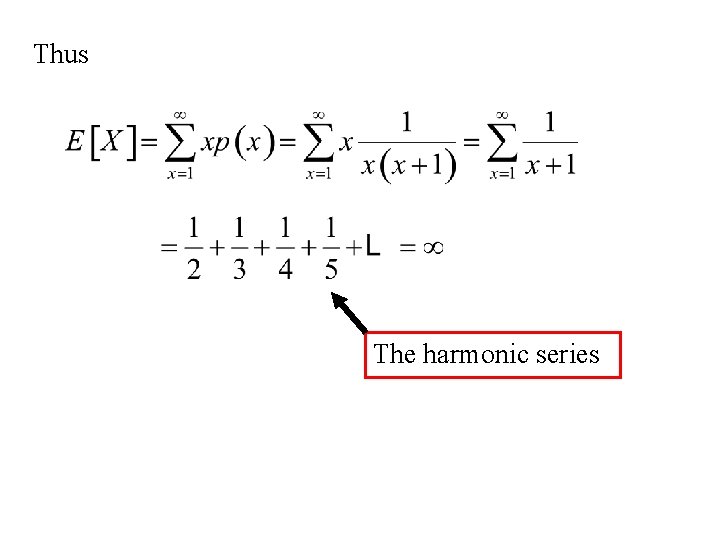

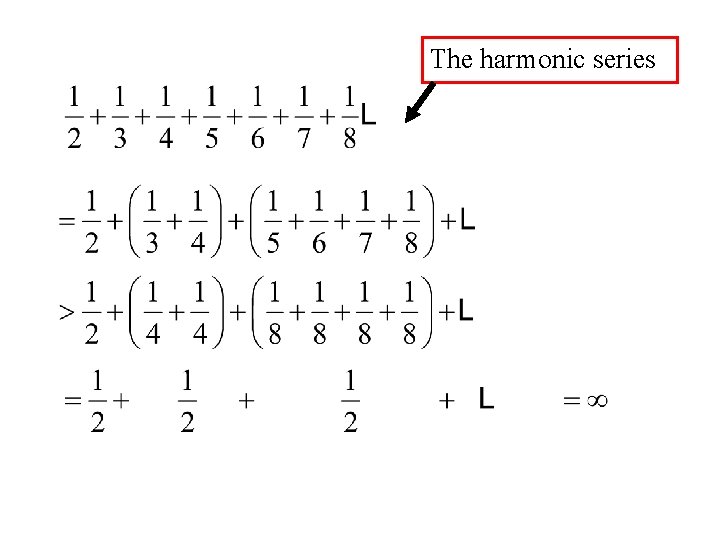

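Thus $E[X] = \sum_{x=0}^{\infty} P[X > x] = \sum_{x=0}^{\infty} \dfrac{1}{x + 1} = 1 + \dfrac{1}{2} + \dfrac{1}{3} + \dots = \infty$, since this is the harmonic series, which diverges.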
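The divergence can be seen numerically. The sketch assumes $P[X > x] = 1/(x + 1)$, which follows from the "equal time" assumption, so that $P[X = x] = 1/x - 1/(x + 1) = 1/(x(x + 1))$. The probabilities sum to 1, yet the truncated mean $\sum_{x=1}^{N} x\,P[X = x]$ equals $H_{N+1} - 1$ (a partial harmonic sum) and keeps growing, roughly like ln N. The function names are hypothetical.

```python
from fractions import Fraction

def pmf(x):
    """P[X = x] = 1 / (x * (x + 1)) for x = 1, 2, 3, ..."""
    return Fraction(1, x * (x + 1))

def truncated_mean(N):
    """Sum of x * P[X = x] over x = 1..N, computed exactly."""
    return sum(x * pmf(x) for x in range(1, N + 1))

def harmonic(N):
    """Partial sum 1 + 1/2 + ... + 1/N of the harmonic series."""
    return sum(Fraction(1, k) for k in range(1, N + 1))

if __name__ == "__main__":
    for N in (10, 100, 1000):
        total_prob = sum(pmf(x) for x in range(1, N + 1))
        print(N, float(total_prob), float(truncated_mean(N)))
```

The total probability approaches 1 while the truncated mean increases without bound as N grows.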
