Function Approximation

Fariba Sharifian, Somaye Kafi

spring 2006

Contents
- Introduction to Counterpropagation
- Full Counterpropagation
  - Architecture
  - Algorithm
  - Application example
- Forward-only Counterpropagation
  - Architecture
  - Algorithm
  - Application example

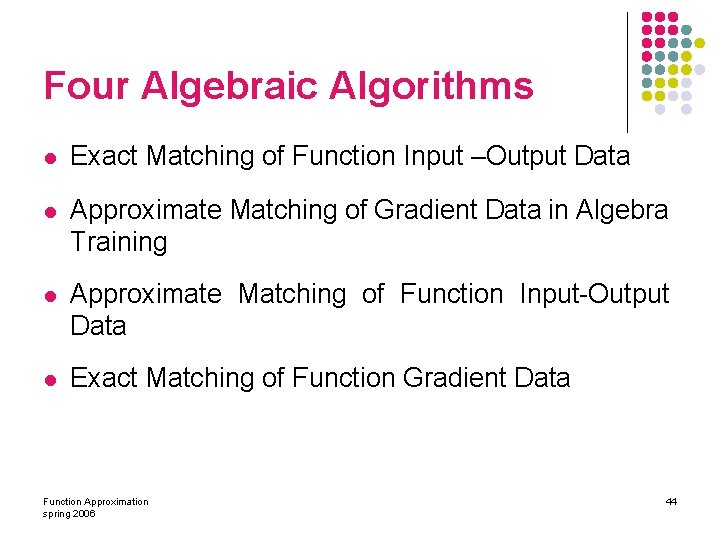

Contents (cont.)
- Function Approximation Using Neural Network
  - Introduction
  - Development of Neural Network Weight Equations
  - Algebraic Training Algorithms
    - Exact Matching of Function Input-Output Data
    - Approximate Matching of Gradient Data in Algebraic Training
    - Approximate Matching of Function Input-Output Data
    - Exact Matching of Function Gradient Data

Introduction to Counterpropagation
- Counterpropagation networks are multilayer networks built from a combination of input, clustering, and output layers.
- They can be used to compress data, to approximate functions, or to associate patterns.
- They approximate their training input vector pairs by adaptively constructing a lookup table.

Introduction to Counterpropagation (cont.)
- Training has two stages:
  - clustering
  - output weight updating
- There are two types of counterpropagation:
  - full
  - forward-only

Full Counterpropagation
- Produces an approximation x*:y* based on
  - input of an x vector only,
  - input of a y vector only, or
  - input of an x:y pair, possibly with some distorted or missing elements in either or both vectors.

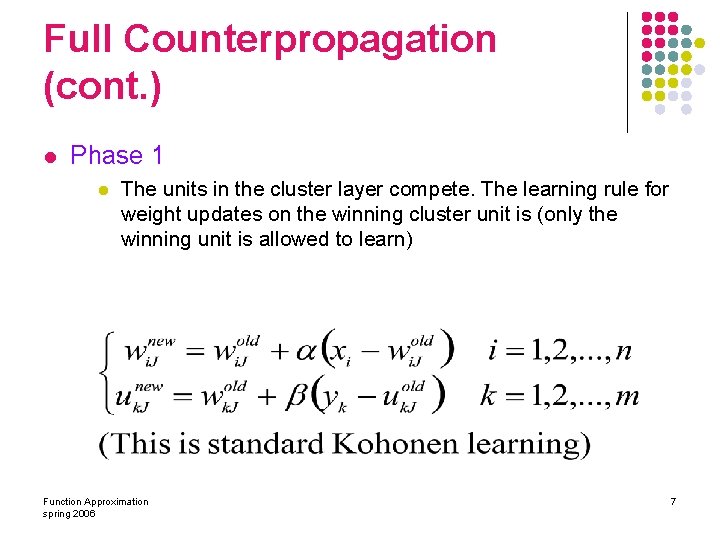

Full Counterpropagation (cont.)
- Phase 1
  - The units in the cluster layer compete. Only the winning cluster unit is allowed to learn; its weight update rule is:

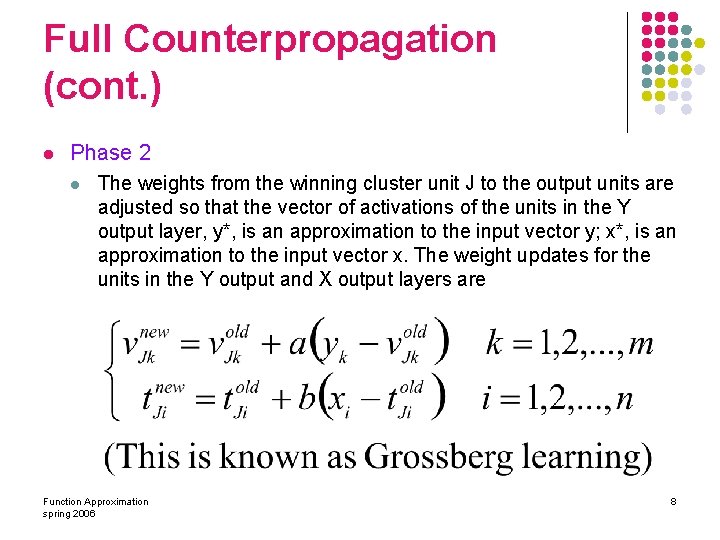

Full Counterpropagation (cont.)
- Phase 2
  - The weights from the winning cluster unit J to the output units are adjusted so that the vector of activations of the units in the Y* output layer, y*, approximates the input vector y, and the vector of activations of the units in the X* output layer, x*, approximates the input vector x. The weight updates for the units in the Y* and X* output layers are:

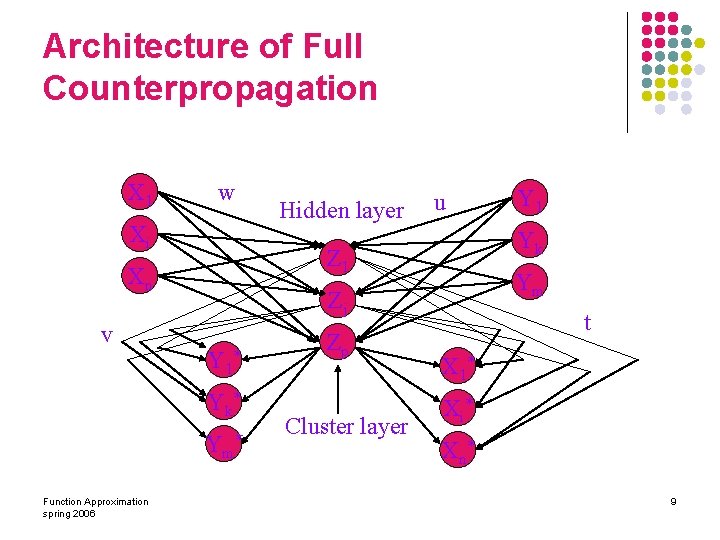
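The two update rules can be sketched in Python as below. This is a minimal illustration, assuming the usual competitive-learning form weight + rate * (target - weight) in both phases (the exact formulas are in the figures); the helper names `move_toward`, `winner`, `phase1_step`, and `phase2_step` are hypothetical, and the weight names v, w, u, t follow the architecture slide.

```python
# Hypothetical sketch of one full-counterpropagation update for a single pair x:y.
# v: X -> Z weights, w: Y -> Z weights (cluster weights)
# u: Z -> Y* weights, t: Z -> X* weights (output weights)
# Assumed rule form: new = old + rate * (target - old).

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def move_toward(weights, target, rate):
    """Return weights + rate * (target - weights), element by element."""
    return [wi + rate * (ti - wi) for wi, ti in zip(weights, target)]


def winner(x, y, v, w):
    """Index J of the cluster unit closest to the concatenated pair x:y."""
    return min(range(len(v)), key=lambda j: sq_dist(x, v[j]) + sq_dist(y, w[j]))


def phase1_step(x, y, v, w, alpha, beta):
    """Phase 1: only the winning cluster unit Z_J moves toward x:y."""
    J = winner(x, y, v, w)
    v[J] = move_toward(v[J], x, alpha)
    w[J] = move_toward(w[J], y, beta)
    return J


def phase2_step(x, y, v, w, u, t, alpha, beta, a, b):
    """Phase 2: small cluster update, then the Z_J output weights move toward y and x."""
    J = phase1_step(x, y, v, w, alpha, beta)
    u[J] = move_toward(u[J], y, a)  # weights to the Y* output layer
    t[J] = move_toward(t[J], x, b)  # weights to the X* output layer
    return J


# Two cluster units, one-dimensional x and y (illustrative values).
v, w = [[0.0], [1.0]], [[0.0], [1.0]]
u, t = [[0.0], [0.0]], [[0.0], [0.0]]
J1 = phase1_step([0.2], [0.4], v, w, alpha=0.5, beta=0.5)
J2 = phase2_step([0.2], [0.4], v, w, u, t, alpha=0.1, beta=0.1, a=0.5, b=0.5)
```

Unit 0 wins both steps because it is closer to the pair (0.2, 0.4); unit 1 is left untouched, which is the "only the winner learns" behaviour.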

Architecture of Full Counterpropagation
Figure: input layers X1...Xi...Xn and Y1...Yk...Ym feed the cluster (hidden) layer Z1...Zj...Zp through weights v and w; the cluster layer feeds the output layers Y1*...Yk*...Ym* and X1*...Xi*...Xn* through weights u and t.

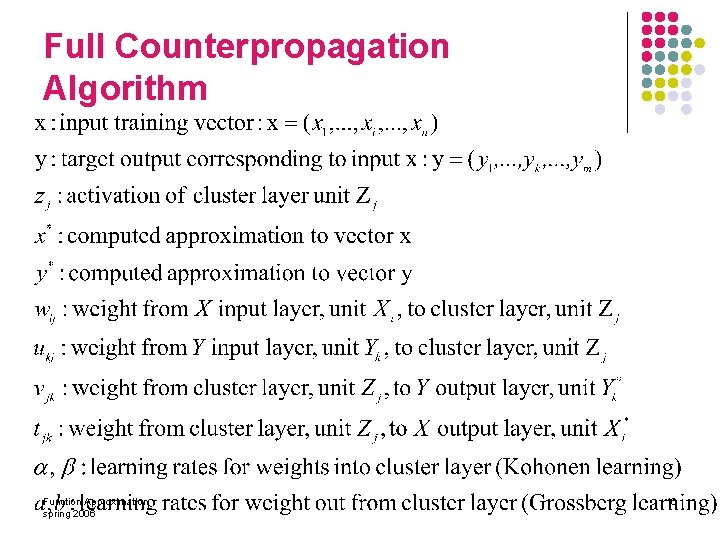

Full Counterpropagation Algorithm

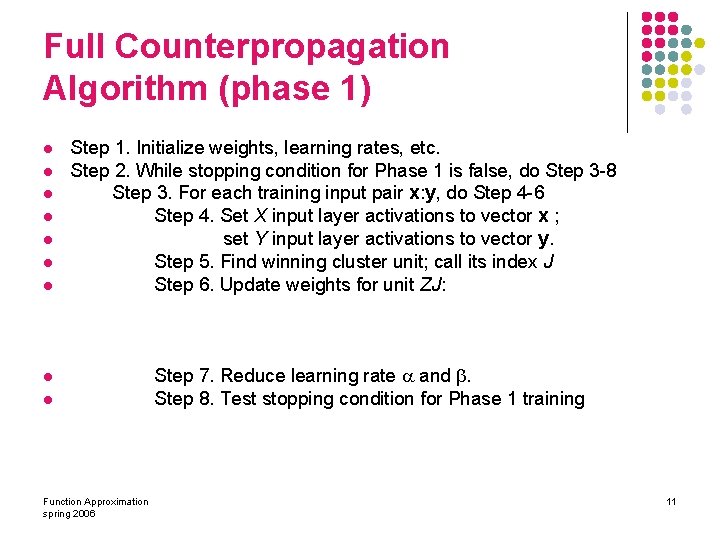

Full Counterpropagation Algorithm (phase 1)
- Step 1. Initialize weights, learning rates, etc.
- Step 2. While the stopping condition for Phase 1 is false, do Steps 3-8.
- Step 3. For each training input pair x:y, do Steps 4-6.
- Step 4. Set X input layer activations to vector x; set Y input layer activations to vector y.
- Step 5. Find the winning cluster unit; call its index J.
- Step 6. Update weights for unit $Z_J$:
- Step 7. Reduce learning rates α and β.
- Step 8. Test the stopping condition for Phase 1 training.

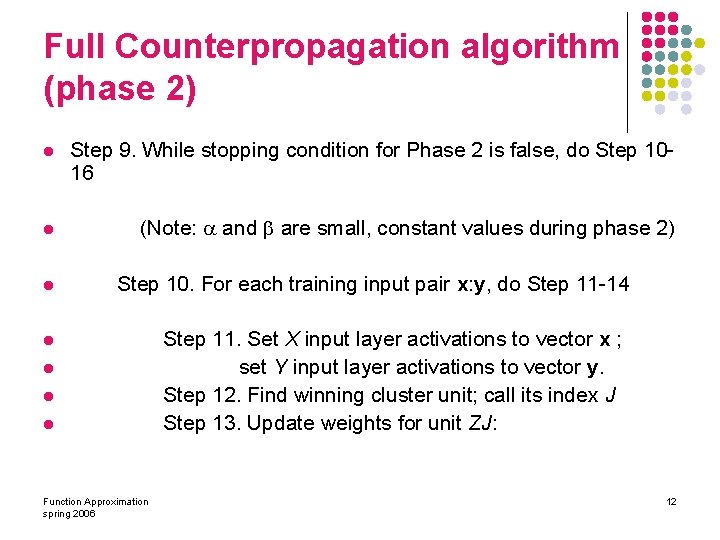

Full Counterpropagation Algorithm (phase 2)
- Step 9. While the stopping condition for Phase 2 is false, do Steps 10-16.
  (Note: α and β are small, constant values during Phase 2.)
- Step 10. For each training input pair x:y, do Steps 11-14.
- Step 11. Set X input layer activations to vector x; set Y input layer activations to vector y.
- Step 12. Find the winning cluster unit; call its index J.
- Step 13. Update weights for unit $Z_J$:

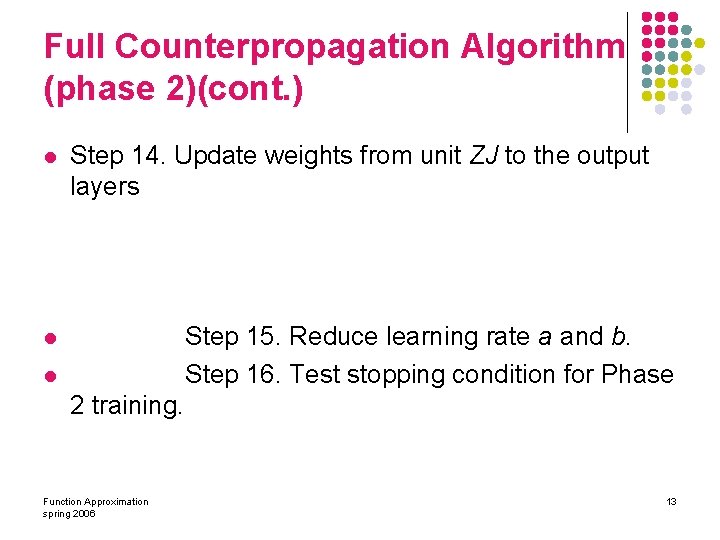

Full Counterpropagation Algorithm (phase 2) (cont.)
- Step 14. Update weights from unit $Z_J$ to the output layers:
- Step 15. Reduce learning rates a and b.
- Step 16. Test the stopping condition for Phase 2 training.

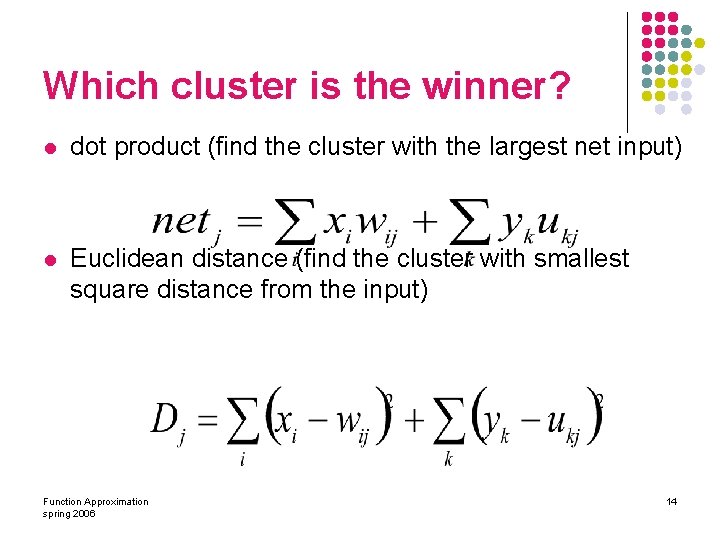
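Steps 1-16 can be condensed into a short sketch for scalar x and y, trained here on samples of y = 1/x. Several choices are assumptions rather than details from the slides: cluster weights start at randomly chosen training pairs, the learning rates decay linearly, a fixed epoch count serves as each phase's stopping condition, and winners are found by Euclidean distance. `train_full_cpn` is a hypothetical name.

```python
import random

# Hypothetical sketch of the two-phase full counterpropagation algorithm
# for scalar x and y (assumed initialization, schedules and stopping rule).


def train_full_cpn(pairs, n_clusters, epochs=40, seed=0):
    rng = random.Random(seed)
    start = rng.sample(pairs, n_clusters)             # Step 1 (assumed init)
    v = [x for x, _ in start]                         # X -> Z weights
    w = [y for _, y in start]                         # Y -> Z weights
    u = [0.0] * n_clusters                            # Z -> Y* weights
    t = [0.0] * n_clusters                            # Z -> X* weights

    def winner(x, y):                                 # Steps 5 and 12
        return min(range(n_clusters),
                   key=lambda j: (x - v[j]) ** 2 + (y - w[j]) ** 2)

    for e in range(epochs):                           # Phase 1: Steps 2-8
        alpha = beta = 0.5 * (1 - e / epochs)         # Step 7: reduce rates
        for x, y in pairs:
            J = winner(x, y)
            v[J] += alpha * (x - v[J])                # Step 6
            w[J] += beta * (y - w[J])

    alpha = beta = 0.01                               # small and constant
    for e in range(epochs):                           # Phase 2: Steps 9-16
        a = b = 0.5 * (1 - e / epochs)                # Step 15: reduce rates
        for x, y in pairs:
            J = winner(x, y)
            v[J] += alpha * (x - v[J])                # Step 13
            w[J] += beta * (y - w[J])
            u[J] += a * (y - u[J])                    # Step 14
            t[J] += b * (x - t[J])
    return v, w, u, t


xs = [0.1 * k for k in range(1, 101)]                 # x in [0.1, 10]
pairs = [(x, 1.0 / x) for x in xs]
v, w, u, t = train_full_cpn(pairs, n_clusters=10)
```

Because every update is a convex step toward a training value, the cluster and output weights always stay inside the range spanned by the data (and the zero initial output weights).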

Which cluster is the winner?
- Dot product: find the cluster unit with the largest net input.
- Euclidean distance: find the cluster unit with the smallest squared distance from the input.
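The two winner rules can be compared on a small made-up example; `winner_dot` and `winner_euclid` are hypothetical names.

```python
# Two winner-selection rules for a competitive (cluster) layer.
# The example vectors are invented to show that the rules can disagree
# when the weight vectors are not normalized.


def winner_dot(x, clusters):
    """Cluster with the largest net input x . z_j."""
    return max(range(len(clusters)),
               key=lambda j: sum(xi * zi for xi, zi in zip(x, clusters[j])))


def winner_euclid(x, clusters):
    """Cluster with the smallest squared distance ||x - z_j||^2."""
    return min(range(len(clusters)),
               key=lambda j: sum((xi - zi) ** 2 for xi, zi in zip(x, clusters[j])))


clusters = [[1.0, 0.0], [3.0, 3.0]]
x = [1.0, 0.1]
print(winner_dot(x, clusters), winner_euclid(x, clusters))  # 1 0
```

With unnormalized weights the long vector [3, 3] wins the dot-product contest even though [1, 0] is much closer. When x and every weight vector have unit length, $\|x - z_j\|^2 = 2 - 2\,x \cdot z_j$, so both rules select the same unit.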

Full Counterpropagation Application
- The application procedure for counterpropagation is as follows:
  - Step 0. Initialize weights.
  - Step 1. For each input pair x:y, do Steps 2-4.
  - Step 2. Set X input layer activations to vector x; set Y input layer activations to vector y.

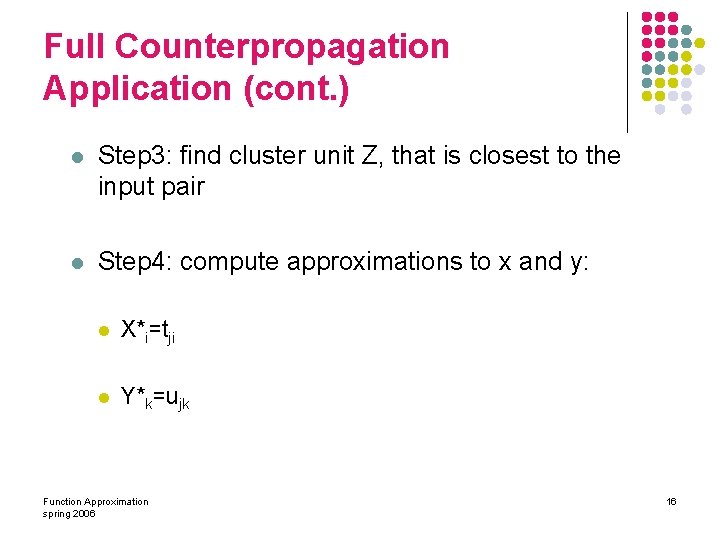

Full Counterpropagation Application (cont.)
- Step 3. Find the cluster unit $Z_J$ that is closest to the input pair.
- Step 4. Compute approximations to x and y:
  - $x^*_i = t_{Ji}$
  - $y^*_k = u_{Jk}$

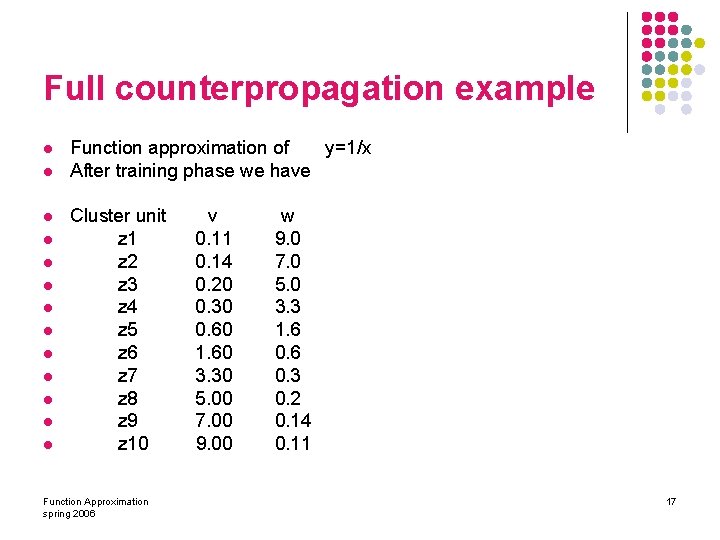

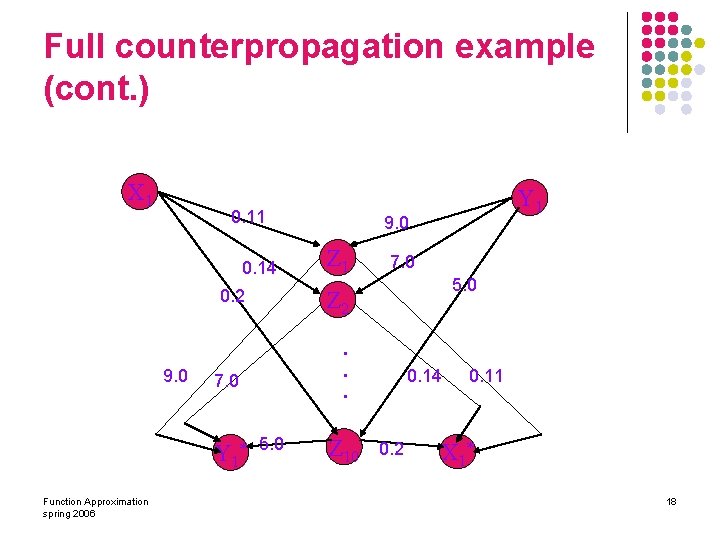

Full counterpropagation example
- Function approximation of y = 1/x.
- After the training phase, the cluster weights are:

| Cluster unit | v | w |
|---|---|---|
| z1 | 0.11 | 9.0 |
| z2 | 0.14 | 7.0 |
| z3 | 0.20 | 5.0 |
| z4 | 0.30 | 3.3 |
| z5 | 0.60 | 1.6 |
| z6 | 1.60 | 0.6 |
| z7 | 3.30 | 0.3 |
| z8 | 5.00 | 0.2 |
| z9 | 7.00 | 0.14 |
| z10 | 9.00 | 0.11 |

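Full counterpropagation example (cont.)
Figure: the trained network for y = 1/x, with inputs X1 and Y1, cluster units Z1, Z2, ..., Z10, and outputs X1* and Y1*; the weights shown include 9.0, 7.0, 5.0 on the connections to Y1* and 0.11, 0.14, 0.2 on the connections to X1*.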

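Full counterpropagation example (cont.)
- To approximate the value of y for x = 0.12:
  - Since nothing is known about y, compute the distance $D_j$ using x only:
  - $D_1 = (0.12 - 0.11)^2 = 0.0001$, $D_2 = 0.0004$, $D_3 = 0.0064$, $D_4 = 0.032$, $D_5 = 0.23$, $D_6 = 2.2$, $D_7 = 10.1$, $D_8 = 23.8$, $D_9 = 47.3$, $D_{10} = 78.9$
  - $D_1$ is the smallest distance, so $Z_1$ wins and $y^* = u_{11}$, the weight from $Z_1$ to $Y_1^*$ (9.0); the exact value is $1/0.12 \approx 8.33$.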

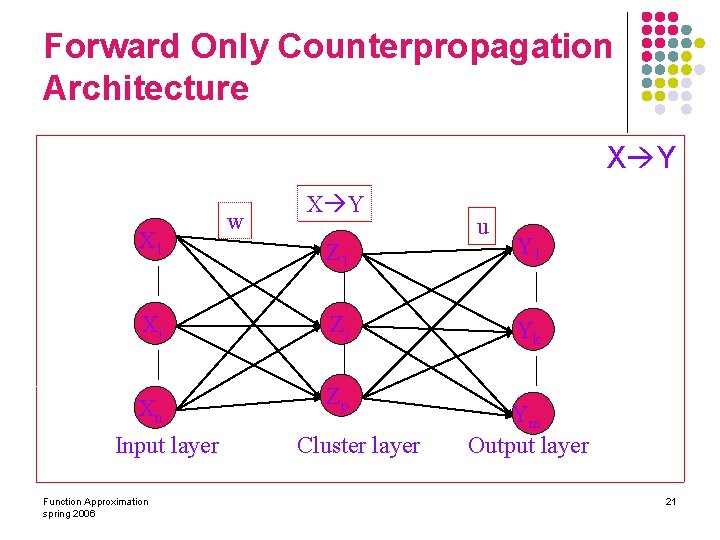
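The application steps can be checked against this example with a short sketch. It assumes the output weights t and u equal the cluster weights v and w from the table, and it marks an unknown vector with `None`, skipping it in the distance (the convention the slide uses when only x is known); `distances` and `recall` are hypothetical names.

```python
# Sketch of full-counterpropagation recall (Steps 0-4) on the y = 1/x example.
# Assumption: output weights equal the cluster weights (t = v, u = w).

v = [0.11, 0.14, 0.20, 0.30, 0.60, 1.60, 3.30, 5.00, 7.00, 9.00]  # X -> Z
w = [9.0, 7.0, 5.0, 3.3, 1.6, 0.6, 0.3, 0.2, 0.14, 0.11]          # Y -> Z
t, u = v, w                                                        # Z -> X*, Z -> Y*


def distances(x, y):
    """Squared distance D_j from x:y to each cluster unit; None parts are skipped."""
    D = []
    for vj, wj in zip(v, w):
        d = 0.0
        if x is not None:
            d += (x - vj) ** 2
        if y is not None:
            d += (y - wj) ** 2
        D.append(d)
    return D


def recall(x, y=None):
    D = distances(x, y)
    J = min(range(len(D)), key=D.__getitem__)   # Step 3: closest cluster unit
    return J, t[J], u[J], D                     # Step 4: x* = t_J, y* = u_J


J, x_star, y_star, D = recall(0.12)
print(J + 1, y_star)          # 1 9.0
print(recall(None, 0.2)[1])   # 5.0
```

The second call goes the other way: only y = 0.2 is known, the closest cluster weight w is 0.2 (unit Z8), and the sketch returns x* = 5.0, the bidirectional use that full counterpropagation allows.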

Forward Only Counterpropagation
- It is a simplified version of full counterpropagation.
- It is intended to approximate a function y = f(x) that is not necessarily invertible.
- It may be used if the mapping from x to y is well defined but the mapping from y to x is not.

Forward Only Counterpropagation Architecture
Figure: input layer X1...Xi...Xn, connected through weights w to the cluster layer Z1...Zj...Zp, which is connected through weights u to the output layer Y1...Yk...Ym.

Forward Only Counterpropagation Algorithm
- Step 1. Initialize weights, learning rates, etc.
- Step 2. While the stopping condition for Phase 1 is false, do Steps 3-8.
- Step 3. For each training input x, do Steps 4-6.
- Step 4. Set X input layer activations to vector x.
- Step 5. Find the winning cluster unit; call its index J.
- Step 6. Update weights for unit $Z_J$:
- Step 7. Reduce learning rate α.
- Step 8. Test the stopping condition for Phase 1 training.

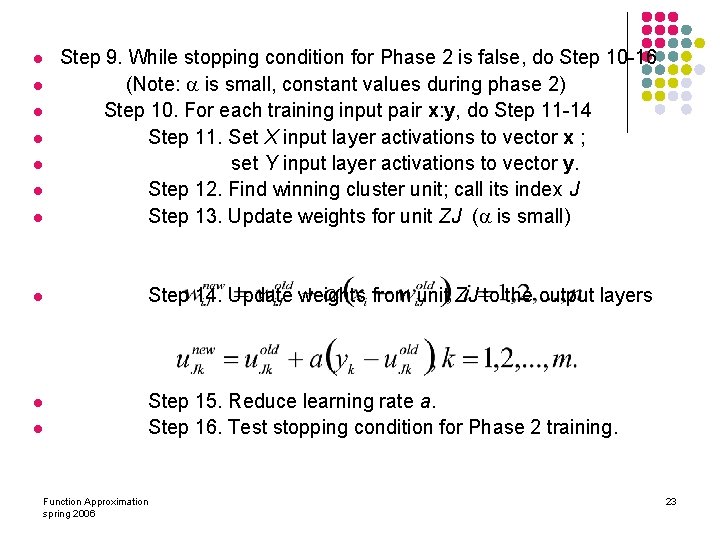

Forward Only Counterpropagation Algorithm (cont.)
- Step 9. While the stopping condition for Phase 2 is false, do Steps 10-16.
  (Note: α is a small, constant value during Phase 2.)
- Step 10. For each training input pair x:y, do Steps 11-14.
- Step 11. Set X input layer activations to vector x; set Y output layer activations to vector y.
- Step 12. Find the winning cluster unit; call its index J.
- Step 13. Update weights for unit $Z_J$ (α is small).
- Step 14. Update weights from unit $Z_J$ to the output layer.
- Step 15. Reduce learning rate a.
- Step 16. Test the stopping condition for Phase 2 training.
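A sketch of the forward-only algorithm for scalar data follows. The assumptions are: the caller supplies the initial cluster weights, α and a decay linearly, and each phase's stopping condition is a fixed epoch count. The four training points are made up so that the two clusters are clearly separated; `train_forward_only` is a hypothetical name.

```python
# Hypothetical sketch of two-phase forward-only counterpropagation (scalar x, y).


def train_forward_only(pairs, w_init, epochs=20):
    w = list(w_init)                   # X -> Z cluster weights
    u = [0.0] * len(w)                 # Z -> Y output weights

    def winner(x):                     # Steps 5 and 12: uses x only
        return min(range(len(w)), key=lambda j: (x - w[j]) ** 2)

    for e in range(epochs):            # Phase 1: cluster the x vectors
        alpha = 0.5 * (1 - e / epochs)     # Step 7: reduce alpha
        for x, _ in pairs:
            J = winner(x)
            w[J] += alpha * (x - w[J])     # Step 6

    alpha = 0.01                       # small and constant in Phase 2
    for e in range(epochs):            # Phase 2: learn the outputs
        a = 0.5 * (1 - e / epochs)         # Step 15: reduce a
        for x, y in pairs:
            J = winner(x)
            w[J] += alpha * (x - w[J])     # Step 13
            u[J] += a * (y - u[J])         # Step 14
    return w, u


pairs = [(x, 1.0 / x) for x in (1.0, 1.2, 8.0, 8.2)]
w, u = train_forward_only(pairs, w_init=[0.0, 10.0])
print([round(val, 3) for val in u])
```

Each $u_J$ settles between the y values of the training points in its cluster, so the trained network acts as a lookup table from x-regions to representative y values.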

Forward Only Counterpropagation Application
- Step 0. Initialize weights (by training as in the previous subsection).
- Step 1. Present input vector x.
- Step 2. Find the unit J closest to vector x.
- Step 3. Set the activations of the output units: $y_k = u_{Jk}$.

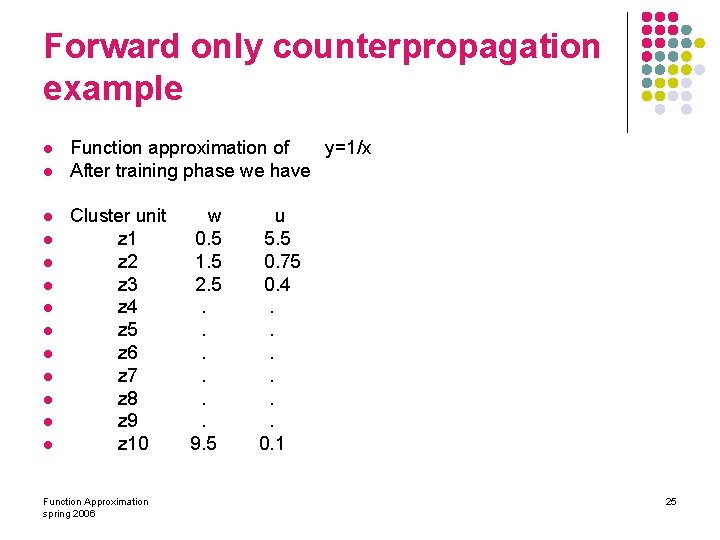

Forward only counterpropagation example
- Function approximation of y = 1/x.
- After the training phase, the weights are:

| Cluster unit | w | u |
|---|---|---|
| z1 | 0.5 | 5.5 |
| z2 | 1.5 | 0.75 |
| z3 | 2.5 | 0.4 |
| ... | ... | ... |
| z10 | 9.5 | 0.1 |
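The application steps can be traced on this table with a small sketch. Only the first three cluster units are used because the rest of the table is elided on the slide, and the input x = 1.2 is a hypothetical query; `forward_only_recall` is a hypothetical name.

```python
# Forward-only recall (Steps 0-3) with the first three units of the example table.

w = [0.5, 1.5, 2.5]      # X -> Z weights of z1, z2, z3
u = [5.5, 0.75, 0.4]     # Z -> Y weights of z1, z2, z3


def forward_only_recall(x):
    J = min(range(len(w)), key=lambda j: (x - w[j]) ** 2)  # Step 2: closest unit
    return u[J]                                             # Step 3: y = u_J


print(forward_only_recall(1.2))   # 0.75 (unit z2 wins)
```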

Function Approximation Using Neural Network: Introduction

Introduction
- Function approximation means finding an analytical description for a set of data.
- It is also referred to as data modeling or system identification.

Standard tools
- Splines
- Wavelets
- Neural networks

Why Use Neural Networks?
- Splines and wavelets do not generalize well to higher-dimensional spaces (more than three dimensions).
- Neural networks are universal approximators.
- They have a parallel architecture.
- They can be trained to map multidimensional nonlinear functions.

Why Use Neural Networks? (cont.)
- Central to the solution of differential equations.
- Provide differentiable, closed-analytic-form solutions.
- Have very good generalization properties.
- Widely applicable.
- Training translates into a set of nonlinear, transcendental weight equations.
- Cascade structure:
  - nonlinearity in the hidden nodes
  - linear operations in the input and output layers

Function Approximation Using Neural Network
- Target: functions that are not known analytically, for which a set of precise input-output samples is available
- The functions are modeled using an algebraic approach
- Design objectives:
  - exact matching
  - approximate matching
- Model: feedforward neural networks
- Data:
  - input
  - output
  - and/or gradient information

Objective
- Exact solutions, given sufficient degrees of freedom
- Retaining good generalization properties
- Synthesizing a large data set with a parsimonious network

Input-to-node values
- Basis of algebraic training: if all the inputs to the sigmoidal functions are known, the weight equations become algebraic.
- The input-to-node values are the inputs to the sigmoidal functions.
- They determine the saturation level of each sigmoid at a given data point.

Weight equations structure
- Makes it possible to analyze and train a nonlinear neural network by means of linear algebra
- This is done by controlling the distribution and the saturation level of the active nodes

Function Approximation Using Neural Network: Development of Neural Network Weight Equations

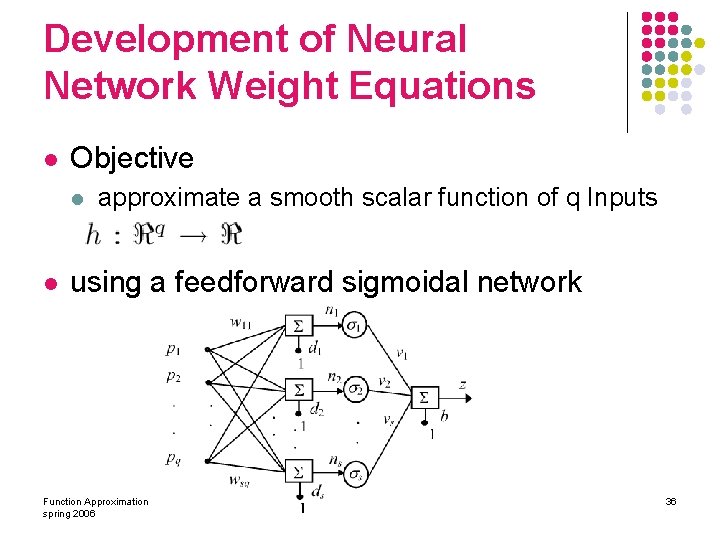

Development of Neural Network Weight Equations
- Objective: approximate a smooth scalar function of q inputs using a feedforward sigmoidal network.

Derivative information
- Can improve the network's generalization properties.
- Partial derivatives with respect to the inputs can be incorporated in the training set.

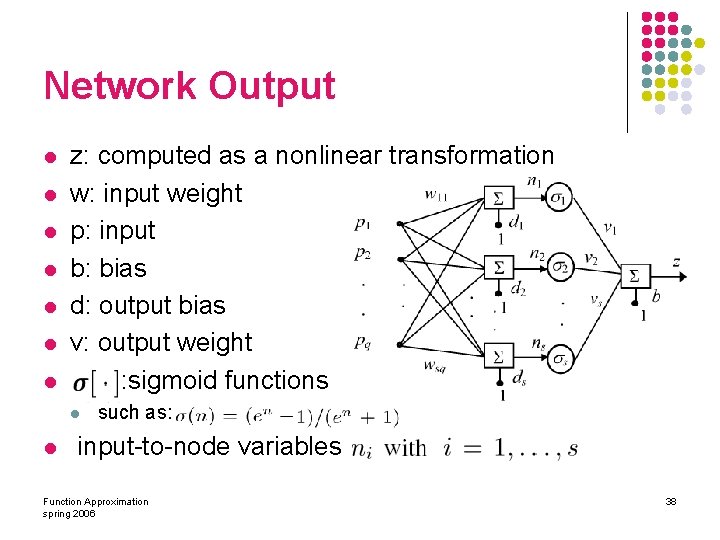

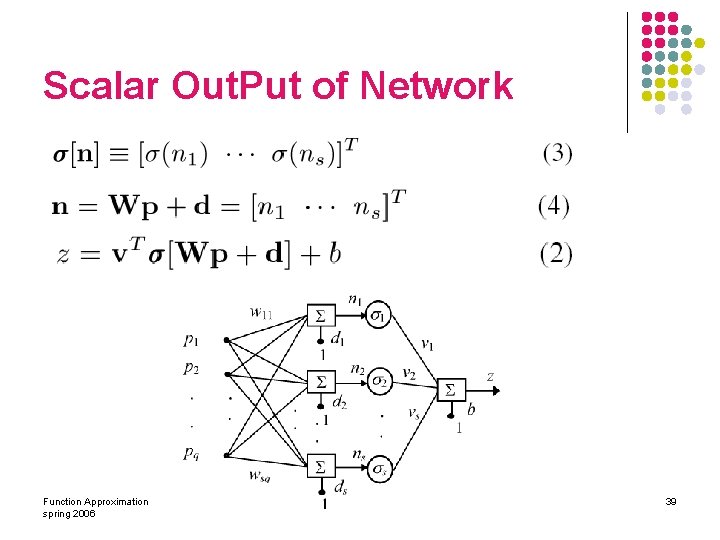

Network Output
- z: network output, computed as a nonlinear transformation of the input
- w: input weights
- p: input
- d: input bias
- b: output bias
- v: output weights
- σ: sigmoid functions
- n: input-to-node variables

Scalar Output of Network

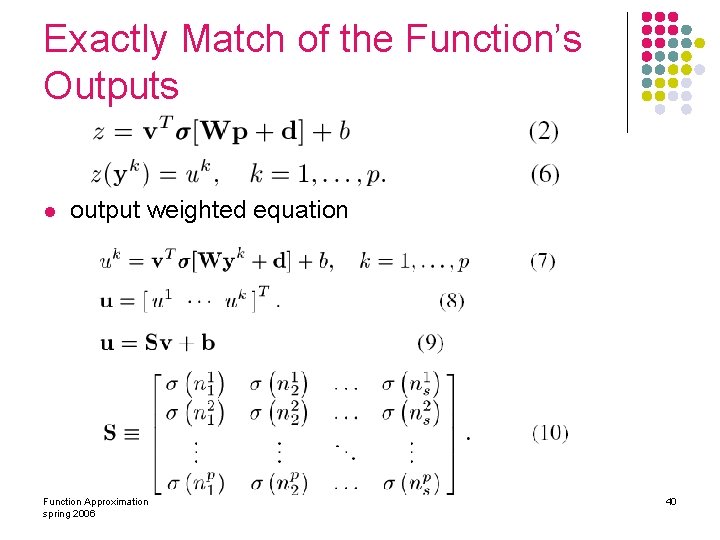
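A minimal sketch of the forward pass $z = v^T \sigma(Wp + d) + b$ for one hidden layer follows, assuming the logistic sigmoid $\sigma(n) = 1/(1 + e^{-n})$ (the slides only require a sigmoid). The list returned by `input_to_node` holds the input-to-node values n; values near 0 fall in the sigmoid's sensitive range, while large |n| means the node is saturated. Function names and numbers are illustrative.

```python
import math

# Forward pass of a single-hidden-layer sigmoidal network (logistic sigmoid assumed).


def sigma(n):
    return 1.0 / (1.0 + math.exp(-n))


def input_to_node(W, d, p):
    """n_i = w_i . p + d_i for every hidden node i."""
    return [sum(wij * pj for wij, pj in zip(wi, p)) + di for wi, di in zip(W, d)]


def network_output(W, d, v, b, p):
    """z = sum_i v_i * sigma(n_i) + b."""
    n = input_to_node(W, d, p)
    return sum(vi * sigma(ni) for vi, ni in zip(v, n)) + b


W = [[1.0, 0.0], [0.0, 2.0]]   # 2 hidden nodes, q = 2 inputs
d = [0.0, -1.0]                # input biases
v = [1.0, 0.5]                 # output weights
b = 0.25                       # output bias
print(input_to_node(W, d, [0.5, 0.5]))   # [0.5, 0.0]
```

At p = (0.5, 0.5) the second node sits exactly at the center of its sigmoid (n = 0), so $z = \sigma(0.5) + 0.5 \cdot 0.5 + 0.25$.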

Exact Matching of the Function's Outputs
- Output weight equation:

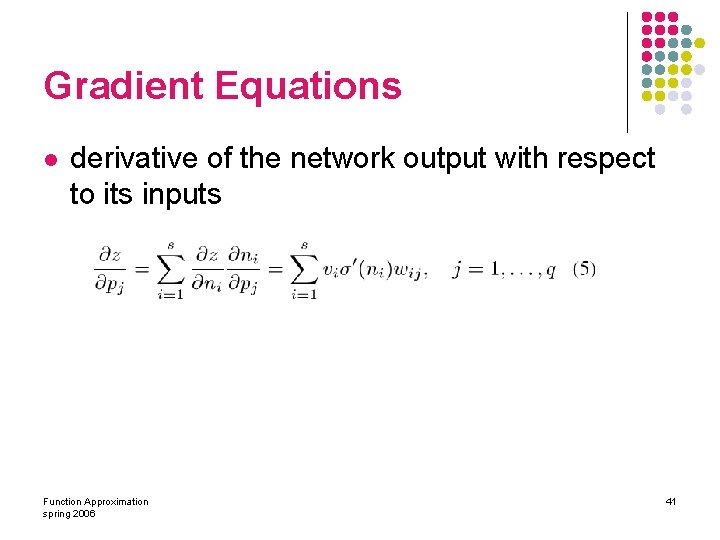

Gradient Equations
- Derivative of the network output with respect to its inputs:

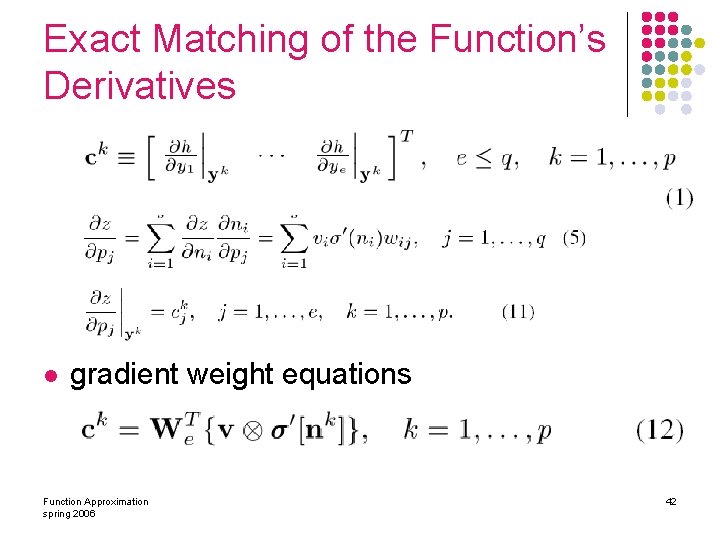
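For a network of this form, differentiating the output gives $\partial z/\partial p_j = \sum_i v_i\, \sigma'(n_i)\, w_{ij}$. The sketch below evaluates this expression, assuming the logistic sigmoid (so $\sigma' = \sigma(1 - \sigma)$), and compares it with central finite differences; the weights are arbitrary illustration values and the function names are hypothetical.

```python
import math

# Analytic gradient of z = v . sigma(W p + d) + b with respect to p,
# checked against central finite differences (logistic sigmoid assumed).


def sigma(n):
    return 1.0 / (1.0 + math.exp(-n))


def nodes(W, d, p):
    return [sum(wij * pj for wij, pj in zip(wi, p)) + di for wi, di in zip(W, d)]


def network_output(W, d, v, b, p):
    return sum(vi * sigma(ni) for vi, ni in zip(v, nodes(W, d, p))) + b


def network_gradient(W, d, v, p):
    """dz/dp_j = sum_i v_i * sigma'(n_i) * w_ij."""
    dsig = [sigma(ni) * (1.0 - sigma(ni)) for ni in nodes(W, d, p)]
    return [sum(v[i] * dsig[i] * W[i][j] for i in range(len(v))) for j in range(len(p))]


W = [[1.0, -0.5], [0.3, 2.0], [-1.2, 0.7]]
d = [0.1, -0.4, 0.2]
v = [0.8, -0.6, 1.1]
b = 0.05
p = [0.3, -0.2]

h = 1e-6
numeric = []
for j in range(len(p)):
    plus, minus = p[:], p[:]
    plus[j] += h
    minus[j] -= h
    numeric.append((network_output(W, d, v, b, plus)
                    - network_output(W, d, v, b, minus)) / (2 * h))

analytic = network_gradient(W, d, v, p)
```

The output bias b drops out of the gradient, which is why the gradient equations involve only v, W, and the input-to-node values.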

Exact Matching of the Function's Derivatives
- Gradient weight equations:

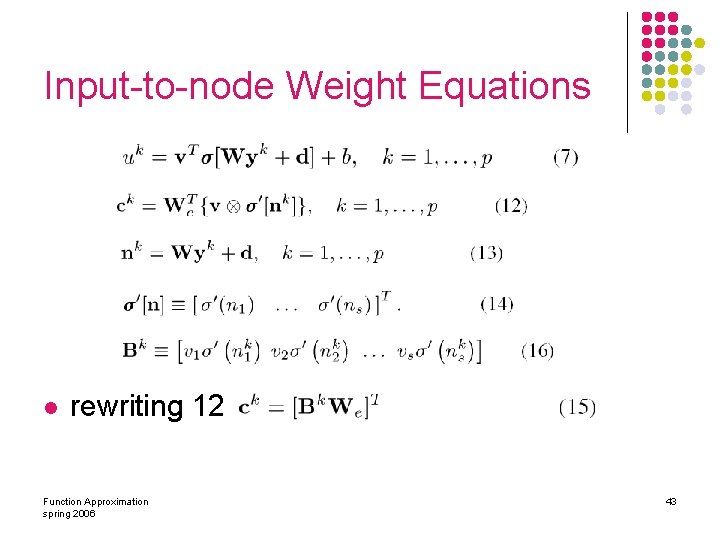

Input-to-node Weight Equations
- Obtained by rewriting (12):

Four Algebraic Algorithms
- Exact Matching of Function Input-Output Data
- Approximate Matching of Gradient Data in Algebraic Training
- Approximate Matching of Function Input-Output Data
- Exact Matching of Function Gradient Data

Function Approximation Using Neural Network: Exact Matching of Function Input-Output Data

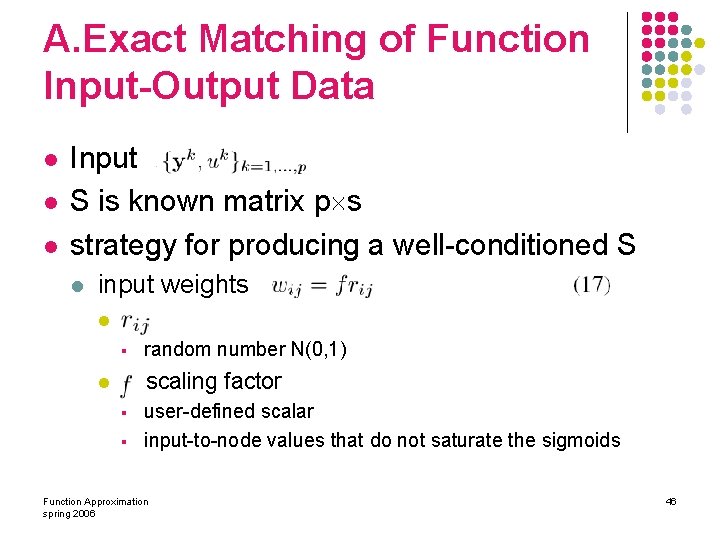

A. Exact Matching of Function Input-Output Data
- S is a known $p \times s$ matrix (here $s = p$, so S is square).
- Strategy for producing a well-conditioned S:
  - input weights: a matrix L of random numbers drawn from N(0, 1), multiplied by a scaling factor
  - scaling factor: a user-defined scalar chosen to produce input-to-node values that do not saturate the sigmoids

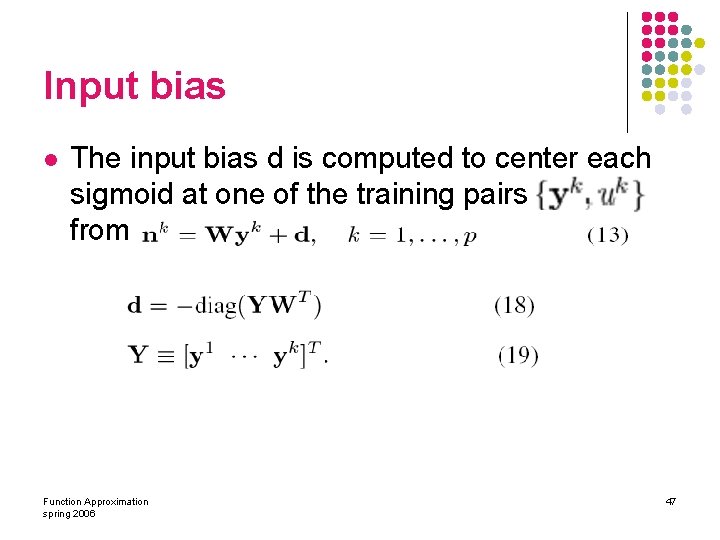

Input bias
- The input bias d is computed so that each sigmoid is centered at one of the training pairs.

- Finally, the linear system in (9) is solved for v by inverting S.

- If (17) produces an ill-conditioned S, the computation is repeated with a new random draw of the input weights.

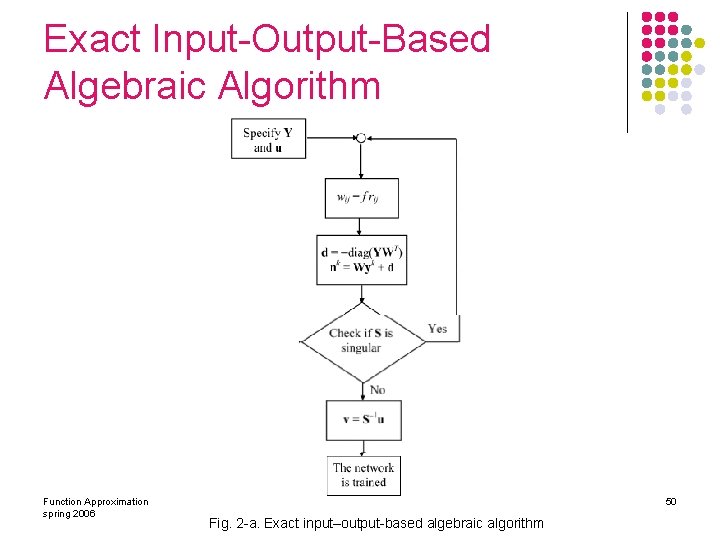
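The steps of algorithm A can be sketched as follows, assuming one node per training point (s = p), the logistic sigmoid, and output bias b = 0. The names `f` (scaling factor), `pivot_tol`, `max_tries`, and `exact_train` are hypothetical, and the smallest pivot of Gaussian elimination is used only as a crude stand-in for a proper conditioning test.

```python
import math
import random

# Sketch of exact input-output matching: random scaled input weights,
# biases that center sigmoid i on training point i, then solve S v = u.


def sigma(n):
    return 1.0 / (1.0 + math.exp(-n))


def solve(A, rhs):
    """Gaussian elimination with partial pivoting; returns (x, smallest pivot)."""
    n = len(A)
    M = [row[:] + [r] for row, r in zip(A, rhs)]
    smallest = float("inf")
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[piv] = M[piv], M[c]
        smallest = min(smallest, abs(M[c][c]))
        if M[c][c] == 0.0:
            return None, 0.0
        for r in range(c + 1, n):
            factor = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= factor * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x, smallest


def exact_train(P, u, f=2.0, seed=0, pivot_tol=1e-6, max_tries=50):
    rng = random.Random(seed)
    s, q = len(P), len(P[0])                   # one node per training point
    for _ in range(max_tries):
        # input weights: N(0, 1) random numbers times the scaling factor f
        W = [[f * rng.gauss(0.0, 1.0) for _ in range(q)] for _ in range(s)]
        # input bias: n_i = 0 at training point i (sigmoid i centered there)
        d = [-sum(wij * pij for wij, pij in zip(W[i], P[i])) for i in range(s)]
        # S[k][i] = sigma(n_i^k): row k is training point k, column i is node i
        S = [[sigma(sum(wij * pkj for wij, pkj in zip(W[i], P[k])) + d[i])
              for i in range(s)] for k in range(s)]
        v, smallest = solve(S, u)              # output weight equations S v = u
        if v is not None and smallest > pivot_tol:
            return W, d, v
        # S is (nearly) singular: draw new input weights and repeat
    raise RuntimeError("no well-conditioned S found")


P = [[0.0], [1.0], [2.0], [3.0]]
u = [math.sin(pk[0]) for pk in P]
W, d, v = exact_train(P, u)
```

With s = p the network reproduces every training output up to rounding error; how well it behaves between the training points depends on the scaling factor.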

Exact Input-Output-Based Algebraic Algorithm
Fig. 2-a. Exact input-output-based algebraic algorithm.

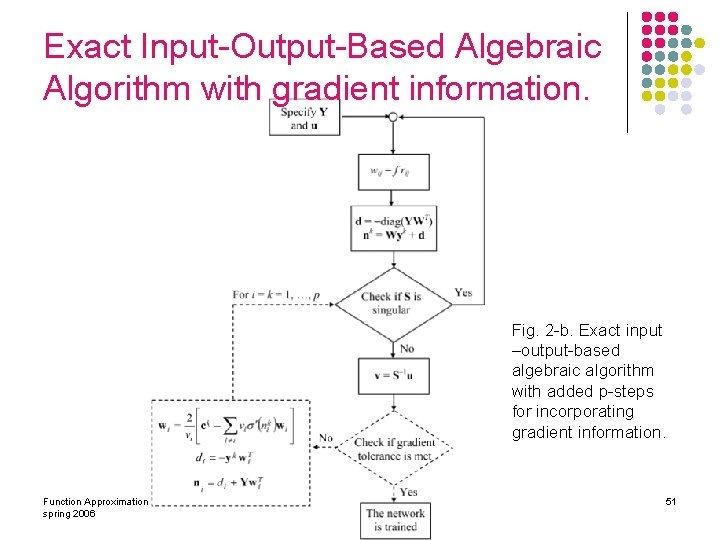

Exact Input-Output-Based Algebraic Algorithm with Gradient Information
Fig. 2-b. Exact input-output-based algebraic algorithm with added p-steps for incorporating gradient information.

Then
- Exact matching: the input, output, and gradient information are solved exactly and simultaneously for the neural parameters.

Function Approximation Using Neural Network: Approximate Matching of Gradient Data in Algebraic Training

B. Approximate Matching of Gradient Data in Algebraic Training
- Estimate:
  - output weights
  - input-to-node values
- First solution:
  - use a randomized W
  - all parameters are then refined by a p-step node-by-node update algorithm

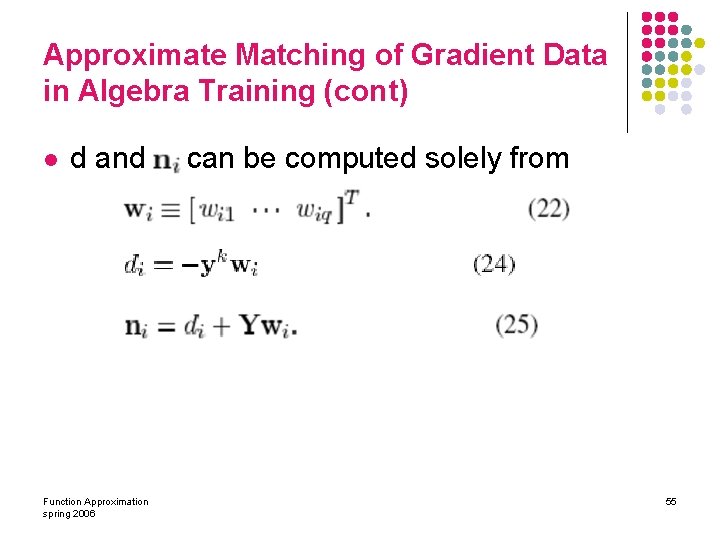

Approximate Matching of Gradient Data in Algebraic Training (cont.)
- d and … can be computed solely from …

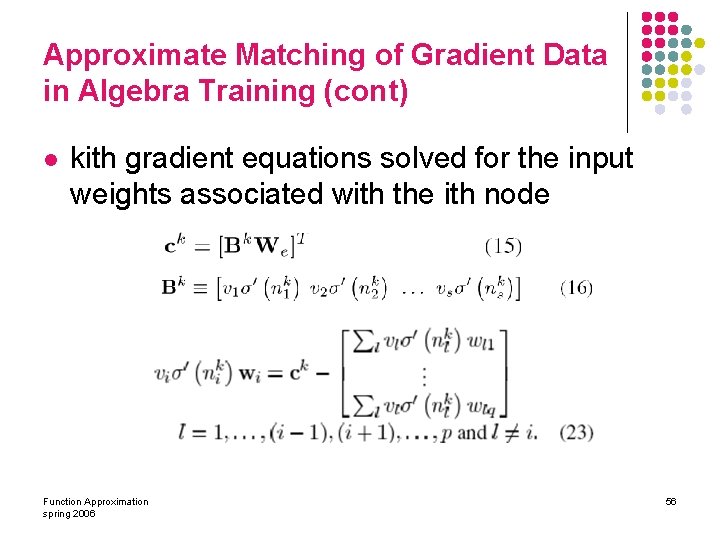

Approximate Matching of Gradient Data in Algebraic Training (cont.)
- The kth gradient equations are solved for the input weights associated with the ith node.

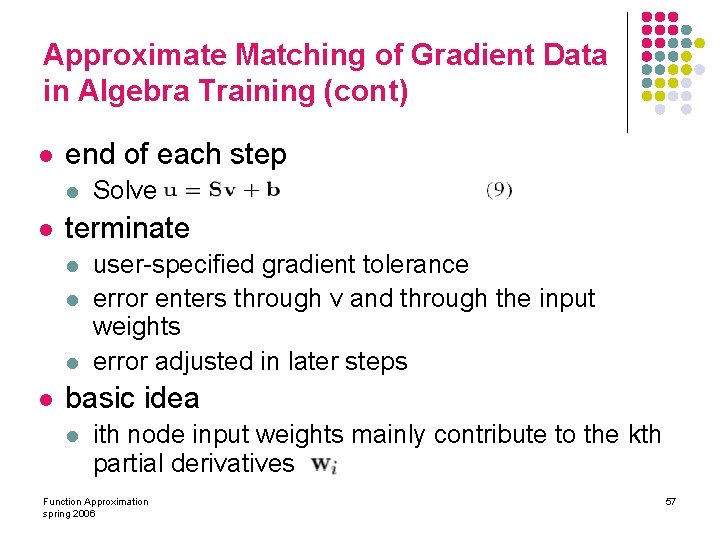

Approximate Matching of Gradient Data in Algebraic Training (cont.)
- At the end of each step: solve …
- Terminate when a user-specified gradient tolerance is met.
- Error enters through v and through the input weights; this error is adjusted in later steps.
- Basic idea: the ith node's input weights contribute mainly to the kth partial derivatives.

Function Approximation Using Neural Network: Approximate Matching of Function Input-Output Data

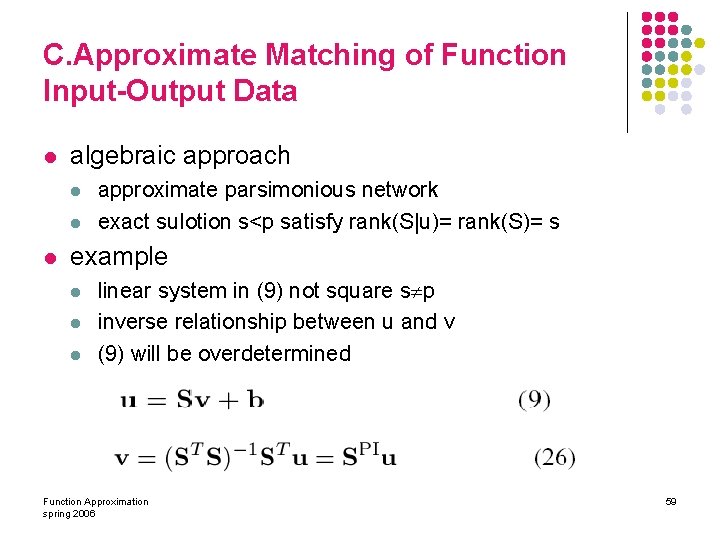

C. Approximate Matching of Function Input-Output Data
- Algebraic approach:
  - approximate
  - parsimonious network
- An exact solution with $s < p$ exists only if $\operatorname{rank}(S\,|\,u) = \operatorname{rank}(S) = s$.
- Example: the linear system in (9) is not square ($s < p$):
  - inverse relationship between u and v
  - (9) will be overdetermined

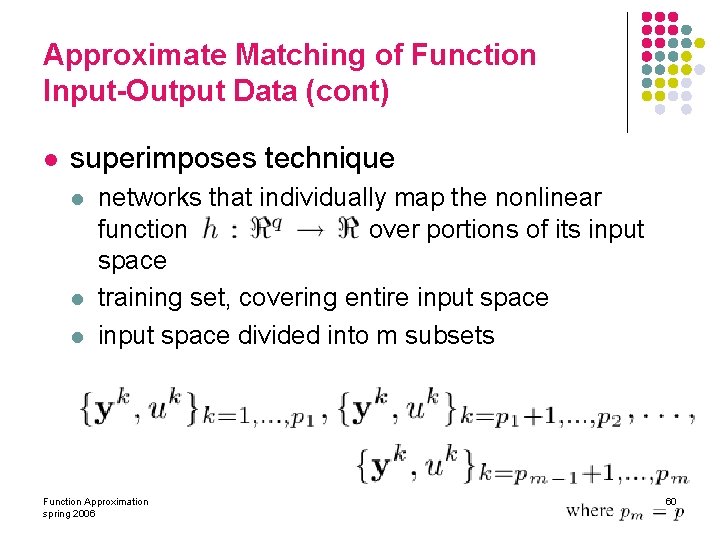
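The rank condition can be checked on a tiny made-up system with $p = 3$ points and $s = 2$ nodes. This sketch tests $\operatorname{rank}(S\,|\,u) = \operatorname{rank}(S) = s$ and computes an ordinary least-squares v through the normal equations $S^T S v = S^T u$; that generic least-squares solve is a swapped-in illustration, not the superposition technique described in these slides. All function names are hypothetical.

```python
# Rank condition and least-squares output weights for an overdetermined S v = u.


def rank(M, tol=1e-10):
    """Rank by Gaussian elimination with partial pivoting."""
    A = [row[:] for row in M]
    rows, cols = len(A), len(A[0])
    r = 0
    for c in range(cols):
        piv = max(range(r, rows), key=lambda i: abs(A[i][c]))
        if abs(A[piv][c]) < tol:
            continue
        A[r], A[piv] = A[piv], A[r]
        for i in range(r + 1, rows):
            f = A[i][c] / A[r][c]
            for k in range(c, cols):
                A[i][k] -= f * A[r][k]
        r += 1
        if r == rows:
            break
    return r


def solve_square(A, rhs):
    """Solve a small nonsingular square system by Gaussian elimination."""
    n = len(A)
    M = [row[:] + [bi] for row, bi in zip(A, rhs)]
    for c in range(n):
        piv = max(range(c, n), key=lambda i: abs(M[i][c]))
        M[c], M[piv] = M[piv], M[c]
        for i in range(c + 1, n):
            f = M[i][c] / M[c][c]
            for k in range(c, n + 1):
                M[i][k] -= f * M[c][k]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][k] * x[k] for k in range(i + 1, n))) / M[i][i]
    return x


def least_squares(S, u):
    """v minimizing ||S v - u||^2 via S^T S v = S^T u; also returns the residual."""
    s = len(S[0])
    StS = [[sum(row[i] * row[j] for row in S) for j in range(s)] for i in range(s)]
    Stu = [sum(row[i] * uk for row, uk in zip(S, u)) for i in range(s)]
    v = solve_square(StS, Stu)
    res = sum((sum(a * b for a, b in zip(row, v)) - uk) ** 2 for row, uk in zip(S, u))
    return v, res


S = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # p = 3 points, s = 2 nodes
u_consistent = [2.0, 3.0, 5.0]             # lies in the column space of S
u_inconsistent = [2.0, 3.0, 6.0]           # does not

for target in (u_consistent, u_inconsistent):
    aug = [row + [uk] for row, uk in zip(S, target)]
    exact = rank(aug) == rank(S) == len(S[0])
    v, res = least_squares(S, target)
    print(exact, [round(x, 4) for x in v], round(res, 4))
# True [2.0, 3.0] 0.0
# False [2.3333, 3.3333] 0.3333
```

Forming $S^T S$ squares the condition number, so for larger or ill-conditioned problems a QR-based solver would be the safer choice.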

Approximate Matching of Function Input-Output Data (cont.)
- Superposition technique:
  - networks that individually map the nonlinear function over portions of its input space are superimposed
  - the training set, covering the entire input space, is divided into m subsets

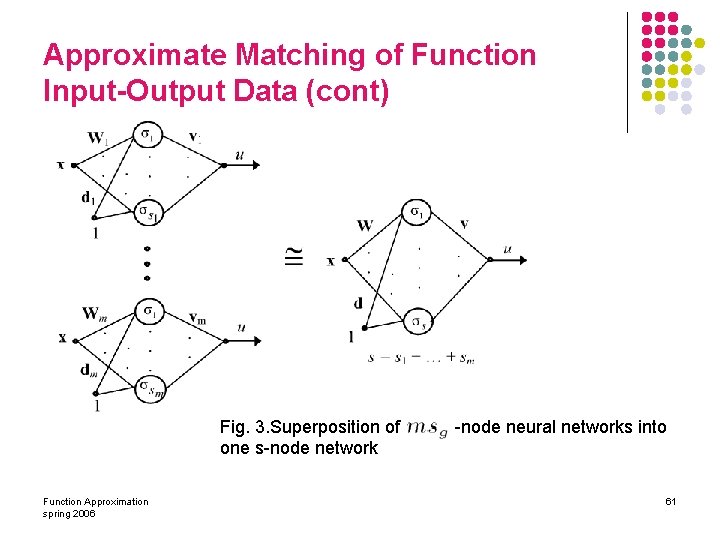

Approximate Matching of Function Input-Output Data (cont.)
Fig. 3. Superposition of …-node neural networks into one s-node network.

Approximate Matching of Function Input-Output Data (cont.)
- The gth neural network approximates the vector … by the estimate …

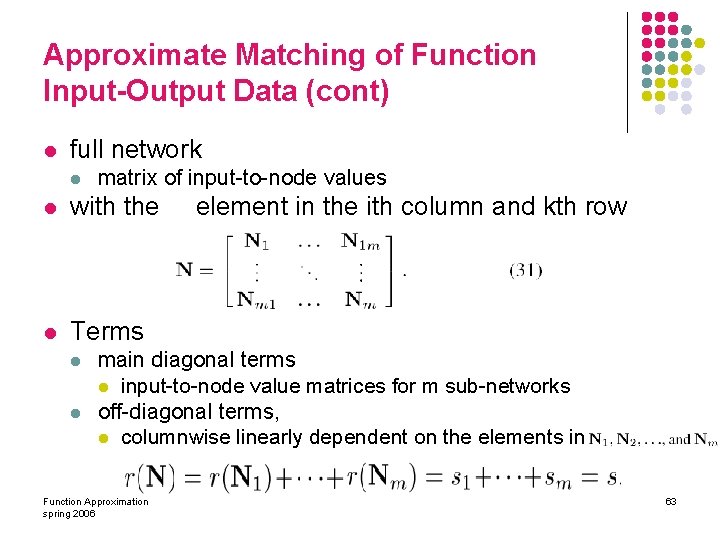

Approximate Matching of Function Input-Output Data (cont.)
- Full network:
  - matrix of input-to-node values, with the element in the ith column and kth row
- Terms:
  - main-diagonal terms: the input-to-node value matrices for the m sub-networks
  - off-diagonal terms: columnwise linearly dependent on the elements in …

Approximate Matching of Function Input-Output Data (cont.)
- Output weights:
  - S is constructed to be of rank s
  - the rank of the augmented matrix (S|u) is s or s + 1
  - zero or small error during the superposition
  - the error does not increase with m

Approximate Matching of Function Input-Output Data (cont.)
- Key to developing algebraic training techniques: construct a matrix S, through N, that displays the desired characteristics:
  - S must be of rank s
  - s is kept small to produce a parsimonious network

Function Approximation Using Neural Network: Exact Matching of Function Gradient Data

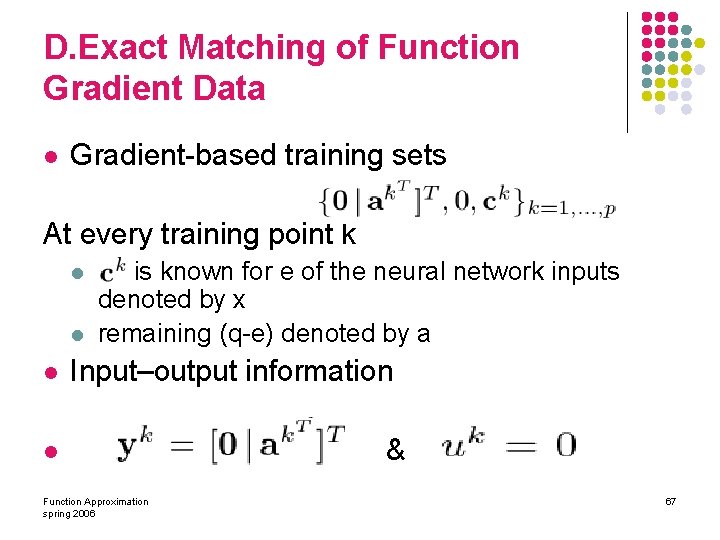

D. Exact Matching of Function Gradient Data
- Gradient-based training sets: at every training point k,
  - the gradient is known for e of the neural network inputs, denoted by x
  - the remaining (q - e) inputs are denoted by a
- Input-output information: … and …

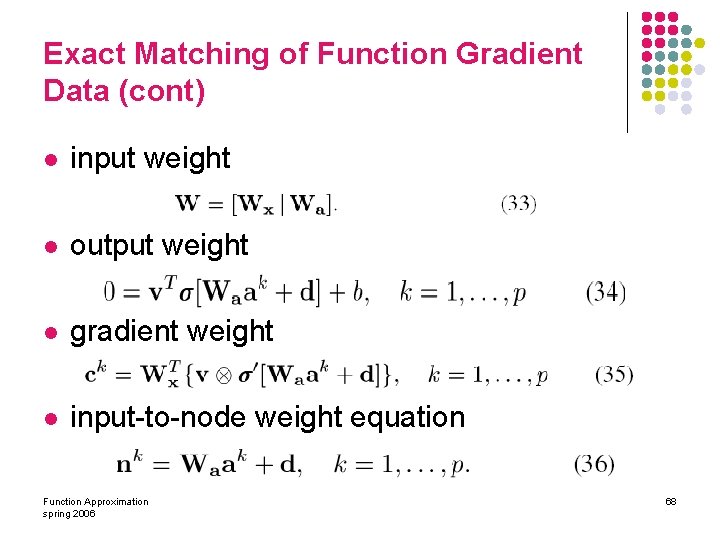

Exact Matching of Function Gradient Data (cont.)
- Input weights
- Output weight equations
- Gradient weight equations
- Input-to-node weight equations

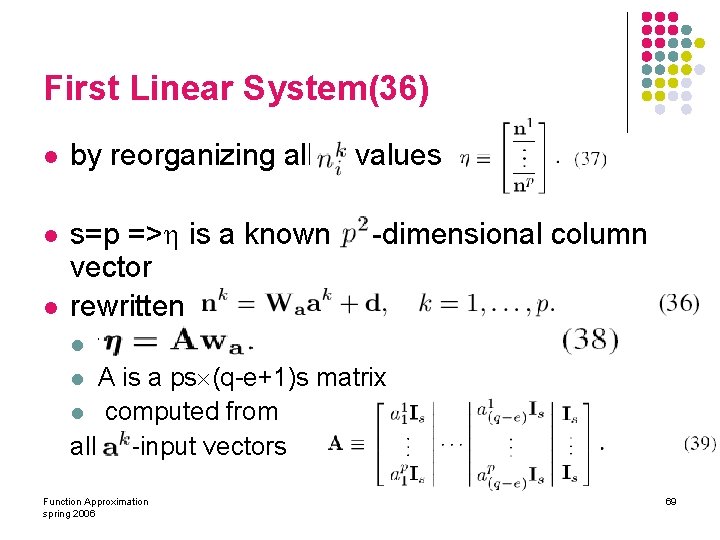

First Linear System (36)
- Obtained by reorganizing all the input-to-node values into a ps-dimensional column vector.
- With s = p, this vector is known, and the input-to-node equations are rewritten as a linear system.
- A is a $ps \times (q - e + 1)s$ matrix computed from all the a-input vectors.

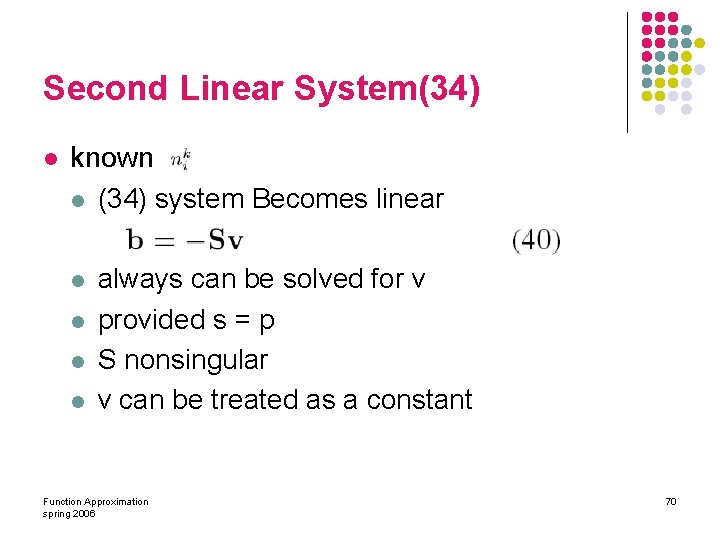

Second Linear System (34)
- Once the input-to-node values are known, system (34) becomes linear.
- It can always be solved for v, provided that s = p and S is nonsingular.
- v can then be treated as a constant.

Third Linear System (35)
- With v known, (35) becomes linear; its unknowns consist of the x-input weights.
- The gradients in the training set are known.
- X is a known $ep \times es$ matrix.

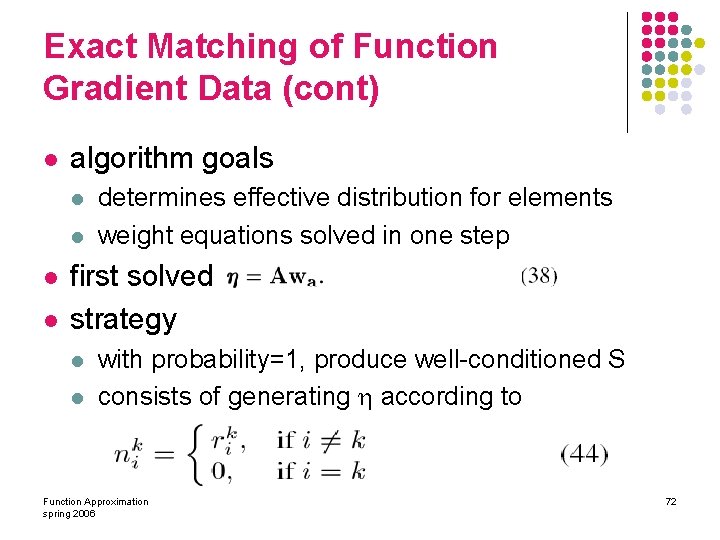

Exact Matching of Function Gradient Data (cont.)
- Algorithm goals:
  - determine an effective distribution for the elements of …
  - solve the weight equations in one step, with … solved first
- Strategy:
  - produce, with probability 1, a well-conditioned S
  - consists of generating … according to …

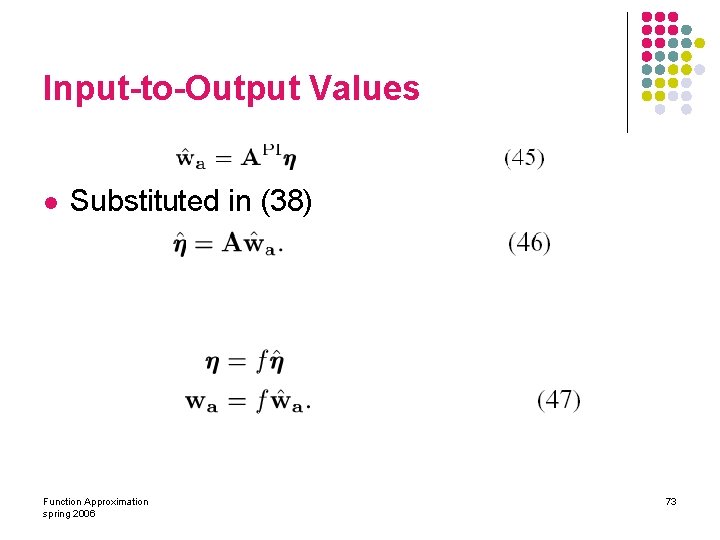

Input-to-Node Values
- Substituted in (38)

Input-to-Node Values (cont.)
- The sigmoids are very nearly centered.
- It is desirable for one sigmoid to be centered for a given input.
- To prevent an ill-conditioned S, the same sigmoid should be close to saturation for any other known input.
- This requires a factor based on the absolute value of the largest element in …

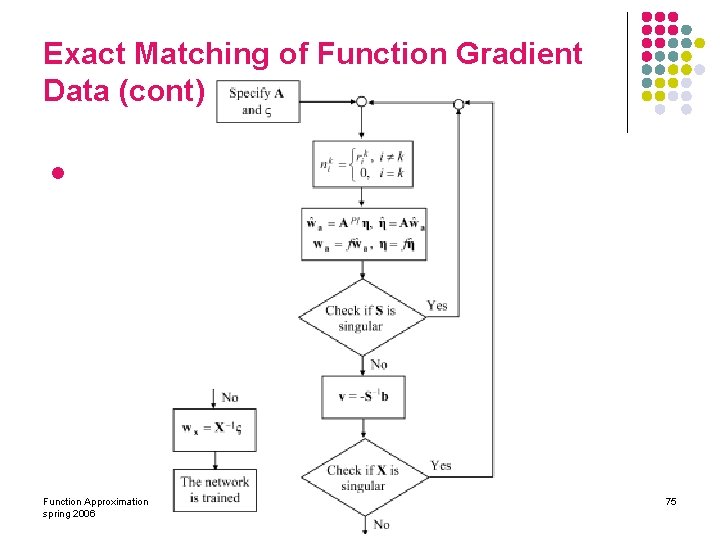

Exact Matching of Function Gradient Data (cont.)

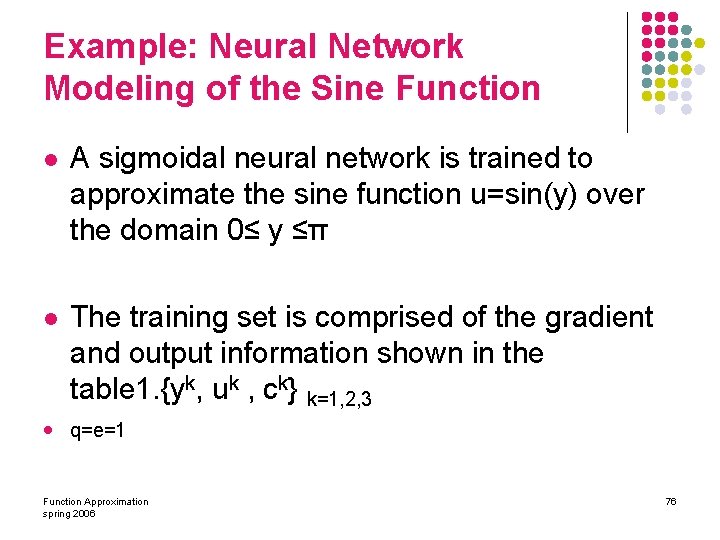

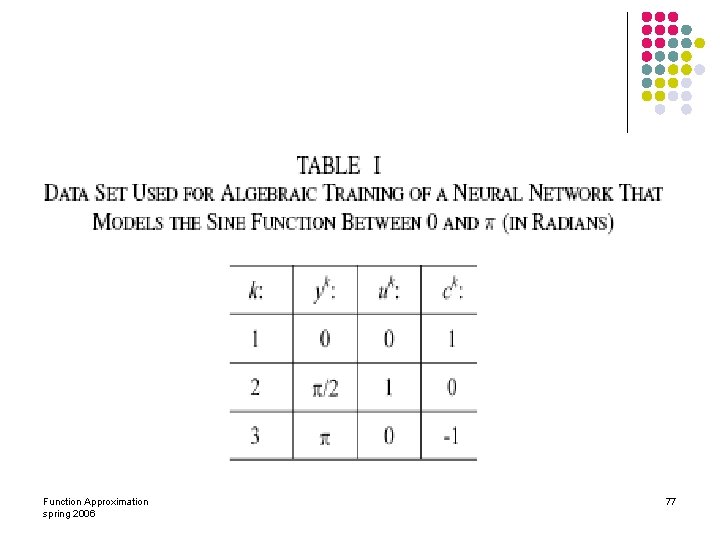

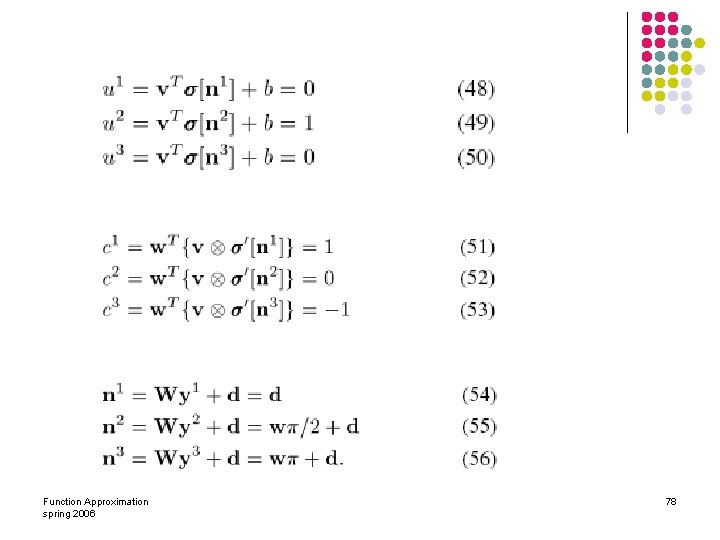

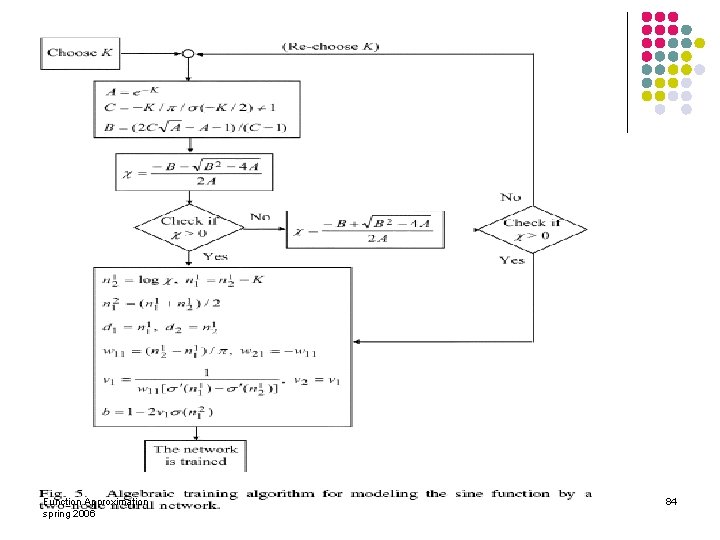

Example: Neural Network Modeling of the Sine Function
- A sigmoidal neural network is trained to approximate the sine function $u = \sin(y)$ over the domain $0 \le y \le \pi$.
- The training set consists of the gradient and output information shown in Table 1: $\{y_k, u_k, c_k\}$, $k = 1, 2, 3$.
- $q = e = 1$


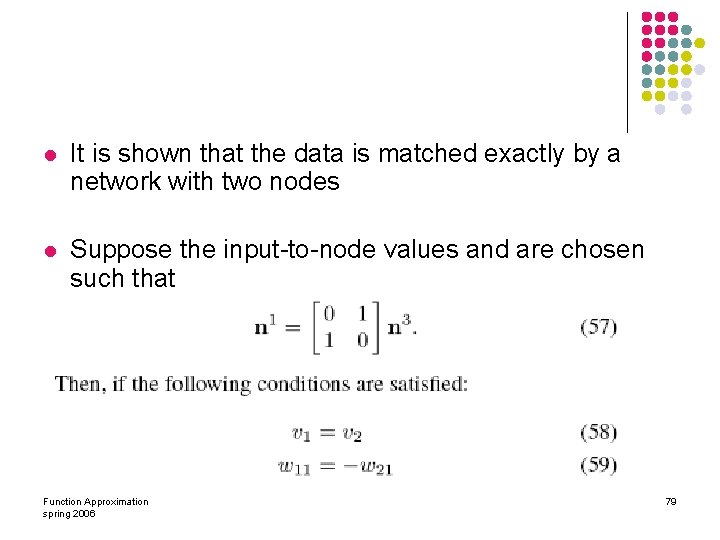

- It can be shown that the data are matched exactly by a network with two nodes.
- Suppose the input-to-node values of the two nodes are chosen such that:

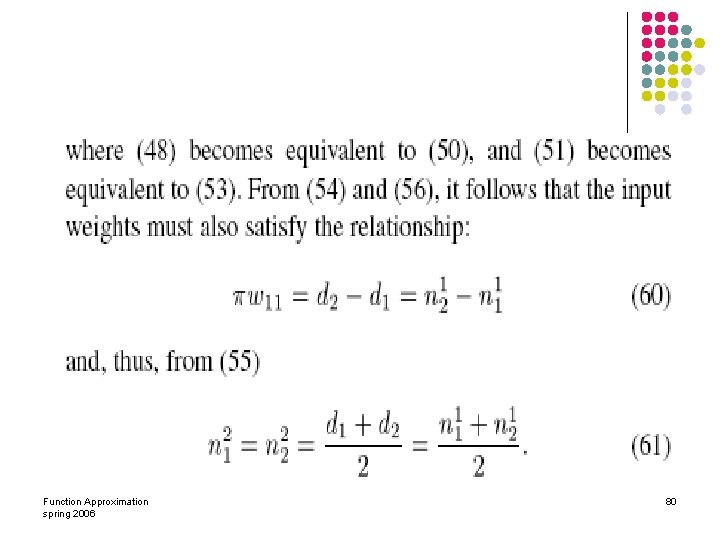


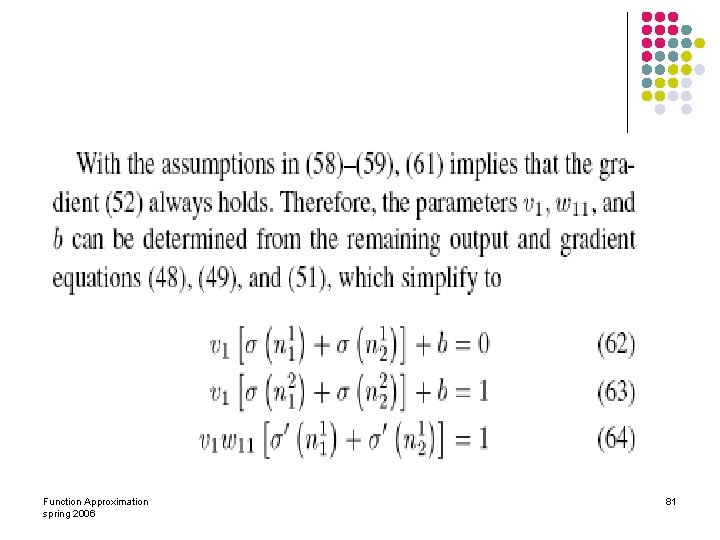


- In this example, … is chosen to make the above weight equations consistent and to meet the assumptions in (57) and (60)-(61). It can easily be shown that this corresponds to computing the elements of … (… and …) from the equation:


Conclusion
- Algebraic training compared with optimization-based techniques:
  - faster execution speeds
  - better generalization properties
  - reduced computational complexity
- It can be used to find a direct correlation between the number of network nodes needed to model a given data set and the desired accuracy of representation.
