Frequent Itemsets Association rules and market basket analysis

CS 240B, UCLA. Notes by Carlo Zaniolo. Most slides borrowed from Jiawei Han, UIUC. May 2007.

Association Rules & Correlations

- Basic concepts
- Efficient and scalable frequent itemset mining methods:
  - Apriori, and improvements
  - FP-growth
- Rule derivation, visualization and validation
- Multi-level associations
- Temporal associations and frequent sequences
- Other association mining methods
- Summary

Market Basket Analysis: the context

Analyze customer buying habits by finding associations and correlations between the different items that customers place in their “shopping basket”.

- Customer 1: milk, eggs, sugar, bread
- Customer 2: milk, eggs, cereal, bread
- Customer 3: eggs, sugar

Market Basket Analysis: the context

Given: a database of customer transactions, where each transaction is a set of items.

- Find groups of items which are frequently purchased together.

Goal of MBA

- Extract information on purchasing behavior.
- Actionable information: can suggest
  - new store layouts
  - new product assortments
  - which products to put on promotion
- MBA is applicable whenever a customer purchases multiple things in proximity:
  - credit cards
  - services of telecommunication companies
  - banking services
  - medical treatments

MBA: applicable to many other contexts

- Telecommunication: each customer is a transaction containing the set of the customer's phone calls.
- Atmospheric phenomena: each time interval (e.g. a day) is a transaction containing the set of observed events (rain, wind, etc.).
- Etc.

Association Rules

- Express how products/services relate to each other, and tend to group together.
- “If a customer purchases three-way calling, then the customer will also purchase call-waiting.”
- Simple to understand.
- Actionable information: bundle three-way calling and call-waiting in a single package.

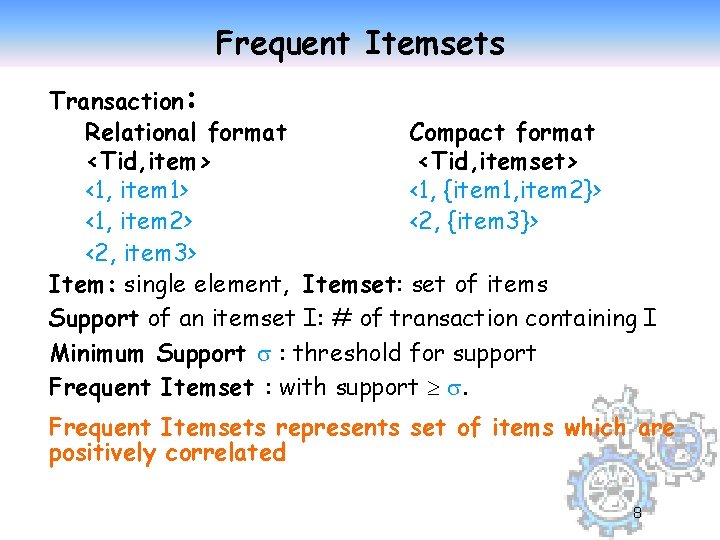

Frequent Itemsets

Transaction:

| Relational format `<Tid, item>` | Compact format `<Tid, itemset>` |
|---|---|
| `<1, item 1>` | `<1, {item 1, item 2}>` |
| `<1, item 2>` | `<2, {item 3}>` |
| `<2, item 3>` | |

- Item: a single element. Itemset: a set of items.
- Support of an itemset I: the number of transactions containing I.
- Minimum support: the threshold for support.
- Frequent itemset: an itemset whose support is at least the minimum support.

Frequent itemsets represent sets of items which are positively correlated.

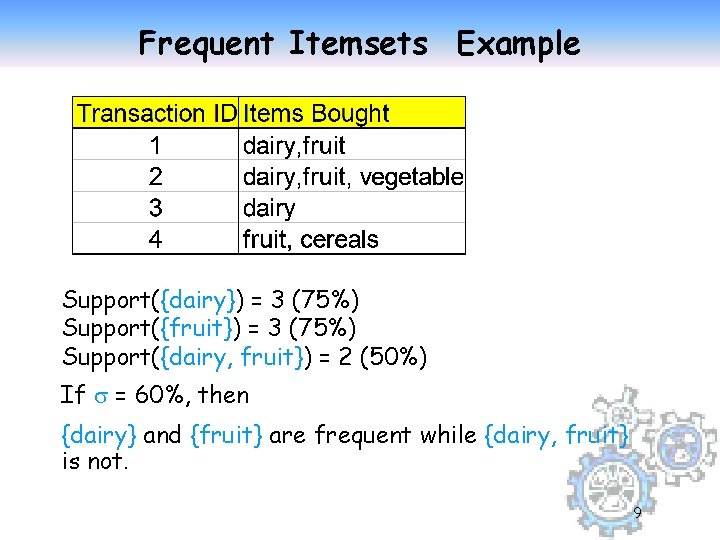

Frequent Itemsets Example

- Support({dairy}) = 3 (75%)
- Support({fruit}) = 3 (75%)
- Support({dairy, fruit}) = 2 (50%)

If the minimum support is 60%, then {dairy} and {fruit} are frequent while {dairy, fruit} is not.

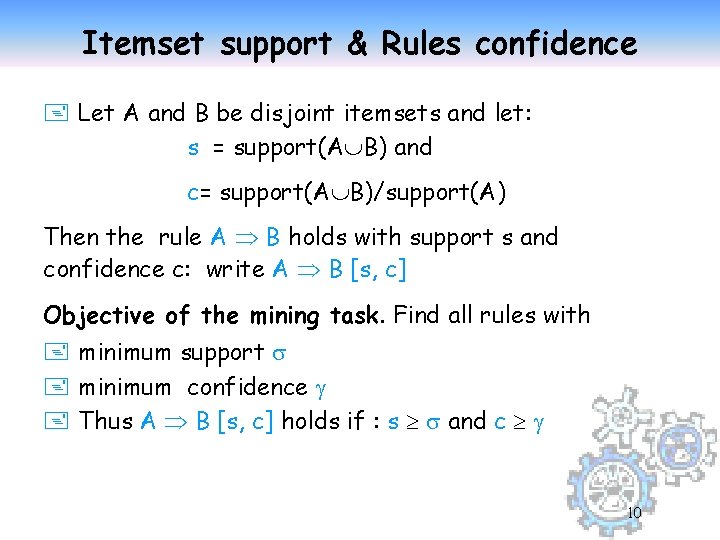

Itemset support & Rules confidence

Let A and B be disjoint itemsets and let $s = \mathrm{support}(A \cup B)$ and $c = \mathrm{support}(A \cup B)/\mathrm{support}(A)$.

Then the rule $A \Rightarrow B$ holds with support $s$ and confidence $c$: write $A \Rightarrow B\ [s, c]$.

Objective of the mining task: find all rules with

- minimum support
- minimum confidence

Thus $A \Rightarrow B\ [s, c]$ holds if $s$ is at least the minimum support and $c$ is at least the minimum confidence.
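The two definitions above can be sketched in a few lines of Python. This is only an illustration: the function names `support` and `confidence` are assumptions, and the baskets are the three customers from the market basket slide at the start of these notes.

```python
def support(itemset, transactions):
    """Fraction of transactions that contain every item of `itemset`."""
    itemset = frozenset(itemset)
    hits = sum(1 for t in transactions if itemset <= t)
    return hits / len(transactions)


def confidence(antecedent, consequent, transactions):
    """support(A ∪ B) / support(A) for the rule A => B."""
    both = frozenset(antecedent) | frozenset(consequent)
    return support(both, transactions) / support(antecedent, transactions)


# The three shopping baskets from the introductory example.
baskets = [
    frozenset({"milk", "eggs", "sugar", "bread"}),
    frozenset({"milk", "eggs", "cereal", "bread"}),
    frozenset({"eggs", "sugar"}),
]

s = support({"milk", "bread"}, baskets)              # 2 of 3 baskets
c_milk_bread = confidence({"milk"}, {"bread"}, baskets)
c_eggs_sugar = confidence({"eggs"}, {"sugar"}, baskets)
```

Here milk => bread has support 2/3 and confidence 1, while eggs => sugar has the same support but confidence 2/3, because eggs appear in every basket.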

![Association Rules: Meaning](http://slidetodoc.com/presentation_image_h2/4076bd994ac88785e47d19e81b05f35f/image-11.jpg)

Association Rules: Meaning

$A \Rightarrow B\ [s, c]$

- Support: denotes the frequency of the rule within the transactions. A high value means that the rule involves a large part of the database.
  $\mathrm{support}(A \Rightarrow B\ [s, c]) = p(A \cup B)$, i.e. the probability that a transaction contains every item of $A \cup B$.
- Confidence: denotes the percentage of transactions containing A which also contain B. It is an estimate of the conditional probability.
  $\mathrm{confidence}(A \Rightarrow B\ [s, c]) = p(B \mid A) = p(A \,\&\, B)/p(A)$.

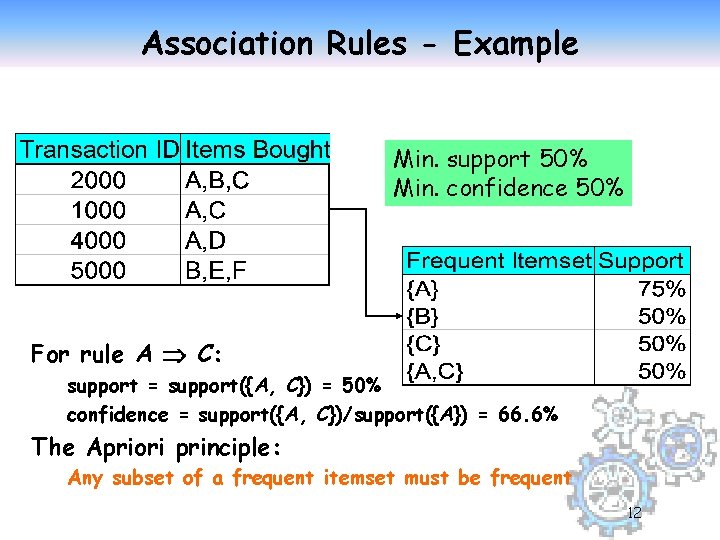

Association Rules - Example

Min. support 50%, min. confidence 50%.

For rule $A \Rightarrow C$:

- support = support({A, C}) = 50%
- confidence = support({A, C})/support({A}) = 66.6%

The Apriori principle: any subset of a frequent itemset must be frequent.

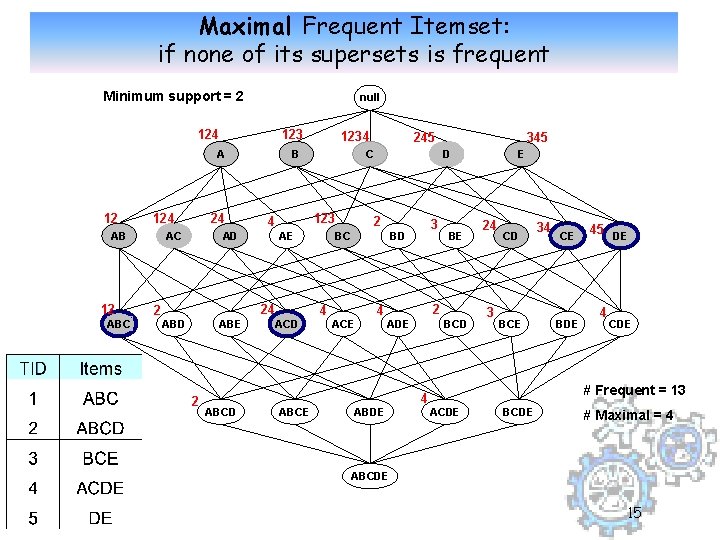

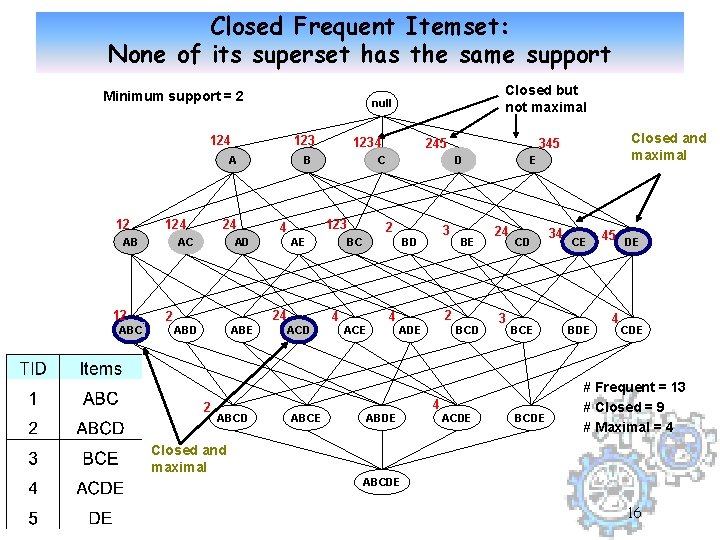

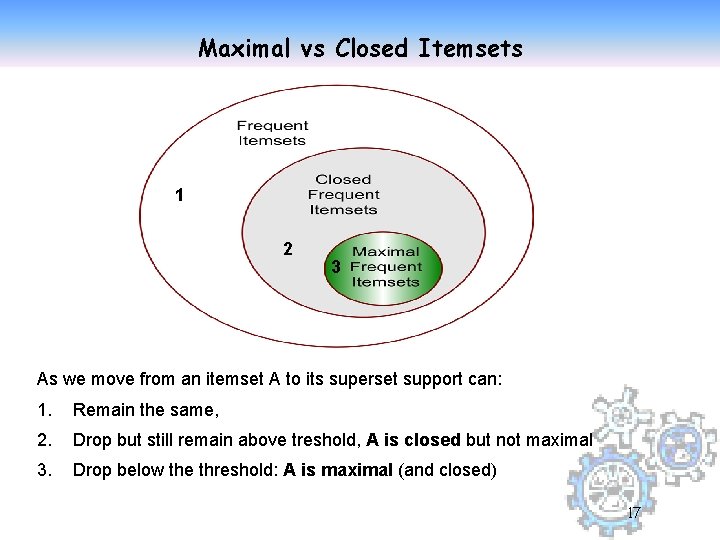

Closed Patterns and Max-Patterns

- A long pattern contains very many subpatterns: combinatorial explosion.
  - Closed patterns and max-patterns
- An itemset is closed if none of its supersets has the same support.
  - Closed patterns are a lossless compression of the frequent patterns, reducing the number of patterns and rules.
- An itemset is maximal frequent if none of its supersets is frequent.
  - But the support of their subsets is not known: additional DB scans are needed.

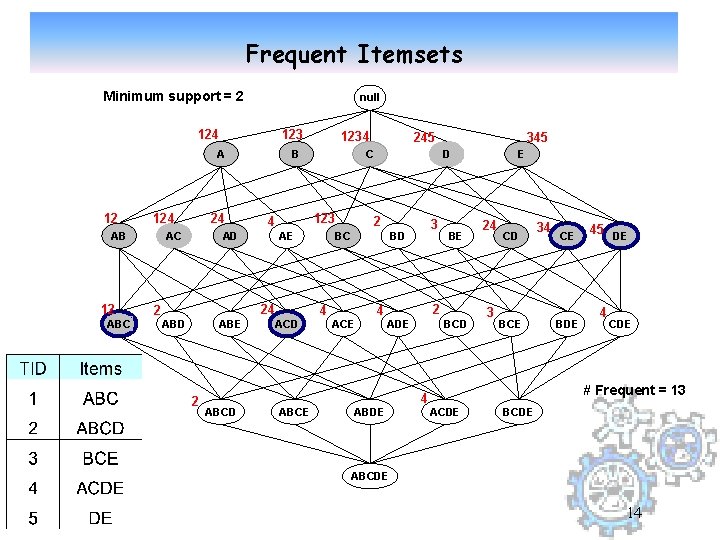

Frequent Itemsets

(Figure: lattice of all itemsets over the items A, B, C, D, E, from null to ABCDE, each node annotated with the IDs of the transactions that contain it.)

Minimum support = 2. # Frequent = 13.

Maximal Frequent Itemset: if none of its supersets is frequent

(Figure: the same itemset lattice over A, B, C, D, E, with the maximal frequent itemsets marked.)

Minimum support = 2. # Frequent = 13. # Maximal = 4.

Closed Frequent Itemset: none of its supersets has the same support

(Figure: the same itemset lattice over A, B, C, D, E, with nodes labeled "Closed but not maximal" and "Closed and maximal".)

Minimum support = 2. # Frequent = 13. # Closed = 9. # Maximal = 4.

Maximal vs Closed Itemsets

As we move from an itemset A to its supersets, the support can:

1. Remain the same: A is not closed.
2. Drop but still remain above the threshold: A is closed but not maximal.
3. Drop below the threshold: A is maximal (and closed).
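A small Python sketch of the closed/maximal distinction follows. It is a brute-force illustration, not an efficient miner: the helper names are assumptions, and the data is the four-transaction database TDB from the Apriori example in these notes, with minimum support 2. Checking only frequent supersets is enough, since a superset with the same support as a frequent itemset is itself frequent.

```python
from itertools import combinations


def frequent_itemsets(transactions, min_count):
    """Brute force: count every subset of every transaction (tiny data only)."""
    counts = {}
    for t in transactions:
        items = sorted(t)
        for k in range(1, len(items) + 1):
            for combo in combinations(items, k):
                key = frozenset(combo)
                counts[key] = counts.get(key, 0) + 1
    return {s: c for s, c in counts.items() if c >= min_count}


def closed_and_maximal(frequent):
    """Split frequent itemsets into the closed ones and the maximal ones."""
    closed, maximal = set(), set()
    for itemset, count in frequent.items():
        supersets = [s for s in frequent if itemset < s]
        if not supersets:                      # no frequent superset at all
            maximal.add(itemset)
        if all(frequent[s] < count for s in supersets):
            closed.add(itemset)                # support drops in every superset
    return closed, maximal


tdb = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
frequent = frequent_itemsets(tdb, min_count=2)
closed, maximal = closed_and_maximal(frequent)
```

On this database there are 9 frequent itemsets, 4 closed ones ({C}, {A, C}, {B, E}, {B, C, E}) and 2 maximal ones ({A, C}, {B, C, E}); every maximal itemset is also closed.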

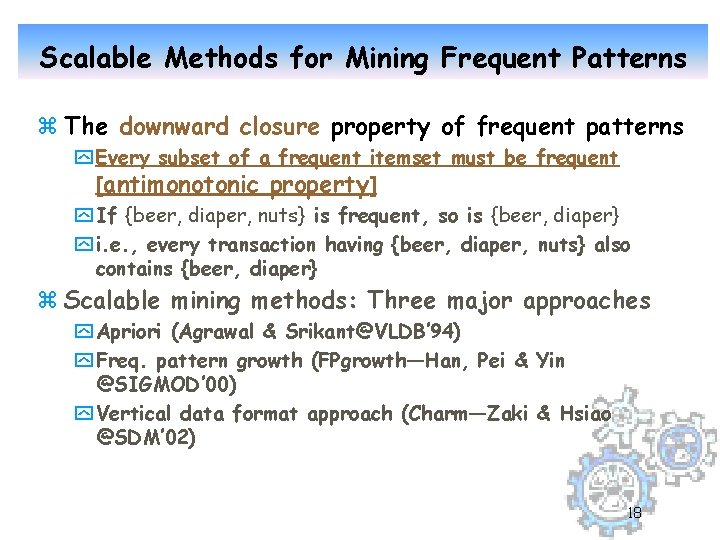

Scalable Methods for Mining Frequent Patterns

- The downward closure property of frequent patterns
  - Every subset of a frequent itemset must be frequent [antimonotonic property].
  - If {beer, diaper, nuts} is frequent, so is {beer, diaper},
  - i.e., every transaction having {beer, diaper, nuts} also contains {beer, diaper}.
- Scalable mining methods: three major approaches
  - Apriori (Agrawal & Srikant @VLDB'94)
  - Frequent pattern growth (FP-growth: Han, Pei & Yin @SIGMOD'00)
  - Vertical data format approach (Charm: Zaki & Hsiao @SDM'02)

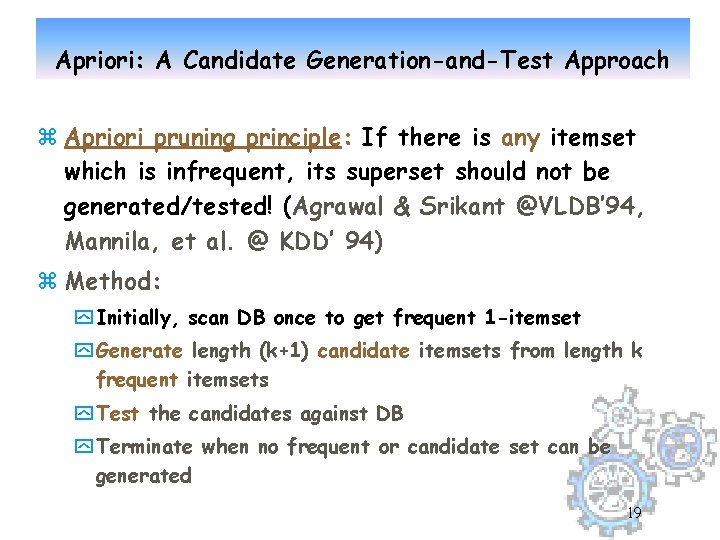

Apriori: A Candidate Generation-and-Test Approach

- Apriori pruning principle: if there is any itemset which is infrequent, its supersets should not be generated/tested! (Agrawal & Srikant @VLDB'94; Mannila, et al. @KDD'94)
- Method:
  - Initially, scan the DB once to get the frequent 1-itemsets.
  - Generate length (k+1) candidate itemsets from length k frequent itemsets.
  - Test the candidates against the DB.
  - Terminate when no frequent or candidate set can be generated.

Association Rules & Correlations

- Basic concepts
- Efficient and scalable frequent itemset mining methods:
  - Apriori, and improvements

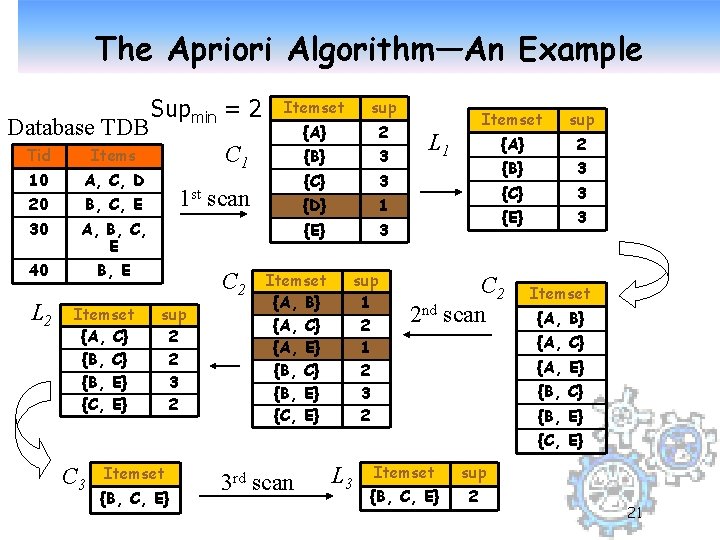

The Apriori Algorithm: An Example

Database TDB (Supmin = 2):

| Tid | Items |
|---|---|
| 10 | A, C, D |
| 20 | B, C, E |
| 30 | A, B, C, E |
| 40 | B, E |

1st scan, C1:

| Itemset | sup |
|---|---|
| {A} | 2 |
| {B} | 3 |
| {C} | 3 |
| {D} | 1 |
| {E} | 3 |

L1:

| Itemset | sup |
|---|---|
| {A} | 2 |
| {B} | 3 |
| {C} | 3 |
| {E} | 3 |

C2, counted in the 2nd scan:

| Itemset | sup |
|---|---|
| {A, B} | 1 |
| {A, C} | 2 |
| {A, E} | 1 |
| {B, C} | 2 |
| {B, E} | 3 |
| {C, E} | 2 |

L2:

| Itemset | sup |
|---|---|
| {A, C} | 2 |
| {B, C} | 2 |
| {B, E} | 3 |
| {C, E} | 2 |

C3: {B, C, E}. 3rd scan, L3:

| Itemset | sup |
|---|---|
| {B, C, E} | 2 |
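The level-wise loop of the example can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the original implementation: the function name `apriori` is hypothetical, and candidates are formed by uniting any two frequent k-itemsets that differ in one item and then pruning, which yields the same candidate sets as the ordered self-join.

```python
from itertools import combinations


def apriori(transactions, min_count):
    """Return {frozenset: support count} for all frequent itemsets."""
    transactions = [frozenset(t) for t in transactions]

    # First scan: count single items.
    counts = {}
    for t in transactions:
        for item in t:
            key = frozenset([item])
            counts[key] = counts.get(key, 0) + 1
    level = {s: c for s, c in counts.items() if c >= min_count}
    frequent = dict(level)

    k = 1
    while level:
        # Candidate generation: unite two frequent k-itemsets into a
        # (k+1)-itemset, keeping it only if all its k-subsets are frequent.
        candidates = set()
        for a, b in combinations(level, 2):
            union = a | b
            if len(union) == k + 1 and all(
                frozenset(sub) in level for sub in combinations(union, k)
            ):
                candidates.add(union)

        # One scan of the database to count the surviving candidates.
        counts = {c: 0 for c in candidates}
        for t in transactions:
            for c in candidates:
                if c <= t:
                    counts[c] += 1
        level = {s: c for s, c in counts.items() if c >= min_count}
        frequent.update(level)
        k += 1
    return frequent


tdb = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
result = apriori(tdb, min_count=2)
```

On TDB with minimum support 2 this returns the four frequent 1-itemsets, the four frequent 2-itemsets and {B, C, E} with support 2, matching L1, L2 and L3 of the example.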

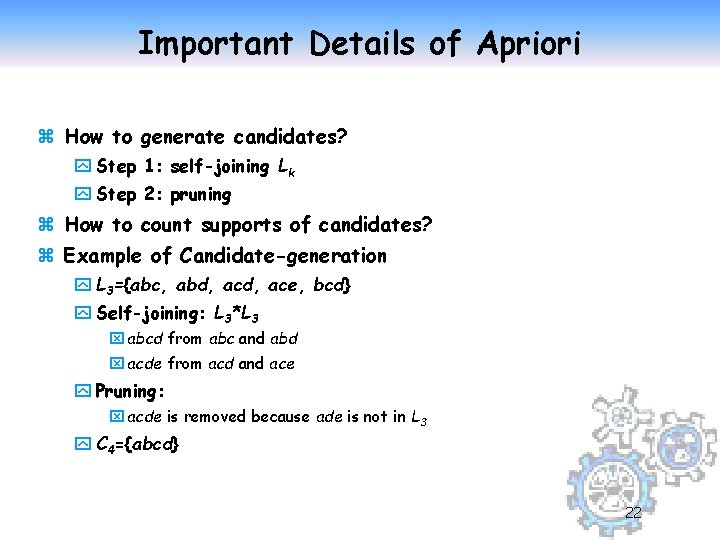

Important Details of Apriori

- How to generate candidates?
  - Step 1: self-joining Lk
  - Step 2: pruning
- How to count supports of candidates?
- Example of candidate generation
  - L3 = {abc, abd, acd, ace, bcd}
  - Self-joining: L3*L3
    - abcd from abc and abd
    - acde from acd and ace
  - Pruning:
    - acde is removed because ade is not in L3
  - C4 = {abcd}

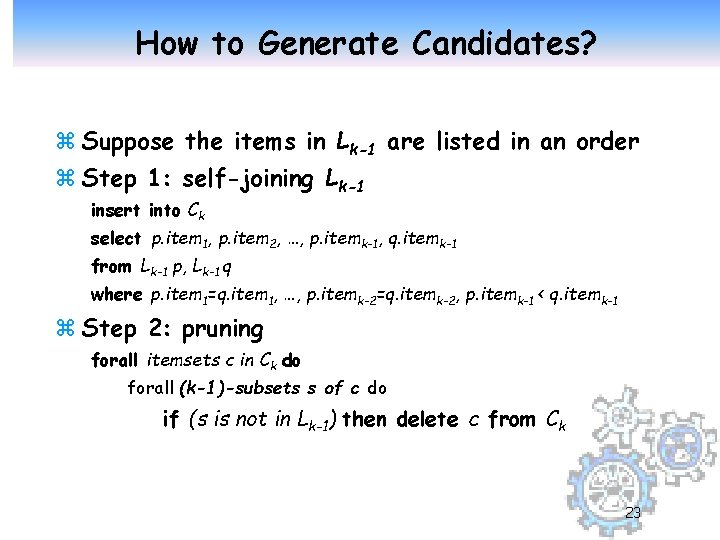

How to Generate Candidates?

- Suppose the items in L_{k-1} are listed in an order.
- Step 1: self-joining L_{k-1}

      insert into C_k
      select p.item_1, p.item_2, …, p.item_{k-1}, q.item_{k-1}
      from L_{k-1} p, L_{k-1} q
      where p.item_1 = q.item_1, …, p.item_{k-2} = q.item_{k-2}, p.item_{k-1} < q.item_{k-1}

- Step 2: pruning

      forall itemsets c in C_k do
          forall (k-1)-subsets s of c do
              if (s is not in L_{k-1}) then delete c from C_k
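The self-join and pruning steps can be sketched in Python as below. The function name `generate_candidates` is an assumption, itemsets are represented as sorted tuples, and the input is the L3 of the candidate-generation example, assumed to include acd since the join of acd and ace is part of that example.

```python
from itertools import combinations


def generate_candidates(prev_level):
    """prev_level: frequent (k-1)-itemsets as sorted tuples. Returns C_k."""
    prev = sorted(prev_level)
    prev_set = set(prev)
    candidates = []
    for i, p in enumerate(prev):
        for q in prev[i + 1:]:
            # Step 1 (self-join): first k-2 items equal, last item of p smaller.
            if p[:-1] == q[:-1] and p[-1] < q[-1]:
                c = p + (q[-1],)
                # Step 2 (prune): every (k-1)-subset of c must be frequent.
                if all(s in prev_set for s in combinations(c, len(c) - 1)):
                    candidates.append(c)
    return candidates


l3 = [tuple("abc"), tuple("abd"), tuple("acd"), tuple("ace"), tuple("bcd")]
c4 = generate_candidates(l3)
```

The join produces abcd and acde; acde is pruned because ade is not in L3, so `c4` contains only abcd.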

How to Count Supports of Candidates?

- Why is counting supports of candidates a problem?
  - The total number of candidates can be huge.
  - One transaction may contain many candidates.
- Data structures used:
  - Candidate itemsets can be stored in a hash-tree,
  - or in a prefix-tree (trie): see the example.
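A prefix-tree counter might look like the following Python sketch. The class and function names are assumptions made for illustration; candidates are stored along sorted paths, and each sorted transaction is walked through the trie so that every candidate it contains is incremented exactly once.

```python
class TrieNode:
    def __init__(self):
        self.children = {}
        self.count = None  # None: not the end of a candidate; int: its counter


def build_trie(candidates):
    """Store each candidate itemset as a path of its sorted items."""
    root = TrieNode()
    for cand in candidates:
        node = root
        for item in sorted(cand):
            node = node.children.setdefault(item, TrieNode())
        node.count = 0
    return root


def count_transaction(node, items, start=0):
    """Increment every candidate contained in the sorted list `items`."""
    for i in range(start, len(items)):
        child = node.children.get(items[i])
        if child is not None:
            if child.count is not None:
                child.count += 1
            count_transaction(child, items, i + 1)


def collect(node, prefix=()):
    """Read the counters back as {candidate tuple: support count}."""
    out = {}
    for item, child in node.children.items():
        path = prefix + (item,)
        if child.count is not None:
            out[path] = child.count
        out.update(collect(child, path))
    return out


tdb = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
c2 = [("A", "B"), ("A", "C"), ("A", "E"), ("B", "C"), ("B", "E"), ("C", "E")]
trie = build_trie(c2)
for t in tdb:
    count_transaction(trie, sorted(t))
supports = collect(trie)
```

With the database TDB and the candidate set C2 of the Apriori example, the counters come out as 1, 2, 1, 2, 3, 2, so only {A, B} and {A, E} fall below a minimum support of 2.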

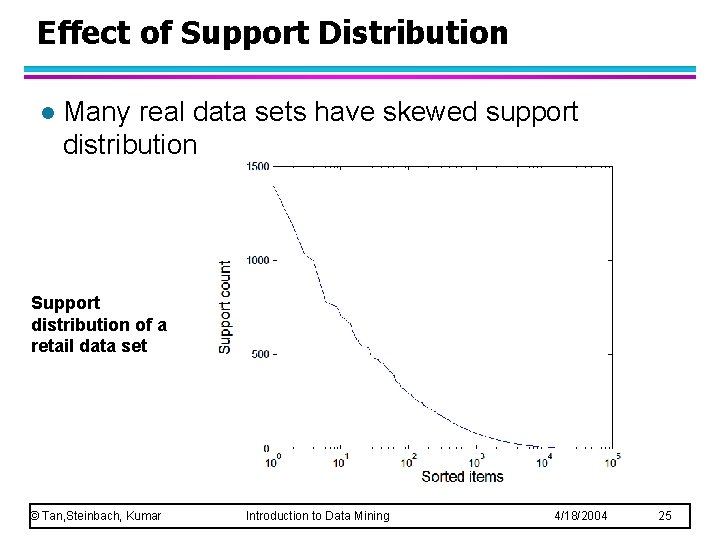

Effect of Support Distribution

- Many real data sets have a skewed support distribution.

(Figure: support distribution of a retail data set.)

© Tan, Steinbach, Kumar, Introduction to Data Mining, 4/18/2004

Effect of Support Distribution

- How to set the appropriate minsup threshold?
  - If minsup is set too high, we could miss itemsets involving interesting rare items (e.g., expensive products).
  - If minsup is set too low, mining is computationally expensive and the number of itemsets is very large.
- Using a single minimum support threshold may not be effective.

Rule Generation

- How to efficiently generate rules from frequent itemsets?
  - In general, confidence does not have an anti-monotone property: $c(ABC \Rightarrow D)$ can be larger or smaller than $c(AB \Rightarrow D)$.
  - But the confidence of rules generated from the same itemset has an anti-monotone property.
  - E.g., for L = {A, B, C, D}: $c(ABC \Rightarrow D) \ge c(AB \Rightarrow CD) \ge c(A \Rightarrow BCD)$.
  - Confidence is anti-monotone w.r.t. the number of items on the RHS of the rule.

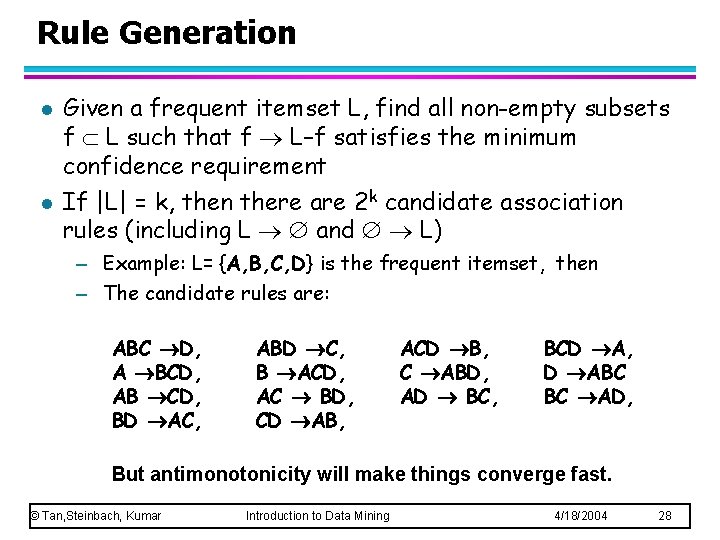

Rule Generation

- Given a frequent itemset L, find all non-empty subsets $f \subset L$ such that $f \Rightarrow L - f$ satisfies the minimum confidence requirement.
- If |L| = k, then there are $2^k$ candidate association rules (including $L \Rightarrow \emptyset$ and $\emptyset \Rightarrow L$).
  - Example: if L = {A, B, C, D} is the frequent itemset, then the candidate rules are:
    ABC ⇒ D, ABD ⇒ C, ACD ⇒ B, BCD ⇒ A, A ⇒ BCD, B ⇒ ACD, C ⇒ ABD, D ⇒ ABC, AB ⇒ CD, AC ⇒ BD, AD ⇒ BC, BC ⇒ AD, BD ⇒ AC, CD ⇒ AB.

But antimonotonicity will make things converge fast.
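As a rough Python sketch, the candidate rules of one frequent itemset can be enumerated and filtered by confidence as shown below. The function name is an assumption, the two rules with an empty side are skipped, no anti-monotone pruning is applied, and the support counts are those of the database TDB from the Apriori example.

```python
from itertools import combinations


def rules_from_itemset(itemset, support_count, min_conf):
    """Return (antecedent, consequent, confidence) for rules f => L - f."""
    items = sorted(itemset)
    whole = support_count[frozenset(items)]
    rules = []
    for r in range(1, len(items)):            # non-empty proper subsets only
        for antecedent in combinations(items, r):
            consequent = tuple(i for i in items if i not in antecedent)
            # confidence(f => L - f) = support(L) / support(f)
            conf = whole / support_count[frozenset(antecedent)]
            if conf >= min_conf:
                rules.append((antecedent, consequent, conf))
    return rules


# Support counts of the frequent itemsets of TDB (minimum support 2).
support_count = {
    frozenset("B"): 3, frozenset("C"): 3, frozenset("E"): 3,
    frozenset("BC"): 2, frozenset("BE"): 3, frozenset("CE"): 2,
    frozenset("BCE"): 2,
}
rules = rules_from_itemset("BCE", support_count, min_conf=0.7)
```

For L = {B, C, E} and a minimum confidence of 70%, only BC ⇒ E and CE ⇒ B survive, both with confidence 1; the other four candidate rules have confidence 2/3.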

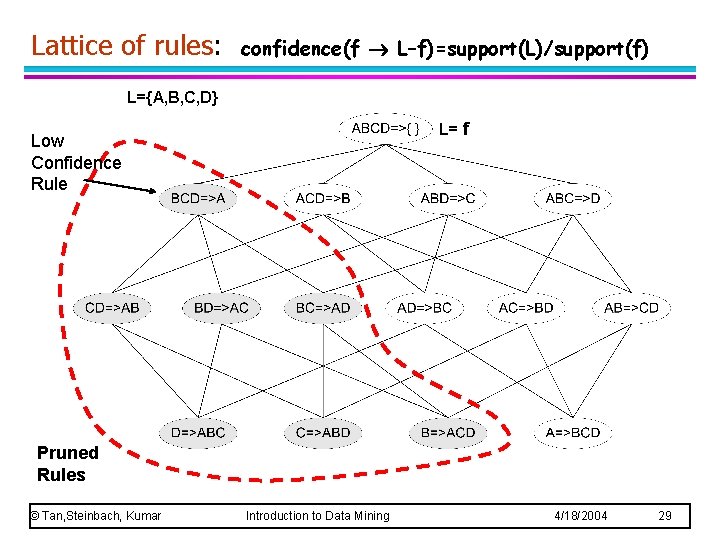

Lattice of rules: $\mathrm{confidence}(f \Rightarrow L - f) = \mathrm{support}(L)/\mathrm{support}(f)$, with L = {A, B, C, D}

(Figure: lattice of the candidate rules of L; once a low-confidence rule is found, the rules below it are pruned.)

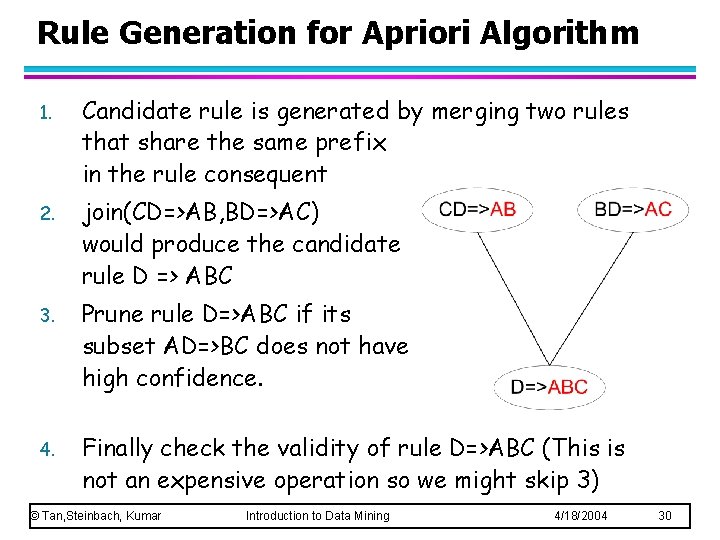

Rule Generation for Apriori Algorithm

1. A candidate rule is generated by merging two rules that share the same prefix in the rule consequent.
2. join(CD=>AB, BD=>AC) would produce the candidate rule D=>ABC.
3. Prune rule D=>ABC if its subset AD=>BC does not have high confidence.
4. Finally, check the validity of rule D=>ABC. (This is not an expensive operation, so we might skip step 3.)

Rules: some useful, some trivial, others inexplicable

- Useful: “On Thursdays, grocery store consumers often purchase diapers and beer together.”
- Trivial: “Customers who purchase maintenance agreements are very likely to purchase large appliances.”
- Inexplicable: “When a new hardware store opens, one of the most sold items is toilet rings.”

Conclusion: inferred rules must be validated by a domain expert before they can be used in the marketplace: post-mining of association rules.

Mining for Association Rules

The main steps in the process:

1. Select a minimum support/confidence level.
2. Find the frequent itemsets.
3. Find the association rules.
4. Validate (postmine) the rules so found.

Mining for Association Rules: Checkpoint

- Apriori opened up a big commercial market for DM.
  - Association rules came from the DB field, classifiers from AI; clustering precedes both … and DM.
- Many open problem areas, including:
  1. Performance: faster algorithms needed for frequent itemsets.
  2. Improving the statistical/semantic significance of rules.
  3. Data stream mining for association rules: even faster algorithms needed, incremental computation, adaptability, etc. The post-mining process also becomes more challenging.

Performance: Efficient Implementation of Apriori in SQL

- It is hard to get good performance out of pure SQL (SQL-92) based approaches alone.
- Make use of object-relational extensions like UDFs, BLOBs, table functions, etc.
  - S. Sarawagi, S. Thomas, and R. Agrawal. Integrating association rule mining with relational database systems: Alternatives and implications. In SIGMOD'98.
  - A much better solution: use UDAs, native or imported. Haixun Wang and Carlo Zaniolo: ATLaS: A Native Extension of SQL for Data Mining. SIAM International Conference on Data Mining 2003, San Francisco, CA, May 1-3, 2003.

Performance for Apriori

- Challenges
  - Multiple scans of the transaction database [not for data streams]
  - Huge number of candidates
  - Tedious workload of support counting for candidates
- Many improvements suggested; general ideas:
  - Reduce the number of passes over the transaction database
  - Shrink the number of candidates
  - Facilitate the counting of candidates

Partition: Scan Database Only Twice

- Any itemset that is potentially frequent in DB must be frequent in at least one of the partitions of DB.
  - Scan 1: partition the database and find local frequent patterns.
  - Scan 2: consolidate global frequent patterns.
- A. Savasere, E. Omiecinski, and S. Navathe. An efficient algorithm for mining association rules in large databases. In VLDB'95.
- Does this scale up to larger partitions?
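The two scans can be sketched in Python as follows. This is an illustration under assumptions, not the algorithm of the cited paper: the function names are hypothetical, the local miner is a brute-force subset counter standing in for a real one, and the minimum support is given as a fraction so that it can be applied both to each partition and to the whole database.

```python
from itertools import combinations


def local_frequent(partition, rel_minsup):
    """Brute-force local miner: itemsets frequent within one partition."""
    counts = {}
    for t in partition:
        items = sorted(t)
        for k in range(1, len(items) + 1):
            for combo in combinations(items, k):
                counts[combo] = counts.get(combo, 0) + 1
    return {s for s, c in counts.items() if c >= rel_minsup * len(partition)}


def partition_mine(transactions, rel_minsup, n_parts):
    """Two-scan mining: local candidates first, then exact global counts."""
    size = -(-len(transactions) // n_parts)  # ceiling division
    parts = [transactions[i:i + size] for i in range(0, len(transactions), size)]

    # Scan 1: the union of locally frequent itemsets is the candidate set.
    candidates = set()
    for part in parts:
        candidates |= local_frequent(part, rel_minsup)

    # Scan 2: count every candidate against the whole database.
    counts = {c: 0 for c in candidates}
    for t in transactions:
        for c in candidates:
            if set(c) <= t:
                counts[c] += 1
    return {c: n for c, n in counts.items() if n >= rel_minsup * len(transactions)}


tdb = [{"A", "C", "D"}, {"B", "C", "E"}, {"A", "B", "C", "E"}, {"B", "E"}]
result = partition_mine(tdb, rel_minsup=0.5, n_parts=2)
```

No globally frequent itemset can be missed: if an itemset fell below the relative threshold in every partition, its total count would fall below the threshold for the whole database. Small partitions do inflate the candidate set, which is the concern behind the scale-up question above.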

Sampling for Frequent Patterns

- Select a sample S of the original database, and mine the frequent patterns within the sample using Apriori.
- To avoid losses, mine for a support lower than the required one.
- Scan the rest of the database to find exact counts.
- H. Toivonen. Sampling large databases for association rules. In VLDB'96.

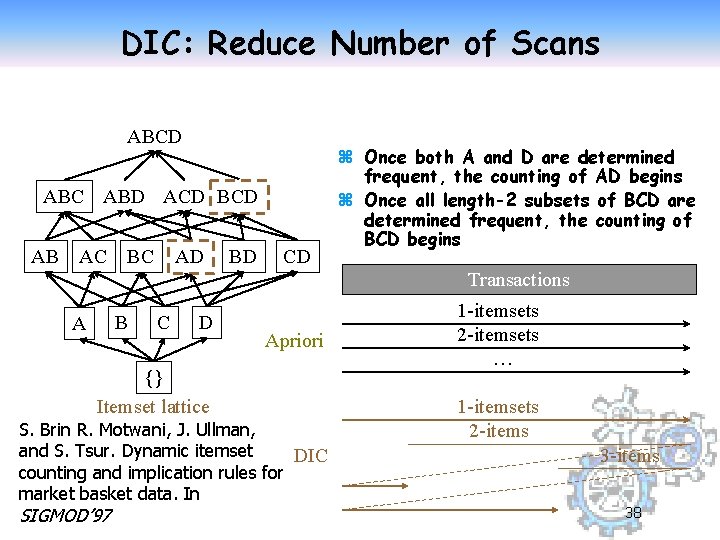

DIC: Reduce Number of Scans

- Once both A and D are determined frequent, the counting of AD begins.
- Once all length-2 subsets of BCD are determined frequent, the counting of BCD begins.

(Figure: itemset lattice from {} to ABCD, and a timeline over the transactions comparing when Apriori and DIC start counting 1-itemsets, 2-itemsets, …)

S. Brin, R. Motwani, J. Ullman, and S. Tsur. Dynamic itemset counting and implication rules for market basket data. In SIGMOD'97.

Improving Performance (cont.)

- Apriori: multiple database scans are costly.
- Mining long patterns needs many passes of scanning and generates lots of candidates.
  - To find the frequent itemset $i_1 i_2 \dots i_{100}$:
    - # of scans: 100
    - # of candidates: $\binom{100}{1} + \binom{100}{2} + \dots + \binom{100}{100} = 2^{100} - 1 \approx 1.27 \times 10^{30}$!
- Bottleneck: candidate generation and test.
- Can we avoid candidate generation?
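The candidate count above is easy to check numerically. The short Python snippet below only verifies the arithmetic of the binomial sum; nothing in it is specific to any mining library.

```python
import math

# Number of non-empty subsets of a 100-item pattern, summed by subset size.
total = sum(math.comb(100, k) for k in range(1, 101))

assert total == 2**100 - 1
print(f"{total:.3e}")  # 1.268e+30
```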

Mining Frequent Patterns Without Candidate Generation

- FP-Growth algorithm
  1. Build the FP-tree: items are listed by decreasing frequency.
  2. For each suffix (recursively):
     - build its conditionalized subtree
     - and compute its frequent items.
- An order of magnitude faster than Apriori.

Frequent Patterns (FP) Algorithm

The algorithm consists of two steps:

- Step 1: build the FP-Tree (Frequent Patterns Tree).
- Step 2: use the FP-Growth algorithm to find the frequent itemsets from the FP-Tree.

These slides are based on those by Yousry Taha, Taghrid Al-Shallali, Ghada AL Modaifer and Nesreen AL Boiez.

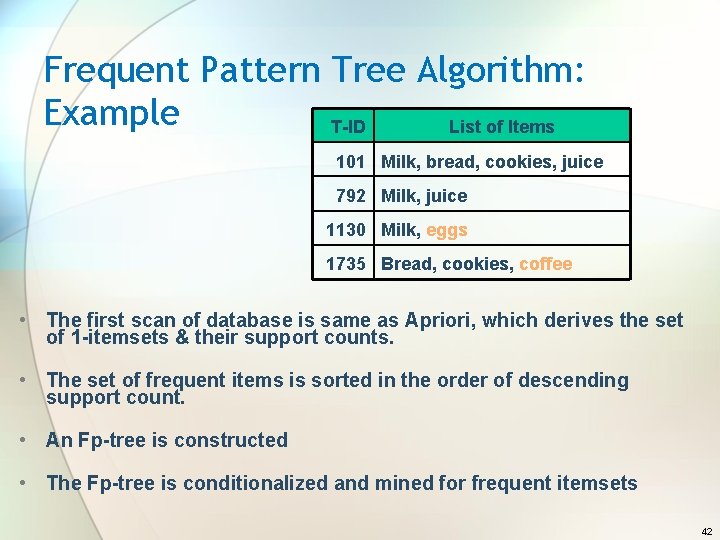

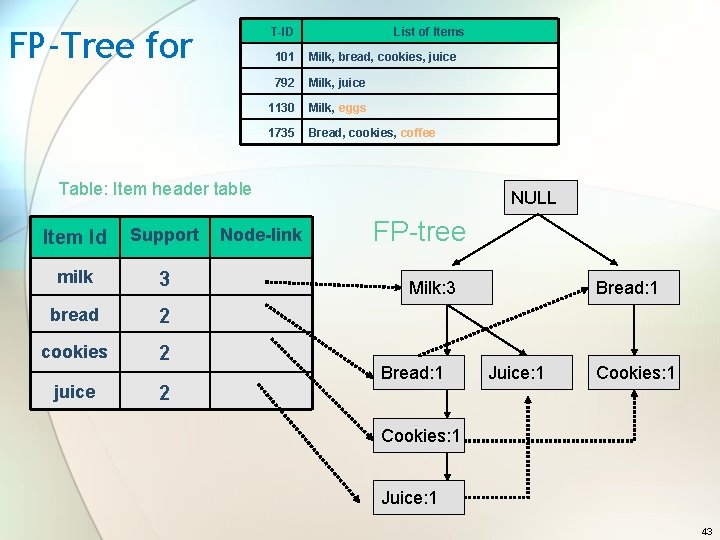

Frequent Pattern Tree Algorithm: Example

| T-ID | List of Items |
|---|---|
| 101 | Milk, bread, cookies, juice |
| 792 | Milk, juice |
| 1130 | Milk, eggs |
| 1735 | Bread, cookies, coffee |

- The first scan of the database is the same as in Apriori: it derives the set of 1-itemsets and their support counts.
- The set of frequent items is sorted in order of descending support count.
- An FP-tree is constructed.
- The FP-tree is conditionalized and mined for frequent itemsets.

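FP-Tree for the example database (T-IDs 101, 792, 1130 and 1735)

Item header table (each entry also carries a node-link into the tree):

| Item Id | Support |
|---|---|
| milk | 3 |
| bread | 2 |
| cookies | 2 |
| juice | 2 |

(Figure: the FP-tree rooted at NULL, built by inserting the transactions one at a time, so the count of the Milk node grows from 1 to 3. Its paths are NULL → Milk: 3 → Bread: 1 → Cookies: 1 → Juice: 1, Milk: 3 → Juice: 1, and NULL → Bread: 1 → Cookies: 1.)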

FP-Growth Algorithm For Finding Frequent Itemsets

Steps:

1. Start from each frequent length-1 pattern (as an initial suffix pattern).
2. Construct its conditional pattern base, which consists of the set of prefix paths in the FP-Tree co-occurring with the suffix pattern.
3. Then construct its conditional FP-Tree and perform mining on that tree.
4. The pattern growth is achieved by concatenating the suffix pattern with the frequent patterns generated from a conditional FP-Tree.
5. The union of all frequent patterns (generated by step 4) gives the required frequent itemsets.

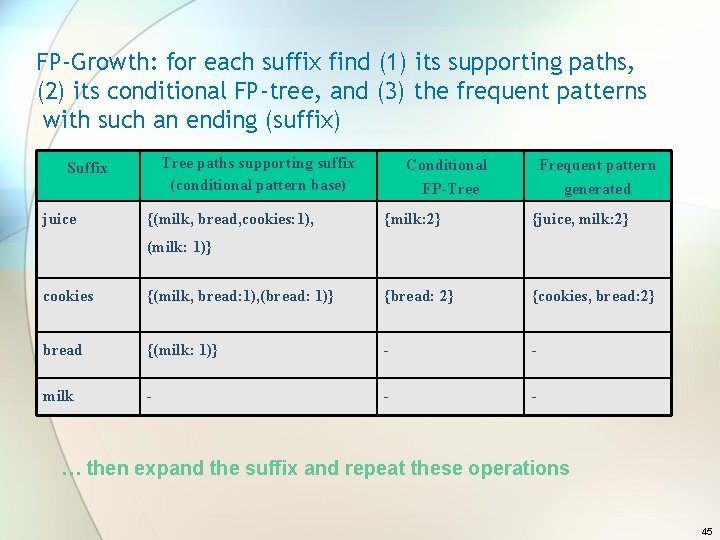

FP-Growth: for each suffix find (1) its supporting paths, (2) its conditional FP-tree, and (3) the frequent patterns with such an ending (suffix)

| Suffix | Tree paths supporting suffix (conditional pattern base) | Conditional FP-Tree | Frequent pattern generated |
|---|---|---|---|
| juice | {(milk, bread, cookies: 1), (milk: 1)} | {milk: 2} | {juice, milk: 2} |
| cookies | {(milk, bread: 1), (bread: 1)} | {bread: 2} | {cookies, bread: 2} |
| bread | {(milk: 1)} | - | - |
| milk | - | - | - |

… then expand the suffix and repeat these operations.
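The tree construction and the suffix-by-suffix mining summarized in the table can be sketched compactly in Python. This is a minimal sketch, not a tuned implementation: the names `FPNode`, `build_tree` and `fp_growth` are assumptions, node-links are represented by a plain list per item, ties in support are broken alphabetically, and the minimum support of 2 is inferred from the header table rather than stated in the slides.

```python
from collections import defaultdict


class FPNode:
    def __init__(self, item, parent):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}


def build_tree(weighted_transactions, min_count):
    """weighted_transactions: list of (items, count) pairs."""
    support = defaultdict(int)
    for items, count in weighted_transactions:
        for item in set(items):
            support[item] += count
    frequent = {i: s for i, s in support.items() if s >= min_count}

    root = FPNode(None, None)
    header = defaultdict(list)  # item -> its nodes (stands in for node-links)
    for items, count in weighted_transactions:
        # Keep frequent items only, most frequent first (ties by name).
        ordered = sorted((i for i in set(items) if i in frequent),
                         key=lambda i: (-frequent[i], i))
        node = root
        for item in ordered:
            child = node.children.get(item)
            if child is None:
                child = FPNode(item, node)
                node.children[item] = child
                header[item].append(child)
            child.count += count
            node = child
    return root, header, frequent


def fp_growth(weighted_transactions, min_count, suffix=()):
    """Return {frozenset(pattern): support count}."""
    _, header, frequent = build_tree(weighted_transactions, min_count)
    patterns = {}
    for item, item_support in frequent.items():
        pattern = (item,) + suffix
        patterns[frozenset(pattern)] = item_support
        # Conditional pattern base: the prefix path of every node of `item`.
        base = []
        for node in header[item]:
            path, parent = [], node.parent
            while parent is not None and parent.item is not None:
                path.append(parent.item)
                parent = parent.parent
            if path:
                base.append((path, node.count))
        # Mine the conditional FP-tree built from that base.
        patterns.update(fp_growth(base, min_count, pattern))
    return patterns


db = [
    ["milk", "bread", "cookies", "juice"],
    ["milk", "juice"],
    ["milk", "eggs"],
    ["bread", "cookies", "coffee"],
]
patterns = fp_growth([(t, 1) for t in db], min_count=2)
```

On the example database this yields the four frequent items plus {juice, milk} and {cookies, bread}, each with support 2, in agreement with the table.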

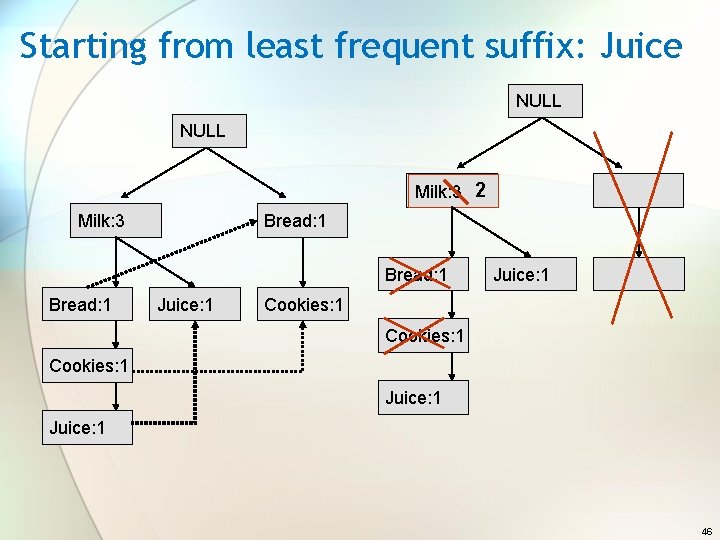

Starting from the least frequent suffix: Juice

(Figure: the FP-tree, from which the paths ending in a Juice: 1 node are extracted.)

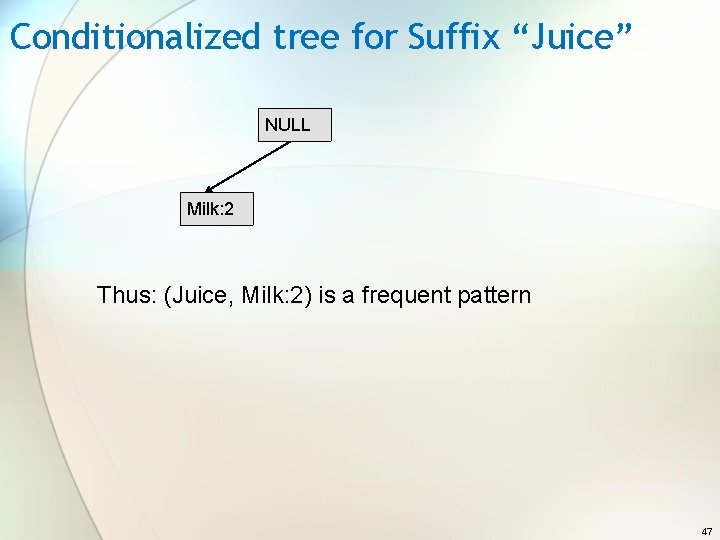

Conditionalized tree for suffix “Juice”

(Figure: NULL → Milk: 2.)

Thus (Juice, Milk: 2) is a frequent pattern.

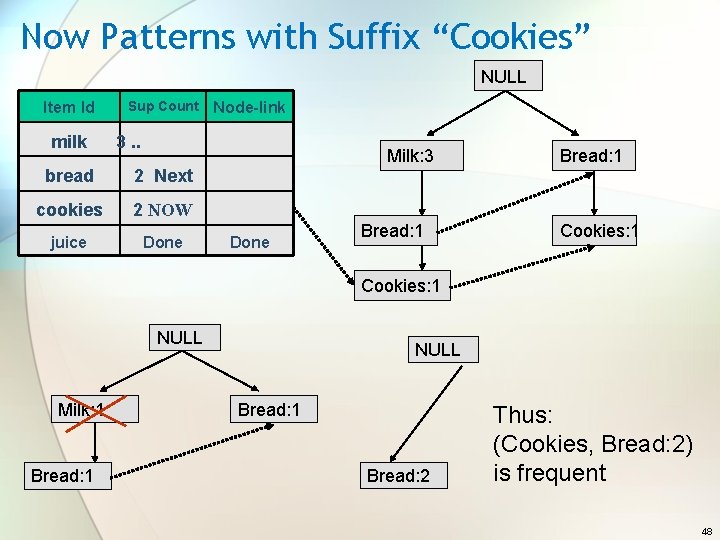

Now patterns with suffix “Cookies”

(Figure: the header table with juice marked as done, cookies as the current suffix and bread as next; the prefix paths of cookies, Milk: 1 → Bread: 1 and Bread: 1, give the conditional tree NULL → Bread: 2.)

Thus (Cookies, Bread: 2) is frequent.

Why Is Frequent Pattern Growth Fast?

- Performance studies show that FP-growth is an order of magnitude faster than Apriori.
- Reasoning:
  - No candidate generation, no candidate test
  - Use of a compact data structure
  - Elimination of repeated database scans
  - The basic operations are counting and FP-tree building

Other types of Association Rules

- Association rules among hierarchies
- Multidimensional associations
- Negative associations

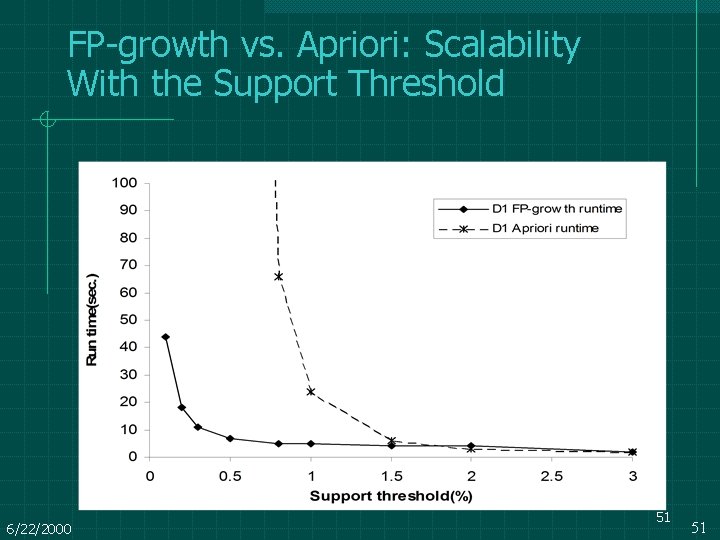

FP-growth vs. Apriori: Scalability With the Support Threshold. Data set T25I20D10K (6/22/2000).

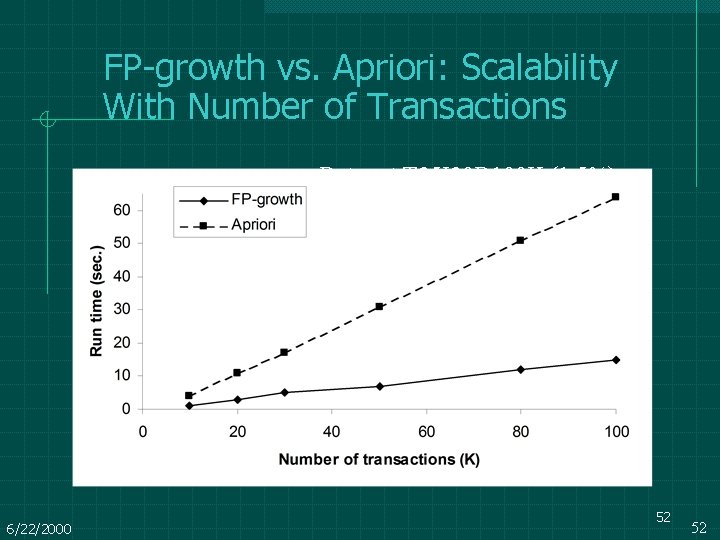

FP-growth vs. Apriori: Scalability With Number of Transactions. Data set T25I20D100K (1.5%).

FP-Growth: pros and cons

- The FP-tree is complete:
  - It preserves complete information for frequent pattern mining.
  - It never breaks a long pattern of any transaction.
- The FP-tree is compact:
  - It reduces irrelevant information: infrequent items are gone.
  - Items are in frequency-descending order: the more frequently occurring, the more likely to be shared.
  - It is never larger than the original database (not counting node-links and the count field).
- The FP-tree is generated in one scan of the database, once the item supports are known from the first scan (data stream mining?).
  - However, deriving the frequent patterns from the FP-tree is still computationally expensive: improved algorithms are needed for data streams.
