
Frequency Band-Importance Functions for Auditory and Auditory-Visual Speech Recognition

Ken W. Grant
Walter Reed Army Medical Center
Washington, D.C. 20307-5001

Background
• Speech recognition involves broadband listening.
• Information is not uniformly distributed across the frequency spectrum.
  – different cues (spectral and temporal) of different relative value reside at different frequencies.
  – in general, more importance is placed on the mid frequencies, around 1500-3000 Hz.
  – this is probably related to place-of-articulation cues (F2/F3 transitions).

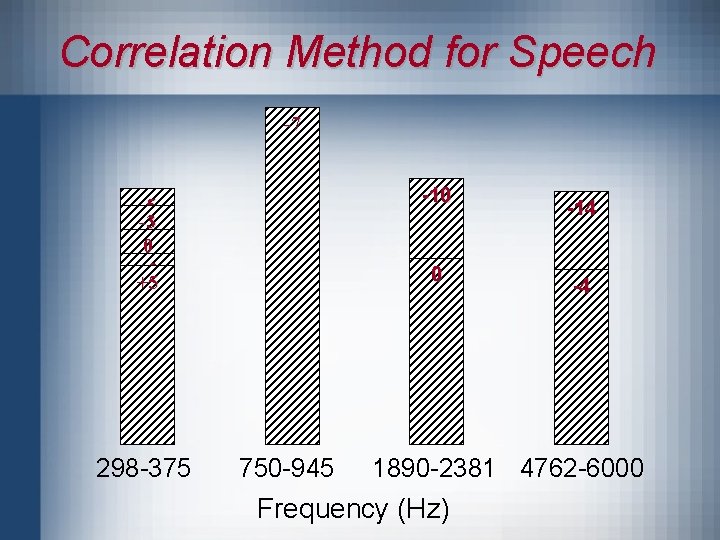

Background (continued)
• How can we determine the relative importance or “weights” that listeners place on various frequency regions?
• Doherty and Turner, 1996; Turner et al., 1998
  – correlational procedure (Lutfi, 1995; Richards and Zhu, 1994) applied to speech recognition.
  – partition speech into a number of spectral bands.
  – perturb each band so that the amount of information in each band can be correlated with a listener’s performance.
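The correlational procedure can be sketched roughly as follows. This is a minimal illustration, not the procedure as implemented in the cited studies: the simulated listener, the perturbation steps, the decision noise, and the choice to normalize by the sum of absolute correlations are all assumptions made for the example. The weight for each band is taken as the Pearson correlation between that band's trial-by-trial perturbation and the correct/incorrect response (a point-biserial correlation), scaled so that the weights sum to 1.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation; with a 0/1 variable this is the point-biserial r."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def band_weights(levels, correct):
    """Correlate each band's per-trial perturbation with the response.

    levels:  list of trials, each a list with one perturbation value per band
    correct: list of 0/1 responses, one per trial
    Returns correlations scaled by the sum of their absolute values
    (an assumed normalization).
    """
    n_bands = len(levels[0])
    r = [pearson([trial[b] for trial in levels], correct) for b in range(n_bands)]
    total = sum(abs(v) for v in r)
    return [v / total for v in r]


def simulate(n_trials=4000, true_w=(0.1, 0.5, 0.2, 0.2), seed=0):
    """Hypothetical listener: a noisy weighted sum of band perturbations."""
    rng = random.Random(seed)
    steps = [-6.0, -3.0, 0.0, 3.0, 6.0]  # assumed perturbation steps
    levels, correct = [], []
    for _ in range(n_trials):
        trial = [rng.choice(steps) for _ in true_w]
        decision = sum(w * s for w, s in zip(true_w, trial)) + rng.gauss(0.0, 2.0)
        levels.append(trial)
        correct.append(1 if decision > 0 else 0)
    return levels, correct


levels, correct = simulate()
weights = band_weights(levels, correct)
print([round(w, 2) for w in weights])
```

In this simulation the band given the largest true weight (band 2) should also receive the largest estimated weight, which is the property the method relies on.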

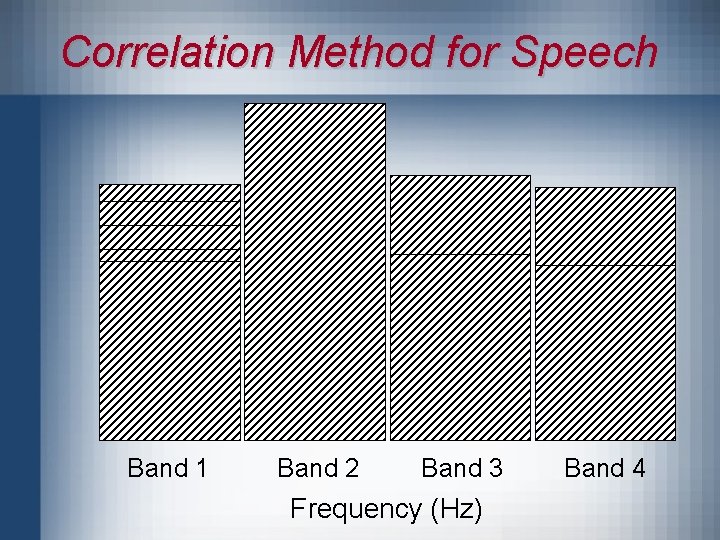

Correlation Method for Speech

[Figure: speech spectrum partitioned into Band 1 through Band 4 along a frequency (Hz) axis.]

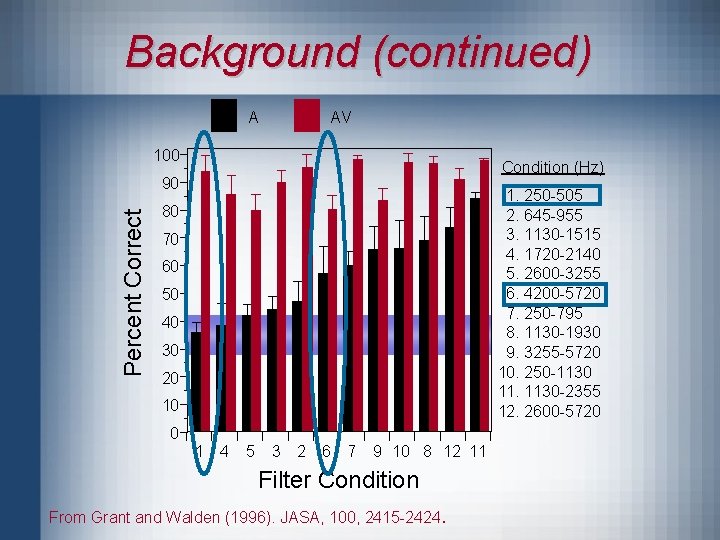

Background (continued)
• Is the relative importance of different frequency regions altered by the presence of visual speech cues?
• Past results using isolated spectral bands of speech show that low-frequency speech provides more benefit to speechreading than other spectral regions (Grant and Walden, 1996).

Background (continued)

[Figure: percent correct (0-100) for auditory (A) and auditory-visual (AV) presentation as a function of filter condition. Conditions appear along the axis in the order 1, 4, 5, 3, 2, 6, 7, 9, 10, 8, 12, 11.]

Filter conditions (Hz):
1. 250-505
2. 645-955
3. 1130-1515
4. 1720-2140
5. 2600-3255
6. 4200-5720
7. 250-795
8. 1130-1930
9. 3255-5720
10. 250-1130
11. 1130-2355
12. 2600-5720

From Grant and Walden (1996). JASA, 100, 2415-2424.

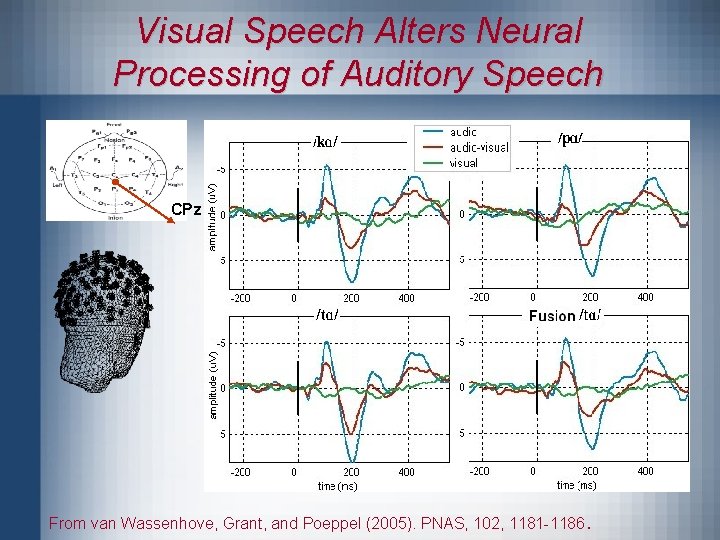

Background (continued)
• Evidence from electrophysiological studies shows that visual speech cues fundamentally alter the way the auditory cortex responds to sound input (Calvert, 1977; van Wassenhove et al., 2005).
  – reduction in N1-P2 amplitude.
  – latency shift in N2 peak for highly visible consonants.

Visual Speech Alters Neural Processing of Auditory Speech

[Figure: responses recorded at electrode CPz.]

From van Wassenhove, Grant, and Poeppel (2005). PNAS, 102, 1181-1186.

Goals
• Determine the relative importance of different frequency regions for auditory and auditory-visual speech.
• Minimize band-on-band interactions by partitioning the speech signal into widely spaced narrow bands.

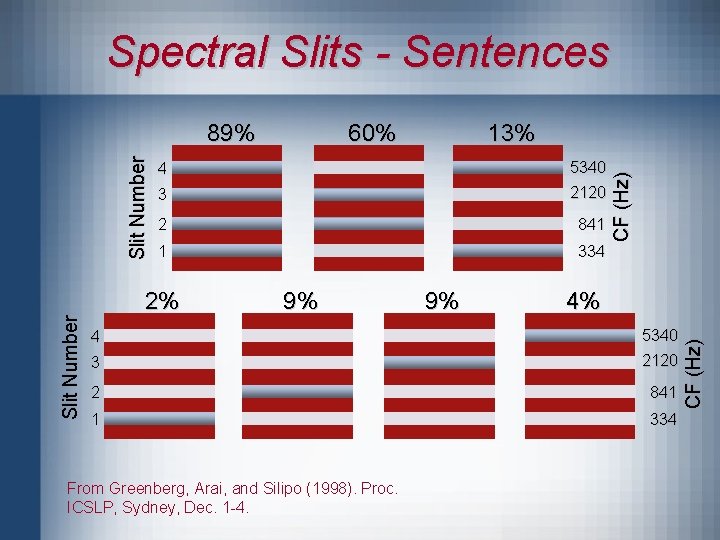

Spectral Slits - Sentences

[Figure: sentence intelligibility for spectral slits 1-4 (CF 334, 841, 2120, and 5340 Hz), presented alone and in combination. Scores shown: 13%, 2%, 9%, 9%, 60%, 4%, 89%.]

From Greenberg, Arai, and Silipo (1998). Proc. ICSLP, Sydney, Dec. 1-4.

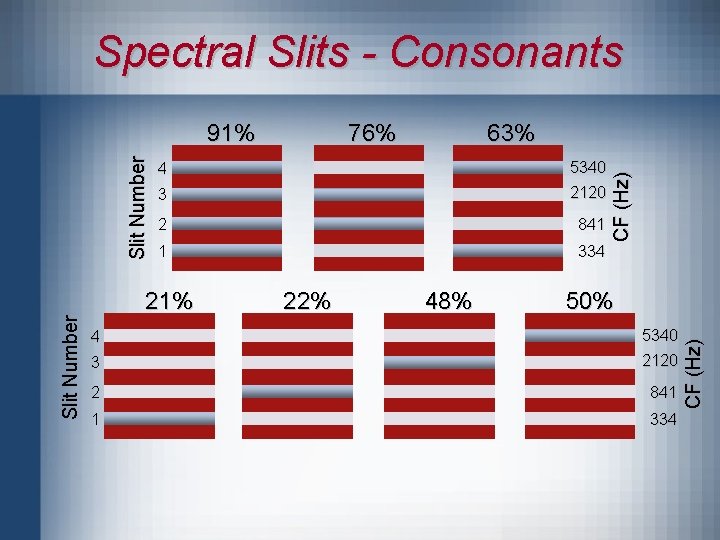

Spectral Slits - Consonants

[Figure: consonant recognition for spectral slits 1-4 (CF 334, 841, 2120, and 5340 Hz), presented alone and in combination. Scores shown: 63%, 21%, 22%, 48%, 76%, 50%, 91%.]

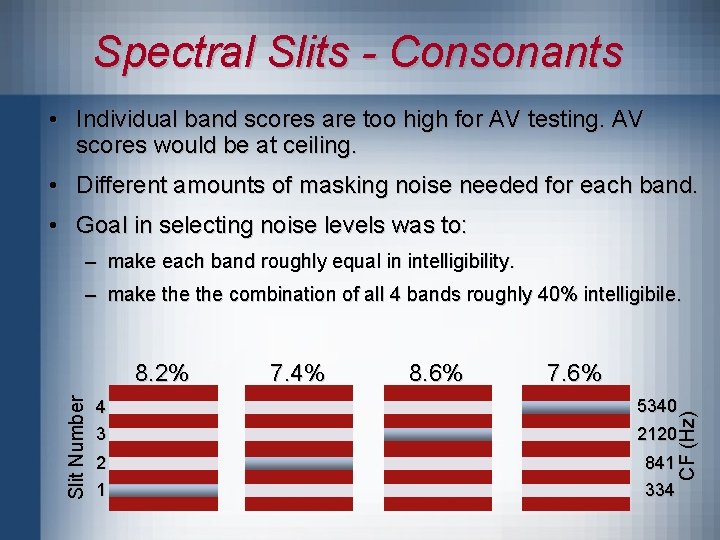

Spectral Slits - Consonants
• Individual band scores are too high for AV testing; AV scores would be at ceiling.
• Different amounts of masking noise are needed for each band.
• The goal in selecting noise levels was to:
  – make each band roughly equal in intelligibility.
  – make the combination of all 4 bands roughly 40% intelligible.

[Figure: values shown for slits 1-4 (CF 334, 841, 2120, and 5340 Hz): 7.4%, 8.6%, 7.6%, 8.2%.]

Correlation Method for Speech

[Figure: four speech bands (298-375, 750-945, 1890-2381, and 4762-6000 Hz) along a frequency (Hz) axis, each labeled with a set of per-band values ranging from -14 to +5.]
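As a quick arithmetic check, the four band edges listed on this slide appear to be about one-third octave wide, with geometric centers close to the slit CFs given earlier (334, 841, 2120, and 5340 Hz). The slides do not state this explicitly; it is an inference from the numbers, and the helper names below are illustrative only.

```python
import math

# Band edges (Hz) as listed on the slide, paired with the nominal slit CFs (Hz).
BANDS = [(298, 375), (750, 945), (1890, 2381), (4762, 6000)]
NOMINAL_CF = [334, 841, 2120, 5340]


def geometric_center(lo, hi):
    """Geometric mean of the two band edges, in Hz."""
    return math.sqrt(lo * hi)


def width_in_octaves(lo, hi):
    """Bandwidth expressed in octaves (log base 2 of the edge ratio)."""
    return math.log2(hi / lo)


for (lo, hi), cf in zip(BANDS, NOMINAL_CF):
    print(
        f"{lo}-{hi} Hz: center {geometric_center(lo, hi):.0f} Hz "
        f"(nominal {cf} Hz), width {width_in_octaves(lo, hi):.3f} octave"
    )
```

Adjacent bands are separated by roughly an octave of unused spectrum, which fits the stated goal of widely spaced narrow bands.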

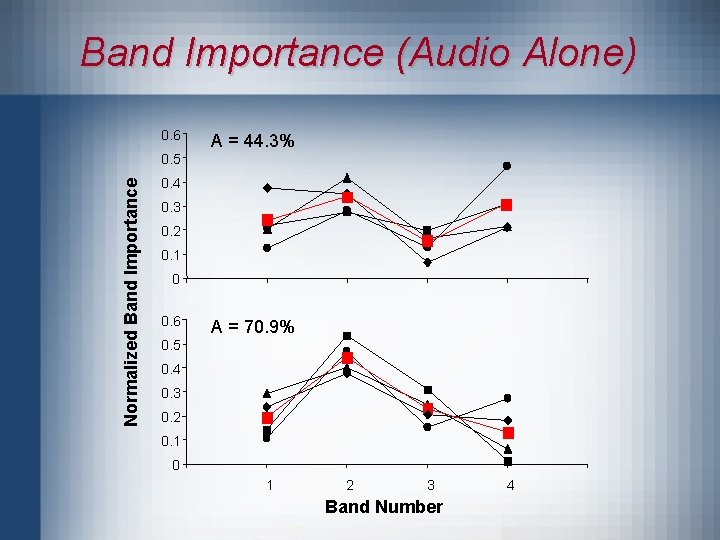

Band Importance (Audio Alone)

[Figure: two panels showing normalized band importance (0 to 0.6) versus band number (1-4), one for A = 44.3% and one for A = 70.9%.]

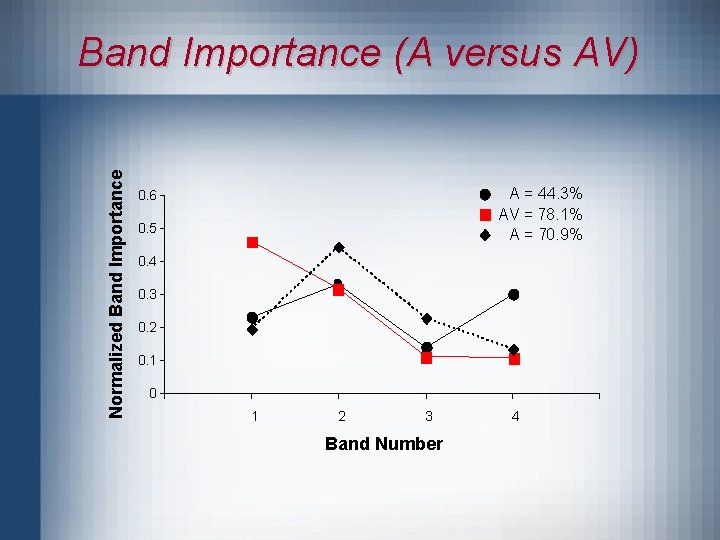

Normalized Band Importance (A versus AV)

[Figure: normalized band importance (0 to 0.6) versus band number (1-4) for A = 44.3%, AV = 78.1%, and A = 70.9%.]

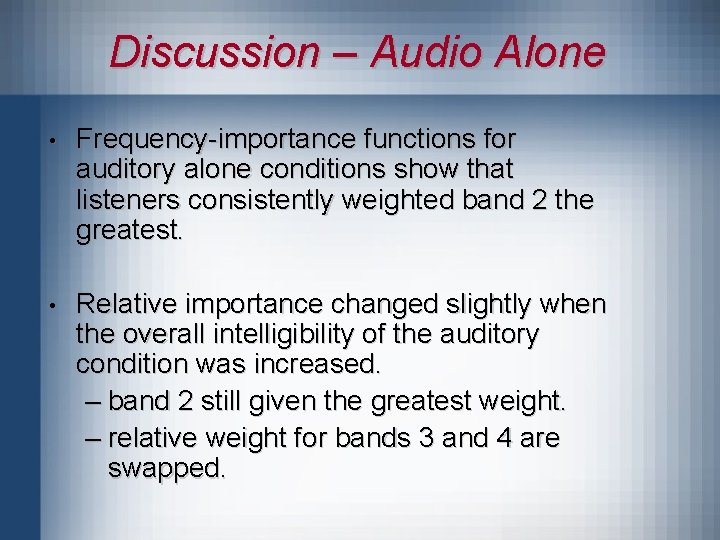

Discussion – Audio Alone
• Frequency-importance functions for auditory-alone conditions show that listeners consistently gave band 2 the greatest weight.
• Relative importance changed slightly when the overall intelligibility of the auditory condition was increased.
  – band 2 was still given the greatest weight.
  – the relative weights for bands 3 and 4 were swapped.

Discussion – Audiovisual
• When visual speech cues are present, listeners place more importance on low frequencies.
• Results are consistent with past studies using isolated spectral bands of speech.
  – low-frequency speech provides cues for voicing, which are highly complementary with speechreading.
  – mid-to-high-frequency speech provides cues for place of articulation, which are highly redundant with speechreading.

Conclusions - Questions
• For robust speech recognition, information must be extracted from many different spectral regions.
• The presence or absence of visual speech cues alters the importance of different spectral regions for the listener.
• For listening conditions where low-frequency speech cues are compromised (noise, reverberation, hearing loss), enhancement of the low frequencies of speech may be advantageous, especially in situations where visual cues are available.
