FRD: A Filtering based Buffer Cache Algorithm that Considers both Frequency and Reuse Distance 33rd International Conference on Massive Storage Systems and Technology (MSST 2017)

OUTLINE • INTRODUCTION • BACKGROUND & MOTIVATION • PROPOSED FRD ALGORITHM • EVALUATION • CONCLUSION

INTRODUCTION(1/3) • Buffer cache algorithms play a major role in building the memory hierarchy of a storage system. • The buffer cache is one of the key factors for improving performance. • Because of its simplicity and low overhead, the least-recently-used (LRU) algorithm is one of the most commonly used buffer cache algorithms.

INTRODUCTION(2/3) • However, the LRU algorithm performs poorly for the following workloads because of their access characteristics. • Scanning workload. • → Even when meaningful blocks are present in the cache, the scanning workload evicts them. • Cyclic access (loop-like) workload in which the loop length is greater than the cache size. • → Example request sequence: 1-2-3-4-1-2-3-4
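
The cyclic case can be checked with a small simulation. The following is a minimal sketch of a plain LRU cache, not code from the presentation; the function name `lru_hits` and the cache sizes are assumptions chosen for illustration.

```python
from collections import OrderedDict

def lru_hits(requests, cache_size):
    """Count hits for an LRU cache of `cache_size` blocks (hypothetical helper)."""
    cache = OrderedDict()  # keys ordered from LRU (first) to MRU (last)
    hits = 0
    for block in requests:
        if block in cache:
            hits += 1
            cache.move_to_end(block)       # refresh recency on a hit
        else:
            if len(cache) >= cache_size:
                cache.popitem(last=False)  # evict the least recently used block
            cache[block] = True
    return hits

loop = [1, 2, 3, 4] * 5       # the 1-2-3-4 loop repeated five times
print(lru_hits(loop, 3))      # loop longer than the cache: prints 0
print(lru_hits(loop, 4))      # loop fits in the cache: prints 16
```

With a cache one block smaller than the loop, every request evicts exactly the block that will be needed next, so LRU never hits.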

INTRODUCTION(3/3) • We propose a buffer cache algorithm, called frequency and reuse distance (FRD), that considers both frequency and reuse distance. • Through careful analysis of various real-world workloads, we find that infrequently accessed blocks are dominant in most cases and that such blocks are the main source of cache pollution. • We concentrate on how to identify these infrequently accessed blocks and exclude them from the cache.

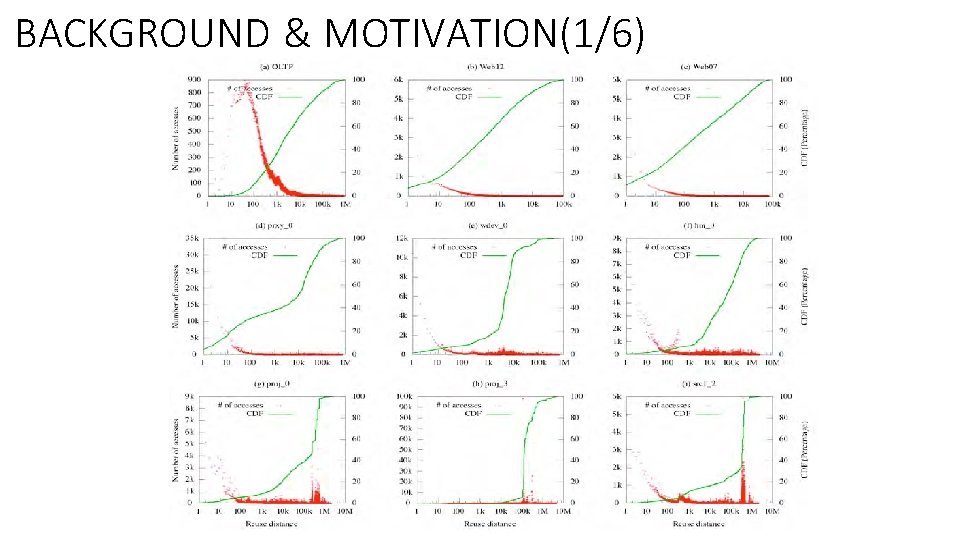

BACKGROUND & MOTIVATION(1/6)

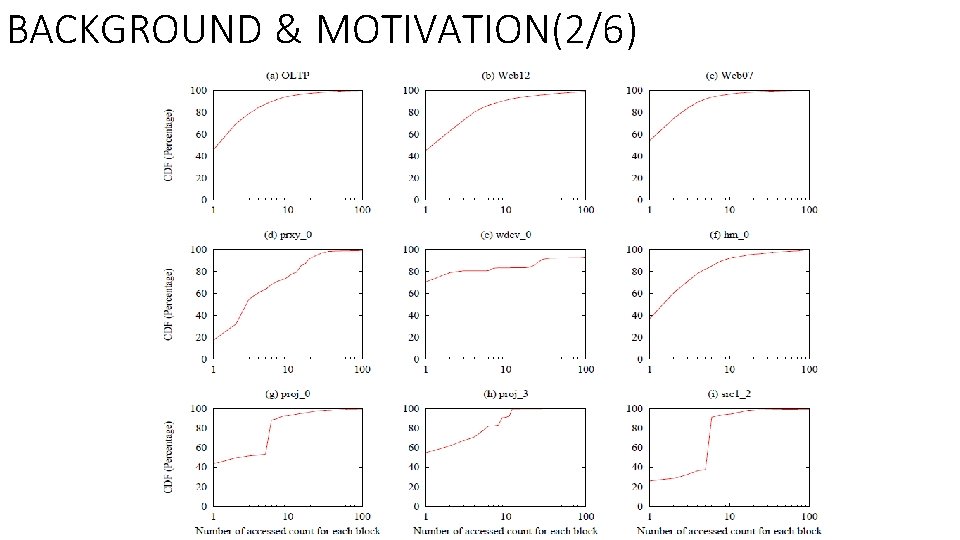

BACKGROUND & MOTIVATION(2/6)

BACKGROUND & MOTIVATION(3/6) • Observation #1. Approximately 50 to 90% of blocks are infrequently accessed in a real-world workload. • Most blocks are infrequently accessed and only a few blocks are frequently accessed. • If a cache algorithm behaves in an LRU manner, frequently accessed blocks may easily be evicted from the cache by infrequently accessed blocks. • Therefore, infrequently accessed blocks should be evicted before frequently accessed blocks, and frequently accessed blocks should be retained for a long time to yield more cache hits.
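
As a rough illustration of how Observation #1 could be measured on a block trace, the sketch below counts accesses per block and reports the share of blocks at or below a threshold. The threshold `max_accesses` and the toy trace are assumptions for illustration; the slides do not state the cutoff used to call a block "infrequently accessed".

```python
from collections import Counter

def infrequent_fraction(trace, max_accesses=2):
    """Share of distinct blocks accessed at most `max_accesses` times.

    `max_accesses` is a hypothetical cutoff, not a value from the slides.
    """
    counts = Counter(trace)
    if not counts:
        return 0.0
    infrequent = sum(1 for c in counts.values() if c <= max_accesses)
    return infrequent / len(counts)

# Toy trace (made up): block 1 is hot, the remaining blocks are rarely touched.
trace = [1, 2, 1, 3, 1, 4, 5, 1, 6, 2, 1]
print(f"{infrequent_fraction(trace):.2f}")  # 5 of 6 distinct blocks: prints 0.83
```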

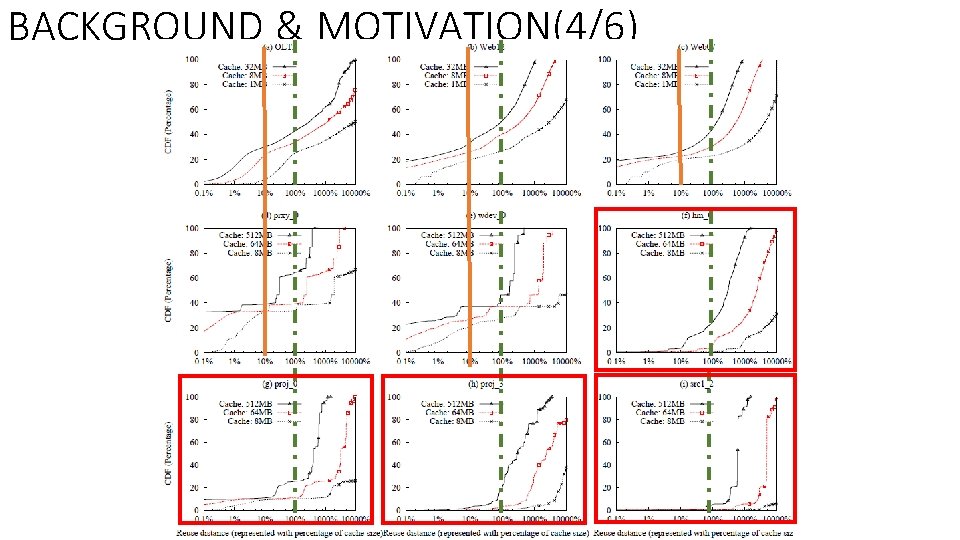

BACKGROUND & MOTIVATION(4/6)

BACKGROUND & MOTIVATION(5/6) • Observation #2. Reuse distance for infrequently accessed blocks is either very short or very long. • Relative to the cache size, most reuse distances are either less than 10% or greater than 100% of the cache size.
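
To make the quantity concrete, the sketch below computes reuse distances for a trace, assuming the common definition: the number of distinct other blocks accessed between two consecutive accesses to the same block. The slides do not spell out the definition, so this is an assumption, and the helper names `reuse_distances` and `bucket` are hypothetical. The 10% and 100% boundaries are the ones quoted in Observation #2.

```python
def reuse_distances(trace):
    """Reuse distance per access; None marks the first access to a block."""
    stack = []  # most recently used block first
    distances = []
    for block in trace:
        if block in stack:
            depth = stack.index(block)  # distinct blocks touched since last access
            stack.pop(depth)
            distances.append(depth)
        else:
            distances.append(None)
        stack.insert(0, block)
    return distances

def bucket(distance, cache_size):
    """Place a reuse distance relative to the cache size."""
    if distance < 0.1 * cache_size:
        return "short"
    if distance > cache_size:
        return "long"
    return "middle"

print(reuse_distances([1, 2, 3, 1, 2, 2]))  # [None, None, None, 2, 2, 0]
print(bucket(5, 100), bucket(150, 100))     # short long
```

The list-based stack keeps the example short; it costs time linear in the number of distinct blocks per access, so a real trace analysis would want a faster structure.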

BACKGROUND & MOTIVATION(6/6) • Type 1 blocks (infrequently accessed with a long reuse distance) generate pure cache pollution, and they cannot produce cache hits either. • Type 2 blocks (infrequently accessed with a short reuse distance) might produce a cache hit because of their short reuse distance. • However, after the cache hit, they still produce cache pollution because they are infrequently accessed.

PROPOSED FRD ALGORITHM(1/11) • We propose a buffer cache algorithm, called FRD, that considers both access frequency and reuse distance. • FRD filters out cache-polluting blocks using a filter stack and maintains meaningful blocks using a reuse distance stack.

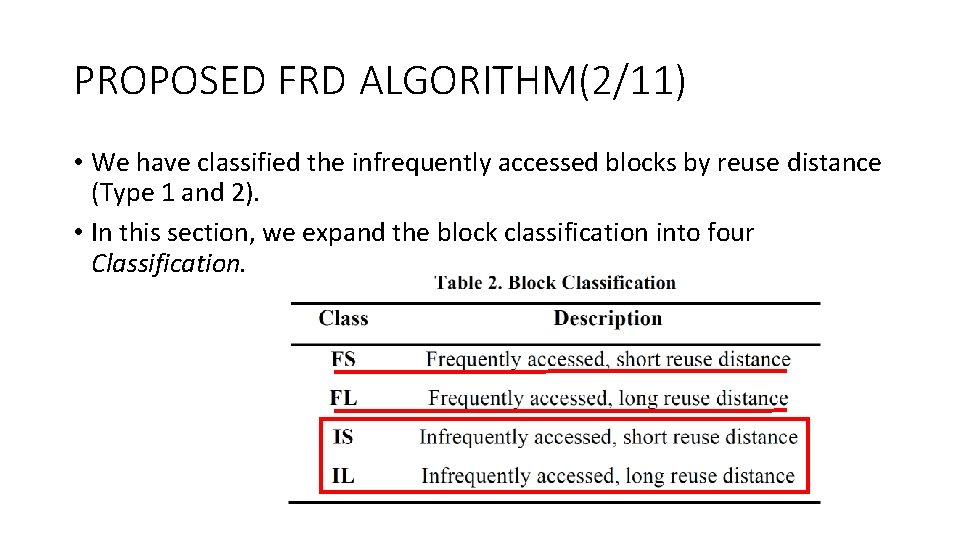

PROPOSED FRD ALGORITHM(2/11) • So far, we have classified the infrequently accessed blocks by reuse distance (Type 1 and Type 2). • In this section, we expand the block classification into four classes.
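
A minimal sketch of such a four-way classification follows. It assumes the class names used later in the slides (IS, IL, FS, FL) read as Infrequent or Frequent combined with Short or Long reuse distance. The function `classify` and both thresholds are hypothetical; the slides do not give the boundaries.

```python
def classify(access_count, reuse_distance, cache_size, freq_threshold=2):
    """Return 'IS', 'IL', 'FS', or 'FL' for one block.

    Assumptions (not from the slides): a block is frequent if it is accessed
    more than `freq_threshold` times, and its reuse distance is short if it
    is smaller than the cache size.
    """
    freq = "F" if access_count > freq_threshold else "I"
    dist = "S" if reuse_distance < cache_size else "L"
    return freq + dist

print(classify(1, 5, cache_size=100))     # IS
print(classify(1, 500, cache_size=100))   # IL
print(classify(10, 5, cache_size=100))    # FS
print(classify(10, 500, cache_size=100))  # FL
```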

PROPOSED FRD ALGORITHM(3/11) • Cache pollution caused by Class IS or IL commonly occurs as a result of a scan-like workload in an LRU cache algorithm. • To avoid this cache pollution, we design our cache algorithm using two types of buffers: a temporal cache (filter stack) and an actual cache (reuse distance stack).

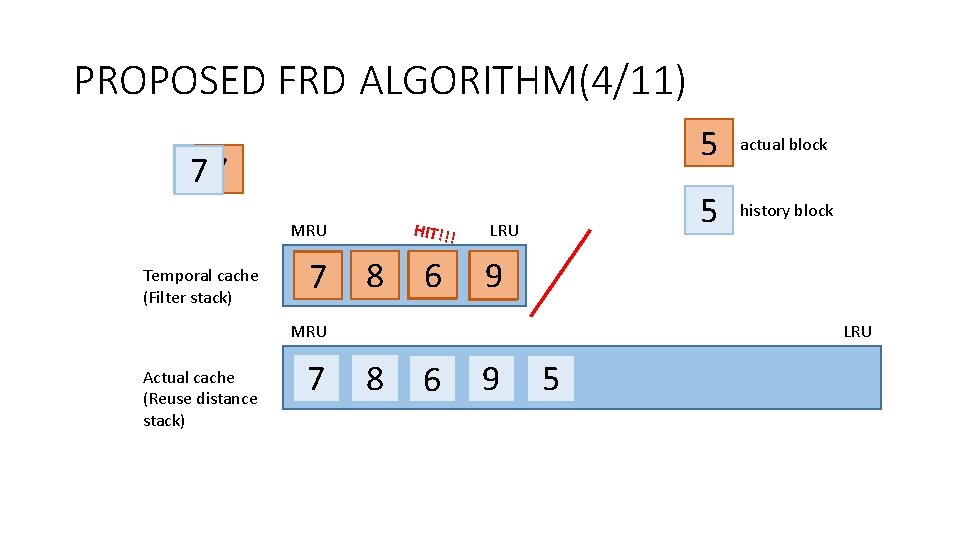

PROPOSED FRD ALGORITHM(4/11) • Figure: example block access against the temporal cache (filter stack) and the actual cache (reuse distance stack), each ordered from MRU to LRU and holding actual blocks and history blocks; the animation marks a hit.

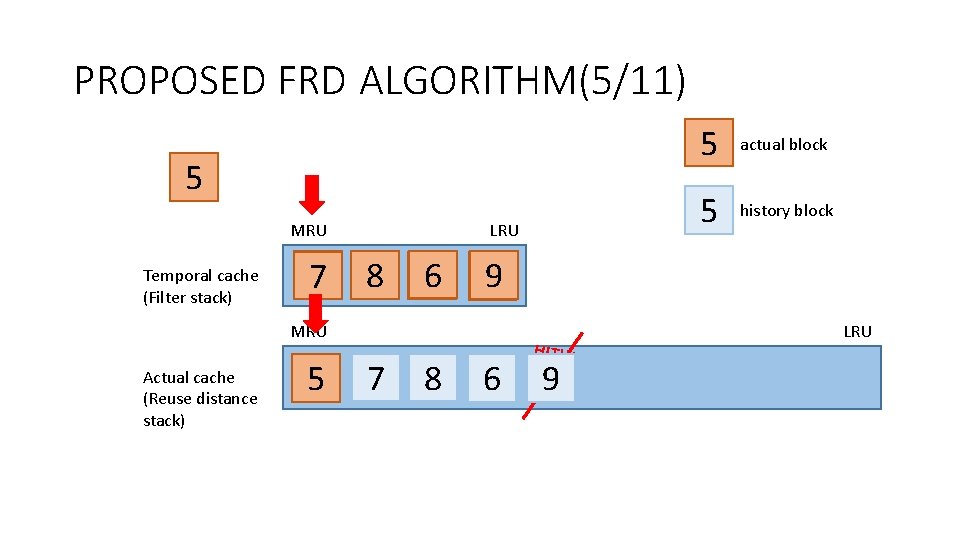

PROPOSED FRD ALGORITHM(5/11) • Figure: a further example block access against the temporal cache (filter stack) and the actual cache (reuse distance stack), each ordered from MRU to LRU and holding actual blocks and history blocks; the animation marks a hit.

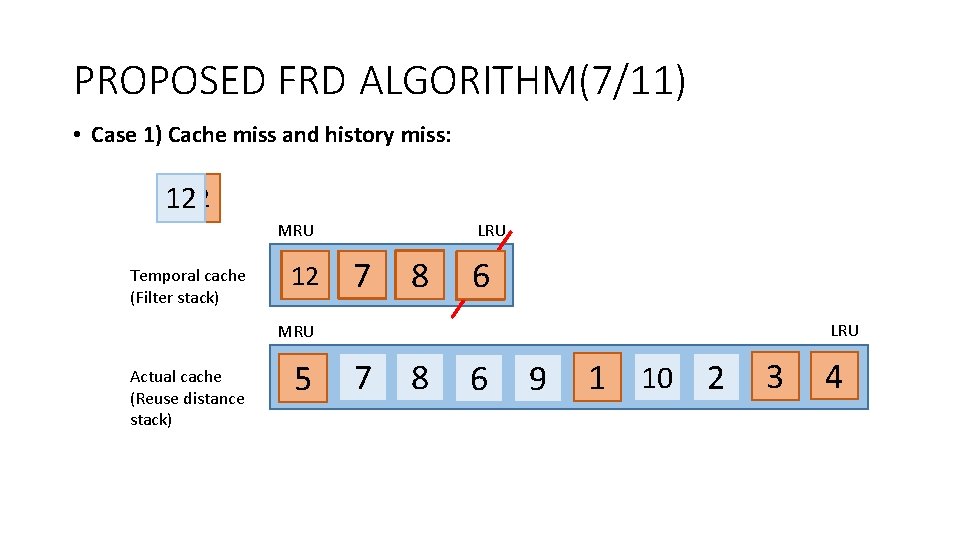

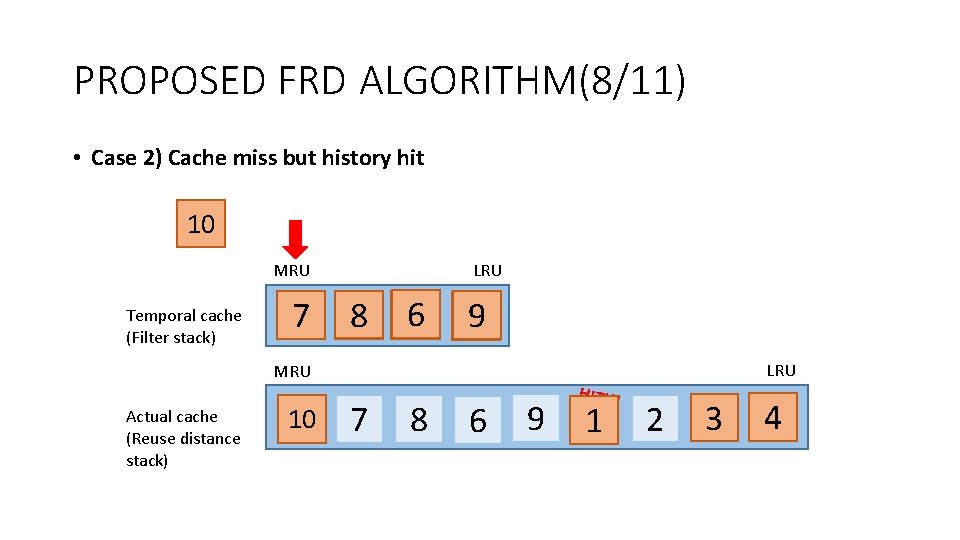

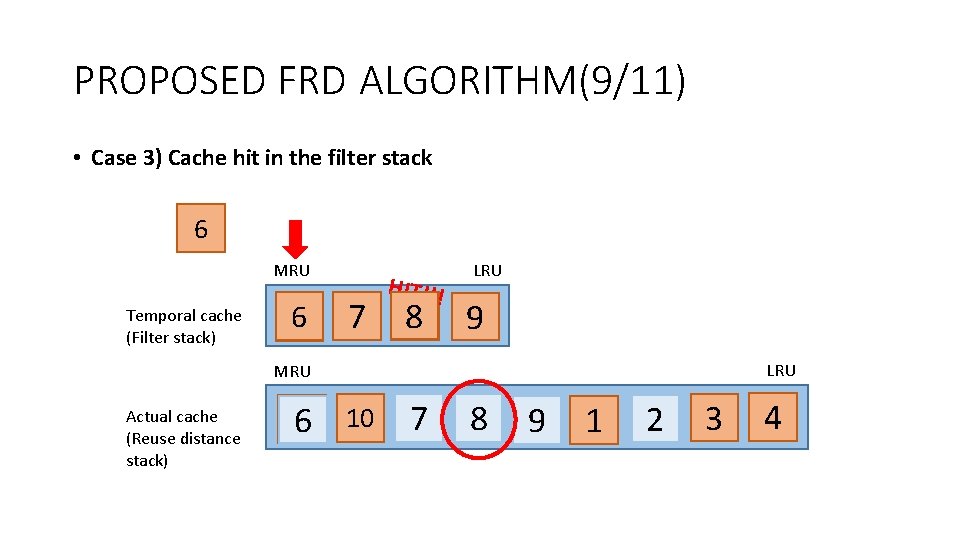

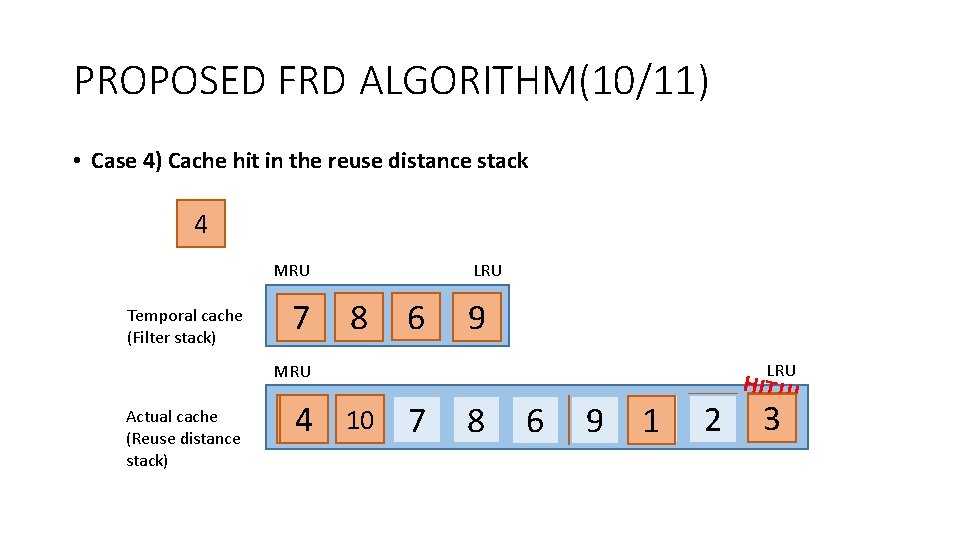

PROPOSED FRD ALGORITHM(6/11) • When the two stacks are full, a total of four cases are possible for a block request because of the history block accesses. • Case 1) Cache miss and history miss • Case 2) Cache miss but history hit • Case 3) Cache hit in the filter stack • Case 4) Cache hit in the reuse distance stack
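
The four cases can be sketched as a dispatch over two LRU-ordered stacks plus a list of history blocks. This is a simplified illustration, not the authors' exact procedure: the slides name the cases, but the per-case actions shown here (where a block is inserted, which block is evicted, keeping history in a separate bounded list) are assumptions, and the class name `TwoStackSketch` and its parameters are hypothetical.

```python
from collections import OrderedDict

class TwoStackSketch:
    """Simplified two-stack cache with history; NOT the exact FRD algorithm."""

    def __init__(self, filter_size, reuse_size, history_size):
        self.filter_size = filter_size
        self.reuse_size = reuse_size
        self.history_size = history_size
        # Each OrderedDict runs from LRU (first) to MRU (last).
        self.filter = OrderedDict()   # resident blocks, temporal cache
        self.reuse = OrderedDict()    # resident blocks, actual cache
        self.history = OrderedDict()  # block IDs only, no data (assumed layout)

    def access(self, block):
        if block in self.reuse:       # Case 4: hit in the reuse distance stack
            self.reuse.move_to_end(block)
            return "case 4"
        if block in self.filter:      # Case 3: hit in the filter stack
            self.filter.move_to_end(block)
            return "case 3"
        if block in self.history:     # Case 2: cache miss but history hit
            del self.history[block]
            self.reuse[block] = True  # assumed: promote into the actual cache
            if len(self.reuse) > self.reuse_size:
                self.reuse.popitem(last=False)
            return "case 2"
        # Case 1: cache miss and history miss
        self.filter[block] = True     # assumed: new blocks enter the filter stack
        if len(self.filter) > self.filter_size:
            victim, _ = self.filter.popitem(last=False)
            self.history[victim] = True          # keep only the block ID
            if len(self.history) > self.history_size:
                self.history.popitem(last=False)
        return "case 1"

cache = TwoStackSketch(filter_size=2, reuse_size=2, history_size=4)
print([cache.access(b) for b in [1, 2, 3, 1, 1, 3]])
# ['case 1', 'case 1', 'case 1', 'case 2', 'case 4', 'case 3']
```

In this sketch a block reaches the actual cache only after it has been filtered out once and then requested again, which is one way to keep single-use scan blocks from displacing blocks that have shown reuse.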

PROPOSED FRD ALGORITHM(7/11) • Case 1) Cache miss and history miss • Figure: state of the temporal cache (filter stack) and the actual cache (reuse distance stack), each ordered from MRU to LRU, for this case.

PROPOSED FRD ALGORITHM(8/11) • Case 2) Cache miss but history hit • Figure: state of the temporal cache (filter stack) and the actual cache (reuse distance stack), each ordered from MRU to LRU, for this case, with the hit marked.

PROPOSED FRD ALGORITHM(9/11) • Case 3) Cache hit in the filter stack • Figure: state of the temporal cache (filter stack) and the actual cache (reuse distance stack), each ordered from MRU to LRU, for this case, with the hit marked.

PROPOSED FRD ALGORITHM(10/11) • Case 4) Cache hit in the reuse distance stack • Figure: state of the temporal cache (filter stack) and the actual cache (reuse distance stack), each ordered from MRU to LRU, for this case, with the hit marked.

PROPOSED FRD ALGORITHM(11/11) • The filter stack has two purposes. • Identify noise blocks (e.g., Class IS or IL). • Identify blocks with a short reuse distance (e.g., Class IS or FS). • The reuse distance stack also has two purposes. • Maintain history blocks in reuse distance order. • Store frequently accessed blocks for later use (e.g., Class FL).

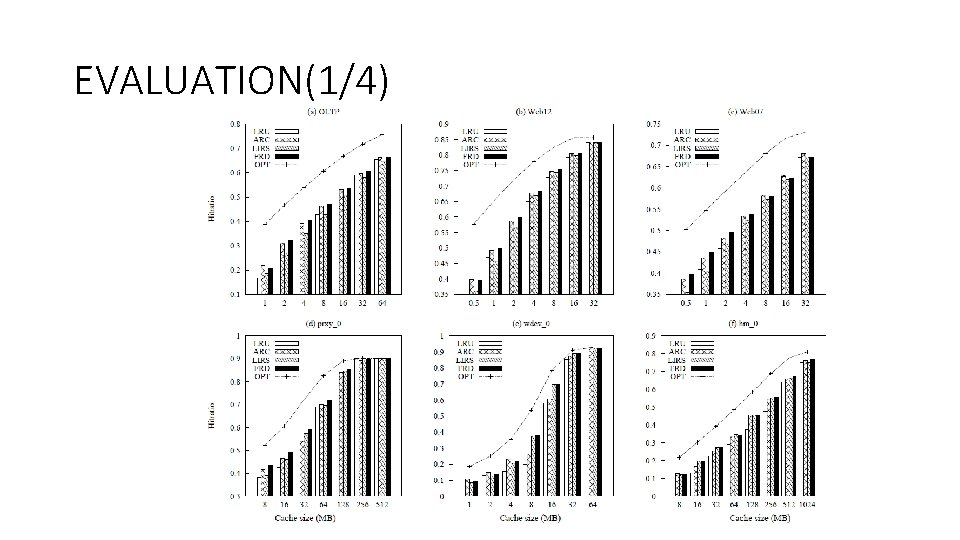

EVALUATION(1/4)

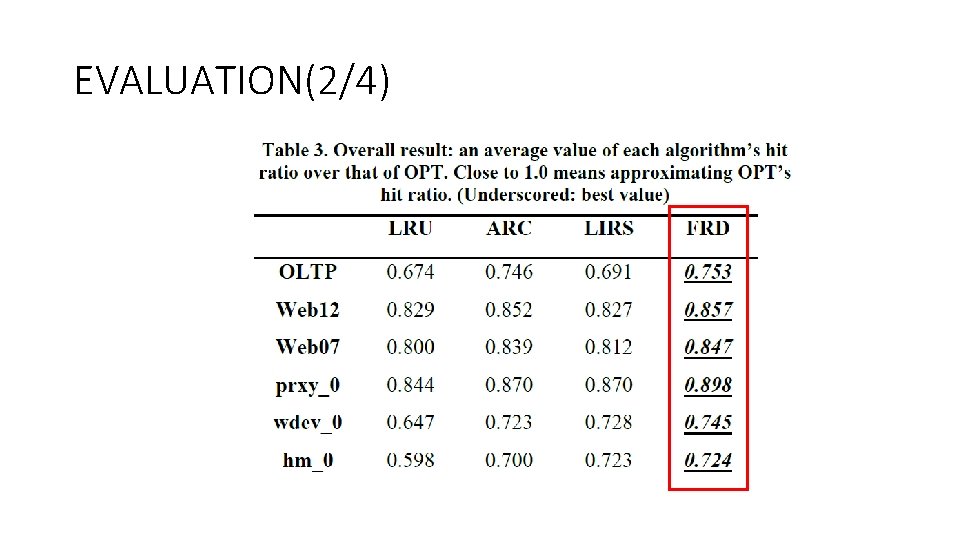

EVALUATION(2/4)

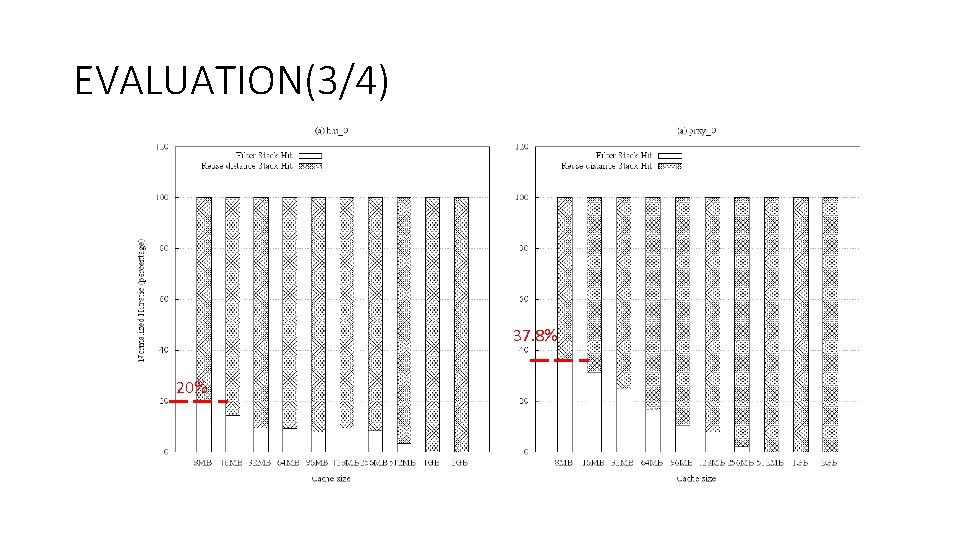

EVALUATION(3/4) • Figure annotations: 37.8% and 20%

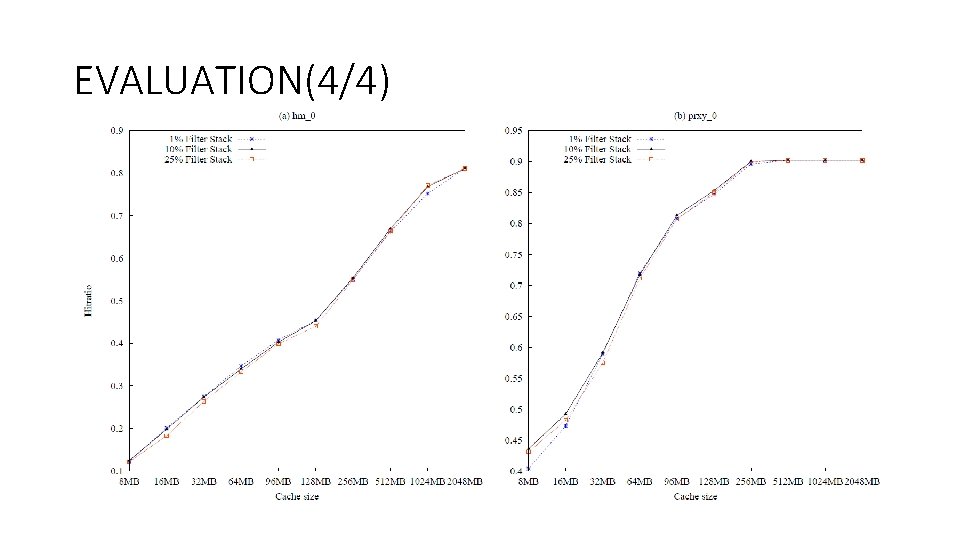

EVALUATION(4/4)

CONCLUSION(1/1) • We proposed a buffer cache algorithm called FRD that considers both frequency and reuse distance. • The primary purpose of the FRD is to exclude infrequently accessed blocks that may cause cache pollution and to maintain frequently accessed blocks based on reuse distance. • Experimental results showed that the FRD outperformed ARC or LIRS and that FRD’s hit ratio was stable for various cache sizes.
