FORMATIVE EVALUATION OF HEALTHCARE SIMULATION: CONSTRUCTIVE FEEDBACK TO ENHANCE LEARNING Michael McLaughlin, Ph.D., Dean of Health Occupations; Director, Katz Family Healthcare Simulation Center; Kirkwood Community College, Cedar Rapids, Iowa

Objectives • Review the definitions of formative and summative evaluation • Discuss levels/classifications of evaluation • Discuss the creation and use of formative assessment tools • Review the role of formative assessment in simulation • Discuss the TeamSTEPPS model of assessment of team-based performance • Review how TeamSTEPPS and the Mayo High Performance Teamwork Scale can be used in simulation

DISCLOSURES The presenter has no disclosures relevant to the topic of the presentation

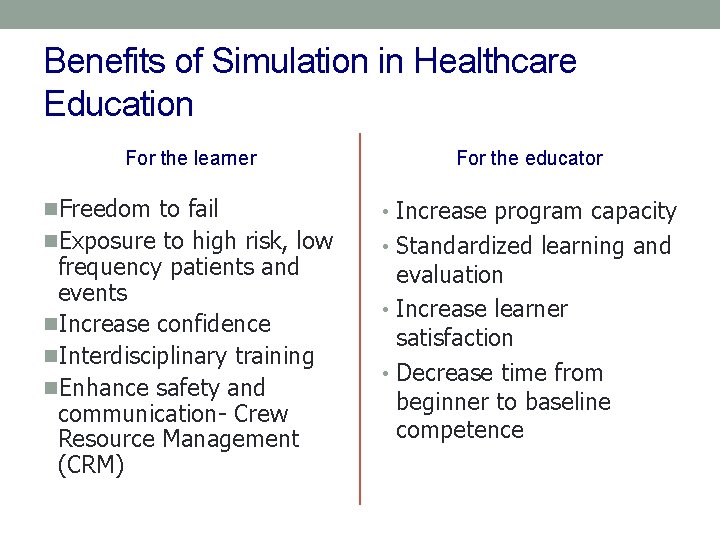

Benefits of Simulation in Healthcare Education
For the learner • Freedom to fail • Exposure to high-risk, low-frequency patients and events • Increase confidence • Interdisciplinary training • Enhance safety and communication: Crew Resource Management (CRM)
For the educator • Increase program capacity • Standardized learning and evaluation • Increase learner satisfaction • Decrease time from beginner to baseline competence

Issenberg’s Principles for Teaching with Simulation • Provide feedback during the learning experience • Require learners to engage in repetitive practice • Integrate simulation throughout the curriculum • Increase levels of difficulty • Adapt simulation to accommodate multiple learning strategies • Ensure clinical variation • Establish a controlled environment • Provide individualized and team learning • Define benchmarks and outcomes

Debriefing is… “The act of reviewing a real or simulated event in which the participants explain, analyze and synthesize information and emotional states to improve performance in similar situations.” Center for Medical Simulation, 2006

The Goals of Debriefing- Mort & Donahue • Offer a safe educational environment • Offer constructive feedback (formative assessment) • Encourage meta-cognition or self-knowledge • Promote communication and teamwork • Create a culture of change • Constructively correct behavior

EXPERIENCE ITSELF IS NOT ENOUGH TO PROMOTE CHANGE • The debrief session allows for reflection that enables learning to take place • Formative assessment is a critical part of the debriefing process

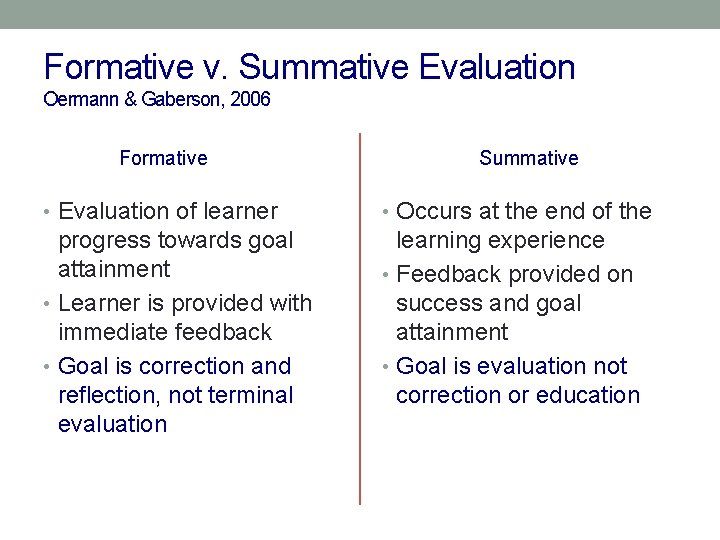

Formative v. Summative Evaluation (Oermann & Gaberson, 2006)
Formative • Evaluation of learner progress towards goal attainment • Learner is provided with immediate feedback • Goal is correction and reflection, not terminal evaluation
Summative • Occurs at the end of the learning experience • Feedback provided on success and goal attainment • Goal is evaluation, not correction or education

“Formative assessment is a process used by teachers and students during instruction that provides feedback to adjust ongoing teaching and learning to improve students’ achievement of intended instructional outcomes” Council of Chief State School Officers 2008

“Formative assessment practices include not only a variety of informal strategies that teachers may use ‘on the fly’, but also formal assessment tasks and methods systematically designed to help teachers probe and promote student thinking and reasoning” Messick, 1994

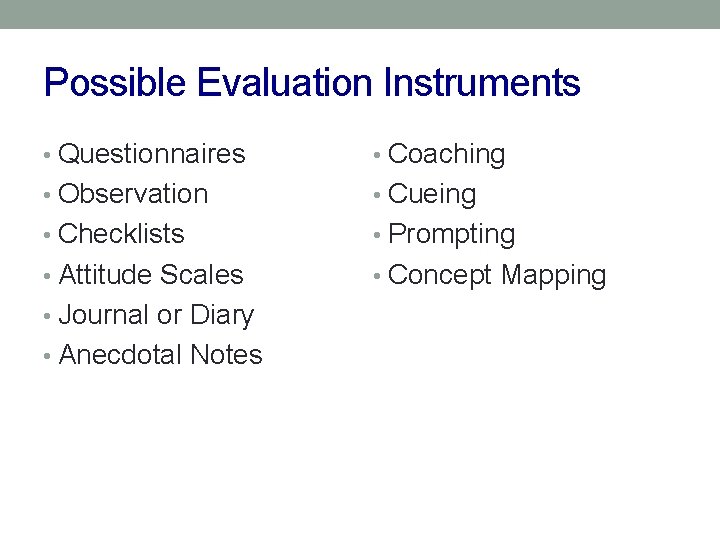

Possible Evaluation Instruments • Questionnaires • Coaching • Observation • Cueing • Checklists • Prompting • Attitude Scales • Concept Mapping • Journal or Diary • Anecdotal Notes

Formative Assessment should be: • Aligned with developmental objectives to meet participant outcomes, provide feedback, and remedy errors in thinking and practice • Accommodating for varied learning speeds • Appropriate for the current level of experience of learners • Specific to provide supplemental strategies for achievement of participant outcomes • Completed in a manner consistent with best debriefing practices • Source: “Standards of Best Practices In Simulation: VII- Participant Assessment and Evaluation” (2013)

Developing good formative assessment tools • Begin with educational values. • Reflect learning as multi-dimensional, integrated, and revealed over time. • Have clear, explicitly stated purposes. • Align experiences with desired outcomes. • Make assessment ongoing, not episodic. • Involve broad representation from the education community. • Identify and address the important issues. • Include assessment in a larger goal of promoting change. • Ensure that goals align with the expectations of the public. • Source: American Association of Higher Education 2011

Five Levels of Assessment (in Simulation) • Satisfaction/confidence/self-efficacy • Knowledge acquisition • Performance • Problem-solving abilities • Team-based behaviors • Bray, B. S. et al 2011

Satisfaction, Confidence and Self-Efficacy • Measure learner perceptions • Relatively easy to conduct • Data help educators understand learner perspectives and attitudes regarding simulation • Limitation- data do not provide insight on impact of simulation on student learning • Caution- self-efficacy does not always translate into competence as measured by external evaluation

Knowledge and Retention • Pre and post simulation assessments • Data can be prospective and controlled • Assessment is typically written • Knowledge attainment assessment can be confounded by other variables (role played, level of fidelity) • Poor performance may be due to lack of contextual knowledge of information • Successful knowledge acquisition and retention does not translate into competency in application or synthesis of knowledge

Performance-Based Skill Assessment • Assessment of performance while in simulation • Intubation • IV therapy • Assessment of psychomotor skill attainment • Technical competency does not always translate into clinical competency of critical thinking

Critical-Thinking and Problem-Solving • Difficult to measure • Tools and instruments common • Inter-rater reliability of tool makes this difficult to measure
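One common way to quantify the inter-rater reliability concern raised on this slide is Cohen's kappa, which compares how often two raters agree with how often they would agree by chance. The sketch below is illustrative only: Cohen's kappa is not named in the presentation, and the function name, the 0/1/2 rating scale, and the sample scores are all hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who scored the same list of items.

    Hypothetical helper for illustration; not part of any published tool.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items")

    n = len(rater_a)

    # Observed agreement: fraction of items given the same score by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each category, the product of the two raters'
    # marginal proportions, summed over all categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    # Assumption: if both raters used one identical category for every item,
    # kappa is undefined (0/0); this sketch reports 1.0 in that case.
    if expected == 1.0:
        return 1.0

    return (observed - expected) / (1.0 - expected)


if __name__ == "__main__":
    # Hypothetical scores from two observers rating six checklist items (0-2).
    observer_1 = [2, 1, 2, 0, 1, 2]
    observer_2 = [2, 1, 1, 0, 1, 2]
    print(f"kappa = {cohens_kappa(observer_1, observer_2):.2f}")
```

A kappa near 1 suggests the two observers apply the instrument consistently; a value near 0 suggests their agreement is no better than chance, which is one sign that rater training or clearer item descriptions may be needed before the tool is used for evaluation.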

Behavior and Team Interaction • Same challenges as with critical thinking and problem solving evaluation • The simulated environment lends itself to evaluating teamwork and team behaviors • Goal of assessment is to assess communication and teamwork as it relates to patient safety and outcomes • AHRQ and DOD TeamSTEPPS initiative: Team Strategies and Tools to Enhance Performance and Patient Safety

TeamSTEPPS • Developed by the Department of Defense in collaboration with the Agency for Healthcare Research and Quality (AHRQ) • Goal: improve patient safety within healthcare organizations • An evidence-based teamwork system to improve communication and teamwork skills among healthcare professionals by integrating teamwork principles • Assessment: formative, checklist-based instruments

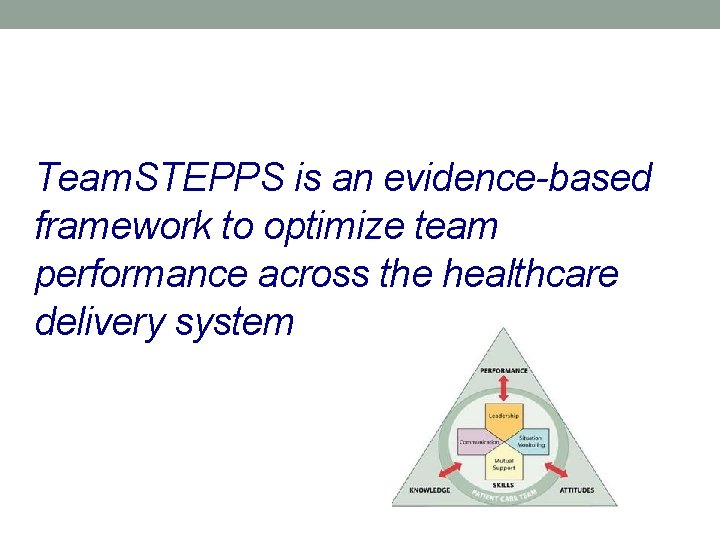

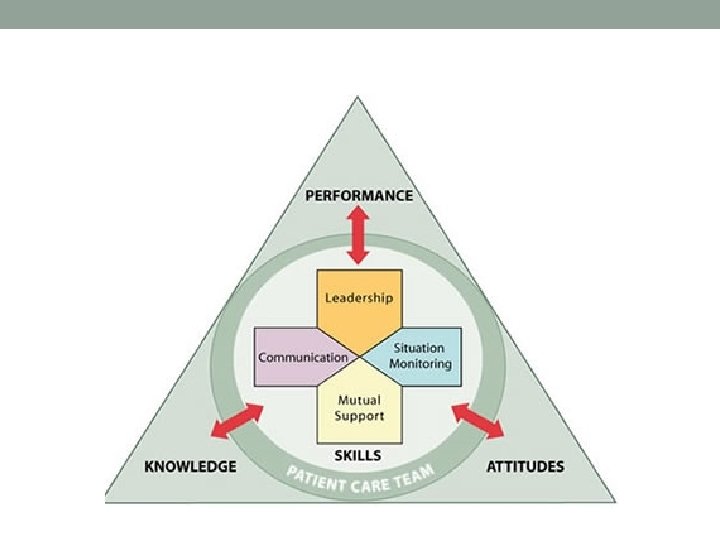

TeamSTEPPS is an evidence-based framework to optimize team performance across the healthcare delivery system

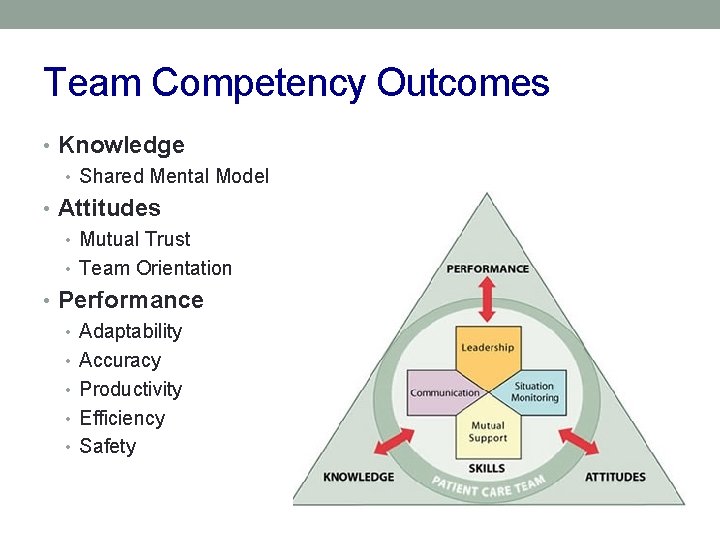

Team Competency Outcomes • Knowledge: Shared Mental Model • Attitudes: Mutual Trust, Team Orientation • Performance: Adaptability, Accuracy, Productivity, Efficiency, Safety

Four Key Principles • Leadership: The ability to coordinate the activities of team members by ensuring team actions are understood, changes in information are shared, and that team members have the necessary resources • Situation Monitoring: The process of actively scanning and assessing situational elements to gain information, understanding, or maintain awareness to support the function of the team • Mutual Support: The ability to anticipate and support other team members’ needs through accurate knowledge about their responsibilities and workload • Communication: The process by which information is clearly and accurately exchanged among team members

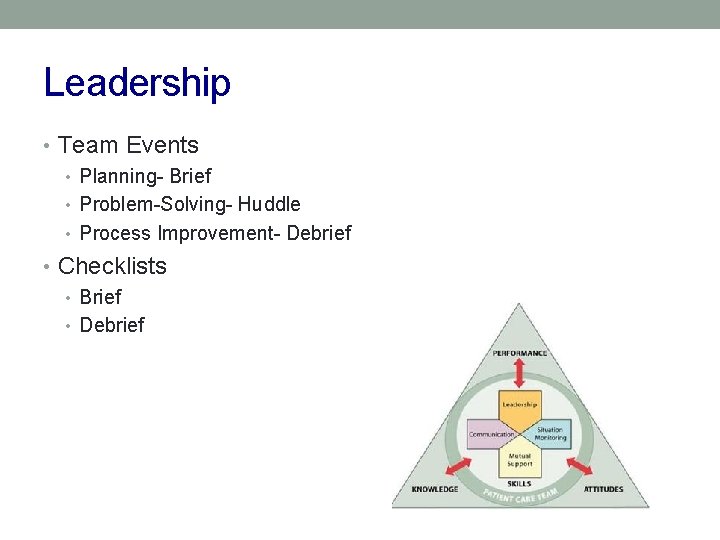

Leadership • Team Events: Planning (Brief), Problem-Solving (Huddle), Process Improvement (Debrief) • Checklists: Brief, Debrief

Situation Monitoring • Cross-Monitoring • STEP • I’M SAFE checklist

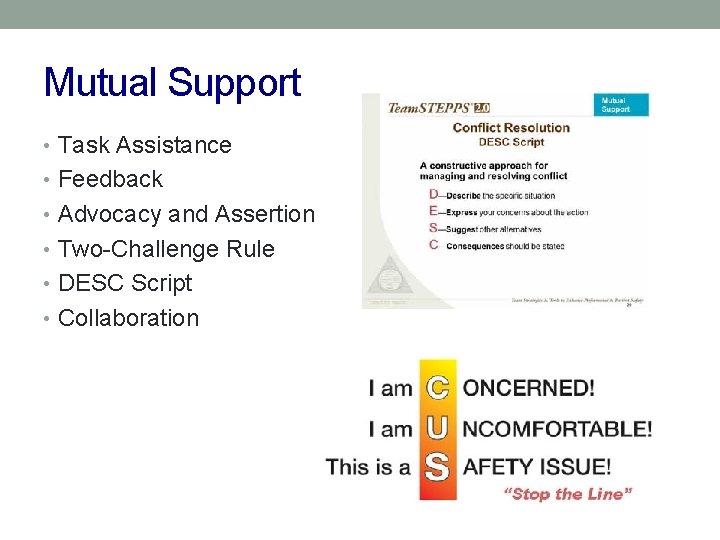

Mutual Support • Task Assistance • Feedback • Advocacy and Assertion • Two-Challenge Rule • DESC Script • Collaboration

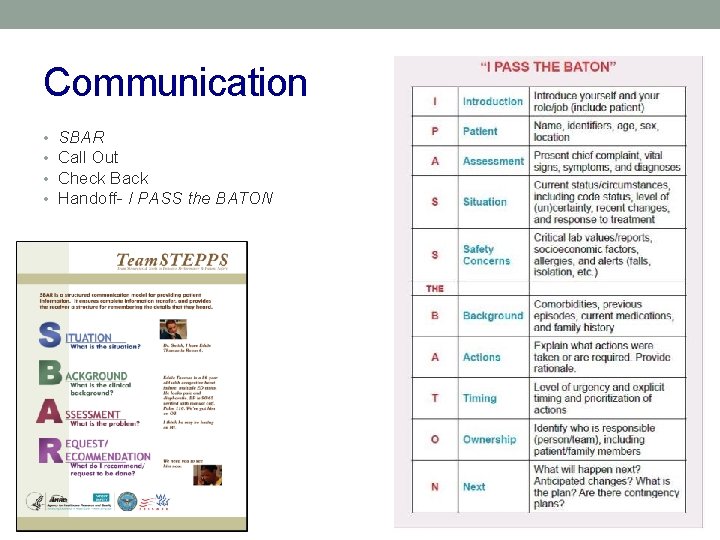

Communication • SBAR • Call-Out • Check-Back • Handoff: I PASS the BATON

EVALUATION OF TEAMSTEPPS The Mayo High Performance Teamwork Scale

ACTIVITY Simulated Trauma- evaluate teamwork using the Mayo High Performance Teamwork Scale

Questions?

Thank You

References • Billings, D. M. & Halstead, J. A. (2005). Teaching in Nursing: a guide for faculty (2nd ed.). Philadelphia: W. B. Saunders • Oermann, M. H. & Gaberson, K. B. (2006). Evaluation and Testing in Nurse Education (2nd ed.). New York: Springer Publishing • Jeffries, P. R. (ed). (2007). Simulation in Nursing Education. New York: NLN Publishing • Dunn, W. F. (ed). (2004). Simulators in Critical Care and Beyond. Des Plaines, IL: Society of Critical Care Medicine • “Standards of Best Practice: Simulation” (2013). Clinical Simulation in Nursing. 9: 65 pp. S3-S29

References • Messick, S. (1994). “The interplay of evidence and consequences in validation of performance assessments”. Education Researcher. 32, 13-23 • Bray, B. S. et al. (2011). “Assessment of Human Patient Simulation-Based Learning”. American Journal of Pharmaceutical Education. 75(10), Article 208 • Issenberg, S. F., McGaghie, W. C., Petrusa, D. R. et al. (2005). “Features and uses of high-fidelity medical simulations that lead to effective learning”. Medical Teacher. 27(1), 10-28 • Nine principles of good practice for assessing student learning. University of South Carolina Institutional Assessment and Compliance. http://www.ipr.sc.edu/effectiveness/toolbox/principles.htm Accessed May 21, 2015 • TeamSTEPPS Pocket Guide (AHRQ). Pub # 06-0020-2, 2008

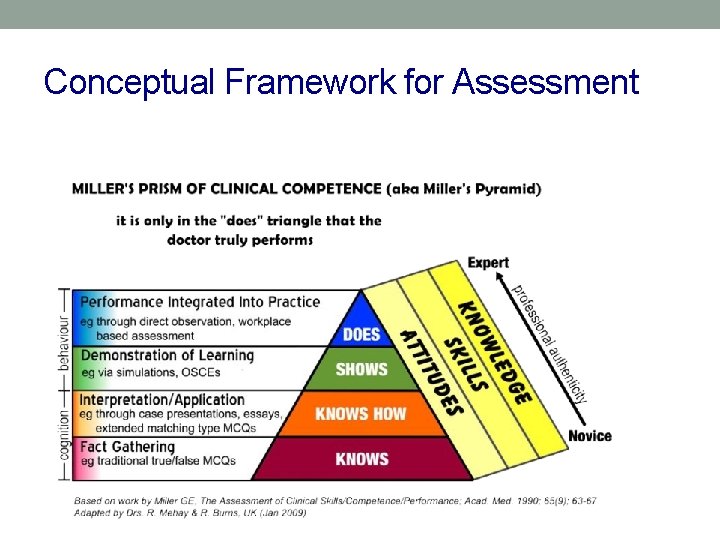

Conceptual Framework for Assessment
