Floating-Point Processing and Instruction Encoding
Presented by: Dr. José M. Reyes Álamo
Some slides modified from Assembly Language for x86 Processors, 6th Edition, by Kip R. Irvine, (c) Pearson Education, 2010. All rights reserved.

Floating-Point Binary Representation
• IEEE Floating-Point Binary Reals
• The Exponent
• Normalized Binary Floating-Point Numbers
• Creating the IEEE Representation
• Converting Decimal Fractions to Binary Reals
Irvine, Kip R. Assembly Language for x86 Processors 6/e, 2010.

Problems with Number Representation in Computers
• Computer arithmetic does not follow the standard rules of algebra, because only a limited subset of the integers and rationals can be represented
• Irrational numbers (including transcendental numbers such as π) cannot be represented exactly, and complex numbers have no native representation in the hardware floating-point formats
• This affects arithmetic and comparison operations
• Precision tends to be lost as more operations are performed
• All we can do is approximate up to a certain precision, usually expressed as a tolerance
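
The effect of a limited set of representable values can be seen with a short Python sketch (Python floats are IEEE double precision on mainstream platforms; the variable names are illustrative only):

```python
from fractions import Fraction

# 0.1 has no finite binary expansion, so the stored double is only close to 1/10.
stored = Fraction(0.1)             # exact value of the double nearest to 0.1
print(stored == Fraction(1, 10))   # False

# The rounding errors show up in ordinary arithmetic and comparisons.
total = 0.1 + 0.2
print(total == 0.3)                # False
print(abs(total - 0.3) < 1e-9)     # True
```

The last line is the tolerance-based style of comparison: the two values are treated as equal because they differ by less than a chosen error.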

Important Considerations
• The order of evaluation can affect the accuracy of the result.
• Whenever subtracting two numbers with the same sign or adding two numbers with different signs, the accuracy of the result may be less than the precision available in the floating-point format.
• When performing a chain of calculations involving addition, subtraction, multiplication, and division, try to perform the multiplication and division operations first.
• When multiplying and dividing sets of numbers, try to arrange the multiplications so that they multiply large and small numbers together; likewise, try to divide numbers that have the same relative magnitudes.
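
A minimal Python sketch of the first point, assuming IEEE double precision (the values `big` and `small` are chosen only for illustration): the same three operands give different results depending on the order of evaluation.

```python
big = 1.0e16
small = 1.0

# Near 1e16 adjacent doubles are 2 apart, so adding 1.0 is rounded away.
lost = (big + small) - big
# Cancelling the large values first leaves the small one intact.
kept = (big - big) + small

print(lost)   # 0.0
print(kept)   # 1.0
```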

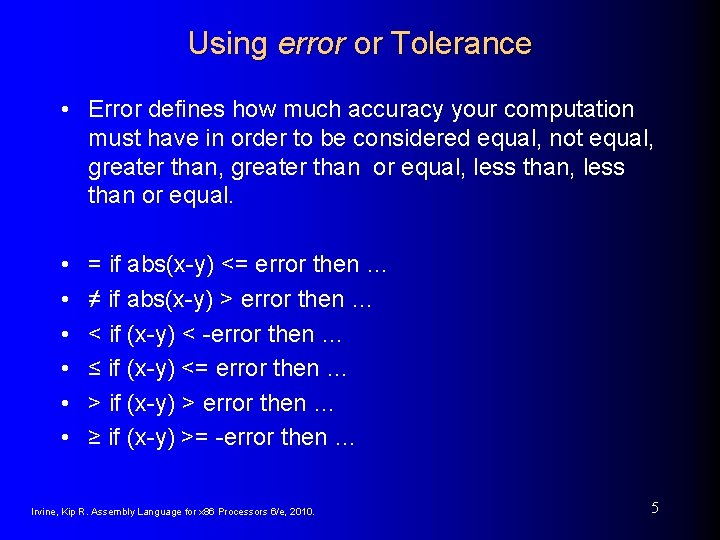

Using Error or Tolerance
• The error (tolerance) defines how close two values must be for a comparison to treat them as equal; the other relations are adjusted by the same amount.
• =  if abs(x-y) <= error then …
• ≠  if abs(x-y) > error then …
• <  if (x-y) < -error then …
• ≤  if (x-y) <= error then …
• >  if (x-y) > error then …
• ≥  if (x-y) >= -error then …
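
The six comparisons above could be written as small helper functions. This is a sketch in Python; the function names are hypothetical and not part of any library.

```python
def approx_eq(x, y, error):
    return abs(x - y) <= error

def approx_ne(x, y, error):
    return abs(x - y) > error

def approx_lt(x, y, error):
    return (x - y) < -error

def approx_le(x, y, error):
    return (x - y) <= error

def approx_gt(x, y, error):
    return (x - y) > error

def approx_ge(x, y, error):
    return (x - y) >= -error

total = 0.1 + 0.2                       # not exactly 0.3 in binary floating point
print(approx_eq(total, 0.3, 1e-9))      # True
print(approx_lt(total, 0.3, 1e-9))      # False
print(approx_le(total, 0.3, 1e-9))      # True
```

Choosing `error` is application dependent; it should be larger than the accumulated rounding error but smaller than any difference that matters.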

IEEE Floating-Point Binary Reals
• Types
  • Single Precision
    • 32 bits: 1 bit for the sign, 8 bits for the exponent, and 23 bits for the fractional part of the significand (also called the mantissa).
  • Double Precision
    • 64 bits: 1 bit for the sign, 11 bits for the exponent, and 52 bits for the fractional part of the significand.
  • Double Extended Precision
    • 80 bits: 1 bit for the sign, 15 bits for the exponent, 1 bit for the integer part of the significand, and 63 bits for the fractional part of the significand.
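
As a sketch of the single-precision layout, the following Python code uses the standard `struct` module to split a value into its three fields. The helper name `float32_fields` is hypothetical.

```python
import struct

def float32_fields(value):
    """Return (sign, biased_exponent, fraction) of value stored as an IEEE single."""
    (bits,) = struct.unpack(">I", struct.pack(">f", value))
    sign = bits >> 31                # 1 bit
    exponent = (bits >> 23) & 0xFF   # 8 bits, stored with a bias of 127
    fraction = bits & 0x7FFFFF       # 23 bits
    return sign, exponent, fraction

print(float32_fields(1.0))    # (0, 127, 0)
print(float32_fields(-2.5))   # (1, 128, 2097152)
```

For -2.5 = -1.01b x 2^1, the fraction field holds 01 followed by 21 zero bits, which is 2^21 = 2097152.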

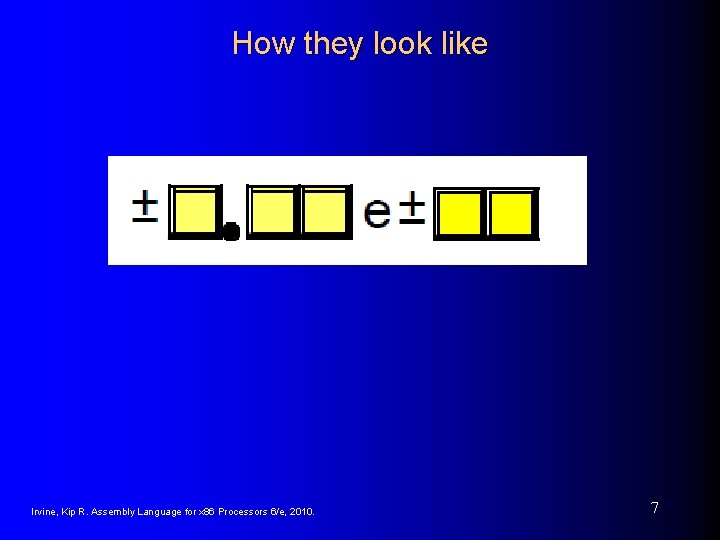

What the Formats Look Like

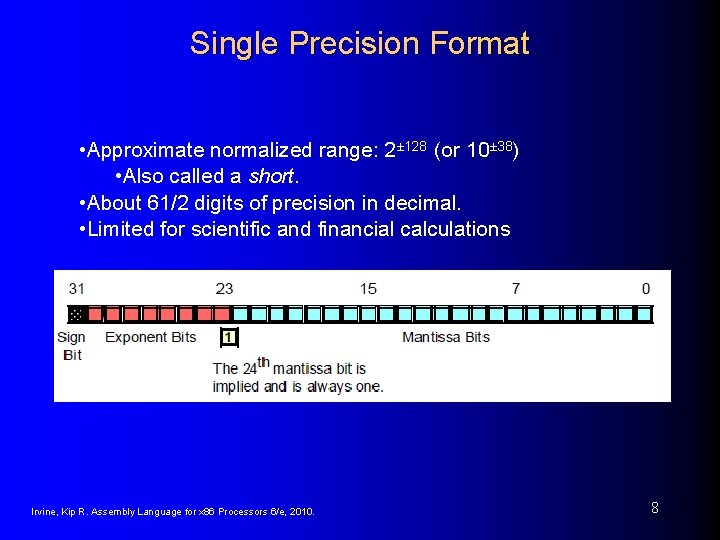

Single Precision Format
• Approximate normalized range: 2^-126 to 2^128 (about 10^±38)
• Also called a short real.
• About 6½ digits of precision in decimal.
• Precision is often too limited for scientific and financial calculations
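
The limited precision can be illustrated with a short Python sketch that rounds a value to single precision and back using the `struct` module. The helper name `to_float32` is an assumption for illustration.

```python
import struct

def to_float32(value):
    """Round a Python float (double) to the nearest single-precision value."""
    return struct.unpack(">f", struct.pack(">f", value))[0]

print(to_float32(16777216.0))   # 16777216.0 (2**24 is representable)
print(to_float32(16777217.0))   # 16777216.0 (2**24 + 1 is not)
print(to_float32(0.1) == 0.1)   # False: the single nearest 0.1 differs from the double
```

Integers above 2^24 no longer fit in the 24-bit significand (23 stored bits plus the implied leading 1), so some of them are rounded to a neighbor.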

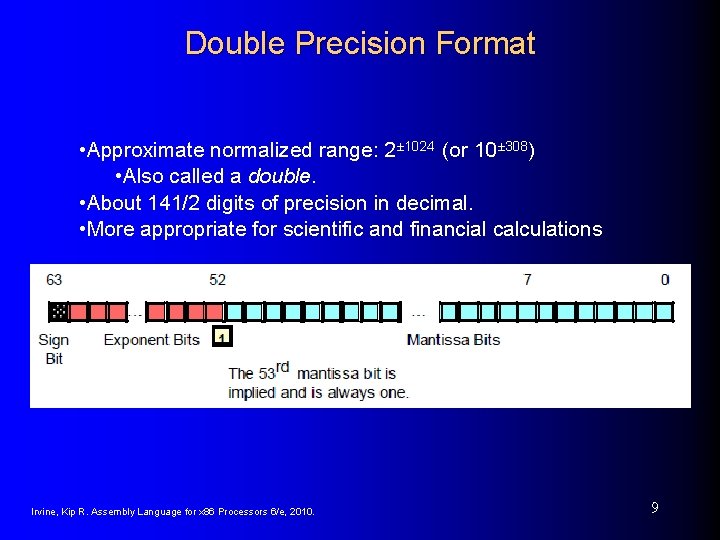

Double Precision Format
• Approximate normalized range: 2^-1022 to 2^1024 (about 10^±308)
• Also called a double.
• About 14½ digits of precision in decimal.
• More appropriate for scientific and financial calculations

Extended Precision Format
• Larger range
• Also called extended.
• Works differently from single and double precision: the integer bit of the significand is stored explicitly.
• Intel's FPU uses it internally for all floating-point operations

Components of a Single-Precision Real
• Sign
  • 1 = negative, 0 = positive
• Significand
  • decimal digits to the left and right of the decimal point
  • weighted positional notation
  • Example: 123.154 = (1 x 10^2) + (2 x 10^1) + (3 x 10^0) + (1 x 10^-1) + (5 x 10^-2) + (4 x 10^-3)
• Exponent
  • unsigned integer
  • integer bias (127 for single precision)

Decimal Fractions vs Binary Floating-Point

The Exponent
• Sample exponents represented in binary
• Add 127 to the actual exponent to produce the biased exponent

Normalizing Binary Floating-Point Numbers
• Mantissa is normalized when a single 1 appears to the left of the binary point
• Unnormalized: shift binary point until exponent is zero
• Examples
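
A worked example of normalization and biasing, sketched in Python. The helper `normalize` is hypothetical; it relies on the standard `math.frexp`, which returns a significand in [0.5, 1), so the result is rescaled to the 1.f form.

```python
import math

def normalize(value):
    """Return (significand, exponent) with 1 <= significand < 2, for value > 0."""
    m, e = math.frexp(value)   # value == m * 2**e with 0.5 <= m < 1
    return m * 2.0, e - 1

# 1101.101b = 13.625 normalizes to 1.101101b x 2^3
significand, exponent = normalize(0b1101101 / 8)
print(significand, exponent)   # 1.703125 3
print(exponent + 127)          # 130, the biased exponent for single precision
```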

Real-Number Encodings
• Normalized finite numbers
  • all the nonzero finite values that can be encoded in a normalized real number between zero and infinity
• Positive and negative infinity
• NaN (not a number)
  • bit pattern that is not a valid FP value
  • Two types: quiet and signaling

Real-Number Encodings (cont)
• Specific encodings (single precision):
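
The single-precision bit patterns can be inspected with a short Python sketch. The helper names are hypothetical, and the `classify` rules follow the standard IEEE 754 layout (exponent field all ones for infinity and NaN, all zeros for zero and denormalized values) rather than anything stated on the slide.

```python
import math
import struct

def float32_bits(value):
    """Return the 32-bit pattern of value stored as an IEEE single."""
    (bits,) = struct.unpack(">I", struct.pack(">f", value))
    return bits

def classify(bits):
    exponent = (bits >> 23) & 0xFF
    fraction = bits & 0x7FFFFF
    if exponent == 0xFF:
        return "infinity" if fraction == 0 else "NaN"
    if exponent == 0:
        return "zero" if fraction == 0 else "denormalized"
    return "normalized"

print(hex(float32_bits(math.inf)))        # 0x7f800000
print(hex(float32_bits(-math.inf)))       # 0xff800000
print(classify(float32_bits(math.nan)))   # NaN
print(classify(float32_bits(1.5)))        # normalized
```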

Example
• Single precision order: sign bit, exponent bits, and fractional part (mantissa)
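
As an illustration of this field order, the sketch below assembles a single-precision value from its three parts and decodes it with Python's `struct` module. The helper `make_float32` and the sample value are assumptions chosen for illustration, not the example from the slide.

```python
import struct

def make_float32(sign, biased_exponent, fraction_bits):
    """Pack sign | exponent | fraction (in that order) and decode as a single."""
    fraction = int(fraction_bits.ljust(23, "0"), 2)   # left-align in the 23-bit field
    bits = (sign << 31) | (biased_exponent << 23) | fraction
    return struct.unpack(">f", struct.pack(">I", bits))[0]

# -1.101101b x 2^3: sign = 1, exponent = 3 + 127 = 130, fraction = 101101
print(make_float32(1, 130, "101101"))   # -13.625
```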

HLA Support for Floating Point
• To declare a floating-point variable, use the real32, real64, or real80 data types
• Example:
    static
        fltVar1:   real32;
        fltVar1a:  real32 := 2.7;
        pi:        real32 := 3.14159;
        DblVar2:   real64 := 1.23456789e+10;
        XPVar2:    real80 := -1.0e-104;

HLA Support for Floating Point
• To output a floating-point variable in ASCII form, use one of the stdout.putr32, stdout.putr64, or stdout.putr80 routines
    stdout.putr80( r:real80; width:uns32; decpts:uns32 );
    stdout.putr64( r:real64; width:uns32; decpts:uns32 );
    stdout.putr32( r:real32; width:uns32; decpts:uns32 );
• Examples
    stdout.putr32( pi, 10, 4 );
    stdout.put( "Pi = ", pi:5:3 );

HLA Support for Floating Point
• The HLA Standard Library provides only two routines to support floating-point input: stdin.getf() and stdin.get()
• Example
    stdin.get( DblVar );

Floating Point Arithmetic
• Intel provides the Floating Point Unit (FPU) as part of the CPU; on earlier processors it was a separate coprocessor chip.
• The 80x86 FPUs add 13 registers to the 80x86 processors: eight floating-point data registers, a control register, a status register, a tag register, an instruction pointer, and a data pointer

FPU Data Registers
• The FPUs provide eight 80-bit data registers organized as a stack
• ST0 refers to the item on the top of the stack, ST1 refers to the next item on the stack, and so on.
• Floating-point instructions push and pop items on the stack
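
The stack organization can be pictured with a toy model in Python. This is only a sketch: the class `ToyFPUStack` is hypothetical, it stores Python floats rather than 80-bit values, and real hardware reports stack overflow through status flags rather than an exception.

```python
class ToyFPUStack:
    """Toy model of the eight-register FPU stack (not real hardware behavior)."""

    def __init__(self):
        self.regs = []                       # regs[0] plays the role of ST0

    def fld(self, value):
        if len(self.regs) == 8:
            raise OverflowError("FPU stack overflow")
        self.regs.insert(0, float(value))    # push: the old ST0 becomes ST1

    def fstp(self):
        return self.regs.pop(0)              # pop: the old ST1 becomes ST0

    def st(self, i):
        return self.regs[i]                  # STi, counted from the top

fpu = ToyFPUStack()
fpu.fld(1.5)
fpu.fld(2.5)
print(fpu.st(0), fpu.st(1))   # 2.5 1.5
print(fpu.fstp())             # 2.5
print(fpu.st(0))              # 1.5
```

Note that the register names are relative: after each push or pop, ST0 refers to a different physical register.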

FPU Control Register
• The FPU control register (16 bits) lets the user choose among several operating modes for compatibility with many applications

FPU Status Register
• The FPU status register provides the status of the coprocessor at the instant you read it (like EFLAGS)
• Review Condition Codes in your book

FPU Data Types

FPU Instructions
• The FPU adds over 80 new instructions to the 80x86 instruction set. We can classify these instructions as:
  • Data movement instructions
  • Conversions
  • Arithmetic instructions
  • Comparisons
  • Constant instructions
  • Transcendental instructions
  • Miscellaneous instructions

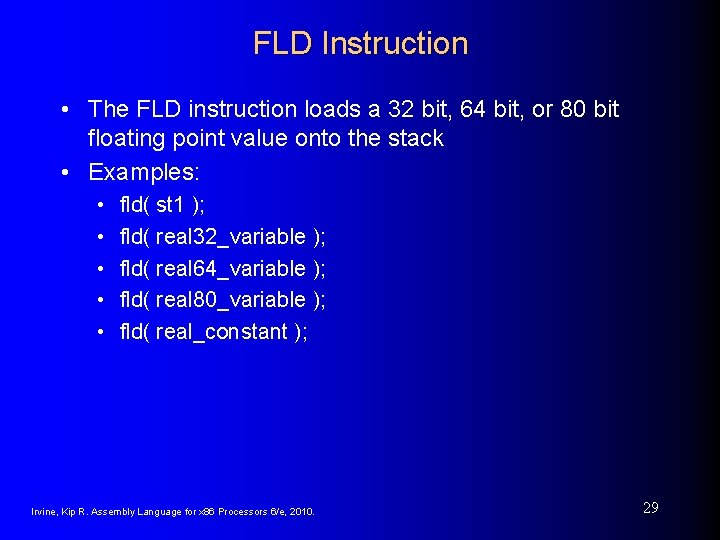

FLD Instruction
• The FLD instruction pushes a 32-bit, 64-bit, or 80-bit floating-point value onto the stack, converting 32-bit and 64-bit operands to the 80-bit extended format
• Examples:
    fld( st1 );
    fld( real32_variable );
    fld( real64_variable );
    fld( real80_variable );
    fld( real_constant );

FST and FSTP Instructions
• The FST and FSTP instructions copy the value on the top of the floating-point register stack to another floating-point register or to a 32-, 64-, or 80-bit memory variable; FSTP also pops the stack after the copy
• 80-bit memory stores are available only with FSTP
• Examples:
    fst( real32_variable );
    fst( real64_variable );
    fst( realArray[ ebx*8 ] );
    fstp( real80_variable );
    fst( st2 );
    fstp( st1 );

The FADD and FADDP Instructions
• With no operands, FADD pops ST0 and ST1, adds them, and pushes the sum back onto the stack
• We will discuss the stack data structure further when we study memory
• Forms:
    fadd()
    faddp()
    fadd( st0, sti );
    fadd( sti, st0 );
    faddp( st0, sti );
    fadd( mem_32_64 );
    fadd( real_constant );
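
The pop-pop-push behavior can be sketched with a plain Python list standing in for the register stack (index 0 is ST0). The functions below are a toy model written for illustration, not HLA code.

```python
def fadd(stack):
    """Model of fadd() with no operands: pop ST0 and ST1, push their sum."""
    st0 = stack.pop(0)
    st1 = stack.pop(0)
    stack.insert(0, st0 + st1)

def faddp(stack, i):
    """Model of faddp( st0, sti ): add ST0 into STi, then pop ST0."""
    stack[i] += stack[0]
    stack.pop(0)

stack = [3.0, 2.0, 1.0]   # ST0 = 3.0, ST1 = 2.0, ST2 = 1.0
faddp(stack, 2)           # ST2 = 1.0 + 3.0, then pop
print(stack)              # [2.0, 4.0]
fadd(stack)               # 2.0 + 4.0
print(stack)              # [6.0]
```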

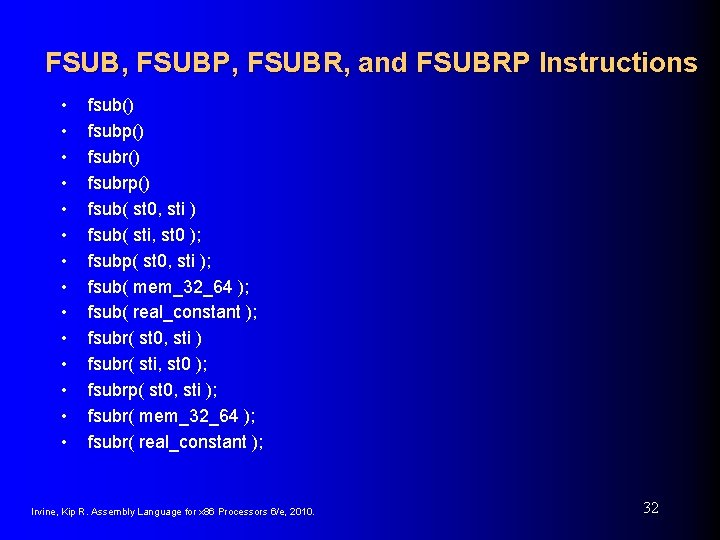

FSUB, FSUBP, FSUBR, and FSUBRP Instructions
• Forms:
    fsub()
    fsubp()
    fsubr()
    fsubrp()
    fsub( st0, sti );
    fsub( sti, st0 );
    fsubp( st0, sti );
    fsub( mem_32_64 );
    fsub( real_constant );
    fsubr( st0, sti );
    fsubr( sti, st0 );
    fsubrp( st0, sti );
    fsubr( mem_32_64 );
    fsubr( real_constant );
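
Because subtraction is not commutative, operand order matters. The toy Python model below (a list with index 0 as ST0, written for illustration and not HLA code) assumes the usual no-operand semantics: FSUB computes ST1 - ST0 and FSUBR computes ST0 - ST1, each popping both operands and pushing the result.

```python
def fsub(stack):
    """Model of fsub() with no operands: pop ST0 and ST1, push ST1 - ST0."""
    st0 = stack.pop(0)
    st1 = stack.pop(0)
    stack.insert(0, st1 - st0)

def fsubr(stack):
    """Model of fsubr() with no operands: pop ST0 and ST1, push ST0 - ST1."""
    st0 = stack.pop(0)
    st1 = stack.pop(0)
    stack.insert(0, st0 - st1)

# Load 10.0 first and 4.0 second, so ST0 = 4.0 and ST1 = 10.0.
a = [4.0, 10.0]
fsub(a)
print(a)    # [6.0]

b = [4.0, 10.0]
fsubr(b)
print(b)    # [-6.0]
```

With FSUB, the operands come out in the order they were loaded (first minus second); FSUBR is useful when they were loaded in the opposite order.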

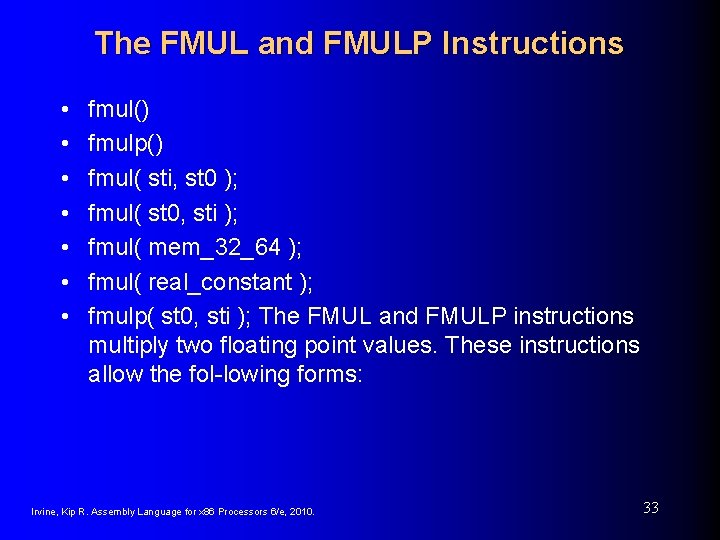

The FMUL and FMULP Instructions
• The FMUL and FMULP instructions multiply two floating-point values. These instructions allow the following forms:
    fmul()
    fmulp()
    fmul( sti, st0 );
    fmul( st0, sti );
    fmul( mem_32_64 );
    fmul( real_constant );
    fmulp( st0, sti );

The FDIV, FDIVP, FDIVR, and FDIVRP Instructions
• Forms:
    fdiv()
    fdivp()
    fdivr()
    fdivrp()
    fdiv( sti, st0 );
    fdiv( st0, sti );
    fdivp( st0, sti );
    fdivr( sti, st0 );
    fdivr( st0, sti );
    fdivrp( st0, sti );
    fdiv( mem_32_64 );
    fdivr( mem_32_64 );
    fdiv( real_constant );
    fdivr( real_constant );

Other Instructions
• FPREM and FPREM1 – Compute the partial remainder of ST0 divided by ST1
• FRNDINT – Rounds the number on top of the stack to an integer, using the current rounding mode
• FABS – Computes the absolute value of ST0
• FCHS – Changes the sign of ST0
• FCOM, FCOMP, and FCOMPP – Compare floating-point values
• FSIN, FCOS, and FSINCOS – Compute the sine and cosine
• FPTAN – Computes the tangent
• FYL2X – Computes y × log2(x), with x in ST0 and y in ST1
• See your book for more instructions
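
Some of these operations have close analogues in Python's standard library, which can help build intuition. This sketch only mirrors the final numeric results under the default round-to-nearest mode; it does not model the FPU's iterative partial-remainder behavior or its status flags.

```python
import math

x, y = 5.5, 2.0

# FPREM-style remainder: the quotient is truncated toward zero.
print(math.fmod(x, y))        # 1.5
# FPREM1-style (IEEE) remainder: the quotient is rounded to the nearest integer.
print(math.remainder(x, y))   # -0.5

# FRNDINT under round-to-nearest-even: halfway cases go to the even neighbor.
print(round(2.5), round(3.5))   # 2 4

# FABS and FCHS
print(abs(-x), -x)   # 5.5 -5.5
```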
