Flexible Speaker Adaptation using Maximum Likelihood Linear Regression

Authors: C. J. Leggetter, P. C. Woodland. Presenter: 陳亮宇. Proc. ARPA Spoken Language Technology Workshop, 1995.

Outline

- Introduction
- MLLR Overview
- Fixed and Dynamic Regression Classes
- Supervised Adaptation vs. Unsupervised Adaptation
- Evaluation on WSJ Data
- Conclusion

Introduction

- Speaker Independent (SI) recognition systems
  - Poor performance
  - Easy to get lots of training data
- Speaker Dependent (SD) recognition systems
  - Better performance
  - Difficult to get enough training data
- Solution: SI system + adaptation with little SD data
  - Advantage: little SD data is required
  - Problem: some models are not updated

Introduction (aim of the paper)

- MLLR (Maximum Likelihood Linear Regression) approach
  - Parameter transformation technique
  - All models are updated with little adaptation data
  - Adapts the SI system by transforming the mean parameters with a set of linear transforms
- Dynamic Regression Classes approach
  - Optimizes the adaptation procedure during runtime
  - Allows all modes of adaptation to be performed in a single framework

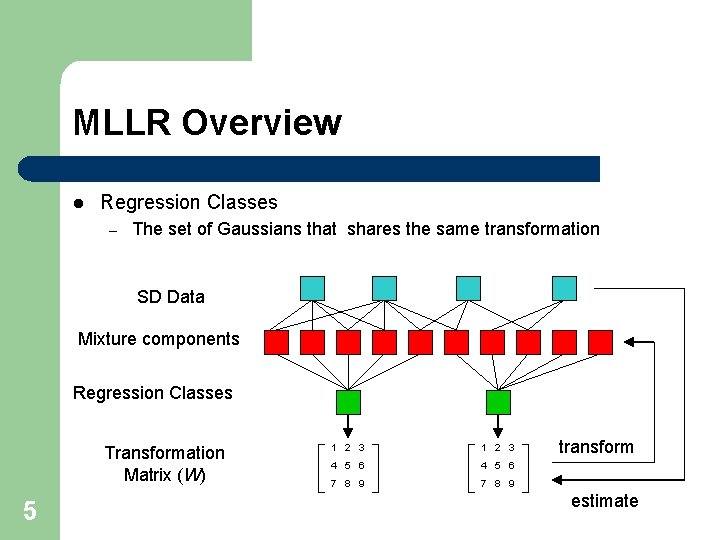

MLLR Overview

- Regression classes
  - The set of Gaussians that share the same transformation

Figure (labels only): SD data, mixture components 1 to 9, regression classes, transformation matrix (W); arrows labelled "estimate" and "transform".

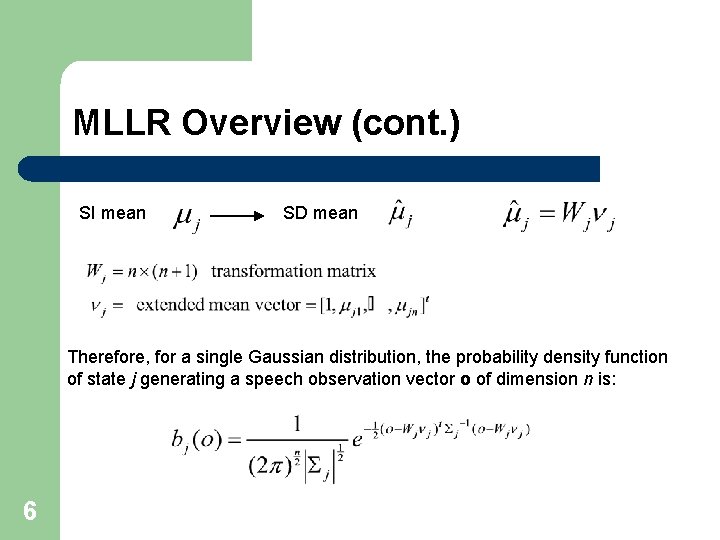

MLLR Overview (cont.)

The transform maps each SI mean to an estimate of the SD mean. Therefore, for a single Gaussian distribution, the probability density function of state j generating a speech observation vector o of dimension n is:

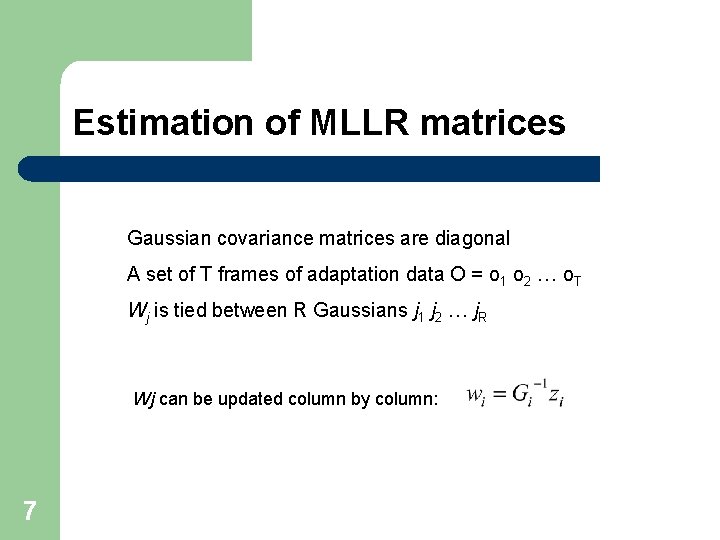
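For reference, here is a sketch of the adapted mean and density in the usual MLLR notation. The symbols $\xi_j$ (extended mean vector), $\omega$ (offset term, 1 to include a bias and 0 to omit it) and $\Sigma_j$ (covariance of state j) are assumed here and may differ from those in the slide image. $W_j$ is taken to be an $n \times (n+1)$ matrix.

$$
\xi_j = [\,\omega,\ \mu_{j1},\ \dots,\ \mu_{jn}\,]^{\top}, \qquad \hat{\mu}_j = W_j\,\xi_j
$$

$$
b_j(o) = \frac{1}{(2\pi)^{n/2}\,|\Sigma_j|^{1/2}}
\exp\!\Big(-\tfrac{1}{2}\,(o - W_j\xi_j)^{\top}\,\Sigma_j^{-1}\,(o - W_j\xi_j)\Big)
$$

Only the mean changes under this form; the covariance $\Sigma_j$ is left as trained in the SI system.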

Estimation of MLLR matrices

- Gaussian covariance matrices are diagonal
- A set of T frames of adaptation data: $O = o_1, o_2, \dots, o_T$
- $W_j$ is tied between R Gaussians $j_1, j_2, \dots, j_R$
- $W_j$ can be updated column by column:

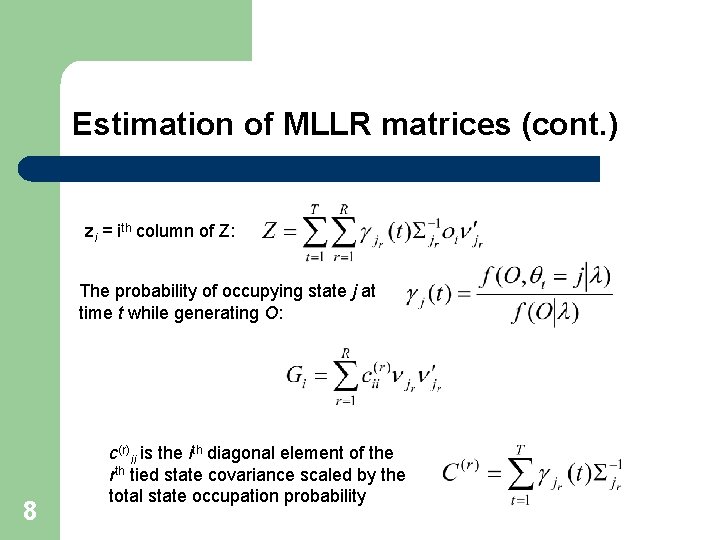

Estimation of MLLR matrices (cont.)

- $z_i$ = $i$th column of $Z$:
- The probability of occupying state $j$ at time $t$ while generating $O$:
- $c^{(r)}_{ii}$ is the $i$th diagonal element of the $r$th tied state covariance scaled by the total state occupation probability

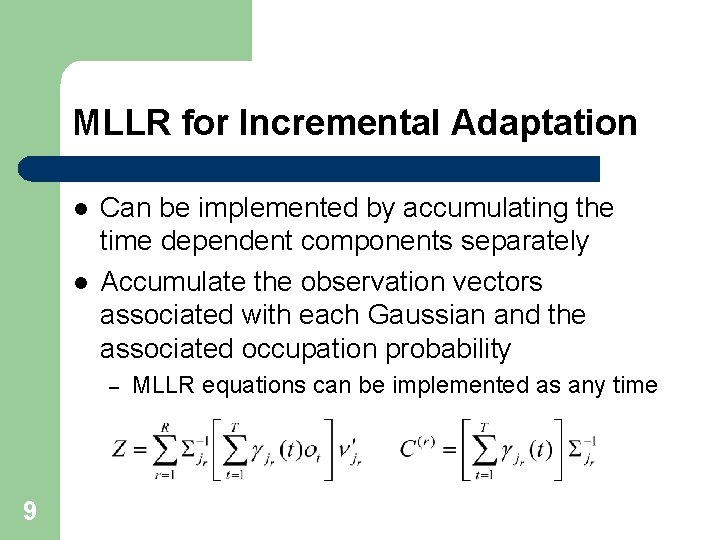
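The estimation step can be sketched in NumPy under the diagonal-covariance assumption stated above. This is an illustration, not the authors' implementation. The function name, argument layout, and the reading of the per-dimension weight as occupation probability divided by variance are all assumptions. The code solves one row of an $n \times (n+1)$ matrix at a time, which corresponds to the slides' column-by-column update if W is stored transposed (also an assumption).

```python
import numpy as np

def estimate_mllr_transform(means, variances, occ, obs_sum, offset=1.0):
    """Estimate one mean transform W (n x (n+1)) shared by R tied Gaussians.

    means:     (R, n) SI means
    variances: (R, n) diagonal covariance entries
    occ:       (R,)   sum over frames of the occupation probability gamma_r(t)
    obs_sum:   (R, n) sum over frames of gamma_r(t) * o_t
    """
    R, n = means.shape
    # Extended mean vectors xi_r = [offset, mu_r1, ..., mu_rn]
    xi = np.hstack([np.full((R, 1), offset), means])      # (R, n+1)
    W = np.zeros((n, n + 1))
    for i in range(n):
        # Per-Gaussian weight for dimension i: occupation / variance
        weight = occ / variances[:, i]                     # (R,)
        # G_i = sum_r weight_r * xi_r xi_r^T
        G = (xi * weight[:, None]).T @ xi                  # (n+1, n+1)
        # z_i = sum_r (obs_sum_r[i] / variance_r[i]) * xi_r
        z = xi.T @ (obs_sum[:, i] / variances[:, i])       # (n+1,)
        # Solve G_i w_i = z_i for the i-th row of W
        W[i] = np.linalg.solve(G, z)
    return W
```

The solve requires each `G` to be invertible, which in practice means the class must contain at least n+1 Gaussians with linearly independent extended means. This is one reason a regression class needs enough components and data.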

MLLR for Incremental Adaptation

- Can be implemented by accumulating the time-dependent components separately
- Accumulate the observation vectors associated with each Gaussian and the associated occupation probability
  - The MLLR equations can then be applied at any time

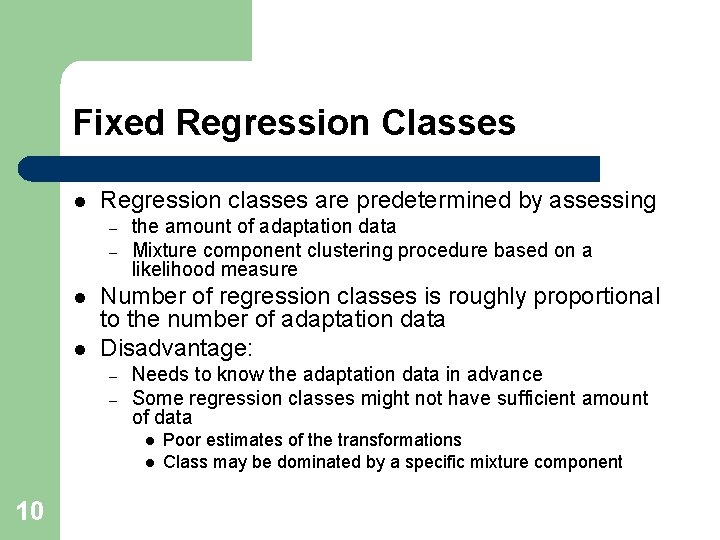
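A minimal sketch of such an accumulator follows. The class and method names are hypothetical. It keeps, per Gaussian, the running occupation probability and the occupation-weighted sum of observation vectors, so that a transform can be re-estimated from the stored totals whenever desired.

```python
import numpy as np

class MllrAccumulator:
    """Running per-Gaussian statistics for incremental adaptation (hypothetical helper)."""

    def __init__(self, num_gaussians, dim):
        self.occ = np.zeros(num_gaussians)              # sum_t gamma_r(t)
        self.obs_sum = np.zeros((num_gaussians, dim))   # sum_t gamma_r(t) * o_t

    def add_frame(self, gamma, obs):
        """Add one frame: gamma is (R,) occupation probabilities, obs is the (n,) observation."""
        gamma = np.asarray(gamma, dtype=float)
        obs = np.asarray(obs, dtype=float)
        self.occ += gamma
        self.obs_sum += np.outer(gamma, obs)
```

Because only sums are stored, the cost of adding a frame does not depend on how much data has already been seen.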

Fixed Regression Classes

- Regression classes are predetermined by assessing the amount of adaptation data
  - Mixture component clustering procedure based on a likelihood measure
  - Number of regression classes is roughly proportional to the amount of adaptation data
- Disadvantages:
  - Needs to know the amount of adaptation data in advance
  - Some regression classes might not have a sufficient amount of data
    - Poor estimates of the transformations
    - Class may be dominated by a specific mixture component

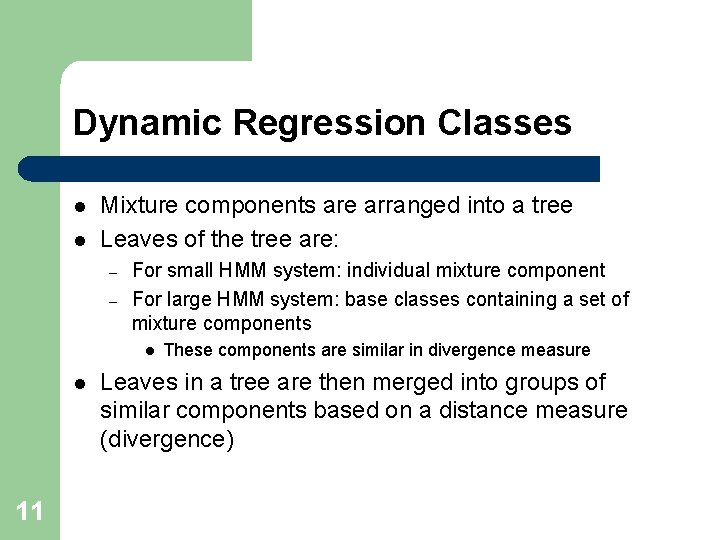

Dynamic Regression Classes

- Mixture components are arranged into a tree
- Leaves of the tree are:
  - For small HMM systems: individual mixture components
  - For large HMM systems: base classes containing a set of mixture components
    - These components are similar in divergence measure
- Leaves of the tree are then merged into groups of similar components based on a distance measure (divergence)

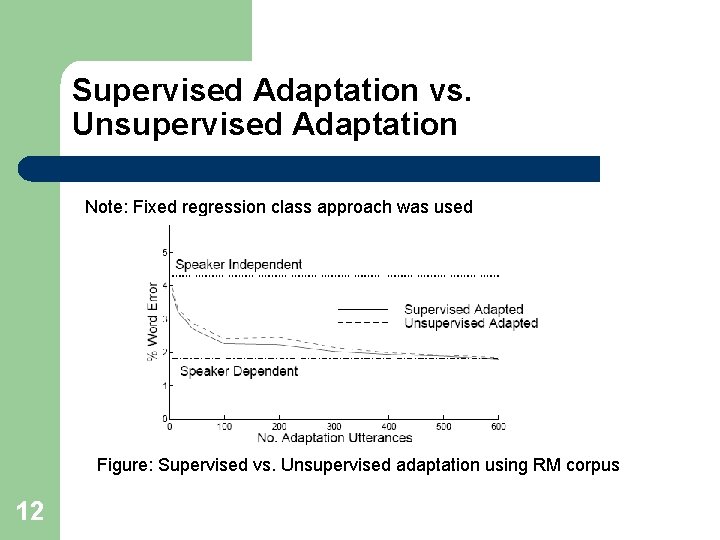
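One plausible way to use such a tree at runtime is sketched below. The selection rule is an assumption and is not stated on the slide: each leaf takes its transform from the lowest node on its path to the root whose accumulated occupation meets a threshold. The `Node` type, the function names, and the threshold value are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

@dataclass
class Node:
    name: str
    occupation: float = 0.0                      # leaves: observed; internal nodes: computed
    children: List["Node"] = field(default_factory=list)

def total_occupation(node: Node) -> float:
    """Fill in internal-node occupation as the sum over the subtree's leaves."""
    if node.children:
        node.occupation = sum(total_occupation(c) for c in node.children)
    return node.occupation

def assign_transforms(root: Node, threshold: float) -> Dict[str, Optional[str]]:
    """Map each leaf to the lowest node on its path with occupation >= threshold."""
    total_occupation(root)
    assignment: Dict[str, Optional[str]] = {}

    def visit(node: Node, best: Optional[str]) -> None:
        if node.occupation >= threshold:
            best = node.name                     # deeper node with enough data wins
        if not node.children:
            assignment[node.name] = best         # None means no transform is available
        for child in node.children:
            visit(child, best)

    visit(root, None)
    return assignment
```

With more adaptation data, more nodes pass the threshold, so the number of distinct transforms grows without fixing the classes in advance.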

Supervised Adaptation vs. Unsupervised Adaptation

Note: the fixed regression class approach was used.

Figure: Supervised vs. unsupervised adaptation using the RM corpus

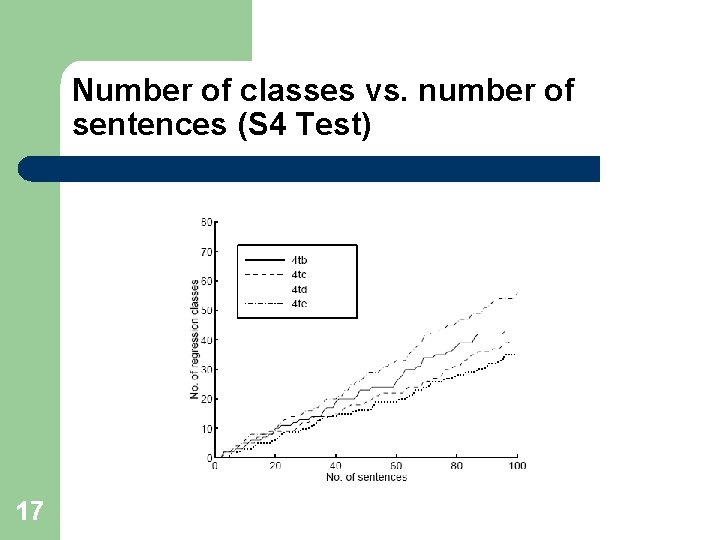

Evaluation on WSJ Data

- Experiment settings
  - Dynamic regression classes approach
  - Baseline Speaker Independent system (refer to 5.1)
- S3 test:
  - Static supervised adaptation for non-native speakers
- S4 test:
  - Incremental unsupervised adaptation for native speakers

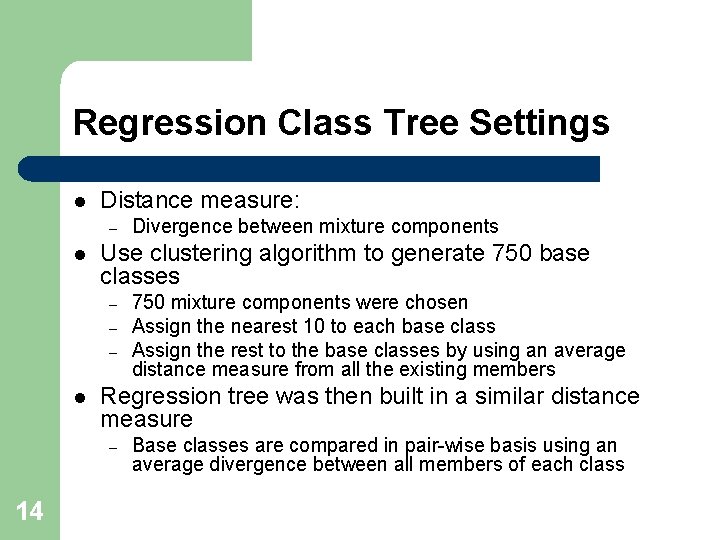

Regression Class Tree Settings

- Distance measure:
  - Divergence between mixture components
- Use a clustering algorithm to generate 750 base classes
  - 750 mixture components were chosen
  - Assign the nearest 10 to each base class
  - Assign the rest to the base classes using an average distance measure from all the existing members
- The regression tree was then built using a similar distance measure
  - Base classes are compared on a pair-wise basis using an average divergence between all members of each class

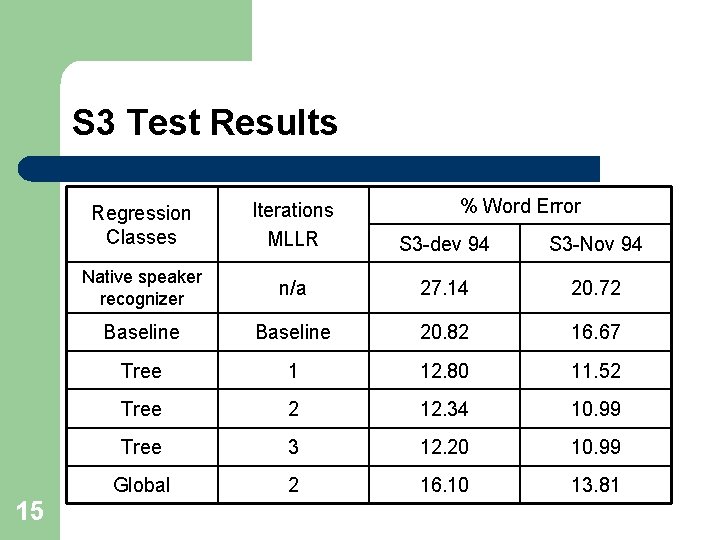
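The slides do not give the divergence formula. The sketch below assumes the symmetric (two-way Kullback-Leibler) divergence between diagonal-covariance Gaussians, together with the average pair-wise divergence between two classes. The function names and the `(mean, variance)` tuple layout are hypothetical.

```python
def gaussian_divergence(mean_a, var_a, mean_b, var_b):
    """Symmetric divergence KL(a||b) + KL(b||a) for diagonal-covariance Gaussians."""
    total = 0.0
    for ma, va, mb, vb in zip(mean_a, var_a, mean_b, var_b):
        total += va / vb + vb / va - 2.0                   # variance mismatch term
        total += (ma - mb) ** 2 * (1.0 / va + 1.0 / vb)    # mean separation term
    return 0.5 * total

def average_divergence(class_a, class_b):
    """Mean pair-wise divergence between two classes.

    Each class is a non-empty list of (mean, variance) pairs.
    """
    pairs = [(a, b) for a in class_a for b in class_b]
    return sum(gaussian_divergence(a[0], a[1], b[0], b[1]) for a, b in pairs) / len(pairs)
```

The measure is zero for identical components and symmetric in its arguments, which makes it usable as a clustering distance.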

S3 Test Results

% Word Error

| Regression classes | MLLR iterations | S3-dev'94 | S3-Nov'94 |
| --- | --- | --- | --- |
| Native speaker recognizer | n/a | 27.14 | 20.72 |
| Baseline | | 20.82 | 16.67 |
| Tree | 1 | 12.80 | 11.52 |
| Tree | 2 | 12.34 | 10.99 |
| Tree | 3 | 12.20 | 10.99 |
| Global | 2 | 16.10 | 13.81 |

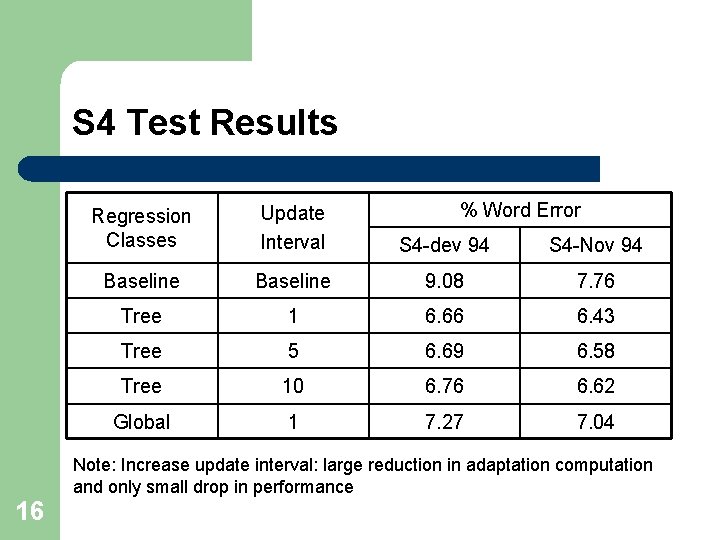

S4 Test Results

% Word Error

| Regression classes | Update interval | S4-dev'94 | S4-Nov'94 |
| --- | --- | --- | --- |
| Baseline | | 9.08 | 7.76 |
| Tree | 1 | 6.66 | 6.43 |
| Tree | 5 | 6.69 | 6.58 |
| Tree | 10 | 6.76 | 6.62 |
| Global | 1 | 7.27 | 7.04 |

Note: Increasing the update interval gives a large reduction in adaptation computation and only a small drop in performance.
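To put the S4 figures in relative terms, the short calculation below compares the baseline with the tree-based system at update interval 1. It assumes the S4 numbers pair up as baseline 9.08 / 7.76 and tree 6.66 / 6.43; the helper name is hypothetical.

```python
def relative_reduction(baseline_wer, adapted_wer):
    """Relative word error rate reduction, in percent of the baseline."""
    return 100.0 * (baseline_wer - adapted_wer) / baseline_wer

s4_dev = relative_reduction(9.08, 6.66)   # S4-dev'94, tree, update interval 1
s4_nov = relative_reduction(7.76, 6.43)   # S4-Nov'94, tree, update interval 1

print(f"S4-dev'94: {s4_dev:.1f}% relative reduction")
print(f"S4-Nov'94: {s4_nov:.1f}% relative reduction")
```

Under that reading, the reductions come to roughly 27% and 17% of the baseline error.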

Number of classes vs. number of sentences (S4 Test)

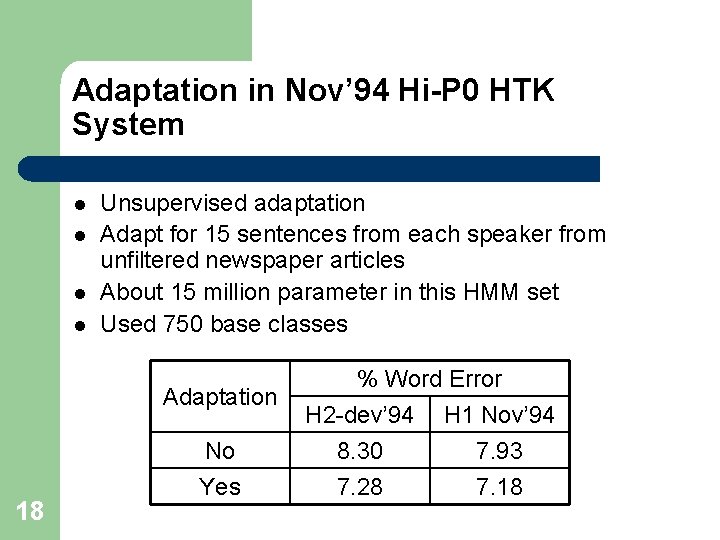

Adaptation in Nov'94 H1-P0 HTK System

- Unsupervised adaptation
- Adaptation on 15 sentences from each speaker, taken from unfiltered newspaper articles
- About 15 million parameters in this HMM set
- Used 750 base classes

% Word Error

| Adaptation | H2-dev'94 | H1 Nov'94 |
| --- | --- | --- |
| No | 8.30 | 7.93 |
| Yes | 7.28 | 7.18 |

Conclusion

- The MLLR approach can be used for both static and incremental adaptation
- The MLLR approach can be used for both supervised and unsupervised adaptation
- Dynamic regression classes
