
Flat-tree: A Convertible Data Center Network Architecture from Clos to Random Graph

Yiting Xia, T. S. Eugene Ng
Rice University

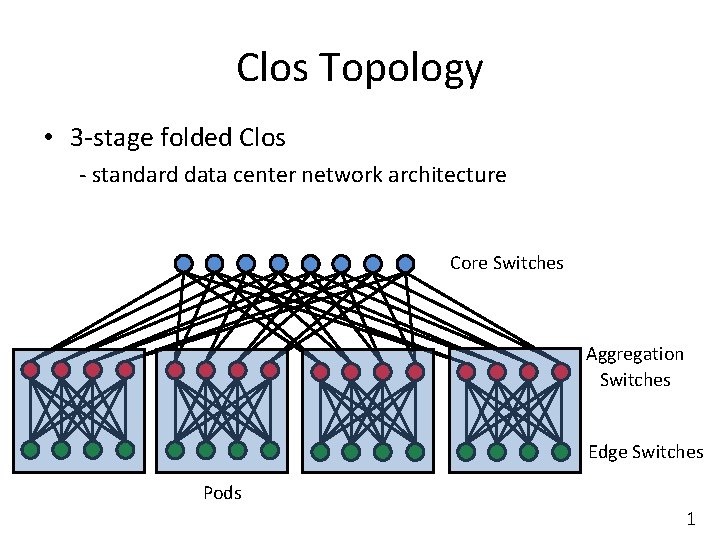

Clos Topology
• 3-stage folded Clos
  - standard data center network architecture

Figure labels: Core Switches, Aggregation Switches, Edge Switches, Pods

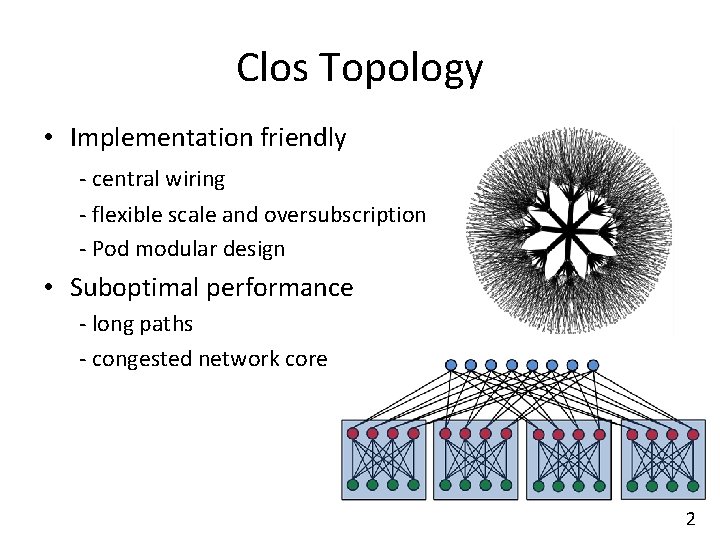

Clos Topology
• Implementation friendly
  - central wiring
  - flexible scale and oversubscription
  - Pod modular design
• Suboptimal performance
  - long paths
  - congested network core
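
To make "flexible scale and oversubscription" concrete, here is a minimal sketch. It assumes the 3-stage folded Clos is built as a k-ary fat-tree from identical k-port switches, which the slides do not state; the function names `fat_tree_size` and `oversubscription` are illustrative only.

```python
def fat_tree_size(k: int) -> dict:
    """Component counts for a k-ary fat-tree built from k-port switches.

    Assumption: the Clos network is a standard k-ary fat-tree (k even).
    """
    if k < 2 or k % 2:
        raise ValueError("k must be an even integer >= 2")
    half = k // 2
    return {
        "pods": k,
        "edge_switches": k * half,         # k/2 edge switches per Pod
        "aggregation_switches": k * half,  # k/2 aggregation switches per Pod
        "core_switches": half * half,
        "servers": k * half * half,        # k/2 servers per edge switch
    }


def oversubscription(server_ports: int, uplink_ports: int) -> float:
    """Server-facing to uplink capacity on one edge switch, assuming equal link speeds."""
    return server_ports / uplink_ports


if __name__ == "__main__":
    print(fat_tree_size(4))
    print(oversubscription(36, 12))
```

Changing `k` scales the whole network, while shifting ports between the server side and the uplink side of an edge switch changes the oversubscription ratio without touching the wiring pattern.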

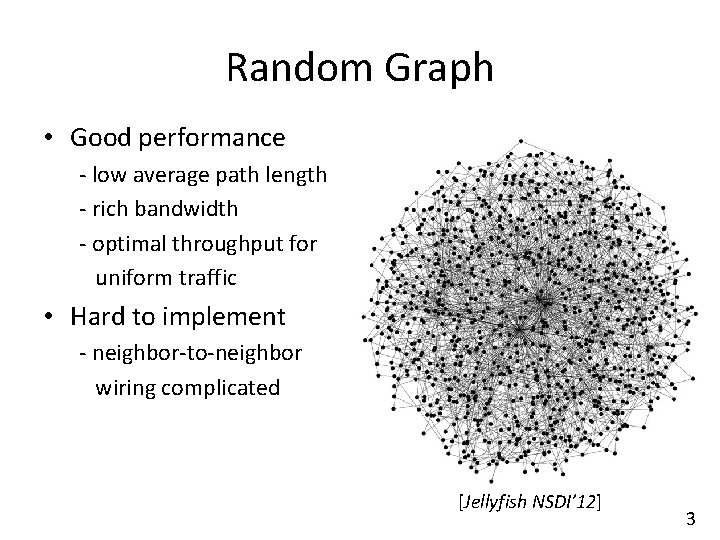

Random Graph
• Good performance
  - low average path length
  - rich bandwidth
  - optimal throughput for uniform traffic
• Hard to implement
  - neighbor-to-neighbor wiring complicated
[Jellyfish NSDI’12]

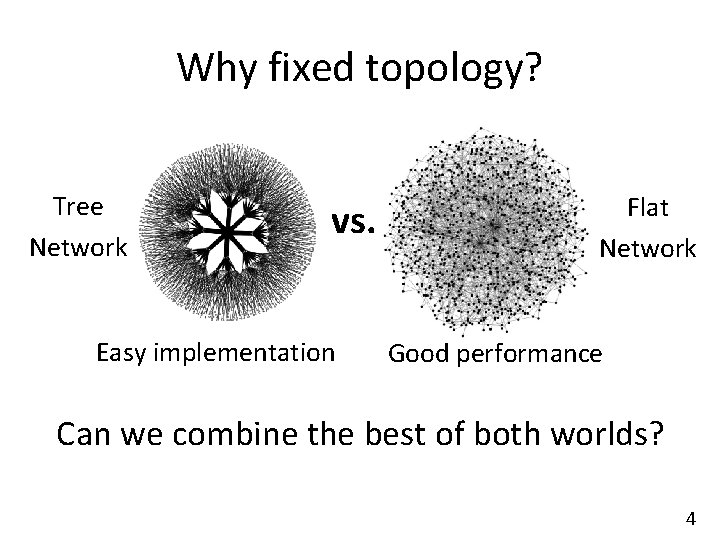

Why fixed topology?
Tree Network (easy implementation) vs. Flat Network (good performance)
Can we combine the best of both worlds?

Why fixed topology?
Fat-tree (SIGCOMM’08), BCube (SIGCOMM’09), DCell (SIGCOMM’08), HyperX (SC’09)
Easy implementation / Good performance
• Fluid data center traffic
  - each topology has sweet spots
  - one-size-fits-all topology impossible
• Cloud service constantly changing
  - fixed topology not adaptive to new demands

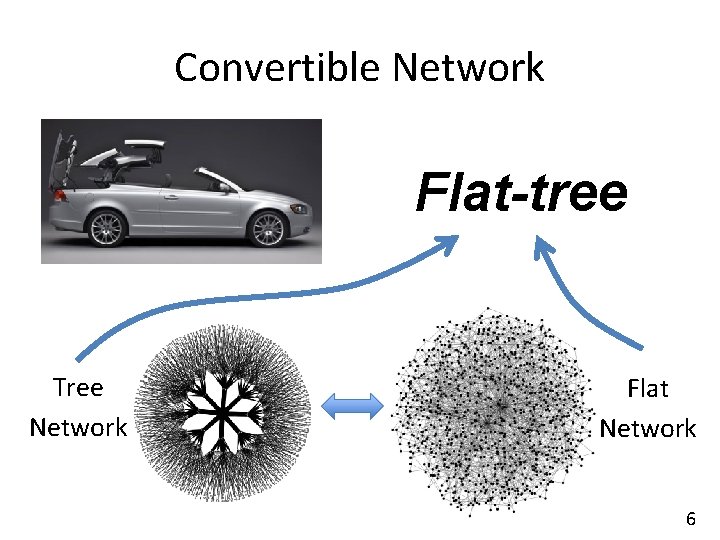

Convertible Network
Figure labels: Flat-tree, Tree Network, Flat Network

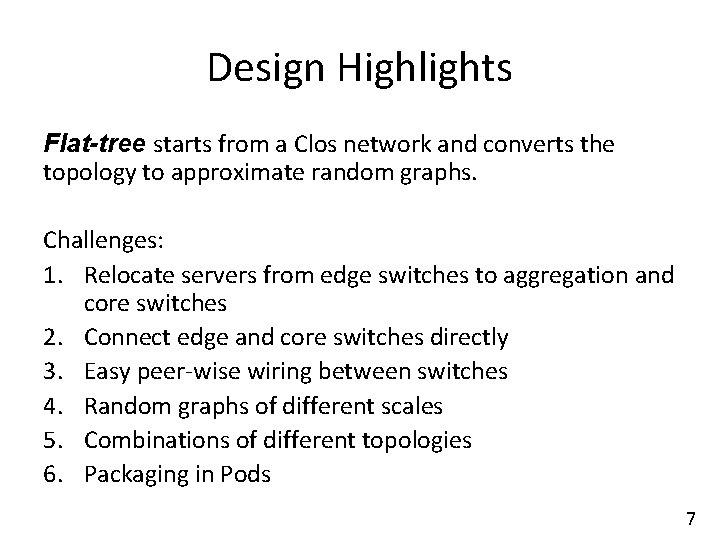

Design Highlights
Flat-tree starts from a Clos network and converts the topology to approximate random graphs.
Challenges:
1. Relocate servers from edge switches to aggregation and core switches
2. Connect edge and core switches directly
3. Easy peer-wise wiring between switches
4. Random graphs of different scales
5. Combinations of different topologies
6. Packaging in Pods

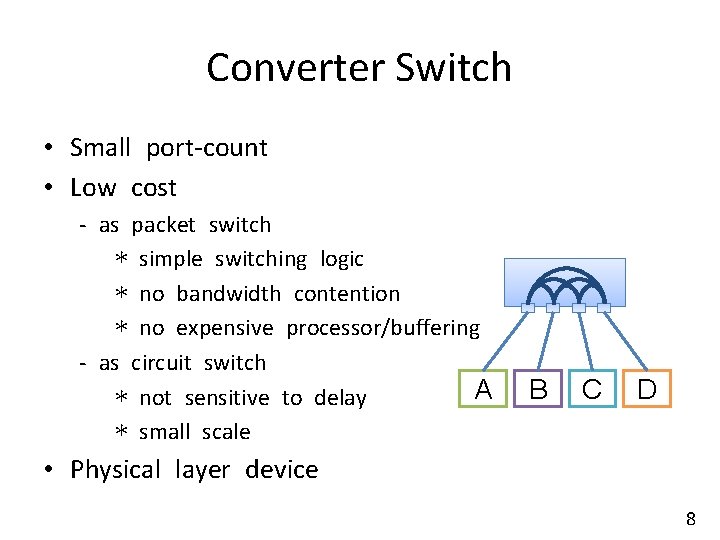

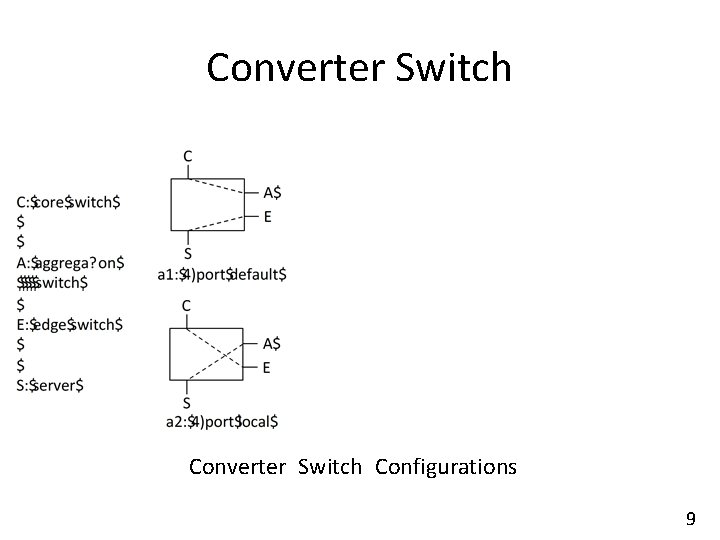

Converter Switch
• Small port-count
• Low cost
  - as packet switch
    * simple switching logic
    * no bandwidth contention
    * no expensive processor/buffering
  - as circuit switch
    * not sensitive to delay
    * small scale
• Physical layer device

Figure labels: A, B, C, D

Converter Switch Configurations

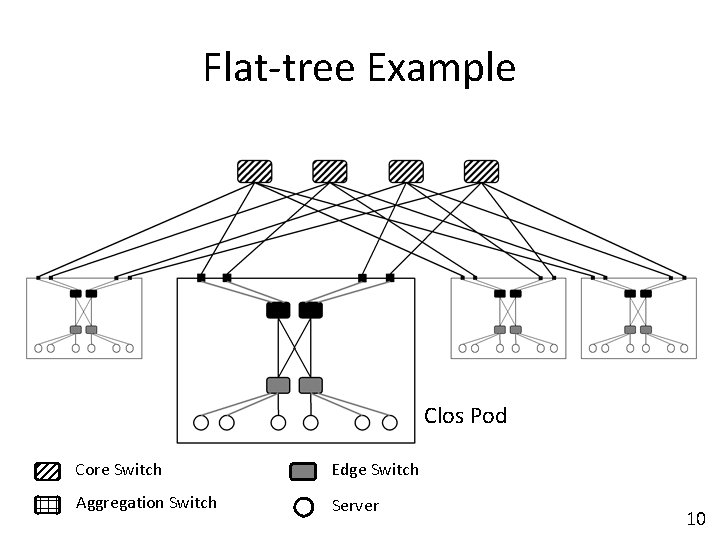

Flat-tree Example: Clos Pod
Legend: Core Switch, Edge Switch, Aggregation Switch, Server

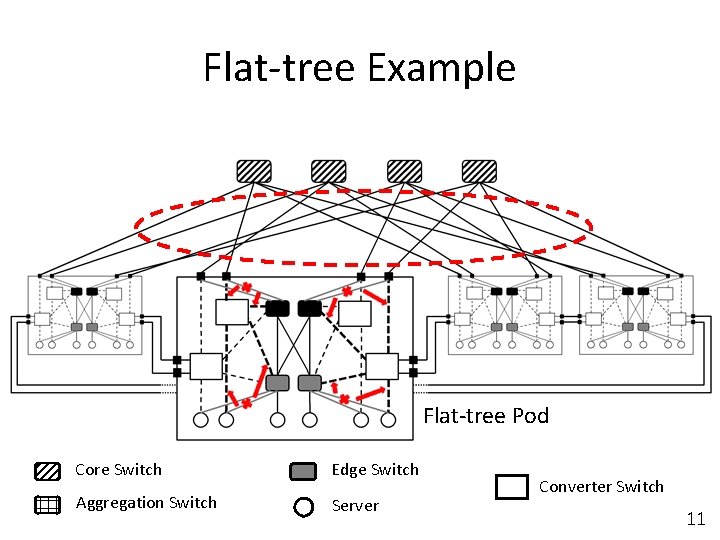

Flat-tree Example: Flat-tree Pod
Legend: Core Switch, Edge Switch, Aggregation Switch, Server, Converter Switch

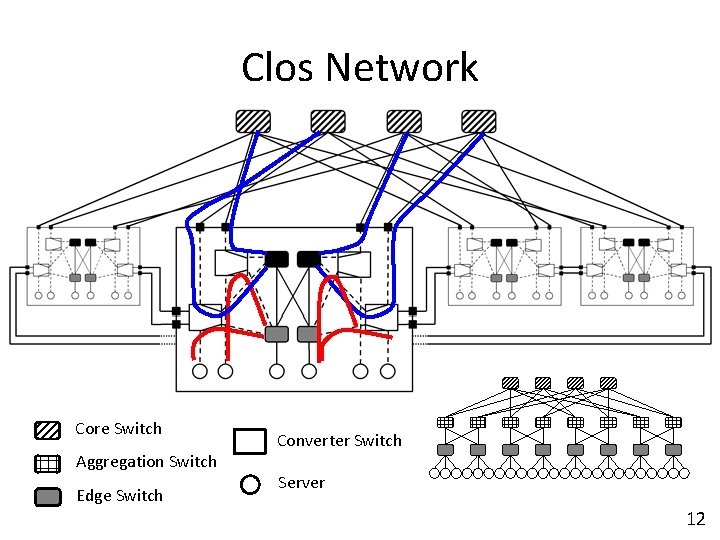

Clos Network
Legend: Core Switch, Aggregation Switch, Edge Switch, Converter Switch, Server

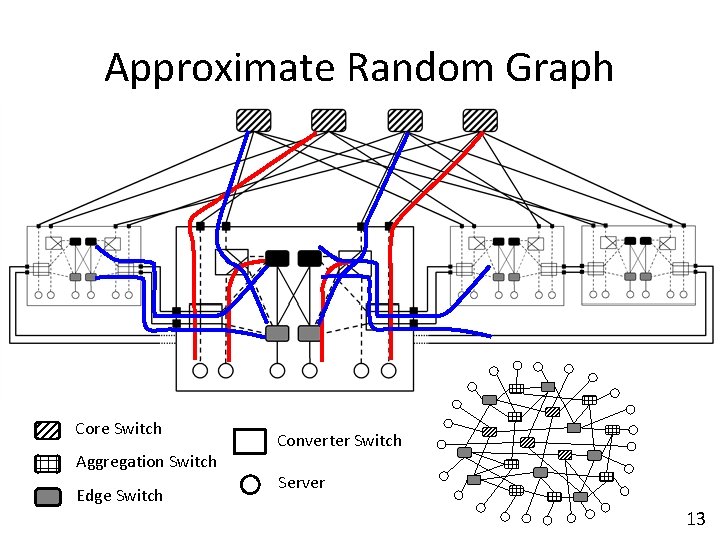

Approximate Random Graph
Legend: Core Switch, Aggregation Switch, Edge Switch, Converter Switch, Server

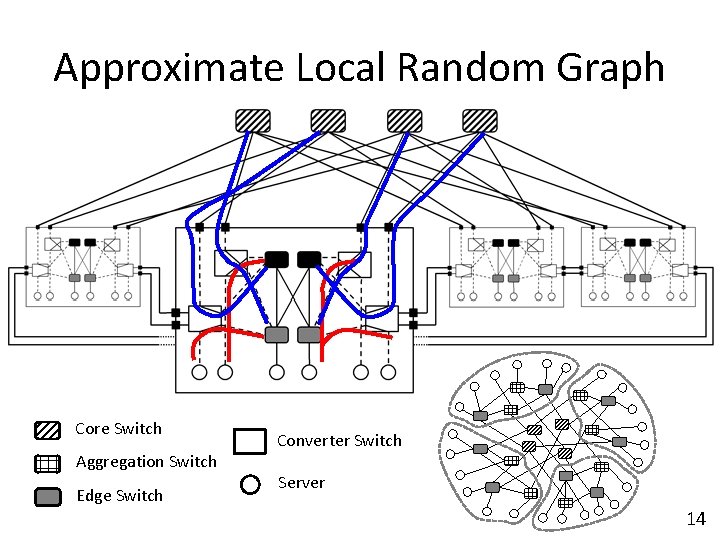

Approximate Local Random Graph
Legend: Core Switch, Aggregation Switch, Edge Switch, Converter Switch, Server

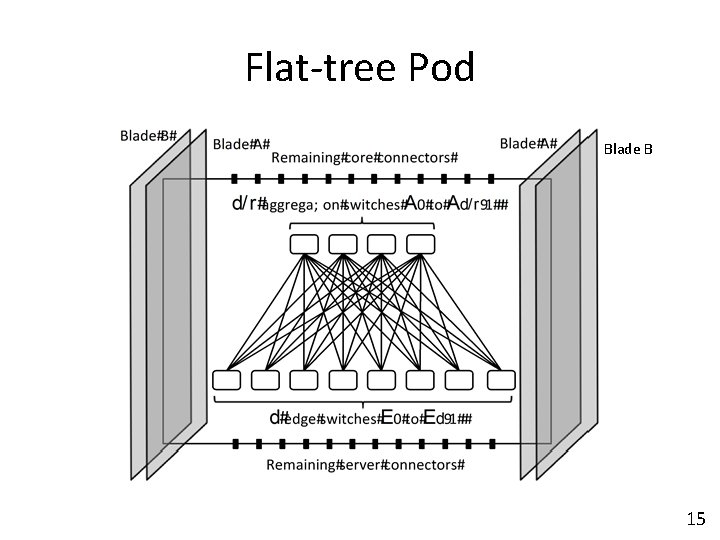

Flat-tree Pod
Figure label: Blade B

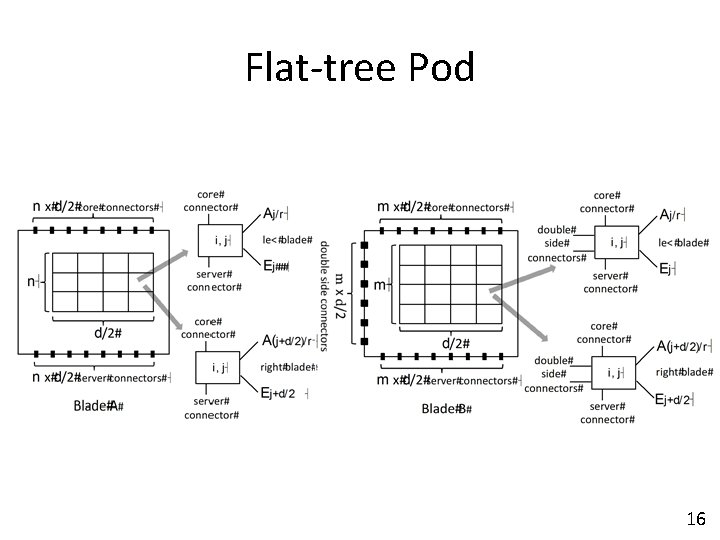

Flat-tree Pod

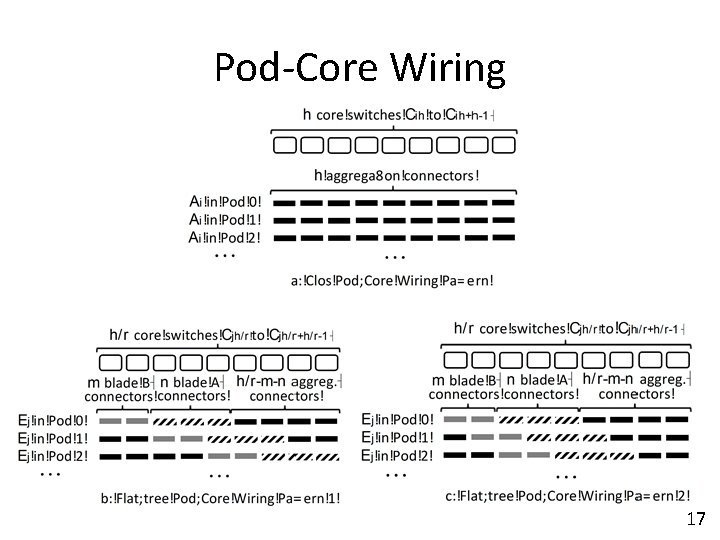

Pod-Core Wiring

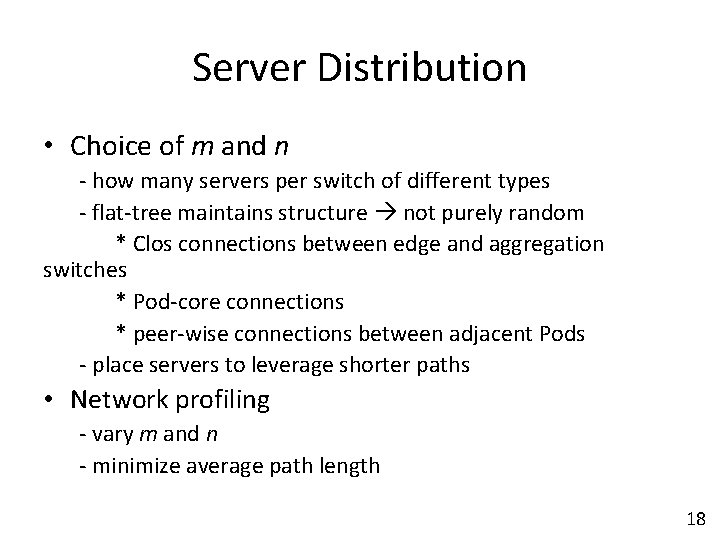

Server Distribution
• Choice of m and n
  - how many servers per switch of different types
  - flat-tree maintains structure, not purely random
    * Clos connections between edge and aggregation switches
    * Pod-core connections
    * peer-wise connections between adjacent Pods
  - place servers to leverage shorter paths
• Network profiling
  - vary m and n
  - minimize average path length
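
The profiling step above can be sketched as a search that scores each candidate topology by its average path length and keeps the smallest. This is only an illustration under assumptions: the slides do not say how the flat-tree graph is built for a given m and n, so the candidates here are toy graphs, and the names `average_path_length`, `best_setting`, and `ring` are hypothetical.

```python
from collections import deque


def average_path_length(adj):
    """Mean hop count over all node pairs of a connected, undirected graph.

    adj maps each node to the set of its neighbors.
    """
    nodes = list(adj)
    if len(nodes) < 2:
        raise ValueError("need at least two nodes")
    total = 0
    for src in nodes:
        # Breadth-first search gives shortest hop counts from src.
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        if len(dist) != len(nodes):
            raise ValueError("graph is not connected")
        total += sum(dist.values())
    return total / (len(nodes) * (len(nodes) - 1))


def best_setting(candidates):
    """Return the key whose graph has the lowest average path length.

    In a flat-tree study the keys would be (m, n) settings; here they are labels.
    """
    return min(candidates, key=lambda s: average_path_length(candidates[s]))


def ring(n):
    """Toy topology: n switches connected in a cycle."""
    return {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}


if __name__ == "__main__":
    plain = ring(6)
    chord = ring(6)
    chord[0].add(3)  # one extra shortcut link between opposite switches
    chord[3].add(0)
    candidates = {"ring": plain, "ring_plus_chord": chord}
    for name, graph in candidates.items():
        print(name, round(average_path_length(graph), 3))
    print("best:", best_setting(candidates))
```

A real profiling run would replace the toy graphs with the topology generated for each (m, n) pair and would likely measure server-to-server paths rather than switch-to-switch paths.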

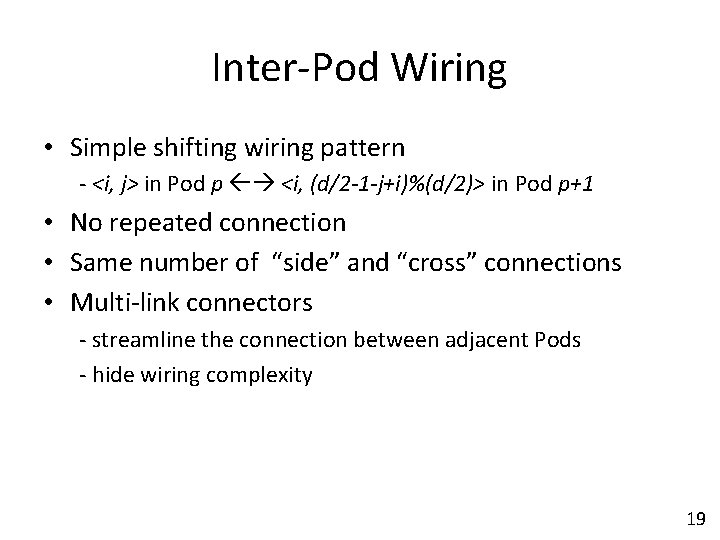

Inter-Pod Wiring
• Simple shifting wiring pattern
  - <i, j> in Pod p connects to <i, (d/2 - 1 - j + i) % (d/2)> in Pod p+1
• No repeated connection
• Same number of “side” and “cross” connections
• Multi-link connectors
  - streamline the connection between adjacent Pods
  - hide wiring complexity
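
The shifting pattern can be checked with a few lines of code. This is a sketch under assumptions the slide does not spell out: i and j are treated as 0-based row and column indices in the range 0 to d/2 - 1, and d is an even port count. The function names are illustrative.

```python
def next_pod_column(i: int, j: int, d: int) -> int:
    """Column in Pod p+1 that <i, j> in Pod p connects to, per the slide's formula."""
    half = d // 2
    return (half - 1 - j + i) % half


def row_wiring(i: int, d: int) -> dict:
    """Map every column j of row i in Pod p to its peer column in Pod p+1."""
    return {j: next_pod_column(i, j, d) for j in range(d // 2)}


def is_one_to_one(i: int, d: int) -> bool:
    """True if row i uses each column of Pod p+1 exactly once."""
    return sorted(row_wiring(i, d).values()) == list(range(d // 2))


if __name__ == "__main__":
    d = 8  # assumed port count, chosen only for illustration
    for i in range(d // 2):
        print(i, row_wiring(i, d), is_one_to_one(i, d))
```

Under these assumptions each row maps its columns onto a permutation of the next Pod's columns, and the permutation shifts by one position as i increases.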

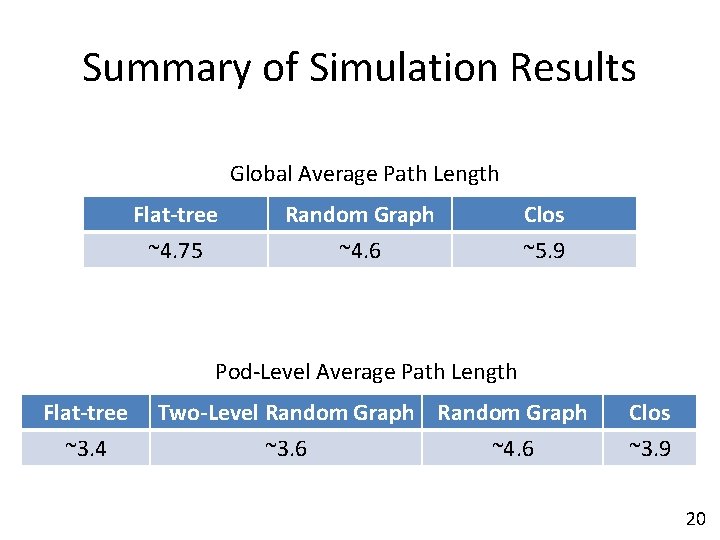

Summary of Simulation Results

Global Average Path Length
• Flat-tree: ~4.75
• Random Graph: ~4.6
• Clos: ~5.9

Pod-Level Average Path Length
• Flat-tree: ~3.4
• Two-Level Random Graph: ~3.6 ~4.6
• Clos: ~3.9
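
As a quick arithmetic check, the global figures above can be turned into relative gaps. The inputs are the approximate values read from the slide, so the results are only as precise as those readings; `percent_longer` is an illustrative helper name.

```python
def percent_longer(value: float, baseline: float) -> float:
    """How much longer `value` is than `baseline`, in percent."""
    return (value - baseline) / baseline * 100.0


# Approximate global average path lengths from the slide.
GLOBAL_APL = {"flat_tree": 4.75, "random_graph": 4.6, "clos": 5.9}

if __name__ == "__main__":
    gap_vs_random = percent_longer(GLOBAL_APL["flat_tree"], GLOBAL_APL["random_graph"])
    gap_clos = percent_longer(GLOBAL_APL["clos"], GLOBAL_APL["flat_tree"])
    print(f"flat-tree vs random graph: {gap_vs_random:.1f}% longer")
    print(f"Clos vs flat-tree: {gap_clos:.1f}% longer")
```

With these inputs the flat-tree gap to the random graph comes out a little above 3%, and the Clos paths are roughly a quarter longer than flat-tree paths.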

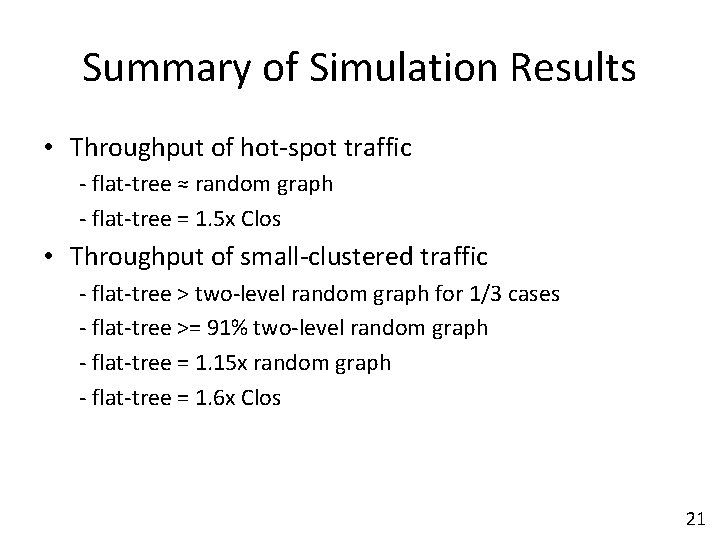

Summary of Simulation Results
• Throughput of hot-spot traffic
  - flat-tree ≈ random graph
  - flat-tree = 1.5x Clos
• Throughput of small-clustered traffic
  - flat-tree > two-level random graph for 1/3 of cases
  - flat-tree >= 91% of two-level random graph
  - flat-tree = 1.15x random graph
  - flat-tree = 1.6x Clos

Conclusion
• Flat-tree converts between Clos topology and random graphs of different scales
• Low cost
  - inexpensive converter switches
• Easy implementation
  - changes packaged in Pods
  - regular Pod-core wiring patterns
  - multi-links between adjacent Pods
• Hybrid mode
  - network zones with different topologies
• Performance similar to random graphs
  - < 5% longer average path length
  - < 9% lower throughput

Impact and Inspiration
• Flat-tree is one design point of convertible networks
• Motivates further study of the relationship between different topologies
• Traffic optimization
  - joint optimization with routing and workload placement
• Network management
  - self-recovery from failures
  - automatically scale the network up/down at busy/idle times
