Fitting Linear Models, Regularization & Cross Validation

Slides by: Joseph E. Gonzalez (jegonzal@cs.berkeley.edu)

Fall '18 updates: Fernando Perez (fernando.perez@berkeley.edu)

Previously

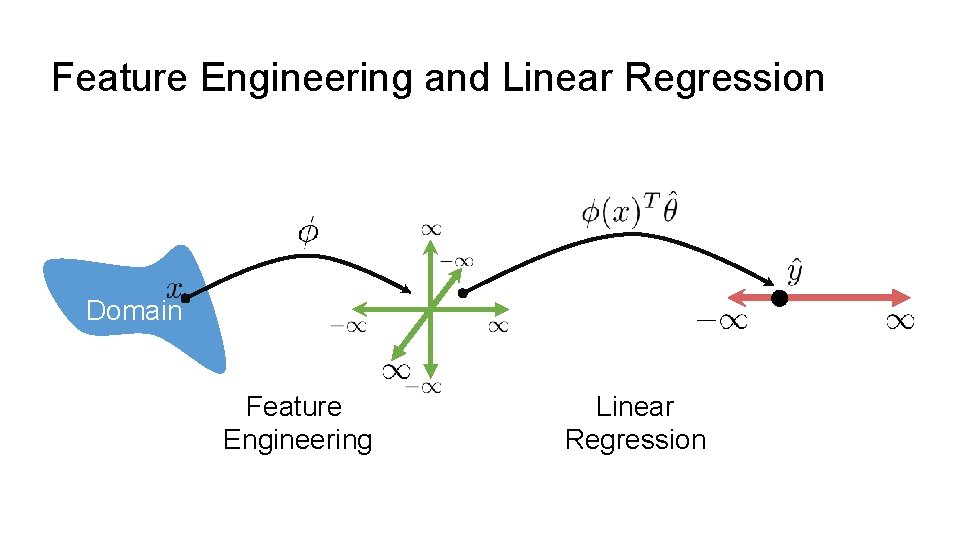

Feature Engineering and Linear Regression: Domain → Feature Engineering → Linear Regression

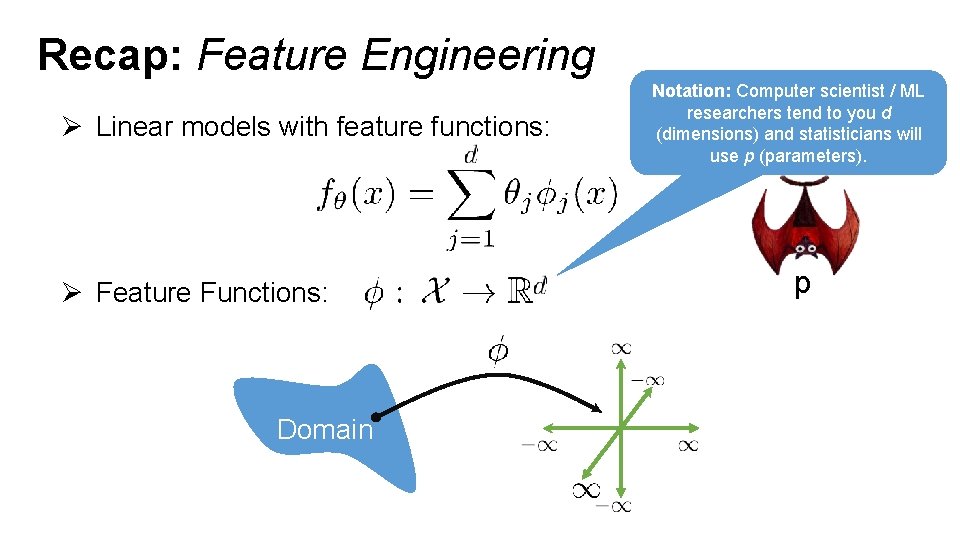

Recap: Feature Engineering

- Linear models with feature functions: $f_\theta(x) = \sum_{j=1}^{d} \theta_j \phi_j(x)$
- Feature functions: $\phi : \text{Domain} \to \mathbb{R}^d$

Notation: computer scientists and ML researchers tend to use $d$ (dimensions), while statisticians use $p$ (parameters).

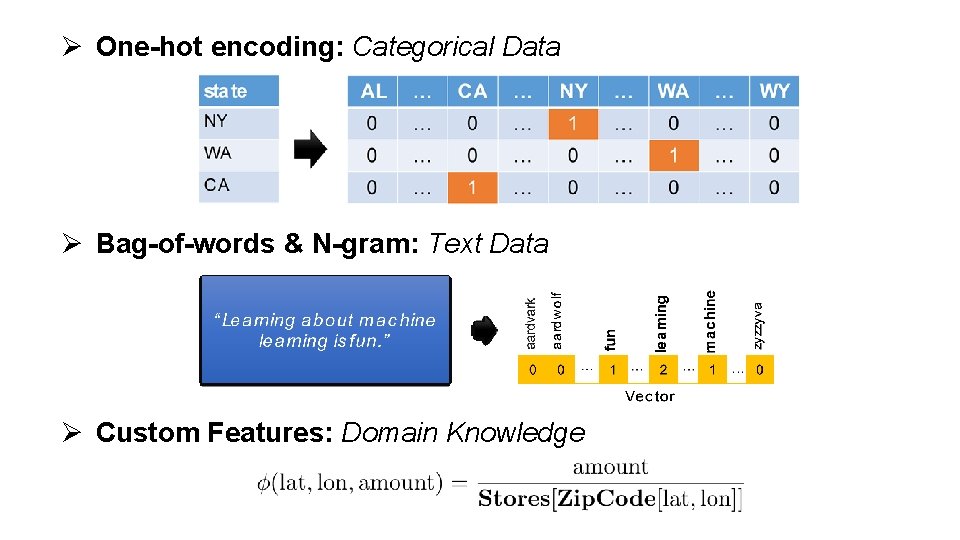

- One-hot encoding: categorical data
- Bag-of-words & N-gram: text data
- Custom features: domain knowledge

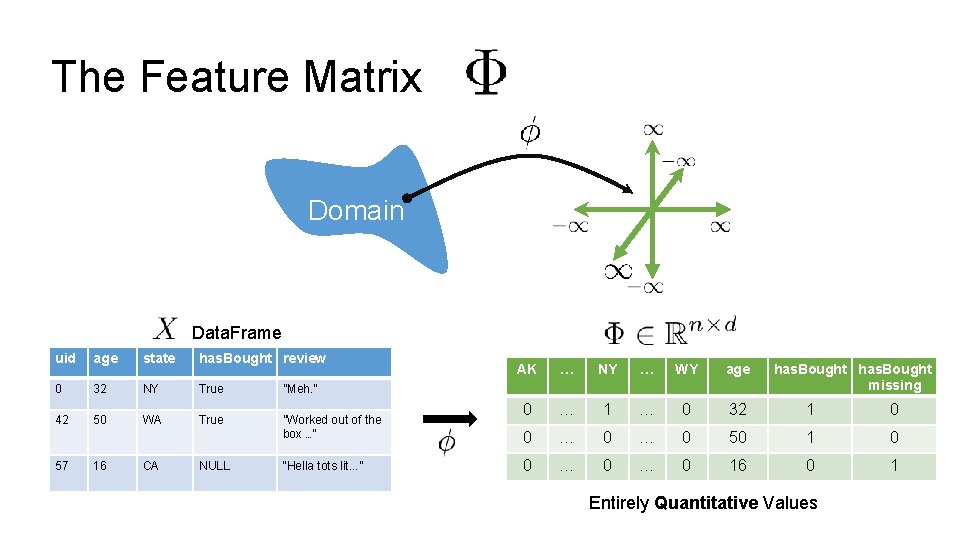

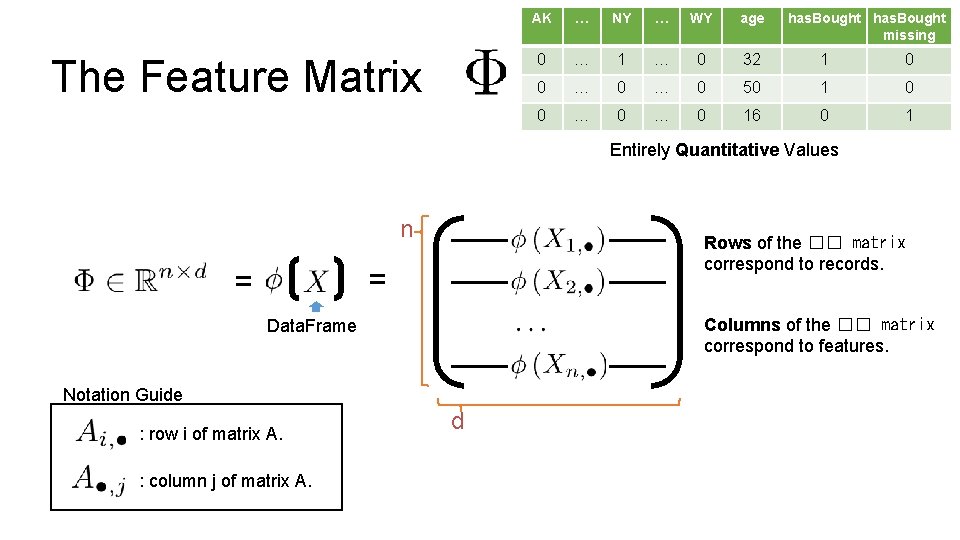

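The Feature Matrix

DataFrame (domain data):

| uid | age | state | hasBought | review |
|---|---|---|---|---|
| 0 | 32 | NY | True | "Meh." |
| 42 | 50 | WA | True | "Worked out of the box …" |
| 57 | 16 | CA | NULL | "Hella tots lit..." |

Feature matrix (entirely quantitative values):

| AK | … | NY | … | WY | age | hasBought | hasBought missing |
|---|---|---|---|---|---|---|---|
| 0 | … | 1 | … | 0 | 32 | 1 | 0 |
| 0 | … | 0 | … | 0 | 50 | 1 | 0 |
| 0 | … | 0 | … | 0 | 16 | 0 | 1 |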
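As a minimal sketch of how such a feature matrix might be built, the pandas snippet below one-hot encodes `state` and adds a missing-value indicator for `hasBought`. The column name `hasBought_missing` and the `state_` prefix are illustrative assumptions, only the three states present in the toy data get columns (the slide shows all states), and the `review` text is left out because it would need a bag-of-words encoding.

```python
import pandas as pd

# Toy records copied from the slide; NULL is represented as None.
df = pd.DataFrame({
    "uid": [0, 42, 57],
    "age": [32, 50, 16],
    "state": ["NY", "WA", "CA"],
    "hasBought": [True, True, None],
    "review": ["Meh.", "Worked out of the box ...", "Hella tots lit..."],
})

# One-hot encode the categorical column: one 0/1 column per state seen.
state_onehot = pd.get_dummies(df["state"], prefix="state").astype(int)

# Encode the boolean as 0/1 and record missingness in its own column.
has_bought = df["hasBought"].eq(True).astype(int).rename("hasBought")
has_bought_missing = df["hasBought"].isna().astype(int).rename("hasBought_missing")

# Assemble the entirely quantitative feature matrix.
Phi = pd.concat([state_onehot, df["age"], has_bought, has_bought_missing], axis=1)
print(Phi)
```

Keeping the missing indicator as a separate column lets the model learn a distinct weight for "unknown" instead of treating it as "did not buy".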

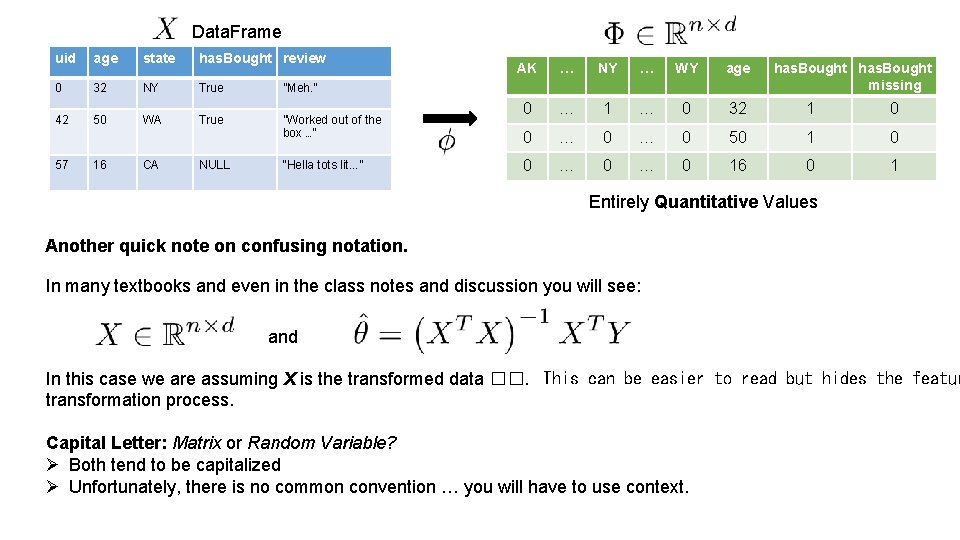

Another quick note on confusing notation. In many textbooks, and even in the class notes and discussion, you will see the model and its solution written in terms of $X$ rather than $\Phi$. In that case we are assuming $X$ is the transformed data $\Phi$. This can be easier to read but hides the feature transformation process.

Capital letter: matrix or random variable?

- Both tend to be capitalized.
- Unfortunately, there is no common convention; you will have to use context.

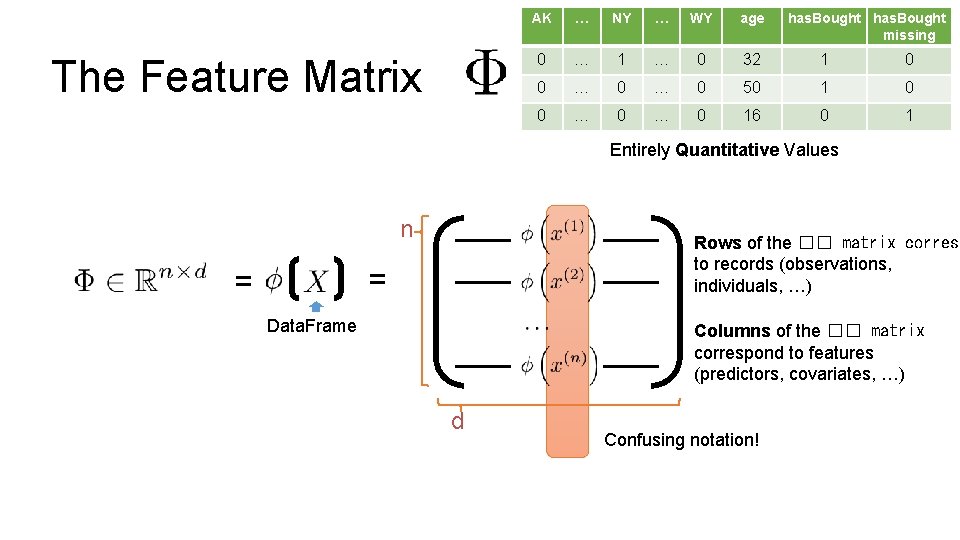

The Feature Matrix

- $\Phi \in \mathbb{R}^{n \times d}$ holds the entirely quantitative values derived from the DataFrame.
- The $n$ rows of the $\Phi$ matrix correspond to records (observations, individuals, …).
- The $d$ columns of the $\Phi$ matrix correspond to features (predictors, covariates, …).
- Confusing notation: $d$ here is the same quantity that statisticians call $p$.

Notation guide: separate symbols denote row $i$ of matrix $A$ and column $j$ of matrix $A$.

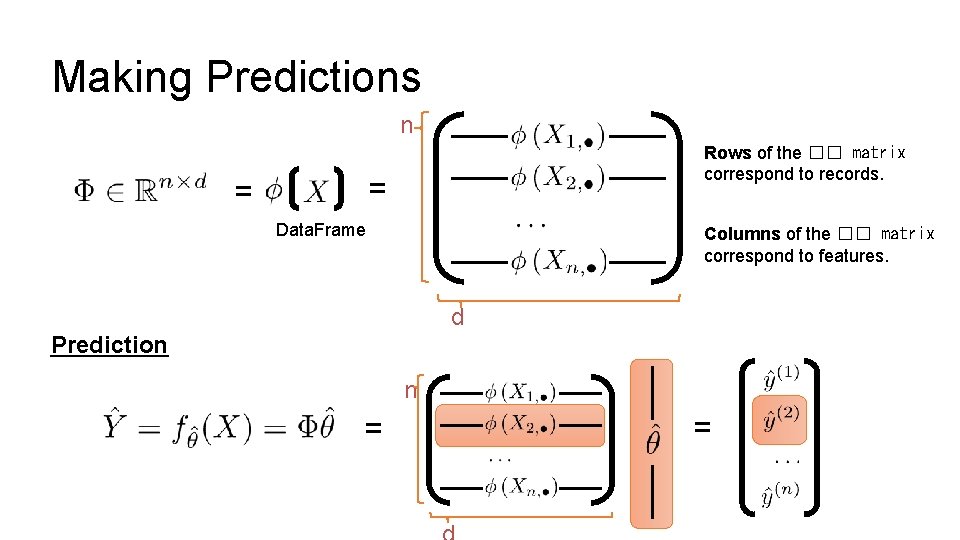

Making Predictions

- Rows of the $\Phi \in \mathbb{R}^{n \times d}$ matrix correspond to records; columns correspond to features.
- Prediction: $\hat{Y} = \Phi\theta$, where $\theta \in \mathbb{R}^{d}$ and $\hat{Y} \in \mathbb{R}^{n}$.

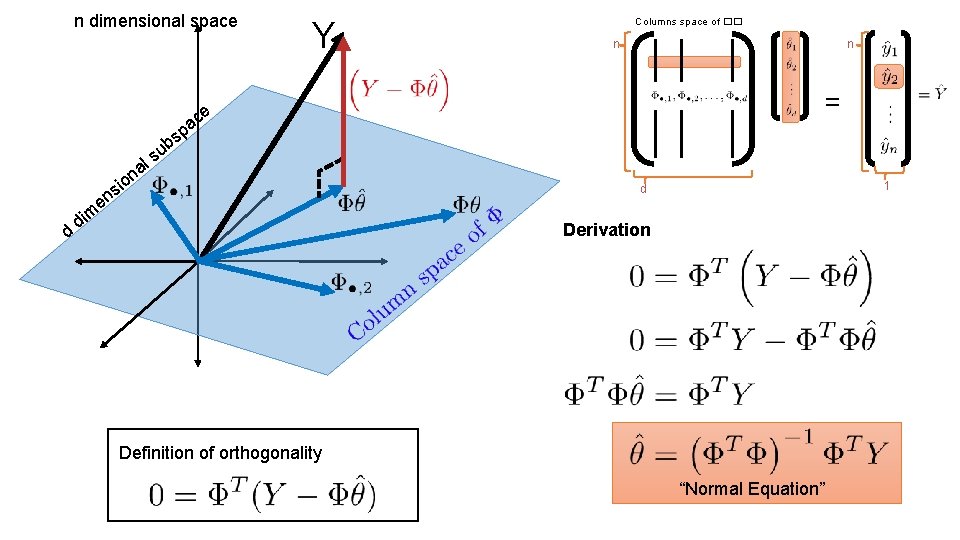

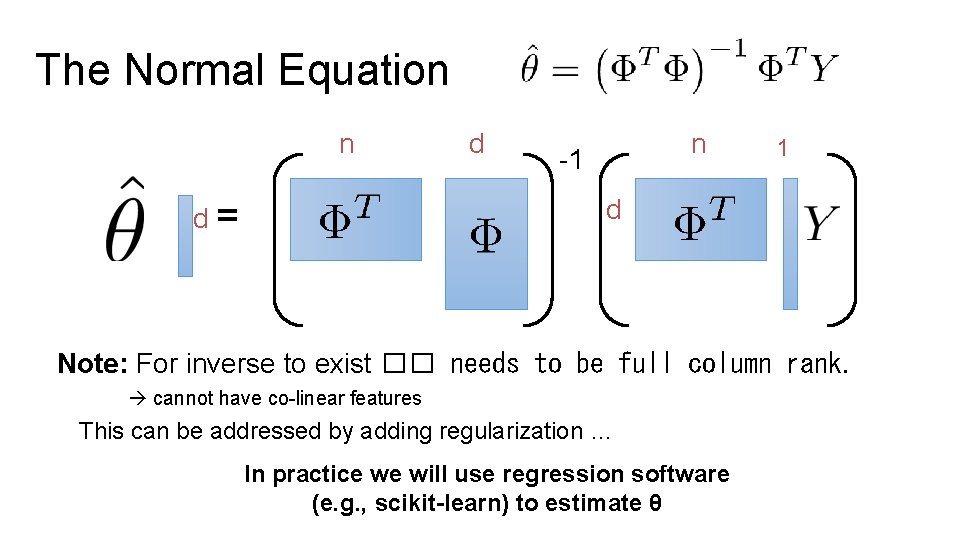

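Normal Equations

- Solution to the least squares model: $\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \lVert Y - \Phi\theta \rVert_2^2$
- Given by the normal equation: $\hat{\theta} = (\Phi^T \Phi)^{-1} \Phi^T Y$
- You should know this!
- You do not need to know the calculus-based derivation.
- You should know the geometric derivation …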

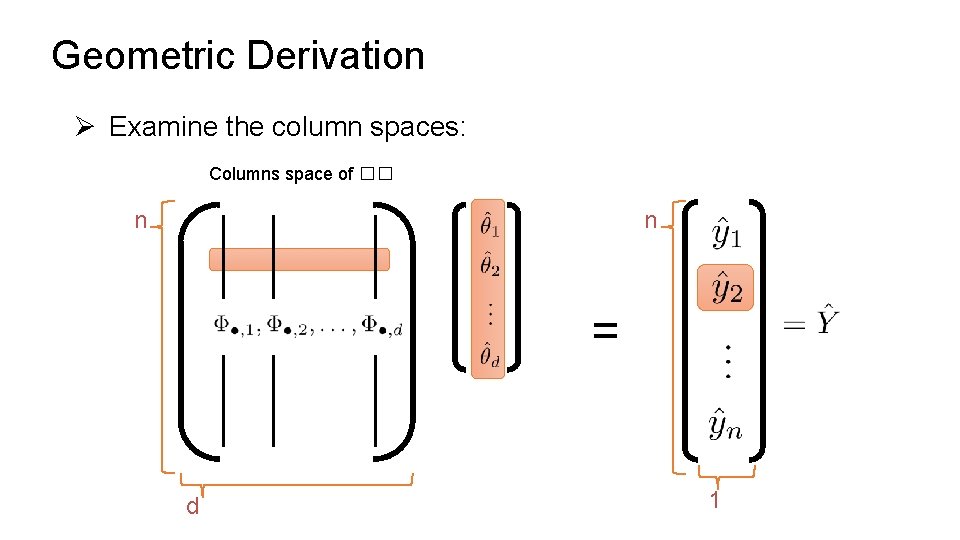

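Geometric Derivation

- Examine the column space of $\Phi \in \mathbb{R}^{n \times d}$: $Y \in \mathbb{R}^{n}$ lives in $n$-dimensional space, and the columns of $\Phi$ span a $d$-dimensional subspace of it.
- Derivation: the residual of the best fit is orthogonal to every column of $\Phi$ (definition of orthogonality): $\Phi^T (Y - \Phi\hat{\theta}) = 0$
- Rearranging gives the "normal equation": $\Phi^T \Phi \hat{\theta} = \Phi^T Y$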

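The Normal Equation

$\hat{\theta} = (\Phi^T \Phi)^{-1} \Phi^T Y$, where $\Phi^T \Phi$ is $d \times d$, $\Phi^T$ is $d \times n$, and $Y$ is $n \times 1$, so $\hat{\theta}$ is $d \times 1$.

- Note: for the inverse to exist, $\Phi$ needs to be full column rank, so it cannot have collinear features.
- This can be addressed by adding regularization …
- In practice we will use regression software (e.g., scikit-learn) to estimate $\theta$.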
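The normal equation and its geometric reading can be checked numerically. The NumPy sketch below uses synthetic data (the sizes, seed, and true weights are arbitrary assumptions) to solve the normal equation, confirm that the residual is orthogonal to every column, and show how a duplicated column destroys full column rank.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 100, 3
Phi = rng.normal(size=(n, d))                    # synthetic feature matrix
theta_true = np.array([2.0, -1.0, 0.5])          # arbitrary weights for the demo
Y = Phi @ theta_true + 0.1 * rng.normal(size=n)

# Solve (Phi^T Phi) theta = Phi^T Y directly rather than forming the inverse.
theta_hat = np.linalg.solve(Phi.T @ Phi, Phi.T @ Y)

# Geometric check: the residual is orthogonal to each column of Phi.
residual = Y - Phi @ theta_hat
print(np.abs(Phi.T @ residual).max())            # numerically close to zero

# Collinear features: repeating a column leaves the rank at d, not d + 1,
# so Phi^T Phi is singular and the inverse does not exist.
Phi_collinear = np.column_stack([Phi, Phi[:, 0]])
print(np.linalg.matrix_rank(Phi_collinear))
```

Using `np.linalg.solve` instead of an explicit inverse is a common numerical choice; library routines such as `np.linalg.lstsq` go further and also handle rank-deficient inputs.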

Least Squares Regression in Practice

- Use optimized software packages
  - Address numerical issues with matrix inversion
- Incorporate some form of regularization
  - Address issues of collinearity
  - Produce more robust models
- We will be using scikit-learn:
  - http://scikit-learn.org/stable/modules/linear_model.html
  - See Homework 6 for details!

Scikit Learn Models

- Scikit Learn has a wide range of models
- Many of the models follow a common pattern

Ordinary Least Squares Regression:

    from sklearn import linear_model

    # train_data and test_data are DataFrames with columns 'X' and 'Y'
    f = linear_model.LinearRegression(fit_intercept=True)
    f.fit(train_data[['X']], train_data['Y'])   # fit on the training data
    Yhat = f.predict(test_data[['X']])          # predict on the test data

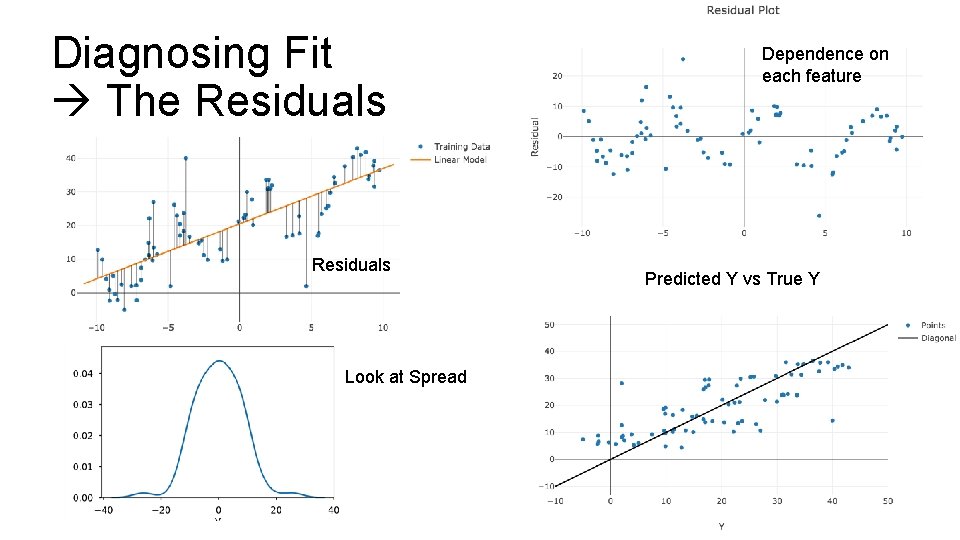

Diagnosing Fit: The Residuals

Look at:

- Spread
- Dependence on each feature
- Predicted Y vs. true Y

Regularization: Parametrically Controlling the Model Complexity

- Tradeoff controlled by $\lambda$:
  - Increase bias
  - Decrease variance

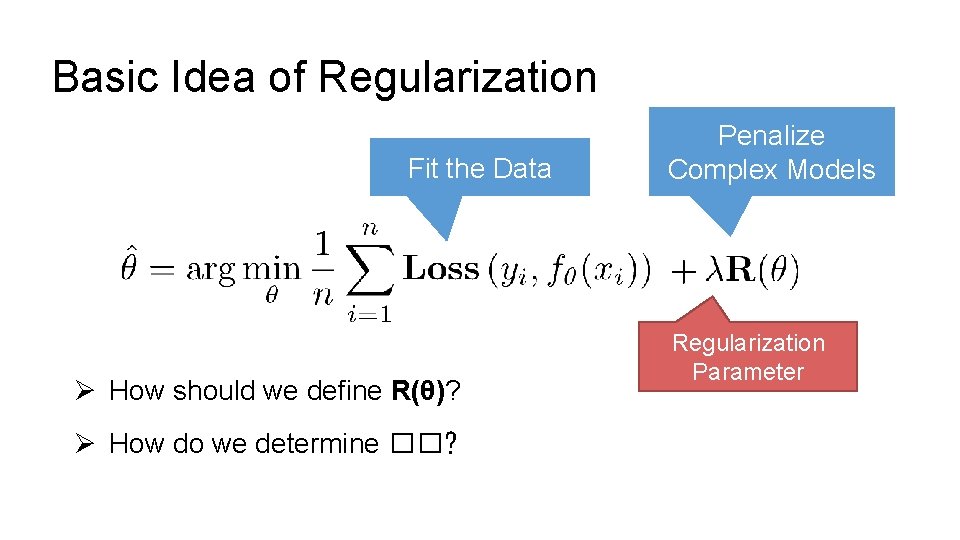

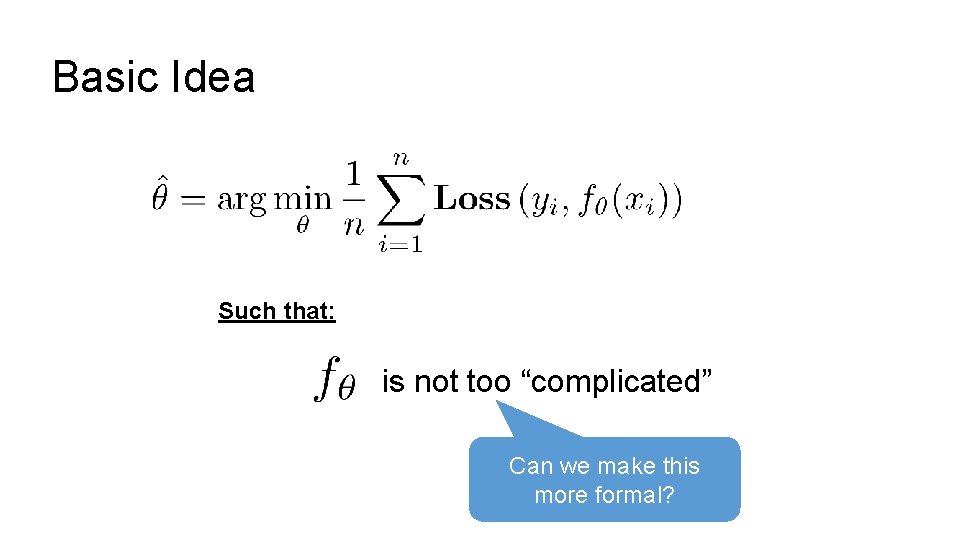

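Basic Idea of Regularization

$\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \text{Loss}(y_i, f_\theta(x_i)) + \lambda R(\theta)$

- First term: fit the data.
- Second term: penalize complex models, where $\lambda$ is the regularization parameter.
- How should we define $R(\theta)$?
- How do we determine $\lambda$?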

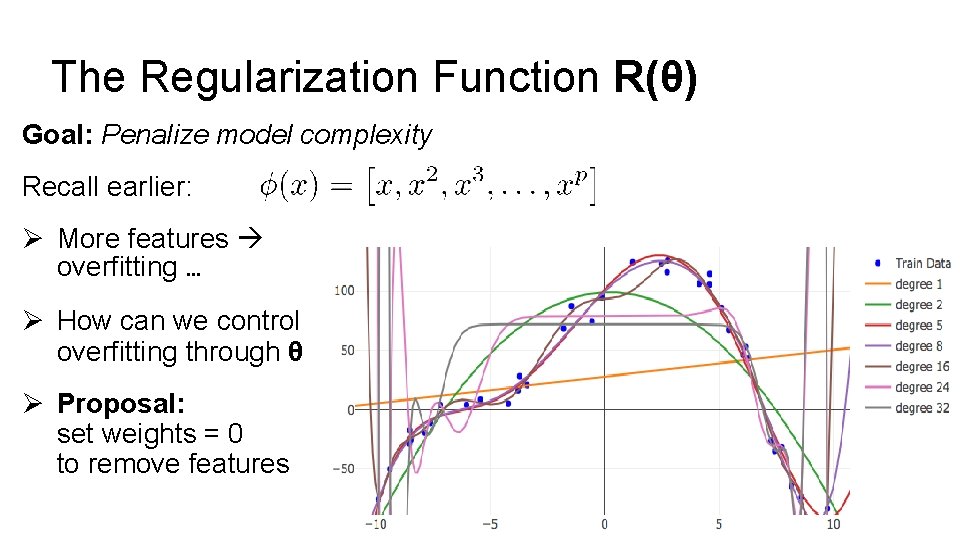

The Regularization Function $R(\theta)$

Goal: penalize model complexity. Recall earlier:

- More features → overfitting …
- How can we control overfitting through $\theta$?
- Proposal: set weights = 0 to remove features

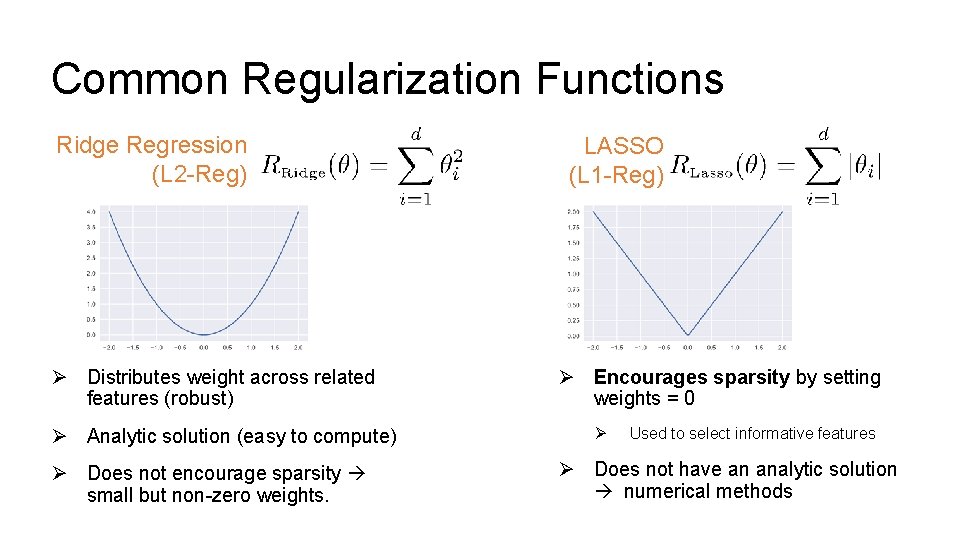

Common Regularization Functions

Ridge Regression (L2-Reg): $R(\theta) = \sum_{j=1}^{d} \theta_j^2$

- Distributes weight across related features (robust)
- Analytic solution (easy to compute)
- Does not encourage sparsity → small but non-zero weights

LASSO (L1-Reg): $R(\theta) = \sum_{j=1}^{d} \lvert \theta_j \rvert$

- Encourages sparsity by setting weights = 0
- Used to select informative features
- Does not have an analytic solution → numerical methods
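The contrast between the two penalties is easy to see on synthetic data. In the sketch below (the data, seed, and `alpha` values are arbitrary assumptions chosen for illustration), only the first two of ten features carry signal; Ridge keeps small non-zero weights on the rest, while Lasso sets them exactly to zero.

```python
import numpy as np
from sklearn.linear_model import Lasso, Ridge

rng = np.random.default_rng(42)
n, d = 200, 10
X = rng.normal(size=(n, d))

# Only the first two features are informative in this synthetic example.
theta_true = np.zeros(d)
theta_true[:2] = [3.0, -2.0]
y = X @ theta_true + 0.5 * rng.normal(size=n)

ridge = Ridge(alpha=1.0).fit(X, y)   # L2 penalty
lasso = Lasso(alpha=0.5).fit(X, y)   # L1 penalty

# Count coefficients that are exactly zero under each penalty.
print("ridge zero weights:", int(np.sum(ridge.coef_ == 0)))
print("lasso zero weights:", int(np.sum(lasso.coef_ == 0)))
print("lasso coefficients:", np.round(lasso.coef_, 2))
```

The surviving Lasso weights are shrunk toward zero relative to the true values, which is the bias that regularization trades for lower variance.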

Python Demo! The shapes of the norm balls. Maybe show reg. effects on actual models.

An alternate (dual) view on regularization: a little easier for geometrical intuition

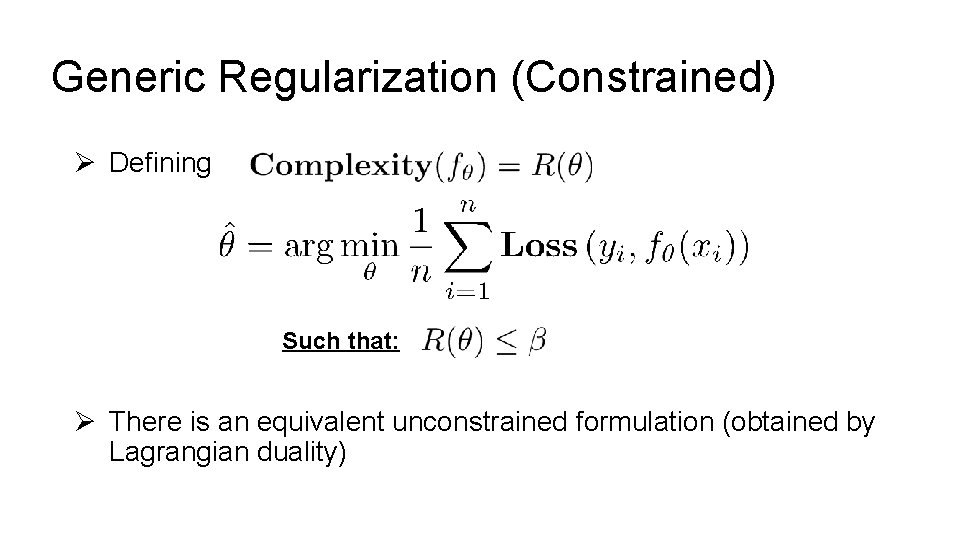

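Basic Idea

$\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \text{Loss}(y_i, f_\theta(x_i))$ such that $f_\theta$ is not too "complicated".

Can we make this more formal?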

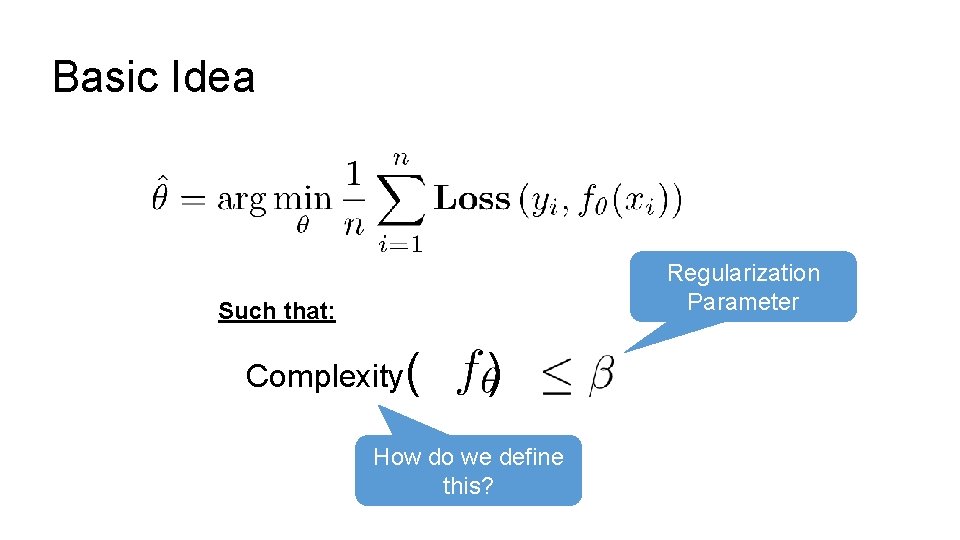

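Basic Idea

$\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \text{Loss}(y_i, f_\theta(x_i))$ such that $\text{Complexity}(f_\theta) \le \beta$, where $\beta$ is the regularization parameter.

How do we define $\text{Complexity}(f_\theta)$?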

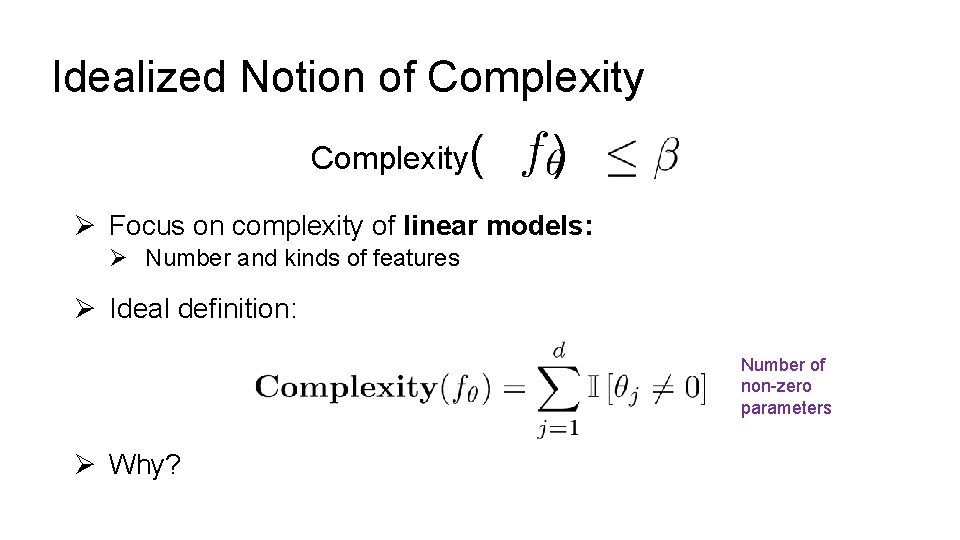

Idealized Notion of $\text{Complexity}(f_\theta)$

- Focus on complexity of linear models:
  - Number and kinds of features
- Ideal definition: number of non-zero parameters, $\text{Complexity}(f_\theta) = \sum_{j=1}^{d} \mathbb{I}[\theta_j \neq 0]$
- Why?

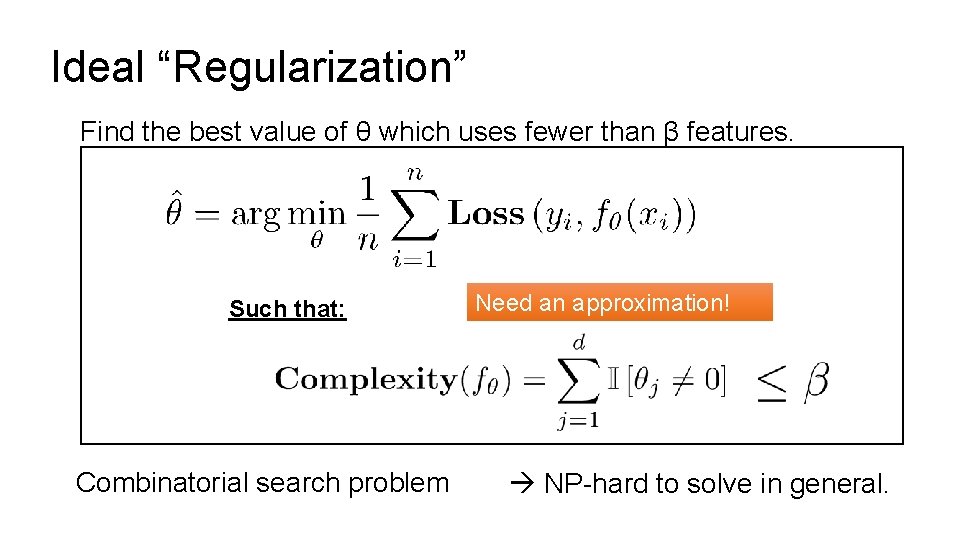

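Ideal "Regularization"

Find the best value of $\theta$ which uses at most $\beta$ features:

$\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \text{Loss}(y_i, f_\theta(x_i))$ such that $\sum_{j=1}^{d} \mathbb{I}[\theta_j \neq 0] \le \beta$

- Combinatorial search problem: NP-hard to solve in general.
- Need an approximation!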
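To make the combinatorial cost concrete, here is a brute-force sketch of the ideal problem for squared loss. The helper name `best_subset` and the synthetic data are assumptions for illustration; the function fits least squares on every feature subset of size at most `beta`, which is only feasible for very small `d`.

```python
from itertools import combinations

import numpy as np


def best_subset(Phi, Y, beta):
    """Exhaustively search feature subsets of size <= beta (hypothetical helper)."""
    n, d = Phi.shape
    best_err, best_cols = float(np.mean(Y ** 2)), ()   # empty model predicts 0
    n_tried = 1
    for k in range(1, beta + 1):
        for cols in combinations(range(d), k):
            sub = Phi[:, list(cols)]
            theta, *_ = np.linalg.lstsq(sub, Y, rcond=None)
            err = float(np.mean((Y - sub @ theta) ** 2))
            n_tried += 1
            if err < best_err:
                best_err, best_cols = err, cols
    return best_cols, best_err, n_tried


rng = np.random.default_rng(0)
Phi = rng.normal(size=(50, 6))
Y = 3.0 * Phi[:, 1] - 2.0 * Phi[:, 4] + 0.1 * rng.normal(size=50)

best_cols, best_err, n_tried = best_subset(Phi, Y, beta=2)
print(best_cols, n_tried)
```

With 6 features and `beta=2` the search already fits 22 models; the count grows combinatorially with `d` and `beta`, which is why a convex approximation is attractive.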

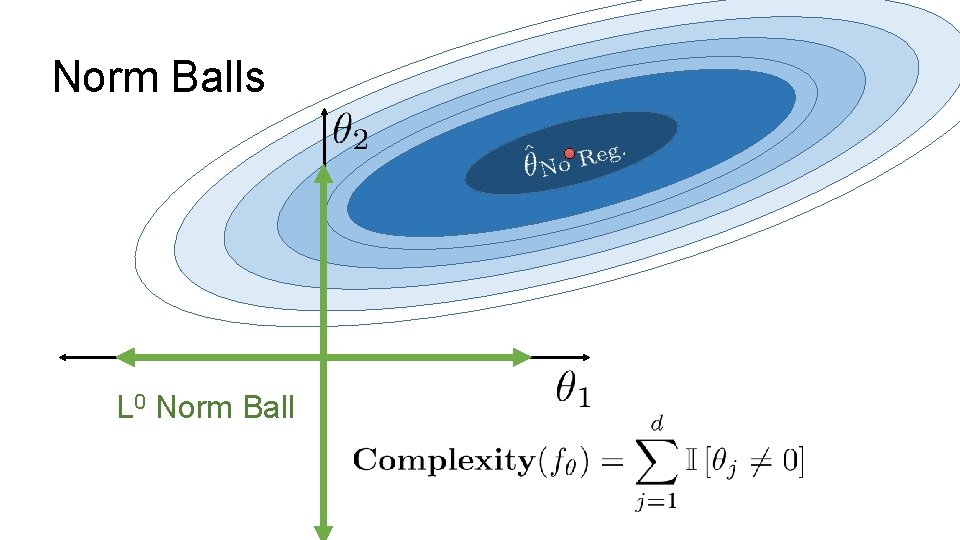

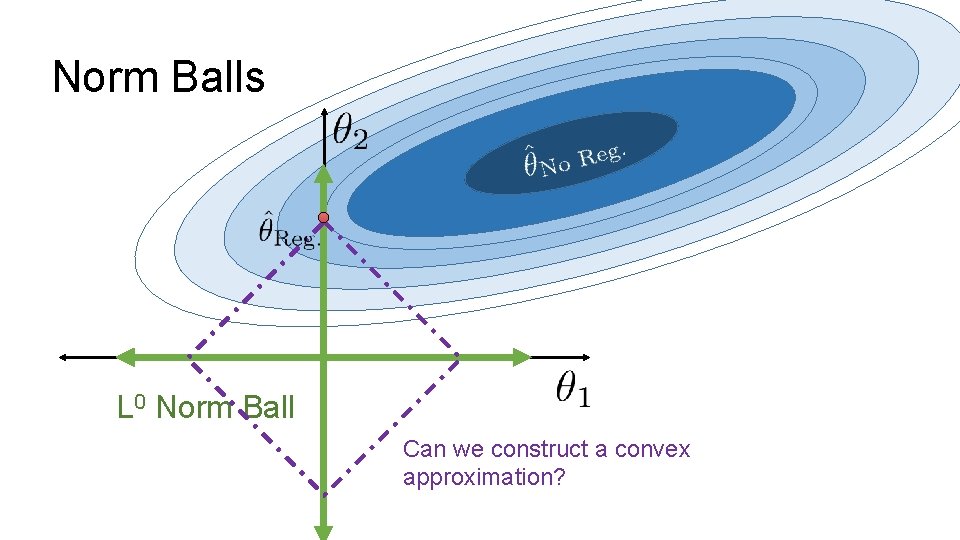

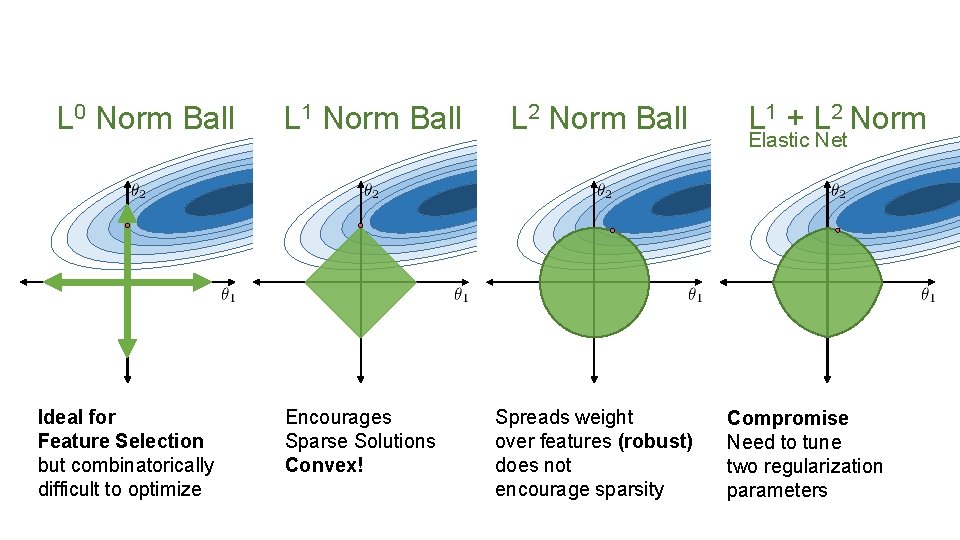

Norm Balls L 0 Norm Ball

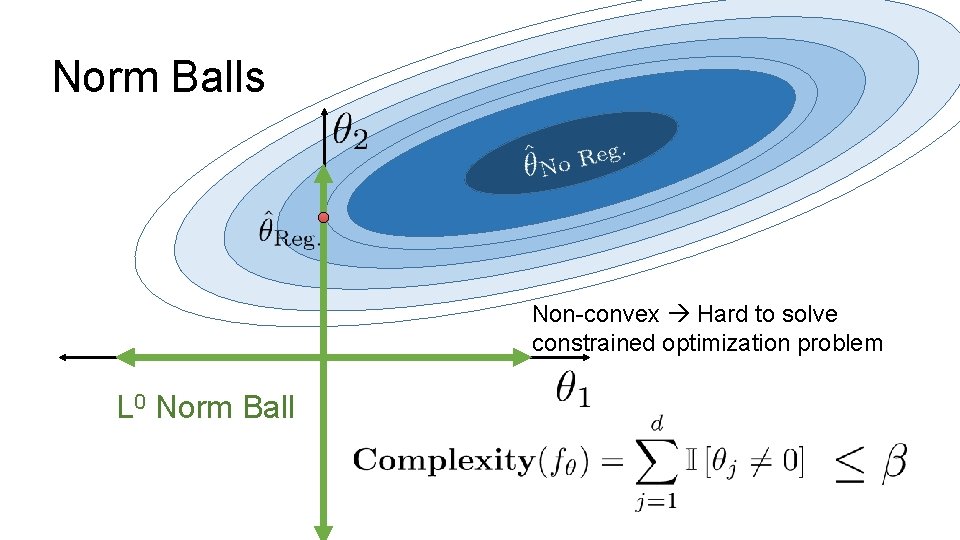

Norm Balls Non-convex Hard to solve constrained optimization problem L 0 Norm Ball

Norm Balls L 0 Norm Ball Can we construct a convex approximation?

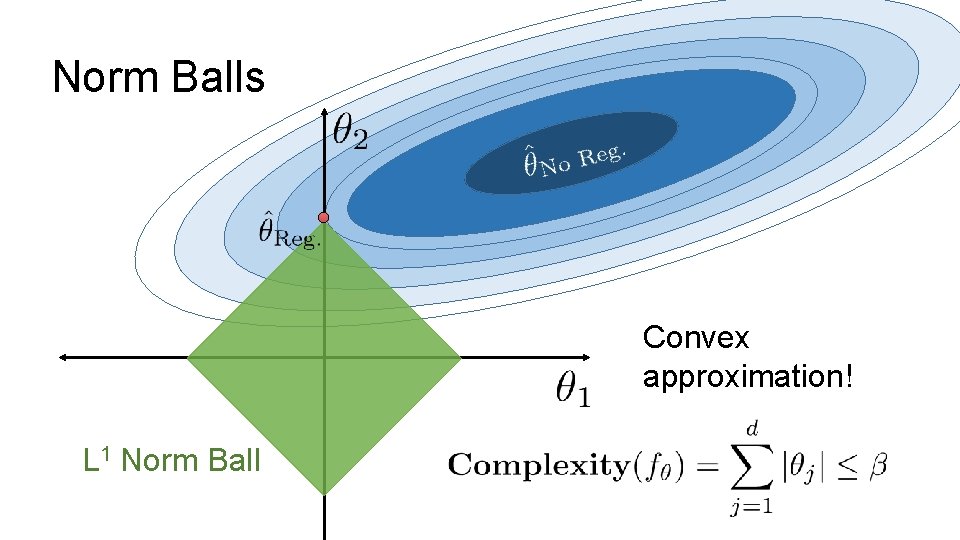

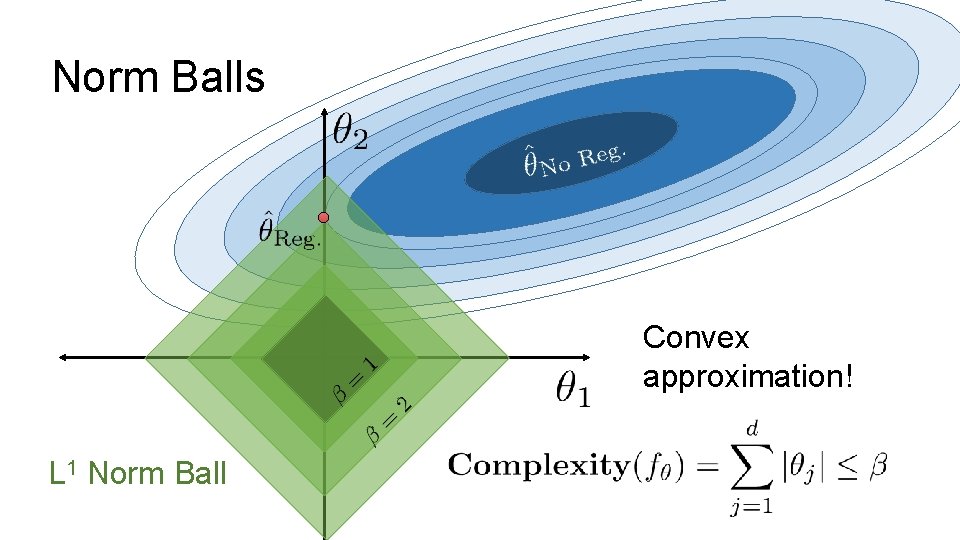

Norm Balls Convex approximation! L 1 Norm Ball

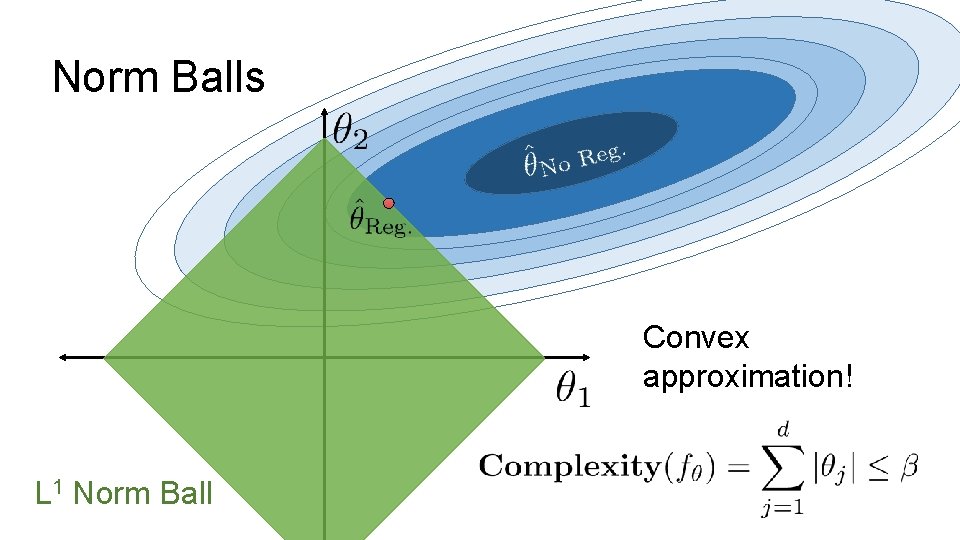

Norm Balls Convex approximation! L 1 Norm Ball

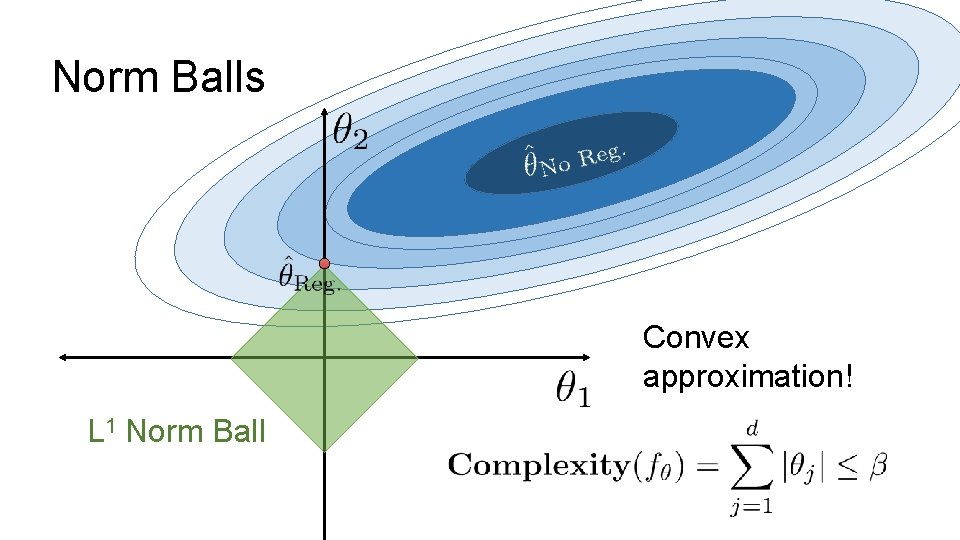

Norm Balls Convex approximation! L 1 Norm Ball

Norm Balls Convex approximation! L 1 Norm Ball

Norm Balls L 1 Norm Ball Other Approximations?

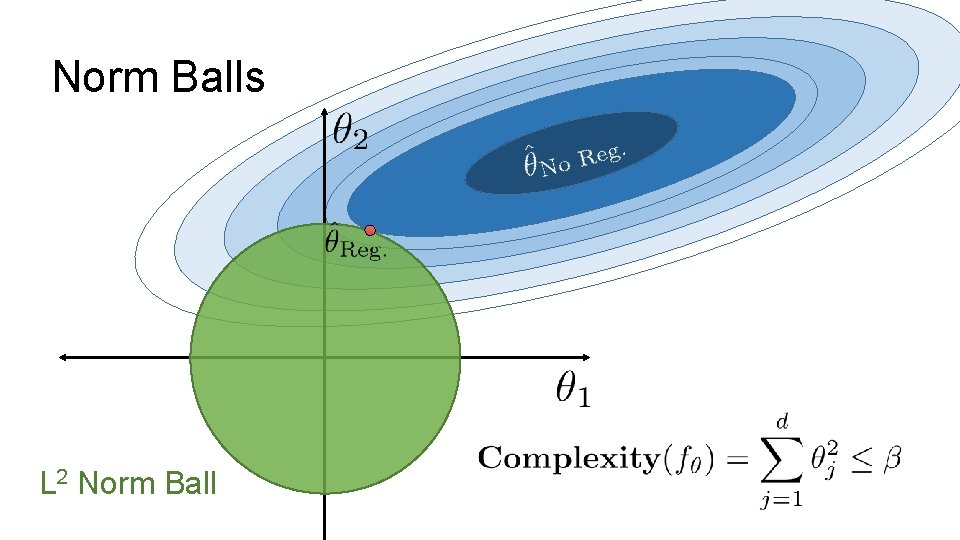

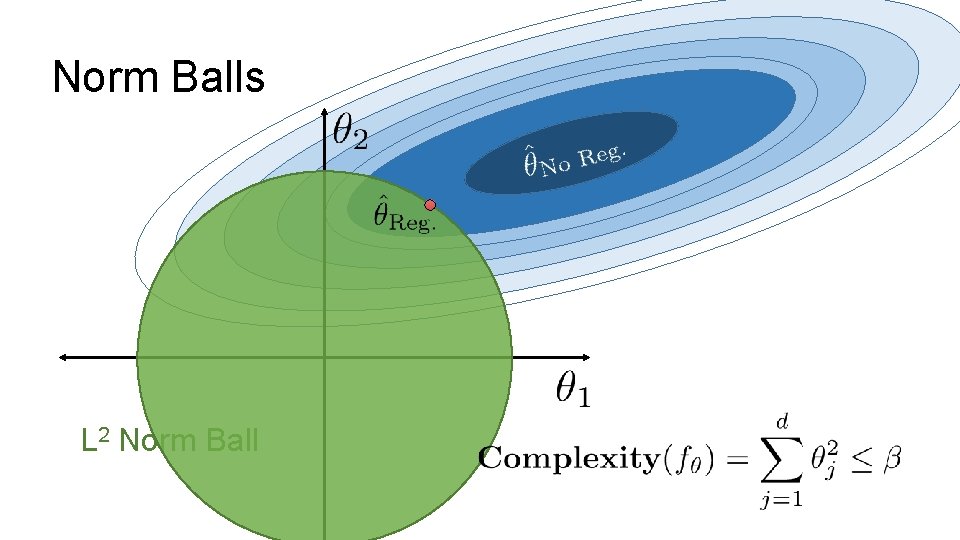

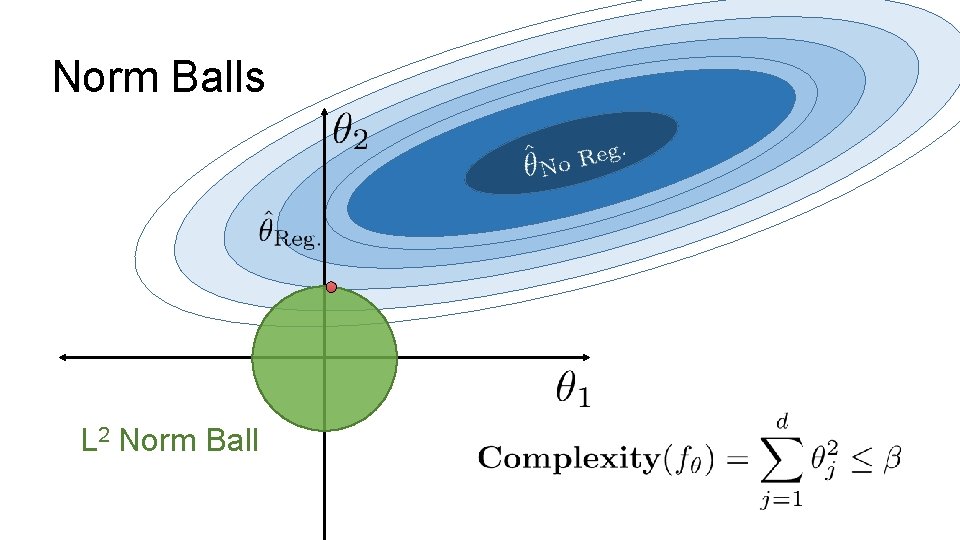

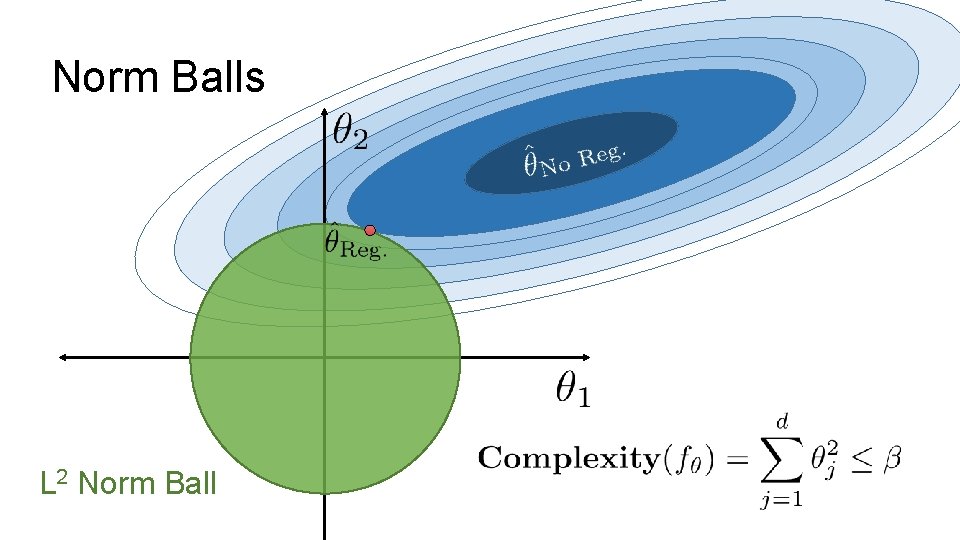

Norm Balls: L2 Norm Ball

- L0 norm ball: ideal for feature selection, but combinatorially difficult to optimize
- L1 norm ball: encourages sparse solutions; convex!
- L2 norm ball: spreads weight over features (robust); does not encourage sparsity
- L1 + L2 norm (elastic net): a compromise; need to tune two regularization parameters
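For reference, the quantities behind these norm balls can be computed directly. The helper names below are assumptions for this sketch; the "L0 norm" simply counts non-zero entries, and the $L^p$ norm is $(\sum_j |\theta_j|^p)^{1/p}$.

```python
import numpy as np


def l0_norm(theta):
    """Number of non-zero entries (not a true norm, but the usual name)."""
    return int(np.count_nonzero(theta))


def lp_norm(theta, p):
    """Standard L^p norm for p >= 1."""
    return float(np.sum(np.abs(theta) ** p) ** (1.0 / p))


theta = np.array([3.0, 0.0, -4.0])
print(l0_norm(theta))      # 2
print(lp_norm(theta, 1))   # 7.0
print(lp_norm(theta, 2))   # 5.0
```

A norm ball of radius $\beta$ is the set of all $\theta$ whose norm is at most $\beta$.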

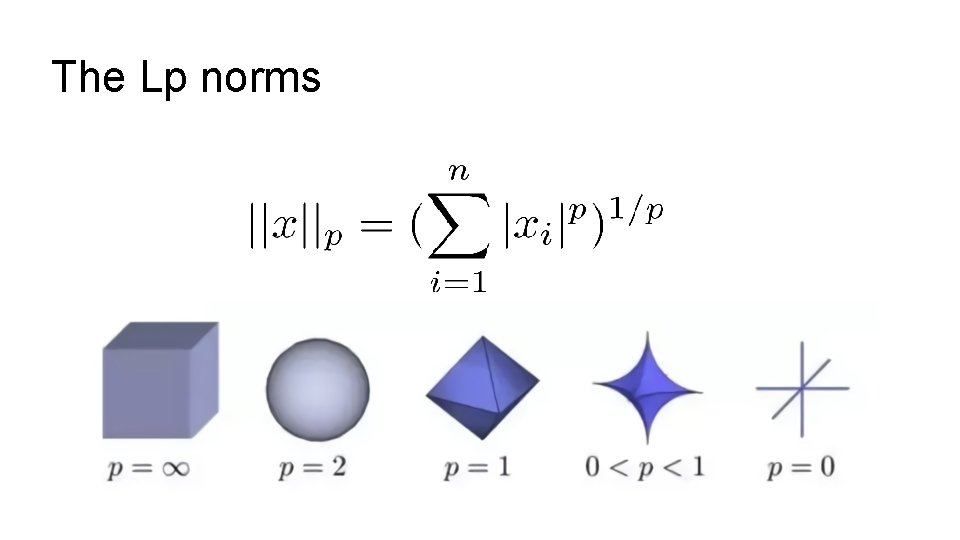

The Lp norms

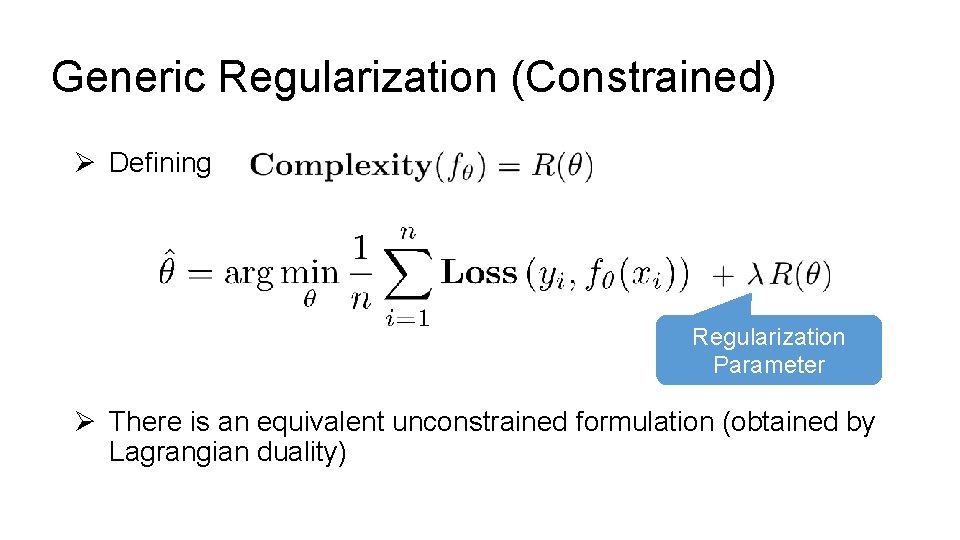

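Generic Regularization (Constrained)

- Defining the regularization function $R(\theta)$ (for example, an $L^p$ norm of $\theta$): $\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \text{Loss}(y_i, f_\theta(x_i))$ such that $R(\theta) \le \beta$
- There is an equivalent unconstrained formulation (obtained by Lagrangian duality): $\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \text{Loss}(y_i, f_\theta(x_i)) + \lambda R(\theta)$, where $\lambda$ is the regularization parameter.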

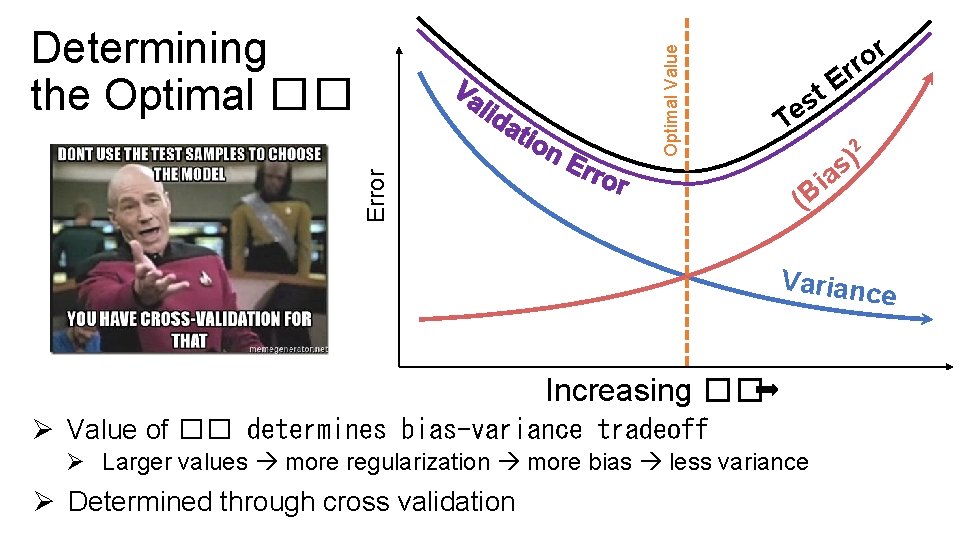

Determining the Optimal $\lambda$

- The value of $\lambda$ determines the bias-variance tradeoff
  - Larger values → more regularization → more bias → less variance
- How do we determine $\lambda$? It is determined through cross validation.

[Figure: test error, (bias)², and variance plotted against increasing $\lambda$, with the optimal value marked.]
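One way to carry out this search is to score a grid of candidate values with cross validation and keep the one with the lowest validation error. The sketch below assumes Ridge regression, synthetic data, and an arbitrary logarithmic grid; none of these choices come from the slides.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
n, d = 60, 30
X = rng.normal(size=(n, d))
theta_true = np.zeros(d)
theta_true[:3] = [2.0, -1.0, 0.5]
y = X @ theta_true + rng.normal(size=n)

lambdas = np.logspace(-3, 3, 13)   # candidate regularization strengths
cv_mse = []
for lam in lambdas:
    # scikit-learn reports negated MSE so that larger is always better.
    scores = cross_val_score(Ridge(alpha=lam), X, y, cv=5,
                             scoring="neg_mean_squared_error")
    cv_mse.append(-scores.mean())

best_lambda = lambdas[int(np.argmin(cv_mse))]
print("best lambda:", best_lambda)
```

Plotting `cv_mse` against `lambdas` on a log axis typically gives a U-shaped curve like the test error curve in the figure.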

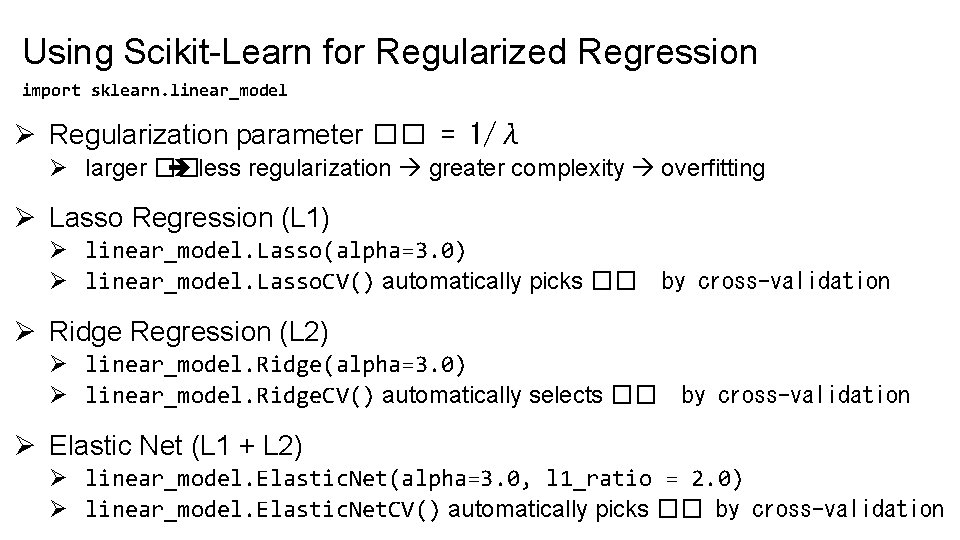

Using Scikit-Learn for Regularized Regression

`from sklearn import linear_model`

- Regularization parameter: `alpha` plays the role of $\lambda$ (it multiplies the penalty term)
  - Larger `alpha` → more regularization → lower complexity → risk of underfitting
- Lasso Regression (L1)
  - `linear_model.Lasso(alpha=3.0)`
  - `linear_model.LassoCV()` automatically picks `alpha` by cross-validation
- Ridge Regression (L2)
  - `linear_model.Ridge(alpha=3.0)`
  - `linear_model.RidgeCV()` automatically selects `alpha` by cross-validation
- Elastic Net (L1 + L2)
  - `linear_model.ElasticNet(alpha=3.0, l1_ratio=0.5)` (`l1_ratio` must lie between 0 and 1)
  - `linear_model.ElasticNetCV()` automatically picks `alpha` by cross-validation
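A short sketch of the cross-validated estimators follows. The synthetic data, the `alphas` grid, and the `l1_ratio` candidates are assumptions for illustration; each fitted estimator exposes its chosen strength as `alpha_`.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV, LassoCV, RidgeCV

rng = np.random.default_rng(1)
n, d = 120, 8
X = rng.normal(size=(n, d))
y = 2.0 * X[:, 0] - 1.0 * X[:, 3] + 0.5 * rng.normal(size=n)

alphas = np.logspace(-3, 2, 20)   # assumed candidate grid

ridge = RidgeCV(alphas=alphas).fit(X, y)
lasso = LassoCV(alphas=alphas, cv=5).fit(X, y)
enet = ElasticNetCV(alphas=alphas, l1_ratio=[0.2, 0.5, 0.8], cv=5).fit(X, y)

print("ridge alpha:", ridge.alpha_)
print("lasso alpha:", lasso.alpha_)
print("elastic net alpha and l1_ratio:", enet.alpha_, enet.l1_ratio_)
```

Elastic net has two knobs, so `ElasticNetCV` searches over both `alphas` and the supplied `l1_ratio` candidates.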

http://bit.ly/ds100-fa18-opq

Notebook Demo

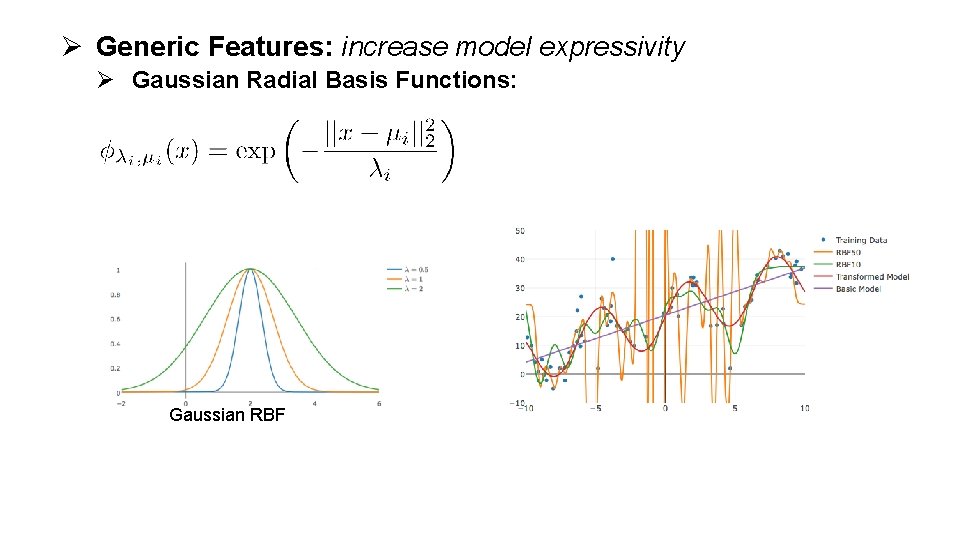

- Generic features: increase model expressivity
- Gaussian radial basis functions (Gaussian RBF)
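The exact RBF parameterization used in the notebook is not shown here, so the sketch below assumes one common form, $\phi_j(x) = \exp\left(-\frac{(x - u_j)^2}{2\sigma^2}\right)$, with hypothetical names `centers` for the $u_j$ and `bandwidth` for $\sigma$.

```python
import numpy as np


def gaussian_rbf_features(x, centers, bandwidth):
    """Map 1-D inputs to one Gaussian bump feature per center (assumed form)."""
    x = np.asarray(x, dtype=float).reshape(-1, 1)               # shape (n, 1)
    centers = np.asarray(centers, dtype=float).reshape(1, -1)   # shape (1, d)
    return np.exp(-((x - centers) ** 2) / (2.0 * bandwidth ** 2))


x = np.linspace(0, 1, 5)
Phi = gaussian_rbf_features(x, centers=[0.0, 0.5, 1.0], bandwidth=0.25)
print(Phi.shape)   # (5, 3)
print(np.round(Phi, 3))
```

Adding more centers or shrinking the bandwidth increases expressivity, which is what makes these features prone to overfitting without regularization.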

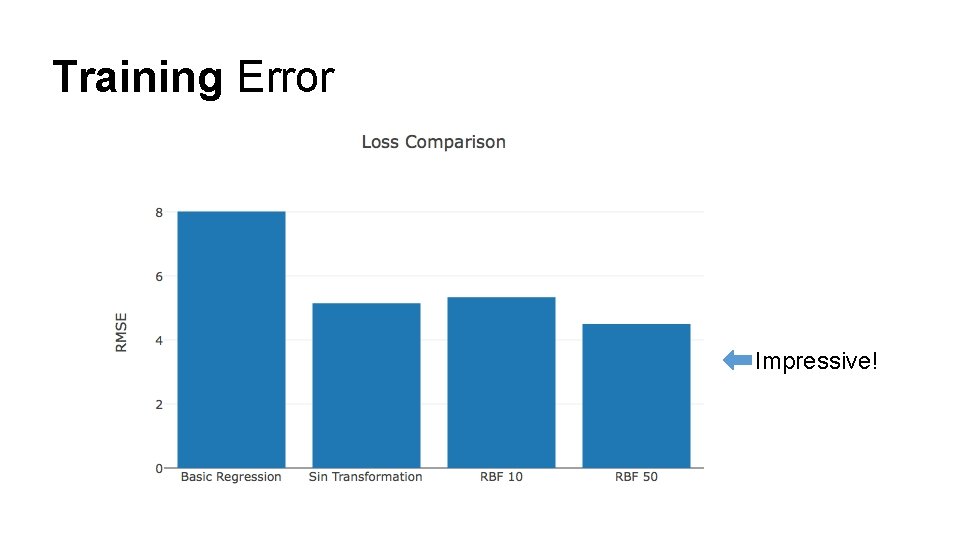

Training Error Impressive!

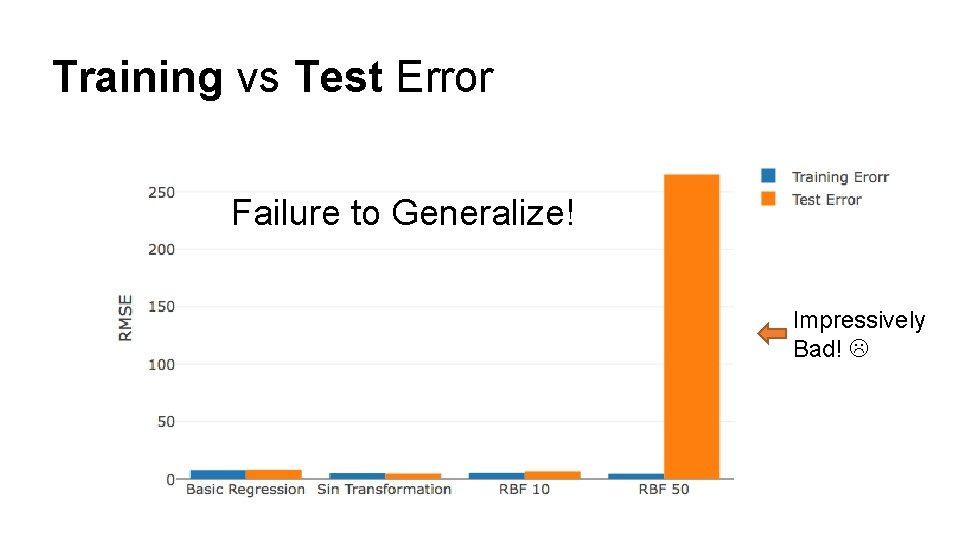

Training vs Test Error Failure to Generalize! Impressively Bad!

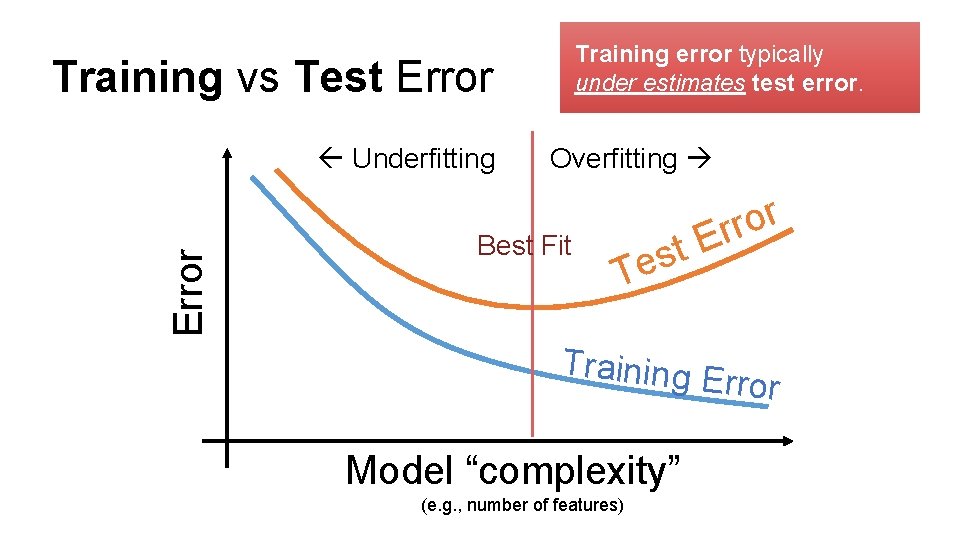

Training vs Test Error

Training error typically underestimates test error.

[Figure: training error and test error plotted against model "complexity" (e.g., number of features), with regions labeled Underfitting, Best Fit, and Overfitting.]

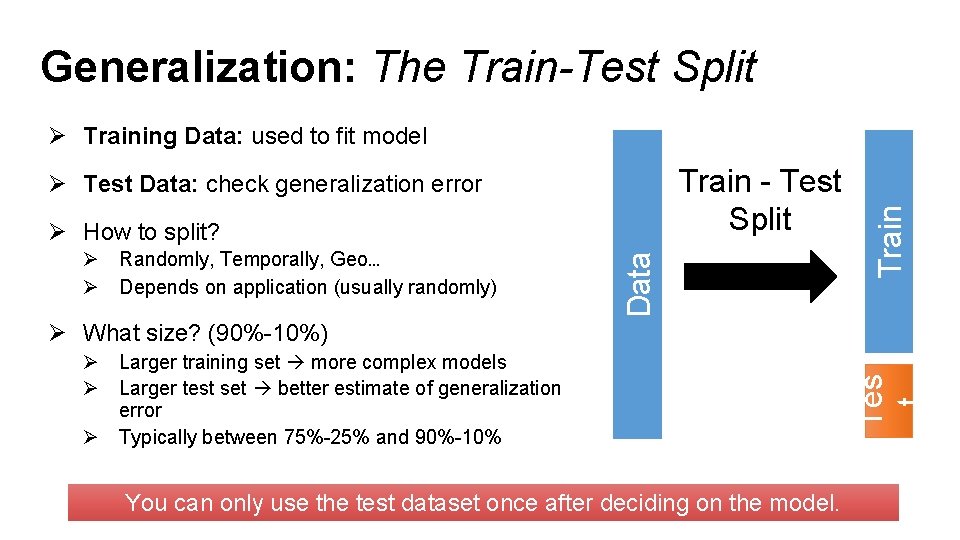

Generalization: The Train-Test Split

- Training data: used to fit the model
- Test data: used to check generalization error
- How to split?
  - Randomly, temporally, geo…
  - Depends on application (usually randomly)
- What size? (90%-10%)
  - Larger training set → more complex models
  - Larger test set → better estimate of generalization error
  - Typically between 75%-25% and 90%-10%

You can only use the test dataset once after deciding on the model.
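A random 90%-10% split can be sketched with scikit-learn's `train_test_split`. The toy arrays and the fixed `random_state` are assumptions for illustration; temporal or geographic splits would need custom logic instead.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Toy data: 100 records with 2 features each.
X = np.arange(200).reshape(100, 2)
y = np.arange(100)

# Hold out 10% of the records at random as the test set.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.1, random_state=0
)
print(X_train.shape, X_test.shape)   # (90, 2) (10, 2)
```

Fixing `random_state` makes the split reproducible, so the same records stay in the test set across runs.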

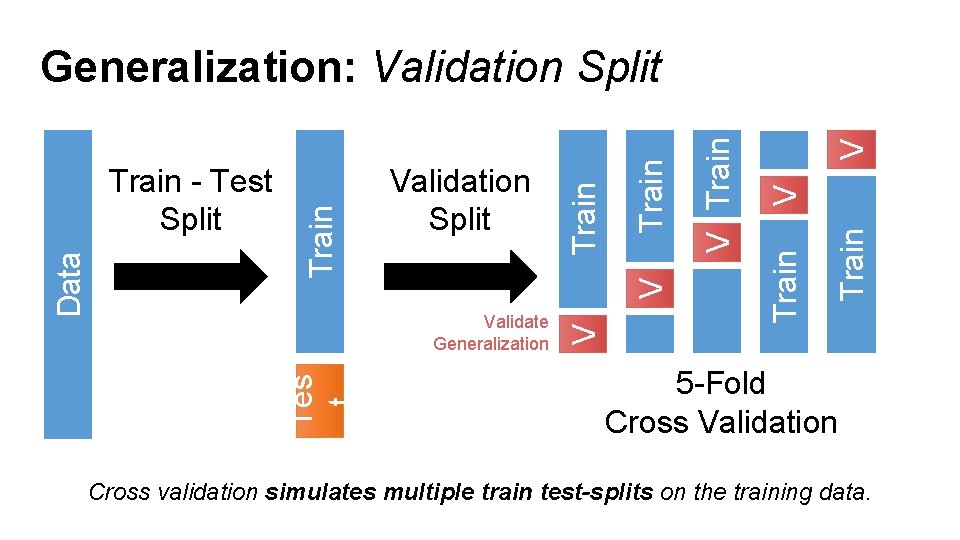

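Generalization: Validation Split

- Train-test split: the data is divided into a training set and a test set.
- Train-validation split: the training set is further divided into a part used to train and a part used to validate generalization.
- 5-fold cross validation: each of five folds (V) of the training data takes a turn as the validation set while the remaining folds are used to train.

Cross validation simulates multiple train-test splits on the training data.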
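Written out by hand, 5-fold cross validation is a loop over folds of the training data. The sketch below assumes synthetic training data and an ordinary least squares model; the test set is never touched.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold

rng = np.random.default_rng(0)
X_train = rng.normal(size=(90, 4))   # stands in for the training set only
y_train = X_train @ np.array([1.0, 0.0, -2.0, 0.5]) + 0.3 * rng.normal(size=90)

kfold = KFold(n_splits=5, shuffle=True, random_state=0)
fold_mse = []
for fit_idx, val_idx in kfold.split(X_train):
    # Train on four folds, validate on the held-out fold.
    model = LinearRegression().fit(X_train[fit_idx], y_train[fit_idx])
    pred = model.predict(X_train[val_idx])
    fold_mse.append(float(np.mean((y_train[val_idx] - pred) ** 2)))

cv_error = float(np.mean(fold_mse))
print("per-fold MSE:", np.round(fold_mse, 3))
print("cross-validation error:", round(cv_error, 3))
```

Averaging over the five folds gives a less noisy estimate of generalization error than a single train-validation split.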

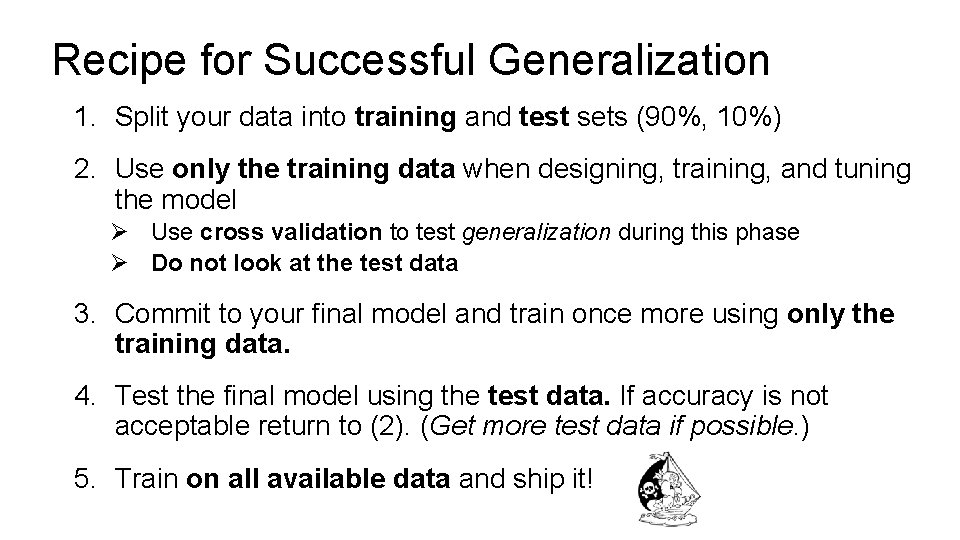

Recipe for Successful Generalization

1. Split your data into training and test sets (90%, 10%).
2. Use only the training data when designing, training, and tuning the model.
   - Use cross validation to test generalization during this phase.
   - Do not look at the test data.
3. Commit to your final model and train once more using only the training data.
4. Test the final model using the test data. If accuracy is not acceptable, return to (2). (Get more test data if possible.)
5. Train on all available data and ship it!

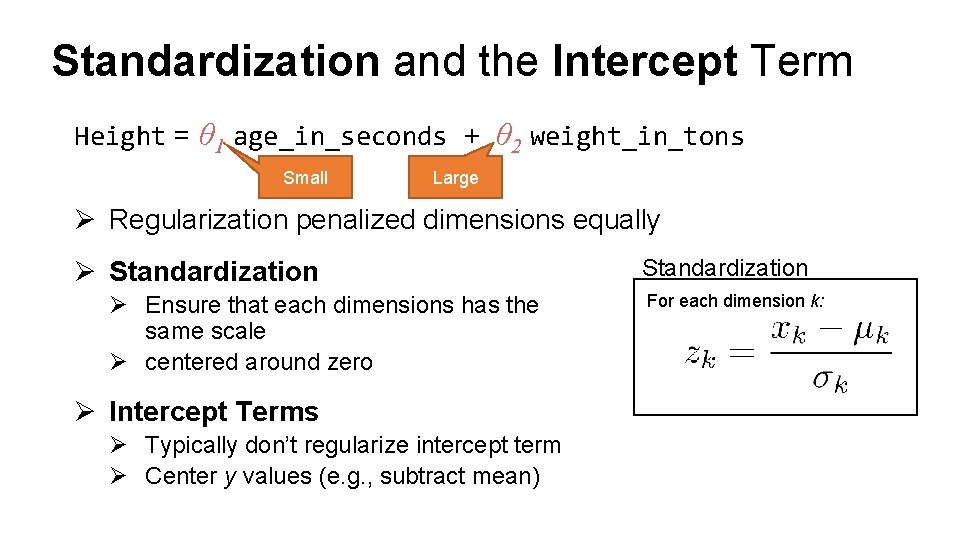

Standardization and the Intercept Term

$\text{Height} = \theta_1 \cdot \text{age\_in\_seconds} + \theta_2 \cdot \text{weight\_in\_tons}$, where $\theta_1$ is small and $\theta_2$ is large.

- Regularization penalizes dimensions equally
- Standardization
  - Ensure that each dimension has the same scale
  - Centered around zero
  - For each dimension $k$: $z_k = \frac{x_k - \mu_k}{\sigma_k}$
- Intercept terms
  - Typically don't regularize the intercept term
  - Center y values (e.g., subtract mean)
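A minimal sketch of standardizing before a regularized fit, using the slide's height example with made-up synthetic values. It assumes scikit-learn's `StandardScaler`, which subtracts each column's mean and divides by its standard deviation, and `Ridge` with its default `fit_intercept=True`, which fits the intercept separately from the penalized weights.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 200
age_in_seconds = rng.uniform(5e8, 2e9, size=n)    # very large scale
weight_in_tons = rng.uniform(0.04, 0.12, size=n)  # very small scale
X = np.column_stack([age_in_seconds, weight_in_tons])
height = 100 + 2e-8 * age_in_seconds + 500 * weight_in_tons + rng.normal(size=n)

# Standardization: each column gets mean 0 and standard deviation 1.
Z = StandardScaler().fit_transform(X)
print(Z.mean(axis=0).round(6), Z.std(axis=0).round(6))

# Scaling inside a pipeline lets the penalty treat both features comparably.
model = make_pipeline(StandardScaler(), Ridge(alpha=1.0)).fit(X, height)
print(model.named_steps["ridge"].coef_)
```

Putting the scaler inside the pipeline also ensures that, during cross validation, the means and standard deviations are computed from the training folds only.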
