First-Order Methods for Convex Optimization

J. Saketha Nath (IIT Bombay; Microsoft)

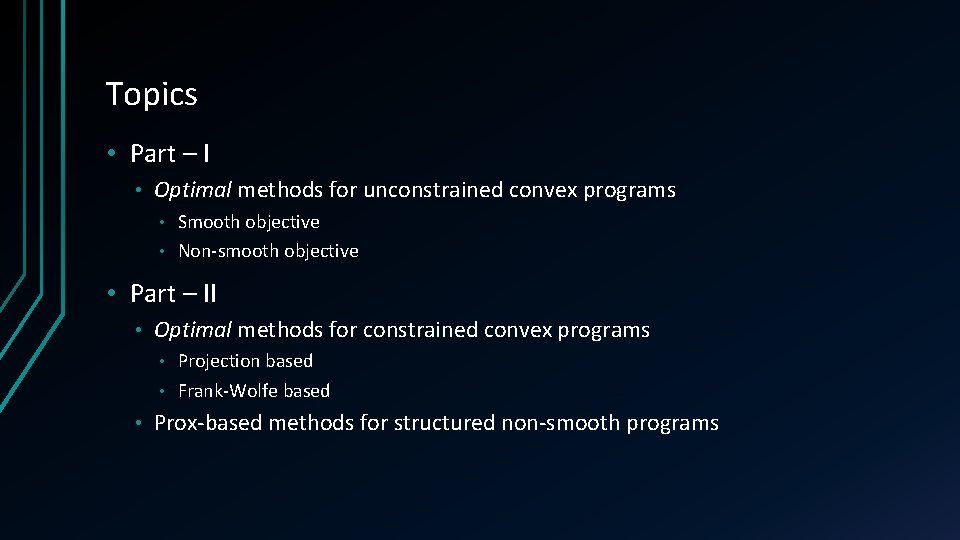

Topics

- Part I
  - Optimal methods for unconstrained convex programs
    - Smooth objective
    - Non-smooth objective
- Part II
  - Optimal methods for constrained convex programs
    - Projection based
    - Frank-Wolfe based
  - Prox-based methods for structured non-smooth programs

Non-Topics

- Step-size schemes
- Bundle methods
- Stochastic methods
- Inexact oracles
- Non-Euclidean extensions (Mirror-friends)

Motivation and Example Applications

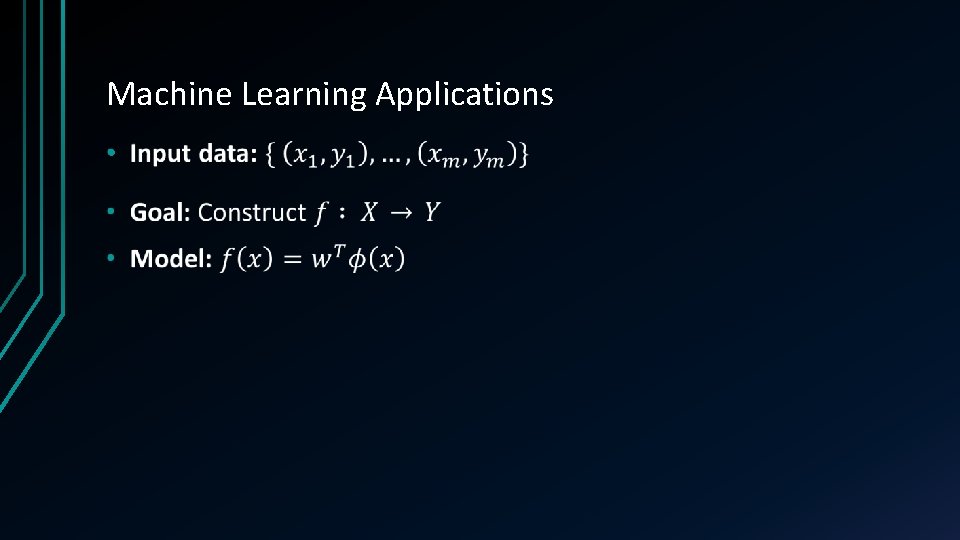

Machine Learning Applications

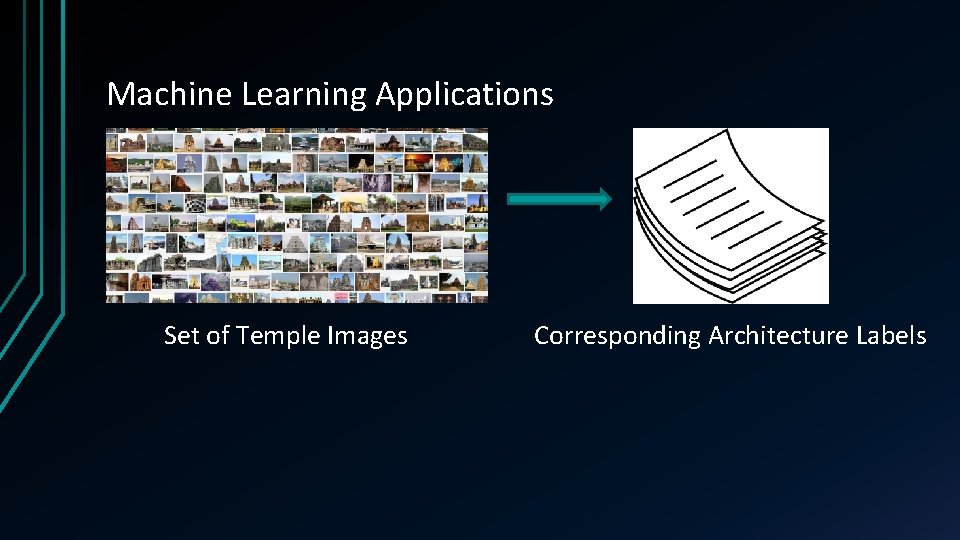

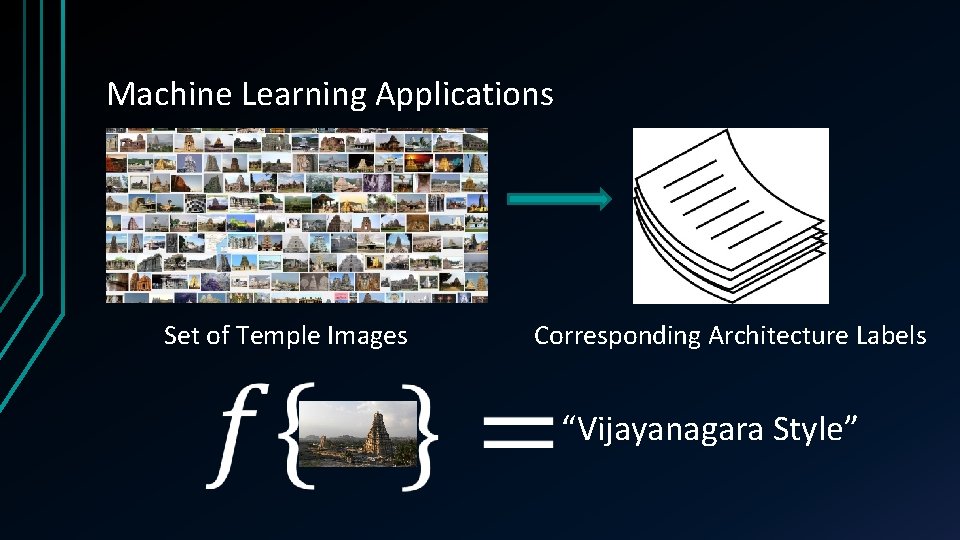

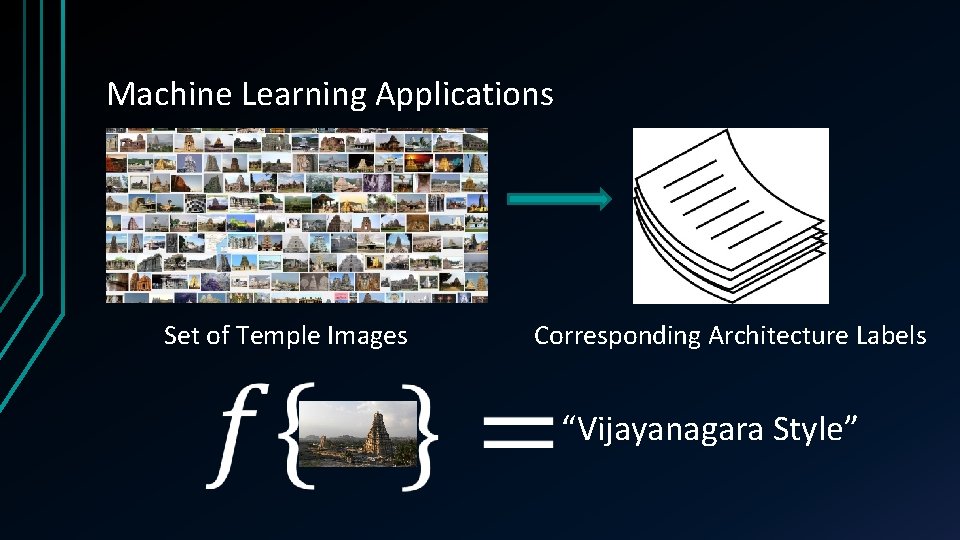

Machine Learning Applications

- Set of temple images
- Corresponding architecture labels, e.g. “Vijayanagara Style”

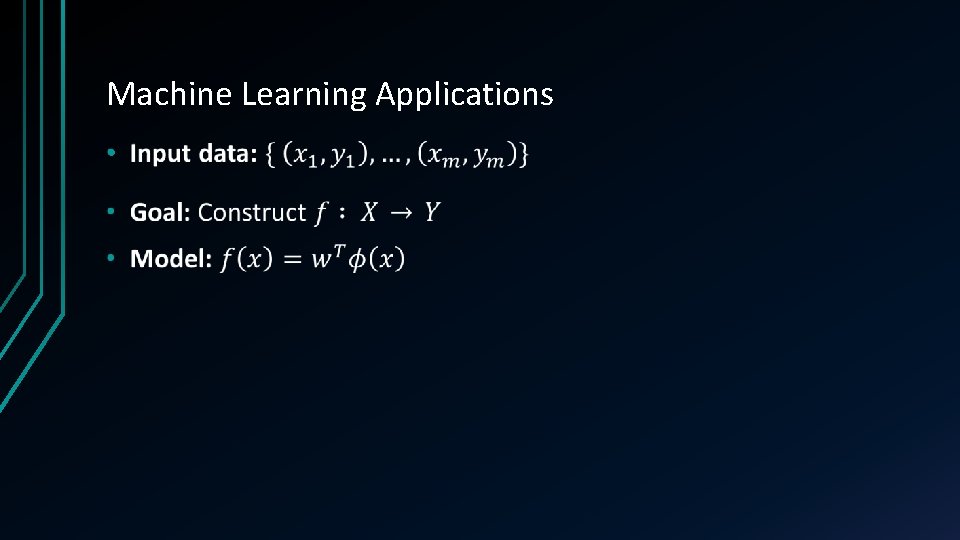

Machine Learning Applications

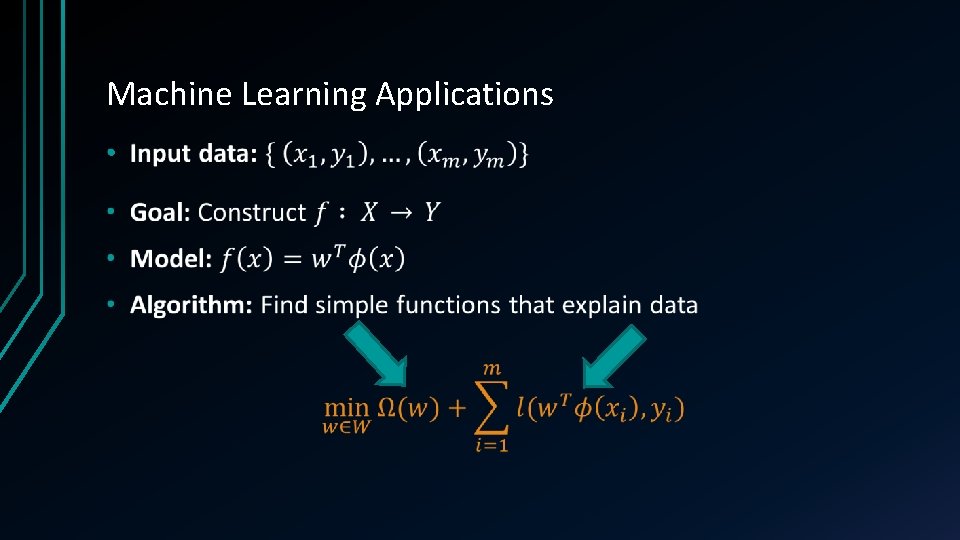

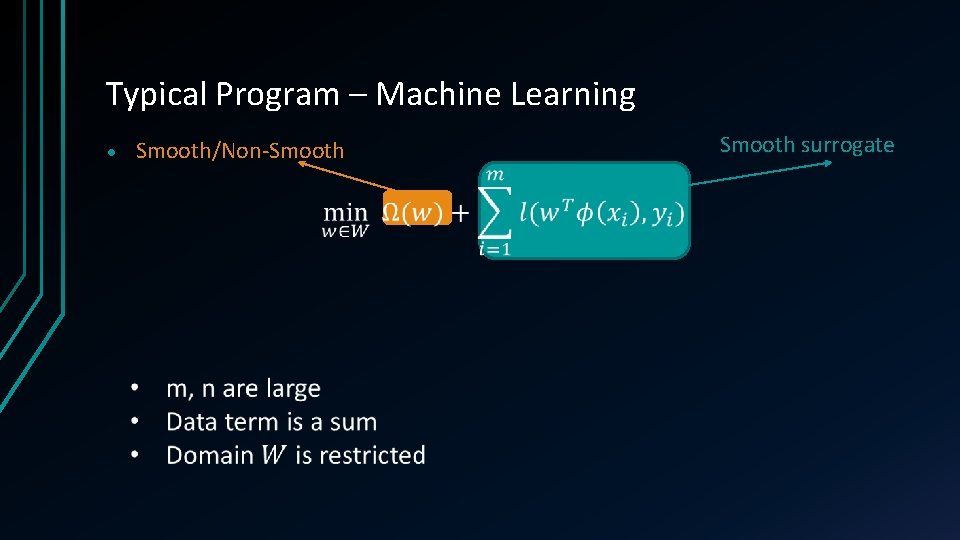

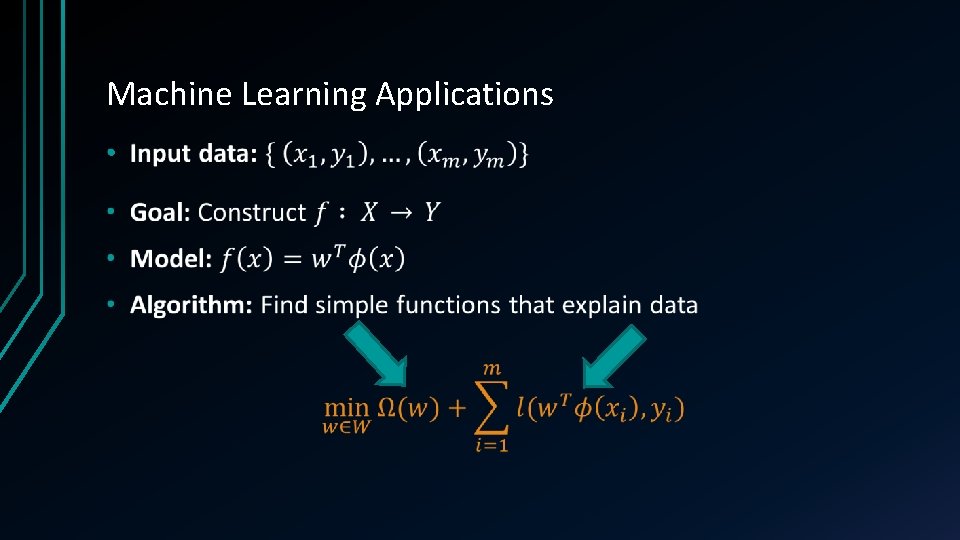

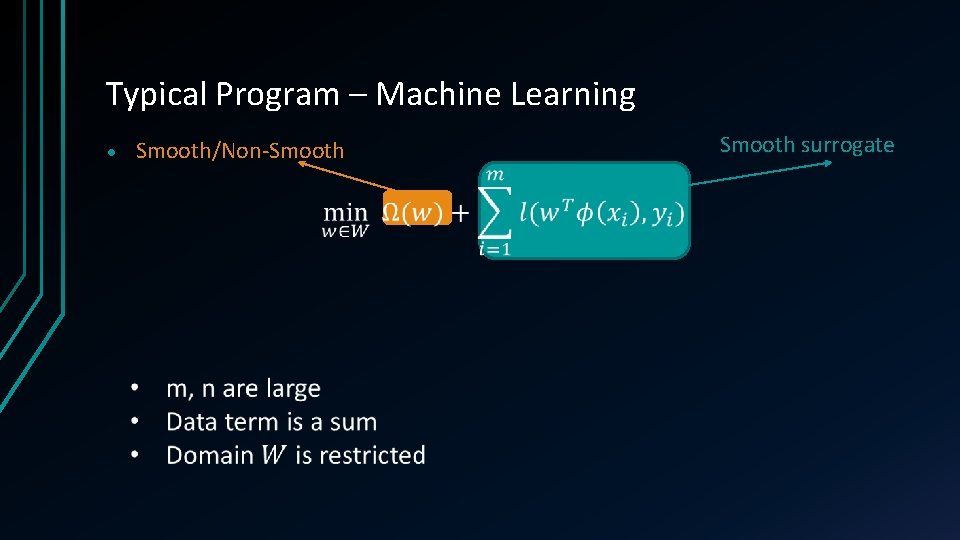

Typical Program – Machine Learning

- Smooth/Non-Smooth surrogate

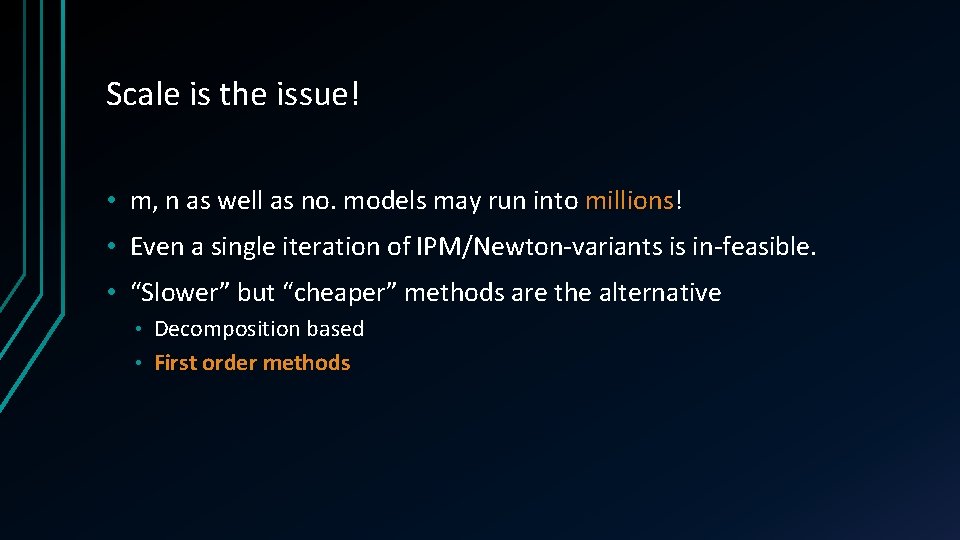

Scale is the issue!

- m, n, as well as the number of models, may run into millions!
- Even a single iteration of interior-point (IPM) or Newton-type methods is infeasible.
- “Slower” but “cheaper” methods are the alternative:
  - Decomposition based
  - First order methods

First Order Methods - Overview

Smooth unconstrained

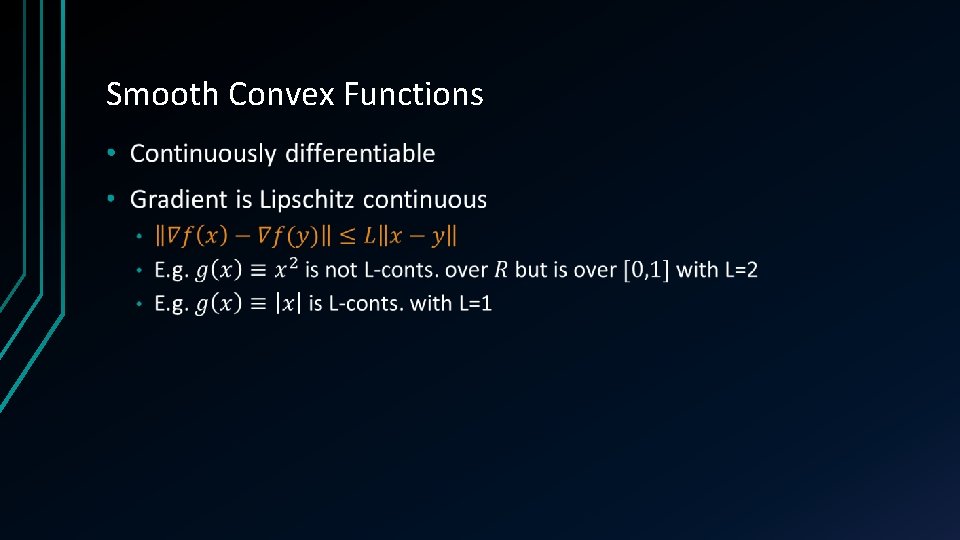

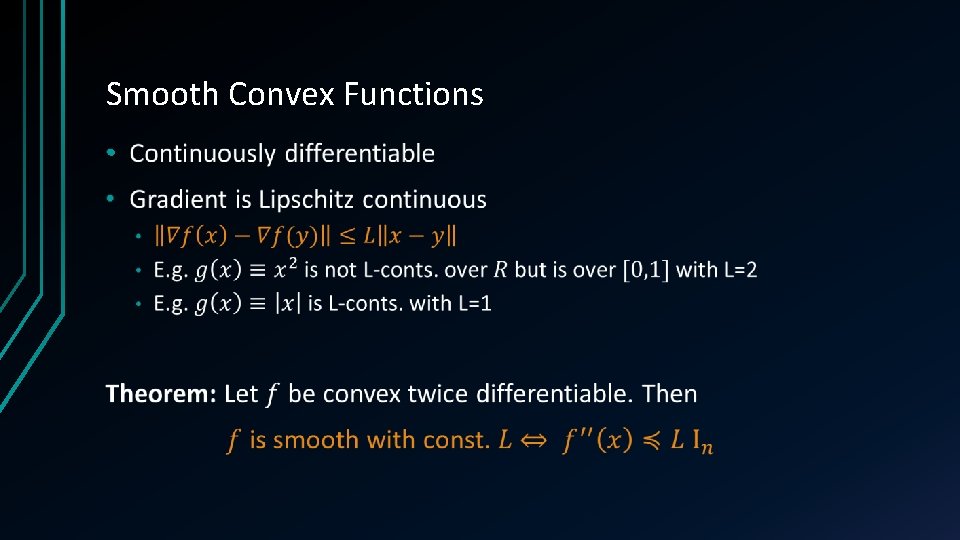

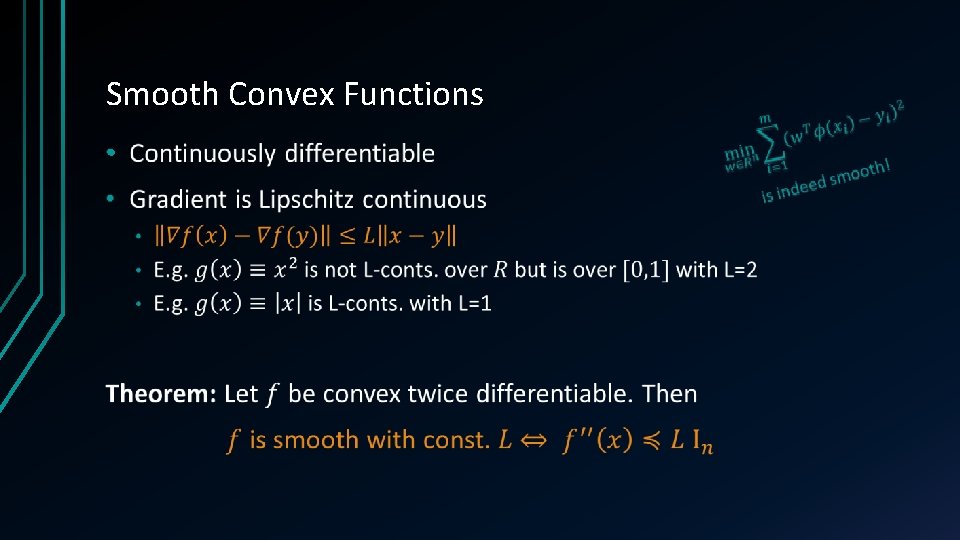

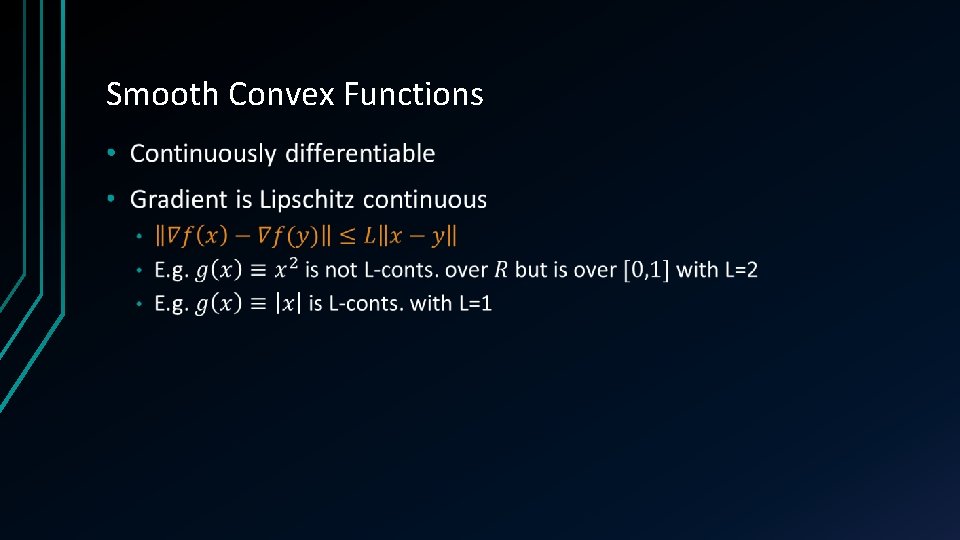

Smooth Convex Functions

- Continuously differentiable
- Gradient is Lipschitz continuous
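As a sketch in the usual notation (the symbols $f$, $L$ and the Euclidean norm are assumptions, not taken from the deck), the Lipschitz-gradient condition and the quadratic upper bound it implies can be written as:

$$
\|\nabla f(x) - \nabla f(y)\|_2 \le L\,\|x - y\|_2 \quad \forall x, y
\quad\Longrightarrow\quad
f(y) \le f(x) + \nabla f(x)^\top (y - x) + \frac{L}{2}\,\|y - x\|_2^2 \quad \forall x, y.
$$

For example, $f(x) = \tfrac{1}{2} x^\top A x - b^\top x$ with symmetric positive semidefinite $A$ satisfies this with $L = \lambda_{\max}(A)$.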

![Gradient Method [Cauchy 1847]](https://slidetodoc.com/presentation_image/40b22c6f504e89c880697f3a11c301fc/image-19.jpg)

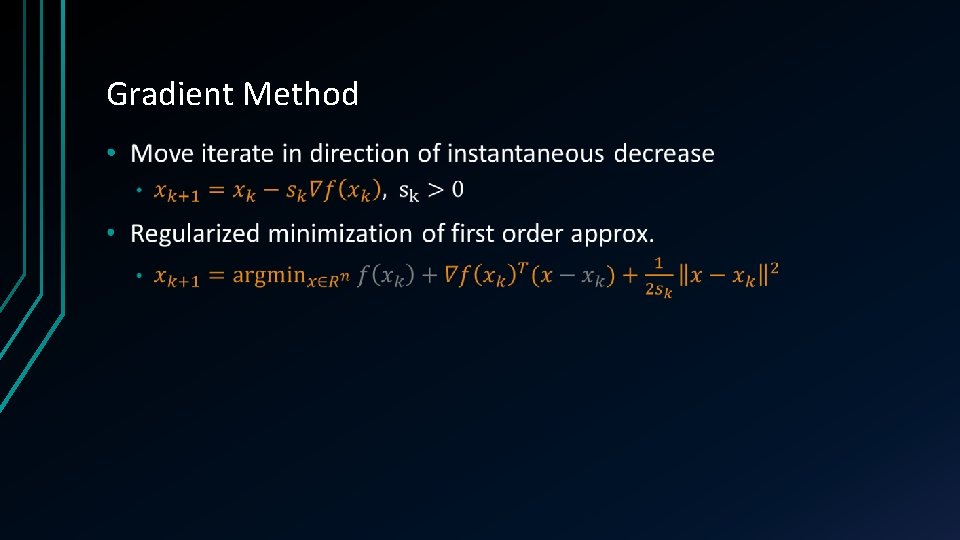

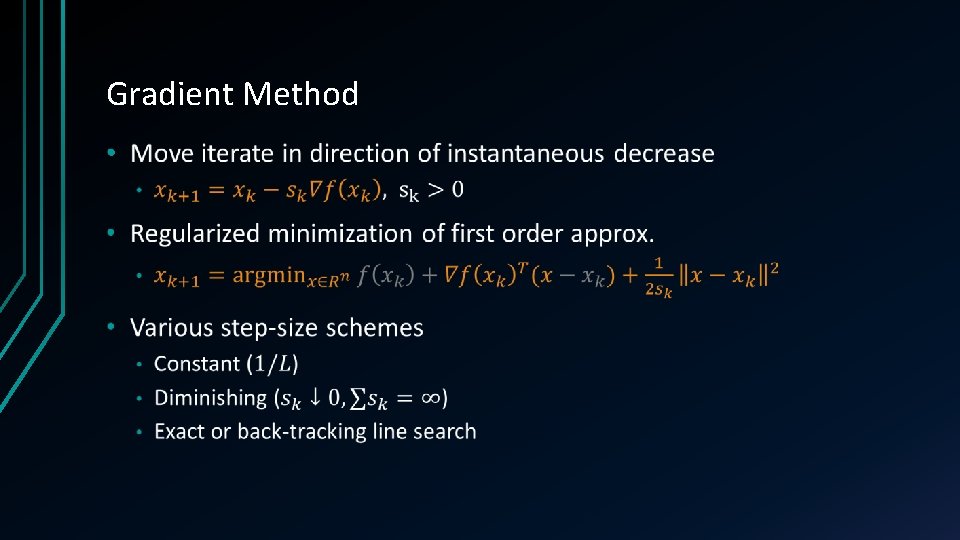

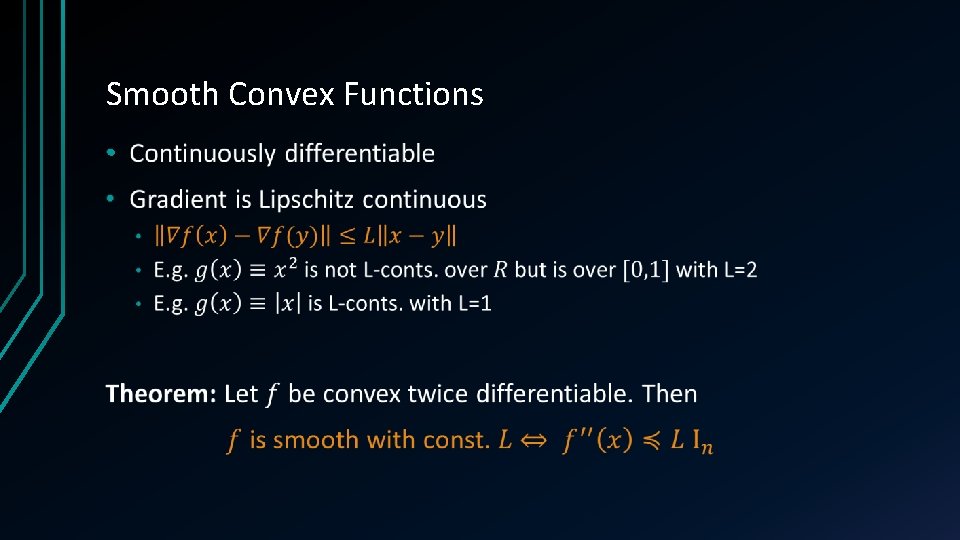

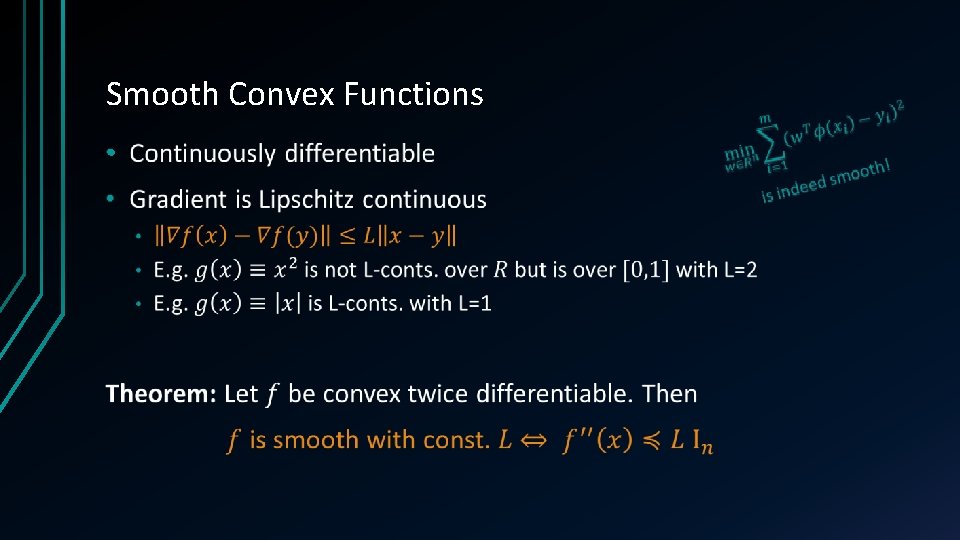

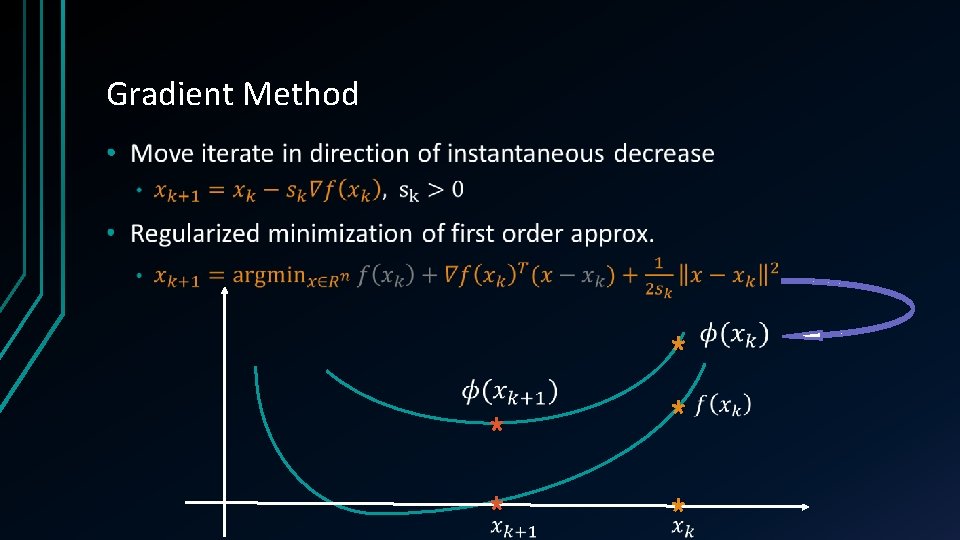

Gradient Method [Cauchy 1847]
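A minimal sketch of the gradient method with the fixed step-size $1/L$, assuming $f$ has an $L$-Lipschitz gradient. The function name `gradient_method` and the small quadratic test problem are hypothetical, chosen only for illustration.

```python
import numpy as np

def gradient_method(grad, x0, L, num_iters=100):
    """Fixed-step gradient method: x_{k+1} = x_k - (1/L) * grad(x_k)."""
    x = np.asarray(x0, dtype=float)
    for _ in range(num_iters):
        x = x - grad(x) / L
    return x

# Hypothetical test problem: f(x) = 0.5 * x^T A x - b^T x, A symmetric positive definite.
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 1.0])
L = np.linalg.eigvalsh(A).max()      # Lipschitz constant of the gradient A x - b
x_hat = gradient_method(lambda x: A @ x - b, np.zeros(2), L, num_iters=500)
x_star = np.linalg.solve(A, b)       # exact minimizer, used only for comparison
```

Each iteration costs one gradient evaluation, which is what makes the method attractive at large scale.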

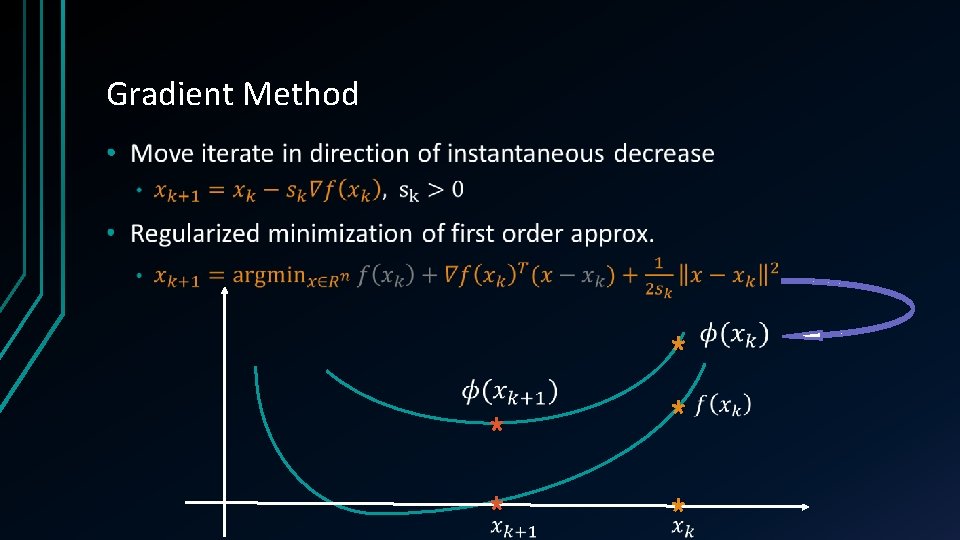

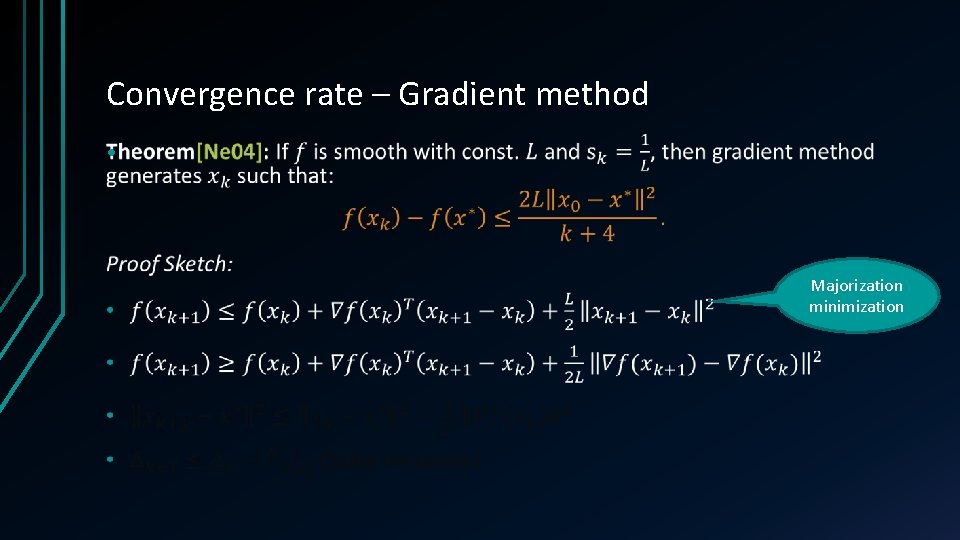

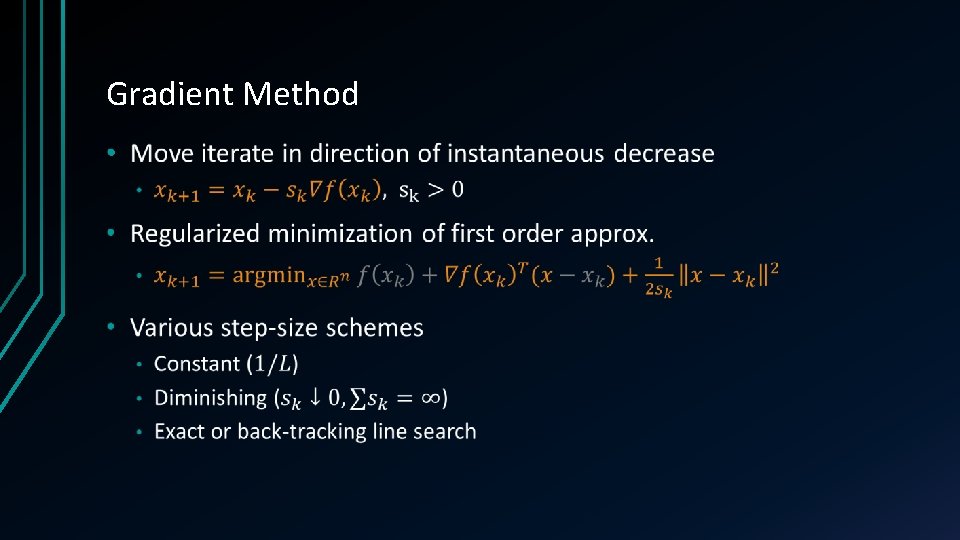

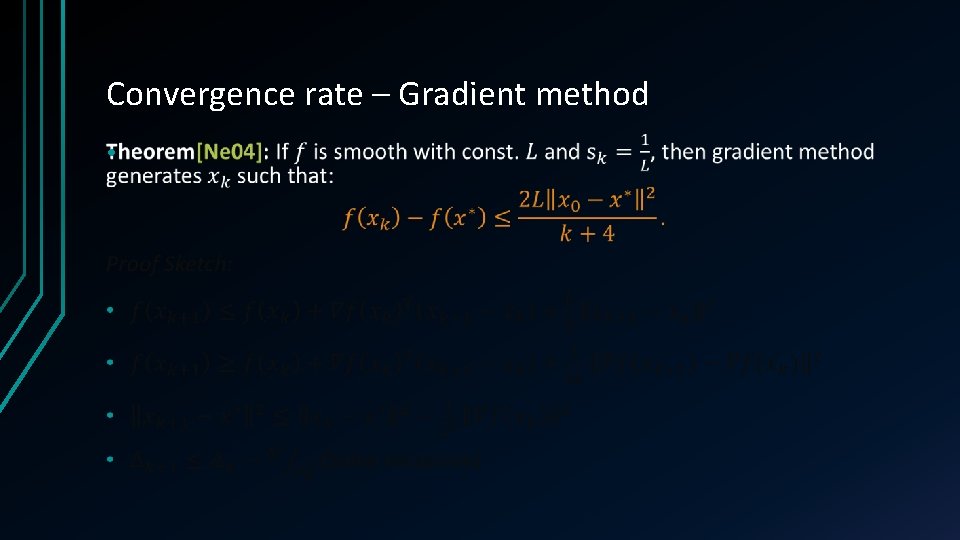

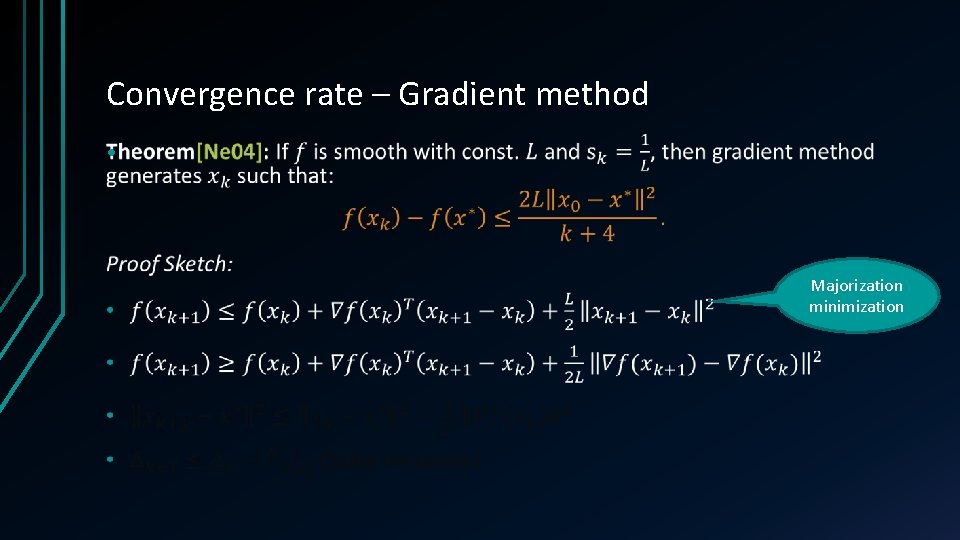

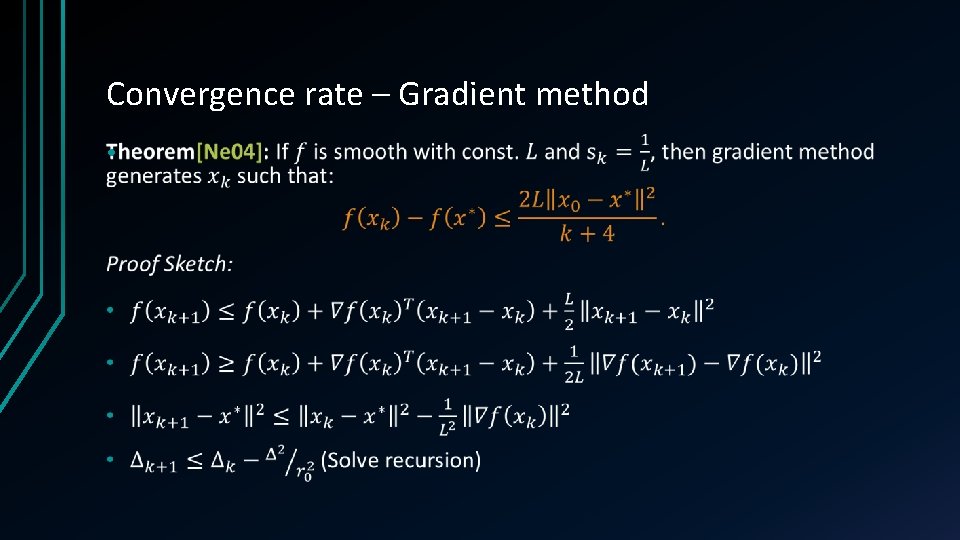

Convergence rate – Gradient method

- Majorization minimization
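One common way to read the majorization-minimization view, sketched under the assumption that $f$ is convex with an $L$-Lipschitz gradient and a minimizer $x^*$ (notation assumed): each step minimizes the quadratic upper bound built at $x_k$, which yields the gradient step with step-size $1/L$, a guaranteed decrease, and the standard $O(1/k)$ rate.

$$
x_{k+1} = \arg\min_{y} \left\{ f(x_k) + \nabla f(x_k)^\top (y - x_k) + \frac{L}{2}\,\|y - x_k\|_2^2 \right\} = x_k - \frac{1}{L}\,\nabla f(x_k)
$$

$$
f(x_{k+1}) \le f(x_k) - \frac{1}{2L}\,\|\nabla f(x_k)\|_2^2,
\qquad
f(x_k) - f(x^*) \le \frac{L\,\|x_0 - x^*\|_2^2}{2k}.
$$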

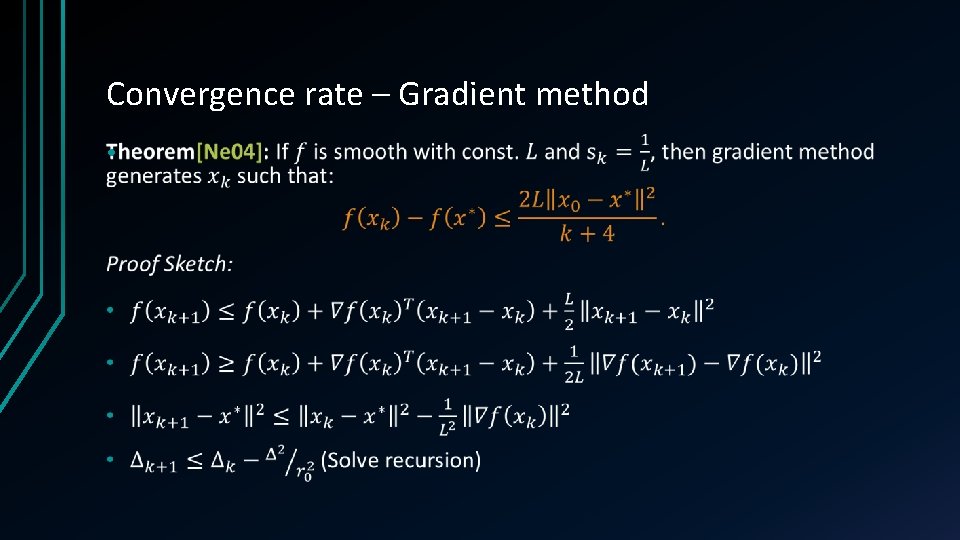

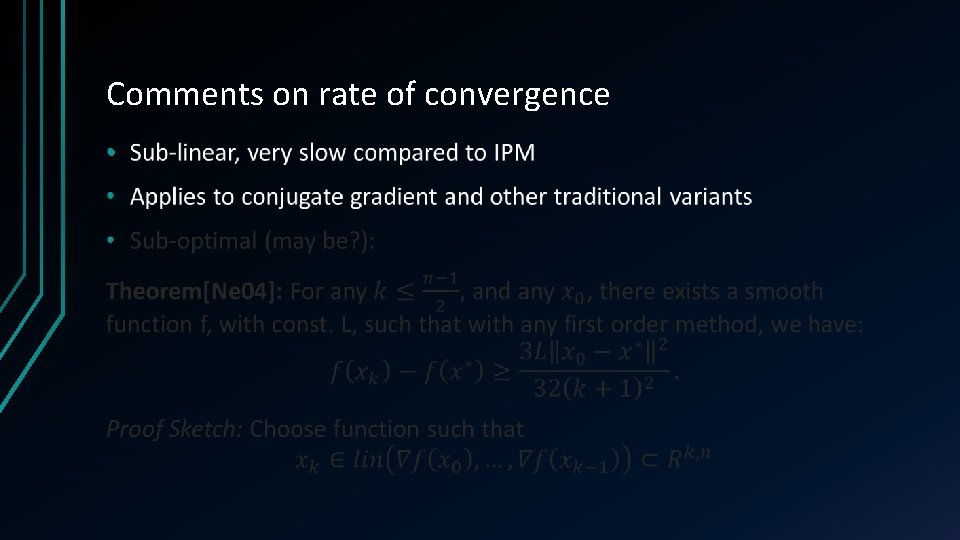

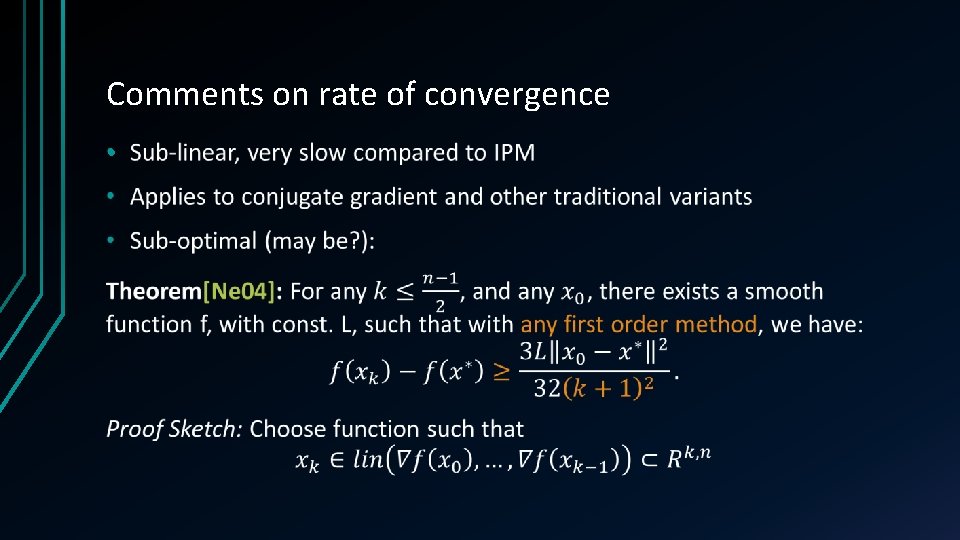

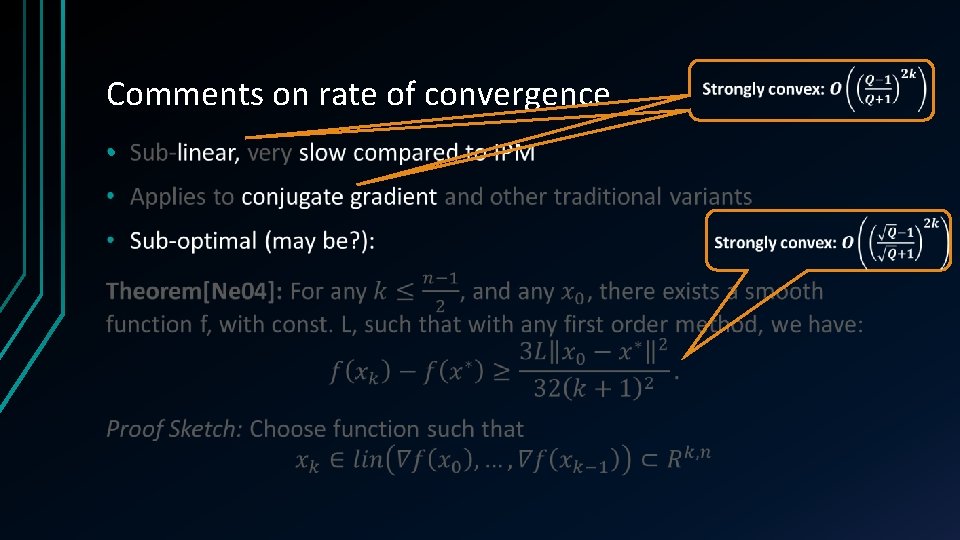

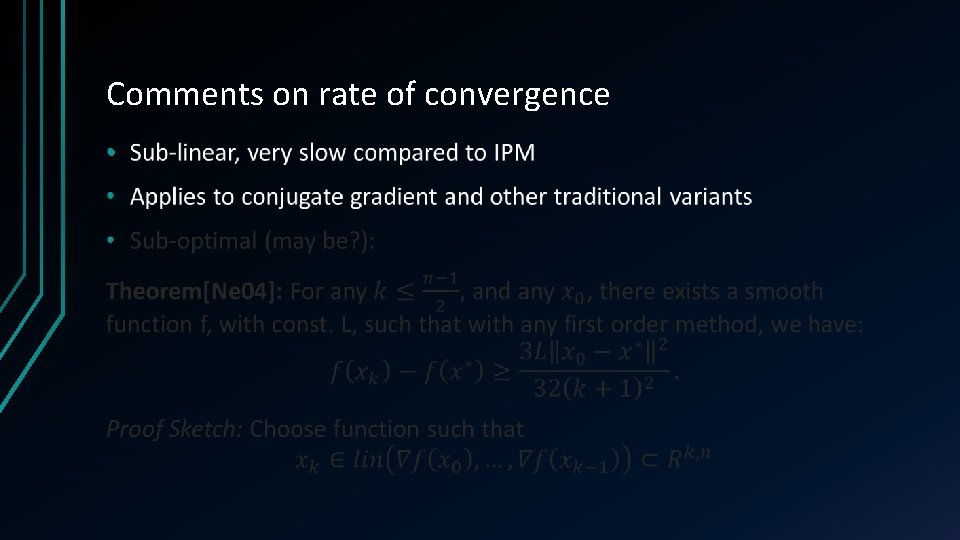

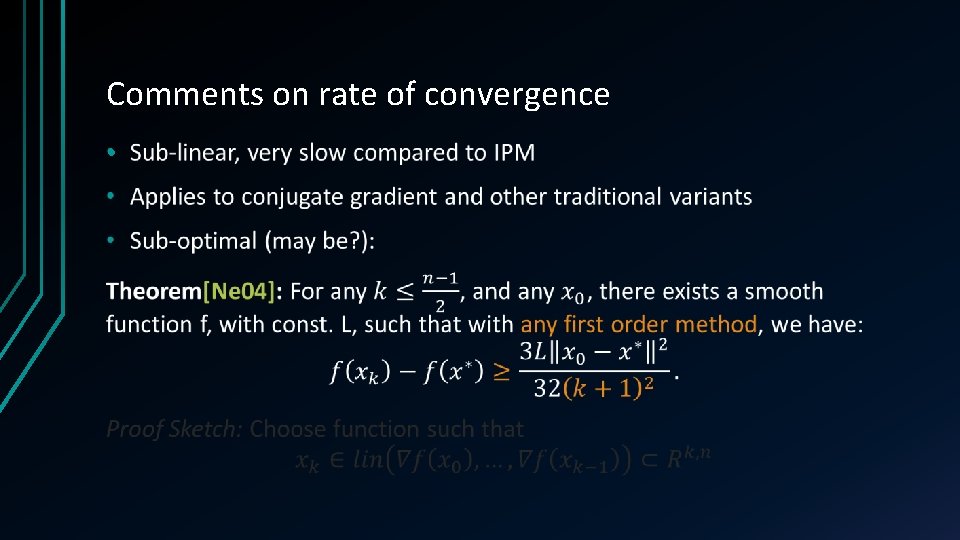

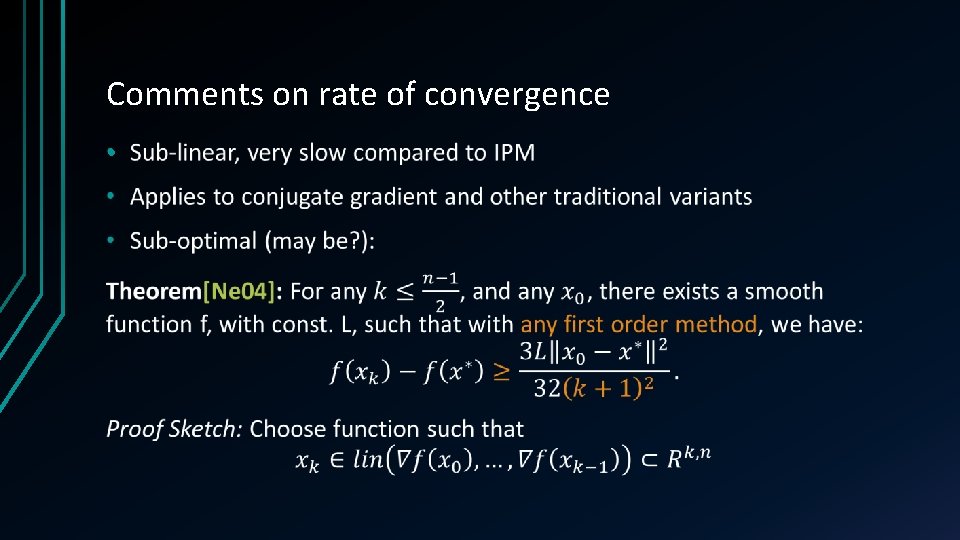

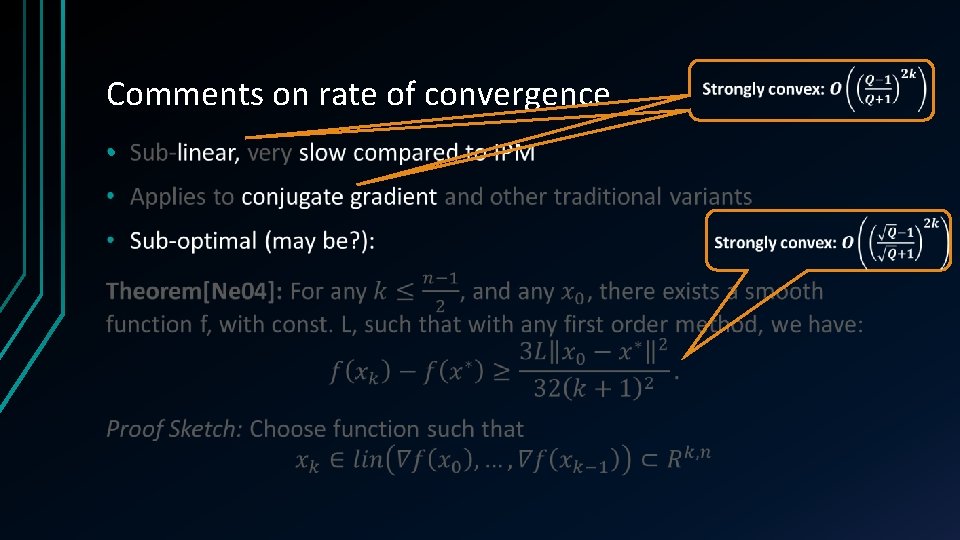

Comments on rate of convergence

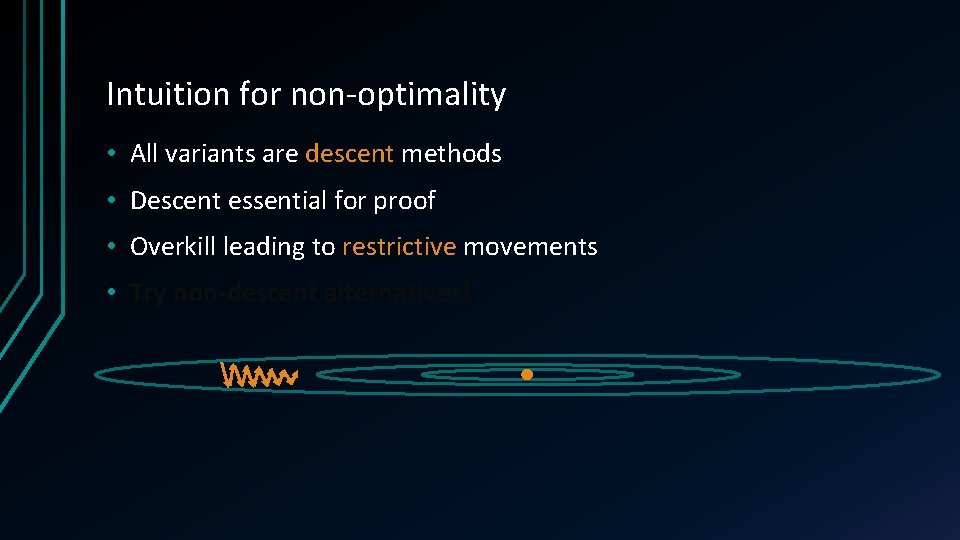

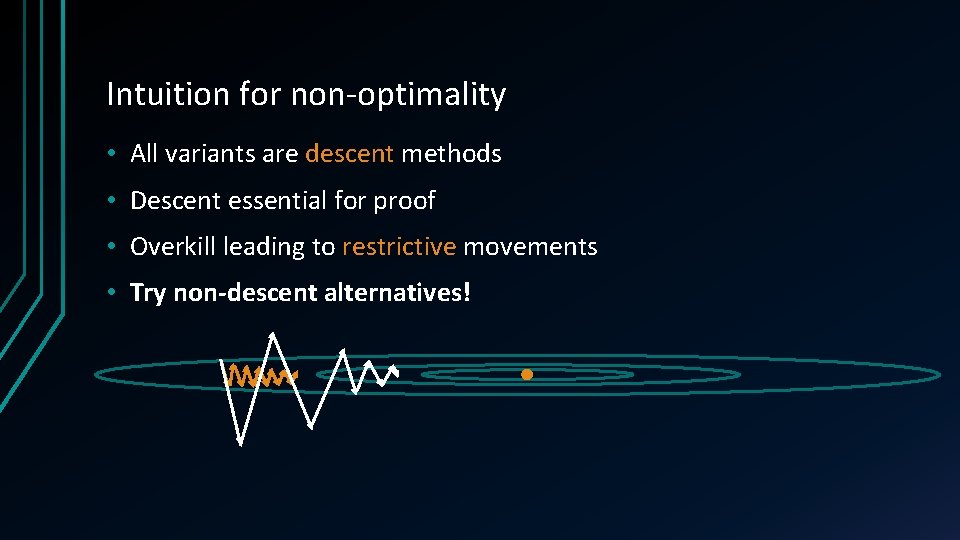

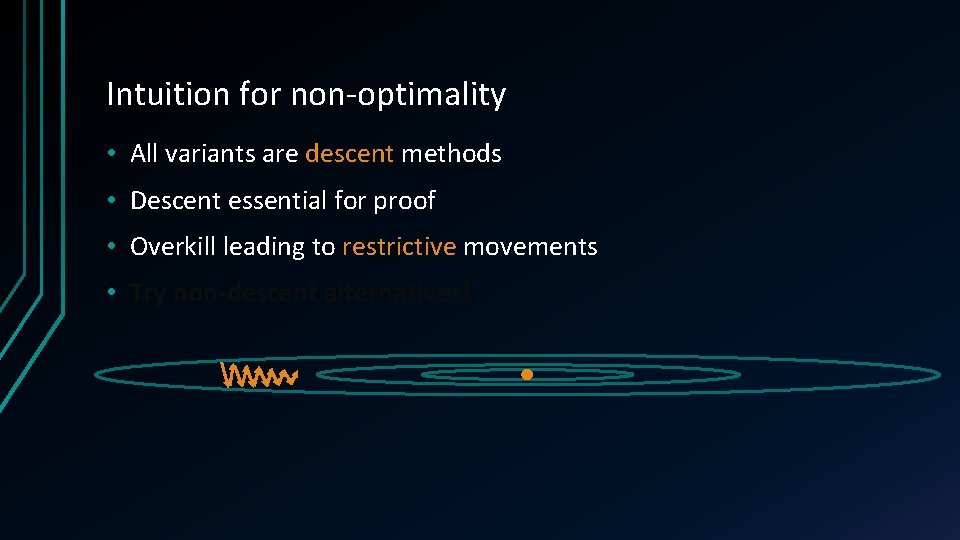

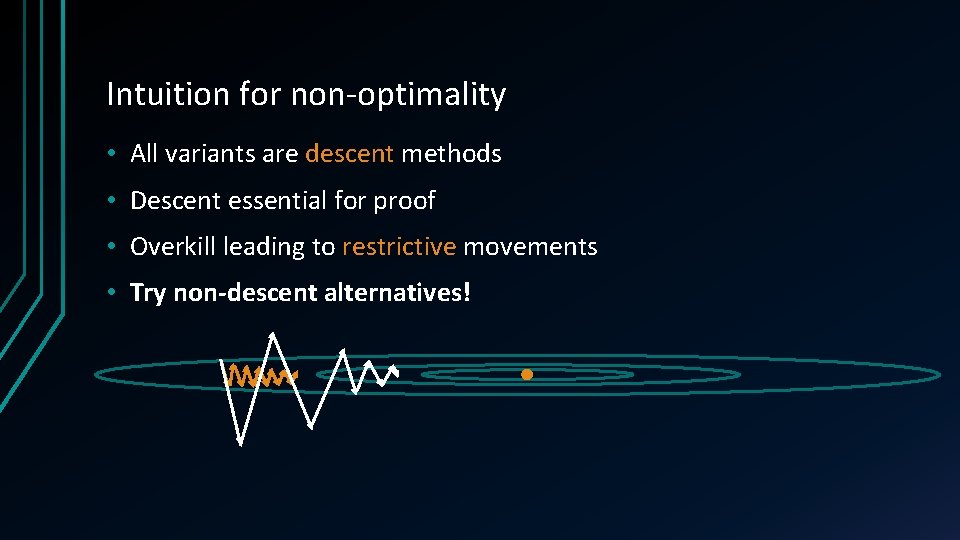

Intuition for non-optimality

- All variants are descent methods
- Descent essential for proof
- Overkill leading to restrictive movements
- Try non-descent alternatives!

![Accelerated Gradient Method Ne 83 88 Be 09 Two step history Accelerated Gradient Method [Ne 83, 88, Be 09] • Two step history](https://slidetodoc.com/presentation_image/40b22c6f504e89c880697f3a11c301fc/image-32.jpg)

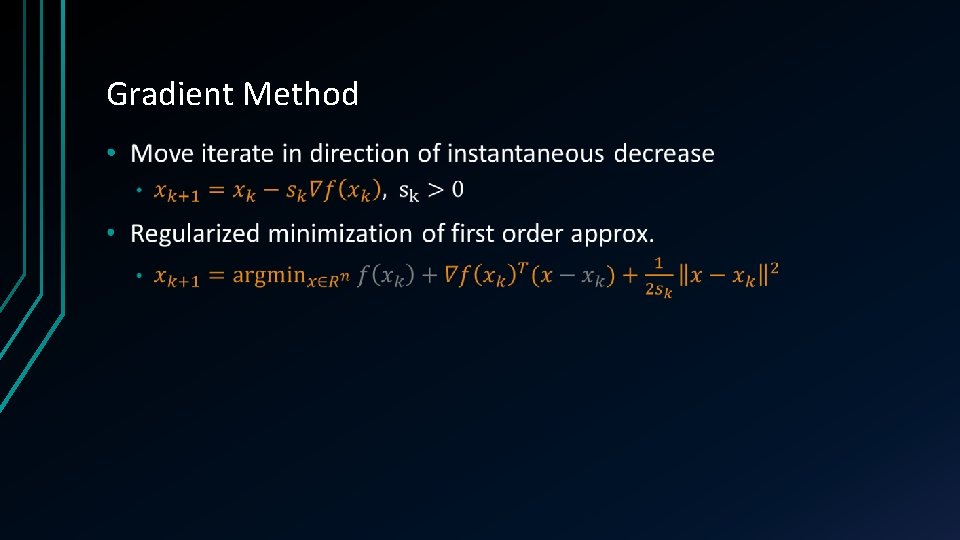

Accelerated Gradient Method [Ne 83, 88, Be 09] • Two step history

![Accelerated Gradient Method Ne 83 88 Be 09 Accelerated Gradient Method [Ne 83, 88, Be 09] •](https://slidetodoc.com/presentation_image/40b22c6f504e89c880697f3a11c301fc/image-33.jpg)

Accelerated Gradient Method [Ne 83, 88, Be 09] •
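A minimal sketch of one accelerated variant, using the momentum sequence of [Be 09] specialized to a smooth objective; it may differ in detail from the exact scheme intended here. The name `accelerated_gradient` and the quadratic test problem are hypothetical. The "two-step history" shows up as the extrapolation from the last two iterates.

```python
import numpy as np

def accelerated_gradient(grad, x0, L, num_iters=100):
    """Accelerated gradient with a FISTA-style momentum sequence t_k."""
    x_prev = np.asarray(x0, dtype=float)
    y = x_prev.copy()
    t = 1.0
    for _ in range(num_iters):
        x = y - grad(y) / L                          # gradient step from the extrapolated point
        t_next = (1.0 + np.sqrt(1.0 + 4.0 * t * t)) / 2.0
        y = x + ((t - 1.0) / t_next) * (x - x_prev)  # extrapolate using the last two iterates
        x_prev, t = x, t_next
    return x_prev

# Hypothetical test problem: f(x) = 0.5 * x^T A x - b^T x, A symmetric positive definite.
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 1.0])
f = lambda x: 0.5 * x @ A @ x - b @ x
L = np.linalg.eigvalsh(A).max()
num_iters = 500
x_acc = accelerated_gradient(lambda x: A @ x - b, np.zeros(2), L, num_iters)
x_star = np.linalg.solve(A, b)       # exact minimizer, used only for comparison
```

Note that the objective value need not decrease at every iteration, so this is not a descent method.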

![Towards optimality [Moritz Hardt]](https://slidetodoc.com/presentation_image/40b22c6f504e89c880697f3a11c301fc/image-34.jpg)

Towards optimality [Moritz Hardt]

![Towards optimality [Moritz Hardt]](https://slidetodoc.com/presentation_image/40b22c6f504e89c880697f3a11c301fc/image-35.jpg)

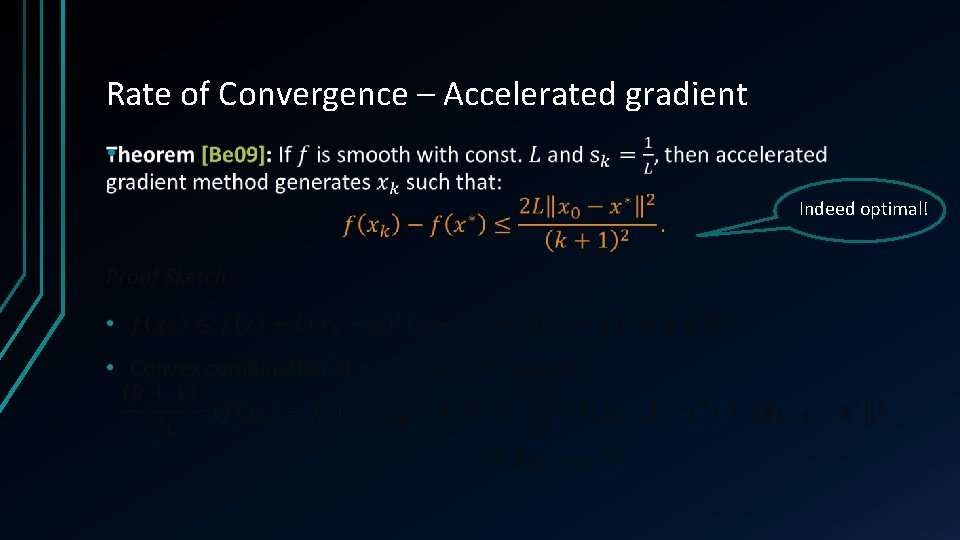

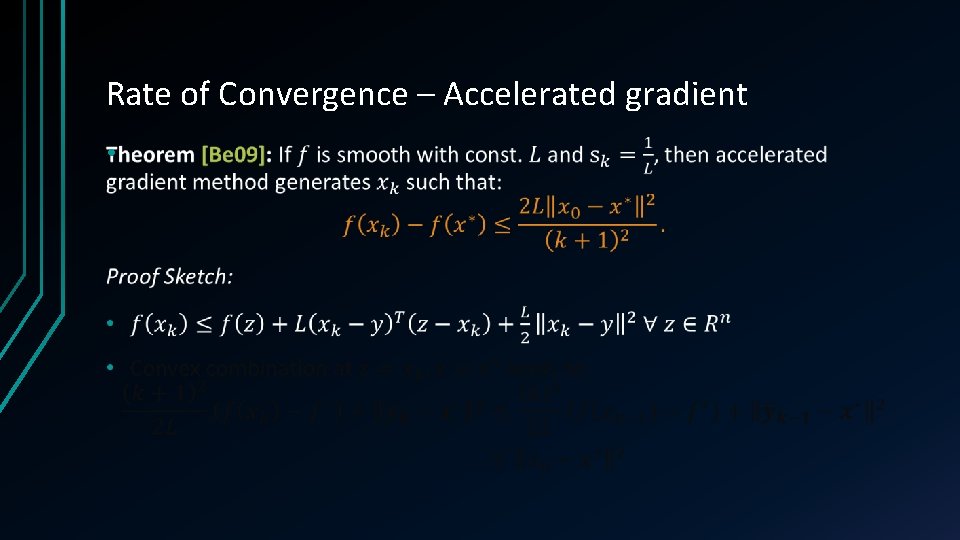

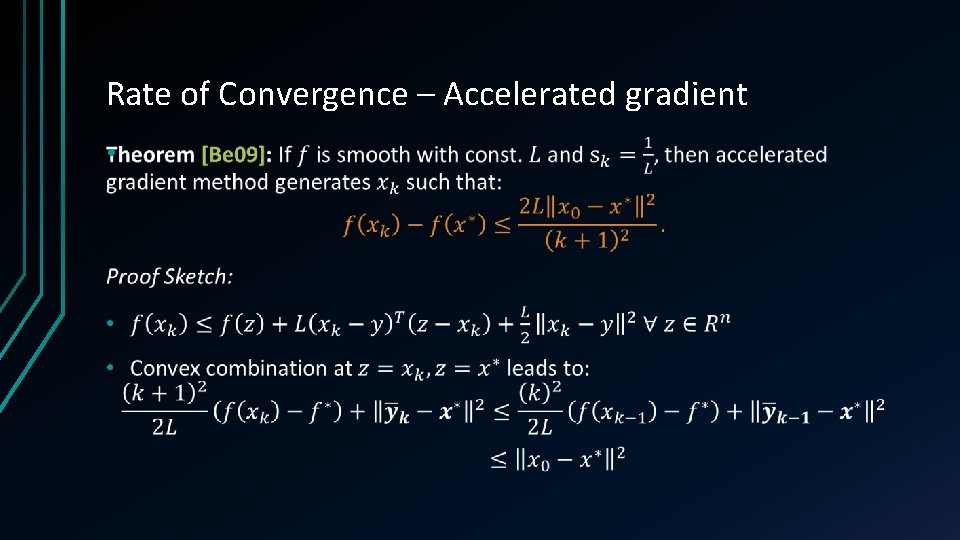

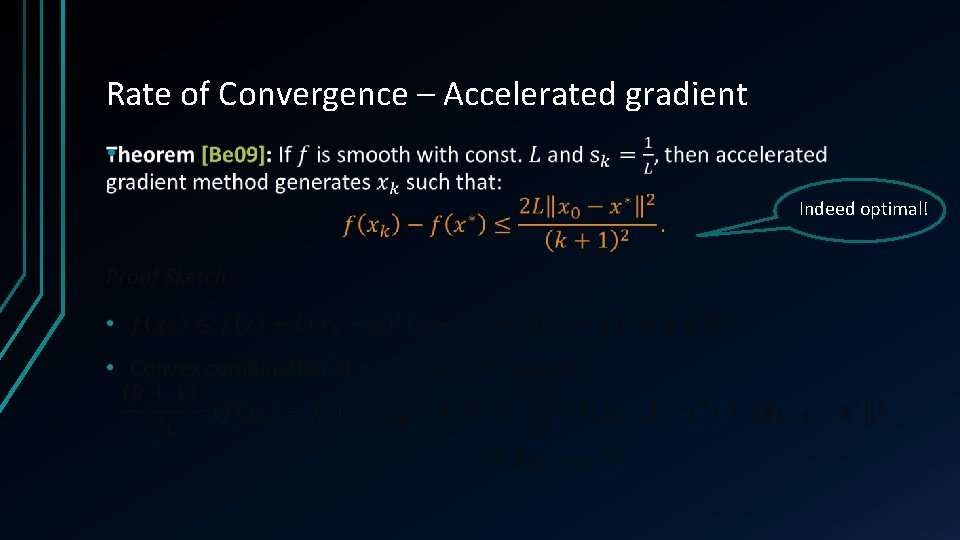

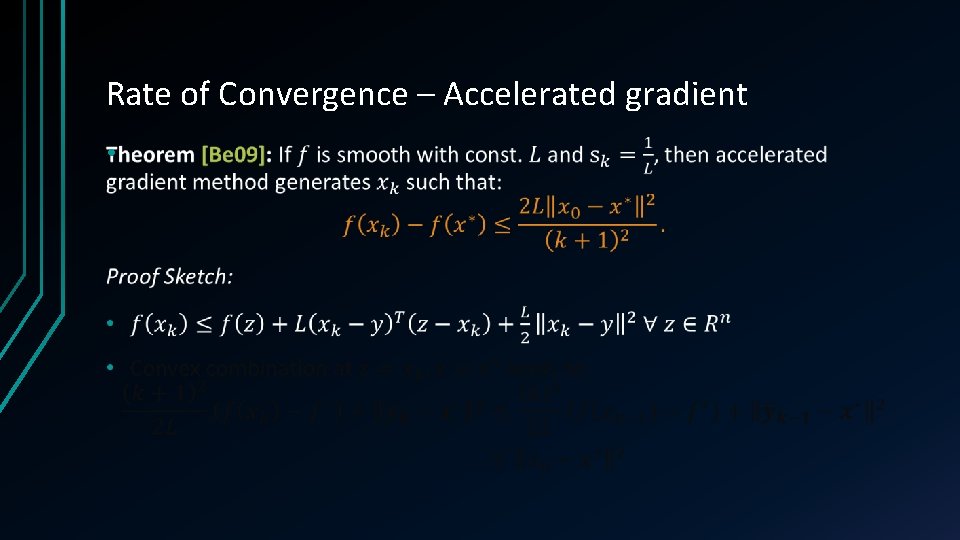

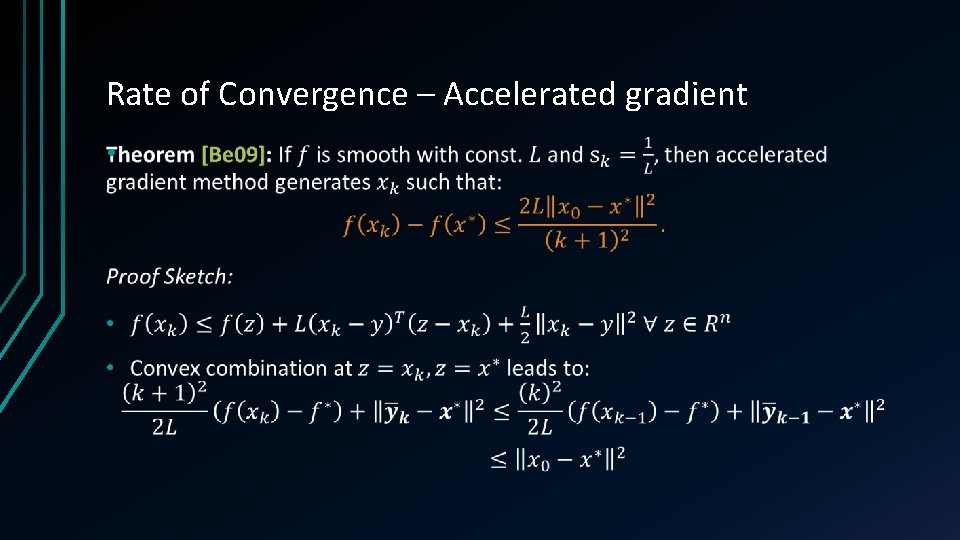

Rate of Convergence – Accelerated gradient

- Indeed optimal!
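For reference, a sketch of the standard statements, assuming a convex $f$ with $L$-Lipschitz gradient and a minimizer $x^*$ (notation assumed). The first bound is the guarantee for the FISTA-style variant [Be 09]; the second is the lower bound for first-order methods from [Ne 04], which holds for a worst-case function in dimension $n$ while $k \le (n-1)/2$. The two match up to a constant factor.

$$
f(x_k) - f(x^*) \le \frac{2L\,\|x_0 - x^*\|_2^2}{(k+1)^2},
\qquad
f(x_k) - f(x^*) \ge \frac{3L\,\|x_0 - x^*\|_2^2}{32\,(k+1)^2}.
$$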

![A Comparison of the two gradient methods [L. Vandenberghe, EE 236C Notes]](https://slidetodoc.com/presentation_image/40b22c6f504e89c880697f3a11c301fc/image-39.jpg)

A Comparison of the two gradient methods [L. Vandenberghe, EE 236C Notes]

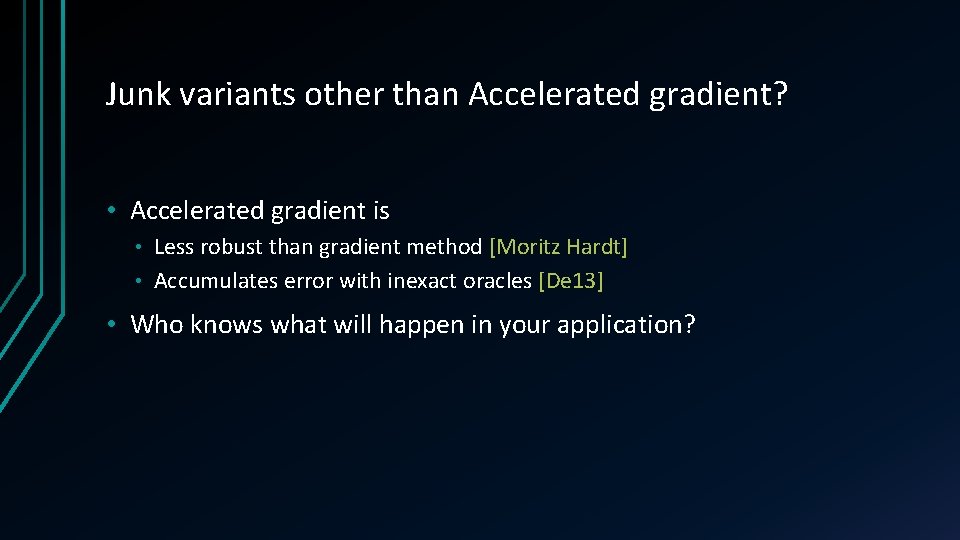

Junk variants other than accelerated gradient?

- Accelerated gradient:
  - Is less robust than the gradient method [Moritz Hardt]
  - Accumulates error with inexact oracles [De 13]
- Who knows what will happen in your application?

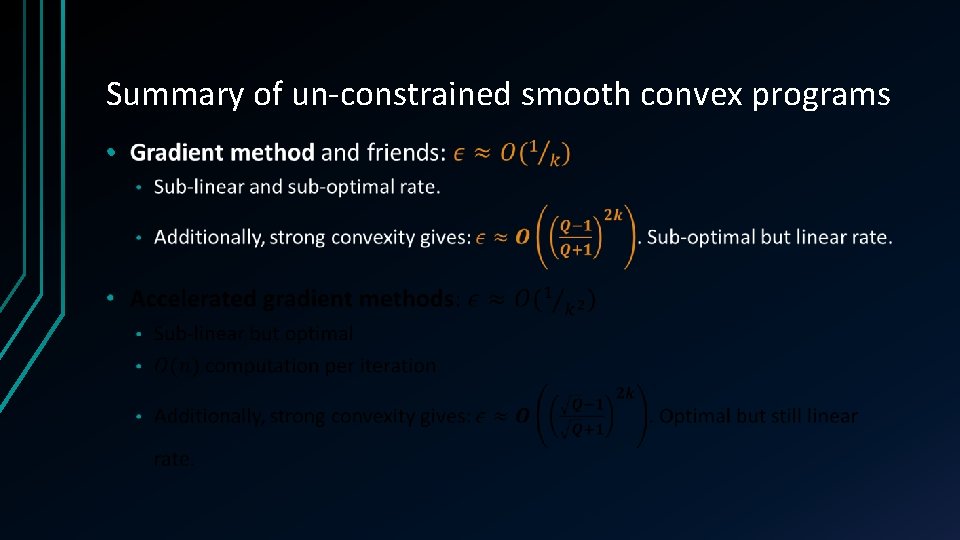

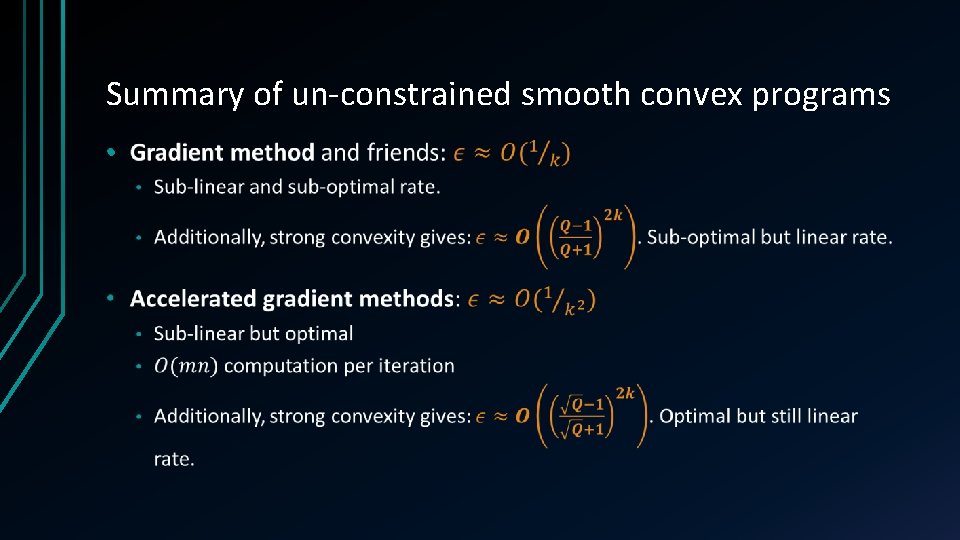

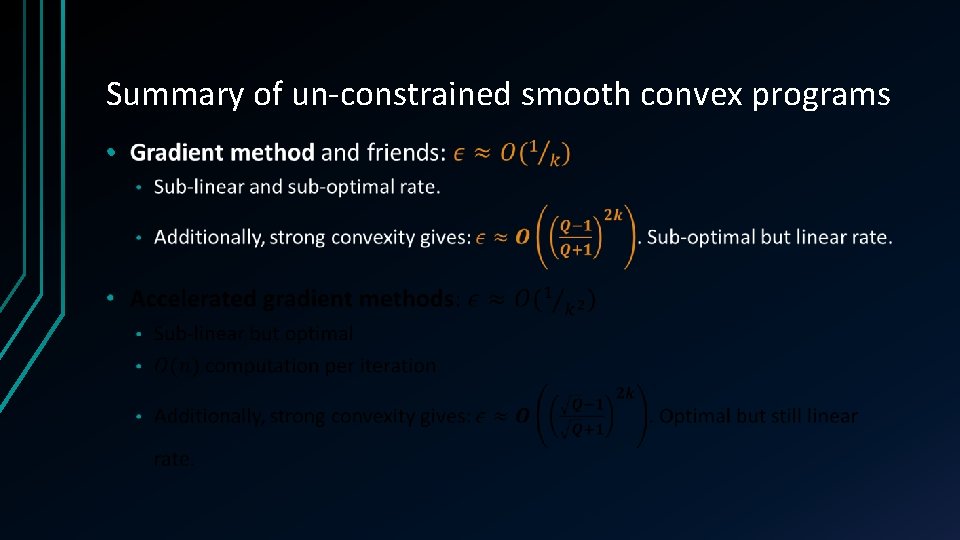

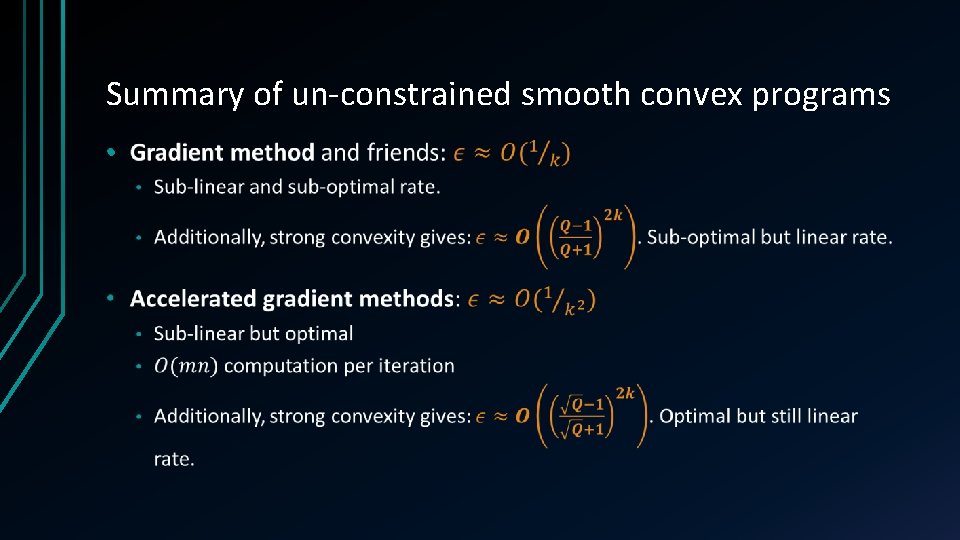

Summary of unconstrained smooth convex programs

Non-smooth unconstrained

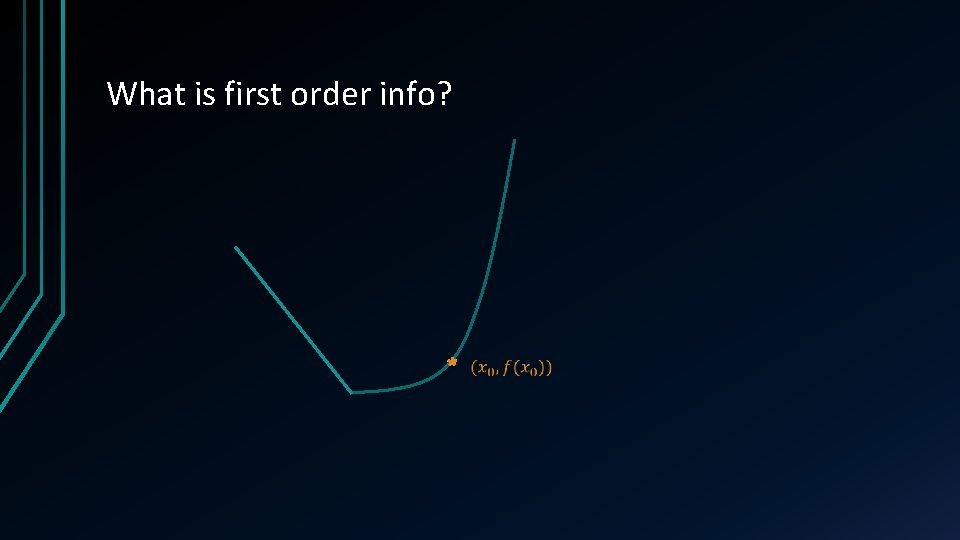

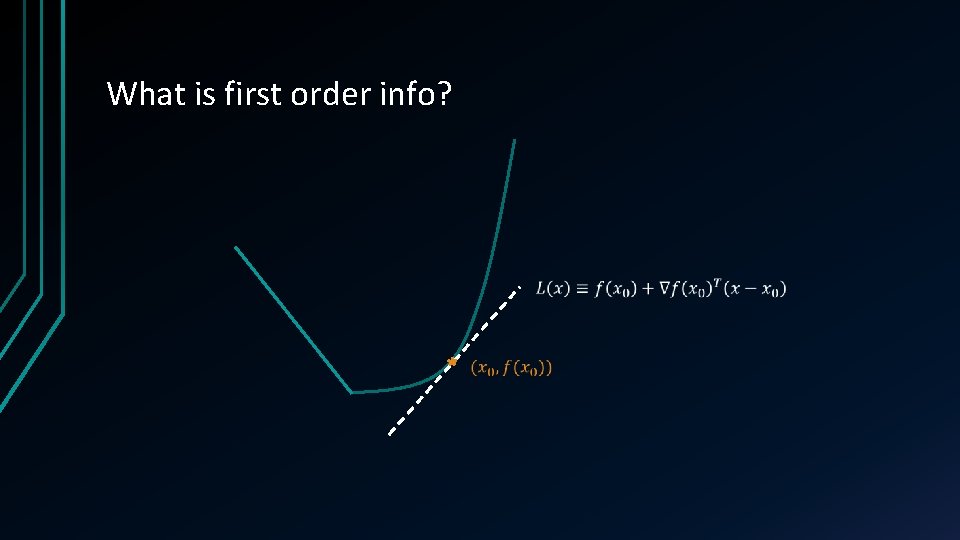

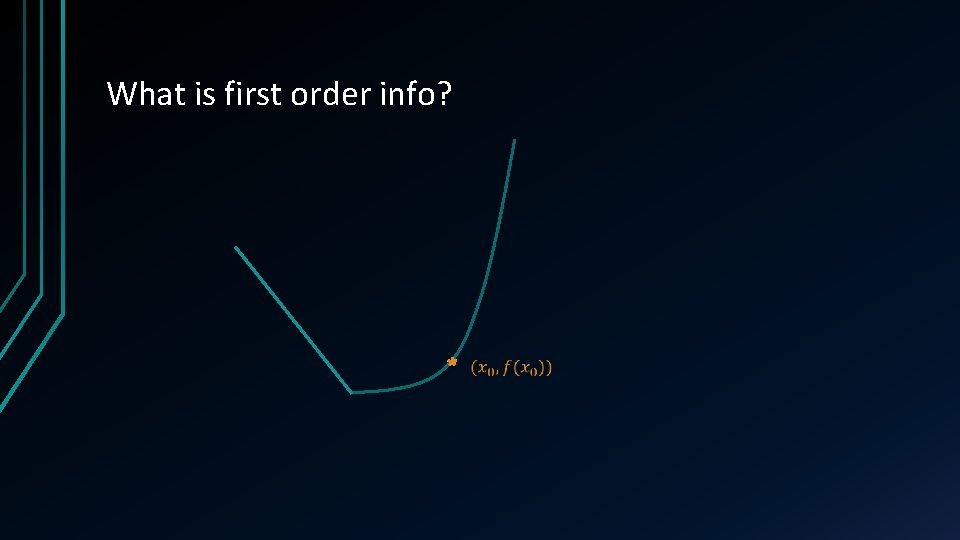

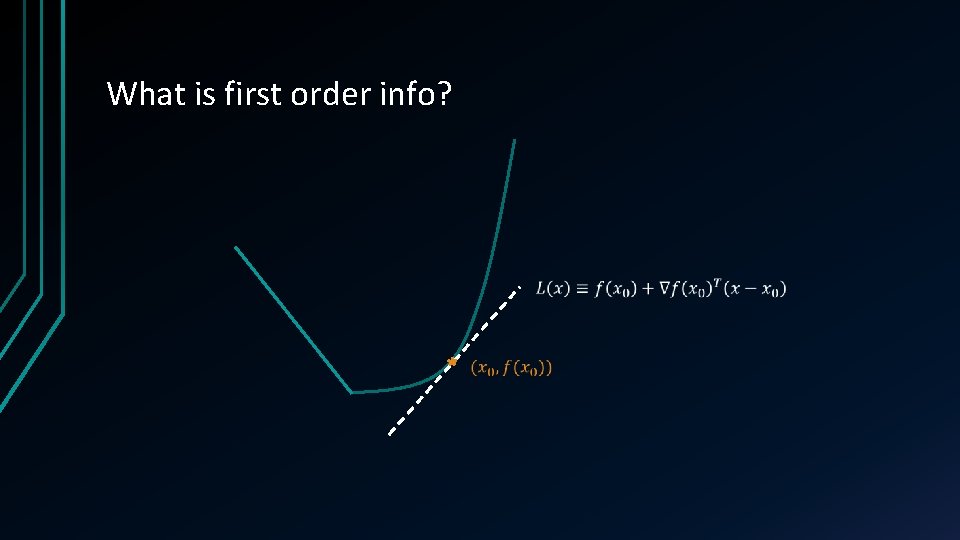

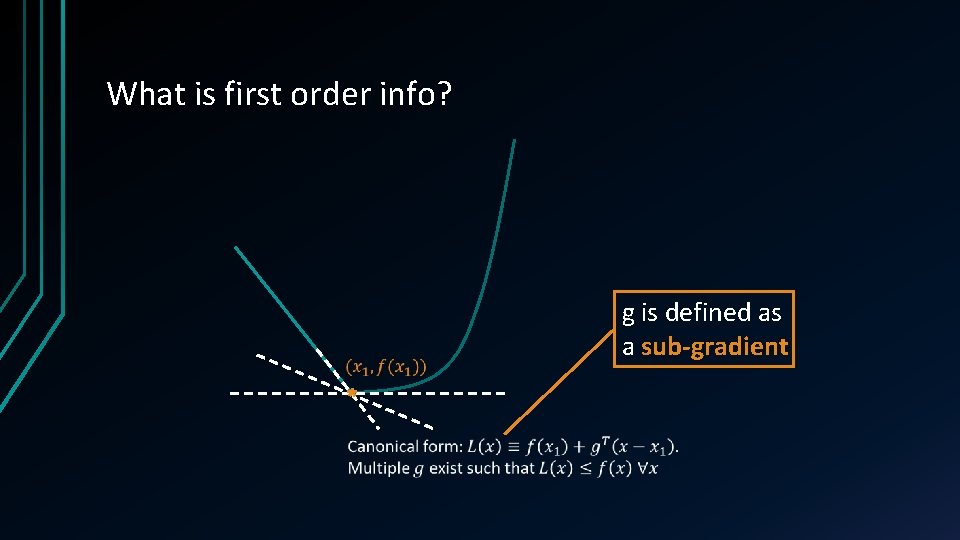

What is first order info?

What is first order info?

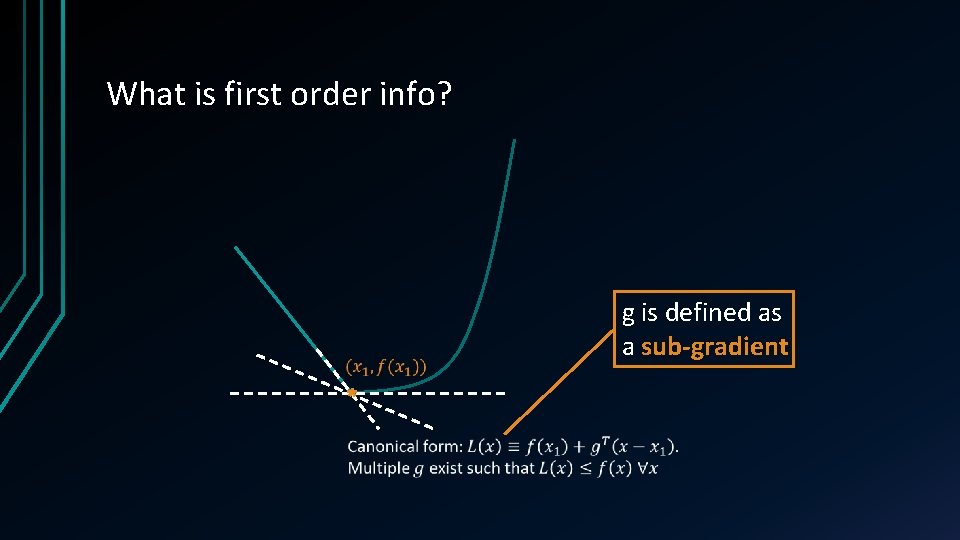

What is first order info? g is defined as a sub-gradient
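In the usual notation (assumed here), a vector $g$ is a sub-gradient of a convex function $f$ at $x$ when the linear function it defines lies below $f$ everywhere:

$$
f(y) \ge f(x) + g^\top (y - x) \quad \forall y.
$$

The set of all such $g$ is the sub-differential $\partial f(x)$. For example, for $f(x) = |x|$ we have $\partial f(0) = [-1, 1]$, while $\partial f(x) = \{\operatorname{sign}(x)\}$ for $x \ne 0$, where $f$ is differentiable.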

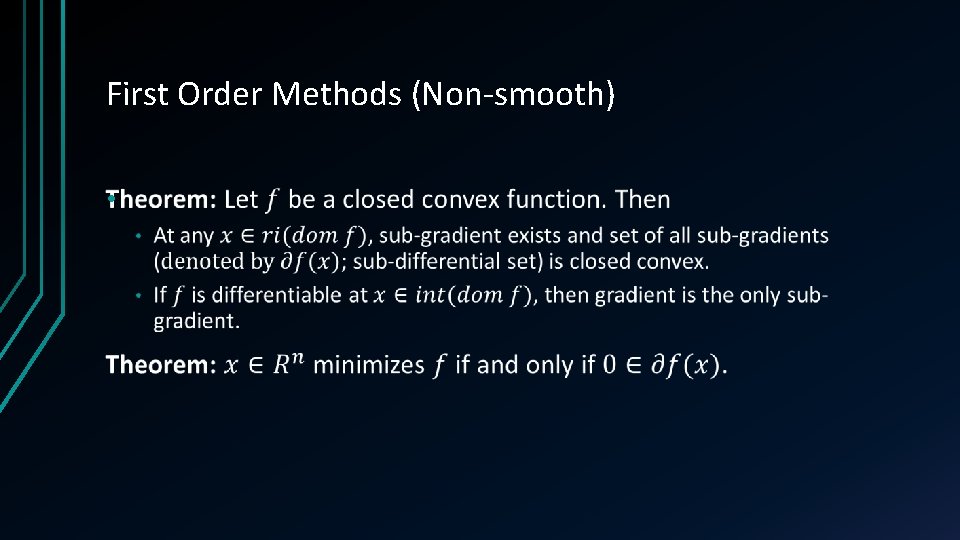

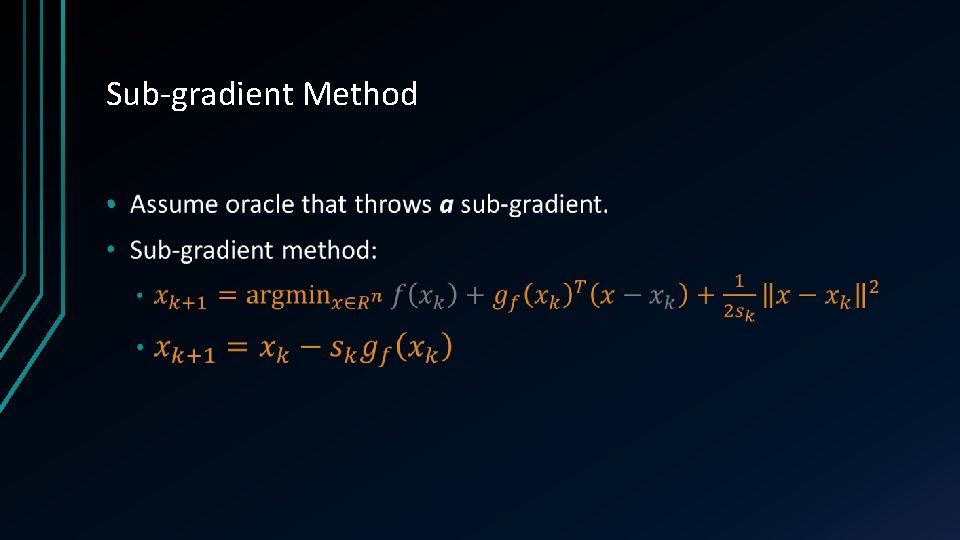

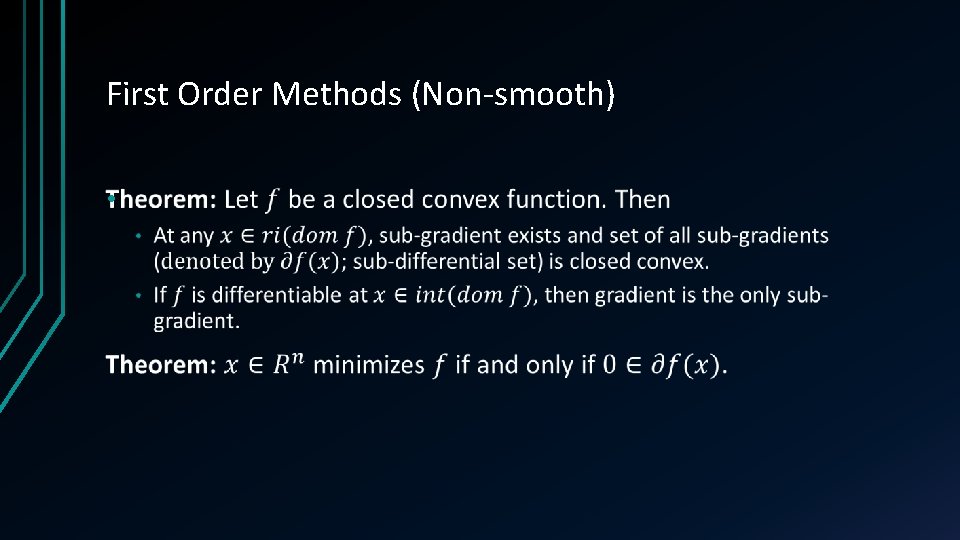

First Order Methods (Non-smooth)

Sub-gradient Method
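A minimal sketch of the sub-gradient method with a diminishing step-size. Since the method is not a descent method, the sketch tracks the best iterate seen so far. The name `subgradient_method`, the step rule $1/\sqrt{k}$, and the $\ell_1$ test problem are hypothetical choices for illustration.

```python
import numpy as np

def subgradient_method(f, subgrad, x0, step, num_iters=1000):
    """Iterate x_{k+1} = x_k - step(k) * g_k with g_k any sub-gradient at x_k."""
    x = np.asarray(x0, dtype=float)
    best_x, best_f = x.copy(), f(x)
    for k in range(1, num_iters + 1):
        x = x - step(k) * subgrad(x)
        fx = f(x)
        if fx < best_f:                  # keep the best point, since f(x_k) may go up
            best_x, best_f = x.copy(), fx
    return best_x, best_f

# Hypothetical test problem: f(x) = ||x - c||_1, minimized at x = c with optimal value 0.
c = np.array([1.0, -2.0, 0.5])
f = lambda x: np.abs(x - c).sum()
subgrad = lambda x: np.sign(x - c)       # a valid sub-gradient; 0 is allowed where x_i == c_i
best_x, best_f = subgradient_method(
    f, subgrad, np.zeros(3), step=lambda k: 1.0 / np.sqrt(k), num_iters=2000
)
```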

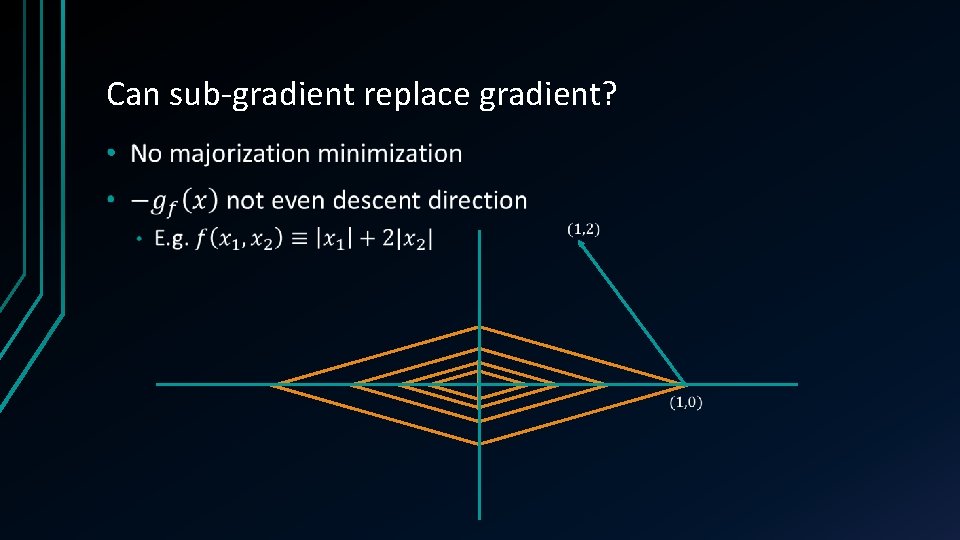

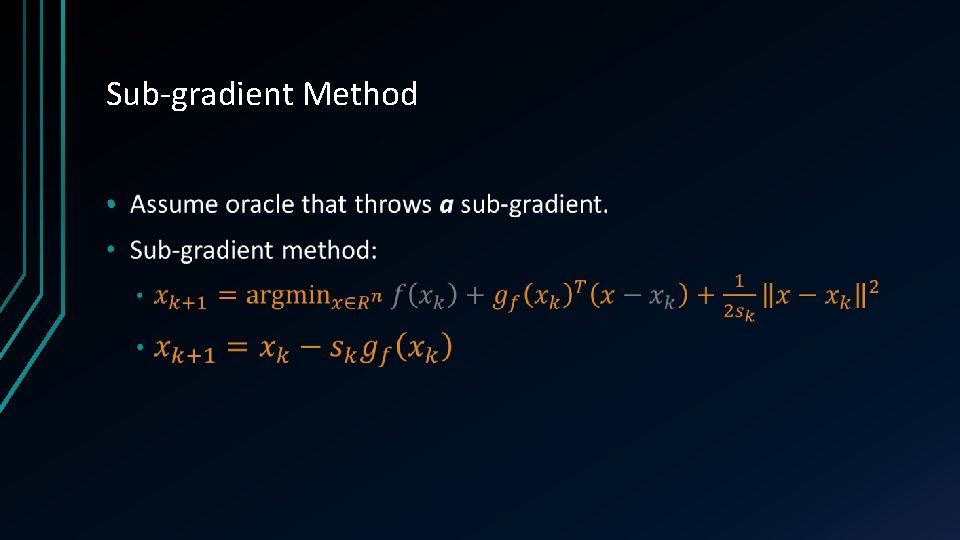

Can sub-gradient replace gradient?

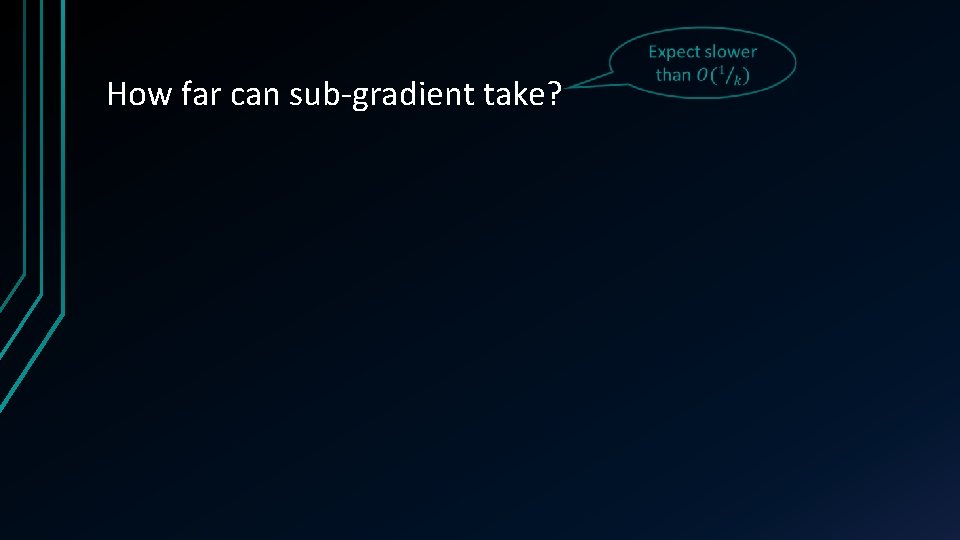

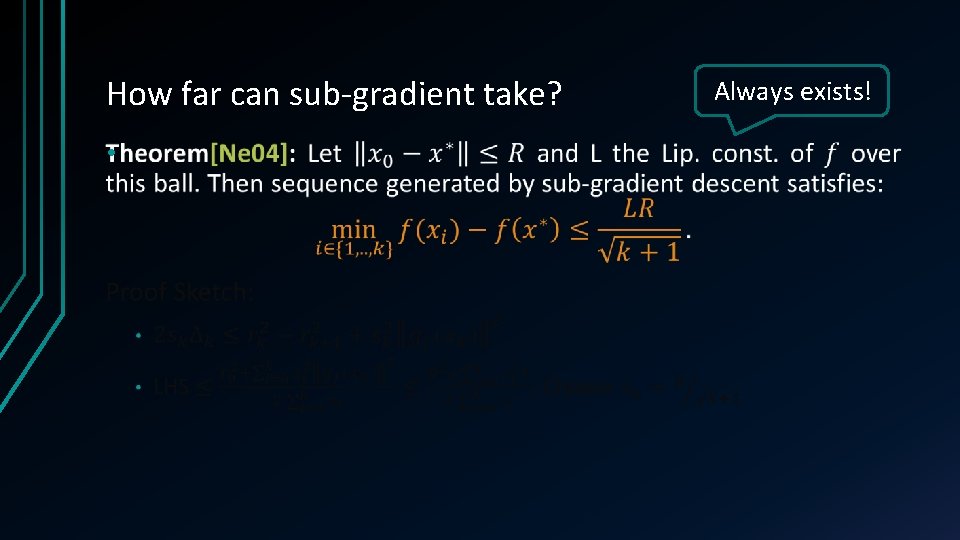

How far can sub-gradient take?

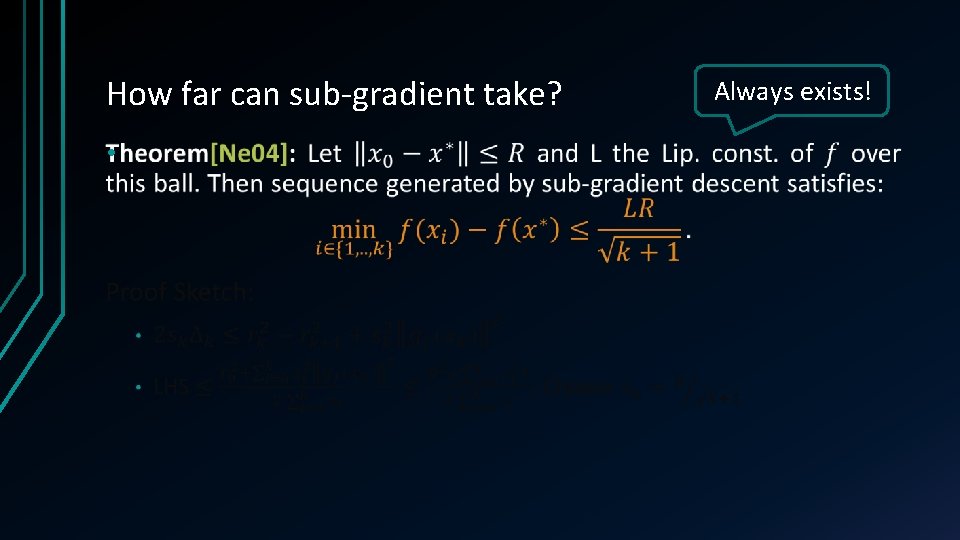

How far can sub-gradient take? • Always exists!

How far can sub-gradient take? •
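A sketch of the standard guarantee, assuming (in notation not taken from the deck) that all sub-gradients satisfy $\|g\|_2 \le G$, that the starting point satisfies $\|x_1 - x^*\|_2 \le R$, and that the step-sizes are $s_i > 0$:

$$
\min_{1 \le i \le k} f(x_i) - f(x^*) \le \frac{R^2 + G^2 \sum_{i=1}^{k} s_i^2}{2 \sum_{i=1}^{k} s_i}.
$$

Choosing the constant step-size $s_i = R / (G\sqrt{k})$ for a fixed horizon $k$ gives a bound of $RG/\sqrt{k}$, i.e. an $O(1/\sqrt{k})$ rate.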

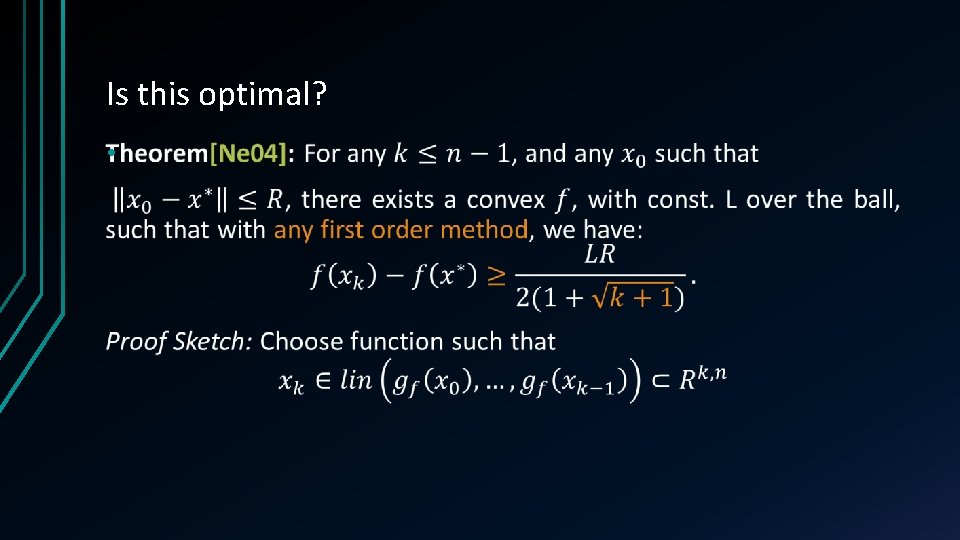

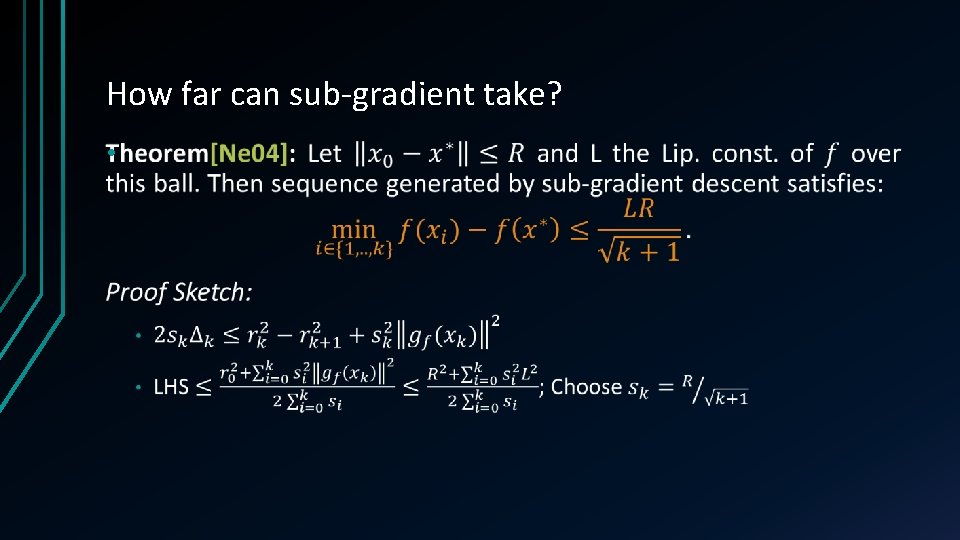

Is this optimal?

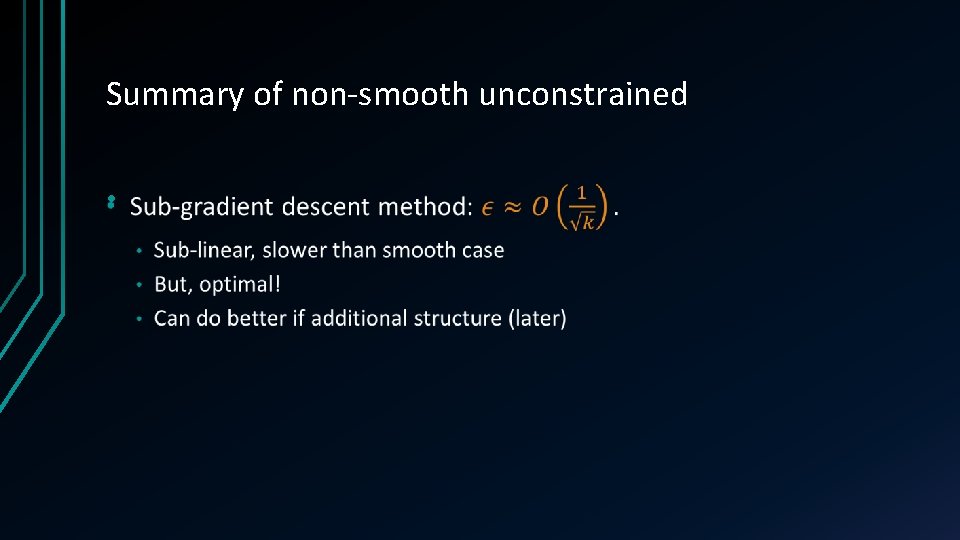

Summary of non-smooth unconstrained

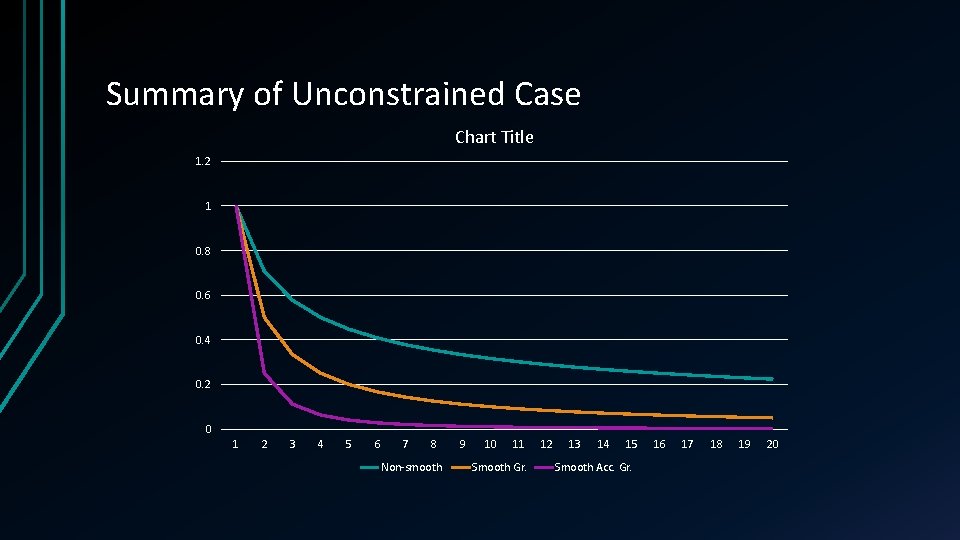

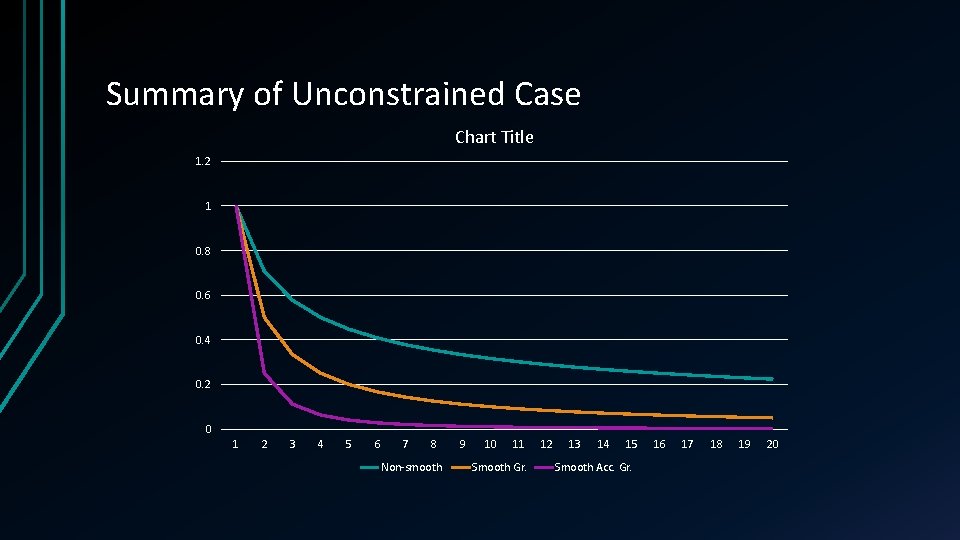

Summary of Unconstrained Case

[Chart: three series (Non-smooth, Smooth Gr., Smooth Acc. Gr.) plotted over 20 points on the horizontal axis; vertical axis from 0 to 1.2]

![Bibliography Ne 04 Nesterov Yurii Introductory lectures on convex optimization a basic Bibliography • [Ne 04] Nesterov, Yurii. Introductory lectures on convex optimization : a basic](https://slidetodoc.com/presentation_image/40b22c6f504e89c880697f3a11c301fc/image-56.jpg)

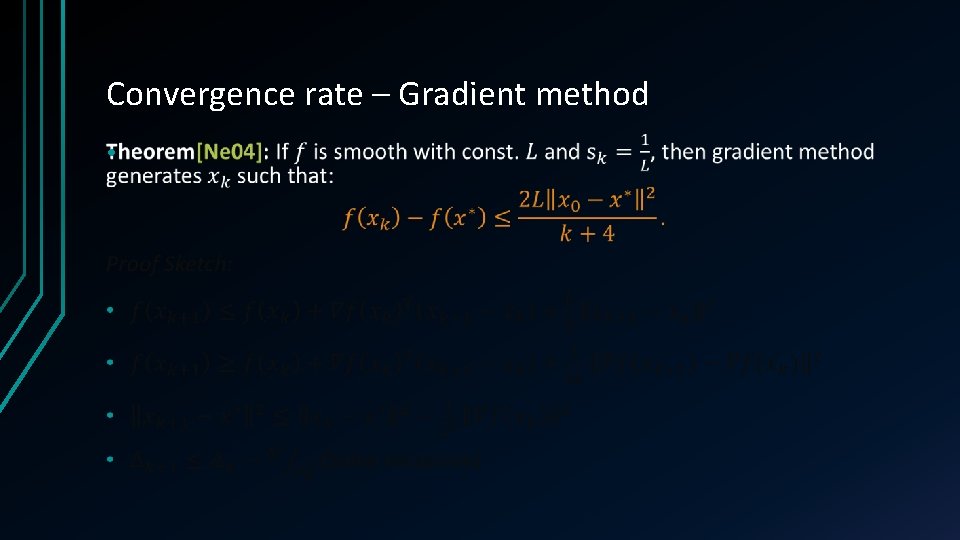

Bibliography • [Ne 04] Nesterov, Yurii. Introductory lectures on convex optimization : a basic course. Kluwer Academic Publ. , 2004. http: //hdl. handle. net/2078. 1/116858. • [Ne 83] Nesterov, Yurii. A method of solving a convex programming problem with convergence rate O (1/k 2). Soviet Mathematics Doklady, Vol. 27(2), 372 -376 pages. • [Mo 12] Moritz Hardt, Guy N. Rothblum and Rocco A. Servedio. Private data release via learning thresholds. SODA 2012, 168 -187 pages. • [Be 09] Amir Beck and Marc Teboulle. A fast iterative shrinkage-thresholding algorithm for linear inverse problems. SIAM Journal of Imaging Sciences, Vol. 2(1), 2009. 183 -202 pages. • [De 13] Olivier Devolder, François Glineur and Yurii Nesterov. First-order methods of smooth convex optimization with inexact oracle. Mathematical Programming 2013.