Fine-grained prediction of syntactic typology: Discovering latent structure with supervised learning

- Slides: 54

Fine-grained prediction of syntactic typology: Discovering latent structure with supervised learning

Dingquan Wang and Jason Eisner

Grammar Induction is Broken!

- This work tries to fix it!
  - Starting with syntactic typology induction
  - Just do supervised learning!
- Unsupervised methods (like EM)
  - Only locally optimal
  - Hard to harness linguistic knowledge & conventions
  - Unusable performance in practice

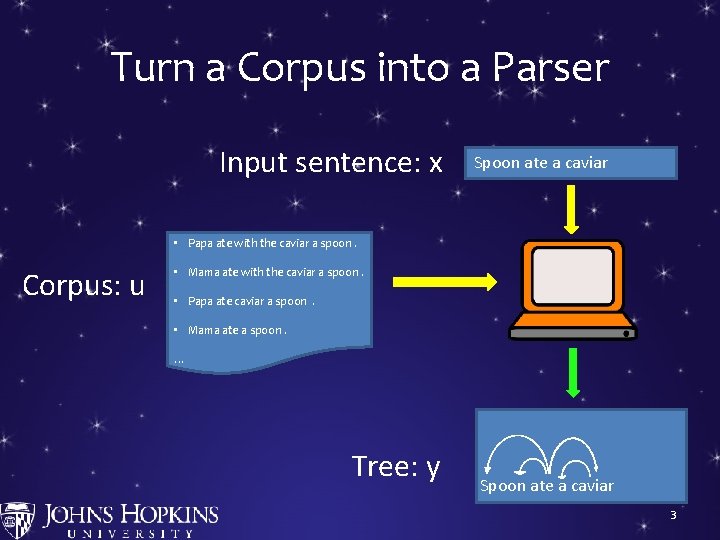

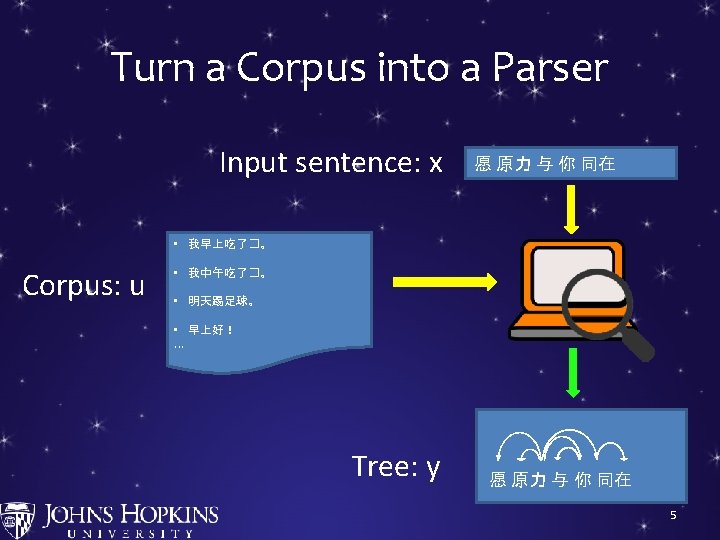

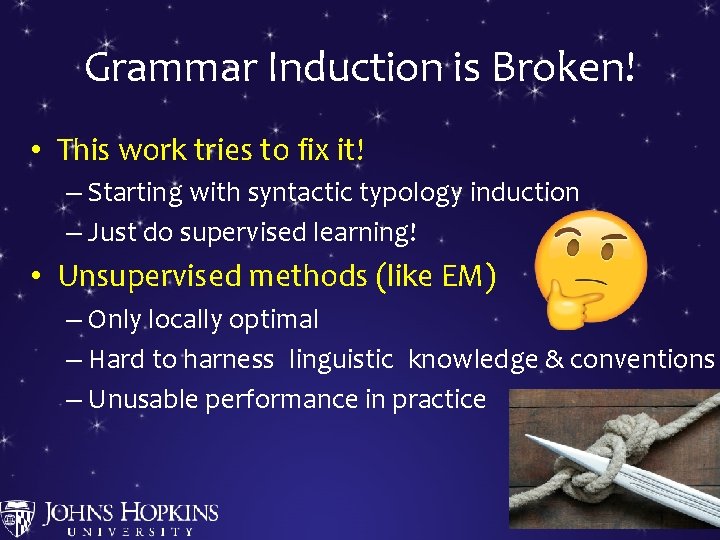

Turn a Corpus into a Parser

Corpus: u

- Papa ate with the caviar a spoon.
- Mama ate with the caviar a spoon.
- Papa ate caviar a spoon.
- Mama ate a spoon.
- …

Input sentence: x = "Spoon ate a caviar"

Tree: y (a parse of "Spoon ate a caviar")

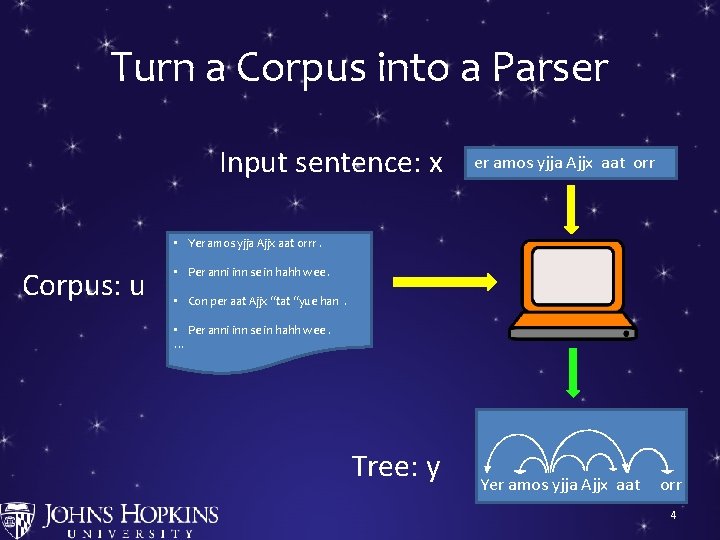

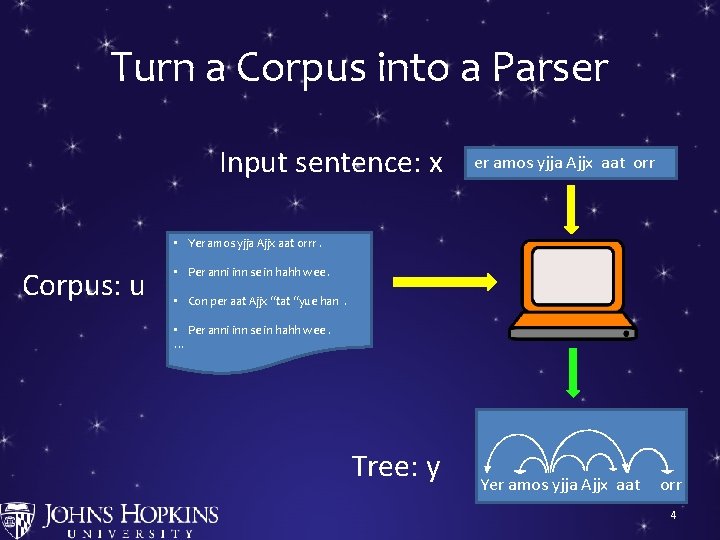

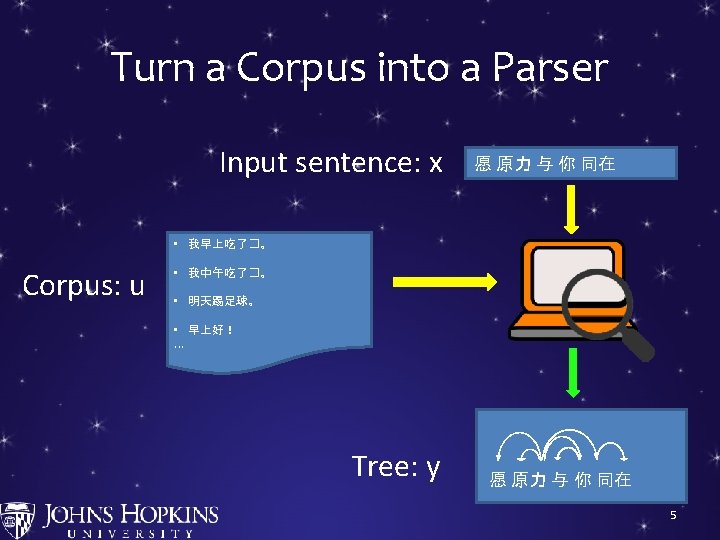

Turn a Corpus into a Parser

Corpus: u

- Yer amos yjja Ajjx aat orrr.
- Per anni inn se in hahh wee.
- Con per aat Ajjx “tat “yue han.
- Per anni inn se in hahh wee.
- …

Input sentence: x = "Yer amos yjja Ajjx aat orr"

Tree: y (a parse of "Yer amos yjja Ajjx aat orr")

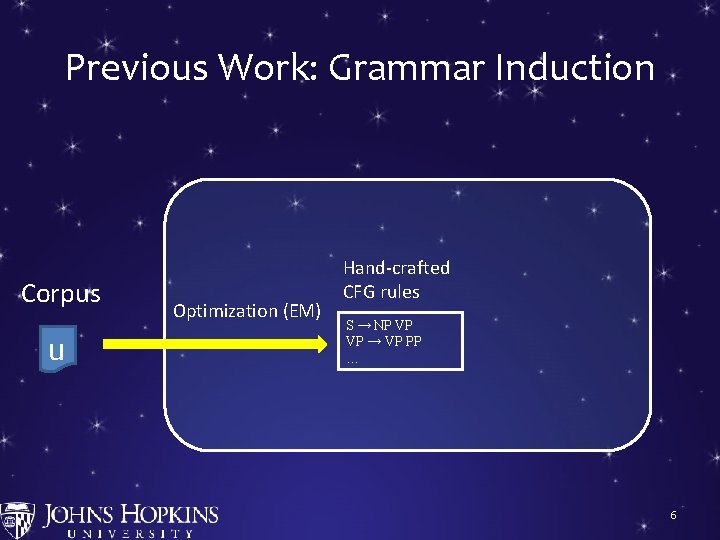

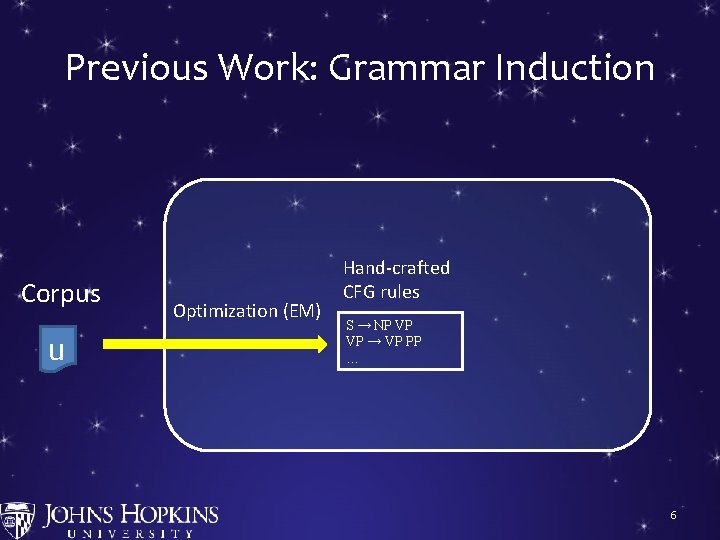

Previous Work: Grammar Induction

Diagram labels: Corpus u; Optimization (EM); hand-crafted CFG rules (S → NP VP, VP → VP PP, …).

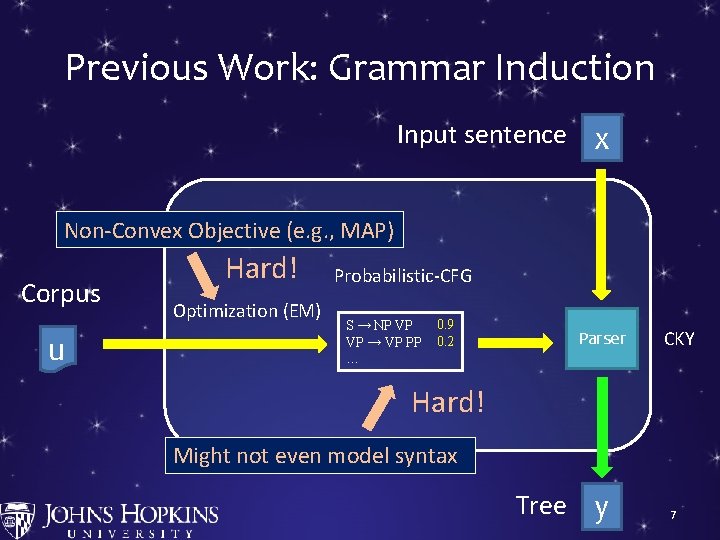

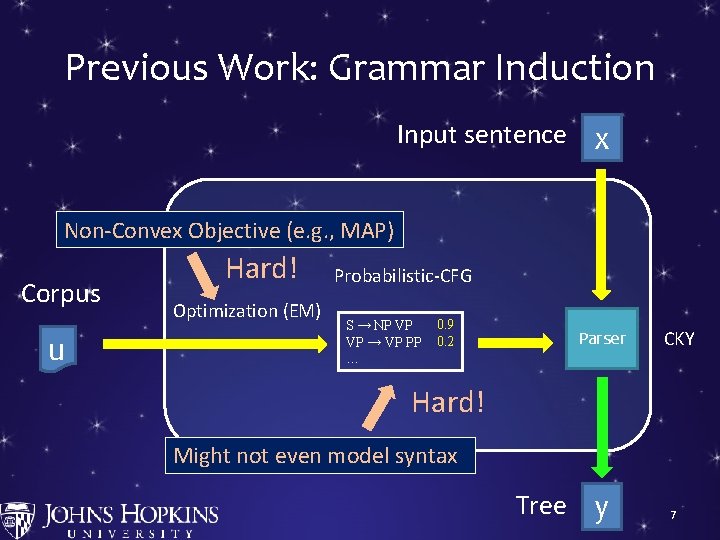

Previous Work: Grammar Induction

Diagram labels: Corpus u; Optimization (EM) of a non-convex objective (e.g., MAP), marked "Hard!"; probabilistic CFG (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2); Parser (CKY), marked "Hard!"; Input sentence x; Tree y. Annotation: "Might not even model syntax".

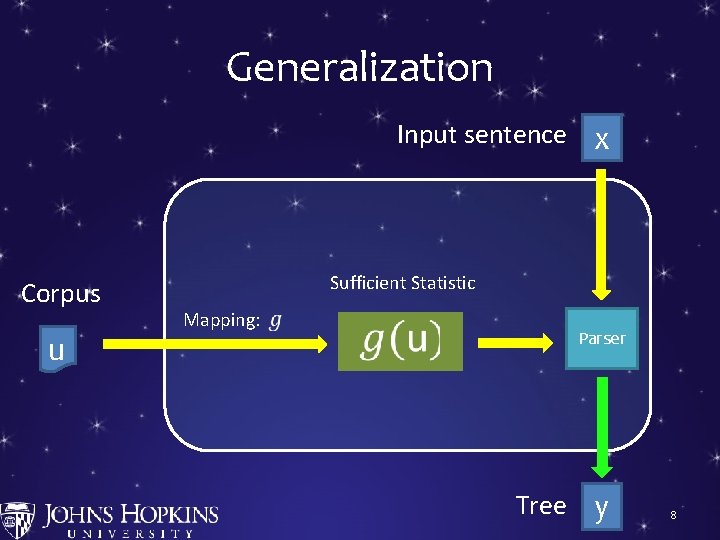

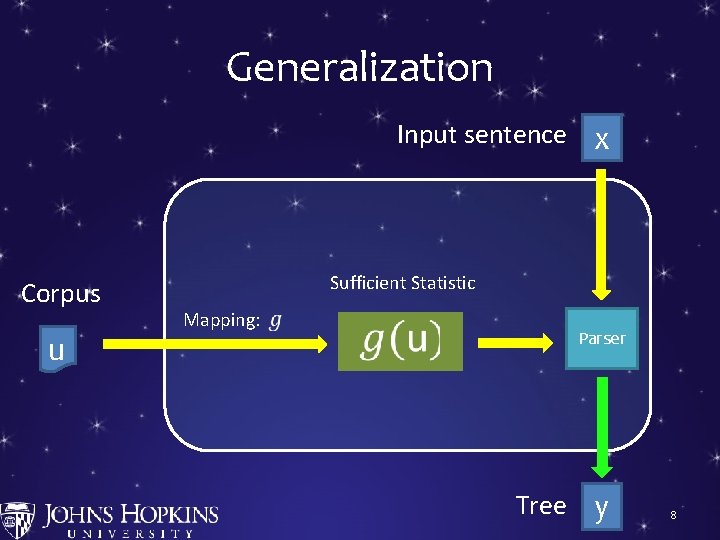

Generalization

Diagram labels: Corpus u; Sufficient Statistic; Mapping; grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2); Parser; Input sentence x; Tree y.

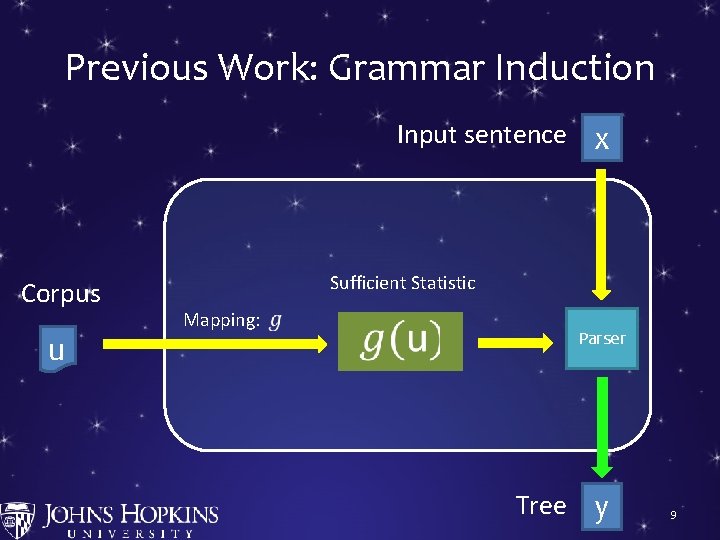

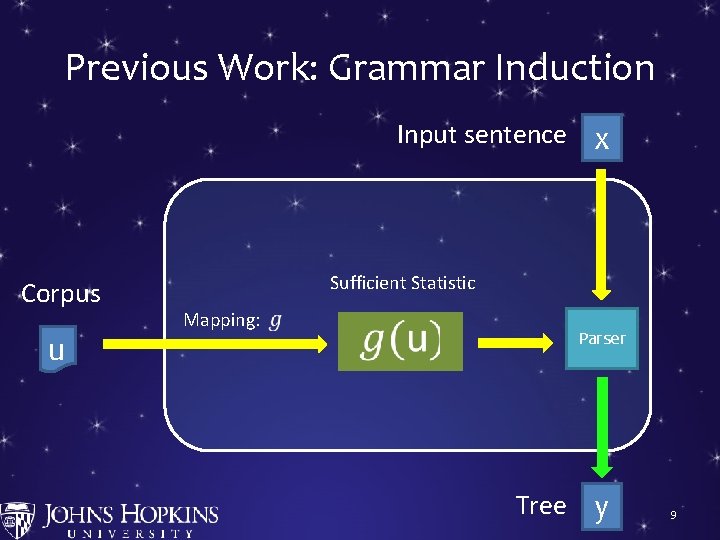

Previous Work: Grammar Induction

Diagram labels: Corpus u; Sufficient Statistic; Mapping; grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2); Parser; Input sentence x; Tree y.

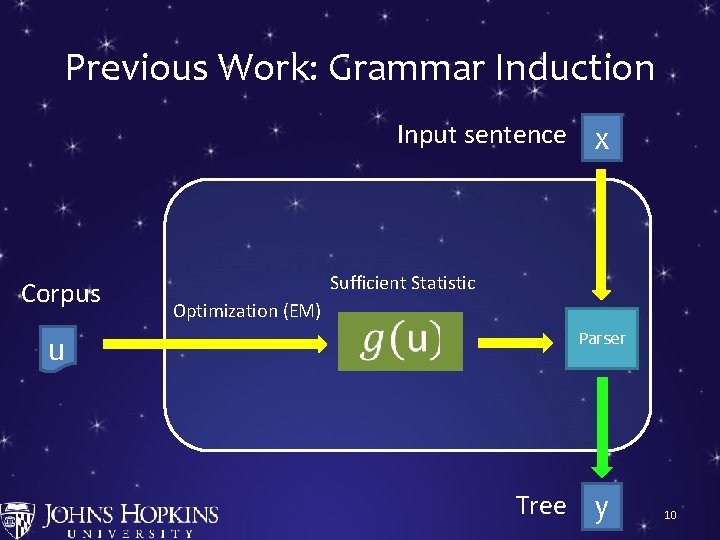

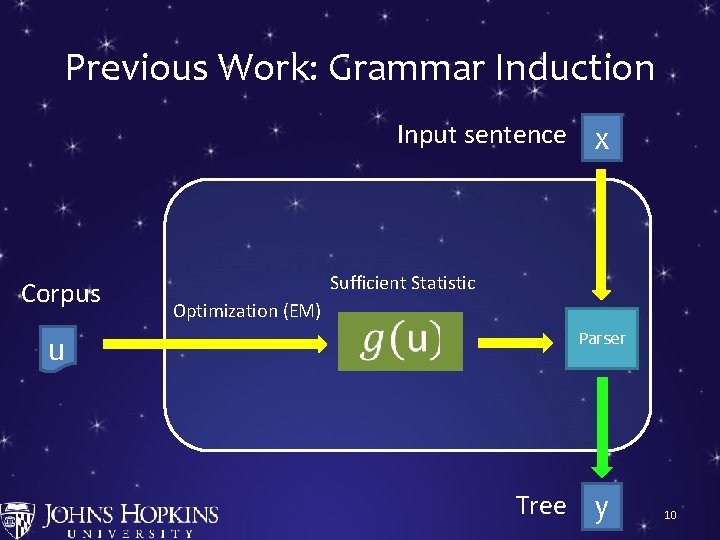

Previous Work: Grammar Induction

Diagram labels: Corpus u; Sufficient Statistic; Optimization (EM); grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2); Parser; Input sentence x; Tree y.

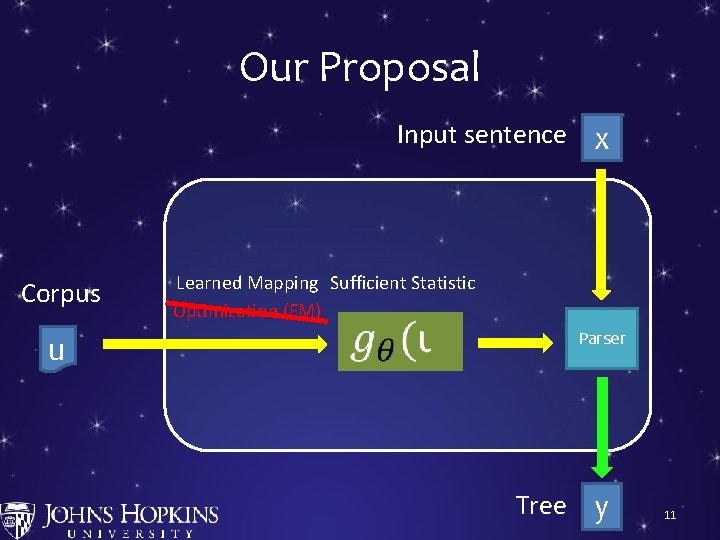

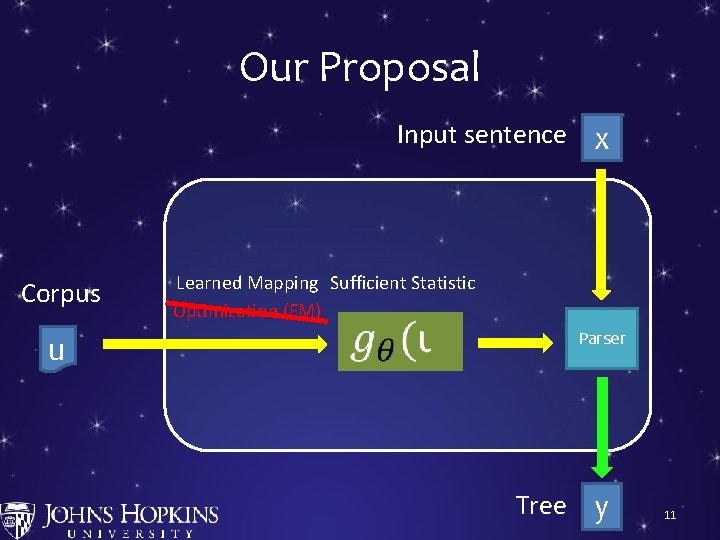

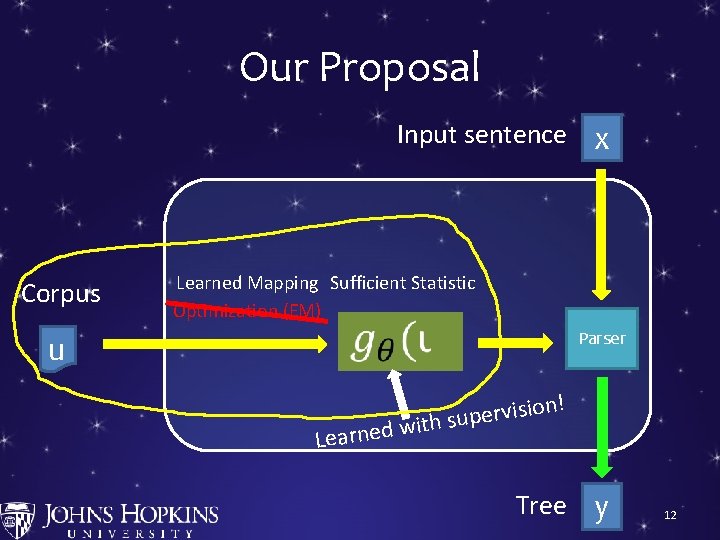

Our Proposal

Diagram labels: Corpus u; Sufficient Statistic; Learned Mapping; Optimization (EM); grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2); Parser; Input sentence x; Tree y.

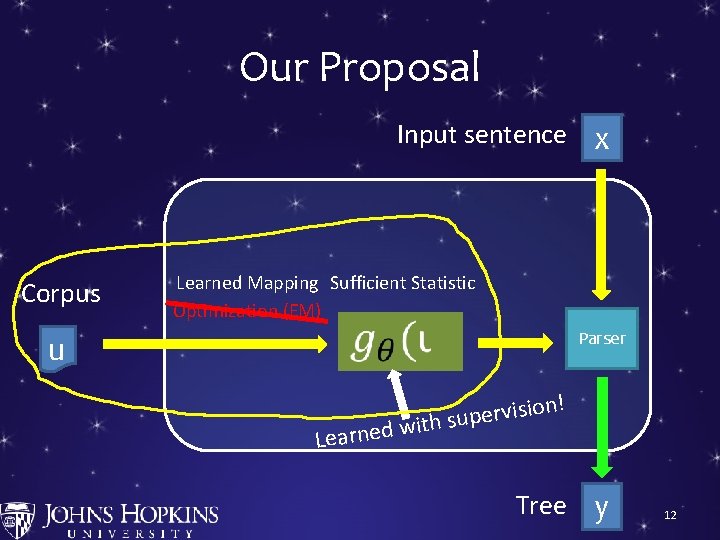

Our Proposal

Diagram labels: Corpus u; Sufficient Statistic; Learned Mapping; Optimization (EM); grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2); Parser; Input sentence x; Tree y. Annotation: "Learned with supervision!"

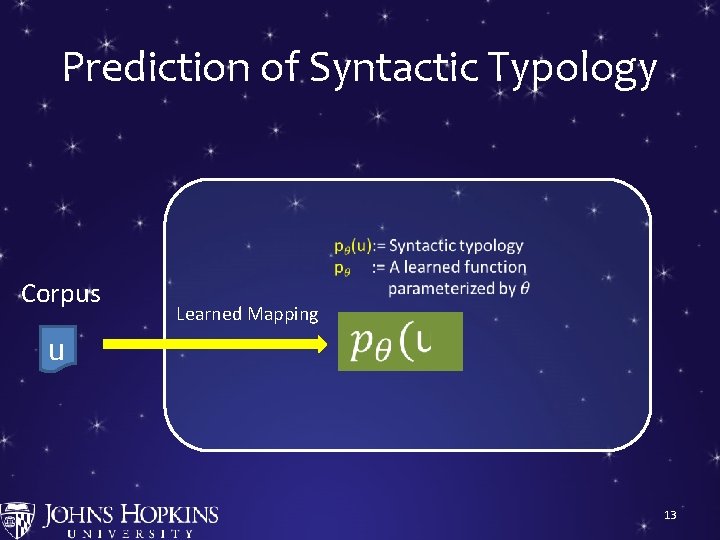

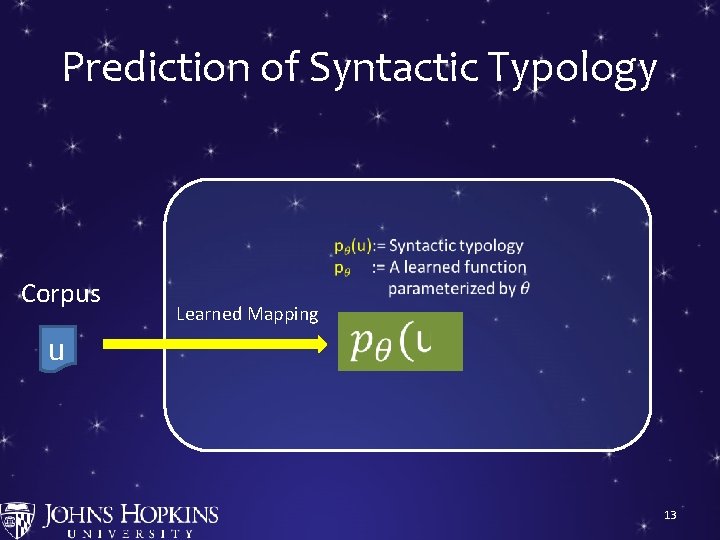

Prediction of Syntactic Typology

Diagram labels: Corpus u; Learned Mapping; grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2).

Syntactic Typology

- A set of word order facts of a language

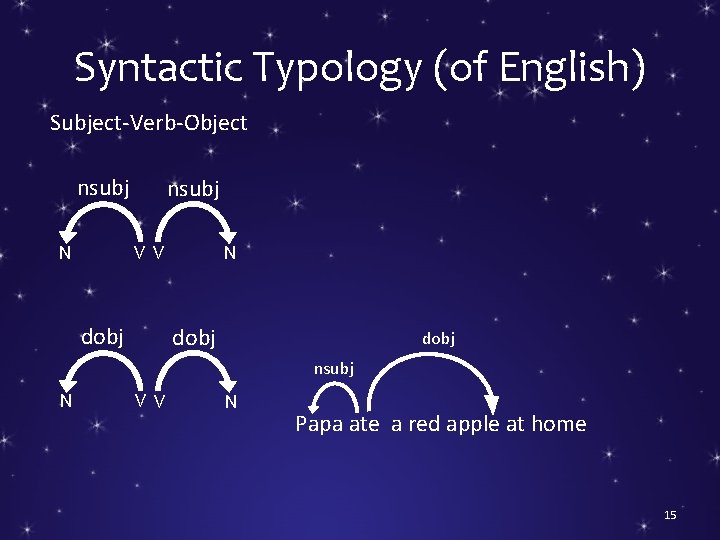

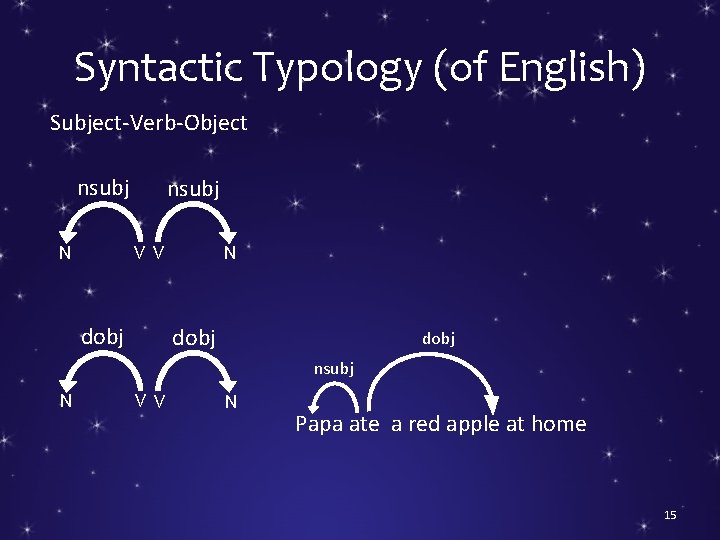

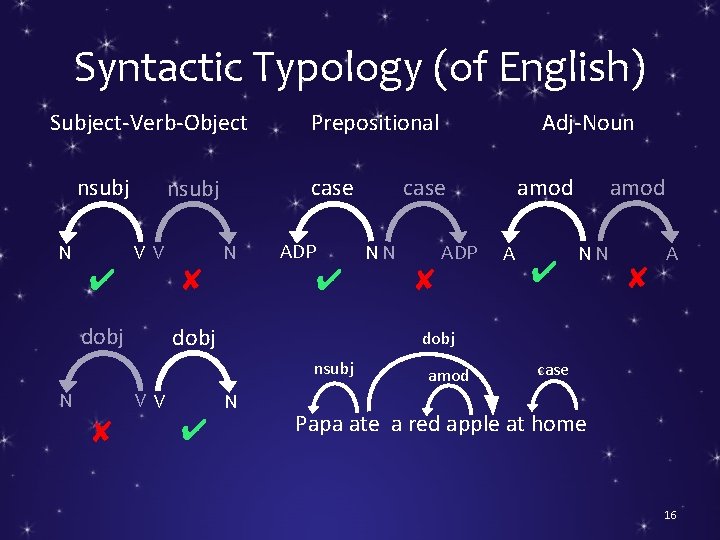

Syntactic Typology (of English)

Example sentence: Papa ate a red apple at home

- Subject-Verb-Object: nsubj orders N V or V N; dobj orders V N or N V

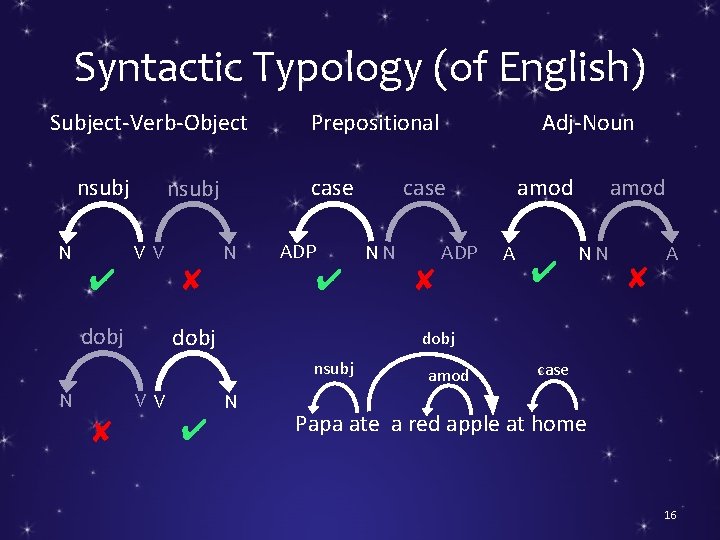

Syntactic Typology (of English)

Example sentence: Papa ate a red apple at home

- Subject-Verb-Object: nsubj N V ✔, V N ✘; dobj V N ✔, N V ✘
- Adj-Noun: amod A N ✔, N A ✘
- Prepositional: case ADP N ✔, N ADP ✘

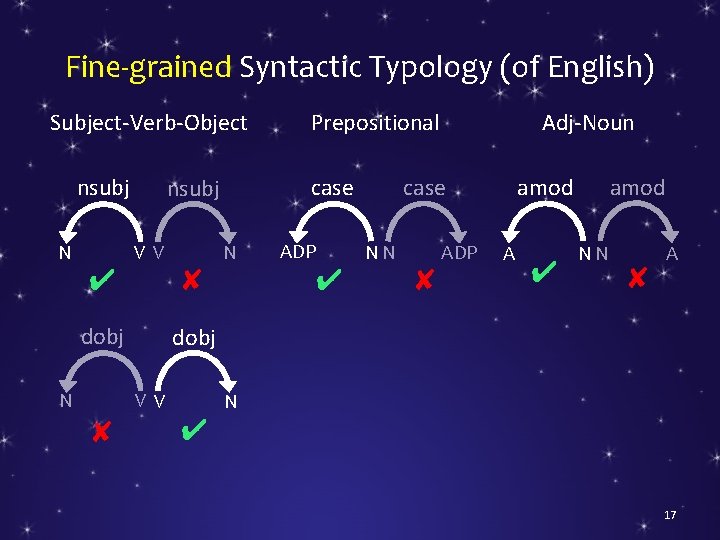

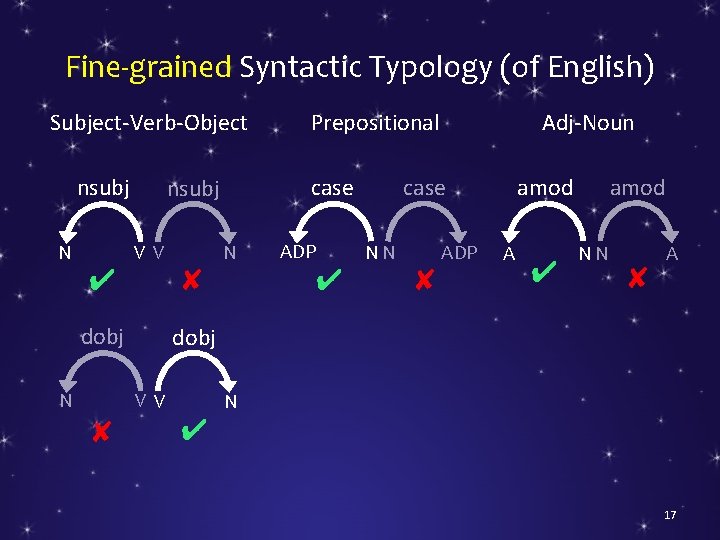

Fine-grained Syntactic Typology (of English)

- Subject-Verb-Object: nsubj N V ✔, V N ✘; dobj V N ✔, N V ✘
- Adj-Noun: amod A N ✔, N A ✘
- Prepositional: case ADP N ✔, N ADP ✘

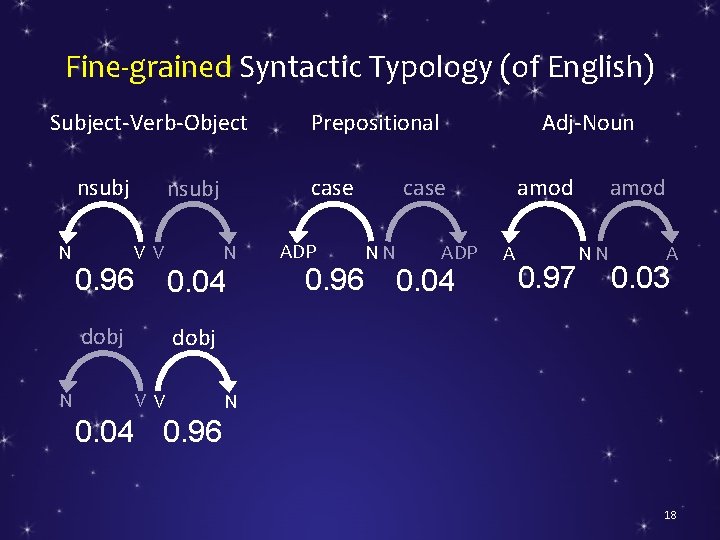

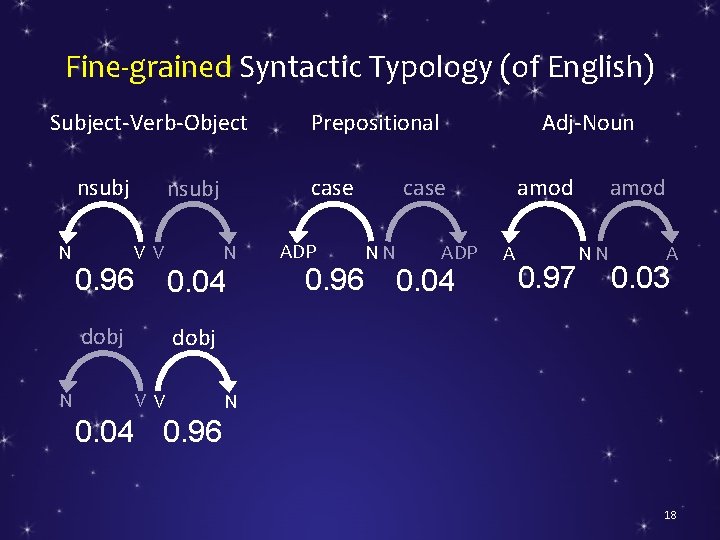

Fine-grained Syntactic Typology (of English)

- Subject-Verb-Object: nsubj N V 0.96, V N 0.04; dobj V N 0.96, N V 0.04
- Adj-Noun: amod A N 0.97, N A 0.03
- Prepositional: case ADP N 0.96, N ADP 0.04

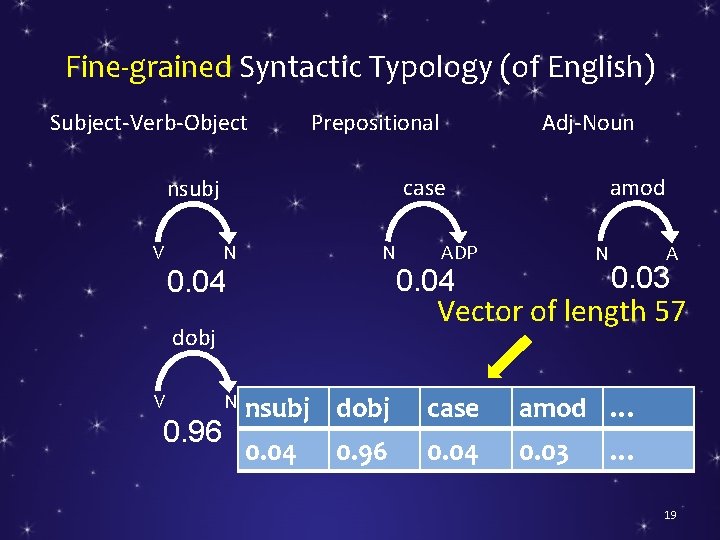

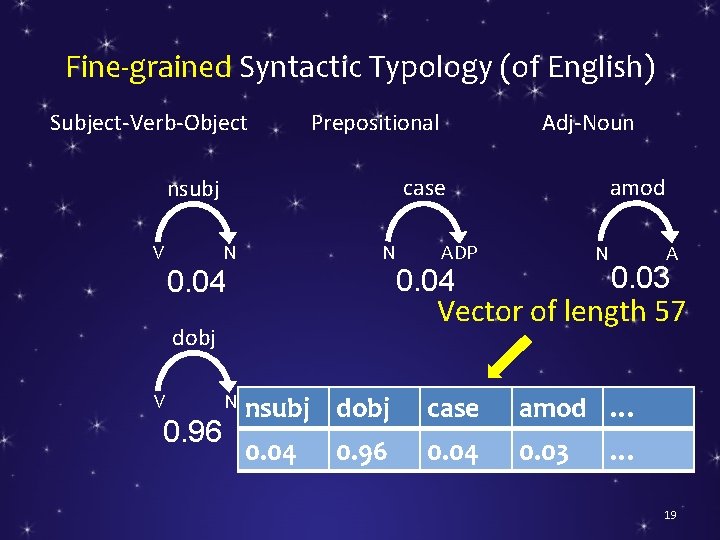

Fine-grained Syntactic Typology (of English)

Word-order facts: Subject-Verb-Object, Adj-Noun, Prepositional

Vector of length 57 (nsubj, dobj, case, amod, …): 0.04, 0.96, 0.04, 0.03, …

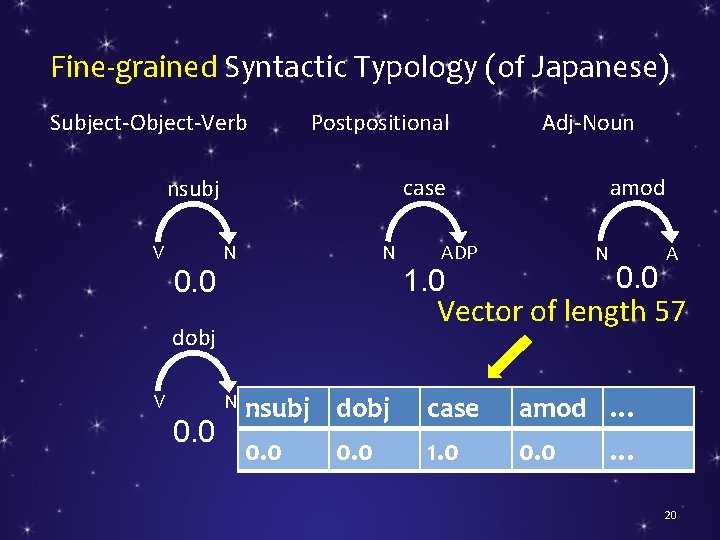

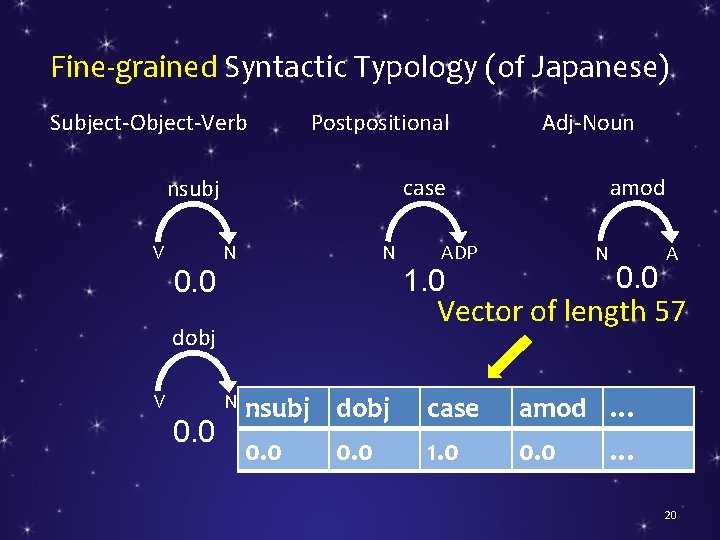

Fine-grained Syntactic Typology (of Japanese)

Word-order facts: Subject-Object-Verb, Adj-Noun, Postpositional

Vector of length 57 (nsubj, dobj, case, amod, …): 0.0, 1.0, 0.0, …

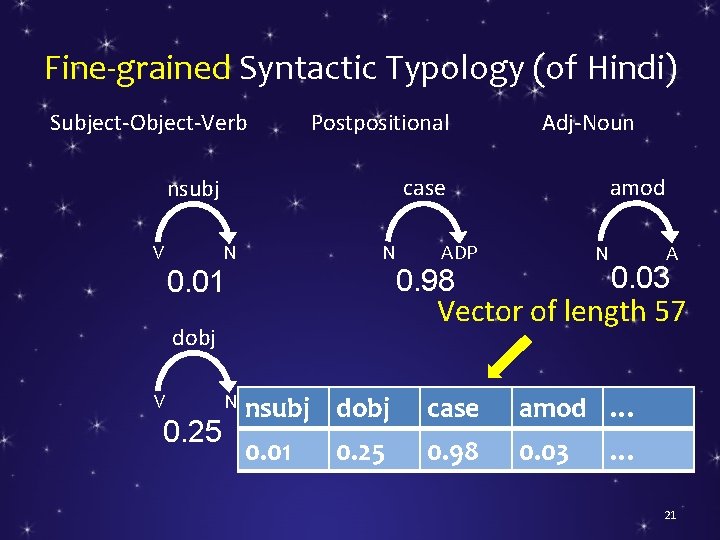

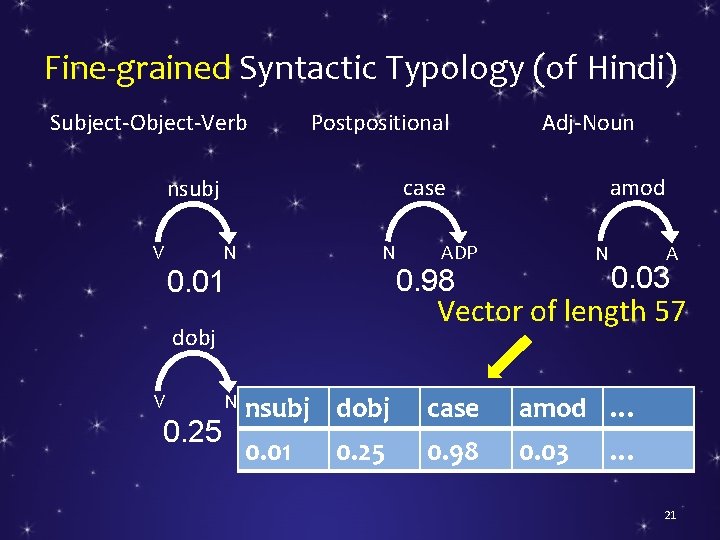

Fine-grained Syntactic Typology (of Hindi)

Word-order facts: Subject-Object-Verb, Adj-Noun, Postpositional

Vector of length 57 (nsubj, dobj, case, amod, …): 0.01, 0.25, 0.98, 0.03, …

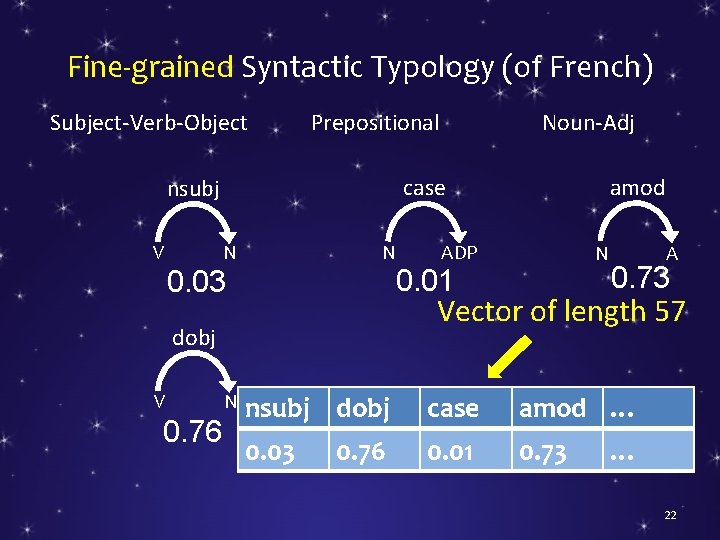

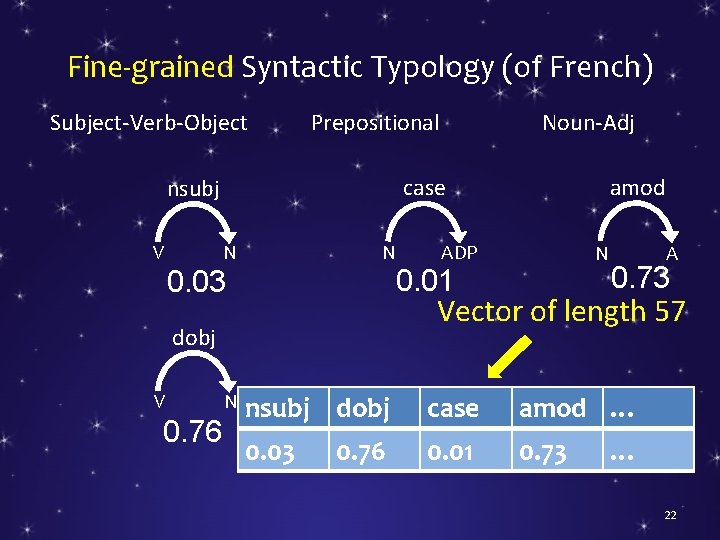

Fine-grained Syntactic Typology (of French)

Word-order facts: Subject-Verb-Object, Noun-Adj, Prepositional

Vector of length 57 (nsubj, dobj, case, amod, …): 0.03, 0.76, 0.01, 0.73, …

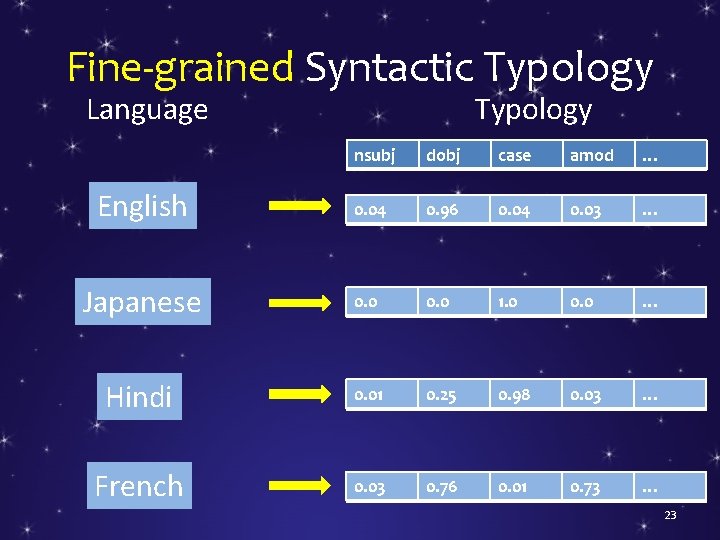

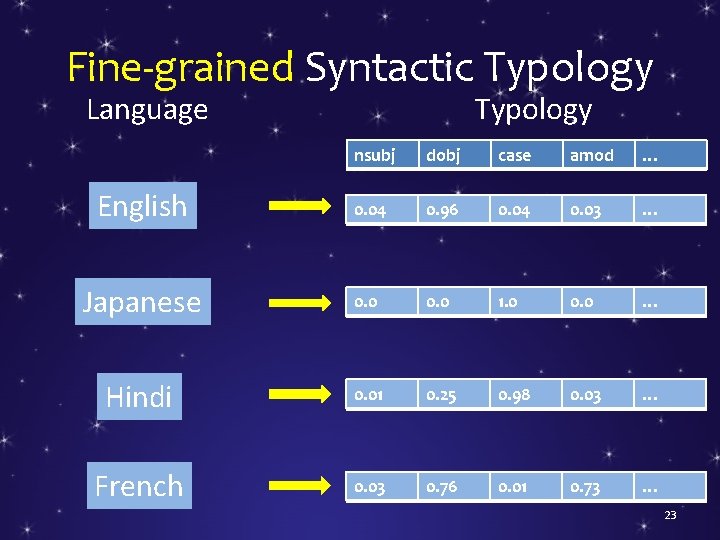

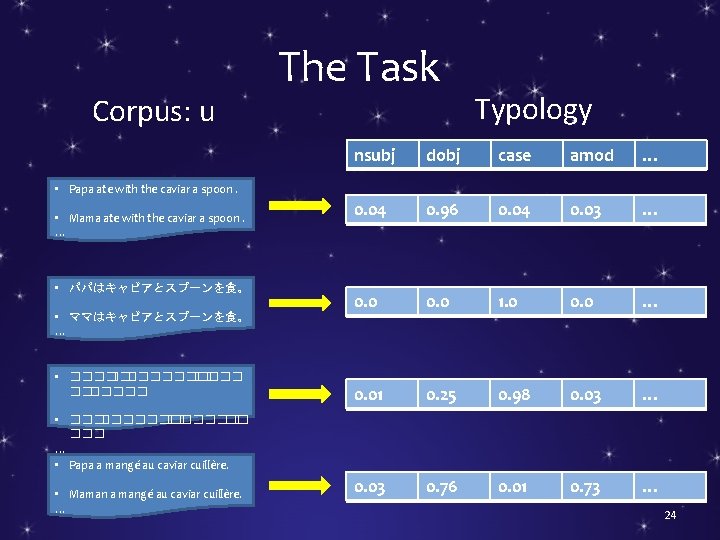

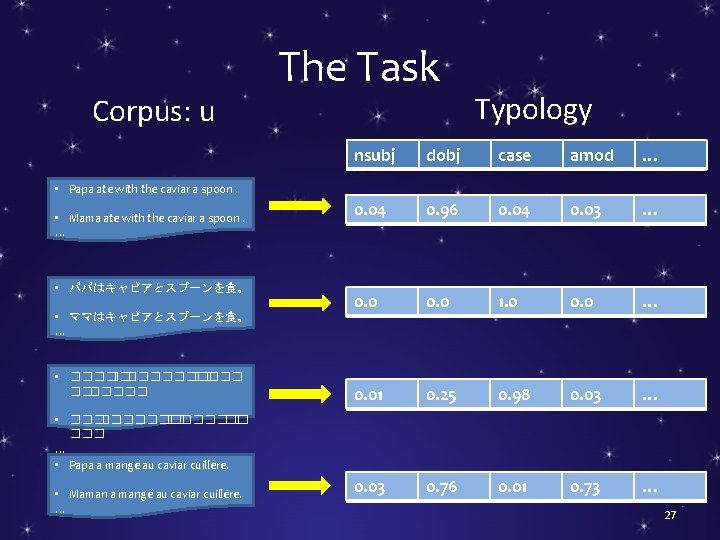

Fine-grained Syntactic Typology

Typology per language, with entries in the order nsubj, dobj, case, amod, …:

- English: 0.04, 0.96, 0.04, 0.03, …
- Japanese: 0.0, 1.0, 0.0, …
- Hindi: 0.01, 0.25, 0.98, 0.03, …
- French: 0.03, 0.76, 0.01, 0.73, …
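A minimal sketch of how such a typology vector could be counted from a treebank. It assumes each entry is the fraction of a relation's tokens whose dependent follows its head, which is consistent with the English row (objects mostly follow the verb, subjects mostly precede it). The function name `directionality` and the `(head, relation)` sentence encoding are hypothetical and chosen only for illustration.

```python
from collections import Counter

def directionality(treebank):
    """Per relation, the fraction of tokens whose dependent follows its head.

    `treebank` is a list of sentences. Each sentence is a list of
    (head_index, relation) pairs, one per token in surface order, with
    1-based head indices and 0 for the root. This encoding is an
    illustrative assumption, not a standard treebank format.
    """
    right = Counter()
    total = Counter()
    for sentence in treebank:
        for position, (head, relation) in enumerate(sentence, start=1):
            if head == 0:            # the root has no head, so no direction
                continue
            total[relation] += 1
            if position > head:      # dependent stands to the right of its head
                right[relation] += 1
    return {rel: right[rel] / total[rel] for rel in total}

# Toy one-sentence treebank for "Papa ate a red apple".
toy = [[(2, "nsubj"), (0, "root"), (5, "det"), (5, "amod"), (2, "dobj")]]
typology = directionality(toy)
```

On this toy input the subject and adjective precede their heads and the object follows its head, so the counts are all-or-nothing; a real treebank would give fractional values like those on the slide.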

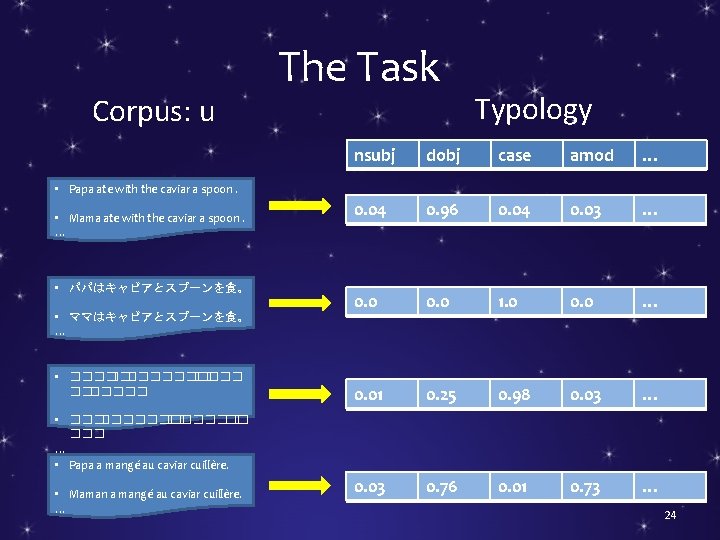

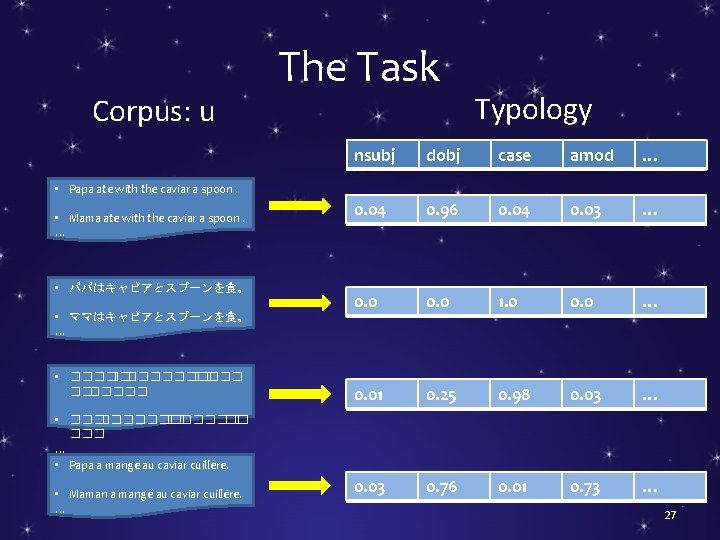

The Task

Predict the typology (nsubj, dobj, case, amod, …) from the corpus u:

- Corpus: Papa ate with the caviar a spoon. Mama ate with the caviar a spoon. … Typology: 0.04, 0.96, 0.04, 0.03, …
- Corpus: パパはキャビアとスプーンを食。 ママはキャビアとスプーンを食。 … Typology: 0.0, 1.0, 0.0, …
- Corpus: [text lost in extraction] Typology: 0.01, 0.25, 0.98, 0.03, …
- Corpus: Papa a mangé au caviar cuillère. Maman a mangé au caviar cuillère. … Typology: 0.03, 0.76, 0.01, 0.73, …

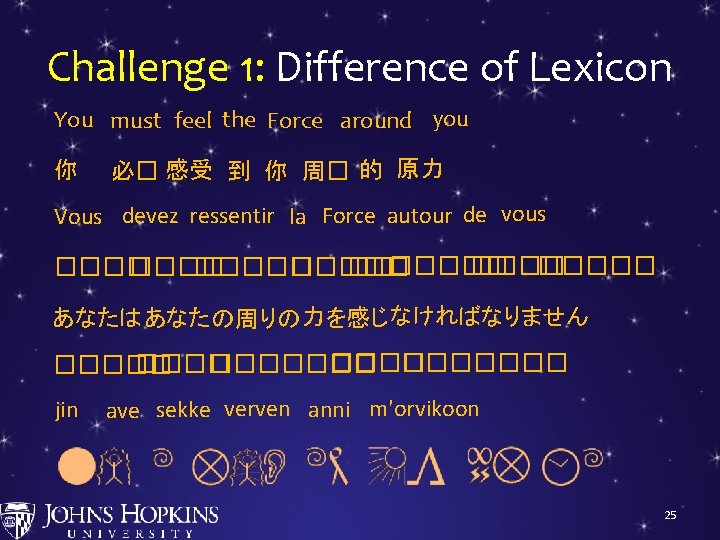

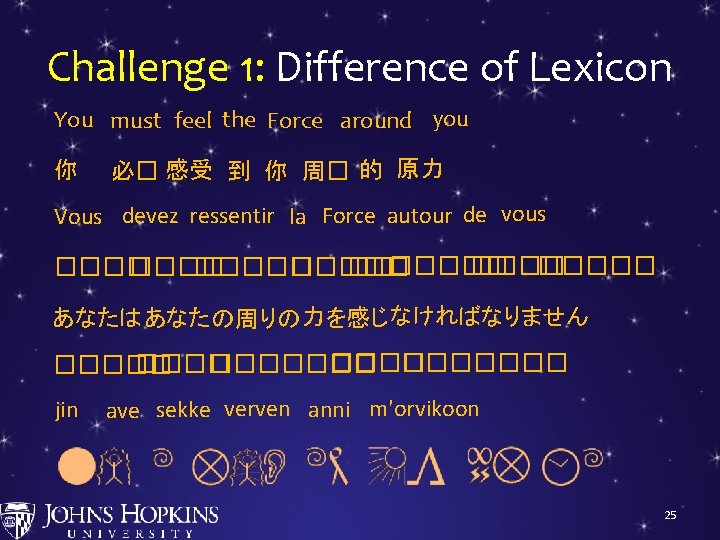

Challenge 1: Difference of Lexicon

- You must feel the Force around you
- 你 必须 感受 到 你 周围 的 原力
- Vous devez ressentir la Force autour de vous
- [text lost in extraction]
- あなたは あなた の周りの力を感じ なければなりません
- [text lost in extraction]
- jin ave sekke verven anni m'orvikoon

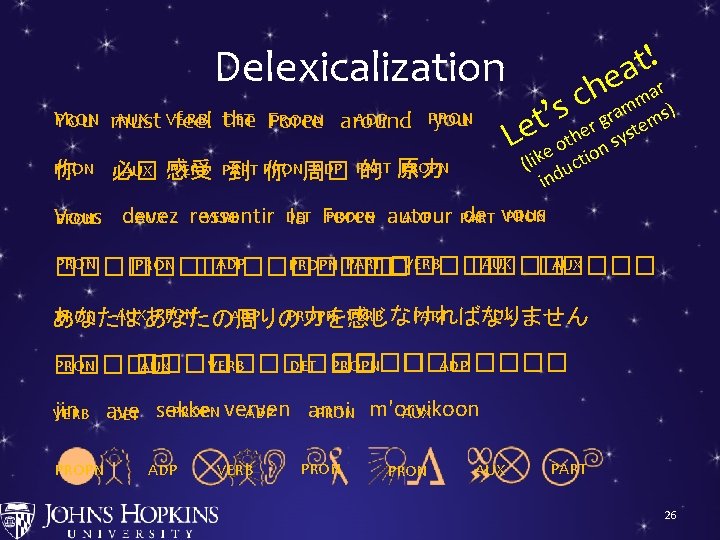

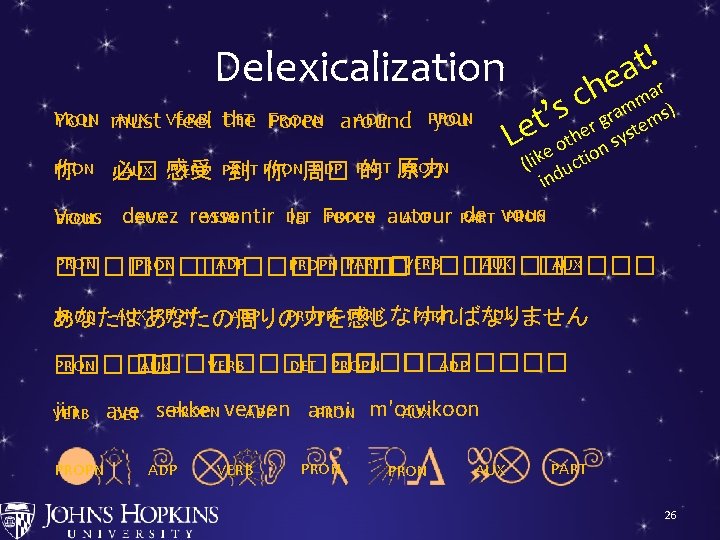

Delexicalization

Each translation of "You must feel the Force around you" is annotated with POS tags (PRON, AUX, VERB, DET, PROPN, ADP, PART). For the English sentence the tags are PRON AUX VERB DET PROPN ADP PRON.

Let's cheat! (like other grammar induction systems)
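Delexicalization can be sketched as dropping the word from each (word, tag) pair. The helper name `delexicalize` and the list-of-pairs input are assumptions made for this example; the slides do not specify a data format.

```python
def delexicalize(tagged_corpus):
    """Keep only the POS tag of every (word, tag) pair in every sentence."""
    return [[tag for _word, tag in sentence] for sentence in tagged_corpus]

# The English example sentence from the slide, with its POS tags.
tagged = [[("You", "PRON"), ("must", "AUX"), ("feel", "VERB"), ("the", "DET"),
           ("Force", "PROPN"), ("around", "ADP"), ("you", "PRON")]]
tags_only = delexicalize(tagged)
```

After this step, sentences from different languages share one small vocabulary of tags, which is what lets a single predictor be applied across languages.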


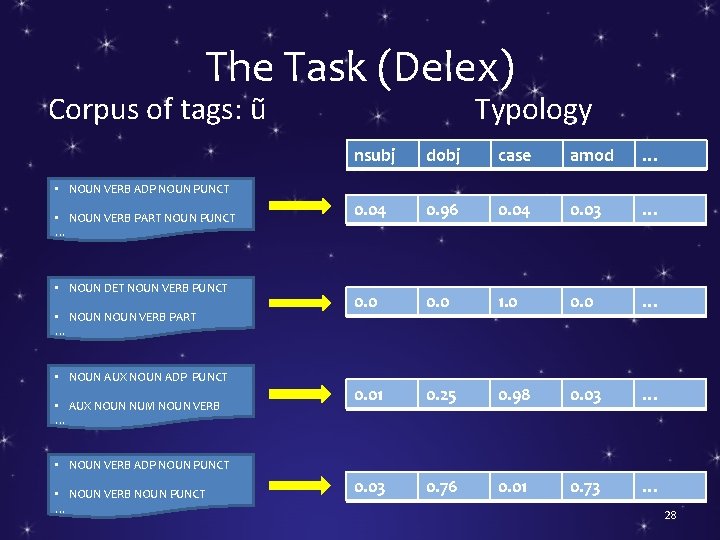

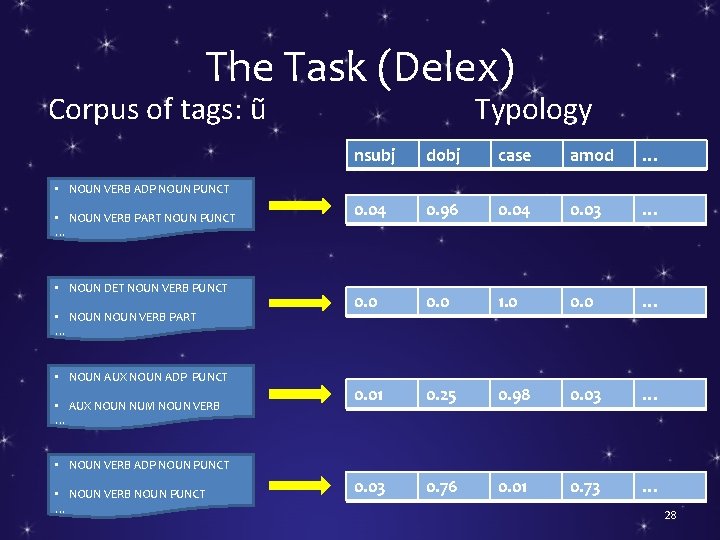

The Task (Delex)

Predict the typology (nsubj, dobj, case, amod, …) from the corpus of tags ũ:

- Corpus of tags: NOUN VERB ADP NOUN PUNCT; NOUN VERB PART NOUN PUNCT; … Typology: 0.04, 0.96, 0.04, 0.03, …
- Corpus of tags: NOUN DET NOUN VERB PUNCT; NOUN VERB PART; … Typology: 0.0, 1.0, 0.0, …
- Corpus of tags: NOUN AUX NOUN ADP PUNCT; AUX NOUN NUM NOUN VERB; … Typology: 0.01, 0.25, 0.98, 0.03, …
- Corpus of tags: NOUN VERB ADP NOUN PUNCT; NOUN VERB NOUN PUNCT; … Typology: 0.03, 0.76, 0.01, 0.73, …

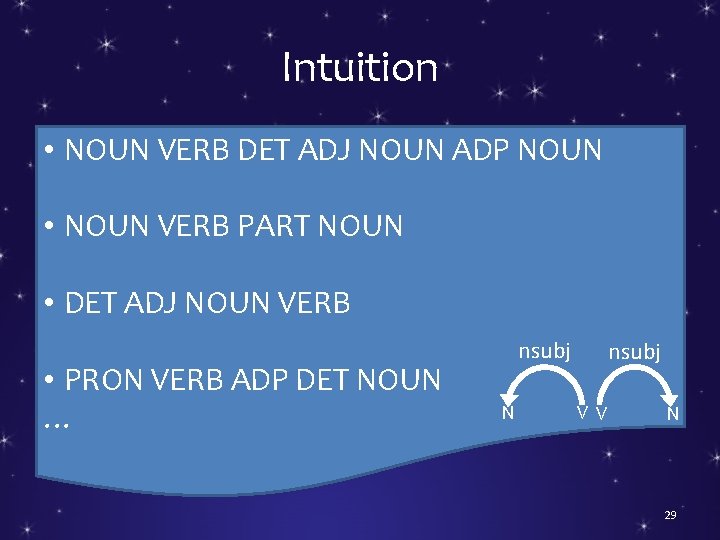

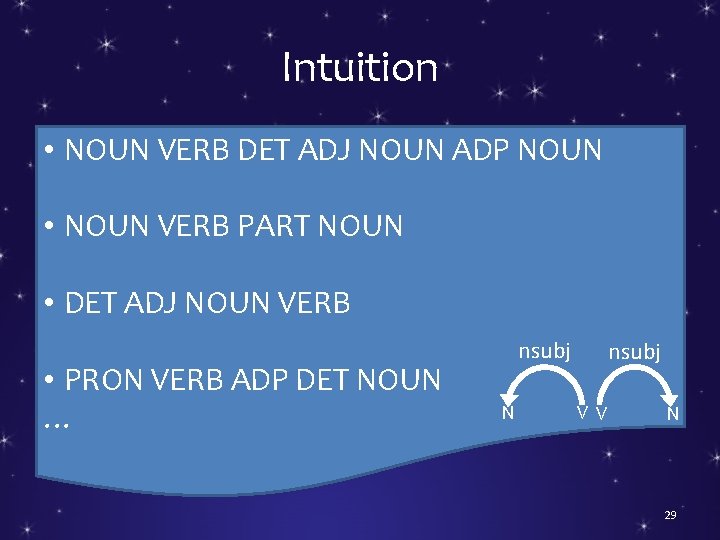

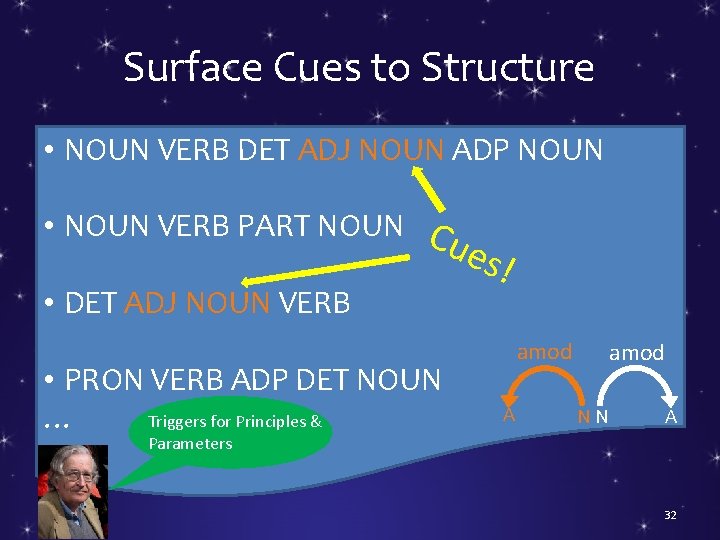

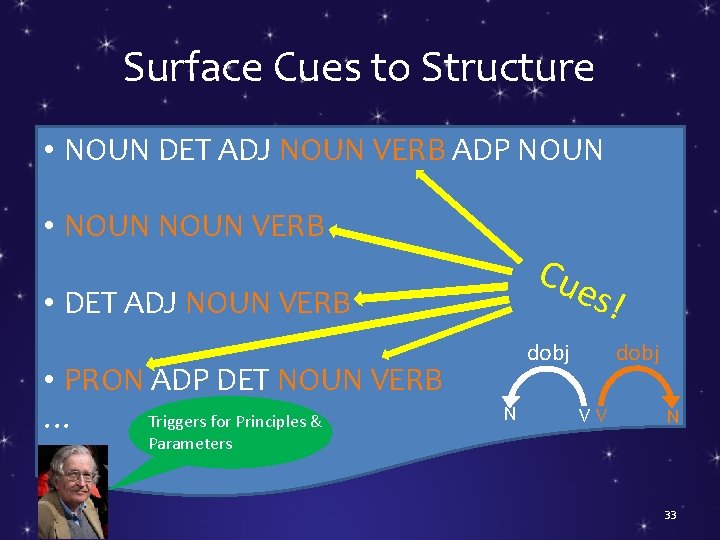

Intuition

- NOUN VERB DET ADJ NOUN ADP NOUN
- NOUN VERB PART NOUN
- DET ADJ NOUN VERB
- PRON VERB ADP DET NOUN
- …

Diagram: nsubj, with the two possible orders N V and V N.

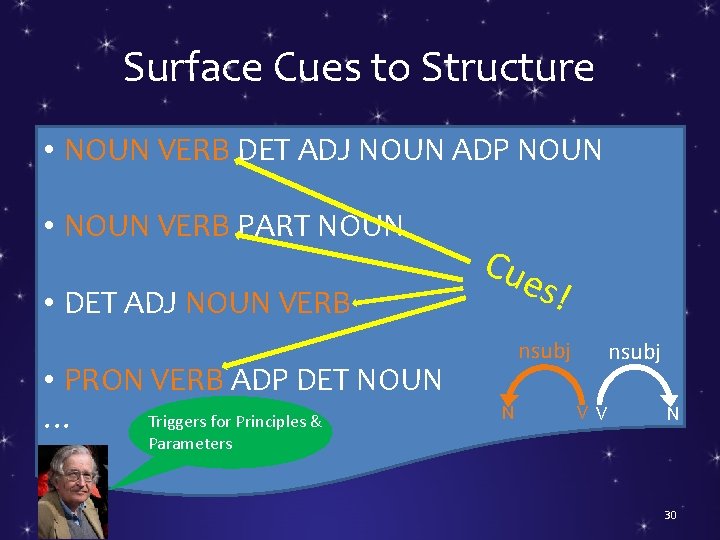

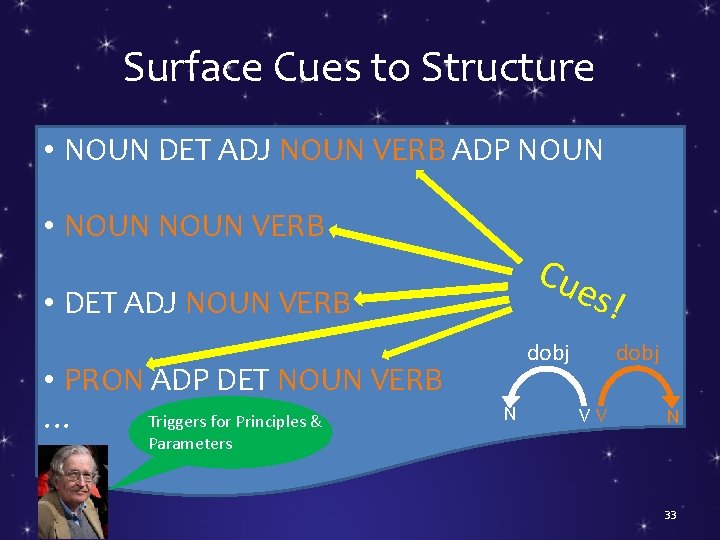

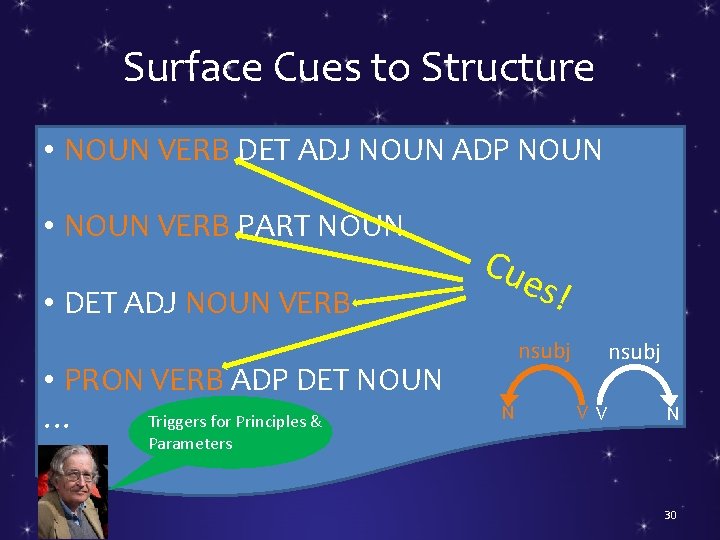

Surface Cues to Structure

- NOUN VERB DET ADJ NOUN ADP NOUN
- NOUN VERB PART NOUN
- DET ADJ NOUN VERB
- PRON VERB ADP DET NOUN
- …

Cues! Triggers for Principles & Parameters

Diagram: nsubj, with the two possible orders N V and V N.

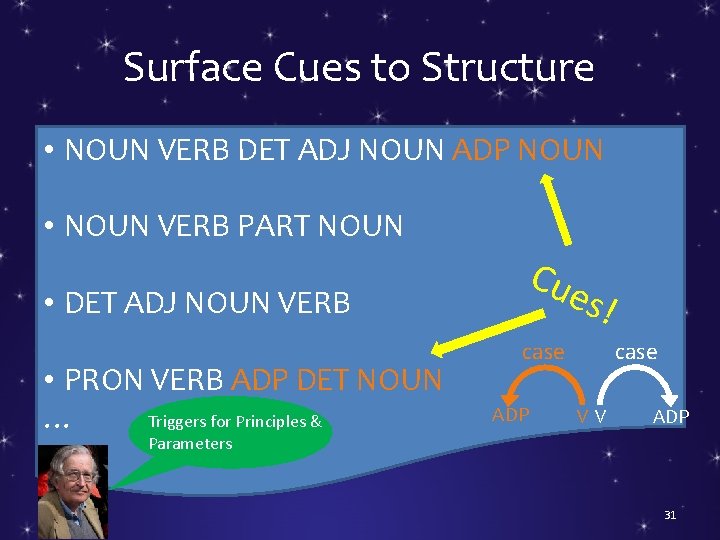

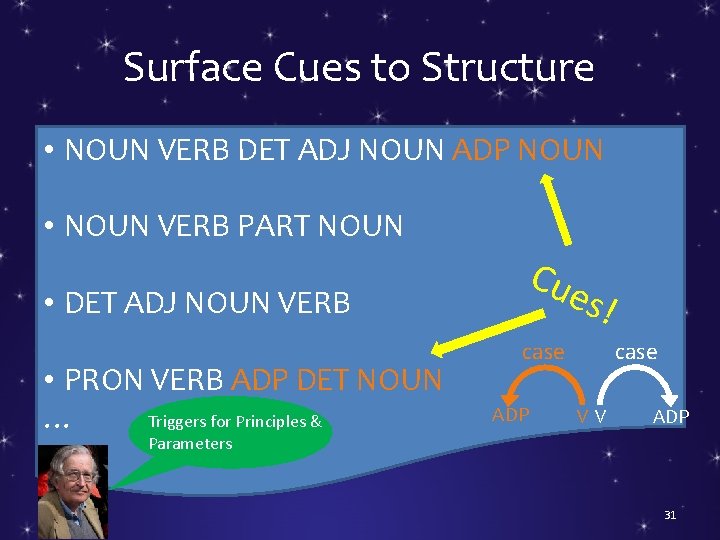

Surface Cues to Structure

- NOUN VERB DET ADJ NOUN ADP NOUN
- NOUN VERB PART NOUN
- DET ADJ NOUN VERB
- PRON VERB ADP DET NOUN
- …

Cues! Triggers for Principles & Parameters

Diagram: case, with the two possible orders ADP N and N ADP.

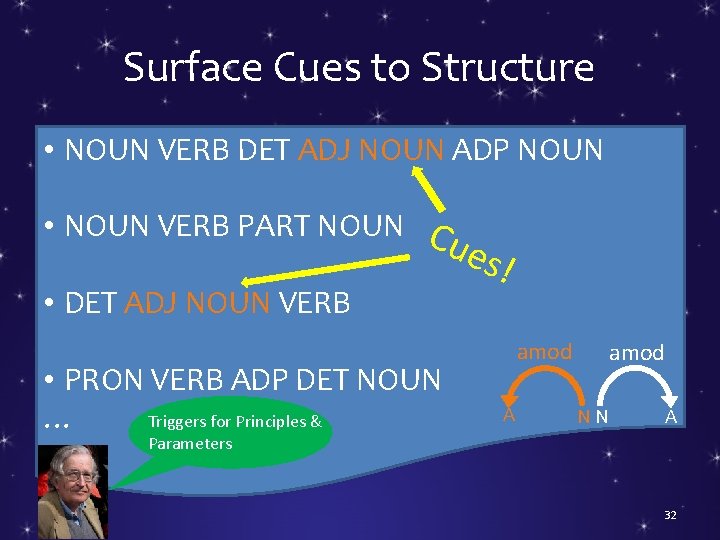

Surface Cues to Structure

- NOUN VERB DET ADJ NOUN ADP NOUN
- NOUN VERB PART NOUN
- DET ADJ NOUN VERB
- PRON VERB ADP DET NOUN
- …

Cues! Triggers for Principles & Parameters

Diagram: amod, with the two possible orders A N and N A.

Surface Cues to Structure

- NOUN DET ADJ NOUN VERB ADP NOUN
- NOUN VERB
- DET ADJ NOUN VERB
- PRON ADP DET NOUN VERB
- …

Cues! Triggers for Principles & Parameters

Diagram: dobj, with the two possible orders N V and V N.
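One way to make a surface cue concrete is to measure, over a corpus of tags, how often one tag appears shortly before another rather than shortly after it. The sketch below is an illustration only: the function `order_cue`, its `window` parameter, and the neutral value 0.5 for unseen pairs are assumptions, not the feature set used in the talk.

```python
def order_cue(tag_corpus, tag_a, tag_b, window=1):
    """Fraction of nearby (tag_a, tag_b) pairs in which tag_a comes first.

    Two tags count as "nearby" when the later one is at most `window`
    positions after the earlier one. Returns 0.5 when no pair is seen.
    """
    a_first = 0
    pairs = 0
    for sentence in tag_corpus:
        for i, left in enumerate(sentence):
            for right in sentence[i + 1 : i + 1 + window]:
                if (left, right) == (tag_a, tag_b):
                    a_first += 1
                    pairs += 1
                elif (left, right) == (tag_b, tag_a):
                    pairs += 1
    return a_first / pairs if pairs else 0.5

# The four tag sequences from the earlier "Surface Cues to Structure" slides.
cues = [
    ["NOUN", "VERB", "DET", "ADJ", "NOUN", "ADP", "NOUN"],
    ["NOUN", "VERB", "PART", "NOUN"],
    ["DET", "ADJ", "NOUN", "VERB"],
    ["PRON", "VERB", "ADP", "DET", "NOUN"],
]
adj_before_noun = order_cue(cues, "ADJ", "NOUN")
```

Here every adjacent ADJ/NOUN pair has the adjective first, which is the kind of evidence that points toward an Adj-Noun language without looking at any tree.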

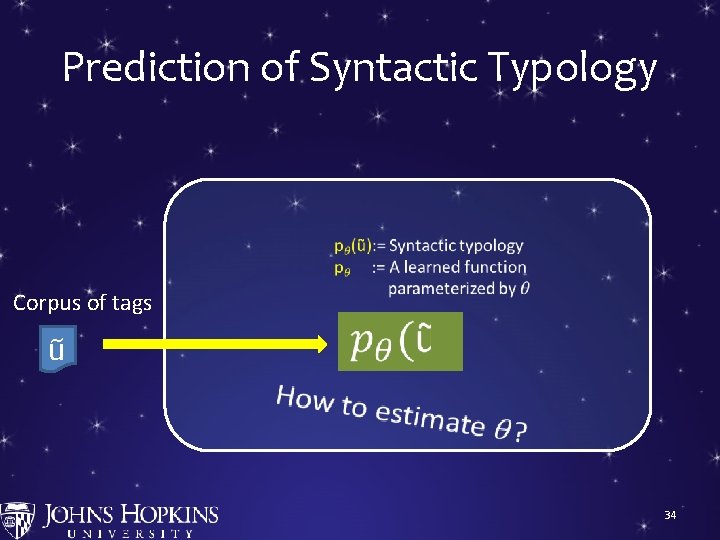

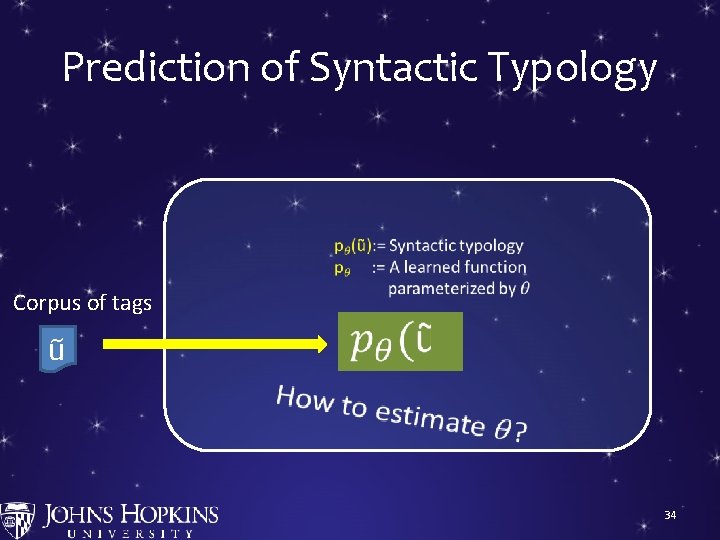

Prediction of Syntactic Typology

Diagram labels: Corpus of tags ũ; grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2).

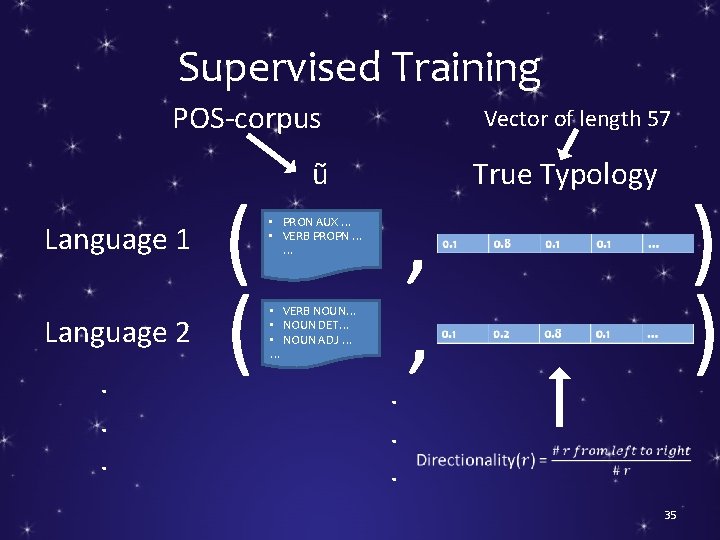

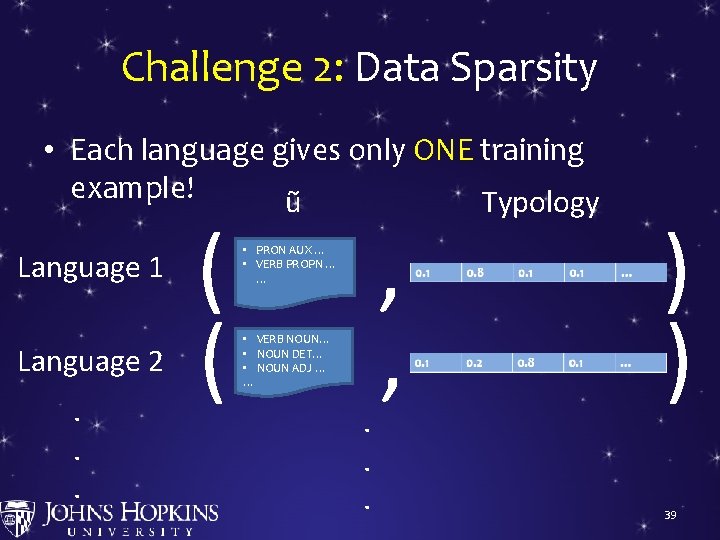

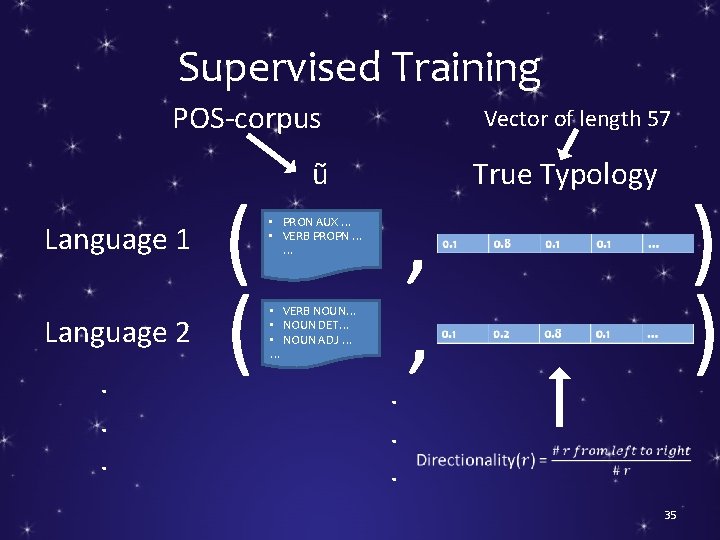

Supervised Training

Each training language contributes one pair (POS corpus ũ, true typology), where the true typology is a vector of length 57:

- Language 1: (PRON AUX …; VERB PROPN …; …, true typology)
- Language 2: (VERB NOUN …; NOUN DET …; NOUN ADJ …; …, true typology)
- …

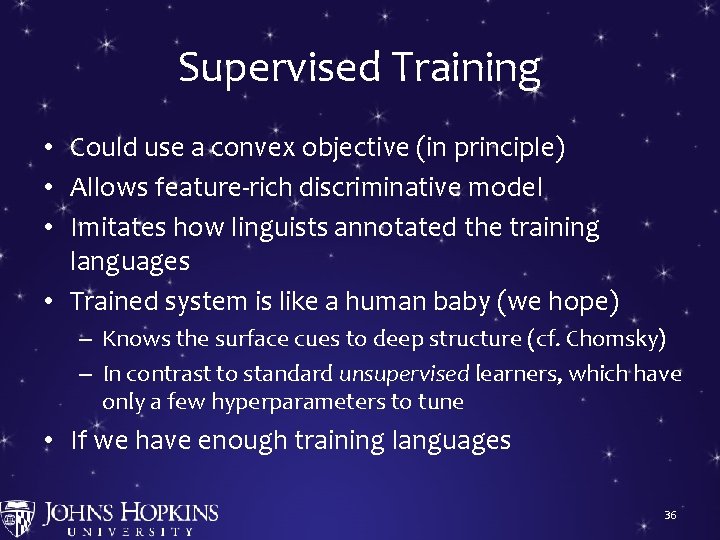

Supervised Training

- Could use a convex objective (in principle)
- Allows feature-rich discriminative model
- Imitates how linguists annotated the training languages
- Trained system is like a human baby (we hope)
  - Knows the surface cues to deep structure (cf. Chomsky)
  - In contrast to standard unsupervised learners, which have only a few hyperparameters to tune
- If we have enough training languages
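The "convex objective" point can be illustrated with a deliberately small model: a single sigmoid unit trained by gradient descent on cross-entropy, which is convex in the weights. This is a sketch under stated assumptions, not the system from the talk; the functions `train` and `predict`, the single input feature, and all numbers in `languages` are invented for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    """Predicted directionality in (0, 1) for feature vector x."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train(examples, steps=2000, rate=0.5):
    """Fit w and b by gradient descent on cross-entropy.

    `examples` is a list of (features, target) pairs with target in [0, 1].
    The gradient of the cross-entropy with respect to the pre-activation
    is (prediction - target), which gives the updates below.
    """
    dim = len(examples[0][0])
    w = [0.0] * dim
    b = 0.0
    for _ in range(steps):
        grad_w = [0.0] * dim
        grad_b = 0.0
        for x, target in examples:
            error = predict(w, b, x) - target
            for j, xj in enumerate(x):
                grad_w[j] += error * xj
            grad_b += error
        w = [wi - rate * g / len(examples) for wi, g in zip(w, grad_w)]
        b -= rate * grad_b / len(examples)
    return w, b

# One (surface-cue features, true directionality) pair per training language.
# These values are invented toy numbers, not measurements.
languages = [([0.9], 0.95), ([0.7], 0.75), ([0.2], 0.25), ([0.1], 0.05)]
w, b = train(languages)
```

Each language is a single training example, so the quality of the fit depends directly on how many languages are available.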

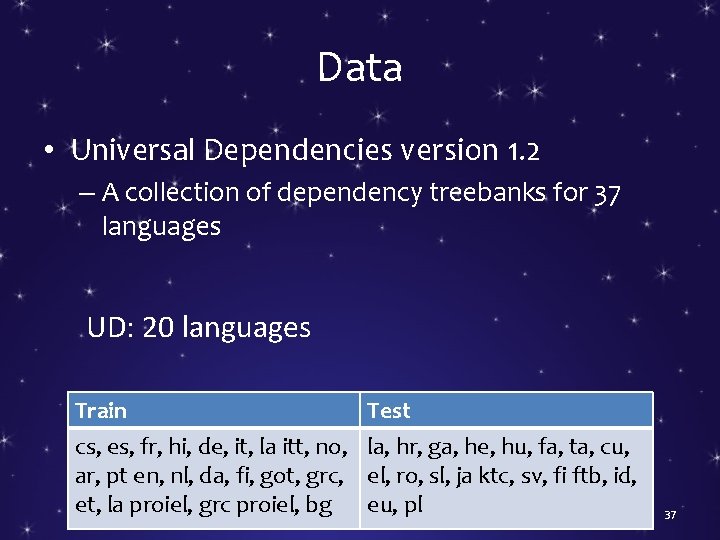

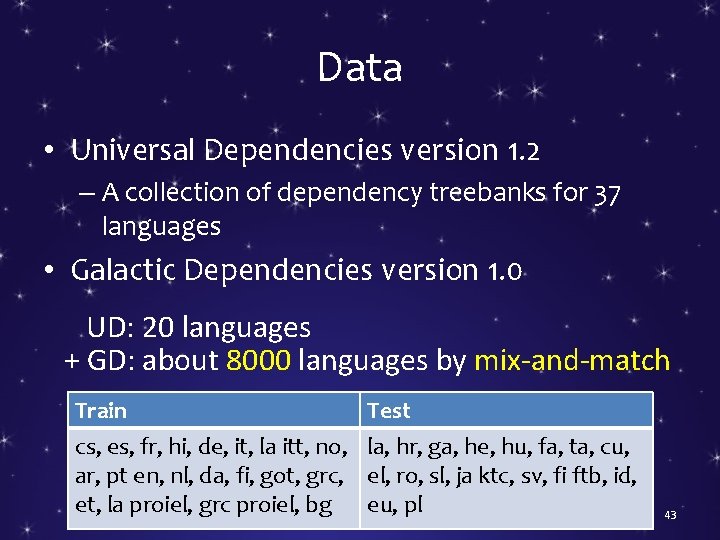

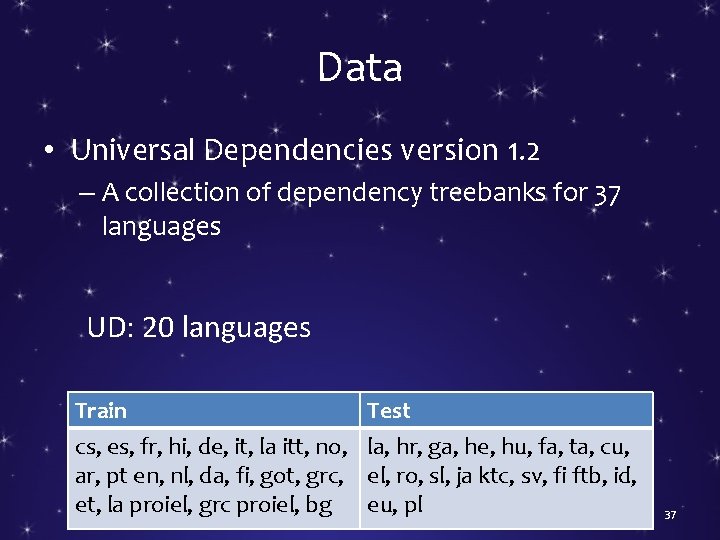

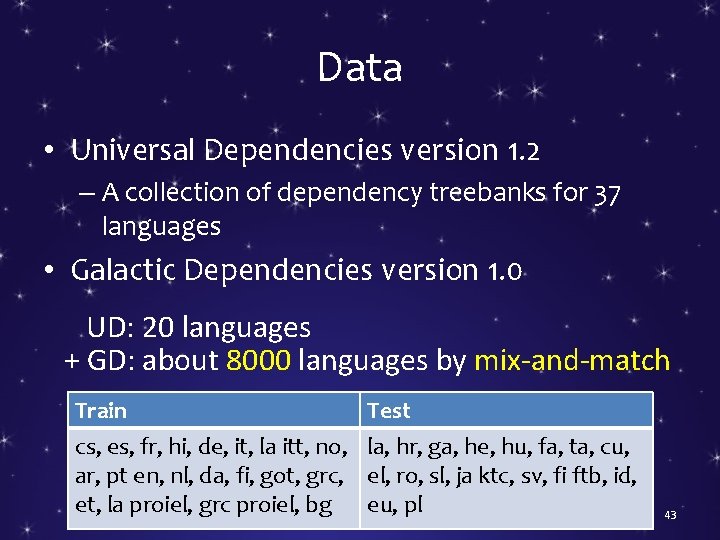

Data

- Universal Dependencies version 1.2
  - A collection of dependency treebanks for 37 languages

Split:

- Train (UD: 20 languages): cs, es, fr, hi, de, it, la_itt, no, ar, pt, en, nl, da, fi, got, grc, et, la_proiel, grc_proiel, bg
- Test: la, hr, ga, he, hu, fa, ta, cu, el, ro, sl, ja_ktc, sv, fi_ftb, id, eu, pl

Supervised Training

- Could use a convex objective (in principle)
- Allows feature-rich discriminative model
- Imitates how linguists annotated the training languages
- Trained system is like a human baby (we hope)
  - Knows the surface cues to deep structure (cf. Chomsky)
  - In contrast to standard unsupervised learners, which have only a few hyperparameters to tune
- If we have enough training languages ???

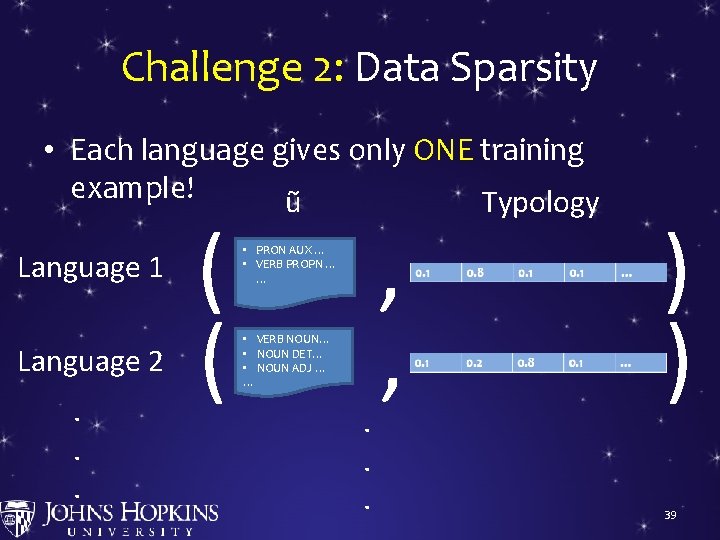

Challenge 2: Data Sparsity

- Each language gives only ONE training example!

Training pairs (ũ, typology):

- Language 1: (PRON AUX …; VERB PROPN …; …, typology)
- Language 2: (VERB NOUN …; NOUN DET …; NOUN ADJ …; …, typology)
- …

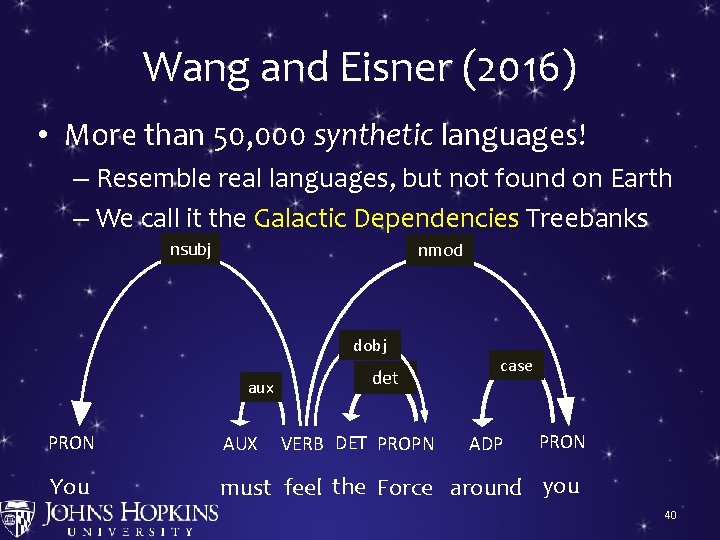

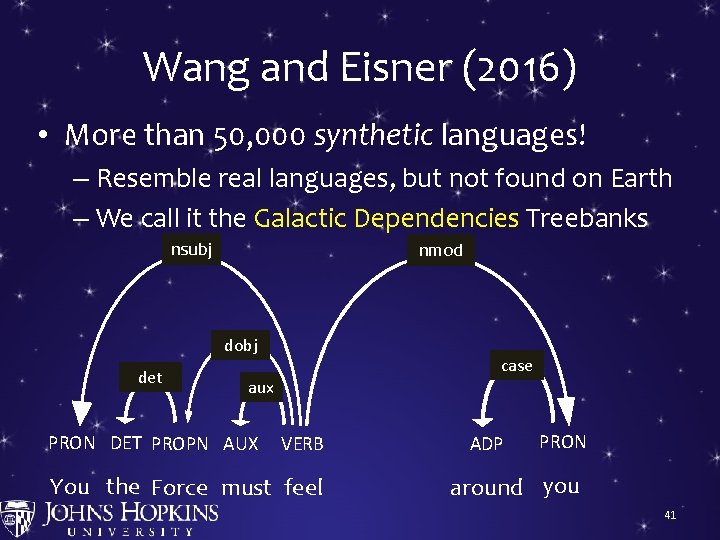

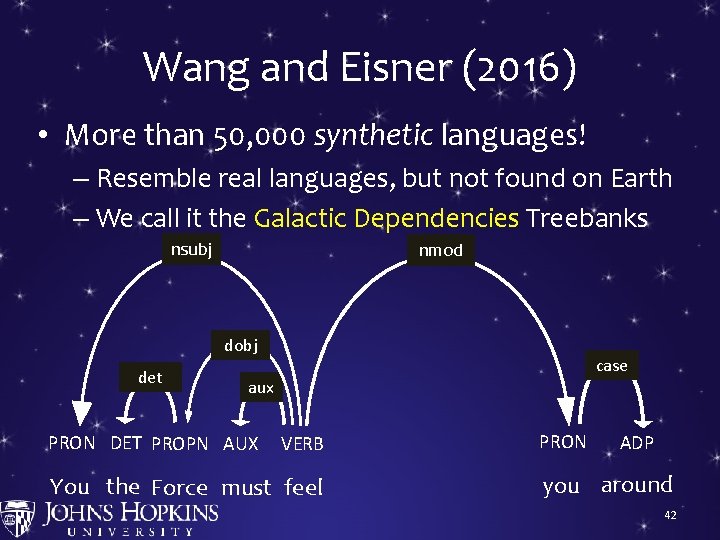

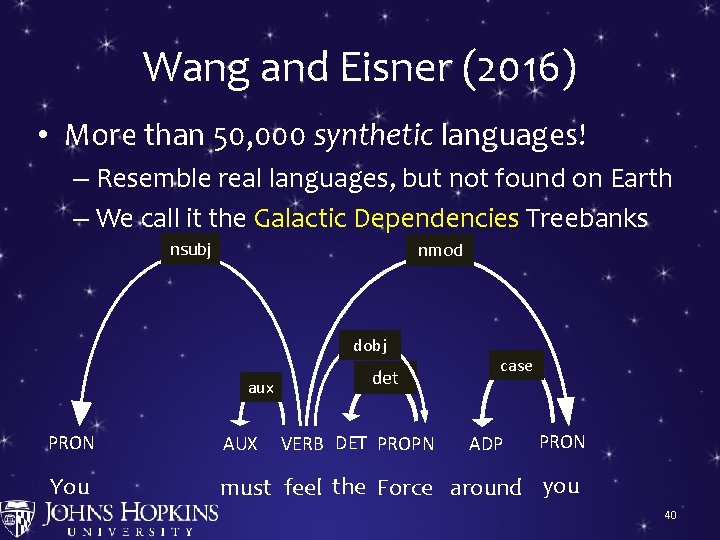

Wang and Eisner (2016)

- More than 50,000 synthetic languages!
  - Resemble real languages, but not found on Earth
  - We call them the Galactic Dependencies Treebanks

Example (relations: nsubj, aux, dobj, det, case, nmod): You/PRON must/AUX feel/VERB the/DET Force/PROPN around/ADP you/PRON

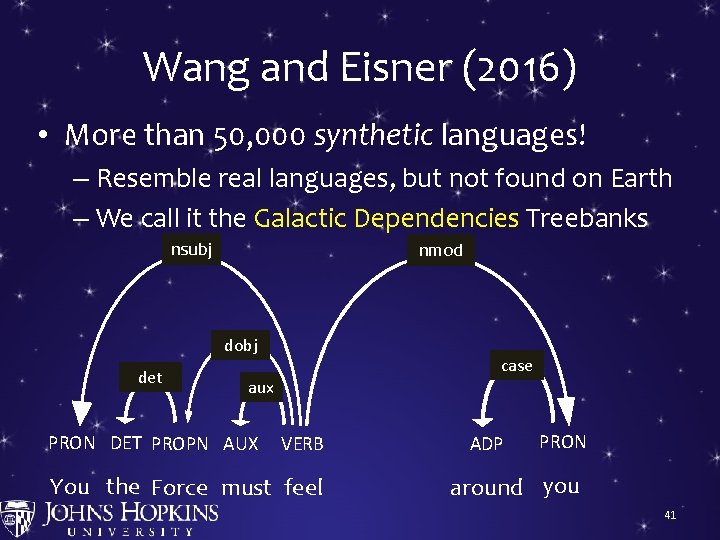

Wang and Eisner (2016)

- More than 50,000 synthetic languages!
  - Resemble real languages, but not found on Earth
  - We call them the Galactic Dependencies Treebanks

Example (relations: nsubj, aux, dobj, det, case, nmod): You/PRON the/DET Force/PROPN must/AUX feel/VERB around/ADP you/PRON

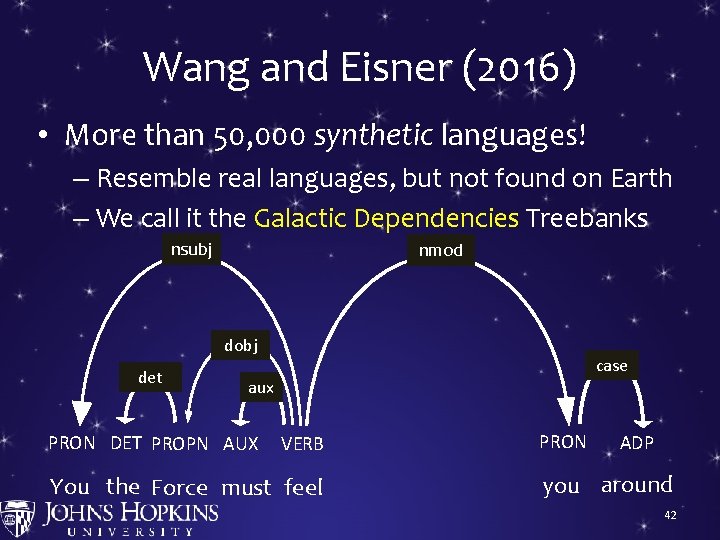

Wang and Eisner (2016)

- More than 50,000 synthetic languages!
  - Resemble real languages, but not found on Earth
  - We call them the Galactic Dependencies Treebanks

Example (relations: nsubj, aux, dobj, det, case, nmod): You/PRON the/DET Force/PROPN must/AUX feel/VERB you/PRON around/ADP
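The reordering in these examples can be imitated with a much simpler rule than the one used to build the Galactic Dependencies treebanks: move every dependent with a chosen relation to one side of its head and read the tree off again. The sketch below is that simplified stand-in, not the actual procedure. The function `move_relation`, the `(word, head, relation)` encoding, and the attachment of "you" to "feel" as nmod are assumptions made for illustration.

```python
def move_relation(tree, relation, side):
    """Words of `tree` after moving each `relation` dependent to `side`.

    `tree` is a list of (word, head, relation) triples in surface order,
    with 1-based heads and 0 for the root. `side` is "left" or "right".
    Dependents with other relations stay on their original side.
    """
    children = {i: [] for i in range(len(tree) + 1)}
    for index, (_word, head, _rel) in enumerate(tree, start=1):
        children[head].append(index)

    def emit(node):
        left, right = [], []
        for child in children[node]:
            if tree[child - 1][2] == relation:
                goes_left = side == "left"
            else:
                goes_left = child < node   # keep the original side
            (left if goes_left else right).append(child)
        words = []
        for child in left:
            words += emit(child)
        if node != 0:                      # node 0 is the artificial root
            words.append(tree[node - 1][0])
        for child in right:
            words += emit(child)
        return words

    return emit(0)

sentence = [("You", 3, "nsubj"), ("must", 3, "aux"), ("feel", 0, "root"),
            ("the", 5, "det"), ("Force", 3, "dobj"),
            ("around", 7, "case"), ("you", 3, "nmod")]
postpositional = move_relation(sentence, "case", "right")
```

Moving `case` to the right turns "around you" into "you around", as in the last example above; reproducing the verb-final order as well would need an ordering model for the verb's dependents, which this sketch does not attempt.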

Data

- Universal Dependencies version 1.2
  - A collection of dependency treebanks for 37 languages
- Galactic Dependencies version 1.0

Split:

- Train (UD: 20 languages + GD: about 8000 languages by mix-and-match): cs, es, fr, hi, de, it, la_itt, no, ar, pt, en, nl, da, fi, got, grc, et, la_proiel, grc_proiel, bg
- Test: la, hr, ga, he, hu, fa, ta, cu, el, ro, sl, ja_ktc, sv, fi_ftb, id, eu, pl

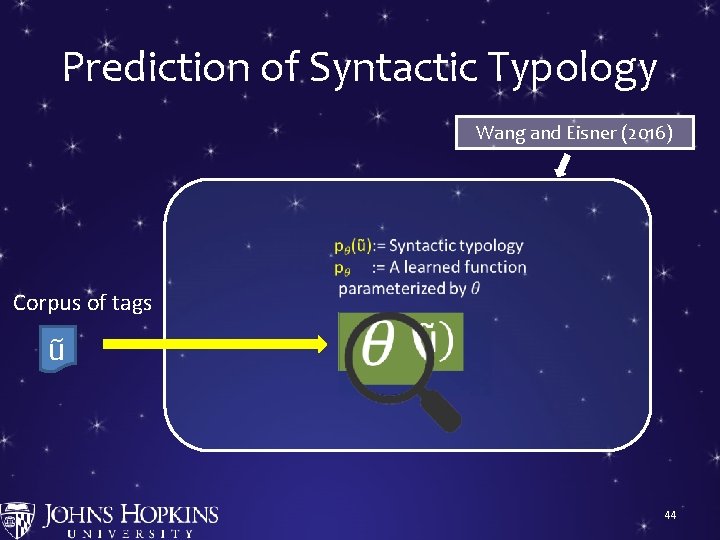

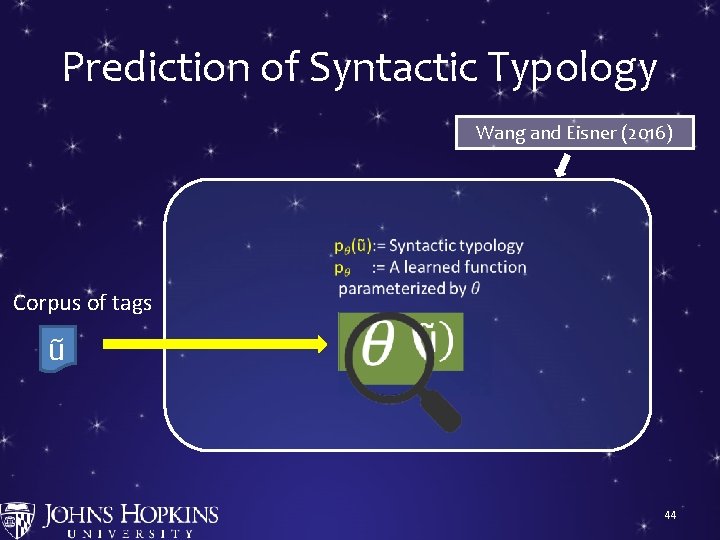

Prediction of Syntactic Typology

Diagram labels: Wang and Eisner (2016); Corpus of tags ũ; grammar rules (S → NP VP, VP → VP PP, …, with weights 0.9, 0.2).

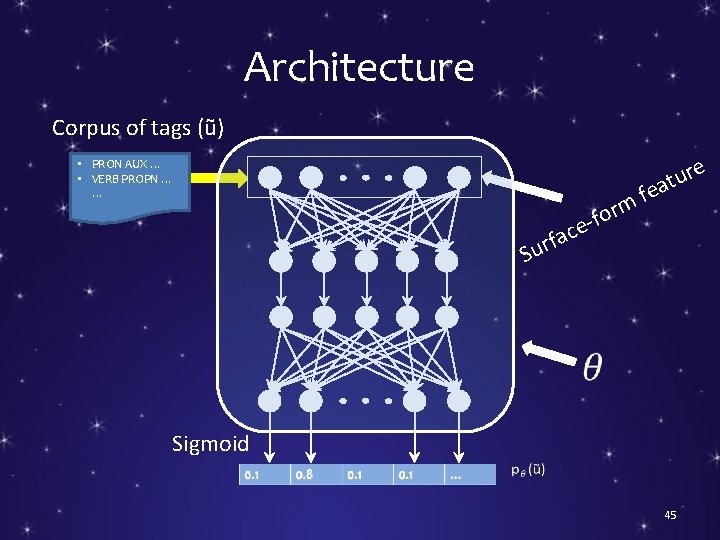

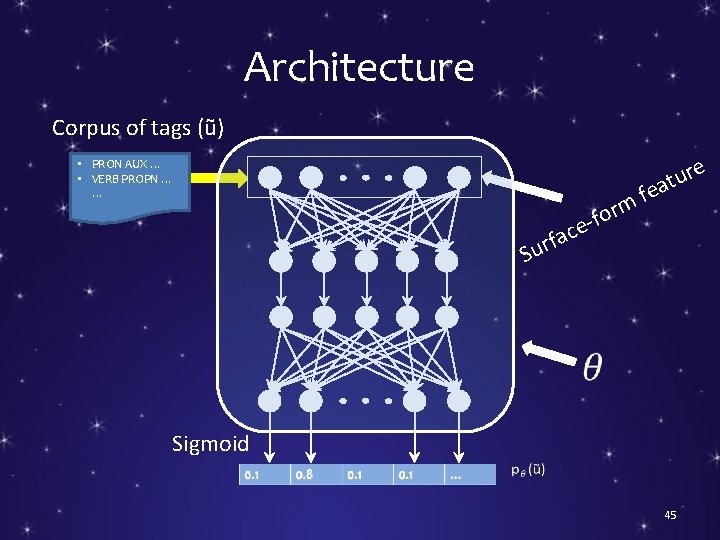

Architecture

Diagram labels: Corpus of tags (ũ), for example PRON AUX …, VERB PROPN …, …; surface features (label partly illegible); Sigmoid.

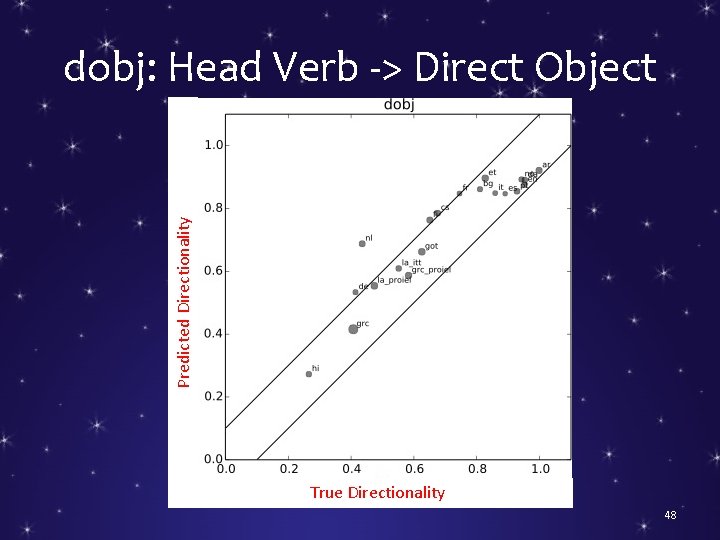

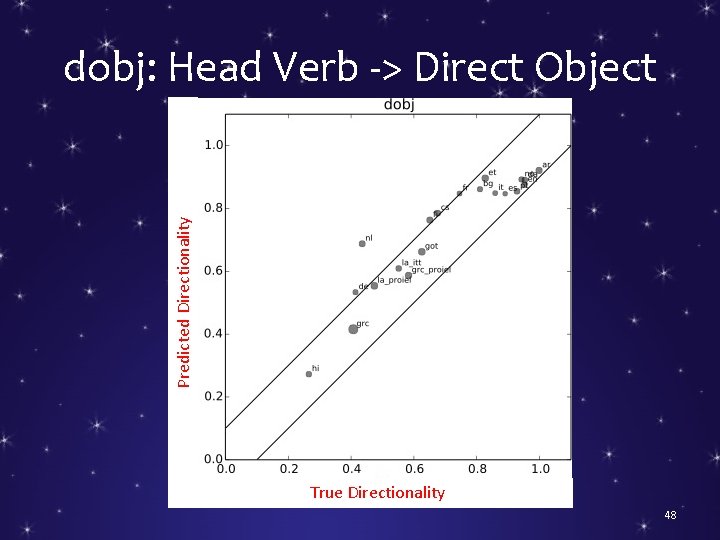

Scatter plot for dobj (Head Verb -> Direct Object). Axes: Predicted Directionality and True Directionality.

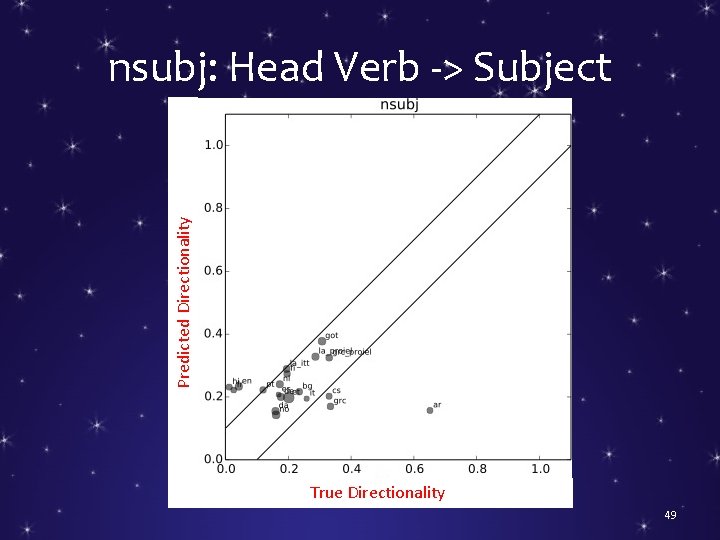

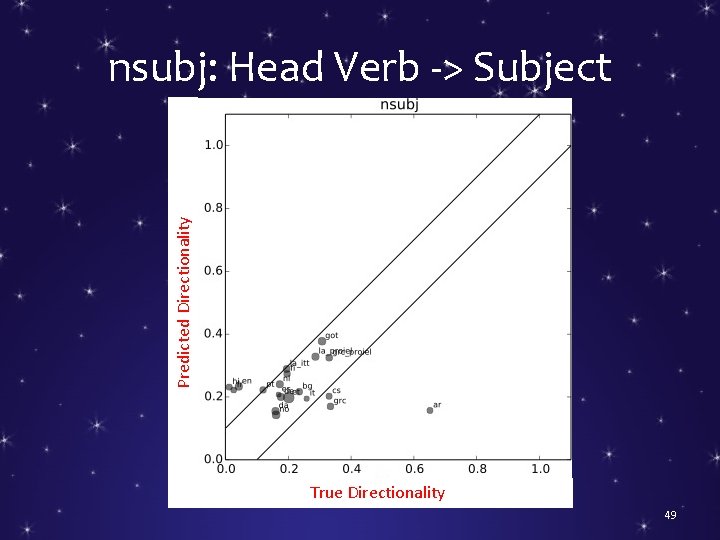

Scatter plot for nsubj (Head Verb -> Subject). Axes: Predicted Directionality and True Directionality.

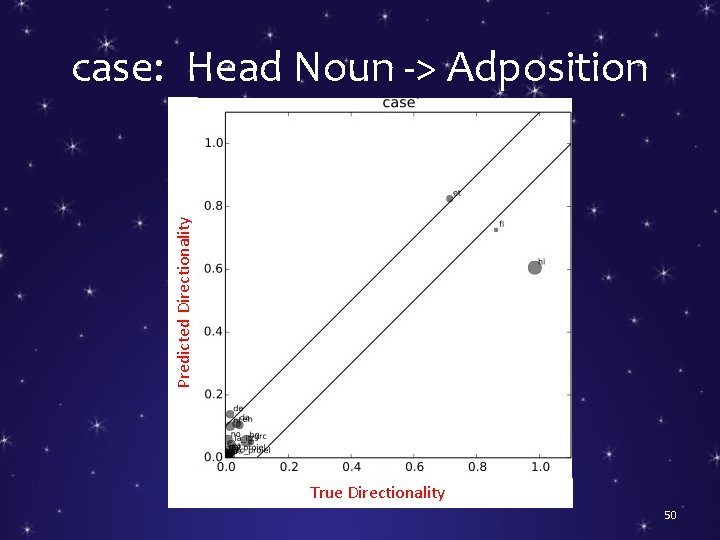

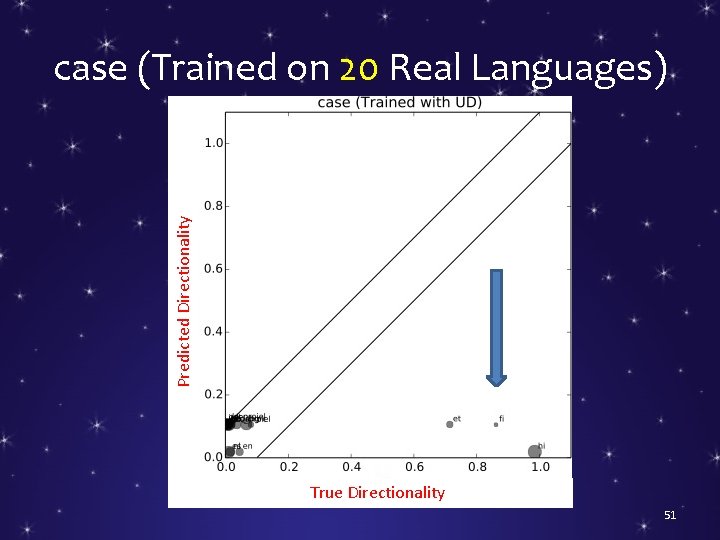

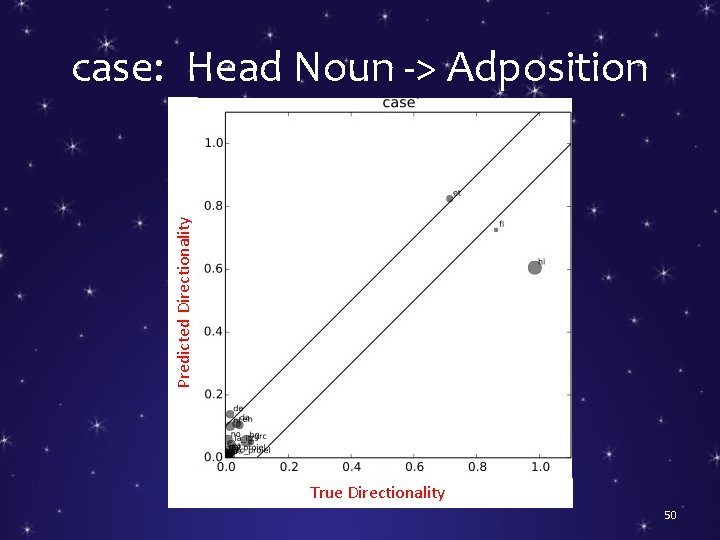

Scatter plot for case (Head Noun -> Adposition). Axes: Predicted Directionality and True Directionality.

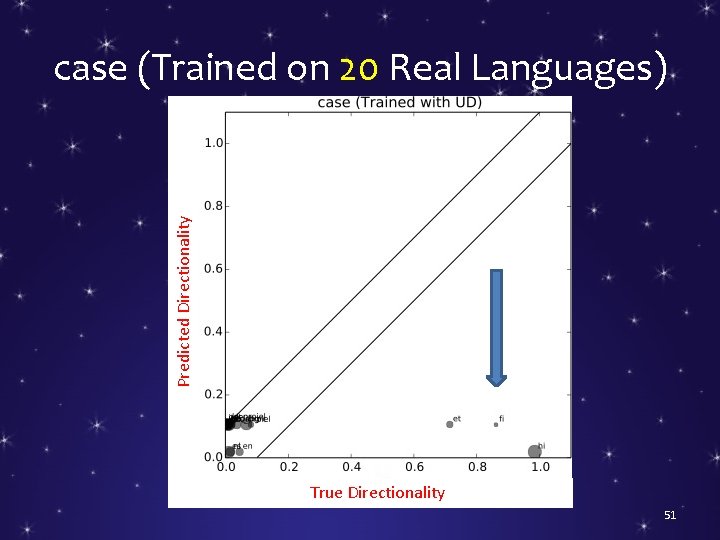

Scatter plot for case (Trained on 20 Real Languages). Axes: Predicted Directionality and True Directionality.

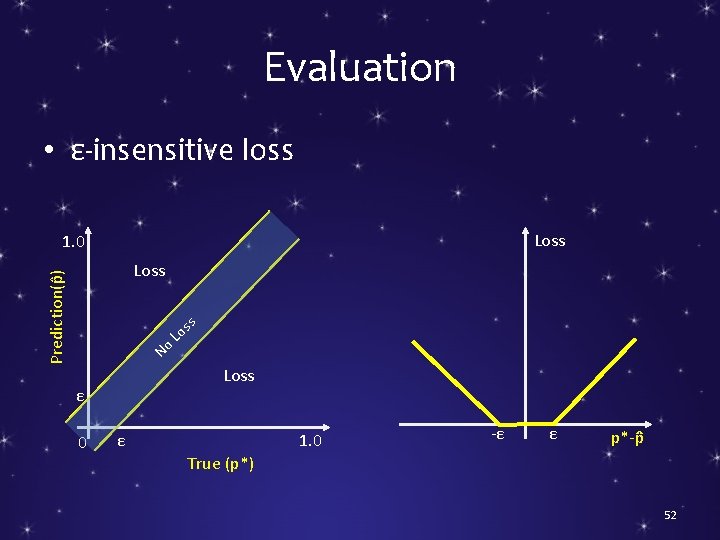

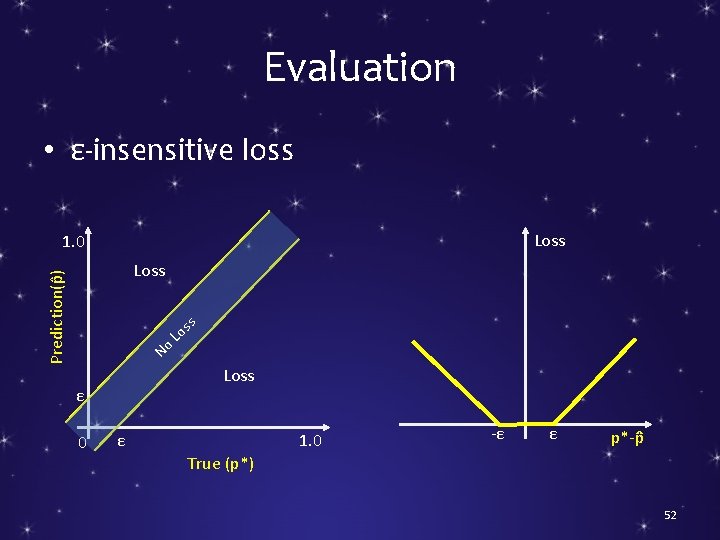

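Evaluation

- ε-insensitive loss

Plot: loss as a function of p* − p, where p is the prediction and p* the true value. There is no loss while the difference stays within ε of zero, and the loss grows outside that band.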
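The loss described on this slide can be written in one line. The sketch assumes the usual ε-insensitive form, max(0, |p − p*| − ε), which matches the "no loss within ε" band on the plot; the function name and the default ε = 0.1 (the value used in the comparison that follows) are choices made for this example.

```python
def epsilon_insensitive_loss(predicted, true, epsilon=0.1):
    """Zero when the prediction is within epsilon of the truth, linear beyond."""
    return max(0.0, abs(predicted - true) - epsilon)

# A prediction of 0.90 for a true value of 0.96 falls inside the band.
inside = epsilon_insensitive_loss(0.90, 0.96)
# A prediction of 0.50 for a true value of 0.90 is penalised for the excess.
outside = epsilon_insensitive_loss(0.50, 0.90)
```

The band means small errors on a directionality are treated as correct, so the metric rewards getting the word-order tendency right rather than matching the exact fraction.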

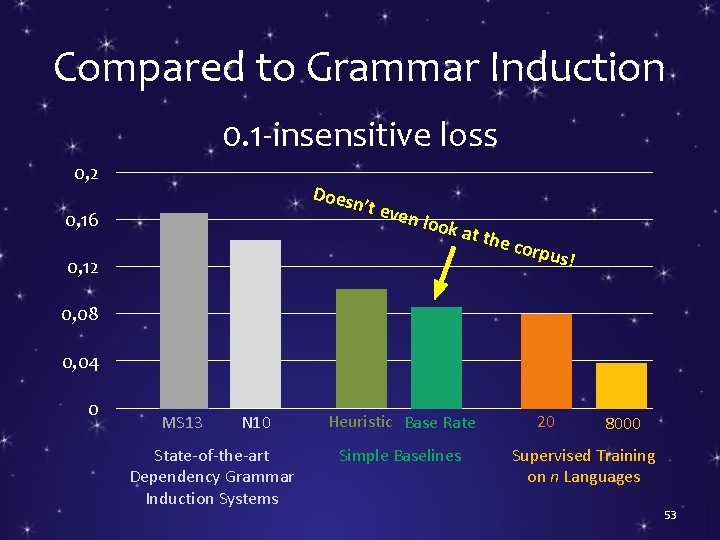

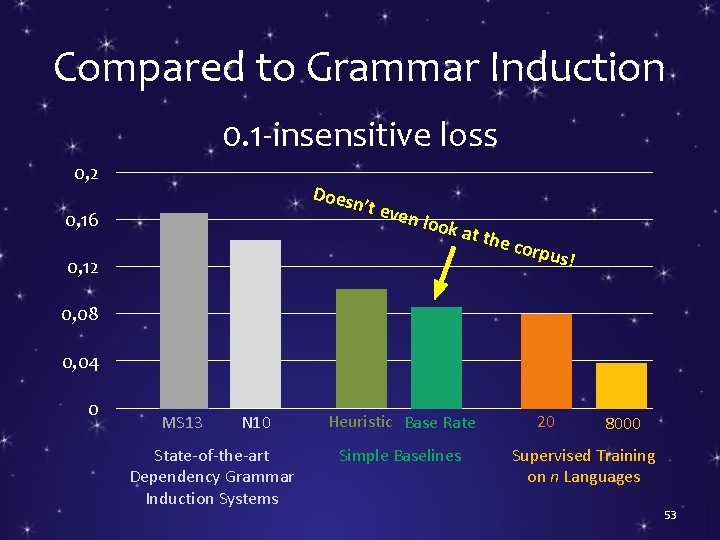

Compared to Grammar Induction

Bar chart of 0.1-insensitive loss (axis from 0 to 0.2). Bars: MS13 and N10 (State-of-the-art Dependency Grammar Induction Systems); Heuristic and Base Rate (Simple Baselines); Supervised Training on n Languages, for n = 20 and n = 8000. Annotation: "Doesn't even look at the corpus!"

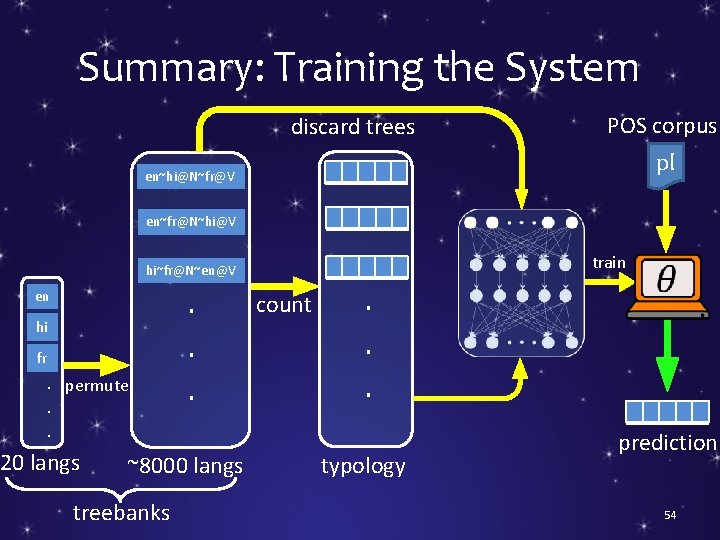

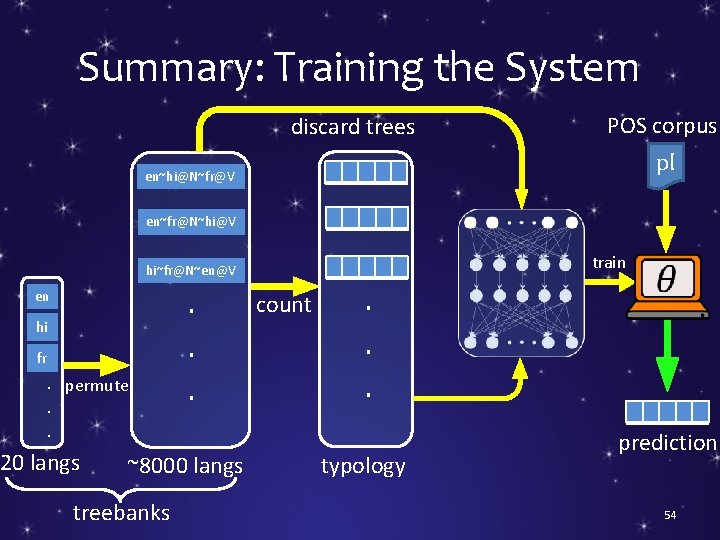

Summary: Training the System

Diagram labels: treebanks for 20 langs (en, hi, fr, …); permute; treebanks for ~8000 langs (en~hi@N~fr@V, en~fr@N~hi@V, hi~fr@N~en@V, …); count (typology); discard trees (POS corpus); train; prediction (pl).

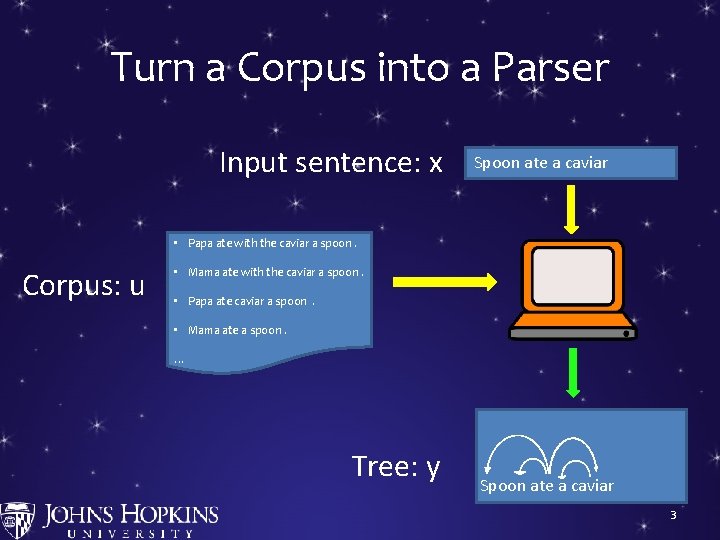

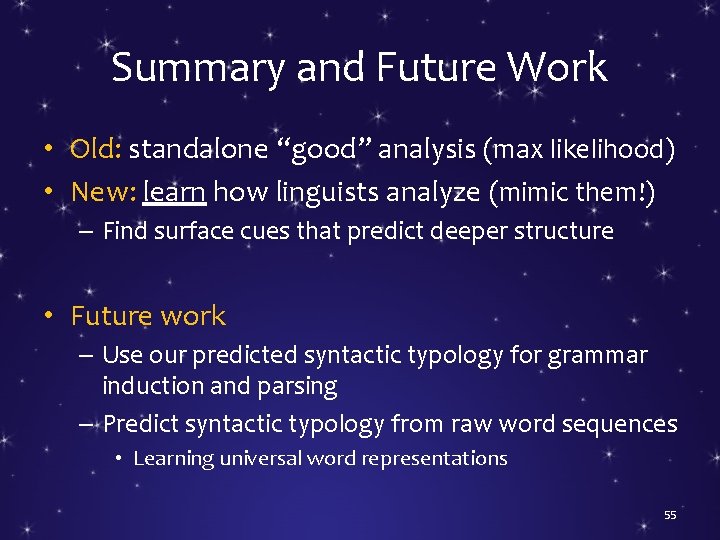

Summary and Future Work

- Old: standalone “good” analysis (max likelihood)
- New: learn how linguists analyze (mimic them!)
  - Find surface cues that predict deeper structure
- Future work
  - Use our predicted syntactic typology for grammar induction and parsing
  - Predict syntactic typology from raw word sequences
    - Learning universal word representations

Thanks!