Finding Limits of Parallelism using Dynamic Dependency Graphs

- Slides: 20

Finding Limits of Parallelism using Dynamic Dependency Graphs – How much parallelism is out there? Jonathan Mak & Alan Mycroft University of Cambridge 23 November 2020 WODA 2009, Chicago

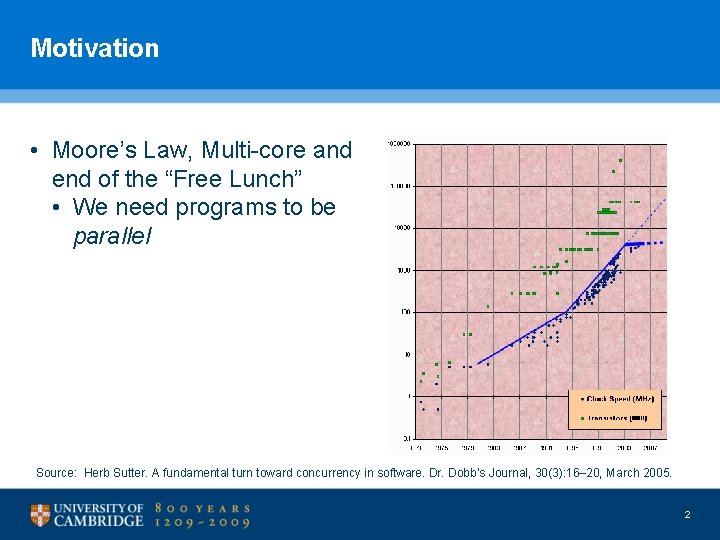

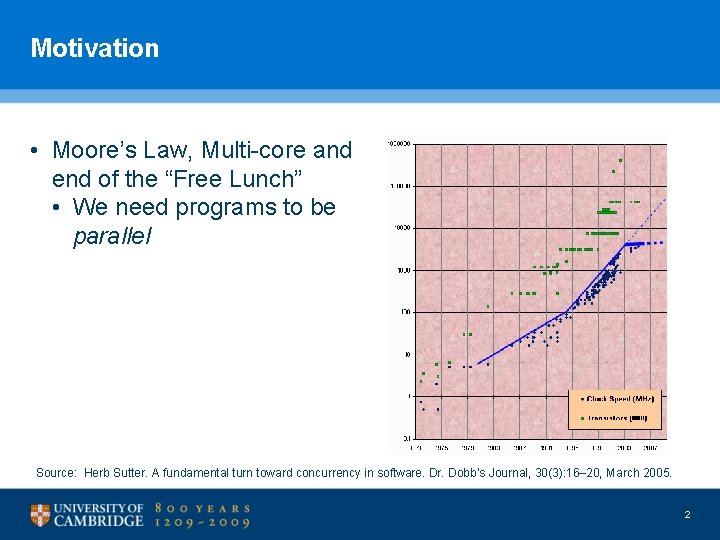

Motivation

- Moore’s Law, multi-core and the end of the “Free Lunch”
- We need programs to be parallel

Source: Herb Sutter. A fundamental turn toward concurrency in software. Dr. Dobb’s Journal, 30(3): 16–20, March 2005.

Two approaches

- Explicit Parallelism
  - Specified by programmer
  - E.g. OpenMP, Java, MPI, Cilk, TBB, Join calculus
  - Too hard for the average programmer?
- Implicit Parallelism
  - Extracted by compiler
  - E.g. Polaris [Blume+ 94], Dependence analysis [Kennedy 02], DSWP [Ottoni 05], GREMIO [Ottoni 07]

Implicit Parallelism – What’s the limit?

- Existing implementations evaluated on small number of cores/processors (<10)
- Speed-up rises with #procs
- But how far can we go?
- Limits of instruction-level parallelism first explored by [Wall 93]
- Assume:
  - No threading overheads
  - Inter-thread communication is free
  - Perfect alias analysis
  - Perfect oracle for dependence analysis

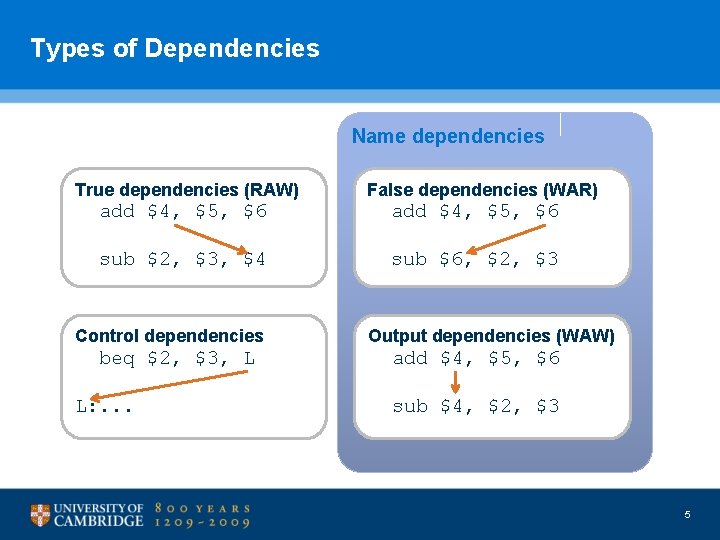

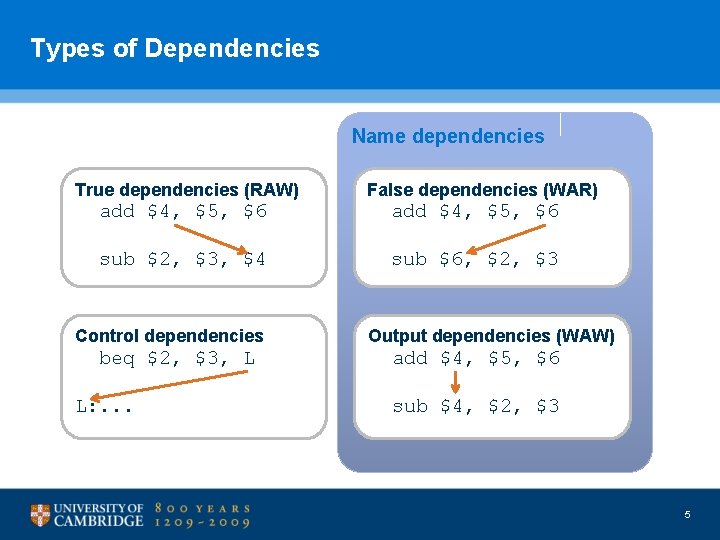

Types of Dependencies

- True dependencies (RAW): add $4, $5, $6 followed by sub $2, $3, $4
- Name dependencies
  - False dependencies (WAR): add $4, $5, $6 followed by sub $6, $2, $3
  - Output dependencies (WAW): add $4, $5, $6 followed by sub $4, $2, $3
- Control dependencies: beq $2, $3, L ... L: ...
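As a minimal sketch of how the three data-dependency kinds on this slide can be told apart, the snippet below compares the register sets written and read by an earlier and a later instruction. The `Instr` tuple and the `classify` function are hypothetical names introduced for illustration; they are not taken from the authors' tool, and control dependencies are left out.

```python
from typing import NamedTuple, Set


class Instr(NamedTuple):
    """Hypothetical record of one executed instruction."""
    text: str
    writes: frozenset  # registers written
    reads: frozenset   # registers read


def classify(earlier: Instr, later: Instr) -> Set[str]:
    """Return the data dependencies of `later` on `earlier`."""
    kinds = set()
    if earlier.writes & later.reads:
        kinds.add("RAW")  # true dependency: later reads what earlier wrote
    if earlier.reads & later.writes:
        kinds.add("WAR")  # false dependency: later overwrites what earlier read
    if earlier.writes & later.writes:
        kinds.add("WAW")  # output dependency: both write the same register
    return kinds


# The instruction pairs from the slide (MIPS: first operand is the destination).
add = Instr("add $4, $5, $6", frozenset({"$4"}), frozenset({"$5", "$6"}))
sub_raw = Instr("sub $2, $3, $4", frozenset({"$2"}), frozenset({"$3", "$4"}))
sub_war = Instr("sub $6, $2, $3", frozenset({"$6"}), frozenset({"$2", "$3"}))
sub_waw = Instr("sub $4, $2, $3", frozenset({"$4"}), frozenset({"$2", "$3"}))
```

Calling `classify(add, sub_raw)`, `classify(add, sub_war)` and `classify(add, sub_waw)` reproduces the RAW, WAR and WAW labels respectively.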

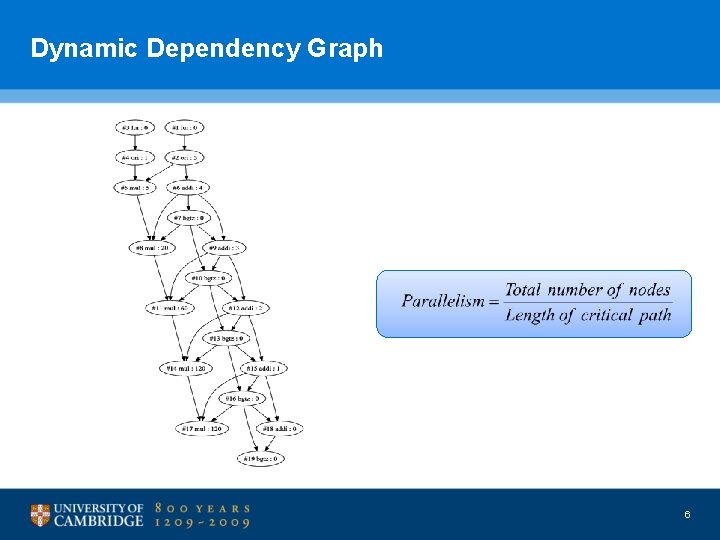

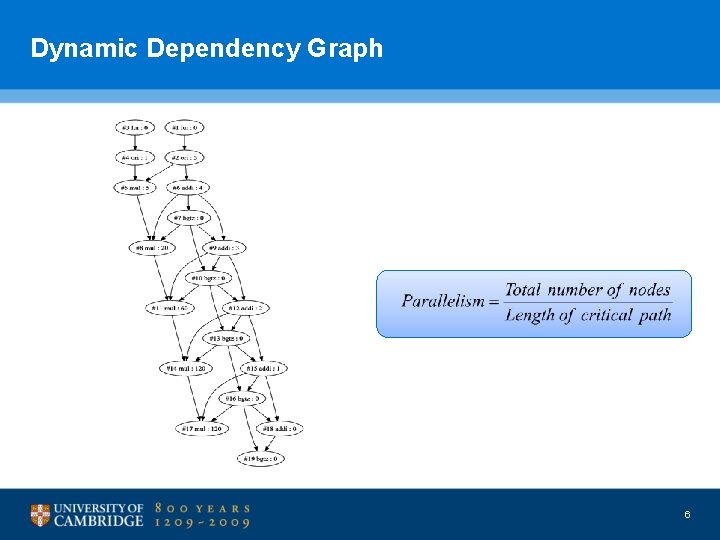

Dynamic Dependency Graph

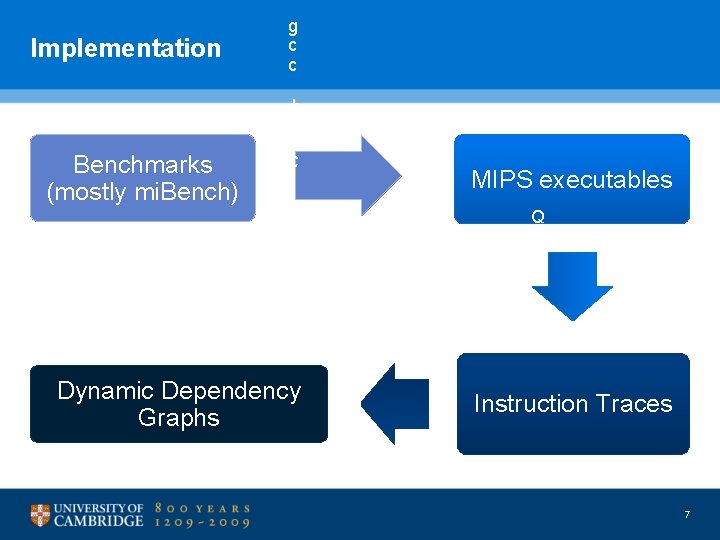

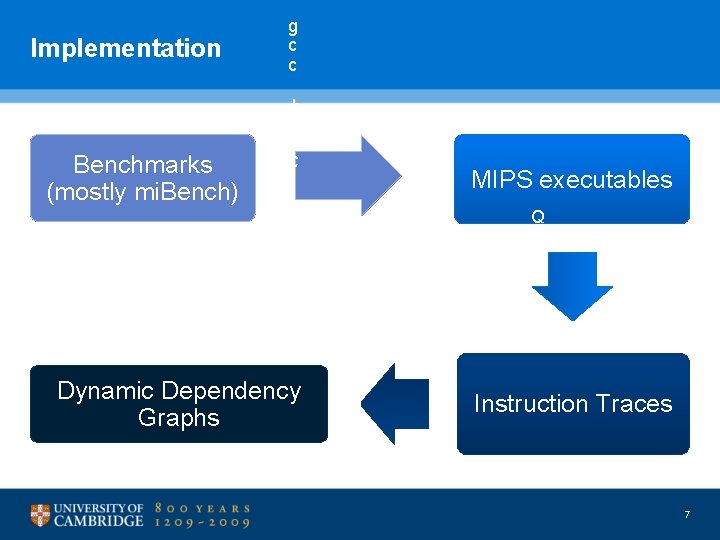

Implementation

Benchmarks (mostly MiBench) → gcc + μClibc → MIPS executables → QEMU → instruction traces → DDG builder → Dynamic Dependency Graphs
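The DDG builder stage can be sketched roughly as follows. This is an illustrative assumption rather than the authors' implementation: the trace is modelled as a list of `(writes, reads)` tuples of location names, only true (RAW) dependencies become edges, and parallelism is taken to be the number of instructions divided by the length of the longest dependency chain. The names `build_ddg` and `parallelism` are hypothetical.

```python
def build_ddg(trace):
    """Build RAW edges and per-instruction depths from an instruction trace.

    trace: list of (writes, reads) tuples, each a collection of location
    names (registers or memory addresses), in execution order.
    """
    last_writer = {}  # location -> index of the instruction that last wrote it
    edges = []        # (producer index, consumer index)
    depth = []        # length of the longest chain ending at each instruction
    for i, (writes, reads) in enumerate(trace):
        preds = sorted({last_writer[loc] for loc in reads if loc in last_writer})
        edges.extend((p, i) for p in preds)
        depth.append(1 + max((depth[p] for p in preds), default=0))
        for loc in writes:
            last_writer[loc] = i
    return edges, depth


def parallelism(depth):
    """Instructions executed divided by the critical path length (assumed metric)."""
    return len(depth) / max(depth) if depth else 0.0


# Two independent two-instruction chains.
example_trace = [
    ({"$4"}, {"$5", "$6"}),   # add $4, $5, $6
    ({"$7"}, {"$8", "$9"}),   # add $7, $8, $9
    ({"$2"}, {"$3", "$4"}),   # sub $2, $3, $4   (reads $4)
    ({"$10"}, {"$7", "$3"}),  # sub $10, $7, $3  (reads $7)
]
example_edges, example_depth = build_ddg(example_trace)
```

Here the four instructions form two chains of length two, so `parallelism(example_depth)` is 2.0.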

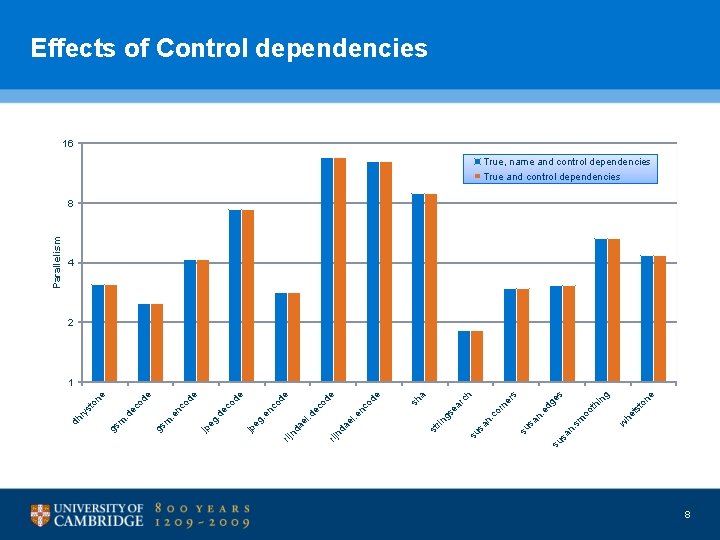

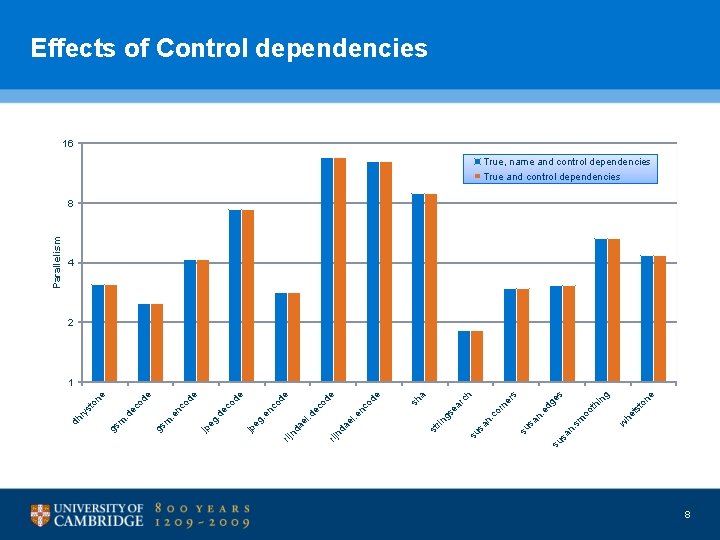

[Figure: Effects of Control dependencies. Bar chart of parallelism (log scale, 1 to 16) per benchmark (dhrystone, gsm, jpeg, rijndael, sha, stringsearch, susan, whetstone), comparing “True, name and control dependencies” with “True and control dependencies”.]

Effects of Control dependencies

- Restricts parallelism to within (dynamic) basic block
  - Parallelism <10 in most cases
  - Already exploited in multiple-issue processors
- Good news #1: Good branch prediction is not difficult
  - But only applies locally, examining at most tens of instructions in advance
- Good news #2: Control flow merge points not considered here
  - E.g. if R1 then { R2 } else { R3 } R4
  - Static analysis would help us remove such dependencies

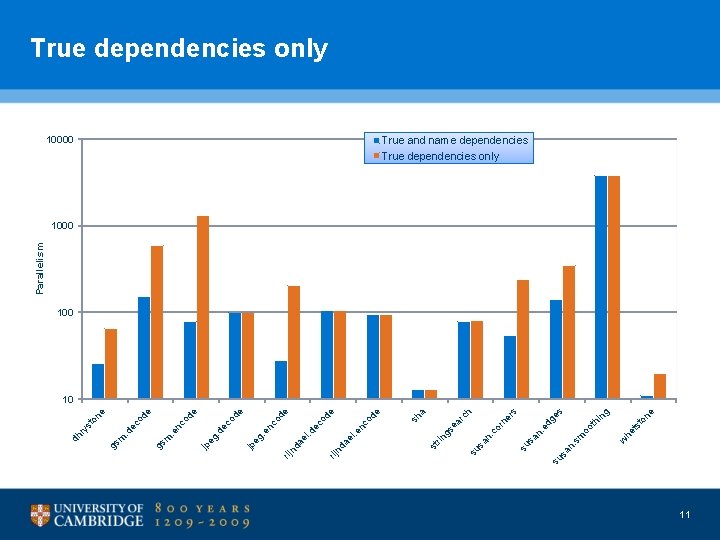

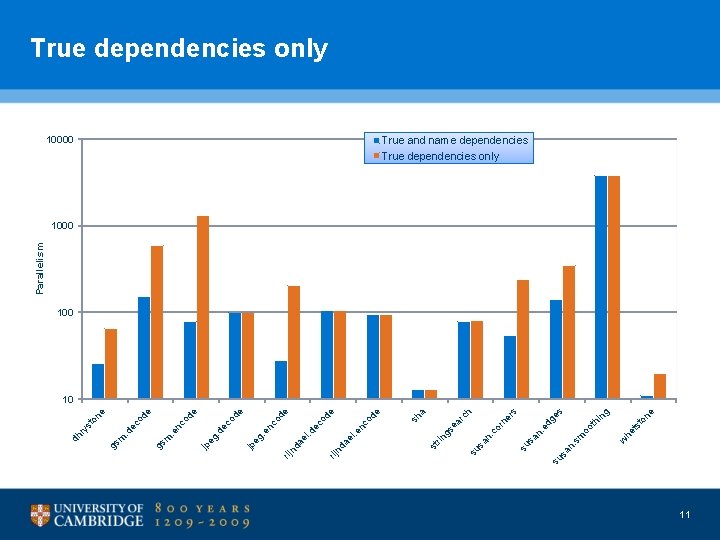

True dependencies only

- Can speculate away control dependencies
- Some name dependencies are compiler artifacts
  - Caused by memory being reused by unrelated calculations
- True dependencies represent essence of algorithm
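To illustrate why reuse of a location by unrelated calculations lowers the measured parallelism, the sketch below schedules a tiny trace twice: once honouring name (WAR and WAW) dependencies and once with true (RAW) dependencies only. The trace format, the function name `measure_parallelism` and the metric (instructions divided by critical path length) are assumptions made for this example.

```python
def measure_parallelism(trace, include_name_deps):
    """trace: list of (writes, reads) tuples of location names, in order."""
    write_depth = {}  # location -> depth of its most recent writer
    read_depth = {}   # location -> deepest reader since that write
    longest = 0
    for writes, reads in trace:
        before = [write_depth.get(loc, 0) for loc in reads]        # RAW
        if include_name_deps:
            before += [write_depth.get(loc, 0) for loc in writes]  # WAW
            before += [read_depth.get(loc, 0) for loc in writes]   # WAR
        depth = 1 + max(before, default=0)
        for loc in reads:
            read_depth[loc] = max(read_depth.get(loc, 0), depth)
        for loc in writes:
            write_depth[loc] = depth
            read_depth[loc] = 0  # a new value has no readers yet
        longest = max(longest, depth)
    return len(trace) / longest if trace else 0.0


# Two unrelated calculations that both happen to use register $4.
reuse_trace = [
    ({"$4"}, {"$5", "$6"}),  # add $4, $5, $6
    ({"memA"}, {"$4"}),      # store $4 to location A
    ({"$4"}, {"$7", "$8"}),  # add $4, $7, $8  (reuses $4)
    ({"memB"}, {"$4"}),      # store $4 to location B
]
with_names = measure_parallelism(reuse_trace, include_name_deps=True)
true_only = measure_parallelism(reuse_trace, include_name_deps=False)
```

With name dependencies the four instructions serialise into one chain (parallelism 1.0); with true dependencies only, the two calculations overlap (parallelism 2.0).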

[Figure: True dependencies only. Bar chart of parallelism (log scale, 10 to 10000) per benchmark, comparing “True and name dependencies” with “True dependencies only”.]

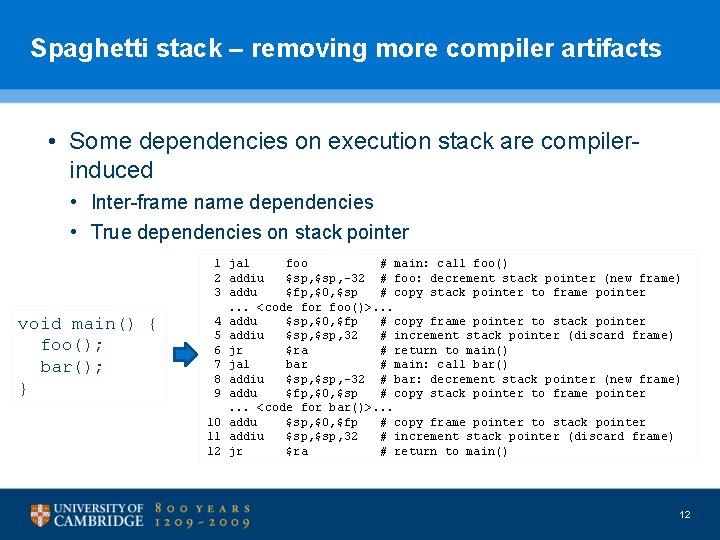

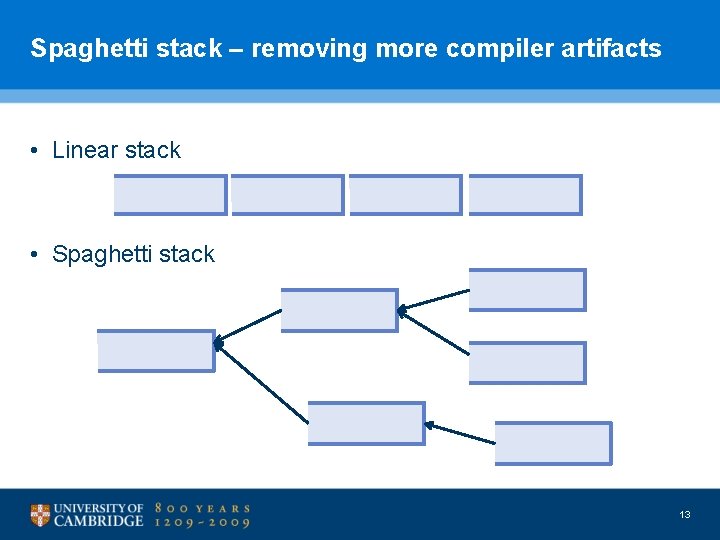

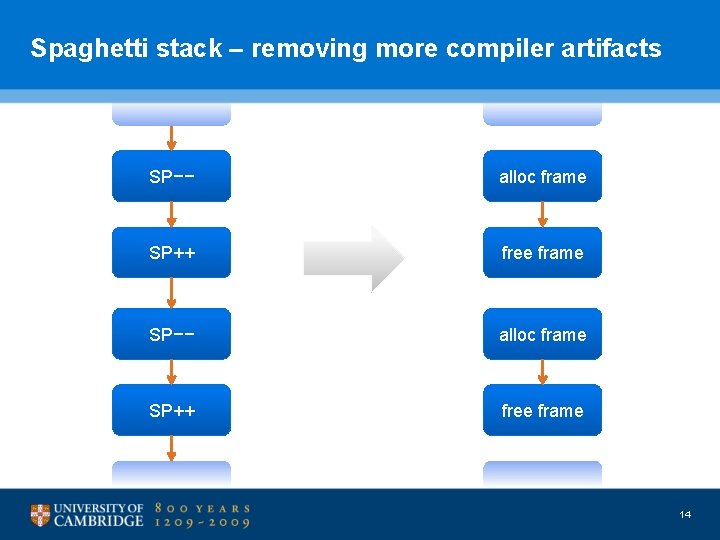

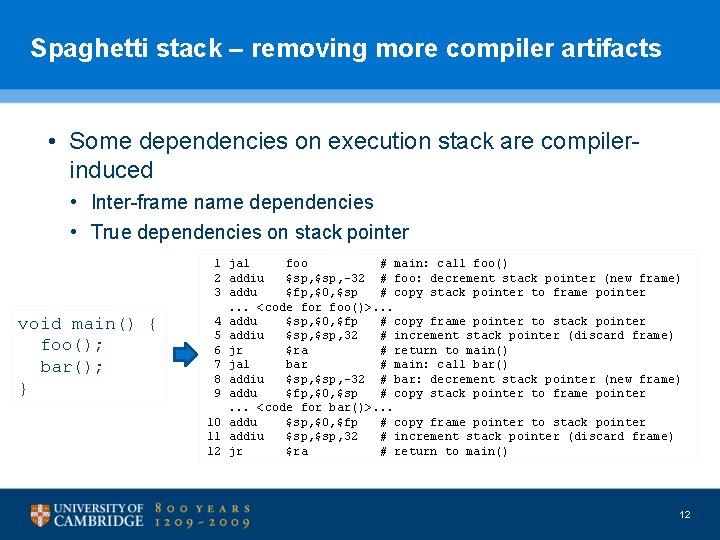

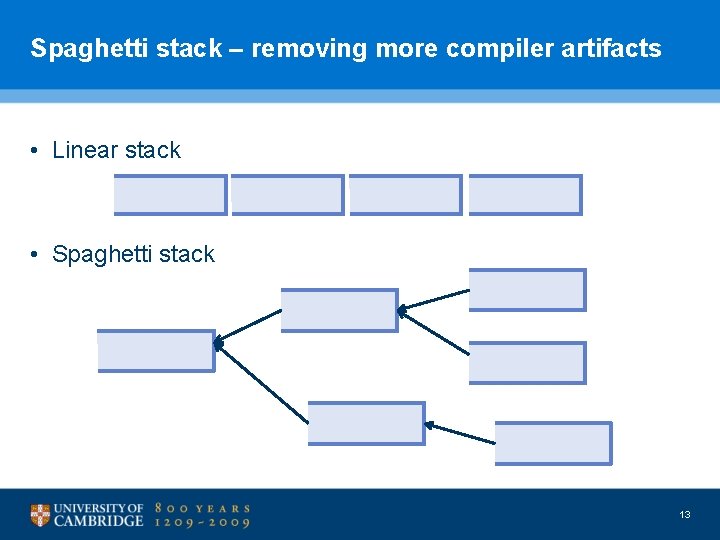

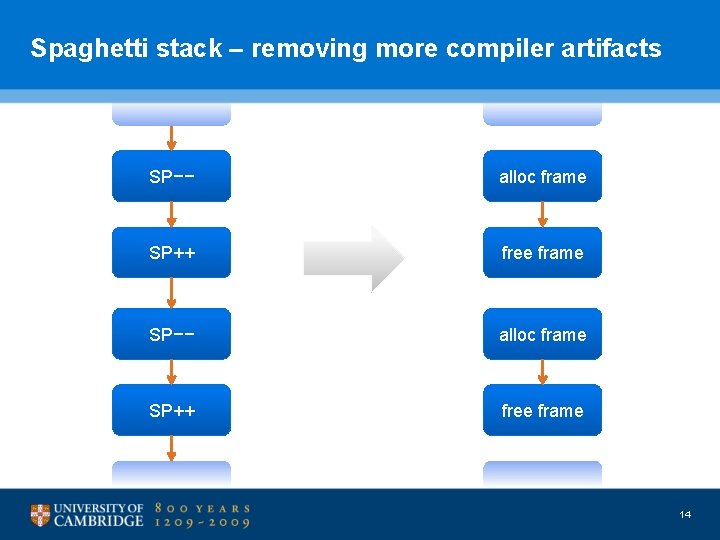

Spaghetti stack – removing more compiler artifacts

- Some dependencies on execution stack are compiler-induced
  - Inter-frame name dependencies
  - True dependencies on stack pointer

Source:

    void main() { foo(); bar(); }

Instruction trace:

    jal foo              # main: call foo()
    addiu $sp, -32       # foo: decrement stack pointer (new frame)
    addu $fp, $0, $sp    # copy stack pointer to frame pointer
    ... <code for foo()> ...
    addu $sp, $0, $fp    # copy frame pointer to stack pointer
    addiu $sp, 32        # increment stack pointer (discard frame)
    jr $ra               # return to main()
    jal bar              # main: call bar()
    addiu $sp, -32       # bar: decrement stack pointer (new frame)
    addu $fp, $0, $sp    # copy stack pointer to frame pointer
    ... <code for bar()> ...
    addu $sp, $0, $fp    # copy frame pointer to stack pointer
    addiu $sp, 32        # increment stack pointer (discard frame)
    jr $ra               # return to main()

Spaghetti stack – removing more compiler artifacts

- Linear stack
- Spaghetti stack

Spaghetti stack – removing more compiler artifacts

- SP−− → alloc frame
- SP++ → free frame
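One way to picture the difference, as a sketch under stated assumptions rather than the authors' implementation: on a linear stack the frames of foo() and bar() occupy the same addresses, which creates inter-frame name dependencies, whereas a spaghetti stack gives each frame a fresh identity linked to its parent. The classes `LinearStack` and `SpaghettiStack`, the starting address and the helper `frames_for_main` are all hypothetical.

```python
import itertools


class LinearStack:
    """Frames are identified by their address; freed space is reused."""

    def __init__(self, top=0x7FFF0000):  # arbitrary illustrative address
        self.sp = top

    def alloc_frame(self, size):
        self.sp -= size  # SP-- : new frame
        return self.sp

    def free_frame(self, size):
        self.sp += size  # SP++ : discard frame


class SpaghettiStack:
    """Each frame gets a fresh id and a link to its parent frame."""

    def __init__(self):
        self._ids = itertools.count()
        self.parent = {}     # frame id -> parent frame id
        self.current = None

    def alloc_frame(self, size):
        # size is unused: frames are named by id, not by address
        frame = next(self._ids)
        self.parent[frame] = self.current
        self.current = frame
        return frame

    def free_frame(self, size):
        self.current = self.parent[self.current]


def frames_for_main(stack):
    """Mimic main() { foo(); bar(); } and return the two frame identities."""
    foo = stack.alloc_frame(32)
    stack.free_frame(32)
    bar = stack.alloc_frame(32)
    stack.free_frame(32)
    return foo, bar


linear_foo, linear_bar = frames_for_main(LinearStack())
spag_foo, spag_bar = frames_for_main(SpaghettiStack())
```

On the linear stack the two frames share one identity, so accesses in bar() appear to depend on those in foo(); on the spaghetti stack they are distinct and the two calls no longer conflict through stack locations.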

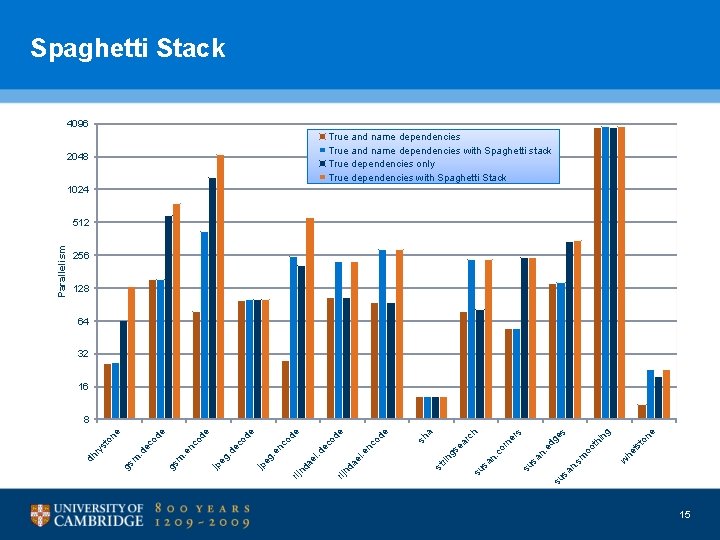

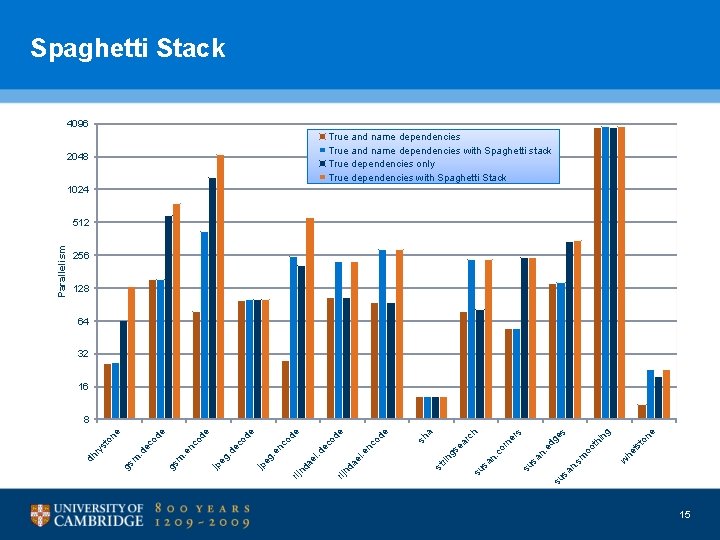

[Figure: Spaghetti Stack. Bar chart of parallelism (log scale, 8 to 4096) per benchmark, comparing “True and name dependencies with Spaghetti stack”, “True dependencies only” and “True dependencies with Spaghetti Stack”.]

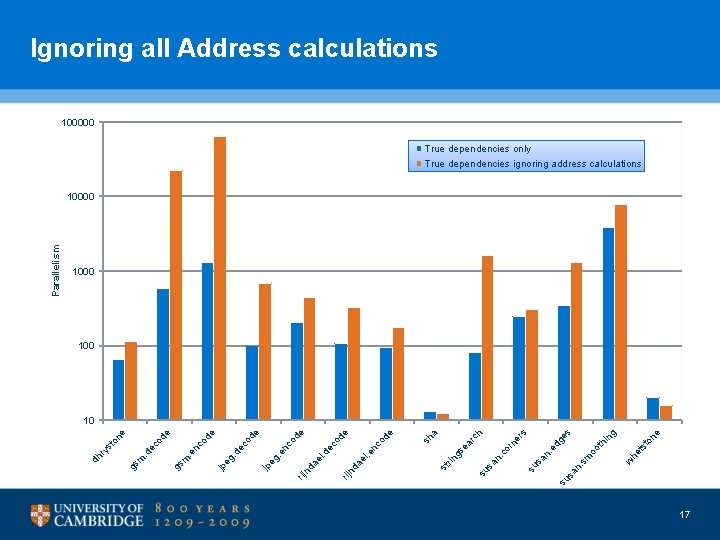

What about other compiler artifacts?

- Stack pointer is just one example
- Calls to malloc() are another
- Extreme case – remove all address calculation nodes from the graph

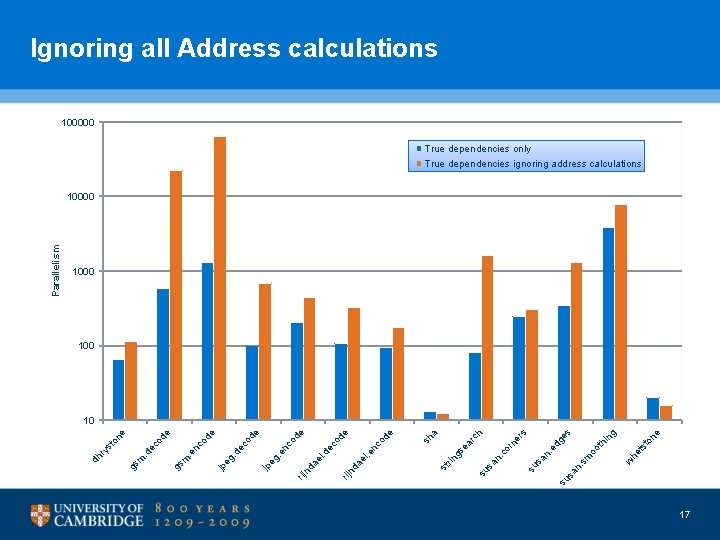

[Figure: Ignoring all Address calculations. Bar chart of parallelism (log scale, 10 to 100000) per benchmark, comparing “True dependencies only” with “True dependencies ignoring address calculations”.]

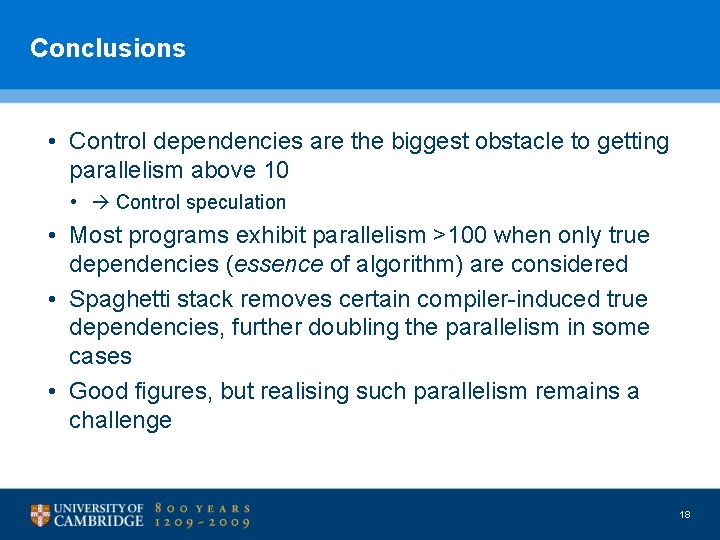

Conclusions

- Control dependencies are the biggest obstacle to getting parallelism above 10
  - Control speculation
- Most programs exhibit parallelism >100 when only true dependencies (essence of algorithm) are considered
- Spaghetti stack removes certain compiler-induced true dependencies, further doubling the parallelism in some cases
- Good figures, but realising such parallelism remains a challenge

Future work

- Scale up analysis framework
  - Bigger, more complex benchmarks (e.g. web/DB server, etc.)
  - How does parallelism change when data input size grows?
- How much parallelism is instruction-level (ILP), and how much is task-level (TLP)?
- Map dependencies back to source code
- Paper addressing some of these questions has just been submitted

Related work

- Wall, “Limits of instruction-level parallelism” (1991)
- Lam and Wilson, “Limits of control flow on parallelism” (1992)
- Austin and Sohi, “Dynamic dependency analysis of ordinary programs” (1992)
- Postiff, Greene, Tyson and Mudge, “The limits of instruction level parallelism in SPEC 95 applications” (1999)
- Stefanović and Martonosi, “Limits and graph structure of available instruction-level parallelism” (2001)