Filtering and Recommendation INST 734 Module 9 Doug Oard

- Slides: 11

Filtering and Recommendation INST 734 Module 9 Doug Oard

Agenda

- Filtering
- Recommender systems
- ➢ Classification

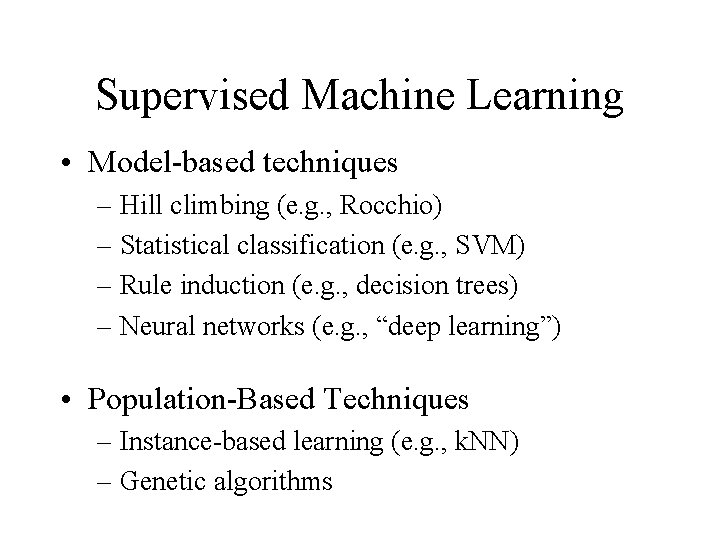

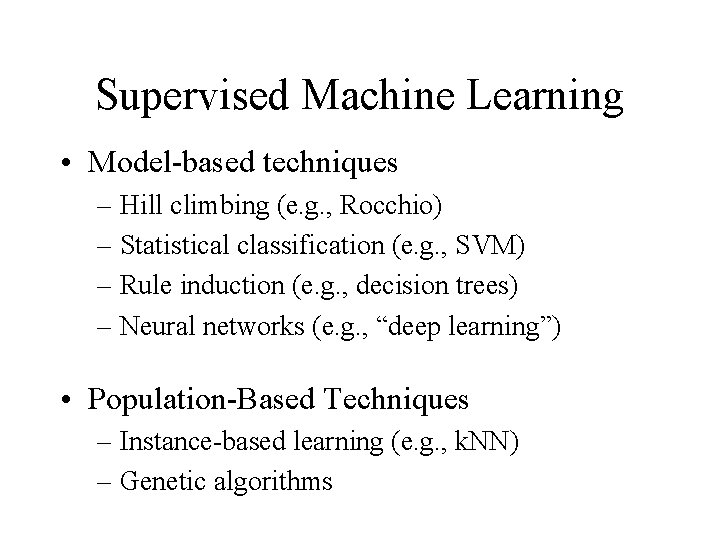

Supervised Machine Learning

- Model-based techniques
  - Hill climbing (e.g., Rocchio)
  - Statistical classification (e.g., SVM)
  - Rule induction (e.g., decision trees)
  - Neural networks (e.g., “deep learning”)
- Population-based techniques
  - Instance-based learning (e.g., kNN)
  - Genetic algorithms
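As one illustration of the instance-based family listed above, here is a minimal kNN sketch. The two-dimensional feature vectors, the labels, and the function name `knn_classify` are assumptions made up for this example; they do not come from the slides.

```python
import math
from collections import Counter

def knn_classify(query, examples, k=3):
    """Label a query point by majority vote among its k nearest examples.

    examples is a list of (feature_vector, label) pairs. Nothing is
    "trained": the stored examples themselves are the model.
    """
    nearest = sorted(examples, key=lambda ex: math.dist(query, ex[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical training examples (illustrative values only).
examples = [
    ((1.0, 1.0), "relevant"),
    ((1.2, 0.8), "relevant"),
    ((0.9, 1.3), "relevant"),
    ((4.0, 4.2), "not relevant"),
    ((4.1, 3.9), "not relevant"),
    ((3.8, 4.0), "not relevant"),
]

near_first_group = knn_classify((1.1, 1.0), examples)
near_second_group = knn_classify((4.0, 4.0), examples)
```

A model-based technique would instead compress the examples into parameters (a profile vector, a separating hyperplane) and discard the examples after training.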

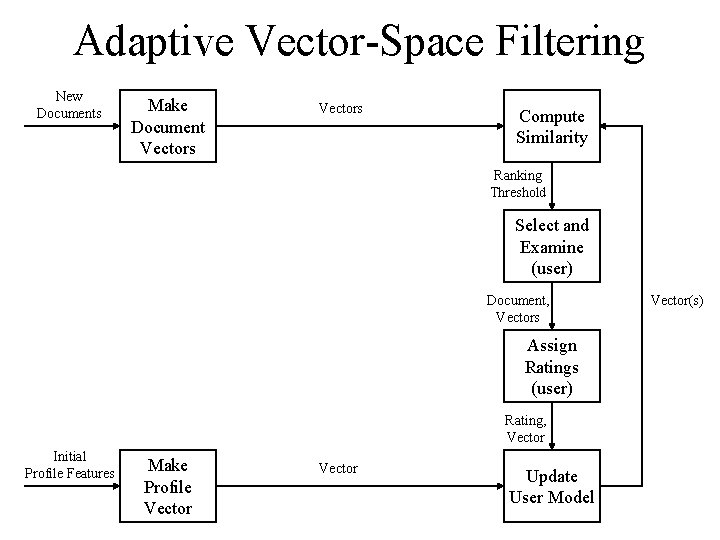

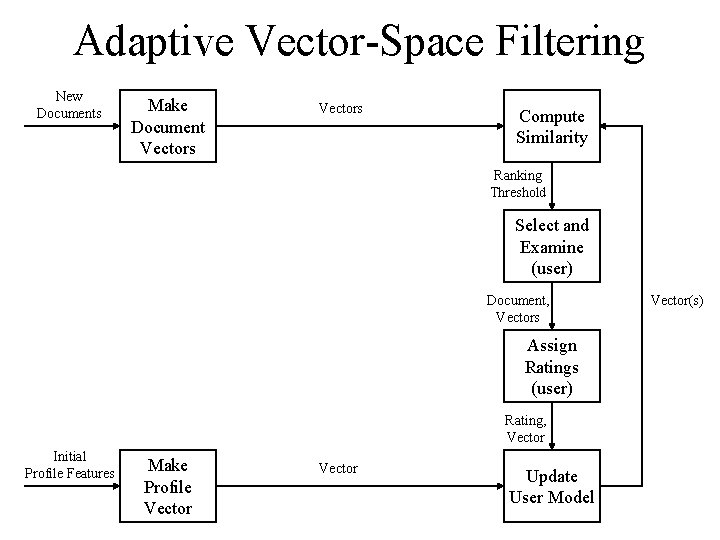

Adaptive Vector-Space Filtering

[Flow diagram; labels in reading order: New Documents · Make Document Vectors · Compute Similarity · Ranking Threshold · Select and Examine (user) · Document, Vectors · Assign Ratings (user) · Rating, Vector · Initial Profile Features · Make Profile Vector · Update User Model · Vector(s)]
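The loop in this diagram (make vectors, compute similarity, collect a rating, update the user model) can be sketched roughly as follows. This is a minimal illustration that assumes raw term-frequency vectors, cosine similarity, and a Rocchio-style update; the weights `beta` and `gamma`, the function names, and the sample texts are hypothetical choices, not taken from the slides.

```python
import math
from collections import Counter

def make_vector(text):
    """Turn text into a sparse term-frequency vector (term -> weight)."""
    return dict(Counter(text.lower().split()))

def cosine(u, v):
    """Cosine similarity between two sparse vectors."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def rocchio_update(profile, doc_vec, rating, beta=0.5, gamma=0.25):
    """Move the profile toward a liked document or away from a disliked one.

    rating > 0 adds beta * doc_vec; any other rating subtracts gamma * doc_vec.
    """
    step = beta if rating > 0 else -gamma
    updated = dict(profile)
    for term, weight in doc_vec.items():
        updated[term] = updated.get(term, 0.0) + step * weight
    return updated

# Initial profile features -> profile vector (made-up example text).
profile = make_vector("machine learning classification")

doc_good = make_vector("support vector machine classification tutorial")
doc_bad = make_vector("celebrity gossip tutorial")

before = cosine(profile, doc_good)

# The user examines both documents and rates them; the model is updated.
profile = rocchio_update(profile, doc_good, rating=+1)
profile = rocchio_update(profile, doc_bad, rating=-1)

after = cosine(profile, doc_good)
```

In a running filter, each new document's similarity to the current profile would be compared against a threshold (or used for ranking) to decide what the user sees next.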

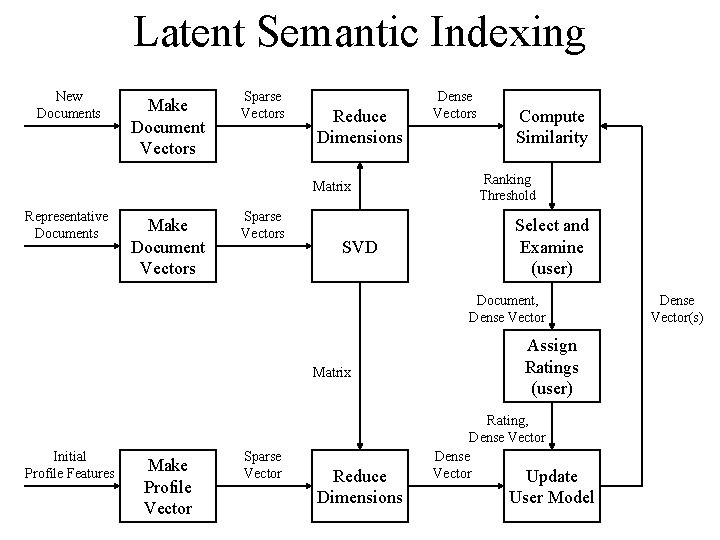

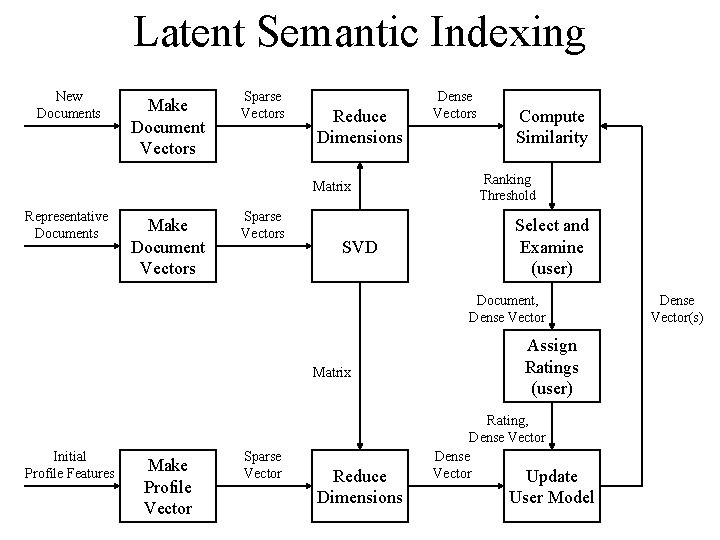

Latent Semantic Indexing

[Flow diagram; labels in reading order: New Documents · Make Document Vectors · Sparse Vectors · Reduce Dimensions · Matrix · Representative Documents · Make Document Vectors · Sparse Vectors · SVD · Dense Vectors · Compute Similarity · Ranking Threshold · Select and Examine (user) · Document, Dense Vector · Matrix · Initial Profile Features · Make Profile Vector · Sparse Vector · Reduce Dimensions · Assign Ratings (user) · Rating, Dense Vector · Update User Model · Dense Vector(s)]
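The "SVD" and "Reduce Dimensions" steps can be written out as follows. The notation is an assumption introduced here, not taken from the slide: $X$ is the term-by-document matrix built from the representative documents, $k$ is the number of dimensions kept, and $d$ is the sparse vector of a new document or profile.

$$
X \approx X_k = U_k \Sigma_k V_k^{\top}
$$

$$
\hat{d} = \Sigma_k^{-1} U_k^{\top} d
$$

The first line is the rank-$k$ truncated singular value decomposition. The second is the commonly used "folding-in" projection that maps a sparse vector into the same dense $k$-dimensional space, so that similarity can be computed between dense vectors. Some formulations omit the $\Sigma_k^{-1}$ scaling.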

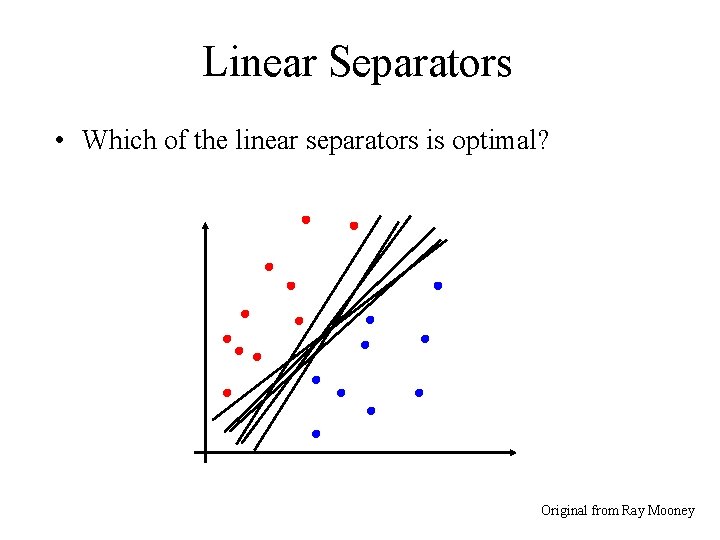

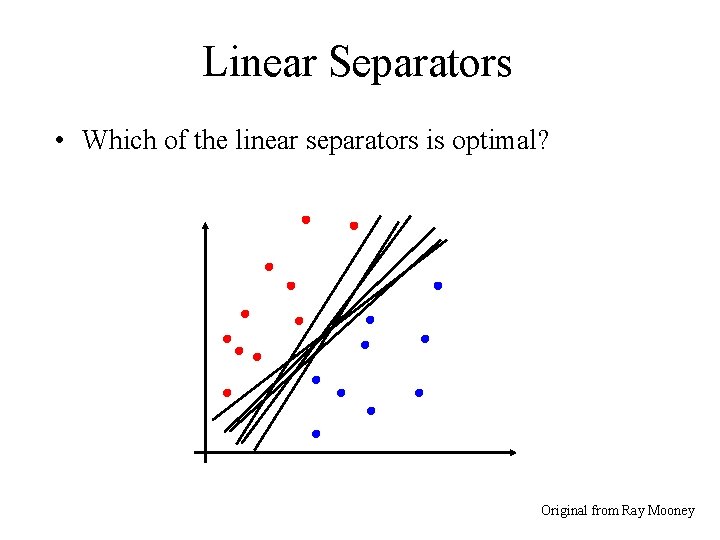

Linear Separators

- Which of the linear separators is optimal?

Original from Ray Mooney

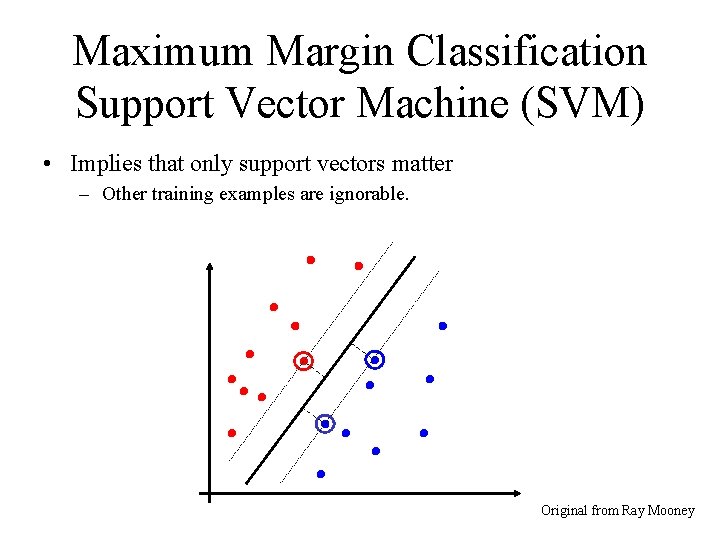

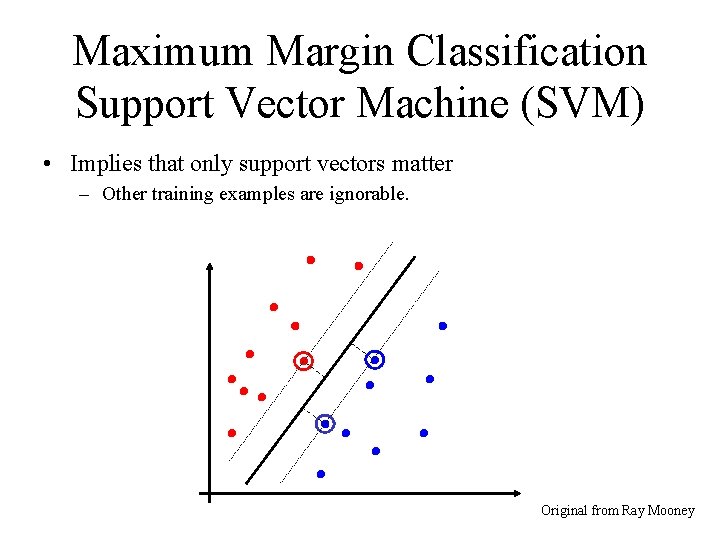

Maximum Margin Classification

Support Vector Machine (SVM)

- Maximizing the margin implies that only the support vectors matter
  - Other training examples are ignorable.

Original from Ray Mooney
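To make the margin idea concrete, the sketch below compares a few candidate separators on a toy data set and picks the one with the widest margin. The points, labels, and candidate lines are invented for illustration, and a real SVM solves an optimization problem rather than scoring hand-picked candidates.

```python
import math

def margin_and_support(w, b, points, labels):
    """Return (margin, support points) for the separator w . x + b = 0.

    The margin is the smallest signed distance from any training point to
    the line; a negative value means some point is misclassified.
    """
    norm = math.hypot(*w)
    dists = [y * (w[0] * x[0] + w[1] * x[1] + b) / norm
             for x, y in zip(points, labels)]
    m = min(dists)
    support = [x for x, d in zip(points, dists) if math.isclose(d, m)]
    return m, support

# Hypothetical 2-D training set: two negative and two positive examples.
points = [(0.0, 0.0), (0.0, 1.0), (2.0, 0.0), (3.0, 2.0)]
labels = [-1, -1, +1, +1]

# Three candidate separators, each given as (w, b). All three separate the data.
candidates = {
    "A": ((1.0, 0.0), -1.0),   # the vertical line x = 1
    "B": ((1.0, 0.0), -1.5),   # the vertical line x = 1.5
    "C": ((1.0, 1.0), -1.8),   # the diagonal line x + y = 1.8
}

results = {name: margin_and_support(w, b, points, labels)
           for name, (w, b) in candidates.items()}
best = max(results, key=lambda name: results[name][0])

# Dropping a point that is not a support vector leaves the margin unchanged.
margin_without_far_point, _ = margin_and_support(
    (1.0, 0.0), -1.0, points[:3], labels[:3])
```

Separator A wins because its closest points lie farthest from the line. The point (3, 2) is not among A's support points, so removing it does not change A's margin, which is the sense in which other training examples are ignorable.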

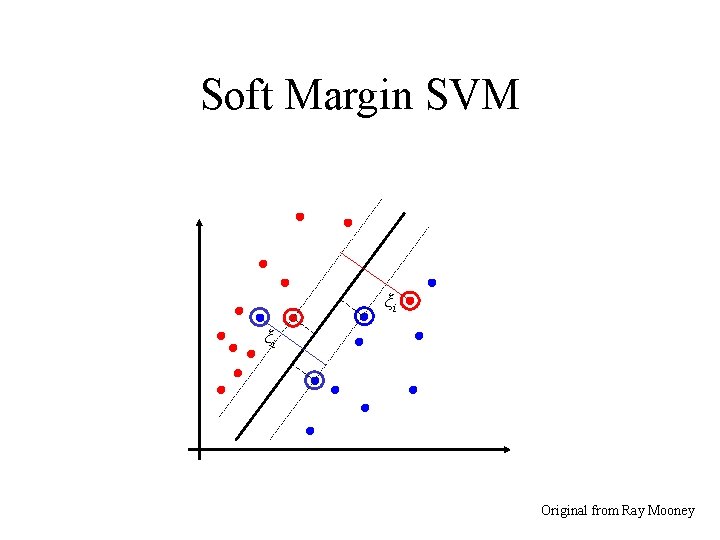

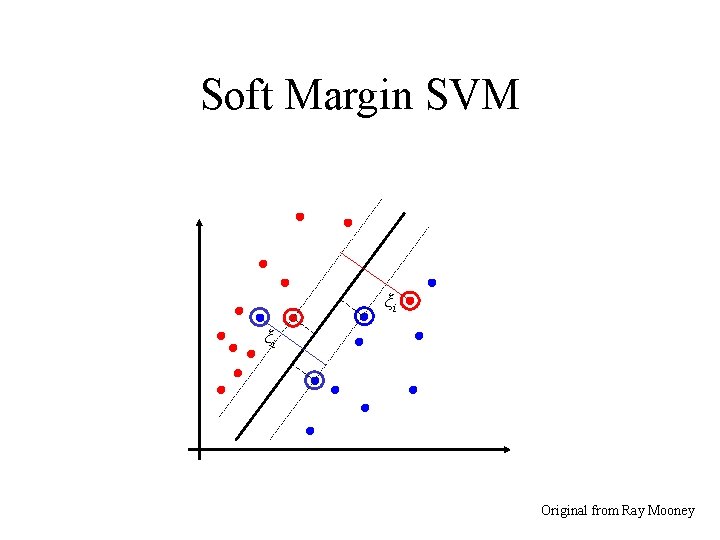

Soft Margin SVM

[Figure: slack variables ξ_i, labeled twice]

Original from Ray Mooney
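For reference, the standard soft-margin formulation in which the slack variables $\xi_i$ appear can be written as below. The symbols $w$, $b$, $C$, $x_i$, $y_i$, and $n$ follow the usual textbook convention and are assumptions here, since the slide shows only $\xi_i$.

$$
\min_{w,\,b,\,\xi}\ \tfrac{1}{2}\lVert w \rVert^{2} + C \sum_{i=1}^{n} \xi_i
\quad \text{subject to} \quad
y_i\,(w^{\top} x_i + b) \ge 1 - \xi_i,\qquad \xi_i \ge 0,\quad i = 1,\dots,n
$$

Each $\xi_i$ measures how far training example $i$ falls short of the margin, and $C$ trades margin width against the total amount of slack. Setting every $\xi_i = 0$ recovers the hard-margin case.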

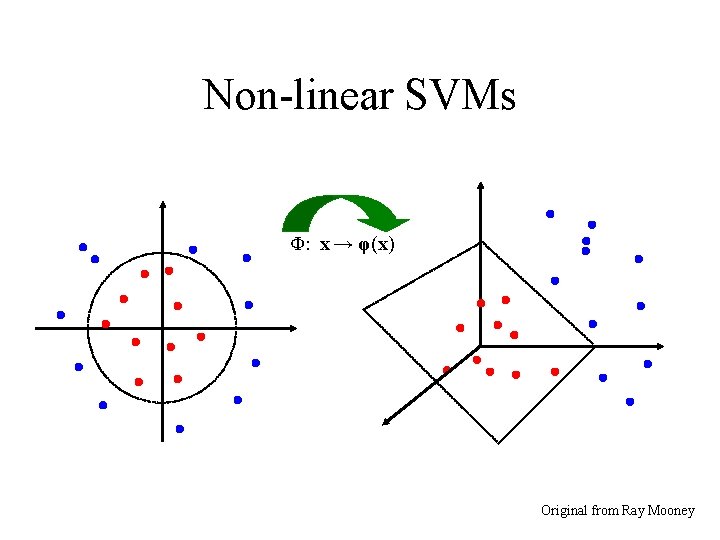

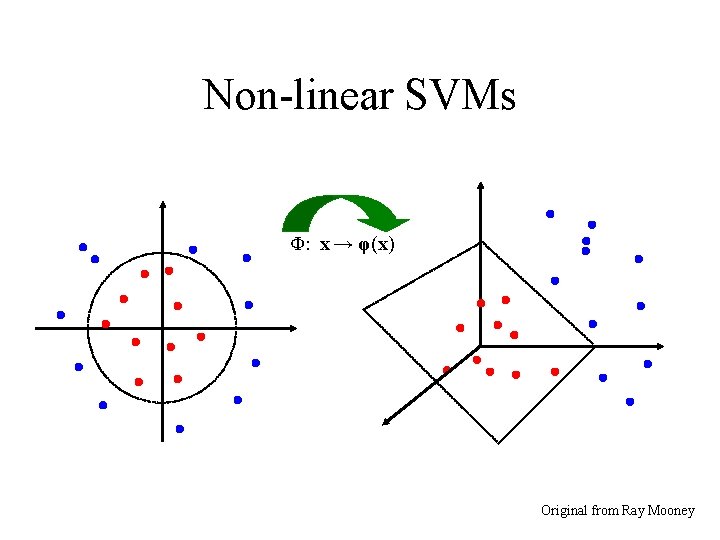

Non-linear SVMs

Φ: x → φ(x)

Original from Ray Mooney
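A small sketch of why mapping x to φ(x) helps: the one-dimensional data below cannot be split by any single threshold, but it becomes linearly separable after the mapping φ(x) = (x, x²). The data, the particular feature map, and the hand-chosen separator are illustrative assumptions, not content from the slide.

```python
def phi(x):
    """Map a 1-D input into a 2-D feature space: x -> (x, x^2)."""
    return (x, x * x)

def separable_by_threshold(xs, ys):
    """True if one threshold on x puts all positives on one side."""
    pos = [x for x, y in zip(xs, ys) if y > 0]
    neg = [x for x, y in zip(xs, ys) if y < 0]
    return max(pos) < min(neg) or max(neg) < min(pos)

def classify(w, b, z):
    """Linear classifier in feature space: the sign of w . z + b."""
    score = sum(wi * zi for wi, zi in zip(w, z)) + b
    return 1 if score > 0 else -1

# Hypothetical data: positives sit on both sides of the negatives.
xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [+1, +1, -1, -1, -1, +1, +1]

linearly_separable_in_1d = separable_by_threshold(xs, ys)

# In feature space, the horizontal line x^2 = 2.5 separates the classes.
w, b = (0.0, 1.0), -2.5
predictions = [classify(w, b, phi(x)) for x in xs]
```

Kernel SVMs obtain the same effect without computing φ(x) explicitly, by evaluating inner products in the feature space through a kernel function.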

Training Supervised Classifiers

- All learning systems share two problems
  - They need some “inductive bias”
  - They must balance adaptation with generalization
- Overtraining can hurt performance
  - Performance on training data rises and plateaus
  - Performance on new data rises, then falls
- Useful strategies
  - Hold out a “devtest” set to find the peak on new data
  - Emphasize exploration early, exploitation later
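The held-out “devtest” strategy can be sketched as a simple stopping rule: keep training while the devtest score improves, and stop once it has failed to improve for a few epochs. The score lists below are synthetic numbers shaped like the curves described above, and the `patience` parameter and function name are assumptions for this example.

```python
def pick_stopping_epoch(dev_scores, patience=2):
    """Return (epoch, score) of the devtest peak.

    Scanning stops once the score has not improved for `patience`
    consecutive epochs after the best one seen so far.
    """
    best_epoch, best_score = 0, float("-inf")
    for epoch, score in enumerate(dev_scores):
        if score > best_score:
            best_epoch, best_score = epoch, score
        elif epoch - best_epoch >= patience:
            break
    return best_epoch, best_score

# Synthetic curves (not measured results): training accuracy keeps rising
# and levels off, while devtest accuracy rises and then falls.
train_scores = [0.60, 0.72, 0.81, 0.88, 0.93, 0.96, 0.97]
dev_scores = [0.58, 0.68, 0.74, 0.77, 0.75, 0.72, 0.70]

stop_epoch, stop_score = pick_stopping_epoch(dev_scores)
```

Choosing the epoch by training score alone would always select the last one, which is exactly the overtrained model.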

Summing Up

- Filtering poses some unique challenges
  - Adversarial behavior, new terms, throughput
- Behavioral signals offer unique opportunities
  - For both static and dynamic content
- Supervised classifiers learn to make decisions
  - Two-sided training
  - Threshold learning
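Threshold learning can be illustrated with a small sketch: given similarity scores for documents the user has already judged, pick the score threshold that maximizes some utility on those judgments. F1 is used here only as an example utility, which is an assumption, as are the scores, the labels, and the function names.

```python
def f1_at(threshold, scores, labels):
    """F1 of the decision rule 'select the document if score >= threshold'."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y)
    fp = sum(1 for s, y in pairs if s >= threshold and not y)
    fn = sum(1 for s, y in pairs if s < threshold and y)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def learn_threshold(scores, labels):
    """Try each observed score as a threshold and keep the best by F1."""
    candidates = sorted(set(scores))
    return max(candidates, key=lambda t: f1_at(t, scores, labels))

# Hypothetical judged documents: similarity score and whether it was relevant.
scores = [0.91, 0.85, 0.70, 0.62, 0.40, 0.35, 0.20]
labels = [True, True, False, True, False, False, False]

threshold = learn_threshold(scores, labels)
```

Because both relevant and non-relevant judgments are used, this is also a small instance of two-sided training: the negative examples are what keep the threshold from dropping too low.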