File-System Interface: File Concept, Access Methods

- Slides: 104

File-System Interface
- File Concept
- Access Methods
- Directory Structure

File Concept
- Contiguous logical address space
- Types:
  - Data
    - numeric
    - character
    - binary
  - Program

File Structure
- None: sequence of words, bytes
- Simple record structure
  - Lines
  - Fixed length
  - Variable length
- Complex structures
  - Formatted document
  - Relocatable load file
- Can simulate last two with first method by inserting appropriate control characters
- Who decides:
  - Operating system
  - Program

File Attributes
- Name – only information kept in human-readable form
- Identifier – unique tag (number) that identifies the file within the file system
- Type – needed for systems that support different types
- Location – pointer to file location on device
- Size – current file size
- Protection – controls who can do reading, writing, executing
- Time, date, and user identification – data for protection, security, and usage monitoring
- Information about files is kept in the directory structure, which is maintained on the disk

File Operations
- File is an abstract data type
- Create
- Write
- Read
- Reposition within file
- Delete
- Truncate
- Open(Fi) – search the directory structure on disk for entry Fi, and move the content of the entry to memory
- Close(Fi) – move the content of entry Fi in memory to the directory structure on disk
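The basic operations can be exercised from Java with `java.io.RandomAccessFile`. This is a minimal sketch on a temporary file, not how any particular OS implements them; the class name `FileOpsDemo` is hypothetical, and constructing/closing the `RandomAccessFile` stands in for Open(Fi)/Close(Fi). Truncate is modeled as `setLength(0)`: the contents are erased while the file itself remains.

```java
import java.io.File;
import java.io.IOException;
import java.io.RandomAccessFile;
import java.io.UncheckedIOException;

class FileOpsDemo {
    // Runs each basic file operation once on a temporary file.
    // Returns {byte read after repositioning, length after truncation, 1 if deleted else 0}.
    static long[] run() {
        try {
            File f = File.createTempFile("ops", ".dat");                 // create
            long[] result = new long[3];
            try (RandomAccessFile raf = new RandomAccessFile(f, "rw")) { // open
                raf.write(new byte[] {10, 20, 30, 40});                  // write; file pointer now at 4
                raf.seek(2);                                             // reposition within file
                result[0] = raf.read();                                  // read one byte (30)
                raf.setLength(0);                                        // truncate: contents erased, file kept
                result[1] = raf.length();
            }                                                            // close
            result[2] = f.delete() ? 1 : 0;                              // delete
            return result;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        long[] r = run();
        System.out.println("read=" + r[0] + " lengthAfterTruncate=" + r[1] + " deleted=" + (r[2] == 1));
    }
}
```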

Open Files
- Several pieces of data are needed to manage open files:
  - File pointer: pointer to last read/write location, per process that has the file open
  - File-open count: counter of the number of times a file is open, to allow removal of its data from the open-file table when the last process closes it
  - Disk location of the file: cache of data access information
  - Access rights: per-process access-mode information
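A minimal sketch of the split between per-process and system-wide state, assuming a hypothetical `OpenFileTable` class keyed by file name: the file pointer and access mode live in a per-process entry, while the open count and cached disk location are shared, and the shared entry is dropped when the count reaches zero.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical model of the open-file tables (not any real kernel's layout).
class OpenFileTable {
    // System-wide entry: one per open file.
    static final class SystemEntry {
        final long diskLocation; // cached location of the file on disk
        int openCount;           // number of outstanding opens
        SystemEntry(long diskLocation) { this.diskLocation = diskLocation; }
    }

    // Per-process entry: one per open() call.
    static final class ProcessEntry {
        final SystemEntry shared;
        final String mode;       // per-process access rights, e.g. "r" or "rw"
        long filePointer;        // per-process current read/write position
        ProcessEntry(SystemEntry shared, String mode) { this.shared = shared; this.mode = mode; }
    }

    private final Map<String, SystemEntry> table = new HashMap<>();

    // Reuse the system-wide entry if the file is already open; otherwise create it.
    ProcessEntry open(String name, long diskLocation, String mode) {
        SystemEntry e = table.get(name);
        if (e == null) {
            e = new SystemEntry(diskLocation);
            table.put(name, e);
        }
        e.openCount++;
        return new ProcessEntry(e, mode);
    }

    // When the last opener closes the file, its entry is removed from the table.
    void close(String name) {
        SystemEntry e = table.get(name);
        if (e != null && --e.openCount == 0) table.remove(name);
    }

    boolean isOpen(String name) { return table.containsKey(name); }

    int openCount(String name) {
        SystemEntry e = table.get(name);
        return e == null ? 0 : e.openCount;
    }
}
```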

Open File Locking
- Provided by some operating systems and file systems
- Mediates access to a file
- Mandatory or advisory:
  - Mandatory – access is denied depending on locks held and requested
  - Advisory – processes can find the status of locks and decide what to do

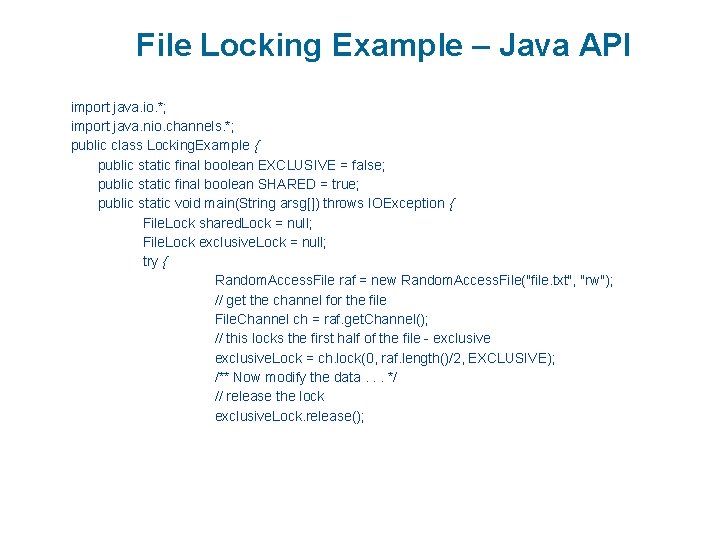

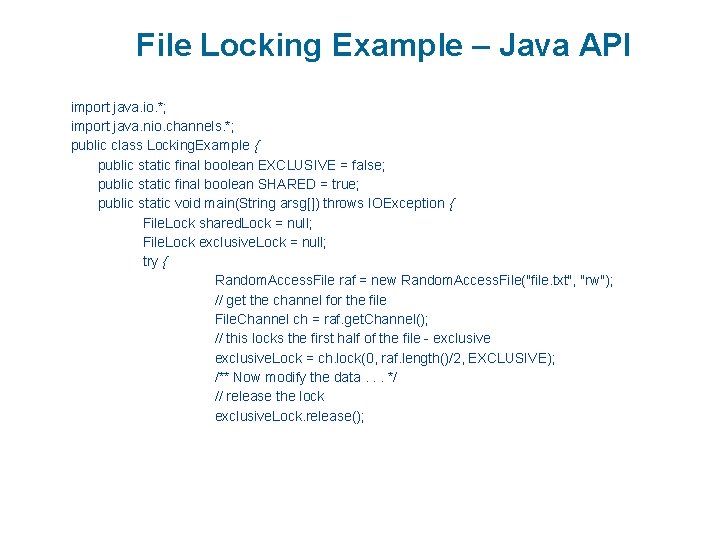

File Locking Example – Java API

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.nio.channels.FileChannel;
import java.nio.channels.FileLock;

public class LockingExample {
    public static final boolean EXCLUSIVE = false;
    public static final boolean SHARED = true;

    public static void main(String[] args) throws IOException {
        FileLock sharedLock = null;
        FileLock exclusiveLock = null;
        try (RandomAccessFile raf = new RandomAccessFile("file.txt", "rw")) {
            // get the channel for the file
            FileChannel ch = raf.getChannel();
            long half = raf.length() / 2;
            try {
                // this locks the first half of the file - exclusive
                // (lock(position, size, shared): the second argument is a size, not an end offset)
                exclusiveLock = ch.lock(0, half, EXCLUSIVE);
                /** Now modify the data . . . */
                // release the lock
                exclusiveLock.release();

                // this locks the second half of the file - shared
                sharedLock = ch.lock(half, raf.length() - half, SHARED);
                /** Now read the data . . . */
                // release the lock
                sharedLock.release();
            } finally {
                // runs while the channel is still open;
                // release() on an already-released lock has no effect
                if (exclusiveLock != null) exclusiveLock.release();
                if (sharedLock != null) sharedLock.release();
            }
        } catch (IOException ioe) {
            System.err.println(ioe);
        }
    }
}
```

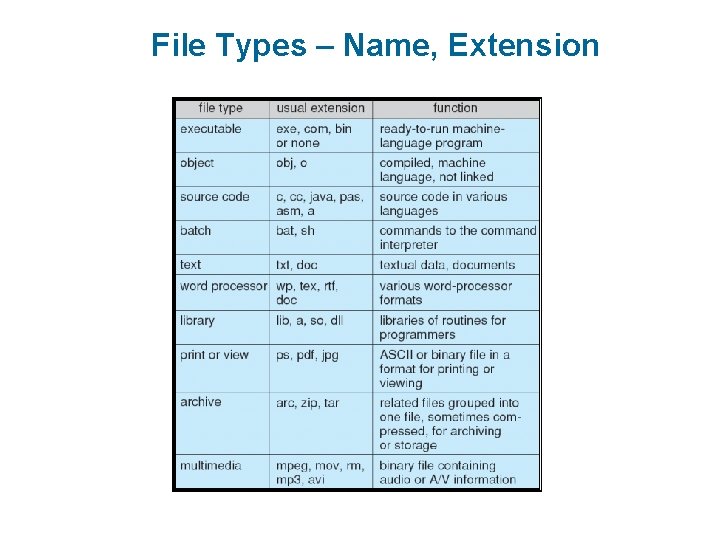

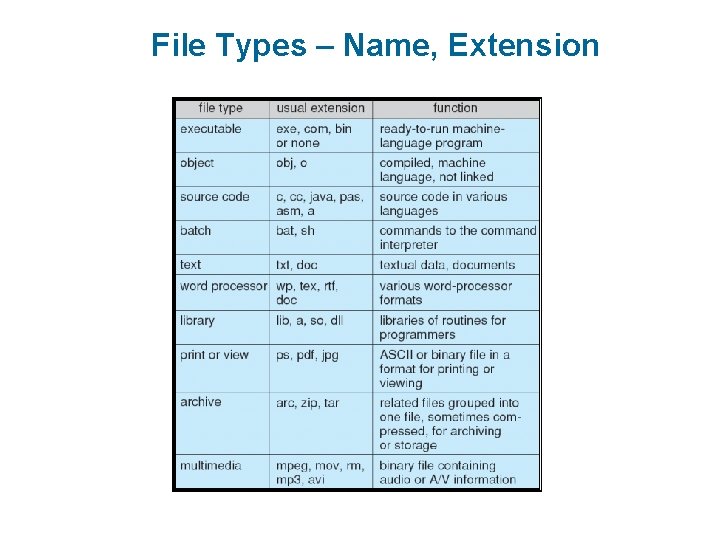

File Types – Name, Extension

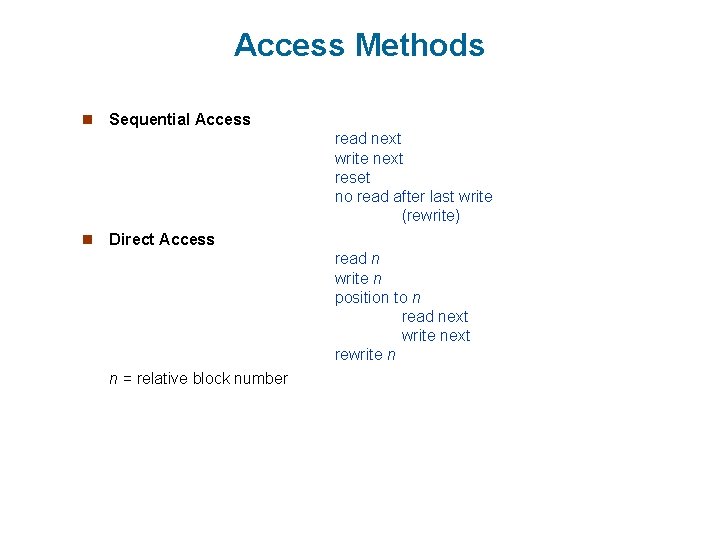

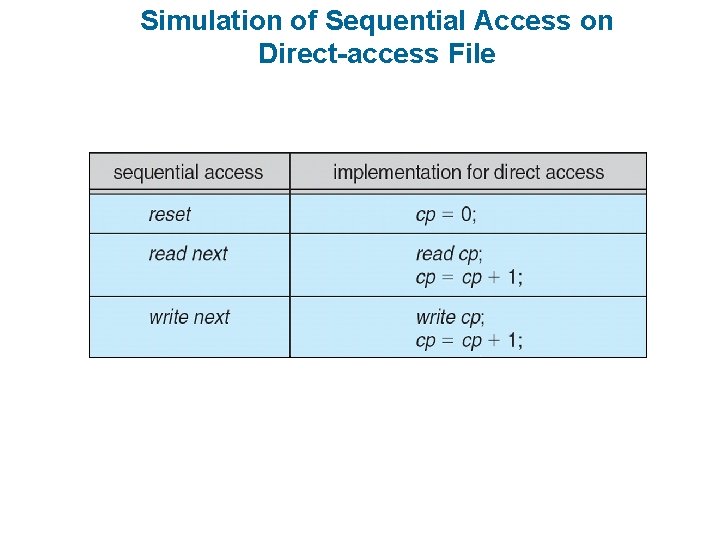

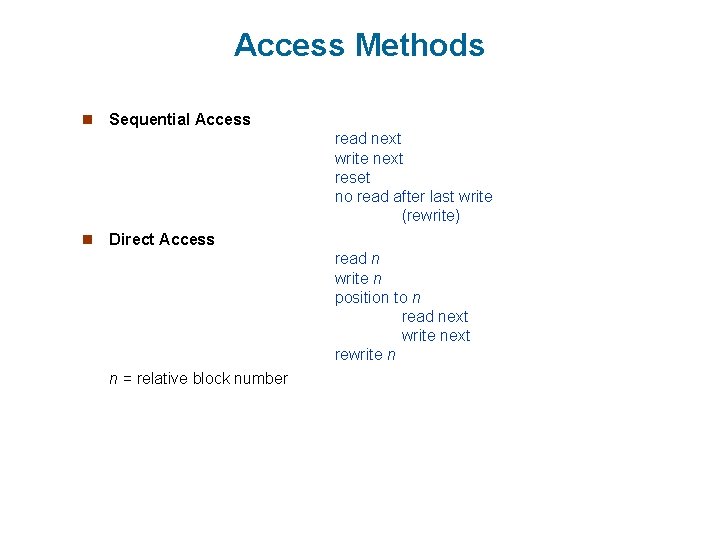

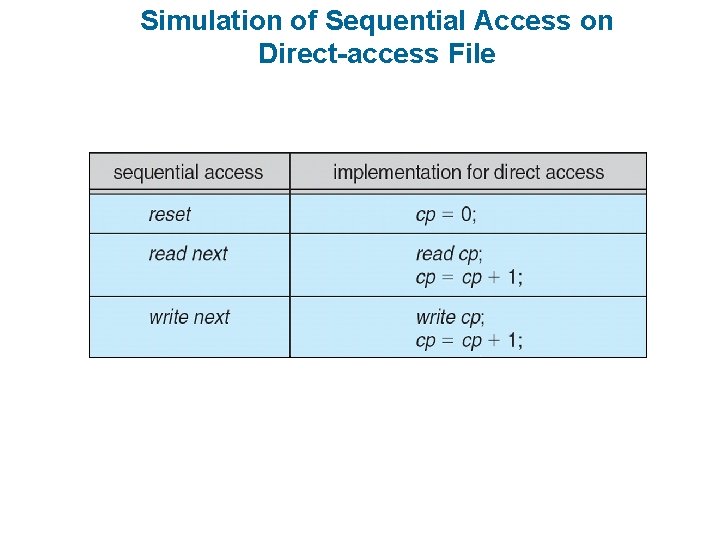

Access Methods
- Sequential Access
  - read next
  - write next
  - reset
  - no read after last write (rewrite)
- Direct Access
  - read n
  - write n
  - position to n
    - read next
    - write next
  - rewrite n
  - (n = relative block number)

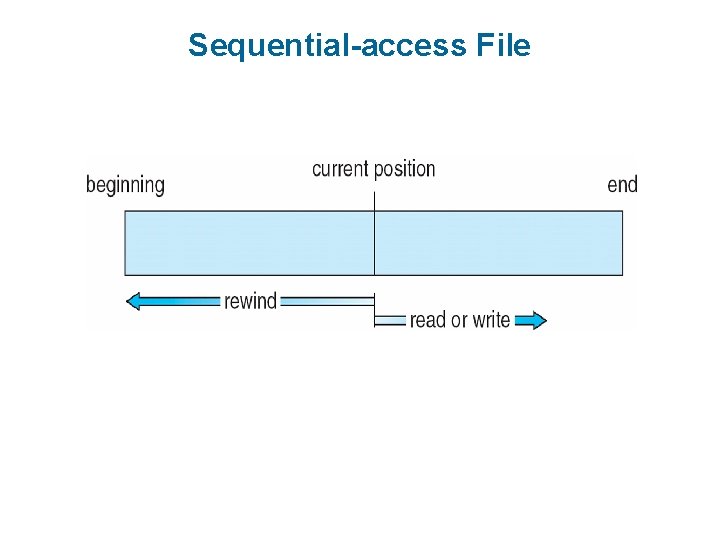

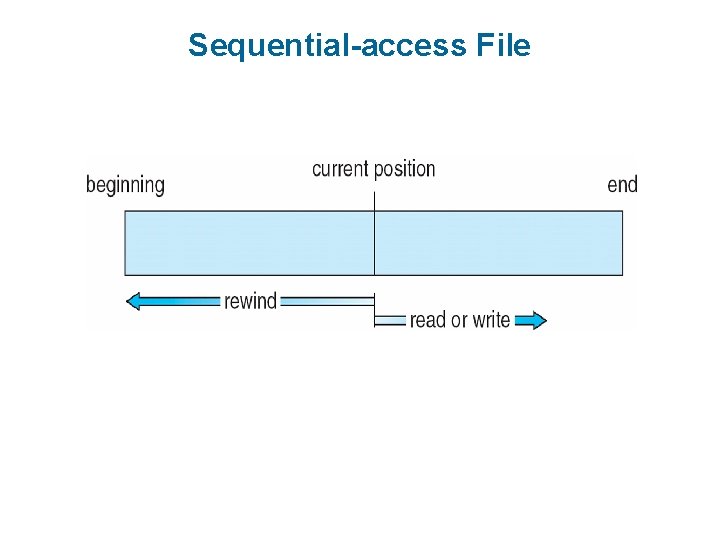

Sequential-access File

Simulation of Sequential Access on Direct-access File
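The usual way to simulate sequential access on a direct-access file is to keep a current-position variable `cp`: reset sets `cp = 0`, read next reads block `cp` then increments it, and write next writes block `cp` then increments it. The sketch below assumes a hypothetical in-memory array of fixed-size blocks in place of a real device.

```java
import java.util.Arrays;

// Hypothetical direct-access "device": an array of fixed-size blocks addressed by number.
class SequentialOverDirect {
    private final byte[][] blocks;
    private int cp = 0; // current position: block number of the next sequential access

    SequentialOverDirect(int nBlocks, int blockSize) {
        blocks = new byte[nBlocks][blockSize];
    }

    // Direct-access primitives: read n / write n (data is padded or cut to the block size).
    byte[] read(int n) { return Arrays.copyOf(blocks[n], blocks[n].length); }
    void write(int n, byte[] data) { blocks[n] = Arrays.copyOf(data, blocks[n].length); }

    // Sequential operations implemented on top of them.
    void reset() { cp = 0; }                            // reset:      cp = 0
    byte[] readNext() { return read(cp++); }            // read next:  read cp;  cp = cp + 1
    void writeNext(byte[] data) { write(cp++, data); }  // write next: write cp; cp = cp + 1
}
```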

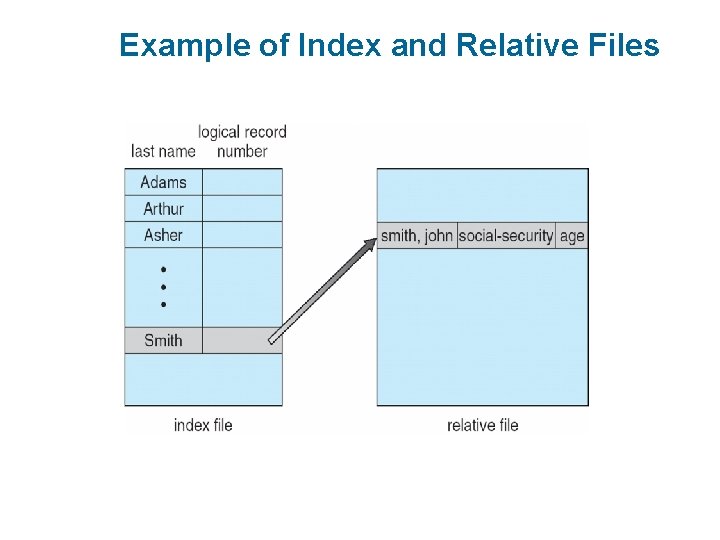

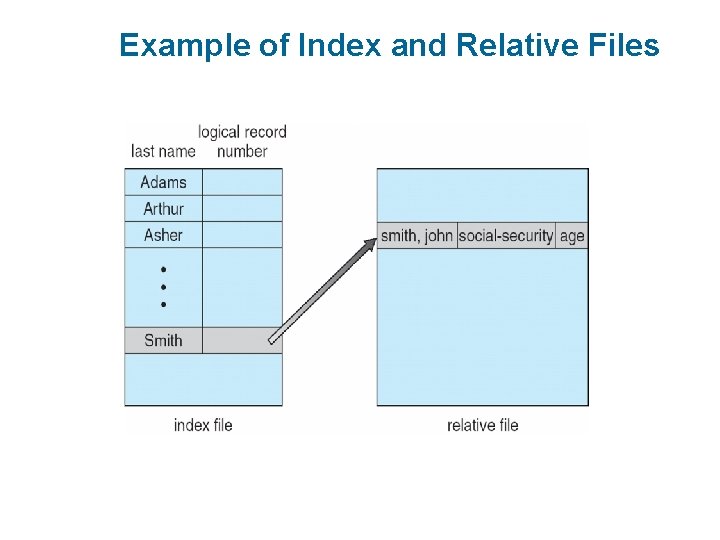

Example of Index and Relative Files
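One common way to combine an index with a relative (direct-access) file is to keep, in sorted order, the first key stored in each block; a lookup searches the index and then performs a single direct read. The sketch assumes that arrangement and a hypothetical `IndexedRelativeFile` class; it returns only the relative block number that would be read.

```java
import java.util.Arrays;

// Hypothetical index over a relative file: entry i is the smallest key stored in relative block i.
class IndexedRelativeFile {
    private final String[] firstKeyOfBlock; // must be sorted

    IndexedRelativeFile(String[] firstKeyOfBlock) {
        this.firstKeyOfBlock = firstKeyOfBlock.clone();
    }

    // Relative block that would hold `key`, or -1 if the key precedes every block.
    int blockFor(String key) {
        int i = Arrays.binarySearch(firstKeyOfBlock, key);
        if (i >= 0) return i;        // key is the first key of block i
        int insertion = -i - 1;      // index of the first entry greater than key
        return insertion - 1;        // the block before it
    }
}
```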

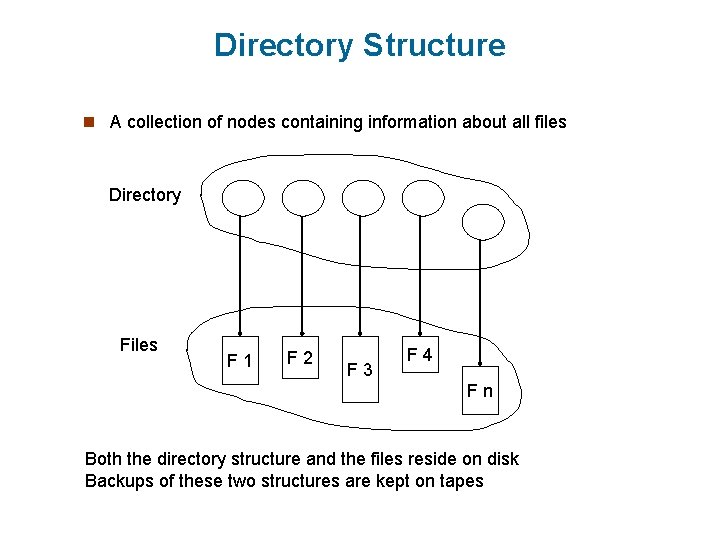

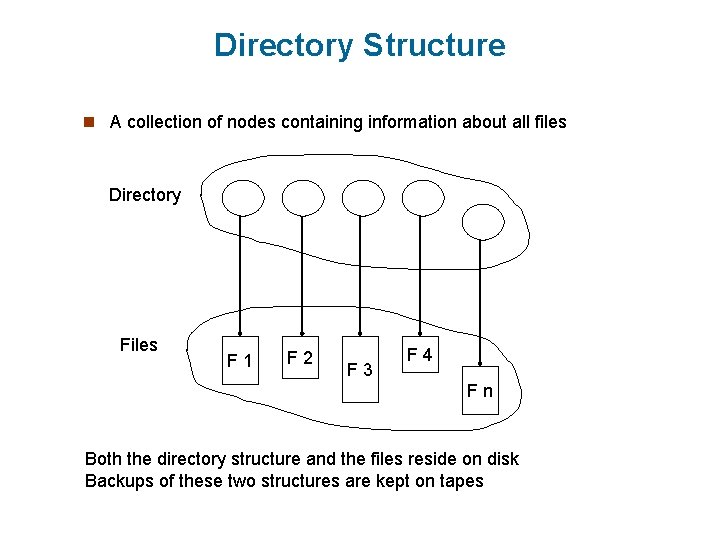

Directory Structure
- A collection of nodes containing information about all files (figure: a directory whose entries point to files F1, F2, F3, F4, …, Fn)
- Both the directory structure and the files reside on disk
- Backups of these two structures are kept on tapes

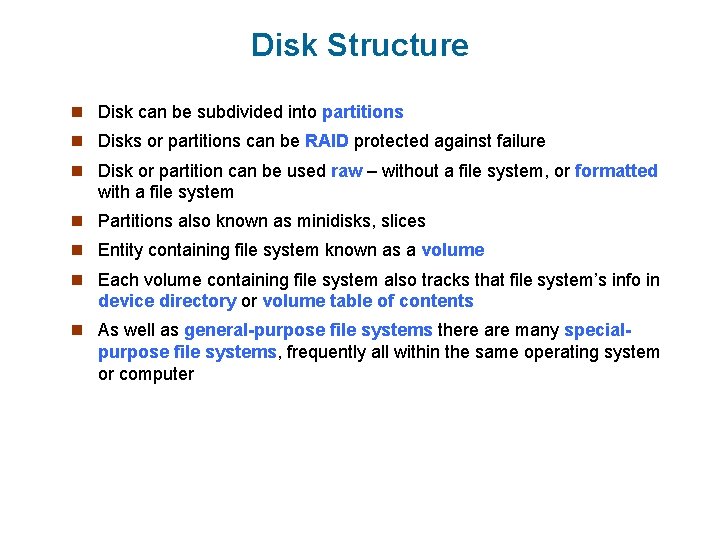

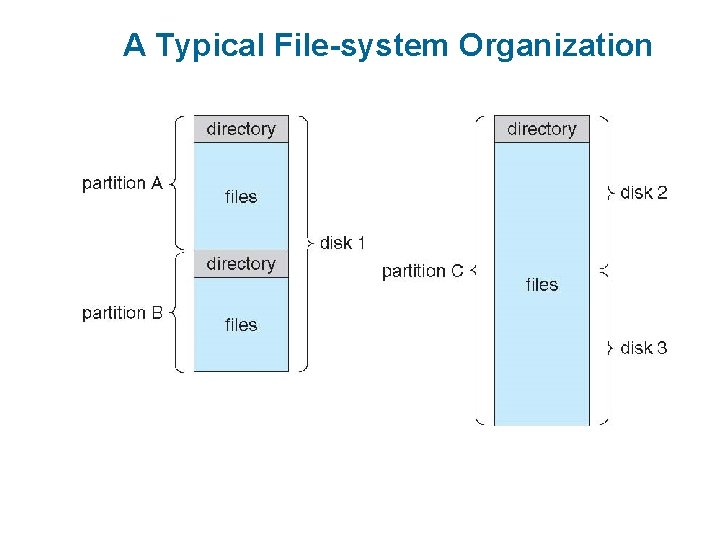

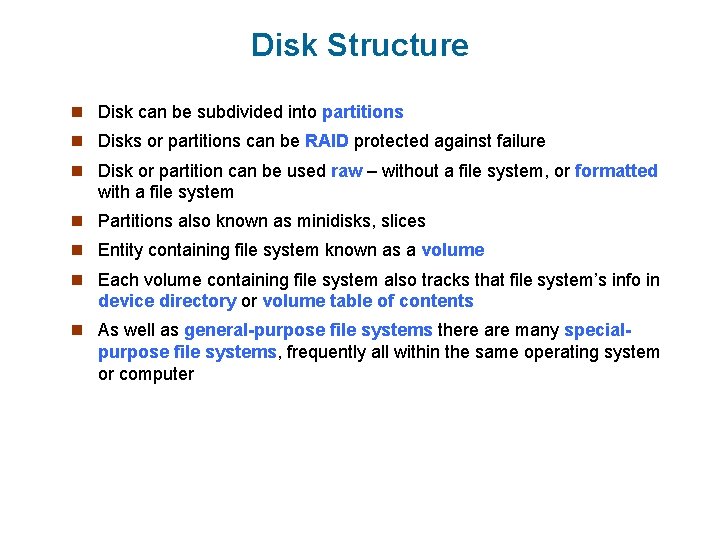

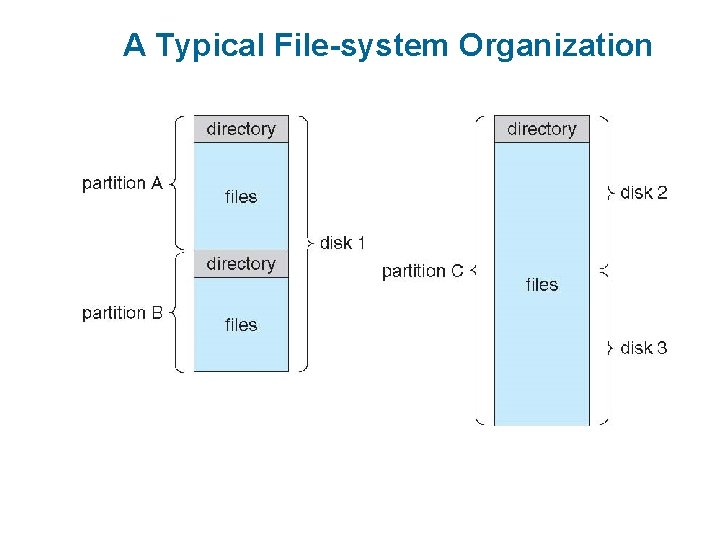

Disk Structure
- Disk can be subdivided into partitions
- Disks or partitions can be RAID protected against failure
- Disk or partition can be used raw (without a file system) or formatted with a file system
- Partitions are also known as minidisks or slices
- An entity containing a file system is known as a volume
- Each volume containing a file system also tracks that file system's info in a device directory or volume table of contents
- As well as general-purpose file systems there are many special-purpose file systems, frequently all within the same operating system or computer

A Typical File-system Organization

Operations Performed on Directory
- Search for a file
- Create a file
- Delete a file
- List a directory
- Rename a file
- Traverse the file system

Organize the Directory (Logically) to Obtain
- Efficiency – locating a file quickly
- Naming – convenient to users
  - Two users can have the same name for different files
  - The same file can have several different names
- Grouping – logical grouping of files by properties (e.g., all Java programs, all games, …)

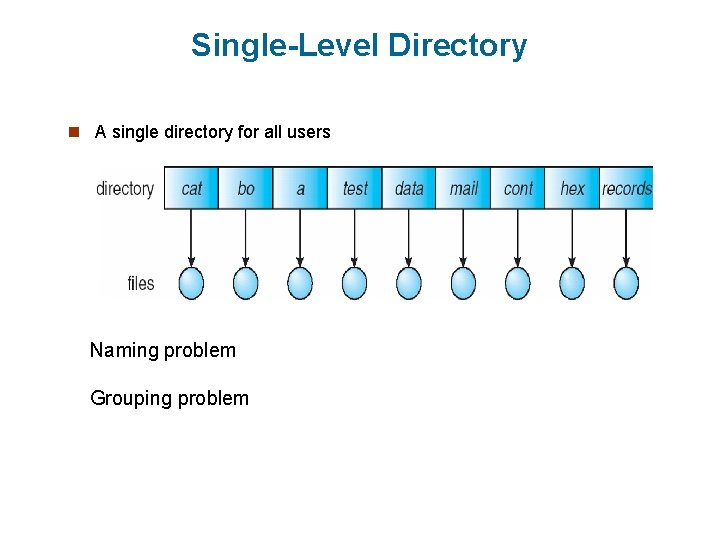

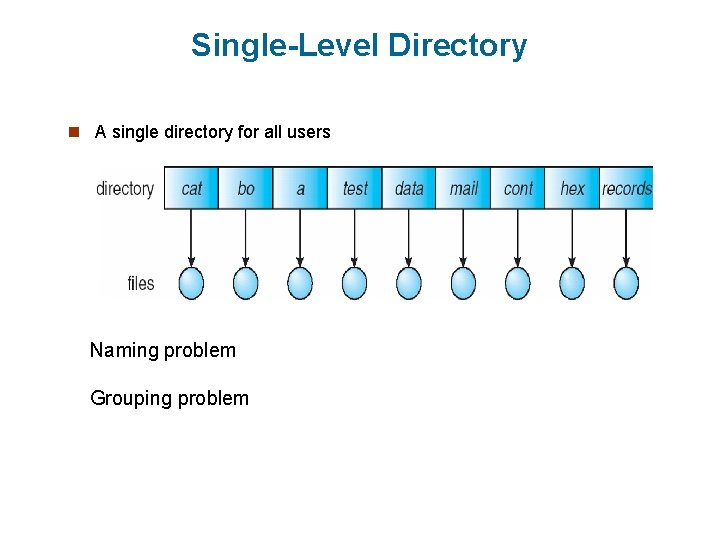

Single-Level Directory
- A single directory for all users
- Naming problem
- Grouping problem

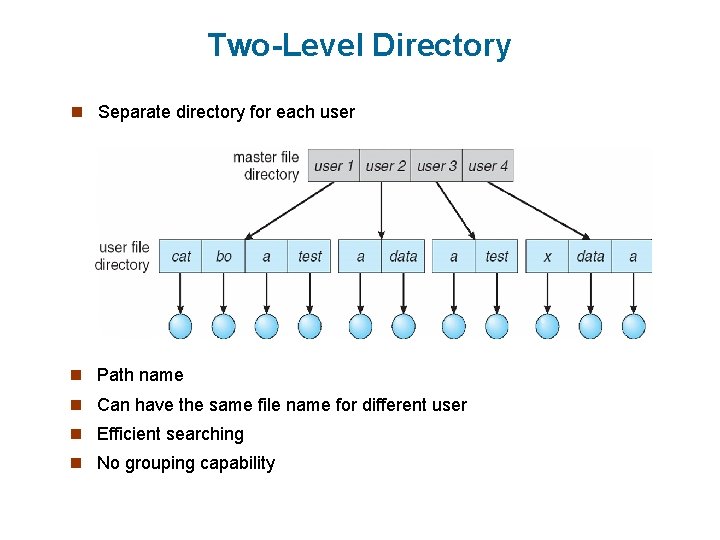

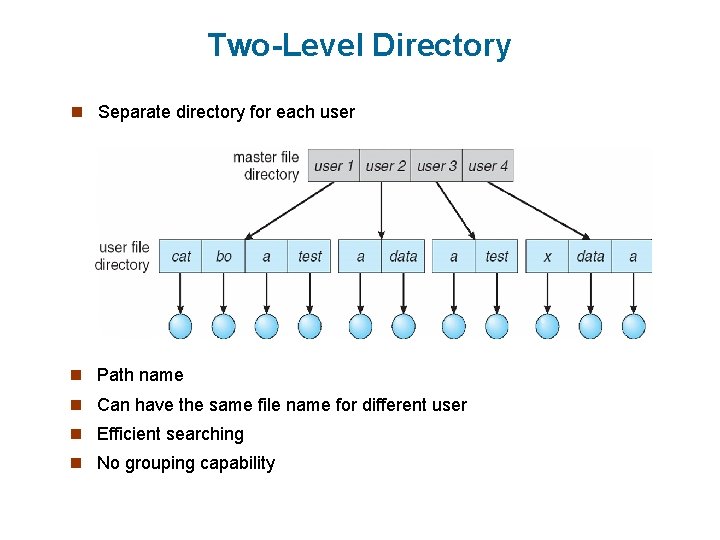

Two-Level Directory
- Separate directory for each user
- Path name
- Can have the same file name for different users
- Efficient searching
- No grouping capability

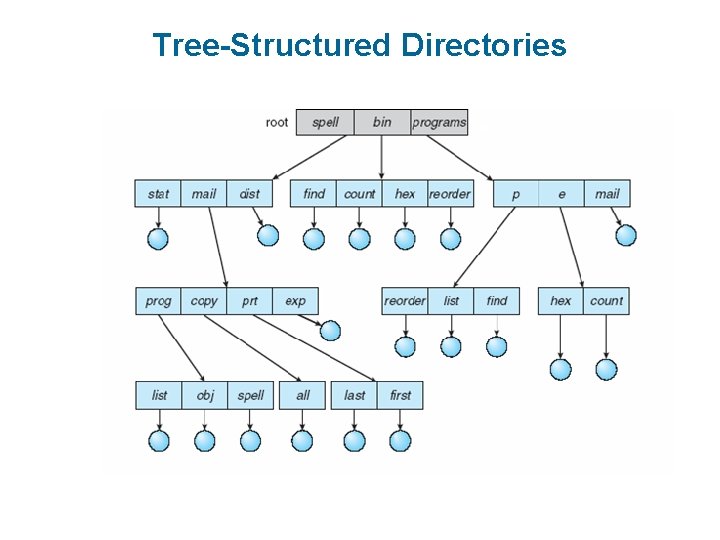

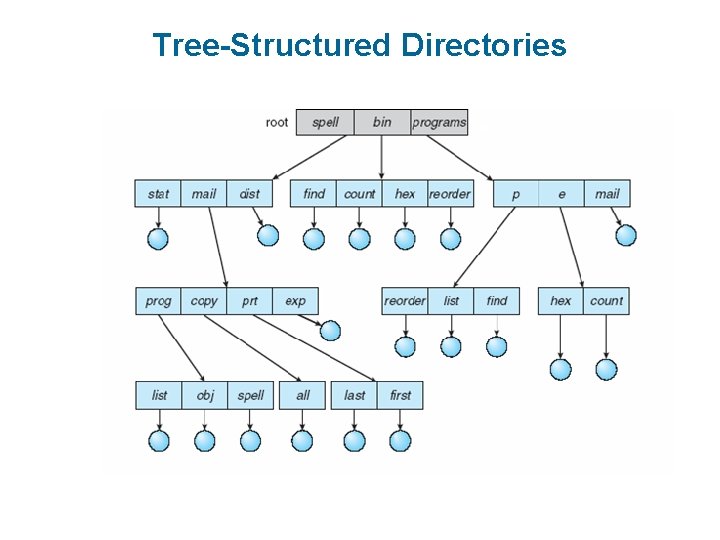

Tree-Structured Directories

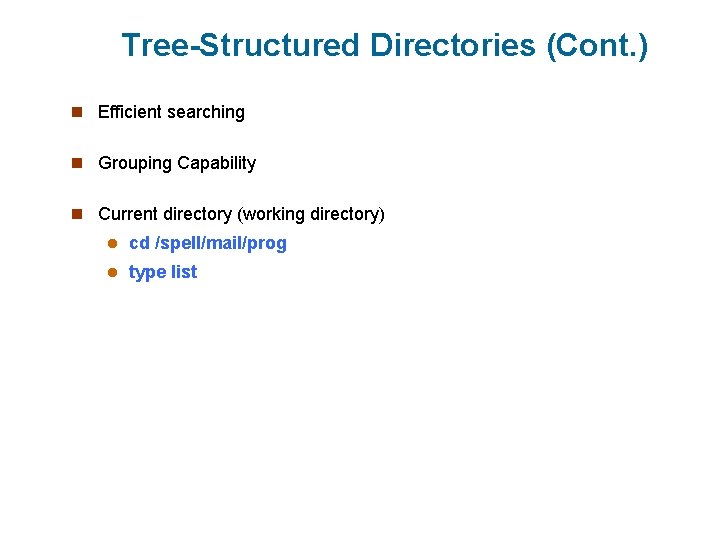

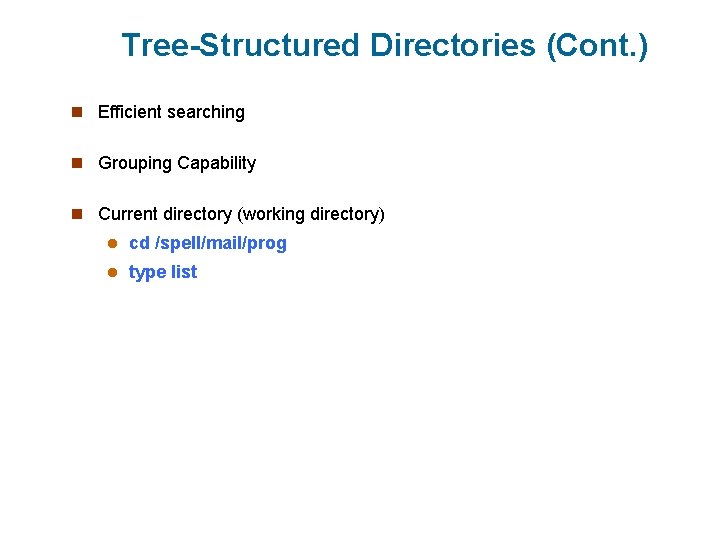

Tree-Structured Directories (Cont.)
- Efficient searching
- Grouping capability
- Current directory (working directory)
  - cd /spell/mail/prog
  - type list

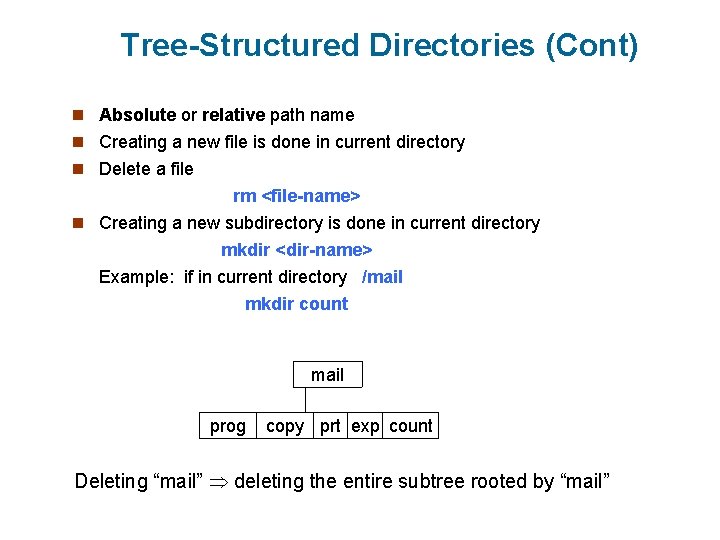

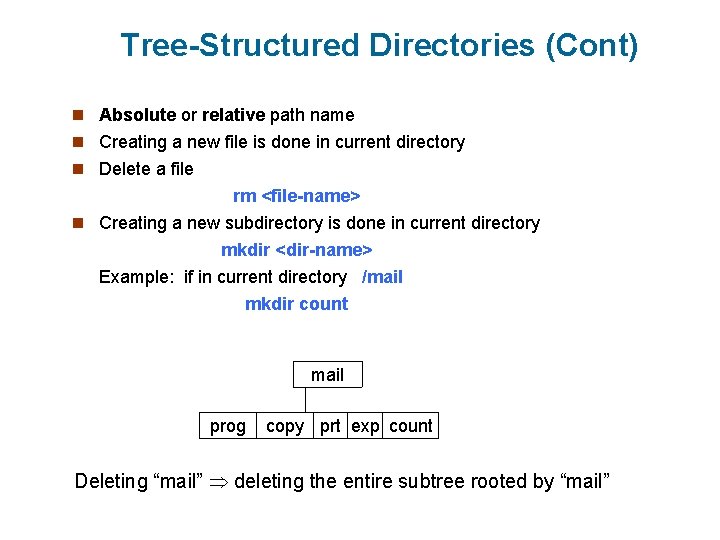

Tree-Structured Directories (Cont.)
- Absolute or relative path name
- Creating a new file is done in the current directory
- Delete a file: `rm <file-name>`
- Creating a new subdirectory is done in the current directory: `mkdir <dir-name>`
  - Example: if in current directory /mail, `mkdir count` creates /mail/count (figure: mail with entries prog, copy, prt, exp, count)
- Deleting "mail" ⇒ deleting the entire subtree rooted by "mail"
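A minimal in-memory sketch of the tree operations above: absolute paths start at the root, relative paths start at the current directory, `mkdir` creates its entry in the current directory, and removing a directory entry discards the whole subtree beneath it. The `DirTree` class is hypothetical and tracks directories only.

```java
import java.util.TreeMap;

// Hypothetical in-memory directory tree (files omitted for brevity).
class DirTree {
    static final class Dir {
        final Dir parent;
        final TreeMap<String, Dir> children = new TreeMap<>();
        Dir(Dir parent) { this.parent = parent; }
    }

    final Dir root = new Dir(null);
    Dir cwd = root; // current (working) directory

    // Resolve an absolute ("/a/b") or relative ("b", "../c") path; null if a component is missing.
    Dir resolve(String path) {
        Dir d = path.startsWith("/") ? root : cwd;
        for (String part : path.split("/")) {
            if (part.isEmpty() || part.equals(".")) continue;
            if (part.equals("..")) { d = (d.parent == null) ? d : d.parent; continue; }
            d = d.children.get(part);
            if (d == null) return null;
        }
        return d;
    }

    void cd(String path) {
        Dir d = resolve(path);
        if (d == null) throw new IllegalArgumentException("no such directory: " + path);
        cwd = d;
    }

    // New subdirectories are created in the current directory.
    void mkdir(String name) { cwd.children.putIfAbsent(name, new Dir(cwd)); }

    // Removing the entry drops the whole subtree, since nothing else references it.
    boolean rm(String name) { return cwd.children.remove(name) != null; }
}
```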

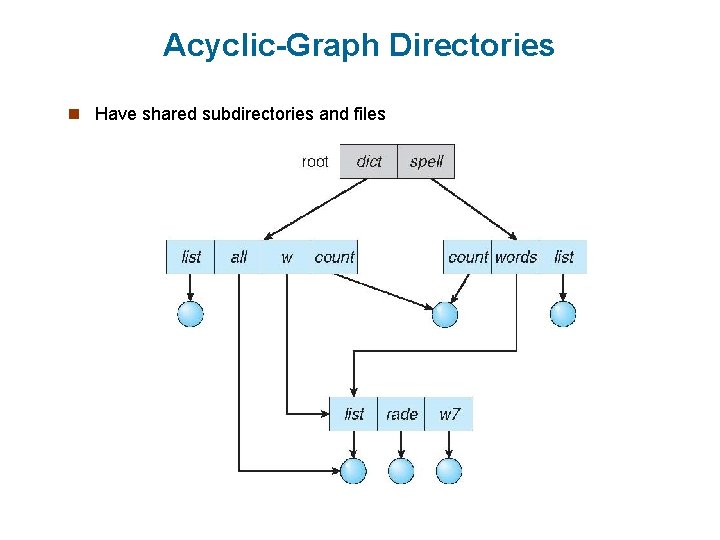

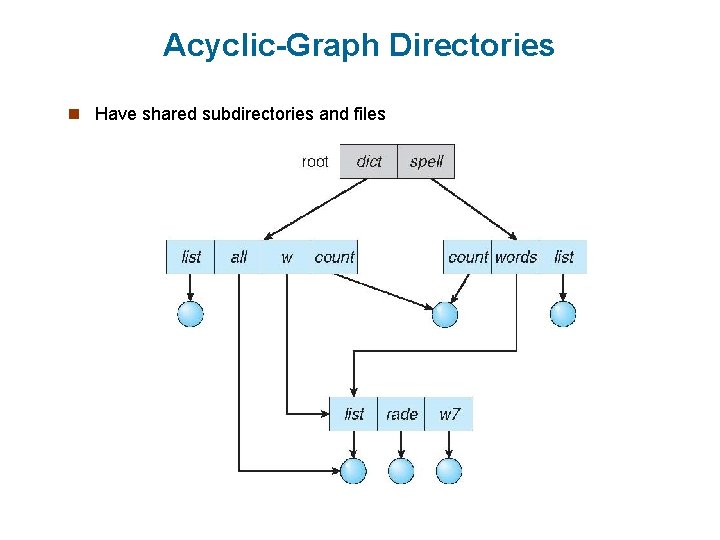

Acyclic-Graph Directories
- Have shared subdirectories and files

Acyclic-Graph Directories (Cont.)
- Two different names (aliasing)
- If dict deletes list ⇒ dangling pointer. Solutions:
  - Backpointers, so we can delete all pointers (variable-size records a problem)
  - Backpointers using a daisy-chain organization
  - Entry-hold-count solution
- New directory entry type
  - Link – another name (pointer) to an existing file
  - Resolve the link – follow pointer to locate the file
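The entry-hold-count solution can be sketched as a reference count per file: each directory entry that names the file adds one, removing a name subtracts one, and the file is freed only when the count reaches zero (the idea behind Unix hard-link counts). The `LinkCountDirectory` class and its flat name map are hypothetical simplifications.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical directory where several names may refer to one file object.
class LinkCountDirectory {
    static final class FileObj {
        int linkCount;   // number of directory entries naming this file
        boolean freed;   // set when the last name is removed
    }

    private final Map<String, FileObj> entries = new HashMap<>();

    void create(String name) {
        FileObj f = new FileObj();
        f.linkCount = 1;
        entries.put(name, f);
    }

    // Add another name (a link) for an existing file.
    void link(String existing, String alias) {
        FileObj f = entries.get(existing);
        if (f == null) throw new IllegalArgumentException("no such file: " + existing);
        if (entries.containsKey(alias)) throw new IllegalArgumentException("name in use: " + alias);
        f.linkCount++;
        entries.put(alias, f);
    }

    // Remove one name; free the file only when no names remain, so no dangling entry survives.
    void unlink(String name) {
        FileObj f = entries.remove(name);
        if (f != null && --f.linkCount == 0) f.freed = true;
    }

    FileObj lookup(String name) { return entries.get(name); }
}
```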

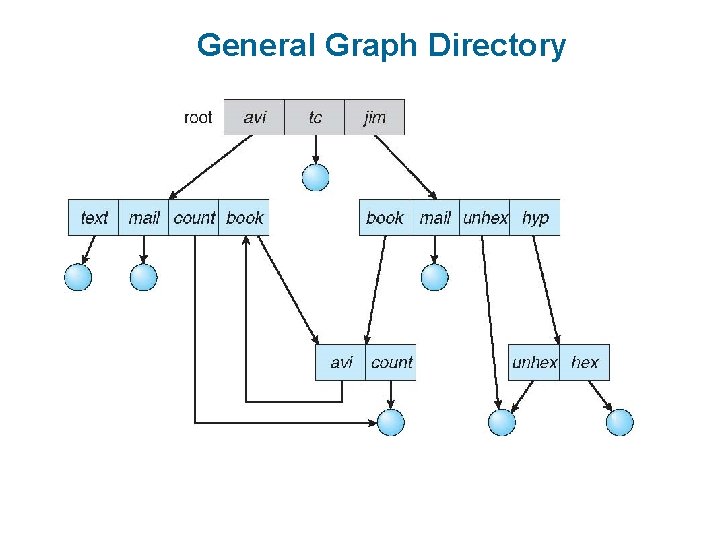

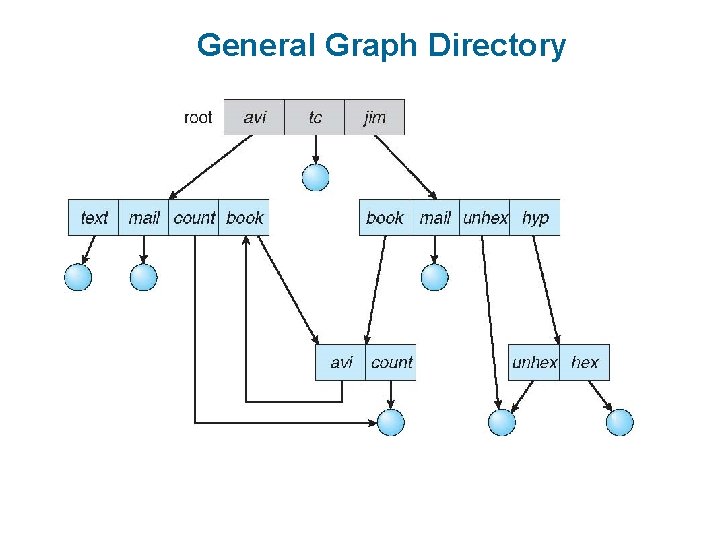

General Graph Directory

General Graph Directory (Cont.)
- How do we guarantee no cycles?
  - Allow only links to files, not subdirectories
  - Garbage collection
  - Every time a new link is added, use a cycle-detection algorithm to determine whether it is OK
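The cycle-detection option can be sketched with a depth-first search: adding an entry for `child` inside `parent` creates a cycle exactly when `parent` is already reachable from `child`. The `DirectoryGraph` class below is hypothetical and models only directories and the edges between them.

```java
import java.util.ArrayDeque;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical directory graph: an edge parent -> child means child is listed in parent.
class DirectoryGraph {
    private final Map<String, Set<String>> edges = new HashMap<>();

    // True if `to` can be reached from `from` by following directory entries (iterative DFS).
    private boolean reachable(String from, String to) {
        Deque<String> stack = new ArrayDeque<>();
        Set<String> seen = new HashSet<>();
        stack.push(from);
        while (!stack.isEmpty()) {
            String d = stack.pop();
            if (d.equals(to)) return true;
            if (seen.add(d)) {
                for (String c : edges.getOrDefault(d, Collections.emptySet())) stack.push(c);
            }
        }
        return false;
    }

    // Refuse a link that would close a cycle; otherwise record it.
    boolean addLink(String parent, String child) {
        if (reachable(child, parent)) return false;
        edges.computeIfAbsent(parent, k -> new HashSet<>()).add(child);
        return true;
    }
}
```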

File System Implementation
- File-System Structure
- File-System Implementation
- Directory Implementation
- Allocation Methods

File-System Structure
- File structure
  - Logical storage unit
  - Collection of related information
- File system resides on secondary storage (disks)
  - Provides user interface to storage, mapping logical to physical
  - Provides efficient and convenient access to disk by allowing data to be stored, located, and retrieved easily
- Disk provides in-place rewrite and random access
  - I/O transfers performed in blocks of sectors (usually 512 bytes)
- File control block – storage structure consisting of information about a file
- Device driver controls the physical device
- File system organized into layers

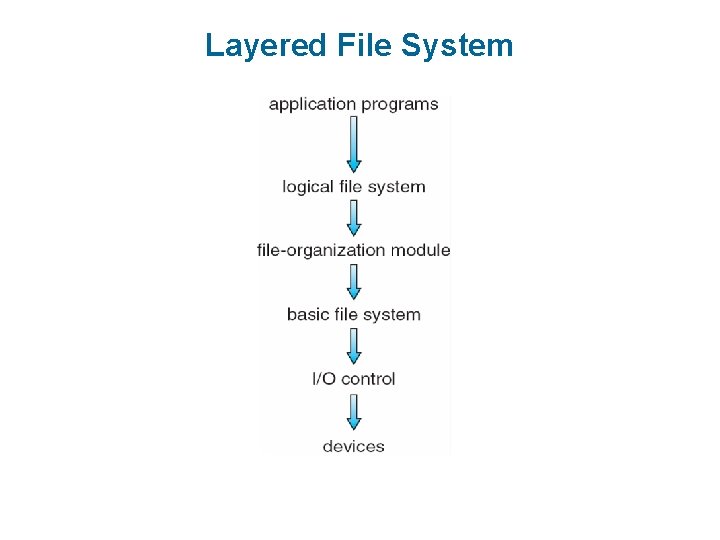

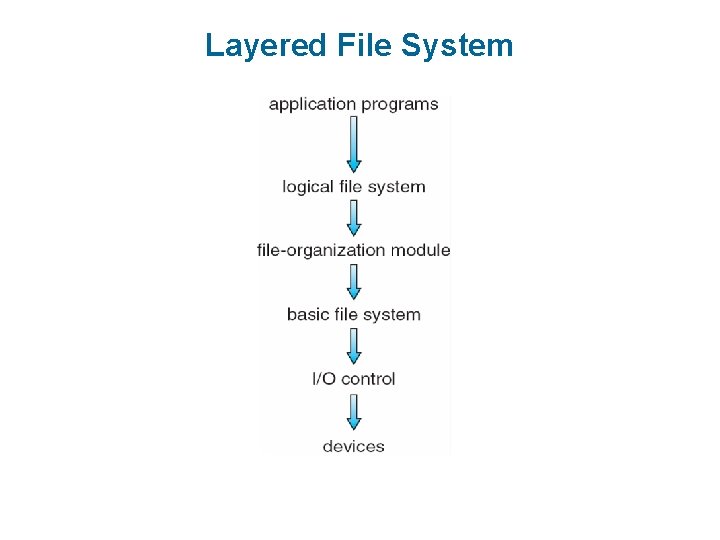

Layered File System

File System Layers
- Device drivers manage I/O devices at the I/O control layer
  - Given commands like "read drive 1, cylinder 72, track 2, sector 10, into memory location 1060", outputs low-level hardware-specific commands to the hardware controller
- Basic file system, given a command like "retrieve block 123", translates it for the device driver
- Also manages memory buffers and caches (allocation, freeing, replacement)
  - Buffers hold data in transit
  - Caches hold frequently used data
- File organization module understands files, logical addresses, and physical blocks
  - Translates logical block # to physical block #
  - Manages free space, disk allocation

File System Layers (Cont.)
- Logical file system manages metadata information
  - Translates file name into file number, file handle, location by maintaining file control blocks (inodes in Unix)
  - Directory management
  - Protection
- Layering is useful for reducing complexity and redundancy, but adds overhead and can decrease performance
- Logical layers can be implemented by any coding method according to the OS designer
- Many file systems, sometimes many within an operating system
  - Each with its own format (CD-ROM is ISO 9660; Unix has UFS, FFS; Windows has FAT, FAT32, NTFS as well as floppy, CD, DVD, Blu-ray; Linux has more than 40 types, with extended file system ext2 and ext3 leading; plus distributed file systems, etc.)
  - New ones still arriving – ZFS, GoogleFS, Oracle ASM, FUSE

File-System Implementation
- We have system calls at the API level, but how do we implement their functions?
  - On-disk and in-memory structures
- Boot control block contains info needed by system to boot OS from that volume
  - Needed if volume contains OS, usually first block of volume
- Volume control block (superblock, master file table) contains volume details
  - Total # of blocks, # of free blocks, block size, free-block pointers or array
- Directory structure organizes the files
  - Names and inode numbers, master file table
- Per-file File Control Block (FCB) contains many details about the file
  - Inode number, permissions, size, dates
  - NTFS stores this info in the master file table using relational DB structures

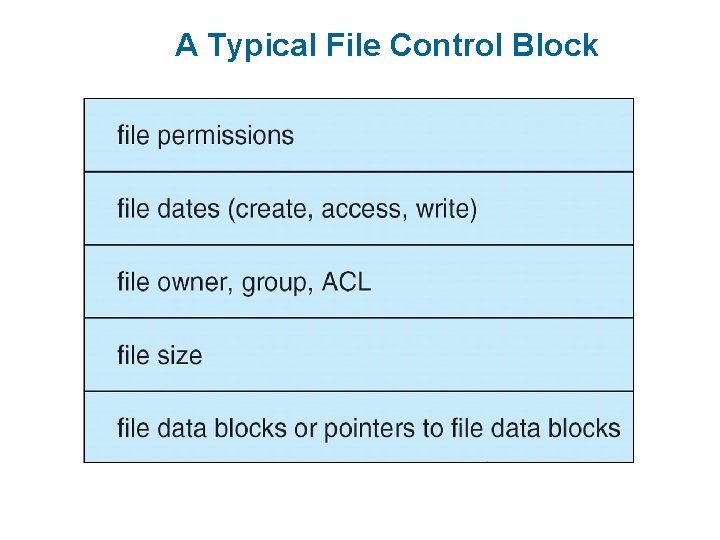

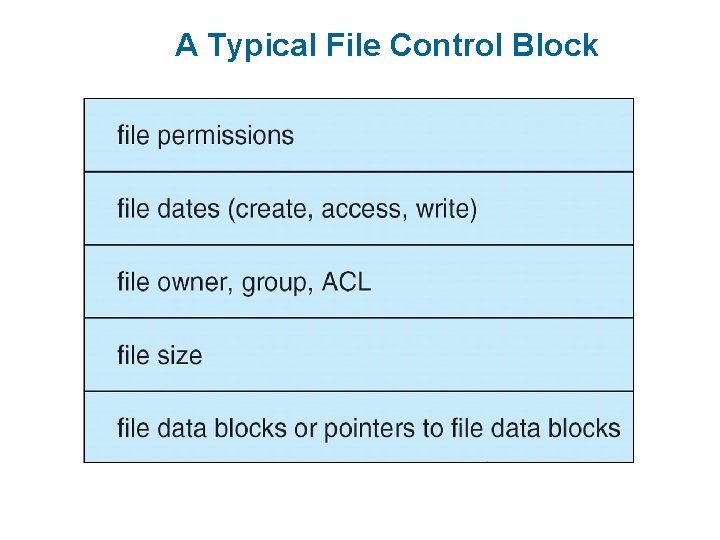

A Typical File Control Block

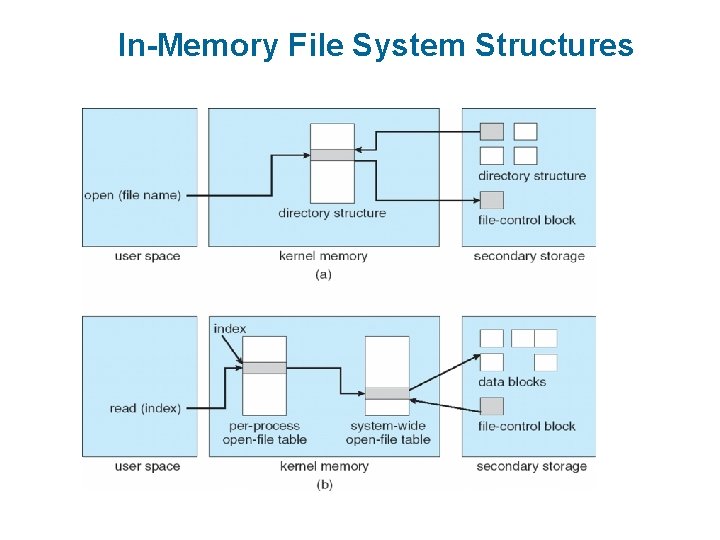

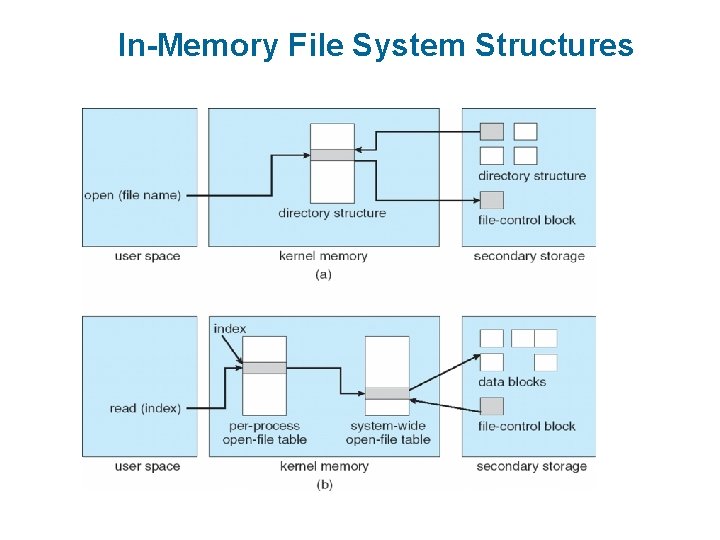

In-Memory File System Structures
- Mount table storing file system mounts, mount points, file system types
- The following figure illustrates the necessary file system structures provided by the operating system
- Figure 12-3(a) refers to opening a file
- Figure 12-3(b) refers to reading a file
- Plus buffers hold data blocks from secondary storage
- Open returns a file handle for subsequent use
- Data from read eventually copied to specified user process memory address

In-Memory File System Structures

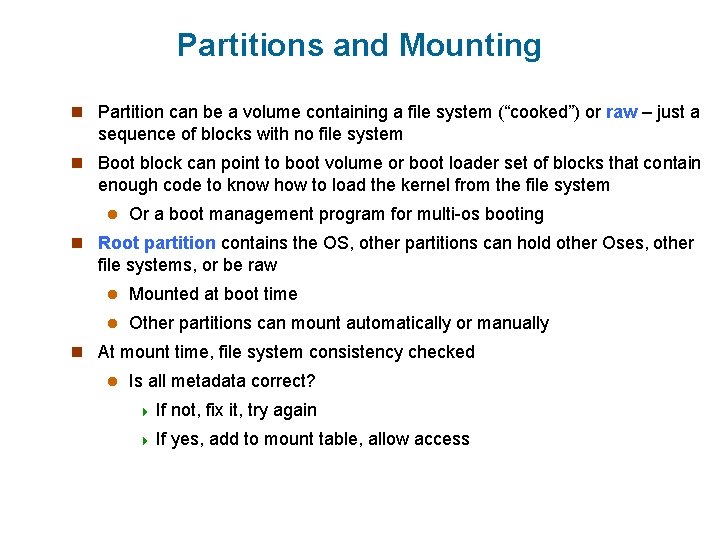

Partitions and Mounting
- Partition can be a volume containing a file system ("cooked") or raw – just a sequence of blocks with no file system
- Boot block can point to boot volume or boot loader set of blocks that contain enough code to know how to load the kernel from the file system
  - Or a boot management program for multi-OS booting
- Root partition contains the OS; other partitions can hold other OSes, other file systems, or be raw
  - Mounted at boot time
  - Other partitions can mount automatically or manually
- At mount time, file system consistency checked
  - Is all metadata correct?
    - If not, fix it, try again
    - If yes, add to mount table, allow access

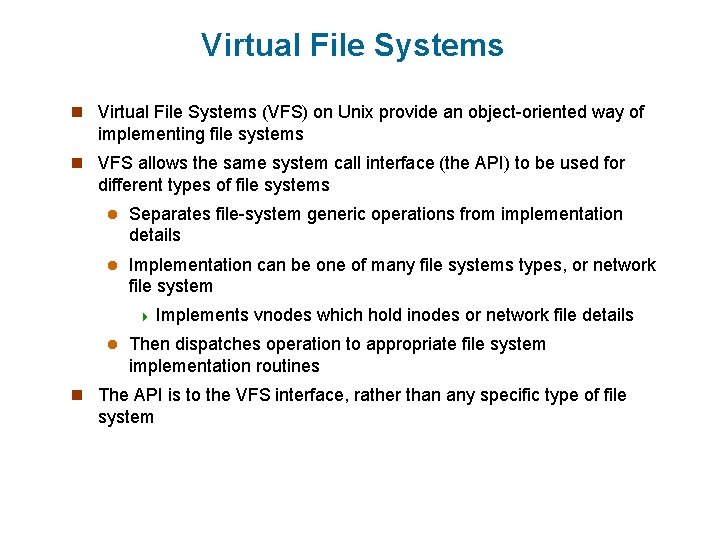

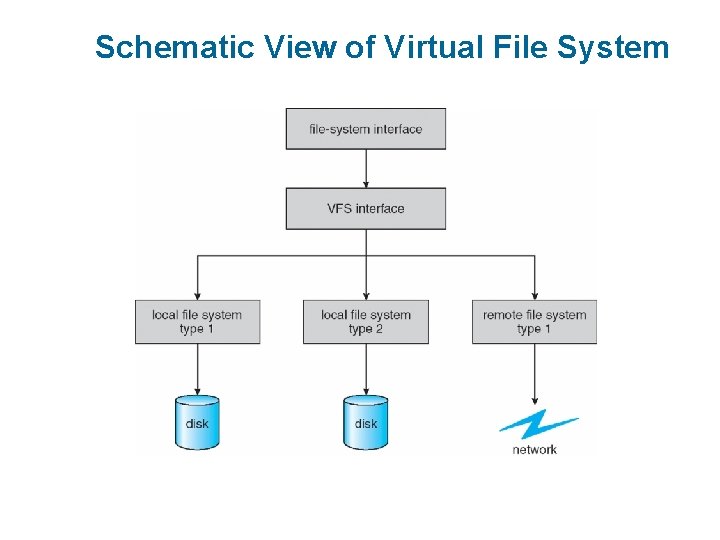

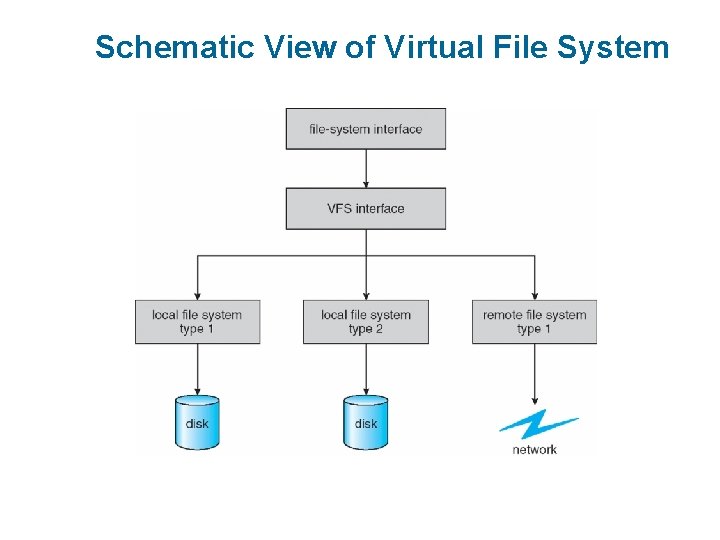

Virtual File Systems
- Virtual File Systems (VFS) on Unix provide an object-oriented way of implementing file systems
- VFS allows the same system call interface (the API) to be used for different types of file systems
  - Separates file-system generic operations from implementation details
  - Implementation can be one of many file system types, or a network file system
    - Implements vnodes, which hold inodes or network file details
  - Then dispatches operation to appropriate file system implementation routines
- The API is to the VFS interface, rather than any specific type of file system

Schematic View of Virtual File System

Virtual File System Implementation
- For example, Linux has four object types:
  - inode, file, superblock, dentry
- VFS defines a set of operations on the objects that must be implemented
  - Every object has a pointer to a function table
    - Function table has addresses of routines to implement that function on that object
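In an object-oriented language the function table becomes an interface: each file-system type implements it, and the VFS layer calls through it without knowing which implementation is behind the call. This sketch assumes hypothetical `VfsFileOps`, `LocalFs`, `NetworkFs`, and `Vfs` types with a single `read` operation and a simplified longest-prefix mount lookup; Linux itself does this in C with structures of function pointers.

```java
import java.util.HashMap;
import java.util.Map;

// The "function table": operations every file-system type must provide (hypothetical, one op).
interface VfsFileOps {
    String read(String path);
}

class LocalFs implements VfsFileOps {
    public String read(String path) { return "local:" + path; }
}

class NetworkFs implements VfsFileOps {
    public String read(String path) { return "nfs:" + path; }
}

// Generic layer: picks the mounted implementation and dispatches through the interface.
class Vfs {
    private final Map<String, VfsFileOps> mounts = new HashMap<>(); // mount point -> implementation

    void mount(String mountPoint, VfsFileOps fs) { mounts.put(mountPoint, fs); }

    // Longest mount point that is a string prefix of the path wins
    // (a simplification: it does not check path-component boundaries).
    String read(String path) {
        String best = null;
        for (String mp : mounts.keySet()) {
            if (path.startsWith(mp) && (best == null || mp.length() > best.length())) best = mp;
        }
        if (best == null) throw new IllegalArgumentException("not mounted: " + path);
        return mounts.get(best).read(path);
    }
}
```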

Directory Implementation
- Linear list of file names with pointer to the data blocks
  - Simple to program
  - Time-consuming to execute
    - Linear search time
    - Could keep ordered alphabetically via linked list or use B+ tree
- Hash Table – linear list with hash data structure
  - Decreases directory search time
  - Collisions – situations where two file names hash to the same location
  - Only good if entries are fixed size, or use chained-overflow method
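The difference between the two directory layouts shows up in lookup cost. Below, a linear list scans entries one by one, while a hash table goes straight to the bucket for the name; Java's `HashMap` resolves collisions internally with per-bucket chains, which corresponds to the chained-overflow method. The entry fields (name and first block) and class names are assumptions for illustration.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class DirEntryDemo {
    // Hypothetical directory entry: file name and number of its first data block.
    static final class Entry {
        final String name;
        final int firstBlock;
        Entry(String name, int firstBlock) { this.name = name; this.firstBlock = firstBlock; }
    }

    // Linear list: simple, but each lookup may scan every entry (O(n)).
    static final class LinearDirectory {
        private final List<Entry> entries = new ArrayList<>();
        void add(String name, int block) { entries.add(new Entry(name, block)); }
        Entry find(String name) {
            for (Entry e : entries) if (e.name.equals(name)) return e;
            return null;
        }
    }

    // Hash table keyed by name: expected O(1) lookups.
    static final class HashedDirectory {
        private final Map<String, Entry> index = new HashMap<>();
        void add(String name, int block) { index.put(name, new Entry(name, block)); }
        Entry find(String name) { return index.get(name); }
    }
}
```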

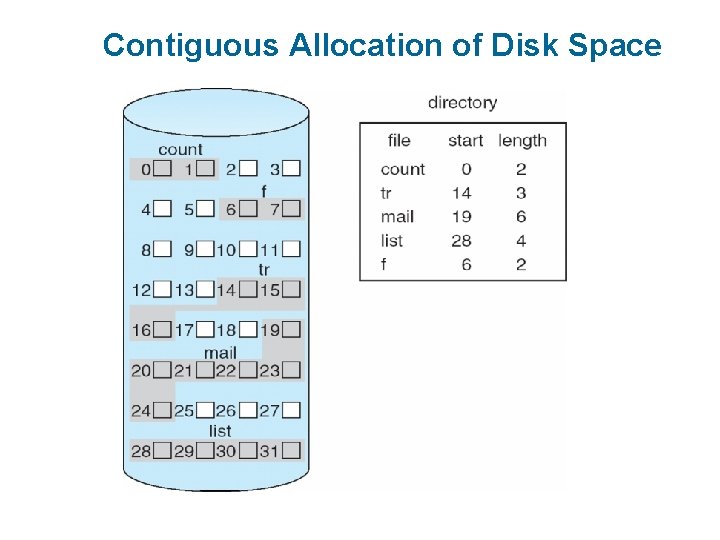

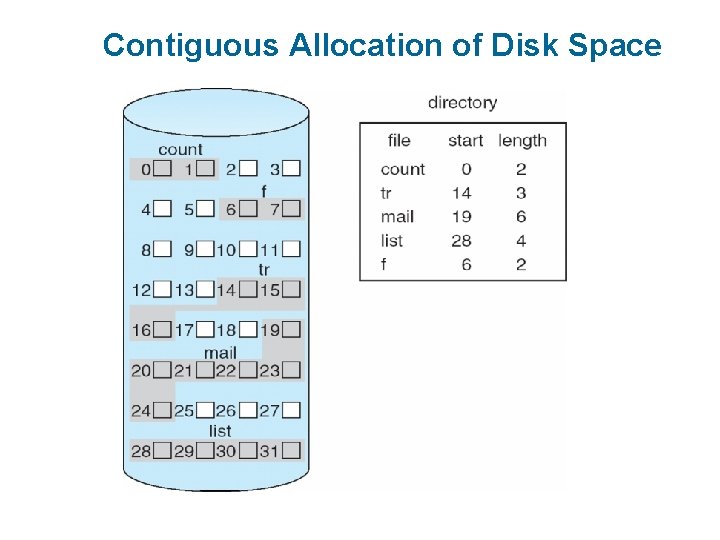

Allocation Methods - Contiguous
- An allocation method refers to how disk blocks are allocated for files
- Contiguous allocation – each file occupies a set of contiguous blocks
  - Best performance in most cases
  - Simple – only starting location (block #) and length (number of blocks) are required
  - Problems include finding space for file, knowing file size, external fragmentation, need for compaction off-line (downtime) or online

Contiguous Allocation
- Mapping from logical to physical: $Q = \lfloor LA / 512 \rfloor$, $R = LA \bmod 512$
  - Block to be accessed = $Q$ + starting address
  - Displacement into block = $R$
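The mapping is integer division and remainder. A minimal sketch, assuming 512-byte blocks as above and a hypothetical `ContiguousMapping` class that also rejects addresses past the file's allocated length:

```java
// Contiguous allocation: logical address LA -> {physical block, displacement}.
class ContiguousMapping {
    static final int BLOCK_SIZE = 512;

    static long[] map(long start, long lengthInBlocks, long la) {
        long q = la / BLOCK_SIZE;   // Q: block number within the file
        long r = la % BLOCK_SIZE;   // R: displacement into that block
        if (la < 0 || q >= lengthInBlocks) {
            throw new IllegalArgumentException("address outside the file");
        }
        return new long[] {start + q, r}; // block to access = Q + starting address
    }
}
```

For a file starting at block 100, logical address 1300 gives $Q = 2$, $R = 276$, so the access goes to block 102 at offset 276.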

Contiguous Allocation of Disk Space

Extent-Based Systems
- Many newer file systems (e.g., Veritas File System) use a modified contiguous allocation scheme
- Extent-based file systems allocate disk blocks in extents
- An extent is a contiguous set of disk blocks
  - Extents are allocated for file allocation
  - A file consists of one or more extents
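With extents, mapping a logical block means walking the file's extent list until the extent containing that block is found. The sketch assumes each extent is recorded as (first physical block, length in blocks), in file order; the class and layout are hypothetical.

```java
// Hypothetical extent list: each entry is {first physical block, length in blocks}, in file order.
class ExtentMap {
    private final long[][] extents;

    ExtentMap(long[][] extents) { this.extents = extents; }

    long physicalBlock(long logicalBlock) {
        if (logicalBlock < 0) throw new IllegalArgumentException("negative block");
        long remaining = logicalBlock;
        for (long[] e : extents) {
            long start = e[0], length = e[1];
            if (remaining < length) return start + remaining; // falls inside this extent
            remaining -= length;                              // skip past this extent
        }
        throw new IllegalArgumentException("logical block beyond end of file");
    }
}
```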

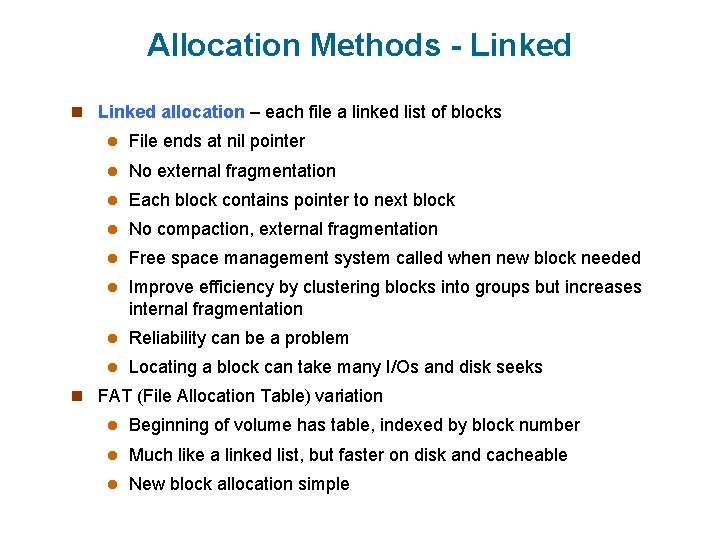

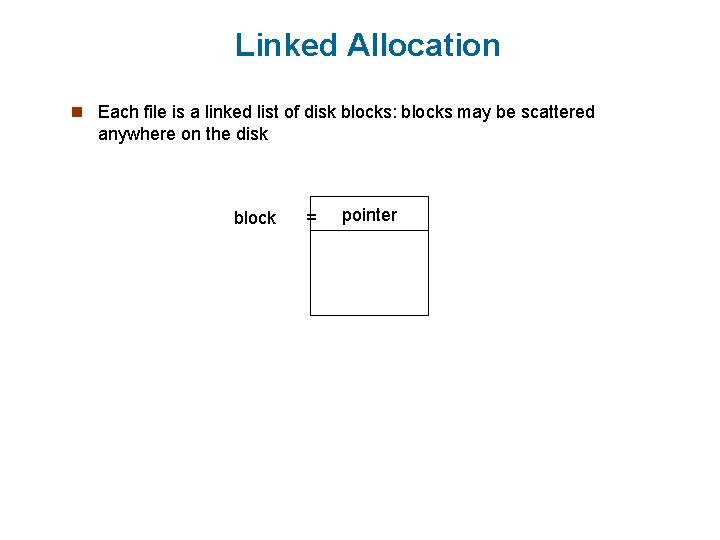

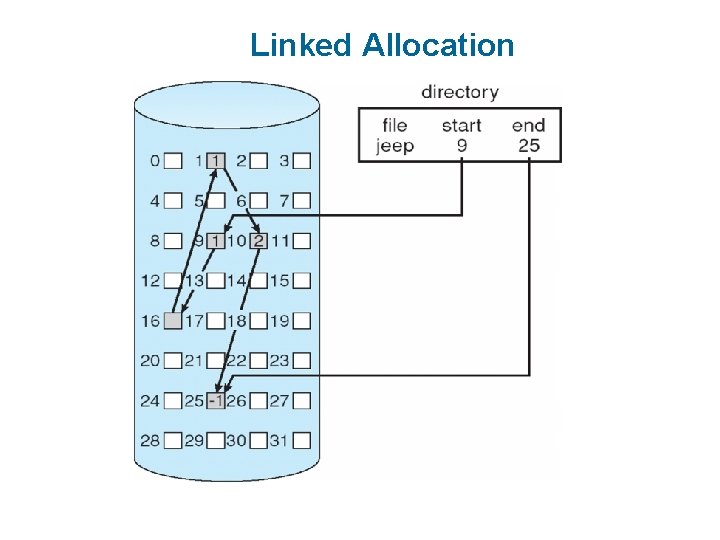

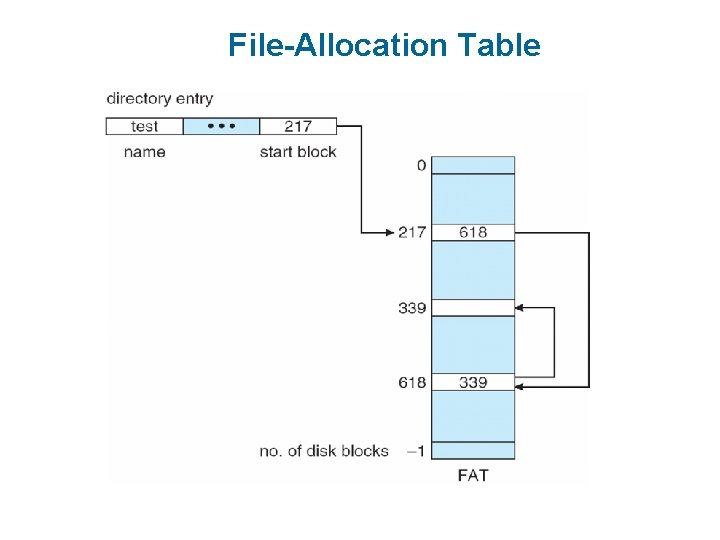

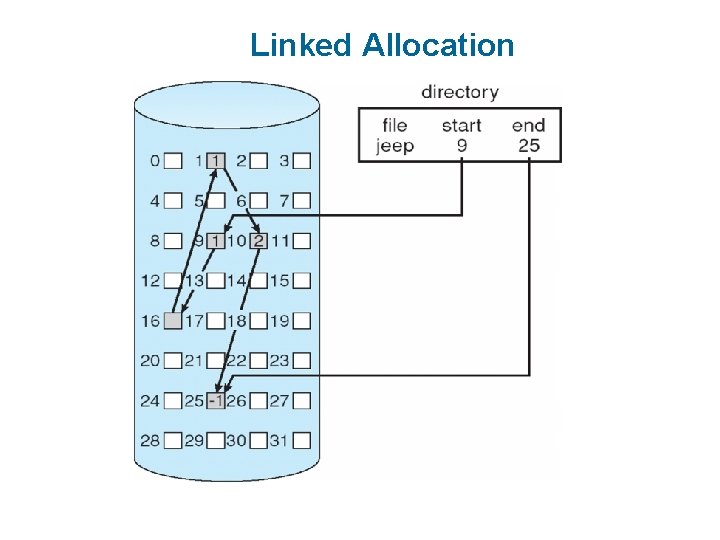

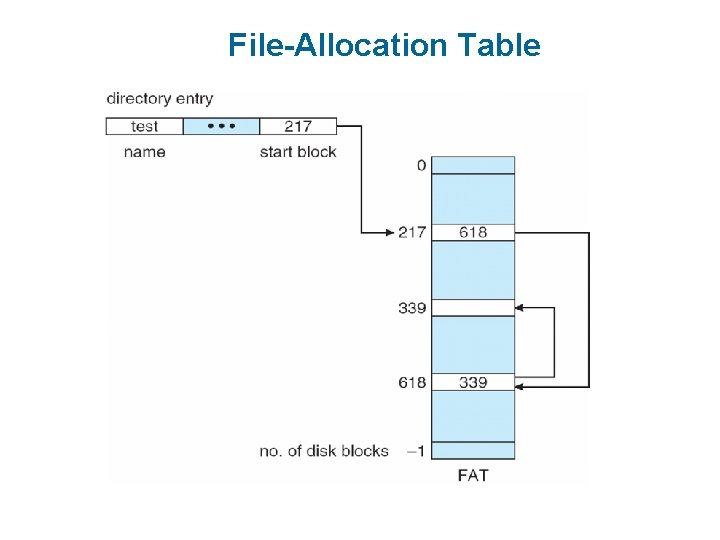

Allocation Methods - Linked
- Linked allocation – each file is a linked list of blocks
  - File ends at nil pointer
  - No external fragmentation
  - Each block contains pointer to next block
  - No compaction needed
  - Free space management system called when new block needed
  - Improve efficiency by clustering blocks into groups, but this increases internal fragmentation
  - Reliability can be a problem
  - Locating a block can take many I/Os and disk seeks
- FAT (File Allocation Table) variation
  - Beginning of volume has table, indexed by block number
  - Much like a linked list, but faster on disk and cacheable
  - New block allocation simple

Linked Allocation
- Each file is a linked list of disk blocks: blocks may be scattered anywhere on the disk (figure: each block holds data plus a pointer to the next block)

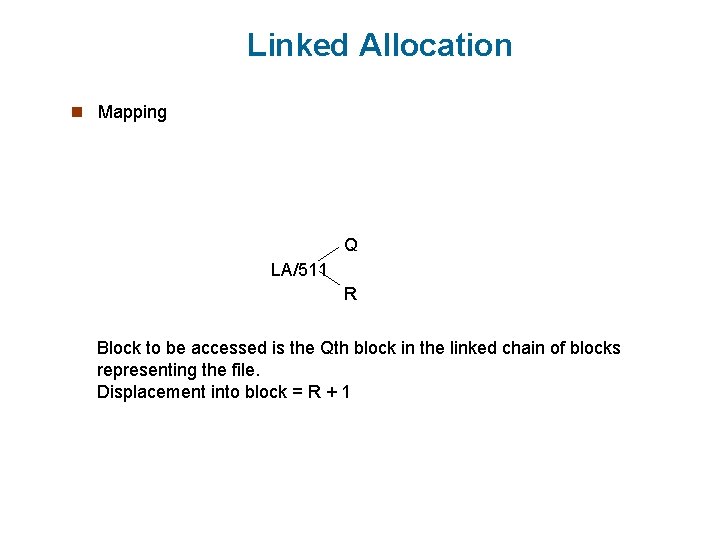

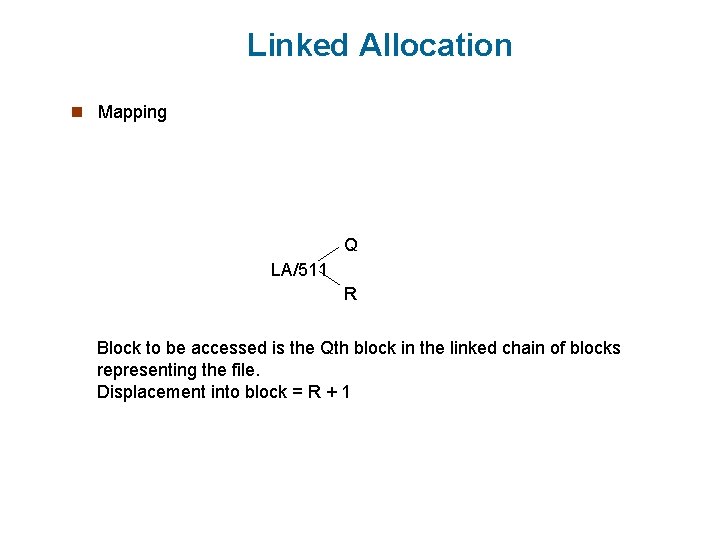

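Linked Allocation
- Mapping (each 512-word block holds a pointer plus 511 words of data): $Q = \lfloor LA / 511 \rfloor$, $R = LA \bmod 511$
  - Block to be accessed is the $Q$th block in the linked chain of blocks representing the file
  - Displacement into block = $R + 1$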
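A sketch of the mapping, assuming 512-word blocks whose first word holds the pointer to the next block (hence 511 data words and the $R + 1$ displacement). The `next` array is a hypothetical stand-in for the pointers stored in the blocks; in a real system each hop is a disk read, which is why locating a block can take many I/Os.

```java
// Linked allocation: follow the chain Q times, then skip the pointer word.
class LinkedMapping {
    static final int DATA_WORDS = 511; // 512-word block minus one pointer word

    // next[b] is the block after b; -1 marks the end of the file.
    // Returns {physical block, displacement within block}.
    static int[] map(int firstBlock, int[] next, int la) {
        if (la < 0) throw new IllegalArgumentException("negative address");
        int q = la / DATA_WORDS;
        int r = la % DATA_WORDS;
        int block = firstBlock;
        for (int i = 0; i < q; i++) {
            block = next[block];          // one disk read per hop in a real system
            if (block == -1) throw new IllegalArgumentException("address beyond end of file");
        }
        return new int[] {block, r + 1};  // +1 skips the pointer word at the start of the block
    }
}
```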

Linked Allocation

File-Allocation Table
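With a FAT, the chain is kept in a table indexed by block number rather than inside the data blocks, so it can be followed (and cached) without reading the data. The sketch assumes `0` marks a free entry and `-1` marks end of file, treats block 0 as reserved, and uses the illustrative chain 217 → 618 → 339 in the test; these are assumptions, not the on-disk FAT encoding.

```java
import java.util.ArrayList;
import java.util.List;

class FatDemo {
    static final int FREE = 0;          // assumed marker for an unused block
    static final int END_OF_FILE = -1;  // assumed end-of-chain marker

    // Follow the chain from the directory entry's start block.
    static List<Integer> chain(int[] fat, int startBlock) {
        List<Integer> blocks = new ArrayList<>();
        for (int b = startBlock; b != END_OF_FILE; b = fat[b]) blocks.add(b);
        return blocks;
    }

    // New block allocation: take the first free entry and link it after the file's last block.
    static int append(int[] fat, int startBlock) {
        int last = startBlock;
        while (fat[last] != END_OF_FILE) last = fat[last];
        for (int b = 1; b < fat.length; b++) { // block 0 treated as reserved
            if (fat[b] == FREE) {
                fat[last] = b;
                fat[b] = END_OF_FILE;
                return b;
            }
        }
        throw new IllegalStateException("no free blocks");
    }
}
```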

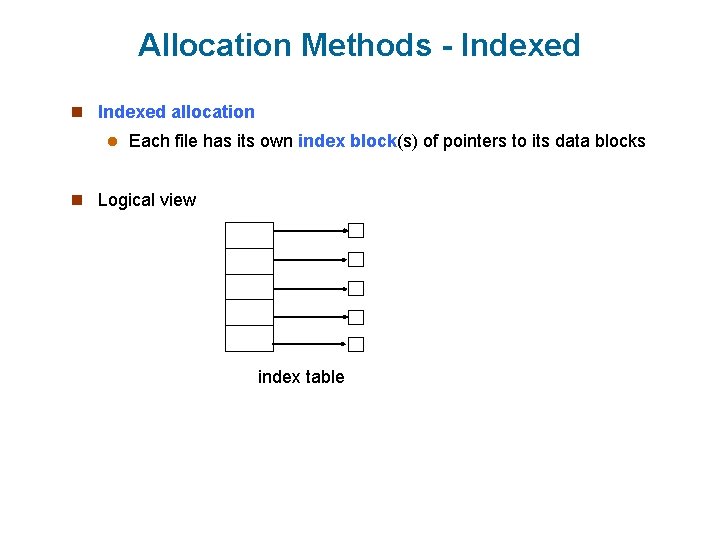

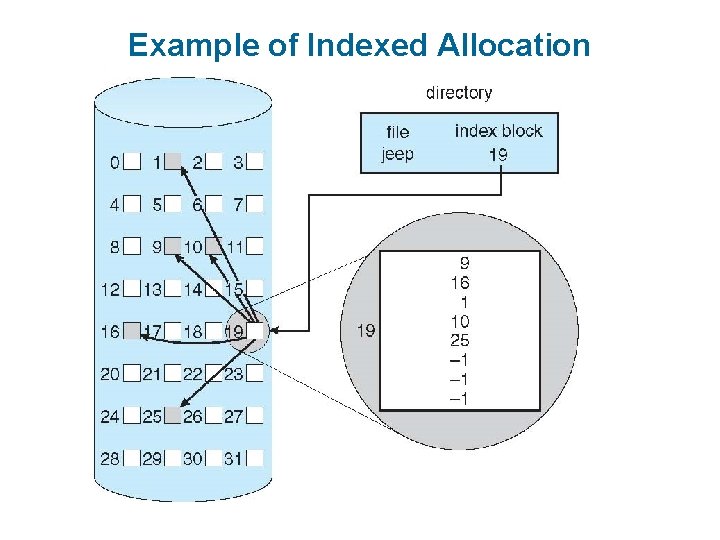

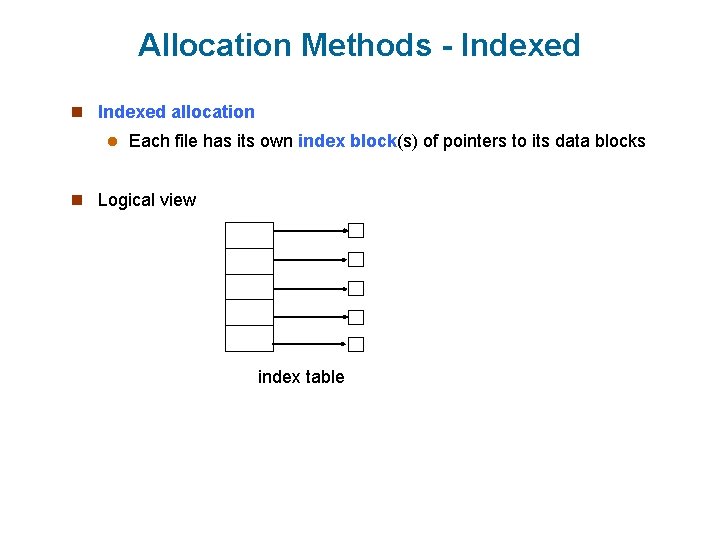

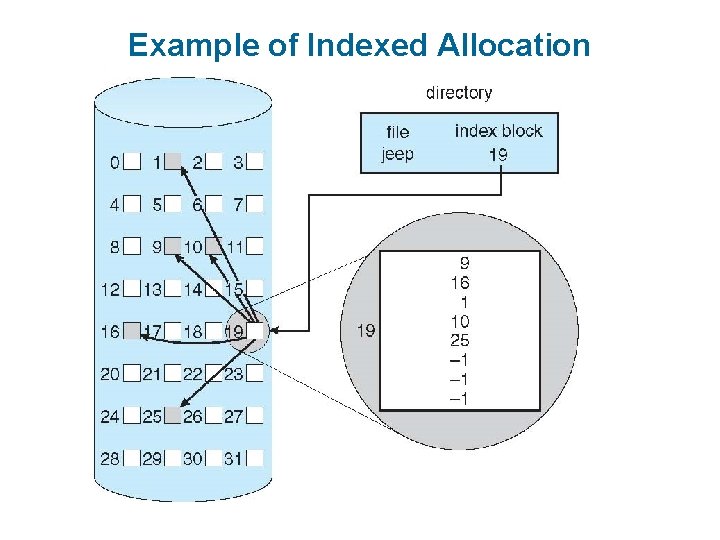

Allocation Methods - Indexed
- Indexed allocation
  - Each file has its own index block(s) of pointers to its data blocks
- Logical view (figure: an index table pointing to the file's data blocks)

Example of Indexed Allocation

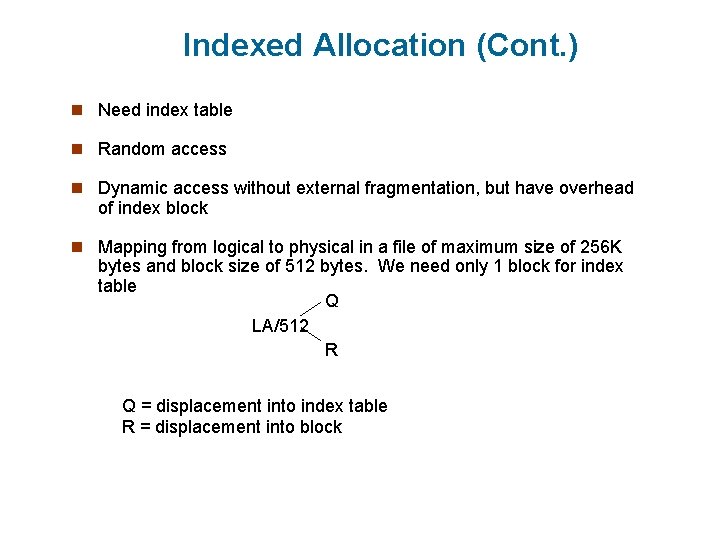

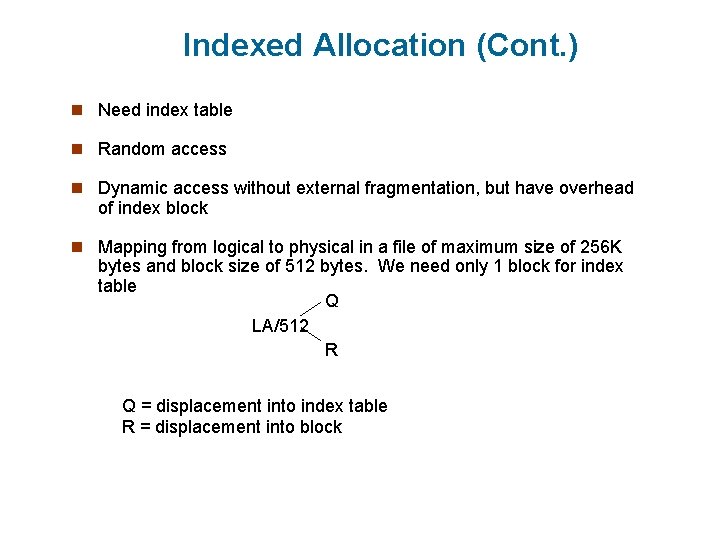

Indexed Allocation (Cont.)
- Need index table
- Random access
- Dynamic access without external fragmentation, but have overhead of index block
- Mapping from logical to physical in a file of maximum size of 256K words and block size of 512 words. We need only 1 block for the index table (512 entries × 512 words = 256K words): $Q = \lfloor LA / 512 \rfloor$, $R = LA \bmod 512$
  - $Q$ = displacement into index table
  - $R$ = displacement into block
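For the single index block, $Q$ selects the index entry and $R$ the offset within the data block. A minimal sketch, assuming 512-word blocks and a 512-entry index block (hypothetical `IndexedMapping` class):

```java
// Single-level indexed allocation: indexBlock[q] holds the physical block of the file's q-th data block.
class IndexedMapping {
    static final int BLOCK_WORDS = 512;

    static long[] map(long[] indexBlock, long la) {
        if (la < 0) throw new IllegalArgumentException("negative address");
        long q = la / BLOCK_WORDS;   // Q: displacement into the index table
        long r = la % BLOCK_WORDS;   // R: displacement into the data block
        if (q >= indexBlock.length) throw new IllegalArgumentException("beyond maximum file size");
        return new long[] {indexBlock[(int) q], r};
    }
}
```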

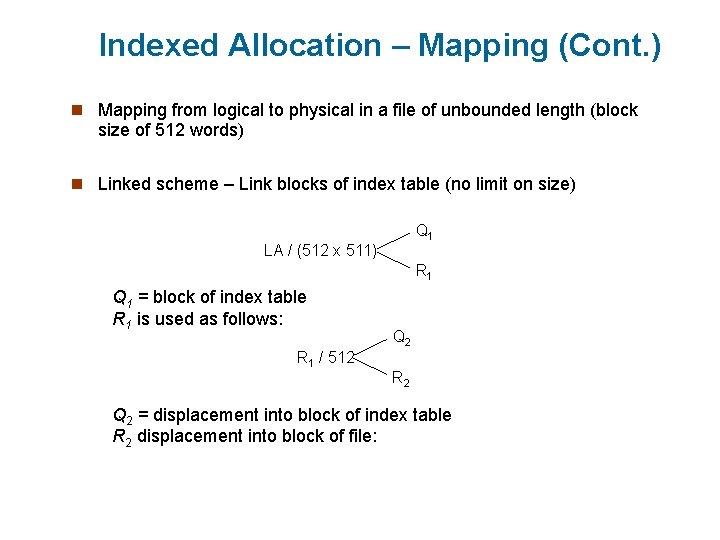

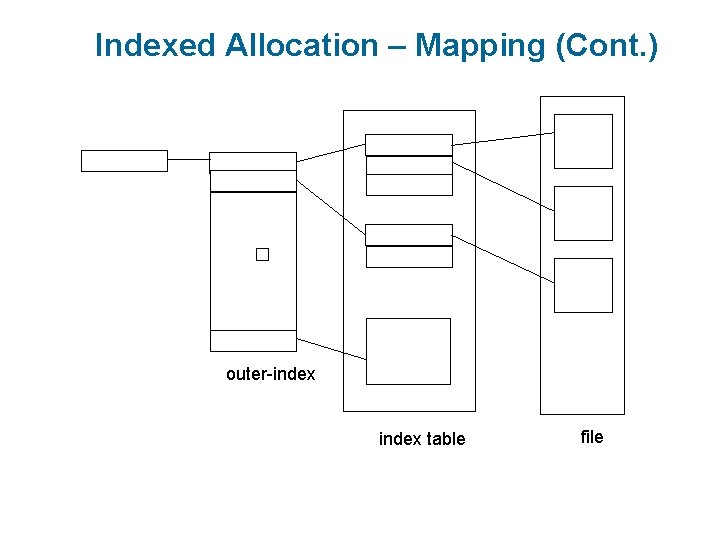

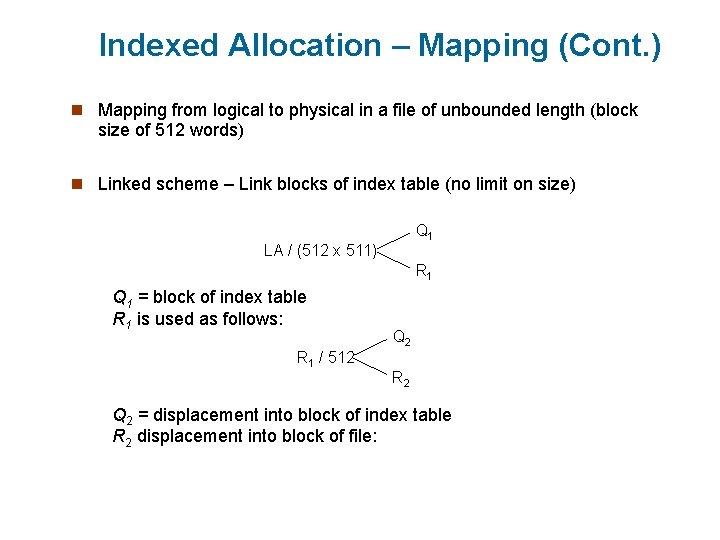

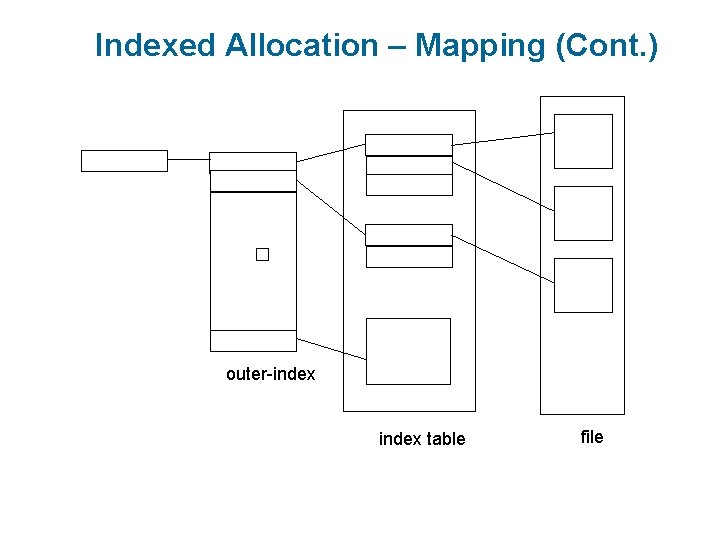

Indexed Allocation – Mapping (Cont.)
- Mapping from logical to physical in a file of unbounded length (block size of 512 words)
- Linked scheme – link blocks of index table (no limit on size): $Q_1 = \lfloor LA / (512 \times 511) \rfloor$, $R_1 = LA \bmod (512 \times 511)$
  - $Q_1$ = block of index table
  - $R_1$ is used as follows: $Q_2 = \lfloor R_1 / 512 \rfloor$, $R_2 = R_1 \bmod 512$
    - $Q_2$ = displacement into block of index table
    - $R_2$ = displacement into block of file

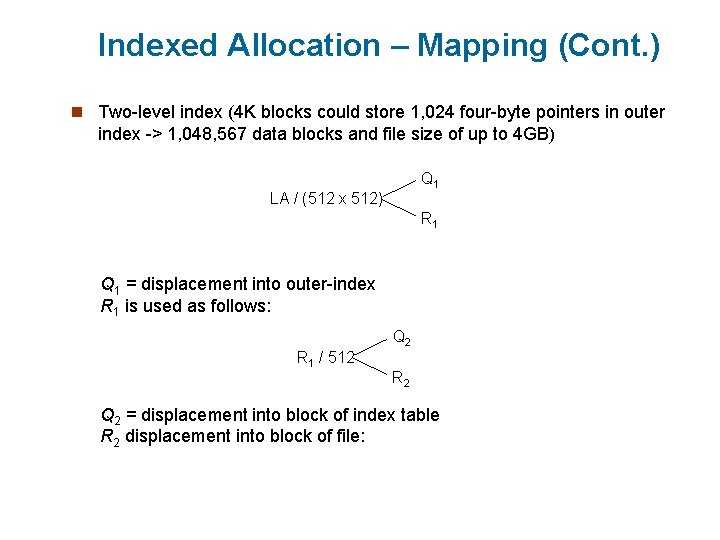

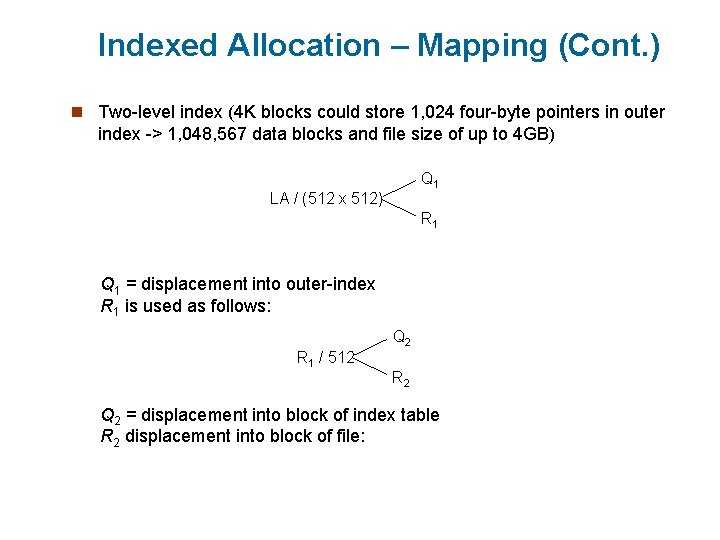

Indexed Allocation – Mapping (Cont.)
- Two-level index (4K blocks could store 1,024 four-byte pointers in outer index ⇒ 1,048,576 data blocks and file size of up to 4 GB)
- $Q_1 = \lfloor LA / (512 \times 512) \rfloor$, $R_1 = LA \bmod (512 \times 512)$
  - $Q_1$ = displacement into outer index
  - $R_1$ is used as follows: $Q_2 = \lfloor R_1 / 512 \rfloor$, $R_2 = R_1 \bmod 512$
    - $Q_2$ = displacement into block of index table
    - $R_2$ = displacement into block of file
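The two-level formulas translate directly into divisions and remainders. The sketch assumes 512-word blocks and represents disk-resident index blocks as a hypothetical map from block number to block contents. (For the 4 KB example, 1,024 × 1,024 = 1,048,576 data blocks, and 1,048,576 × 4 KB = 4 GB.)

```java
import java.util.Map;

class TwoLevelIndexMapping {
    static final long B = 512; // words per block and entries per index block

    // Returns {Q1, Q2, R2}: outer-index slot, slot within that index block, word within data block.
    static long[] split(long la) {
        long q1 = la / (B * B);
        long r1 = la % (B * B);
        long q2 = r1 / B;
        long r2 = r1 % B;
        return new long[] {q1, q2, r2};
    }

    // outer[Q1] names an index block; that block's entry Q2 names the data block.
    static long[] map(long[] outer, Map<Long, long[]> indexBlocks, long la) {
        long[] s = split(la);
        long[] inner = indexBlocks.get(outer[(int) s[0]]);
        return new long[] {inner[(int) s[1]], s[2]};
    }
}
```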

Indexed Allocation – Mapping (Cont.)

(Figure: outer-index table pointing to index tables, which point to the blocks of the file)

Mass-Storage Systems
- Overview of Mass Storage Structure
- Disk Attachment
- Disk Scheduling
- Disk Management
- Swap-Space Management
- Stable-Storage Implementation
- Tertiary Storage Devices

Overview of Mass Storage Structure
- Magnetic disks provide bulk of secondary storage of modern computers
  - Drives rotate at 60 to 250 times per second
  - Transfer rate is rate at which data flow between drive and computer
  - Positioning time (random-access time) is time to move disk arm to desired cylinder (seek time) and time for desired sector to rotate under the disk head (rotational latency)
  - Head crash results from disk head making contact with the disk surface
    - That's bad
- Disks can be removable
- Drive attached to computer via I/O bus
  - Busses vary, including EIDE, ATA, SATA, USB, Fibre Channel, SCSI, SAS, FireWire
  - Host controller in computer uses bus to talk to disk controller built into drive or storage array

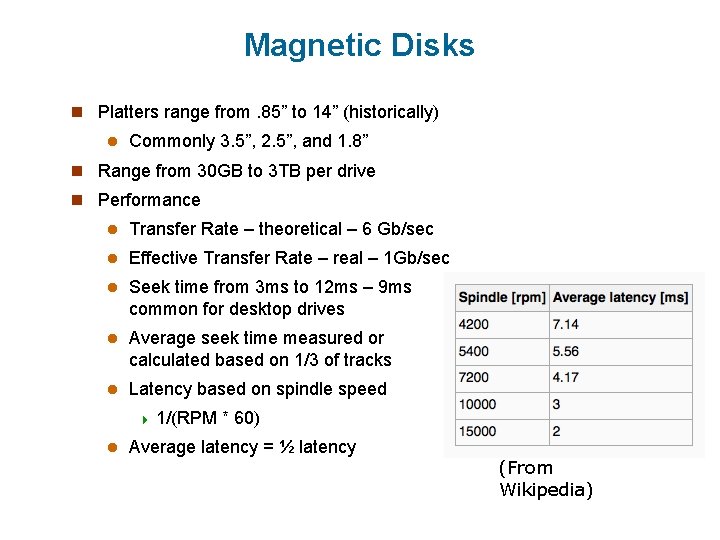

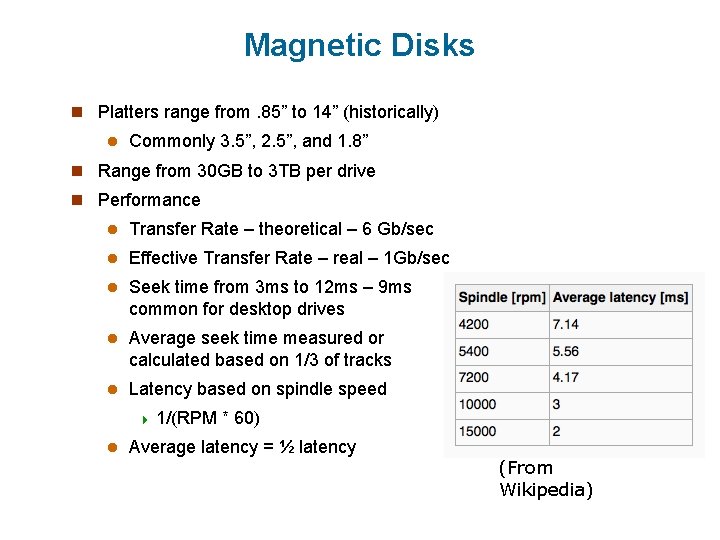

Magnetic Disks
- Platters range from .85" to 14" (historically)
  - Commonly 3.5", 2.5", and 1.8"
- Range from 30 GB to 3 TB per drive
- Performance
  - Transfer rate – theoretical – 6 Gb/sec
  - Effective transfer rate – real – 1 Gb/sec
  - Seek time from 3 ms to 12 ms – 9 ms common for desktop drives
  - Average seek time measured or calculated based on 1/3 of tracks
  - Latency based on spindle speed: one rotation takes 1/(RPM/60) = 60/RPM seconds
  - Average latency = ½ latency

(From Wikipedia)

Magnetic Disk Performance
- Access latency = average access time = average seek time + average latency
  - For fastest disk 3 ms + 2 ms = 5 ms
  - For slow disk 9 ms + 5.56 ms = 14.56 ms
- Average I/O time = average access time + (amount to transfer / transfer rate) + controller overhead
- For example, to transfer a 4 KB block on a 7200 RPM disk with a 5 ms average seek time, 1 Gb/sec transfer rate, and a 0.1 ms controller overhead:
  - 5 ms + 4.17 ms + 4 KB / 1 Gb/sec + 0.1 ms =
  - 9.27 ms + 4 / 131072 sec =
  - 9.27 ms + 0.03 ms ≈ 9.30 ms
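The same calculation as a small Java helper, assuming times in milliseconds and taking 1 Gb/sec as 2^30 bits/sec (which gives the 131,072 KB/sec used above). One rotation takes 60,000 / RPM ms, and the average rotational latency is half of that. With the example's parameters this comes to about 9.30 ms; the class name is hypothetical.

```java
// Average I/O time = average seek + average rotational latency + transfer time + controller overhead.
class DiskIoTime {
    // Half a rotation on average: 0.5 * 60,000 ms / RPM.
    static double avgRotationalLatencyMs(double rpm) {
        return 0.5 * 60_000.0 / rpm;
    }

    static double avgIoTimeMs(double avgSeekMs, double rpm, long bytes,
                              double bitsPerSecond, double controllerMs) {
        double transferMs = bytes * 8.0 / bitsPerSecond * 1000.0;
        return avgSeekMs + avgRotationalLatencyMs(rpm) + transferMs + controllerMs;
    }

    public static void main(String[] args) {
        double t = avgIoTimeMs(5.0, 7200, 4096, Math.pow(2, 30), 0.1);
        System.out.println(t + " ms");
    }
}
```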

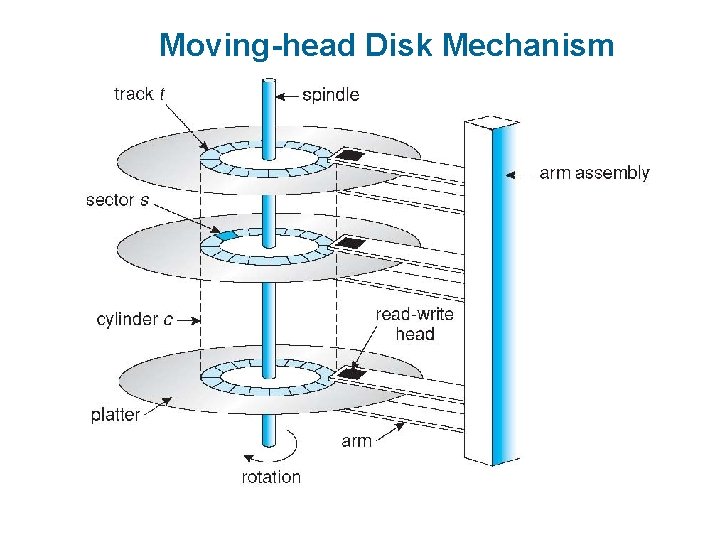

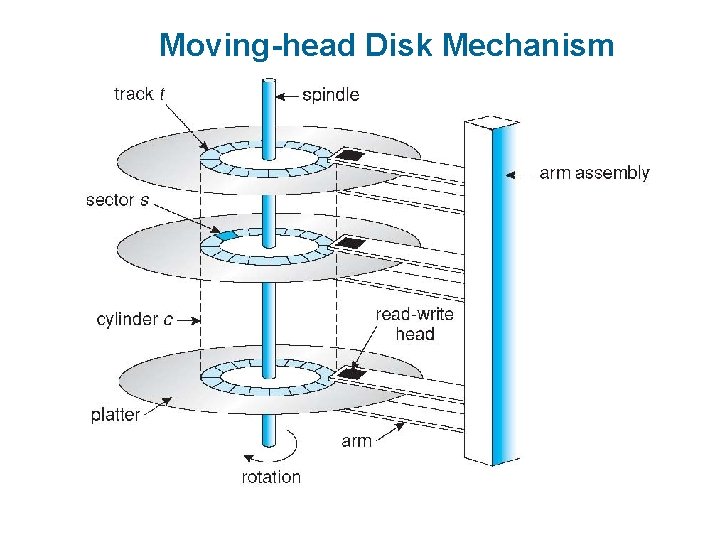

Moving-head Disk Mechanism

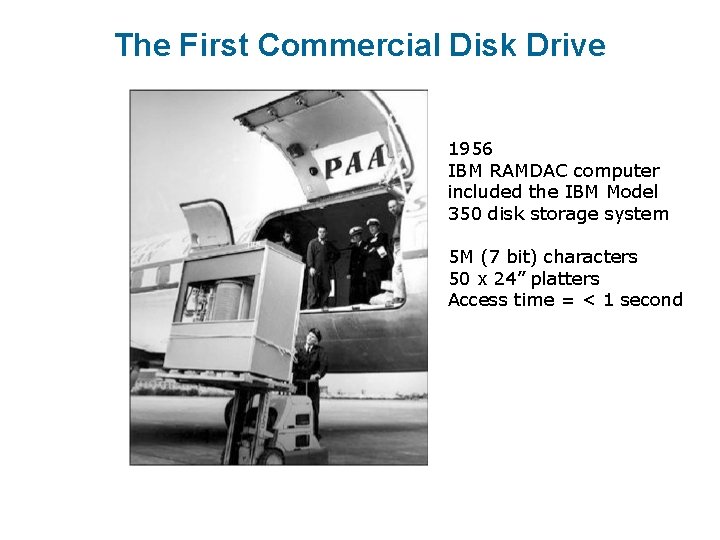

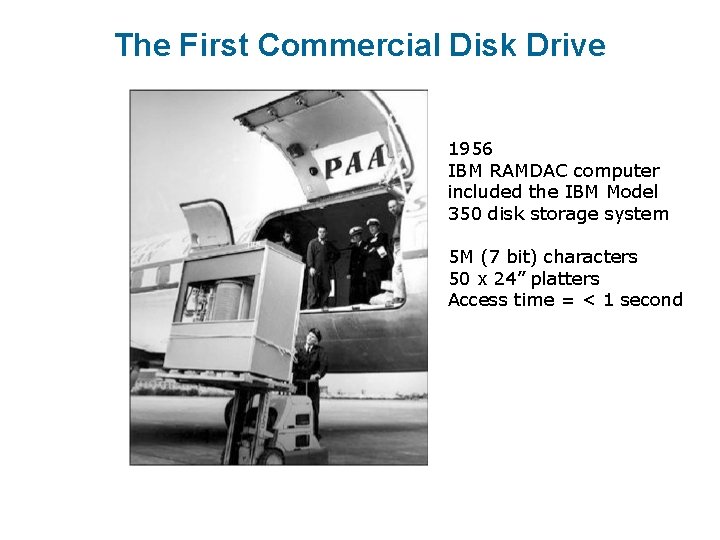

The First Commercial Disk Drive
- 1956: IBM RAMAC computer included the IBM Model 350 disk storage system
- 5M (7-bit) characters
- 50 × 24" platters
- Access time < 1 second

Magnetic Tape
- Was early secondary-storage medium
  - Evolved from open spools to cartridges
- Relatively permanent and holds large quantities of data
- Access time slow
- Random access ~1000 times slower than disk
- Mainly used for backup, storage of infrequently-used data, transfer medium between systems
- Kept in spool and wound or rewound past read-write head
- Once data under head, transfer rates comparable to disk
  - 140 MB/sec and greater
- 200 GB to 1.5 TB typical storage
- Common technologies are LTO-{3,4,5} and T10000

Disk Structure
- Disk drives are addressed as large 1-dimensional arrays of logical blocks, where the logical block is the smallest unit of transfer
- The 1-dimensional array of logical blocks is mapped into the sectors of the disk sequentially
  - Sector 0 is the first sector of the first track on the outermost cylinder
  - Mapping proceeds in order through that track, then the rest of the tracks in that cylinder, and then through the rest of the cylinders from outermost to innermost
  - Logical to physical address should be easy
    - Except for bad sectors
    - Non-constant # of sectors per track via constant angular velocity
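Ignoring the two complications listed (bad sectors and a varying number of sectors per track), the ordering described above is ordinary logical-block-to-cylinder/head/sector arithmetic. This sketch assumes a fixed number of heads and sectors per track and numbers sectors from 0; the class name is hypothetical, and real drives hide this geometry behind their own mapping.

```java
// Idealized mapping: fill a track, then the other tracks of the cylinder, then move inward.
class LbaMapping {
    static int[] toChs(long lba, int heads, int sectorsPerTrack) {
        long perCylinder = (long) heads * sectorsPerTrack;
        int cylinder = (int) (lba / perCylinder);
        int head = (int) ((lba % perCylinder) / sectorsPerTrack);
        int sector = (int) (lba % sectorsPerTrack);
        return new int[] {cylinder, head, sector};
    }

    static long toLba(int cylinder, int head, int sector, int heads, int sectorsPerTrack) {
        return ((long) cylinder * heads + head) * sectorsPerTrack + sector;
    }
}
```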

Disk Attachment
- Host-attached storage accessed through I/O ports talking to I/O busses
- SCSI itself is a bus, up to 16 devices on one cable; SCSI initiator requests operation and SCSI targets perform tasks
  - Each target can have up to 8 logical units (disks attached to device controller)
- FC is high-speed serial architecture
  - Can be switched fabric with 24-bit address space – the basis of storage area networks (SANs) in which many hosts attach to many storage units
- I/O directed to bus ID, device ID, logical unit (LUN)

Storage Array
- Can just attach disks, or arrays of disks
- Storage array has controller(s), provides features to attached host(s)
  - Ports to connect hosts to array
  - Memory, controlling software (sometimes NVRAM, etc.)
  - A few to thousands of disks
  - RAID, hot spares, hot swap (discussed later)
  - Shared storage -> more efficiency
  - Features found in some file systems
    - Snapshots, clones, thin provisioning, replication, deduplication, etc.

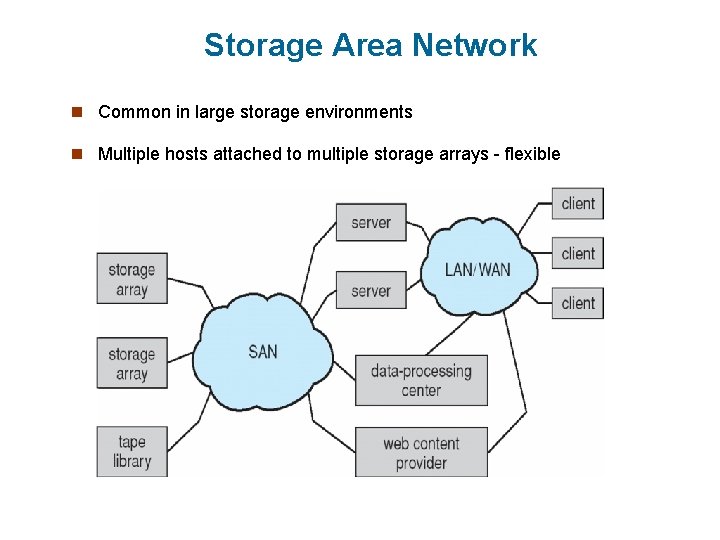

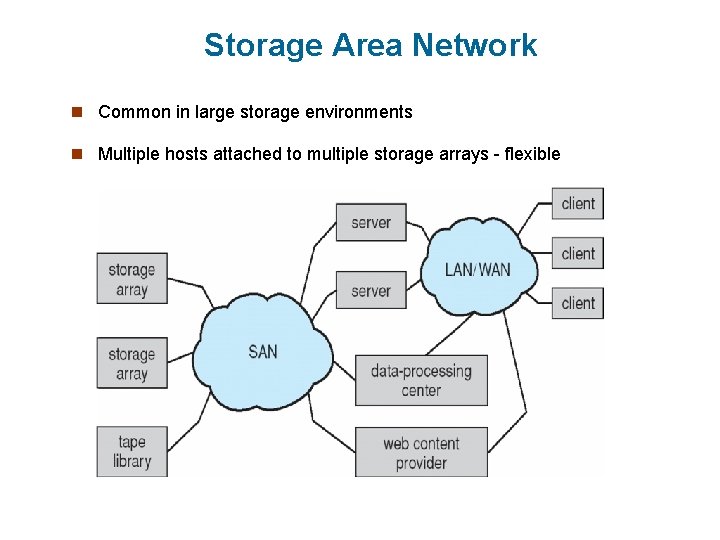

Storage Area Network
- Common in large storage environments
- Multiple hosts attached to multiple storage arrays – flexible

Storage Area Network (Cont.)
- SAN is one or more storage arrays
  - Connected to one or more Fibre Channel switches
- Hosts also attach to the switches
- Storage made available via LUN Masking from specific arrays to specific servers
- Easy to add or remove storage, add new host and allocate it storage
  - Over low-latency Fibre Channel fabric
- Why have separate storage networks and communications networks?
  - Consider iSCSI, FCoE

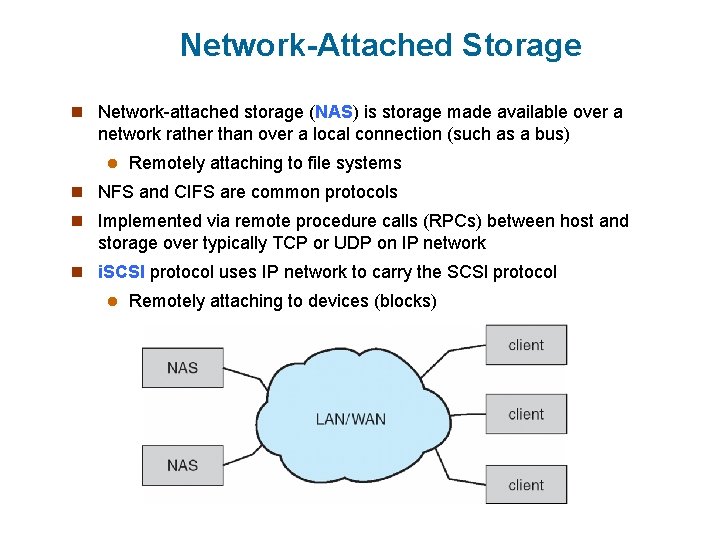

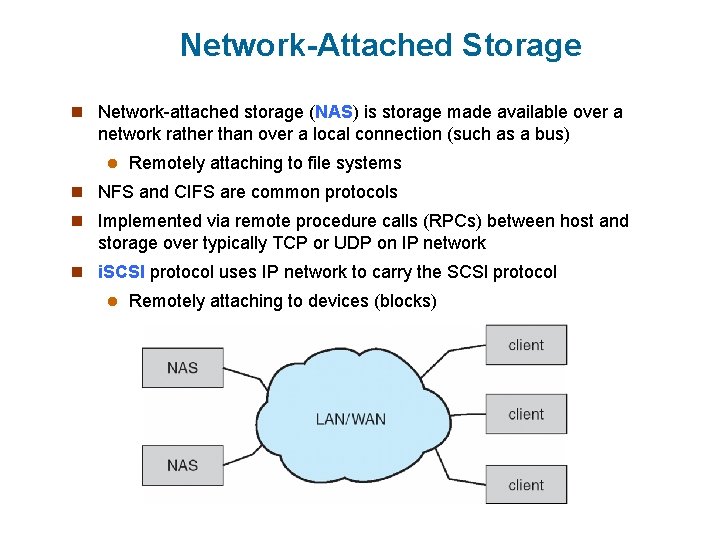

Network-Attached Storage
- Network-attached storage (NAS) is storage made available over a network rather than over a local connection (such as a bus)
  - Remotely attaching to file systems
- NFS and CIFS are common protocols
- Implemented via remote procedure calls (RPCs) between host and storage over typically TCP or UDP on IP network
- iSCSI protocol uses IP network to carry the SCSI protocol
  - Remotely attaching to devices (blocks)

Disk Scheduling
- The operating system is responsible for using hardware efficiently; for the disk drives, this means having a fast access time and large disk bandwidth
- Minimize seek time
- Seek time is roughly proportional to seek distance
- Disk bandwidth is the total number of bytes transferred, divided by the total time between the first request for service and the completion of the last transfer

Disk Scheduling (Cont.)
- There are many sources of disk I/O requests
  - OS
  - System processes
  - User processes
- I/O request includes input or output mode, disk address, memory address, number of sectors to transfer
- OS maintains queue of requests, per disk or device
- Idle disk can immediately work on I/O request; busy disk means work must queue
  - Optimization algorithms only make sense when a queue exists
- Note that drive controllers have small buffers and can manage a queue of I/O requests (of varying "depth")
- Several algorithms exist to schedule the servicing of disk I/O requests
- The analysis is true for one or many platters

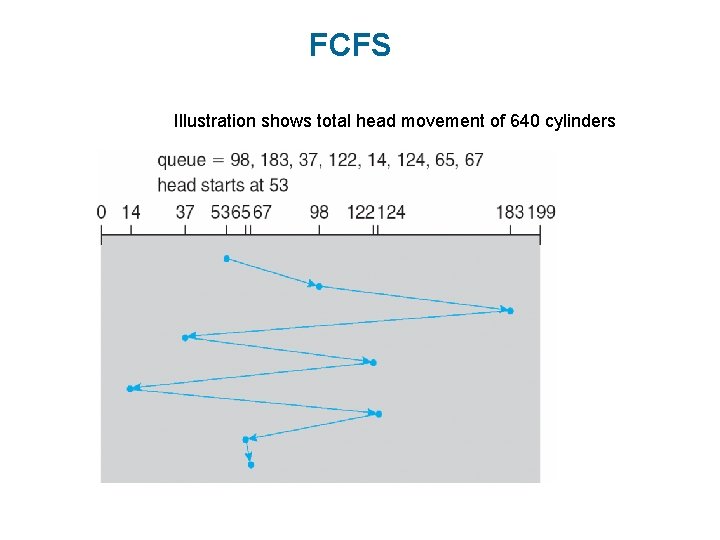

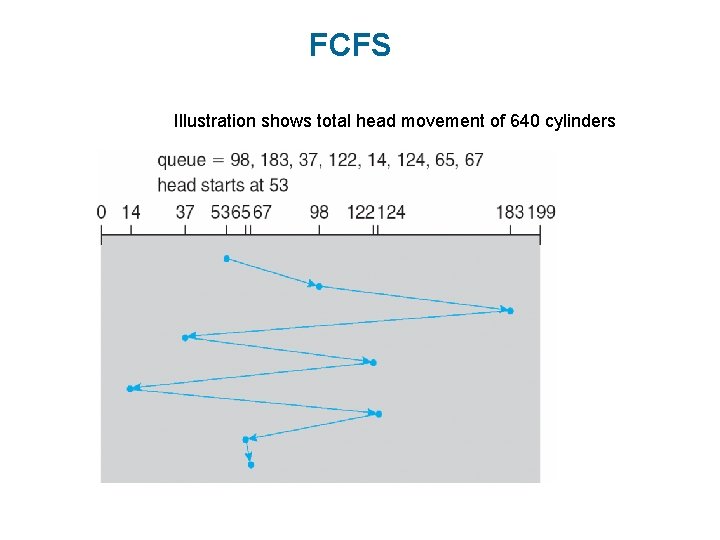

FCFS
- Illustration shows total head movement of 640 cylinders

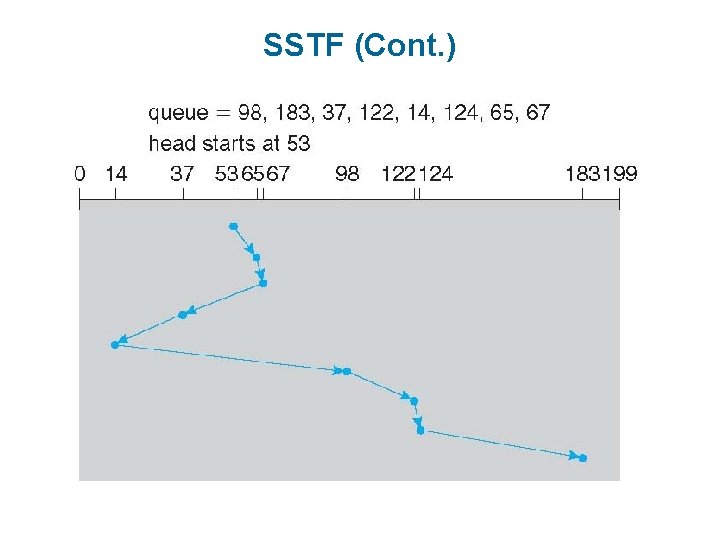

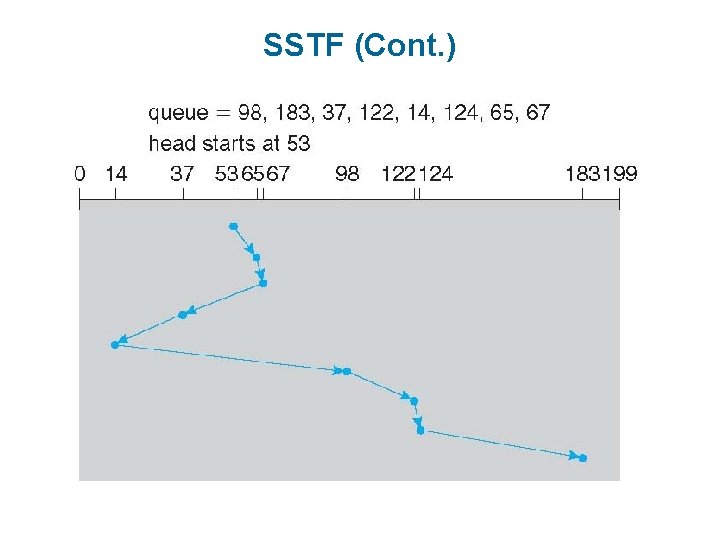

SSTF
- Shortest Seek Time First selects the request with the minimum seek time from the current head position
- SSTF scheduling is a form of SJF scheduling; may cause starvation of some requests
- Illustration shows total head movement of 236 cylinders
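Both totals can be reproduced with a few lines of Java. The request queue 98, 183, 37, 122, 14, 124, 65, 67 with the head at cylinder 53 is an assumption (the standard textbook example behind the illustrations); with it, FCFS gives the 640 cylinders quoted for FCFS and SSTF gives 236. The class name is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

class FcfsSstf {
    // FCFS: service requests in arrival order.
    static int fcfs(int head, int[] queue) {
        int total = 0;
        for (int c : queue) {
            total += Math.abs(c - head);
            head = c;
        }
        return total;
    }

    // SSTF: repeatedly service the pending request closest to the current head position.
    static int sstf(int head, int[] queue) {
        List<Integer> pending = new ArrayList<>();
        for (int c : queue) pending.add(c);
        int total = 0;
        while (!pending.isEmpty()) {
            int best = 0;
            for (int i = 1; i < pending.size(); i++) {
                if (Math.abs(pending.get(i) - head) < Math.abs(pending.get(best) - head)) best = i;
            }
            int next = pending.remove(best); // remove by index
            total += Math.abs(next - head);
            head = next;
        }
        return total;
    }

    public static void main(String[] args) {
        int[] queue = {98, 183, 37, 122, 14, 124, 65, 67};
        System.out.println("FCFS: " + fcfs(53, queue)); // 640
        System.out.println("SSTF: " + sstf(53, queue)); // 236
    }
}
```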

SSTF (Cont.)

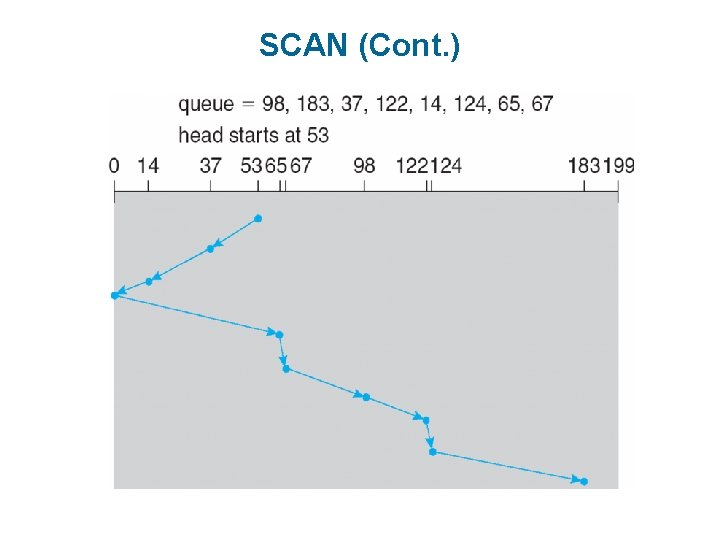

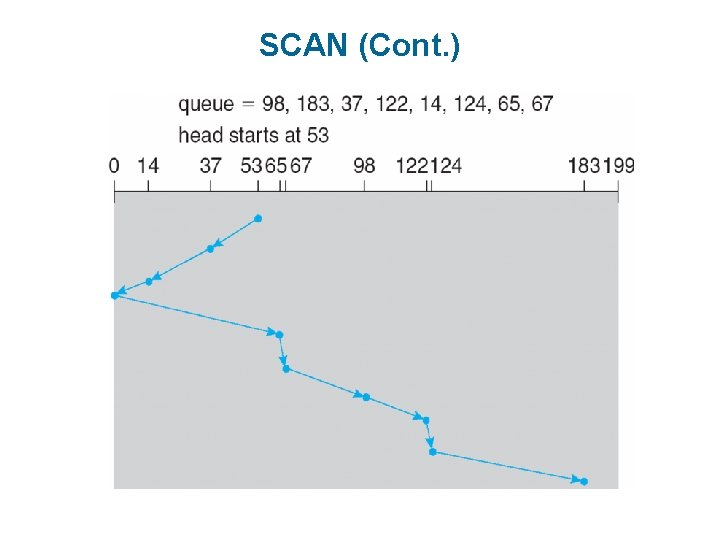

SCAN
- The disk arm starts at one end of the disk and moves toward the other end, servicing requests until it gets to the other end of the disk, where the head movement is reversed and servicing continues
- SCAN algorithm is sometimes called the elevator algorithm
- Illustration shows total head movement of 208 cylinders
- But note that if requests are uniformly dense, the largest density is at the other end of the disk, and those requests wait the longest
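A sketch of SCAN, assuming the same queue (98, 183, 37, 122, 14, 124, 65, 67), the head at 53, and the arm initially moving toward cylinder 0. Going all the way to cylinder 0 before reversing gives 236 cylinders; reversing at the lowest pending request (cylinder 14), which is LOOK, gives 208, the figure quoted above. The class name is hypothetical.

```java
import java.util.Arrays;

class ScanLook {
    // Head starts at `head` moving toward cylinder 0. With scan == true the arm continues
    // to cylinder 0 before reversing (SCAN); otherwise it reverses at the lowest request (LOOK).
    static int totalMovement(int head, int[] queue, boolean scan) {
        int[] q = queue.clone();
        Arrays.sort(q);
        int total = 0;
        int pos = head;
        // Downward pass: requests at or below the head, nearest first.
        for (int i = q.length - 1; i >= 0; i--) {
            if (q[i] <= head) { total += pos - q[i]; pos = q[i]; }
        }
        if (scan) { total += pos; pos = 0; } // SCAN runs on to cylinder 0
        // Upward pass: requests above the starting position, in increasing order.
        for (int c : q) {
            if (c > head) { total += c - pos; pos = c; }
        }
        return total;
    }

    public static void main(String[] args) {
        int[] queue = {98, 183, 37, 122, 14, 124, 65, 67};
        System.out.println("SCAN: " + totalMovement(53, queue, true));  // 236
        System.out.println("LOOK: " + totalMovement(53, queue, false)); // 208
    }
}
```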

SCAN (Cont.)

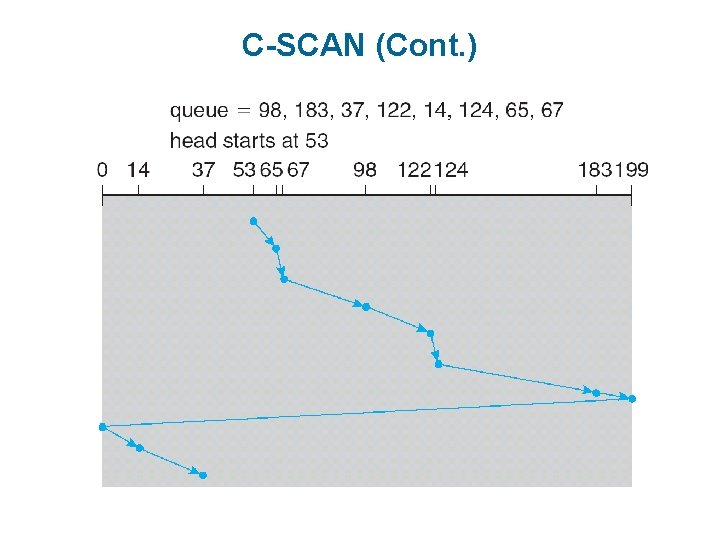

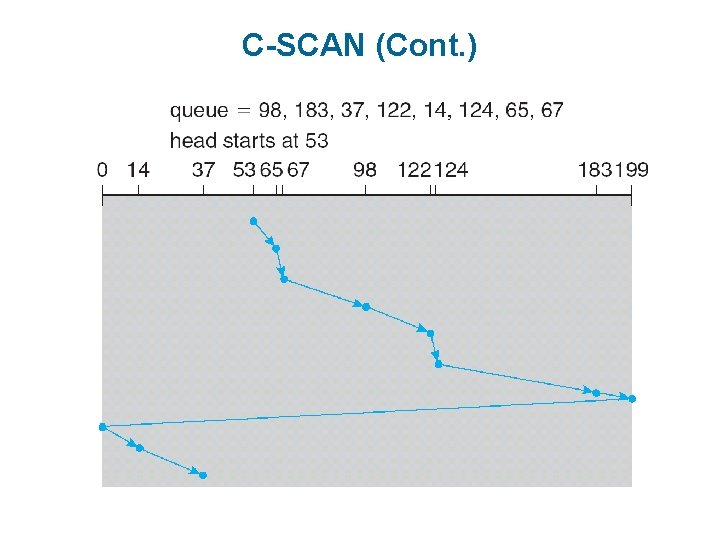

C-SCAN
- Provides a more uniform wait time than SCAN
- The head moves from one end of the disk to the other, servicing requests as it goes
  - When it reaches the other end, however, it immediately returns to the beginning of the disk, without servicing any requests on the return trip
- Treats the cylinders as a circular list that wraps around from the last cylinder to the first one
- Total number of cylinders?

C-SCAN (Cont.)

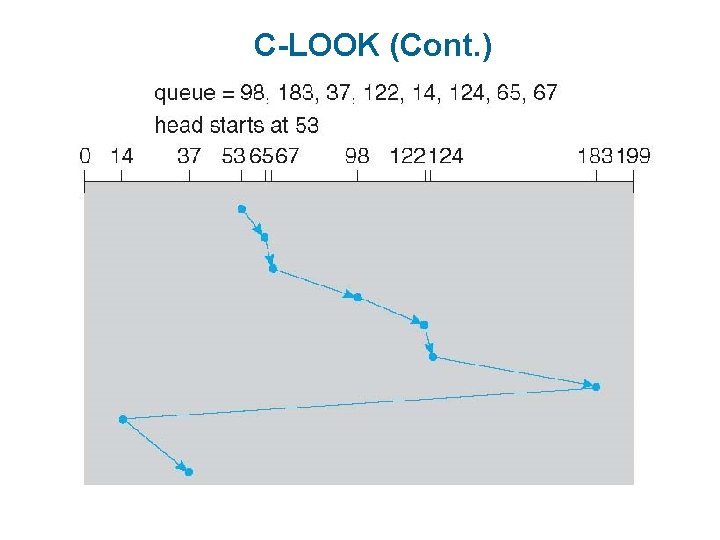

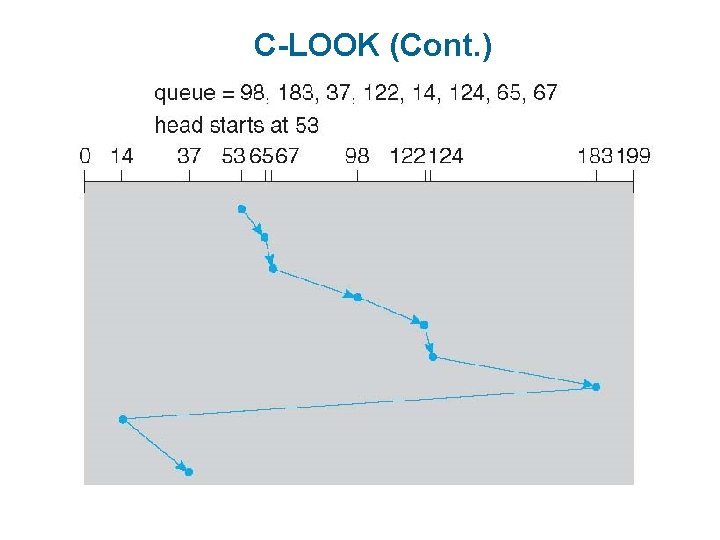

C-LOOK
- LOOK is a version of SCAN, C-LOOK a version of C-SCAN
- Arm only goes as far as the last request in each direction, then reverses direction immediately, without first going all the way to the end of the disk
- Total number of cylinders?
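C-SCAN and C-LOOK differ only in where the arm turns around. This sketch assumes the same queue (98, 183, 37, 122, 14, 124, 65, 67), the head at 53 moving toward higher cylinders, cylinders numbered 0 to 199, and counts the return sweep as head movement (conventions differ on that point). Under those assumptions C-SCAN moves 382 cylinders and C-LOOK 322. The class name is hypothetical.

```java
import java.util.Arrays;

class CircularScan {
    // Head starts at `head` moving toward higher cylinders on cylinders 0..maxCylinder.
    // look == false: C-SCAN (run to maxCylinder, return to 0, continue upward).
    // look == true:  C-LOOK (jump from the highest request back to the lowest one).
    static int totalMovement(int head, int[] queue, int maxCylinder, boolean look) {
        int[] q = queue.clone();
        Arrays.sort(q);
        int total = 0;
        int pos = head;
        for (int c : q) {                                   // upward pass from the head
            if (c >= head) { total += c - pos; pos = c; }
        }
        if (q.length > 0 && q[0] < head) {                  // requests remain below the start
            if (look) {
                total += pos - q[0];                        // C-LOOK: back to the lowest request
                pos = q[0];
            } else {
                total += (maxCylinder - pos) + maxCylinder; // C-SCAN: to the last cylinder, then back to 0
                pos = 0;
            }
            for (int c : q) {                               // second upward pass
                if (c < head) { total += c - pos; pos = c; }
            }
        }
        return total;
    }

    public static void main(String[] args) {
        int[] queue = {98, 183, 37, 122, 14, 124, 65, 67};
        System.out.println("C-SCAN: " + totalMovement(53, queue, 199, false)); // 382
        System.out.println("C-LOOK: " + totalMovement(53, queue, 199, true));  // 322
    }
}
```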

C-LOOK (Cont.)

Selecting a Disk-Scheduling Algorithm
- SSTF is common and has a natural appeal
- SCAN and C-SCAN perform better for systems that place a heavy load on the disk
  - Less starvation
- Performance depends on the number and types of requests
- Requests for disk service can be influenced by the file-allocation method
  - And metadata layout
- The disk-scheduling algorithm should be written as a separate module of the operating system, allowing it to be replaced with a different algorithm if necessary
- Either SSTF or LOOK is a reasonable choice for the default algorithm
- What about rotational latency?

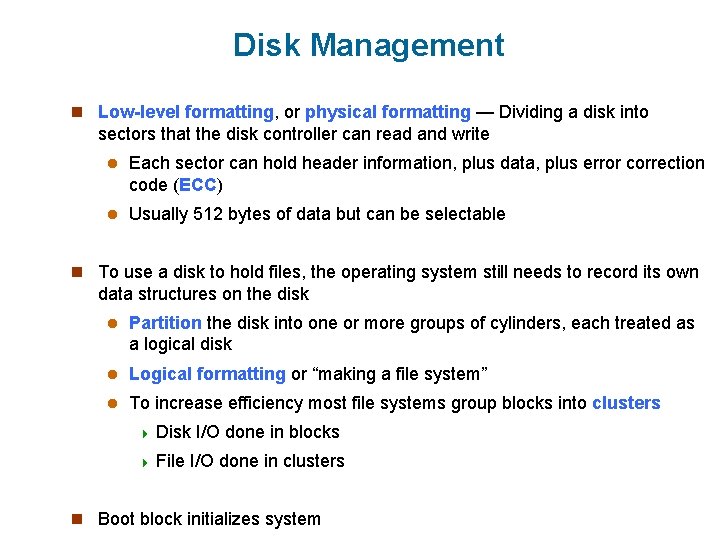

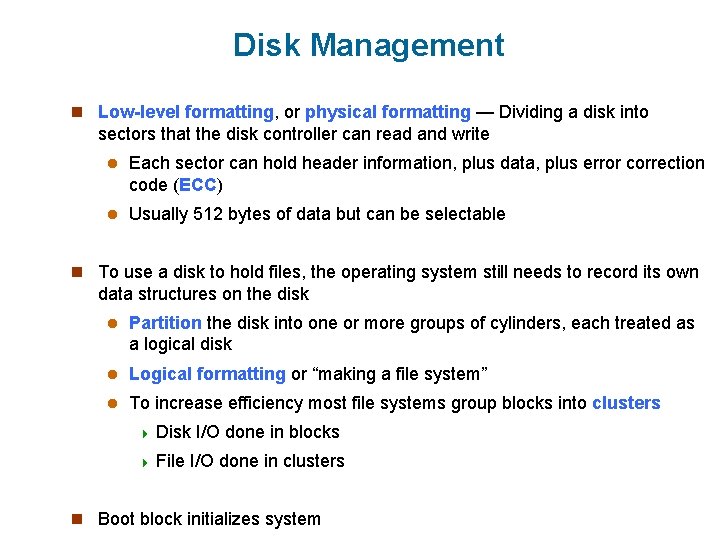

Disk Management
- Low-level formatting, or physical formatting: dividing a disk into sectors that the disk controller can read and write
  - Each sector can hold header information, plus data, plus error correction code (ECC)
  - Usually 512 bytes of data but can be selectable
- To use a disk to hold files, the operating system still needs to record its own data structures on the disk
  - Partition the disk into one or more groups of cylinders, each treated as a logical disk
  - Logical formatting or "making a file system"
  - To increase efficiency most file systems group blocks into clusters
    - Disk I/O done in blocks
    - File I/O done in clusters
- Boot block initializes system

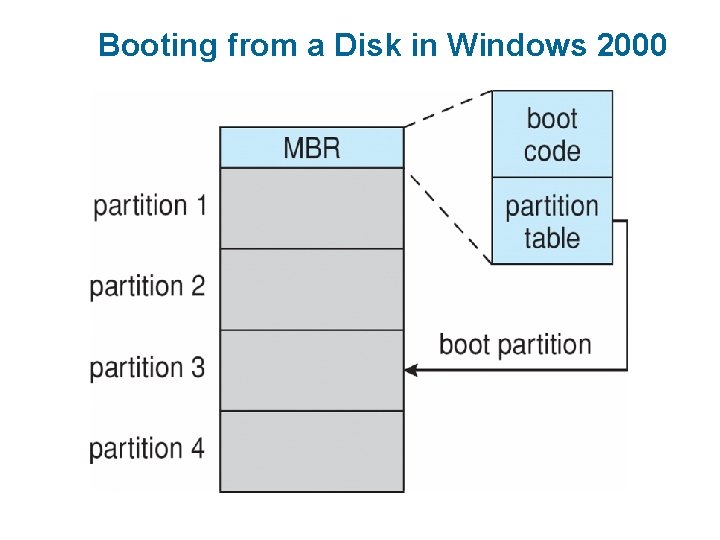

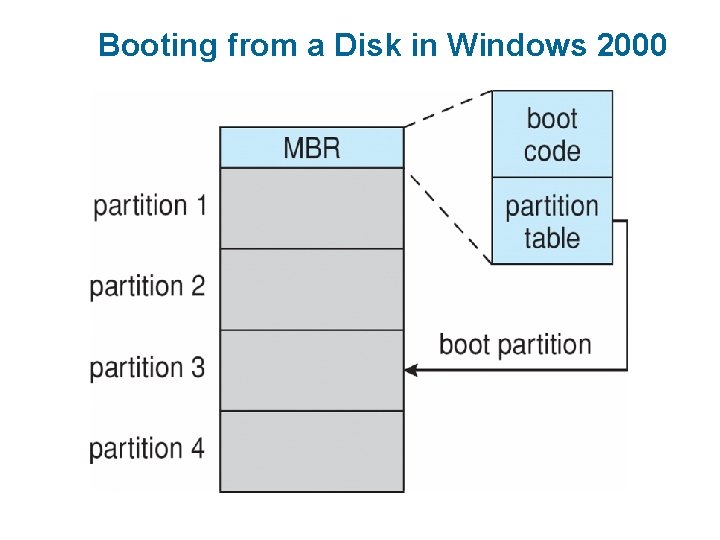

Booting from a Disk in Windows 2000

Swap-Space Management
- Swap-space: virtual memory uses disk space as an extension of main memory
  - Less common now due to memory capacity increases
- Swap-space can be carved out of the normal file system, or, more commonly, it can be in a separate disk partition (raw)
- Swap-space management
  - 4.3BSD allocates swap space when process starts; holds text segment (the program) and data segment
  - Kernel uses swap maps to track swap-space use
  - Solaris 2 allocates swap space only when a dirty page is forced out of physical memory, not when the virtual memory page is first created
    - File data written to swap space until write to file system requested
    - Other dirty pages go to swap space due to no other home

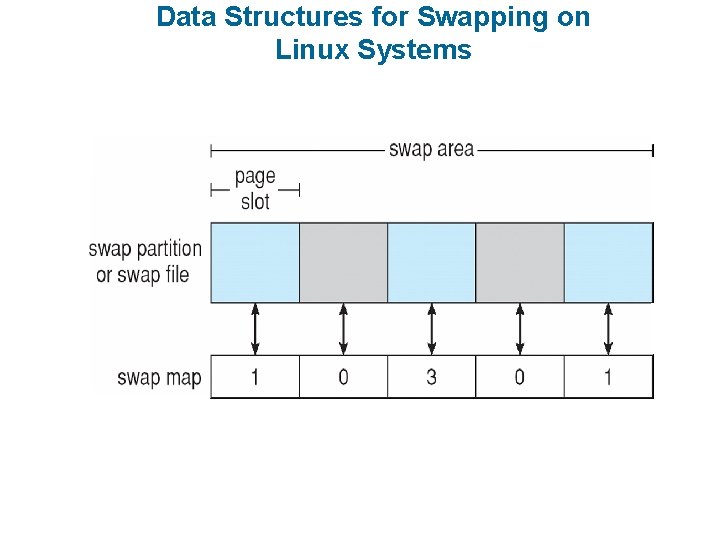

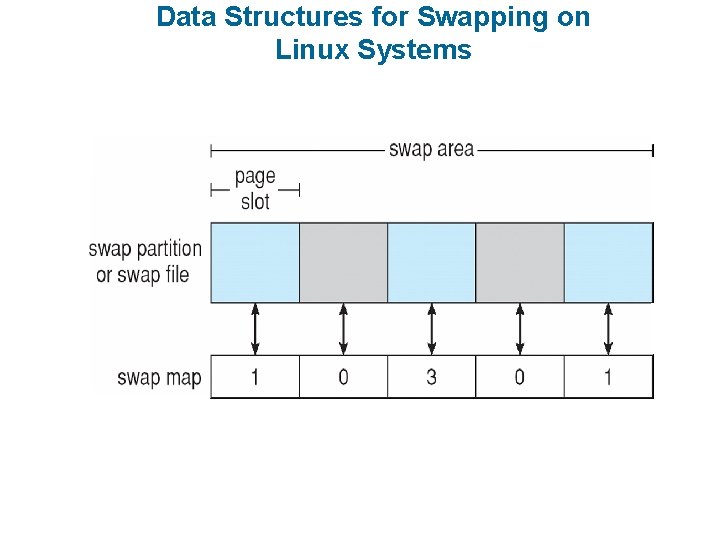

Data Structures for Swapping on Linux Systems

Stable-Storage Implementation
- Write-ahead log scheme requires stable storage
- To implement stable storage:
  - Replicate information on more than one nonvolatile storage media with independent failure modes
  - Update information in a controlled manner to ensure that we can recover the stable data after any failure during data transfer or recovery
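One standard way to perform the controlled update with two copies: write the first copy, then the second, and declare the write complete only after both succeed. On recovery, a copy with a detectable error is replaced by the other; if both are readable but differ, the first is overwritten with the second. The sketch assumes a hypothetical `StableBlock` class and uses `null` to stand for a copy with a detectable error.

```java
import java.util.Arrays;

// Hypothetical stable-storage block kept as two copies with independent failure modes.
class StableBlock {
    byte[] copy1, copy2; // null models a copy with a detectable error (e.g. bad checksum)

    StableBlock(byte[] initial) {
        copy1 = initial.clone();
        copy2 = initial.clone();
    }

    // Write copy 1, then copy 2; complete only after both succeed.
    // crashAfterFirst simulates a failure between the two physical writes.
    boolean write(byte[] data, boolean crashAfterFirst) {
        copy1 = data.clone();
        if (crashAfterFirst) return false;
        copy2 = data.clone();
        return true;
    }

    // Recovery: repair a damaged copy from the good one; if both are readable
    // but differ, replace the first with the second (the write never completed).
    void recover() {
        if (copy1 == null && copy2 != null) copy1 = copy2.clone();
        else if (copy2 == null && copy1 != null) copy2 = copy1.clone();
        else if (copy1 != null && !Arrays.equals(copy1, copy2)) copy1 = copy2.clone();
    }

    byte[] read() { return copy1.clone(); }
}
```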

Tertiary Storage Devices
- Low cost is the defining characteristic of tertiary storage
- Generally, tertiary storage is built using removable media
- Common examples of removable media are floppy disks and CD-ROMs; other types are available

Removable Disks
- Floppy disk: thin flexible disk coated with magnetic material, enclosed in a protective plastic case
  - Most floppies hold about 1 MB; similar technology is used for removable disks that hold more than 1 GB
  - Removable magnetic disks can be nearly as fast as hard disks, but they are at a greater risk of damage from exposure

Removable Disks (Cont.)
- A magneto-optic disk records data on a rigid platter coated with magnetic material
  - Laser heat is used to amplify a large, weak magnetic field to record a bit
  - Laser light is also used to read data (Kerr effect)
  - The magneto-optic head flies much farther from the disk surface than a magnetic disk head, and the magnetic material is covered with a protective layer of plastic or glass; resistant to head crashes
- Optical disks do not use magnetism; they employ special materials that are altered by laser light

WORM Disks
- The data on read-write disks can be modified over and over
- WORM ("Write Once, Read Many Times") disks can be written only once
- Thin aluminum film sandwiched between two glass or plastic platters
- To write a bit, the drive uses a laser light to burn a small hole through the aluminum; information can be destroyed but not altered
- Very durable and reliable
- Read-only disks, such as CD-ROM and DVD, come from the factory with the data pre-recorded

Tapes
- Compared to a disk, a tape is less expensive and holds more data, but random access is much slower
- Tape is an economical medium for purposes that do not require fast random access, e.g., backup copies of disk data, holding huge volumes of data
- Large tape installations typically use robotic tape changers that move tapes between tape drives and storage slots in a tape library
  - stacker – library that holds a few tapes
  - silo – library that holds thousands of tapes
- A disk-resident file can be archived to tape for low-cost storage; the computer can stage it back into disk storage for active use

Operating System Support
- Major OS jobs are to manage physical devices and to present a virtual machine abstraction to applications
- For hard disks, the OS provides two abstractions:
  - Raw device – an array of data blocks
  - File system – the OS queues and schedules the interleaved requests from several applications

Application Interface
- Most OSs handle removable disks almost exactly like fixed disks: a new cartridge is formatted and an empty file system is generated on the disk
- Tapes are presented as a raw storage medium, i.e., an application does not open a file on the tape, it opens the whole tape drive as a raw device
- Usually the tape drive is reserved for the exclusive use of that application
- Since the OS does not provide file system services, the application must decide how to use the array of blocks
- Since every application makes up its own rules for how to organize a tape, a tape full of data can generally only be used by the program that created it

Tape Drives
- The basic operations for a tape drive differ from those of a disk drive
- locate() positions the tape to a specific logical block, not an entire track (corresponds to seek())
- The read position() operation returns the logical block number where the tape head is
- The space() operation enables relative motion
- Tape drives are "append-only" devices; updating a block in the middle of the tape also effectively erases everything beyond that block
- An EOT mark is placed after a block that is written

File Naming
- The issue of naming files on removable media is especially difficult when we want to write data on a removable cartridge on one computer, and then use the cartridge in another computer
- Contemporary OSs generally leave the name space problem unsolved for removable media, and depend on applications and users to figure out how to access and interpret the data
- Some kinds of removable media (e.g., CDs) are so well standardized that all computers use them the same way

Hierarchical Storage Management (HSM)
- A hierarchical storage system extends the storage hierarchy beyond primary memory and secondary storage to incorporate tertiary storage, usually implemented as a jukebox of tapes or removable disks
- Usually incorporate tertiary storage by extending the file system
  - Small and frequently used files remain on disk
  - Large, old, inactive files are archived to the jukebox
- HSM is usually found in supercomputing centers and other large installations that have enormous volumes of data

Speed
- Two aspects of speed in tertiary storage are bandwidth and latency
- Bandwidth is measured in bytes per second
  - Sustained bandwidth – average data rate during a large transfer; # of bytes / transfer time
    - Data rate when the data stream is actually flowing
  - Effective bandwidth – average over the entire I/O time, including seek() or locate(), and cartridge switching
    - Drive's overall data rate

Speed (Cont.)
- Access latency – amount of time needed to locate data
  - Access time for a disk – move the arm to the selected cylinder and wait for the rotational latency; < 35 milliseconds
  - Access on tape requires winding the tape reels until the selected block reaches the tape head; tens or hundreds of seconds
  - Generally say that random access within a tape cartridge is about a thousand times slower than random access on disk
- The low cost of tertiary storage is a result of having many cheap cartridges share a few expensive drives
- A removable library is best devoted to the storage of infrequently used data, because the library can only satisfy a relatively small number of I/O requests per hour

Reliability
- A fixed disk drive is likely to be more reliable than a removable disk or tape drive
- An optical cartridge is likely to be more reliable than a magnetic disk or tape
- A head crash in a fixed hard disk generally destroys the data, whereas the failure of a tape drive or optical disk drive often leaves the data cartridge unharmed

Cost
- Main memory is much more expensive than disk storage
- The cost per megabyte of hard disk storage is competitive with magnetic tape if only one tape is used per drive
- The cheapest tape drives and the cheapest disk drives have had about the same storage capacity over the years
- Tertiary storage gives a cost savings only when the number of cartridges is considerably larger than the number of drives

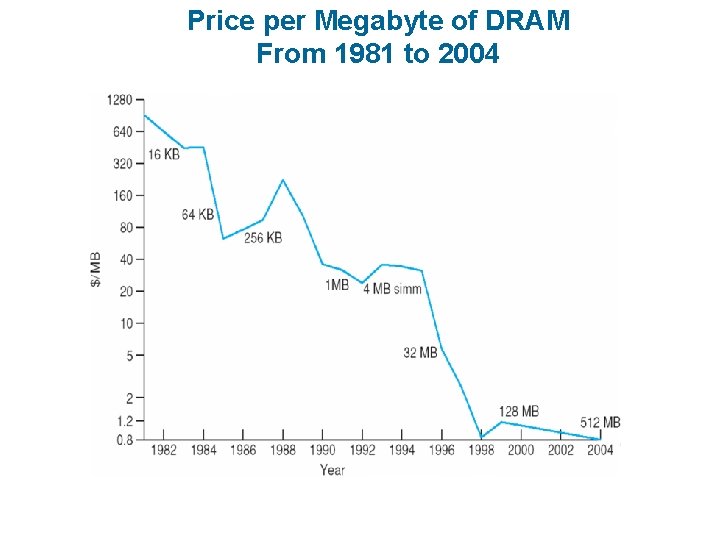

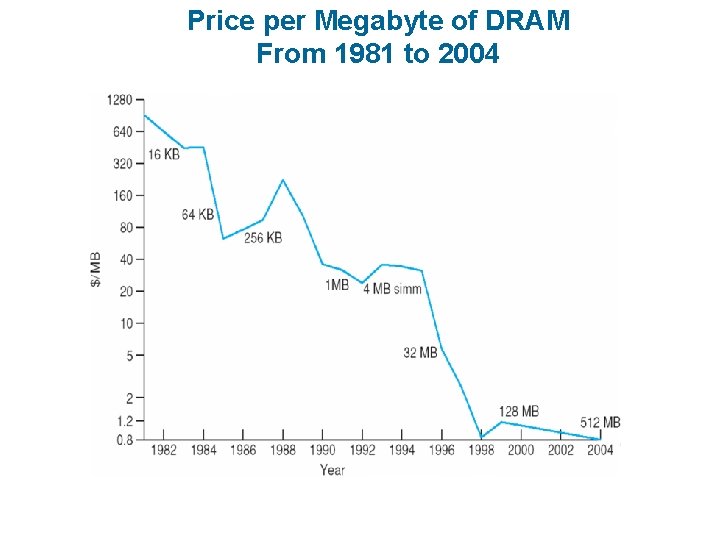

Price per Megabyte of DRAM From 1981 to 2004

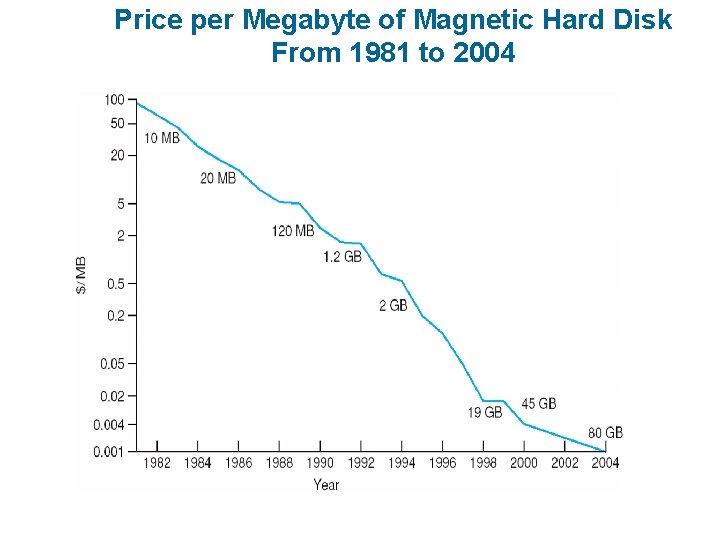

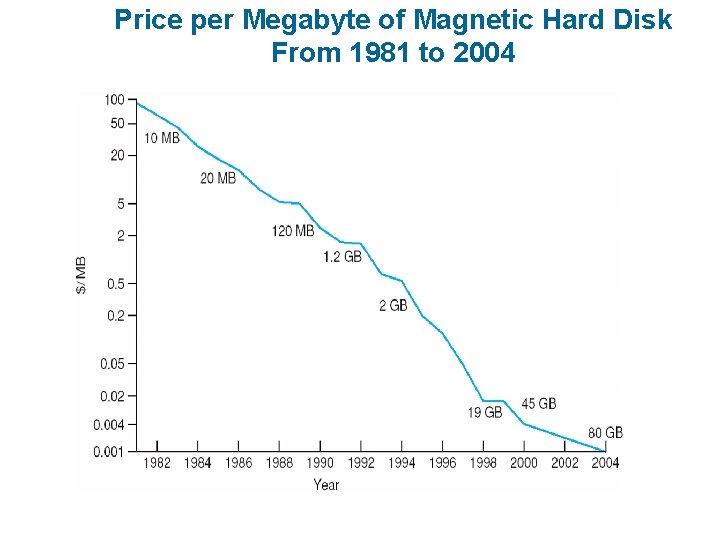

Price per Megabyte of Magnetic Hard Disk From 1981 to 2004

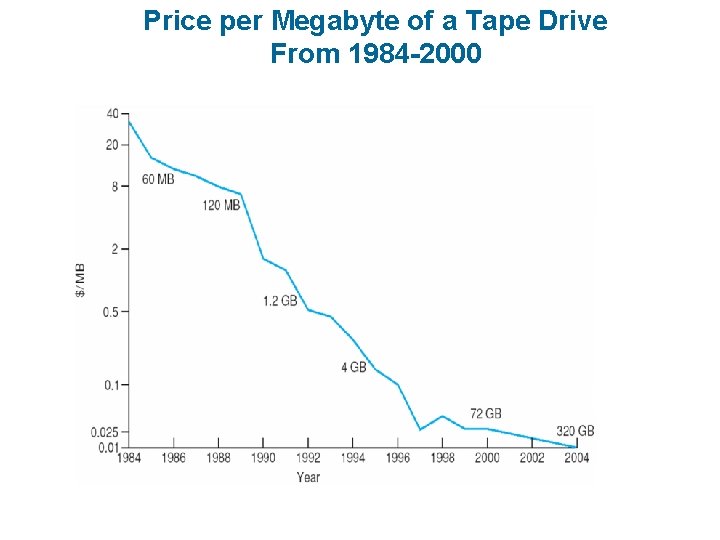

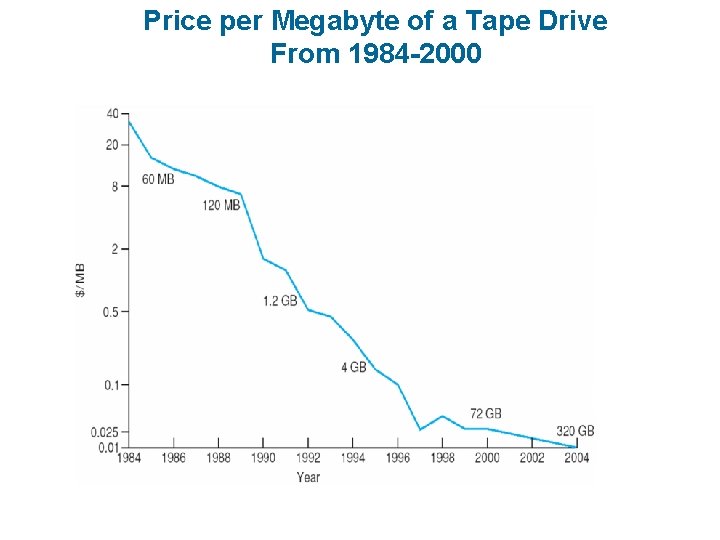

Price per Megabyte of a Tape Drive From 1984 to 2000