FFS, LFS, and RAID

- Slides: 67

UNIX Fast File System

- Designed to improve the performance of UNIX file I/O
- Two major areas of performance improvement
  - Bigger block sizes
  - Better on-disk layout for files

Block Size Improvement

- Quadrupling the block size quadrupled the amount of data fetched per disk access
- But larger blocks could lead to fragmentation problems (wasted space in partly filled blocks)
- So fragments were introduced
  - Small files are stored in fragments
  - Fragments are addressable
    - But not independently fetchable
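
The space saving from fragments can be illustrated with a little arithmetic. The sketch below is only an illustration: the 4096-byte block and 1024-byte fragment sizes are assumed example values, and `space_used` is a hypothetical helper, not part of FFS.

```python
def space_used(file_size, block_size=4096, frag_size=1024):
    """Return (bytes allocated using whole blocks only,
               bytes allocated when the file's tail may use fragments).

    The default sizes are assumed example values, not fixed by FFS.
    """
    full_blocks, tail = divmod(file_size, block_size)
    # Without fragments, any non-empty tail costs a whole block.
    whole_only = (full_blocks + (1 if tail else 0)) * block_size
    # With fragments, the tail is rounded up to whole fragments instead.
    tail_frags = -(-tail // frag_size)  # ceiling division
    with_frags = full_blocks * block_size + tail_frags * frag_size
    return whole_only, with_frags


if __name__ == "__main__":
    for size in (100, 5000, 4096):
        print(size, space_used(size))
```

Under these assumed sizes, a 100-byte file occupies one fragment rather than a whole block.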

Disk Layout Improvements

- Aimed at avoiding disk seeks
- Bad if finding related files takes many seeks
- Very bad if finding all the blocks of a single file requires seeks
- Spatial locality: keep related things close together on disk

Cylinder Groups

- A cylinder group: a set of consecutive disk cylinders in the FFS
- Files in the same directory are stored in the same cylinder group
- Within a cylinder group, FFS tries to keep things contiguous
- But it must not let a cylinder group fill up

Locations for New Directories

- Put a new directory in a relatively empty cylinder group
- What is “empty”?
  - Many free i_nodes
  - Few directories already there
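
One way to turn the two "empty" criteria into a placement rule is sketched below. How the criteria are combined here (at least the average number of free i_nodes, then fewest directories) is an assumption for illustration, and `CylinderGroup` and `pick_group_for_new_directory` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class CylinderGroup:
    index: int
    free_inodes: int
    directories: int


def pick_group_for_new_directory(groups):
    """Pick a relatively empty cylinder group for a new directory.

    Assumed rule: consider only groups with at least the average number
    of free i_nodes, then take the one holding the fewest directories.
    """
    avg_free = sum(g.free_inodes for g in groups) / len(groups)
    candidates = [g for g in groups if g.free_inodes >= avg_free]
    return min(candidates, key=lambda g: g.directories)


if __name__ == "__main__":
    groups = [
        CylinderGroup(0, free_inodes=10, directories=5),
        CylinderGroup(1, free_inodes=90, directories=7),
        CylinderGroup(2, free_inodes=80, directories=2),
        CylinderGroup(3, free_inodes=20, directories=0),
    ]
    print(pick_group_for_new_directory(groups).index)
```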

The Importance of Free Space

- FFS must not run too close to capacity
  - No room for new files
  - Layout policies are ineffective when there are too few free blocks
- Typically, FFS needs 10% of the total blocks free to perform well

Performance of FFS

- 4x to 15x the bandwidth of the old UNIX file system
- Depending on the size of disk blocks
- Performance on original file system
  - Limited by CPU speed
  - Due to memory-to-memory buffer copies

FFS Not the Ultimate Solution

- Based on the technology of the early 1980s
- And the file usage patterns of those times
- In modern systems, FFS achieves only ~5% of raw disk bandwidth

The Log-Structured File System

- Large caches can catch almost all reads
- But most writes have to go to disk
- So FS performance can be limited by writes
- So, produce an FS that writes quickly
- Like an append-only log

Basic LFS Architecture

- Buffer writes, send them sequentially to disk
  - Data blocks
  - Attributes
  - Directories
  - And almost everything else
- Converts small sync writes to large async writes
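
The buffering idea can be sketched as a small in-memory accumulator that hands a full batch to the disk in one go. This is a minimal sketch, not LFS code: `SegmentBuffer`, its `segment_size` parameter, and the `flush` callback are hypothetical names.

```python
class SegmentBuffer:
    """Collect small writes and hand them to `flush` one full batch at a time."""

    def __init__(self, segment_size, flush):
        self.segment_size = segment_size  # blocks per large sequential write
        self.flush = flush                # callable that performs the disk write
        self.pending = []

    def write(self, block):
        # A small write only touches memory until a full batch has accumulated.
        self.pending.append(block)
        if len(self.pending) >= self.segment_size:
            self.flush(self.pending)      # one large sequential write
            self.pending = []


if __name__ == "__main__":
    flushed = []
    buf = SegmentBuffer(segment_size=3, flush=flushed.append)
    for i in range(7):
        buf.write(i)
    print(flushed, buf.pending)
```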

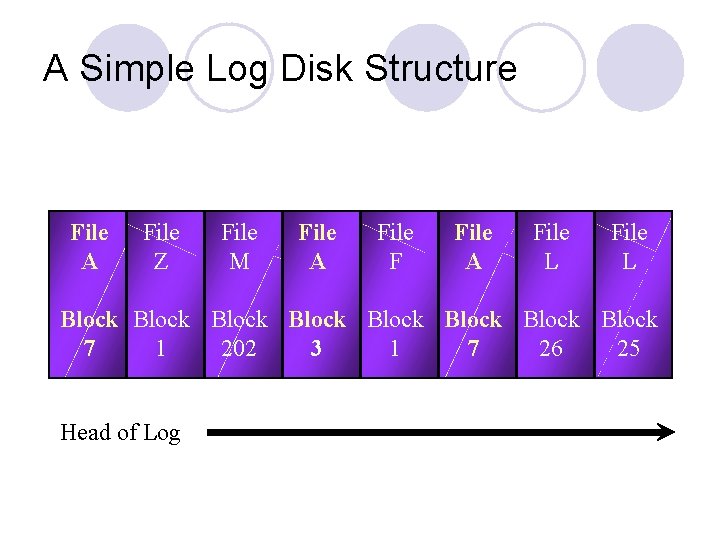

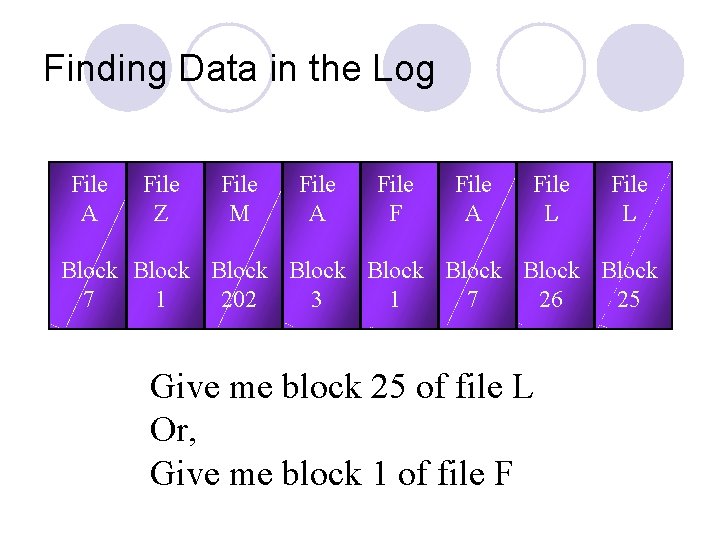

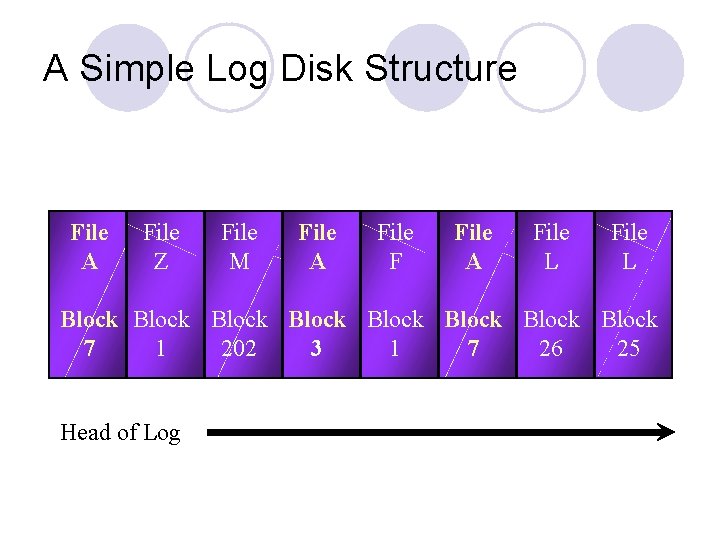

A Simple Log Disk Structure

[Figure: blocks of files A, Z, M, F, and L laid out one after another in the log, ending at the head of the log]

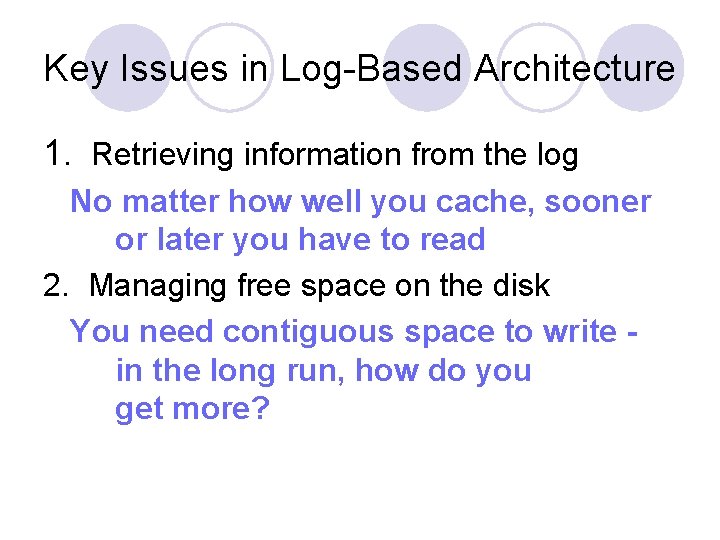

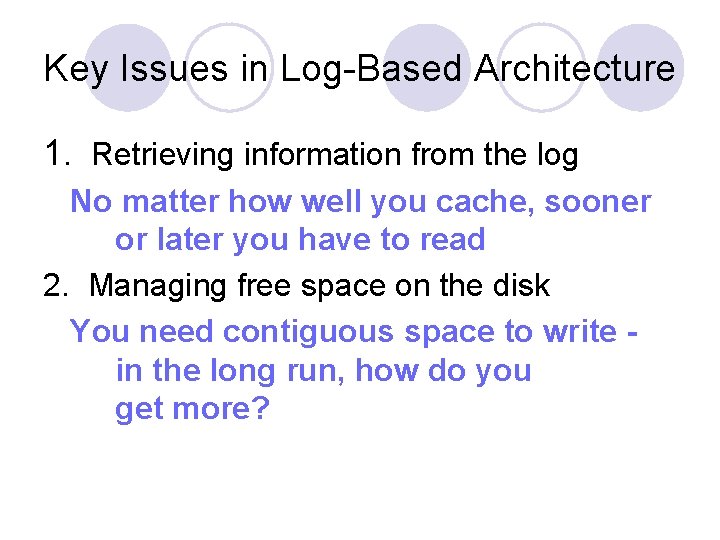

Key Issues in Log-Based Architecture

1. Retrieving information from the log
   - No matter how well you cache, sooner or later you have to read
2. Managing free space on the disk
   - You need contiguous space to write; in the long run, how do you get more?

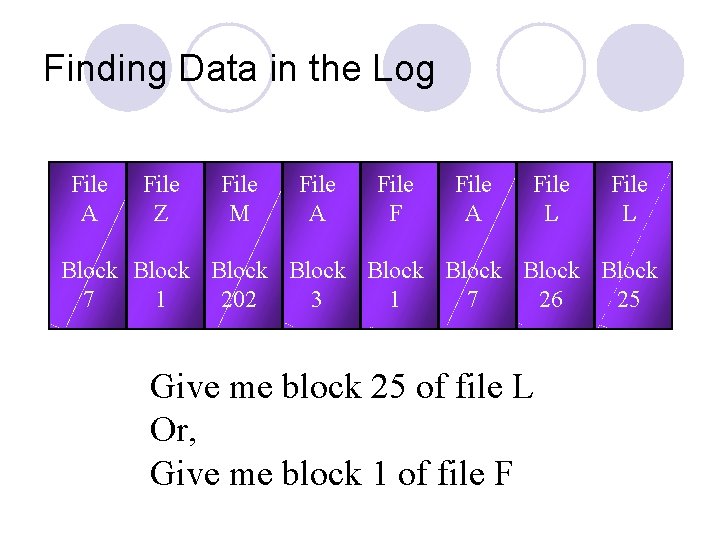

Finding Data in the Log

[Figure: the same log of blocks from files A, Z, M, F, and L, with two example requests: "Give me block 25 of file L" or "Give me block 1 of file F"]

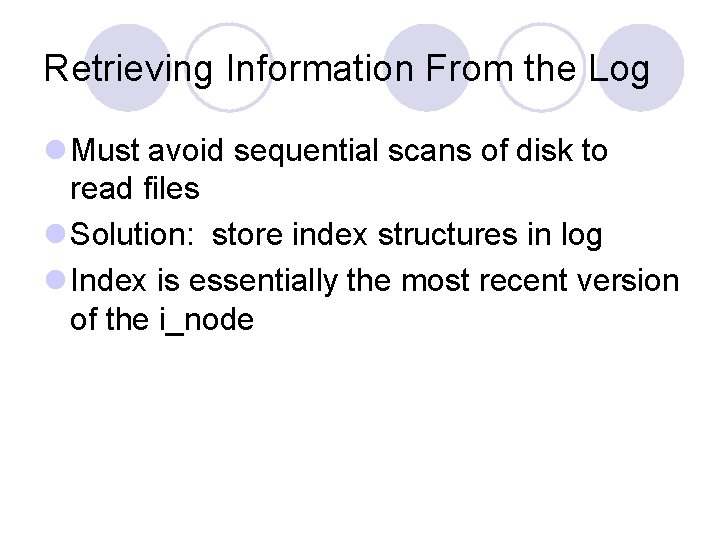

Retrieving Information From the Log

- Must avoid sequential scans of the disk to read files
- Solution: store index structures in the log
- The index is essentially the most recent version of the i_node

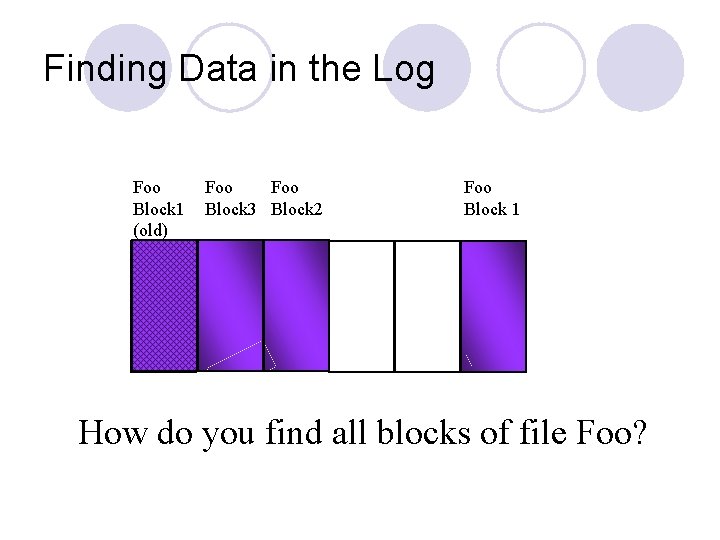

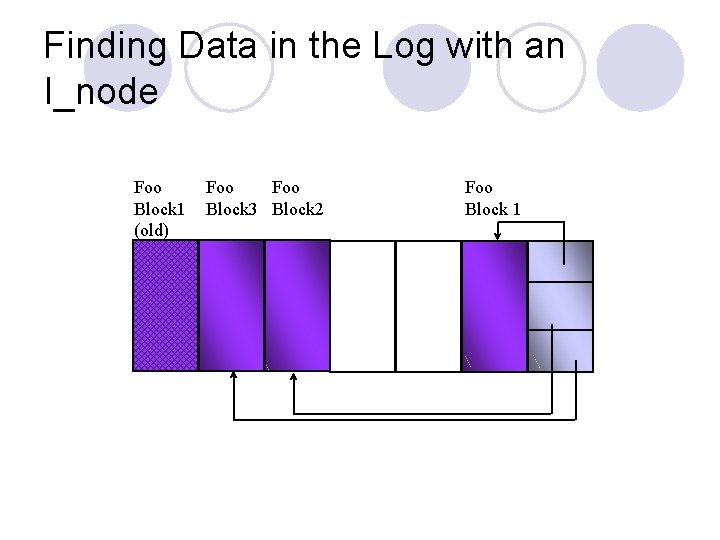

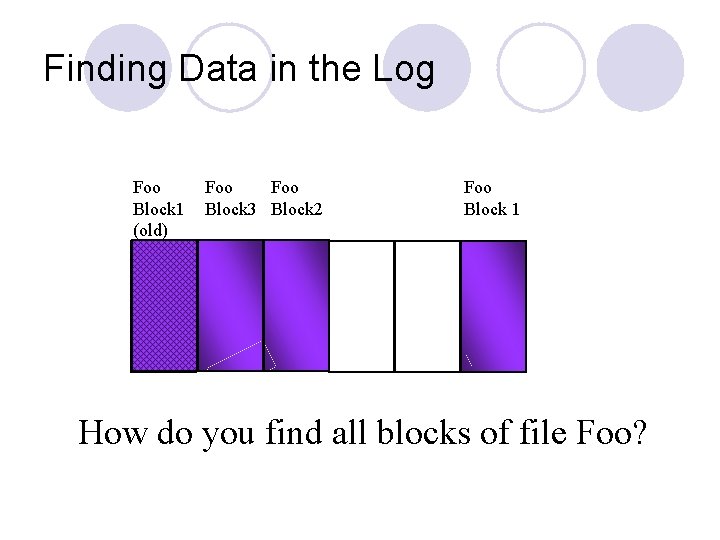

Finding Data in the Log

[Figure: the log holds Foo block 1 (old), Foo block 3, Foo block 2, and a newer Foo block 1]

How do you find all the blocks of file Foo?

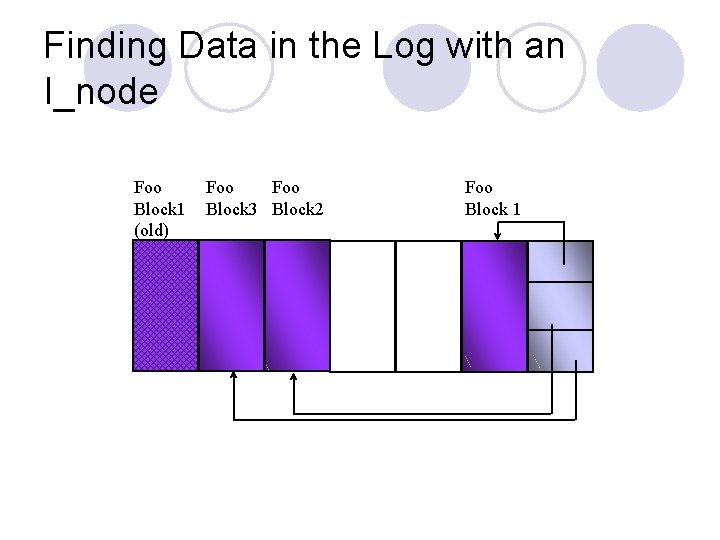

Finding Data in the Log with an I_node

[Figure: the same log of Foo blocks (block 1 (old), block 3, block 2, block 1), now shown with Foo's i_node]

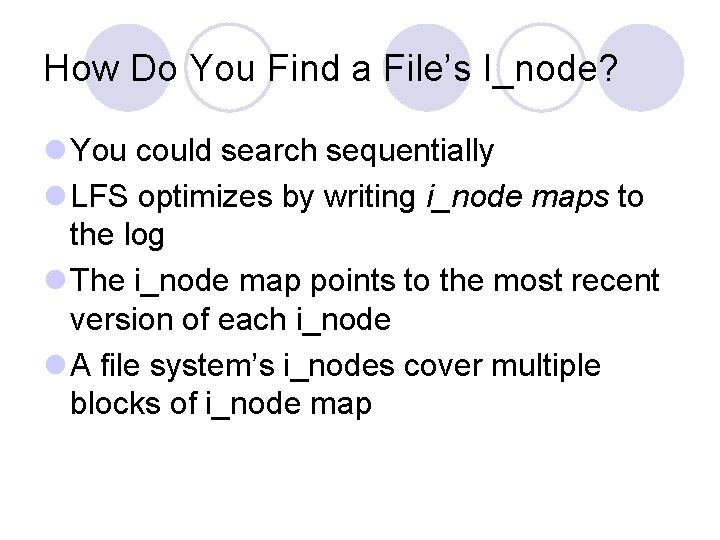

How Do You Find a File’s I_node?

- You could search sequentially
- LFS optimizes by writing i_node maps to the log
- The i_node map points to the most recent version of each i_node
- A file system’s i_nodes span multiple i_node map blocks

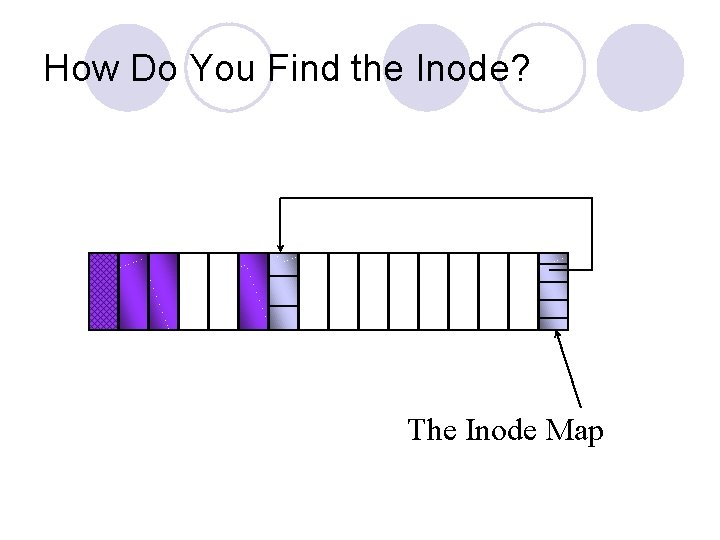

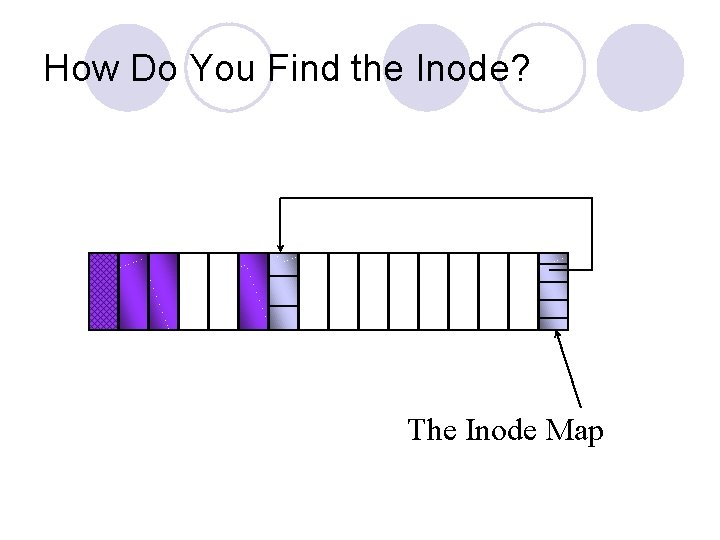

How Do You Find the Inode?

[Figure label: the inode map]

How Do You Find Inode Maps?

- Use a fixed region on disk that always points to the most recent i_node map blocks
- But cache i_node maps in main memory
- They are small enough that few disk accesses are required to find i_node maps
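
The lookup chain described on these slides (fixed region, then i_node map, then i_node, then data block) can be modeled in a few lines. This is a toy sketch under heavy simplifying assumptions: the "disk" is a Python list, every write appends a whole new i_node and i_node map, and `TinyLFS` and its method names are hypothetical.

```python
class TinyLFS:
    """Toy model of the LFS lookup chain; not a real file system."""

    def __init__(self):
        self.log = []           # append-only list standing in for the disk
        self.checkpoint = None  # fixed region: log address of the newest i_node map

    def _append(self, record):
        self.log.append(record)
        return len(self.log) - 1  # the record's log address

    def write(self, inum, block_no, data):
        # Copy the current map and i_node, then append new versions of each.
        imap = dict(self.log[self.checkpoint]) if self.checkpoint is not None else {}
        inode = dict(self.log[imap[inum]]) if inum in imap else {}
        inode[block_no] = self._append(data)  # new data block
        imap[inum] = self._append(inode)      # new version of the i_node
        self.checkpoint = self._append(imap)  # new i_node map

    def read(self, inum, block_no):
        imap = self.log[self.checkpoint]      # fixed region -> i_node map
        inode = self.log[imap[inum]]          # i_node map -> newest i_node
        return self.log[inode[block_no]]      # i_node -> data block


if __name__ == "__main__":
    fs = TinyLFS()
    fs.write(1, 0, "a")
    fs.write(1, 1, "b")
    fs.write(1, 0, "c")  # supersedes "a", which stays in the log as dead data
    print(fs.read(1, 0), fs.read(1, 1), len(fs.log))
```

Overwriting a block leaves the old copy in the log, which is the dead data that space reclamation later has to deal with.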

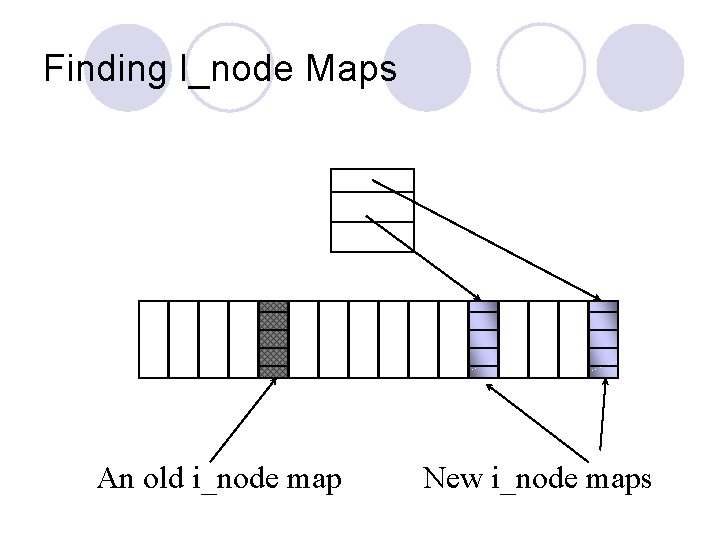

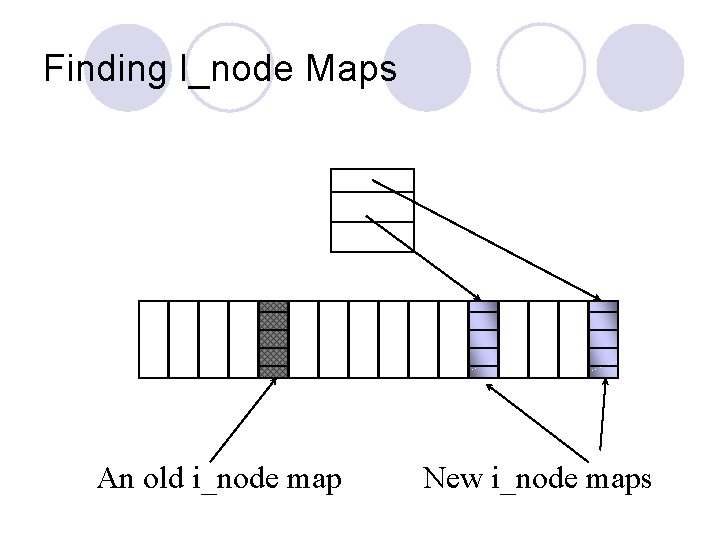

Finding I_node Maps

[Figure labels: an old i_node map; new i_node maps]

Reclaiming Space in the Log

- Eventually, the log reaches the end of the disk partition
- So LFS must reuse disk space, such as the space held by superseded data blocks
- Space can be reclaimed in the background or when needed
- The goal is to maintain large free extents on disk

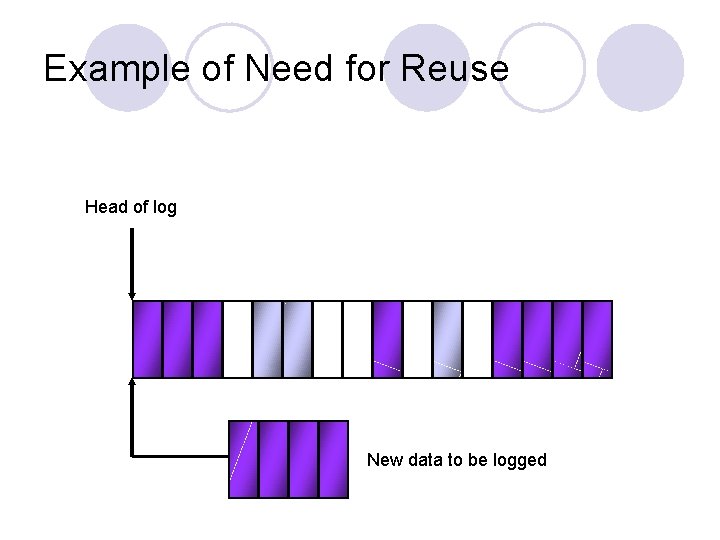

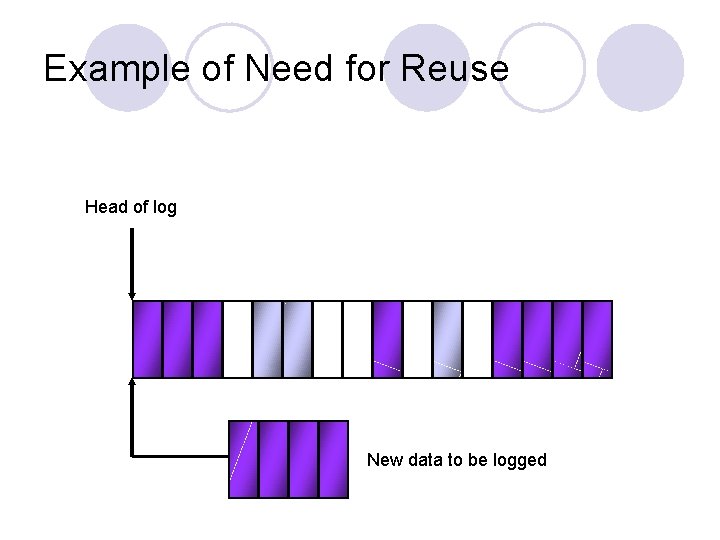

Example of Need for Reuse

[Figure labels: head of log; new data to be logged]

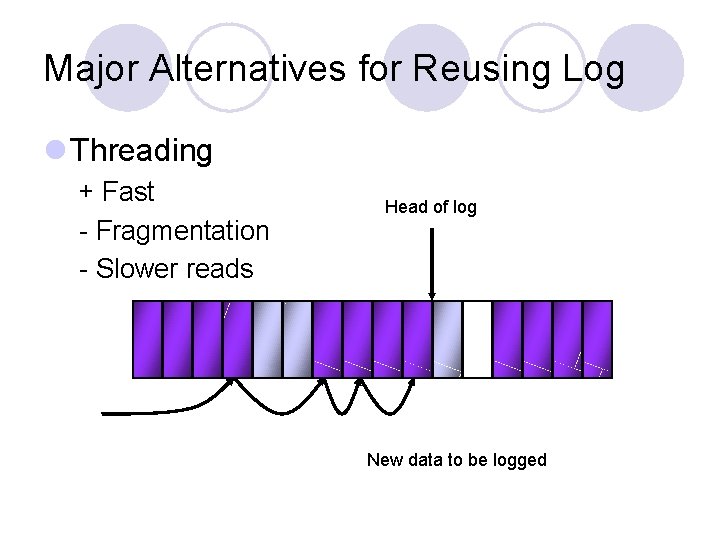

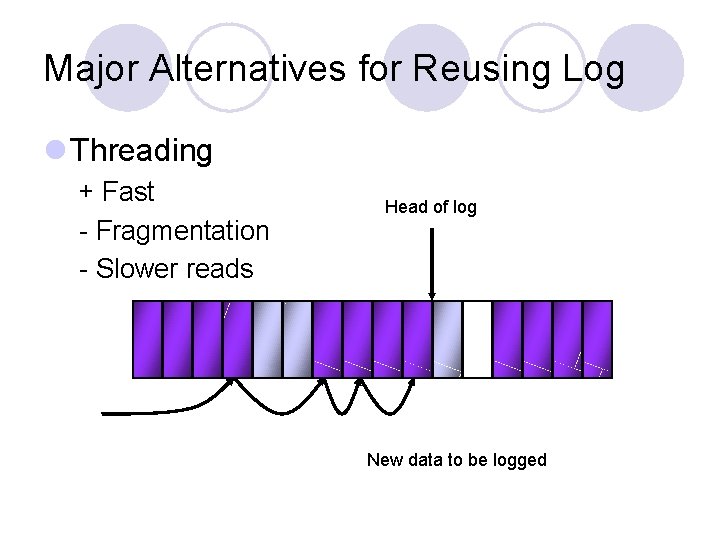

Major Alternatives for Reusing Log

- Threading
  - (+) Fast
  - (-) Fragmentation
  - (-) Slower reads

[Figure labels: head of log; new data to be logged]

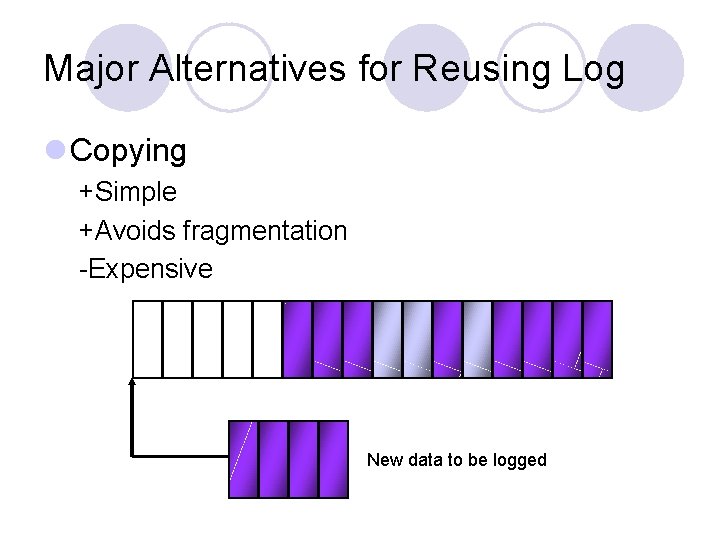

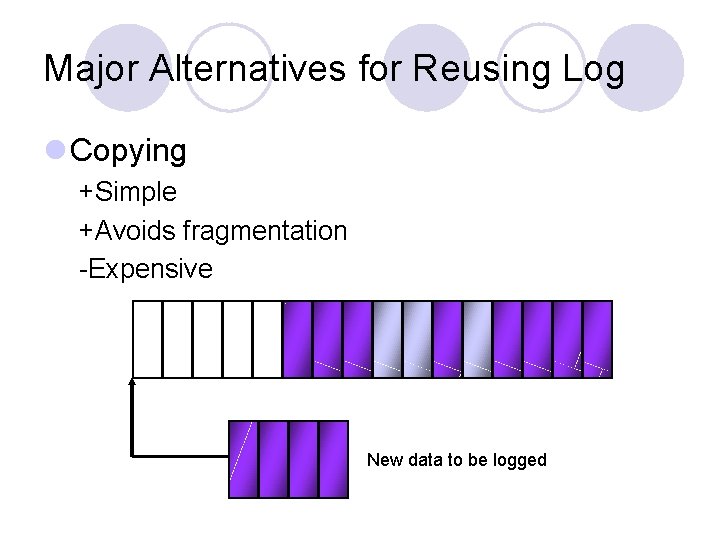

Major Alternatives for Reusing Log

- Copying
  - (+) Simple
  - (+) Avoids fragmentation
  - (-) Expensive

[Figure label: new data to be logged]

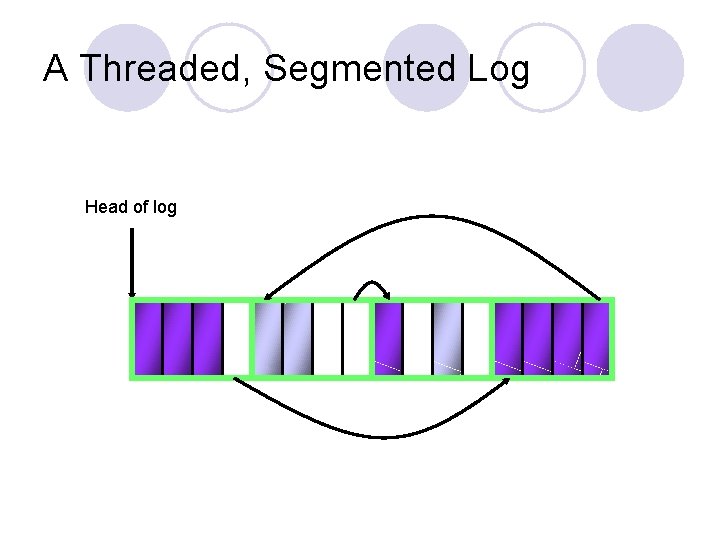

LFS Space Reclamation Strategy

- Combination of copying and threading
- Copy to free large fixed-size segments
- Thread free segments together
- Try to collect long-lived data permanently into segments

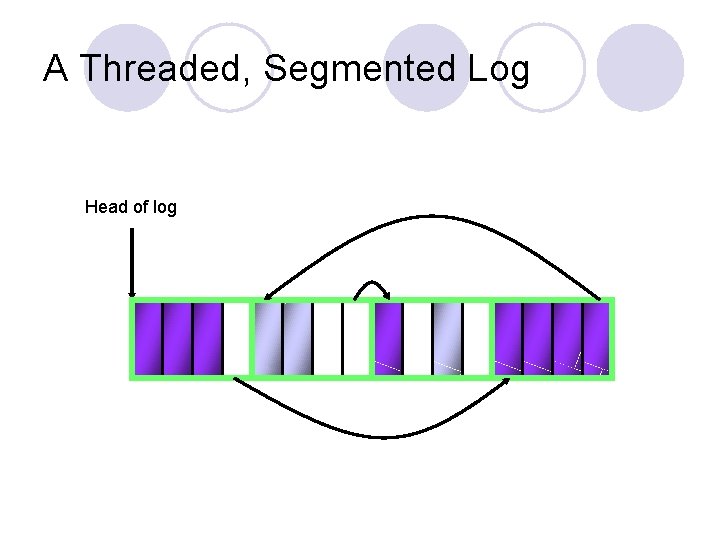

A Threaded, Segmented Log

[Figure label: head of log]

Cleaning a Segment

1. Read several segments into memory
2. Identify the live blocks
3. Write live data back (hopefully) into a smaller number of segments

Identifying Live Blocks

- Hard to track down the live blocks of all files
- Instead, each segment maintains a segment summary block
  - Identifying what is in each block
- Crosscheck blocks with the owning i_node’s block pointers
- The summary block is written at the end of a log write, for low overhead
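
The crosscheck can be sketched as follows: a block in a segment is live only if the owning i_node still points at that exact log address. The data shapes and the name `live_blocks` are assumptions made for this illustration.

```python
def live_blocks(segment_summary, inode_pointers):
    """Return the log addresses in one segment that are still live.

    segment_summary: list of (log_address, inum, block_no) entries, standing
        in for the segment summary block (assumed shape).
    inode_pointers: {(inum, block_no): log_address} taken from the current
        i_nodes (assumed shape).
    """
    return [
        addr
        for addr, inum, block_no in segment_summary
        # Live only if the i_node still points at this copy of the block.
        if inode_pointers.get((inum, block_no)) == addr
    ]


if __name__ == "__main__":
    summary = [(100, 1, 0), (101, 1, 1), (102, 2, 0)]
    pointers = {(1, 0): 100, (1, 1): 250, (2, 0): 102}  # block (1, 1) was rewritten
    print(live_blocks(summary, pointers))
```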

Segment Cleaning Policies

- What are some important questions?
  - When do you clean segments?
  - How many segments to clean?
  - Which segments to clean?
  - How to group blocks in their new segments?

When to Clean

- Periodically
- Continuously
- During off-hours
- When the disk is nearly full
- On demand
- LFS uses a threshold system

How Many Segments to Clean

- The more cleaned at once, the better the reorganization of the disk
  - But the higher the cost of cleaning
- LFS cleans a few tens at a time
  - Until the disk drops below the threshold value
- Empirically, LFS is not very sensitive to this factor

Which Segments to Clean?

- Cleaning segments with lots of dead data gives great benefit
- Some segments are hot, some segments are cold
- But “cold” free space is more valuable than “hot” free space
- Since cold blocks tend to stay cold

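Cost-Benefit Analysis

- u = utilization (the fraction of the segment that is still live)
- A = age
- Benefit to cost = (1 - u) * A / (1 + u)
  - Cleaning frees 1 - u of a segment, at the cost of reading the whole segment (1) and writing back its live data (u)
- Clean cold segments with some space, hot segments with a lot of space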
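
A small numeric sketch of this policy follows. It assumes the ratio takes the form (1 - u) * age / (1 + u), where 1 - u is the space freed and 1 + u is the cost of reading the segment and writing back its live data; the function names and sample values are hypothetical.

```python
def benefit_to_cost(u, age):
    """Assumed cost-benefit ratio: space freed times age, over cleaning cost."""
    return (1.0 - u) * age / (1.0 + u)


def pick_segments(segments, count):
    """segments: list of (name, utilization, age). Highest ratio first."""
    ranked = sorted(segments, key=lambda s: benefit_to_cost(s[1], s[2]), reverse=True)
    return ranked[:count]


if __name__ == "__main__":
    segments = [
        ("hot", 0.30, 1),    # lots of free space, but young
        ("cold", 0.75, 10),  # only some free space, but old
    ]
    for name, u, age in segments:
        print(name, round(benefit_to_cost(u, age), 3))
    print(pick_segments(segments, 1)[0][0])
```

With these sample values the cold segment wins despite being 75% utilized, which matches the rule of cleaning cold segments even when they have only some free space.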

What to Put Where?

- Given a set of live blocks and some cleaned segments, which goes where?
  - Order blocks by age
  - Write them to segments oldest first
- The goal is very cold, highly utilized segments
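
The age-sort step can be sketched as below. This is an illustration only: `regroup`, the `(block_id, age)` tuples, and the fixed `segment_size` are assumptions.

```python
def regroup(live_blocks, segment_size):
    """Sort live blocks oldest first and pack them into segments.

    live_blocks: list of (block_id, age) pairs (assumed shape).
    Returns a list of segments, each a list of block ids.
    """
    ordered = sorted(live_blocks, key=lambda b: b[1], reverse=True)  # oldest first
    ids = [block_id for block_id, _ in ordered]
    return [ids[i:i + segment_size] for i in range(0, len(ids), segment_size)]


if __name__ == "__main__":
    blocks = [("a", 5), ("b", 50), ("c", 1), ("d", 40)]
    print(regroup(blocks, segment_size=2))
```

Grouping the oldest blocks together means the first segments written are the ones most likely to stay cold and fully utilized.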

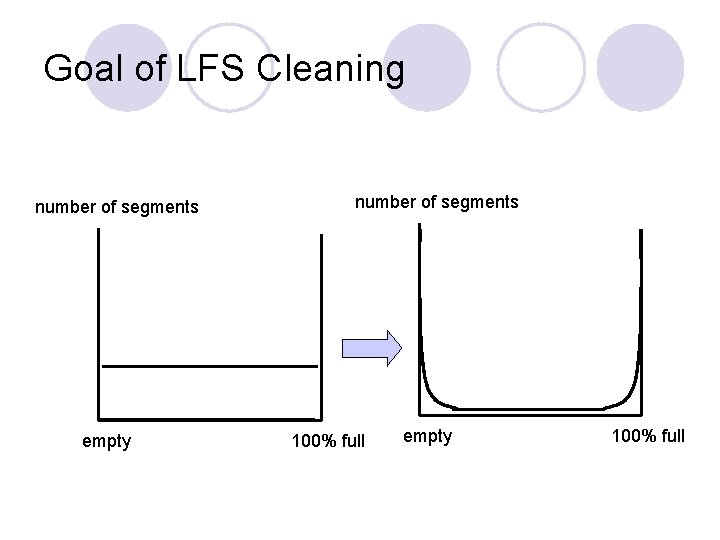

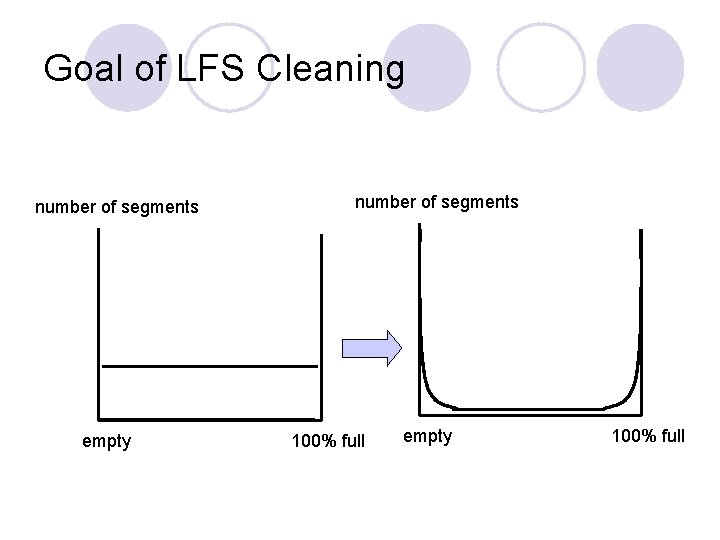

Goal of LFS Cleaning

[Figure: two plots of number of segments against segment utilization, each running from empty to 100% full]

Performance of LFS

- On a modified Andrew benchmark, 20% faster than FFS
- LFS can create and delete 8 times as many files per second as FFS
- LFS can read 1.5 times as many small files
- LFS is slower than FFS at sequential reads of randomly written files

Logical Locality vs. Temporal Locality

- Logical locality (spatial locality): normal file systems keep a file’s data blocks close together
- Temporal locality: LFS keeps data written at the same time close together
- When temporal locality = logical locality
  - Systems perform the same

Major Innovations of LFS

- Abstraction: everything is a log
- Temporal locality
- Use of caching to shape disk access patterns
  - Cache most reads
  - Optimized writes
- Separating full and empty segments

Where Did LFS Look For Performance Improvements?

- Minimized disk access
  - Only write when segments have filled up
- Increased size of data transfers
  - Write whole segments at a time
- Improving locality
  - Assuming temporal locality, a file’s blocks are all adjacent on disk
  - And temporally related files are nearby

Parallel Disk Access and RAID

- One disk can only deliver data at its maximum rate
- So to get more data faster, get it from multiple disks simultaneously
- Saving on rotational latency and seek time

Utilizing Disk Access Parallelism

- Some parallelism is available just from having several disks
- But not much
- Instead of satisfying each access from one disk, use multiple disks for each access
- Store part of each data block on several disks

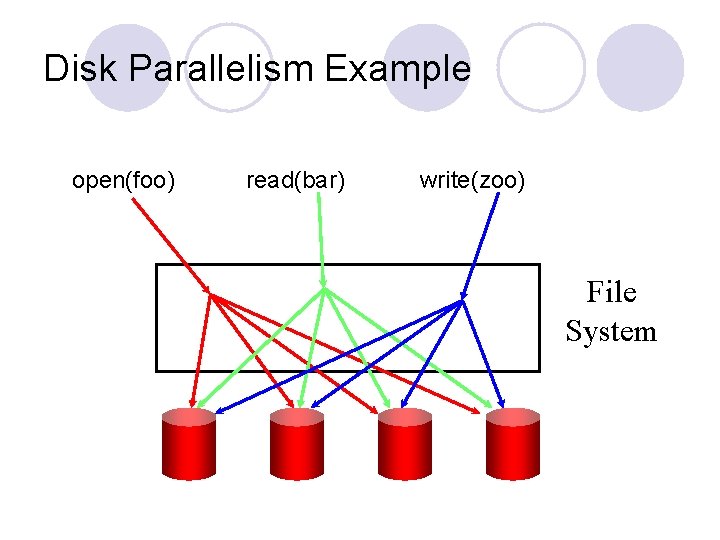

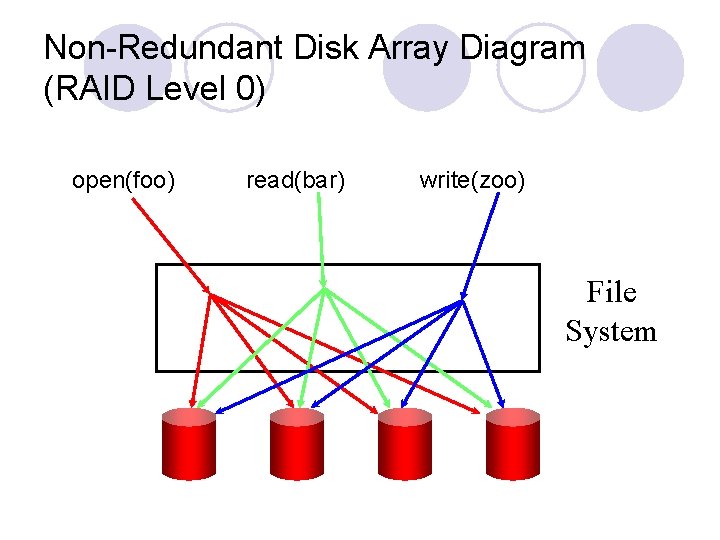

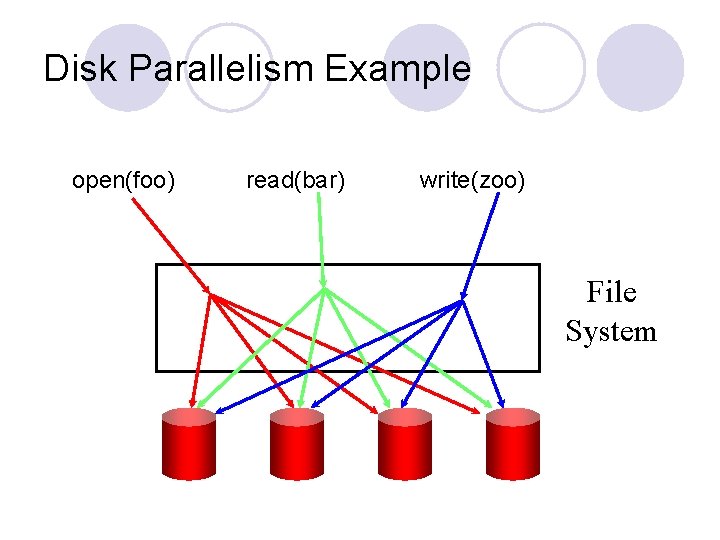

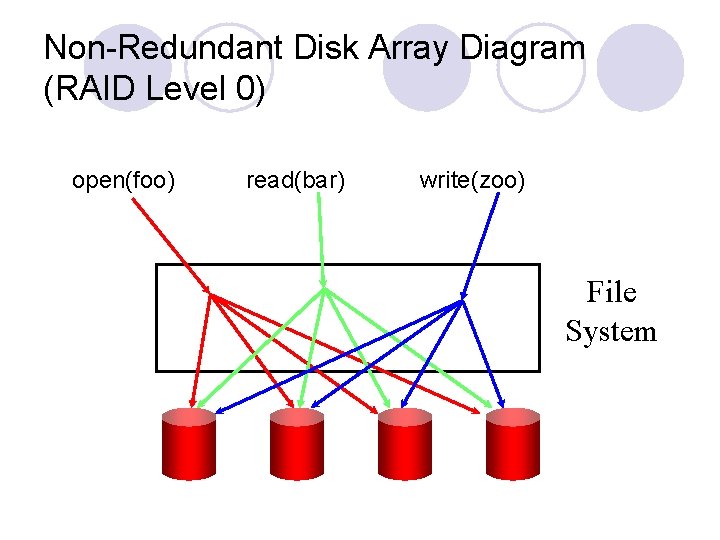

Disk Parallelism Example

[Figure labels: open(foo), read(bar), write(zoo); File System]

Data Striping

- Transparently distributing data over disks
- Benefits
  - Increases disk parallelism
  - Faster response for big requests
- Major parameters
  - Number of disks
  - Size of data interleaf
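
The two parameters, number of disks and interleaf size, fully determine where a logical block lands in a simple round-robin striping scheme. The mapping below is a minimal sketch of one such scheme; `locate` and its parameter names are hypothetical.

```python
def locate(logical_block, num_disks, stripe_unit):
    """Map a logical block number to (disk, block offset on that disk).

    stripe_unit is the interleaf size in blocks: that many consecutive
    logical blocks go to one disk before moving on to the next disk.
    """
    unit_index, within = divmod(logical_block, stripe_unit)
    disk = unit_index % num_disks
    offset = (unit_index // num_disks) * stripe_unit + within
    return disk, offset


if __name__ == "__main__":
    for block in range(10):
        print(block, locate(block, num_disks=4, stripe_unit=2))
```

A `stripe_unit` of 1 spreads even a short run of blocks over all disks, while a large one keeps small requests on a single disk.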

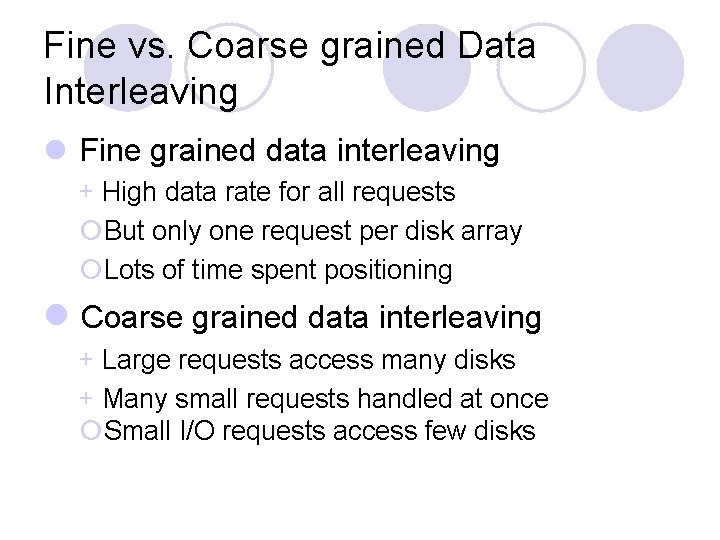

Fine vs. Coarse Grained Data Interleaving

- Fine grained data interleaving
  - (+) High data rate for all requests
  - But only one request per disk array
  - Lots of time spent positioning
- Coarse grained data interleaving
  - (+) Large requests access many disks
  - (+) Many small requests handled at once
  - Small I/O requests access few disks

Reliability of Disk Arrays

- Without disk arrays, failure of one disk among N loses 1/Nth of the data
- With disk arrays (fine grained across all N disks), failure of one disk loses all data
- An array of N disks is 1/Nth as reliable as one disk

Adding Reliability to Disk Arrays

- Buy more reliable disks
- Build redundancy into the disk array
  - Multiple levels of disk array redundancy are possible
  - Most organizations can prevent any data loss from a single disk failure

Basic Reliability Mechanisms

- Duplicate data
- Parity for error detection
- Error Correcting Code for detection and correction

Parity Methods

- Can use parity to detect multiple errors
  - But typically used to detect a single error
- If hardware errors are self-identifying, parity can also correct errors
- When data is written, parity must be written, too
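
When the failed unit identifies itself, as a dead disk does, XOR parity is enough to rebuild it. The sketch below assumes byte-wise XOR parity over equal-sized blocks; `parity` and `reconstruct` are hypothetical helper names.

```python
from functools import reduce


def parity(blocks):
    """Byte-wise XOR of equal-length blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def reconstruct(surviving_blocks, parity_block):
    """Rebuild the one missing block from the survivors plus the parity block."""
    return parity(surviving_blocks + [parity_block])


if __name__ == "__main__":
    data = [b"\x01\x02", b"\x0f\x10", b"\xaa\x55"]
    p = parity(data)
    # Pretend the middle block was lost and rebuild it from the rest.
    rebuilt = reconstruct([data[0], data[2]], p)
    print(p.hex(), rebuilt == data[1])
```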

Error-Correcting Code

- Based on Hamming codes, mostly
- Not only detects an error, but identifies which bit is wrong
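
As a concrete instance, a Hamming(7,4) code protects 4 data bits with 3 check bits and can locate any single flipped bit. The sketch below uses the common layout with check bits at positions 1, 2, and 4; the function names are hypothetical.

```python
def hamming_encode(d):
    """Encode 4 data bits as a 7-bit codeword.

    Positions 1..7 hold p1 p2 d1 p3 d2 d3 d4.
    """
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming_correct(c):
    """Return (corrected codeword, 1-based position of the flipped bit, or 0)."""
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    pos = s1 + 2 * s2 + 4 * s3  # the syndrome spells out the bad position
    fixed = list(c)
    if pos:
        fixed[pos - 1] ^= 1
    return fixed, pos


if __name__ == "__main__":
    word = hamming_encode([1, 0, 1, 1])
    damaged = list(word)
    damaged[4] ^= 1  # flip the bit at position 5
    print(word, hamming_correct(damaged))
```

This handles a single flipped bit only; two flipped bits would be miscorrected.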

RAID Architectures

- Redundant Arrays of Independent Disks
- Basic architectures for organizing disks into arrays
- Assuming independent control of each disk
- Standard classification scheme divides architectures into levels

Non-Redundant Disk Arrays (RAID Level 0)

- No redundancy at all
- So, what we just talked about
- Any failure causes data loss

Non-Redundant Disk Array Diagram (RAID Level 0)

[Figure labels: open(foo), read(bar), write(zoo); File System]

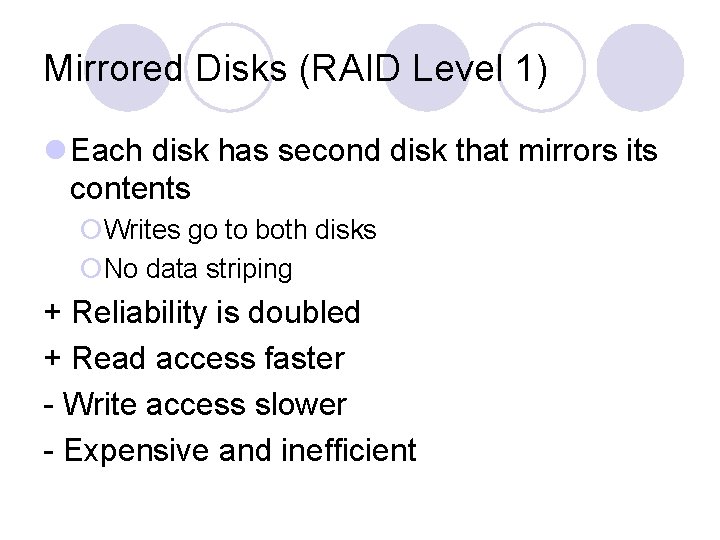

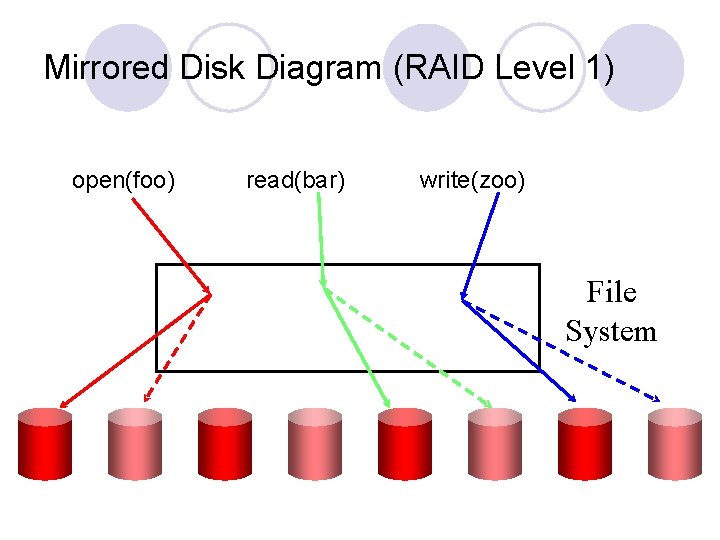

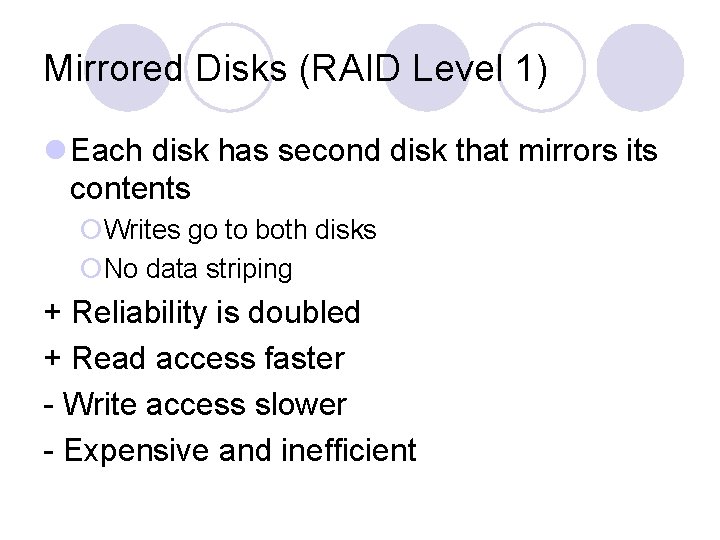

Mirrored Disks (RAID Level 1)

- Each disk has a second disk that mirrors its contents
  - Writes go to both disks
  - No data striping
- (+) Reliability is doubled
- (+) Read access faster
- (-) Write access slower
- (-) Expensive and inefficient

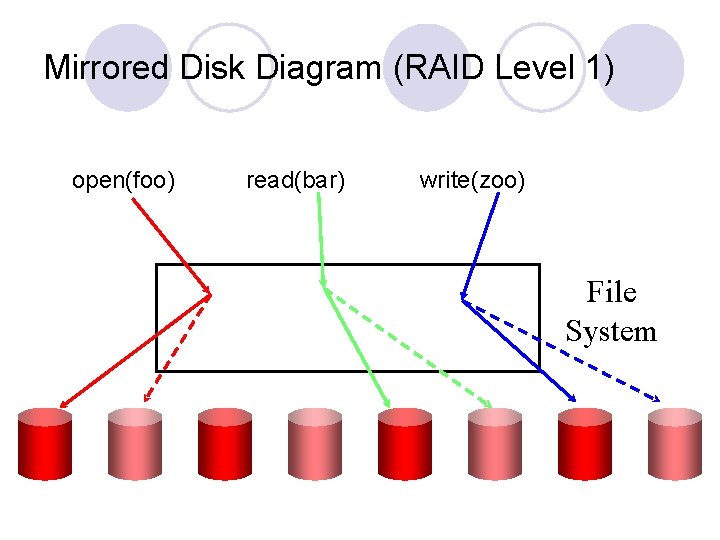

Mirrored Disk Diagram (RAID Level 1)

[Figure labels: open(foo), read(bar), write(zoo); File System]

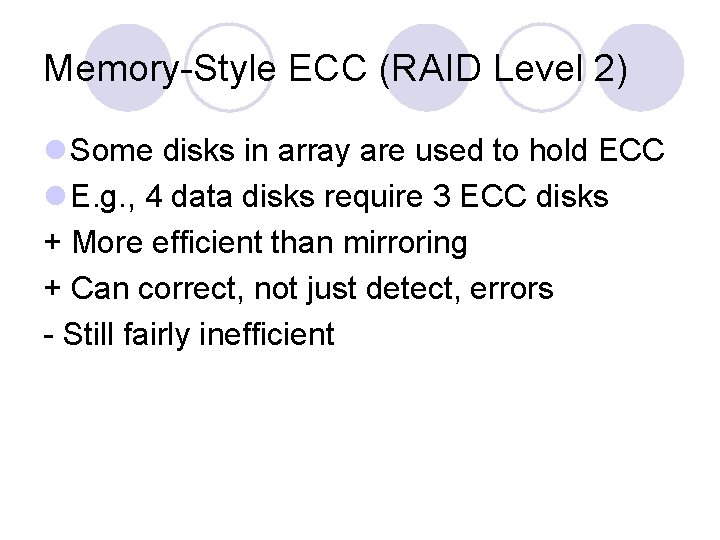

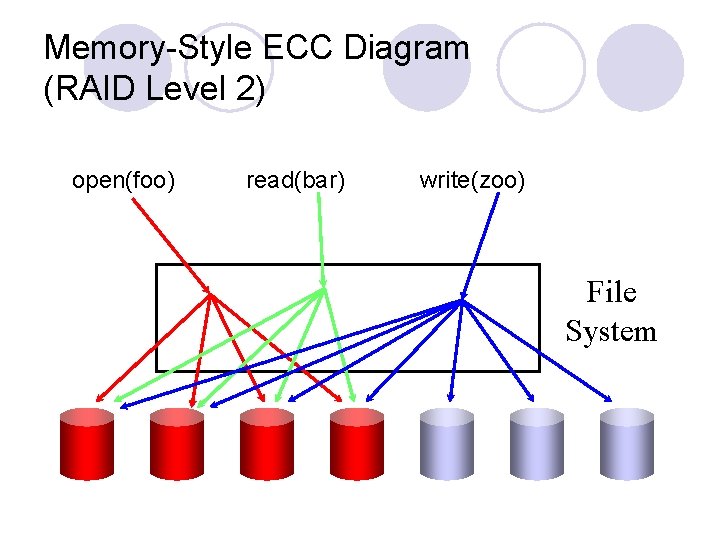

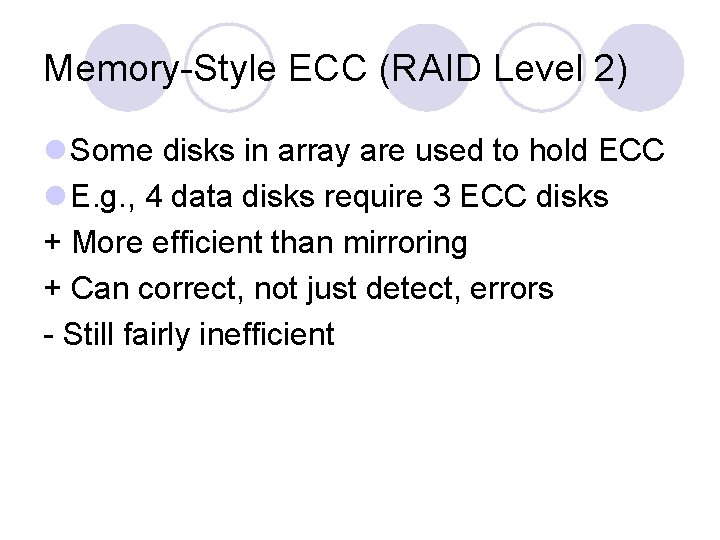

Memory-Style ECC (RAID Level 2)

- Some disks in the array are used to hold ECC
- E.g., 4 data disks require 3 ECC disks
- (+) More efficient than mirroring
- (+) Can correct, not just detect, errors
- (-) Still fairly inefficient

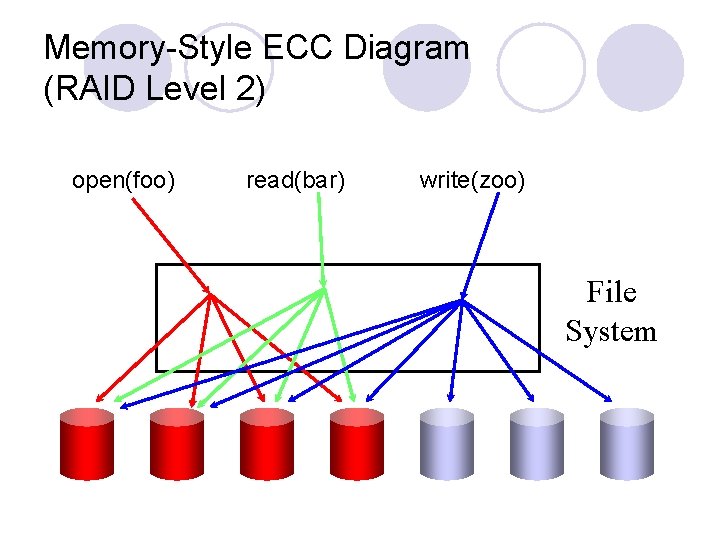

Memory-Style ECC Diagram (RAID Level 2)

[Figure labels: open(foo), read(bar), write(zoo); File System]

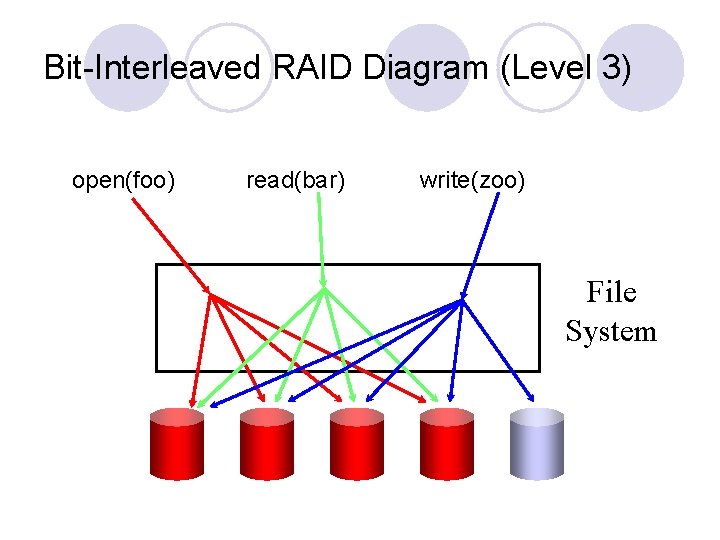

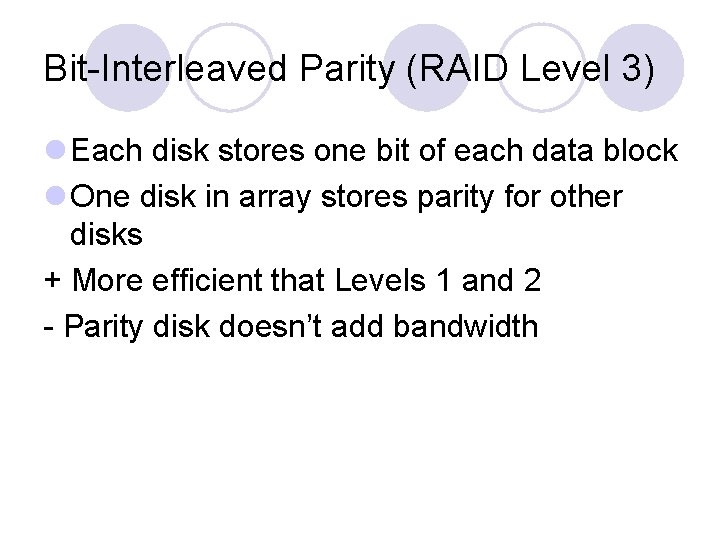

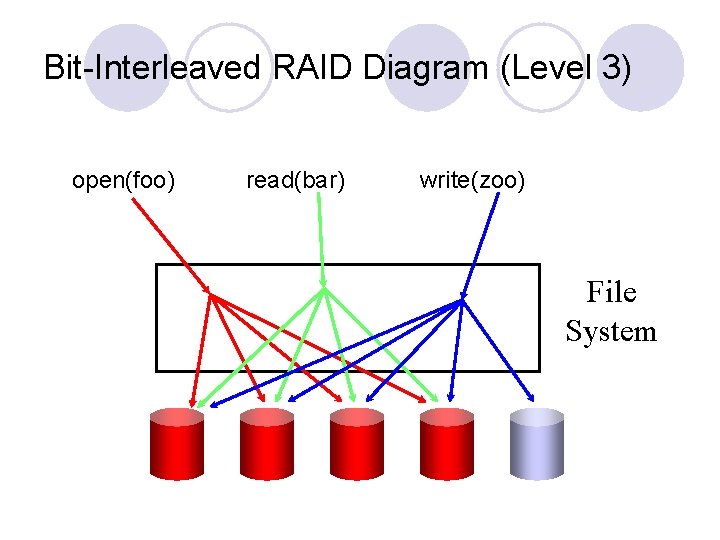

Bit-Interleaved Parity (RAID Level 3)

- Each disk stores one bit of each data block
- One disk in the array stores parity for the other disks
- (+) More efficient than Levels 1 and 2
- (-) Parity disk doesn’t add bandwidth

Bit-Interleaved RAID Diagram (Level 3)

[Figure labels: open(foo), read(bar), write(zoo); File System]

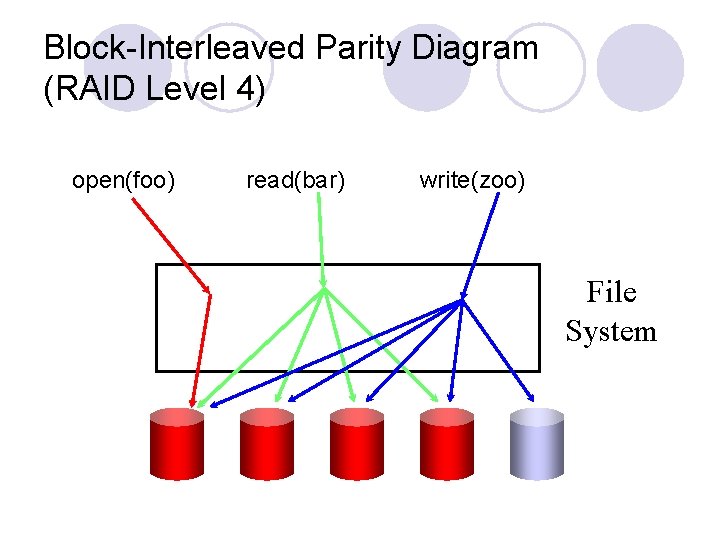

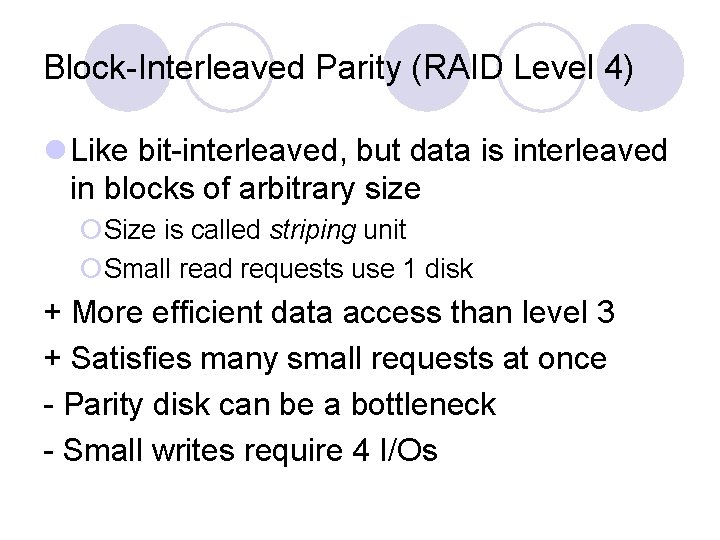

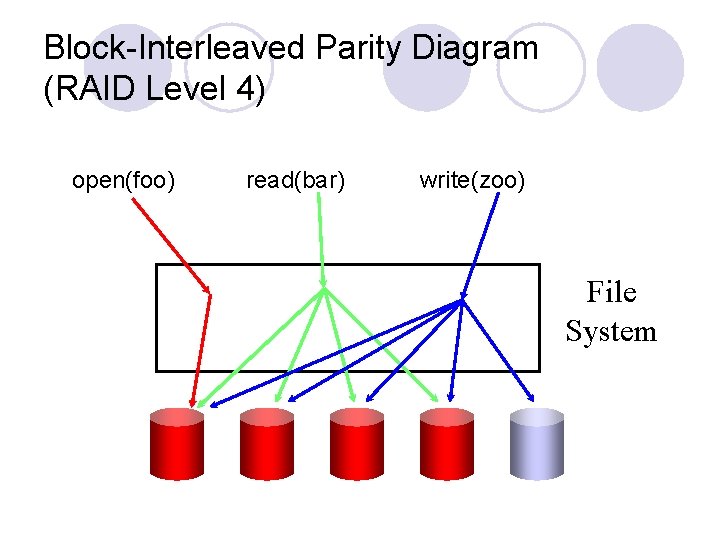

Block-Interleaved Parity (RAID Level 4)

- Like bit-interleaved, but data is interleaved in blocks of arbitrary size
  - Size is called the striping unit
  - Small read requests use 1 disk
- (+) More efficient data access than level 3
- (+) Satisfies many small requests at once
- (-) Parity disk can be a bottleneck
- (-) Small writes require 4 I/Os
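
The four I/Os of a small write come from a read-modify-write of both the data block and its parity block. The sketch below assumes XOR parity and models each disk as a Python list of equal-sized byte blocks; `small_write` and `xor` are hypothetical helpers.

```python
def xor(a, b):
    """Byte-wise XOR of two equal-length blocks."""
    return bytes(x ^ y for x, y in zip(a, b))


def small_write(data_disk, parity_disk, block_no, new_data):
    """Update one data block and its parity; return the number of disk I/Os."""
    old_data = data_disk[block_no]      # I/O 1: read old data
    old_parity = parity_disk[block_no]  # I/O 2: read old parity
    data_disk[block_no] = new_data      # I/O 3: write new data
    # I/O 4: write new parity = old parity with old data removed, new data added
    parity_disk[block_no] = xor(xor(old_parity, old_data), new_data)
    return 4


if __name__ == "__main__":
    d0, d1 = [b"\x01"], [b"\x02"]
    p = [xor(d0[0], d1[0])]
    ios = small_write(d0, p, 0, b"\x05")
    print(ios, p[0] == xor(d0[0], d1[0]))  # parity still matches the data
```

The other data disks are never touched, but every small write goes through the parity disk.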

Block-Interleaved Parity Diagram (RAID Level 4)

[Figure labels: open(foo), read(bar), write(zoo); File System]

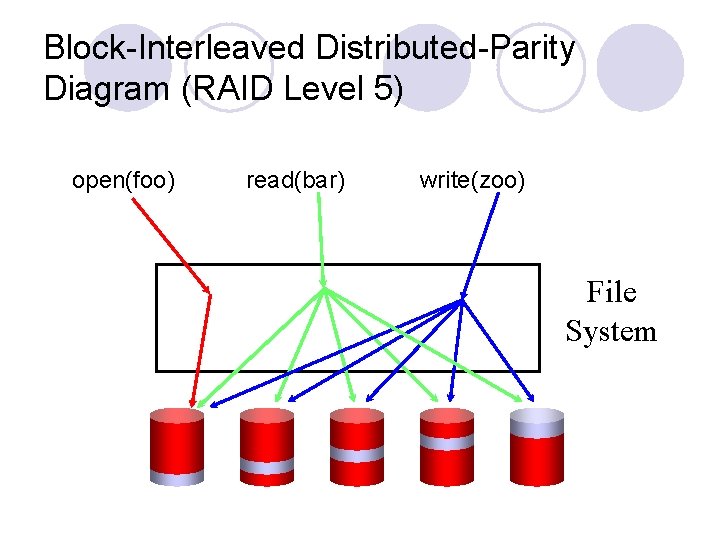

Block-Interleaved Distributed-Parity (RAID Level 5)

- Spread the parity out over all disks
- (+) No parity disk bottleneck
- (+) All disks contribute read bandwidth
- (-) Requires 4 I/Os for small writes
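
Spreading the parity can be as simple as rotating the parity position from one stripe to the next. The rotation below is one possible placement chosen for illustration, since real arrays use several different layouts; `parity_disk` and `stripe_layout` are hypothetical names.

```python
def parity_disk(stripe, num_disks):
    """Disk holding parity for a stripe, rotating backward from the last disk."""
    return (num_disks - 1 - stripe) % num_disks


def stripe_layout(stripe, num_disks):
    """Return 'P' for the parity disk and 'D' for data disks in one stripe."""
    p = parity_disk(stripe, num_disks)
    return ["P" if d == p else "D" for d in range(num_disks)]


if __name__ == "__main__":
    for stripe in range(5):
        print(stripe, " ".join(stripe_layout(stripe, num_disks=4)))
```

Over any run of `num_disks` consecutive stripes, each disk holds parity exactly once, so no single disk absorbs all the parity updates.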

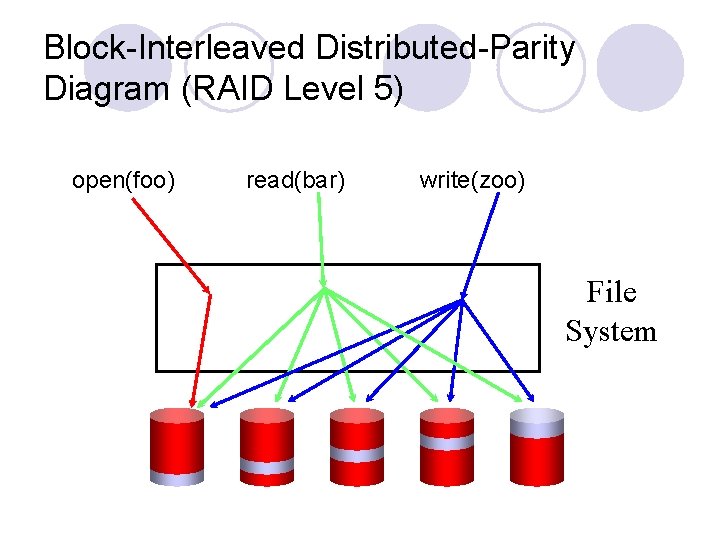

Block-Interleaved Distributed-Parity Diagram (RAID Level 5)

[Figure labels: open(foo), read(bar), write(zoo); File System]

Other RAID Configurations

- RAID 6
  - Can survive two disk failures
- RAID 10 (RAID 1+0)
  - Data striped across mirrored pairs
- RAID 01 (RAID 0+1)
  - Mirroring two RAID 0 arrays
- RAID 15, RAID 51

Where Did RAID Look For Performance Improvements?

- Parallel use of disks
  - Improve overall delivered bandwidth by getting data from multiple disks
- Biggest problem is small write performance
- But we know how to deal with small writes...

Bonus (1 critique)

- File system content analysis
  - Need root access to computers running workloads that generate lots of files (e.g., simulation, web development)
    - Used by government
    - Used by industry
    - Used by academia
  - Run content analysis based on (1) a static snapshot (e.g., recursive ls), (2) a one-week dynamic trace (e.g., strace of file system calls)

Bonus (1 critique)

- Contact Bobby Roy for the trace scripts
- Report
  - Description of the workload environment (e.g., computer from a company, running simulations)
  - Number of files and bytes that can be (1) reinstalled (e.g., software packages), (2) redownloaded (e.g., multimedia files), (3) regenerated (e.g., .o files)
  - Number of files/bytes that contain user-generated content