Feature Selection and Causal discovery Isabelle Guyon Clopinet

Feature Selection and Causal Discovery
Isabelle Guyon, Clopinet
André Elisseeff, IBM Zürich
Constantin Aliferis, Vanderbilt University

Road Map
Feature selection
• What is feature selection?
• Why is it hard?
• What works best in practice?
• How to make progress using causality?
• Can causal discovery benefit from feature selection?
Causal discovery

Introduction

Causal discovery
• What affects your health?
• What affects the economy?
• What affects climate change?
and… Which actions will have beneficial effects?

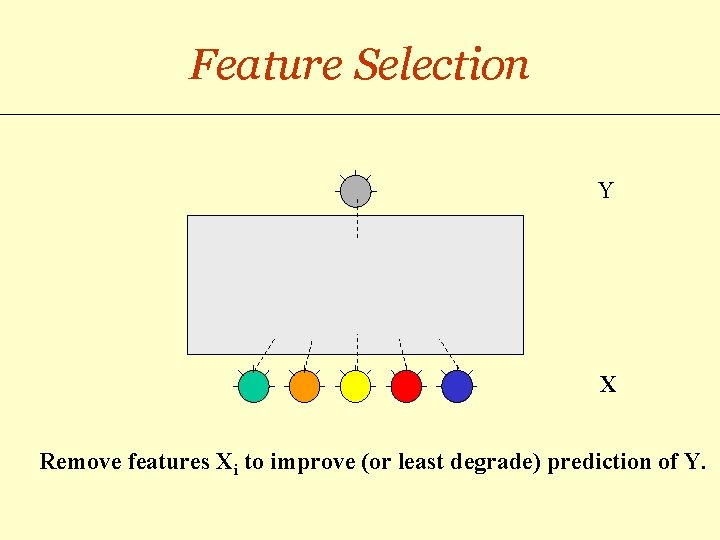

Feature Selection
Remove features Xi from X to improve (or degrade as little as possible) the prediction of Y.

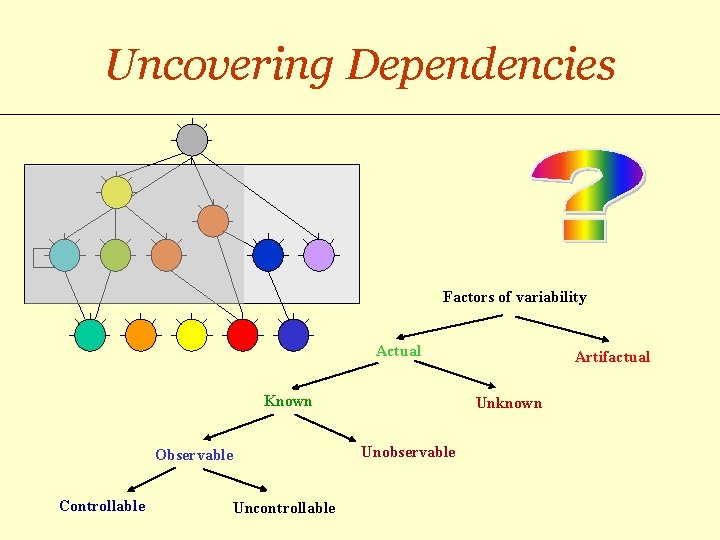

Uncovering Dependencies
Factors of variability (diagram labels): actual vs. artifactual; known vs. unknown; observable vs. unobservable; controllable vs. uncontrollable.

Predictions and Actions
See e.g. Judea Pearl, “Causality”, 2000.

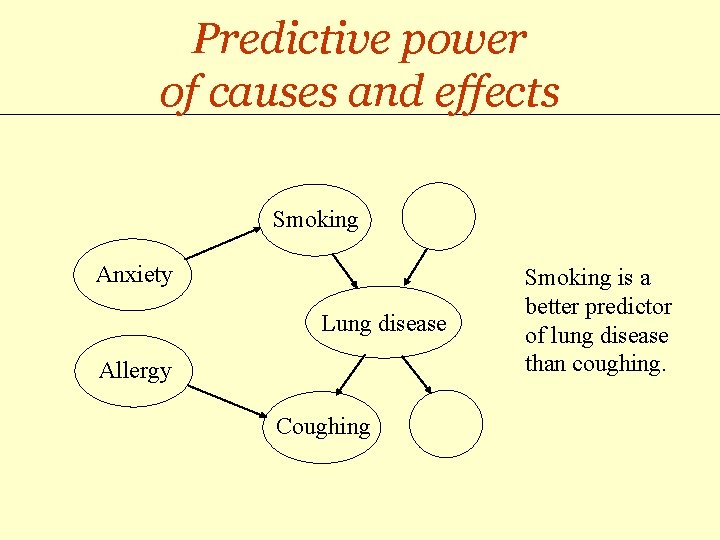

Predictive power of causes and effects
(Graph nodes: Smoking, Anxiety, Lung disease, Allergy, Coughing.)
Smoking is a better predictor of lung disease than coughing.

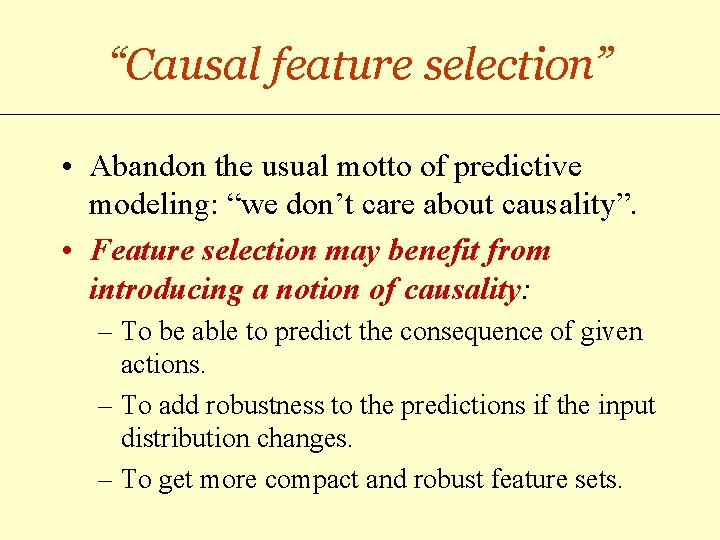

“Causal feature selection”
• Abandon the usual motto of predictive modeling: “we don’t care about causality”.
• Feature selection may benefit from introducing a notion of causality:
– To be able to predict the consequence of given actions.
– To add robustness to the predictions if the input distribution changes.
– To get more compact and robust feature sets.

“FS-enabled causal discovery”
Isn’t causal discovery solved with experiments?
• No! Randomized Controlled Trials (RCT) may be:
– Unethical (e.g. an RCT about the effects of smoking)
– Costly and time-consuming
– Impossible (e.g. astronomy)
• Observational data may be available to help plan future experiments.
Causal discovery may benefit from feature selection.

Feature selection basics

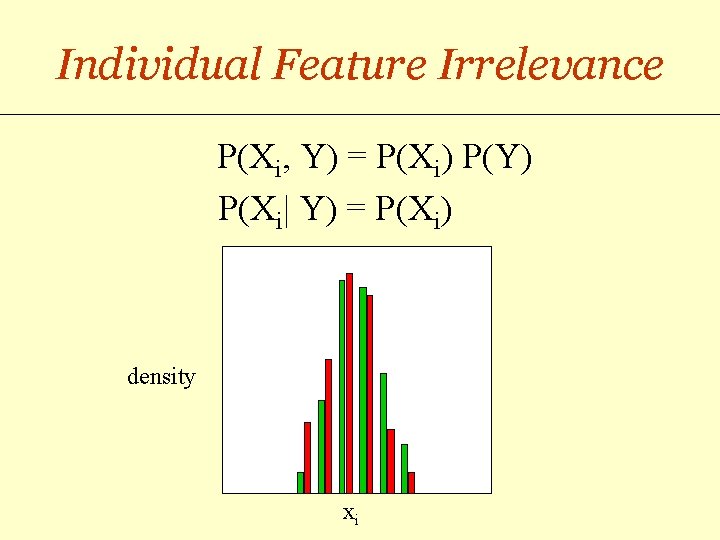

Individual Feature Irrelevance
$P(X_i, Y) = P(X_i)\,P(Y)$
$P(X_i \mid Y) = P(X_i)$
(Figure: density of $x_i$.)

Individual Feature Relevance
(Figure: densities of $x_i$ for the two classes, labeled m−, m+ and s−, s+; ROC curve of sensitivity vs. specificity, with the area under the curve, AUC.)
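One common way to turn the ROC picture above into a per-feature relevance score is to use the AUC obtained when a single feature is taken as the classifier score. The sketch below is only an illustration under stated assumptions: `auc_score` and the toy arrays are hypothetical names and made-up data, and the labels are assumed to be coded as −1/+1.

```python
import numpy as np

def auc_score(x, y):
    """AUC of feature x for labels y in {-1, +1}.

    Computed as the fraction of (positive, negative) example pairs
    that x ranks correctly, with ties counting one half.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y)
    pos, neg = x[y == 1], x[y == -1]
    diff = pos[:, None] - neg[None, :]      # all pairwise differences
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size)

# Toy data, made up for illustration only
y = np.array([1, 1, 1, -1, -1, -1])
x_good = np.array([2.0, 3.0, 2.5, 0.1, 0.5, 1.0])  # separates the classes
x_poor = np.array([1.0, 0.0, 2.0, 1.0, 0.0, 2.0])  # same values in both classes

print(auc_score(x_good, y), auc_score(x_poor, y))
```

An AUC near 0.5 suggests an individually uninformative feature; values near 0 or 1 suggest individual relevance.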

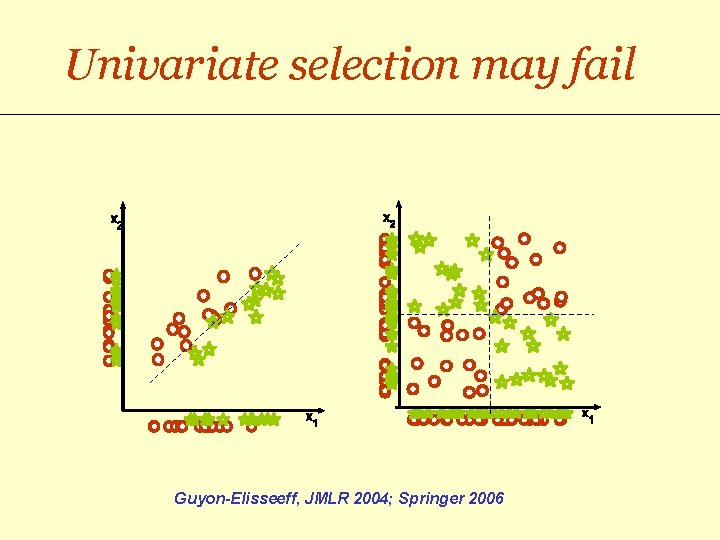

Univariate selection may fail
Guyon-Elisseeff, JMLR 2004; Springer 2006

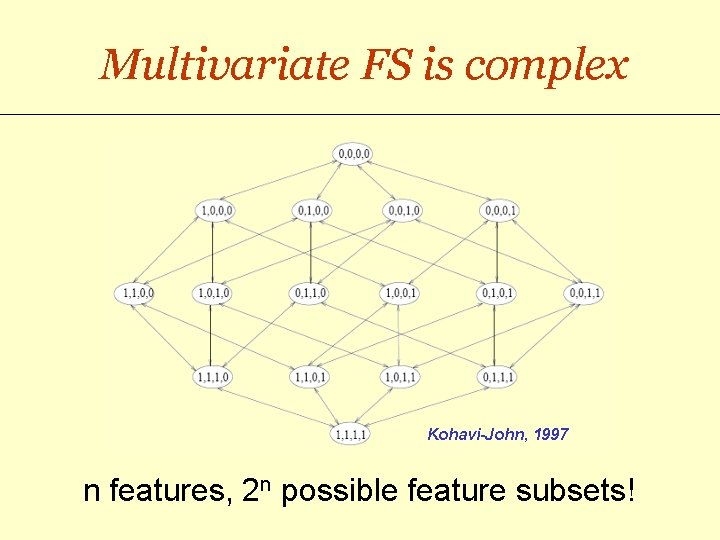

Multivariate FS is complex
Kohavi-John, 1997
n features, $2^n$ possible feature subsets!

FS strategies
• Wrappers:
– Use the target risk functional to evaluate feature subsets.
– Train one learning machine for each feature subset investigated.
• Filters:
– Use an evaluation function other than the target risk functional.
– Often no learning machine is involved in the feature selection process.

Reducing complexity
• For wrappers:
– Use forward or backward selection: $O(n^2)$ steps.
– Mix forward and backward search, e.g. floating search.
• For filters:
– Use a cheap evaluation function (no learning machine).
– Make independence assumptions: n evaluations.
• Embedded methods:
– Do not retrain the LM at every step: e.g. RFE, n steps.
– Search FS space and LM parameter space simultaneously: e.g. 1-norm/Lasso approaches.
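To make the forward-selection count concrete, here is a minimal sketch of a greedy forward search that also counts how many feature subsets it evaluates. The function names, the synthetic data, and the scoring function are assumptions made for illustration; in particular the score is a training error of a linear fit, chosen for brevity, whereas a real wrapper would estimate the target risk on held-out data.

```python
import numpy as np

def forward_selection(X, y, score):
    """Greedy forward selection.

    Returns the features in the order they were added and the
    number of subset evaluations performed.
    """
    n = X.shape[1]
    selected, remaining, n_evals = [], list(range(n)), 0
    while remaining:
        best_j, best_s = None, -np.inf
        for j in remaining:
            s = score(X[:, selected + [j]], y)   # evaluate one candidate subset
            n_evals += 1
            if s > best_s:
                best_j, best_s = j, s
        selected.append(best_j)
        remaining.remove(best_j)
    return selected, n_evals

def neg_lstsq_error(Xs, y):
    """Hypothetical score: negative squared training error of a linear fit."""
    w, *_ = np.linalg.lstsq(Xs, y, rcond=None)
    return -float(np.sum((Xs @ w - y) ** 2))

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 6))
y = 3.0 * X[:, 2] + 0.1 * rng.normal(size=50)    # only feature 2 matters

order, n_evals = forward_selection(X, y, neg_lstsq_error)
print(order[0], n_evals, 2 ** X.shape[1])
```

With n = 6 features the search evaluates n(n+1)/2 = 21 subsets, compared with 2^6 = 64 for exhaustive search; the gap widens quickly as n grows.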

In practice…
• Univariate feature selection often yields better accuracy results than multivariate feature selection.
• NO feature selection at all sometimes gives the best accuracy results, even in the presence of known distractors.
• Multivariate methods usually claim only better “parsimony”.
• How can we make multivariate FS work better?
NIPS 2003 and WCCI 2006 challenges: http://clopinet.com/challenges

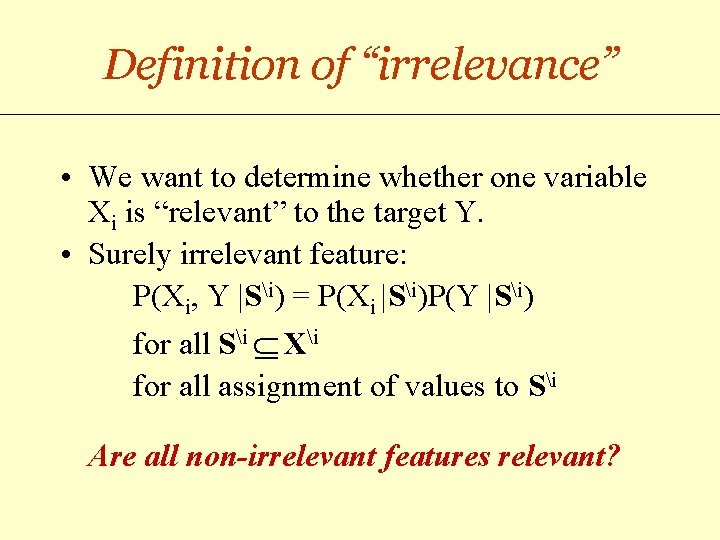

Definition of “irrelevance”
• We want to determine whether one variable $X_i$ is “relevant” to the target $Y$.
• Surely irrelevant feature: $P(X_i, Y \mid S_i) = P(X_i \mid S_i)\,P(Y \mid S_i)$ for all subsets $S_i$ of the features other than $X_i$, and for all assignments of values to $S_i$.
Are all non-irrelevant features relevant?

Causality enters the picture

Causal Bayesian networks
• Bayesian network:
– Graph with random variables $X_1, X_2, \ldots, X_n$ as nodes.
– Dependencies represented by edges.
– Allows us to compute $P(X_1, X_2, \ldots, X_n)$ as $\prod_i P(X_i \mid \mathrm{Parents}(X_i))$.
– Edge directions have no causal meaning.
• Causal Bayesian network: edge directions indicate causality.
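The product formula can be illustrated with a small binary network. Everything specific in this sketch is an assumption: the edge structure (anxiety → smoking → lung disease → coughing ← allergy) is one plausible reading of the example graph used in these slides, and all probabilities are made-up numbers.

```python
from itertools import product

# Assumed structure: each node maps to the list of its parents
parents = {
    "anxiety": [],
    "smoking": ["anxiety"],
    "allergy": [],
    "lung_disease": ["smoking"],
    "coughing": ["lung_disease", "allergy"],
}

# Hypothetical P(node = 1 | parent values), keyed by the parents' values
cpt = {
    "anxiety": {(): 0.3},
    "smoking": {(0,): 0.2, (1,): 0.5},
    "allergy": {(): 0.1},
    "lung_disease": {(0,): 0.01, (1,): 0.1},
    "coughing": {(0, 0): 0.05, (0, 1): 0.6, (1, 0): 0.7, (1, 1): 0.9},
}

def joint(assign):
    """P(assignment) as the product of P(node | parents(node))."""
    p = 1.0
    for node, pa in parents.items():
        p1 = cpt[node][tuple(assign[q] for q in pa)]
        p *= p1 if assign[node] == 1 else 1.0 - p1
    return p

nodes = list(parents)
total = sum(joint(dict(zip(nodes, vals)))
            for vals in product([0, 1], repeat=len(nodes)))
p_all_zero = joint({name: 0 for name in nodes})
print(total, p_all_zero)
```

Five binary variables need 31 free parameters for an unrestricted joint distribution; this factorization uses 10.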

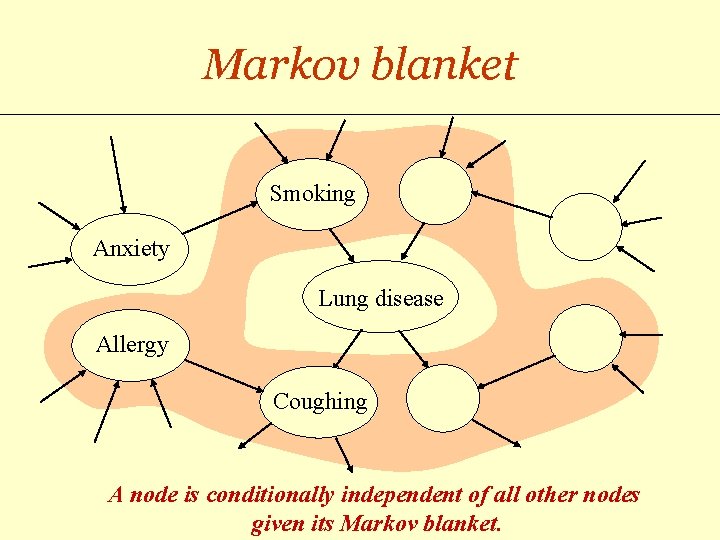

Markov blanket
(Graph nodes: Smoking, Anxiety, Lung disease, Allergy, Coughing.)
A node is conditionally independent of all other nodes given its Markov blanket.
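In a Bayesian network, the Markov blanket of a node consists of its parents, its children, and the other parents of its children (its "spouses"). The sketch below reads it off a graph; the edge structure is an assumption (anxiety → smoking → lung disease → coughing ← allergy), since the slide text lists only the node names, and `markov_blanket` is a hypothetical helper.

```python
def markov_blanket(parents, target):
    """Parents, children, and spouses of `target` in a DAG.

    `parents` maps each node to the list of its parents.
    """
    children = [n for n, pa in parents.items() if target in pa]
    spouses = [p for c in children for p in parents[c] if p != target]
    return set(parents[target]) | set(children) | set(spouses)

# Assumed structure, for illustration only
parents = {
    "anxiety": [],
    "smoking": ["anxiety"],
    "allergy": [],
    "lung_disease": ["smoking"],
    "coughing": ["lung_disease", "allergy"],
}

print(sorted(markov_blanket(parents, "lung_disease")))
```

Under this assumed graph, anxiety falls outside the blanket of lung disease, while allergy is included as a spouse through the common child coughing.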

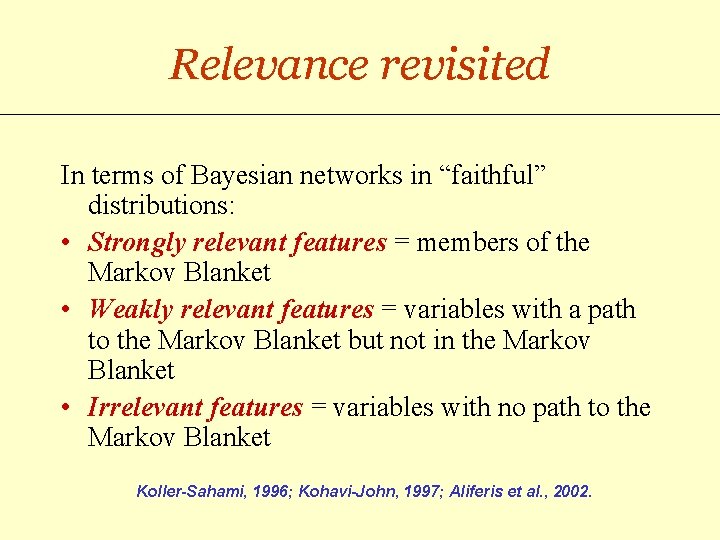

Relevance revisited
In terms of Bayesian networks in “faithful” distributions:
• Strongly relevant features = members of the Markov Blanket
• Weakly relevant features = variables with a path to the Markov Blanket but not in the Markov Blanket
• Irrelevant features = variables with no path to the Markov Blanket
Koller-Sahami, 1996; Kohavi-John, 1997; Aliferis et al., 2002.

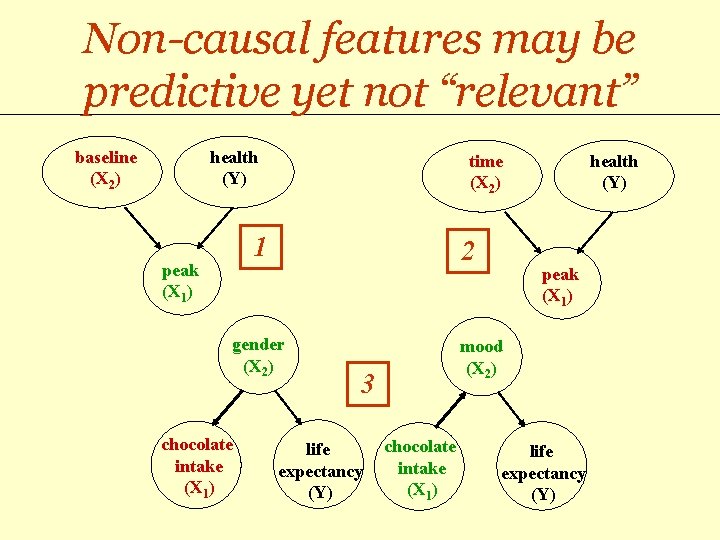

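Example 1: Is $X_2$ “relevant”?
Variables: baseline ($X_2$), peak ($X_1$), health ($Y$: disease or normal).
$P(X_1, X_2, Y) = P(X_1 \mid X_2, Y)\,P(X_2)\,P(Y)$
Under this factorization $X_2 \perp Y$ marginally, but in general $X_2 \not\perp Y \mid X_1$.
(Figure: baseline $x_2$ vs. peak $x_1$ for the disease and normal classes.)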
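A small simulation can illustrate how a variable that is useless on its own becomes useful in context under the factorization $P(X_1 \mid X_2, Y)\,P(X_2)\,P(Y)$. The generative model below is an assumption made purely for illustration (the peak is the health signal plus twice the baseline plus a little noise), and `r2` is a hypothetical helper that reports the variance explained by a linear fit.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 20000
y = rng.choice([-1.0, 1.0], size=n)           # health: disease / normal
x2 = rng.normal(size=n)                       # baseline, drawn independently of y
x1 = y + 2.0 * x2 + 0.1 * rng.normal(size=n)  # peak depends on both

def r2(features, target):
    """Fraction of the target's variance explained by a linear fit."""
    A = np.column_stack(features + [np.ones(len(target))])
    w, *_ = np.linalg.lstsq(A, target, rcond=None)
    resid = target - A @ w
    return 1.0 - resid.var() / target.var()

r2_x2 = r2([x2], y)          # baseline alone
r2_x1 = r2([x1], y)          # peak alone
r2_both = r2([x1, x2], y)    # peak corrected by the baseline
print(round(r2_x2, 3), round(r2_x1, 3), round(r2_both, 3))
```

The baseline alone explains essentially nothing about $Y$, yet adding it to the peak raises the explained variance sharply, which is why a univariate filter would wrongly discard it.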

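Example 2: Are $X_1$ and $X_2$ “relevant”?
Variables: sample processing time ($X_2$), peak ($X_1$), health ($Y$: disease or normal).
$X_1 \perp Y$, $X_2 \perp Y$, $X_1 \perp X_2$
$P(X_1, X_2, Y) = P(X_1 \mid X_2, Y)\,P(X_2)\,P(Y)$
(Figure: peak vs. time for the disease and normal classes.)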

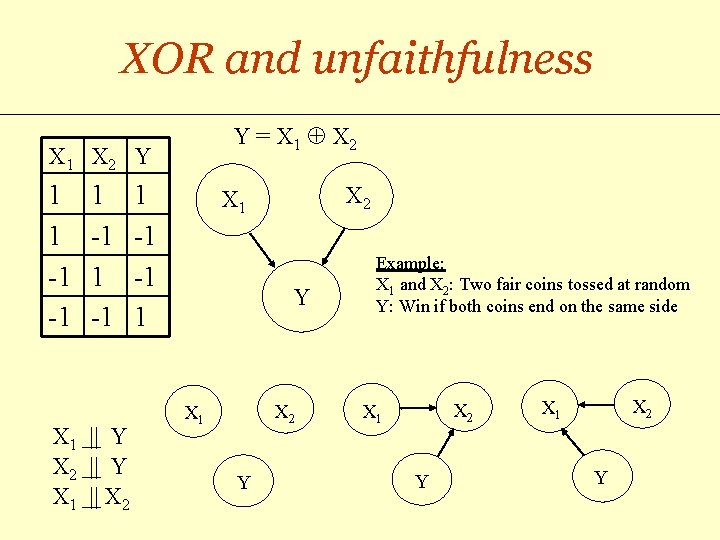

XOR and unfaithfulness
$X_1 \perp Y$, $X_2 \perp Y$, $X_1 \perp X_2$
Example:
$X_1$ and $X_2$: two fair coins tossed at random.
$Y$: win if both coins end on the same side.
In ±1 coding, $Y = X_1 X_2$: $(X_1, X_2) = (1, 1)$ or $(-1, -1)$ gives $Y = 1$; $(1, -1)$ or $(-1, 1)$ gives $Y = -1$.
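The coin example is small enough to check by exact enumeration. The sketch below assumes the ±1 coding with $Y = X_1 X_2$ (win = +1); `prob` is a hypothetical helper that computes probabilities over the four equally likely outcomes.

```python
from fractions import Fraction
from itertools import product

# The four equally likely outcomes (x1, x2, y), with y = x1 * x2
outcomes = [(x1, x2, x1 * x2) for x1, x2 in product([1, -1], repeat=2)]

def prob(event, given=lambda o: True):
    """Exact P(event | given) over the uniform outcome set."""
    cond = [o for o in outcomes if given(o)]
    return Fraction(sum(1 for o in cond if event(o)), len(cond))

win = lambda o: o[2] == 1
p_win = prob(win)
p_win_given_x1 = prob(win, lambda o: o[0] == 1)
p_win_given_x2 = prob(win, lambda o: o[1] == 1)
p_win_given_both = prob(win, lambda o: o[0] == 1 and o[1] == 1)
print(p_win, p_win_given_x1, p_win_given_x2, p_win_given_both)
```

Knowing either coin alone leaves the probability of winning at 1/2, while knowing both determines the outcome: each feature is individually uninformative, yet the pair is fully predictive.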

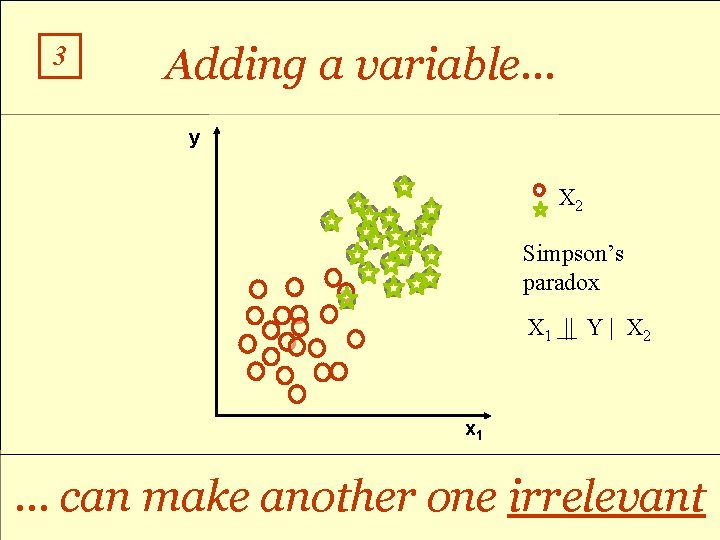

Example 3: Adding a variable… can make another one irrelevant
Simpson’s paradox: $X_1 \perp Y \mid X_2$
(Figure: $y$ vs. $x_1$, with the points grouped by $X_2$.)
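The effect can be reproduced with synthetic data in which a binary variable $X_2$ drives both $X_1$ and $Y$, while $X_1$ has no influence on $Y$. The generative model and all coefficients below are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 20000
x2 = rng.integers(0, 2, size=n)             # binary grouping variable
x1 = x2 + 0.5 * rng.normal(size=n)          # depends on x2 only
y = 2.0 * x2 + 0.5 * rng.normal(size=n)     # depends on x2 only

def corr(a, b):
    """Pearson correlation coefficient."""
    return float(np.corrcoef(a, b)[0, 1])

marginal = corr(x1, y)
within = [corr(x1[x2 == g], y[x2 == g]) for g in (0, 1)]
print(round(marginal, 3), [round(w, 3) for w in within])
```

Pooled over both groups, $X_1$ and $Y$ are clearly correlated; within each group the correlation is close to zero, so $X_1$ carries no additional information about $Y$ once $X_2$ is known.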

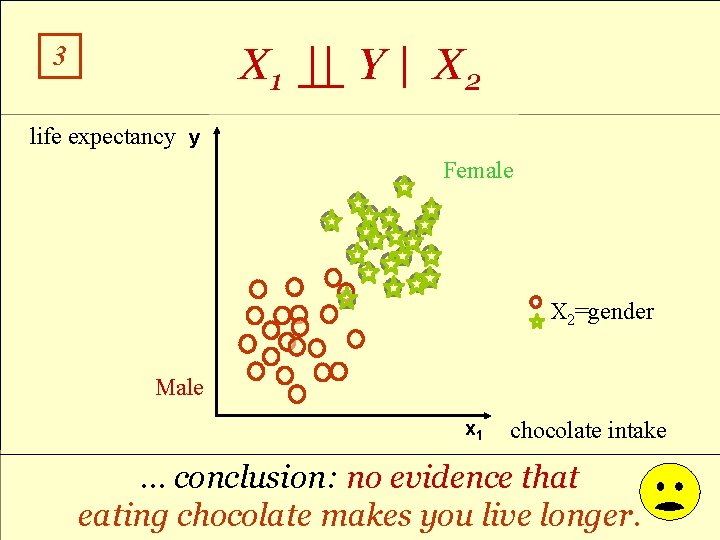

Example 3: Is chocolate good for your health?
$X_2$ = gender (female or male); $X_1 \perp Y \mid X_2$
(Figure: life expectancy $y$ vs. chocolate intake $x_1$, with the points grouped by gender.)
… conclusion: no evidence that eating chocolate makes you live longer.

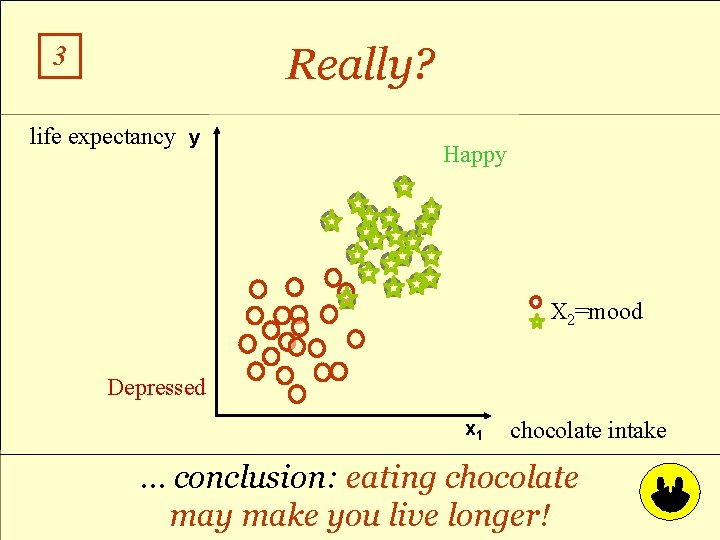

Example 3: Is chocolate good for your health? Really?
$X_2$ = mood (happy or depressed)
(Figure: life expectancy $y$ vs. chocolate intake $x_1$, with the points grouped by mood.)
… conclusion: eating chocolate may make you live longer!

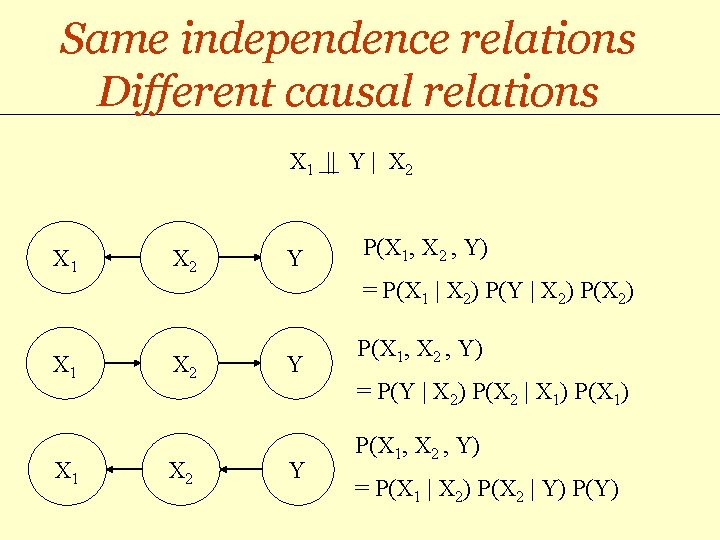

Same independence relations, different causal relations
$X_1 \perp Y \mid X_2$ holds for each of the following factorizations of the graph over $X_1$, $X_2$, $Y$:
• $P(X_1, X_2, Y) = P(X_1 \mid X_2)\,P(Y \mid X_2)\,P(X_2)$
• $P(X_1, X_2, Y) = P(Y \mid X_2)\,P(X_2 \mid X_1)\,P(X_1)$
• $P(X_1, X_2, Y) = P(X_1 \mid X_2)\,P(X_2 \mid Y)\,P(Y)$
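That the three factorizations describe the same joint distribution follows from applying the product rule to one pair of variables at a time. A short derivation, using only the symbols of the slide:

```latex
\begin{align*}
P(X_1 \mid X_2)\,P(Y \mid X_2)\,P(X_2)
  &= P(Y \mid X_2)\,\bigl[P(X_1 \mid X_2)\,P(X_2)\bigr]
   = P(Y \mid X_2)\,P(X_2 \mid X_1)\,P(X_1) \\
  &= P(X_1 \mid X_2)\,\bigl[P(Y \mid X_2)\,P(X_2)\bigr]
   = P(X_1 \mid X_2)\,P(X_2 \mid Y)\,P(Y)
\end{align*}
```

The first line uses $P(X_1 \mid X_2)\,P(X_2) = P(X_1, X_2) = P(X_2 \mid X_1)\,P(X_1)$, and the second uses $P(Y \mid X_2)\,P(X_2) = P(X_2, Y) = P(X_2 \mid Y)\,P(Y)$. Observational data alone therefore cannot distinguish these three structures.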

Example 3: Is $X_1$ “relevant”?
$X_1 \perp Y \mid X_2$
(Graph 1 nodes: gender ($X_2$), chocolate intake ($X_1$), life expectancy ($Y$). Graph 2 nodes: mood ($X_2$), chocolate intake ($X_1$), life expectancy ($Y$).)

Non-causal features may be predictive yet not “relevant”
(Figure: the graphs of the three examples.)
• Example 1: baseline ($X_2$), peak ($X_1$), health ($Y$)
• Example 2: time ($X_2$), peak ($X_1$), health ($Y$)
• Example 3: gender ($X_2$) or mood ($X_2$), chocolate intake ($X_1$), life expectancy ($Y$)

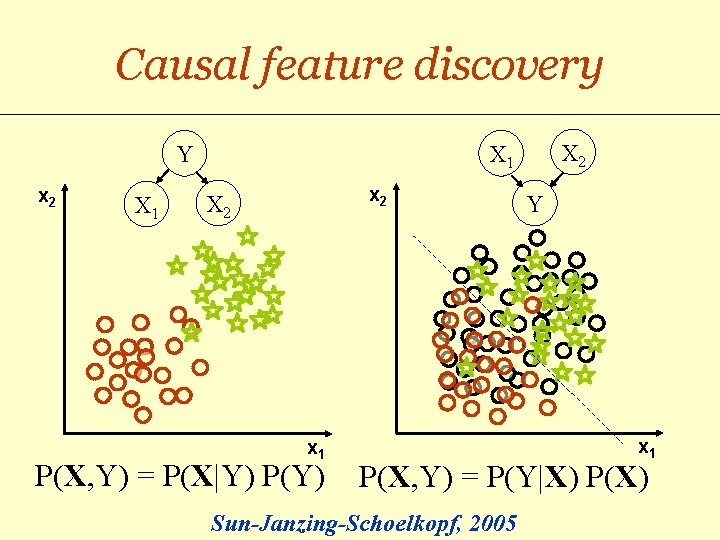

Causal feature discovery
$P(X, Y) = P(X \mid Y)\,P(Y)$
$P(X, Y) = P(Y \mid X)\,P(X)$
(Figure: one graph over $X_1$, $X_2$, $Y$ and one plot in the $(x_1, x_2)$ plane for each factorization.)
Sun-Janzing-Schoelkopf, 2005

Conclusion
• Feature selection focuses on uncovering subsets of variables $X_1, X_2, \ldots$ predictive of the target $Y$.
• Taking a closer look at the type of dependencies may help refine the notion of variable relevance.
• Uncovering causal relationships may yield better feature selection, robust under distribution changes.
• These “causal features” may be better targets of action.
