
Fault Tolerance
• Fault tolerance concepts
• Implementation – distributed agreement
• Distributed agreement meets transaction processing: 2- and 3-phase commit
Bonus material
• Implementation – reliable point-to-point communication
• Implementation – process groups
• Implementation – reliable multicast
• Recovery
• Sparing
2/25/2021 Distributed Systems - Comp 655 1

Fault tolerance concepts
• Availability – can I use it now?
  – Usually quantified as a percentage
• Reliability – can I use it for a certain period of time?
  – Usually quantified as MTBF (mean time between failures)
• Safety – will anything really bad happen if it does fail?
• Maintainability – how hard is it to fix when it fails?
  – Usually quantified as MTTR (mean time to repair)

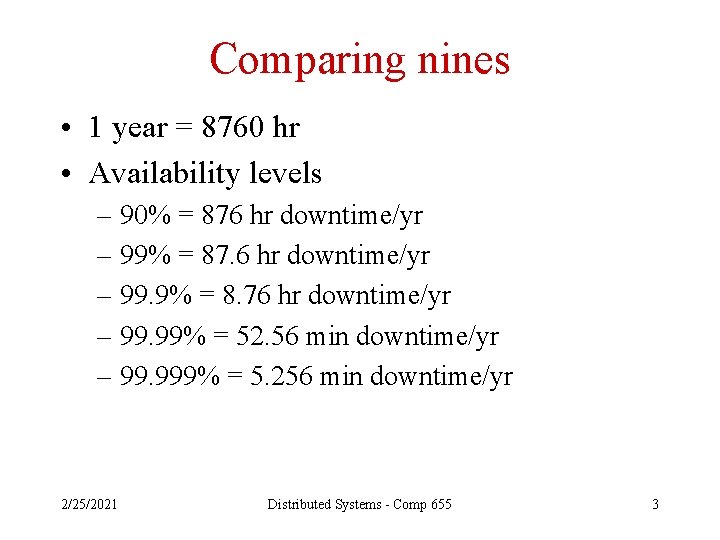

Comparing nines
• 1 year = 8760 hr
• Availability levels
  – 90% = 876 hr downtime/yr
  – 99% = 87.6 hr downtime/yr
  – 99.9% = 8.76 hr downtime/yr
  – 99.99% = 52.56 min downtime/yr
  – 99.999% = 5.256 min downtime/yr
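Each downtime figure is the unavailability (1 minus the availability) multiplied by 8760 hours. A small Python sketch that reproduces the list above (the function name is illustrative, not from the course material):

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours, as on the slide


def downtime_hours_per_year(availability_percent: float) -> float:
    """Expected downtime per year, in hours, for a given availability."""
    unavailability = 1 - availability_percent / 100
    return HOURS_PER_YEAR * unavailability


if __name__ == "__main__":
    for level in (90, 99, 99.9, 99.99, 99.999):
        hours = downtime_hours_per_year(level)
        print(f"{level}% -> {hours:.3f} hr/yr = {hours * 60:.3f} min/yr")
```

Over five years, five nines allows about 5 × 5.256 ≈ 26 min of downtime, roughly the budget used in the exercise that follows.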

Exercise: how to get five nines
1. Brainstorm what you would have to deal with to build a single-machine system that could run for five years with only 25 min of downtime. Consider:
   – Hardware failures, especially disks
   – Power failures
   – Network outages
   – Software installation
   – What else?
2. Come up with some ideas about how to solve the problems you identify.

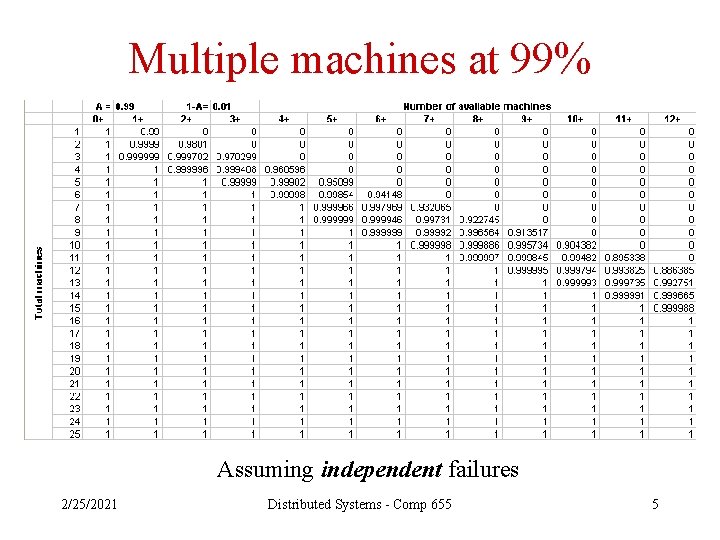

Multiple machines at 99% (assuming independent failures)

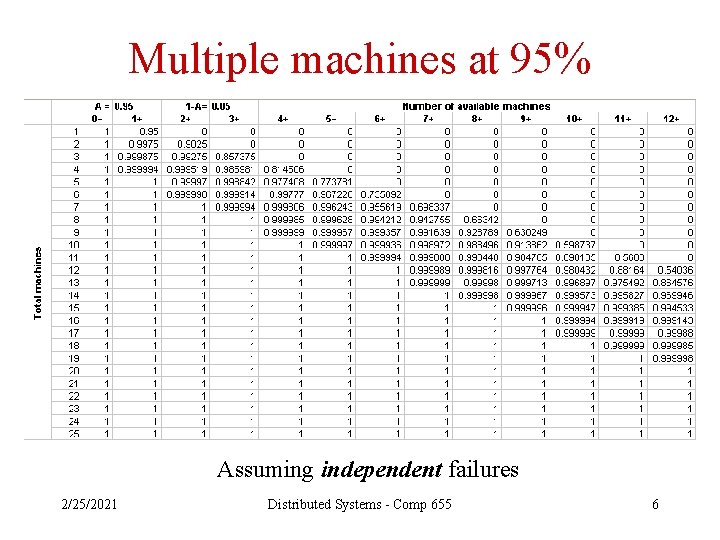

Multiple machines at 95% (assuming independent failures)

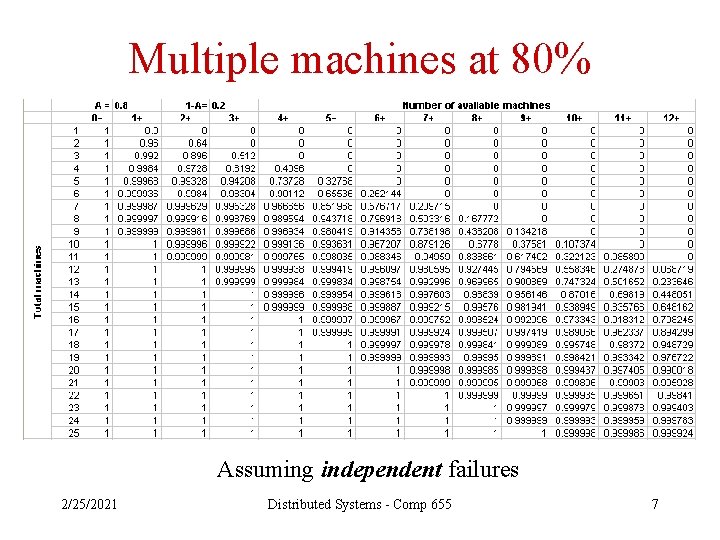

Multiple machines at 80% (assuming independent failures)

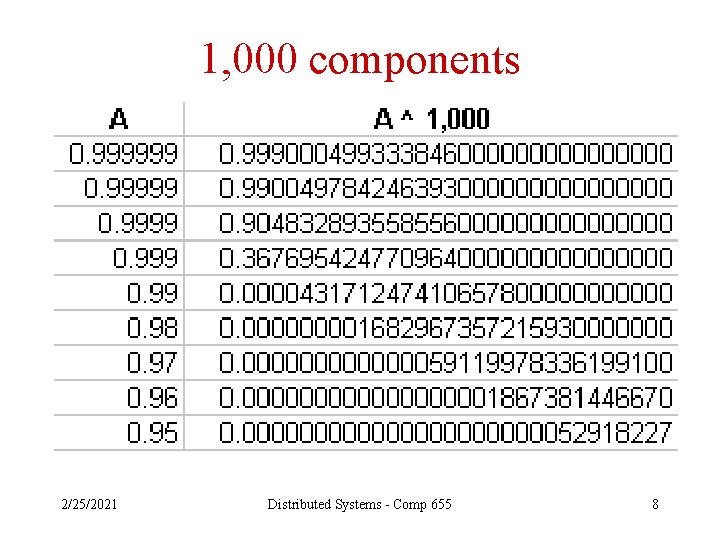

1,000 components
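Under the assumption of independent failures, two standard formulas cover the usual cases behind charts like these: a system that needs every machine (or component) to be up, and a system that needs only one of several replicas. This Python sketch illustrates those formulas under that assumption; it does not reproduce the charts' exact data, which is only in the images.

```python
def all_required(p: float, n: int) -> float:
    """Availability when all n components must be up (components in series)."""
    return p ** n


def any_sufficient(p: float, n: int) -> float:
    """Availability when any one of n replicas is enough (replicas in parallel)."""
    return 1 - (1 - p) ** n


if __name__ == "__main__":
    for p in (0.99, 0.95, 0.80):
        for n in (1, 2, 5, 10):
            print(f"p={p:.2f} n={n:>2}: all required {all_required(p, n):.4f}, "
                  f"any one sufficient {any_sufficient(p, n):.6f}")
    print(f"1,000 components at 99.9%, all required: {all_required(0.999, 1000):.3f}")
```

Series composition erodes availability quickly as components are added, while parallel replicas improve it quickly, provided the independence assumption actually holds.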

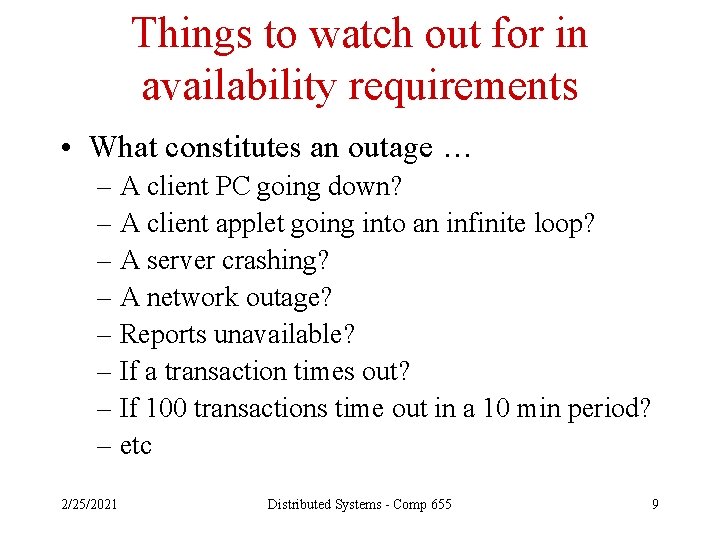

Things to watch out for in availability requirements
• What constitutes an outage …
  – A client PC going down?
  – A client applet going into an infinite loop?
  – A server crashing?
  – A network outage?
  – Reports unavailable?
  – If a transaction times out?
  – If 100 transactions time out in a 10 min period?
  – etc.

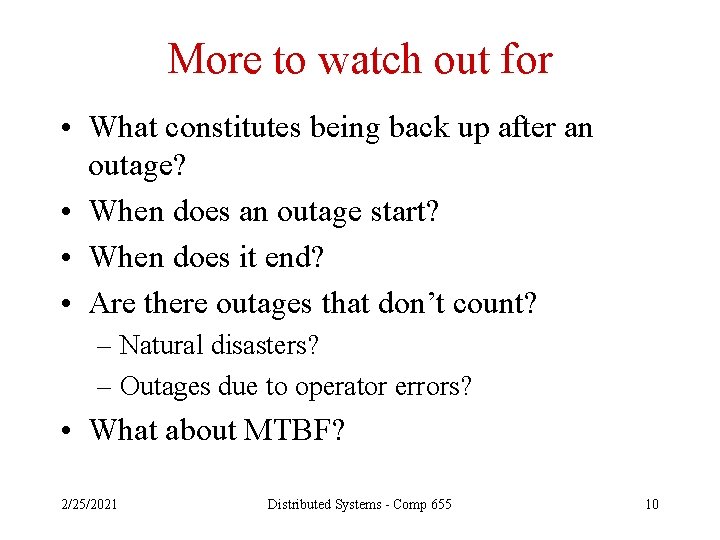

More to watch out for
• What constitutes being back up after an outage?
• When does an outage start?
• When does it end?
• Are there outages that don’t count?
  – Natural disasters?
  – Outages due to operator errors?
• What about MTBF?

Ways to get 99% availability
1. MTBF = 99 hr, MTTR = 1 hr
2. MTBF = 99 min, MTTR = 1 min
3. MTBF = 99 sec, MTTR = 1 sec
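Availability is commonly computed as MTBF / (MTBF + MTTR), so all three options give 99%; what differs is how often users see an outage. A Python sketch, assuming each repair fully restores service (function names are illustrative):

```python
HOURS_PER_YEAR = 8760


def availability(mtbf: float, mttr: float) -> float:
    """Steady-state availability; mtbf and mttr must use the same time unit."""
    return mtbf / (mtbf + mttr)


def outages_per_year(mtbf_hours: float, mttr_hours: float) -> float:
    """Expected number of failure/repair cycles per year."""
    return HOURS_PER_YEAR / (mtbf_hours + mttr_hours)


if __name__ == "__main__":
    options = {"hours": 1.0, "minutes": 1 / 60, "seconds": 1 / 3600}
    for unit, scale in options.items():
        mtbf, mttr = 99 * scale, 1 * scale  # both expressed in hours
        print(f"{unit}: availability {availability(mtbf, mttr):.2%}, "
              f"{outages_per_year(mtbf, mttr):,.0f} outages/yr")
```

Option 1 means about 88 one-hour outages a year; option 3 means over 300,000 one-second outages, which may or may not count as outages at all, depending on how the questions on the previous slides are answered.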

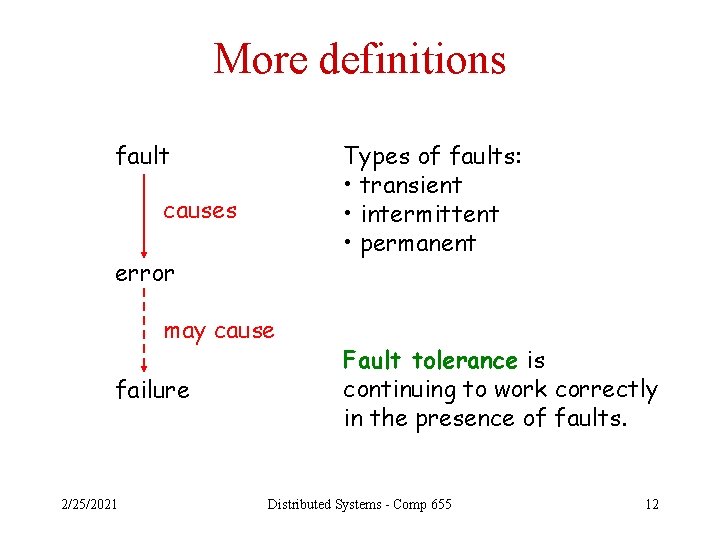

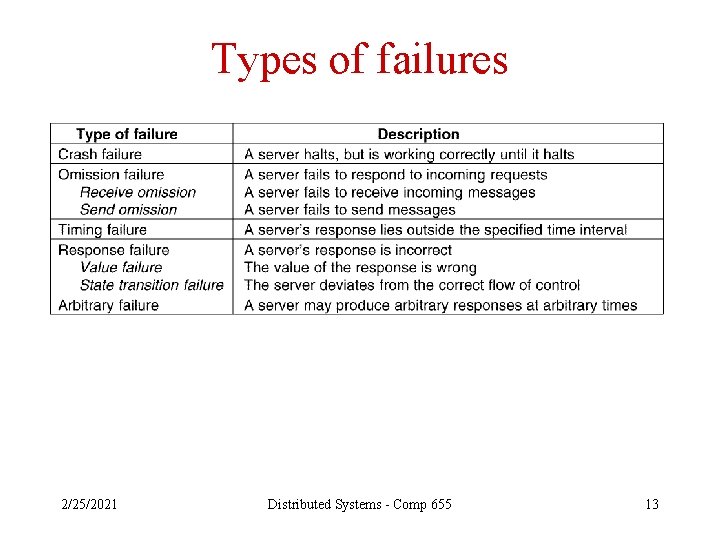

More definitions
• A fault causes an error, which may cause a failure
• Types of faults:
  – transient
  – intermittent
  – permanent
• Fault tolerance is continuing to work correctly in the presence of faults.

Types of failures

If you remember one thing
• Components fail in distributed systems on a regular basis.
• Distributed systems have to be designed to deal with the failure of individual components so that the system as a whole
  – is available and/or
  – is reliable and/or
  – is safe and/or
  – is maintainable
  depending on the problem it is trying to solve and the resources available.

Fault Tolerance
• Fault tolerance concepts
• Implementation – distributed agreement
• Distributed agreement meets transaction processing: 2- and 3-phase commit

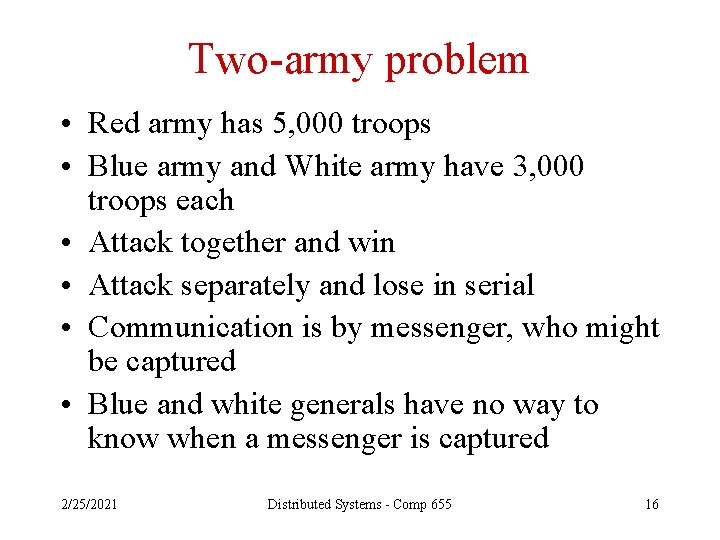

Two-army problem
• Red army has 5,000 troops
• Blue army and White army have 3,000 troops each
• Attack together and win
• Attack separately and be defeated one at a time
• Communication is by messenger, who might be captured
• Blue and White generals have no way to know when a messenger is captured

Activity: outsmart the generals
• Take your best shot at designing a protocol that can solve the two-army problem
• Spend ten minutes
• Did you think of anything promising?

Conclusion: go home
• “Agreement between even two processes is not possible in the face of unreliable communication” (p. 372)

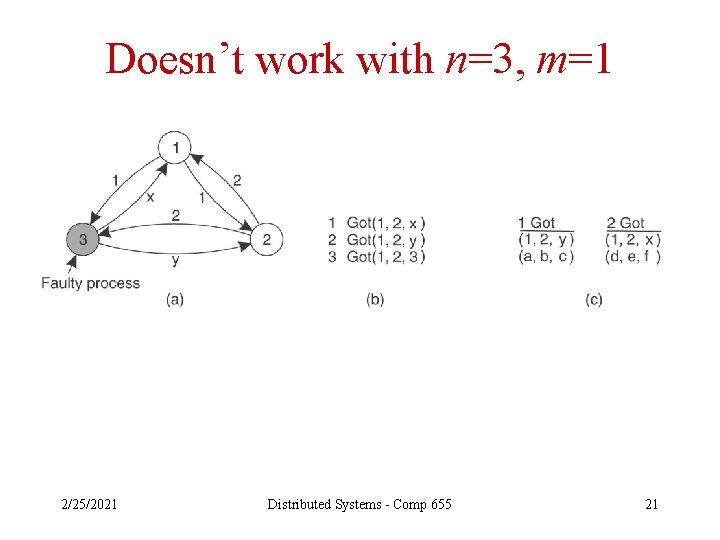

Byzantine generals
• Assume perfect communication
• Assume n generals, m of whom should not be trusted
• The problem is to reach agreement on troop strength among the non-faulty generals

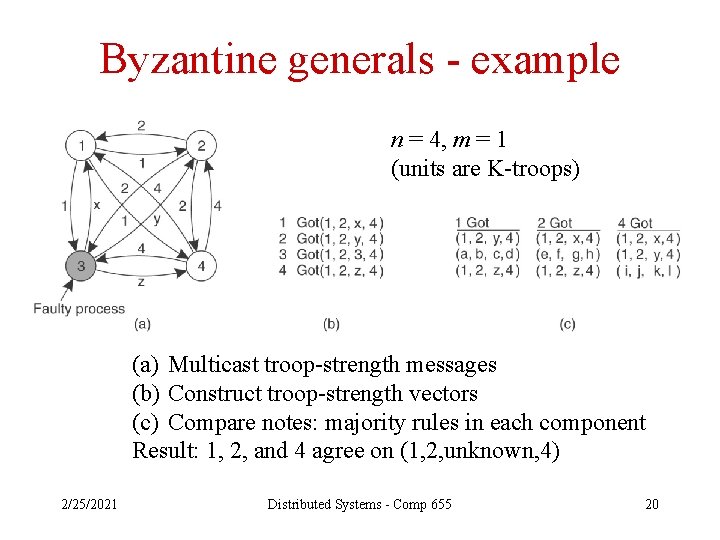

Byzantine generals - example
n = 4, m = 1 (units are K-troops)
(a) Multicast troop-strength messages
(b) Construct troop-strength vectors
(c) Compare notes: majority rules in each component
Result: generals 1, 2, and 4 agree on (1, 2, unknown, 4)
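Steps (a) through (c) can be simulated in a few lines of Python. The traitor's behavior is an assumption supplied through two hypothetical callbacks, `lie_value` and `lie_vector`; here the traitor tells each general something different, which reproduces the slide's result. `None` stands for "unknown".

```python
from collections import Counter

UNKNOWN = None


def byzantine_round(n, true_values, traitor, lie_value, lie_vector):
    """Simulate steps (a)-(c) for generals 1..n with a single traitor.

    true_values[g] is loyal general g's troop strength.
    lie_value(receiver) is what the traitor tells `receiver` in step (a).
    lie_vector(receiver) is the vector the traitor sends `receiver` in step (c).
    Returns {loyal general: result vector}.
    """
    generals = range(1, n + 1)

    # Steps (a) and (b): each general builds a vector of the values it received.
    vectors = {}
    for i in generals:
        vectors[i] = {
            j: lie_value(i) if j == traitor else true_values.get(j)
            for j in generals
        }

    # Step (c): exchange vectors; a strict majority rules in each component.
    results = {}
    for i in generals:
        if i == traitor:
            continue
        received = [lie_vector(i) if k == traitor else vectors[k]
                    for k in generals if k != i]
        result = []
        for j in generals:
            value, count = Counter(v[j] for v in received).most_common(1)[0]
            result.append(value if count > len(received) // 2 else UNKNOWN)
        results[i] = tuple(result)
    return results


if __name__ == "__main__":
    results = byzantine_round(
        n=4,
        true_values={1: 1, 2: 2, 4: 4},  # K-troops; general 3 is the traitor
        traitor=3,
        lie_value=lambda receiver: f"x{receiver}",  # a different lie to each general
        lie_vector=lambda receiver: {j: f"junk{receiver}-{j}" for j in range(1, 5)},
    )
    for general, vector in results.items():
        print(general, vector)
```

With n = 3 each loyal general receives only two vectors, so a single traitor can prevent a majority from forming, consistent with the next slide.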

Doesn’t work with n = 3, m = 1

Fault Tolerance
• Fault tolerance concepts
• Implementation – distributed agreement
• Distributed agreement meets transaction processing: 2- and 3-phase commit

Distributed commit protocols
• What is the problem they are trying to solve?
  – Ensure that a group of processes all do something, or none of them do
  – Example: in a distributed transaction that involves updates to data on three different servers, ensure that all three commit or none of them do

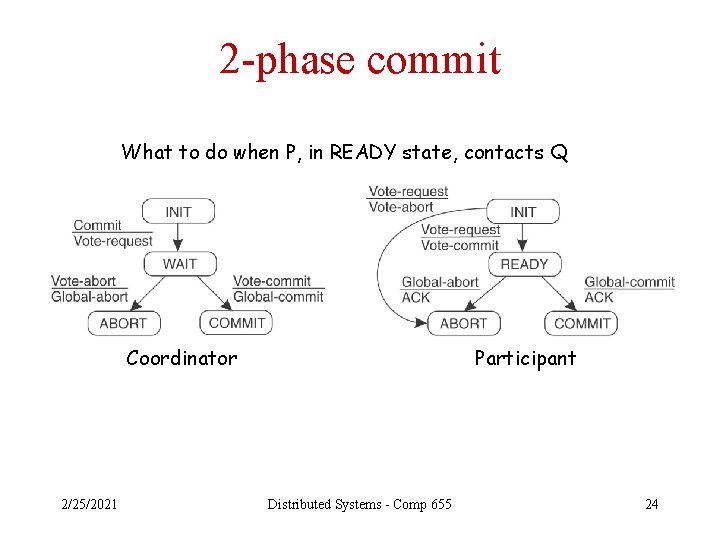

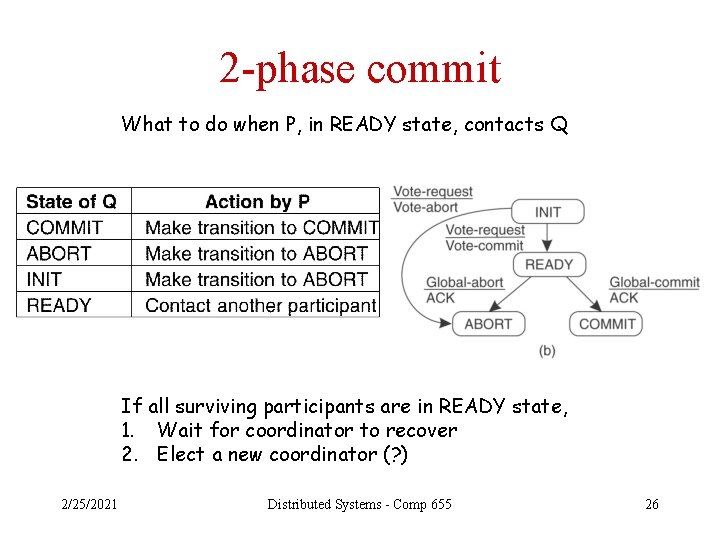

2-phase commit
What to do when P, in READY state, contacts Q
(Figure panels: Coordinator, Participant)
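The happy path of 2-phase commit can be sketched in Python: phase 1 collects votes, phase 2 distributes one global decision. The message names (VOTE_COMMIT, VOTE_ABORT, GLOBAL_COMMIT, GLOBAL_ABORT) follow common textbook usage and are an assumption here; the sketch models no crashes and no stable-storage logging.

```python
class Participant:
    """A participant that votes in phase 1 and obeys the decision in phase 2."""

    def __init__(self, name: str, can_commit: bool):
        self.name = name
        self.can_commit = can_commit
        self.state = "INIT"

    def on_vote_request(self) -> str:
        if self.can_commit:
            self.state = "READY"  # promised to commit if told to
            return "VOTE_COMMIT"
        self.state = "ABORT"      # may abort unilaterally before voting commit
        return "VOTE_ABORT"

    def on_decision(self, decision: str) -> None:
        self.state = "COMMIT" if decision == "GLOBAL_COMMIT" else "ABORT"


def two_phase_commit(participants) -> str:
    # Phase 1: send a vote request to everyone and collect the votes.
    votes = []
    for p in participants:
        try:
            votes.append(p.on_vote_request())
        except TimeoutError:
            votes.append("VOTE_ABORT")  # a vote that never arrives counts as abort
    decision = ("GLOBAL_COMMIT" if all(v == "VOTE_COMMIT" for v in votes)
                else "GLOBAL_ABORT")
    # A real coordinator would record `decision` on stable storage here.
    # Phase 2: send the decision to every participant.
    for p in participants:
        p.on_decision(decision)
    return decision
```

A single abort vote (or a missing vote) is enough to abort everyone, which is what gives the all-or-nothing guarantee.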

If the coordinator crashes
• Participants could wait until the coordinator recovers
• Or, they could try to figure out what to do among themselves
  – For example, if P contacts Q, and Q is in the COMMIT state, P should COMMIT as well

2-phase commit
What to do when P, in READY state, contacts Q
If all surviving participants are in READY state, either:
1. Wait for coordinator to recover
2. Elect a new coordinator (?)
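The decision rules for a READY participant P can be sketched in Python. The COMMIT case comes from the previous slide; the ABORT, INIT, and READY cases follow the standard textbook reasoning for 2-phase commit and are stated here as an assumption about what the slide's table shows.

```python
def action_after_contacting(q_state: str) -> str:
    """What participant P (in READY) does after learning participant Q's state."""
    if q_state == "COMMIT":
        return "COMMIT"        # the coordinator must have decided to commit
    if q_state == "ABORT":
        return "ABORT"         # Q voted abort or already received the abort decision
    if q_state == "INIT":
        return "ABORT"         # Q never voted, so commit cannot have been decided
    return "CONTACT_ANOTHER"   # Q is READY too: it knows no more than P does


def resolve(other_states) -> str:
    """Ask surviving participants in turn; block if every one is READY."""
    for state in other_states:
        action = action_after_contacting(state)
        if action != "CONTACT_ANOTHER":
            return action
    return "BLOCK"  # wait for the coordinator (or elect a new one?)
```

The BLOCK outcome is exactly the weakness that 3-phase commit tries to remove.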

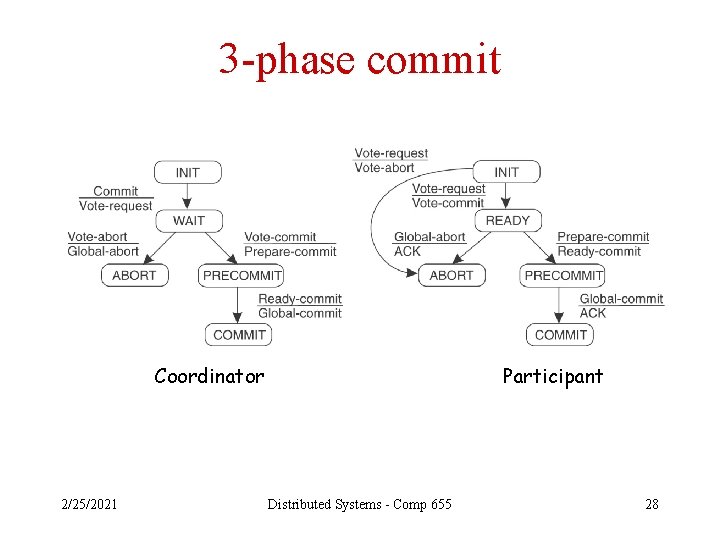

3-phase commit
• Problem addressed:
  – Non-blocking distributed commit in the presence of failures
• Interesting theoretically, but rarely used in practice

3-phase commit
(Figure panels: Coordinator, Participant)

Bonus material
• Implementation – reliable point-to-point communication
• Implementation – process groups
• Implementation – reliable multicast
• Recovery
• Sparing

RPC, RMI crash & omission failures
• Client can’t locate server
• Request lost
• Server crashes after receipt of request
• Response lost
• Client crashes after sending request

Can’t locate server
• Raise an exception, or
• Send a signal, or
• Log an error and return an error code
Note: hard to mask distribution in this case

Request lost
• Timeout and retry
• Back off to “cannot locate server” if too many timeouts occur
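Timeout-and-retry with a fallback to "cannot locate server" can be sketched in Python. The `send` transport function, the exception name, and the doubling timeout are all assumptions for illustration. Retrying is only safe when a repeated request is harmless, which is the concern of the "Lost replies" slide.

```python
class CannotLocateServer(Exception):
    """Raised after too many timeouts, as if the server could not be found."""


def call_with_retry(send, max_attempts=4, first_timeout=0.5, backoff=2.0):
    """Call send(timeout) until it returns, growing the timeout after each loss.

    `send` is a hypothetical transport function that raises TimeoutError when
    no reply arrives in time.
    """
    timeout = first_timeout
    for _ in range(max_attempts):
        try:
            return send(timeout)
        except TimeoutError:
            timeout *= backoff  # back off: allow more time on the next try
    raise CannotLocateServer(f"no reply after {max_attempts} attempts")
```

Growing the timeout avoids hammering a server that is merely slow, at the cost of taking longer to report that it is unreachable.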

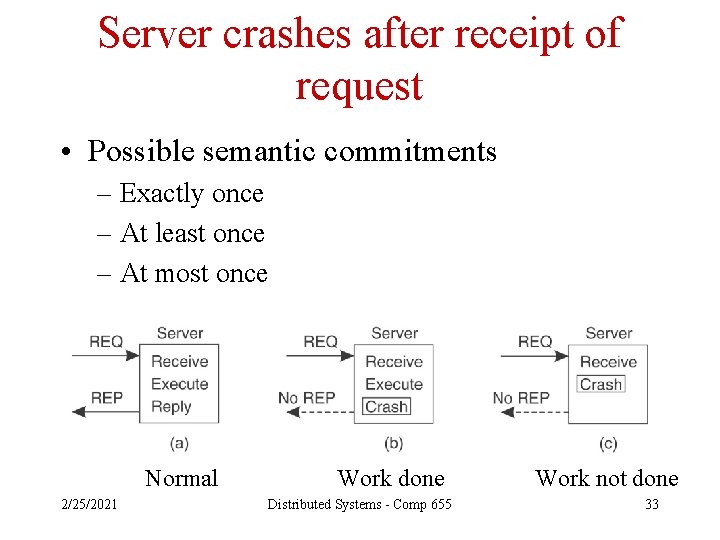

Server crashes after receipt of request
• Possible semantic commitments
  – Exactly once
  – At least once
  – At most once
(Figure panels: Normal; Work done; Work not done)

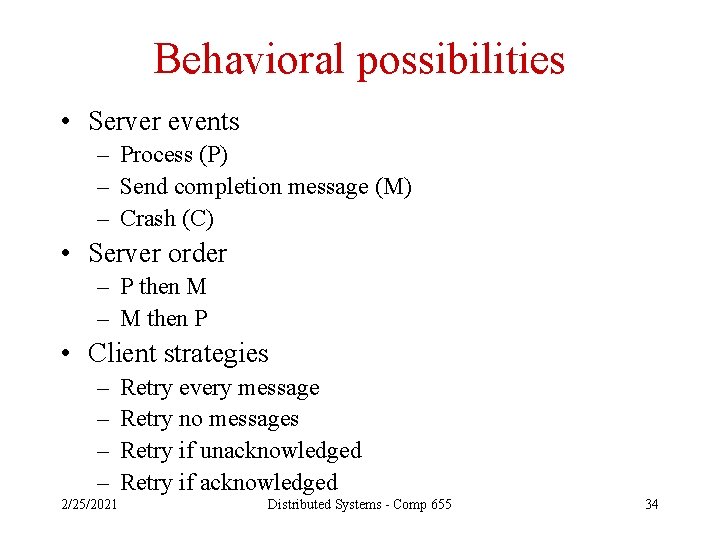

Behavioral possibilities
• Server events
  – Process (P)
  – Send completion message (M)
  – Crash (C)
• Server order
  – P then M
  – M then P
• Client strategies
  – Retry every message
  – Retry no messages
  – Retry if unacknowledged
  – Retry if acknowledged

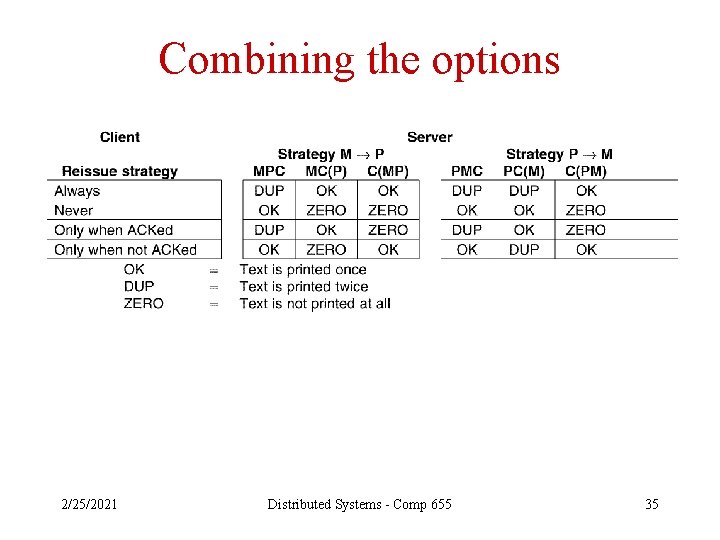

Combining the options
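The combinations can be enumerated mechanically. This Python sketch uses the event names and client strategies from the previous slide, and assumes that M is the completion message the client treats as an acknowledgment and that a retried request is processed exactly once by the restarted server (no duplicate detection).

```python
# For each server ordering, the events completed before the crash C.
# Parenthesized events in the scenario names never happen.
SCENARIOS = {
    "M then P": {"MPC": {"M", "P"}, "MC(P)": {"M"}, "C(MP)": set()},
    "P then M": {"PMC": {"P", "M"}, "PC(M)": {"P"}, "C(PM)": set()},
}

STRATEGIES = ("retry every message", "retry no messages",
              "retry if unacknowledged", "retry if acknowledged")


def client_retries(strategy: str, acknowledged: bool) -> bool:
    return {
        "retry every message": True,
        "retry no messages": False,
        "retry if unacknowledged": not acknowledged,
        "retry if acknowledged": acknowledged,
    }[strategy]


def outcome(done_before_crash: set, strategy: str) -> str:
    """OK = work done once, DUP = done twice, ZERO = not done at all."""
    acknowledged = "M" in done_before_crash  # client saw the completion message
    times = int("P" in done_before_crash) + int(client_retries(strategy, acknowledged))
    return {0: "ZERO", 1: "OK", 2: "DUP"}[times]


if __name__ == "__main__":
    for order, scenarios in SCENARIOS.items():
        for name, done in scenarios.items():
            row = ", ".join(f"{s}: {outcome(done, s)}" for s in STRATEGIES)
            print(f"{order:8} {name:6} {row}")
```

Every client strategy has at least one scenario that ends in DUP or ZERO, so retry policy alone cannot deliver exactly-once semantics.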

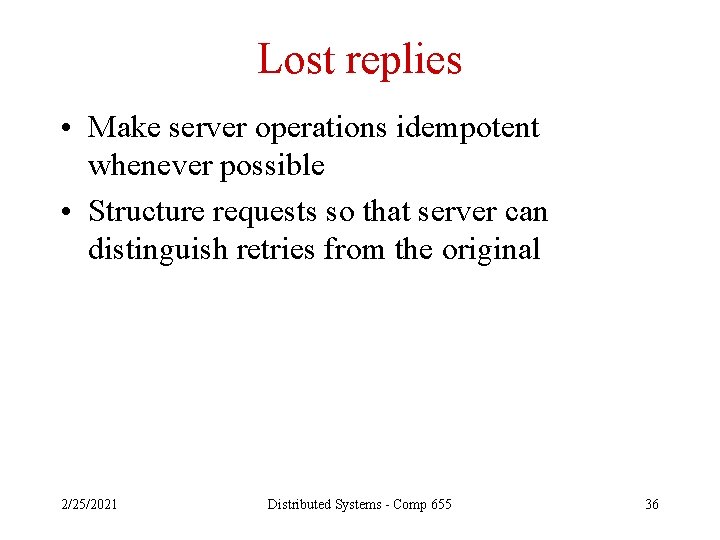

Lost replies
• Make server operations idempotent whenever possible
• Structure requests so that the server can distinguish retries from the original
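Both ideas can be shown on a hypothetical account server in Python: `set_balance` is naturally idempotent, while `deposit` is not, so it uses a client-chosen request id to recognize retries and resend the reply it already computed. The class, method names, and request-id scheme are assumptions for illustration.

```python
class AccountServer:
    """Hypothetical server that remembers replies so retries are not re-executed."""

    def __init__(self):
        self.balance = 0
        self.replies = {}  # request id -> reply already sent

    def deposit(self, request_id: str, amount: int) -> int:
        # Not idempotent by itself, so detect retries by request id.
        if request_id in self.replies:
            return self.replies[request_id]  # a retry: resend the old reply
        self.balance += amount
        self.replies[request_id] = self.balance
        return self.balance

    def set_balance(self, amount: int) -> int:
        # Idempotent: repeating it any number of times has the same effect.
        self.balance = amount
        return self.balance
```

As written, the reply table grows without bound; a real server would need some policy for discarding old entries, for example once the client confirms it received the reply.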

Client crashes
• The server-side activity is called an orphan computation
• Orphans can tie up resources, hold locks, etc.
• Four strategies (at least)
  – Extermination, based on client-side logs
    • Client writes a log record before and after each call
    • When client restarts after a crash, it checks the log and kills outstanding orphan computations
    • Problems include:
      – Lots of disk activity
      – Grand-orphans

Client crashes, continued
• More approaches for handling orphans
  – Reincarnation, based on client-defined epochs
    • When client restarts after a crash, it broadcasts a start-of-epoch message
    • On receipt of a start-of-epoch message, each server kills any computation for that client
  – “Gentle” reincarnation
    • Similar, but server tries to verify that a computation is really an orphan before killing it

Yet more client-crash strategies
• One more strategy
  – Expiration
    • Each computation has a lease on life
    • If not complete when the lease expires, a computation must obtain another lease from its owner
    • Clients wait one lease period before restarting after a crash (so any orphans will be gone)
    • Problem: what’s a reasonable lease period?
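Expiration can be sketched in Python as a lease that the server-side computation checks between units of work. `Lease`, `run_computation`, and the `ask_owner_to_renew` callback are hypothetical names; the clock is injectable so the behavior can be tested without waiting.

```python
import time


class Lease:
    def __init__(self, duration: float, clock=time.monotonic):
        self.duration = duration
        self.clock = clock
        self.expires_at = clock() + duration

    def expired(self) -> bool:
        return self.clock() >= self.expires_at

    def renew(self) -> None:
        self.expires_at = self.clock() + self.duration


def run_computation(steps, lease, ask_owner_to_renew, do_step):
    """Run `steps` units of work, renewing the lease whenever it has expired.

    If the owner (client) does not grant a new lease, the computation is
    treated as an orphan and stops itself.
    """
    for done in range(steps):
        if lease.expired():
            if not ask_owner_to_renew():
                return f"stopped as orphan after {done} steps"
            lease.renew()
        do_step()
    return "completed"
```

The lease is only checked between steps, so a computation can overrun its lease by up to one step. A client that waits exactly one lease period before restarting is only safe if the checks are frequent relative to the lease length.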

Common problems with client-crash strategies
• Crashes that involve network partition (communication between partitions will not work at all)
• Killed orphans may leave persistent traces behind, for example
  – Locks
  – Requests in message queues

Bonus material
• Implementation – reliable point-to-point communication
• Implementation – process groups
• Implementation – reliable multicast
• Recovery
• Sparing

How to do it?
• Redundancy applied
  – In the appropriate places
  – In the appropriate ways
• Types of redundancy
  – Data (e.g. error-correcting codes, replicated data)
  – Time (e.g. retry)
  – Physical (e.g. replicated hardware, backup systems)

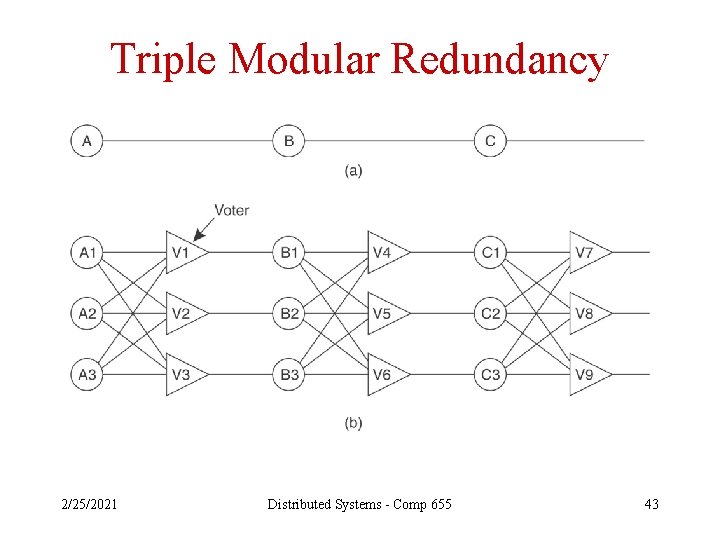

Triple Modular Redundancy
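The heart of TMR is a majority voter that masks one faulty input out of three. A minimal Python sketch (the function name is illustrative); in hardware TMR the voters themselves are usually replicated as well, which a single software voter does not capture.

```python
from collections import Counter


def tmr_vote(a, b, c):
    """Majority voter for triple modular redundancy: masks one faulty input."""
    value, count = Counter((a, b, c)).most_common(1)[0]
    if count >= 2:
        return value
    raise ValueError(f"no majority among {a!r}, {b!r}, {c!r}")


if __name__ == "__main__":
    replicas = [lambda x: x * 2, lambda x: x * 2, lambda x: x * 2 + 1]  # third is faulty
    print(tmr_vote(*(f(21) for f in replicas)))  # prints 42
```

If two replicas fail in different ways the voter can only report that there is no majority; if they fail the same way it silently returns the wrong answer.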

Tandem Computers
• TMR on
  – CPUs
  – Memory
• Duplicated
  – Buses
  – Disks
  – Power supplies
• A big hit in operations systems for a while

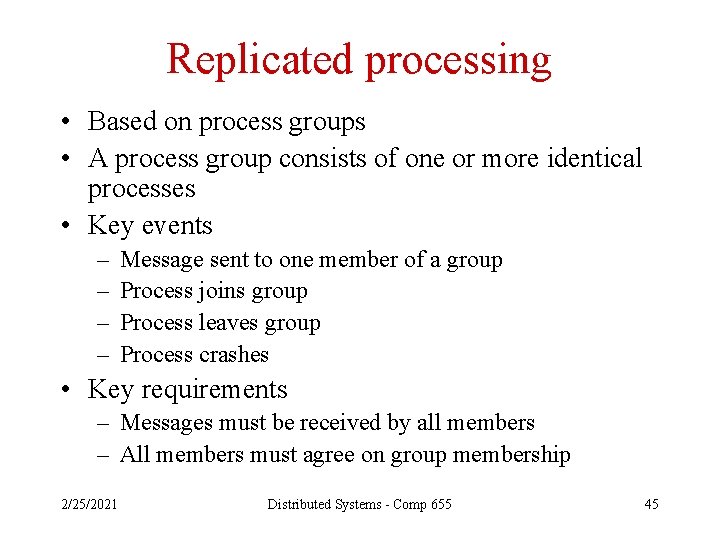

Replicated processing
• Based on process groups
• A process group consists of one or more identical processes
• Key events
  – Message sent to one member of a group
  – Process joins group
  – Process leaves group
  – Process crashes
• Key requirements
  – Messages must be received by all members
  – All members must agree on group membership

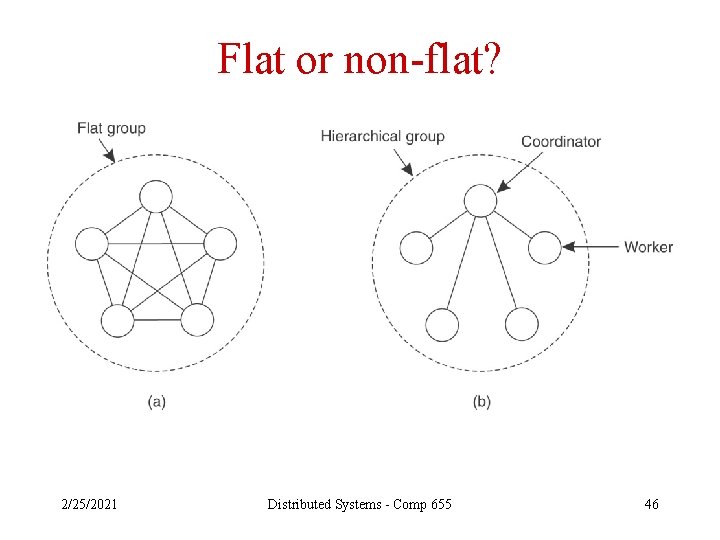

Flat or non-flat?

Effective process groups require
• Distributed agreement
  – On group membership
  – On coordinator elections
  – On whether or not to commit a transaction
• Effective communication
  – Reliable enough
  – Scalable enough
  – Often, multicast
  – Typically looking for atomic multicast

Process groups also require
• Ability to tolerate crash failures and omission failures
  – Need k+1 processes to deal with up to k silent failures
• Ability to tolerate performance, response, and arbitrary failures
  – Need 3k+1 processes to reach agreement with up to k Byzantine failures
  – Need 2k+1 processes to ensure that a majority of the system produces the correct results with up to k Byzantine failures
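The three group sizes on this slide can be captured in a lookup function. The failure-model keys are hypothetical labels for the three cases the slide lists.

```python
def processes_needed(k: int, failure_model: str) -> int:
    """Group size needed to tolerate up to k faulty processes (per the slide)."""
    sizes = {
        "silent": k + 1,                   # crash/omission: any surviving answer will do
        "byzantine_majority": 2 * k + 1,   # correct results by majority vote
        "byzantine_agreement": 3 * k + 1,  # non-faulty processes must agree
    }
    return sizes[failure_model]
```

For k = 1, agreement needs 3k+1 = 4 processes, matching the n = 4, m = 1 Byzantine generals example; with n = 3 the group is one process short.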

Bonus material
• Implementation – reliable point-to-point communication
• Implementation – process groups
• Implementation – reliable multicast
• Recovery
• Sparing

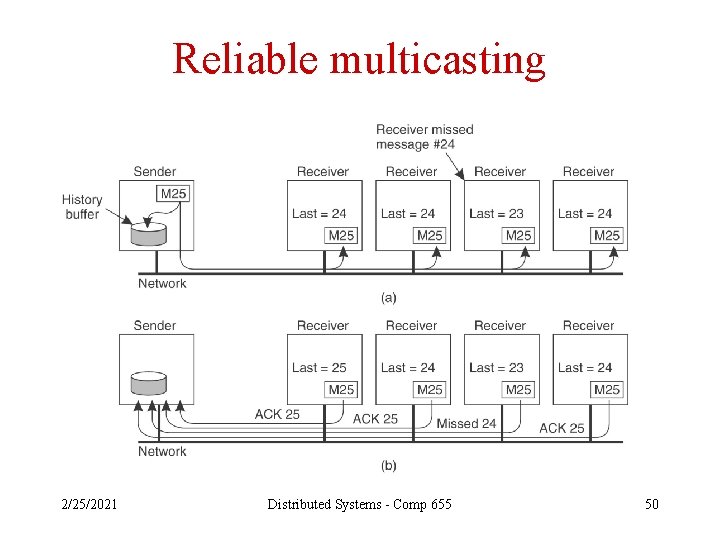

Reliable multicasting

Scalability problem
• Too many acknowledgements
  – One from each receiver
  – Can be a huge number in some systems
  – Also known as “feedback implosion”

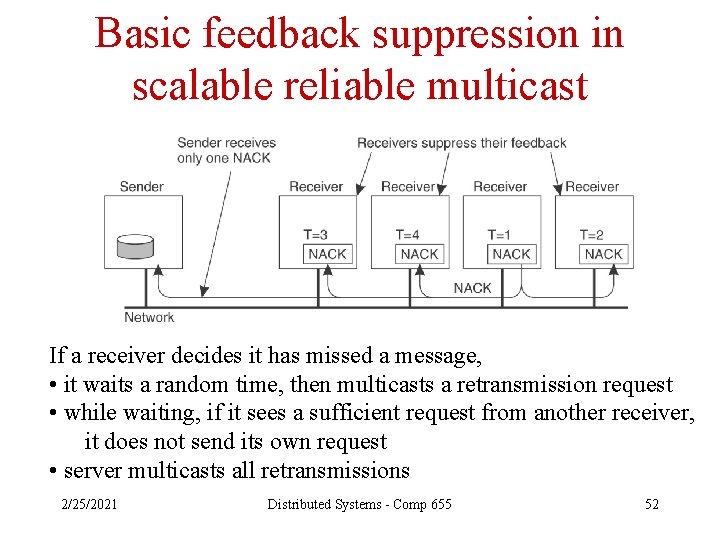

Basic feedback suppression in scalable reliable multicast
If a receiver decides it has missed a message,
• it waits a random time, then multicasts a retransmission request
• while waiting, if it sees a sufficient request from another receiver, it does not send its own request
• the server multicasts all retransmissions
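A small Python simulation shows why random waiting suppresses feedback. It assumes every receiver missed the same message, picks a uniformly random wait, and hears any multicast request after a fixed network delay; all parameter names are hypothetical.

```python
import random


def nack_senders(n_receivers: int, max_wait: float, delay: float, seed=None) -> int:
    """Count receivers that actually multicast a retransmission request.

    Each receiver waits a uniform random time in [0, max_wait]. A request
    multicast at time t reaches everyone else at t + delay. A receiver stays
    quiet if it hears a request before its own timer fires.
    """
    rng = random.Random(seed)
    waits = sorted(rng.uniform(0, max_wait) for _ in range(n_receivers))
    first_heard = waits[0] + delay  # when the earliest request reaches the others
    return sum(1 for w in waits if w <= first_heard)
```

The larger the random wait is relative to the network delay, the fewer duplicate requests get sent, at the cost of slower recovery of the missing message.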

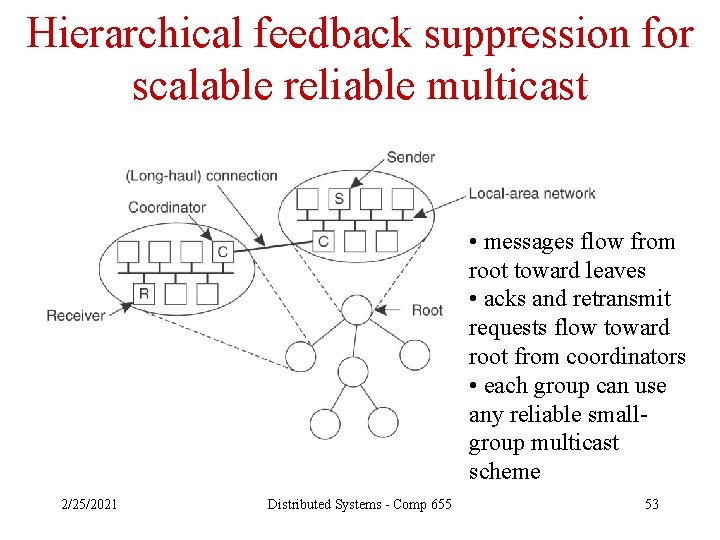

Hierarchical feedback suppression for scalable reliable multicast
• Messages flow from the root toward the leaves
• Acks and retransmit requests flow toward the root from coordinators
• Each group can use any reliable small-group multicast scheme

Atomic multicast
• Often, in a distributed system, reliable multicast is a step toward atomic multicast
• Atomic multicast is atomicity applied to communications:
  – Either all members of a process group receive a message, OR
  – No members receive it
• Often requires some form of order agreement as well

How atomic multicast helps
1. Assume we have atomic multicast among a group of processes, each of which owns a replica of a database
2. One replica goes down
3. Database activity continues
4. The process comes back up
5. Atomic multicast allows us to figure out exactly which transactions have to be replayed (see pp. 386-387)

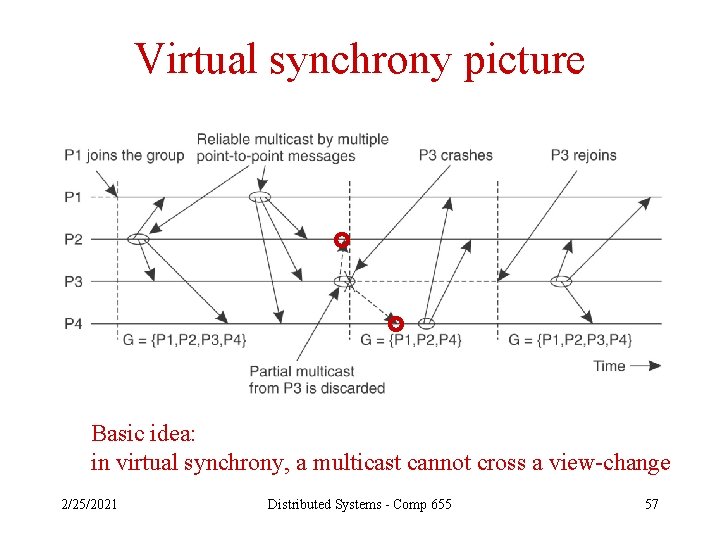

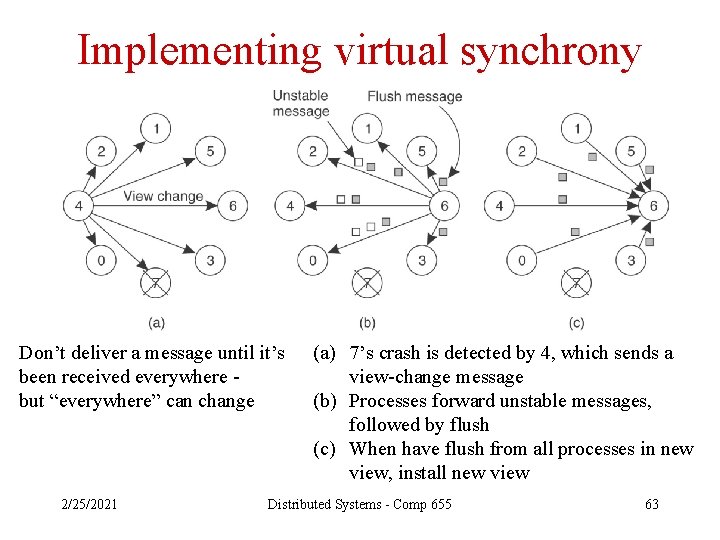

More concepts
• Group view
• View change
• Virtually synchronous
  – Each message is received by all non-faulty processes, or
  – If the sender crashes during the multicast, the message could be ignored by all processes

Virtual synchrony picture
Basic idea: in virtual synchrony, a multicast cannot cross a view change

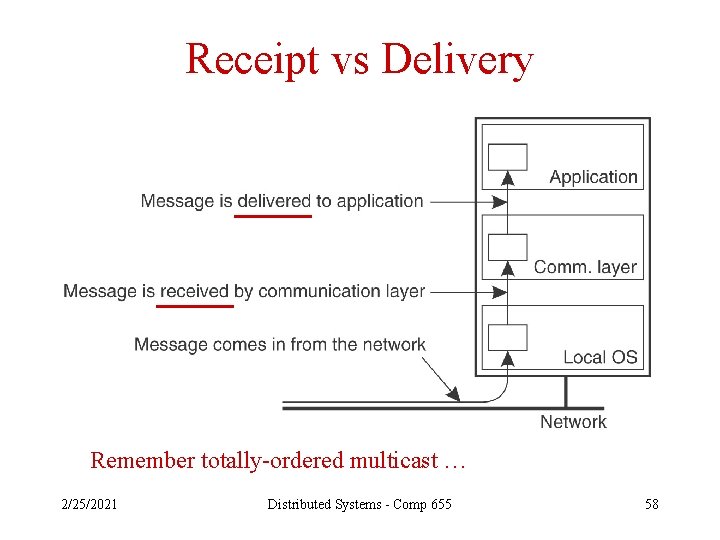

Receipt vs delivery
Remember totally-ordered multicast …

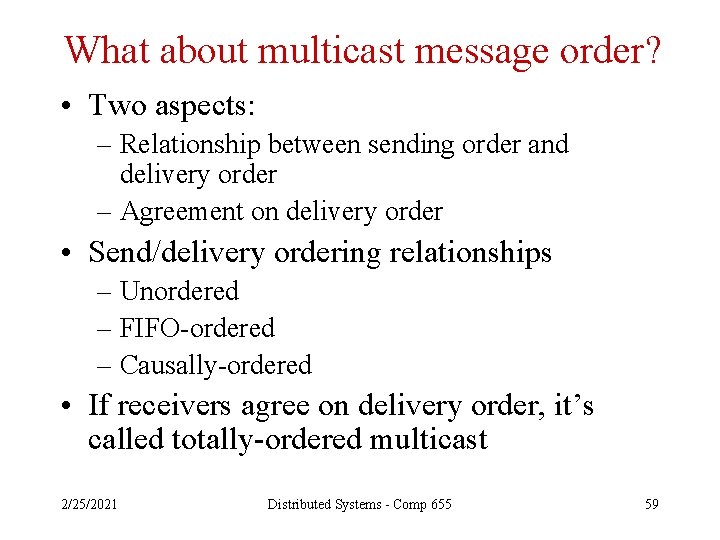

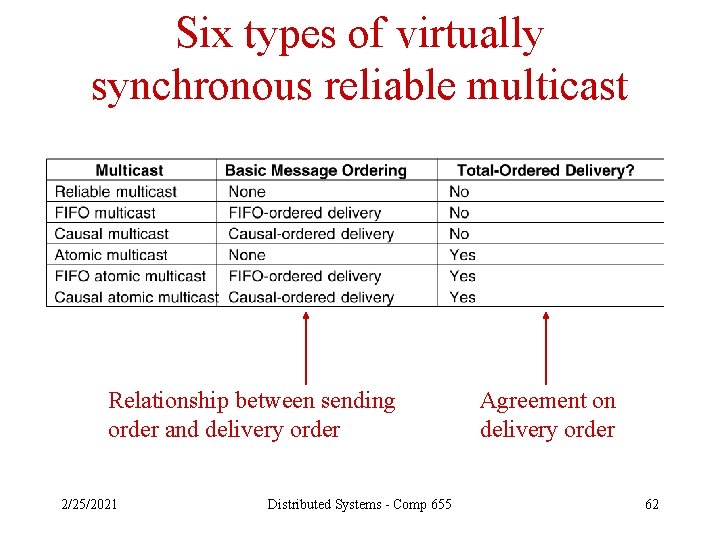

What about multicast message order?
• Two aspects:
  – Relationship between sending order and delivery order
  – Agreement on delivery order
• Send/delivery ordering relationships
  – Unordered
  – FIFO-ordered
  – Causally-ordered
• If receivers agree on delivery order, it’s called totally-ordered multicast

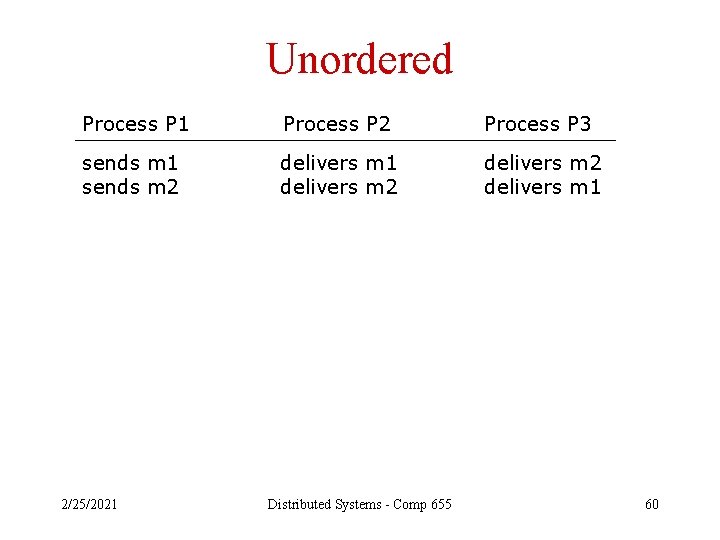

Unordered

| Process P1 | Process P2 | Process P3 |
|---|---|---|
| sends m1 | delivers m1 | delivers m2 |
| sends m2 | delivers m2 | delivers m1 |

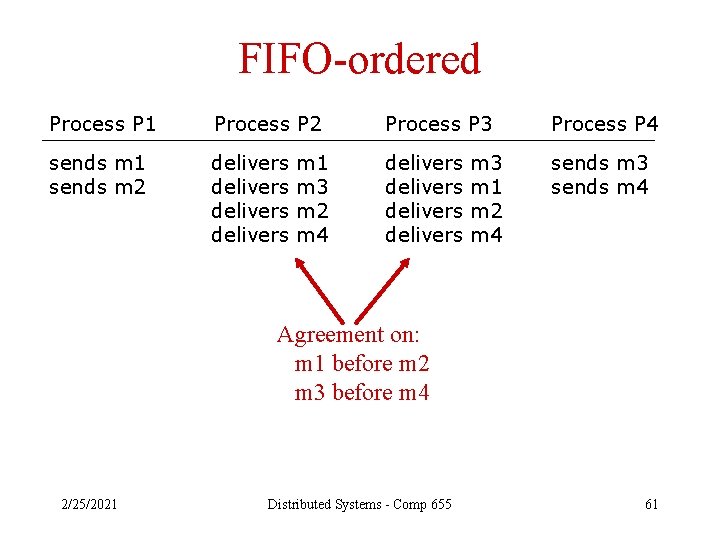

FIFO-ordered

| Process P1 | Process P2 | Process P3 | Process P4 |
|---|---|---|---|
| sends m1 | delivers m1 | delivers m3 | sends m3 |
| sends m2 | delivers m3 | delivers m1 | sends m4 |
| | delivers m2 | delivers m2 | |
| | delivers m4 | delivers m4 | |

Agreement on: m1 before m2; m3 before m4
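FIFO ordering is typically implemented by separating receipt from delivery: each sender numbers its multicasts, and a receiver holds back any message that arrives ahead of its predecessors from the same sender. A Python sketch, assuming per-sender sequence numbers starting at 0 (the class name is hypothetical):

```python
from collections import defaultdict


class FifoReceiver:
    """Delivers each sender's messages in sending order, using a holdback queue.

    Assumes every sender numbers its multicasts 0, 1, 2, ...
    """

    def __init__(self):
        self.next_seq = defaultdict(int)   # next sequence number expected per sender
        self.holdback = defaultdict(dict)  # sender -> {seq: message} received early
        self.delivered = []

    def receive(self, sender: str, seq: int, message: str) -> None:
        if seq < self.next_seq[sender]:
            return  # duplicate of something already delivered
        self.holdback[sender][seq] = message
        # Deliver as many consecutive messages from this sender as possible.
        while self.next_seq[sender] in self.holdback[sender]:
            self.delivered.append(self.holdback[sender].pop(self.next_seq[sender]))
            self.next_seq[sender] += 1
```

Feeding it m3, m2, m1, m4 (with m2 arriving before m1) yields the delivery order m3, m1, m2, m4, the P3 column of the table. FIFO constrains only per-sender order, so P2 and P3 may still interleave the two senders differently.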

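Six types of virtually synchronous reliable multicast

| Multicast | Relationship between sending order and delivery order | Agreement on delivery order |
|---|---|---|
| Reliable multicast | None | No |
| FIFO multicast | FIFO-ordered delivery | No |
| Causal multicast | Causal-ordered delivery | No |
| Atomic multicast | None | Yes |
| FIFO atomic multicast | FIFO-ordered delivery | Yes |
| Causal atomic multicast | Causal-ordered delivery | Yes |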

Implementing virtual synchrony
Don’t deliver a message until it’s been received everywhere, but “everywhere” can change
(a) Process 7’s crash is detected by process 4, which sends a view-change message
(b) Processes forward unstable messages, followed by a flush message
(c) When a process has received a flush from every process in the new view, it installs the new view

Bonus material
• Implementation – reliable point-to-point communication
• Implementation – process groups
• Implementation – reliable multicast
• Recovery
• Sparing

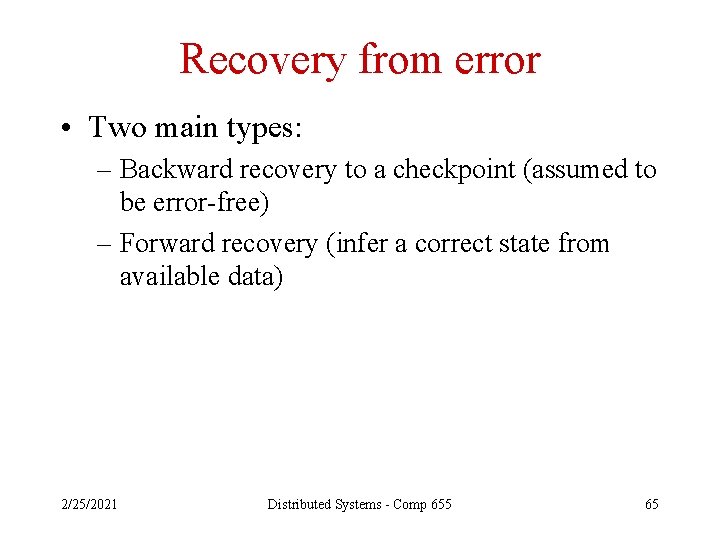

Recovery from error
• Two main types:
  – Backward recovery to a checkpoint (assumed to be error-free)
  – Forward recovery (infer a correct state from available data)

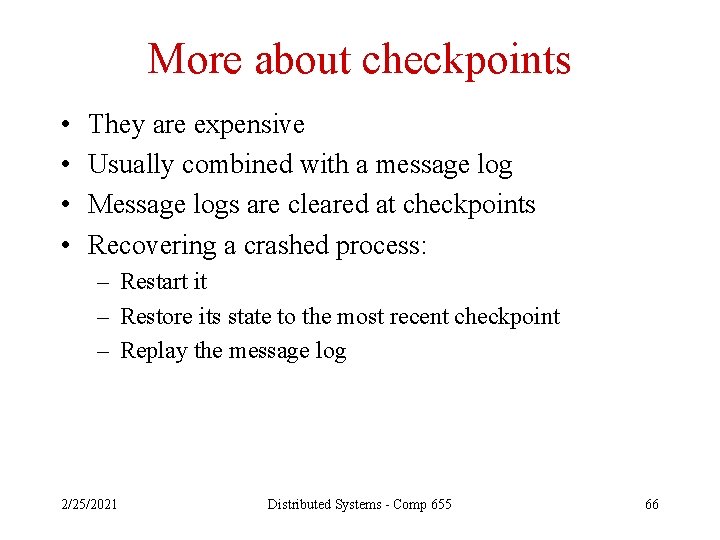

More about checkpoints
• They are expensive
• Usually combined with a message log
• Message logs are cleared at checkpoints
• Recovering a crashed process:
  – Restart it
  – Restore its state to the most recent checkpoint
  – Replay the message log
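The recovery procedure can be sketched in Python with a toy process whose state is a running total. The class and its fields are hypothetical; the `checkpoint` and `log` attributes stand in for stable storage and survive `crash()`, and processing is assumed to be deterministic so that replay reproduces the lost state.

```python
class CheckpointedCounter:
    """A deterministic process whose state is a running total of its messages.

    `checkpoint` and `log` stand in for stable storage: they survive crash().
    """

    def __init__(self):
        self.state = 0
        self.checkpoint = 0
        self.log = []

    def handle(self, message: int) -> None:
        self.log.append(message)  # log first, so the message can be replayed
        self.state += message

    def take_checkpoint(self) -> None:
        self.checkpoint = self.state
        self.log.clear()          # the log is cleared at each checkpoint

    def crash(self) -> None:
        self.state = None         # volatile state is lost

    def recover(self) -> None:
        self.state = self.checkpoint  # restore the most recent checkpoint
        for message in self.log:      # replay the message log
            self.state += message
```

Checkpointing more often keeps the log (and replay time) short but costs more, which is the trade-off behind "they are expensive".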

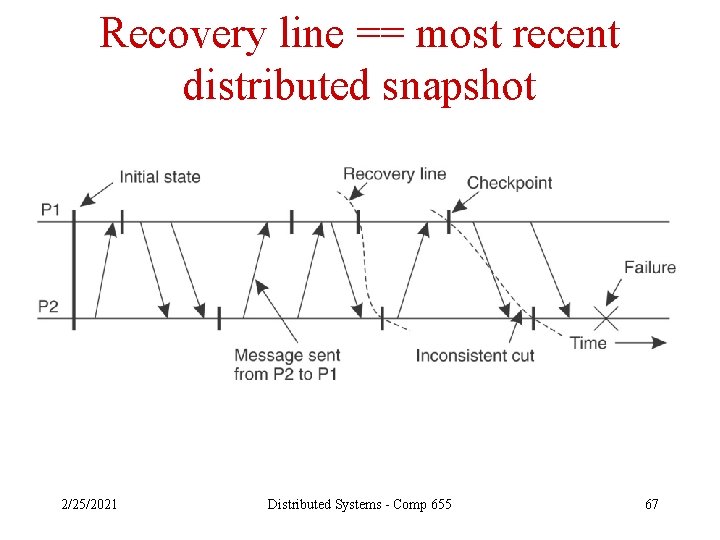

Recovery line = most recent distributed snapshot

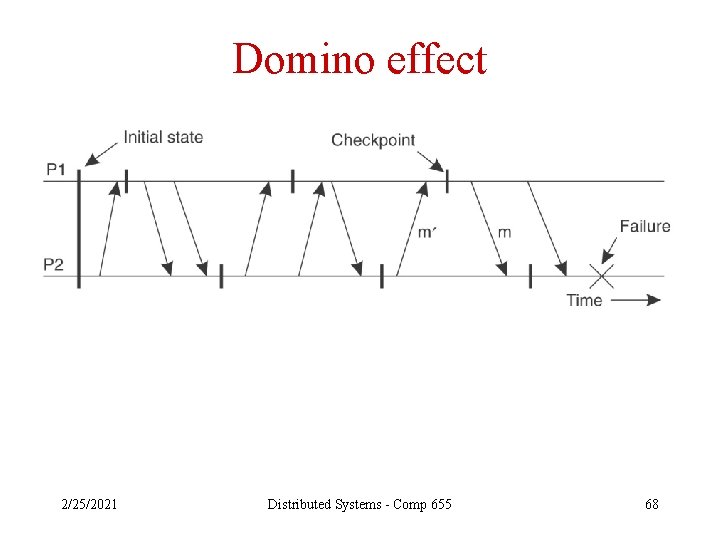

Domino effect

Bonus material
• Implementation – reliable point-to-point communication
• Implementation – process groups
• Implementation – reliable multicast
• Recovery
• Sparing

Sparing
• Not really fault tolerance
• But it can be cheaper, and can restore service quickly after a failure
• Types of spares
  – Cold
  – Hot
  – Warm
• The spare may or may not also have regular responsibilities in the system

Switchover
• Repair is accomplished by switching processing away from a failed server to a spare

Questions on switchover
• Has the failed system really failed?
• Is the spare operational?
• Can the spare handle the load?
  – May need a way to block medium- to low-priority work during switchovers
• How will the spare get access to the failed server’s data?
• What client session data will be preserved, and how?

More switchover questions
• What about configuration files?
• What about network addressing?
• What about switching back after the failed server has been repaired?
  – Partial shutdown of the spare
  – Updating directories to redirect part of the load
  – Making up for lost medium- to low-priority work
