Fast and Practical Algorithms for Computing Runs

- Slides: 20

Fast and Practical Algorithms for Computing Runs

- Gang Chen – McMaster, Ontario, CAN
- Simon J. Puglisi – RMIT, Melbourne, AUS
- Bill Smyth – McMaster, Ontario, CAN

CPM, UWO, July 11, 2007

Overview

- I won’t talk much about runs!
- Lempel-Ziv (LZ) Factorization
- How to compute LZ with SA & LCP
  - Suffix Array & LCP Array Basics (again!)
  - Two different methods for LZ factorization: CPS1 and CPS2
  - Various space-time trade-offs
- Experimental comparison to other approaches

![LZ Factorization (Defn)](https://slidetodoc.com/presentation_image_h/b27bf89976bd61fae07c8fe363d424f6/image-3.jpg)

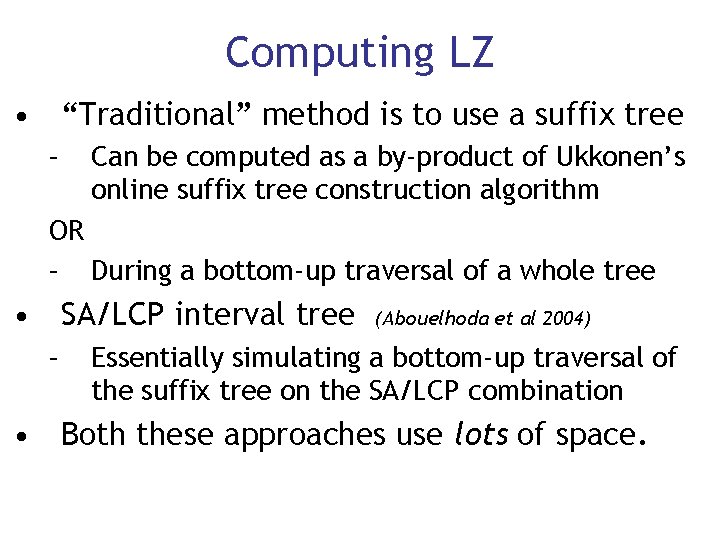

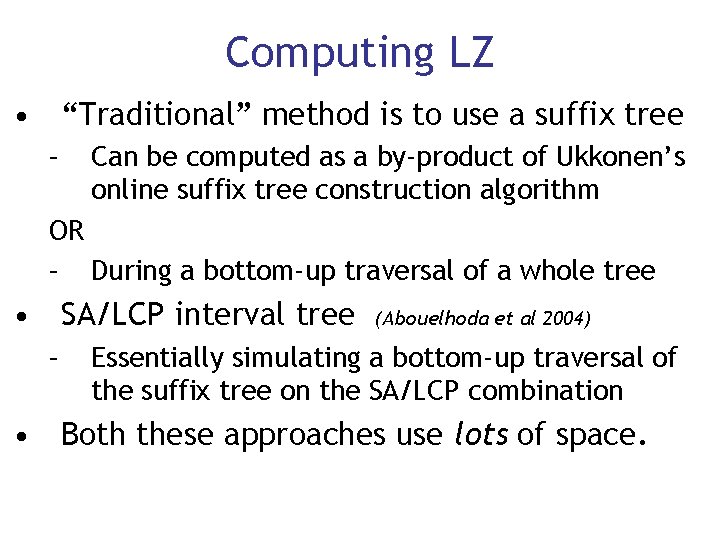

LZ Factorization (Defn)

- The LZ-factorization $LZ_x$ of string $x[1..n]$ is a factorization $x = w_1 w_2 \cdots w_k$ such that each $w_j$, $j \in 1..k$, is either:
  1. a letter that does not occur in $w_1 w_2 \cdots w_{j-1}$; or
  2. the longest substring that occurs at least twice in $w_1 w_2 \cdots w_j$.
- This is the LZ-77 parsing of the input string
- Also known as the S-Factorization (Crochemore)

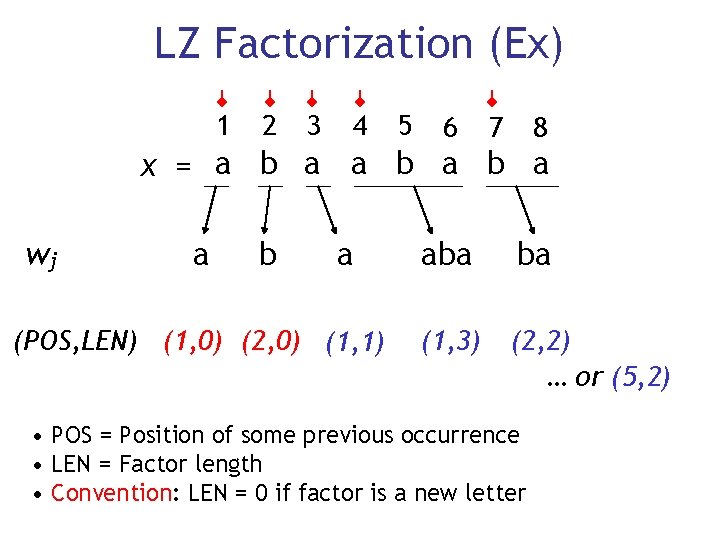

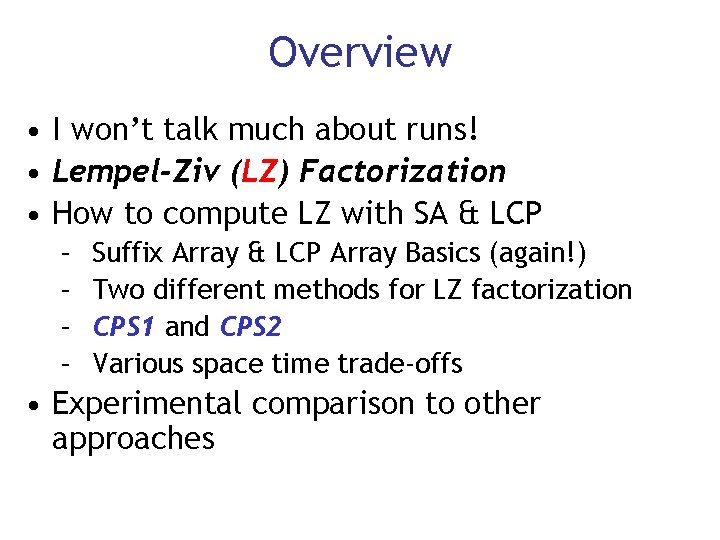

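LZ Factorization (Ex)

| i | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| x | a | b | a | a | b | a | b | a |

| wj | a | b | a | aba | ba |
| --- | --- | --- | --- | --- | --- |
| (POS, LEN) | (1, 0) | (2, 0) | (1, 1) | (1, 3) | (2, 2) … or (5, 2) |

- POS = Position of some previous occurrence
- LEN = Factor length
- Convention: LEN = 0 if factor is a new letter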
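To make the definition concrete, here is a minimal brute-force sketch in Python that produces the (POS, LEN) pairs directly from the definition. The function name `lz_factorize_naive` is hypothetical, positions are 1-based as on the slides, and a new letter is reported with POS equal to its own position and LEN = 0. It runs in quadratic time or worse and is meant only as a reference, not as one of the CPS algorithms.

```python
def lz_factorize_naive(x):
    """Return the LZ factorization of x as a list of (POS, LEN) pairs.

    POS is 1-based. A new letter is reported as (own position, 0).
    Brute force: every earlier start position is tried for every factor.
    """
    n = len(x)
    factors = []
    i = 0  # 0-based start of the next factor
    while i < n:
        best_len, best_pos = 0, i
        for p in range(i):  # candidate earlier start positions
            l = 0
            # the earlier occurrence may overlap the factor itself
            while i + l < n and x[p + l] == x[i + l]:
                l += 1
            if l > best_len:  # strict: ties keep the leftmost candidate
                best_len, best_pos = l, p
        factors.append((best_pos + 1, best_len))
        i += max(best_len, 1)  # a new letter still advances by one
    return factors


print(lz_factorize_naive("abaababa"))
```

On the example string this yields the five factors a, b, a, aba, ba, choosing POS = 2 for the last factor because ties go to the leftmost candidate.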

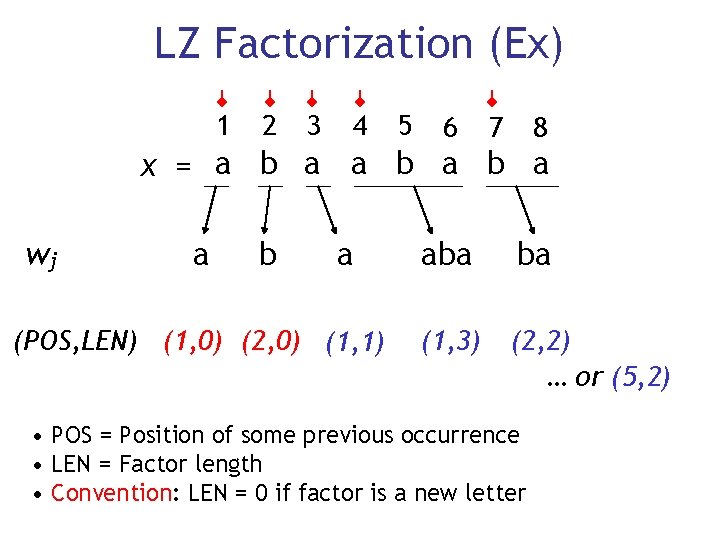

Applications of LZ

LZ factorization is the computational bottleneck in numerous string processing algorithms:

- Computing all runs (Kolpakov & Kucherov)
- Repeats with fixed gap (Kolpakov & Kucherov… again)
- Branching repeats (Gusfield & Stoye)
- Sequence alignment (Crochemore et al.)
- Local periods (Duval et al.)
- Data compression (Lempel & Ziv, many others)
- Etcetera…

Computing LZ

- “Traditional” method is to use a suffix tree
  - Can be computed as a by-product of Ukkonen’s online suffix tree construction algorithm, OR
  - During a bottom-up traversal of a whole tree
- SA/LCP interval tree (Abouelhoda et al. 2004)
  - Essentially simulating a bottom-up traversal of the suffix tree on the SA/LCP combination
- Both these approaches use lots of space.

![The ubiquitous Suffix Array](https://slidetodoc.com/presentation_image_h/b27bf89976bd61fae07c8fe363d424f6/image-7.jpg)

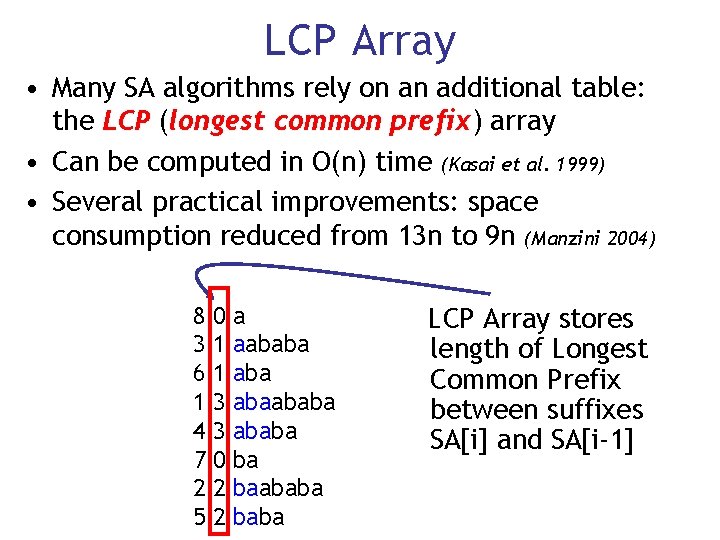

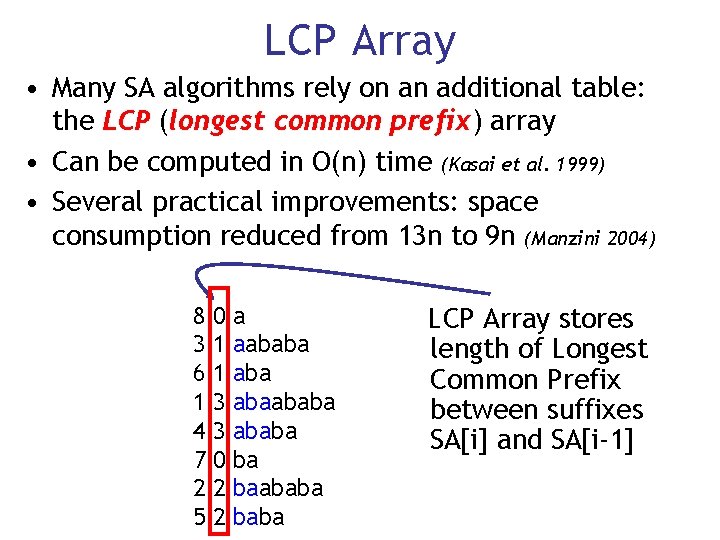

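The ubiquitous Suffix Array

- Sort the n suffixes of x[1..n] into lexicographic order
- Store the offsets in an array

| i | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| x | a | b | a | a | b | a | b | a |

| i | Suffix x[i..n] | SA[i] | Suffix x[SA[i]..n] |
| --- | --- | --- | --- |
| 1 | abaababa | 8 | a |
| 2 | baababa | 3 | aababa |
| 3 | aababa | 6 | aba |
| 4 | ababa | 1 | abaababa |
| 5 | baba | 4 | ababa |
| 6 | aba | 7 | ba |
| 7 | ba | 2 | baababa |
| 8 | a | 5 | baba |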
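The sort-and-store step can be illustrated with a deliberately naive Python sketch. The function name `suffix_array_naive` is hypothetical and positions are 1-based to match the slides. Sorting whole suffix strings costs far more than the specialised suffix array construction algorithms used in practice, so this is only a way to check small examples by hand.

```python
def suffix_array_naive(x):
    """Return the 1-based start positions of the suffixes of x in lexicographic order.

    Naive: sorts by comparing the suffix strings themselves.
    """
    n = len(x)
    return sorted(range(1, n + 1), key=lambda i: x[i - 1:])


print(suffix_array_naive("abaababa"))
```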

LCP Array

- Many SA algorithms rely on an additional table: the LCP (longest common prefix) array
- Can be computed in O(n) time (Kasai et al. 1999)
- Several practical improvements: space consumption reduced from 13n to 9n (Manzini 2004)

| i | SA[i] | LCP[i] | Suffix x[SA[i]..n] |
| --- | --- | --- | --- |
| 1 | 8 | 0 | a |
| 2 | 3 | 1 | aababa |
| 3 | 6 | 1 | aba |
| 4 | 1 | 3 | abaababa |
| 5 | 4 | 3 | ababa |
| 6 | 7 | 0 | ba |
| 7 | 2 | 2 | baababa |
| 8 | 5 | 2 | baba |

The LCP array stores the length of the longest common prefix between suffixes SA[i] and SA[i-1].
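A linear-time LCP computation in the style of Kasai et al. can be sketched as below. This is an illustrative Python version, not the space-reduced variant mentioned on the slide; the function name `lcp_array` and the convention that `sa` holds 1-based positions in a 0-indexed Python list are assumptions. The key idea is to visit suffixes in string order, so the match length carried over from the previous suffix can shrink by at most one.

```python
def lcp_array(x, sa):
    """Return lcp where lcp[r] is the longest common prefix length of the
    suffixes at sa[r - 1] and sa[r], and lcp[0] = 0.

    sa holds 1-based suffix start positions in lexicographic order.
    """
    n = len(x)
    rank = [0] * (n + 1)  # rank[p] = index in sa of the suffix starting at p
    for r, p in enumerate(sa):
        rank[p] = r
    lcp = [0] * n
    h = 0  # current match length, reused between consecutive positions
    for p in range(1, n + 1):
        r = rank[p]
        if r == 0:  # lexicographically smallest suffix has no predecessor
            h = 0
            continue
        q = sa[r - 1]  # predecessor of suffix p in sorted order
        while p + h <= n and q + h <= n and x[p + h - 1] == x[q + h - 1]:
            h += 1
        lcp[r] = h
        if h > 0:
            h -= 1  # dropping the first character loses at most one match
    return lcp


print(lcp_array("abaababa", [8, 3, 6, 1, 4, 7, 2, 5]))
```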

Computing LZ with the SA

- First “family” of LZ algorithms we call CPS1
- CPS1 algorithms compute arrays POS and LEN
- These arrays give us the factor information for every position (which is more than we require)
- Also, LEN is a permutation of LCP

| i | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| x | a | b | a | a | b | a | b | a |
| POS | 1 | 2 | 1 | 1 | 2 | 1 | 2 | 1 |
| LEN | 0 | 0 | 1 | 3 | 2 | 3 | 2 | 1 |
| LCP | 0 | 1 | 1 | 3 | 3 | 0 | 2 | 2 |

CPS1: LZ from SA & LCP

- POS and LEN are computed in a straight left-to-right traversal of the SA/LCP arrays
- We “ascend” the LCP array, saving indexes on the stack until LCP values decrease
- Backtrack using the stack to locate the rightmost i1 < i2 with LCP[i1] < LCP[i2]
- As we go, set the larger position with equal LCP to point leftwards to the smaller one
- 14 lines of C code!
- x, SA, LCP, POS, LEN arrays → 17n + stack
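The flavour of this stack traversal can be sketched as follows. This is not the authors' 14-line C code. It is a Python sketch of a closely related stack technique, in which each suffix is compared with the nearest suffixes on either side in SA order that start at a smaller position. The function name `lz_pos_len`, the 1-based position convention, the sentinel value 0, and reporting a new letter with POS equal to its own position are all assumptions made for illustration. The POS it returns is some earlier occurrence of maximal length, not necessarily the leftmost one.

```python
def lz_pos_len(sa, lcp):
    """Compute POS and LEN for every position from SA and LCP in one pass.

    sa: 1-based suffix positions in lexicographic order.
    lcp[i]: longest common prefix of suffixes sa[i - 1] and sa[i]; lcp[0] = 0.
    Returns (pos, length), both indexed 1..n with index 0 unused.
    """
    n = len(sa)
    pos = [0] * (n + 1)
    length = [0] * (n + 1)
    # Each entry is (position, lcp with the entry directly below it).
    # Positions strictly increase from the bottom of the stack to the top.
    stack = []
    for i in range(n + 1):
        cur_pos = sa[i] if i < n else 0   # sentinel 0 flushes the stack
        cur_lcp = lcp[i] if i < n else 0  # lcp between the stack top and rank i
        while stack and cur_pos < stack[-1][0]:
            top_pos, top_lcp = stack.pop()
            if top_lcp >= cur_lcp:
                # best match is the smaller position to the left in SA order
                best_len = top_lcp
                best_pos = stack[-1][0] if top_lcp > 0 else top_pos
            else:
                # best match is the smaller position to the right in SA order
                best_len, best_pos = cur_lcp, cur_pos
            pos[top_pos], length[top_pos] = best_pos, best_len
            cur_lcp = min(cur_lcp, top_lcp)  # lcp between new top and rank i
        stack.append((cur_pos, cur_lcp if stack else 0))
    return pos, length


pos, length = lz_pos_len([8, 3, 6, 1, 4, 7, 2, 5], [0, 1, 1, 3, 3, 0, 2, 2])
print(pos[1:], length[1:])
```

Every position is pushed and popped once, so the traversal is linear in n once SA and LCP are available.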

![Overwrite LCP with POS](https://slidetodoc.com/presentation_image_h/b27bf89976bd61fae07c8fe363d424f6/image-11.jpg)

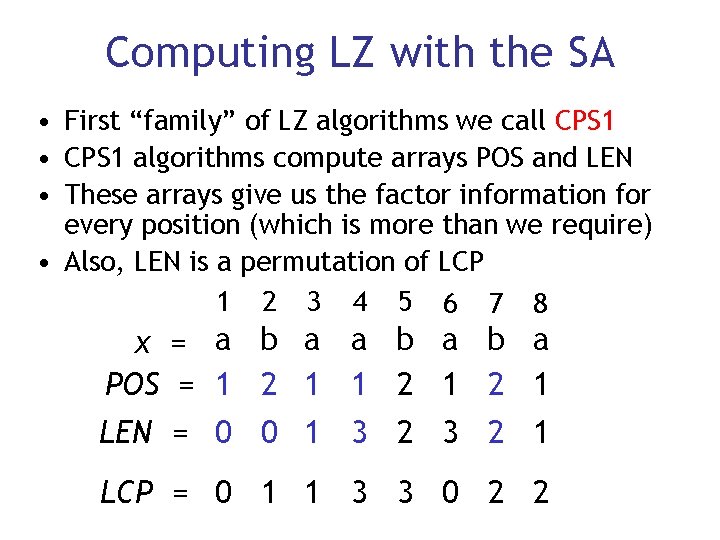

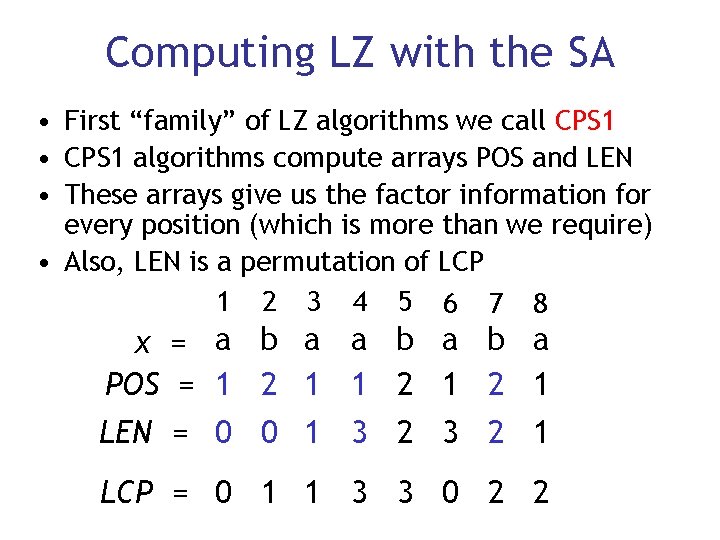

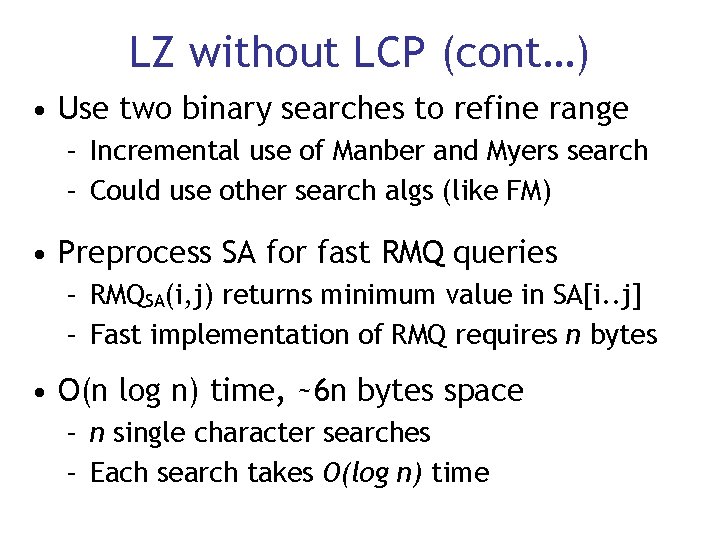

Overwrite LCP with POS

- Once POS[SA[i]] has been assigned
  - SA[i] and LCP[i] are no longer accessed…
- Reuse the space
  - Leave SA[i] as is
  - Assign LCP[i] = POS[SA[i]]
  - Store LEN separately as before
- After the traversal of SA/LCP is complete, permute the SA and “LCP” arrays in place into string order by following all cycles
- POS array no longer needed → 13n + stack
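The in-place permutation by cycle following can be sketched like this. It is a minimal Python illustration under stated assumptions: the function name `permute_into_string_order` is hypothetical, `sa` holds 1-based positions, and `val` stands for the overwritten “LCP” array, whose entry at rank i belongs at position sa[i]. Each swap sends one pair to its final slot, so the total work is linear and no extra array is needed.

```python
def permute_into_string_order(sa, val):
    """Rearrange val in place so that the value stored at rank i ends up
    at index sa[i] - 1, i.e. in string order. sa becomes 1, 2, ..., n.
    """
    n = len(sa)
    for i in range(n):
        # Keep sending the pair at slot i to its home slot until the pair
        # that belongs at slot i arrives; this walks one cycle of sa.
        while sa[i] != i + 1:
            j = sa[i] - 1  # home slot of the pair currently at i
            sa[i], sa[j] = sa[j], sa[i]
            val[i], val[j] = val[j], val[i]


sa = [8, 3, 6, 1, 4, 7, 2, 5]
val = [3, 1, 1, 1, 1, 2, 2, 2]  # example POS values listed in SA order
permute_into_string_order(sa, val)
print(val)
```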

![Eliminate the LEN Array](https://slidetodoc.com/presentation_image_h/b27bf89976bd61fae07c8fe363d424f6/image-12.jpg)

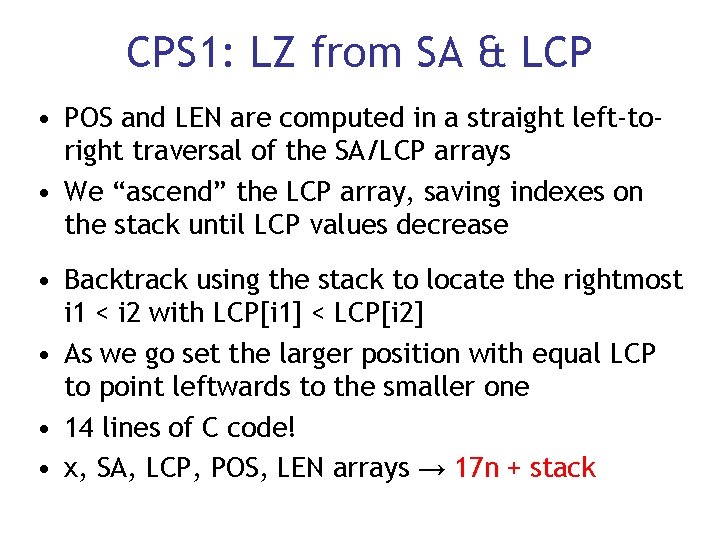

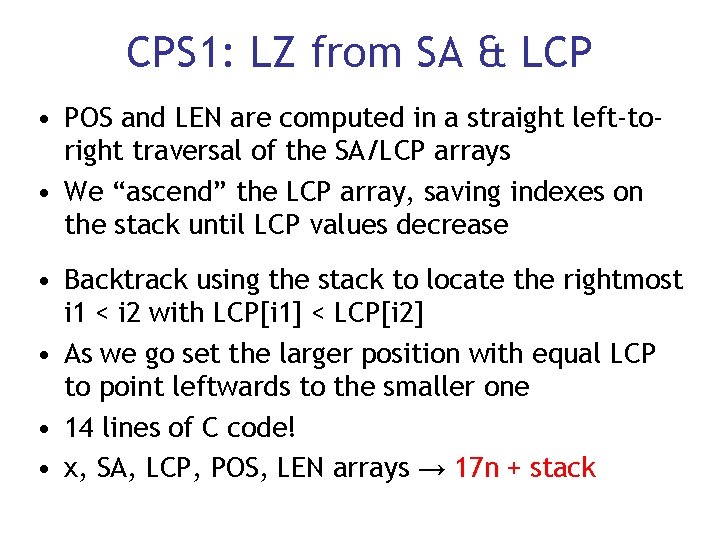

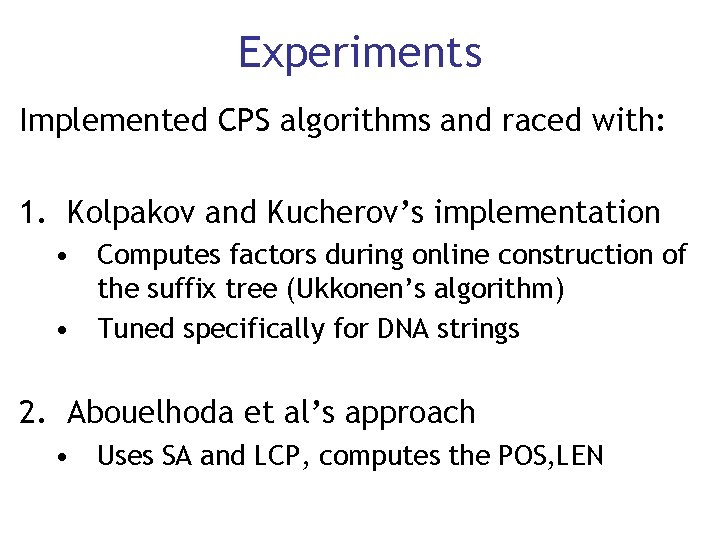

Eliminate the LEN Array

- Given POS[i] = p
  - LEN[i] = longestmatch(x[POS[i]…n], x[i…n])
- Compute only the POS values
  - Permute them into the POS array (as last slide)
- Compute LEN values only for factors in the parsing
- The sum of the factor lengths required for the parsing is n, so this is still O(n) time
- LEN array no longer needed → 9n + stack
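Recovering the factors from POS alone can be sketched as below. The function name `factors_from_pos` is hypothetical, `pos` is indexed 1..n with index 0 unused, and the sketch assumes that POS[i] = i marks a new letter, which matches the (1, 0) and (2, 0) pairs in the earlier example but is not stated as a general rule on the slides. Character comparisons are only made at factor starts, so successful comparisons sum to at most n.

```python
def factors_from_pos(x, pos):
    """Return the LZ factors as (POS, LEN) pairs using only the POS array.

    pos is indexed 1..n (index 0 unused). pos[i] == i is taken to mean
    that the factor starting at i is a new letter (LEN = 0).
    """
    n = len(x)
    factors = []
    i = 1
    while i <= n:
        p = pos[i]
        l = 0
        if p != i:
            # longestmatch(x[p..n], x[i..n]) by direct comparison
            while i + l <= n and x[p + l - 1] == x[i + l - 1]:
                l += 1
        factors.append((p, l))
        i += max(l, 1)  # skip to the start of the next factor
    return factors


print(factors_from_pos("abaababa", [0, 1, 2, 1, 1, 2, 1, 2, 3]))
```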

CPS2: LZ without LCP

- LCP computation is slow (though linear) and requires extra space: can we drop it?
- Use SA to search for the longest previous match at each position in the factorization
  - Problem is: we don’t want any match, we want a match to the left.
  - When do we stop the search?

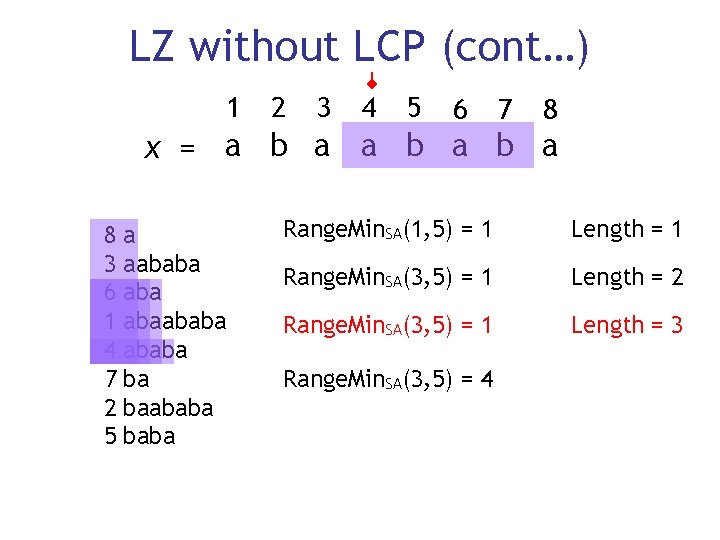

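LZ without LCP (cont…)

| i | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| x | a | b | a | a | b | a | b | a |
| SA | 8 | 3 | 6 | 1 | 4 | 7 | 2 | 5 |

Sorted suffixes: a, aababa, aba, abaababa, ababa, ba, baababa, baba

Searching for the factor that starts at position 4:

- RangeMinSA(1, 5) = 1, Length = 1
- RangeMinSA(3, 5) = 1, Length = 2
- RangeMinSA(3, 5) = 1, Length = 3
- RangeMinSA(5, 5) = 4, which is not to the left of position 4, so the search stops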

LZ without LCP (cont…)

- Use two binary searches to refine range
  - Incremental use of Manber and Myers search
  - Could use other search algorithms (like FM)
- Preprocess SA for fast RMQ queries
  - RMQSA(i, j) returns minimum value in SA[i..j]
  - Fast implementation of RMQ requires n bytes
- O(n log n) time, ~6n bytes space
  - n single character searches
  - Each search takes O(log n) time
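The search-and-stop idea can be sketched in Python as follows. This is an illustration under stated assumptions rather than the CPS2 implementation: the function name `lz_cps2_sketch` and its helpers are hypothetical, positions are 1-based, and the range minimum is taken with a plain `min` over a slice as a stand-in for a preprocessed RMQ structure, so the sketch does not achieve the O(n log n) bound. The search for a factor stops as soon as the smallest SA value in the refined range is no longer to the left of the factor start.

```python
def lz_cps2_sketch(x, sa):
    """LZ factorization from the suffix array alone, as (POS, LEN) pairs.

    sa holds 1-based suffix positions in lexicographic order.
    A new letter is reported as (own position, 0).
    """
    n = len(x)

    def char_at(rank, offset):
        start = sa[rank] + offset  # 1-based index of the wanted character
        return x[start - 1] if start <= n else ""  # "" sorts before letters

    def first_rank(lo, hi, offset, c, strict):
        # Binary search in ranks lo..hi for the first rank whose character
        # at the given offset is >= c (strict=False) or > c (strict=True).
        hi += 1
        while lo < hi:
            mid = (lo + hi) // 2
            ch = char_at(mid, offset)
            if ch < c or (strict and ch == c):
                lo = mid + 1
            else:
                hi = mid
        return lo

    factors = []
    i = 1
    while i <= n:
        lo, hi, length, pos = 0, n - 1, 0, i
        while i + length <= n:
            c = x[i + length - 1]
            new_lo = first_rank(lo, hi, length, c, False)
            new_hi = first_rank(lo, hi, length, c, True) - 1
            # Range is never empty: suffix i itself always lies inside it.
            leftmost = min(sa[new_lo:new_hi + 1])  # stand-in for RMQ on SA
            if leftmost >= i:
                break  # no occurrence starts to the left of i
            lo, hi, length, pos = new_lo, new_hi, length + 1, leftmost
        factors.append((pos, length))
        i += max(length, 1)
    return factors


print(lz_cps2_sketch("abaababa", [8, 3, 6, 1, 4, 7, 2, 5]))
```

Because the range minimum is used as POS, this sketch reports the leftmost occurrence of each factor.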

Experiments

Implemented CPS algorithms and raced with:

1. Kolpakov and Kucherov’s implementation
   - Computes factors during online construction of the suffix tree (Ukkonen’s algorithm)
   - Tuned specifically for DNA strings
2. Abouelhoda et al.’s approach
   - Uses SA and LCP, computes POS and LEN

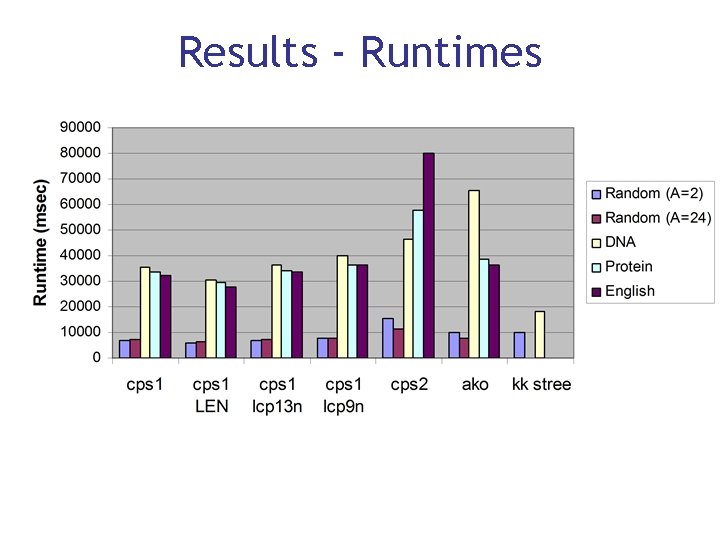

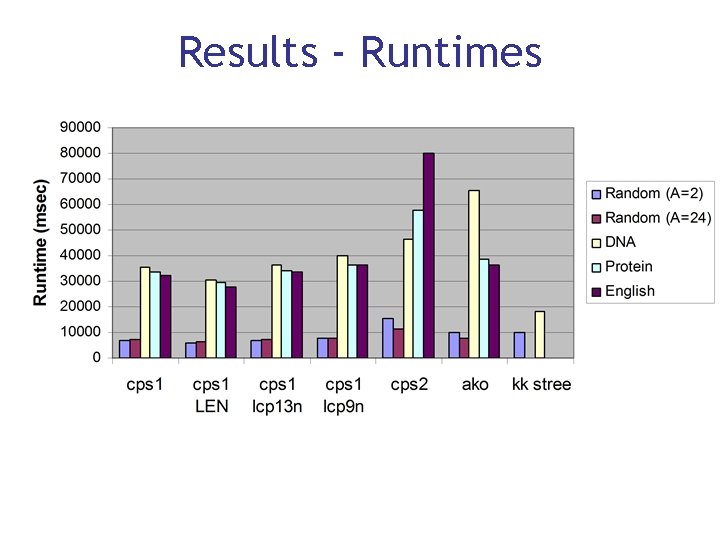

Results - Runtimes

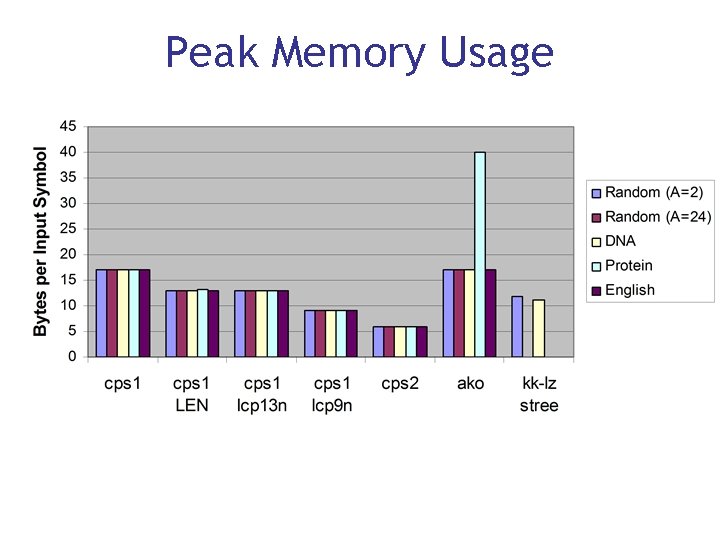

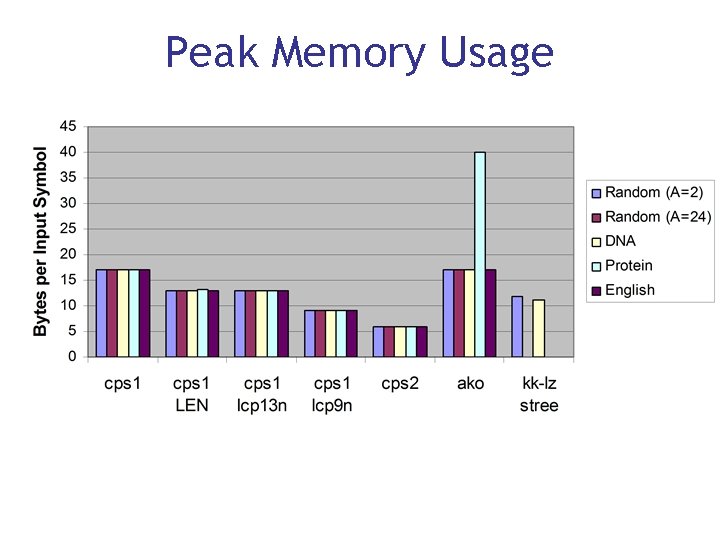

Peak Memory Usage

Conclusions

- KK remains the fastest algorithm on DNA
- CPS1 (13n) is consistently fastest on larger alphabets (notably faster than AKO)
- CPS1 (9n) provides a nice space-time tradeoff
- CPS2 is most suitable if memory is tight

Future Work

- Computing the LCP array is a burden
  - Can we speed it up?
  - Compute it during SA construction?
- How easily do these algorithms map to compressed SAs?
  - Overwriting SA/LCP difficult in that setting
- Can LZ be computed efficiently without using SA/LCP or STree?
- Can we compute the rightmost previous POS instead of the leftmost? (Veli Makinen, 7-9-2007)