FANCCO 2015, IDRBT, Hyderabad, 17-19 December 2015

Spiking Neural Networks for On-Line Temporal Stream Data Modelling and Prediction
Prof. Nikola Kasabov, FIEEE, FRSNZ, DV Fellow RAE UK
Director, Knowledge Engineering and Discovery Research Institute (KEDRI), Auckland University of Technology, New Zealand
Visiting Professor, Shanghai Jiaotong University and ETH/UZH Zurich
nkasabov@aut.ac.nz
www.kedri.aut.ac.nz

The Knowledge Engineering and Discovery Research Institute (KEDRI), Auckland, NZ
KEDRI 2015

Content
1. Problems with temporal stream data (TSD).
2. Why SNN?
3. Design and implementation of SNN systems for deep learning and predictive modelling on TSD. NeuCube.
4. NeuCube applications for TSD.
5. Future directions.
6. Software, data and papers: http://www.kedri.aut.ac.nz/neucube/

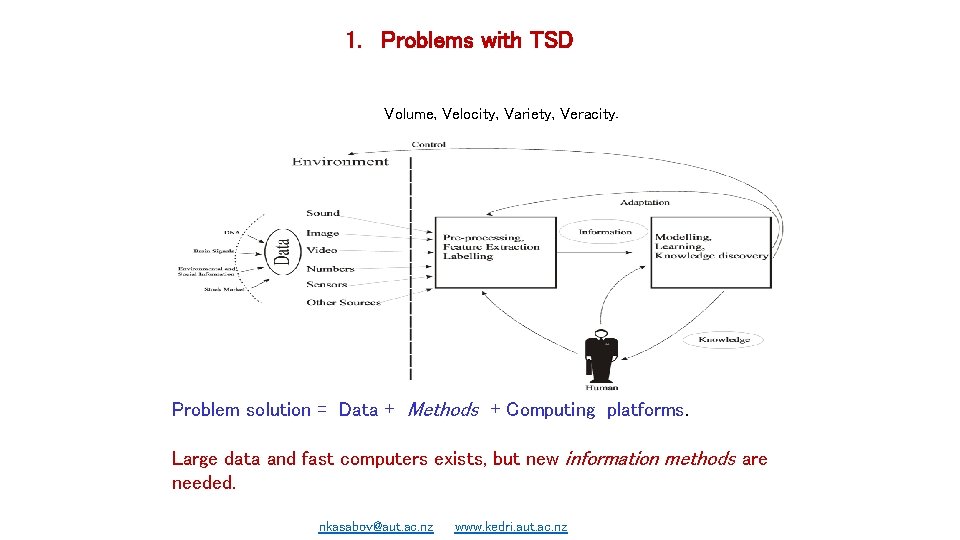

1. Problems with TSD
Volume, Velocity, Variety, Veracity.
Problem solution = Data + Methods + Computing platforms.
Large data and fast computers exist, but new information methods are needed.

Examples of TSD
- Business
- Finance
- Economics
- Geology and environment
- Social networks
- Astronomy
- Chemical informatics
- Health informatics
- Ecological informatics
- Quantum physics
- Brain data in neuroinformatics
- Genomics and proteomics data in bioinformatics

Problems with TSD
Different types:
- Temporal (e.g. climate data)
- Fixed spatial locations of variables (e.g. brain data)
- Changing locations of variables (e.g. moving objects)
- Spectro-temporal (e.g. radio-astronomy; audio)

Different spatio-temporal characteristics:
- Sparse features/low frequency (e.g. climate; ecology; marketing);
- Sparse features/high frequency (e.g. financial data; seismic data; EEG);
- Dense features/low frequency (e.g. business and economic data; fMRI);
- Dense features/high frequency (e.g. global financial systems; astronomy).

Challenges with big TSD:
- Multimodal and multidimensional;
- Complex temporal interactions over time;
- Changing dynamics;
- Noisy;
- Difficult to capture dynamic patterns from TSD for predictive modelling!

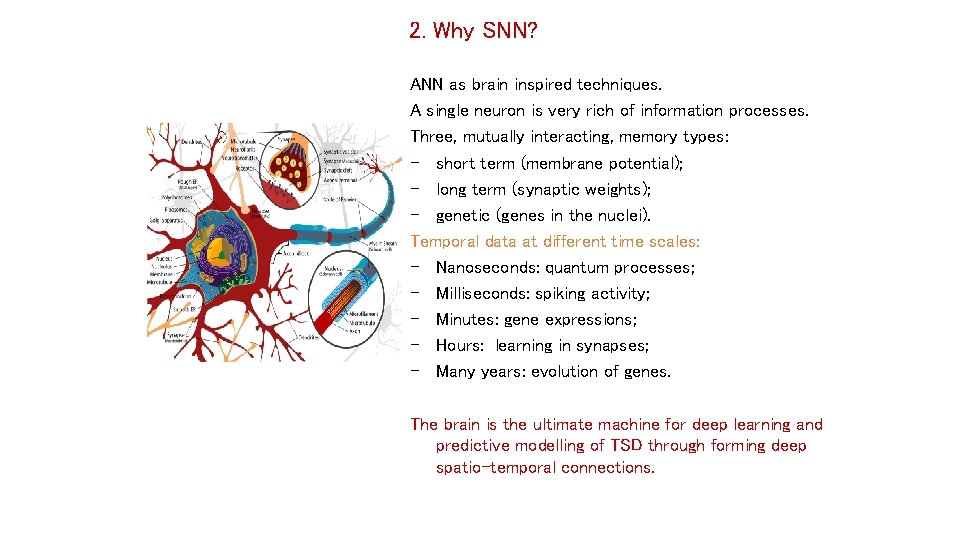

2. Why SNN?
ANN as brain-inspired techniques. A single neuron is very rich in information processes.
Three mutually interacting memory types:
- short term (membrane potential);
- long term (synaptic weights);
- genetic (genes in the nuclei).

Temporal data at different time scales:
- Nanoseconds: quantum processes;
- Milliseconds: spiking activity;
- Minutes: gene expressions;
- Hours: learning in synapses;
- Many years: evolution of genes.

The brain is the ultimate machine for deep learning and predictive modelling of TSD through forming deep spatio-temporal connections.

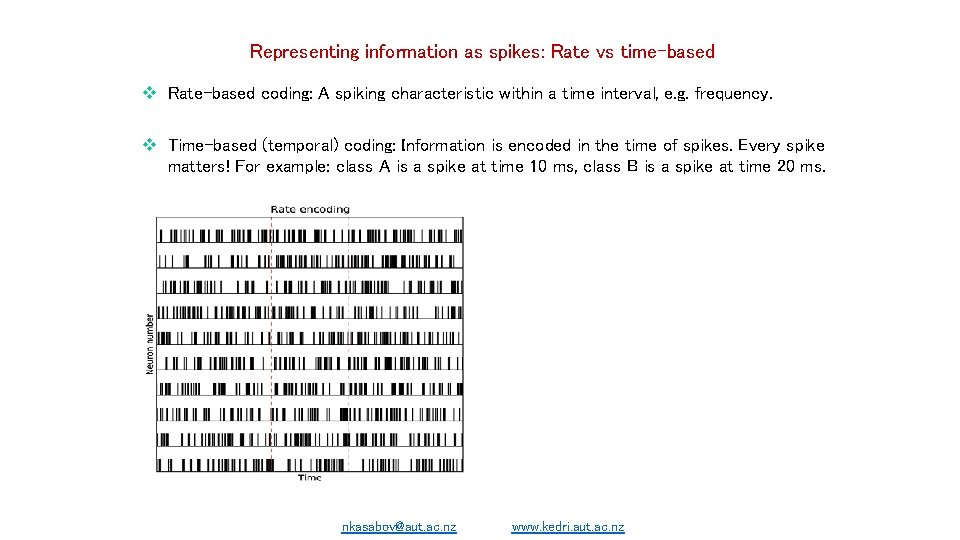

Representing information as spikes: rate vs time-based
- Rate-based coding: a spiking characteristic within a time interval, e.g. frequency.
- Time-based (temporal) coding: information is encoded in the time of spikes. Every spike matters! For example: class A is a spike at time 10 ms, class B is a spike at time 20 ms.
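
The class A / class B example can be made concrete with a minimal Python sketch. The function names, the 50 ms window and the nearest-reference-time decision rule are illustrative assumptions, not part of the slides. It shows that two single-spike trains with the same rate are still separable by spike time.

```python
def rate_code(spike_times_ms, window_ms):
    """Rate-based reading: number of spikes per second within the window."""
    return len(spike_times_ms) / (window_ms / 1000.0)


def first_spike_class(spike_times_ms, class_times_ms):
    """Time-based reading: pick the class whose reference time is
    closest to the first spike (hypothetical decision rule)."""
    first = min(spike_times_ms)
    return min(class_times_ms, key=lambda c: abs(class_times_ms[c] - first))


# Reference times taken from the slide: class A at 10 ms, class B at 20 ms.
class_times = {"A": 10.0, "B": 20.0}
train_a = [10.0]  # one spike at 10 ms
train_b = [20.0]  # one spike at 20 ms

# Both trains have the same rate, so a rate code cannot tell them apart.
same_rate = rate_code(train_a, 50.0) == rate_code(train_b, 50.0)

# A temporal code separates them by spike time.
label_a = first_spike_class(train_a, class_times)
label_b = first_spike_class(train_b, class_times)
```

Here `same_rate` is true while `label_a` and `label_b` differ, which is the sense in which every spike matters under temporal coding.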

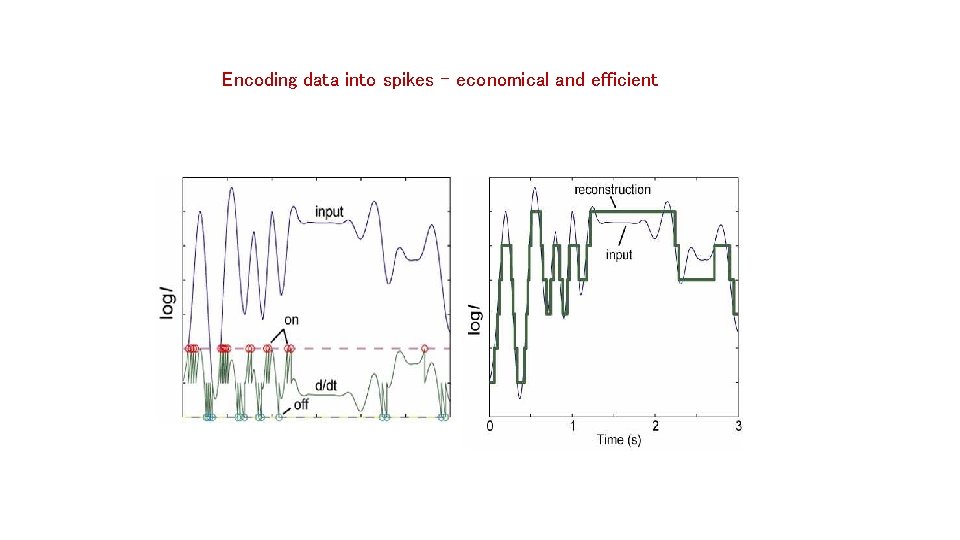

Encoding data into spikes: economical and efficient
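
The slide text does not name a specific encoding scheme. As an illustration only, the sketch below assumes a simple threshold-based (delta) encoder, which emits a spike only when the signal changes by more than a threshold between consecutive samples. The function name, the signal and the threshold value are hypothetical.

```python
def threshold_encode(signal, threshold):
    """Encode a sampled signal as a list of +1 / -1 / 0 events.

    +1: the signal rose by more than `threshold` since the previous sample
    -1: the signal fell by more than `threshold`
     0: no spike (change within the threshold)
    """
    spikes = []
    for prev, cur in zip(signal, signal[1:]):
        diff = cur - prev
        if diff > threshold:
            spikes.append(1)
        elif diff < -threshold:
            spikes.append(-1)
        else:
            spikes.append(0)
    return spikes


signal = [0.0, 0.1, 0.9, 1.0, 0.2, 0.2]
spikes = threshold_encode(signal, threshold=0.5)
# Only the two large changes produce events: [0, 1, 0, -1, 0]
```

Only changes are transmitted, so slowly varying stretches of the signal produce no events at all. This is one plausible reading of "economical".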

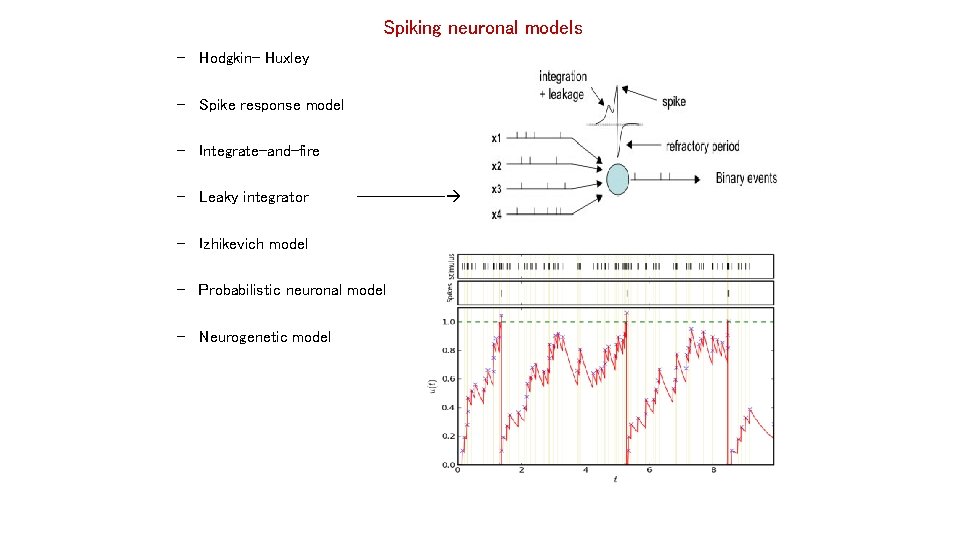

Spiking neuronal models
- Hodgkin-Huxley
- Spike response model
- Integrate-and-fire
- Leaky integrator
- Izhikevich model
- Probabilistic neuronal model
- Neurogenetic model
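
To illustrate the simplest of these models, here is a minimal leaky integrate-and-fire sketch using forward Euler integration. All parameter values and the unit-free formulation are assumptions made for illustration; they are not taken from the slides.

```python
def simulate_lif(input_current, dt=1.0, tau=10.0,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Simulate a leaky integrate-and-fire neuron.

    input_current: one input value per time step.
    Returns the indices of the time steps at which the neuron spiked.
    """
    v = v_rest
    spike_steps = []
    for step, i_in in enumerate(input_current):
        # Leak toward the resting potential plus integration of the input.
        v += dt * (-(v - v_rest) / tau + i_in)
        if v >= v_thresh:
            spike_steps.append(step)
            v = v_reset  # reset the membrane potential after a spike
    return spike_steps


# A constant supra-threshold input gives regular spiking.
strong = simulate_lif([0.2] * 20)
# A weak input saturates below threshold because of the leak: no spikes.
weak = simulate_lif([0.05] * 20)
```

With these values the membrane potential under the weak input settles near 0.5, below the threshold of 1.0, so the leak alone prevents firing.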

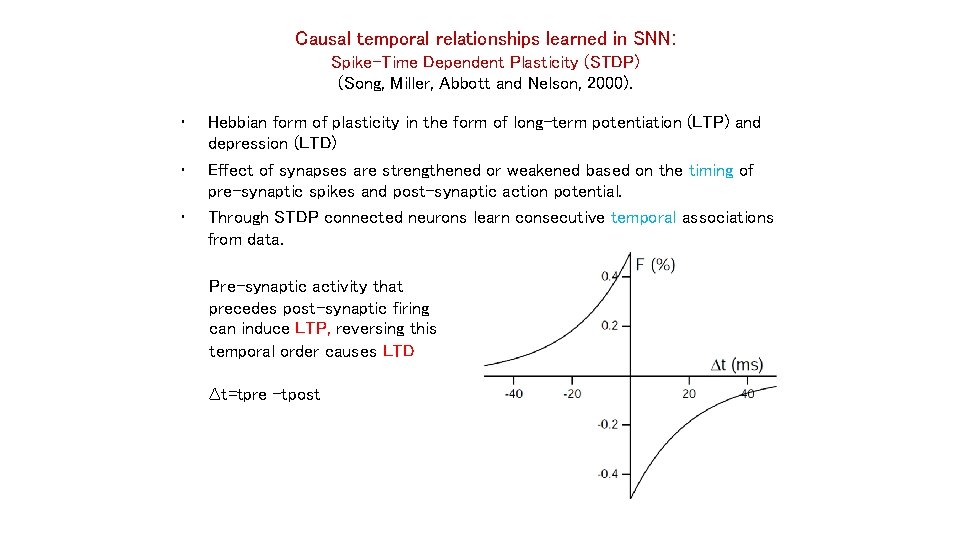

Causal temporal relationships learned in SNN: Spike-Time Dependent Plasticity (STDP) (Song, Miller, Abbott and Nelson, 2000)
- Hebbian form of plasticity in the form of long-term potentiation (LTP) and depression (LTD).
- Synapses are strengthened or weakened based on the timing of pre-synaptic spikes and post-synaptic action potentials.
- Through STDP, connected neurons learn consecutive temporal associations from data.
- Pre-synaptic activity that precedes post-synaptic firing can induce LTP; reversing this temporal order causes LTD.

$\Delta t = t_{pre} - t_{post}$
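
A minimal sketch of an STDP weight update, using the slide's sign convention (Δt = t_pre − t_post, so Δt < 0 means the pre-synaptic spike came first). The exponential window and all amplitudes and time constants are illustrative assumptions rather than values from the talk.

```python
import math


def stdp_dw(delta_t_ms, a_plus=0.01, a_minus=0.012,
            tau_plus=20.0, tau_minus=20.0):
    """Weight change for one pre/post spike pair.

    delta_t_ms = t_pre - t_post
    delta_t_ms < 0: pre before post -> potentiation (LTP)
    delta_t_ms > 0: post before pre -> depression (LTD)
    """
    if delta_t_ms < 0:
        return a_plus * math.exp(delta_t_ms / tau_plus)
    if delta_t_ms > 0:
        return -a_minus * math.exp(-delta_t_ms / tau_minus)
    return 0.0  # simultaneous spikes: no change in this sketch


ltp_near = stdp_dw(-5.0)   # pre leads post by 5 ms
ltp_far = stdp_dw(-40.0)   # pre leads post by 40 ms
ltd_near = stdp_dw(5.0)    # post leads pre by 5 ms
```

The change is largest for spike pairs that are close in time and decays as the interval grows, which is what lets connected neurons pick up consecutive temporal associations.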

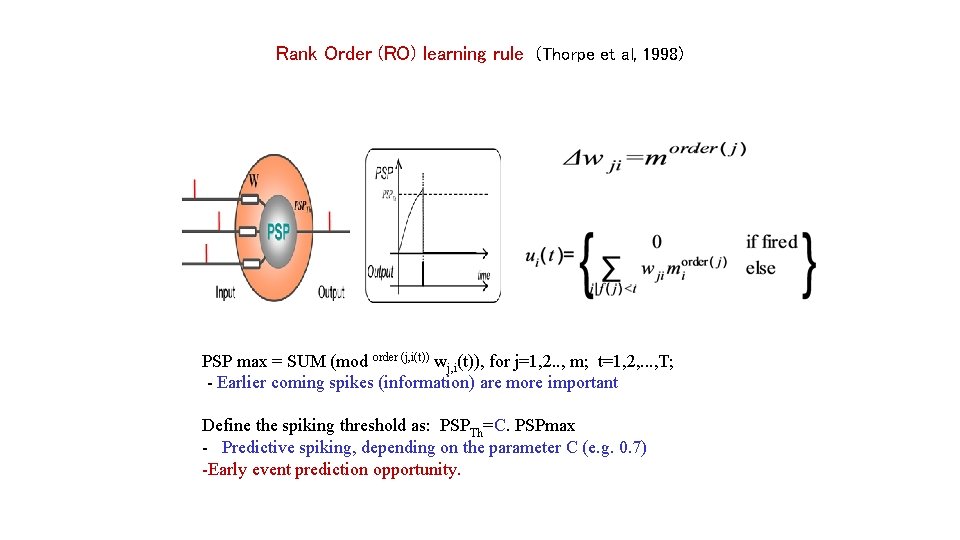

Rank Order (RO) learning rule (Thorpe et al, 1998)

$PSP_{max} = \sum \left( mod^{\,order(j,i(t))} \, w_{j,i}(t) \right)$, for $j = 1, 2, \dots, m$; $t = 1, 2, \dots, T$

- Earlier coming spikes (information) are more important.

Define the spiking threshold as: $PSP_{Th} = C \cdot PSP_{max}$
- Predictive spiking, depending on the parameter C (e.g. 0.7).
- Early event prediction opportunity.
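
The effect of the threshold parameter C can be sketched as follows, assuming one spike per input synapse, weights set to mod raised to the rank of the spike, and illustrative values mod = 0.8 and C = 0.7. The function names and the input names are hypothetical.

```python
def rank_order_weights(spike_order, mod):
    """w_j = mod ** order(j); order 0 is the earliest spike."""
    return {j: mod ** order for j, order in spike_order.items()}


def psp_max(spike_order, weights, mod):
    """Sum of mod ** order(j) * w_j over all input synapses."""
    return sum(mod ** order * weights[j] for j, order in spike_order.items())


def spikes_needed(spike_order, weights, mod, c):
    """Number of input spikes, taken in rank order, after which the
    accumulated PSP reaches the threshold C * PSP_max."""
    threshold = c * psp_max(spike_order, weights, mod)
    psp = 0.0
    for count, j in enumerate(sorted(spike_order, key=spike_order.get), 1):
        psp += mod ** spike_order[j] * weights[j]
        if psp >= threshold:
            return count
    return len(spike_order)


mod, c = 0.8, 0.7
order = {"in0": 0, "in1": 1, "in2": 2, "in3": 3}  # rank of each input's spike
w = rank_order_weights(order, mod)
total = psp_max(order, w, mod)            # 1 + 0.64 + 0.4096 + 0.262144
early = spikes_needed(order, w, mod, c)   # the neuron fires before all inputs arrive
```

In this toy case the output neuron fires after the second of four input spikes, which is the early prediction opportunity the slide refers to.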

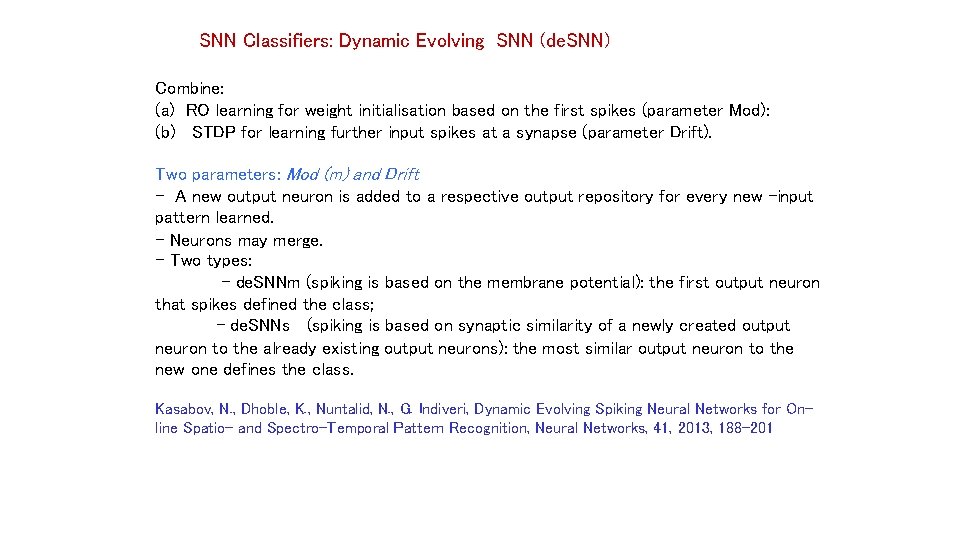

SNN Classifiers: Dynamic Evolving SNN (deSNN)
Combine:
(a) RO learning for weight initialisation based on the first spikes (parameter Mod);
(b) STDP for learning further input spikes at a synapse (parameter Drift).

Two parameters: Mod (m) and Drift
- A new output neuron is added to a respective output repository for every new input pattern learned.
- Neurons may merge.
- Two types:
  - deSNNm (spiking is based on the membrane potential): the first output neuron that spikes defines the class;
  - deSNNs (spiking is based on synaptic similarity of a newly created output neuron to the already existing output neurons): the most similar output neuron to the new one defines the class.

Kasabov, N., Dhoble, K., Nuntalid, N., Indiveri, G., Dynamic Evolving Spiking Neural Networks for Online Spatio- and Spectro-Temporal Pattern Recognition, Neural Networks, 41, 2013, 188-201.
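
A simplified sketch of how the two parameters could act on the weights of one new output neuron. It assumes that the first spike at each synapse sets the weight by rank order, and that afterwards the weight moves up by Drift on every time step with a spike and down by Drift on every step without one. The downward drift, the function name and the data layout are assumptions for illustration; the cited paper is the authoritative description.

```python
def desnn_weights(spike_trains, mod=0.8, drift=0.01):
    """spike_trains: dict mapping synapse name -> list of 0/1 per time step.

    Returns the learned weight per synapse for one input pattern.
    """
    # Time of the first spike at each synapse that spikes at all.
    first = {j: train.index(1) for j, train in spike_trains.items() if 1 in train}
    # Rank synapses by first-spike time (ties keep dictionary order).
    ranked = sorted(first, key=lambda j: first[j])

    weights = {j: 0.0 for j in spike_trains}
    for order, j in enumerate(ranked):
        weights[j] = mod ** order  # (a) rank-order initialisation
        for s in spike_trains[j][first[j] + 1:]:
            weights[j] += drift if s else -drift  # (b) drift on later steps
    return weights


pattern = {
    "a": [1, 0, 1, 1],  # earliest first spike, two more spikes later
    "b": [0, 1, 0, 0],  # second first spike, then silent
    "c": [0, 0, 0, 0],  # never spikes
}
learned = desnn_weights(pattern)
```

Synapse "a" ends slightly above its initial weight of 1.0 because it keeps spiking, while "b" decays from 0.8. The full spike train, not only the first spike, shapes the stored pattern.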

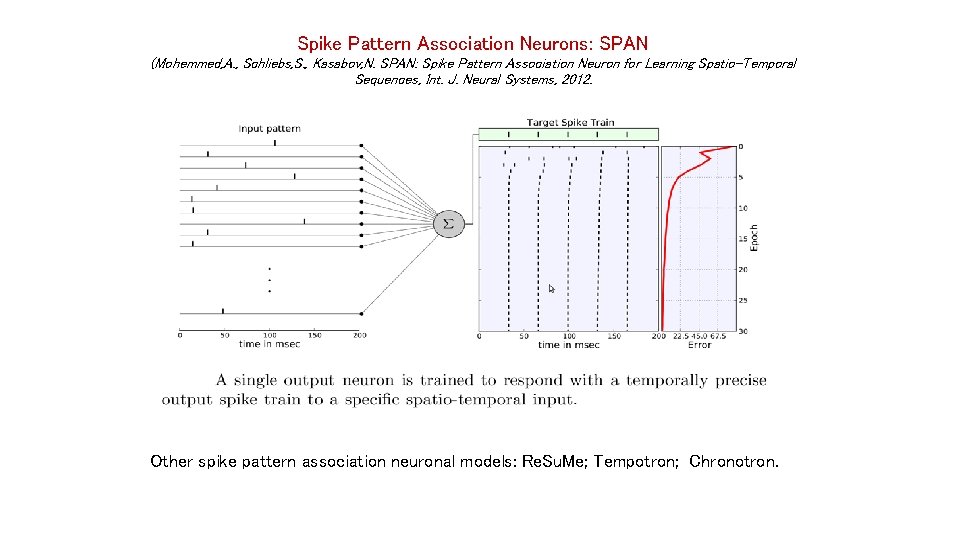

Spike Pattern Association Neurons: SPAN
Mohemmed, A., Schliebs, S., Kasabov, N., SPAN: Spike Pattern Association Neuron for Learning Spatio-Temporal Sequences, Int. J. Neural Systems, 2012.
Other spike pattern association neuronal models: ReSuMe; Tempotron; Chronotron.

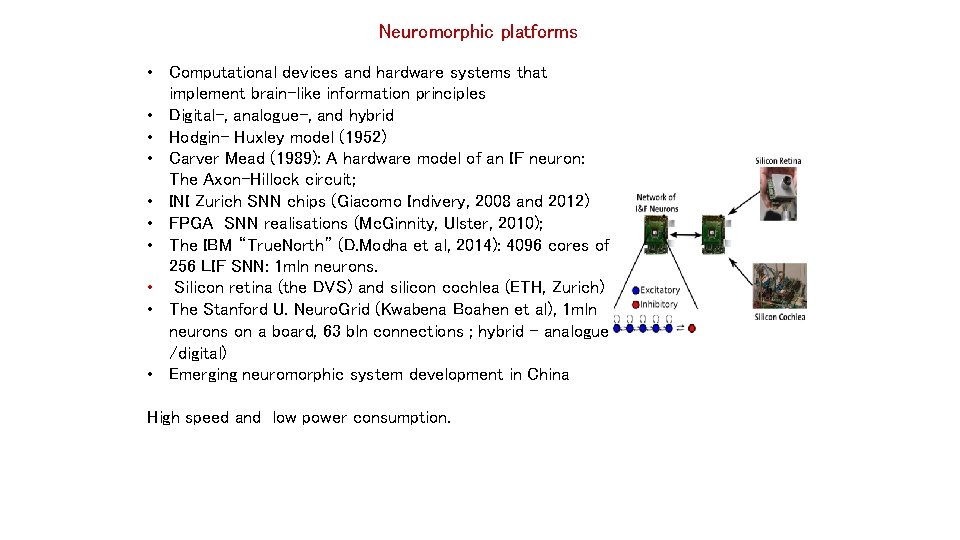

Neuromorphic platforms
- Computational devices and hardware systems that implement brain-like information principles
- Digital, analogue and hybrid
- Hodgkin-Huxley model (1952)
- Carver Mead (1989): a hardware model of an IF neuron, the Axon-Hillock circuit
- INI Zurich SNN chips (Giacomo Indiveri, 2008 and 2012)
- FPGA SNN realisations (McGinnity, Ulster, 2010)
- The IBM "TrueNorth" (D. Modha et al, 2014): 4096 cores of 256 LIF neurons each, 1 mln neurons in total
- Silicon retina (the DVS) and silicon cochlea (ETH, Zurich)
- The Stanford U. Neurogrid (Kwabena Boahen et al): 1 mln neurons on a board, 63 bln connections; hybrid (analogue/digital)
- Emerging neuromorphic system development in China

High speed and low power consumption.

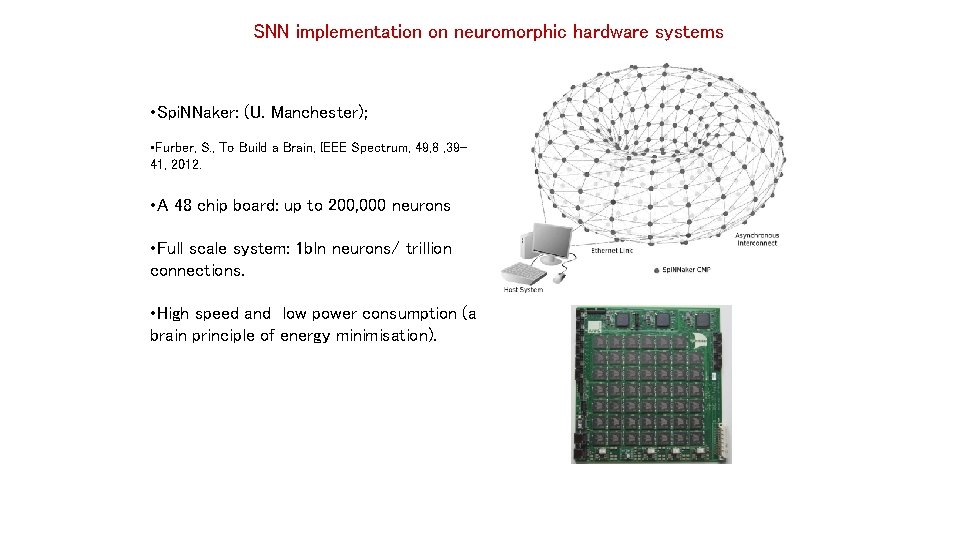

SNN implementation on neuromorphic hardware systems
- SpiNNaker (U. Manchester)
- Furber, S., To Build a Brain, IEEE Spectrum, 49, 8, 39-41, 2012.
- A 48-chip board: up to 200,000 neurons
- Full scale system: 1 bln neurons / trillion connections
- High speed and low power consumption (a brain principle of energy minimisation)

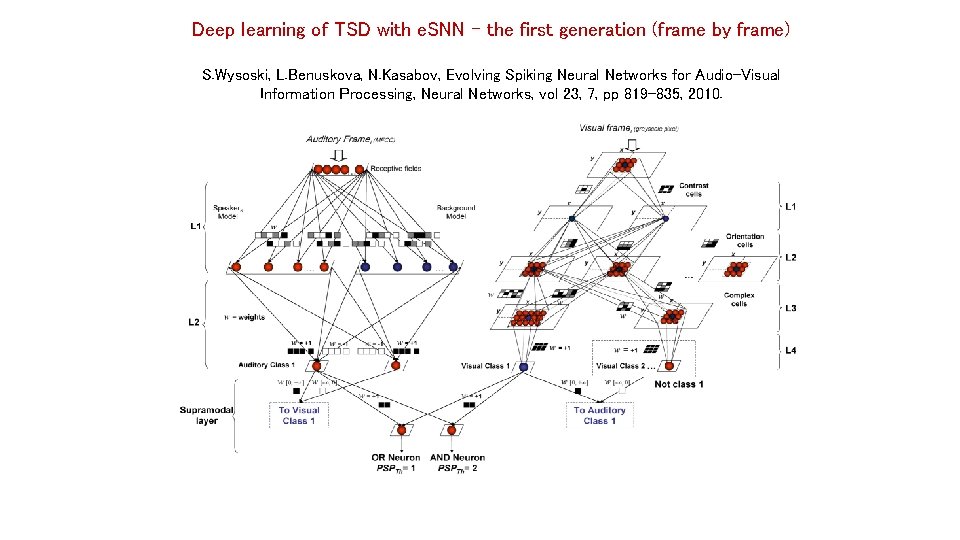

Deep learning of TSD with eSNN: the first generation (frame by frame)
S. Wysoski, L. Benuskova, N. Kasabov, Evolving Spiking Neural Networks for Audio-Visual Information Processing, Neural Networks, vol. 23, 7, pp. 819-835, 2010.

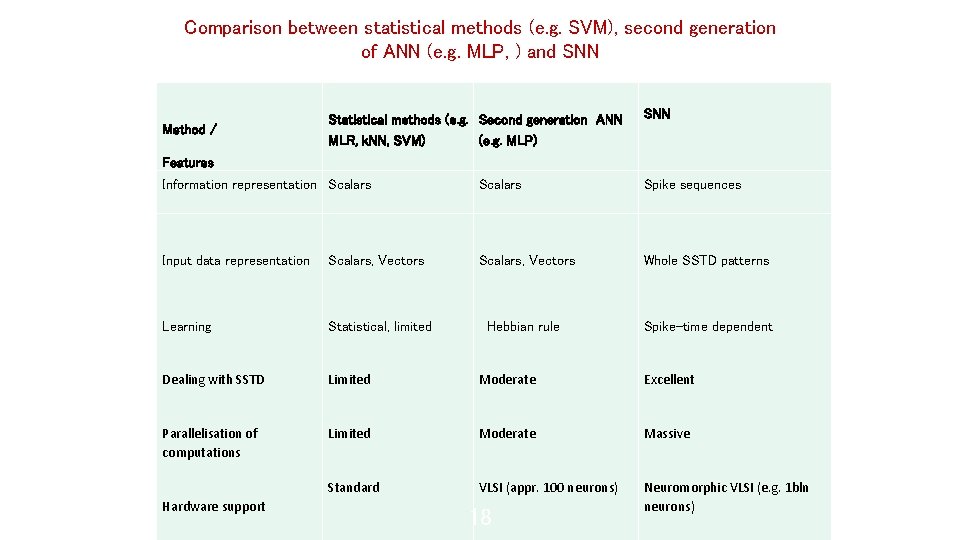

Comparison between statistical methods (e.g. SVM), second generation ANN (e.g. MLP) and SNN

| Features | Statistical methods (e.g. MLR, kNN, SVM) | Second generation ANN (e.g. MLP) | SNN |
|---|---|---|---|
| Information representation | Scalars | Scalars | Spike sequences |
| Input data representation | Scalars, vectors | Scalars, vectors | Whole SSTD patterns |
| Learning | Statistical, limited | Hebbian rule | Spike-time dependent |
| Dealing with SSTD | Limited | Moderate | Excellent |
| Parallelisation of computations | Limited | Moderate | Massive |
| Hardware support | Standard | VLSI (appr. 100 neurons) | Neuromorphic VLSI (e.g. 1 bln neurons) |

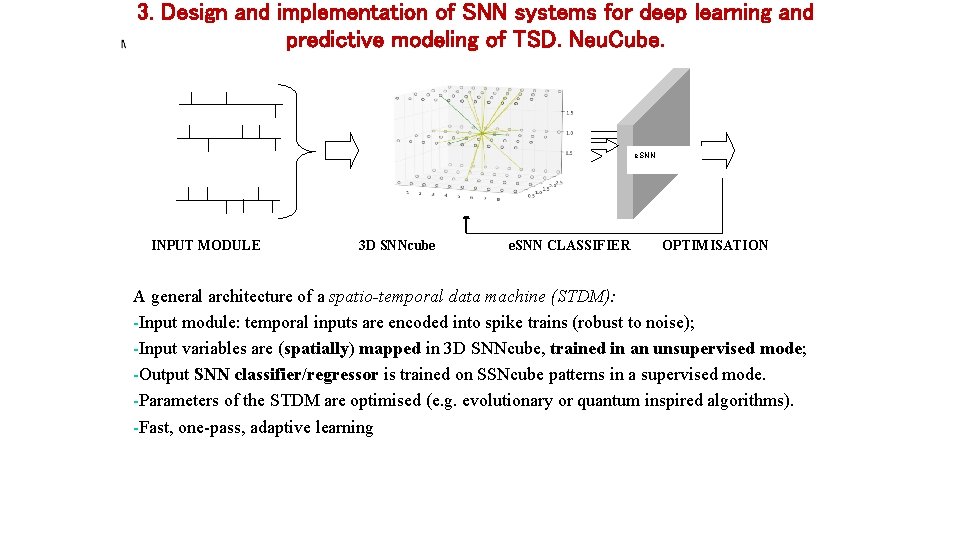

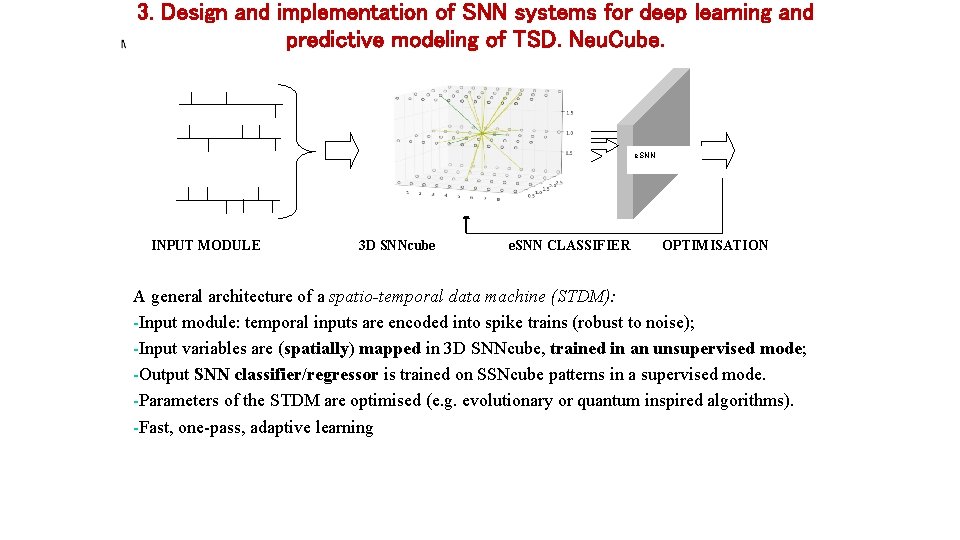

3. Design and implementation of SNN systems for deep learning and predictive modelling of TSD. NeuCube.
[Diagram blocks: Input module; 3D SNNcube (SNNr); eSNN classifier; Optimisation]
A general architecture of a spatio-temporal data machine (STDM):
- Input module: temporal inputs are encoded into spike trains (robust to noise);
- Input variables are (spatially) mapped in a 3D SNNcube, trained in an unsupervised mode;
- Output SNN classifier/regressor is trained on SNNcube patterns in a supervised mode;
- Parameters of the STDM are optimised (e.g. evolutionary or quantum inspired algorithms);
- Fast, one-pass, adaptive learning.

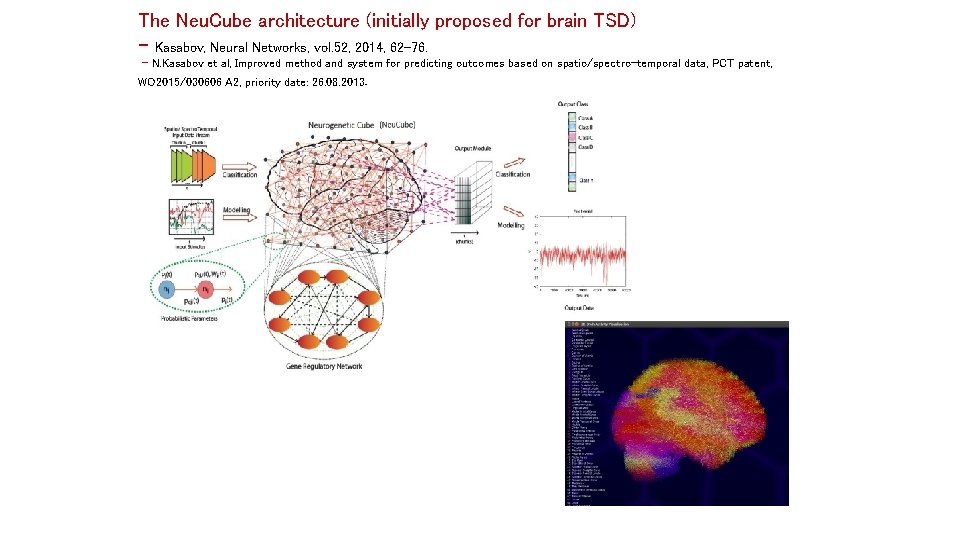

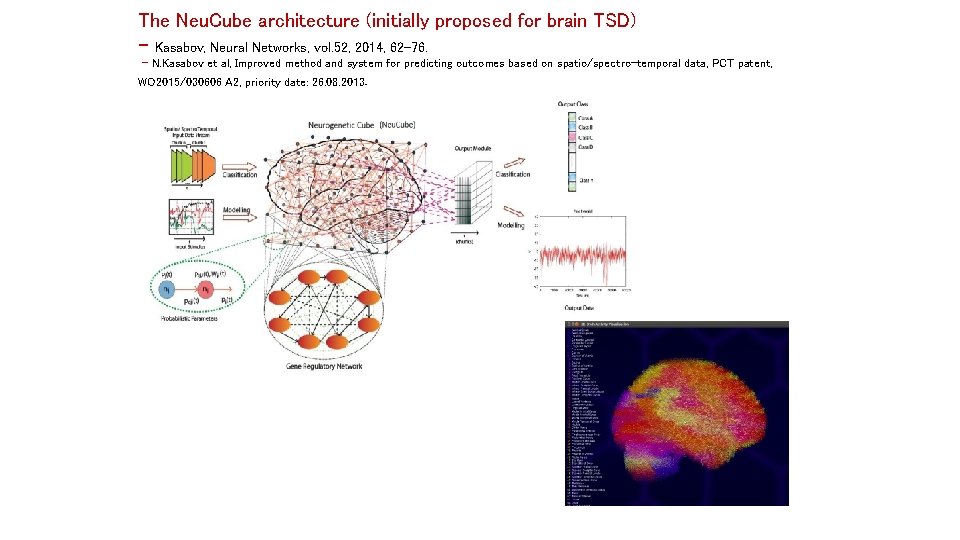

The NeuCube architecture (initially proposed for brain TSD)
- Kasabov, Neural Networks, vol. 52, 2014, 62-76.
- N. Kasabov et al, Improved method and system for predicting outcomes based on spatio/spectro-temporal data, PCT patent, WO 2015/030606 A2, priority date: 26.08.2013.

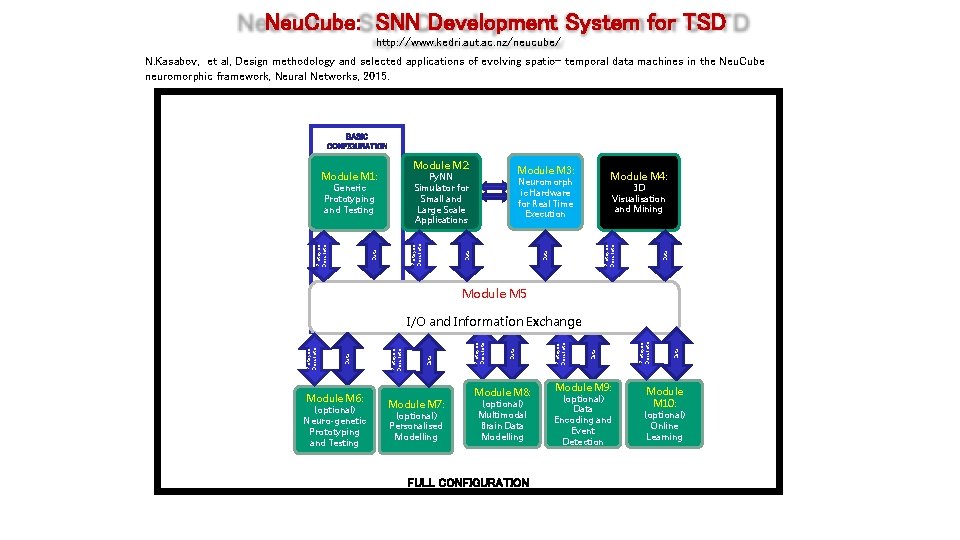

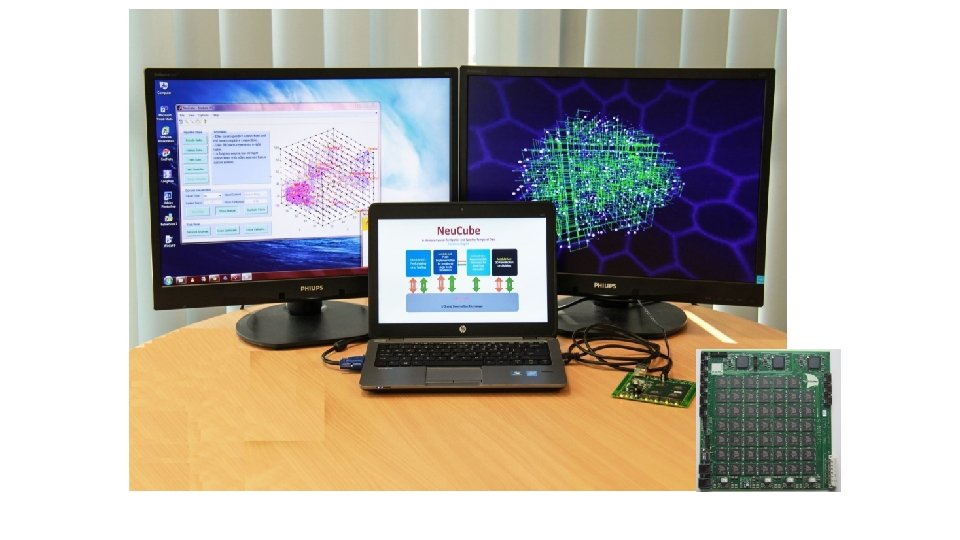

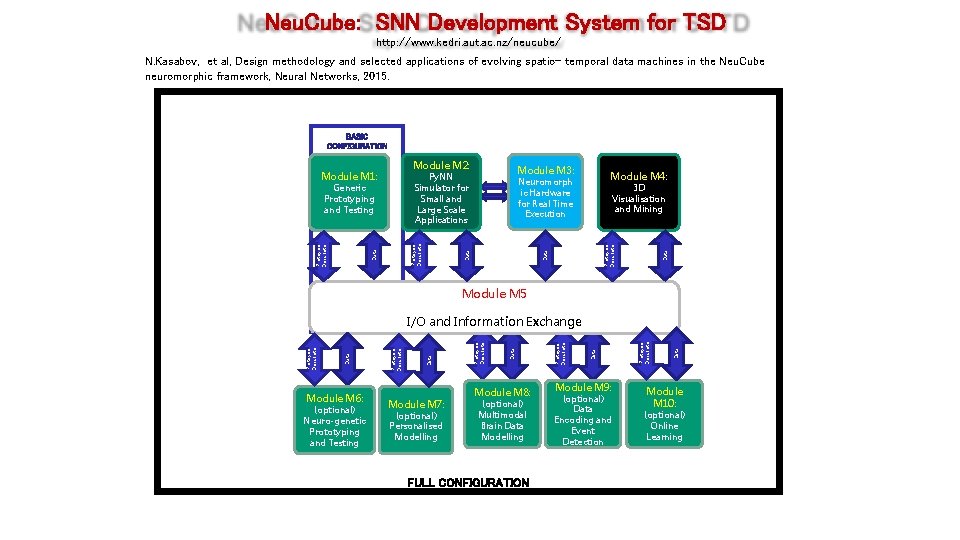

NeuCube: SNN Development System for TSD
http://www.kedri.aut.ac.nz/neucube/
N. Kasabov et al, Design methodology and selected applications of evolving spatio-temporal data machines in the NeuCube neuromorphic framework, Neural Networks, 2015.

[Diagram: NeuCube modules grouped into BASIC, STANDARD and FULL configurations; modules exchange Data and Prototype Descriptors]
- Modules M1, M2 and M5 (the label-to-number assignment is not recoverable from the extracted text): Generic Prototyping and Testing; 3D Visualisation and Mining; I/O and Information Exchange
- Module M3: PyNN Simulator for Small and Large Scale Applications
- Module M4: Neuromorphic Hardware for Real Time Execution
- Module M6 (optional): Neuro-genetic Prototyping and Testing
- Module M7 (optional): Personalised Modelling
- Module M8 (optional): Multimodal Brain Data Modelling
- Module M9 (optional): Data Encoding and Event Detection
- Module M10 (optional): Online Learning

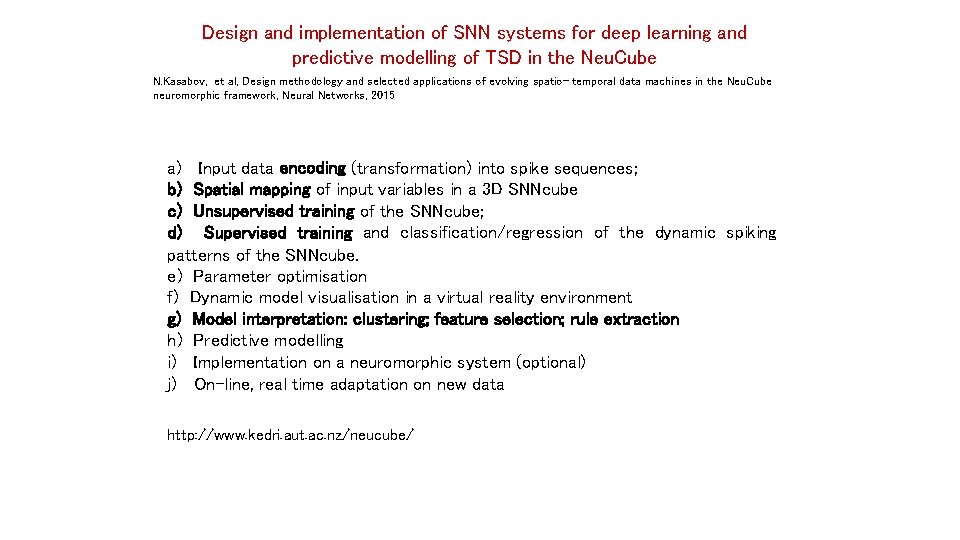

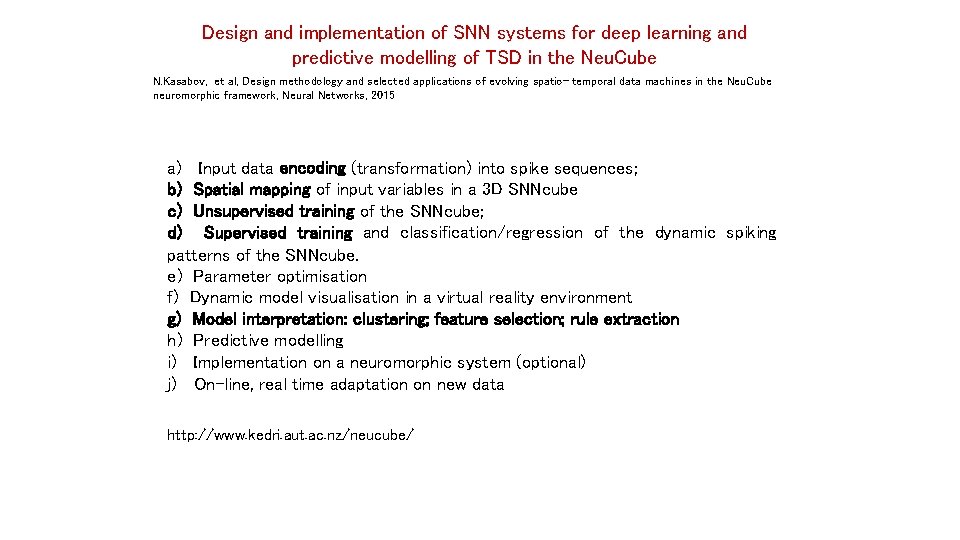

Design and implementation of SNN systems for deep learning and predictive modelling of TSD in the NeuCube
N. Kasabov et al, Design methodology and selected applications of evolving spatio-temporal data machines in the NeuCube neuromorphic framework, Neural Networks, 2015.

a) Input data encoding (transformation) into spike sequences;
b) Spatial mapping of input variables in a 3D SNNcube;
c) Unsupervised training of the SNNcube;
d) Supervised training and classification/regression of the dynamic spiking patterns of the SNNcube;
e) Parameter optimisation;
f) Dynamic model visualisation in a virtual reality environment;
g) Model interpretation: clustering; feature selection; rule extraction;
h) Predictive modelling;
i) Implementation on a neuromorphic system (optional);
j) On-line, real time adaptation on new data.

http://www.kedri.aut.ac.nz/neucube/

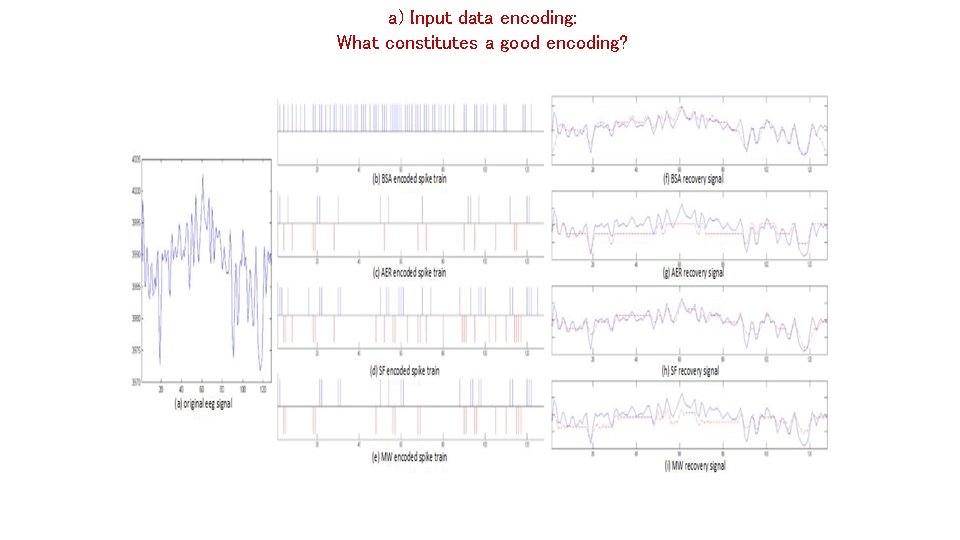

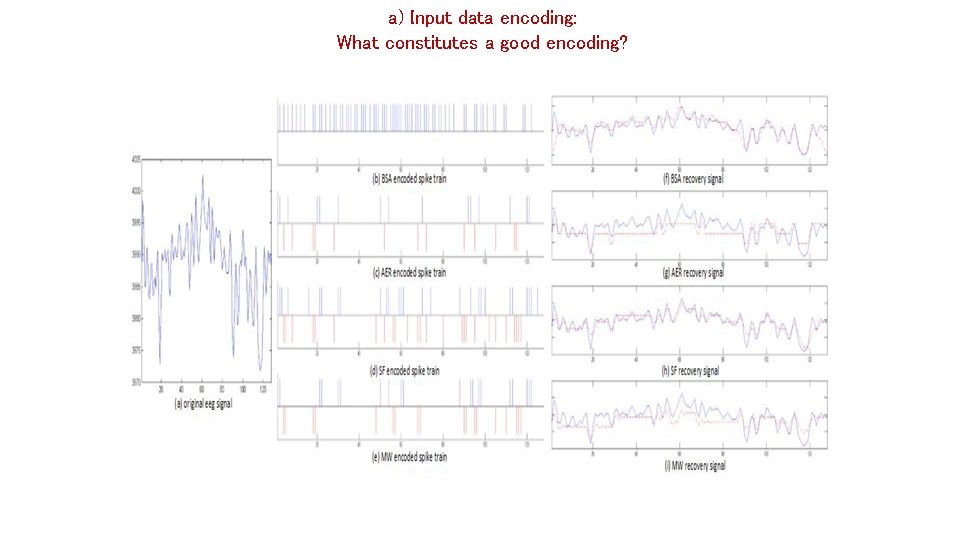

a) Input data encoding: What constitutes a good encoding?

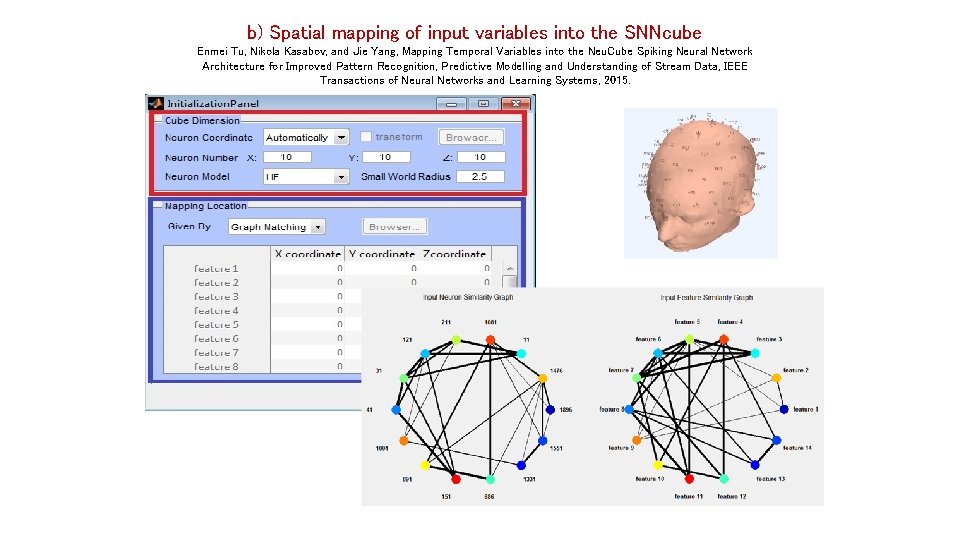

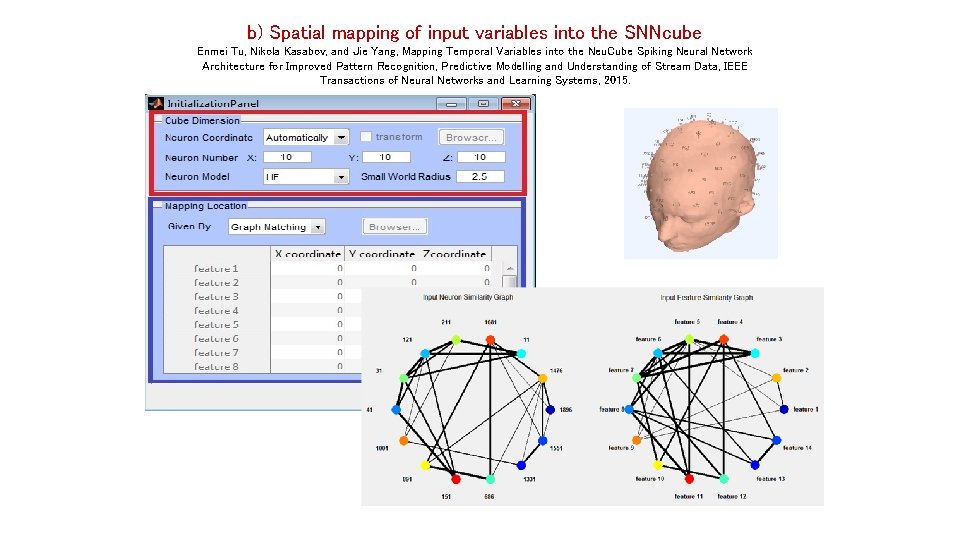

b) Spatial mapping of input variables into the SNNcube
Enmei Tu, Nikola Kasabov, and Jie Yang, Mapping Temporal Variables into the NeuCube Spiking Neural Network Architecture for Improved Pattern Recognition, Predictive Modelling and Understanding of Stream Data, IEEE Transactions on Neural Networks and Learning Systems, 2015.

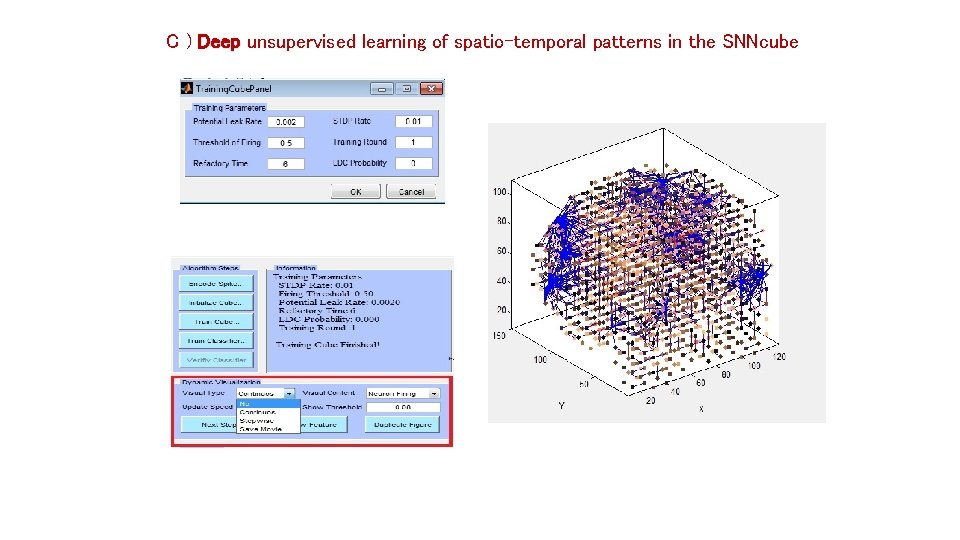

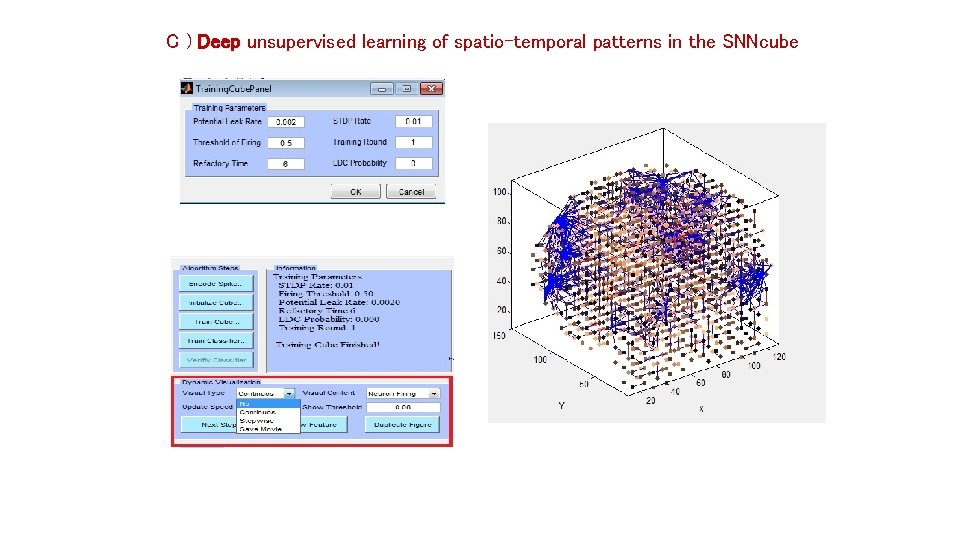

c) Deep unsupervised learning of spatio-temporal patterns in the SNNcube

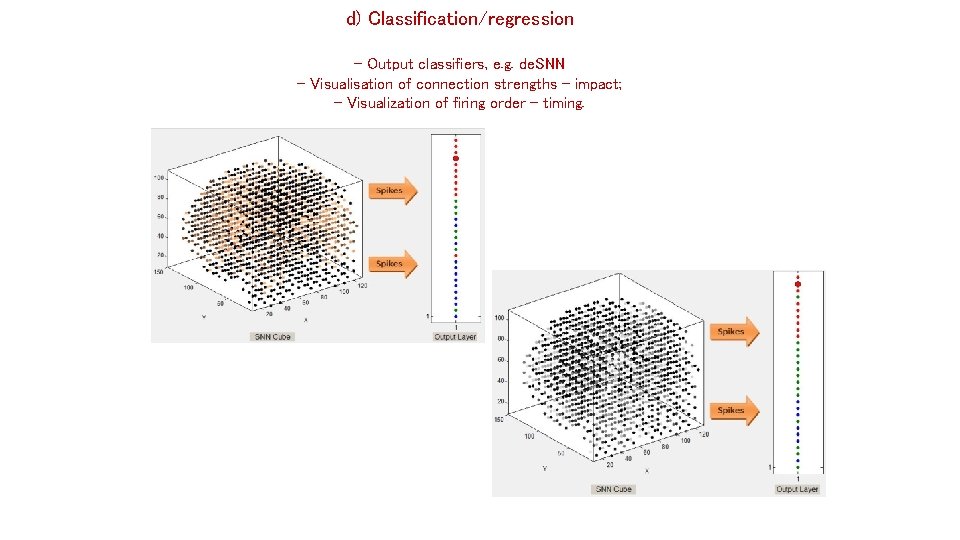

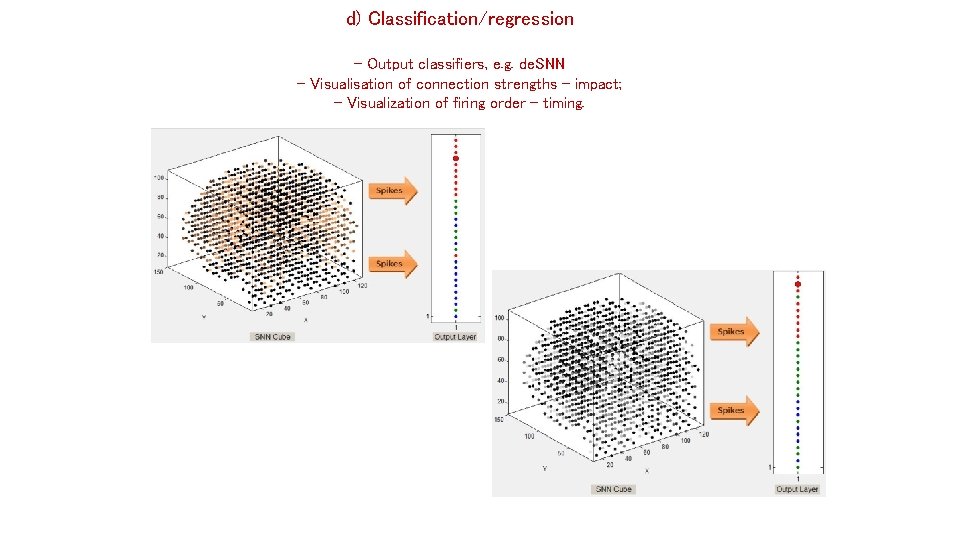

d) Classification/regression
- Output classifiers, e.g. deSNN
- Visualisation of connection strengths: impact
- Visualisation of firing order: timing

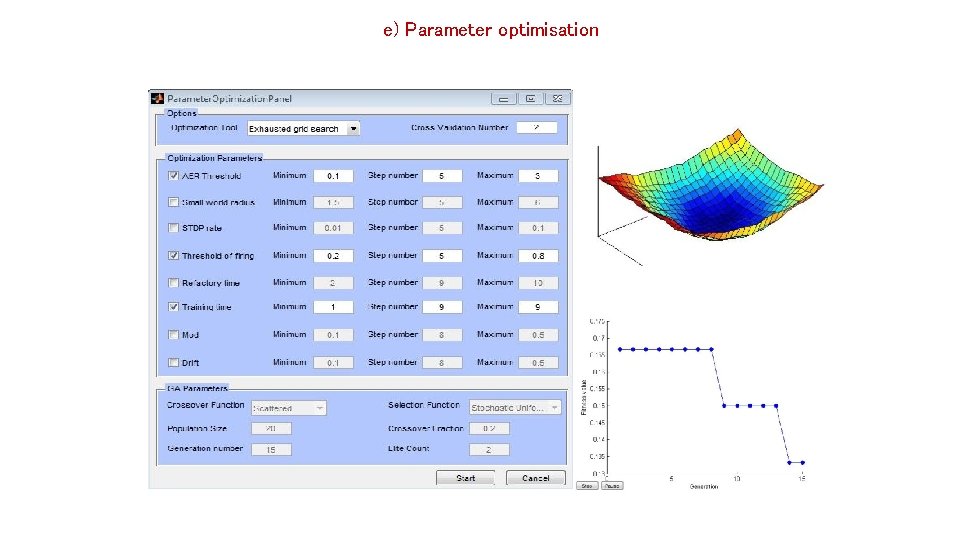

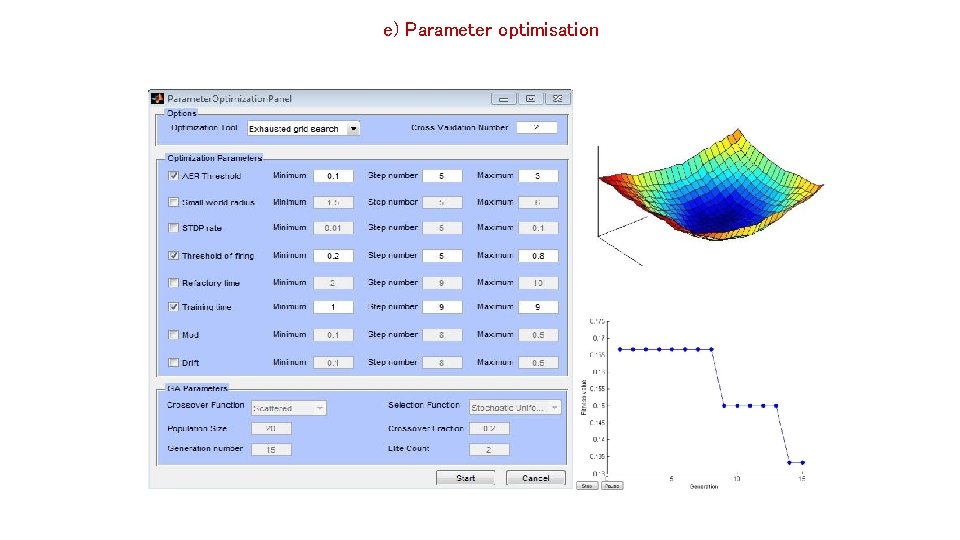

e) Parameter optimisation

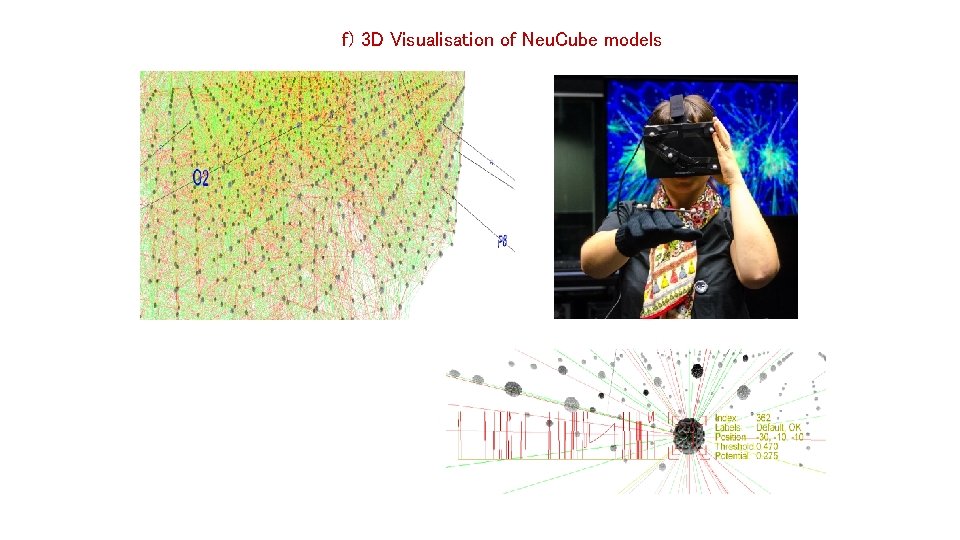

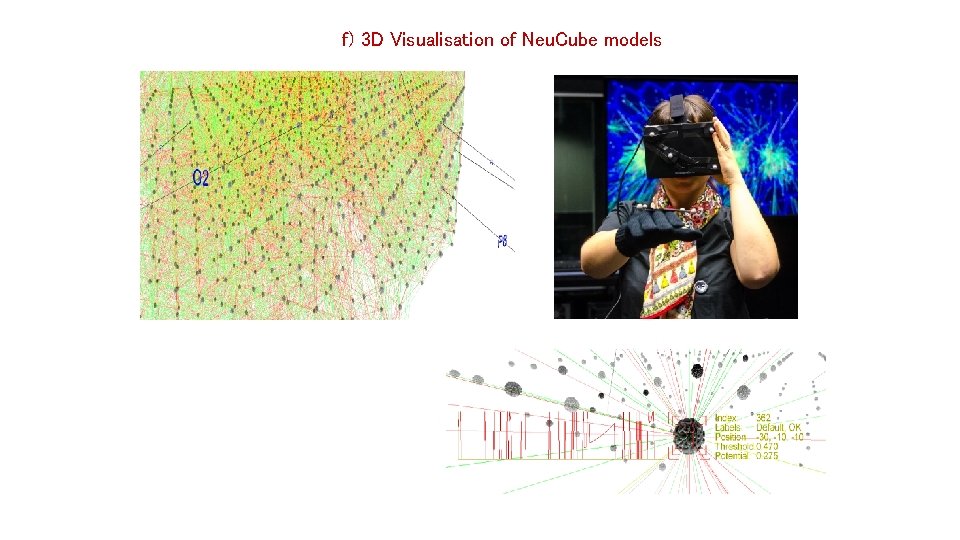

f) 3D Visualisation of NeuCube models

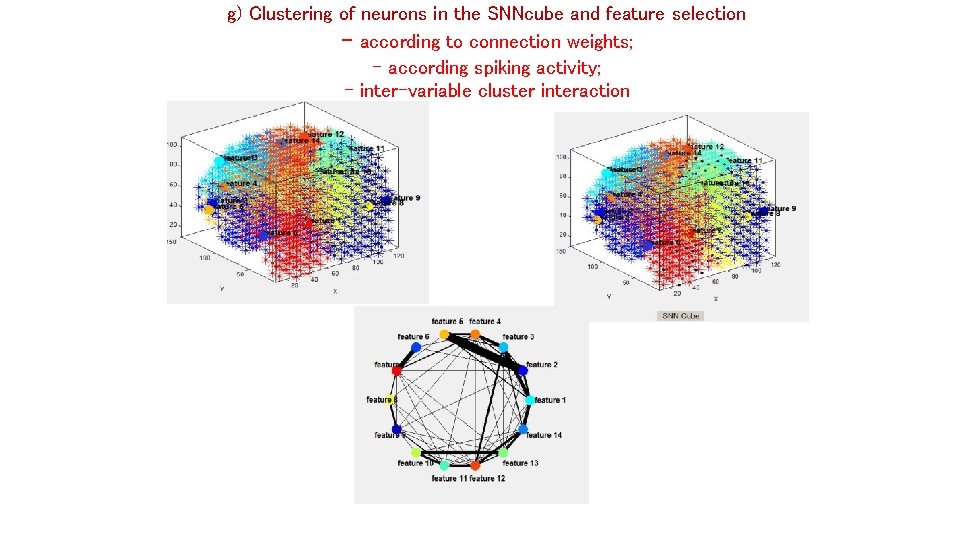

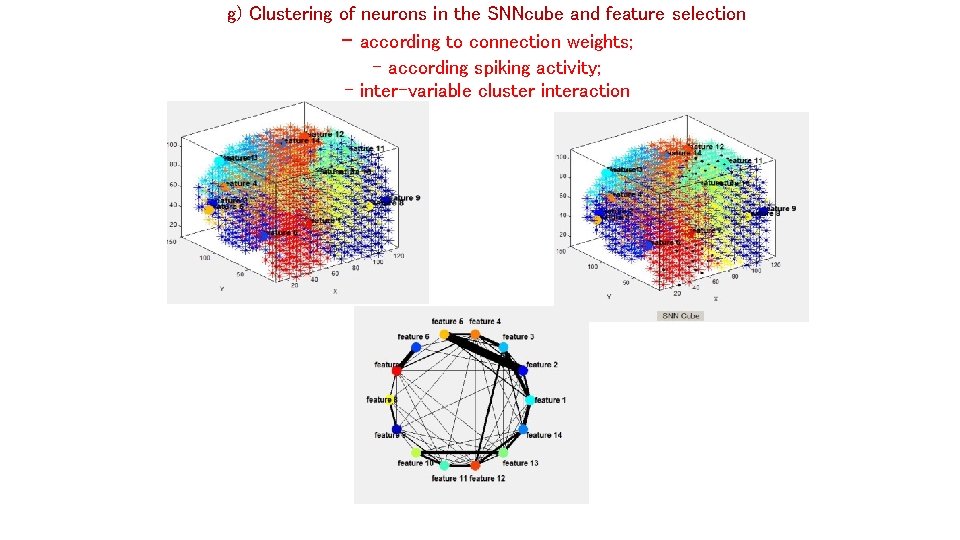

g) Clustering of neurons in the SNNcube and feature selection
- according to connection weights;
- according to spiking activity;
- inter-variable cluster interaction.

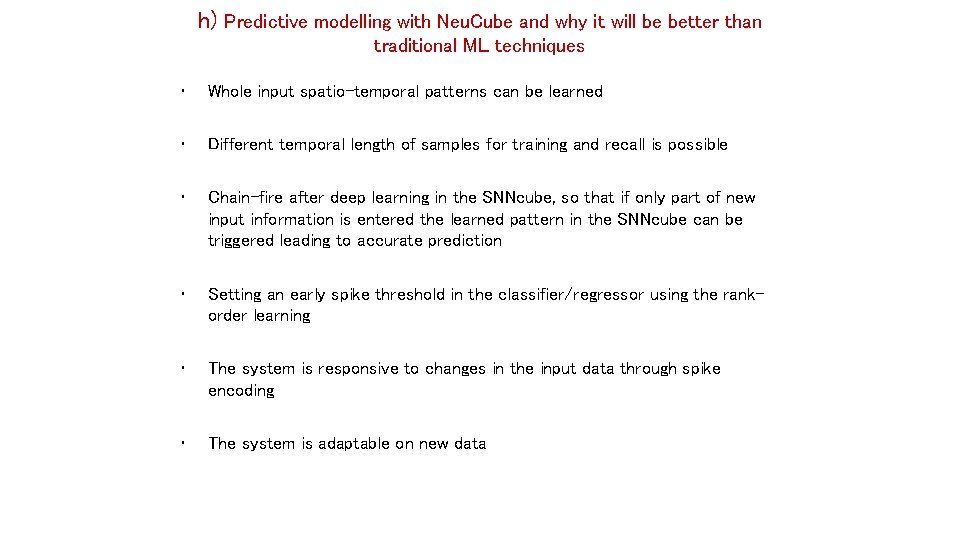

h) Predictive modelling with NeuCube and why it will be better than traditional ML techniques
- Whole input spatio-temporal patterns can be learned.
- Different temporal lengths of samples for training and recall are possible.
- Chain-fire after deep learning in the SNNcube: if only part of the new input information is entered, the learned pattern in the SNNcube can be triggered, leading to accurate prediction.
- Setting an early spike threshold in the classifier/regressor using rank-order learning.
- The system is responsive to changes in the input data through spike encoding.
- The system is adaptable on new data.
