False and Missed Discoveries in Financial Economics abridged

False (and Missed) Discoveries in Financial Economics (abridged)
Campbell R. Harvey, Duke University, NBER and Man Group, PLC
Yan Liu, Purdue University
November 2019

A framework to separate luck from skill
Six research initiatives:
1. Explicitly adjust for multiple tests (“…”) [RFS 2016]
2. Bootstrap (“Lucky Factors”) [in review, 2019]
3. Noise reduction (“Detecting Repeatable Performance”) [RFS 2018]
4. Rare effects (“Presidential Address”) [JF 2017]
5. Idiosyncratic noise (“Alpha dispersion”) [JFE 2019]
6. “False (and missed) discoveries” [JF 2020]
Campbell R. Harvey 2019

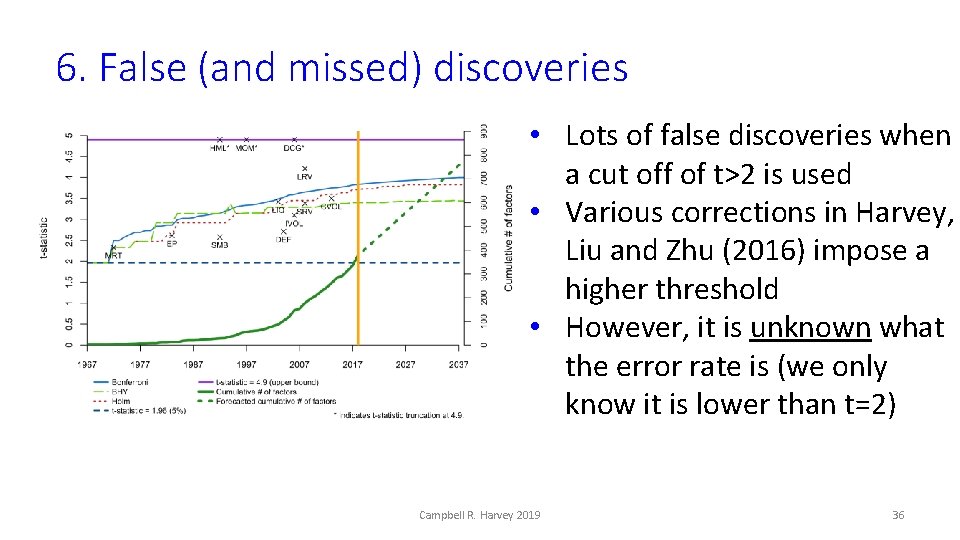

6. False (and missed) discoveries
• Lots of false discoveries when a cutoff of t > 2 is used
• Various corrections in Harvey, Liu and Zhu (2016) impose a higher threshold
• However, the resulting error rate is unknown (we only know it is lower than at t = 2)

In addition:
• Current statistical tools rely on a binary classification (all false discoveries are simply counted to get the error rate, ignoring the magnitude of the mistake)
• Research in financial economics pays little attention to power (the ability to identify truly skilled managers)
• There is no work on the relative costs of Type I and Type II errors

• Explicitly calibrate the Type I (hiring a bad manager, choosing a false factor) and Type II (missing a good manager) error rates given the data, using a novel double bootstrapping method
• We are able to accommodate the magnitude of the error, not just a binary classification
• Our framework enables a new decision rule, e.g., “To avoid a bad manager, I am willing to miss five good managers”

Preview
• Fama and French (2010) conclude that they cannot reject the hypothesis that no mutual fund outperforms (consistent with market efficiency)
• We show that the application of their technique to mutual funds has close to zero power (they are unable to identify truly skilled managers)
• With the application of our method, the narrative changes

Single test
§ Control for Type I error at a certain level (5%), while seeking methods that generate low Type II errors (high power)
§ Type I error: assume the null is true and calculate the probability of a false discovery
§ Type II error: assume a certain level for the parameter of interest, say m0, and calculate the probability of a false negative as a function of m0
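The single-test trade-off can be illustrated with a small sketch. It assumes a one-sided z-test on a sample mean with known volatility; the function name `type2_error` and the parameters `sigma`, `n`, and `alpha` are illustrative assumptions and do not come from the slides.

```python
import math
from statistics import NormalDist

def type2_error(m0, sigma, n, alpha=0.05):
    """Probability of a false negative for a one-sided z-test of mean = 0.

    Assumes the true mean is m0, volatility sigma is known, and the
    Type I error is fixed at alpha (all illustrative assumptions).
    """
    z = NormalDist()
    cutoff = z.inv_cdf(1.0 - alpha)        # critical value of the test statistic
    shift = m0 * math.sqrt(n) / sigma      # expected test statistic when the mean is m0
    return z.cdf(cutoff - shift)           # chance the statistic stays below the cutoff

# Type II error as a function of the assumed parameter m0
for m0 in (0.0, 0.1, 0.2, 0.4):
    print(f"m0={m0:.1f}  Type II error={type2_error(m0, sigma=1.0, n=100):.3f}")
```

Holding the Type I error fixed, the Type II error falls as the assumed m0 grows, which is why a value for m0 has to be plugged in before power can be discussed.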

Multiple tests
§ Type I error: the null holding true for every fund seems unrealistic. We need alternative definitions (like the False Discovery Rate).
§ We provide a data-driven statistical cutoff.
§ Type II error: depends on the parameters of interest, which form a high-dimensional vector under multiple tests. It is not clear what the value of this vector is.
§ We propose a simple way to summarize the parameters of interest.

Method
§ N strategies and D time periods: data matrix X0 (D×N)
§ Suppose you believe that a proportion p0 are “skilled”
§ A special case is Fama and French (2010) and Kosowski et al. (2010), where p0 = 0

Method
§ When p0 > 0, some strategies are believed to be true; p0 then acts like a plug-in parameter (similar to m0) that helps us measure the error rates under multiple testing
§ In multiple testing, we need to make assumptions about the population statistics that are believed to be true in order to determine error rates
§ In our framework, p0 is a single summary statistic that allows us to evaluate errors without conditioning on the values of population statistics

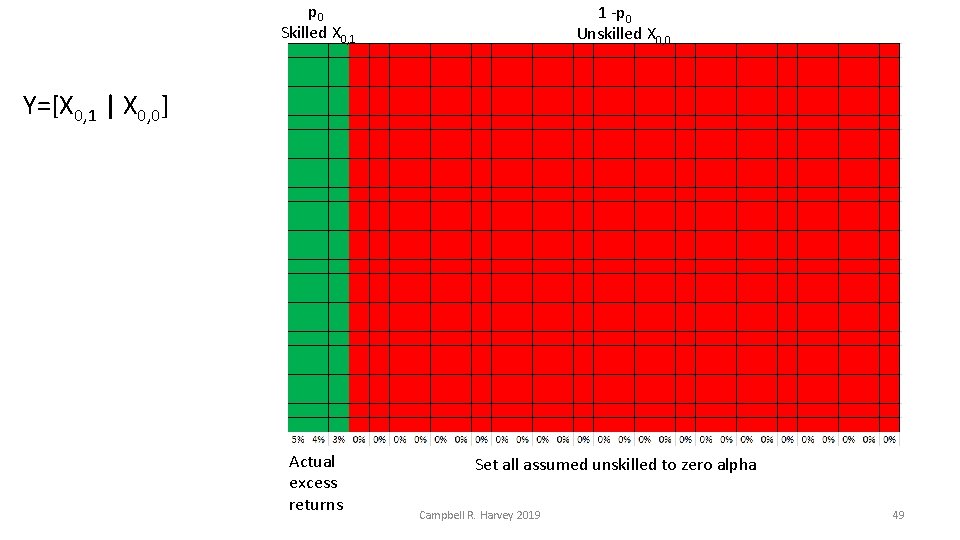

Method:
§ Sort the N strategies by t-statistics
§ p0 × N are deemed “skilled” and (1 − p0) × N “unskilled”
§ Create a new data matrix where we use the p0 × N actual excess returns concatenated with (1 − p0) × N returns that are adjusted to have zero excess performance
§ Y = [X0,1 | X0,0]

Y = [X0,1 | X0,0]
§ X0,1: the p0 skilled strategies, which keep their actual excess returns
§ X0,0: the 1 − p0 assumed unskilled strategies, all set to zero alpha
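The construction of Y can be sketched as follows. This is a minimal illustration, assuming returns are stored as a list of N series (one per strategy, each of length D) and that "adjusted to have zero excess performance" means subtracting each series' sample mean; the names `t_stat` and `build_y` are hypothetical.

```python
import math
import random
import statistics

def t_stat(series):
    """t-statistic of the mean return against zero."""
    return statistics.mean(series) / (statistics.stdev(series) / math.sqrt(len(series)))

def build_y(x0, p0):
    """Keep the top p0 fraction (by t-statistic) as-is and demean the rest.

    x0 is a list of N return series. Returns the new data set and a list
    of flags marking which series were treated as skilled.
    """
    n = len(x0)
    n_skilled = round(p0 * n)
    ranked = sorted(range(n), key=lambda j: t_stat(x0[j]), reverse=True)
    skilled = set(ranked[:n_skilled])
    y = []
    for j, series in enumerate(x0):
        if j in skilled:
            y.append(list(series))                 # actual excess returns
        else:
            mu = statistics.mean(series)
            y.append([r - mu for r in series])     # forced to zero alpha
    return y, [j in skilled for j in range(n)]

# Toy data: 10 strategies, 60 periods, increasing true means (assumed values)
rng = random.Random(0)
x0 = [[rng.gauss(0.02 * j, 1.0) for _ in range(60)] for j in range(10)]
y, flags = build_y(x0, p0=0.2)
print("strategies treated as skilled:", [j for j, f in enumerate(flags) if f])
```

Keeping the series in their original column order, rather than physically concatenating two blocks, leaves the cross-sectional alignment of rows untouched, which matters once rows are resampled.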

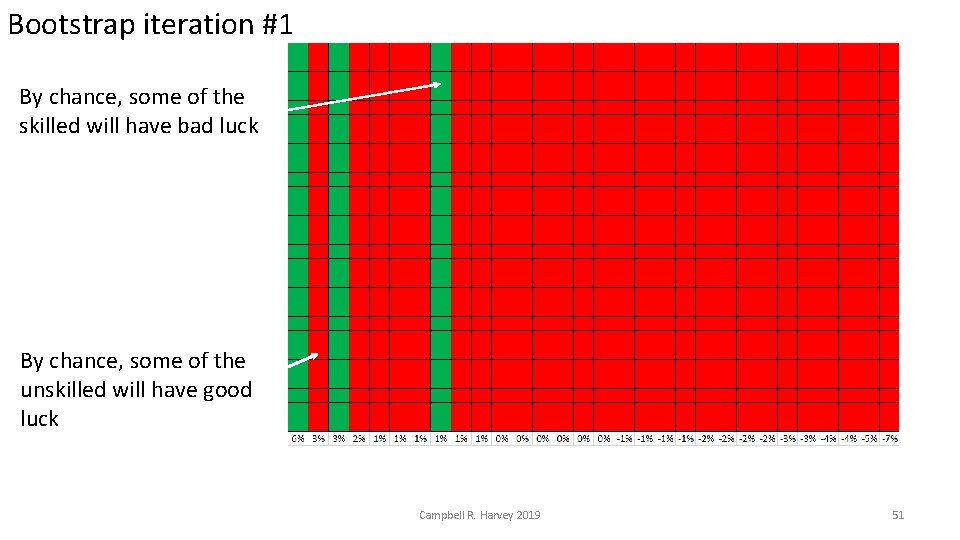

Method: Bootstrap 1
§ Bootstrap Y = [X0,1 | X0,0] and create a “new history” by randomly sampling rows (with replacement)
§ Given a t-statistic cutoff, by chance some of the unskilled will show up as “skilled” and some of the skilled as “unskilled”
§ At various t-statistic cutoffs, we can count the Type I and Type II errors (or take the magnitudes into account)
§ Repeat for 10,000 bootstrap iterations

Bootstrap iteration #1
§ By chance, some of the skilled will have bad luck
§ By chance, some of the unskilled will have good luck

Method: Bootstrap 1
§ Averaging over the iterations, we can determine the Type I error rate at different t-statistic thresholds
§ It is straightforward to find the t-statistic that delivers a 5% error rate
§ Type II error rates are easily calculated too
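The counting step of the first bootstrap can be sketched as below. The slides do not spell out the exact error-rate definitions, so this sketch assumes the Type I rate is the share of discoveries that are false and the Type II rate is the share of non-discoveries that are missed skilled strategies; `bootstrap_error_rates` and the toy data are hypothetical.

```python
import math
import random
import statistics

def t_stat(series):
    """t-statistic of the mean return against zero."""
    return statistics.mean(series) / (statistics.stdev(series) / math.sqrt(len(series)))

def bootstrap_error_rates(y, skilled, cutoff, n_iter=200, seed=0):
    """Average Type I and Type II rates over row-resampled histories of y."""
    rng = random.Random(seed)
    d = len(y[0])
    type1_sum = type2_sum = 0.0
    for _ in range(n_iter):
        # Same resampled rows for every strategy, so cross-sectional dependence is kept
        rows = [rng.randrange(d) for _ in range(d)]
        fp = tp = fn = tn = 0
        for series, is_skilled in zip(y, skilled):
            discovered = t_stat([series[r] for r in rows]) > cutoff
            if discovered and is_skilled:
                tp += 1
            elif discovered:
                fp += 1      # unskilled with good luck
            elif is_skilled:
                fn += 1      # skilled with bad luck
            else:
                tn += 1
        type1_sum += fp / max(fp + tp, 1)
        type2_sum += fn / max(fn + tn, 1)
    return type1_sum / n_iter, type2_sum / n_iter

# Toy Y: 2 "skilled" series with a positive mean, 8 demeaned "unskilled" series
rng = random.Random(42)
y = [[rng.gauss(0.3, 1.0) for _ in range(60)] for _ in range(2)]
for _ in range(8):
    noise = [rng.gauss(0.0, 1.0) for _ in range(60)]
    mu = statistics.mean(noise)
    y.append([v - mu for v in noise])
skilled = [True, True] + [False] * 8

for cutoff in (1.5, 2.0, 3.0):
    t1, t2 = bootstrap_error_rates(y, skilled, cutoff)
    print(f"cutoff={cutoff:.1f}  Type I={t1:.3f}  Type II={t2:.3f}")
```

Scanning a grid of cutoffs and picking the smallest one whose averaged Type I rate is at or below 5% gives the data-specific threshold described above.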

Method: Bootstrap 2
§ The single bootstrap (Bootstrap 1) is flawed
§ It assumes that we know which p0 funds are skilled and treats their sample performance as the “truth”, yet some of the funds we declare “skilled” are not
§ We take a step back

Method: Bootstrap 2
§ With our original data matrix X0, we perturb it by doing an initial bootstrap, i
§ With the perturbed data, we follow the previous steps and bootstrap Yi = [X0,1 | X0,0]
§ This initial bootstrap is essential to control for sampling uncertainty; we repeat it 1,000 times
§ This is what we refer to as the “double bootstrap”

Method: Bootstrap 2
§ The bootstrap allows for data dependence
§ Allows us to make data-specific cutoffs
§ Allows us to evaluate the performance of different multiple testing adjustments, e.g., Bonferroni
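A compact sketch of the two nested bootstraps follows. It is an illustration under stated assumptions, not the authors' implementation: the Type I rate is taken to be the share of discoveries that are false, zero alpha is imposed by demeaning, and the iteration counts are kept tiny so the example runs quickly (the slides use 1,000 outer and 10,000 inner iterations). All function names are hypothetical.

```python
import math
import random
import statistics

def t_stat(series):
    """t-statistic of the mean return against zero."""
    return statistics.mean(series) / (statistics.stdev(series) / math.sqrt(len(series)))

def resample_rows(columns, rng):
    """Draw D rows with replacement, using the same rows for every column."""
    d = len(columns[0])
    rows = [rng.randrange(d) for _ in range(d)]
    return [[col[r] for r in rows] for col in columns]

def double_bootstrap_type1(x0, p0, cutoff, n_outer=20, n_inner=20, seed=0):
    rng = random.Random(seed)
    n = len(x0)
    k = round(p0 * n)
    total = 0.0
    for _ in range(n_outer):
        xi = resample_rows(x0, rng)                      # first bootstrap: perturb X0
        ts = [t_stat(col) for col in xi]
        top = set(sorted(range(n), key=lambda j: ts[j], reverse=True)[:k])
        yi = []
        for j, col in enumerate(xi):                     # build Yi from the perturbed data
            if j in top:
                yi.append(col)
            else:
                mu = statistics.mean(col)
                yi.append([v - mu for v in col])
        for _ in range(n_inner):                         # second bootstrap on Yi
            yb = resample_rows(yi, rng)
            fp = tp = 0
            for j, col in enumerate(yb):
                if t_stat(col) > cutoff:
                    if j in top:
                        tp += 1
                    else:
                        fp += 1
            total += fp / max(fp + tp, 1)
    return total / (n_outer * n_inner)

rng = random.Random(7)
x0 = [[rng.gauss(0.02 * j, 1.0) for _ in range(60)] for j in range(10)]
print("estimated Type I rate at t=2:", round(double_bootstrap_type1(x0, 0.2, 2.0), 3))
```

Because the set of "skilled" strategies is re-selected inside every outer iteration, the uncertainty about which strategies belong in the top p0 fraction feeds into the final error-rate estimate.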

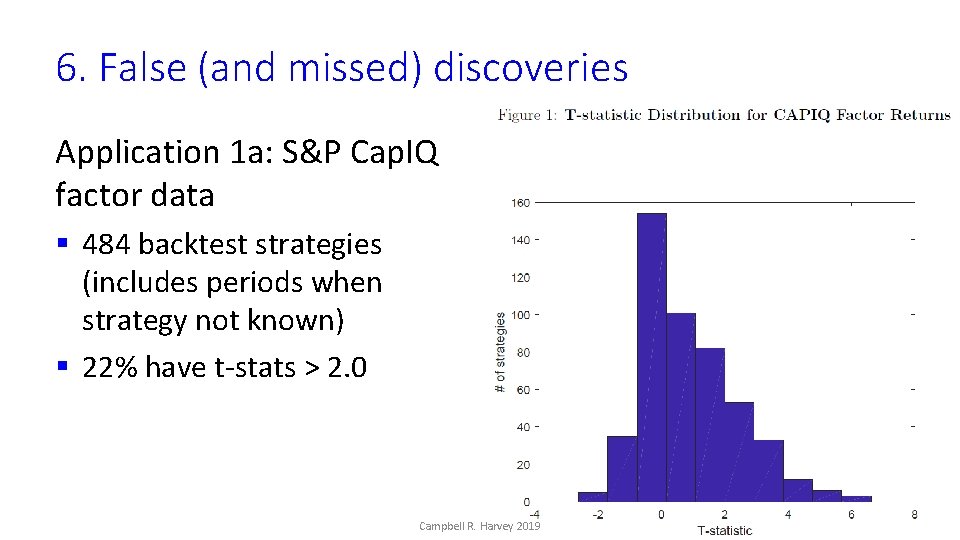

Application 1a: S&P CapIQ factor data
§ 484 backtest strategies (includes periods when the strategy was not known)
§ 22% have t-stats > 2.0

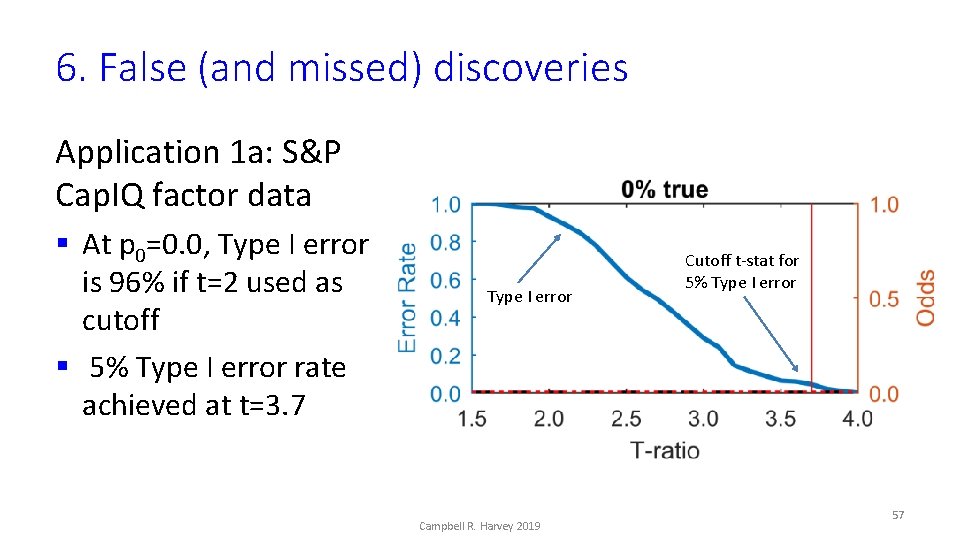

Application 1a: S&P CapIQ factor data
§ At p0 = 0.0, the Type I error is 96% if t = 2 is used as the cutoff
§ A 5% Type I error rate is achieved at t = 3.7
(Chart annotations: Type I error; cutoff t-stat for 5% Type I error)

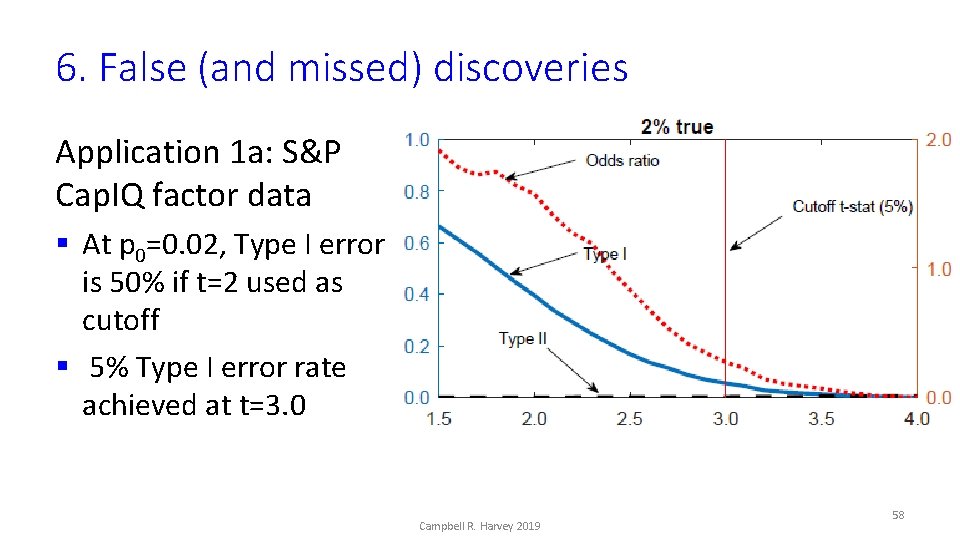

Application 1a: S&P CapIQ factor data
§ At p0 = 0.02, the Type I error is 50% if t = 2 is used as the cutoff
§ A 5% Type I error rate is achieved at t = 3.0

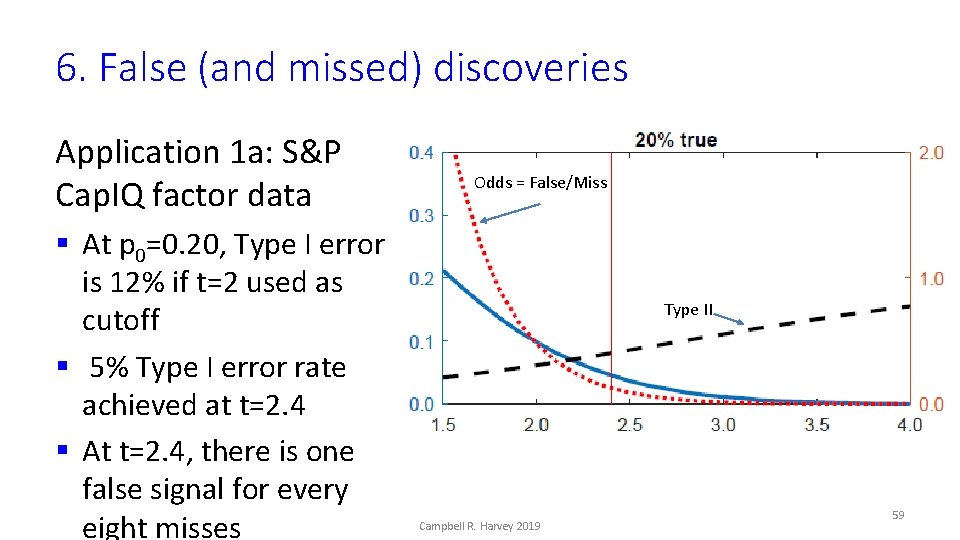

Application 1a: S&P CapIQ factor data
§ At p0 = 0.20, the Type I error is 12% if t = 2 is used as the cutoff
§ A 5% Type I error rate is achieved at t = 2.4
§ At t = 2.4, there is one false signal for every eight misses (odds = false/miss)
(Chart annotation: Type II)

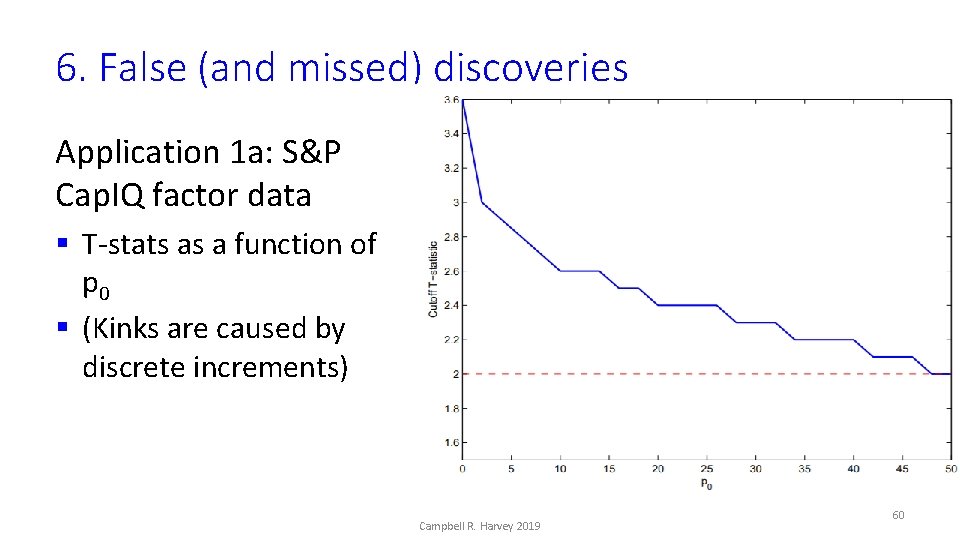

Application 1a: S&P CapIQ factor data
§ T-stats as a function of p0
§ (Kinks are caused by discrete increments)

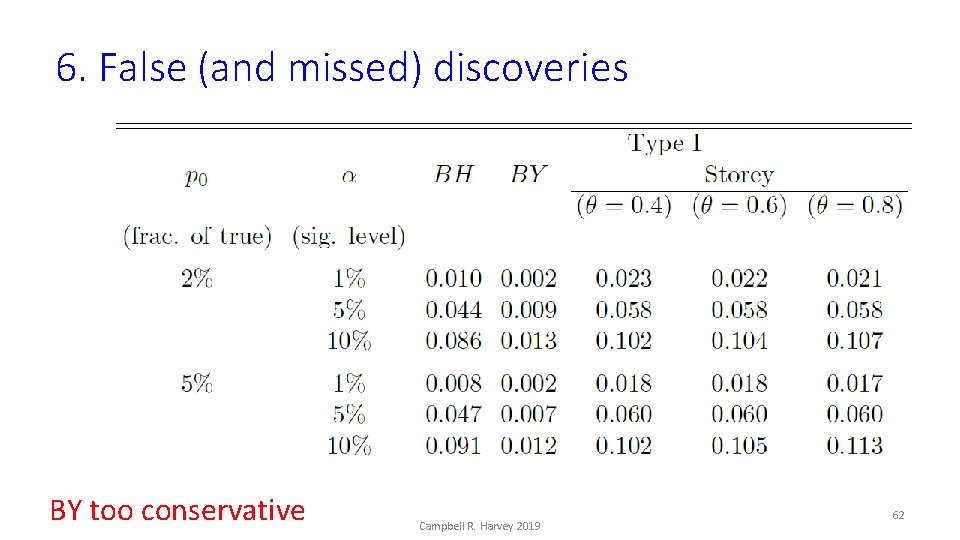

Application 1: S&P CapIQ factor data
§ We evaluate the performance of different multiple testing adjustments
§ Benjamini and Hochberg (1995) (BH)
§ Benjamini and Yekutieli (2001) (BY)
§ Storey (2002)
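For readers unfamiliar with these adjustments, the standard BH step-up rule and its BY variant can be sketched as follows. The function name, the flag `dependence_adjust`, and the example p-values are illustrative assumptions; Storey's method additionally requires estimating the share of true nulls and is not shown.

```python
def fdr_rejections(p_values, alpha=0.05, dependence_adjust=False):
    """Benjamini-Hochberg step-up rule; with dependence_adjust=True the
    Benjamini-Yekutieli version, which divides alpha by sum(1/i, i=1..m)."""
    m = len(p_values)
    c_m = sum(1.0 / i for i in range(1, m + 1)) if dependence_adjust else 1.0
    order = sorted(range(m), key=lambda i: p_values[i])
    k = 0
    for rank, idx in enumerate(order, start=1):
        # Largest rank whose ordered p-value sits under the rising threshold
        if p_values[idx] <= rank * alpha / (m * c_m):
            k = rank
    rejected = [False] * m
    for idx in order[:k]:
        rejected[idx] = True
    return rejected

p_vals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
print("BH discoveries:", sum(fdr_rejections(p_vals)))
print("BY discoveries:", sum(fdr_rejections(p_vals, dependence_adjust=True)))
```

On the same p-values BY never rejects more than BH, because its thresholds are the BH thresholds scaled down by the harmonic sum.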

BY is too conservative

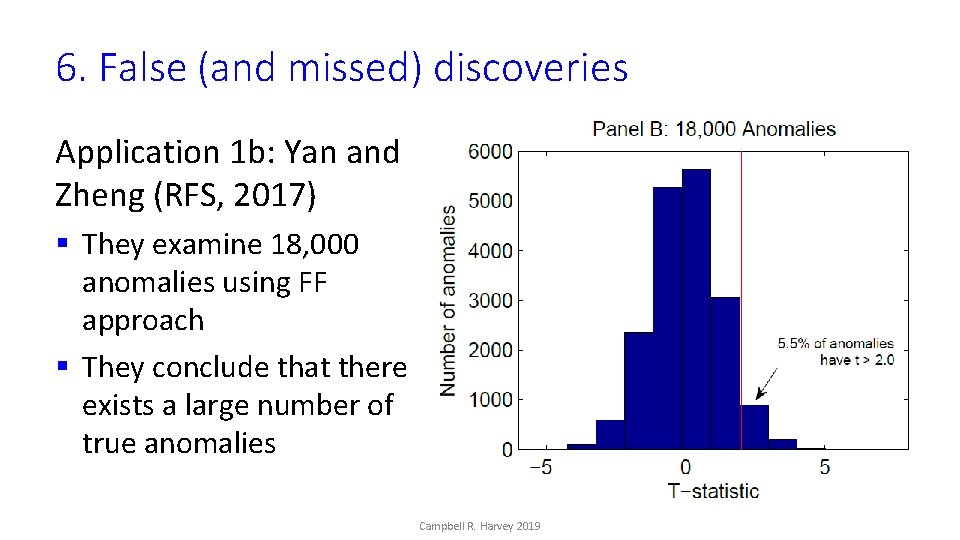

Application 1b: Yan and Zheng (RFS, 2017)
§ They examine 18,000 anomalies using the FF approach
§ They conclude that there exists a large number of true anomalies

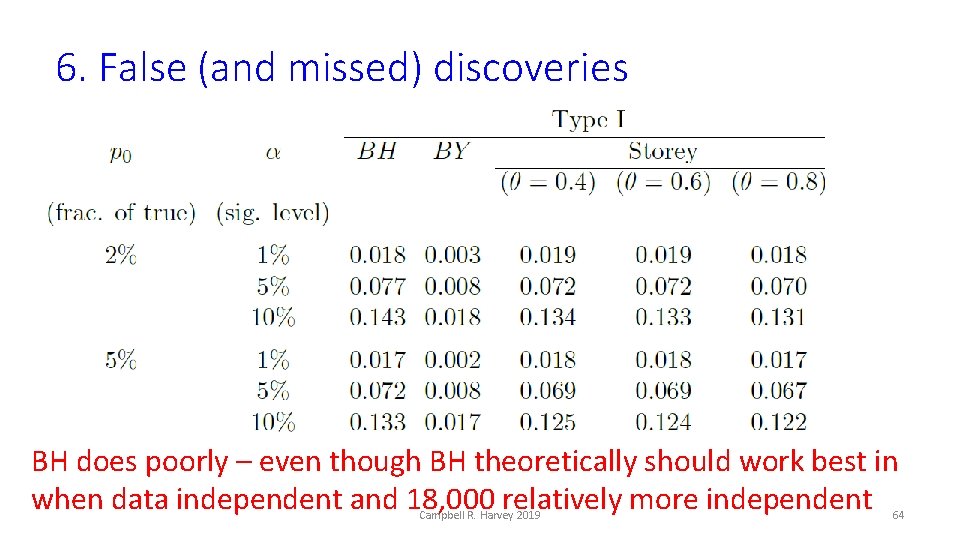

BH does poorly, even though in theory BH should work best when the data are independent and the 18,000 anomalies are relatively more independent

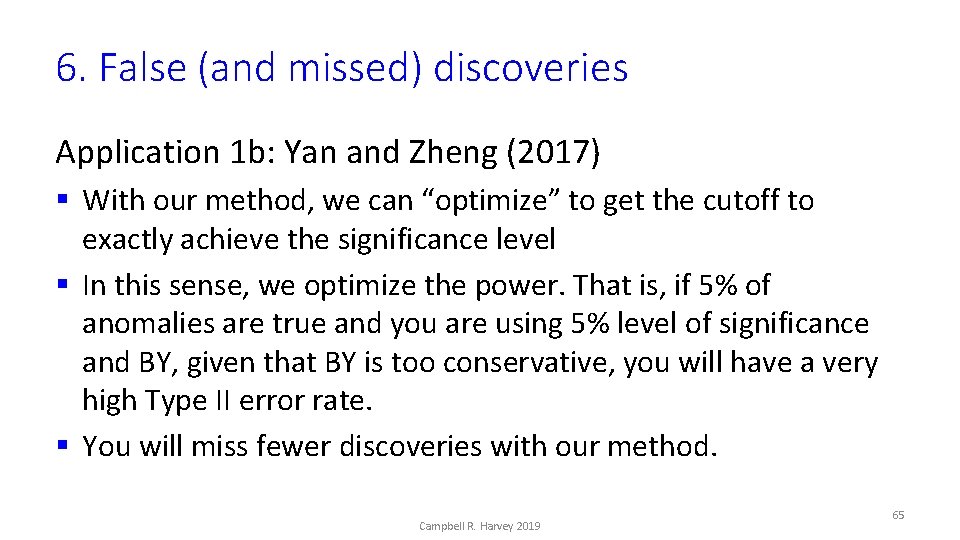

Application 1b: Yan and Zheng (2017)
§ With our method, we can “optimize” the cutoff to achieve the significance level exactly
§ In this sense, we optimize the power. For example, if 5% of anomalies are true and you use a 5% level of significance with BY, then, because BY is too conservative, you will have a very high Type II error rate
§ You will miss fewer discoveries with our method

Application 1b: Yan and Zheng (2017)
§ Yan and Zheng (2017) claim 1,800 anomalies are true
§ Using the most powerful methods and controlling for a False Discovery Rate of 10%, we find 0.1% “true”, i.e., 18 factors
§ Yan and Zheng (2017) misapply the FF method (it cannot be used directly to deduce the fraction of true anomalies)

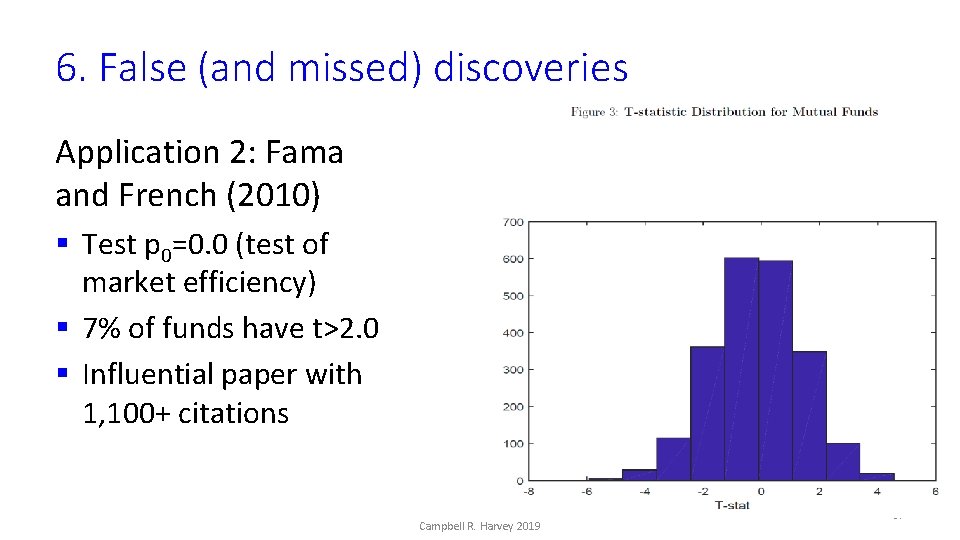

Application 2: Fama and French (2010)
§ Test p0 = 0.0 (test of market efficiency)
§ 7% of funds have t > 2.0
§ Influential paper with 1,100+ citations

Fama and French’s approach:
§ Adjust all funds so they have zero excess performance (null)
§ Bootstrap to generate the distribution of the p-th percentile of the cross-section of t-statistics
§ Compare this distribution with the p-th percentile for the actual data
Fama and French’s finding:
§ Unlikely any fund outperforms: consistent with market efficiency
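The percentile comparison can be sketched as below. This is a simplified illustration that assumes every fund has a complete history of the same length, so it does not reproduce the limited-history issue discussed later; the quantile rule and the names `quantile` and `ff_style_p_value` are assumptions made for the example.

```python
import math
import random
import statistics

def t_stat(series):
    """t-statistic of the mean return against zero."""
    return statistics.mean(series) / (statistics.stdev(series) / math.sqrt(len(series)))

def quantile(values, q):
    """Simple empirical quantile: element at position floor(q * (n - 1)) after sorting."""
    ordered = sorted(values)
    return ordered[int(q * (len(ordered) - 1))]

def ff_style_p_value(x0, q=0.9, n_iter=200, seed=0):
    """Share of null bootstrap draws whose q-quantile t-statistic is at
    least as large as the q-quantile observed in the actual data."""
    rng = random.Random(seed)
    d = len(x0[0])
    actual = quantile([t_stat(s) for s in x0], q)
    null = []
    for s in x0:                                   # impose the zero-alpha null
        mu = statistics.mean(s)
        null.append([v - mu for v in s])
    exceed = 0
    for _ in range(n_iter):
        rows = [rng.randrange(d) for _ in range(d)]   # same rows for all funds
        sim = quantile([t_stat([s[r] for r in rows]) for s in null], q)
        if sim >= actual:
            exceed += 1
    return exceed / n_iter

rng = random.Random(5)
funds = [[rng.gauss(0.0, 1.0) for _ in range(60)] for _ in range(10)]
print("p-value for the 90th percentile:", ff_style_p_value(funds))
```

A small p-value says the actual percentile is hard to reach by luck alone; the test speaks to the cross-section as a whole rather than labelling individual funds.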

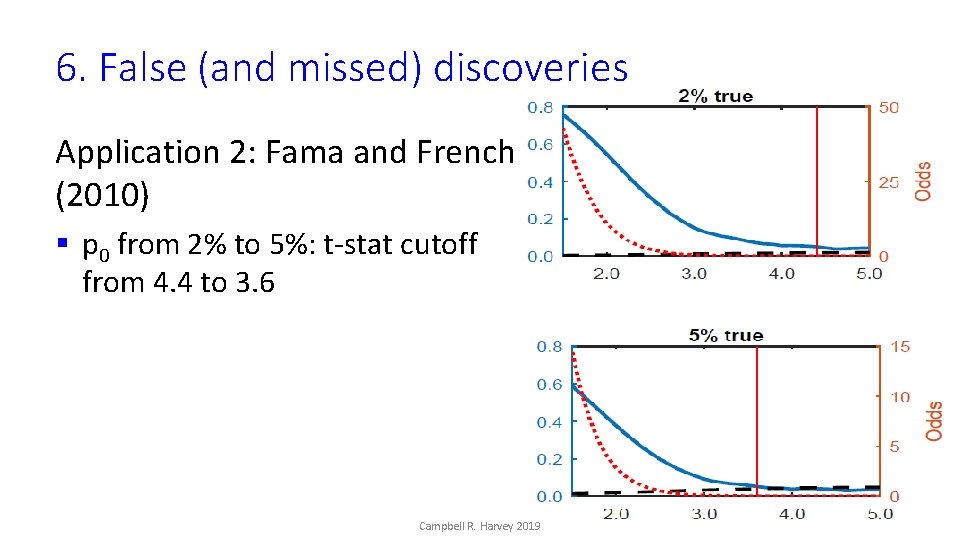

Application 2: Fama and French (2010)
§ p0 from 2% to 5%: t-stat cutoff from 4.4 to 3.6

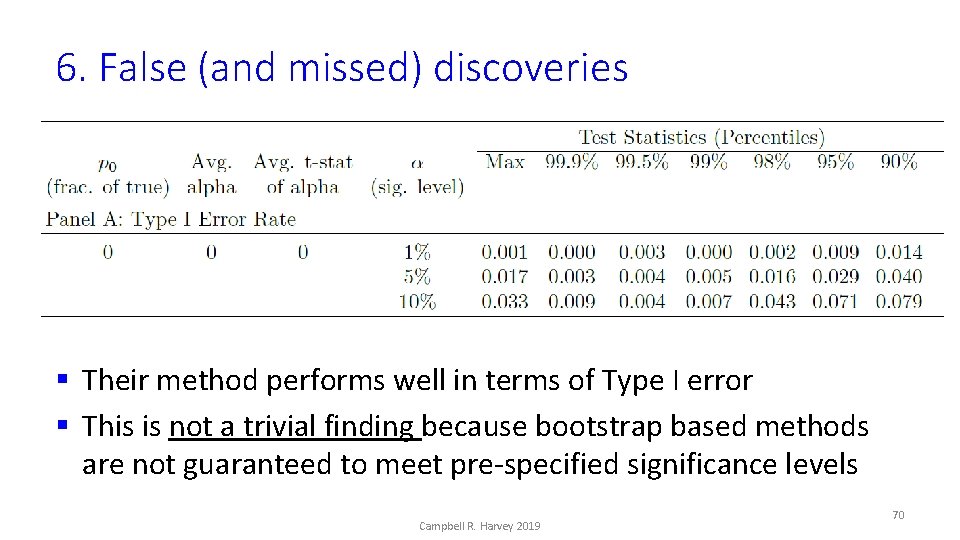

§ Their method performs well in terms of Type I error
§ This is not a trivial finding because bootstrap-based methods are not guaranteed to meet pre-specified significance levels

Application 2: Fama and French (2010)
§ Their method performs poorly in terms of Type II error
§ If p0 = 0.02 and funds have large positive returns (top 2% of actual), FF falsely declares zero alpha across all funds

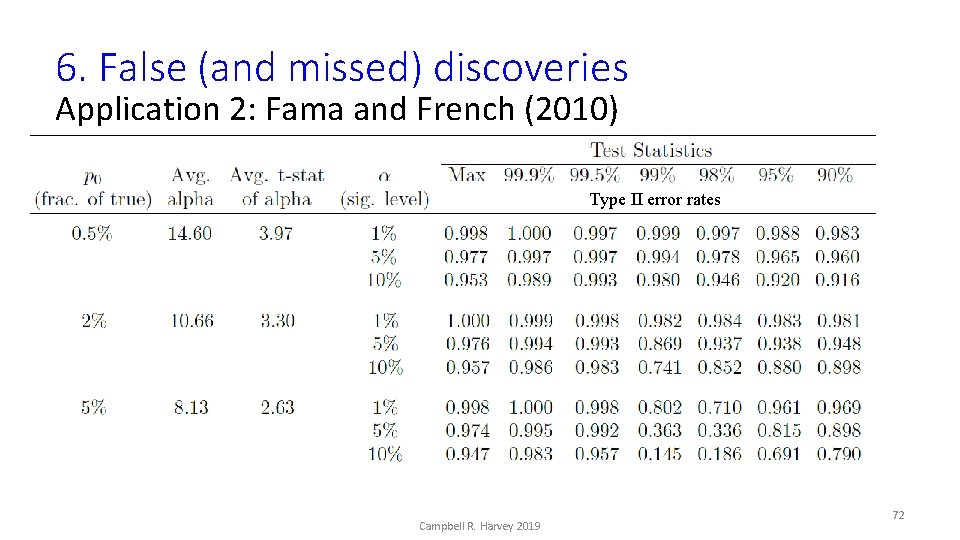

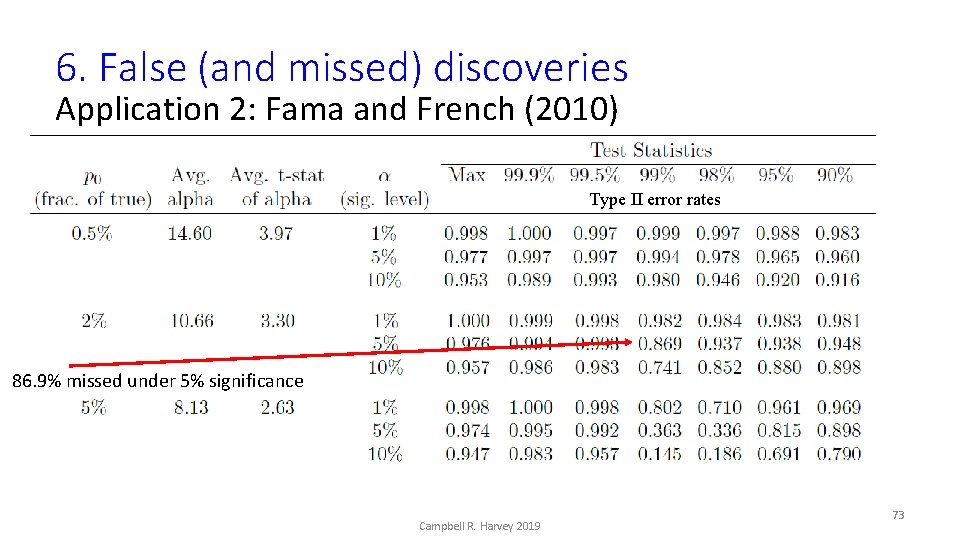

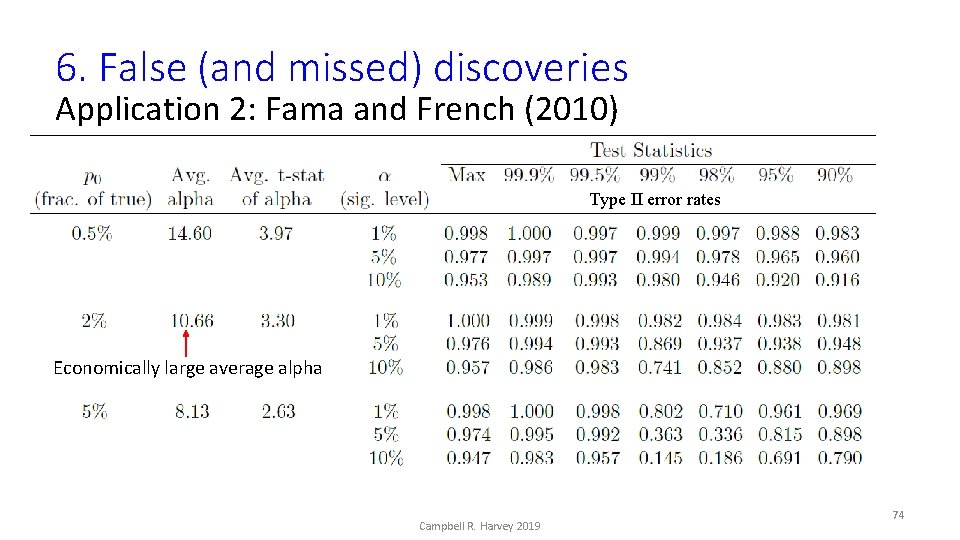

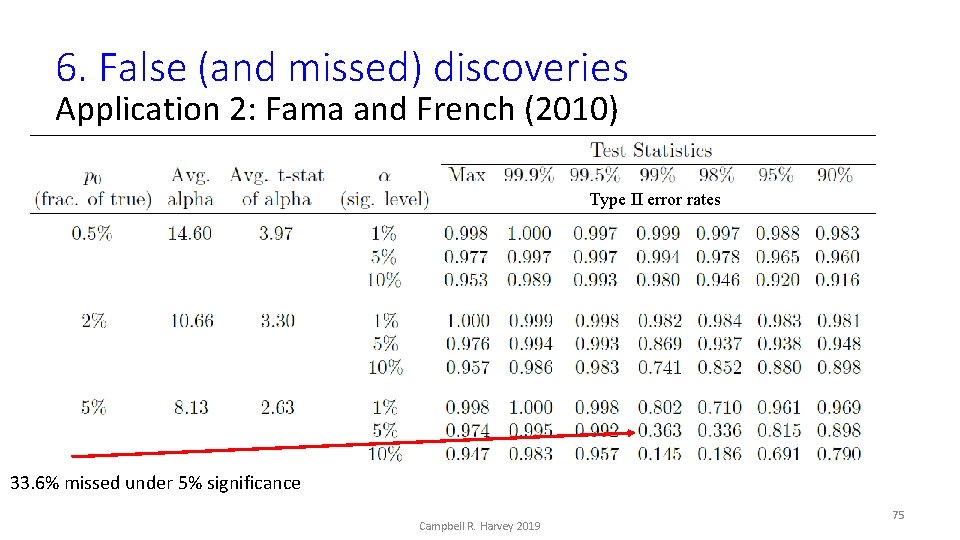

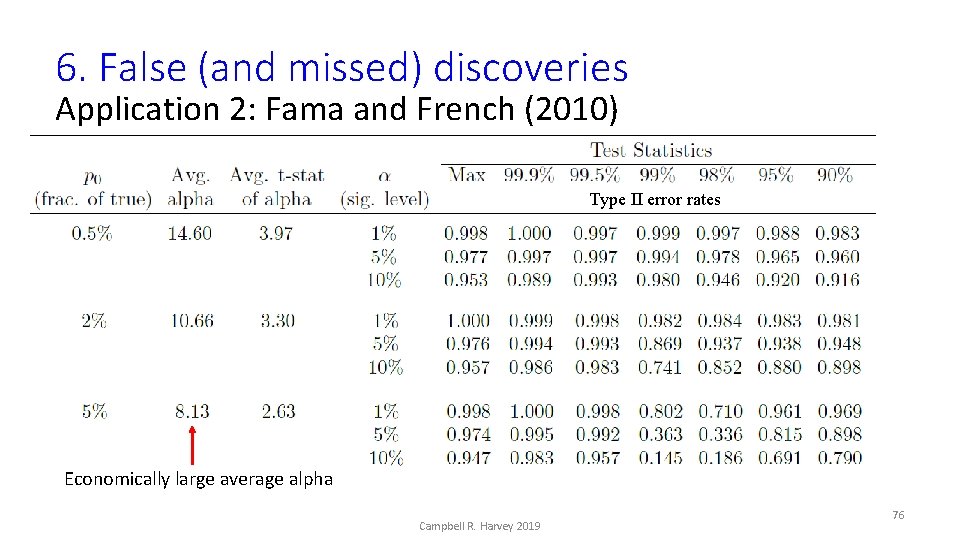

Application 2: Fama and French (2010): Type II error rates
§ 86.9% missed under 5% significance, with an economically large average alpha
§ 33.6% missed under 5% significance, with an economically large average alpha

What is going on?
§ Fama and French (2010) seems like an obvious improvement over Kosowski et al. because FF take into account cross-sectional correlation (Kosowski et al. bootstrap fund by fund)
§ We show the problem stems mainly from how FF handle funds with limited histories

What is going on?
§ Suppose a fund has only 20 observations
§ Kosowski et al. will generate bootstrap samples that always have 20 observations
§ Fama and French bootstrap the entire cross-section. For any iteration, this fund might have, e.g., 20, 25, 10, or even 0 observations
§ Fama and French require a minimum of 8 observations
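The variation in a short-lived fund's bootstrapped sample size can be seen in a quick simulation. The panel length of 300 months, the fund's position within it, and the function name are assumptions chosen for illustration only.

```python
import random
import statistics

def resampled_obs_counts(d_total, fund_rows, n_iter=1000, seed=0):
    """Number of a fund's observations that land in each bootstrap history
    when whole rows (time periods) of the panel are drawn with replacement."""
    rng = random.Random(seed)
    alive = set(fund_rows)                 # periods in which the fund reports a return
    counts = []
    for _ in range(n_iter):
        hits = sum(1 for _ in range(d_total) if rng.randrange(d_total) in alive)
        counts.append(hits)
    return counts

# A fund with 20 observations inside a 300-month panel (assumed sizes)
counts = resampled_obs_counts(d_total=300, fund_rows=range(100, 120))
print("mean observations:", round(statistics.mean(counts), 1))
print("min / max observations:", min(counts), "/", max(counts))
print("share of draws below 8 observations:", sum(c < 8 for c in counts) / len(counts))
```

A fund-by-fund bootstrap would return exactly 20 observations in every draw; resampling the whole cross-section keeps the average near 20 but lets individual draws fall well below or above it.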

What is going on?
§ Fama and French recognize this problem and state that the under- and over-sampling should “about balance out”
§ It does not. It creates a big problem.

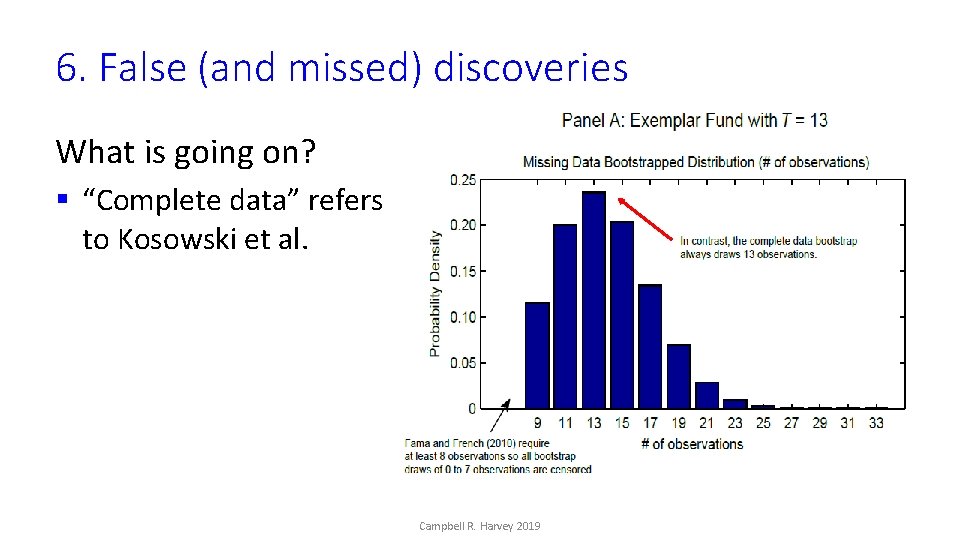

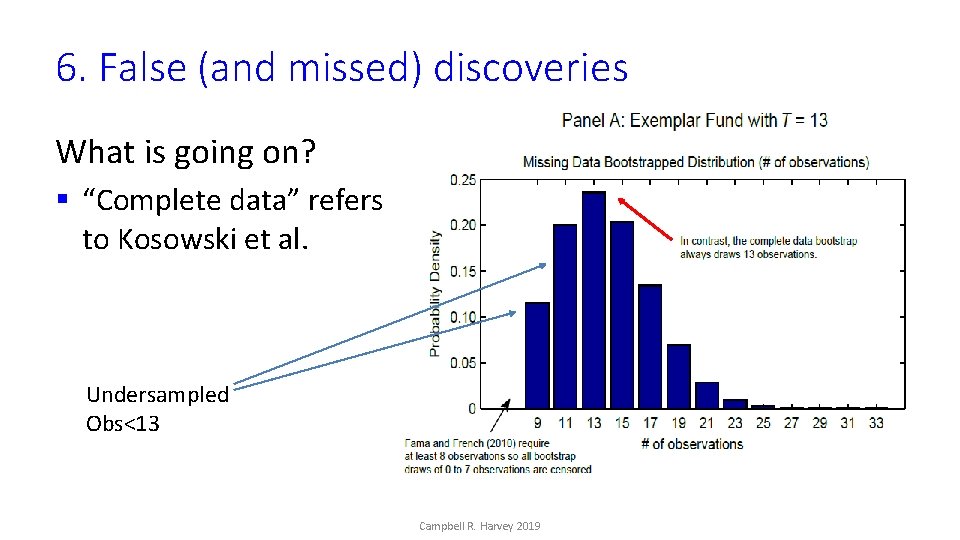

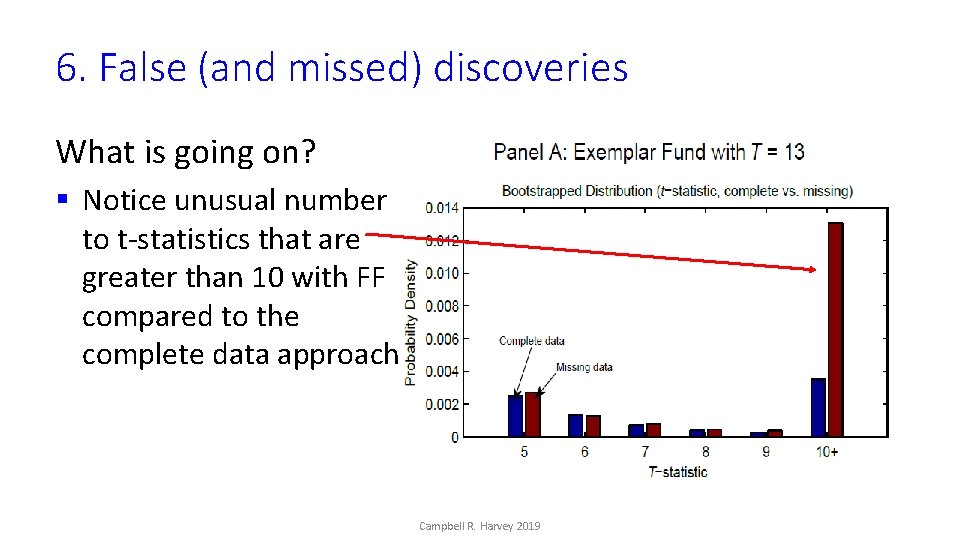

What is going on?
§ “Complete data” refers to Kosowski et al.
§ Undersampled: Obs < 13

What is going on?
§ Notice the unusual number of t-statistics greater than 10 with FF compared to the complete data approach

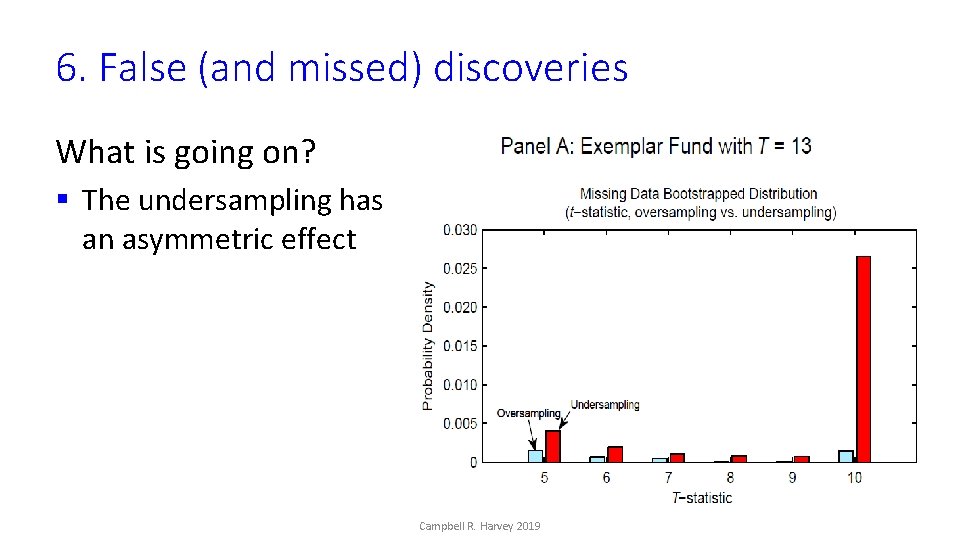

What is going on?
§ The undersampling has an asymmetric effect

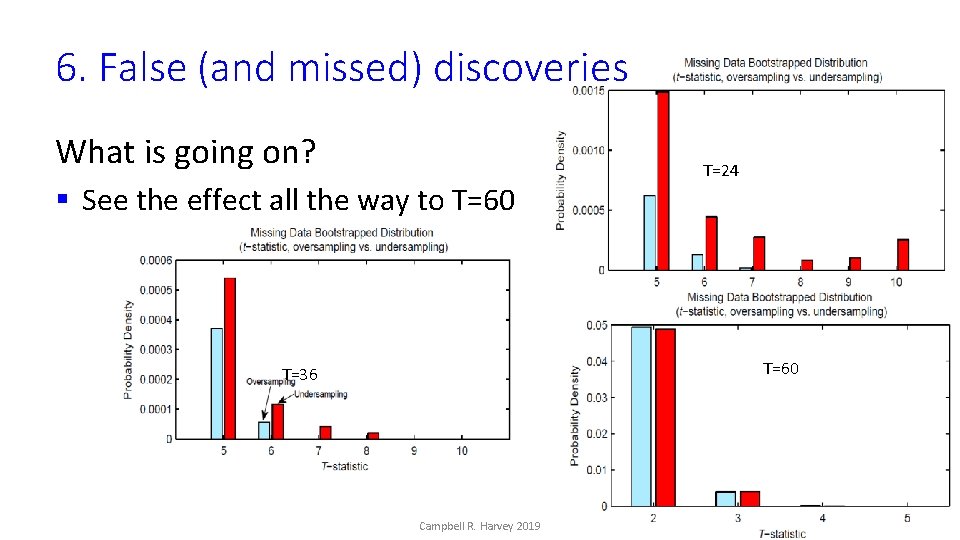

What is going on?
§ See the effect all the way to T = 60 (panels: T = 24, T = 36, T = 60)

What is going on?
§ These extreme tails are an exaggeration of what the tails of the cross-sectional distribution should be if we had complete data for each fund
§ These exaggerated tails are difficult for the tails of the actual cross-sectional distribution of t-statistics to surpass, leading to a low rejection rate (i.e., low test power) even when some funds are actually outperforming

What is going on?
§ One possibility is to require a higher minimum number of observations, which induces a selection bias
§ Another possibility is to conduct sub-sample analysis (say five years) requiring, again, the same number of observations. There is also a selection bias with this approach
§ Our results show that both of these implementations greatly increase the power of the Fama and French method

Bottom line
§ To be clear, we believe that the Fama and French method is innovative and valuable (we use a variant in our paper, Lucky Factors)
§ Given the implementation problem in Fama and French (2010), our analysis presents a different conclusion
§ We can reject the null that no mutual fund outperforms

Conclusions
It is commonplace to ignore test power.
§ Ziliak and McCloskey (2004) find that only 8% of AER papers consider test power
§ Ioannidis et al. (2017) find that 90% of economics research findings are underpowered, leading to an exaggeration of results

Conclusions
§ Why is this a big deal?
§ If the main finding is the non-existence of an effect (no fund outperforms), test power directly affects the credibility of the findings

Takeaways
§ We provide a general way to calibrate Type I and Type II errors
§ Double bootstrap preserves the correlation structure in the data
§ Ability to evaluate multiple testing correction methods
§ We also propose an odds ratio (false/missed discoveries) that allows us to incorporate asymmetric costs of these errors into financial decision making
§ Our method also allows us to capture the magnitude of the error (a 3% error is much less important than a 30% error)

References
Based on our joint work with Yan Liu, Purdue University (except Scientific Outlook):
§ (1) “… and the Cross-section of Expected Returns”, http://ssrn.com/abstract=2249314
• (1b) “Backtesting”, http://ssrn.com/abstract=2345489
• (1c) “Evaluating Trading Strategies”, http://ssrn.com/abstract=2474755
§ (2) “Lucky Factors”, http://ssrn.com/abstract=2528780
§ (3) “Detecting Repeatable Performance”, http://ssrn.com/abstract=2691658
§ (4) “The Scientific Outlook in Financial Economics”, https://ssrn.com/abstract=2893930
§ (5) “Cross-Sectional Alpha Dispersion and Performance Evaluation”, https://ssrn.com/abstract=3143806
§ (6) “False (and Missed) Discoveries in Financial Economics”, https://ssrn.com/abstract=3073799
§ (7) “A Census of the Factor Zoo”, https://ssrn.com/abstract=3341728

Contact
https://www.man.com/maninstitute/perspectives-by-campbell-r-harvey
cam.harvey@duke.edu
@camharvey
http://linkedin.com/in/camharvey
SSRN: http://ssrn.com/author=16198
PGP: E004 4F24 1FBC 6A4A CF31 D520 0F43 AE4D D2B8 4EF4



Revision Strategy: Simulations
We simulate the data generating process and assess our model's performance by comparing the true Type I error rate for a given method (e.g., BHY) with the Type I error rate calculated by our method
• Suppose we assemble an anomaly data set. We demean all anomalies first. We then inject alphas (say, alpha = 5% per annum) into p0 = 10% of strategies. This data set corresponds to the anomaly population.

• We then perturb (more precisely, bootstrap) the anomaly population to generate the in-sample anomaly data.
• For this in-sample data, we know exactly which anomalies are true and which are false. We apply, say, BH at a 5% significance level and calculate the true BH-implied error rate (since we know the true identities of the anomalies). Suppose it is 1%. Alternatively, we use our method and get an estimated error rate of 0.5%. Our method does better than taking BH at face value if our estimate is closer to the true error rate than the nominal rate of 5% is.
• We do this repeatedly to compare the performance of our model with BH.

We vary the simulation assumptions
• Case 1: Constant alpha among strategies
• Case 2: True alphas follow a thin-tailed distribution (e.g., normal distribution)
• Case 3: True alphas follow a fat-tailed distribution (e.g., a t-distribution)
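The simulation design for Case 1 (constant alpha) can be sketched as follows. This is a rough illustration under several assumptions that are not in the slides: monthly Gaussian returns with 2% volatility, a normal approximation for one-sided p-values, BH as the method under evaluation, and small sample sizes so the example runs quickly. All names are hypothetical.

```python
import math
import random
import statistics

def one_sided_p(series):
    """One-sided p-value for mean > 0, using a normal approximation."""
    t = statistics.mean(series) / (statistics.stdev(series) / math.sqrt(len(series)))
    return 0.5 * math.erfc(t / math.sqrt(2.0))

def bh_reject(p_values, level):
    """Indices rejected by the Benjamini-Hochberg step-up rule."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    k = max((r for r, i in enumerate(order, 1) if p_values[i] <= r * level / m), default=0)
    return set(order[:k])

def realized_bh_error(n=50, d=120, p0=0.10, alpha_annual=0.05, vol=0.02,
                      level=0.05, n_iter=50, seed=0):
    """Average share of BH discoveries that are false when the truth is known."""
    rng = random.Random(seed)
    n_true = round(p0 * n)
    population = []
    for j in range(n):
        noise = [rng.gauss(0.0, vol) for _ in range(d)]
        mu = statistics.mean(noise)
        inject = alpha_annual / 12 if j < n_true else 0.0   # constant alpha (Case 1)
        population.append([x - mu + inject for x in noise]) # demean, then inject
    total = 0.0
    for _ in range(n_iter):
        rows = [rng.randrange(d) for _ in range(d)]          # bootstrap the population
        sample = [[s[r] for r in rows] for s in population]
        rejected = bh_reject([one_sided_p(s) for s in sample], level)
        false = sum(1 for j in rejected if j >= n_true)      # true identities are known
        total += false / max(len(rejected), 1)
    return total / n_iter

print("realized BH error rate:", round(realized_bh_error(), 3))
```

Cases 2 and 3 would replace the constant `inject` value with draws from a normal or a t-distribution; the realized rate from this kind of loop is the benchmark against which both the nominal level and the bootstrap-based estimate are compared.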
