Faculty of Computer Science, Institute System Architecture, Database Technology Group

A Dip in the Reservoir: Maintaining Sample Synopses of Evolving Datasets

Rainer Gemulla (University of Technology Dresden)
Wolfgang Lehner (University of Technology Dresden)
Peter J. Haas (IBM Almaden Research Center)

Outline
1. Introduction
2. Deletions
3. Resizing
4. Experiments
5. Summary

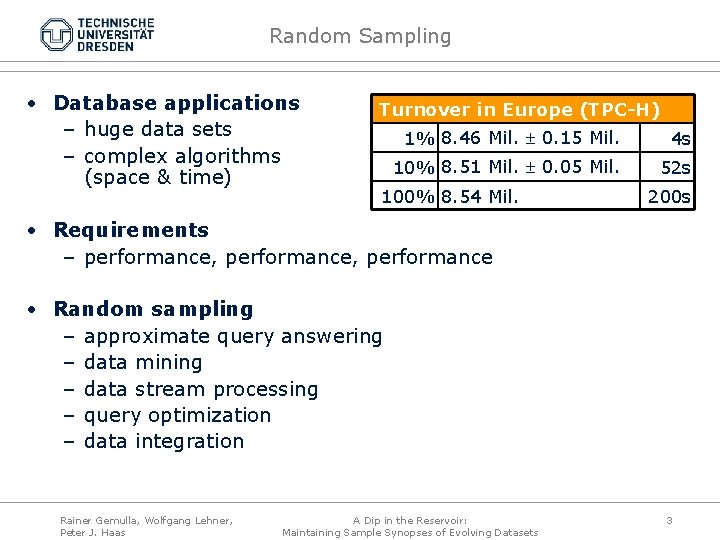

Random Sampling
• Database applications
  – huge data sets
  – complex algorithms (space & time)

Turnover in Europe (TPC-H)

| Sample | Estimate | Error | Time |
|---|---|---|---|
| 1% | 8.46 Mil. | 0.15 Mil. | 4 s |
| 10% | 8.51 Mil. | 0.05 Mil. | 52 s |
| 100% | 8.54 Mil. | | 200 s |

• Requirements
  – performance, performance
• Random sampling
  – approximate query answering
  – data mining
  – data stream processing
  – query optimization
  – data integration

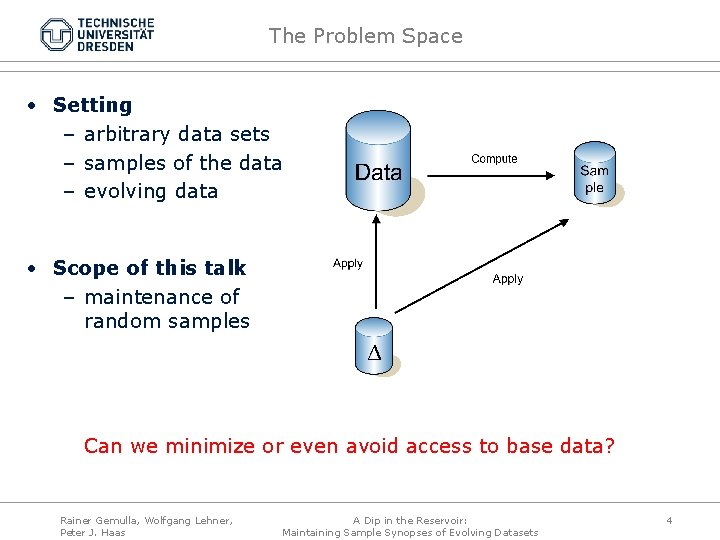

The Problem Space
• Setting
  – arbitrary data sets
  – samples of the data
  – evolving data
• Scope of this talk
  – maintenance of random samples
Can we minimize or even avoid access to base data?

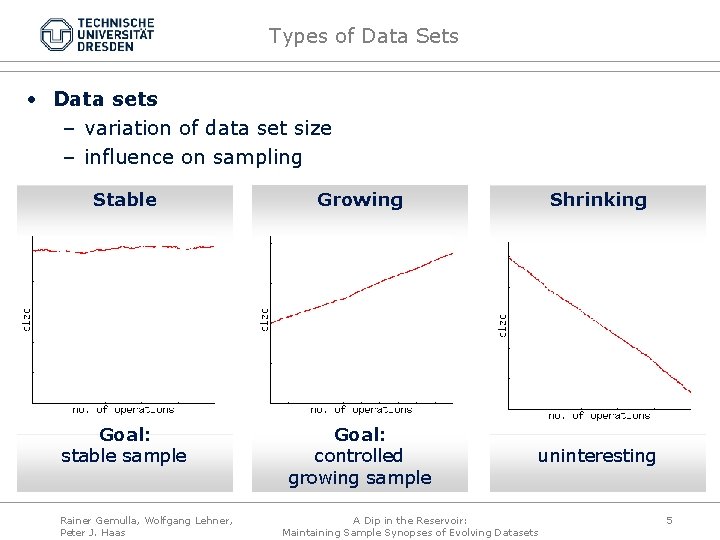

Types of Data Sets
• Data sets
  – variation of data set size
  – influence on sampling
• Stable: goal is a stable sample
• Growing: goal is a controlled growing sample
• Shrinking: uninteresting

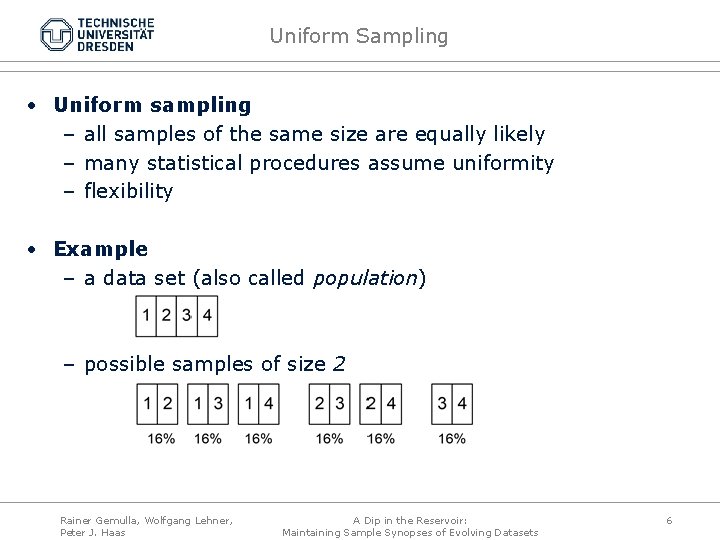

Uniform Sampling
• Uniform sampling
  – all samples of the same size are equally likely
  – many statistical procedures assume uniformity
  – flexibility
• Example
  – a data set (also called population)
  – possible samples of size 2
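As a small illustration of the definition above, the sketch below enumerates every sample of size 2 from a hypothetical three-element population and shows the probability each one must have under uniformity. The population `["a", "b", "c"]` is an assumption; the slide's own example data set appears only in the figure.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical population; the slide's actual example is only in the figure.
population = ["a", "b", "c"]
sample_size = 2

# Every subset of the given size is a possible sample.
possible_samples = list(combinations(population, sample_size))

# Uniform sampling: all samples of the same size are equally likely.
probability = Fraction(1, len(possible_samples))

for sample in possible_samples:
    print(sample, probability)
```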

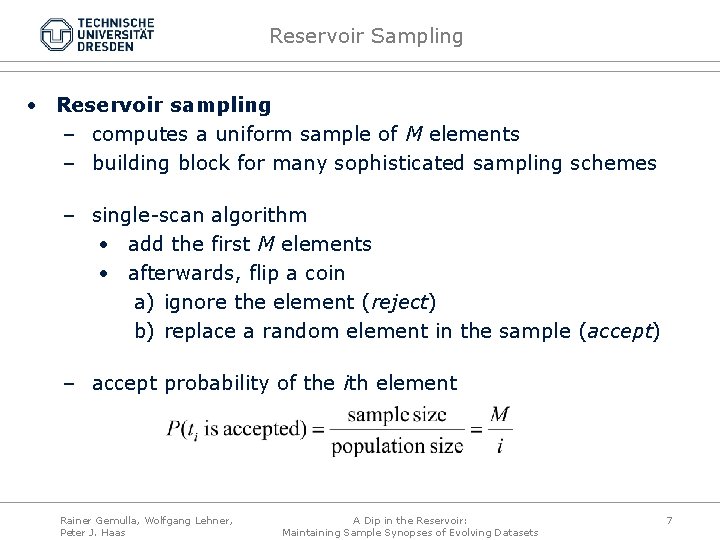

Reservoir Sampling
• Reservoir sampling
  – computes a uniform sample of M elements
  – building block for many sophisticated sampling schemes
  – single-scan algorithm
    • add the first M elements
    • afterwards, flip a coin
      a) ignore the element (reject)
      b) replace a random element in the sample (accept)
  – accept probability of the ith element: M/i
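The single-scan procedure above can be sketched as follows. This is a minimal version of classic reservoir sampling, in which the ith element (i > M) is accepted with probability M/i; the function name `reservoir_sample` and its parameters are illustrative, not taken from the talk.

```python
import random

def reservoir_sample(stream, m, rng=None):
    """Return a uniform random sample of at most m elements from an iterable."""
    rng = rng or random.Random()
    sample = []
    for i, item in enumerate(stream, start=1):
        if i <= m:
            # The first m elements always enter the sample.
            sample.append(item)
        elif rng.random() < m / i:
            # Accept the i-th element with probability m/i and let it
            # replace a randomly chosen element of the sample.
            sample[rng.randrange(m)] = item
        # Otherwise the element is rejected.
    return sample
```

For example, `reservoir_sample(range(1000), 2, random.Random(42))` returns two distinct elements drawn from a single pass over the range.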

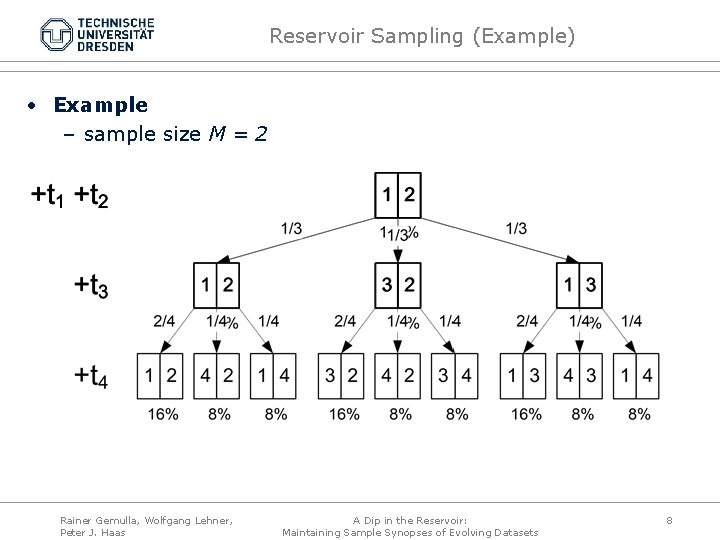

Reservoir Sampling (Example)
• Example
  – sample size M = 2

Problems with Reservoir Sampling
• Problems with reservoir sampling
  – lacks support for deletions (stable data sets)
  – cannot efficiently enlarge sample (growing data sets)

2. Deletions

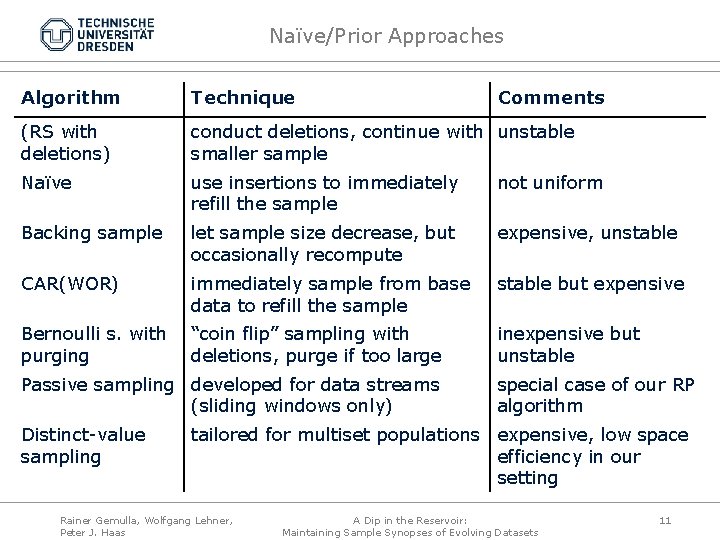

Naïve/Prior Approaches

| Algorithm | Technique | Comments |
|---|---|---|
| (RS with deletions) | conduct deletions, continue with smaller sample | unstable |
| Naïve | use insertions to immediately refill the sample | not uniform |
| Backing sample | let sample size decrease, but occasionally recompute | expensive, unstable |
| CAR(WOR) | immediately sample from base data to refill the sample | stable but expensive |
| Bernoulli s. with purging | "coin flip" sampling with deletions, purge if too large | inexpensive but unstable |
| Passive sampling | developed for data streams (sliding windows only) | special case of our RP algorithm |
| Distinct-value sampling | tailored for multiset populations | expensive, low space efficiency in our setting |

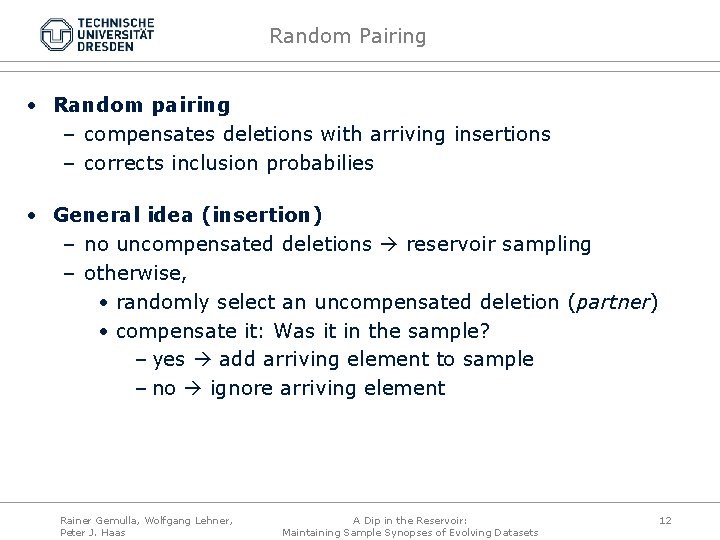

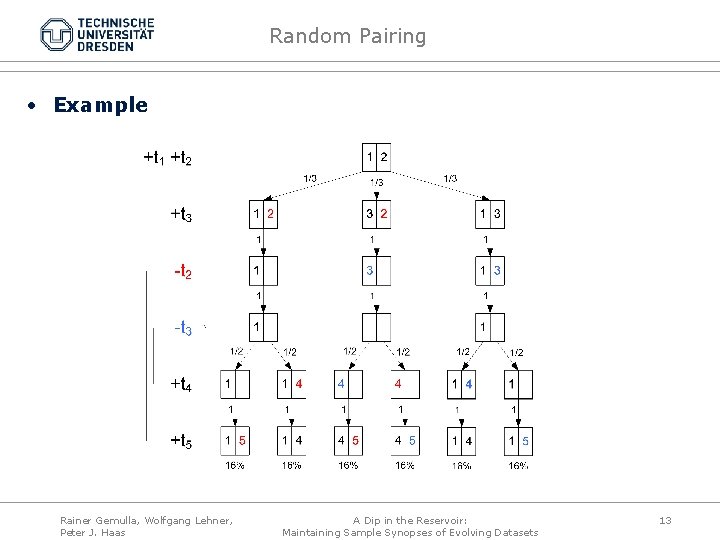

Random Pairing
• Random pairing
  – compensates deletions with arriving insertions
  – corrects inclusion probabilities
• General idea (insertion)
  – no uncompensated deletions → reservoir sampling
  – otherwise,
    • randomly select an uncompensated deletion (partner)
    • compensate it: Was it in the sample?
      – yes → add arriving element to sample
      – no → ignore arriving element

Random Pairing
• Example

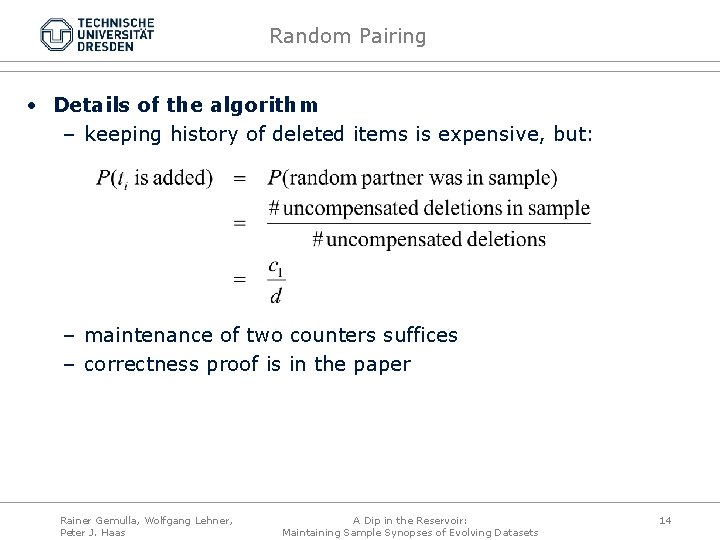

Random Pairing
• Details of the algorithm
  – keeping history of deleted items is expensive, but:
  – maintenance of two counters suffices
  – correctness proof is in the paper
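A minimal sketch of random pairing with two counters might look like the following. It assumes distinct items and that only items currently in the data set are deleted. The class name, the attribute names, and the list-based sample are illustrative choices, not the authors' implementation; the counters track uncompensated deletions that did and did not hit the sample.

```python
import random

class RandomPairing:
    """Bounded-size sample maintained under insertions and deletions (sketch)."""

    def __init__(self, max_size, rng=None):
        self.max_size = max_size
        self.rng = rng or random.Random()
        self.sample = []
        self.dataset_size = 0
        # Uncompensated deletions that removed a sample item / a non-sample item.
        self.deleted_in_sample = 0
        self.deleted_outside = 0

    def insert(self, item):
        self.dataset_size += 1
        uncompensated = self.deleted_in_sample + self.deleted_outside
        if uncompensated == 0:
            # No uncompensated deletions: ordinary reservoir sampling step.
            if len(self.sample) < self.max_size:
                self.sample.append(item)
            elif self.rng.random() < self.max_size / self.dataset_size:
                self.sample[self.rng.randrange(len(self.sample))] = item
        elif self.rng.random() < self.deleted_in_sample / uncompensated:
            # The randomly chosen partner had been in the sample:
            # the arriving item takes its place.
            self.sample.append(item)
            self.deleted_in_sample -= 1
        else:
            # The partner had not been in the sample: ignore the arriving item.
            self.deleted_outside -= 1

    def delete(self, item):
        self.dataset_size -= 1
        if item in self.sample:
            self.sample.remove(item)
            self.deleted_in_sample += 1
        else:
            self.deleted_outside += 1
```

Choosing the "in sample" branch with probability `deleted_in_sample / uncompensated` has the same effect as picking a partner uniformly from the history of uncompensated deletions, which is why the history itself does not need to be stored.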

3. Resizing

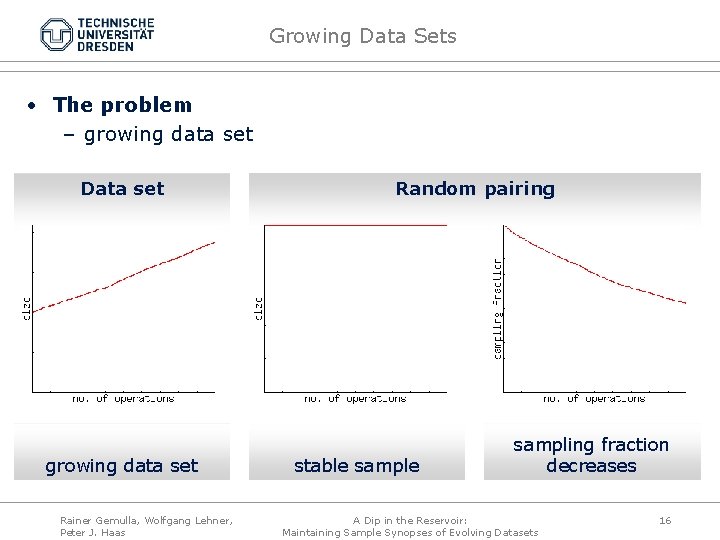

Growing Data Sets
• The problem
  – growing data set
[Figure labels: Data set: growing data set; Random pairing: stable, sampling fraction decreases]

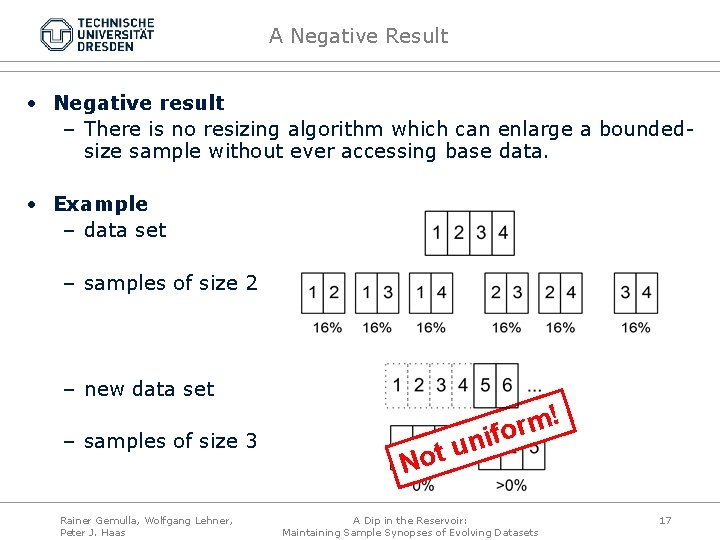

A Negative Result
• Negative result
  – There is no resizing algorithm which can enlarge a bounded-size sample without ever accessing base data.
• Example
  – data set
  – samples of size 2
  – new data set
  – samples of size 3
Not uniform!
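One way to see the negative result is to enumerate a tiny case. The sketch below uses hypothetical data (three existing items and one arriving item), since the slide's own example is only in the figure. Without base data access, a size-2 sample can only grow to size 3 by adding the arriving item, so one of the required size-3 samples can never occur.

```python
from itertools import combinations

# Hypothetical data; the slide's own example is only in the figure.
old_data = [1, 2, 3]
new_item = 4

# Without base data access, a size-2 sample can only grow by the new item.
reachable = {tuple(sorted(s + (new_item,))) for s in combinations(old_data, 2)}

# A uniform size-3 sample must be able to take any of these values.
required = set(combinations(old_data + [new_item], 3))

unreachable = sorted(required - reachable)
print(unreachable)  # [(1, 2, 3)]
```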

Resizing
• Goal
  – efficiently increase sample size
  – stay within an upper bound at all times
• General idea
  1. convert sample to Bernoulli sample
  2. continue Bernoulli sampling until new sample size is reached
  3. convert back to reservoir sample
• Optimally balance cost
  – cost of base data accesses (in step 1)
  – time to reach new sample size (in step 2)

Resizing
• Bernoulli sampling
  – uniform sampling scheme
  – each tuple is added to the sample with probability q
  – sample size follows binomial distribution → no effective upper bound
• Phase 1: Conversion to a Bernoulli sample
  – given q, randomly determine sample size
  – reuse reservoir sample to create Bernoulli sample
    • subsample
    • sample additional tuples (base data access)
  – choice of q
    • small → fewer base data accesses
    • large → more base data accesses
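Phase 1 can be sketched roughly as below, under several assumptions: the data set is an in-memory list of distinct items standing in for the base data, the function names are hypothetical, and the handling of the upper bound on the sample size is omitted. The sketch draws a binomial target size, then either subsamples the reservoir sample or fetches the missing tuples from the base data, and reports how many base data accesses were needed.

```python
import random

def binomial(n, q, rng):
    """Draw from Binomial(n, q) by counting n independent coin flips."""
    return sum(rng.random() < q for _ in range(n))

def to_bernoulli_sample(sample, dataset, q, rng=None):
    """Turn a uniform sample of `dataset` into a Bernoulli(q) sample (sketch).

    Returns the new sample and the number of base data accesses.
    """
    rng = rng or random.Random()
    # Given q, randomly determine the size of the Bernoulli sample.
    target = binomial(len(dataset), q, rng)
    if target <= len(sample):
        # Small target: a uniform subsample suffices, no base data access.
        return rng.sample(list(sample), target), 0
    # Large target: fetch the missing tuples from the base data.
    in_sample = set(sample)
    rest = [x for x in dataset if x not in in_sample]
    extra = rng.sample(rest, target - len(sample))
    return list(sample) + extra, len(extra)
```

A smaller q makes the subsampling branch more likely, which matches the slide's remark that small q means fewer base data accesses.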

Resizing
• Phase 2: Run Bernoulli sampling
  – accept new tuples with probability q
  – conduct deletions
  – stop as soon as new sample size is reached
• Phase 3: Revert to reservoir sampling
  – switchover is trivial
• Choosing q
  – determines cost of Phase 1 and Phase 2
  – goal: minimize total cost
    • base data access expensive → small q
    • base data access cheap → large q
  – details in paper
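Phase 2 can be sketched as a simple loop. The function name, the `("insert", item)` / `("delete", item)` operation encoding, and the set-based sample are assumptions made for illustration; the sketch returns how many operations were consumed before the new sample size was reached.

```python
import random

def run_bernoulli_phase(sample, operations, q, target_size, rng=None):
    """Apply operations to `sample` (a set) until it reaches target_size.

    `operations` yields ("insert", item) or ("delete", item) pairs.
    Returns the number of operations consumed.
    """
    rng = rng or random.Random()
    if len(sample) >= target_size:
        return 0
    consumed = 0
    for kind, item in operations:
        consumed += 1
        if kind == "insert":
            # Accept new tuples with probability q.
            if rng.random() < q:
                sample.add(item)
        else:
            # Conduct deletions: drop the item if it was sampled.
            sample.discard(item)
        if len(sample) >= target_size:
            # Stop as soon as the new sample size is reached.
            break
    return consumed
```

The returned count is a rough proxy for the "time to reach new sample size" that the choice of q trades against the base data accesses of Phase 1.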

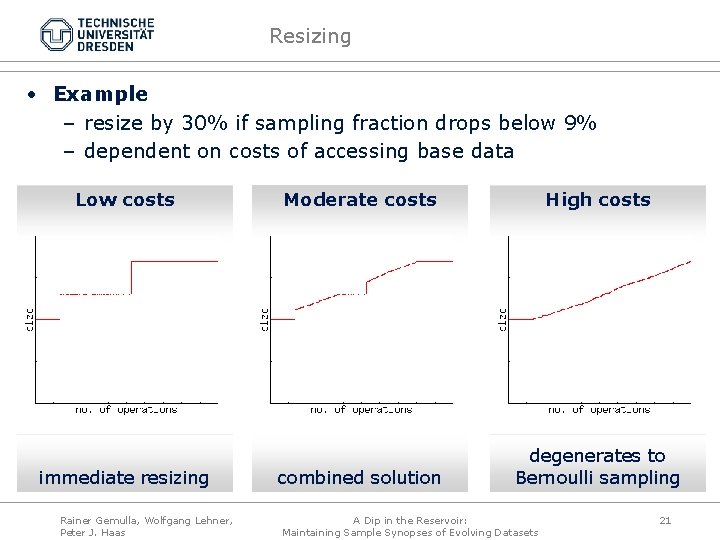

Resizing
• Example
  – resize by 30% if sampling fraction drops below 9%
  – dependent on costs of accessing base data
[Figure labels: Low costs: immediate resizing; Moderate costs: combined solution; High costs: degenerates to Bernoulli sampling]

4. Experiments

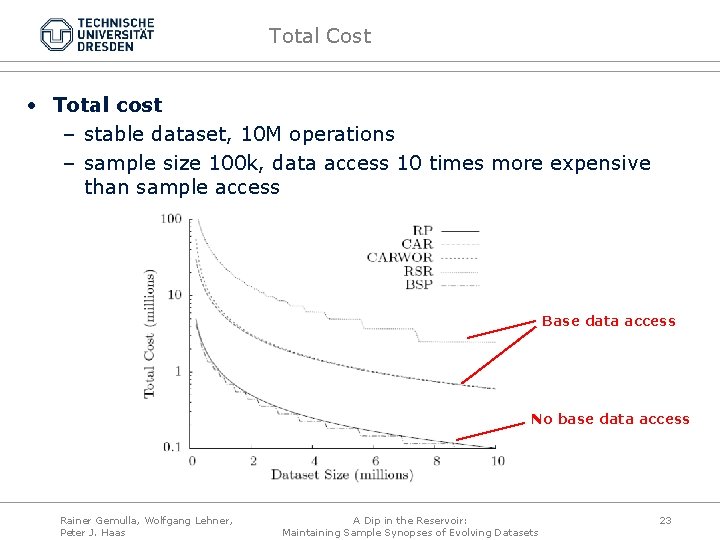

Total Cost
• Total cost
  – stable dataset, 10 M operations
  – sample size 100 k, data access 10 times more expensive than sample access
[Figure labels: Base data access; No base data access]

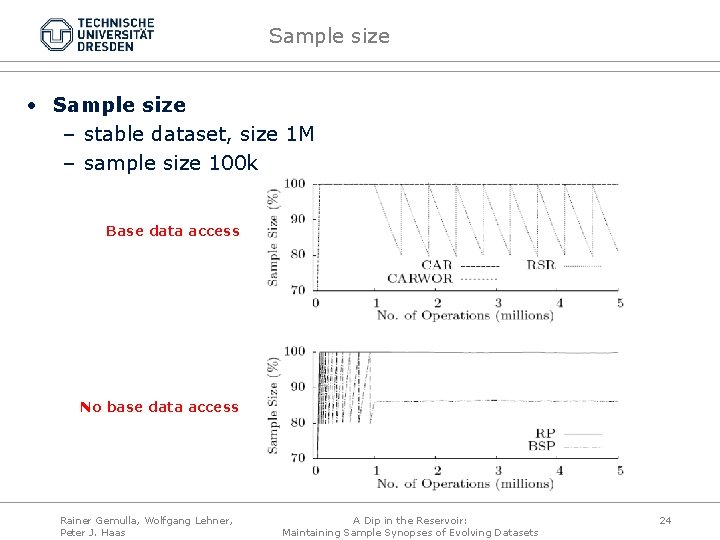

Sample Size
• Sample size
  – stable dataset, size 1 M
  – sample size 100 k
[Figure labels: Base data access; No base data access]

5. Summary

Summary
• Reservoir Sampling
  – lacks support for deletions
  – complete recomputation to enlarge the sample
• Random Pairing
  – uses arriving insertions to compensate for deletions
• Resizing
  – base data access cannot be avoided
  – minimizes total cost
• Future work
  – better q for resizing
  – combine with existing techniques [4, 8, 17] to enhance flexibility, scalability

Thank you! Questions?

Backup: Bounded-Size Sampling
• Why sampling?
  – performance, performance
• How much to sample?
  – influencing factors
    1. storage consumption
    2. response time
    3. accuracy
  – choosing the sample size / sampling fraction
    1. largest sample that meets storage requirements
    2. largest sample that meets response time requirements
    3. smallest sample that meets accuracy requirements

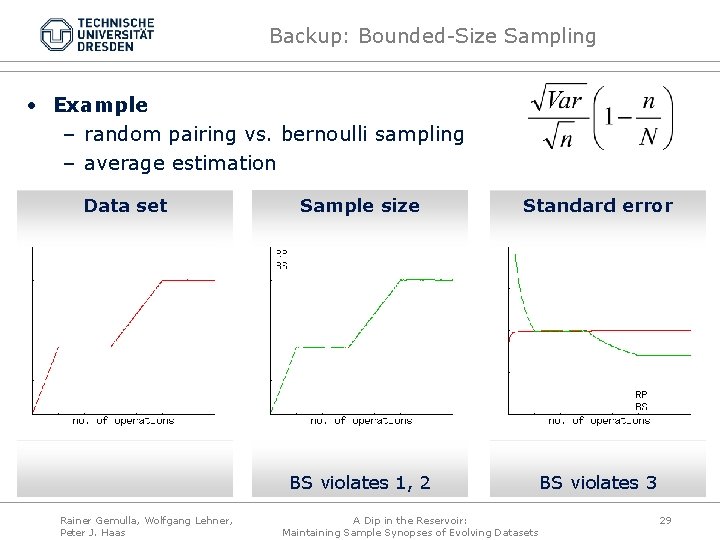

Backup: Bounded-Size Sampling
• Example
  – random pairing vs. Bernoulli sampling (BS)
  – average estimation
[Figure labels: Data set; Sample size: BS violates 1, 2; Standard error: BS violates 3]

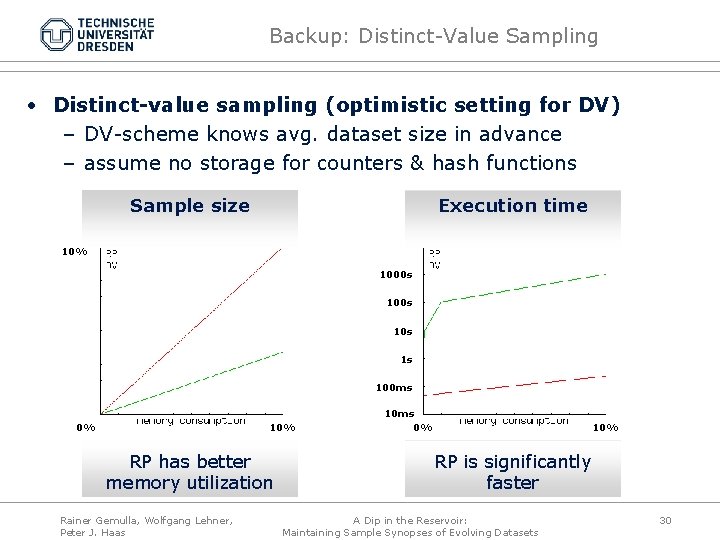

Backup: Distinct-Value Sampling
• Distinct-value sampling (optimistic setting for DV)
  – DV-scheme knows avg. dataset size in advance
  – assume no storage for counters & hash functions
[Figure labels: Sample size: RP has better memory utilization; Execution time: RP is significantly faster]

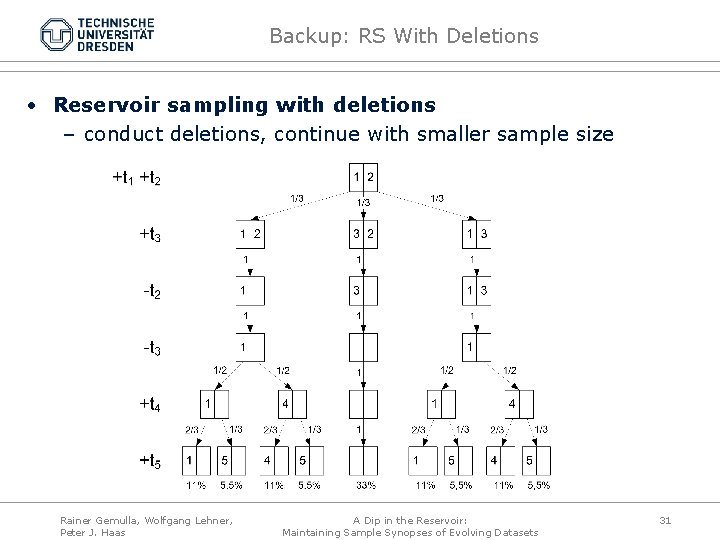

Backup: RS With Deletions
• Reservoir sampling with deletions
  – conduct deletions, continue with smaller sample size

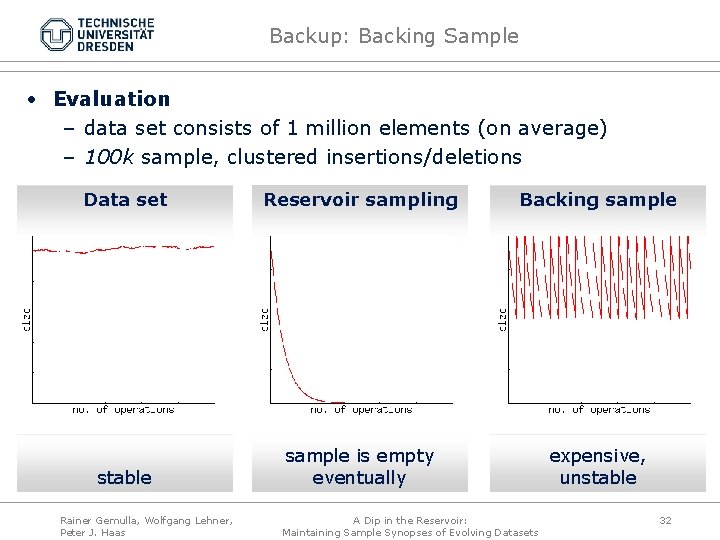

Backup: Backing Sample
• Evaluation
  – data set consists of 1 million elements (on average)
  – 100 k sample, clustered insertions/deletions
[Figure labels: Data set: stable; Reservoir sampling: sample is empty eventually; Backing sample: expensive, unstable]

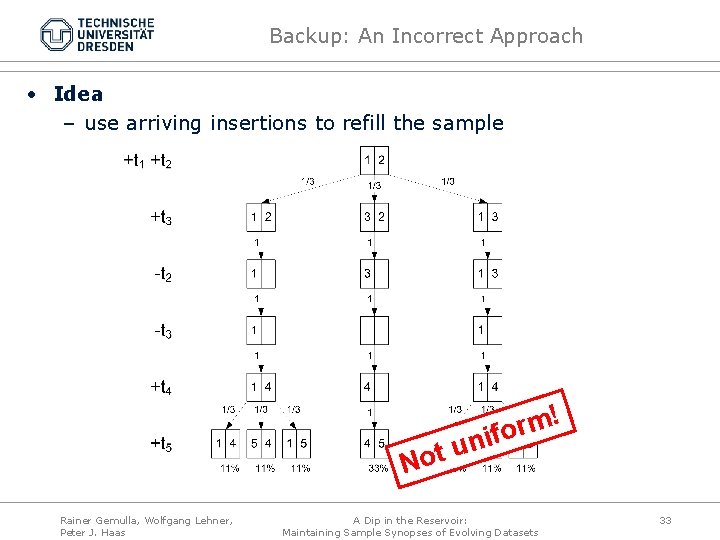

Backup: An Incorrect Approach
• Idea
  – use arriving insertions to refill the sample
Not uniform!

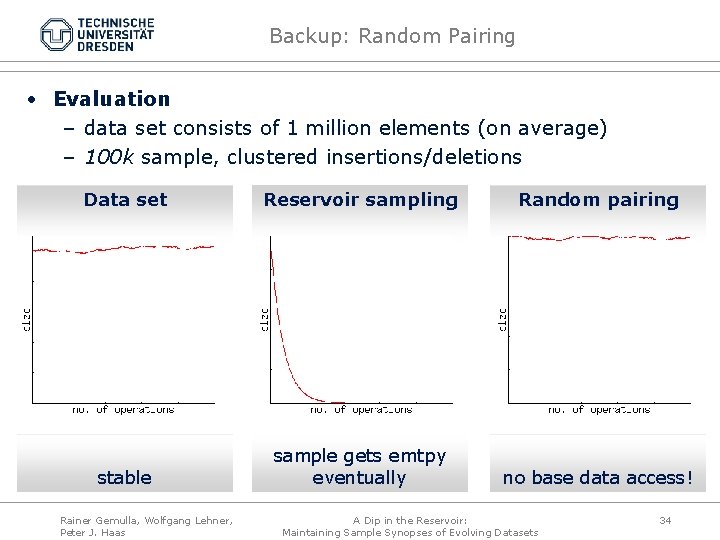

Backup: Random Pairing
• Evaluation
  – data set consists of 1 million elements (on average)
  – 100 k sample, clustered insertions/deletions
[Figure labels: Data set: stable; Reservoir sampling: sample gets empty eventually; Random pairing: no base data access!]

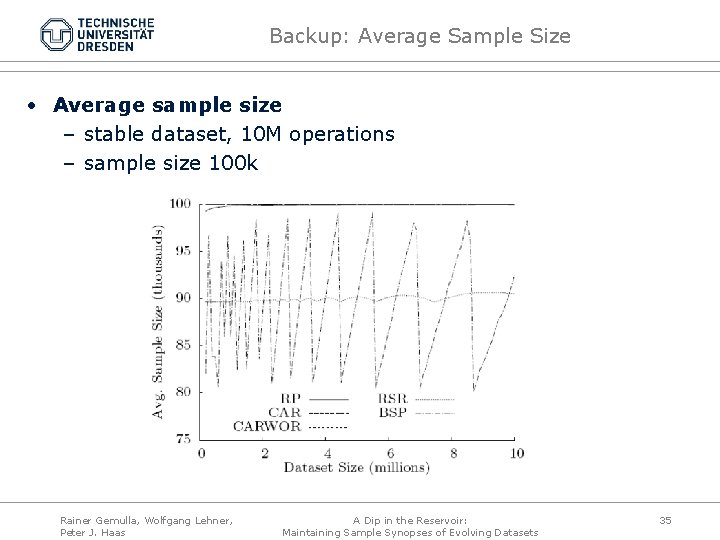

Backup: Average Sample Size
• Average sample size
  – stable dataset, 10 M operations
  – sample size 100 k

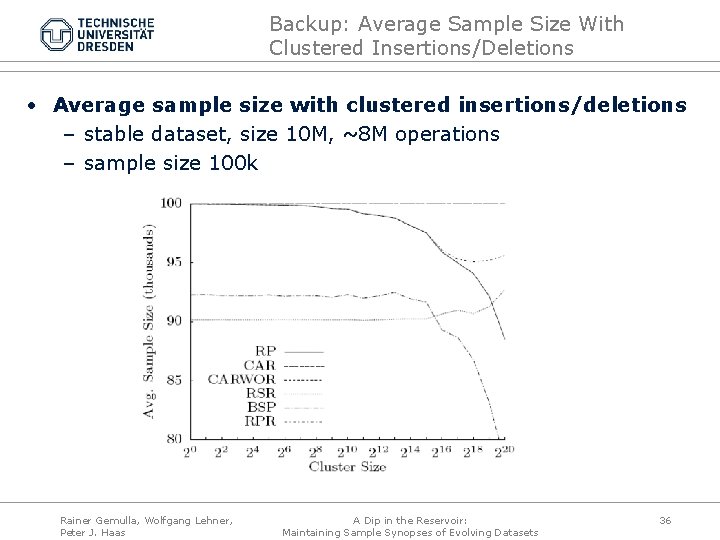

Backup: Average Sample Size With Clustered Insertions/Deletions
• Average sample size with clustered insertions/deletions
  – stable dataset, size 10 M, ~8 M operations
  – sample size 100 k

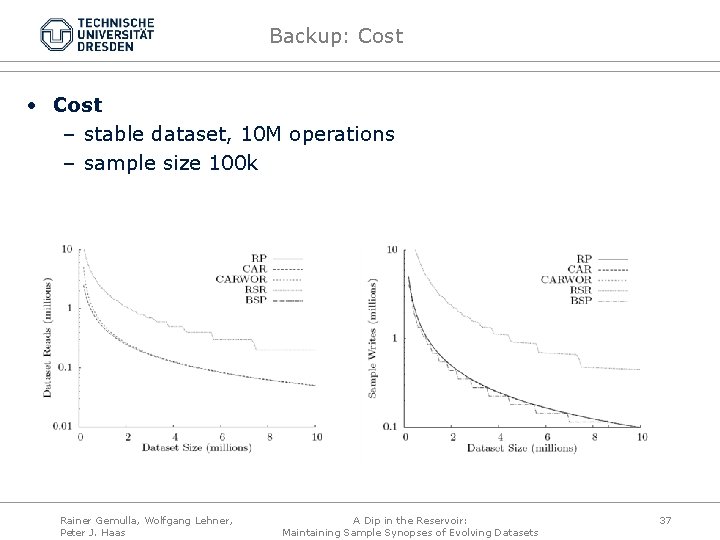

Backup: Cost
• Cost
  – stable dataset, 10 M operations
  – sample size 100 k

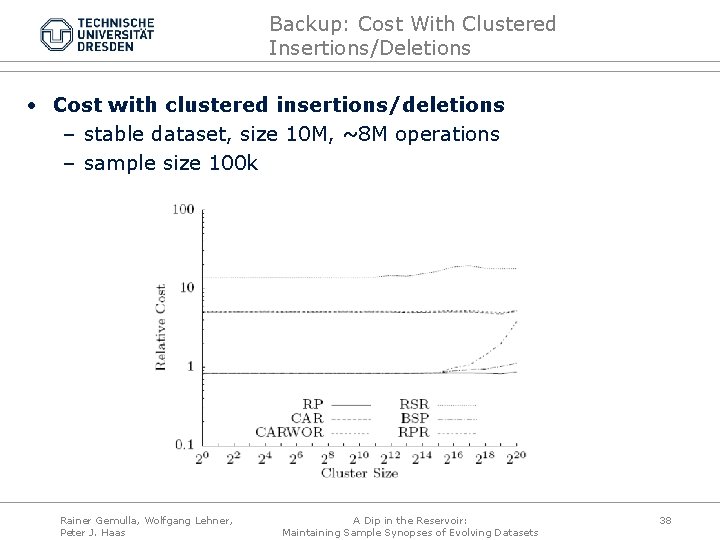

Backup: Cost With Clustered Insertions/Deletions
• Cost with clustered insertions/deletions
  – stable dataset, size 10 M, ~8 M operations
  – sample size 100 k

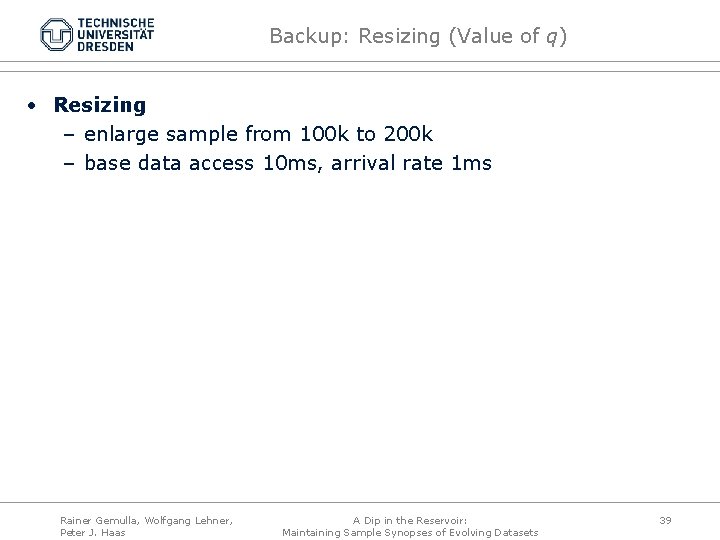

Backup: Resizing (Value of q)
• Resizing
  – enlarge sample from 100 k to 200 k
  – base data access 10 ms, arrival rate 1 ms
