Factor Analysis: Exploratory Factor Analysis

- Slides: 41


What we will cover • High-level conceptual overview of factor analysis • Multivariate analysis • Factor analysis • Constructs • Survey design • Cross-correlation • EFA vs CFA • An example from Urdan (2010) • A short example in R

Multivariate • Multivariate analysis consists of a collection of methods that can be used when several measurements are made on each individual or object in one or more samples. • We will refer to the measurements as variables and to the individuals or objects as units (research units, sampling units, or experimental units) or observations. • In practice, multivariate data sets are common, although they are not always analyzed as such. • Historically, the bulk of applications of multivariate techniques have been in the behavioral and biological sciences.

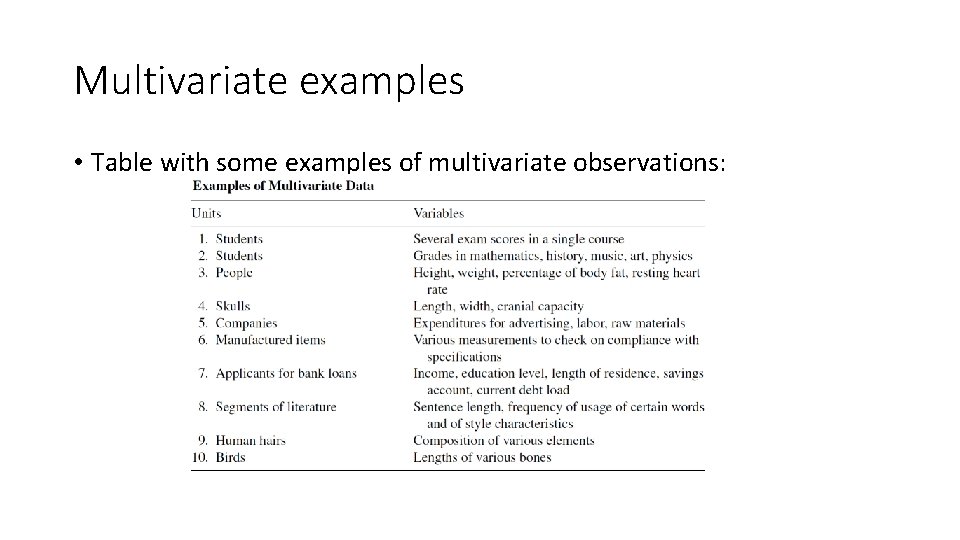

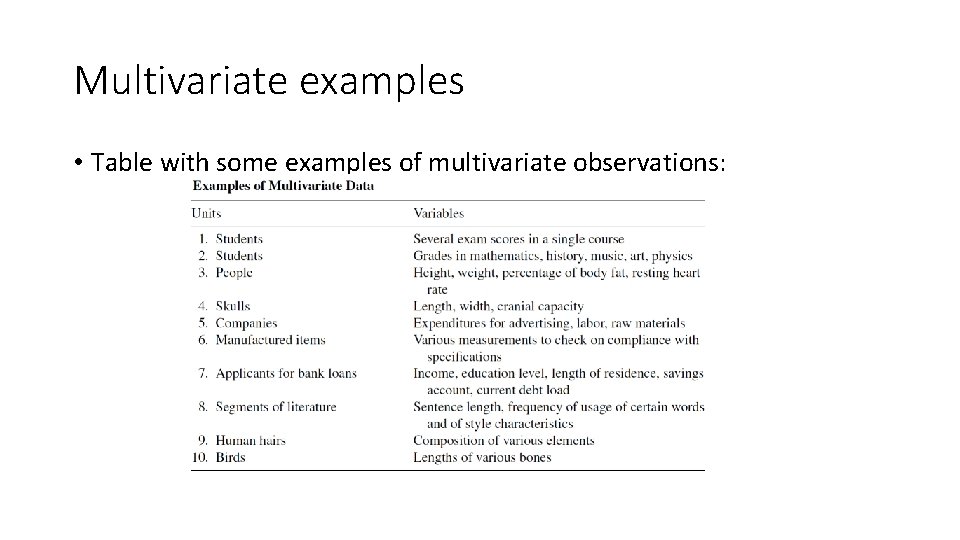

Multivariate examples • Table with some examples of multivariate observations:

Factor Analysis • Factor analysis is a multivariate statistical approach commonly used in psychology and education. • It has many uses, two of which will be briefly noted here: • Firstly, factor analysis reduces a large number of variables into a smaller set of variables (also referred to as factors). • Secondly, it establishes underlying dimensions between measured variables and latent constructs, thereby allowing the formation and refinement of theory. • Long story short: a particular version of it is often used to consolidate survey data by revealing the groupings (factors) that underlie individual questions.

Why has it been so common in the social sciences? • It is quite common for researchers to measure a single construct using more than one item. This is especially common in survey research. • For example, someone examining student motivation may give students surveys that ask them about their interests, values, and goals. Within the survey itself there will potentially be several survey items (questions) to measure each of these constructs, i.e., there are typically more survey items than constructs. • For example, we may wish to survey students about their motivation. The questions: • (1) The information we learn in this class is interesting. • (2) The information we learn in this class is important. • (3) The information we learn in this class will be useful to me. • Students indicate how much they agree or disagree with each statement.

Factors & Surveys • Although these three items on the survey are all separate questions, they are assumed to all be parts of a larger, underlying construct called Value. • The three survey items are called observed variables because they have actually been measured (i.e., observed) with the survey items. • The underlying construct these items are supposed to represent, Value, is called an unobserved variable or a latent variable because it is not measured directly. • Rather, it is inferred, or indicated, by the three observed variables.

Factors & Surveys - cont • When researchers use multiple measures to represent a single underlying construct, they must perform some statistical analyses to determine how well the items in one construct go together, and how well the items that are supposed to represent one construct separate from the items that are supposed to represent a different construct. • The way that we do this is with factor analysis and reliability analysis. • Let's think of an example: suppose we wished to measure ‘work satisfaction’. • We know, or at least can guess, that there might be several different things that make up an employee’s work satisfaction score. (In other words, it is multi-faceted.)

Making a survey • So you decide to ask several questions on a survey to measure the single construct of Work Satisfaction: • You ask a question about whether people are happy with their pay. • You ask another question about whether people feel they have a good relationship with their boss. • You ask a third question about whether people are happy with the amount of responsibility they are given at work, and a fourth question about whether they like the physical space in which they work.

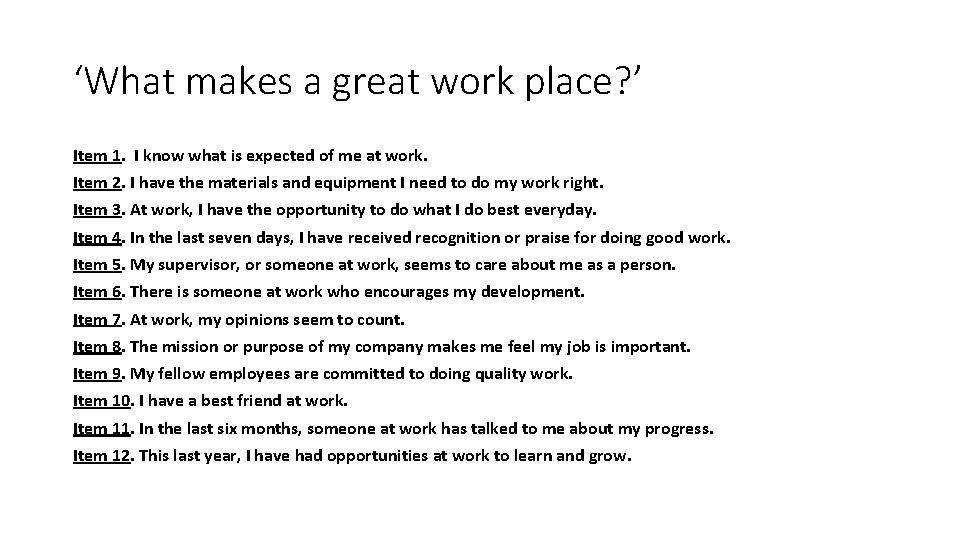

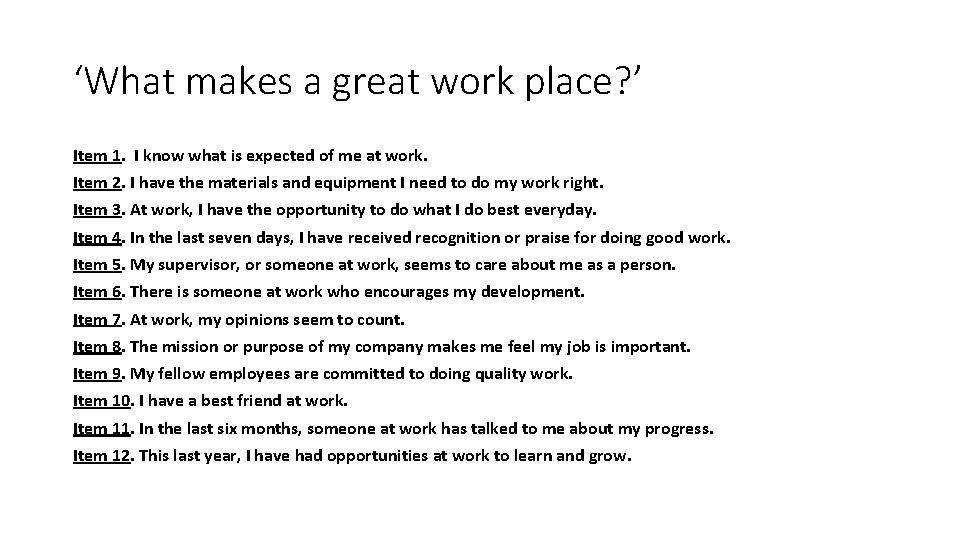

‘What makes a great work place?’ Item 1. I know what is expected of me at work. Item 2. I have the materials and equipment I need to do my work right. Item 3. At work, I have the opportunity to do what I do best every day. Item 4. In the last seven days, I have received recognition or praise for doing good work. Item 5. My supervisor, or someone at work, seems to care about me as a person. Item 6. There is someone at work who encourages my development. Item 7. At work, my opinions seem to count. Item 8. The mission or purpose of my company makes me feel my job is important. Item 9. My fellow employees are committed to doing quality work. Item 10. I have a best friend at work. Item 11. In the last six months, someone at work has talked to me about my progress. Item 12. This last year, I have had opportunities at work to learn and grow.

Factors & Surveys • Back to our thoughts on ‘worker satisfaction’. There are two reasons you might ask so many questions about a single underlying construct like Work Satisfaction. • (1) You want to cover several aspects of Work Satisfaction because you want your measure to be a good representation of the construct. • (2) You want to have some confidence that the participants in your study are interpreting your questions as you mean them. • If you just asked a single question like “How happy are you with your work?” it would be difficult for you to know what your participants mean with their responses. • One participant may say he is very happy with his work because he thought you were asking whether he thinks he is producing work of high quality. • Another participant may say she is very happy because she thought you were asking whether she feels like she is paid enough, and she just received a large bonus…

Factors & Surveys • So if you only measure your construct with a single question, it may be difficult for you to determine whether the same answers to your question mean the same thing for different participants. • Using several questions to measure the same construct helps researchers feel confident that participants are interpreting the questions in similar ways. • If the four items that you asked about Work Satisfaction really do measure the same underlying construct, then most participants will answer all four questions in similar ways. • In other words, people with high Work Satisfaction will generally say they are paid well, like their boss, have an appropriate level of responsibility, and are comfortable in their work space. Similarly, most people with low Work Satisfaction will respond in similar ways to all four of your questions. • To use statistical language, the responses on all of the questions that you use to measure a single construct should be strongly correlated.
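The idea that items measuring one construct should be strongly correlated can be sketched with simulated data. The four item names and the latent `satisfaction` score below are hypothetical, and Cronbach's alpha is used only as one common reliability statistic; the slides mention reliability analysis but do not name a specific measure.

```r
# Simulated survey: four items driven by one hypothetical latent score.
set.seed(10)
n <- 200
satisfaction <- rnorm(n)                       # latent Work Satisfaction (unobserved)
make_item <- function() satisfaction + rnorm(n, sd = 0.7)
items <- data.frame(pay = make_item(), boss = make_item(),
                    responsibility = make_item(), workspace = make_item())

# Pairwise correlations between the four observed items
round(cor(items), 2)

# Cronbach's alpha from its textbook formula:
# alpha = k/(k-1) * (1 - sum of item variances / variance of the total score)
k <- ncol(items)
item_vars <- sapply(items, var)
total_var <- var(rowSums(items))
alpha <- (k / (k - 1)) * (1 - sum(item_vars) / total_var)
alpha
```

Because every item shares the same latent score, the correlations come out clearly positive and alpha is high; items that did not share a construct would pull both down.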

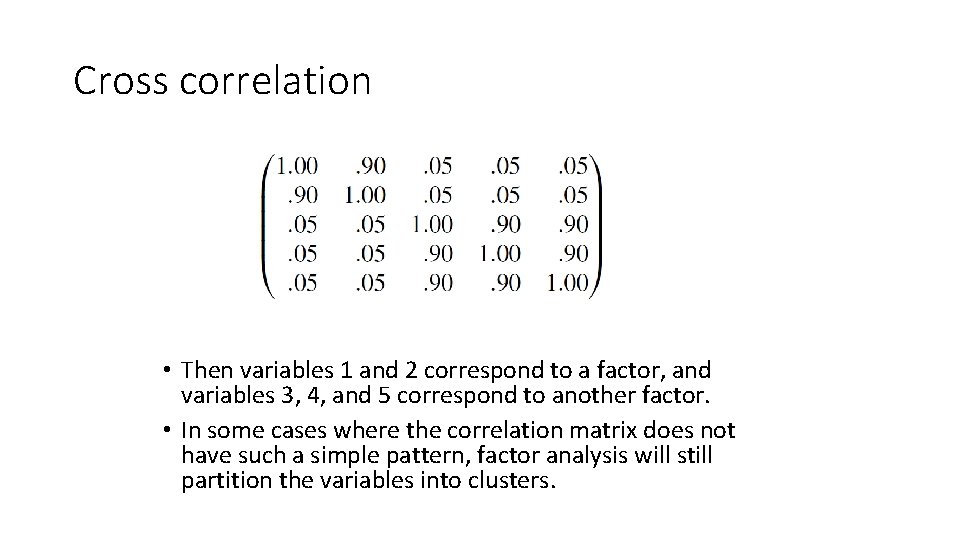

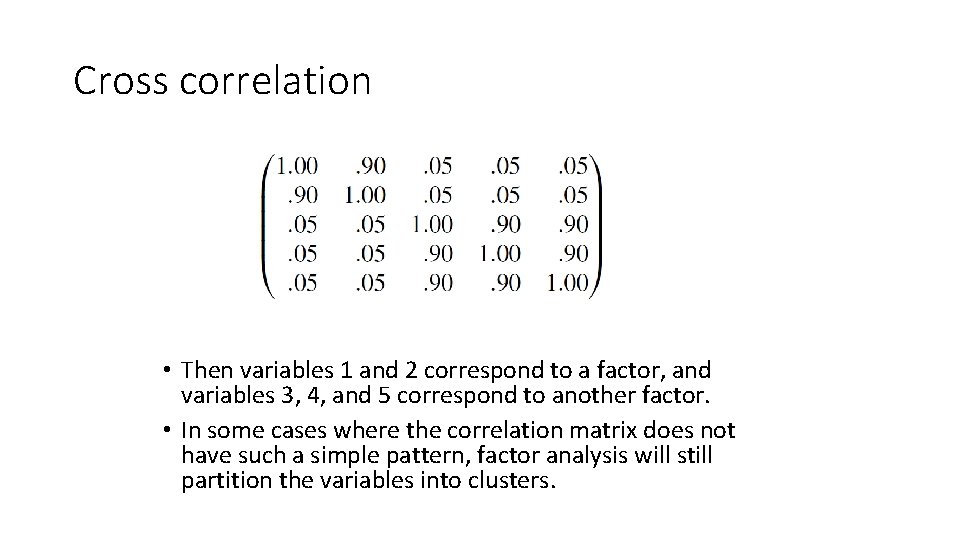

Cross correlation • Let's consider this ‘strong correlation’ abstractly. If we are to correlate several questions (survey items) then we would look at cross correlation via a correlation matrix. • Suppose the pattern of the high and low correlations in the correlation matrix is such that the variables in a particular subset have high correlations among themselves but low correlations with all the other variables. • Then there may be a single underlying factor that gave rise to the variables in the subset. If the other variables can be similarly grouped into subsets with a like pattern of correlations, then a few factors can represent these groups of variables. In this case the pattern in the correlation matrix corresponds directly to the factors. • For example, suppose the correlation matrix has the form:

Cross correlation • Then variables 1 and 2 correspond to a factor, and variables 3, 4, and 5 correspond to another factor. • In some cases where the correlation matrix does not have such a simple pattern, factor analysis will still partition the variables into clusters.
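A correlation matrix with this kind of block pattern can be sketched by simulation. The data below are hypothetical (two independent latent factors, `f1` and `f2`, with arbitrary noise levels); they are not the matrix shown on the slide.

```r
# Five variables: v1-v2 share one latent factor, v3-v5 share another.
set.seed(1)
n <- 500
f1 <- rnorm(n)   # hypothetical factor behind variables 1 and 2
f2 <- rnorm(n)   # hypothetical factor behind variables 3, 4, and 5
noise <- function() rnorm(n, sd = 0.5)
items <- data.frame(v1 = f1 + noise(), v2 = f1 + noise(),
                    v3 = f2 + noise(), v4 = f2 + noise(), v5 = f2 + noise())

# High correlations inside each block, near-zero correlations between blocks
R <- cor(items)
round(R, 2)
```

Reading the printed matrix, the upper-left 2 x 2 block and the lower-right 3 x 3 block stand out, which is the visual cue that two factors may underlie the five variables.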

Factor Analysis • So what does all of this have to do with factor analysis? What a factor analysis does, in a nutshell, is find out which items are most strongly correlated with each other and then group them together. • As a researcher, you hope and expect that the items you use to measure one construct (e.g., Work Satisfaction) are all highly correlated with each other and can be grouped together. • There are two major classes of factor analysis: • Exploratory Factor Analysis (EFA) • Confirmatory Factor Analysis (CFA).

Exploratory vs. Confirmatory Factor Analysis • Exploratory factor analysis (EFA) and confirmatory factor analysis (CFA) are two statistical approaches used to examine the internal reliability of a measure. • Both are used to investigate theoretical constructs, or factors, that might be represented by a set of items. • With EFA, researchers usually decide on the number of factors by examining output from a principal components analysis (i.e., eigenvalues are used). With CFA, the researchers must specify the number of factors a priori. • CFA requires that a particular factor structure be specified, in which the researcher indicates which items load on which factor. EFA allows all items to load on all factors.

5 steps in EFA • The 5-step Exploratory Factor Analysis Protocol: • (1) Is the data suitable for factor analysis? • (2) How will the factors be extracted? • (3) What criteria will assist in determining factor extraction? • (4) Selection of rotational method. • (5) Interpretation and labeling. • Before we move on, let's just briefly say something about ‘rotational method’. • Factor rotation: in the process of identifying and creating factors from a set of items, the factor analysis works to make the factors distinct from each other. • In the most common method of factor rotation, orthogonal, the factor analysis rotates the factors to maximize the distinction between them.

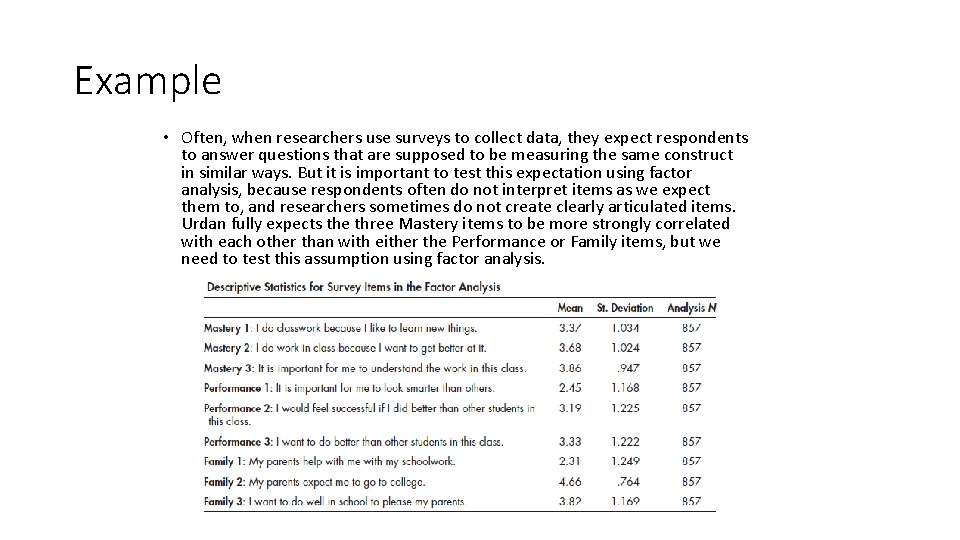

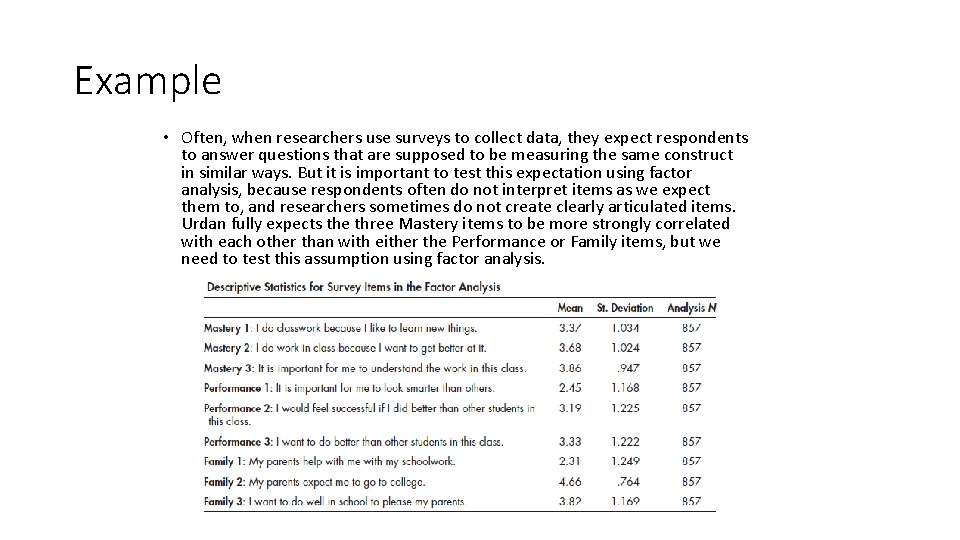

Example • Let's keep the analysis at the conceptual level for a moment and look at a worked example given by Urdan (2010). • Urdan gave surveys to a sample of 857 students. The surveys included questions asking about Mastery goals, Performance goals, and Family-related concerns regarding education. • Mastery goals represent a desire to learn, improve, and understand new concepts. • Performance goals represent a desire to look smart and to do better than others. • And the family questions asked about whether parents help with homework, whether parents expect the students to attend college, and whether the student wants to succeed for the sake of pleasing family members. • Three items from each construct are listed along with their means and standard deviations. Each of these items was measured on a 5-point scale with 1 indicating less agreement with the statement and 5 indicating total agreement with the statement.

Example • Often, when researchers use surveys to collect data, they expect respondents to answer questions that are supposed to be measuring the same construct in similar ways. But it is important to test this expectation using factor analysis, because respondents often do not interpret items as we expect them to, and researchers sometimes do not create clearly articulated items. Urdan fully expects the three Mastery items to be more strongly correlated with each other than with either the Performance or Family items, but we need to test this assumption using factor analysis.

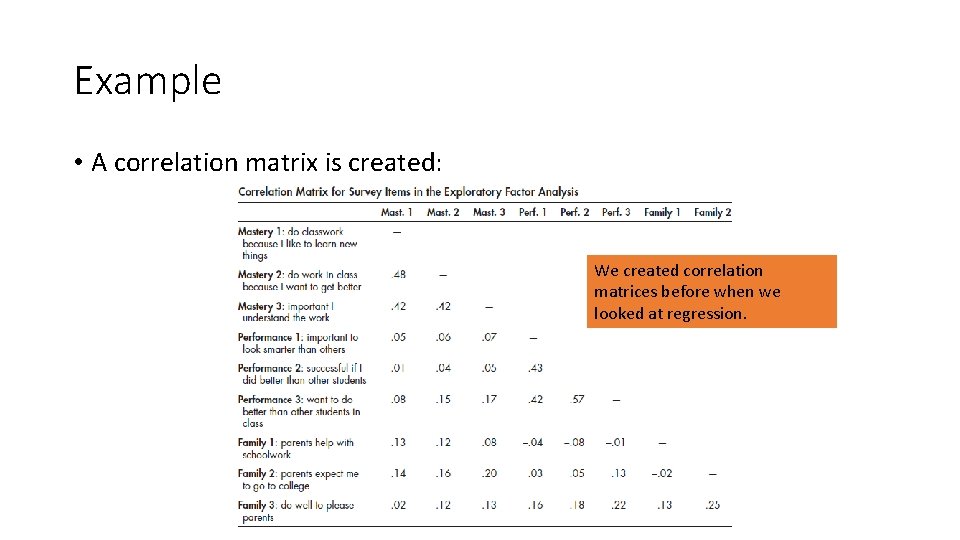

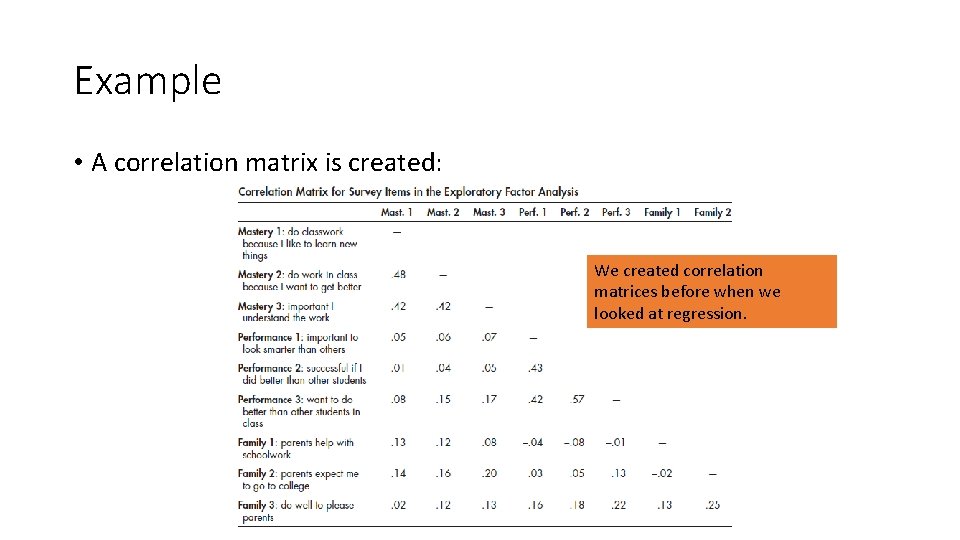

Example • A correlation matrix is created: We created correlation matrices before when we looked at regression.

Example • The three Mastery items are all correlated with each other above the r = .40 level and are correlated with the Performance and Family items below the r = .25 level. • Similarly, all of the Performance items are correlated with each other above r = .40 and are correlated with the other 6 items below r = .25. • In contrast, the 3 Family items are not very strongly correlated with each other (r’s < .30) and in some cases are more strongly correlated with a Mastery or Performance item than with other Family items. • These correlations suggest that the Mastery and Performance items will separate into nice, clean, separate factors in our factor analysis, but the Family items may not.

Example • When a statistics program performs a factor analysis, it keeps reorganizing all of the items in the analysis into new factors and then rotating these factors away from each other to create as many meaningful, separate factors as it can. • The first factor starts by combining the most strongly correlated items because it is these items that explain the most variance in the full collection of all 9 items. • Then, it creates a second factor based on the items with the second strongest set of correlations, and this new factor will explain the second most variance in the total collection of items. • As you can see, each time the program creates a new factor, the new factor will explain less and less of the total variance. Pretty soon, the new factors being created hardly explain any additional variance, and they are therefore not all that useful.

Output • One of the jobs of the researcher is to interpret the results of the factor analysis to decide how many factors are needed to make sense of the data. Typically, researchers use several pieces of information to help them decide, including some of the information in the table. • For example, many researchers will only consider a factor meaningful if it has an eigenvalue of at least 1.0. • (An eigenvalue is a measure of variance explained in the vector space created by the factors.)

Eigenvalues • Two interpretations: • eigenvalue: equivalent number of variables which the factor represents • eigenvalue: amount of variance in the data described by the factor. • Rules to go by: • number of eigenvalues > 1 • scree plot • % variance explained • comprehensibility • Note: the sum of the eigenvalues is equal to the number of items
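These rules can be checked numerically on the eigenvalues of a correlation matrix. The sketch below uses hypothetical simulated items (six items, two latent factors); it is not Urdan's data.

```r
# Six simulated items: three per hypothetical latent factor.
set.seed(2)
n <- 400
f1 <- rnorm(n)
f2 <- rnorm(n)
item <- function(f) 0.8 * f + rnorm(n, sd = 0.6)
items <- data.frame(a1 = item(f1), a2 = item(f1), a3 = item(f1),
                    b1 = item(f2), b2 = item(f2), b3 = item(f2))

# Eigenvalues of the correlation matrix, largest first
ev <- eigen(cor(items))$values
ev

sum(ev)                        # equals the number of items
n_kaiser <- sum(ev > 1)        # "eigenvalue greater than 1" rule
prop_var <- ev / length(ev)    # share of total variance per eigenvalue
round(cumsum(prop_var), 2)     # cumulative % variance explained

# Scree plot: look for the point where the curve flattens out
plot(ev, type = "b", xlab = "Factor number", ylab = "Eigenvalue")
abline(h = 1, lty = 2)
```

Dividing each eigenvalue by the number of items gives its share of the total variance, which is why an eigenvalue can be read as "how many variables' worth of variance" a factor represents.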

Example • Factors that explain less than 10% of the total variance in the full set of items are sometimes considered too weak to be considered. In addition, conceptual considerations are important. • We may have a factor that has an eigenvalue greater than 1.0 but the items that load most strongly on the factor do not make sense together, so we may not keep this factor in our subsequent analyses. • What can we tell from the table? • The values suggest that the 9 items form 4 meaningful factors.

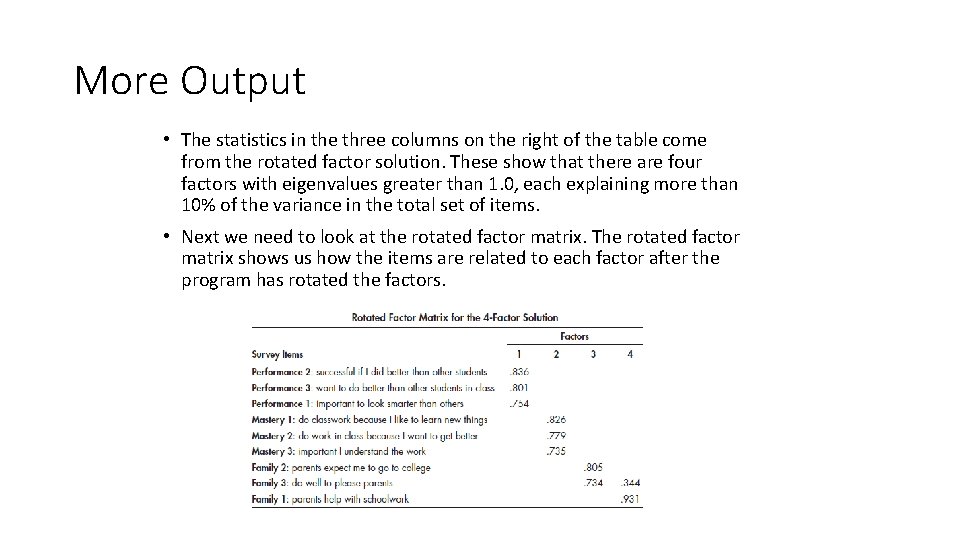

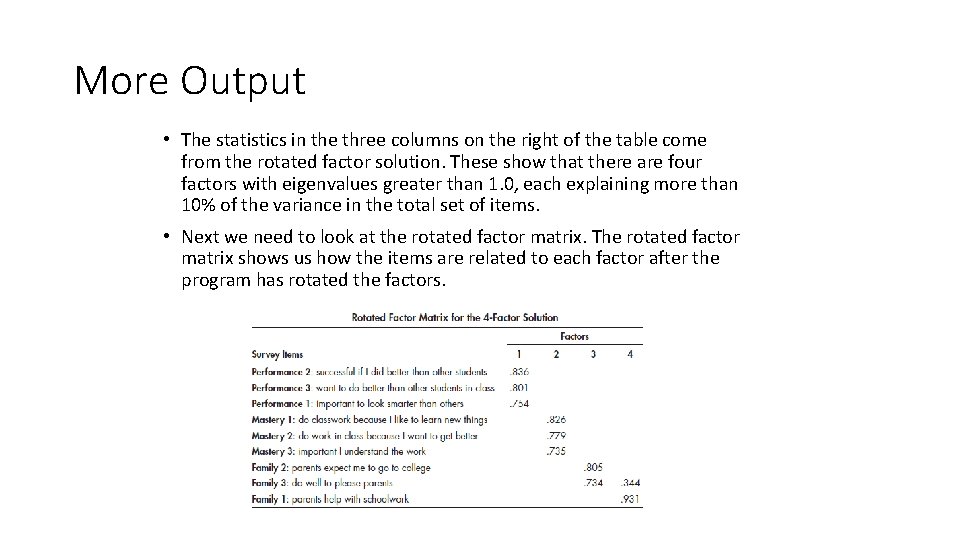

More Output • The statistics in the three columns on the right of the table come from the rotated factor solution. These show that there are four factors with eigenvalues greater than 1.0, each explaining more than 10% of the variance in the total set of items. • Next we need to look at the rotated factor matrix. The rotated factor matrix shows us how the items are related to each factor after the program has rotated the factors.

Rotation? • “Orthogonal” • maintains independence of factors • more commonly seen • usually at least one option • options: varimax, quartimax, equamax, parsimax, etc. • “Oblique” • allows dependence of factors • makes distinctions sharper (loadings closer to 0’s and 1’s) • can be harder to interpret once you lose independence of factors

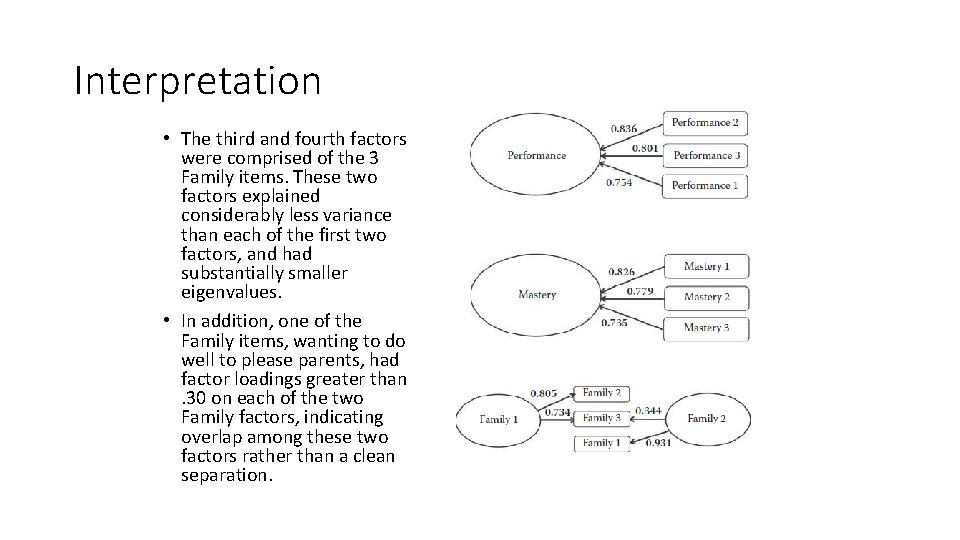

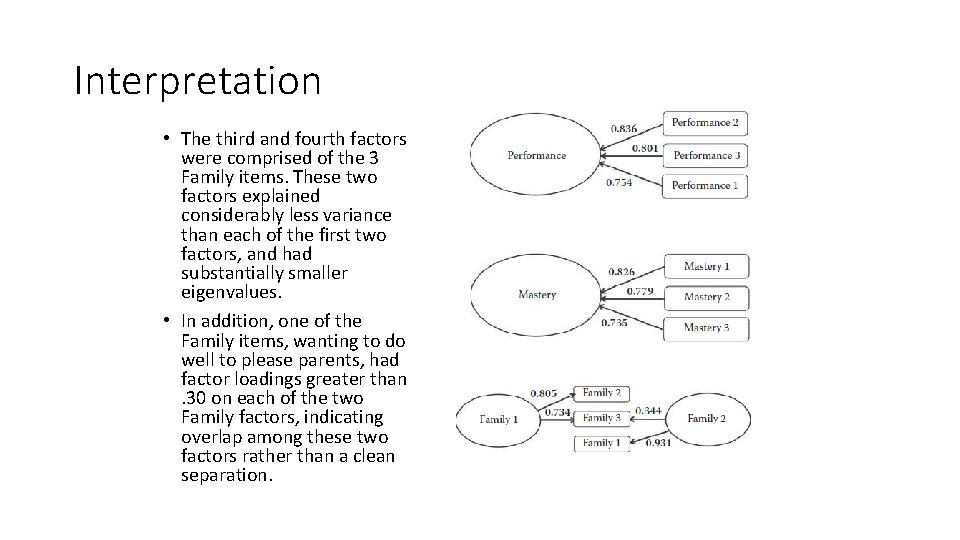

Interpretation • What does this mean? • Basically, it shows how the items relate to the proposed factors. • The first factor, which explained about 22% of the total variance and had a rotated eigenvalue of 1.98, was dominated by the three Performance items. • Each of these items was strongly correlated with the first factor (factor loadings greater than .70) and weakly related to the other three factors (factor loadings less than .30, and therefore invisible). • Similarly, the second factor in the table was dominated by the 3 Mastery items. This factor explained almost as much variance as the first factor (21%) and had a similar rotated eigenvalue (1.91).

Interpretation • The third and fourth factors were comprised of the 3 Family items. These two factors explained considerably less variance than each of the first two factors, and had substantially smaller eigenvalues. • In addition, one of the Family items, wanting to do well to please parents, had factor loadings greater than .30 on each of the two Family factors, indicating overlap among these two factors rather than a clean separation.

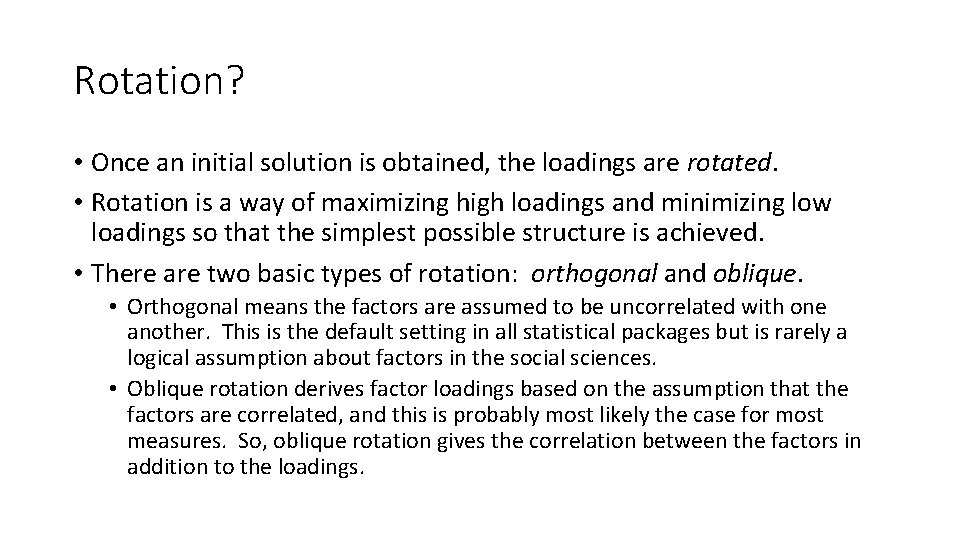

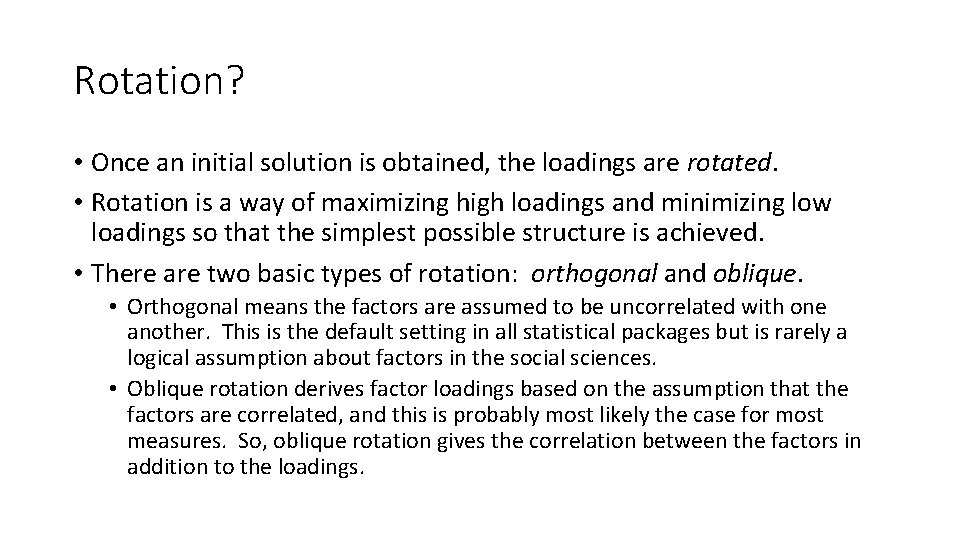

Rotation? • Once an initial solution is obtained, the loadings are rotated. • Rotation is a way of maximizing high loadings and minimizing low loadings so that the simplest possible structure is achieved. • There are two basic types of rotation: orthogonal and oblique. • Orthogonal means the factors are assumed to be uncorrelated with one another. This is the default setting in many statistical packages but is rarely a logical assumption about factors in the social sciences. • Oblique rotation derives factor loadings based on the assumption that the factors are correlated, and this is most likely the case for most measures. So, oblique rotation gives the correlation between the factors in addition to the loadings.
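The difference between the two rotation types can be sketched with base R's `factanal`, using its built-in "varimax" (orthogonal) and "promax" (oblique) rotations. The data are hypothetical: six simulated items whose two latent factors are deliberately correlated. The factor-correlation step uses the returned `rotmat` in the way `factanal`'s own print method is understood to; treat it as an illustration rather than a reference implementation.

```r
# Two correlated latent factors, three items each (all names hypothetical).
set.seed(42)
n <- 300
f1 <- rnorm(n)
f2 <- 0.4 * f1 + sqrt(1 - 0.4^2) * rnorm(n)    # built to correlate about 0.4 with f1
make_item <- function(f) 0.8 * f + rnorm(n, sd = 0.6)
items <- data.frame(a1 = make_item(f1), a2 = make_item(f1), a3 = make_item(f1),
                    b1 = make_item(f2), b2 = make_item(f2), b3 = make_item(f2))

# Orthogonal rotation: factors are kept uncorrelated
fit_orth <- factanal(items, factors = 2, rotation = "varimax")
print(fit_orth$loadings, cutoff = 0.3)

# Oblique rotation: factors are allowed to correlate
fit_obl <- factanal(items, factors = 2, rotation = "promax")
print(fit_obl$loadings, cutoff = 0.3)

# Implied correlation between the obliquely rotated factors
tmat <- solve(fit_obl$rotmat)
phi <- tmat %*% t(tmat)
round(phi, 2)
```

With correlated factors, the oblique solution typically shows smaller cross-loadings than the orthogonal one, at the cost of having a factor correlation to interpret.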

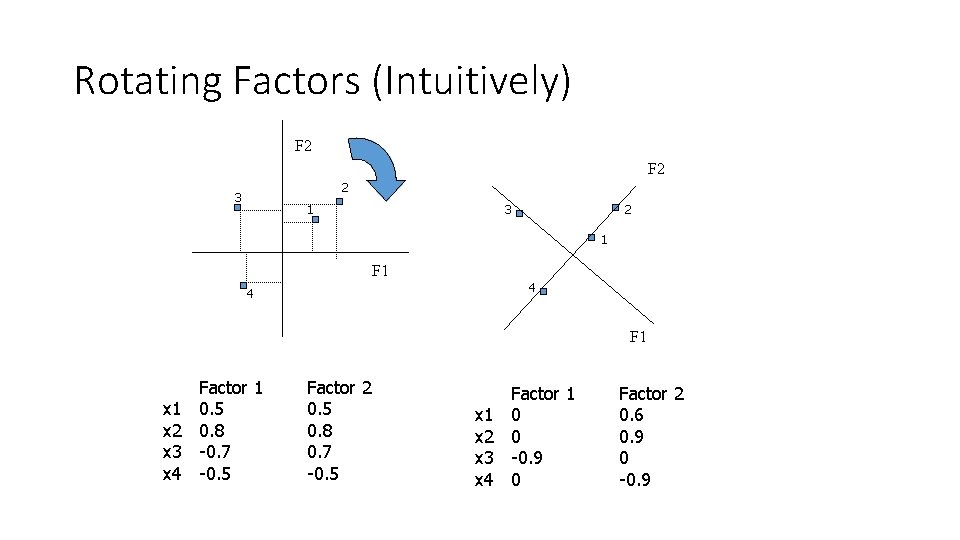

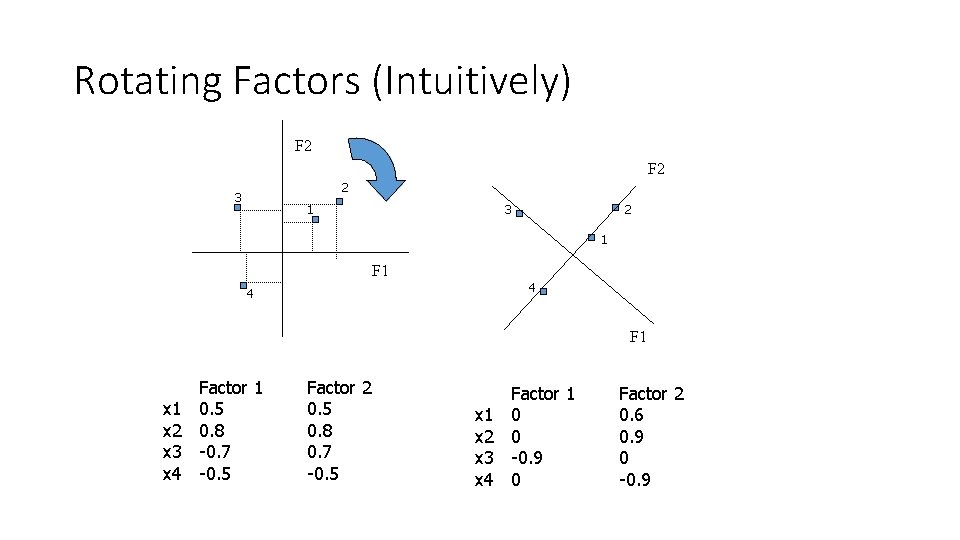

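Rotating Factors (Intuitively)

[Figure: variables 1 to 4 plotted against the axes F1 and F2, before and after rotation]

Loadings before rotation:

| | x1 | x2 | x3 | x4 |
|---|---|---|---|---|
| Factor 1 | 0.5 | 0.8 | -0.7 | -0.5 |
| Factor 2 | 0.5 | 0.8 | 0.7 | -0.5 |

Loadings after rotation:

| | x1 | x2 | x3 | x4 |
|---|---|---|---|---|
| Factor 1 | 0 | 0 | -0.9 | 0 |
| Factor 2 | 0.6 | 0.9 | 0 | -0.9 |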
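The geometry of this slide can be sketched as a plain matrix product: an orthogonal rotation multiplies the loading matrix by a rotation matrix. The 45-degree angle below is an assumption chosen because it lines the axes up with the variables; the slide's rotated values appear to be rounded for illustration, so the numbers printed here will not match them exactly.

```r
# Unrotated loadings from the slide (rows are variables, columns are factors)
L <- matrix(c(0.5, 0.8, -0.7, -0.5,
              0.5, 0.8,  0.7, -0.5),
            ncol = 2,
            dimnames = list(paste0("x", 1:4), c("Factor1", "Factor2")))

# Orthogonal rotation matrix for an assumed angle of 45 degrees
theta <- pi / 4
Tmat <- matrix(c(cos(theta), -sin(theta),
                 sin(theta),  cos(theta)), ncol = 2)

# Rotated loadings: each variable now loads on essentially one factor
L_rot <- L %*% Tmat
colnames(L_rot) <- colnames(L)
round(L_rot, 2)

# Rotation only redistributes variance between factors:
# each variable's sum of squared loadings is unchanged
rowSums(L^2)
rowSums(L_rot^2)
```

The pattern of zeros matches the slide: x1, x2, and x4 end up on Factor 2 and x3 on Factor 1, while the sum of squared loadings for each variable stays the same.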

Sample size & variable ratio • Sample size: Although sample size is important in factor analysis, opinions vary, and several guiding rules of thumb are cited in the literature. • General guides include Tabachnick’s rule of thumb, which suggests that at least 300 cases are needed for factor analysis. • Comrey and Lee, in their guide, rate sample sizes as follows: 100 as poor, 200 as fair, 300 as good, 500 as very good, and 1000 or more as excellent. • Sample to variable ratio: Another set of recommendations gives guidance on how many participants are required for each variable. This is often termed the sample to variable ratio and denoted N:p, where N refers to the number of participants and p refers to the number of variables. • Recommendations for sample to variable ratios are just as disparate as those for adequate sample sizes.
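These rules of thumb are simple enough to encode. The helper names `rate_sample_size` and `np_ratio` below are hypothetical, and the label for samples under 100 is an assumption, since the scale quoted above starts at 100.

```r
# Rate a sample size on the Comrey and Lee scale quoted above.
rate_sample_size <- function(n) {
  breaks <- c(100, 200, 300, 500, 1000)
  labels <- c("below 100 (not rated above)", "poor", "fair",
              "good", "very good", "excellent")
  labels[findInterval(n, breaks) + 1]
}

# Sample to variable ratio, N:p
np_ratio <- function(n, p) n / p

# Urdan's example: 857 students, 9 items shown
rate_sample_size(857)
np_ratio(857, 9)

# The R example later in these slides: 300 responses, 6 items
rate_sample_size(300)
np_ratio(300, 6)
```

Both numbers are worth reporting together, because a sample can look adequate on one rule and weak on the other.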

A quick example in R • Dataset taken from the R Tutorial created by John Quick: • http://www.r-bloggers.com/r-tutorial-series-exploratory-factor-analysis/ • This dataset contains a hypothetical sample of 300 responses on 6 items from a survey of college students' favorite subject matter. • The items range in value from 1 to 5, which represent a scale from Strongly Dislike to Strongly Like. • Our 6 items asked students to rate their liking of different college subject matter areas, including biology (BIO), geology (GEO), chemistry (CHEM), algebra (ALG), calculus (CALC), and statistics (STAT). • Q - Are there latent variables underlying the students' responses?

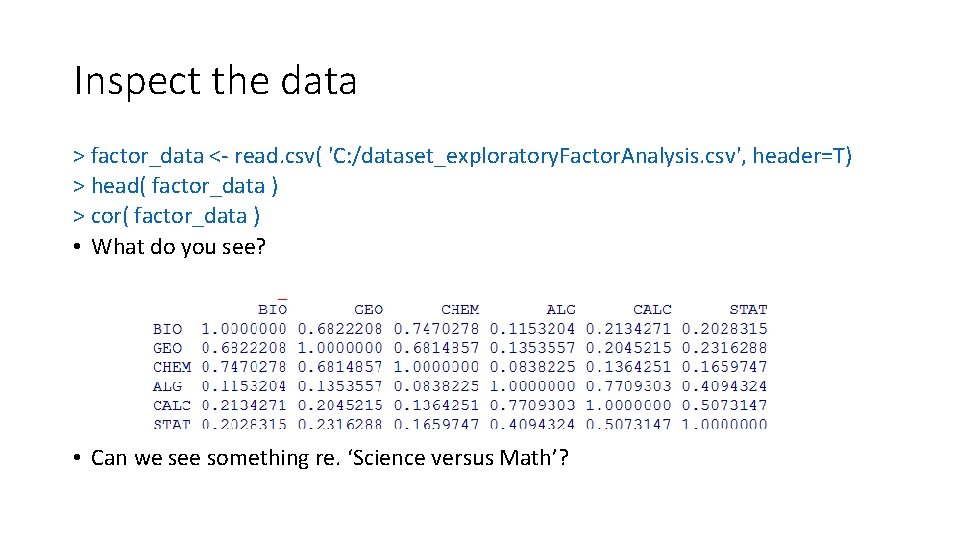

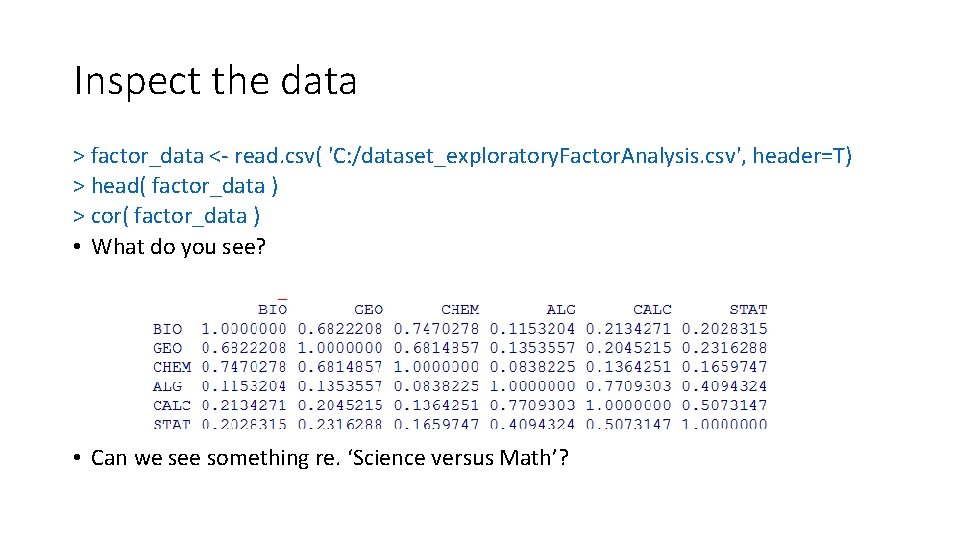

Inspect the data
> factor_data <- read.csv('C:/dataset_exploratoryFactorAnalysis.csv', header=TRUE)
> head(factor_data)
> cor(factor_data)
• What do you see? • Can we see something re. ‘Science versus Math’?

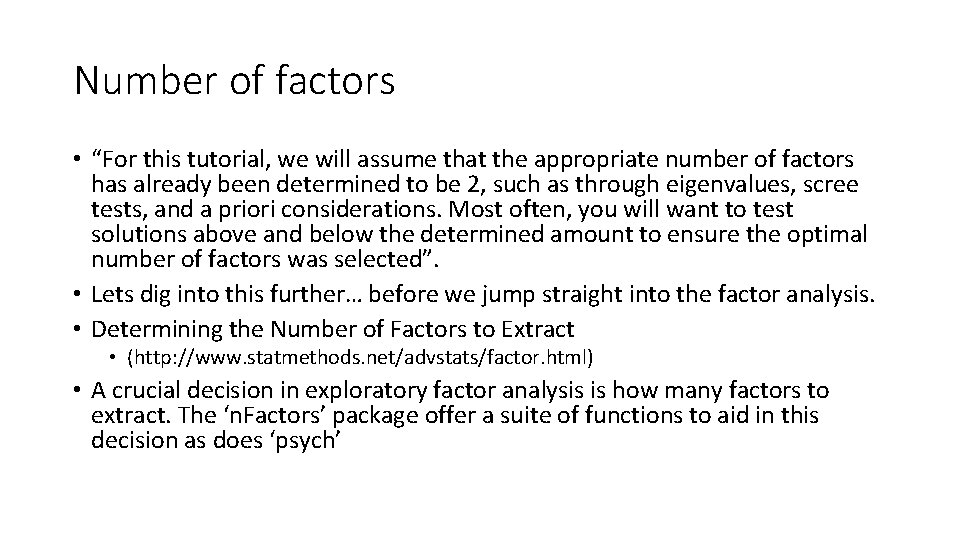

Number of factors • “For this tutorial, we will assume that the appropriate number of factors has already been determined to be 2, such as through eigenvalues, scree tests, and a priori considerations. Most often, you will want to test solutions above and below the determined amount to ensure the optimal number of factors was selected.” • Let's dig into this further… before we jump straight into the factor analysis. • Determining the Number of Factors to Extract • (http://www.statmethods.net/advstats/factor.html) • A crucial decision in exploratory factor analysis is how many factors to extract. The ‘nFactors’ package offers a suite of functions to aid in this decision, as does ‘psych’.

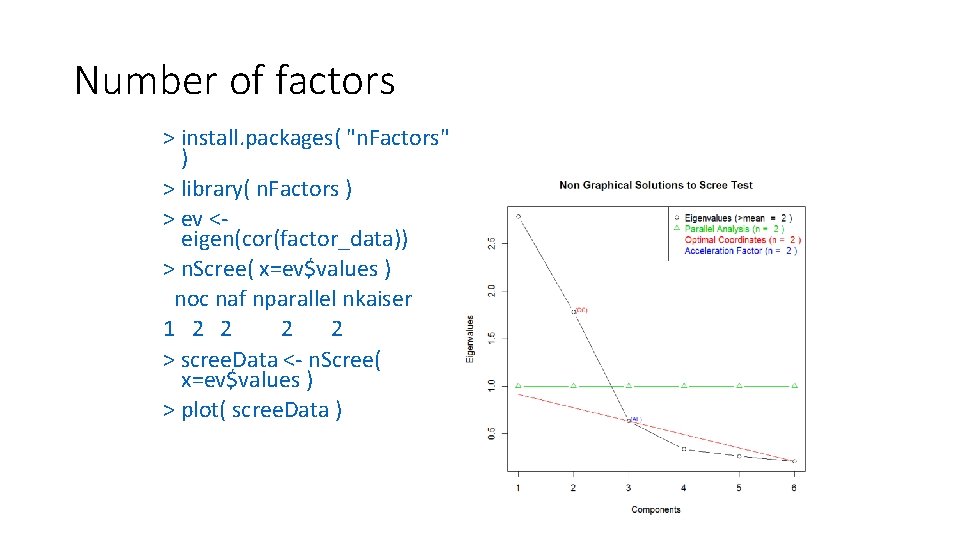

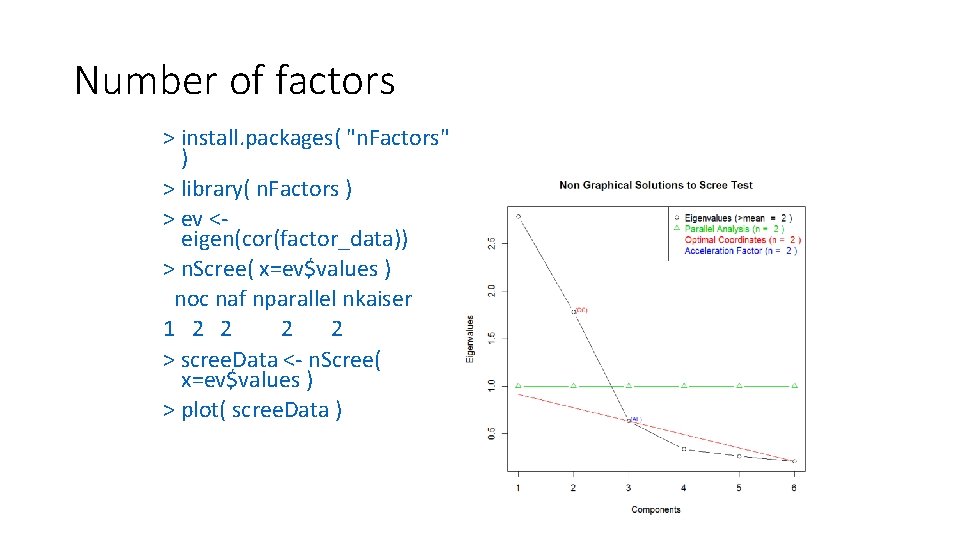

Number of factors
> install.packages("nFactors")
> library(nFactors)
> ev <- eigen(cor(factor_data))
> nScree(x=ev$values)
noc naf nparallel nkaiser 1 2 2
> screeData <- nScree(x=ev$values)
> plot(screeData)

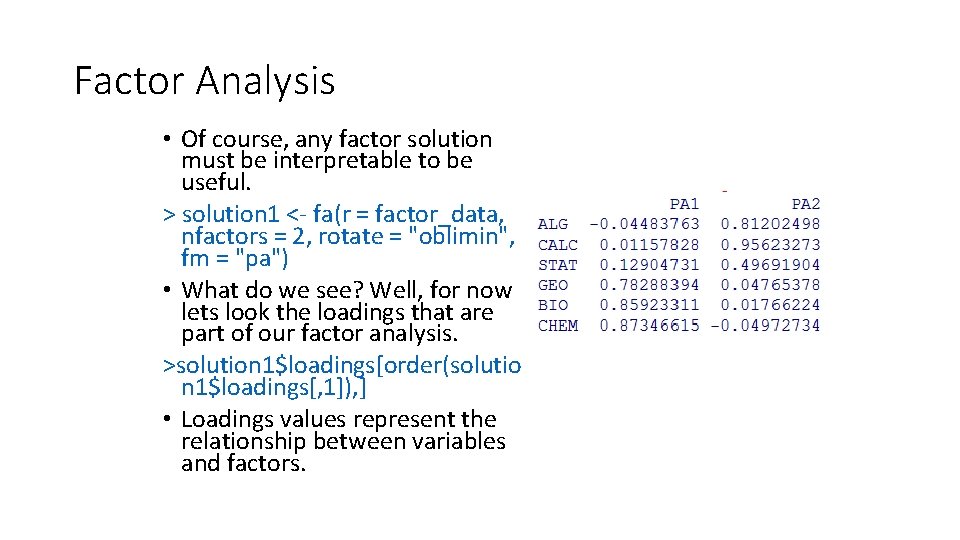

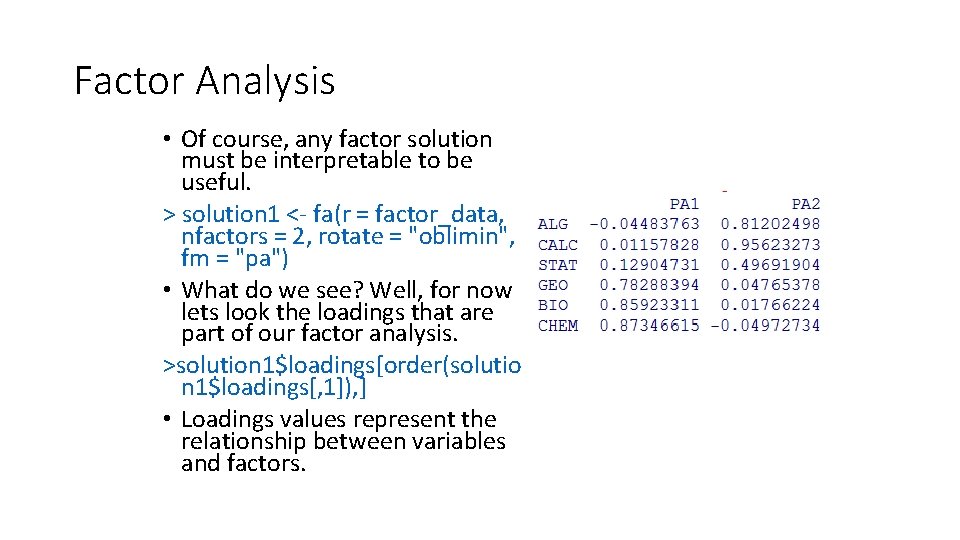

Factor Analysis • Of course, any factor solution must be interpretable to be useful.
> library(psych)  # fa() comes from the psych package
> solution1 <- fa(r = factor_data, nfactors = 2, rotate = "oblimin", fm = "pa")
• What do we see? Well, for now let's look at the loadings that are part of our factor analysis.
> solution1$loadings[order(solution1$loadings[, 1]), ]
• Loading values represent the relationship between variables and factors.

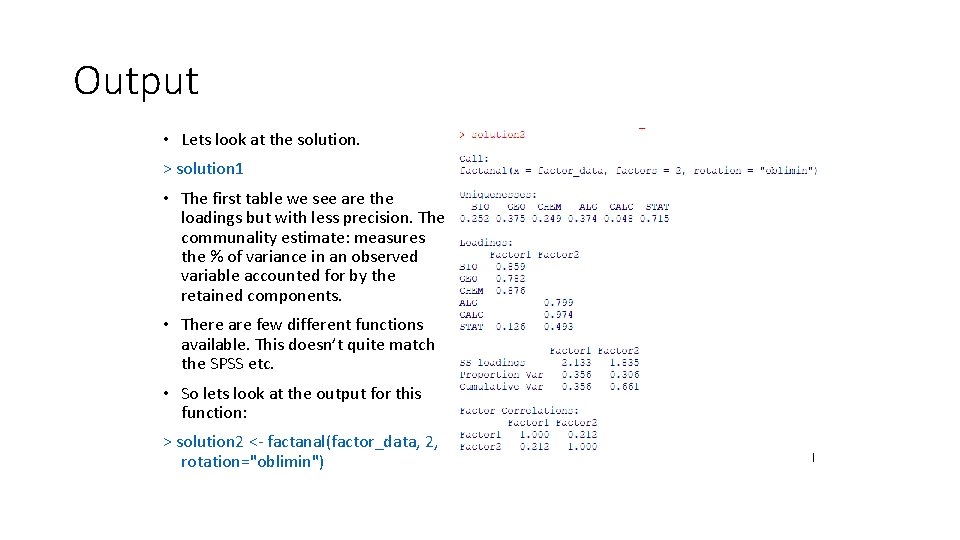

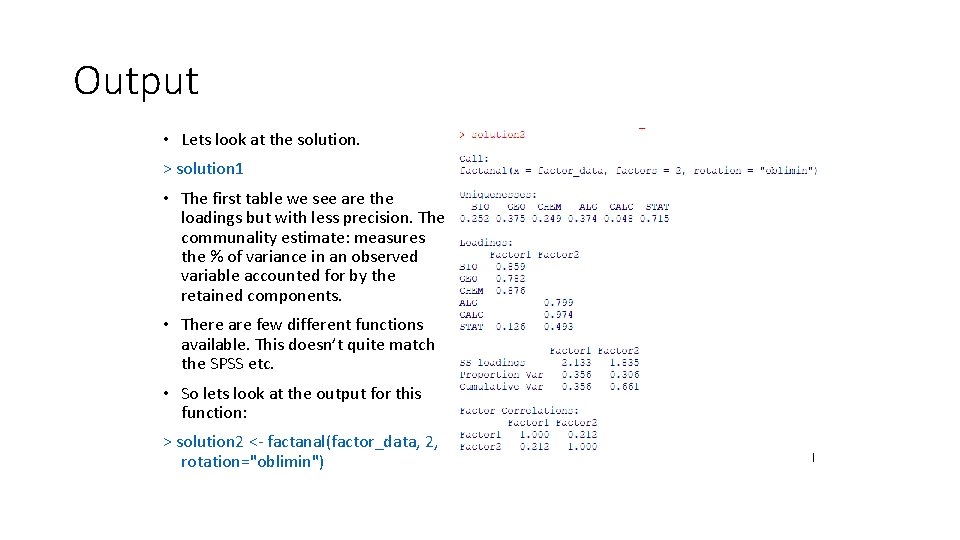

Output • Let's look at the solution.
> solution1
• The first table we see shows the loadings, but with less precision. • The communality estimate measures the % of variance in an observed variable accounted for by the retained components. • There are a few different functions available, and their output does not quite match that of SPSS and similar packages. • So let's look at the output for this function:
> library(GPArotation)  # assumed: provides the "oblimin" rotation that factanal looks up by name
> solution2 <- factanal(factor_data, 2, rotation="oblimin")

Interpretation • From the web tutorial: • By looking at our factor loadings, we can begin to assess our factor solution. We can see that BIO, GEO, and CHEM all have high factor loadings around 0.8 on the first factor (PA1). • Therefore, we might call this factor Science and consider it representative of a student's interest in science subject matter. • Similarly, ALG, CALC, and STAT load highly on the second factor (PA2), which we might call Math. Note that STAT has a much lower loading on PA2 than ALG or CALC and that it has a slight loading on factor PA1. • This suggests that statistics is less related to the concept of Math than algebra and calculus. Just below the loadings table, we can see that each factor accounted for around 30% of the variance in responses, leading to a factor solution that accounted for 66% of the total variance in students' subject matter preference. • Lastly, notice that our factors are correlated at 0.21 and recall that our choice of oblique rotation allowed for the recognition of this relationship.
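The quantities read off the output above (communalities and the proportion of variance per factor) can be recomputed from a loading matrix. The sketch below does not use the tutorial dataset: it simulates hypothetical responses with the same six column names, and it uses base R's `factanal` with an orthogonal (varimax) rotation so that sums of squared loadings have their simplest interpretation.

```r
# Hypothetical stand-in data: three "science" and three "math" items.
set.seed(7)
n <- 300
science <- rnorm(n)
math <- rnorm(n)
item <- function(f) 0.8 * f + rnorm(n, sd = 0.6)
subjects <- data.frame(BIO = item(science), GEO = item(science), CHEM = item(science),
                       ALG = item(math), CALC = item(math), STAT = item(math))

fit <- factanal(subjects, factors = 2, rotation = "varimax")
L <- unclass(fit$loadings)         # plain 6 x 2 matrix of loadings

# Communality: variance in each item accounted for by the two factors
communality <- rowSums(L^2)
round(communality, 2)

# Sum of squared loadings per factor, and the share of total variance
ss_loadings <- colSums(L^2)
prop_var <- ss_loadings / nrow(L)
round(prop_var, 2)
sum(prop_var)                      # total proportion of variance explained
```

With an oblique rotation such as oblimin, the factors overlap, so per-factor variance shares are reported differently by each package; that is one reason outputs from different functions may not agree exactly.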

PCA • Related to factor analysis is principal component analysis. In fact, you may use PCA to determine how many factors you need before you build the factor analysis. • Principal components analysis is used to find optimal ways of combining variables into a small number of subsets, while factor analysis may be used to identify the structure underlying such variables and to estimate scores to measure latent factors themselves.
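The link between PCA and the eigenvalue rules used earlier can be sketched with base R's `prcomp`. The data are hypothetical simulated items; the point is only that the component variances from a standardized PCA are the eigenvalues of the correlation matrix.

```r
# Six simulated items: three per hypothetical latent factor.
set.seed(3)
n <- 300
f1 <- rnorm(n)
f2 <- rnorm(n)
item <- function(f) 0.8 * f + rnorm(n, sd = 0.6)
items <- data.frame(a1 = item(f1), a2 = item(f1), a3 = item(f1),
                    b1 = item(f2), b2 = item(f2), b3 = item(f2))

# PCA on standardized variables
pca <- prcomp(items, scale. = TRUE)
pc_var <- pca$sdev^2               # variance of each principal component

# Same numbers as the eigenvalues of the correlation matrix
ev <- eigen(cor(items))$values
all.equal(pc_var, ev)

# Proportion and cumulative proportion of variance per component
summary(pca)$importance
```

This is why a PCA is often run first: its component variances supply the eigenvalues used for the "greater than 1" rule and the scree plot before any factor model is fitted.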

Summary • We looked at a high-level conceptual overview of factor analysis. • Remember: • Exploratory factor analysis (EFA) is generally used to discover the factor structure of a measure and to examine its internal reliability. EFA is often recommended when researchers have no hypotheses about the nature of the underlying factor structure of their measure. Exploratory factor analysis has three basic decision points: • (1) deciding the number of factors, • (2) choosing an extraction method, • (3) choosing a rotation method.