Face Recognition using Tensor Analysis

Presented by Prahlad R Enuganti

Face Recognition
- Why is it necessary?
  - Human-computer interaction
  - Authentication
  - Surveillance
- Problems include changes in:
  - Illumination
  - Expression
  - Pose
  - Aging

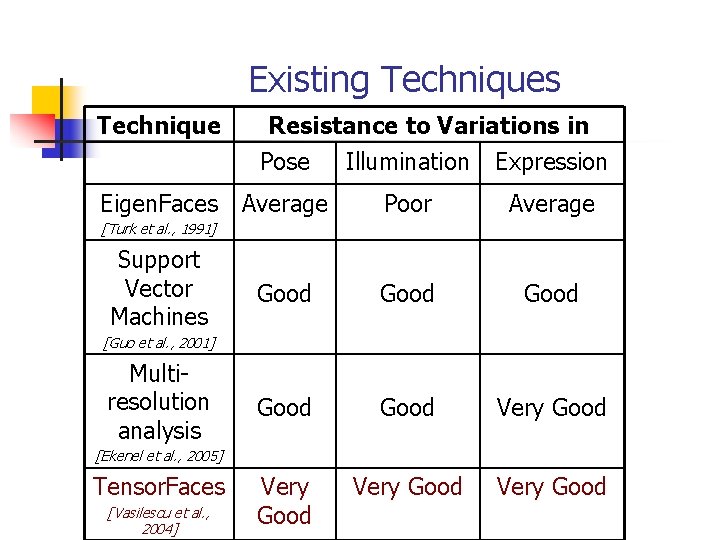

Existing Techniques
- EigenFaces [Turk et al., 1991]
- Support Vector Machines [Guo et al., 2001]
- Multiresolution analysis [Ekenel et al., 2005]
- TensorFaces [Vasilescu et al., 2004]

The techniques are compared on their resistance to variations in pose, illumination and expression (ratings: Average, Poor, Average, Good, Good, Very Good).

![Tensor Algebra](http://slidetodoc.com/presentation_image_h2/b88e374cb71512aa37bbea3f94606435/image-4.jpg)

Tensor Algebra [Vasilescu et al., 2002]
- A tensor is a higher-order generalization of vectors and matrices.
- An Nth-order tensor is written $A \in \mathbb{R}^{I_1 \times I_2 \times \cdots \times I_N}$, and each of its elements is written $a_{i_1 i_2 \ldots i_N}$.
- The mode-n vectors of a tensor are obtained by varying index $i_n$ while keeping the other indices fixed. Flattening $A$ along mode n arranges its mode-n vectors as the columns of a matrix, denoted $A_{(n)}$.
- Figure: example of flattening a 3rd-order tensor.
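The flattening described above can be sketched in NumPy as follows. This is an illustrative sketch, not code from the presentation: the helper names `unfold` and `fold` are hypothetical, modes are 0-based in the code, and the columns of the flattened matrix are ordered by NumPy's row-major order over the remaining indices. Some texts order the columns differently; any ordering works as long as it is used consistently.

```python
import numpy as np


def unfold(tensor: np.ndarray, mode: int) -> np.ndarray:
    """Mode-n flattening: every column is one mode-n vector of `tensor`.

    Column order is NumPy's row-major order over the remaining axes
    (an assumed convention; other texts use different orderings).
    """
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)


def fold(matrix: np.ndarray, mode: int, shape: tuple) -> np.ndarray:
    """Inverse of `unfold` for a tensor with the given full shape."""
    rest = [s for i, s in enumerate(shape) if i != mode]
    return np.moveaxis(matrix.reshape([shape[mode]] + rest), 0, mode)


# A small 3rd-order tensor with I1 = 2, I2 = 3, I3 = 4.
A = np.arange(24).reshape(2, 3, 4)
A1 = unfold(A, 0)  # 2 x 12: columns are mode-1 vectors
A2 = unfold(A, 1)  # 3 x 8:  columns are mode-2 vectors
A3 = unfold(A, 2)  # 4 x 6:  columns are mode-3 vectors
print(A1.shape, A2.shape, A3.shape)  # → (2, 12) (3, 8) (4, 6)
```

For example, the first column of `A2` is the mode-2 vector `A[0, :, 0]`, obtained by varying the second index with the first and third fixed.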

Tensor Decomposition
- In the 2-D case, a matrix $D$ can be decomposed using the SVD as $D = U_1 \Sigma U_2^T$, where $\Sigma$ is a diagonal matrix of singular values, and $U_1$ and $U_2$ are orthogonal matrices spanning the column space and row space of $D$ respectively.
- In terms of mode-n products, where $\times_n$ multiplies every mode-n vector of a tensor by the given matrix, the SVD can be rewritten as $D = \Sigma \times_1 U_1 \times_2 U_2$.
- For a tensor of order N, the N-mode SVD is $D = Z \times_1 U_1 \times_2 U_2 \cdots \times_N U_N$, where $Z$ is the core tensor. It is analogous to the diagonal singular value matrix $\Sigma$ in the 2-D SVD, although in general it is not diagonal.
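As a small check of the mode-n product form of the SVD, the sketch below defines the mode-n product with `np.tensordot` and confirms that $\Sigma \times_1 U_1 \times_2 U_2$ reproduces $U_1 \Sigma U_2^T$ for a random matrix. The helper names `unfold` and `mode_n_product` are hypothetical, and modes are 0-based in the code.

```python
import numpy as np


def unfold(tensor, mode):
    """Mode-n flattening: columns are the mode-n vectors (0-based mode)."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)


def mode_n_product(tensor, matrix, mode):
    """Return tensor x_mode matrix: multiply every mode-n vector by `matrix`."""
    # tensordot sums over axis 1 of `matrix` and axis `mode` of `tensor`;
    # the new axis comes out first, so move it back to position `mode`.
    result = np.tensordot(matrix, tensor, axes=(1, mode))
    return np.moveaxis(result, 0, mode)


rng = np.random.default_rng(0)
D = rng.standard_normal((5, 3))
U1, s, U2_T = np.linalg.svd(D, full_matrices=False)  # D = U1 @ diag(s) @ U2_T
Sigma = np.diag(s)

# D = Sigma x_1 U1 x_2 U2  (modes 0 and 1 in code)
D_modes = mode_n_product(mode_n_product(Sigma, U1, 0), U2_T.T, 1)
print(np.allclose(D, D_modes))  # → True
```

Flattening $T \times_n M$ along mode n gives the ordinary matrix product $M\,T_{(n)}$, which is another common way to define the mode-n product.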

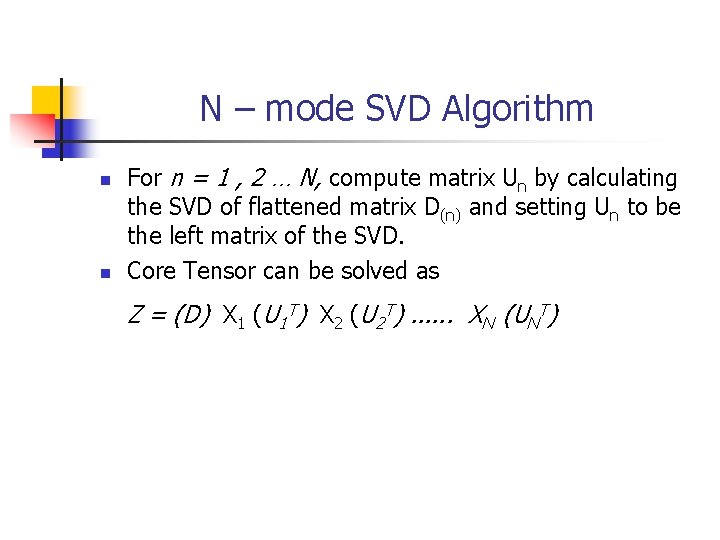

N-mode SVD Algorithm
1. For n = 1, 2, …, N, compute the matrix $U_n$ by calculating the SVD of the flattened matrix $D_{(n)}$ and setting $U_n$ to the left matrix of that SVD.
2. Solve for the core tensor as $Z = D \times_1 U_1^T \times_2 U_2^T \cdots \times_N U_N^T$.
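The two steps of the algorithm map directly onto a short NumPy sketch. The function names are hypothetical, modes are 0-based, and using the reduced SVD (`full_matrices=False`) is an implementation choice. The example reconstructs $D = Z \times_1 U_1 \cdots \times_N U_N$ to check the decomposition.

```python
import numpy as np


def unfold(tensor, mode):
    """Mode-n flattening: columns are the mode-n vectors (0-based mode)."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)


def mode_n_product(tensor, matrix, mode):
    """Return tensor x_mode matrix: multiply every mode-n vector by `matrix`."""
    return np.moveaxis(np.tensordot(matrix, tensor, axes=(1, mode)), 0, mode)


def n_mode_svd(D):
    """Return the core tensor Z and the mode matrices [U_1, ..., U_N]."""
    # Step 1: U_n = left singular vectors of the flattened matrix D_(n).
    Us = [np.linalg.svd(unfold(D, n), full_matrices=False)[0]
          for n in range(D.ndim)]
    # Step 2: Z = D x_1 U_1^T x_2 U_2^T ... x_N U_N^T.
    Z = D
    for n, U in enumerate(Us):
        Z = mode_n_product(Z, U.T, n)
    return Z, Us


def reconstruct(Z, Us):
    """Compute D = Z x_1 U_1 x_2 U_2 ... x_N U_N."""
    D = Z
    for n, U in enumerate(Us):
        D = mode_n_product(D, U, n)
    return D


rng = np.random.default_rng(0)
D = rng.standard_normal((4, 3, 5))
Z, Us = n_mode_svd(D)
print(Z.shape, [U.shape for U in Us])
print(np.allclose(reconstruct(Z, Us), D))  # → True
```

Although $Z$ is generally not diagonal, the rows of each of its flattenings $Z_{(n)}$ are mutually orthogonal, which is the sense in which it plays the role of $\Sigma$.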

TensorFaces
- Our data consists of 5 variables: people, pixels, pose (views), illumination and expression. We therefore perform the N-mode decomposition of this 5th-order tensor and obtain $D = Z \times_1 U_{\text{people}} \times_2 U_{\text{views}} \times_3 U_{\text{illum}} \times_4 U_{\text{expr}} \times_5 U_{\text{pixels}}$.
- The main advantage of tensor analysis is that it maps all images of a person, regardless of the other variables, to the same coefficient vector, giving zero intra-class scatter.
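To illustrate the claim about coefficient vectors, the sketch below builds a toy 5th-order tensor and decomposes it. Random numbers stand in for face images, and the sizes (3 people, 2 views, 2 illuminations, 2 expressions, 16 pixels) are made up. It then forms the basis tensor $B = Z \times_2 U_{\text{views}} \times_3 U_{\text{illum}} \times_4 U_{\text{expr}} \times_5 U_{\text{pixels}}$, so that $D = B \times_1 U_{\text{people}}$. Assuming the view, illumination and expression of an image are known, solving for its person coefficients by least squares returns the same vector (a row of $U_{\text{people}}$) for every image of that person. The helper `person_coefficients` is hypothetical, and this is not presented as the recognition procedure of the cited work.

```python
import itertools

import numpy as np


def unfold(tensor, mode):
    """Mode-n flattening: columns are the mode-n vectors (0-based mode)."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)


def mode_n_product(tensor, matrix, mode):
    """Return tensor x_mode matrix: multiply every mode-n vector by `matrix`."""
    return np.moveaxis(np.tensordot(matrix, tensor, axes=(1, mode)), 0, mode)


# Hypothetical toy data with modes (people, views, illum, expr, pixels).
# Random values stand in for vectorized face images.
n_people, n_views, n_illum, n_expr, n_pixels = 3, 2, 2, 2, 16
rng = np.random.default_rng(1)
D = rng.standard_normal((n_people, n_views, n_illum, n_expr, n_pixels))

# N-mode SVD: U_n from each flattening, then the core tensor Z.
Us = [np.linalg.svd(unfold(D, n), full_matrices=False)[0] for n in range(D.ndim)]
U_people = Us[0]
Z = D
for n, U in enumerate(Us):
    Z = mode_n_product(Z, U.T, n)

# Basis tensor B = Z x_2 U_views x_3 U_illum x_4 U_expr x_5 U_pixels,
# so that D = B x_1 U_people.
B = Z
for n in range(1, D.ndim):
    B = mode_n_product(B, Us[n], n)


def person_coefficients(image, view, illum, expr):
    """Least-squares solve image = B[:, view, illum, expr, :]^T @ c for c."""
    basis = B[:, view, illum, expr, :].T  # shape (n_pixels, n_people)
    c, *_ = np.linalg.lstsq(basis, image, rcond=None)
    return c


# Recover the coefficients of every image of person 0.
conditions = itertools.product(range(n_views), range(n_illum), range(n_expr))
coeffs = [person_coefficients(D[0, v, i, e], v, i, e) for v, i, e in conditions]
print(all(np.allclose(c, U_people[0]) for c in coeffs))  # → True
```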

![ISOMAP (Isometric Feature Mapping)](http://slidetodoc.com/presentation_image_h2/b88e374cb71512aa37bbea3f94606435/image-8.jpg)

ISOMAP (Isometric Feature Mapping) [Tenenbaum et al.]
- Finds a meaningful low-dimensional manifold underlying high-dimensional data by preserving the geodesic distances between points.
- Unlike PCA or MDS, ISOMAP can discover nonlinear degrees of freedom.
- For an important class of data manifolds, it is guaranteed to converge asymptotically to the true structure as the number of data points grows.

ISOMAP: How does it work?
1. Constructs a weighted neighborhood graph by connecting each point to its neighbors, chosen by either the ε-neighborhood rule or the k-nearest-neighbor rule, with edges weighted by the distance between the points.
2. Estimates the geodesic distances between all pairs of points on the lower-dimensional manifold by computing shortest-path distances in the graph.
3. Applies classical MDS to these distances to construct an embedding in the lower-dimensional space that best preserves the manifold's estimated geometry.
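The three steps can be sketched in plain NumPy as below. This is a minimal illustration under stated assumptions, not a reference implementation: it uses only the k-nearest-neighbor rule, keeps an edge if either endpoint selects it, computes shortest paths with the Floyd-Warshall algorithm (Dijkstra's algorithm is a common alternative for large graphs), and the function name `isomap` and its parameters are hypothetical. The example embeds points on a three-quarter circle into one dimension.

```python
import numpy as np


def isomap(X, n_neighbors=5, n_components=2):
    """Embed the rows of X using k-NN graph geodesics followed by classical MDS."""
    n = X.shape[0]

    # Step 1: weighted k-nearest-neighbor graph (np.inf marks "no edge").
    dist = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    nearest = np.argsort(dist, axis=1)[:, 1:n_neighbors + 1]  # skip the point itself
    rows = np.repeat(np.arange(n), n_neighbors)
    cols = nearest.ravel()
    graph = np.full((n, n), np.inf)
    np.fill_diagonal(graph, 0.0)
    graph[rows, cols] = dist[rows, cols]
    graph = np.minimum(graph, graph.T)  # keep an edge if either endpoint chose it

    # Step 2: geodesic estimates = all-pairs shortest paths (Floyd-Warshall).
    geo = graph.copy()
    for k in range(n):
        geo = np.minimum(geo, geo[:, k:k + 1] + geo[k:k + 1, :])
    if np.isinf(geo).any():
        raise ValueError("neighborhood graph is disconnected; increase n_neighbors")

    # Step 3: classical MDS on the squared geodesic distances.
    centering = np.eye(n) - np.ones((n, n)) / n
    gram = -0.5 * centering @ (geo ** 2) @ centering
    eigvals, eigvecs = np.linalg.eigh(gram)
    top = np.argsort(eigvals)[::-1][:n_components]
    return eigvecs[:, top] * np.sqrt(np.maximum(eigvals[top], 0.0))


# Usage: 100 points on a three-quarter circle, embedded into 1-D.
t = np.linspace(0.0, 1.5 * np.pi, 100)
X = np.column_stack([np.cos(t), np.sin(t)])
embedding = isomap(X, n_neighbors=5, n_components=1)
print(embedding.shape)  # → (100, 1)
print(abs(np.corrcoef(embedding[:, 0], t)[0, 1]))
```

On this curve the two endpoints are close in the plane but far apart along the arc. Because the embedding is built from graph distances rather than straight-line distances, the 1-D coordinate follows arc length, which a linear method such as PCA cannot recover.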
