Fabric Management: Progress and Plans (PEB, Tim Smith)

Fabric Management: Progress and Plans
PEB, Tim Smith, IT/FIO
2003/09/23 Fabric Management: Tim.Smith@cern.ch

Contents
§ Manageable Nodes
§ Framework for Management
§ Deployed at CERN
§ Plans

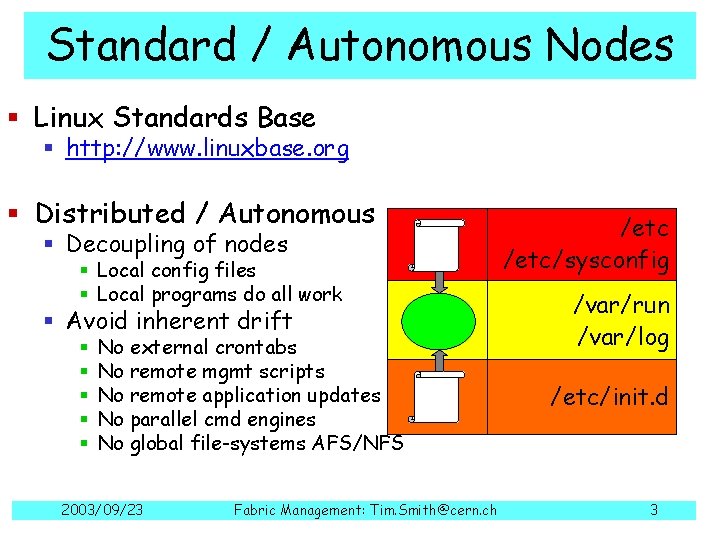

Standard / Autonomous Nodes
§ Linux Standards Base
  § http://www.linuxbase.org
§ Distributed / Autonomous
  § Decoupling of nodes
  § Avoid inherent drift
§ Local config files
§ Local programs do all work
  § No external crontabs
  § No remote mgmt scripts
  § No remote application updates
  § No parallel cmd engines
  § No global file-systems (AFS/NFS)
(Diagram: node with /etc/sysconfig, /etc/init.d, /var/run, /var/log)

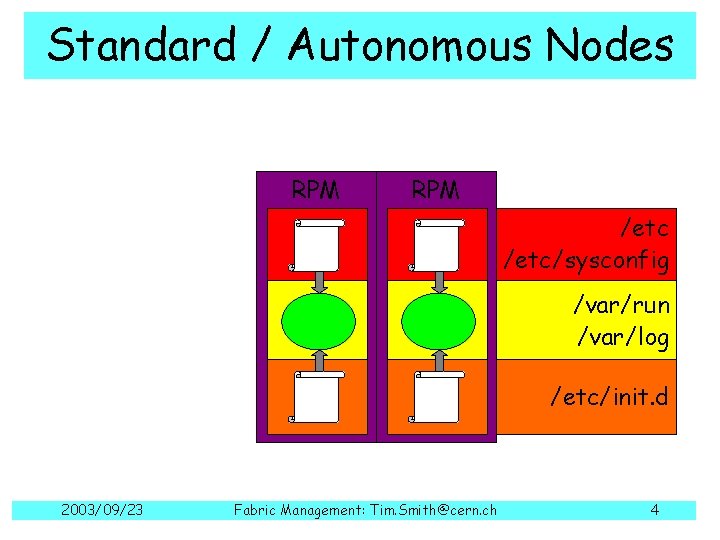

Standard / Autonomous Nodes
(Diagram: node with RPM, /etc/sysconfig, /etc/init.d, /var/run, /var/log)

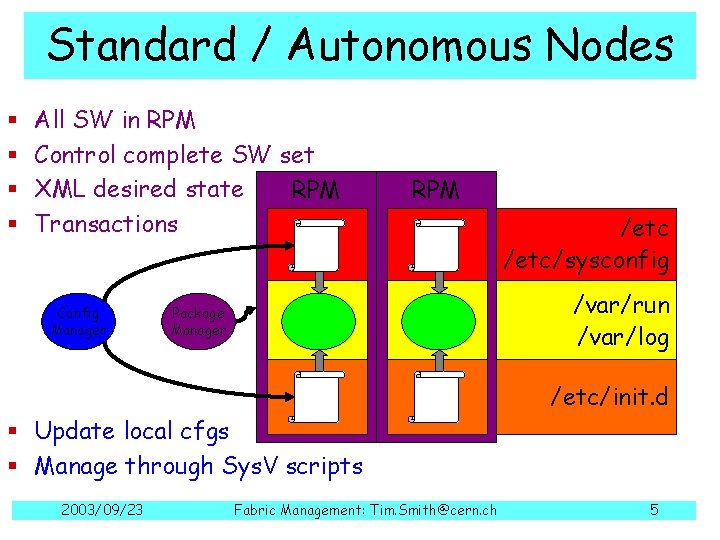

Standard / Autonomous Nodes
§ All SW in RPM
  § Control complete SW set
  § XML desired state
  § RPM transactions
§ Update local cfgs
§ Manage through SysV scripts
(Diagram: Package Manager, Config Manager, RPM, /etc/sysconfig, /etc/init.d, /var/run, /var/log)
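
The "update local cfgs, manage through SysV scripts" pattern can be sketched as an idempotent file update: the local config file is rewritten only when its content differs from the desired state, and only then would the service be told to restart. This is a minimal sketch under assumptions: `update_config` is a hypothetical helper, not part of any CERN tool, and the SysV call itself (for example `/etc/init.d/sshd restart`) is left to the caller so the example runs anywhere.

```python
import os
import tempfile
from pathlib import Path


def update_config(path: Path, desired: str) -> bool:
    """Make `path` contain exactly `desired` (hypothetical helper).

    Returns True if the file was (re)written, meaning the caller should
    notify the service through its SysV script; False if it was already
    correct. Ownership and permission handling are omitted in this sketch.
    """
    try:
        current = path.read_text()
    except FileNotFoundError:
        current = None  # missing file counts as out of date

    if current == desired:
        return False  # already in the desired state: nothing to do

    # Write to a temporary file in the same directory, then rename it over
    # the target so readers never see a half-written config file.
    fd, tmp_name = tempfile.mkstemp(dir=str(path.parent))
    with os.fdopen(fd, "w") as tmp:
        tmp.write(desired)
    os.replace(tmp_name, path)
    return True


if __name__ == "__main__":
    target = Path(tempfile.mkdtemp()) / "sshd_config"
    for content in ("Port 22\n", "Port 22\n", "Port 2222\n"):
        changed = update_config(target, content)
        # A real component would run the service's SysV script here.
        print("restart needed" if changed else "unchanged")
```

Writing only on change keeps repeated runs harmless: the update can be triggered on every configuration notification without restarting services needlessly.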

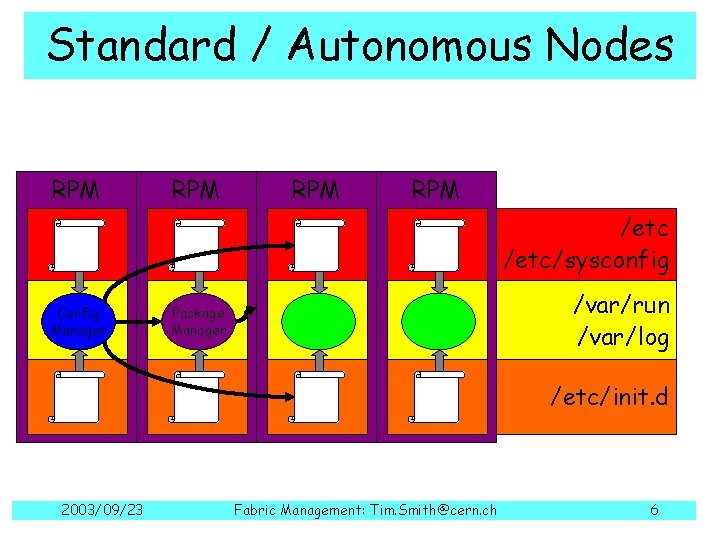

Standard / Autonomous Nodes
(Diagram: Package Manager and Config Manager on the node, with RPM, /etc/sysconfig, /etc/init.d, /var/run, /var/log)

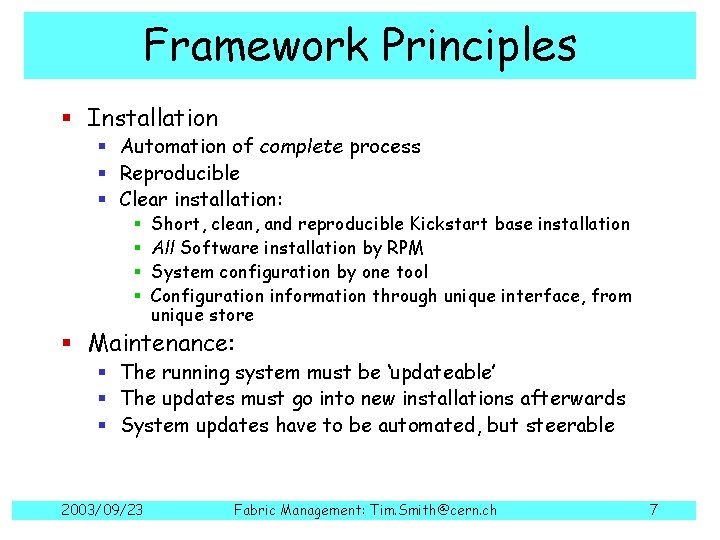

Framework Principles
§ Installation
  § Automation of complete process
  § Reproducible
  § Clear installation:
    § Short, clean, and reproducible Kickstart base installation
    § All software installation by RPM
    § System configuration by one tool
    § Configuration information through unique interface, from unique store
§ Maintenance:
  § The running system must be ‘updateable’
  § The updates must go into new installations afterwards
  § System updates have to be automated, but steerable

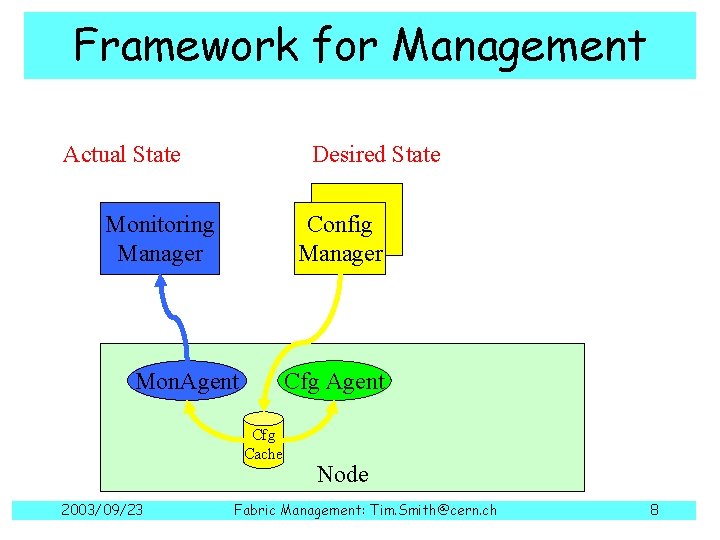

Framework for Management
(Diagram: Desired State, Actual State, CDB, Config Manager, Monitoring Manager, MonAgent, Cfg Cache, Node)

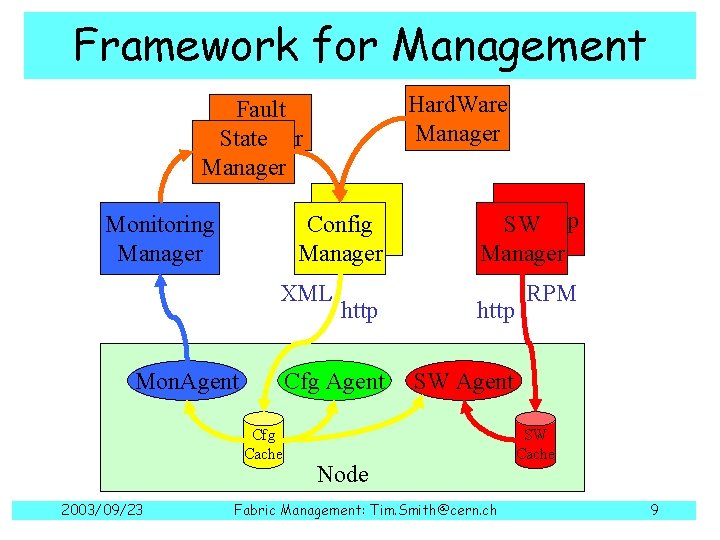

Framework for Management
(Diagram: HardWare Manager, Fault Manager, State Manager, CDB, Config Manager, Monitoring Manager, SWRep Manager; on the node: MonAgent, Cfg Agent, Cfg Cache, SW Agent, SW Cache; XML profiles and RPMs delivered over http)

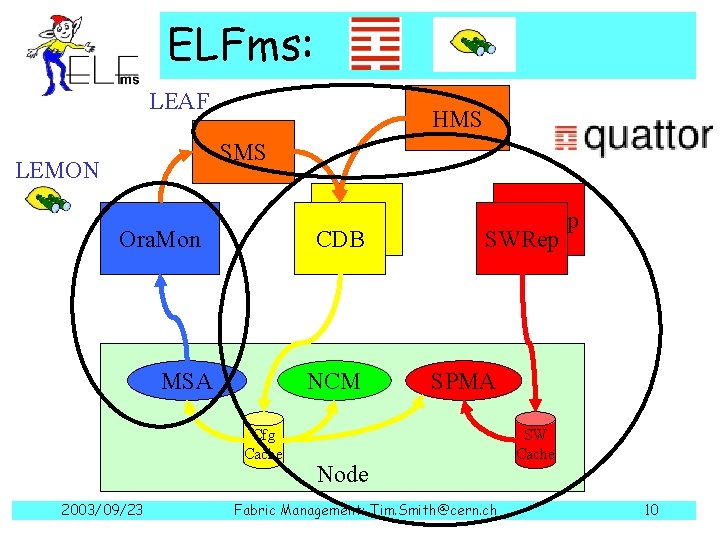

ELFms
(Diagram: the framework components as ELFms tools: LEAF, HMS, SMS, LEMON, OraMon, MSA, CDB, NCM, SWRep, SPMA; on the node: Cfg Cache, SW Cache)

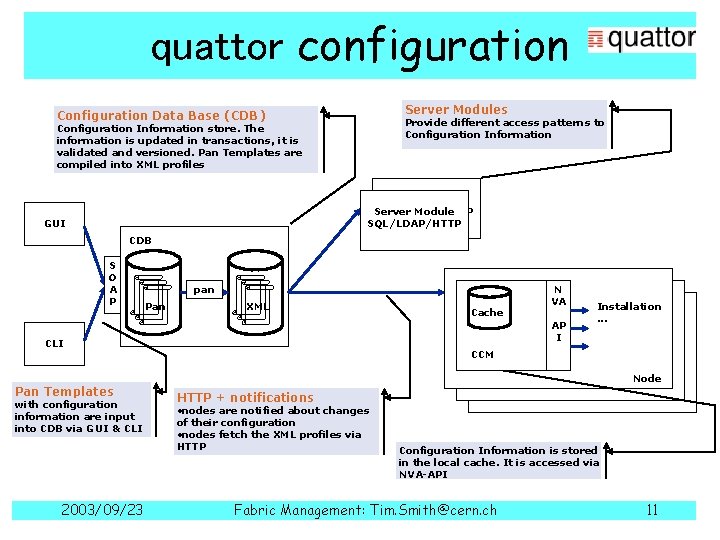

quattor configuration
§ Configuration Data Base (CDB)
  § Pan templates with configuration information are input into CDB via GUI & CLI
  § The information is updated in transactions; it is validated and versioned
  § Pan templates are compiled into XML profiles
§ Server Modules
  § Provide different access patterns (SQL/LDAP/HTTP) to the configuration information store
§ Node: HTTP + notifications
  § Nodes are notified about changes of their configuration
  § Nodes fetch the XML profiles via HTTP
  § Configuration information is stored in the local cache (CCM) and accessed via the NVA-API
(Diagram: GUI and CLI, SOAP, CDB, pan, XML, Server Modules, Installation..., CCM cache and NVA API on the node)
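
On the node side, XML profiles are fetched over HTTP, cached locally and queried through the NVA-API. The sketch below covers only the query half: it flattens a cached profile into path/value pairs such as `/hardware/cpus/0/speed`. The XML layout is a made-up simplification, not the real quattor profile schema, and `flatten` is a hypothetical helper rather than the NVA-API.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified profile: element tags form the configuration
# path, repeated sibling tags form a list, and leaves carry the values.
PROFILE = """<profile>
  <hardware>
    <ram>1024</ram>
    <cpus>
      <cpu><vendor>GenuineIntel</vendor><speed>1100</speed></cpu>
      <cpu><vendor>GenuineIntel</vendor><speed>1100</speed></cpu>
    </cpus>
  </hardware>
  <system>
    <cluster>lxbatch</cluster>
  </system>
</profile>"""


def flatten(elem: ET.Element, prefix: str = "") -> dict[str, str]:
    """Map every leaf under `elem` to a '/a/b/c' style path."""
    children = list(elem)
    if not children:
        return {prefix: (elem.text or "").strip()}

    tags = [child.tag for child in children]
    counters: dict[str, int] = {}
    result: dict[str, str] = {}
    for child in children:
        if tags.count(child.tag) > 1:
            # List entry: address it by position, e.g. /hardware/cpus/1
            index = counters.get(child.tag, 0)
            counters[child.tag] = index + 1
            path = f"{prefix}/{index}"
        else:
            path = f"{prefix}/{child.tag}"
        result.update(flatten(child, path))
    return result


config = flatten(ET.fromstring(PROFILE))
print(config["/hardware/cpus/1/speed"])  # 1100
print(config["/system/cluster"])         # lxbatch
```

In this toy format a one-element list looks the same as a plain child element; a real profile format would need to mark lists explicitly.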

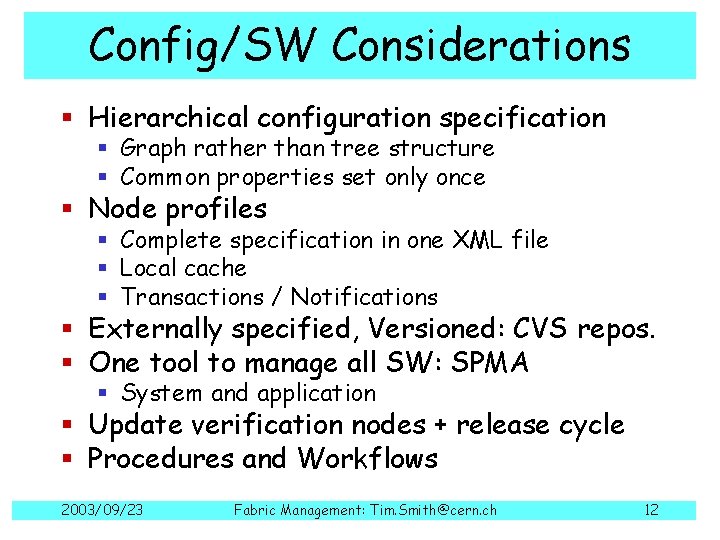

Config/SW Considerations
§ Hierarchical configuration specification
  § Graph rather than tree structure
  § Common properties set only once
§ Node profiles
  § Complete specification in one XML file
  § Local cache
  § Transactions / Notifications
§ Externally specified, versioned: CVS repository
§ One tool to manage all SW: SPMA
  § System and application
§ Update verification nodes + release cycle
§ Procedures and workflows
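
A hierarchical, graph-shaped specification where common properties are set only once can be sketched as template inclusion: a node profile is built by merging its included templates, and a shared template may be reached through several parents. This is a simplified illustration, not Pan's actual include and override semantics; apart from `hardware_elonex_800_mem1024mb`, the template names, paths and values are hypothetical.

```python
# Hypothetical templates: (included templates, own path/value settings).
# "site_common" is included by two parents, so the include structure is a
# graph rather than a tree, yet its properties are written only once.
TEMPLATES: dict[str, tuple[list[str], dict[str, str]]] = {
    "site_common": ([], {"/system/site_release": "1",
                         "/software/repository": "swrep"}),
    "hardware_elonex_800_mem1024mb": (["site_common"],
                                      {"/hardware/ram": "1024"}),
    "cluster_lxbatch": (["site_common"], {"/system/cluster": "lxbatch"}),
    "node_a": (["hardware_elonex_800_mem1024mb", "cluster_lxbatch"],
               {"/system/hostname": "node_a"}),
}


def resolve(name: str, stack: tuple[str, ...] = ()) -> dict[str, str]:
    """Build a complete node profile: merge included templates first, in
    the order listed, then apply this template's own settings, which
    override anything inherited. Include cycles are rejected."""
    if name in stack:
        raise ValueError("include cycle: " + " -> ".join(stack + (name,)))
    includes, own = TEMPLATES[name]
    profile: dict[str, str] = {}
    for included in includes:
        profile.update(resolve(included, stack + (name,)))
    profile.update(own)
    return profile


for path, value in sorted(resolve("node_a").items()):
    print(path, "=", value)
```

The resolved dictionary corresponds to the "complete specification" of one node, which could then be serialised into its single XML profile.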

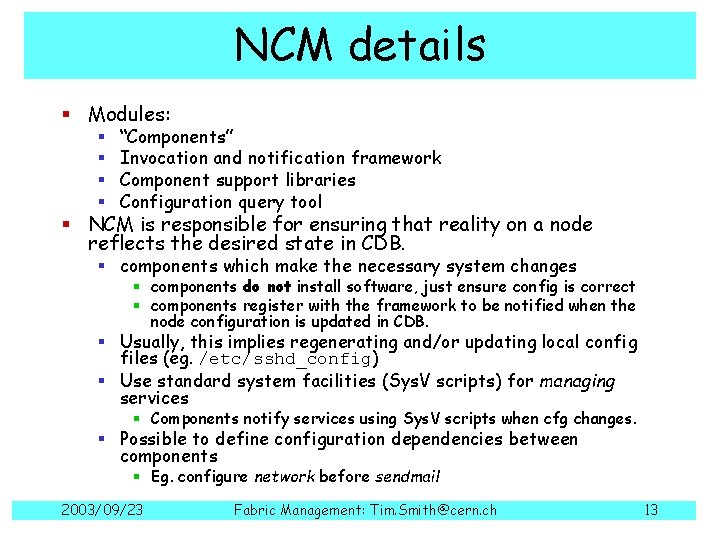

NCM details
§ Modules:
  § “Components”
  § Invocation and notification framework
  § Component support libraries
  § Configuration query tool
§ NCM is responsible for ensuring that reality on a node reflects the desired state in CDB.
§ Components make the necessary system changes
  § Components do not install software; they just ensure the configuration is correct
  § Components register with the framework to be notified when the node configuration is updated in CDB
  § Usually, this implies regenerating and/or updating local config files (e.g. /etc/ssh/sshd_config)
§ Use standard system facilities (SysV scripts) for managing services
  § Components notify services using SysV scripts when the configuration changes
§ Possible to define configuration dependencies between components
  § E.g. configure network before sendmail
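
Notification plus configuration dependencies can be sketched as two steps: select the components whose watched part of the configuration changed, then run them in dependency order (network before sendmail, as above). This is a minimal sketch assuming Python 3.9+ for `graphlib`; the component names, watched paths and the `components_to_run` helper are hypothetical, not NCM's real interface.

```python
from graphlib import TopologicalSorter

# Hypothetical components: the configuration subtree each one watches
# and the components that must run before it.
WATCHES = {
    "network": "/system/network",
    "sendmail": "/software/components/sendmail",
    "sshd": "/software/components/sshd",
}
DEPENDS_ON = {
    "network": set(),
    "sendmail": {"network"},
    "sshd": {"network"},
}


def components_to_run(changed_paths: set[str]) -> list[str]:
    """Select components whose watched subtree changed and order them so
    that every component runs after the ones it depends on."""
    affected = {
        name
        for name, prefix in WATCHES.items()
        if any(p == prefix or p.startswith(prefix + "/") for p in changed_paths)
    }
    # Only the affected components are ordered; dependencies outside this
    # run are assumed to be configured already.
    graph = {name: DEPENDS_ON[name] & affected for name in affected}
    return list(TopologicalSorter(graph).static_order())


print(components_to_run({"/system/network/hostname",
                         "/software/components/sendmail/relay"}))
# ['network', 'sendmail']
```

A dependency cycle makes `static_order()` raise `graphlib.CycleError`, which is a reasonable point to refuse the configuration rather than guess an order.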

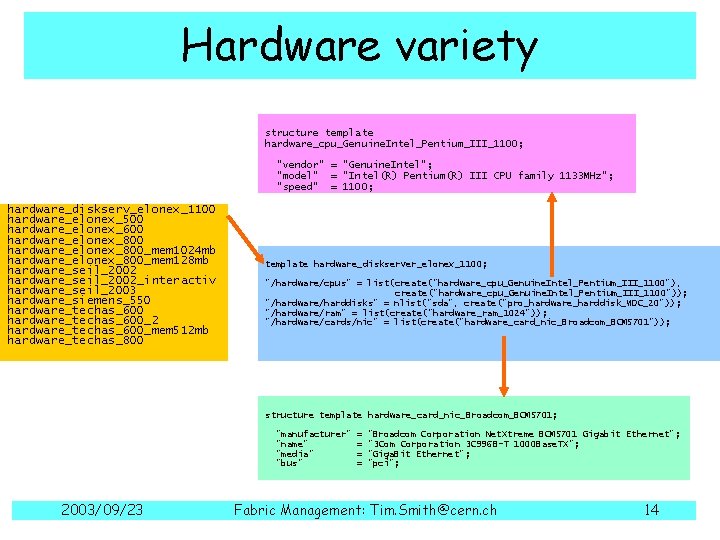

Hardware variety
Hardware templates:
hardware_diskserv_elonex_1100
hardware_elonex_500
hardware_elonex_600
hardware_elonex_800_mem1024mb
hardware_elonex_800_mem128mb
hardware_seil_2002_interactiv
hardware_seil_2003
hardware_siemens_550
hardware_techas_600_2
hardware_techas_600_mem512mb
hardware_techas_800

structure template hardware_cpu_GenuineIntel_Pentium_III_1100;
"vendor" = "GenuineIntel";
"model" = "Intel(R) Pentium(R) III CPU family 1133MHz";
"speed" = 1100;

template hardware_diskserver_elonex_1100;
"/hardware/cpus" = list(create("hardware_cpu_GenuineIntel_Pentium_III_1100"),
                        create("hardware_cpu_GenuineIntel_Pentium_III_1100"));
"/hardware/harddisks" = nlist("sda", create("pro_hardware_harddisk_WDC_20"));
"/hardware/ram" = list(create("hardware_ram_1024"));
"/hardware/cards/nic" = list(create("hardware_card_nic_Broadcom_BCM5701"));

structure template hardware_card_nic_Broadcom_BCM5701;
"manufacturer" = "Broadcom Corporation NetXtreme BCM5701 Gigabit Ethernet";
"name" = "3Com Corporation 3C996B-T 1000BaseTX";
"media" = "GigaBit Ethernet";
"bus" = "pci";
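
Pan compiles such templates into one XML profile per node. As a rough Python analogue, the sketch below models the two structure templates shown above as dictionaries, builds part of the disk server's hardware subtree with an independent copy per `create` (approximating Pan's `create()`), and serialises it with ElementTree. The `<entry>` element for list items and the overall XML layout are assumptions, not the real profile format; the disk and RAM templates are left out because their contents are not shown.

```python
import copy
import xml.etree.ElementTree as ET

# The two structure templates from the slide, as plain Python data.
CPU_PIII_1100 = {
    "vendor": "GenuineIntel",
    "model": "Intel(R) Pentium(R) III CPU family 1133MHz",
    "speed": 1100,
}
NIC_BCM5701 = {
    "manufacturer": "Broadcom Corporation NetXtreme BCM5701 Gigabit Ethernet",
    "name": "3Com Corporation 3C996B-T 1000BaseTX",
    "media": "GigaBit Ethernet",
    "bus": "pci",
}


def create(structure: dict) -> dict:
    """Rough stand-in for Pan's create(): an independent copy per use."""
    return copy.deepcopy(structure)


# Part of hardware_diskserver_elonex_1100 (disk and RAM templates omitted).
diskserver = {
    "hardware": {
        "cpus": [create(CPU_PIII_1100), create(CPU_PIII_1100)],
        "cards": {"nic": [create(NIC_BCM5701)]},
    }
}


def to_xml(tag: str, value) -> ET.Element:
    """Serialise nested dicts/lists/scalars; list items become <entry>."""
    elem = ET.Element(tag)
    if isinstance(value, dict):
        for key, child in value.items():
            elem.append(to_xml(key, child))
    elif isinstance(value, list):
        for child in value:
            elem.append(to_xml("entry", child))
    else:
        elem.text = str(value)
    return elem


profile = to_xml("profile", diskserver)
ET.indent(profile)  # pretty-print (Python 3.9+)
print(ET.tostring(profile, encoding="unicode"))
```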

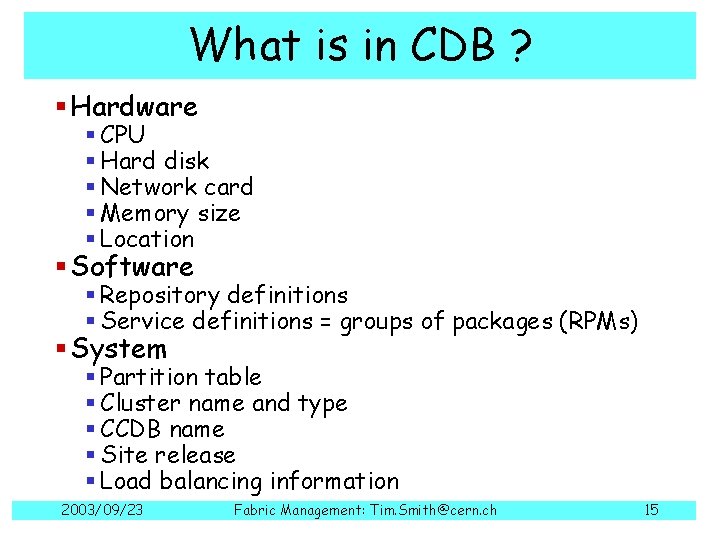

What is in CDB?
§ Hardware
  § CPU
  § Hard disk
  § Network card
  § Memory size
  § Location
§ Software
  § Repository definitions
  § Service definitions = groups of packages (RPMs)
§ System
  § Partition table
  § Cluster name and type
  § CCDB name
  § Site release
  § Load balancing information

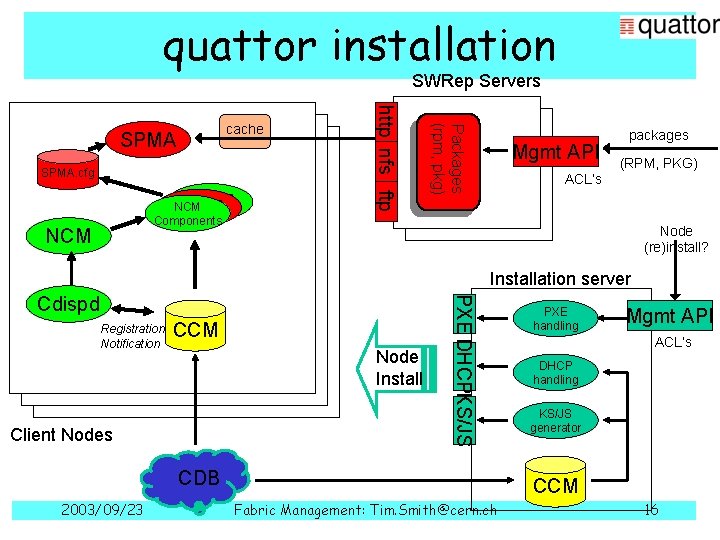

quattor installation
(Diagram: SWRep servers with Mgmt API and ACLs storing packages (RPM, PKG); client nodes running SPMA (with SPMA.cfg and a local cache, fetching over http, nfs or ftp), NCM with its components, CCM and Cdispd; CDB registration and notification; installation server with PXE handling, DHCP handling, KS/JS generator, Mgmt API and ACLs for node (re)install)

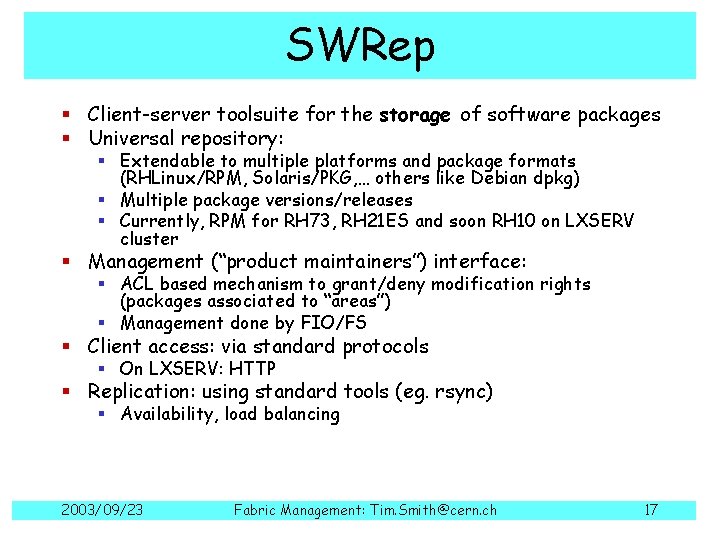

SWRep
§ Client-server toolsuite for the storage of software packages
§ Universal repository:
  § Extendable to multiple platforms and package formats (RHLinux/RPM, Solaris/PKG, … others like Debian dpkg)
  § Multiple package versions/releases
  § Currently, RPM for RH 7.3, RH 2.1 ES and soon RH 10 on the LXSERV cluster
§ Management (“product maintainers”) interface:
  § ACL-based mechanism to grant/deny modification rights (packages associated with “areas”)
  § Management done by FIO/FS
§ Client access: via standard protocols
  § On LXSERV: HTTP
§ Replication: using standard tools (e.g. rsync)
  § Availability, load balancing
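
The area-based ACL idea can be sketched as a two-step lookup: find the area a package belongs to, then check whether the user is one of that area's maintainers, denying by default. The area names, user names, package assignments and the `may_modify` helper are all hypothetical; SWRep's real data model and interface are not described here.

```python
# Hypothetical ACL data: which maintainers may modify which area, and
# which area each package belongs to. Names are illustrative only.
AREA_MAINTAINERS: dict[str, set[str]] = {
    "os": {"fio_fs"},
    "lsf": {"fio_fs", "lsf_maintainer"},
}
PACKAGE_AREA: dict[str, str] = {
    "openssh": "os",
    "lsf": "lsf",
}


def may_modify(user: str, package: str) -> bool:
    """Grant modification rights only to maintainers of the package's
    area; unknown packages or areas are denied by default."""
    area = PACKAGE_AREA.get(package)
    if area is None:
        return False
    return user in AREA_MAINTAINERS.get(area, set())


print(may_modify("lsf_maintainer", "lsf"))      # True
print(may_modify("lsf_maintainer", "openssh"))  # False
```

Granting rights per area rather than per package keeps the ACLs short even when a product consists of many packages.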

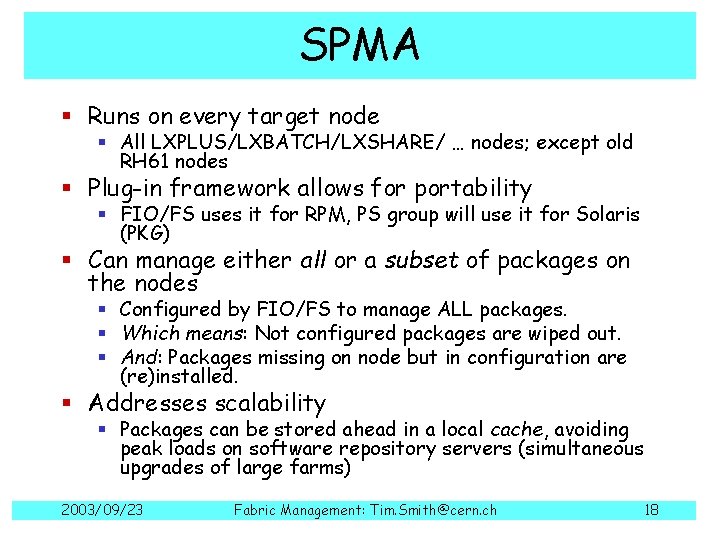

SPMA
§ Runs on every target node
  § All LXPLUS/LXBATCH/LXSHARE/… nodes, except old RH 6.1 nodes
§ Plug-in framework allows for portability
  § FIO/FS uses it for RPM; PS group will use it for Solaris (PKG)
§ Can manage either all or a subset of packages on the nodes
  § Configured by FIO/FS to manage ALL packages
  § This means: packages not in the configuration are wiped out
  § And: packages missing on the node but present in the configuration are (re)installed
§ Addresses scalability
  § Packages can be stored ahead in a local cache, avoiding peak loads on software repository servers (simultaneous upgrades of large farms)

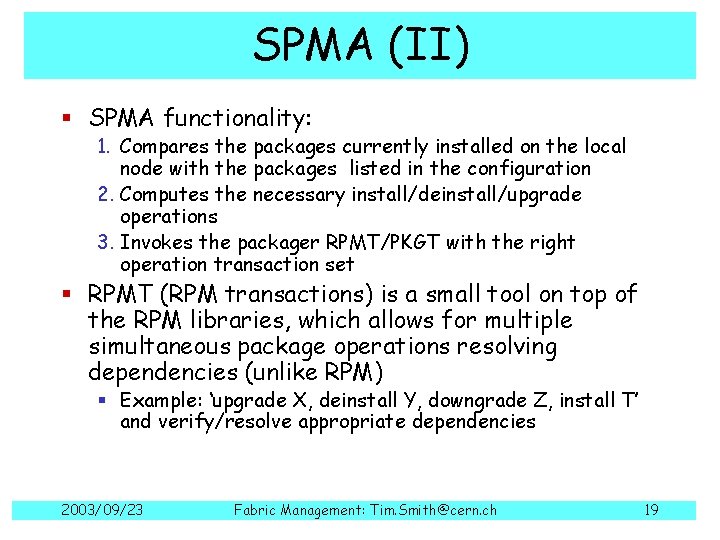

SPMA (II)
§ SPMA functionality:
  1. Compares the packages currently installed on the local node with the packages listed in the configuration
  2. Computes the necessary install/deinstall/upgrade operations
  3. Invokes the packager RPMT/PKGT with the right operation transaction set
§ RPMT (RPM transactions) is a small tool on top of the RPM libraries, which allows multiple simultaneous package operations while resolving dependencies (unlike RPM)
  § Example: ‘upgrade X, deinstall Y, downgrade Z, install T’, verifying/resolving the appropriate dependencies
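
Steps 1 and 2 amount to a set comparison between installed and configured packages. The sketch below computes one operation list for the example above; the `manage_all` flag mirrors the "all or a subset of packages" choice. It is a simplified illustration, not SPMA's code: versions are compared as dotted integers, whereas RPM's real comparison (epoch, release, alphanumeric segments) is more involved, and dependency resolution (RPMT's job in step 3) is not attempted.

```python
def version_key(version: str) -> tuple[int, ...]:
    """Simplified ordering for dotted numeric versions such as '1.10.2'."""
    return tuple(int(part) for part in version.split("."))


def plan(installed: dict[str, str], desired: dict[str, str],
         manage_all: bool = True) -> list[tuple[str, str, str]]:
    """Compare installed vs configured packages (step 1) and compute the
    operations for a single transaction set (step 2)."""
    ops: list[tuple[str, str, str]] = []
    for name, version in sorted(desired.items()):
        current = installed.get(name)
        if current is None:
            ops.append(("install", name, version))
        elif current != version:
            new, old = version_key(version), version_key(current)
            if new != old:
                ops.append(("upgrade" if new > old else "downgrade",
                            name, version))
    if manage_all:
        # Managing ALL packages: anything not configured is removed.
        for name in sorted(installed.keys() - desired.keys()):
            ops.append(("deinstall", name, installed[name]))
    return ops


installed = {"X": "1.0", "Y": "2.0", "Z": "3.1"}
desired = {"X": "1.1", "Z": "3.0", "T": "0.9"}
for op in plan(installed, desired):
    print(*op)
```

Handing the whole list to the packager as one transaction, instead of running the operations one by one, is what lets dependencies between them be checked together.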

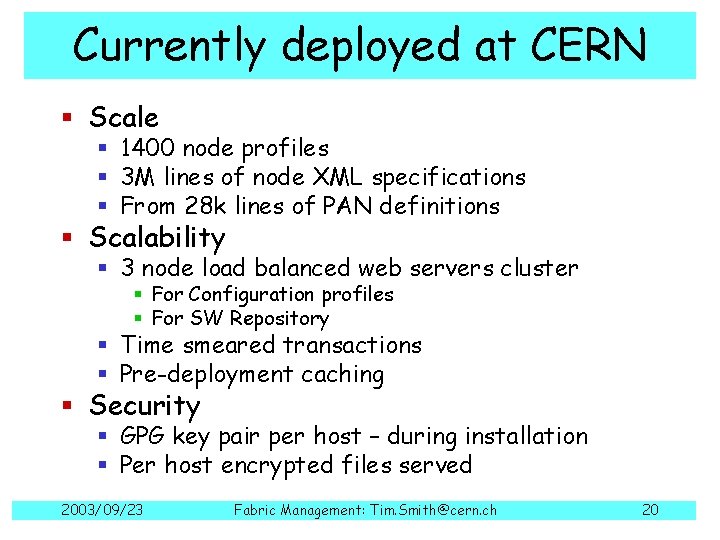

Currently deployed at CERN
§ Scale
  § 1400 node profiles
  § 3M lines of node XML specifications
  § From 28k lines of Pan definitions
§ Scalability
  § 3-node load-balanced web server cluster
    § For configuration profiles
    § For SW repository
  § Time-smeared transactions
  § Pre-deployment caching
§ Security
  § GPG key pair per host (during installation)
  § Per-host encrypted files served
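
Time smearing spreads the start of a farm-wide transaction over a window so that the servers are not hit by every node at once. The mechanism is not specified here; the sketch below assumes each node waits a deterministic, hash-derived delay within the window (15 minutes, the window used in the KDE/Gnome rollout). The host names and the `smear_delay` helper are hypothetical.

```python
import hashlib


def smear_delay(hostname: str, window_s: int = 15 * 60) -> int:
    """Deterministic per-host start delay in [0, window_s) seconds.

    Hashing the hostname spreads nodes roughly uniformly over the window,
    and a given host always gets the same slot.
    """
    digest = hashlib.sha256(hostname.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % window_s


delays = [smear_delay(f"node{i:04d}") for i in range(700)]
print(min(delays), max(delays))
```

A random delay would spread the load just as well; deriving it from the hostname simply makes each node's slot reproducible between runs.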

Examples
§ Clusters
  § LXPLUS, LXBATCH, LXBUILD, LXSHARE, OracleDBs
  § TapeServers, DiskServers, StageServers
§ Supported platforms
  § RH 7.3.3: 2400 packages
  § RHES: 1300 packages
  § RH 10
§ KDE/Gnome security patch rollout
  § 0.5 GB onto 700 nodes
  § 15-minute time smearing from 3 load-balanced servers
§ LSF 4 to 5 transition
  § 10 minutes
  § No queues or jobs stopped
  § Cf. last time: 3 weeks, 3 people!
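
As a rough check of the load in the KDE/Gnome rollout, assume (this is not stated above) that the 0.5 GB was delivered to each of the 700 nodes, spread evenly over the 15-minute window and the 3 load-balanced servers. Pre-deployment caching would move part of this traffic earlier.

```latex
\frac{0.5\ \text{GB} \times 700}{15 \times 60\ \text{s}}
  = \frac{350\ \text{GB}}{900\ \text{s}} \approx 0.39\ \text{GB/s},
\qquad
\frac{0.39\ \text{GB/s}}{3\ \text{servers}} \approx 0.13\ \text{GB/s} \approx 1\ \text{Gbit/s per server}
```

Under these assumptions each server carries roughly a gigabit link's worth of traffic for the whole window.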

Plans
§ Deploying NCM
  § Port SUE features to NCM for RH 10
  § Use for Solaris 10
§ Deploying State Manager (SMS)
  § Use-case-targeted GUIs
§ Web servers
  § Split: FrontEnd / BackEnd architecture

Conclusions
§ Maturity brings…
  § Degradation of initial state definition
    § HW + SW
  § Accumulation of innocuous temporary procedures
§ Scale brings…
  § Marginal activities become full time
  § Many hands on the systems
§ Combat with strong management automation
