
Extensions ESSLLI 02

Other extensions • Influenced by Merge and Move analysis

Minimalism and Formal Grammars

Several attempts to formalise « minimalist principles »:
• Stabler, 1997, 1999, 2000, 2001:
  - « minimalist grammars » (MG)
• Weak equivalence with:
  - Multiple Context-Free Grammars (Seki et al., Harkema)
  - Linear Context-Free Rewriting Systems (Michaelis)

Results from formal grammars
• Mild context-sensitivity
• Equivalence with multi-component TAGs (Weir, Rambow, Vijay-Shanker)
• Polynomiality of recognition

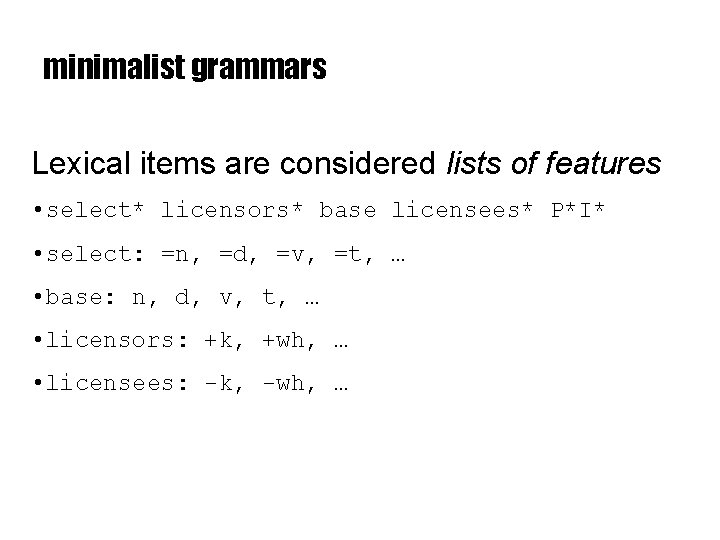

Minimalist grammars

Lexical items are considered lists of features:
• select* licensors* base licensees* P* I*
• select: =n, =d, =v, =t, …
• base: n, d, v, t, …
• licensors: +k, +wh, …
• licensees: -k, -wh, …

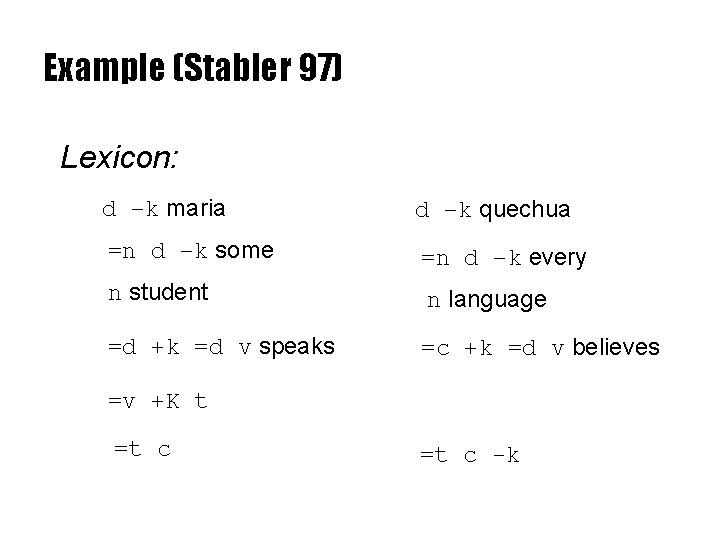

Example (Stabler 97)

Lexicon:
d -k maria
d -k quechua
=n d -k some
=n d -k every
n student
n language
=d +k =d v speaks
=c +k =d v believes
=v +K t
=t c -k
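As a rough illustration, the lexicon above can be written down as plain data. This is only a sketch: the `LexItem` class and the `kind` helper are hypothetical names, and treating the last two entries as phonetically empty is an assumption read off the slide, where no word follows their features.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LexItem:
    features: tuple  # syntactic features, in the order given on the slide
    phon: str        # phonetic form; "" is assumed for the two silent entries

def kind(feature):
    """Classify a feature by its prefix (select, licensor, licensee, base)."""
    if feature.startswith("="):
        return "select"
    if feature.startswith("+"):
        return "licensor"
    if feature.startswith("-"):
        return "licensee"
    return "base"

LEXICON = [
    LexItem(("d", "-k"), "maria"),
    LexItem(("d", "-k"), "quechua"),
    LexItem(("=n", "d", "-k"), "some"),
    LexItem(("=n", "d", "-k"), "every"),
    LexItem(("n",), "student"),
    LexItem(("n",), "language"),
    LexItem(("=d", "+k", "=d", "v"), "speaks"),
    LexItem(("=c", "+k", "=d", "v"), "believes"),
    LexItem(("=v", "+K", "t"), ""),   # assumed silent
    LexItem(("=t", "c", "-k"), ""),   # assumed silent
]

# Feature classes of "speaks", in order.
speaks_kinds = [kind(f) for f in LEXICON[6].features]
```

Here `speaks_kinds` lists the classes of the four features of « speaks », which is the order in which Merge and Move consume them.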

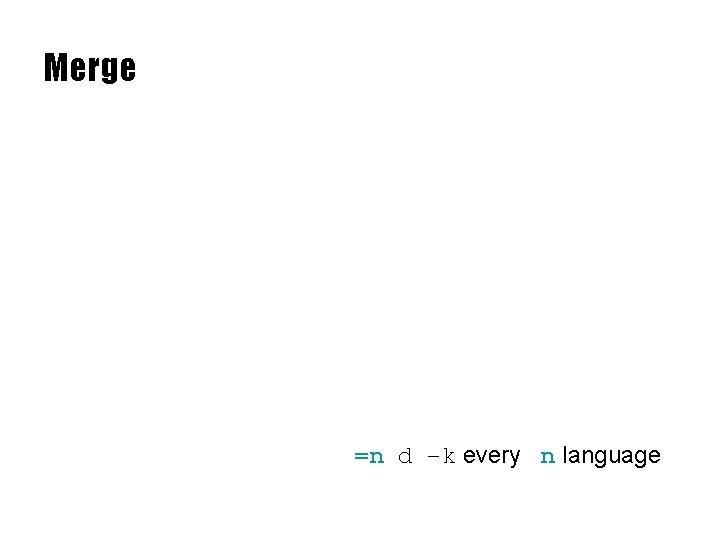

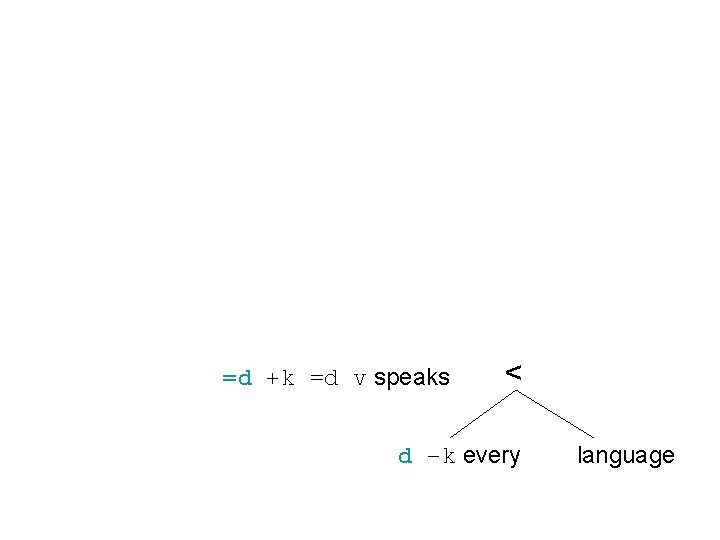

Merge
=n d -k every
n language

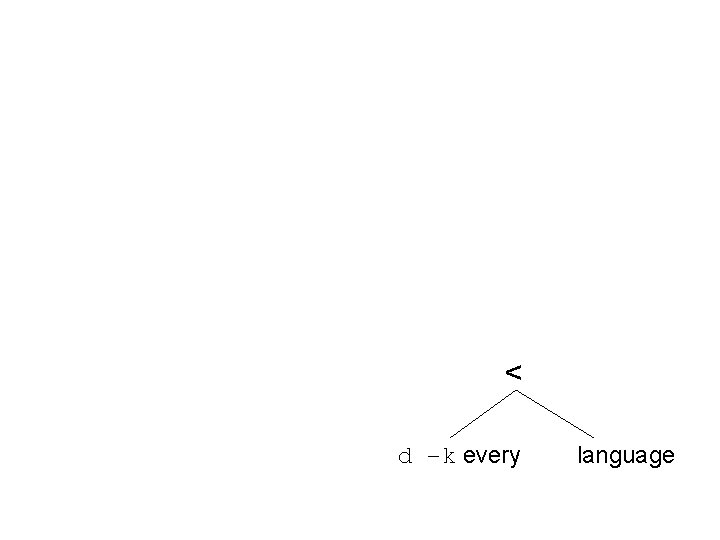

< d –k every language

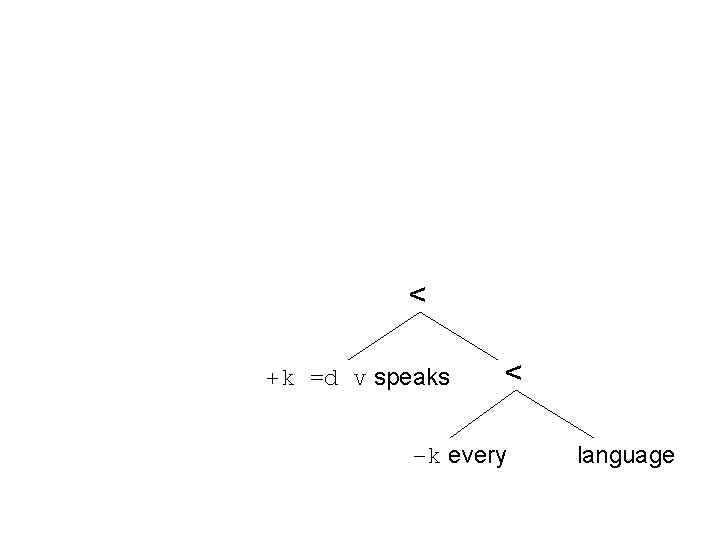

=d +k =d v speaks
[< [d -k every] [language]]

[< [+k =d v speaks] [< [-k every] [language]]]

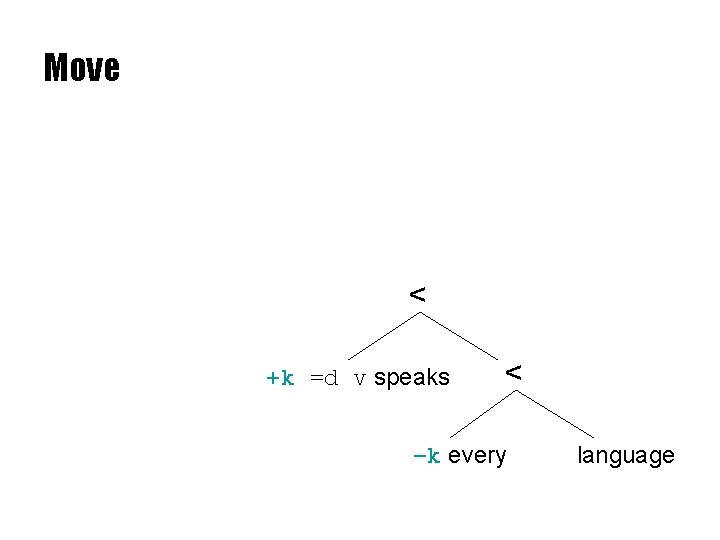
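The two Merge steps shown so far can be replayed with a small sketch. The `Leaf` and `Node` classes and the helper names are hypothetical. The rule used here (a lexical selector puts the selected tree to its right, a derived selector puts it to its left) is Stabler's definition as it is usually stated; the slides only show it through the example.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Leaf:
    features: tuple
    phon: str

@dataclass(frozen=True)
class Node:
    direction: str   # "<": the head is in the left daughter, ">": in the right one
    left: object
    right: object

def head(tree):
    """Follow the < / > pointers down to the head leaf."""
    while isinstance(tree, Node):
        tree = tree.left if tree.direction == "<" else tree.right
    return tree

def drop_first(tree):
    """Copy of the tree whose head has lost its first feature."""
    if isinstance(tree, Leaf):
        return replace(tree, features=tree.features[1:])
    if tree.direction == "<":
        return replace(tree, left=drop_first(tree.left))
    return replace(tree, right=drop_first(tree.right))

def merge(t1, t2):
    """Merge t1 (head starts with =x) with t2 (head starts with x)."""
    f1, f2 = head(t1).features, head(t2).features
    if not (f1 and f2 and f1[0] == "=" + f2[0]):
        raise ValueError("merge does not apply")
    if isinstance(t1, Leaf):                              # lexical selector
        return Node("<", drop_first(t1), drop_first(t2))
    return Node(">", drop_first(t2), drop_first(t1))      # derived selector

every = Leaf(("=n", "d", "-k"), "every")
language = Leaf(("n",), "language")
speaks = Leaf(("=d", "+k", "=d", "v"), "speaks")

dp = merge(every, language)   # corresponds to: < d -k every language
vp = merge(speaks, dp)        # corresponds to: < +k =d v speaks < -k every language
```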

Move
[< [+k =d v speaks] [< [-k every] [language]]]

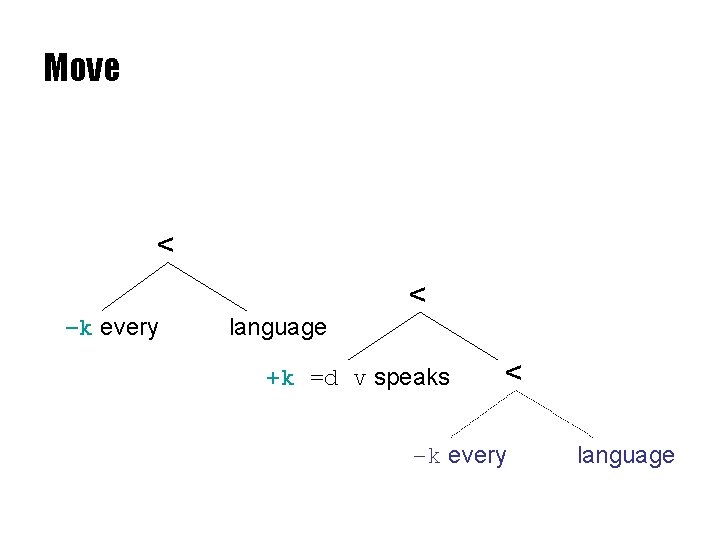

Move
[< [+k =d v speaks] [< [-k every] [language]]], with the subtree [< [-k every] [language]] selected for movement

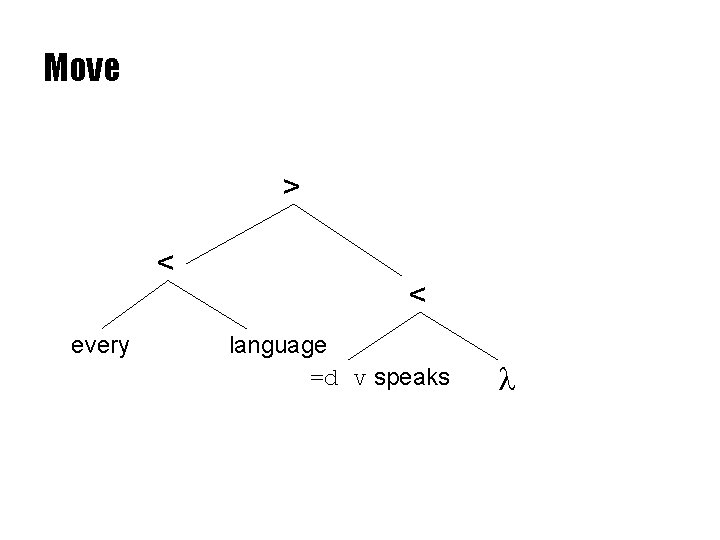

Move
[> [< [every] [language]] [< [=d v speaks] [λ]]]
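The Move step can be sketched in the same style. This is a hedged illustration only: class and function names are hypothetical, it covers overt movement of a whole subtree (the covert variant, where only the interpretable part moves, is not modelled), and it does not distinguish strong features such as +K from weak ones.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Leaf:
    features: tuple
    phon: str

@dataclass(frozen=True)
class Node:
    direction: str   # "<": head in the left daughter, ">": head in the right one
    left: object
    right: object

def head(tree):
    while isinstance(tree, Node):
        tree = tree.left if tree.direction == "<" else tree.right
    return tree

def drop_first(tree):
    """Copy of the tree whose head has lost its first feature."""
    if isinstance(tree, Leaf):
        return replace(tree, features=tree.features[1:])
    if tree.direction == "<":
        return replace(tree, left=drop_first(tree.left))
    return replace(tree, right=drop_first(tree.right))

def max_projections(tree, is_max):
    """Yield the subtrees that are maximal projections of their head."""
    if is_max:
        yield tree
    if isinstance(tree, Node):
        head_left = tree.direction == "<"
        yield from max_projections(tree.left, not head_left)
        yield from max_projections(tree.right, head_left)

def substitute(tree, old, new):
    """Replace the subtree `old` (compared by identity) with `new`."""
    if tree is old:
        return new
    if isinstance(tree, Node):
        return Node(tree.direction,
                    substitute(tree.left, old, new),
                    substitute(tree.right, old, new))
    return tree

def move(tree):
    feats = head(tree).features
    if not feats or not feats[0].startswith("+"):
        raise ValueError("move does not apply")
    licensee = "-" + feats[0][1:]
    # Proper subtrees only: the root itself is excluded (is_max=False).
    movers = [s for s in max_projections(tree, False)
              if head(s).features[:1] == (licensee,)]
    if len(movers) != 1:
        raise ValueError("exactly one subtree must carry " + licensee)
    mover = movers[0]
    trace = Leaf((), "")                       # the empty leaf written λ on the slide
    remainder = drop_first(substitute(tree, mover, trace))
    return Node(">", drop_first(mover), remainder)

vp = Node("<", Leaf(("+k", "=d", "v"), "speaks"),
          Node("<", Leaf(("-k",), "every"), Leaf((), "language")))
moved = move(vp)
```

With these assumptions, `moved` has the shape of the tree on the slide: the phrase « every language » sits to the left of a `>` node, and an empty leaf is left in its original position.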

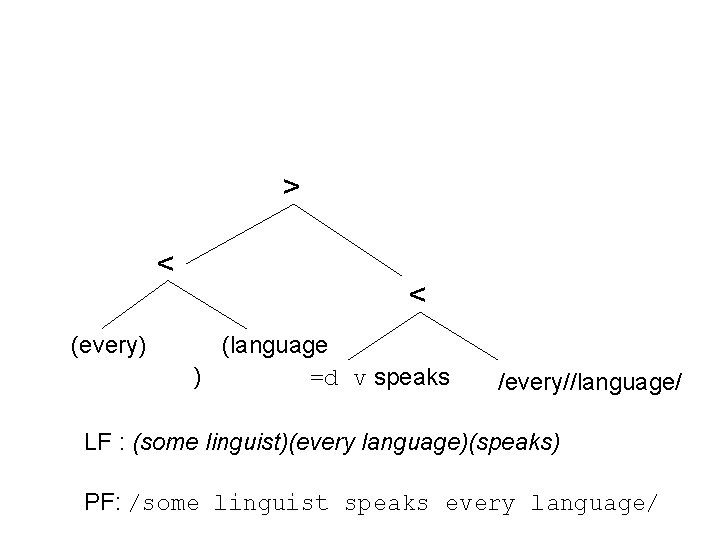

> < (every) < (language ) =d v speaks /every//language/ LF : (some linguist)(every language)(speaks) PF: /some linguist speaks every language/

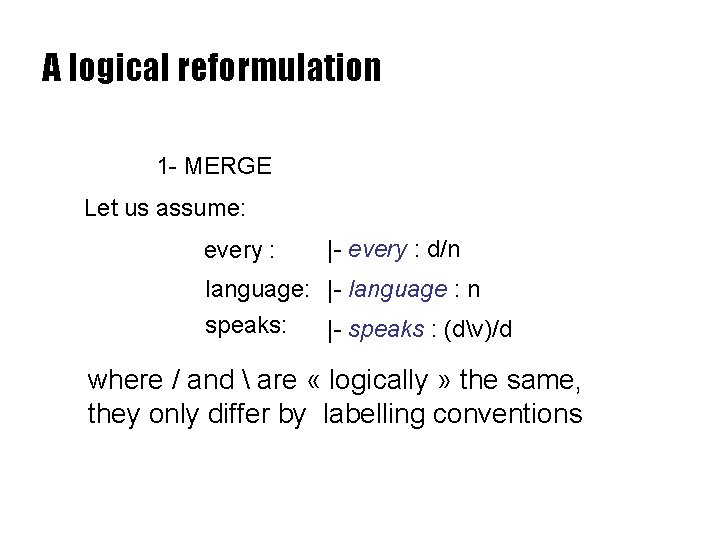

A logical reformulation

1 - MERGE

Let us assume:
every: ⊢ every : d/n
language: ⊢ language : n
speaks: ⊢ speaks : (d\v)/d
where / and \ are « logically » the same; they differ only by labelling conventions

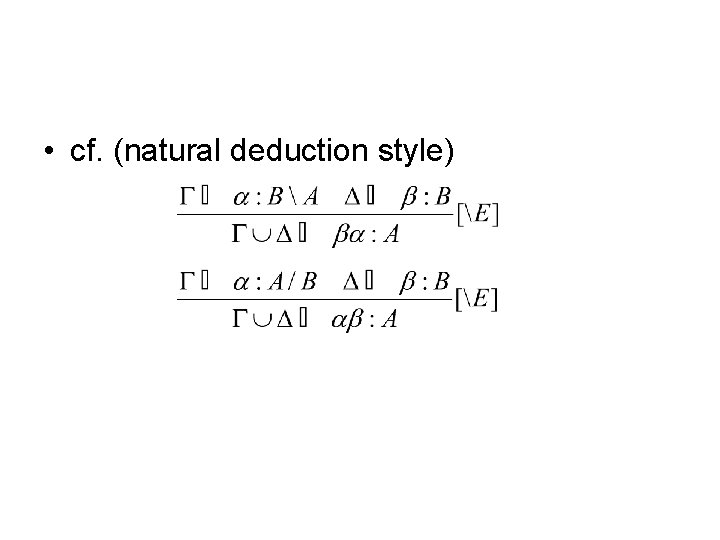

• cf. (natural deduction style)

• commutative product: the order of formulae in the antecedent is not relevant
• word order is supposed to be induced from the dominance relations in the proof-tree (the highest, the leftmost)
  – words = extra-logical axioms
  – sequents combined by [/E], [\E] and …

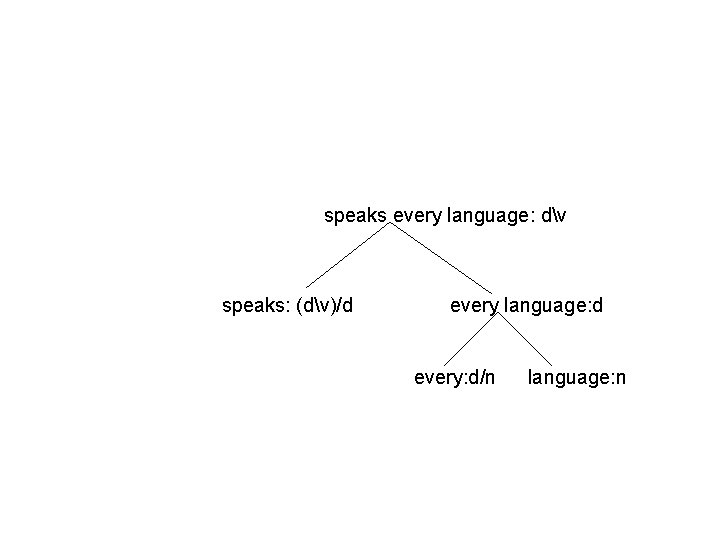

every: d/n, language: n ⇒ every language: d [/E]
speaks: (d\v)/d, every language: d ⇒ speaks every language: d\v [/E]
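The two elimination steps of this derivation can be checked mechanically. The following is a minimal sketch, assuming that the connective lost in extraction is the backslash (so that « speaks » has type (d\v)/d); the tuple encoding and the function names are hypothetical.

```python
def over(result, arg):
    """Encode the type result/arg."""
    return ("/", result, arg)

def under(arg, result):
    """Encode the type arg\\result."""
    return ("\\", arg, result)

def slash_elim(functor, argument):
    """[/E]: from w1 : A/B and w2 : B, derive w1 w2 : A."""
    (word_f, type_f), (word_a, type_a) = functor, argument
    if isinstance(type_f, tuple) and type_f[0] == "/" and type_f[2] == type_a:
        return (word_f + " " + word_a, type_f[1])
    raise TypeError("[/E] does not apply")

every = ("every", over("d", "n"))
language = ("language", "n")
speaks = ("speaks", over(under("d", "v"), "d"))

dp = slash_elim(every, language)   # every language : d
vp = slash_elim(speaks, dp)        # speaks every language : d\v
```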

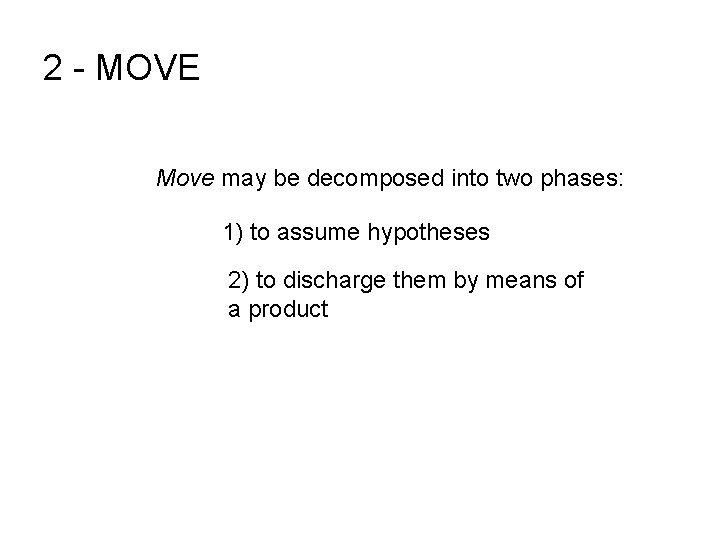

2 - MOVE

Move may be decomposed into two phases:
1) to assume hypotheses
2) to discharge them by means of a product

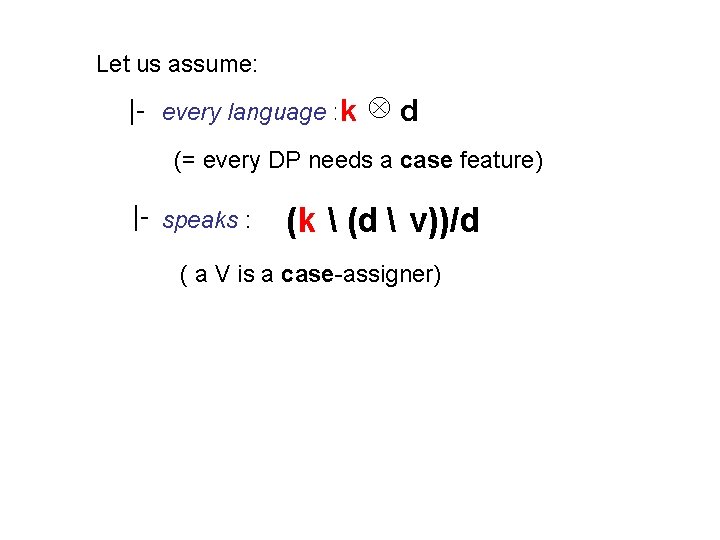

Let us assume:
⊢ every language : k ⊗ d (= every DP needs a case feature)
⊢ speaks : (k\(d\v))/d (a V is a case-assigner)

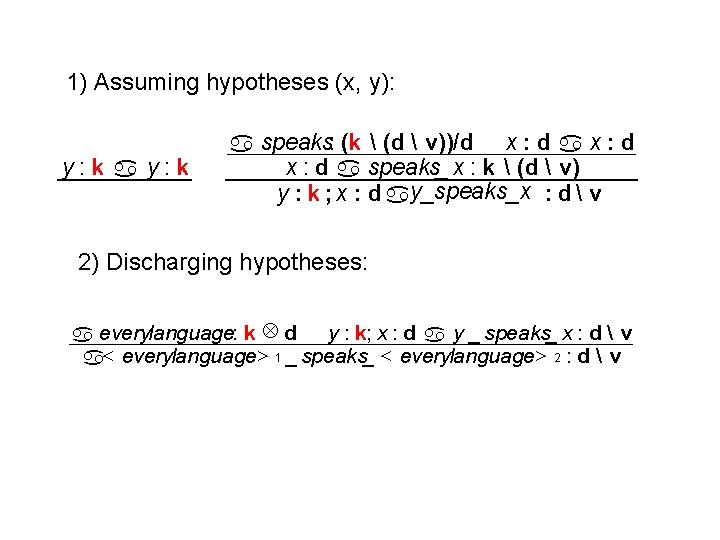

1) Assuming hypotheses (x, y):
   ⊢ speaks : (k\(d\v))/d
   x : d ⊢ speaks_x : k\(d\v)
   y : k ; x : d ⊢ y_speaks_x : d\v

2) Discharging hypotheses:
   y : k ; x : d ⊢ y_speaks_x : d\v
   ⊢ every_language : k ⊗ d
   ⊢ <every_language>1_speaks_<every_language>2 : d\v
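For reference, the discharge step is an instance of the usual elimination rule for the product. The block below states that rule in its standard form and then instantiates it; it assumes that the connectives lost in extraction are ⊗ (in k ⊗ d) and the backslash (in d\v), and the rule label [⊗E] is a naming choice, not taken from the slides.

```latex
% General rule: a product A (x) B discharges two hypotheses A and B at once.
\[
\frac{\Gamma \vdash A \otimes B \qquad \Delta,\; A,\; B \vdash C}
     {\Gamma,\; \Delta \vdash C}\;[\otimes E]
\]

% Instance used for Move: the two hypotheses y : k and x : d
% are replaced by the two occurrences of "every language".
\[
\frac{\vdash \textit{every\_language} : k \otimes d \qquad
      y : k,\; x : d \vdash y\_\textit{speaks}\_x : d \backslash v}
     {\vdash \langle\textit{every\_language}\rangle_1\_\textit{speaks}\_\langle\textit{every\_language}\rangle_2 : d \backslash v}\;[\otimes E]
\]
```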

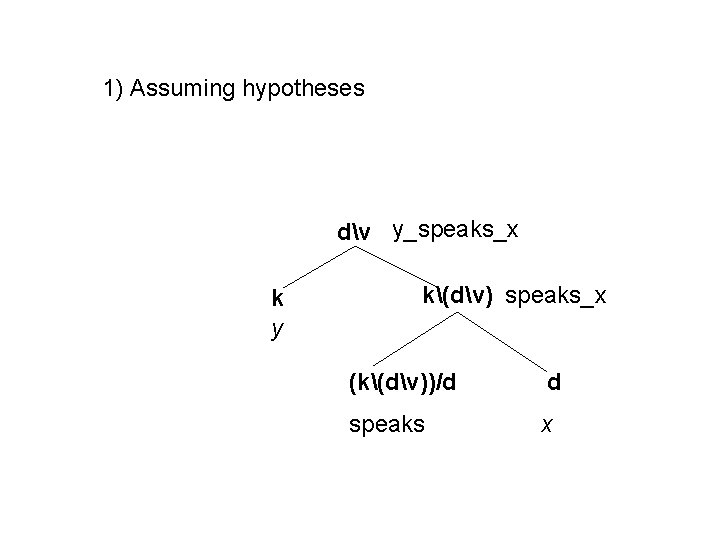

1) Assuming hypotheses (proof tree, premises indented under their conclusion)
d\v : y_speaks_x
  k : y
  k\(d\v) : speaks_x
    (k\(d\v))/d : speaks
    d : x

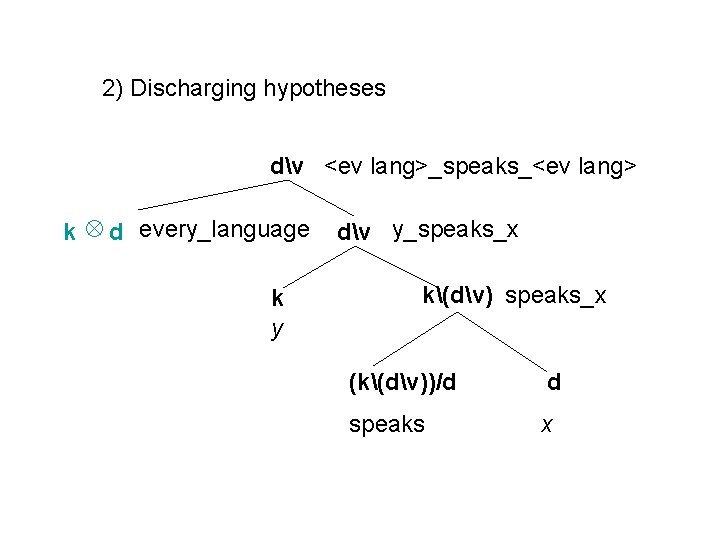

2) Discharging hypotheses (proof tree, premises indented under their conclusion)
d\v : <ev lang>_speaks_<ev lang>
  k ⊗ d : every_language
  d\v : y_speaks_x
    k : y
    k\(d\v) : speaks_x
      (k\(d\v))/d : speaks
      d : x

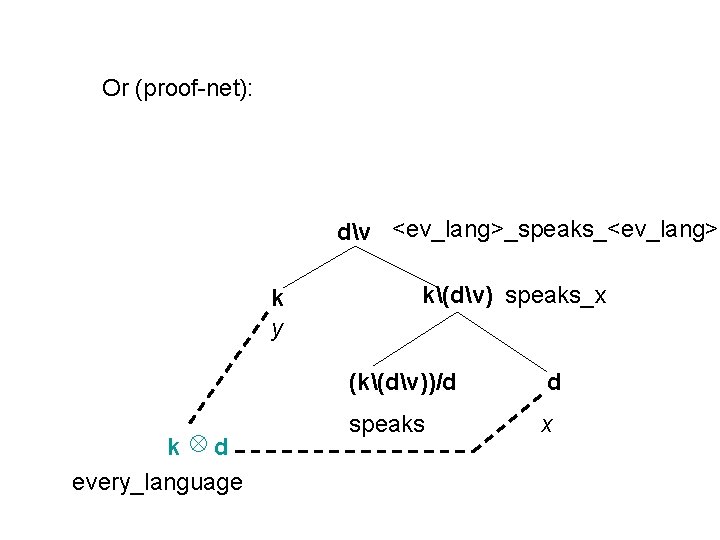

Or (proof-net), with nodes:
d\v : <ev_lang>_speaks_<ev_lang>
k : y
k ⊗ d : every_language
k\(d\v) : speaks_x
(k\(d\v))/d : speaks
d : x

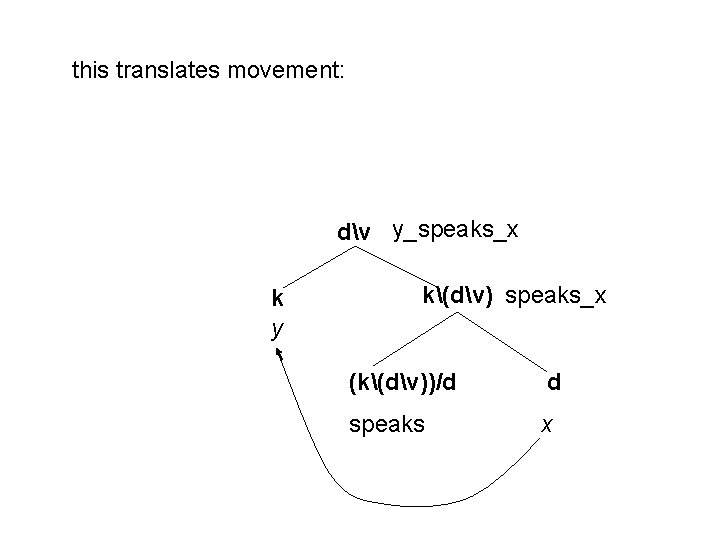

this translates movement:
d\v : y_speaks_x
k : y
k\(d\v) : speaks_x
(k\(d\v))/d : speaks
d : x

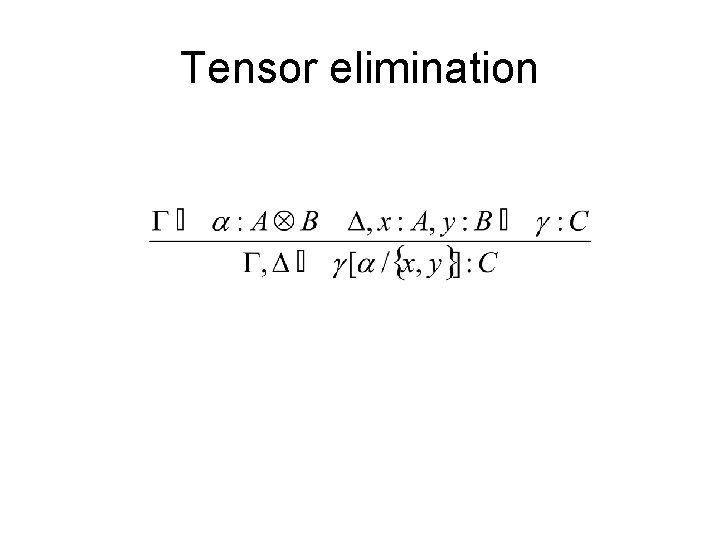

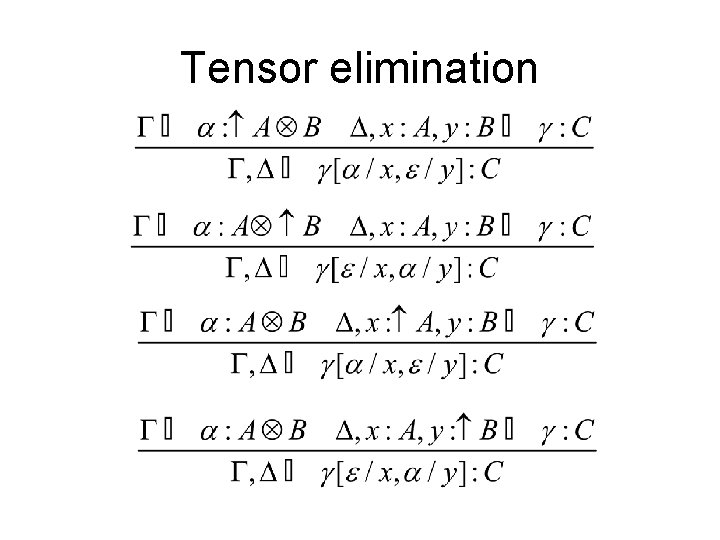

Tensor elimination

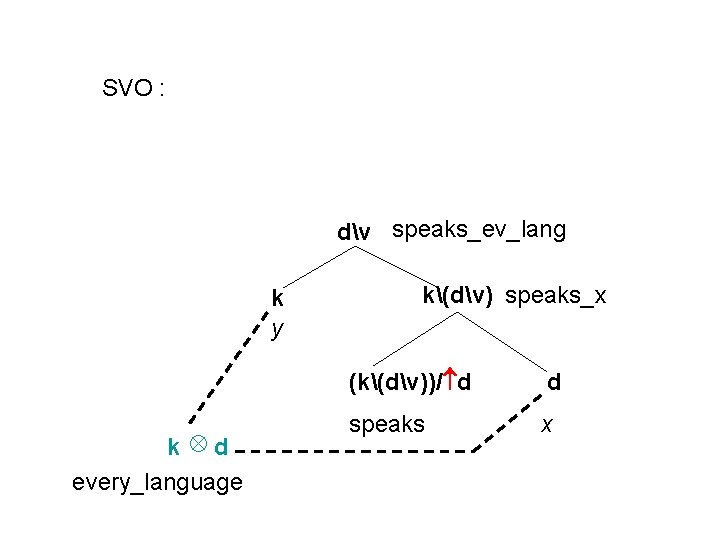

SVO:
d\v : speaks_ev_lang
k : y
k ⊗ d : every_language
k\(d\v) : speaks_x
(k\(d\v))/d : speaks
d : x

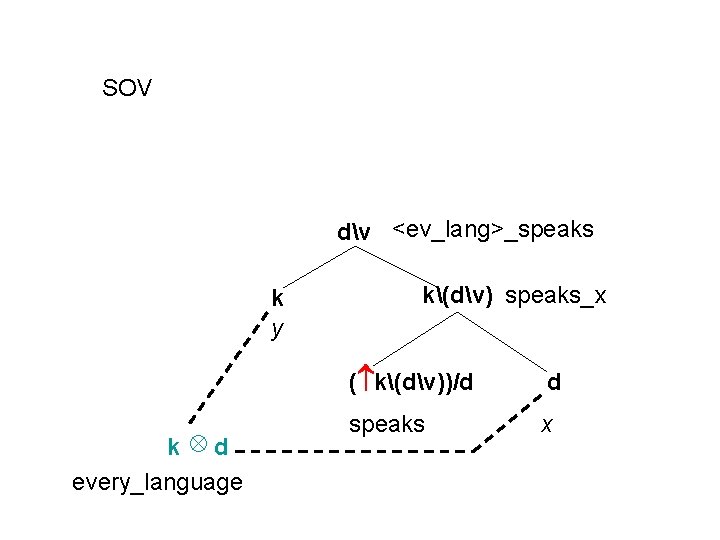

SOV:
d\v : <ev_lang>_speaks
k : y
k ⊗ d : every_language
k\(d\v) : speaks_x
(k\(d\v))/d : speaks
d : x

Tensor elimination

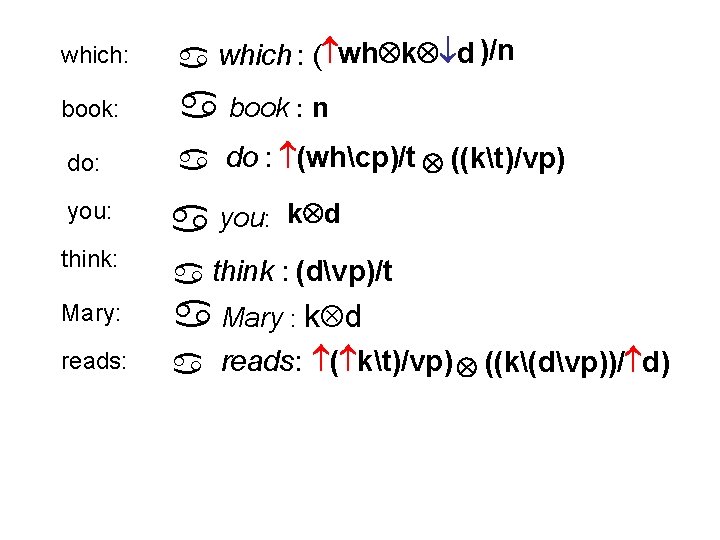

which: ⊢ which : (wh ⊗ k ⊗ d)/n
book: ⊢ book : n
do: ⊢ do : ((wh\cp)/t) ⊗ ((k\t)/vp)
you: ⊢ you : k ⊗ d
think: ⊢ think : (d\vp)/t
Mary: ⊢ Mary : k ⊗ d
reads: ⊢ reads : ((k\t)/vp) ⊗ ((k\(d\vp))/d)

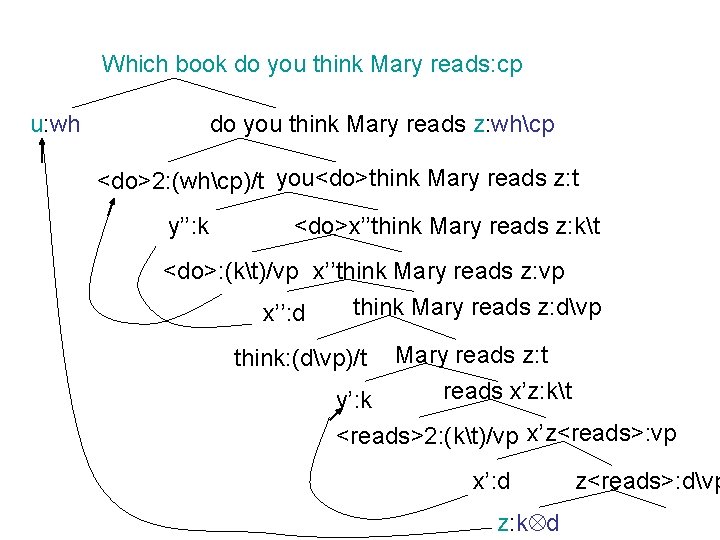

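Labelled nodes of the proof tree (embedded clause), in the order they appear in the figure:
x’’think Mary reads z : vp
x’’ : d
think Mary reads z : d\vp
Mary reads z : t
reads x’z : k\t
y’ : k
<reads>2 : (k\t)/vp
x’z<reads> : vp
think : (d\vp)/t
x’ : d
z<reads> : d\vp
z : k ⊗ d
reads : ((k\t)/vp) ⊗ ((k\(d\vp))/d)
y<reads>x : d\vp
y : k
<reads>x : k\(d\vp)
<reads> : (k\(d\vp))/d
x : d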

Labelled nodes of the proof tree (whole question), in the order they appear in the figure:
Which book do you think Mary reads : cp
u : wh
do you think Mary reads z : wh\cp
<do>2 : (wh\cp)/t
you<do>think Mary reads z : t
y’’ : k
<do>x’’think Mary reads z : k\t
<do> : (k\t)/vp
x’’think Mary reads z : vp
x’’ : d
think Mary reads z : d\vp
think : (d\vp)/t
Mary reads z : t
reads x’z : k\t
y’ : k
<reads>2 : (k\t)/vp
x’z<reads> : vp
x’ : d
z : k ⊗ d
z<reads> : d\vp

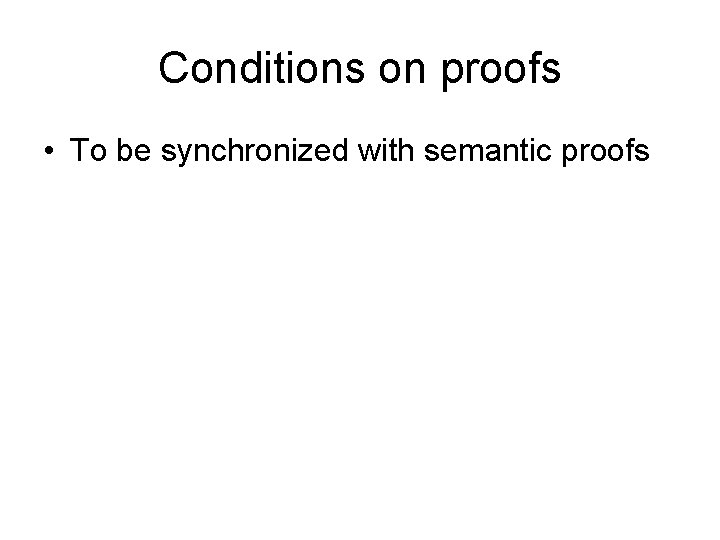

Conditions on proofs • To be synchronized with semantic proofs

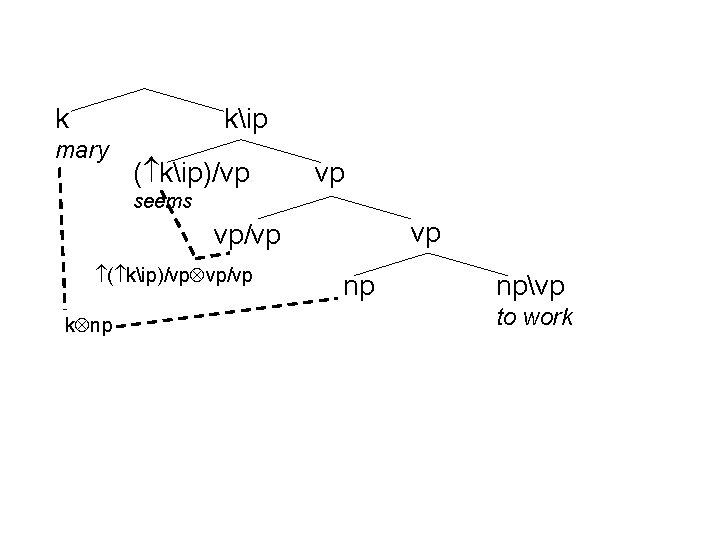

Proof net for « mary seems to work ». Labels: mary : k ⊗ np; seems : (k\ip)/vp, vp/vp; to work : np\vp. Intermediate nodes: k, k\ip, vp, np.

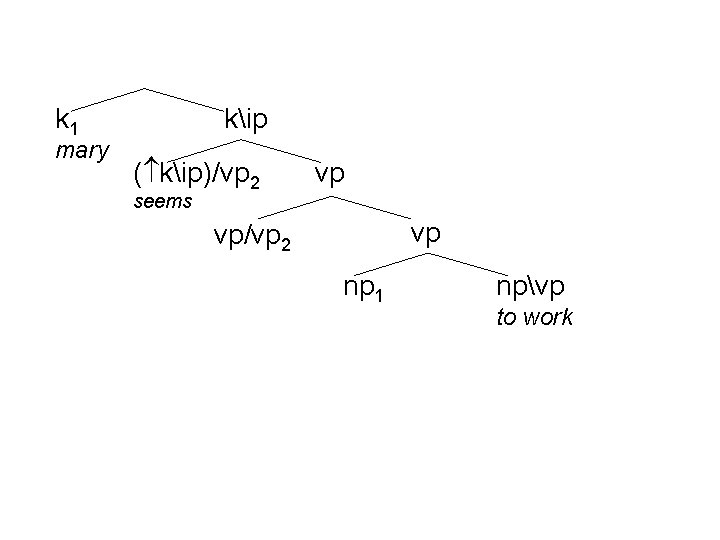

Same proof net, with the hypotheses indexed 1 and 2. Labels: mary : k; seems : (k\ip)/vp, vp/vp; to work : np\vp. Intermediate nodes: k\ip, vp, np.

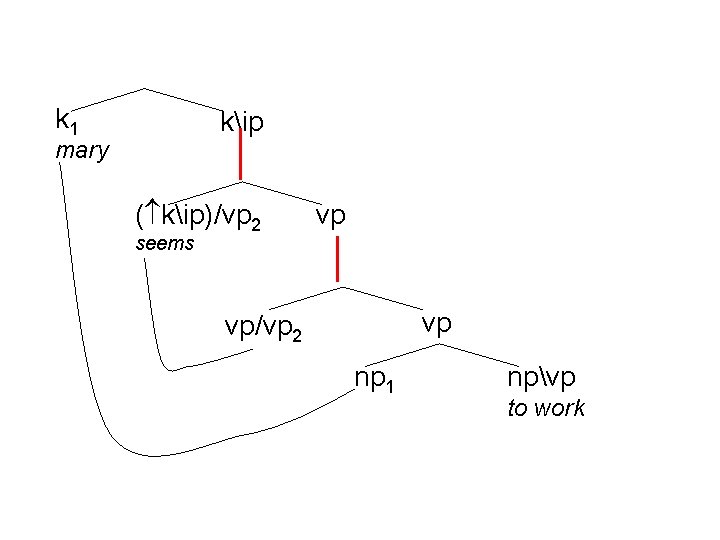

Same proof net, next step, with the hypotheses indexed 1 and 2. Labels: mary : k; seems : (k\ip)/vp, vp/vp; to work : np\vp. Intermediate nodes: k\ip, vp, np.

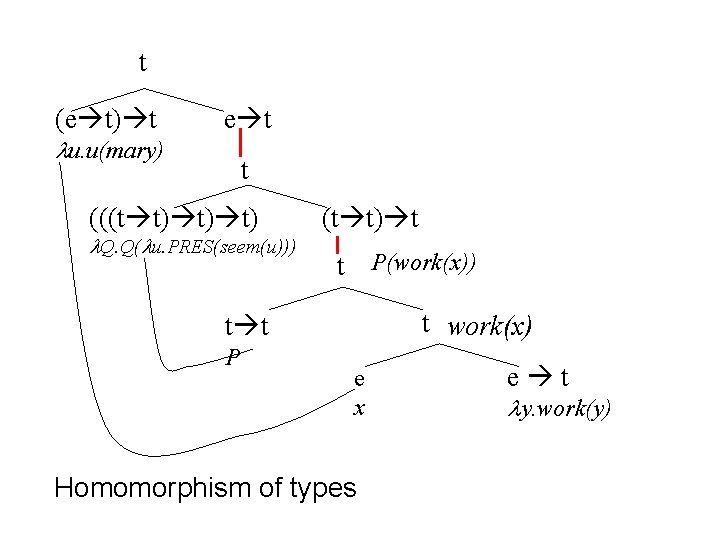

Homomorphism of types
t
(e→t)→t : λu. u(mary)
e→t
t
((t→t)→t)→t : λQ. Q(λu. PRES(seem(u)))
(t→t)→t
t : P(work(x))
t : work(x)
t→t : P
e : x
e→t : λy. work(y)

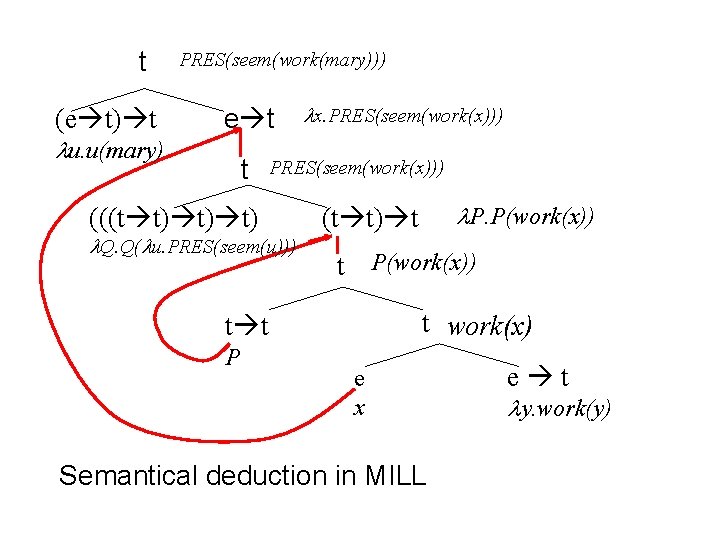

Semantic deduction in MILL
t : PRES(seem(work(mary)))
(e→t)→t : λu. u(mary)
e→t : λx. PRES(seem(work(x)))
t
((t→t)→t)→t : λQ. Q(λu. PRES(seem(u)))
(t→t)→t : λP. P(work(x))
t : P(work(x))
t : work(x)
t→t : P
e : x
e→t : λy. work(y)
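The reduction to PRES(seem(work(mary))) can be replayed with ordinary closures. This is only a sketch: terms are built as strings, the Python names are hypothetical, and the λ binders are assumed to be the symbols lost in extraction (λu, λQ, λy, λP, λx).

```python
# Lexical meanings, as read off the slide.
mary = lambda u: u("mary")                            # λu. u(mary)
seems = lambda Q: Q(lambda u: f"PRES(seem({u}))")     # λQ. Q(λu. PRES(seem(u)))
work = lambda y: f"work({y})"                         # λy. work(y)

def sentence():
    # λx. PRES(seem(work(x))): apply `seems` to λP. P(work(x)), then abstract over x.
    body = lambda x: seems(lambda P: P(work(x)))
    # Apply the subject to the abstracted body.
    return mary(body)

result = sentence()
```

Each application mirrors one step of the deduction: the hypothesis x is introduced, used under « work », and finally discharged when the subject « mary » is applied.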

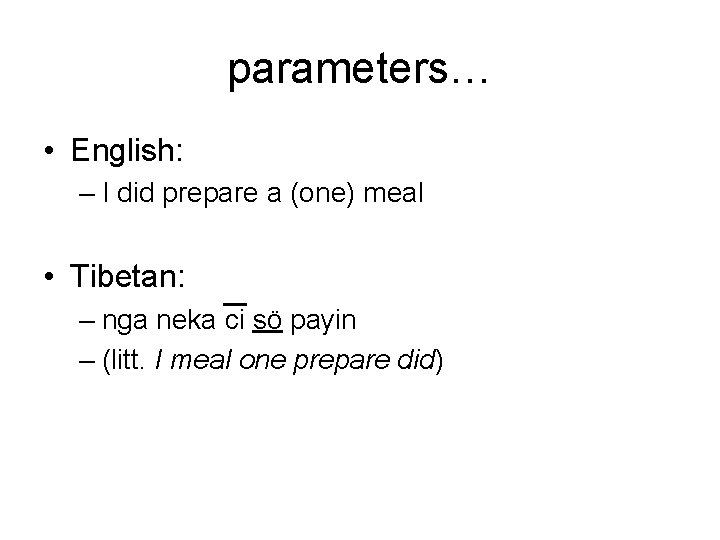

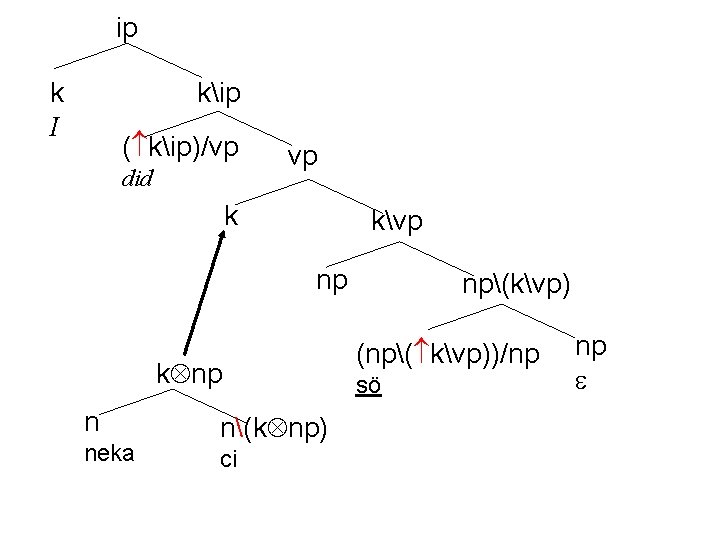

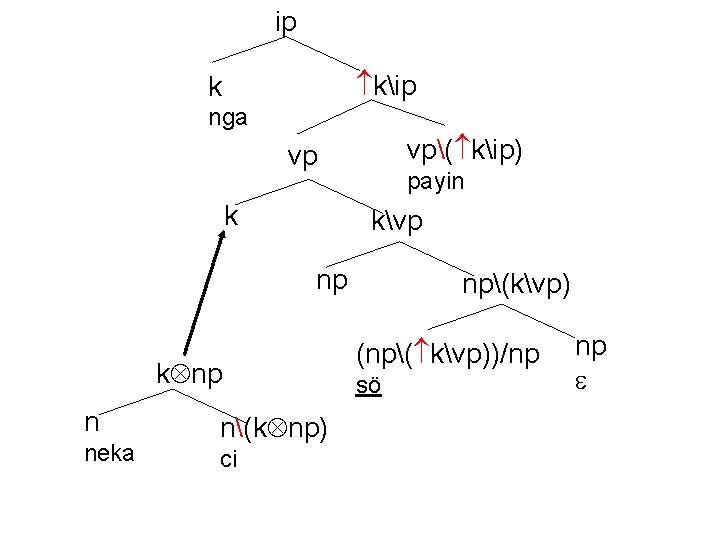

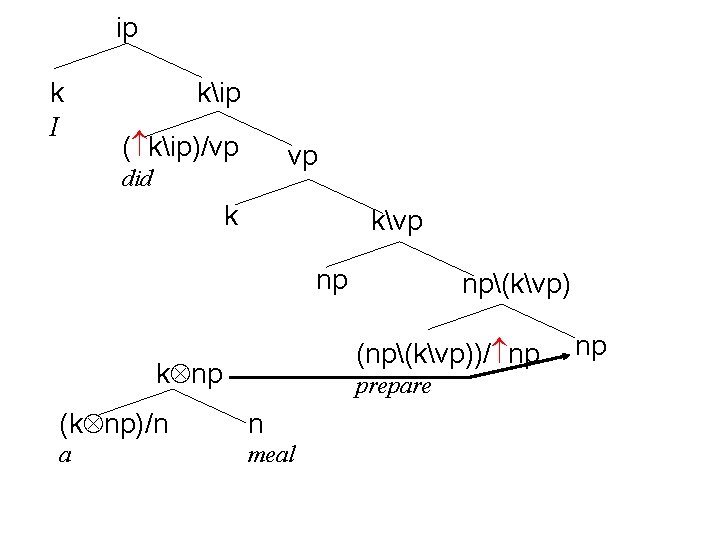

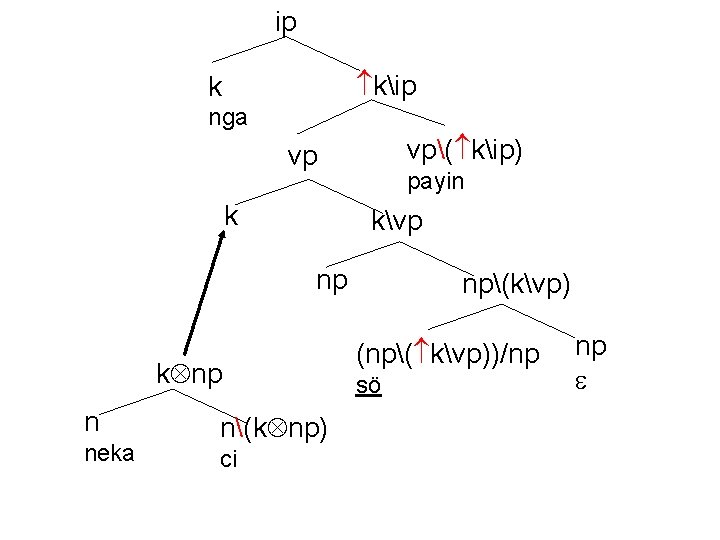

parameters…
• English:
  – I did prepare a (one) meal
• Tibetan:
  – nga neka ci sö payin
  – (lit. I meal one prepare did)

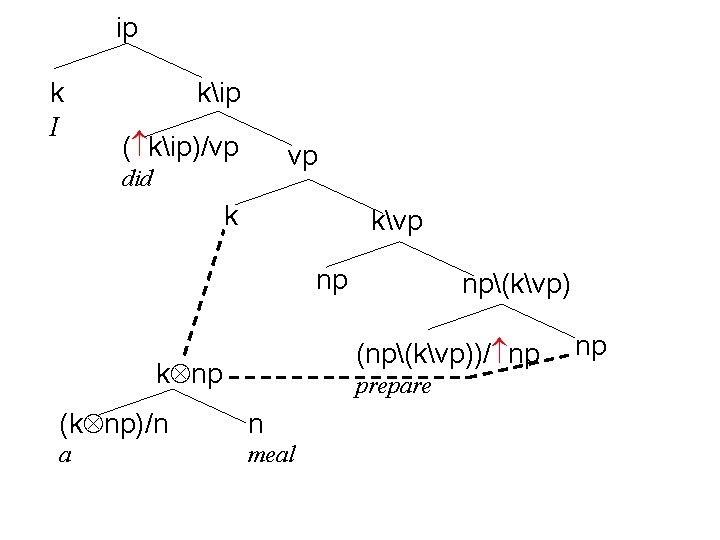

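Derivation of ip for « I did prepare a meal ». Labels: I : k; did : (k\ip)/vp; prepare : (np\(k\vp))/np; a : (k ⊗ np)/n; meal : n. Intermediate nodes: k\ip, vp, k, k\vp, np, np\(k\vp), k ⊗ np, np.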

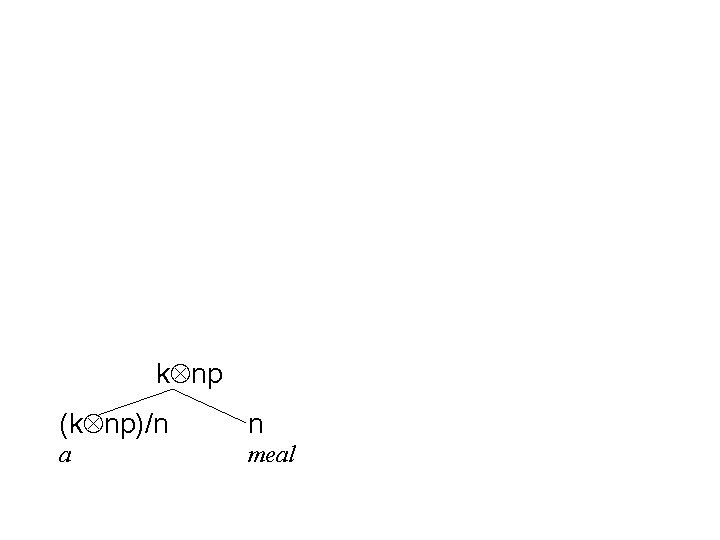

k ⊗ np, from a : (k ⊗ np)/n and meal : n

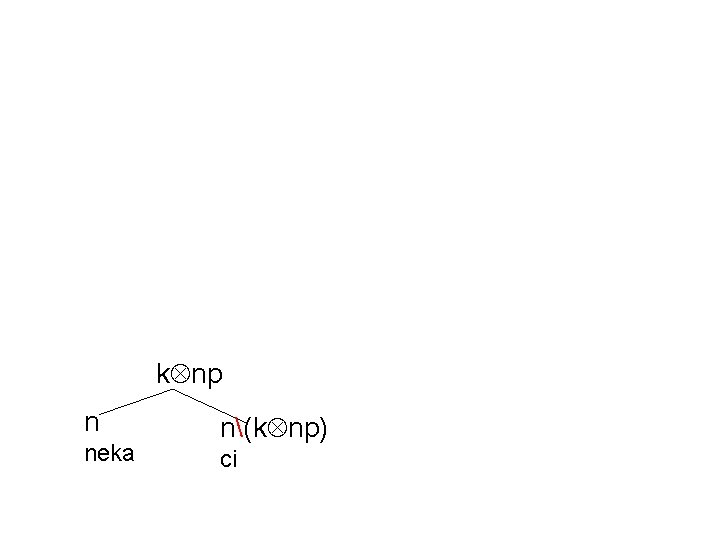

k ⊗ np, from neka : n and ci : n\(k ⊗ np)

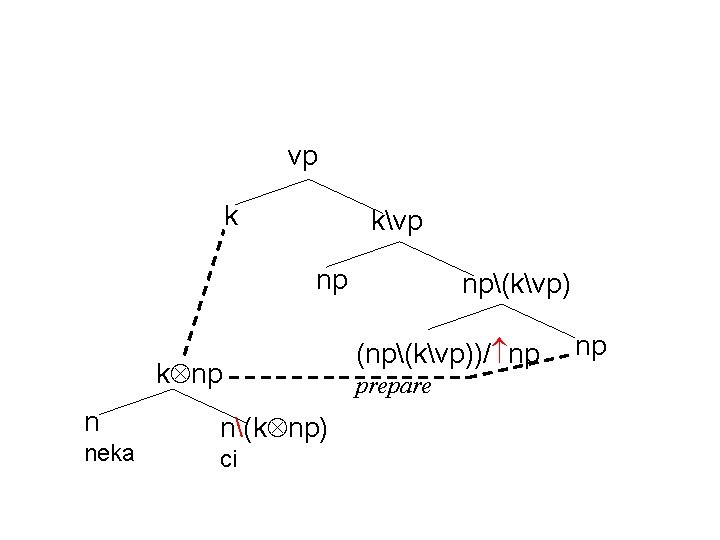

Derivation of vp. Labels: neka : n; ci : n\(k ⊗ np); prepare : (np\(k\vp))/np. Intermediate nodes: k, k\vp, np, k ⊗ np, np\(k\vp), np.

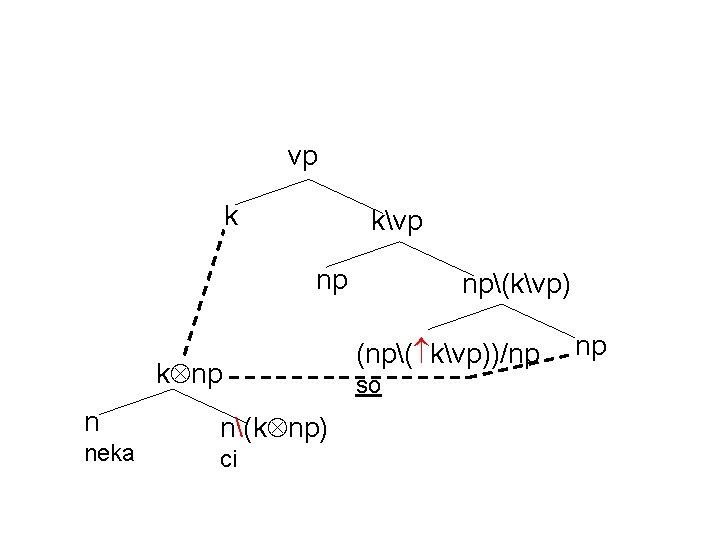

Derivation of vp. Labels: neka : n; ci : n\(k ⊗ np); sö : (np\(k\vp))/np. Intermediate nodes: k, k\vp, np, k ⊗ np, np\(k\vp), np.

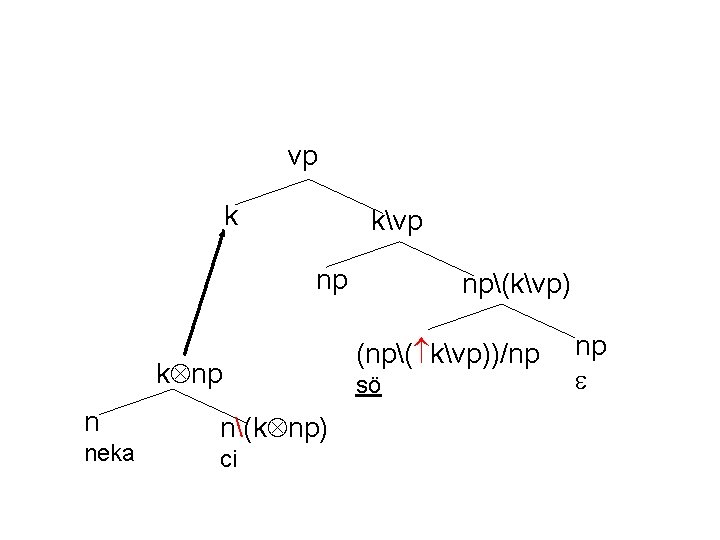


Derivation of ip. Labels: I : k; did : (k\ip)/vp; neka : n; ci : n\(k ⊗ np); sö : (np\(k\vp))/np. Intermediate nodes: k\ip, vp, k, k\vp, np, k ⊗ np, np\(k\vp), np.

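Derivation of ip for « nga neka ci sö payin ». Labels: nga : k; payin : vp\(k\ip); neka : n; ci : n\(k ⊗ np); sö : (np\(k\vp))/np. Intermediate nodes: k\ip, vp, k, k\vp, np, k ⊗ np, np\(k\vp), np.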



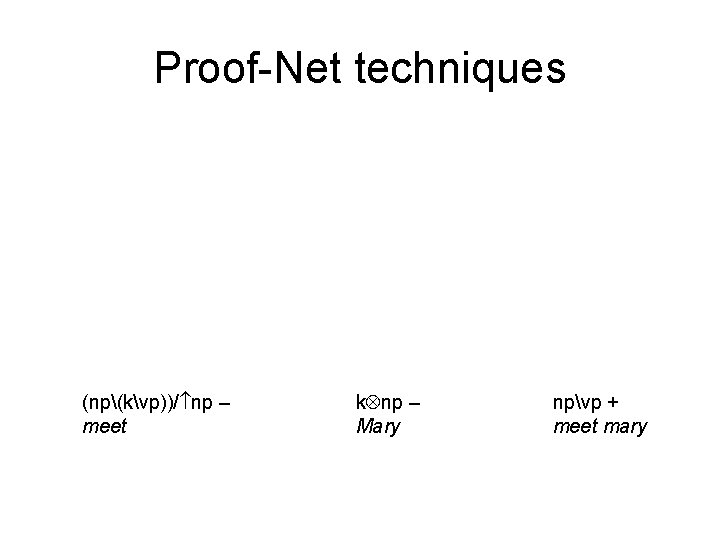

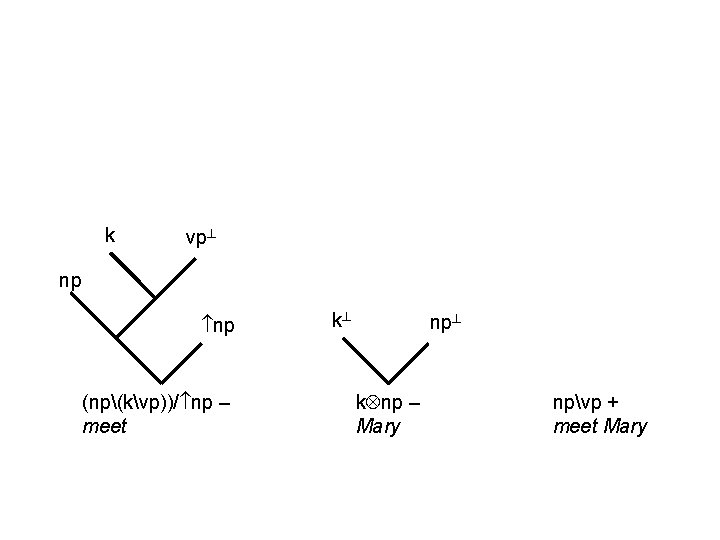

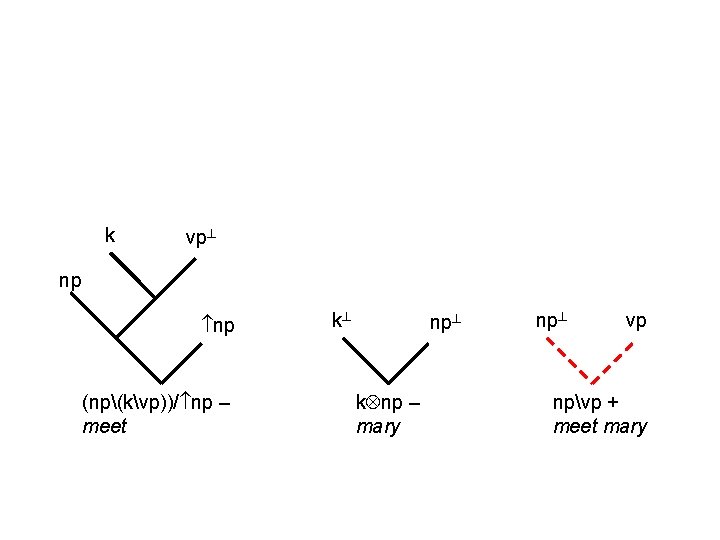

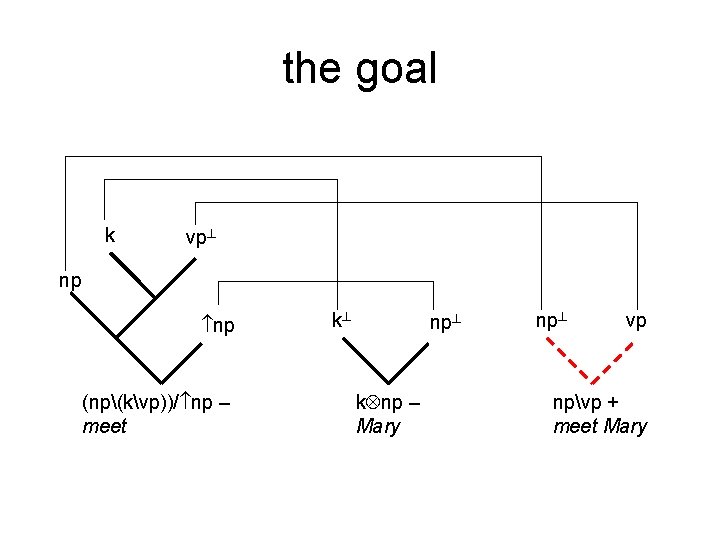

Proof-Net techniques

(np\(k\vp))/np – : meet
k ⊗ np – : Mary
np\vp + : meet Mary

Unfolding each formula into its atoms (successive steps of the same figure):
(np\(k\vp))/np – : meet, with atoms k, vp, np, np
k ⊗ np – : Mary, with atoms k, np
np\vp + : meet Mary, with atoms np, vp

the goal: the net over these three formulas and their atoms
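Before drawing axiom links, a quick count check can rule out hopeless candidates: each link joins one positive and one negative occurrence of an atom, so every atom must occur equally often with both polarities. This is a standard necessary (not sufficient) condition, not something stated on the slides. The sketch below assumes the lost connectives are the backslash and ⊗; the tuple encoding and function names are hypothetical.

```python
from collections import defaultdict

def count(formula, polarity, totals):
    """Add the signed atom occurrences of a formula to `totals`."""
    if isinstance(formula, str):              # an atom such as "np"
        totals[formula] += polarity
        return
    op, left, right = formula
    if op == "*":                             # left ⊗ right
        count(left, polarity, totals)
        count(right, polarity, totals)
    elif op == "/":                           # left / right: right is the argument
        count(left, polarity, totals)
        count(right, -polarity, totals)
    elif op == "\\":                          # left \ right: left is the argument
        count(left, -polarity, totals)
        count(right, polarity, totals)
    else:
        raise ValueError("unknown connective: " + op)

def balanced(hypotheses, conclusion):
    """True if every atom has as many + as - occurrences."""
    totals = defaultdict(int)
    for h in hypotheses:
        count(h, -1, totals)                  # hypotheses carry polarity -
    count(conclusion, +1, totals)             # the conclusion carries polarity +
    return all(v == 0 for v in totals.values())

meet = ("/", ("\\", "np", ("\\", "k", "vp")), "np")   # (np\(k\vp))/np
mary = ("*", "k", "np")                               # k ⊗ np
goal = ("\\", "np", "vp")                             # np\vp

ok = balanced([meet, mary], goal)
not_ok = balanced([meet], goal)
```

With both lexical formulas the atoms np, k and vp cancel out; dropping « Mary » leaves np and k unbalanced, so no proof net can exist for that sequent.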

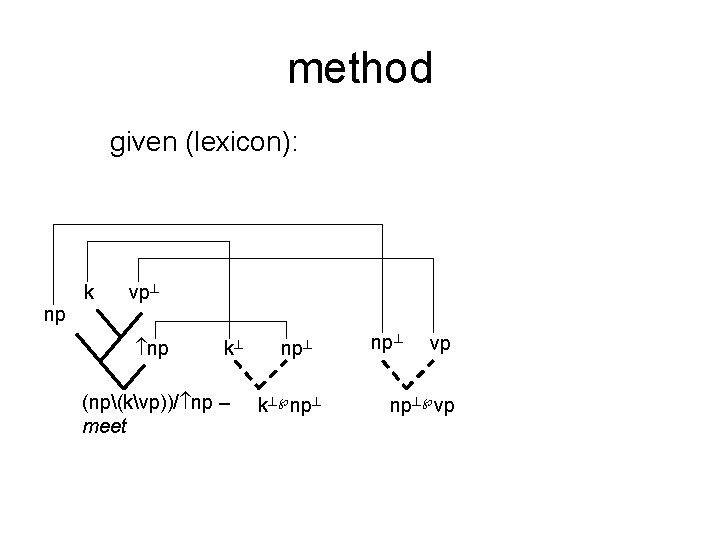

method
given (lexicon): (np\(k\vp))/np – : meet, unfolded into its atoms k, vp, np, np, alongside the formulas k ⊗ np and np\vp with their atoms

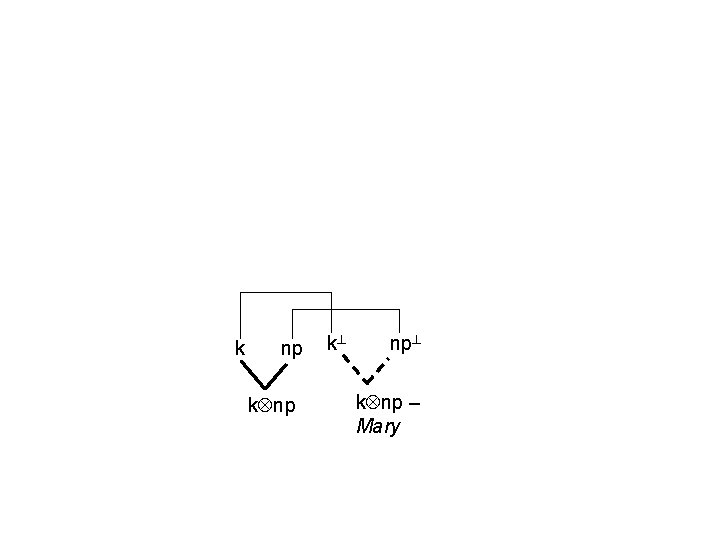

k ⊗ np – : Mary, with atoms k, np

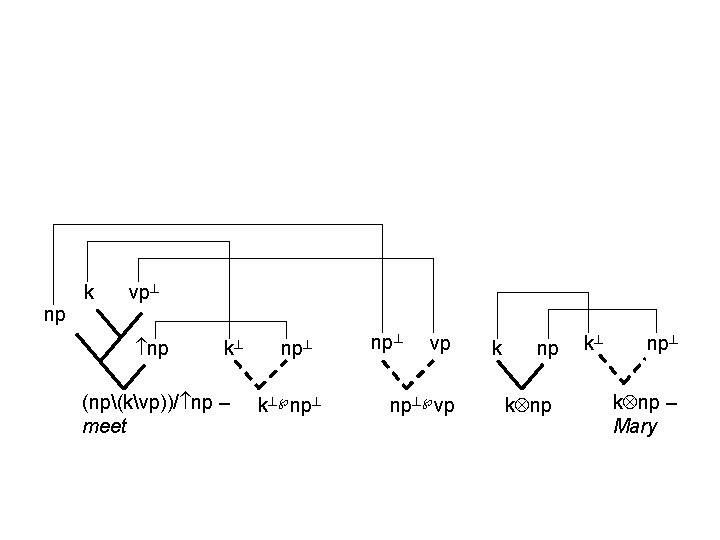

The two lexical modules side by side: (np\(k\vp))/np – : meet (with k ⊗ np and np\vp), and k ⊗ np – : Mary

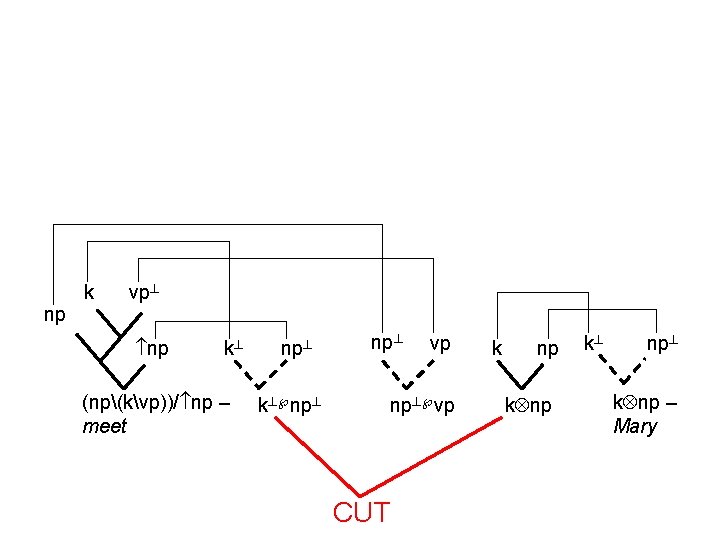

The two lexical modules joined by a CUT: (np\(k\vp))/np – : meet (with k ⊗ np and np\vp), CUT, k ⊗ np – : Mary

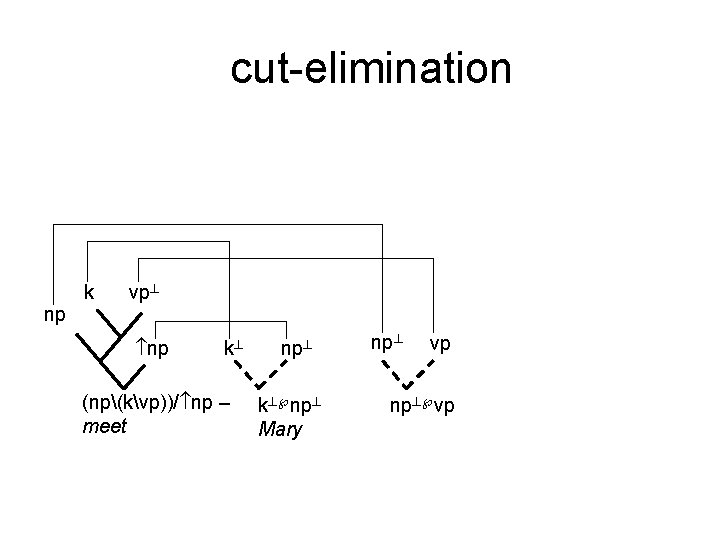

cut-elimination: (np\(k\vp))/np – : meet; k ⊗ np : Mary; np\vp; atoms k, vp, np, np, k, np, np, vp

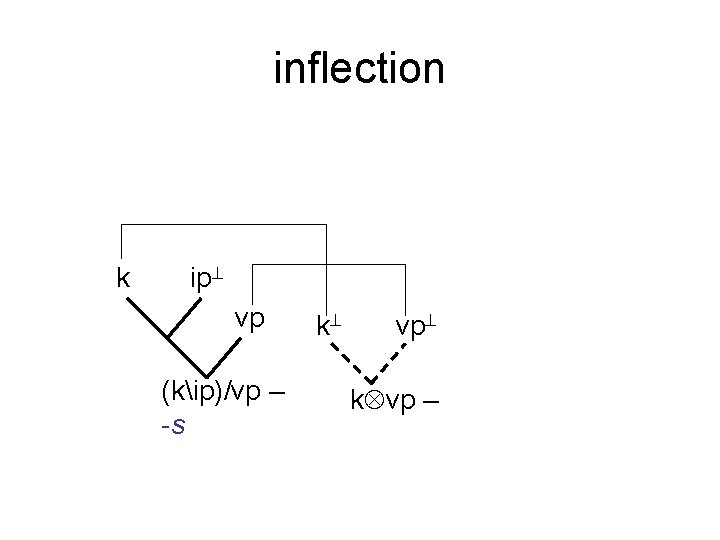

inflection: (k\ip)/vp – : -s, with atoms k, ip, vp; k\vp –, with atoms k, vp

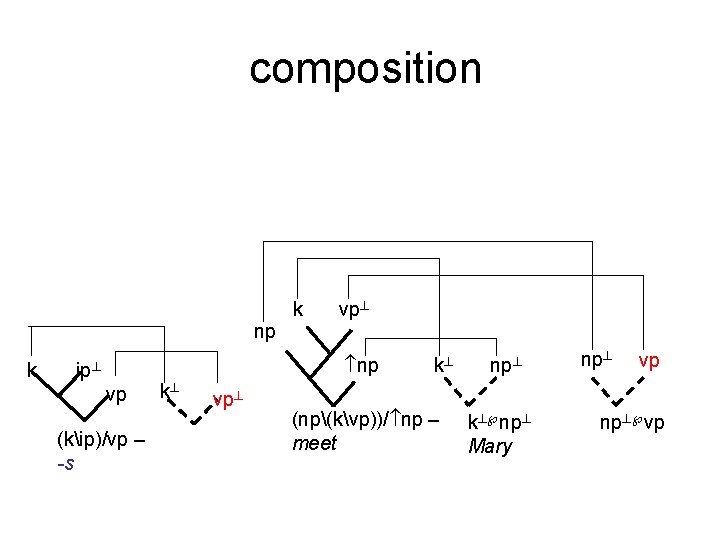

composition: the inflection module ((k\ip)/vp – : -s, with k\vp –) combined with the net for meet Mary ((np\(k\vp))/np – : meet; k ⊗ np : Mary; np\vp)

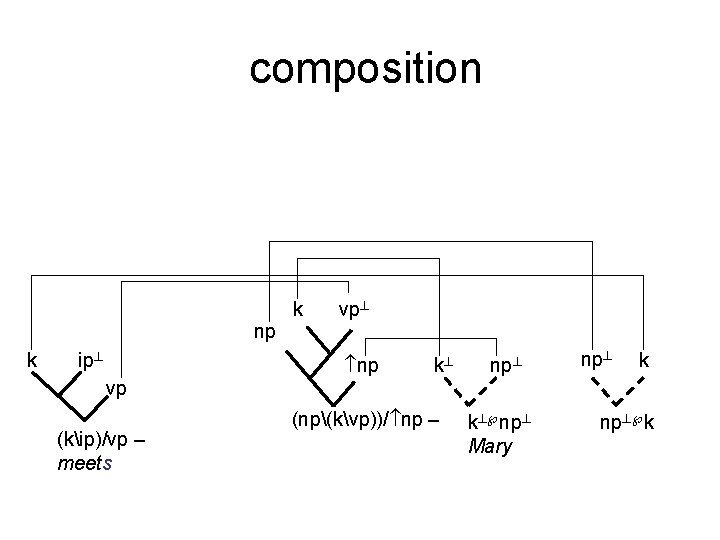

composition (result): (k\ip)/vp – and (np\(k\vp))/np – : meets; k ⊗ np : Mary; atoms k, vp, np, k, ip, np, k, np, k, vp

- Slides: 61