Extending an InfiniBand Fabric around the world

Yves Poppe, A*STAR Computational Resource Centre, Singapore. ISGC 2015, Taipei, Taiwan, 15-20 March 2015

Please, maximize my effective throughput

Connect HPC resources at Fusionopolis with the storage and genomics pipeline in the Biopolis Matrix Building.

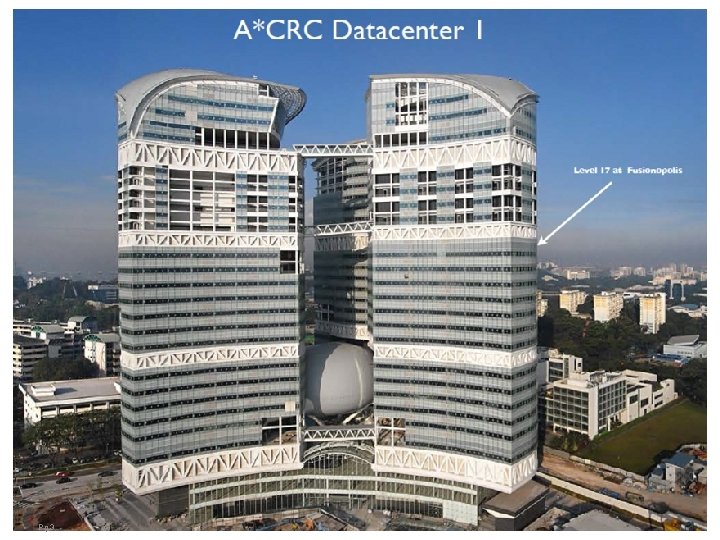

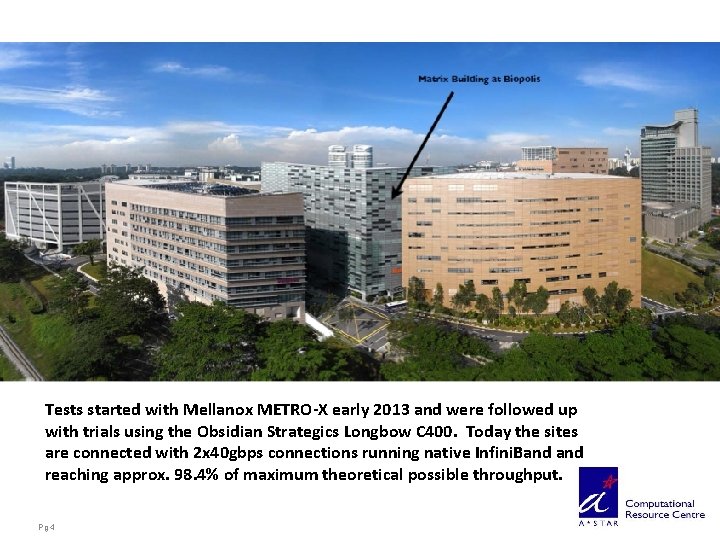

Tests started with the Mellanox MetroX in early 2013 and were followed by trials using the Obsidian Strategics Longbow C400. Today the sites are connected by 2 x 40 Gbps links running native InfiniBand, reaching approximately 98.4% of the maximum theoretical throughput.
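As a rough check of what that percentage means in absolute terms, the sketch below multiplies it out. It assumes the baseline is the nominal 40 Gbps line rate per link, which the slide does not state; if the baseline were the lower post-encoding data rate, the absolute figures would shrink accordingly. The function and constant names are illustrative only.

```python
# Assumption: "maximum theoretical throughput" means the nominal 40 Gbps
# line rate per link. The slide does not say which baseline was used.
LINKS = 2
NOMINAL_GBPS_PER_LINK = 40.0
EFFICIENCY = 0.984  # 98.4% as quoted on the slide


def achieved_gbps(links, nominal_gbps, efficiency):
    """Aggregate throughput for a number of identical links."""
    return links * nominal_gbps * efficiency


total = achieved_gbps(LINKS, NOMINAL_GBPS_PER_LINK, EFFICIENCY)
print(f"{total:.2f} Gbps aggregate, {total / LINKS:.2f} Gbps per link")
```

Under that assumption the two links together would carry a little under 79 Gbps of the nominal 80 Gbps.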

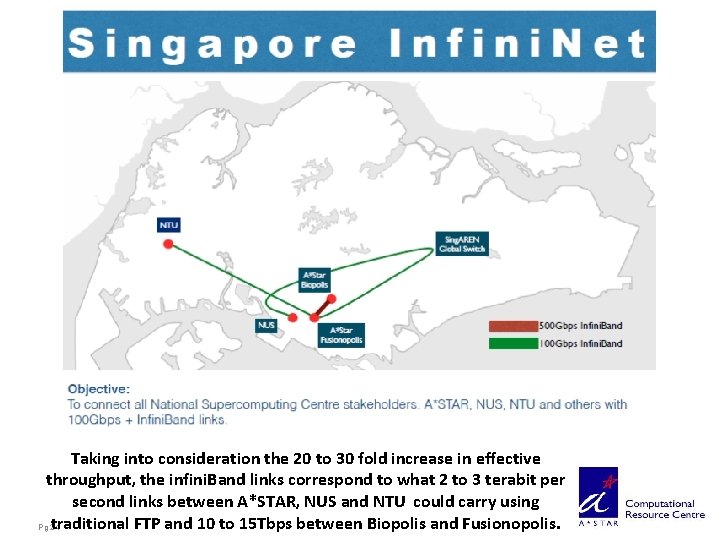

The big picture: NSCC, Singapore’s National Supercomputer Centre

• Funding (MTI) and co-funding (A*STAR, NUS, NTU) approved in November 2014
• NSCC
  – Calls for a new 1-3+ PetaFLOP supercomputer
  – Recurrent investment every 3 to 5 years
  – Pooling high tier compute resources at A*STAR and IHLs
  – Co-investment from primary stakeholders
• Science, Technology and Research Network (STAR-N)
  – High bandwidth network to connect distributed compute resources
  – Provides high speed access to users, both public and private, anywhere
  – Supports transfer of large data sets both locally and internationally
  – Builds local and international connectivity

NSCC and STAR-N timetables

• NSCC
  – Tender opens on 20 January 2015
  – Tender closes on 14 April 2015
  – Facility opens to users: October 2015
• STAR-N
  – Singapore InfiniNet connecting A*STAR, NUS and NTU
  – International access point to InfiniNet at Global Switch
  – Partner negotiations with Europe, Japan and the USA in collaboration with SingAREN
  – Timetables to coincide with NSCC readiness
  – Major intercontinental demonstration planned at SC15 in November 2015 in Austin, TX

The Ultimate InfiniBand Jailbreak

• HPCs and InfiniBand were suffocating within the data centre walls.
• Range extenders like the Mellanox MetroX gave InfiniBand, and consequently HPCs and the data centres themselves, more breathing room and ways to expand at metro level.
• Obsidian Strategics took the final step: it took the data centre walls away completely. InfiniBand connections can cross continents and circle the globe.
• The ultimate step: BGFC makes InfiniBand routable and opens the possibility to permeate the globe, giving rise to an InfiniCortex.

Internet gave us classrooms without walls. InfiniCortex will give us supercomputing without walls.

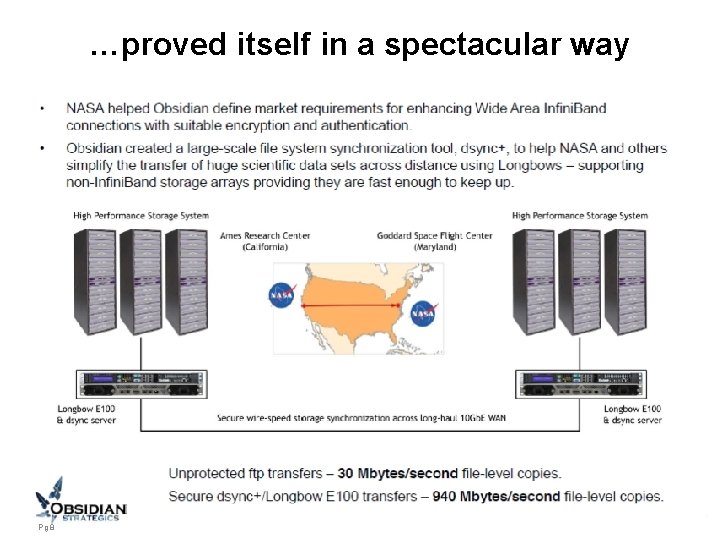

…proved itself in a spectacular way

A*STAR’s vision: InfiniCortex, a Supercomputer of Supercomputers

Professor Tan Tin Wee and Dr. Marek Michalewicz proposed to demonstrate something totally new, never done before: very high speed transcontinental transmission of native long-distance InfiniBand between High Performance Computing (HPC) centres continents apart, having them operate as one. The aim is to tackle the biggest computational challenges and to open a possible avenue to exascale supercomputing, where the most vexing problem is power and heat generation.

This is not cloud computing, this is not Grid.

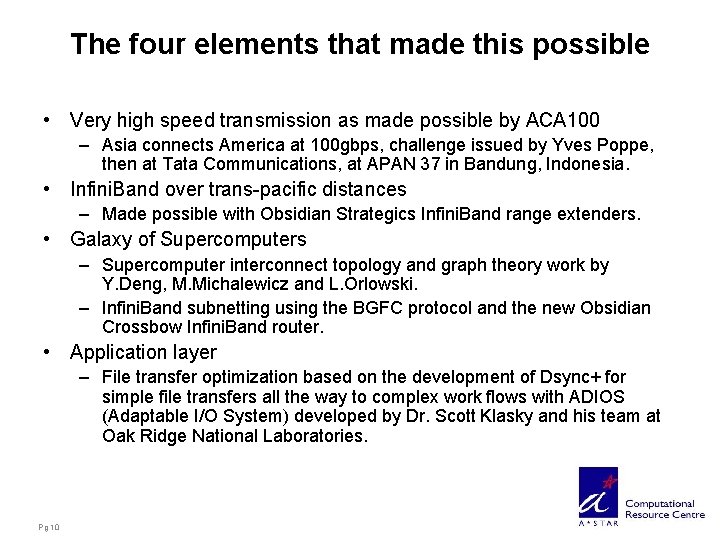

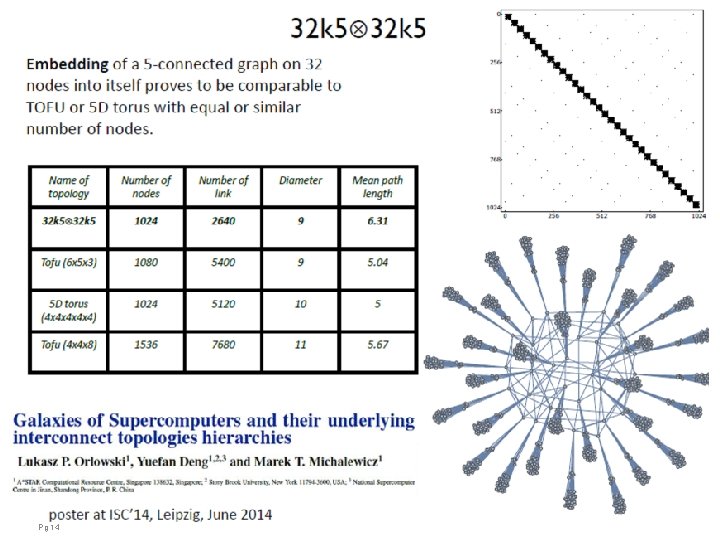

The four elements that made this possible

• Very high speed transmission
  – Made possible by ACA 100 (Asia Connects America at 100 Gbps), a challenge issued by Yves Poppe, then at Tata Communications, at APAN 37 in Bandung, Indonesia.
• InfiniBand over trans-Pacific distances
  – Made possible with Obsidian Strategics InfiniBand range extenders.
• Galaxy of Supercomputers
  – Supercomputer interconnect topology and graph theory work by Y. Deng, M. Michalewicz and L. Orlowski.
  – InfiniBand subnetting using the BGFC protocol and the new Obsidian Crossbow InfiniBand router.
• Application layer
  – File transfer optimization based on the development of Dsync+, from simple file transfers all the way to complex workflows with ADIOS (Adaptable I/O System), developed by Dr. Scott Klasky and his team at Oak Ridge National Laboratory.

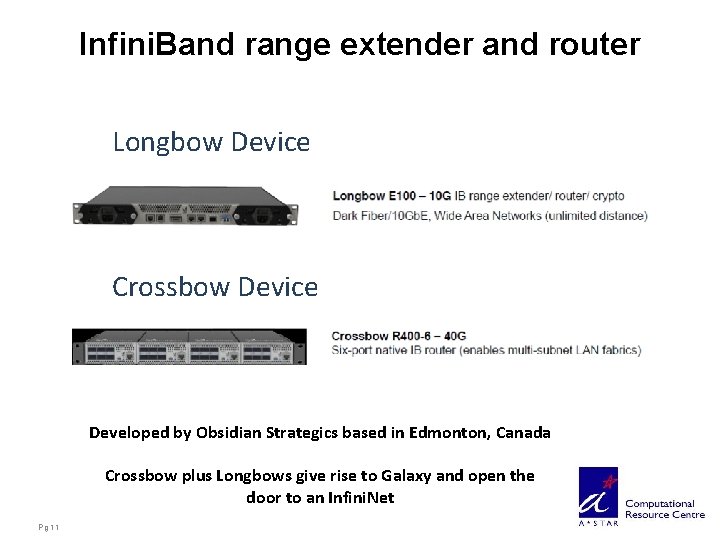

InfiniBand range extender and router

[Device photos: Longbow device, Crossbow device]

Developed by Obsidian Strategics, based in Edmonton, Canada. Crossbow plus Longbows give rise to Galaxy and open the door to an InfiniNet.

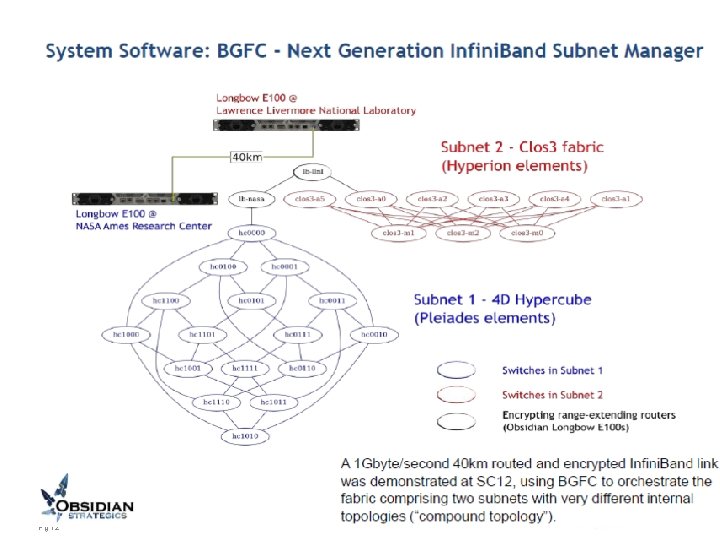

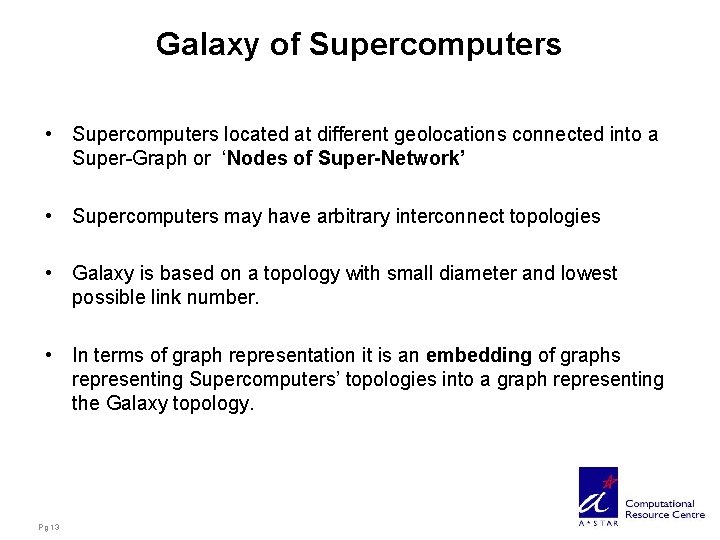

Galaxy of Supercomputers

• Supercomputers located at different geolocations are connected into a Super-Graph, or ‘Nodes of a Super-Network’.
• The supercomputers may have arbitrary interconnect topologies.
• Galaxy is based on a topology with a small diameter and the lowest possible number of links.
• In terms of graph representation, it is an embedding of the graphs representing the supercomputers’ topologies into a graph representing the Galaxy topology.
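The trade-off between diameter and link count can be made concrete with a small sketch. It does not reproduce the Galaxy topology itself; it only compares three textbook topologies over six hypothetical sites (the site names and the `diameter` helper are assumptions made for illustration), measuring diameter as the longest shortest path in hops.

```python
from collections import deque
from itertools import combinations


def diameter(nodes, links):
    """Longest shortest path (in hops) between any two nodes, via BFS."""
    adj = {n: set() for n in nodes}
    for a, b in links:
        adj[a].add(b)
        adj[b].add(a)
    worst = 0
    for start in nodes:
        dist = {start: 0}
        queue = deque([start])
        while queue:
            cur = queue.popleft()
            for nxt in adj[cur]:
                if nxt not in dist:
                    dist[nxt] = dist[cur] + 1
                    queue.append(nxt)
        if len(dist) != len(nodes):
            raise ValueError("graph is not connected")
        worst = max(worst, max(dist.values()))
    return worst


# Hypothetical sites, for illustration only.
sites = ["A", "B", "C", "D", "E", "F"]
n = len(sites)
ring = [(sites[i], sites[(i + 1) % n]) for i in range(n)]
star = [(sites[0], s) for s in sites[1:]]
mesh = list(combinations(sites, 2))

for name, links in [("ring", ring), ("star", star), ("full mesh", mesh)]:
    print(f"{name:9s} links={len(links):2d} diameter={diameter(sites, links)}")
```

A full mesh minimises diameter at the cost of many long-haul links, while a ring or star keeps the link count low but lengthens paths or concentrates traffic on one hub. A topology chosen for small diameter with few links sits between these extremes.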

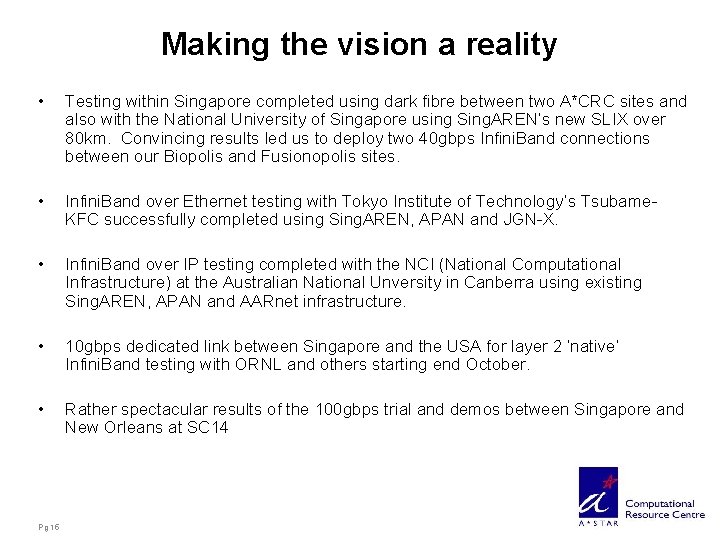

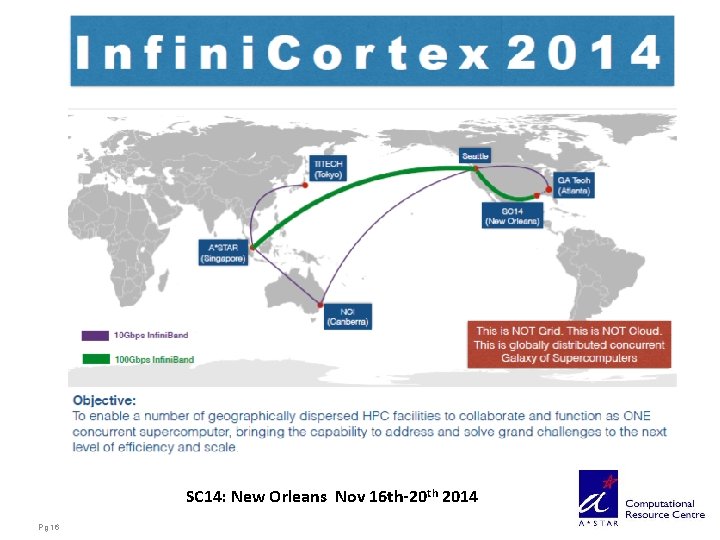

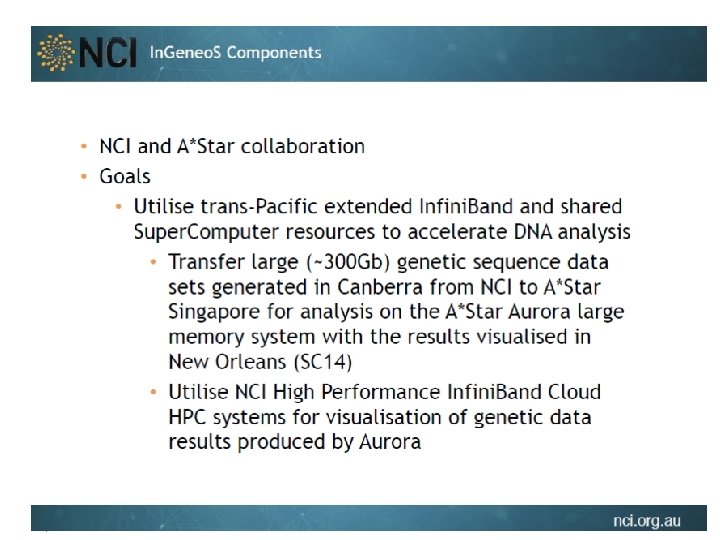

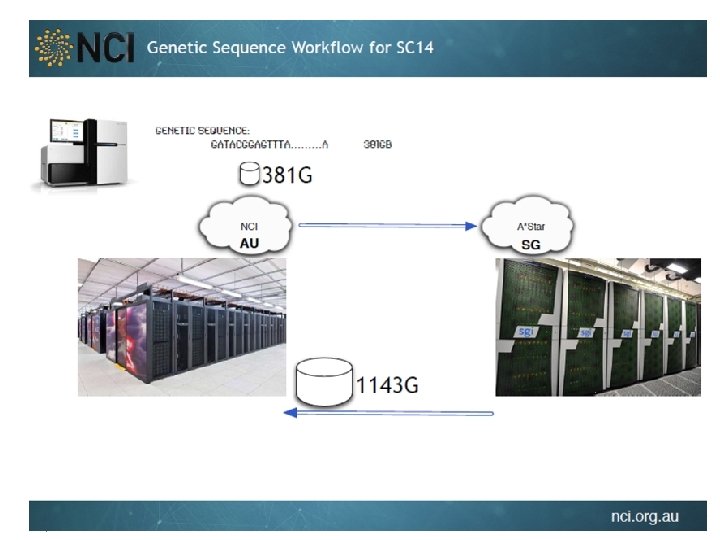

Making the vision a reality

• Testing within Singapore completed using dark fibre between two A*CRC sites, and also with the National University of Singapore using SingAREN’s new SLIX over 80 km. Convincing results led us to deploy two 40 Gbps InfiniBand connections between our Biopolis and Fusionopolis sites.
• InfiniBand over Ethernet testing with Tokyo Institute of Technology’s TSUBAME-KFC successfully completed using SingAREN, APAN and JGN-X.
• InfiniBand over IP testing completed with the NCI (National Computational Infrastructure) at the Australian National University in Canberra, using existing SingAREN, APAN and AARNet infrastructure.
• 10 Gbps dedicated link between Singapore and the USA for layer 2 ‘native’ InfiniBand testing with ORNL and others, starting at the end of October.
• Rather spectacular results from the 100 Gbps trial and demos between Singapore and New Orleans at SC14.

SC14: New Orleans, 16-20 November 2014

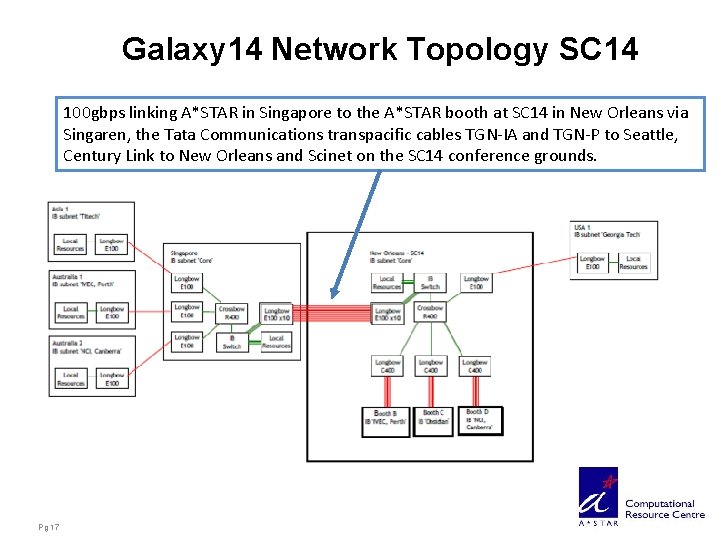

Galaxy 14 Network Topology, SC14

A 100 Gbps link connected A*STAR in Singapore to the A*STAR booth at SC14 in New Orleans via SingAREN, the Tata Communications trans-Pacific cables TGN-IA and TGN-P to Seattle, CenturyLink to New Orleans, and SCinet on the SC14 conference grounds.

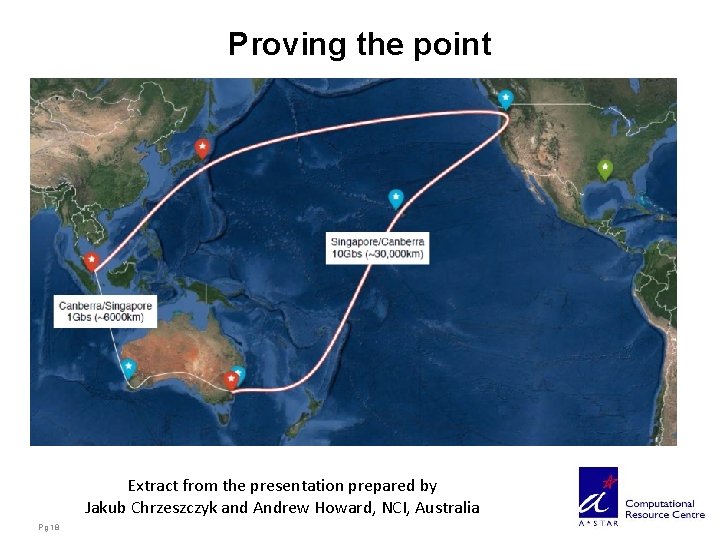

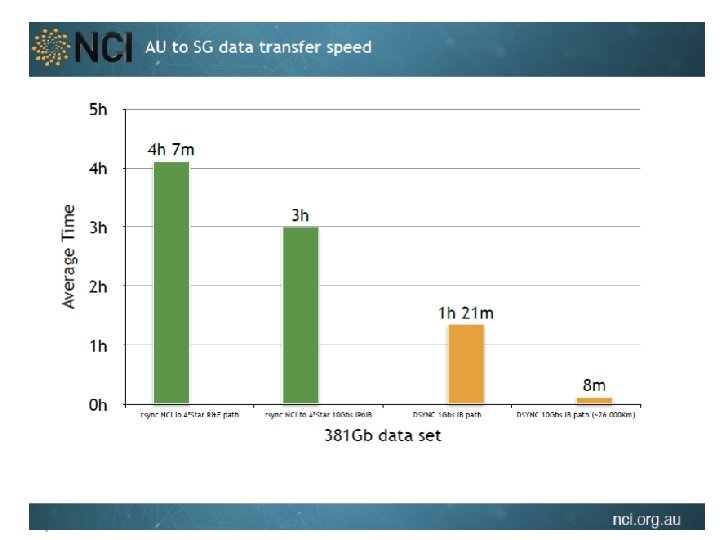

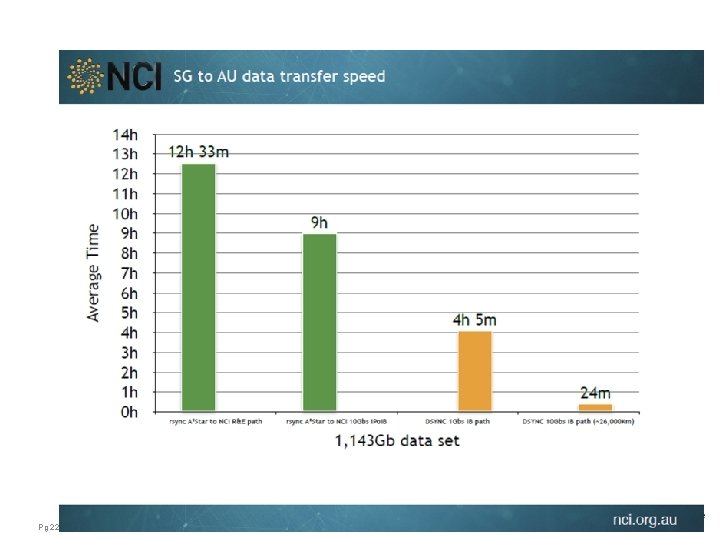

Proving the point

Extract from the presentation prepared by Jakub Chrzeszczyk and Andrew Howard, NCI, Australia.

The power of Collaboration

Taking into consideration the 20 to 30 fold increase in effective throughput, the InfiniBand links correspond to what 2 to 3 Tbps links between A*STAR, NUS and NTU, and 10 to 15 Tbps links between Biopolis and Fusionopolis, could carry using traditional FTP.
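The arithmetic behind these equivalences can be sketched as follows. The slide gives only the speed-up range and the FTP-equivalent capacities, so the native link rates printed below are inferred by dividing one by the other and are not stated in the deck. Pairing the low ends with each other and the high ends with each other is also an assumption, as is the helper name.

```python
def implied_native_gbps(equivalent_tbps, speedup):
    """Native link rate (Gbps) implied by an FTP-equivalent capacity (Tbps)."""
    return equivalent_tbps * 1000.0 / speedup


# (low, high) pairs quoted on the slide
SPEEDUP = (20, 30)
CAMPUS_EQUIV_TBPS = (2, 3)    # A*STAR, NUS and NTU
METRO_EQUIV_TBPS = (10, 15)   # Biopolis and Fusionopolis

for label, equiv in [("A*STAR-NUS-NTU", CAMPUS_EQUIV_TBPS),
                     ("Biopolis-Fusionopolis", METRO_EQUIV_TBPS)]:
    low = implied_native_gbps(equiv[0], SPEEDUP[0])
    high = implied_native_gbps(equiv[1], SPEEDUP[1])
    print(f"{label}: implied native rate {low:.0f} to {high:.0f} Gbps")
```

Read the other way round, the same relation says that a native link of rate R with a speed-up factor k does the work of an FTP link of rate k x R.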

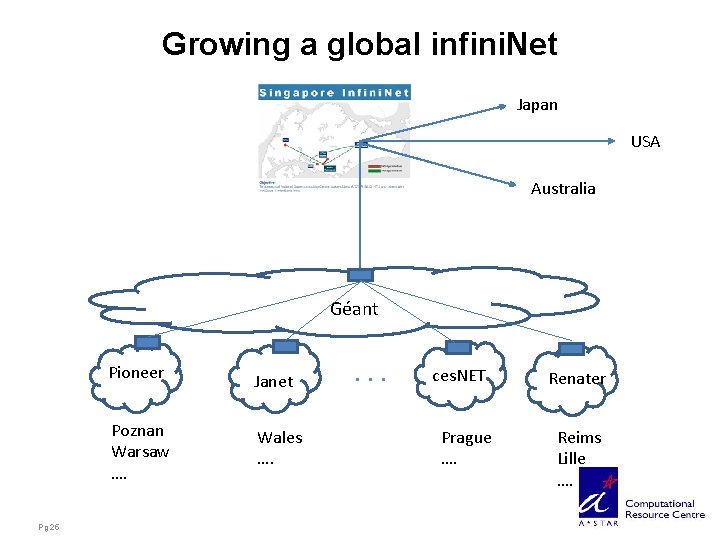

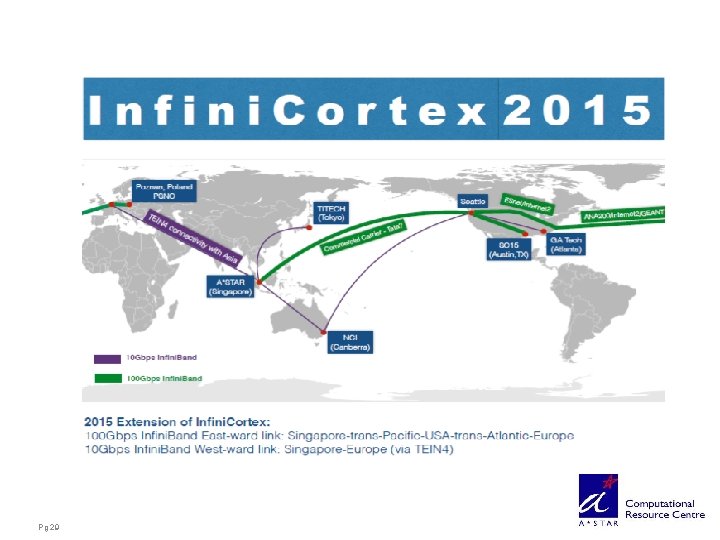

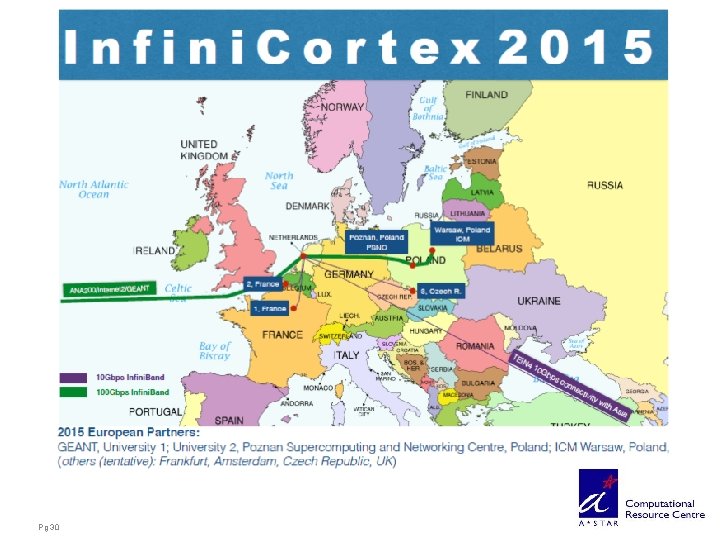

Growing a global InfiniNet

[Map labels: Japan; USA; Australia; Géant; Pionier (Poznan, Warsaw, …); Janet (Wales, …); CESNET (Prague, …); Renater (Reims, Lille, …)]

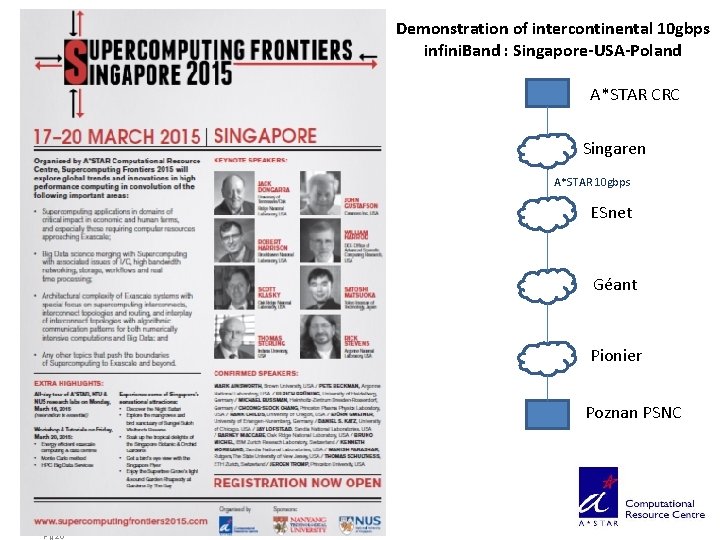

Demonstration of intercontinental 10 Gbps InfiniBand: Singapore-USA-Poland

[Diagram labels: A*STAR CRC, SingAREN, A*STAR 10 Gbps, ESnet, Géant, Pionier, Poznan PSNC]

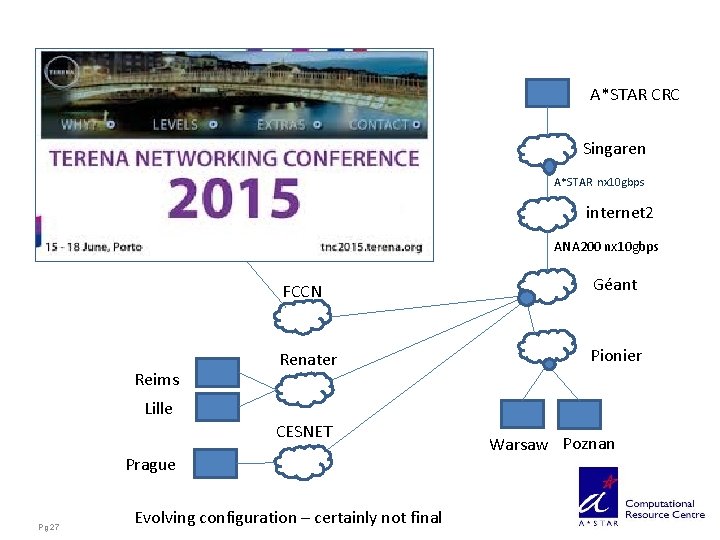

Evolving configuration, certainly not final

[Diagram labels: A*STAR CRC, SingAREN, A*STAR n x 10 Gbps, Internet2, ANA-200, n x 10 Gbps, FCCN, Géant, Renater (Reims, Lille), Pionier (Warsaw, Poznan), CESNET (Prague)]

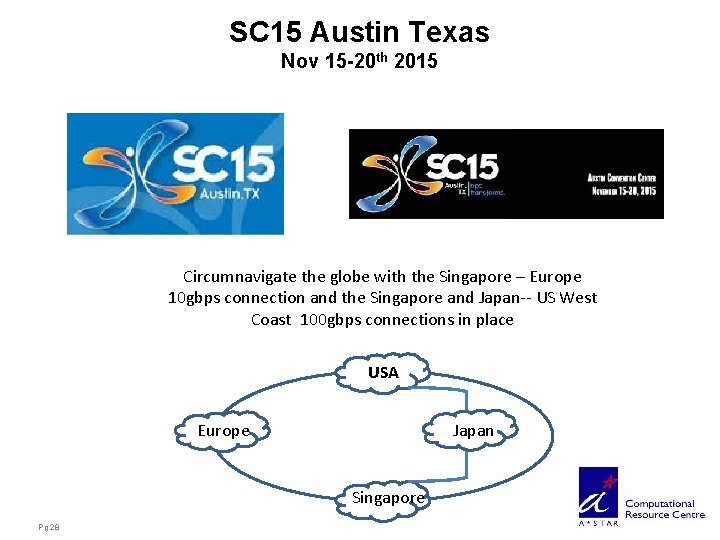

SC15: Austin, Texas, 15-20 November 2015

Circumnavigate the globe with the Singapore-Europe 10 Gbps connection and the Singapore and Japan to US West Coast 100 Gbps connections in place.

[Map labels: USA, Japan, Europe, Singapore]

Long Distance InfiniBand: a game changer for R&E networking

Over the past year the long-distance InfiniBand approach to global collaborative HPC was presented to the global NREN community, including TERENA, APAN, GLIF, Géant, ASREN and Internet2.

Long-distance InfiniBand was successfully tested within Singapore and with Japan, Australia and the USA, in collaboration with our NREN partners, culminating in a 100 Gbps trans-Pacific demonstration at SC14 which produced spectacular results.

Close collaboration with the HPC community gives the NRENs a unique opportunity to regain the lead in innovation and to clearly differentiate themselves from commercial network service offerings.

Long Distance InfiniBand: a game changer for HPC

In Singapore, A*STAR CRC, in collaboration with SingAREN, is deploying an InfiniNet to cater to the needs of the new NSCC. High speed InfiniBand connectivity to Europe, Japan, Australia and the USA is required to connect with participating overseas HPC partners.

The HPC community globally is faced with an unabated exponential growth in the data it generates. Current NREN internetworking capacity is already insufficient just to meet the anticipated needs of genomics data interchange.

The next frontier is exascale computing, where we demonstrated the viability of a distributed and collaborative approach, which has the added benefits of addressing the huge power requirements, data replication and disaster recovery.

Global HPC and global R&E networking can only live and thrive in symbiosis.

Applicability to Cloud Computing

• Most remarkable so far has been the observed improvement in effective throughput over long and very long distances.
• These results have been confirmed in a number of independent trials with real data exchanges. The use of Dsync, developed by Obsidian Strategics, is the main ingredient of this improvement.
• The need for fast data replication and disaster recovery makes this a protocol of choice for data centre operators and Cloud Computing.
• Leading the way: the InfiniCloud initiative led by ANU’s Andrew Howard, and the AU-SG BioinfiniCloud trials led by Dr. Kenneth Hon Kim Ban of A*STAR.

Some recurring and outstanding questions

• Scalability?
• Everything over IB between expensive long-haul point-to-point links? The effectiveness of IPoIB and EoIB is worth some serious trials.
• Effective throughput using InfiniBand with Dsync was benchmarked against FTP. How does it compare to GridFTP or Globus?
• InfiniBand NICs in advanced desktops or scientific instruments?

Thank You

“Creativity requires the courage to let go of certainties.” Erich Fromm

- Slides: 35