Exploring Image Data with Quantization-Based Techniques

Svetlana Lazebnik (lazebnik@cs.unc.edu), University of North Carolina at Chapel Hill
Joint work with Maxim Raginsky (m.raginsky@duke.edu), Duke University

Overview
• Motivation: working with high-dimensional image data
  – Learning lower-dimensional structure (unsupervised)
  – Learning posterior class distributions (supervised)
• Vector quantization (VQ) framework
  – Can learn a lot of useful structure by compressing the data
  – Nearest-neighbor quantizers are convenient and familiar
  – VQ theory and practice are extremely well developed
• Outline of talk
  – Dimensionality estimation and separation (NIPS 2005, work in progress)
  – Learning quantizer codebooks by information loss minimization (AISTATS 2007)

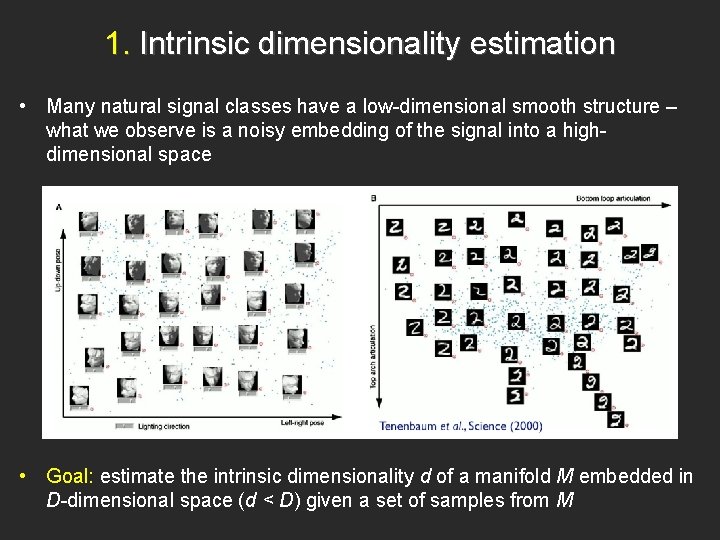

1. Intrinsic dimensionality estimation
• Many natural signal classes have a low-dimensional smooth structure
  – What we observe is a noisy embedding of the signal into a high-dimensional space
• Goal: estimate the intrinsic dimensionality d of a manifold M embedded in D-dimensional space (d < D) given a set of samples from M

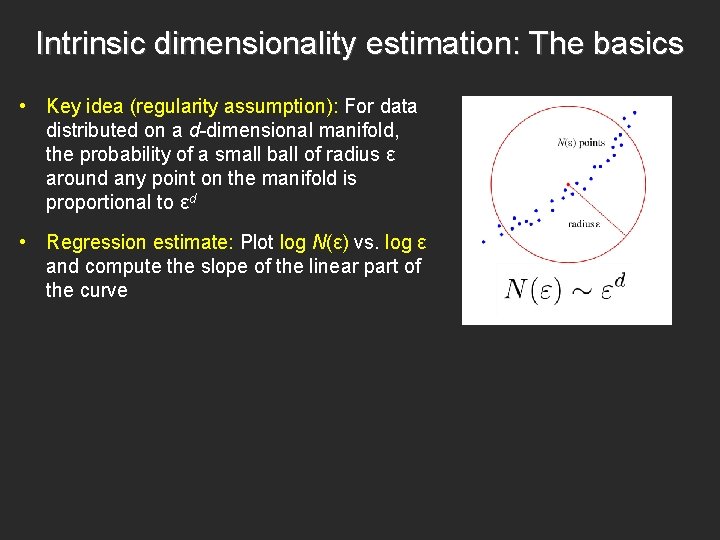

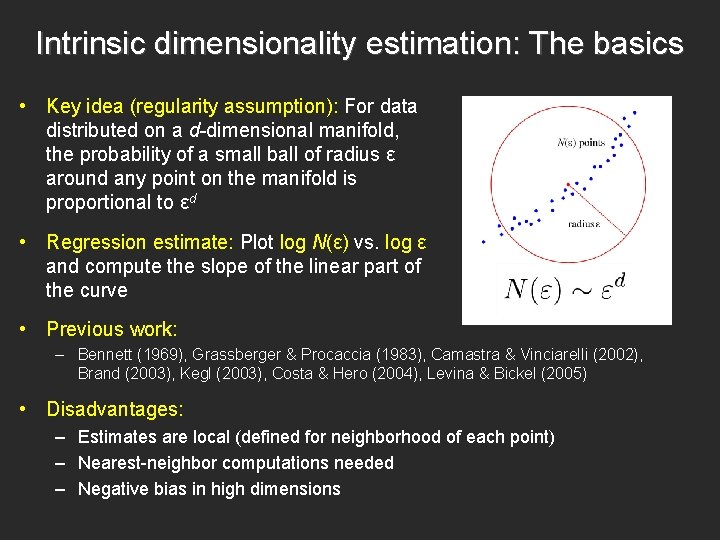

Intrinsic dimensionality estimation: The basics
• Key idea (regularity assumption): for data distributed on a d-dimensional manifold, the probability of a small ball of radius ε around any point on the manifold is proportional to ε^d
• Regression estimate: plot log N(ε) vs. log ε and compute the slope of the linear part of the curve
• Previous work:
  – Bennett (1969), Grassberger & Procaccia (1983), Camastra & Vinciarelli (2002), Brand (2003), Kegl (2003), Costa & Hero (2004), Levina & Bickel (2005)
• Disadvantages:
  – Estimates are local (defined for the neighborhood of each point)
  – Nearest-neighbor computations are needed
  – Negative bias in high dimensions
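
The regression estimate can be illustrated with a small sketch. It assumes that N(ε) is taken to be the fraction of sample pairs closer than ε (the correlation integral used by Grassberger & Procaccia); the slide does not define N(ε), so this reading, the function name `correlation_dimension`, and the synthetic data are all assumptions made for illustration.

```python
import numpy as np

def correlation_dimension(X, radii):
    """Slope of log C(eps) against log eps, where C(eps) is the fraction of
    sample pairs closer than eps (an assumed stand-in for N(eps))."""
    sq = np.sum(X ** 2, axis=1)
    # Squared pairwise distances; clipped at 0 to absorb rounding error.
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    dists = np.sqrt(d2[np.triu_indices(len(X), k=1)])  # each pair once
    frac = np.array([np.mean(dists < r) for r in radii])
    slope, _intercept = np.polyfit(np.log(radii), np.log(frac), 1)
    return slope

rng = np.random.default_rng(0)
latent = rng.uniform(size=(1500, 2))              # intrinsic dimension d = 2
basis, _ = np.linalg.qr(rng.normal(size=(5, 2)))  # orthonormal 5 x 2 basis
X = latent @ basis.T                              # embedded in D = 5
radii = np.logspace(-1.5, -0.7, 8)                # hand-picked "linear part"
d_hat = correlation_dimension(X, radii)
print(f"estimated intrinsic dimension: {d_hat:.2f}")
```

The estimate tends to land somewhat below 2 because balls near the boundary of the square are truncated, a small-scale instance of the negative bias listed above. The range of radii has to be chosen by hand here, which is part of what makes the regression approach delicate.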

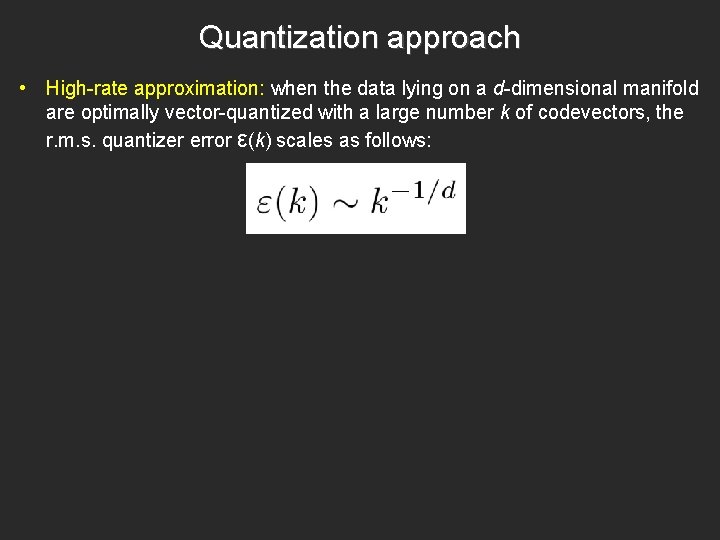

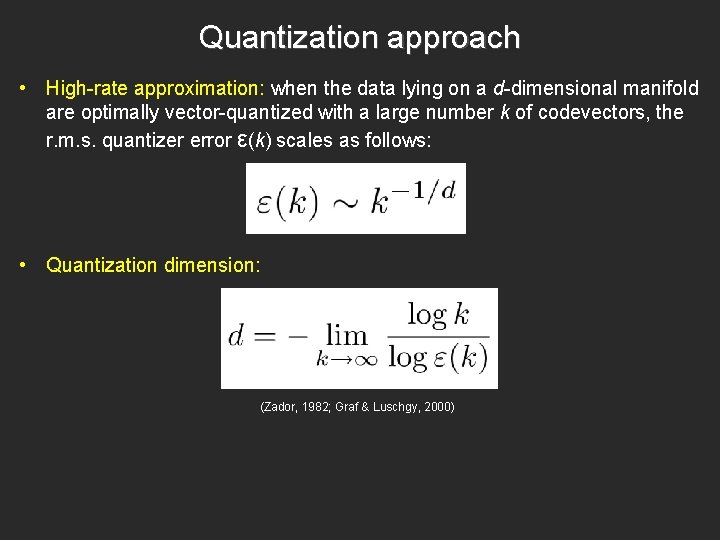

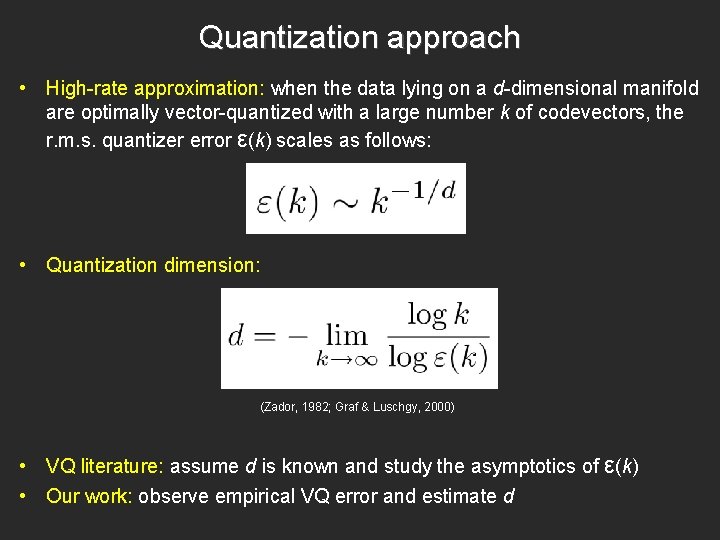

Quantization approach
• High-rate approximation: when data lying on a d-dimensional manifold are optimally vector-quantized with a large number k of codevectors, the r.m.s. quantizer error ε(k) scales as $\varepsilon(k) = \Theta(k^{-1/d})$
• Quantization dimension: $d = -\lim_{k \to \infty} \log k / \log \varepsilon(k)$ (Zador, 1982; Graf & Luschgy, 2000)
• VQ literature: assume d is known and study the asymptotics of ε(k)
• Our work: observe the empirical VQ error and estimate d

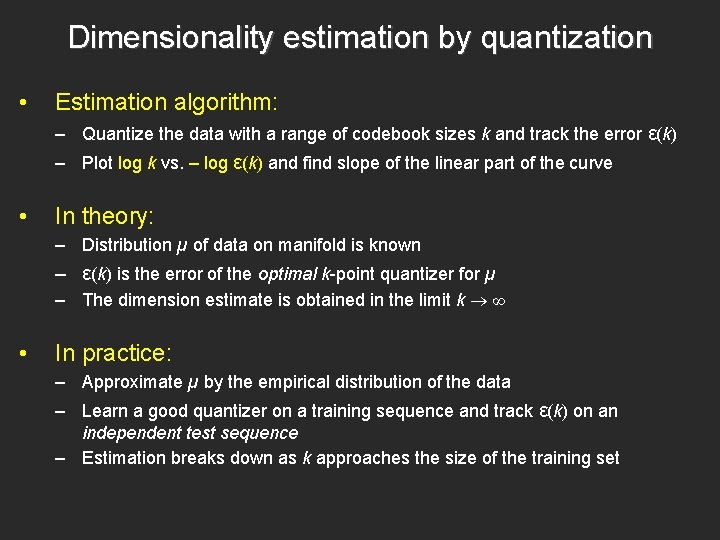

Dimensionality estimation by quantization
• Estimation algorithm:
  – Quantize the data with a range of codebook sizes k and track the error ε(k)
  – Plot log k vs. –log ε(k) and find the slope of the linear part of the curve
• In theory:
  – The distribution µ of the data on the manifold is known
  – ε(k) is the error of the optimal k-point quantizer for µ
  – The dimension estimate is obtained in the limit k → ∞
• In practice:
  – Approximate µ by the empirical distribution of the data
  – Learn a good quantizer on a training sequence and track ε(k) on an independent test sequence
  – Estimation breaks down as k approaches the size of the training set
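
The estimation algorithm above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes plain Lloyd k-means with random initialization as the "good quantizer", uses synthetic data (a 2-D square embedded in 5-D), and fits the slope over all tested k rather than selecting the linear part. The helper names are hypothetical.

```python
import numpy as np

def sq_dists(X, centers):
    """Squared Euclidean distances, shape (n_points, n_centers)."""
    return ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)

def kmeans(X, k, rng, iters=25):
    """Plain Lloyd iterations from a random subset of the data."""
    centers = X[rng.choice(len(X), size=k, replace=False)].copy()
    for _ in range(iters):
        labels = sq_dists(X, centers).argmin(axis=1)
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:          # keep the old center if a cell empties
                centers[j] = members.mean(axis=0)
    return centers

def rms_error(X, centers):
    """Root-mean-square distance to the nearest codevector."""
    return np.sqrt(sq_dists(X, centers).min(axis=1).mean())

def quantization_dimension(train, test, ks, rng):
    # Learn each codebook on the training set, measure eps(k) on the test set.
    errs = np.array([rms_error(test, kmeans(train, k, rng)) for k in ks])
    # Slope of log k plotted against -log eps(k).
    slope, _intercept = np.polyfit(-np.log(errs), np.log(ks), 1)
    return slope, errs

rng = np.random.default_rng(0)
basis, _ = np.linalg.qr(rng.normal(size=(5, 2)))      # orthonormal 5 x 2 basis
train = rng.uniform(size=(3000, 2)) @ basis.T
test = rng.uniform(size=(3000, 2)) @ basis.T
ks = np.array([8, 16, 32, 64, 128])
d_hat, errs = quantization_dimension(train, test, ks, rng)
print(f"estimated intrinsic dimension: {d_hat:.2f}")
```

Keeping k well below the training-set size matters: once cells contain only a handful of training points, the test error stops tracking the optimal-quantizer error and the slope is no longer meaningful.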

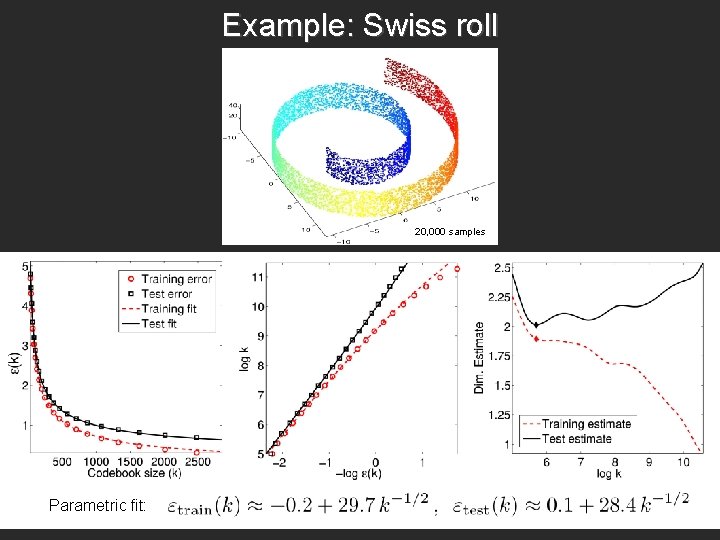

Example: Swiss roll
20,000 samples
Parametric fit:

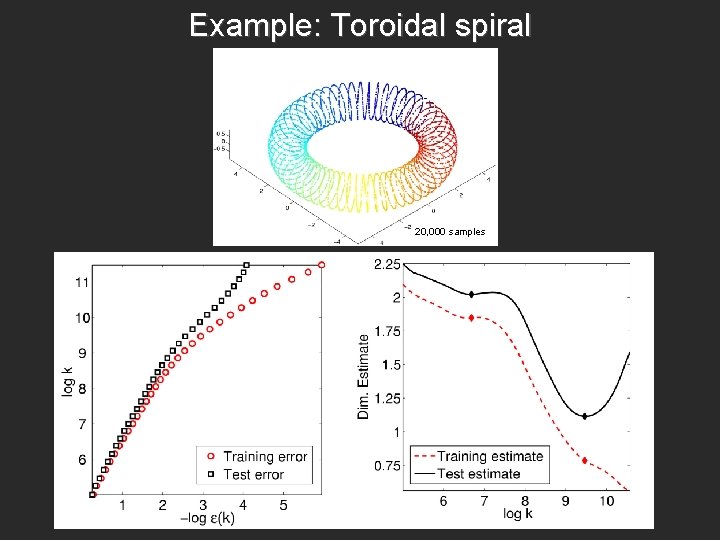

Example: Toroidal spiral
20,000 samples

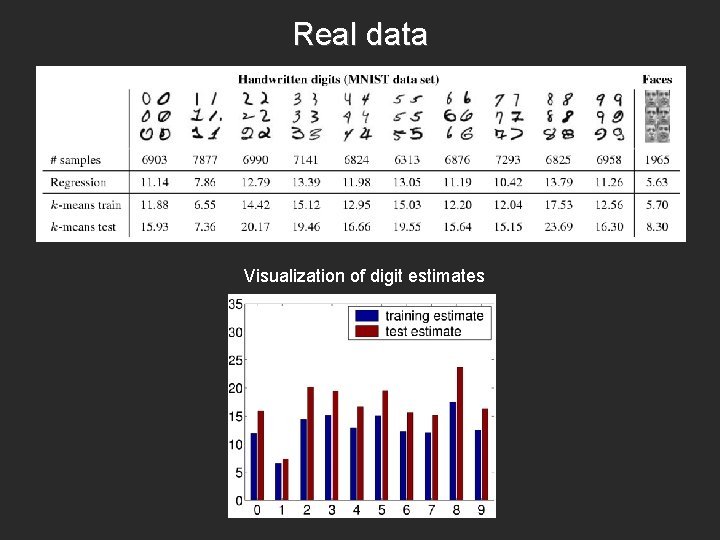

Real data
Visualization of digit estimates

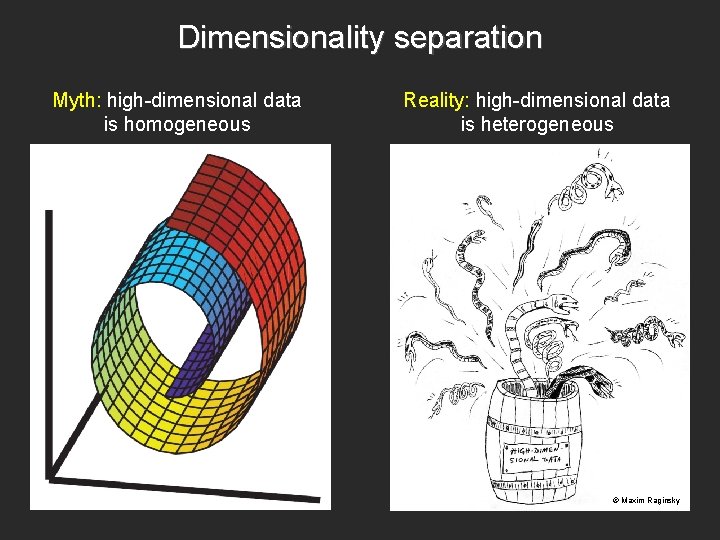

Dimensionality separation
Myth: high-dimensional data is homogeneous
Reality: high-dimensional data is heterogeneous
© Maxim Raginsky

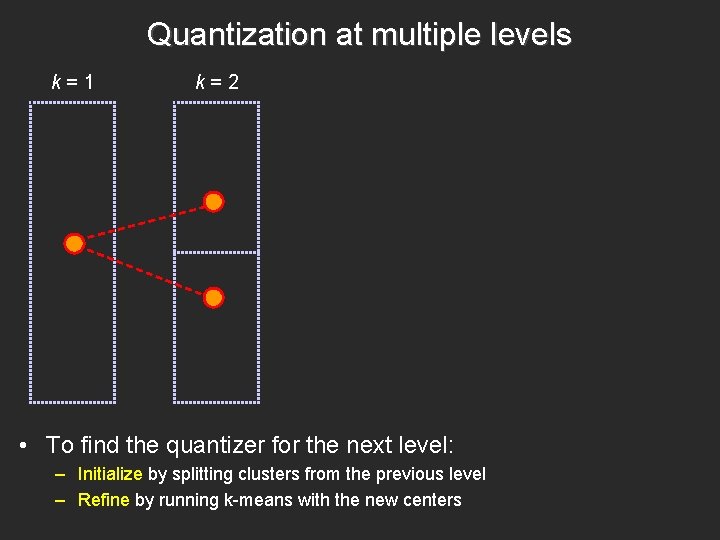

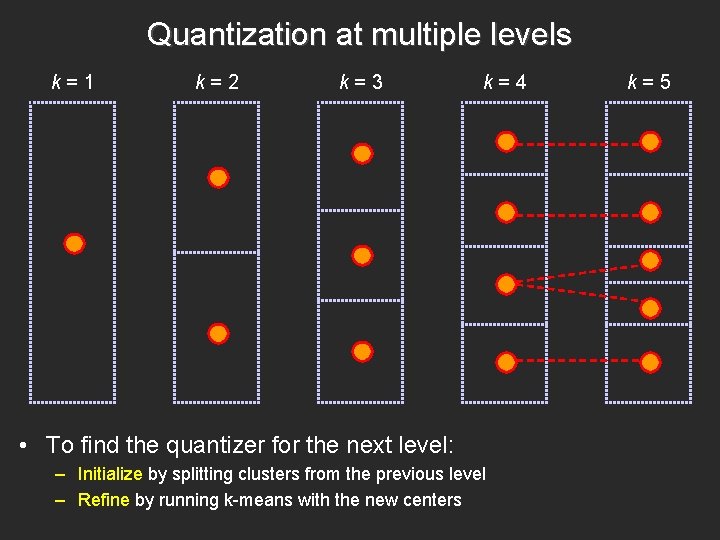

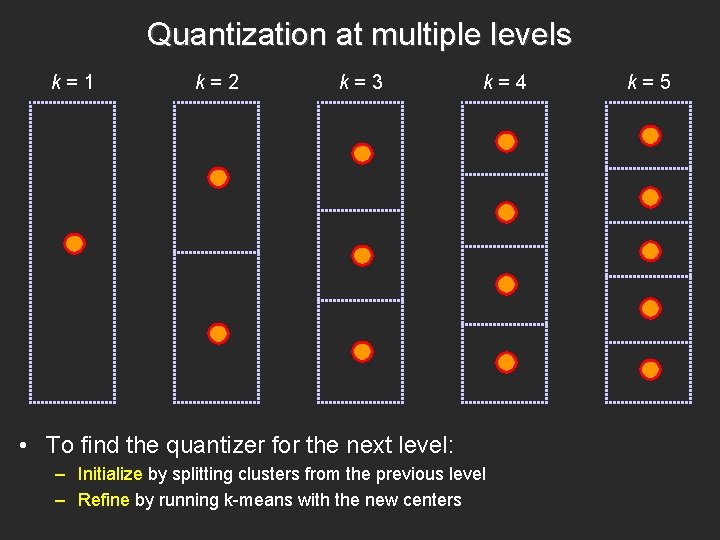

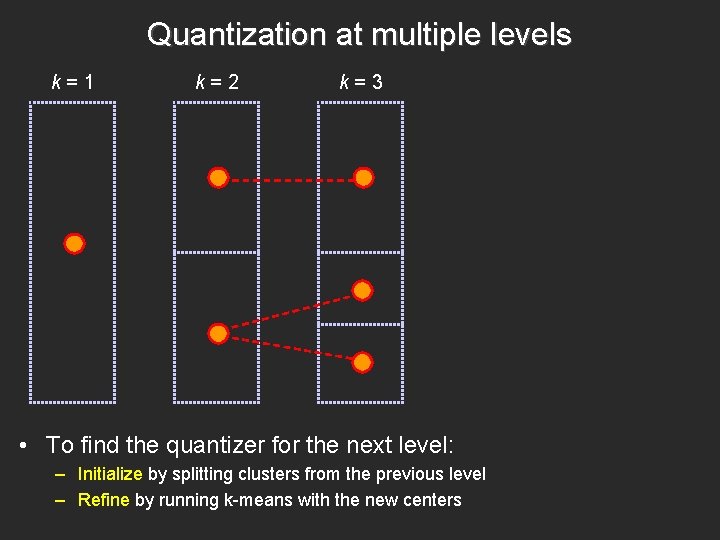

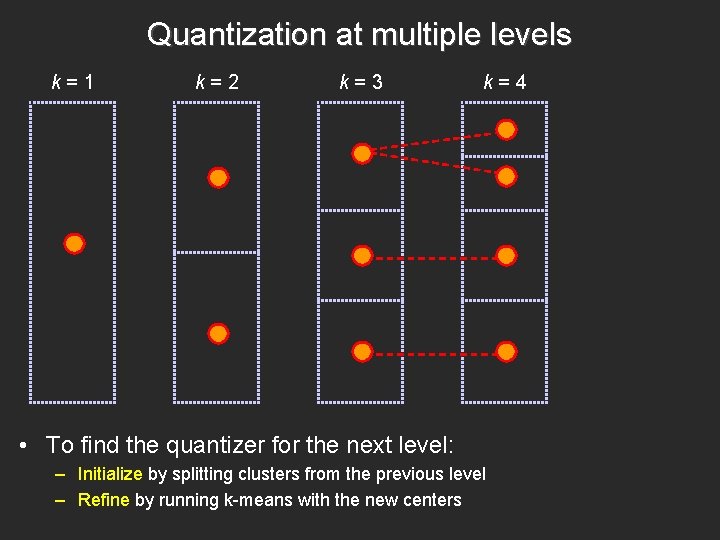

Quantization at multiple levels (k = 1, 2, 3, 4, 5)
• To find the quantizer for the next level:
  – Initialize by splitting clusters from the previous level
  – Refine by running k-means with the new centers
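
A rough sketch of the split-and-refine step follows. The slide does not say which cluster is split or how, so the rule used here (split the cluster with the largest squared error by perturbing its center in two opposite directions) is an assumption, as are the function names and the synthetic data.

```python
import numpy as np

def assign(X, centers):
    """Nearest-center labels and squared distances to the assigned center."""
    d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return d2.argmin(axis=1), d2.min(axis=1)

def lloyd(X, centers, iters=20):
    """Refine the given centers with k-means (Lloyd) iterations."""
    centers = centers.copy()
    for _ in range(iters):
        labels, _ = assign(X, centers)
        for j in range(len(centers)):
            if np.any(labels == j):
                centers[j] = X[labels == j].mean(axis=0)
    return centers

def next_level(X, centers, rng):
    """Go from k to k + 1 codevectors: split one cluster, then refine."""
    labels, d2 = assign(X, centers)
    sse = np.bincount(labels, weights=d2, minlength=len(centers))
    worst = int(sse.argmax())                 # assumed rule: largest error
    spread = X[labels == worst].std(axis=0) + 1e-12
    offset = 0.1 * spread * rng.standard_normal(X.shape[1])
    split = np.vstack([centers[worst] + offset, centers[worst] - offset])
    init = np.vstack([np.delete(centers, worst, axis=0), split])
    return lloyd(X, init)

rng = np.random.default_rng(0)
X = np.vstack([rng.normal(loc=c, scale=0.3, size=(150, 2))
               for c in [(0, 0), (3, 0), (0, 3), (3, 3)]])
levels = [X.mean(axis=0, keepdims=True)]      # k = 1: the global mean
for _ in range(4):                            # k = 2, 3, 4, 5
    levels.append(next_level(X, levels[-1], rng))
errors = [np.sqrt(assign(X, c)[1].mean()) for c in levels]
print([round(e, 3) for e in errors])
```

Initializing each level from the previous one means the whole error curve ε(k) is built incrementally rather than by running k-means from scratch for every k.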

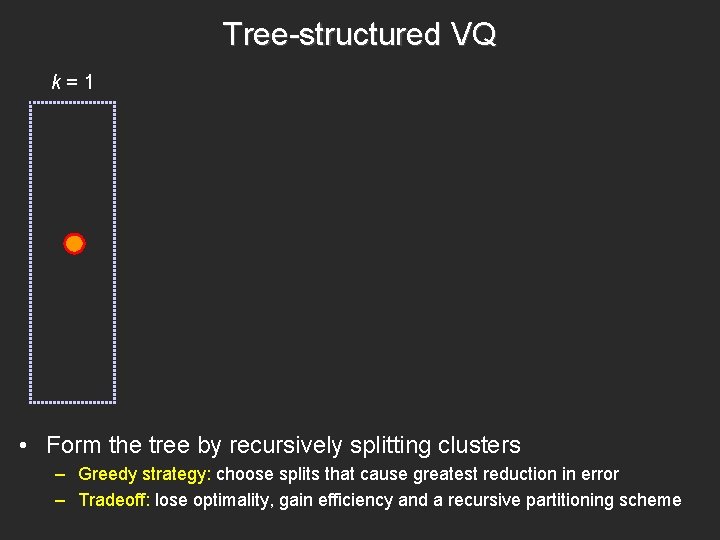

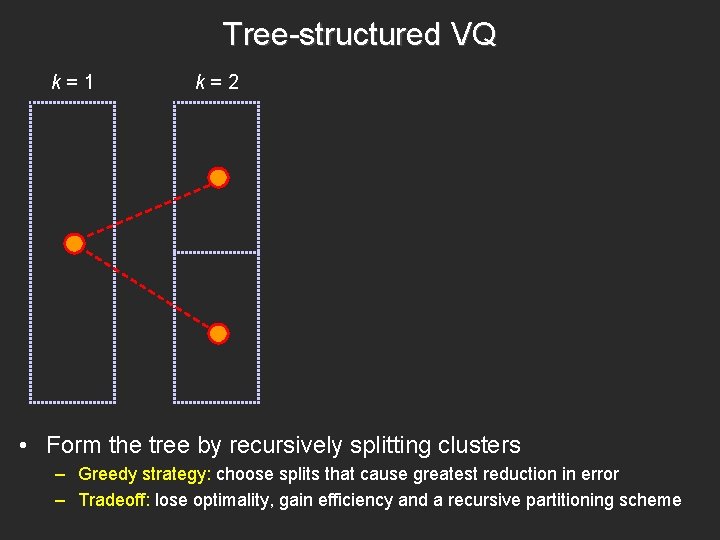

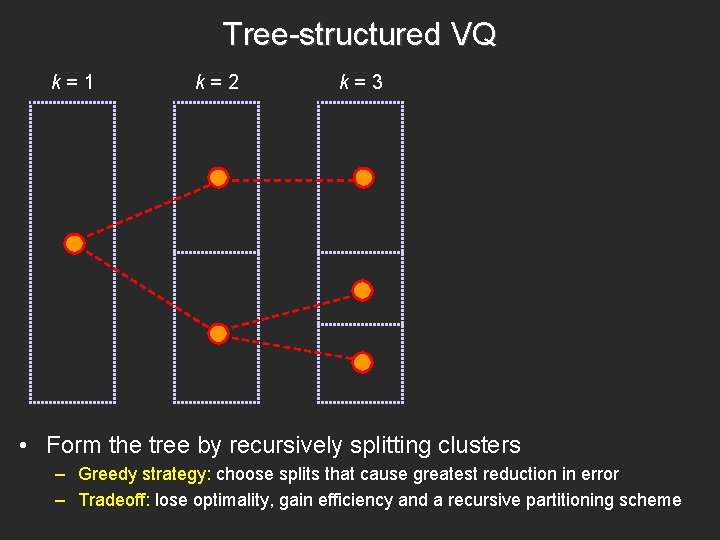

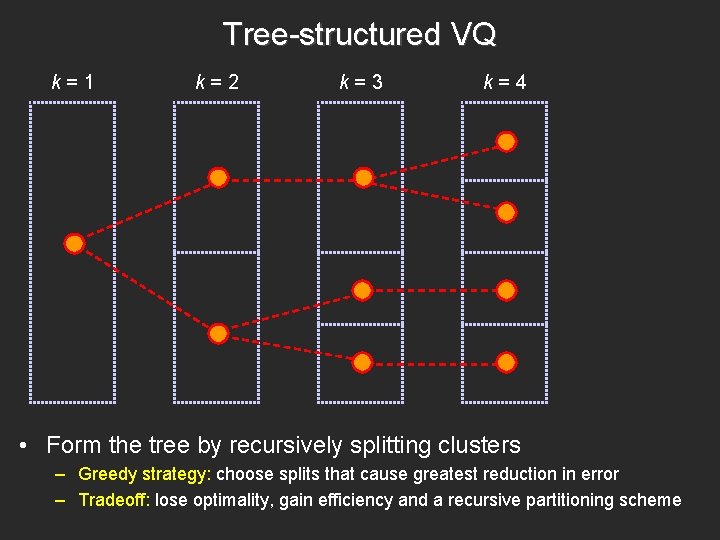

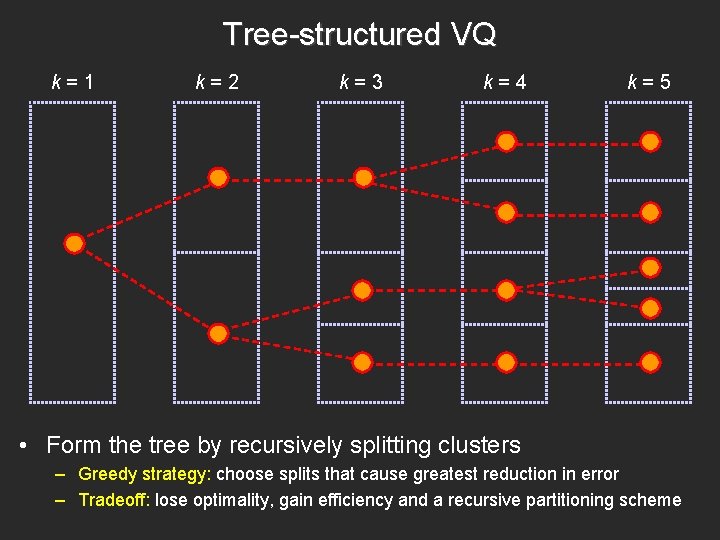

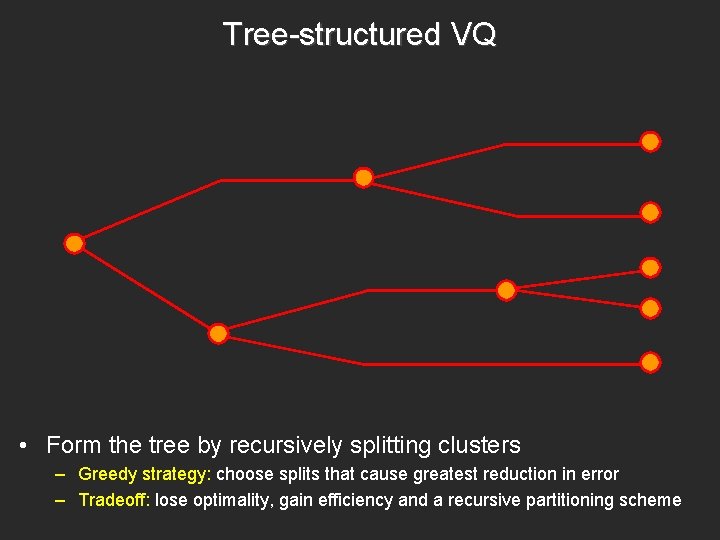

Tree-structured VQ (TSVQ)
• Form the tree by recursively splitting clusters
  – Greedy strategy: choose splits that cause the greatest reduction in error
  – Tradeoff: lose optimality, gain efficiency and a recursive partitioning scheme
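
The greedy strategy can be sketched as follows. This is an illustrative version only: it assumes a branching factor of 2, uses a short 2-means run to propose each split, and recomputes candidate splits for every leaf at every step for simplicity. All names are hypothetical.

```python
import numpy as np

def two_means(P, rng, iters=15):
    """Propose a binary split of point set P with a few Lloyd iterations."""
    c = P[rng.choice(len(P), size=2, replace=False)].copy()
    for _ in range(iters):
        lab = ((P[:, None] - c[None]) ** 2).sum(axis=2).argmin(axis=1)
        for j in (0, 1):
            if np.any(lab == j):
                c[j] = P[lab == j].mean(axis=0)
    return ((P[:, None] - c[None]) ** 2).sum(axis=2).argmin(axis=1)

def sse(P):
    """Sum of squared distances of P to its own centroid."""
    return ((P - P.mean(axis=0)) ** 2).sum()

def greedy_tsvq(X, n_leaves, rng):
    leaves = [np.arange(len(X))]       # each leaf holds indices into X
    history = [sse(X)]                 # total squared error after each split
    while len(leaves) < n_leaves:
        best = None
        for li, idx in enumerate(leaves):
            if len(idx) < 2:
                continue
            lab = two_means(X[idx], rng)
            left, right = idx[lab == 0], idx[lab == 1]
            if len(left) == 0 or len(right) == 0:
                continue
            gain = sse(X[idx]) - sse(X[left]) - sse(X[right])
            if best is None or gain > best[0]:
                best = (gain, li, left, right)
        if best is None:               # nothing left to split
            break
        gain, li, left, right = best
        leaves[li:li + 1] = [left, right]   # replace the leaf by its children
        history.append(history[-1] - gain)
    return leaves, history

rng = np.random.default_rng(0)
X = np.vstack([rng.normal(loc=c, scale=0.3, size=(100, 2))
               for c in [(0, 0), (4, 0), (0, 4), (4, 4)]])
leaves, history = greedy_tsvq(X, n_leaves=6, rng=rng)
print([len(idx) for idx in leaves], [round(h, 1) for h in history])
```

Because a split only touches the points in one leaf, each step is cheap compared with re-running k-means on the full dataset, and the nested leaves give the recursive partition mentioned above.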

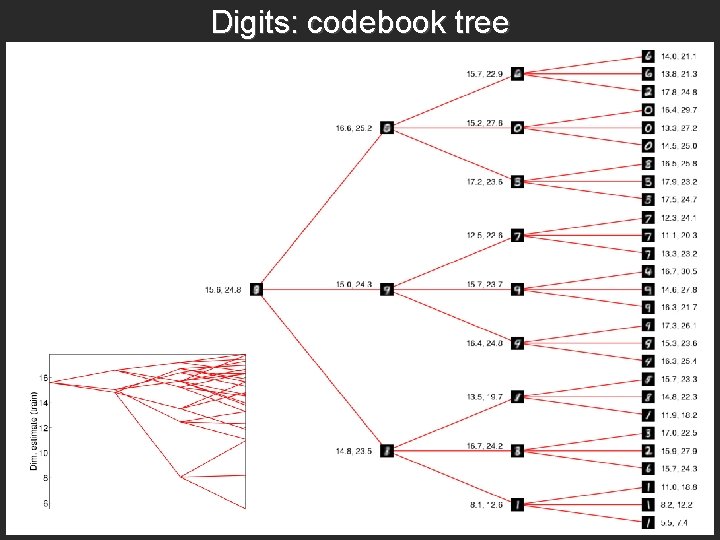

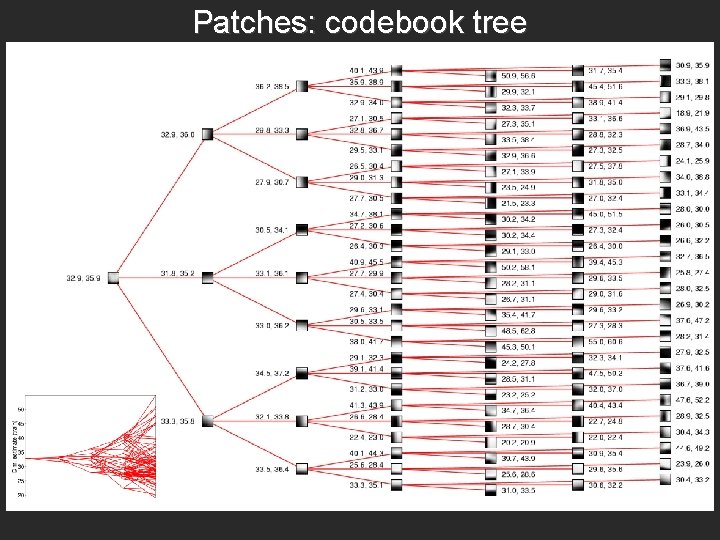

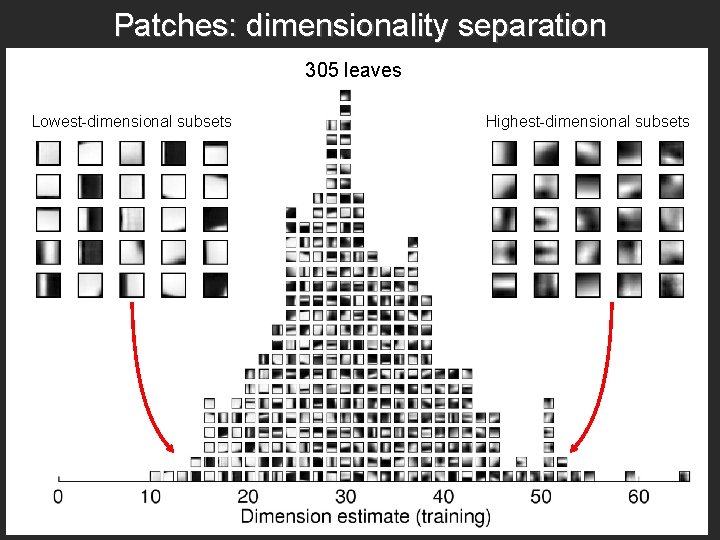

Dimensionality separation
• Each node in the tree-structured codebook represents a subset of the data
• We can compute a dimension estimate for that subset using the subtree rooted at that node (provided the subtree is large enough)
• Use the test error to find out whether the estimate for a given subtree is reliable

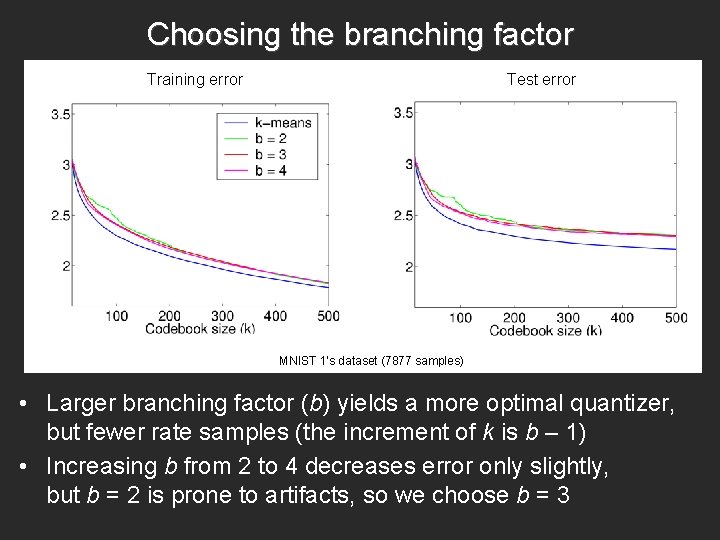

Choosing the branching factor
Plots: training error and test error on the MNIST 1’s dataset (7877 samples)
• A larger branching factor b yields a quantizer closer to optimal, but fewer rate samples (k increases in steps of b − 1)
• Increasing b from 2 to 4 decreases the error only slightly, but b = 2 is prone to artifacts, so we choose b = 3

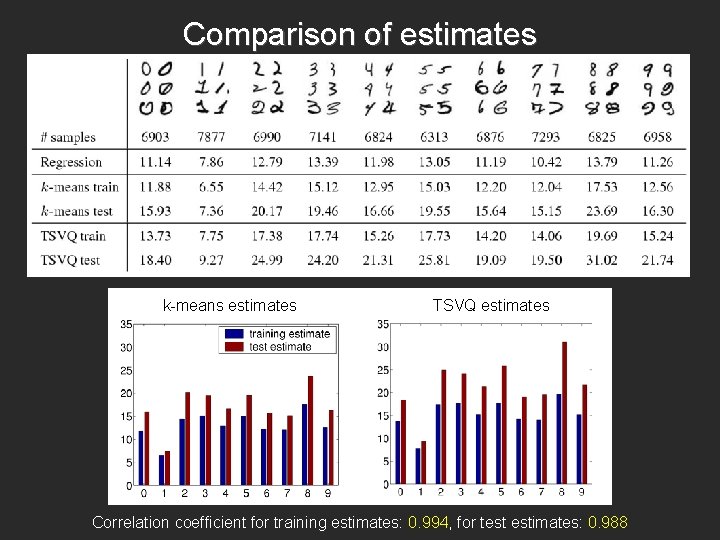

Comparison of estimates
Plots: k-means estimates and TSVQ estimates
Correlation coefficient for training estimates: 0.994, for test estimates: 0.988

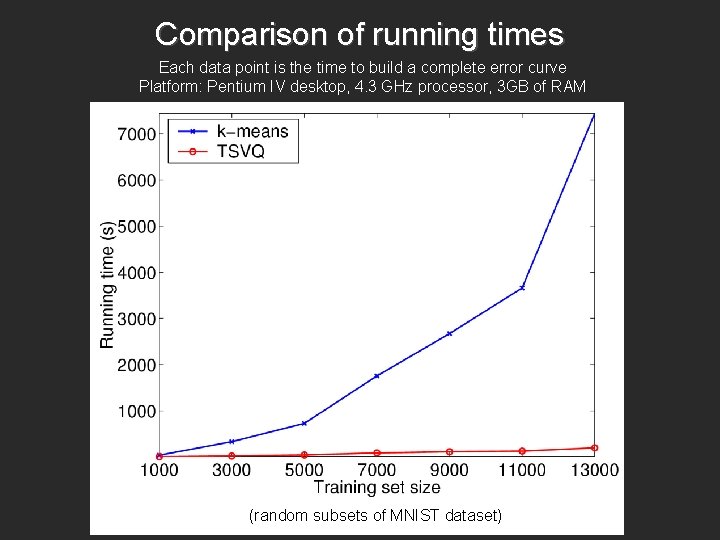

Comparison of running times
• Each data point is the time to build a complete error curve (random subsets of the MNIST dataset)
• Platform: Pentium IV desktop, 4.3 GHz processor, 3 GB of RAM

Dimensionality separation experiments
• MNIST digits
  – 70,000 samples
  – Extrinsic dimensionality: 784 (28 x 28)
• Grayscale image patches
  – 750,000 samples (from the 15 scene categories dataset)
  – Extrinsic dimensionality: 256 (16 x 16)

Digits: codebook tree

Patches: codebook tree

Patches: dimensionality separation
305 leaves
Figure panels: lowest-dimensional subsets, highest-dimensional subsets

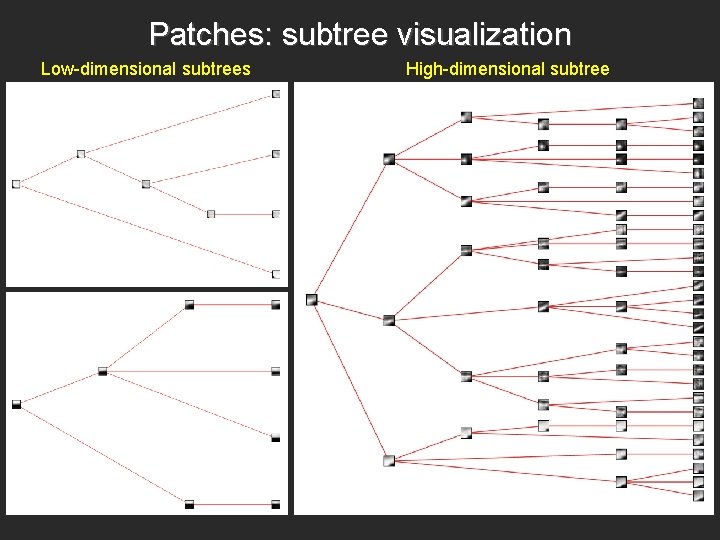

Patches: subtree visualization
Figure panels: low-dimensional subtrees, high-dimensional subtree

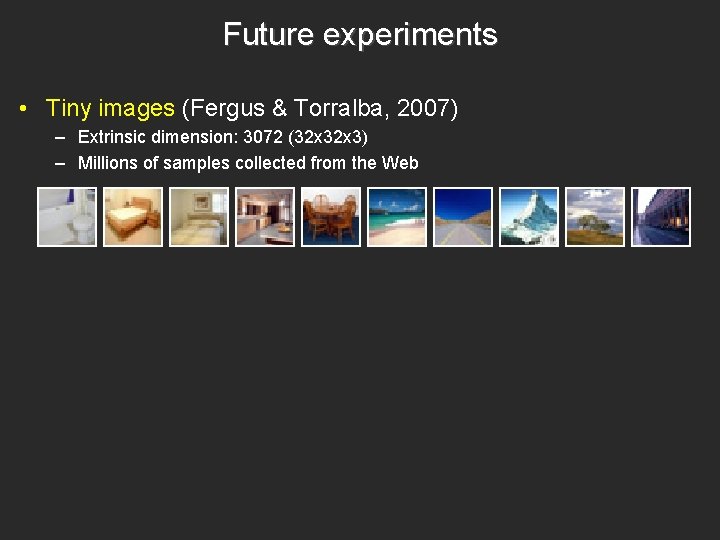

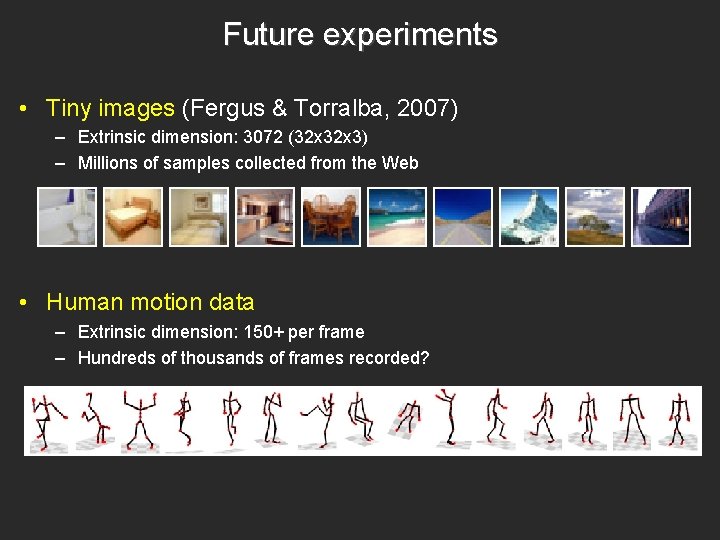

Future experiments
• Tiny images (Fergus & Torralba, 2007)
  – Extrinsic dimension: 3072 (32 x 32 x 3)
  – Millions of samples collected from the Web
• Human motion data
  – Extrinsic dimension: 150+ per frame
  – Hundreds of thousands of frames recorded?

Summary: Intrinsic Dimensionality Estimation
• Our method relates the intrinsic dimension of a manifold (a topological property) to the asymptotic optimal quantization error for distributions on the manifold (an operational property)
• Advantage over regression methods: fewer nearest-neighbor computations
• By using TSVQ, the framework extends naturally to dimensionality separation of heterogeneous data

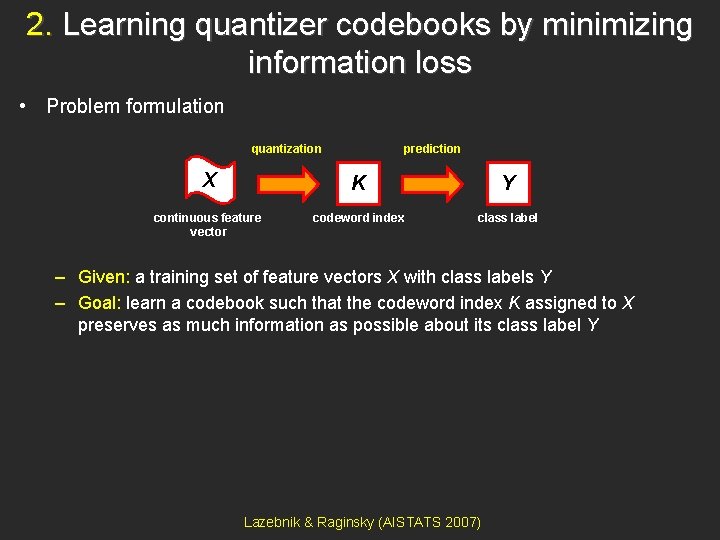

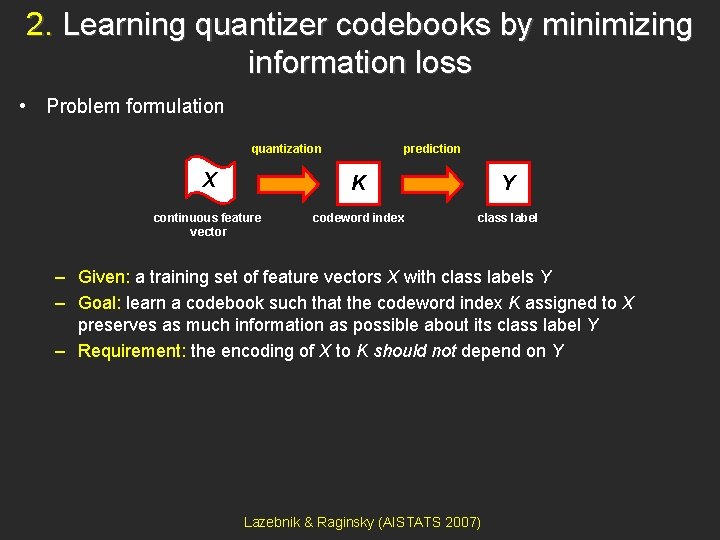

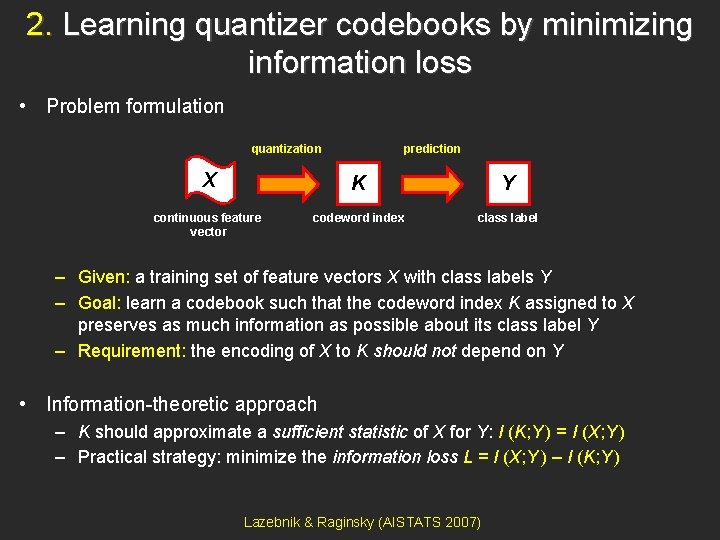

2. Learning quantizer codebooks by minimizing information loss
Lazebnik & Raginsky (AISTATS 2007)
• Problem formulation: X (continuous feature vector) → quantization → K (codeword index) → prediction → Y (class label)
  – Given: a training set of feature vectors X with class labels Y
  – Goal: learn a codebook such that the codeword index K assigned to X preserves as much information as possible about its class label Y
  – Requirement: the encoding of X to K should not depend on Y
• Information-theoretic approach
  – K should approximate a sufficient statistic of X for Y: I(K; Y) = I(X; Y)
  – Practical strategy: minimize the information loss L = I(X; Y) − I(K; Y)
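
For a finite set of feature vectors and a deterministic encoder, the information loss equals the expected Kullback-Leibler divergence between each point's posterior and the posterior of its codeword, which is what connects the formulation above to the divergence-based clustering discussed next. The sketch below checks this identity numerically on a random discrete joint distribution; the encoder `codeword` and all sizes are hypothetical.

```python
import numpy as np

def mutual_information(joint):
    """I(A; B) in nats for a joint table joint[a, b] with positive entries."""
    pa = joint.sum(axis=1, keepdims=True)
    pb = joint.sum(axis=0, keepdims=True)
    return float(np.sum(joint * np.log(joint / (pa * pb))))

rng = np.random.default_rng(0)
n_x, n_y, C = 12, 3, 4
joint_xy = rng.uniform(0.1, 1.0, size=(n_x, n_y))
joint_xy /= joint_xy.sum()                 # joint distribution P(X_i, Y)

codeword = np.arange(n_x) % C              # hypothetical encoder, ignores Y

joint_ky = np.zeros((C, n_y))
np.add.at(joint_ky, codeword, joint_xy)    # P(K = k, Y): sum rows per codeword

p_x = joint_xy.sum(axis=1)                 # P(X_i)
P = joint_xy / p_x[:, None]                # P(Y | X_i)
pi = joint_ky / joint_ky.sum(axis=1, keepdims=True)   # P(Y | k)

loss = mutual_information(joint_xy) - mutual_information(joint_ky)
expected_kl = float(np.sum(p_x * np.sum(P * np.log(P / pi[codeword]), axis=1)))
print(loss, expected_kl)
```

The two printed numbers agree up to rounding, and the loss is non-negative, consistent with the fact that quantizing X cannot add information about Y.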

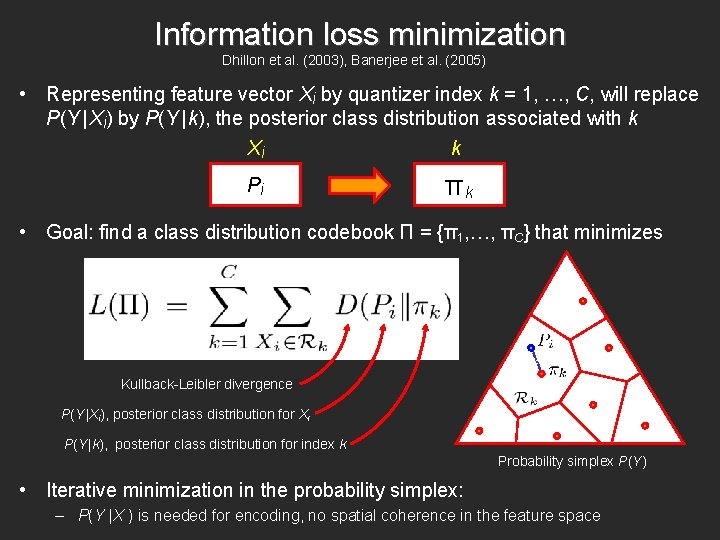

Information loss minimization
Dhillon et al. (2003), Banerjee et al. (2005)
• Representing feature vector X_i by quantizer index k = 1, …, C replaces P_i = P(Y | X_i), the posterior class distribution for X_i, by π_k = P(Y | k), the posterior class distribution associated with index k
• Goal: find a class distribution codebook Π = {π_1, …, π_C} in the probability simplex P(Y) that minimizes the Kullback-Leibler divergence between each P_i and the π_k that replaces it
• Iterative minimization in the probability simplex:
  – Drawbacks: P(Y | X) is needed for encoding, and there is no spatial coherence in the feature space
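
A minimal sketch of iterative minimization in the probability simplex is given below, in the spirit of the cited divergence-based clustering work but not taken from it. It assumes uniform weights on the data points, strictly positive posteriors (the synthetic ones are smoothed slightly), and random initialization; the function names are hypothetical.

```python
import numpy as np

def kl(P, Q):
    """D(P || Q) along the last axis, for strictly positive entries."""
    return np.sum(P * np.log(P / Q), axis=-1)

def kl_clustering(P, C, rng, iters=20):
    """Alternate nearest-in-KL assignment and centroid (mean) updates."""
    pi = P[rng.choice(len(P), size=C, replace=False)].copy()
    history = []
    for _ in range(iters):
        div = kl(P[:, None, :], pi[None, :, :])    # shape (n, C)
        labels = div.argmin(axis=1)                # encode by smallest divergence
        history.append(div.min(axis=1).mean())     # objective before the update
        for k in range(C):
            if np.any(labels == k):
                # The mean of the assigned P_i minimizes sum_i D(P_i || pi_k).
                pi[k] = P[labels == k].mean(axis=0)
    return labels, pi, history

rng = np.random.default_rng(0)
P = np.vstack([rng.dirichlet(a, size=60)
               for a in ([8, 1, 1], [1, 8, 1], [1, 1, 8])])
P = 0.99 * P + 0.01 / 3          # smoothing so that every entry is positive
labels, pi, history = kl_clustering(P, C=3, rng=rng)
print([round(h, 4) for h in history[:5]])
```

Note that the assignment step needs P(Y | X_i) for every point, so a new, unlabeled feature vector cannot be encoded this way, and nothing forces points that are close in feature space to share a codeword. These are the two drawbacks listed above.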

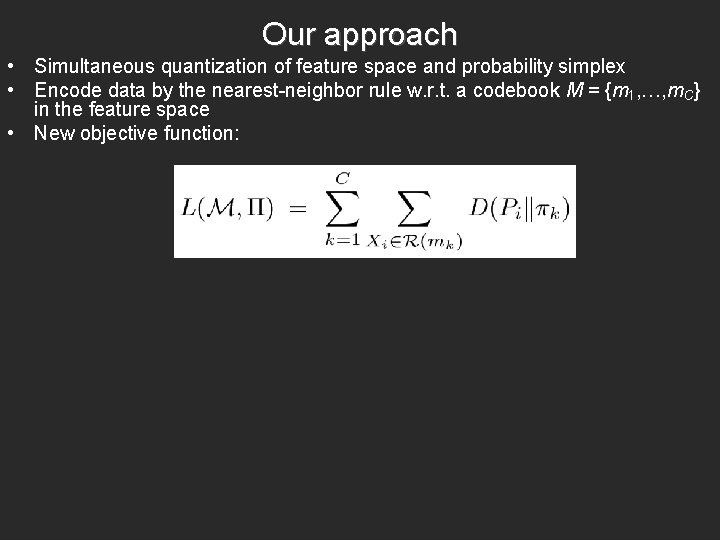

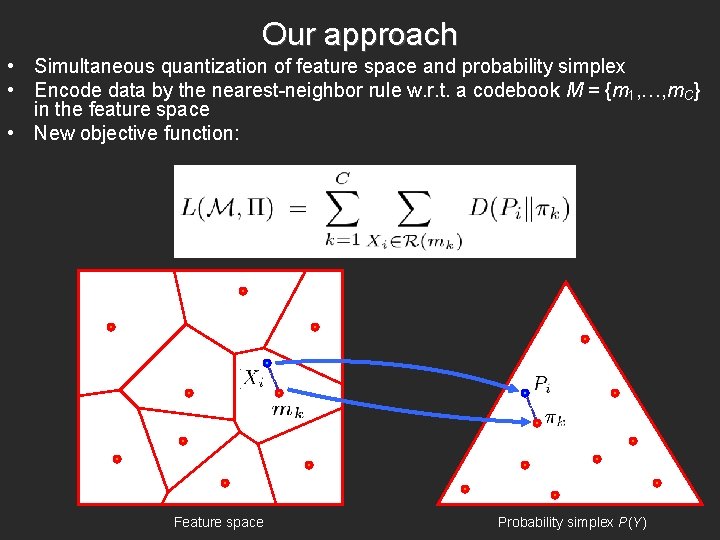

Our approach
• Simultaneous quantization of feature space and probability simplex
• Encode data by the nearest-neighbor rule w.r.t. a codebook M = {m_1, …, m_C} in the feature space
• New objective function:
Figure panels: feature space, probability simplex P(Y)

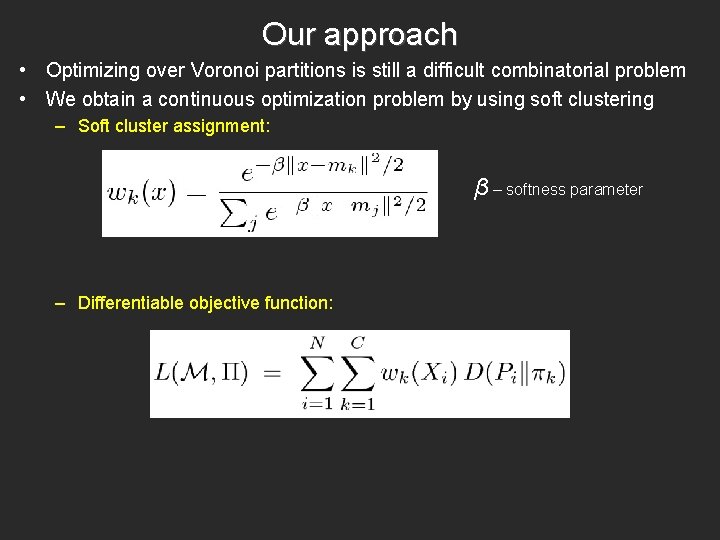

Our approach
• Optimizing over Voronoi partitions is still a difficult combinatorial problem
• We obtain a continuous optimization problem by using soft clustering
  – Soft cluster assignment, with softness parameter β:
  – Differentiable objective function:
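
The formulas on this slide did not survive in the text, so the sketch below rests on assumptions: a Gaussian (softmax) soft assignment w_k(X_i) ∝ exp(−β‖X_i − m_k‖²/2), and an objective equal to the sum over i and k of w_k(X_i) · D(P_i ‖ π_k). The symbol w and all function names are introduced here for illustration only.

```python
import numpy as np

def soft_assign(X, M, beta):
    """Assumed form: w[i, k] proportional to exp(-beta * ||X_i - m_k||^2 / 2)."""
    d2 = ((X[:, None, :] - M[None, :, :]) ** 2).sum(axis=2)
    logits = -0.5 * beta * d2
    logits -= logits.max(axis=1, keepdims=True)    # stabilize the exponentials
    w = np.exp(logits)
    return w / w.sum(axis=1, keepdims=True)        # each row sums to 1

def kl_matrix(P, Pi):
    """D(P_i || pi_k) for every pair (i, k), strictly positive inputs."""
    return np.sum(P[:, None, :] * np.log(P[:, None, :] / Pi[None, :, :]), axis=2)

def soft_objective(X, P, M, Pi, beta):
    """Assumed objective: sum_i sum_k w_k(X_i) * D(P_i || pi_k)."""
    return float(np.sum(soft_assign(X, M, beta) * kl_matrix(P, Pi)))

rng = np.random.default_rng(0)
X = rng.uniform(size=(50, 2))                 # feature vectors
M = rng.uniform(size=(3, 2))                  # codebook in feature space
P = rng.dirichlet([2.0, 2.0], size=50)        # posteriors P(Y | X_i)
Pi = rng.dirichlet([2.0, 2.0], size=3)        # class distribution codebook

w_flat = soft_assign(X, M, beta=1e-8)         # tiny beta: nearly uniform
w_sharp = soft_assign(X, M, beta=1e4)         # large beta: nearly one-hot
nearest = ((X[:, None, :] - M[None, :, :]) ** 2).sum(axis=2).argmin(axis=1)
value = soft_objective(X, P, M, Pi, beta=10.0)
print(value)
```

Under this assumed form, a large β recovers the nearest-neighbor (Voronoi) encoding while keeping the objective differentiable in M for any finite β.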

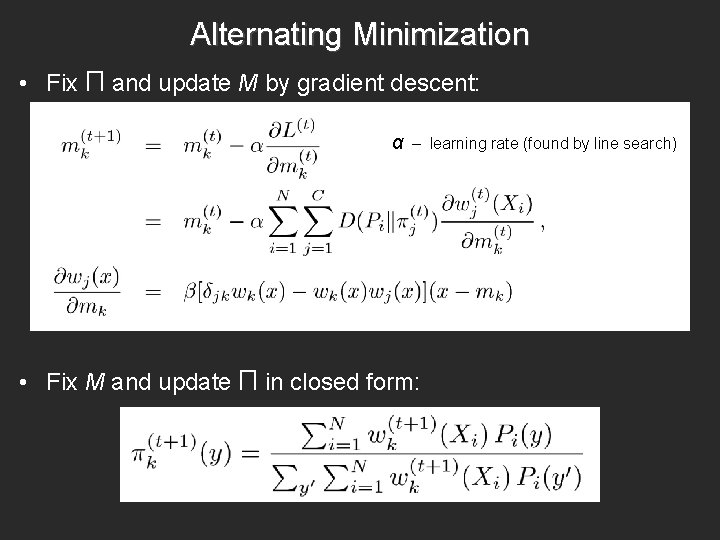

Alternating minimization
• Fix Π and update M by gradient descent, with learning rate α found by line search:
• Fix M and update Π in closed form:
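
The update formulas are missing from the slide text, so the following is a sketch under stated assumptions rather than the authors' procedure. It assumes the softmax assignment w_k(X_i) ∝ exp(−β‖X_i − m_k‖²/2), the objective Σ_i Σ_k w_k(X_i) D(P_i ‖ π_k), a closed-form update π_k = Σ_i w_k(X_i) P_i / Σ_i w_k(X_i), and a simple backtracking rule in place of the line search. Training posteriors are smoothed one-hot label vectors, also an assumption.

```python
import numpy as np

def soft_assign(X, M, beta):
    d2 = ((X[:, None, :] - M[None, :, :]) ** 2).sum(axis=2)
    logits = -0.5 * beta * d2
    logits -= logits.max(axis=1, keepdims=True)
    w = np.exp(logits)
    return w / w.sum(axis=1, keepdims=True)

def kl_matrix(P, Pi):
    return np.sum(P[:, None, :] * np.log(P[:, None, :] / Pi[None, :, :]), axis=2)

def objective(X, P, M, Pi, beta):
    return float(np.sum(soft_assign(X, M, beta) * kl_matrix(P, Pi)))

def update_Pi(X, P, M, beta):
    """Closed form for fixed M: pi_k is the w-weighted mean of the P_i."""
    w = soft_assign(X, M, beta)
    return (w.T @ P) / w.sum(axis=0)[:, None]

def grad_M(X, P, M, Pi, beta):
    """Gradient of the objective w.r.t. the codebook M (chain rule via softmax)."""
    w = soft_assign(X, M, beta)
    D = kl_matrix(P, Pi)
    coeff = beta * w * (D - (w * D).sum(axis=1, keepdims=True))   # (n, C)
    # dE/dm_k = sum_i coeff[i, k] * (X_i - m_k)
    return coeff.T @ X - coeff.sum(axis=0)[:, None] * M

def update_M(X, P, M, Pi, beta, alpha=1.0):
    """One gradient step; halve alpha until the objective decreases."""
    base = objective(X, P, M, Pi, beta)
    g = grad_M(X, P, M, Pi, beta)
    while alpha > 1e-8:
        candidate = M - alpha * g
        if objective(X, P, candidate, Pi, beta) < base:
            return candidate
        alpha *= 0.5
    return M                                   # no improving step found

rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-1.0, 0.5, size=(100, 2)),
               rng.normal(+1.0, 0.5, size=(100, 2))])
y = np.repeat([0, 1], 100)
P = np.full((200, 2), 0.05)
P[np.arange(200), y] = 0.95                    # smoothed one-hot posteriors
beta, C = 2.0, 2
M = X[rng.choice(200, size=C, replace=False)].copy()
Pi = update_Pi(X, P, M, beta)
history = [objective(X, P, M, Pi, beta)]
for _ in range(30):
    M = update_M(X, P, M, Pi, beta)            # fix Pi, update M
    Pi = update_Pi(X, P, M, beta)              # fix M, update Pi
    history.append(objective(X, P, M, Pi, beta))
print(round(history[0], 3), round(history[-1], 3))
```

Neither step can increase the objective in this sketch: the weighted mean is the exact minimizer over Π for fixed weights, and the backtracking rule accepts a codebook step only if it lowers the objective.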

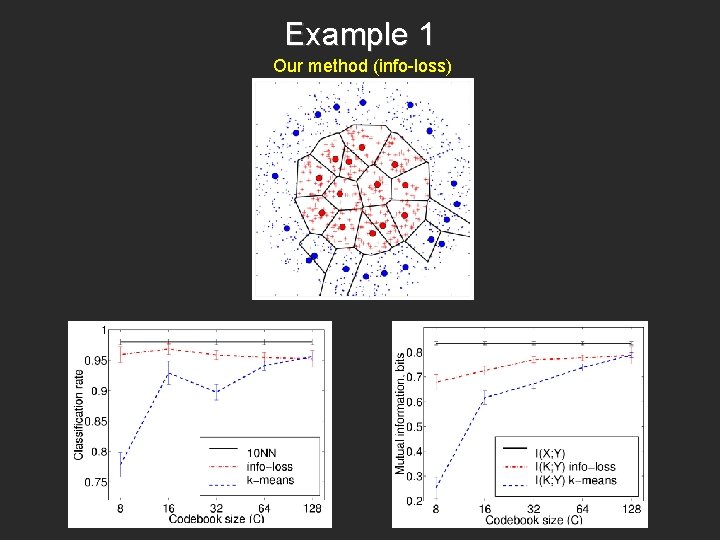

Example 1 Data

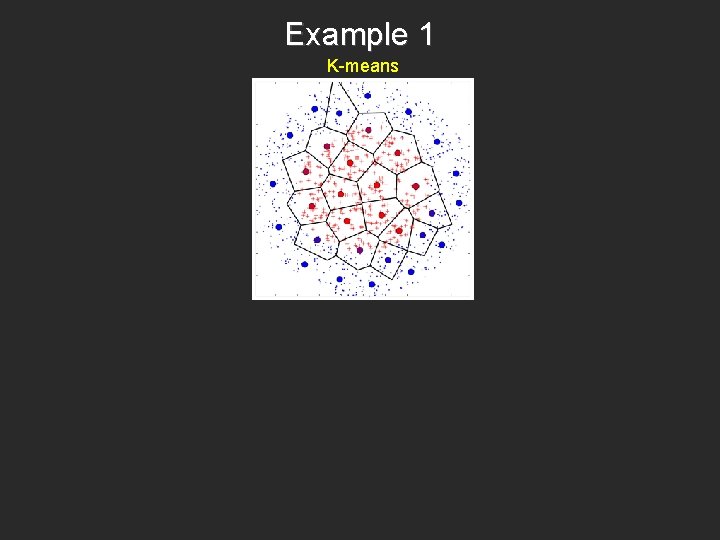

Example 1 K-means

Example 1 Our method (info-loss)

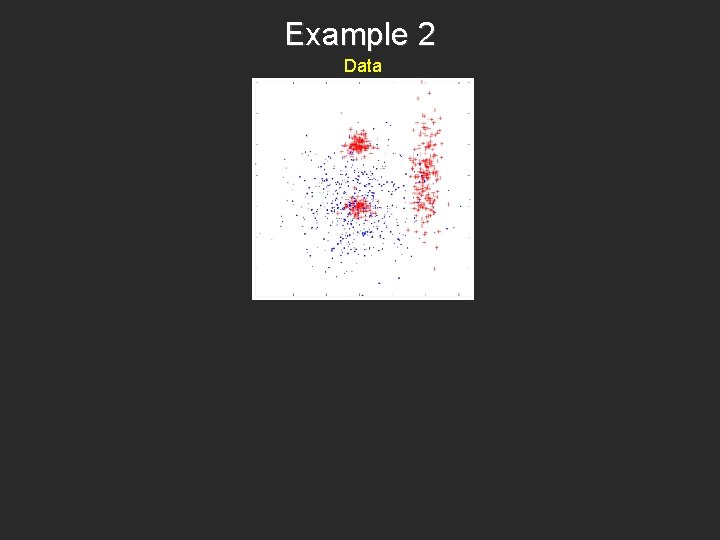

Example 2 Data

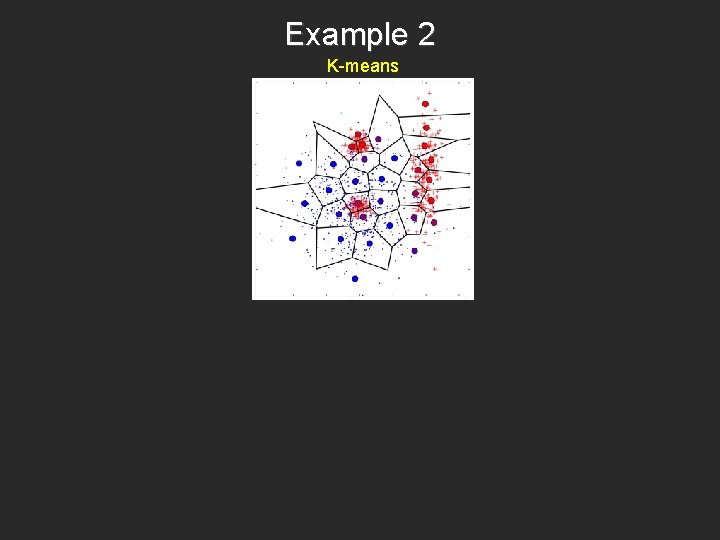

Example 2 K-means

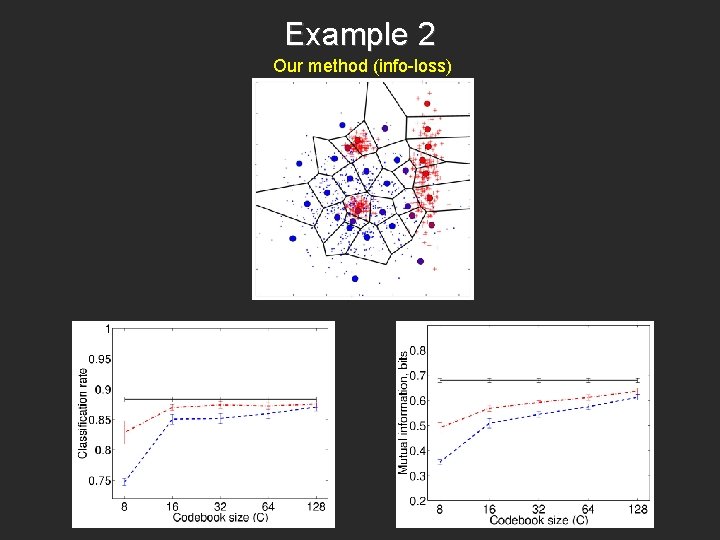

Example 2 Our method (info-loss)

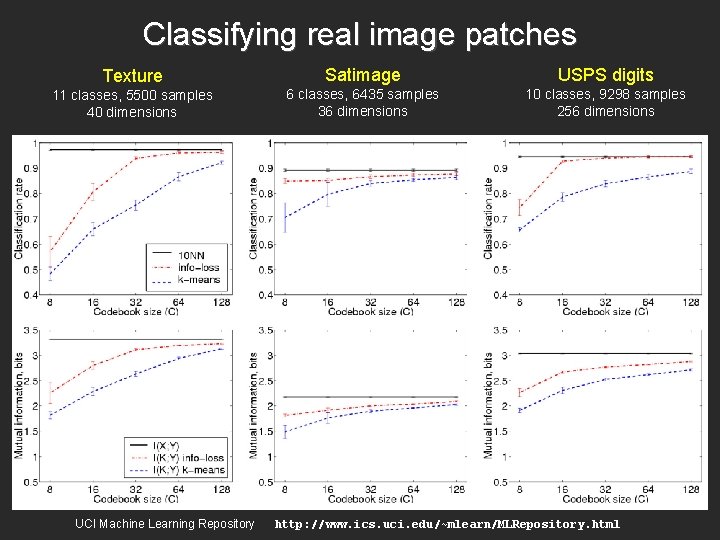

Classifying real image patches
• Texture: 11 classes, 5500 samples, 40 dimensions
• Satimage: 6 classes, 6435 samples, 36 dimensions
• USPS digits: 10 classes, 9298 samples, 256 dimensions
UCI Machine Learning Repository: http://www.ics.uci.edu/~mlearn/MLRepository.html

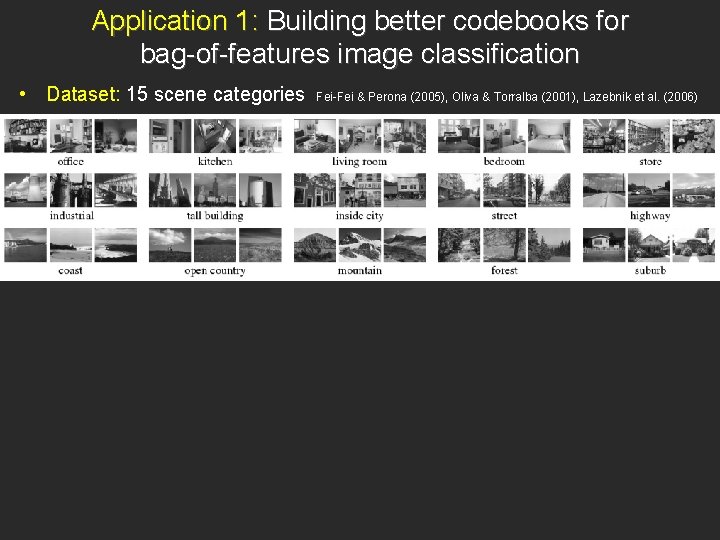

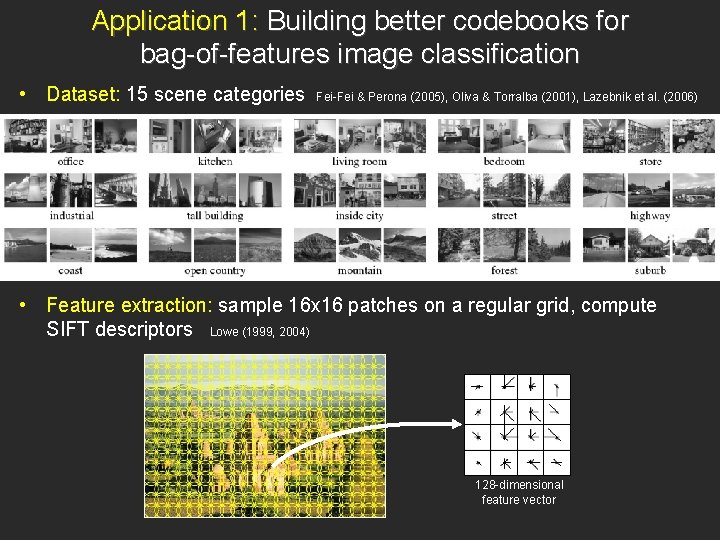

Application 1: Building better codebooks for bag-of-features image classification
• Dataset: 15 scene categories (Fei-Fei & Perona, 2005; Oliva & Torralba, 2001; Lazebnik et al., 2006)
• Feature extraction: sample 16 x 16 patches on a regular grid and compute SIFT descriptors (Lowe, 1999, 2004), giving a 128-dimensional feature vector

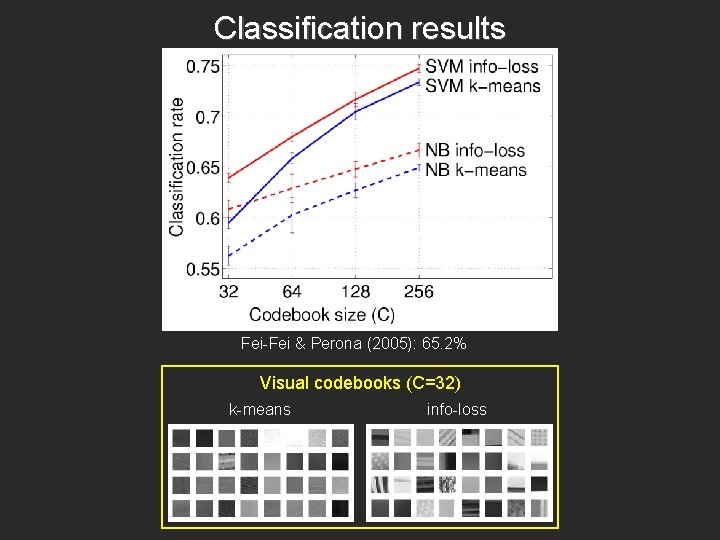

Classification results
• Fei-Fei & Perona (2005): 65.2%
• Visual codebooks (C = 32): k-means vs. info-loss

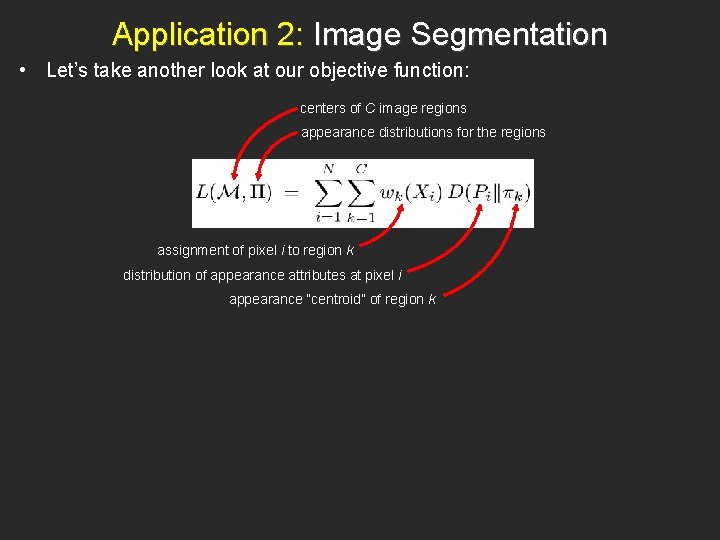

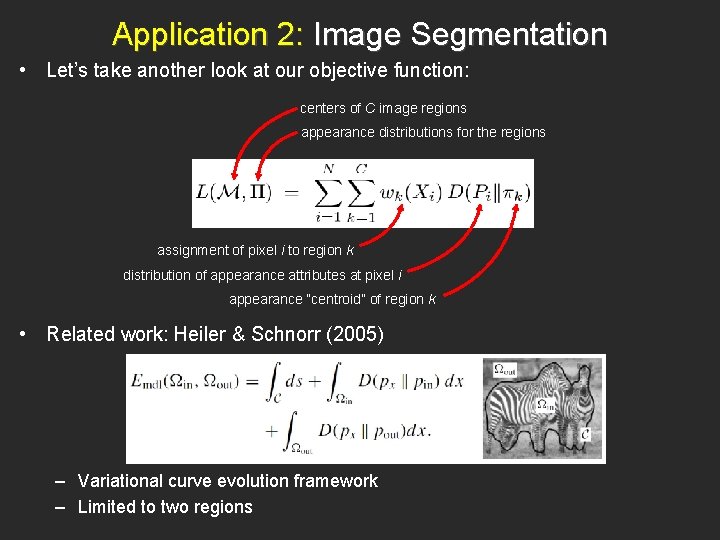

Application 2: Image Segmentation
• Let’s take another look at our objective function, reading each term in segmentation terms:
  – M: centers of C image regions
  – Π: appearance distributions for the regions
  – Soft assignment: assignment of pixel i to region k
  – P_i: distribution of appearance attributes at pixel i
  – π_k: appearance “centroid” of region k
• Related work: Heiler & Schnorr (2005)
  – Variational curve evolution framework
  – Limited to two regions

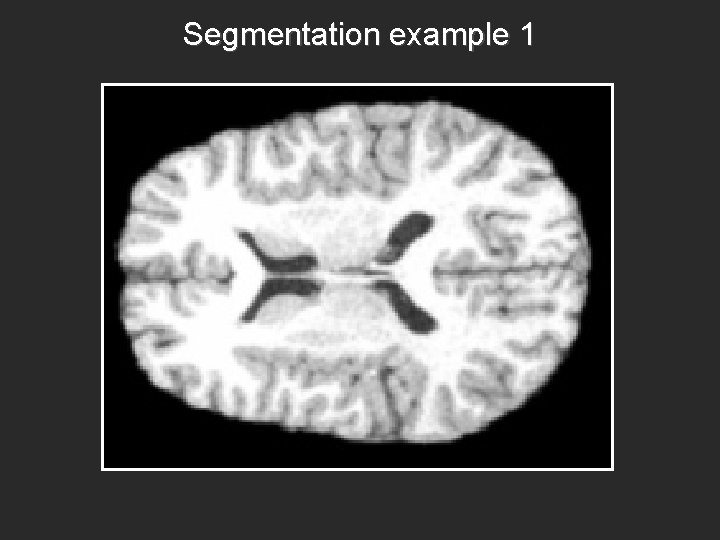

Segmentation example 1

Segmentation example 2

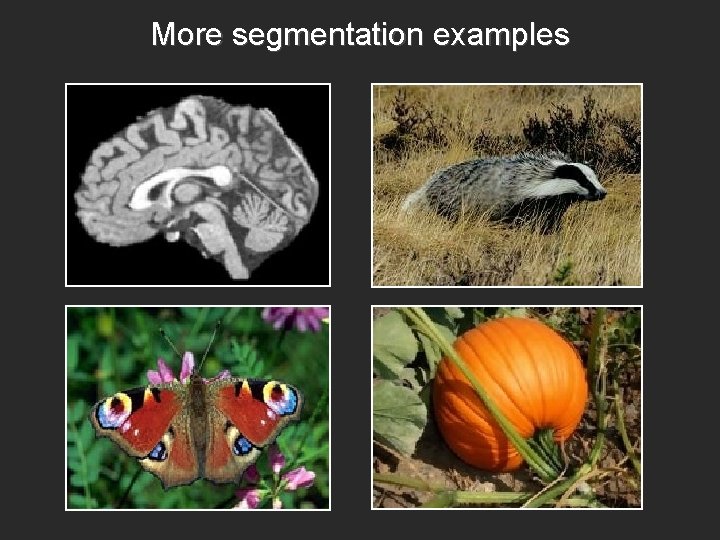

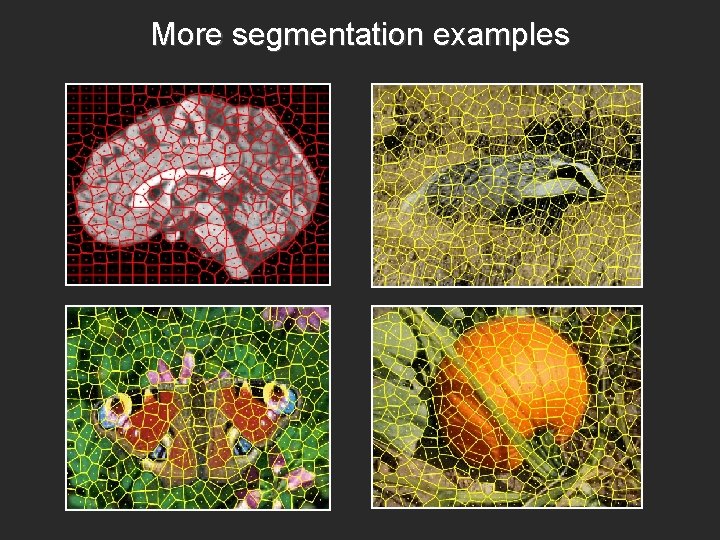

More segmentation examples

Summary: Learning quantizer codebooks by minimizing information loss
• Quantization is a key operation in forming visual representations
• The information-theoretic formulation is discriminative, yet independent of the choice of classifier
• Diverse applications: construction of visual codebooks, image segmentation

Summary of talk
• Intrinsic dimensionality estimation
  – Theoretical notion of quantization dimension leads to a practical estimation procedure
  – TSVQ for partitioning of heterogeneous datasets
• Information loss minimization
  – Simultaneous quantization of feature space and posterior class distributions
  – Applications to visual codebook design, image segmentation
• Future work: more fun with quantizers
  – VQ techniques for density estimation in the space of image patches
  – Applications to modeling of saliency and visual codebook construction
