Exploring cultural transmission by iterated learning Tom Griffiths

Tom Griffiths (Brown University) and Mike Kalish (University of Louisiana) • With thanks to: Anu Asnaani, Brian Christian, and Alana Firl

Cultural transmission • Most knowledge is based on secondhand data • Some things can only be learned from others – cultural knowledge transmitted across generations • What are the consequences of learners learning from other learners?

Iterated learning (Kirby, 2001): each learner sees data, forms a hypothesis, and produces the data given to the next learner

Objects of iterated learning • Knowledge communicated through data • Examples: – religious concepts – social norms – myths and legends – causal theories – language

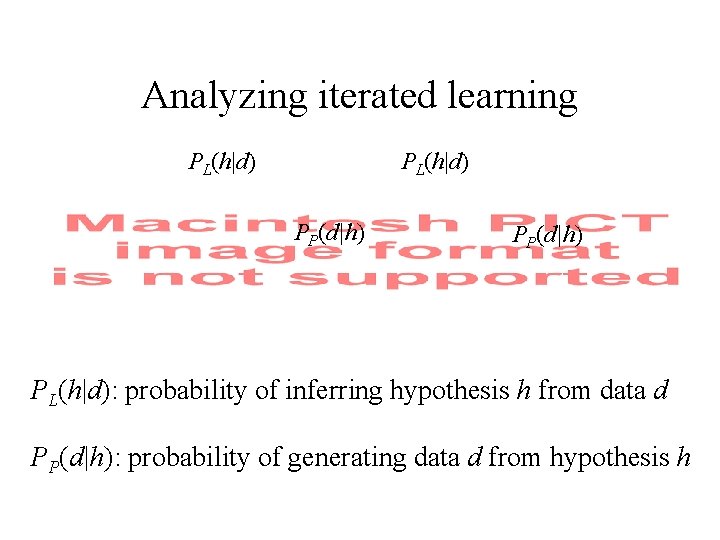

Analyzing iterated learning • $P_L(h|d)$: probability of inferring hypothesis h from data d • $P_P(d|h)$: probability of generating data d from hypothesis h

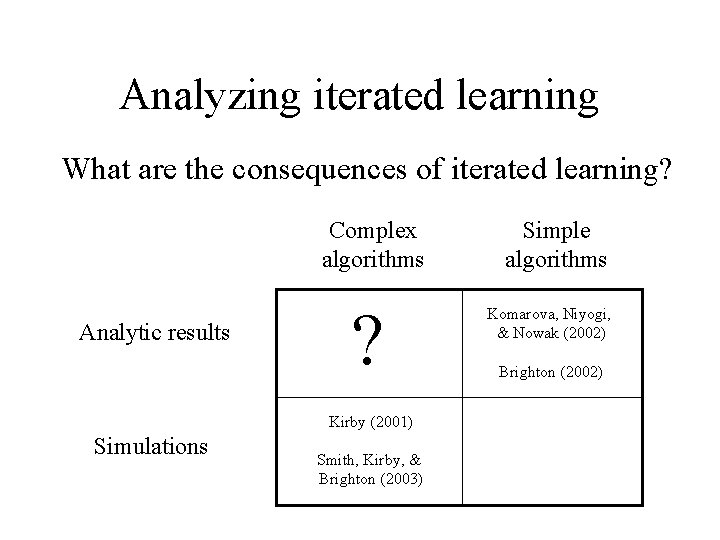

Analyzing iterated learning • What are the consequences of iterated learning? • Complex algorithms: analytic results (?); simulations: Kirby (2001); Smith, Kirby, & Brighton (2003) • Simple algorithms: Komarova, Niyogi, & Nowak (2002); Brighton (2002)

Bayesian inference • Rational procedure for updating beliefs • Foundation of many learning algorithms • Widely used for language learning • (Portrait: Reverend Thomas Bayes)

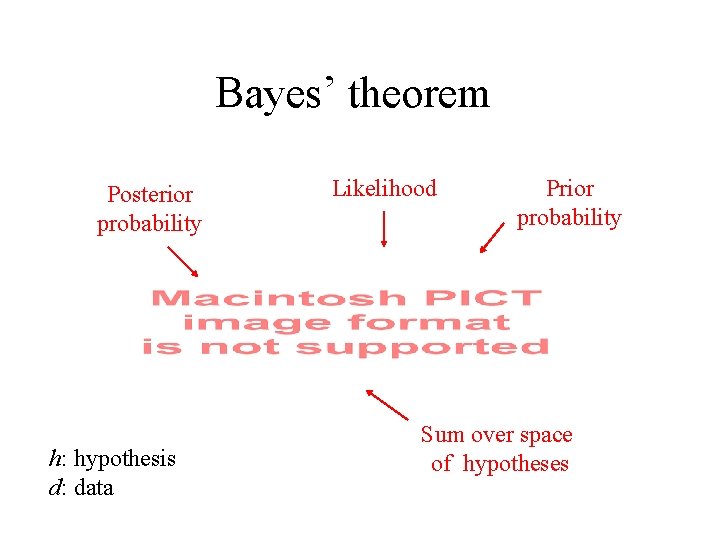

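Bayes’ theorem • $P(h|d) = \frac{P(d|h)\,P(h)}{\sum_{h' \in H} P(d|h')\,P(h')}$ • $P(h|d)$: posterior probability; $P(d|h)$: likelihood; $P(h)$: prior probability; the denominator is a sum over the space of hypotheses $H$ • h: hypothesis, d: data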
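A minimal Python sketch of Bayes' theorem over a finite hypothesis space: multiply each likelihood by its prior, then divide by the sum over all hypotheses. The coin example (hypotheses "fair" and "biased", their head probabilities, and the prior) is hypothetical and only illustrates the arithmetic.

```python
def posterior(prior, likelihood, d):
    """Bayes' theorem over a finite hypothesis space.

    prior: dict mapping each hypothesis h to P(h)
    likelihood: function (d, h) -> P(d | h)
    Returns a dict mapping each h to P(h | d).
    """
    # Numerator for every hypothesis: P(d | h) P(h)
    joint = {h: likelihood(d, h) * p for h, p in prior.items()}
    # Denominator: sum over the space of hypotheses, i.e. P(d)
    evidence = sum(joint.values())
    if evidence == 0:
        raise ValueError("data d has zero probability under every hypothesis")
    return {h: w / evidence for h, w in joint.items()}


# Hypothetical example: is a coin fair, or biased toward heads?
prior = {"fair": 0.9, "biased": 0.1}
p_heads = {"fair": 0.5, "biased": 0.9}


def coin_likelihood(d, h):
    """Probability of a particular sequence d of 'H'/'T' flips under hypothesis h."""
    result = 1.0
    for flip in d:
        result *= p_heads[h] if flip == "H" else 1 - p_heads[h]
    return result


post = posterior(prior, coin_likelihood, "HHHHH")
print(post)   # "biased" ends up at about 0.68 despite its prior of 0.1
```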

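Iterated Bayesian learning • Learners are Bayesian agents: each samples a hypothesis from its posterior $P_L(h|d) = \frac{P_P(d|h)\,p(h)}{\sum_{h'} P_P(d|h')\,p(h')}$, where $p(h)$ is the learners' prior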

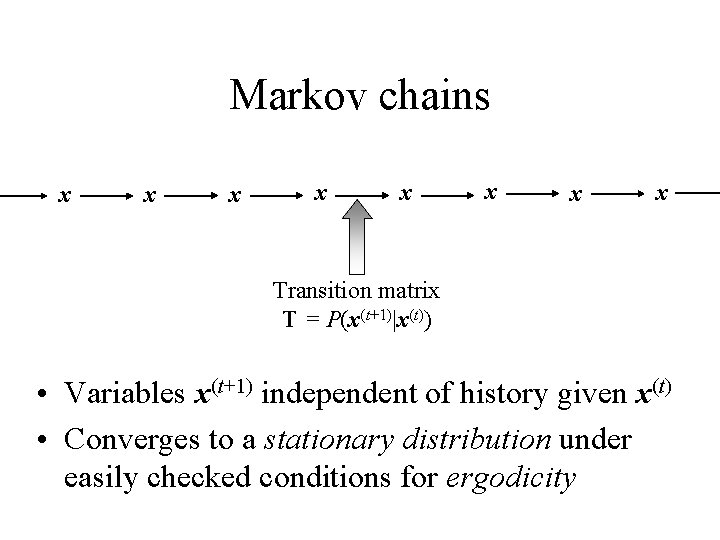

Markov chains • A sequence of states $x^{(1)} \to x^{(2)} \to x^{(3)} \to x^{(4)} \to \cdots$ • Transition matrix $T = P(x^{(t+1)} \mid x^{(t)})$ • Variables $x^{(t+1)}$ independent of history given $x^{(t)}$ • Converges to a stationary distribution under easily checked conditions for ergodicity

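Stationary distributions • Stationary distribution: $\pi(x) = \sum_{x'} P(x^{(t+1)} = x \mid x^{(t)} = x')\,\pi(x')$ • In matrix form: $\pi = T\pi$, with $T_{ij} = P(x^{(t+1)} = i \mid x^{(t)} = j)$ • $\pi$ is the first eigenvector of the matrix T (eigenvalue 1) • The second eigenvalue sets the rate of convergence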
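To make the eigenvector claim concrete, here is a small pure-Python sketch. It uses power iteration (repeatedly applying T) in place of an explicit eigendecomposition; for an ergodic chain this converges to the eigenvector with eigenvalue 1. The 2-state matrix is a hypothetical example whose eigenvalues are 1 and 0.7, so the distance to the stationary distribution should shrink by a factor of about 0.7 per step.

```python
def step(T, p):
    """One transition: p_next[i] = sum_j T[i][j] * p[j], where T[i][j] = P(x(t+1)=i | x(t)=j)."""
    n = len(p)
    return [sum(T[i][j] * p[j] for j in range(n)) for i in range(n)]


def stationary_distribution(T, tol=1e-12, max_iter=100_000):
    """Power iteration: apply T until the distribution stops changing, so that p = T p."""
    n = len(T)
    p = [1.0 / n] * n
    for _ in range(max_iter):
        q = step(T, p)
        if max(abs(a - b) for a, b in zip(p, q)) < tol:
            return q
        p = q
    raise RuntimeError("did not converge; the chain may not be ergodic")


def l1_distance(p, q):
    return sum(abs(a - b) for a, b in zip(p, q))


# Hypothetical 2-state chain; each column sums to 1. Its eigenvalues are 1 and 0.7.
T = [[0.9, 0.2],
     [0.1, 0.8]]
pi = stationary_distribution(T)   # approximately [2/3, 1/3]

# Start far from pi and watch the distance shrink.
p = [1.0, 0.0]
errors = []
for _ in range(6):
    errors.append(l1_distance(p, pi))
    p = step(T, p)
ratios = [later / earlier for earlier, later in zip(errors, errors[1:])]
print(pi, ratios)   # each ratio is close to the second eigenvalue, 0.7
```

For a 2-state chain the second eigenvalue is simply the trace minus 1 (here 0.9 + 0.8 - 1 = 0.7), which makes the example easy to check by hand.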

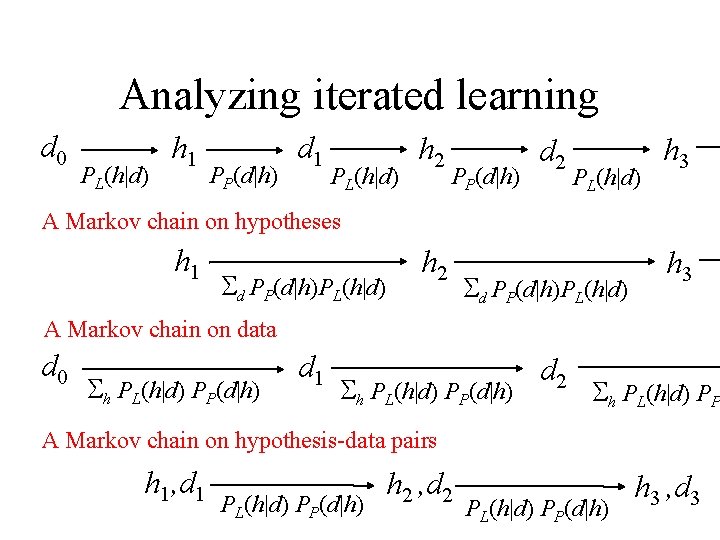

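Analyzing iterated learning • Transmission: $d_0 \xrightarrow{P_L(h|d)} h_1 \xrightarrow{P_P(d|h)} d_1 \xrightarrow{P_L(h|d)} h_2 \xrightarrow{P_P(d|h)} d_2 \xrightarrow{P_L(h|d)} h_3 \cdots$ • A Markov chain on hypotheses: $h_1 \to h_2 \to h_3 \to \cdots$, with $P(h_{t+1} \mid h_t) = \sum_d P_L(h_{t+1}|d)\,P_P(d|h_t)$ • A Markov chain on data: $d_0 \to d_1 \to d_2 \to \cdots$, with $P(d_{t+1} \mid d_t) = \sum_h P_P(d_{t+1}|h)\,P_L(h|d_t)$ • A Markov chain on hypothesis-data pairs: $(h_1, d_1) \to (h_2, d_2) \to (h_3, d_3) \to \cdots$, alternating $P_L(h|d)$ and $P_P(d|h)$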
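A sketch of the Markov chain on hypotheses, assuming a hypothetical toy model: three hypotheses are coin biases (0.2, 0.5, 0.8), the data are the number of heads in m flips, and the learner's P_L(h|d) is the Bayesian posterior under a made-up prior. It builds the transition matrix (sum over d of P_L(h'|d) P_P(d|h)) and pushes the prior through one generation, for several values of m.

```python
from math import comb


def binomial_pmf(k, m, theta):
    """P_P(d = k | h): probability of k heads in m flips of a coin with bias theta."""
    return comb(m, k) * theta**k * (1 - theta) ** (m - k)


def hypothesis_transition_matrix(thetas, prior, m):
    """T[j][i] = P(h_next = j | h = i) = sum over d of P_L(j | d) * P_P(d | i)."""
    n = len(thetas)
    lik = [[binomial_pmf(d, m, th) for th in thetas] for d in range(m + 1)]
    post = []
    for d in range(m + 1):
        # P_L(h | d) by Bayes' theorem with prior p(h)
        joint = [lik[d][i] * prior[i] for i in range(n)]
        z = sum(joint)
        post.append([w / z for w in joint])
    return [[sum(post[d][j] * lik[d][i] for d in range(m + 1)) for i in range(n)]
            for j in range(n)]


thetas = [0.2, 0.5, 0.8]   # hypothetical hypotheses (coin biases)
prior = [0.6, 0.3, 0.1]    # hypothetical prior p(h)
one_step = {}
for m in (1, 5, 20):
    T = hypothesis_transition_matrix(thetas, prior, m)
    # Push the prior through one generation of transmission.
    one_step[m] = [sum(T[j][i] * prior[i] for i in range(len(prior)))
                   for j in range(len(prior))]
    print(m, [round(x, 6) for x in one_step[m]])   # matches the prior for every m
```

The prior is unchanged because summing P_P(d|h) p(h) over h gives the prior predictive p(d), and summing P_L(h'|d) p(d) over d returns p(h'); nothing in that argument depends on m.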

Stationary distributions • Markov chain on h converges to the prior, $p(h)$ • Markov chain on d converges to the “prior predictive distribution”, $p(d) = \sum_h P_P(d|h)\,p(h)$ • Markov chain on (h, d) is a Gibbs sampler for the joint distribution $p(d, h) = P_P(d|h)\,p(h)$
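The Gibbs-sampler view suggests a direct simulation: alternately sample h from the learner's posterior and d from P_P(d|h), then compare how often each hypothesis is chosen with the prior. This sketch assumes a hypothetical coin model (biases 0.2, 0.5, 0.8; prior 0.6, 0.3, 0.1; m = 5 flips per generation) and learners that sample from their posterior. The frequencies are Monte Carlo estimates, so they match the prior only approximately.

```python
import random
from collections import Counter

thetas = [0.2, 0.5, 0.8]   # hypothetical hypotheses (coin biases)
prior = [0.6, 0.3, 0.1]    # hypothetical prior p(h)
m = 5                      # number of flips passed from one learner to the next


def produce(h, rng):
    """Sample d ~ P_P(d | h): number of heads in m flips of a coin with bias thetas[h]."""
    return sum(rng.random() < thetas[h] for _ in range(m))


def learn(d, rng):
    """Sample h ~ P_L(h | d). The binomial coefficient is the same for every h, so it is dropped."""
    weights = [p * th**d * (1 - th) ** (m - d) for p, th in zip(prior, thetas)]
    return rng.choices(range(len(thetas)), weights=weights)[0]


def iterated_learning(generations, d0, seed=0):
    """Run one chain d0 -> h1 -> d1 -> h2 -> ... and return the hypotheses chosen."""
    rng = random.Random(seed)
    d, hs = d0, []
    for _ in range(generations):
        h = learn(d, rng)     # P_L(h | d)
        d = produce(h, rng)   # P_P(d | h)
        hs.append(h)
    return hs


hs = iterated_learning(50_000, d0=m)   # start from extreme data: all heads
counts = Counter(hs)
freqs = [counts[i] / len(hs) for i in range(len(thetas))]
print(freqs)   # close to the prior [0.6, 0.3, 0.1]
```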

Implications • The probability that the nth learner entertains the hypothesis h approaches $p(h)$ as $n \to \infty$ • Convergence to the prior occurs regardless of: – the properties of the hypotheses themselves – the amount or structure of the data transmitted • The consequences of iterated learning are determined entirely by the biases of the learners
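A sketch of the first implication under a hypothetical coin model (biases 0.2, 0.5, 0.8; prior 0.6, 0.3, 0.1): starting from extreme initial data (all heads), it computes the exact distribution of the nth learner's hypothesis and its total variation distance from the prior, once with little transmitted data (m = 1) and once with more (m = 20). In this toy model both distances shrink toward zero; the amount of data may change how quickly the chain gets there, but not where it ends up.

```python
from math import comb

thetas = [0.2, 0.5, 0.8]   # hypothetical hypotheses (coin biases)
prior = [0.6, 0.3, 0.1]    # hypothetical prior p(h)


def likelihood(d, m, theta):
    """P_P(d | h): probability of d heads in m flips of a coin with bias theta."""
    return comb(m, d) * theta**d * (1 - theta) ** (m - d)


def learner_posterior(d, m):
    """P_L(h | d) for every hypothesis, by Bayes' theorem."""
    joint = [p * likelihood(d, m, th) for p, th in zip(prior, thetas)]
    z = sum(joint)
    return [w / z for w in joint]


def distance_to_prior(m, generations):
    """Total variation distance between P(h_n) and p(h) for n = 1, ..., generations,
    when the first learner sees the initial data d0 = m heads out of m flips."""
    n_h = len(thetas)
    post = [learner_posterior(d, m) for d in range(m + 1)]
    p_h = post[m]   # distribution of h_1 given d0
    out = []
    for _ in range(generations):
        out.append(0.5 * sum(abs(a - b) for a, b in zip(p_h, prior)))
        # P(d_n = d) = sum_i P_P(d | i) P(h_n = i)
        p_d = [sum(likelihood(d, m, thetas[i]) * p_h[i] for i in range(n_h))
               for d in range(m + 1)]
        # P(h_{n+1} = j) = sum_d P_L(j | d) P(d_n = d)
        p_h = [sum(post[d][j] * p_d[d] for d in range(m + 1)) for j in range(n_h)]
    return out


for m in (1, 20):
    tv = distance_to_prior(m, 500)
    print(m, [f"{tv[n]:.1e}" for n in (0, 9, 99, 499)])
```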

Identifying inductive biases • Many problems in cognitive science can be formulated as problems of induction – learning languages, concepts, and causal relations • Such problems are not solvable without bias (e.g., Goodman, 1955; Kearns & Vazirani, 1994; Vapnik, 1995) • What biases guide human inductive inferences? • If iterated learning converges to the prior, then it may provide a method for investigating biases

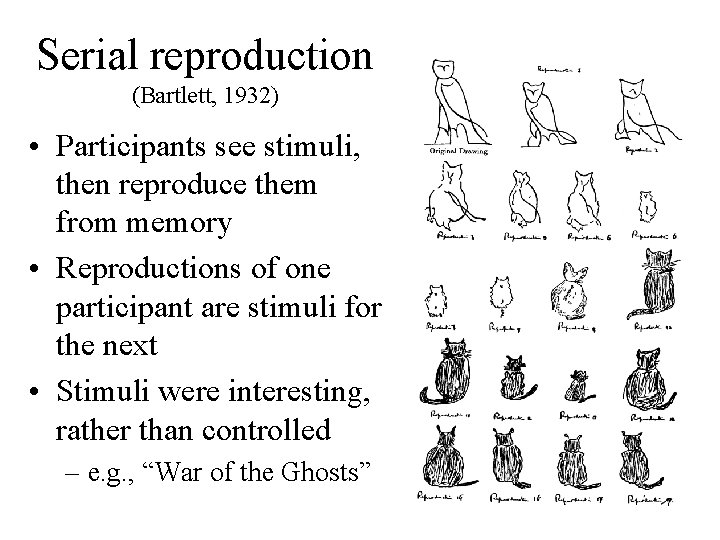

Serial reproduction (Bartlett, 1932) • Participants see stimuli, then reproduce them from memory • Reproductions of one participant are stimuli for the next • Stimuli were interesting, rather than controlled – e.g., “War of the Ghosts”

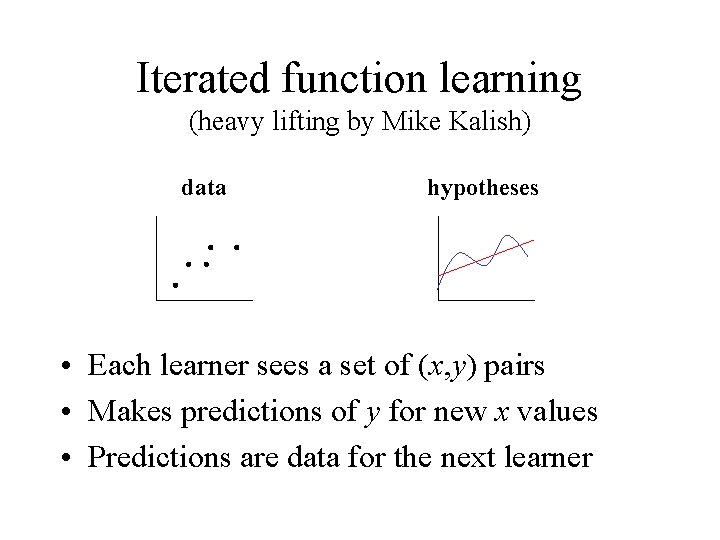

Iterated function learning (heavy lifting by Mike Kalish) • Each learner sees a set of (x, y) pairs • Makes predictions of y for new x values • Predictions are data for the next learner

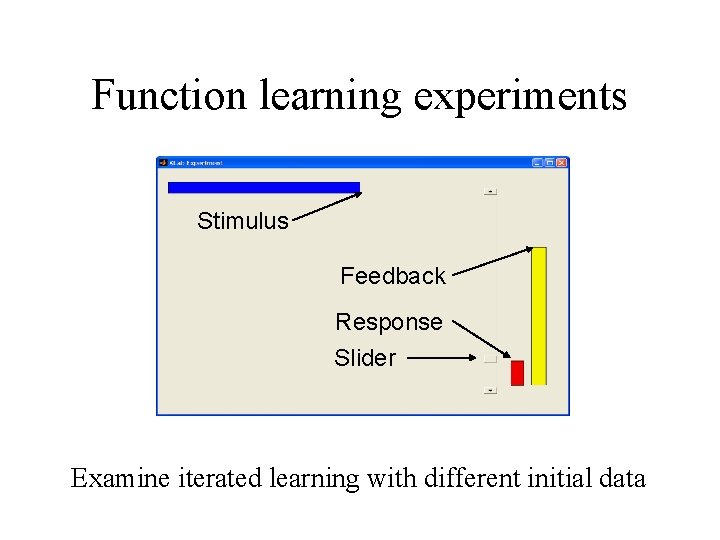

Function learning experiments • Trial display: stimulus, response slider, feedback • Examine iterated learning with different initial data

(Figure: initial data and the functions produced at iterations 1 to 9)

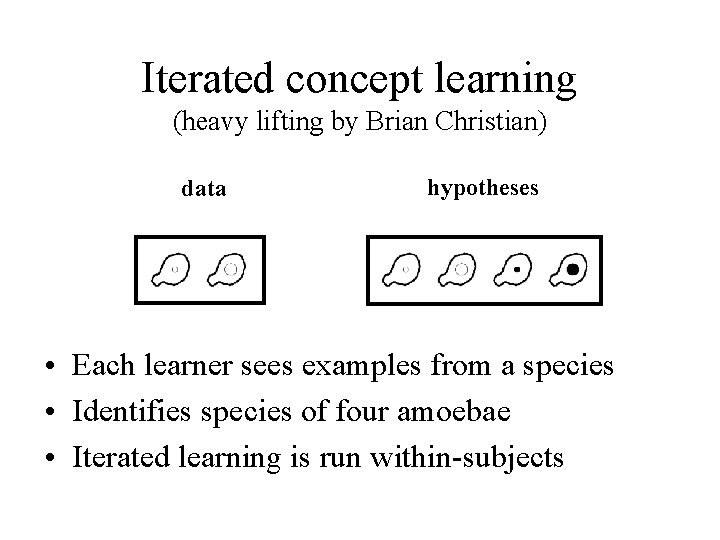

Iterated concept learning (heavy lifting by Brian Christian) • Each learner sees examples from a species • Identifies species of four amoebae • Iterated learning is run within-subjects

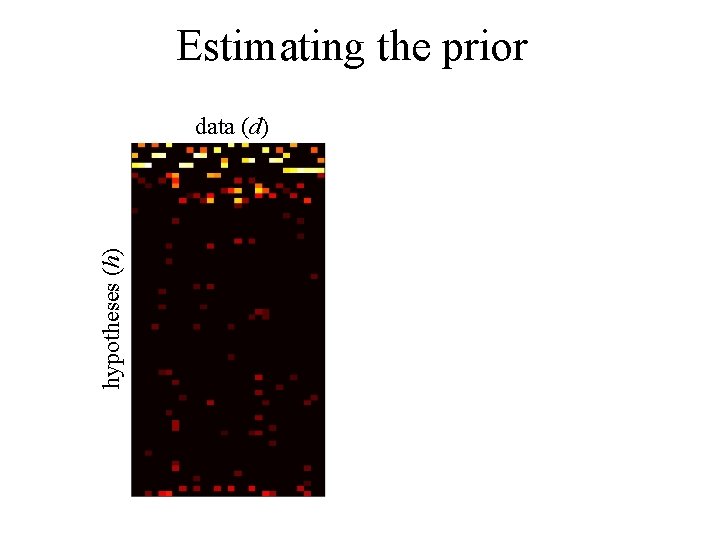

Two positive examples • (Figure: data d and hypotheses h)

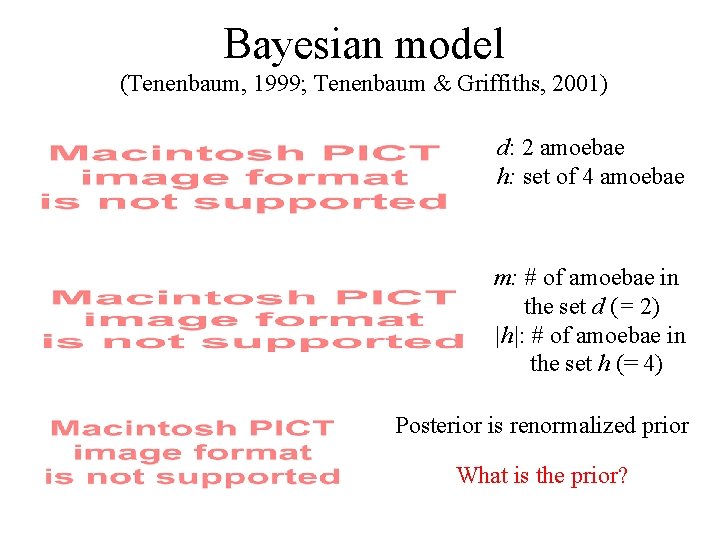

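Bayesian model (Tenenbaum, 1999; Tenenbaum & Griffiths, 2001) • d: 2 amoebae; h: set of 4 amoebae • m: number of amoebae in the set d (= 2); |h|: number of amoebae in the set h (= 4) • Likelihood: $P(d|h) = (1/|h|)^m$ if every amoeba in d is in h, and 0 otherwise • Since |h| = 4 for every hypothesis, all hypotheses consistent with d have the same likelihood, so the posterior is the renormalized prior • What is the prior?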
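A minimal sketch of this model under labeled assumptions: eight amoebae numbered 0 to 7, every set of four as a hypothesis, and an arbitrary random prior standing in for the unknown one. The size-principle likelihood gives the same value, (1/4)^2 = 1/16, to every set containing both examples, so the posterior should equal the prior restricted to those 15 sets and renormalized.

```python
import random
from itertools import combinations


def likelihood(d, h):
    """Size principle: m examples drawn uniformly from h give (1/|h|)^m if all are in h, else 0."""
    return (1.0 / len(h)) ** len(d) if set(d) <= h else 0.0


def posterior(d, prior):
    """prior maps each hypothesis (a frozenset of amoebae) to its prior probability."""
    joint = {h: likelihood(d, h) * p for h, p in prior.items()}
    z = sum(joint.values())
    return {h: w / z for h, w in joint.items()}


# Hypothetical setup: 8 amoebae labeled 0..7; every set of 4 is a hypothesis.
hypotheses = [frozenset(c) for c in combinations(range(8), 4)]   # 70 sets
rng = random.Random(1)
raw = {h: rng.random() for h in hypotheses}                      # arbitrary stand-in prior
total = sum(raw.values())
prior = {h: w / total for h, w in raw.items()}

d = (0, 1)   # two positive examples
post = posterior(d, prior)

# Every h has |h| = 4, so each set containing d gets likelihood (1/4)**2 = 1/16.
# The posterior should therefore be the prior restricted to those sets, renormalized.
consistent = [h for h in hypotheses if set(d) <= h]
z = sum(prior[h] for h in consistent)
renormalized_prior = {h: prior[h] / z for h in consistent}
print(len(consistent))   # 15 sets contain both amoebae 0 and 1
```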

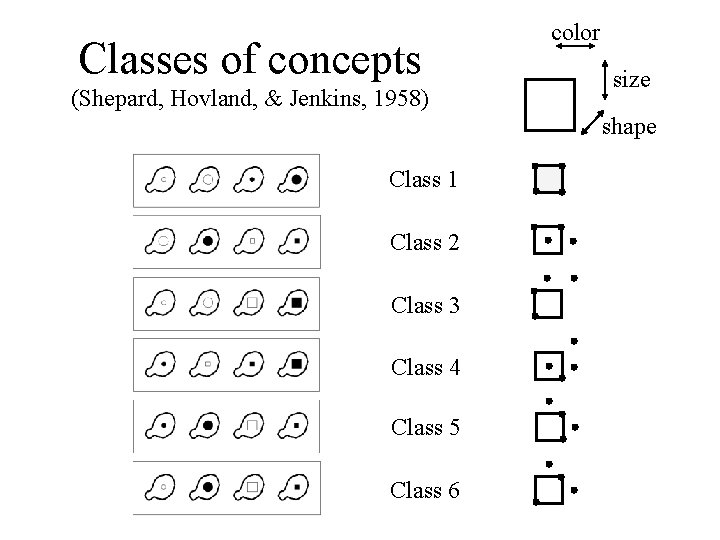

Classes of concepts (Shepard, Hovland, & Jenkins, 1961) • Stimuli vary in color, size, and shape • Class 1, Class 2, Class 3, Class 4, Class 5, Class 6
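One way to see where the six classes come from: with three binary dimensions there are 8 stimuli and C(8, 4) = 70 ways to choose four of them as the members of a concept. If two choices count as the same class whenever one can be turned into the other by permuting the dimensions or swapping the two values of any dimension, the sketch below groups the 70 choices and finds six groups. It does not say which group corresponds to which numbered class.

```python
from itertools import combinations, permutations, product

# The 8 stimuli: every combination of three binary features (e.g. color, size, shape).
STIMULI = list(product((0, 1), repeat=3))


def symmetries():
    """All 48 relabelings: permute the three dimensions, then optionally flip each one."""
    for perm in permutations(range(3)):
        for flips in product((0, 1), repeat=3):
            yield lambda s, perm=perm, flips=flips: tuple(s[perm[k]] ^ flips[k] for k in range(3))


def canonical(concept):
    """Smallest image of a 4-stimulus concept under all symmetries; equal for whole groups."""
    return min(tuple(sorted(g(s) for s in concept)) for g in symmetries())


concepts = list(combinations(STIMULI, 4))   # 70 ways to pick the 4 members
groups = {}
for c in concepts:
    groups.setdefault(canonical(c), []).append(c)

print(len(concepts), len(groups))            # 70 6
print(sorted(len(v) for v in groups.values()))   # [2, 6, 6, 8, 24, 24]
```

Because the 48 relabelings form a group, every member of a group maps to the same canonical form, so counting distinct canonical forms counts the classes.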

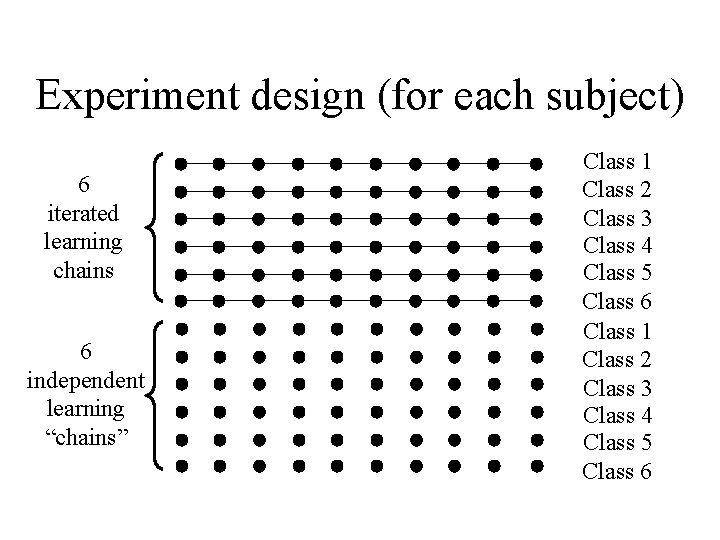

Experiment design (for each subject) • 6 iterated learning chains • 6 independent learning “chains” • (Figure: chains labeled Class 1 to Class 6)

Estimating the prior • (Figure: hypotheses h and data d)

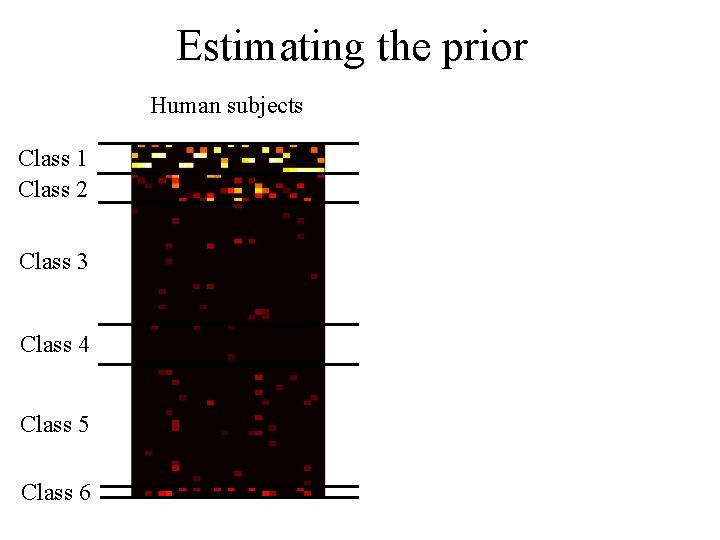

Estimating the prior • Estimated prior probability of each class: Class 1: 0.861; Class 2: 0.087; Class 3: 0.009; Class 4: 0.002; Class 5: 0.013; Class 6: 0.028 • Human subjects vs. Bayesian model: r = 0.952

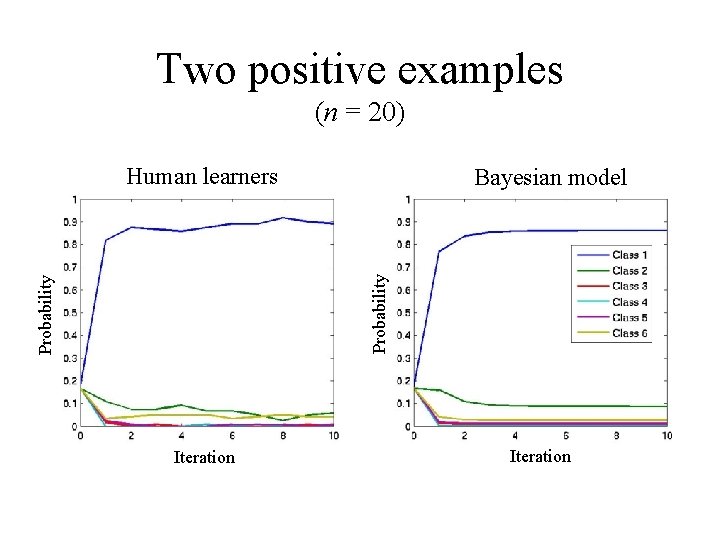

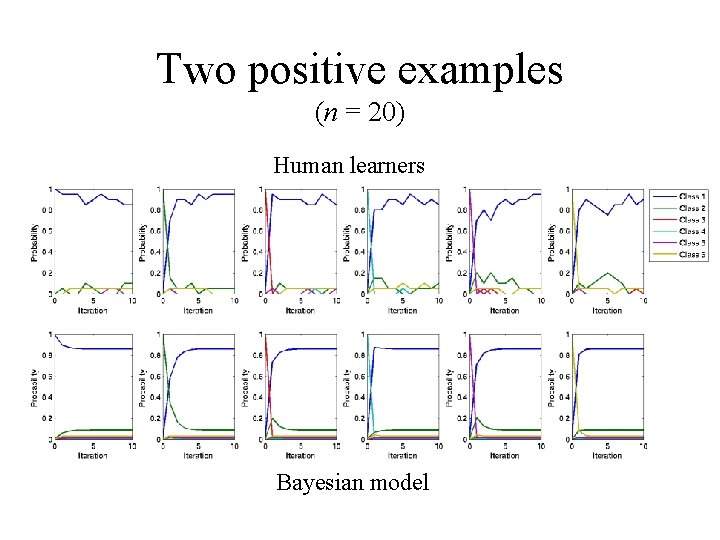

Two positive examples (n = 20) • (Figure: probability by iteration, human learners vs. Bayesian model)

Two positive examples (n = 20) • (Figure: probability, human learners vs. Bayesian model)

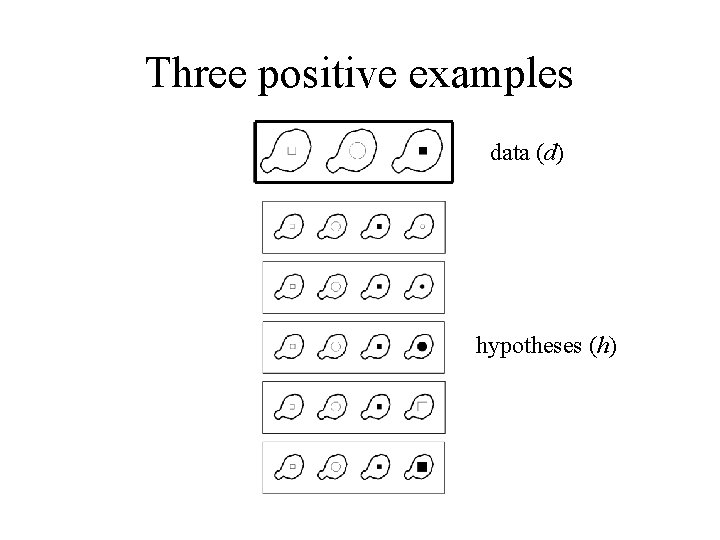

Three positive examples • (Figure: data d and hypotheses h)

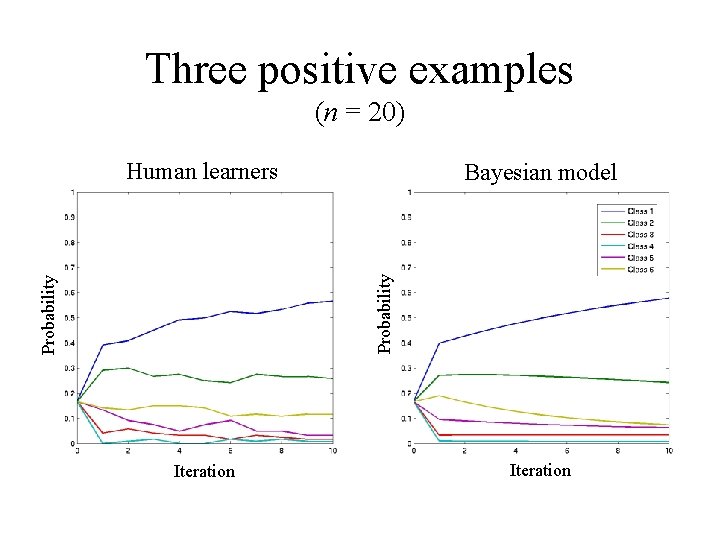

Three positive examples (n = 20) • (Figure: probability by iteration, human learners vs. Bayesian model)

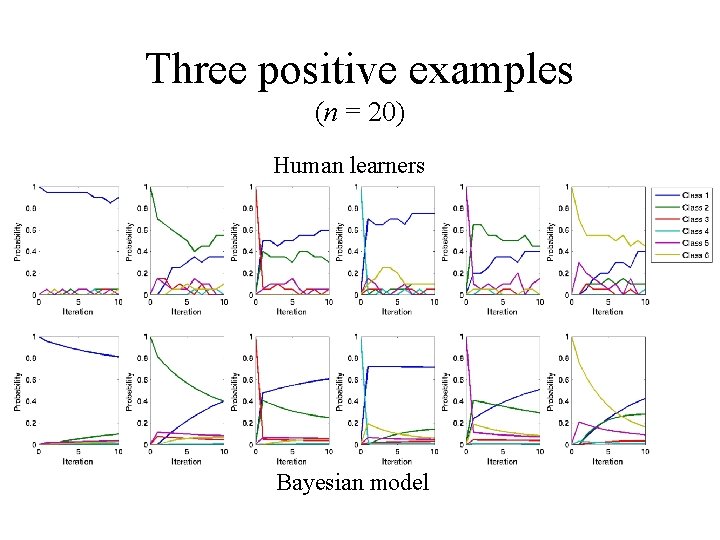

Three positive examples (n = 20) • (Figure: human learners vs. Bayesian model)

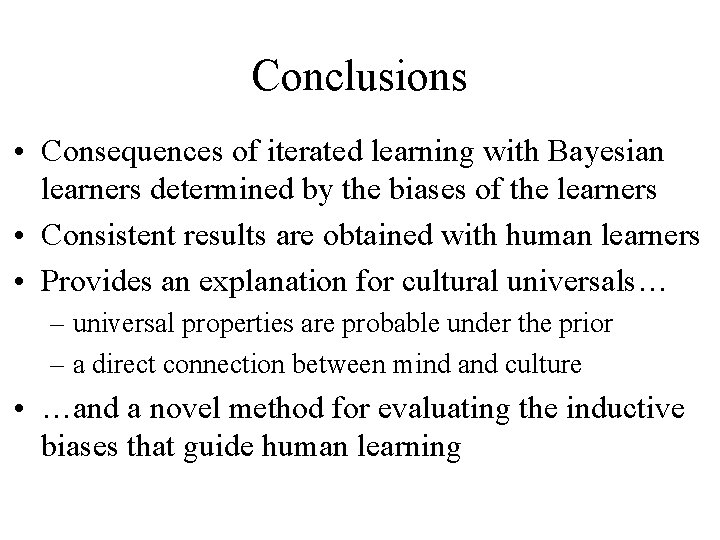

Conclusions • Consequences of iterated learning with Bayesian learners are determined by the biases of the learners • Consistent results are obtained with human learners • Provides an explanation for cultural universals… – universal properties are probable under the prior – a direct connection between mind and culture • …and a novel method for evaluating the inductive biases that guide human learning

Discovering the biases of models Generic neural network:

Discovering the biases of models EXAM (DeLosh, Busemeyer, & McDaniel, 1997):

Discovering the biases of models POLE (Kalish, Lewandowsky, & Kruschke, 2004):
