Exploitation of Instruction-Level Parallelism (ILP), course ELEG 652-03



ELEG 652-03, Fall, Topic 3

Reading List

- Slides: Topic 4 x
- Hennessy & Patterson: Chapter 4
- Other assigned readings from homework and classes

![Design space for processors: cycles per instruction versus clock rate (Theobald, Gao, Hen. 1992, 1993, 1994)](https://slidetodoc.com/presentation_image_h2/80cc4dc17d99f6673f948681c5db8c45/image-3.jpg)

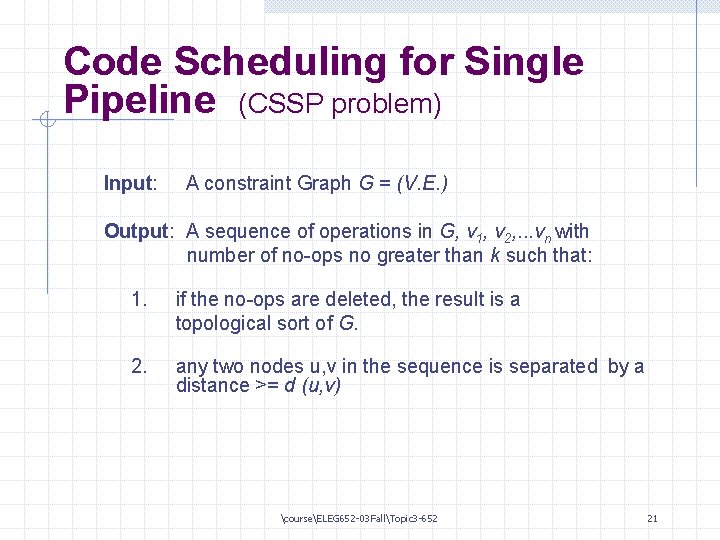

Design Space for Processors. Enough Parallelism? [Theobald, Gao, Hen. 1992, 1993, 1994]

Figure: cycles per instruction (vertical axis, 0.05 to 20) versus clock rate in MHz (horizontal axis, 5 to 1000). Regions shown: Scalar CISC, Scalar RISC, Superpipelined, Superscalar RISC, VLIW, Multithreaded, Vector Supercomputer, and the most likely future processor space.

Pipelining - A Review

Hazards

- Structural: resource conflicts when the hardware cannot support all possible combinations of instructions in overlapped execution.
- Data: an instruction depends on the result of a previous instruction.
- Control: caused by branches and other instructions that change the PC.

A hazard causes a “stall”. In a pipeline a stall is serious because it holds up multiple instructions.

RISC Concepts: Revisit

- What makes it a success? Pipelining and caches.
- What prevents CPI = 1? Hazards and their resolution.

Def: dependence graph

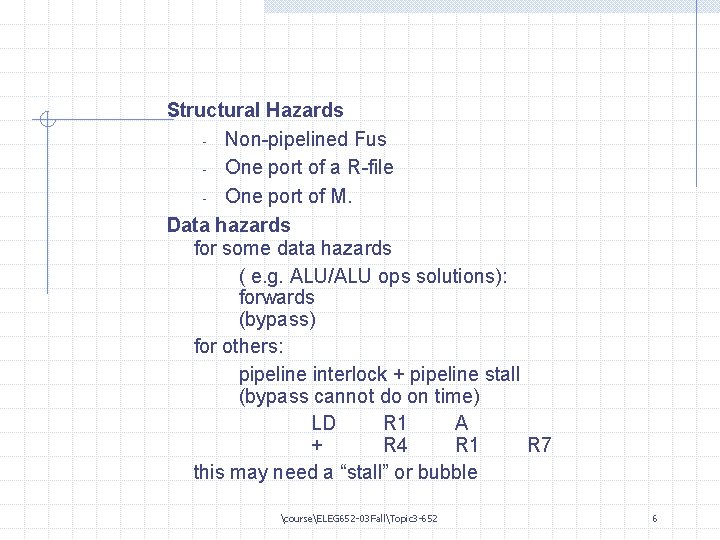

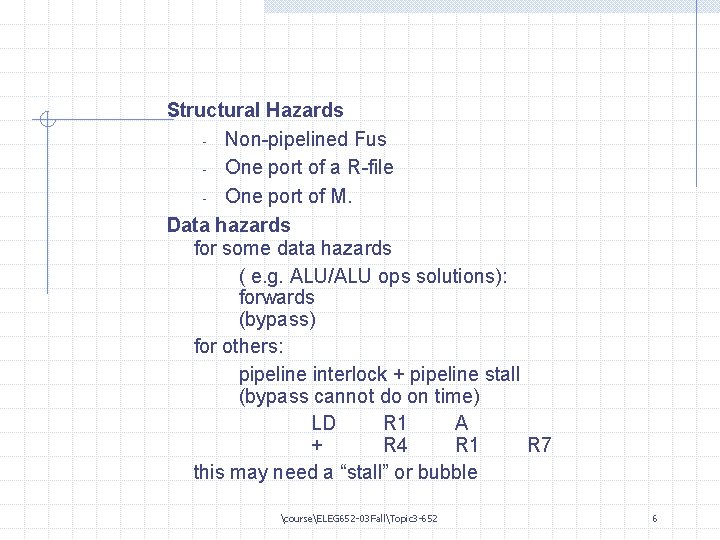

Structural Hazards

- Non-pipelined FUs
- One port of a register file
- One port of memory

Data Hazards

- For some data hazards (e.g. ALU/ALU ops), the solution is forwarding (bypass).
- For others: pipeline interlock + pipeline stall (the bypass cannot deliver the value in time).

Example:

    LD  R1, A
    ADD R4, R1, R7    ; this may need a “stall” or bubble

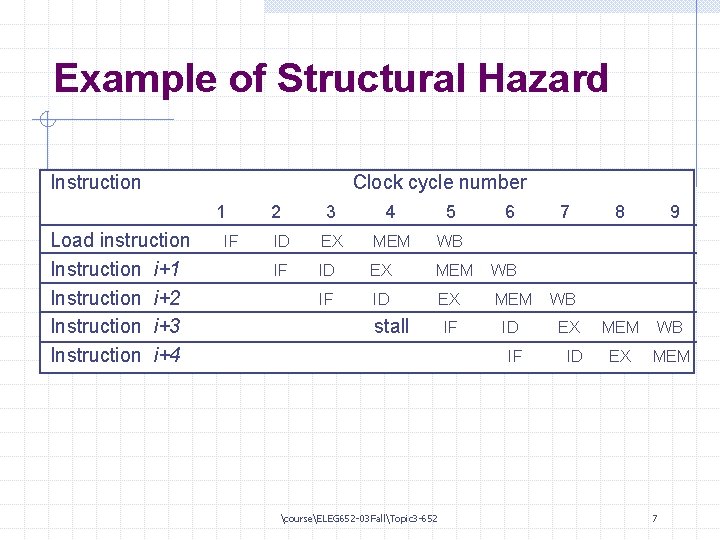

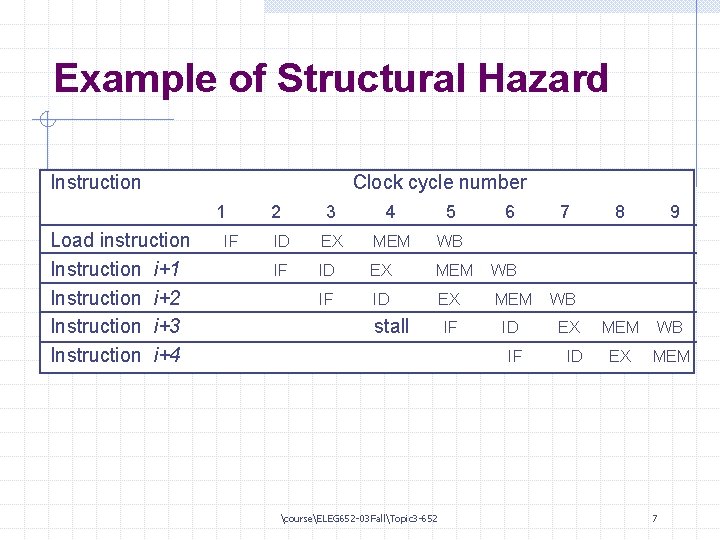

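Example of Structural Hazard

| Instruction | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|
| Load instruction | IF | ID | EX | MEM | WB | | | | |
| Instruction i+1 | | IF | ID | EX | MEM | WB | | | |
| Instruction i+2 | | | IF | ID | EX | MEM | WB | | |
| Instruction i+3 | | | | stall | IF | ID | EX | MEM | |
| Instruction i+4 | | | | | | | | | |

Columns are clock cycle numbers.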

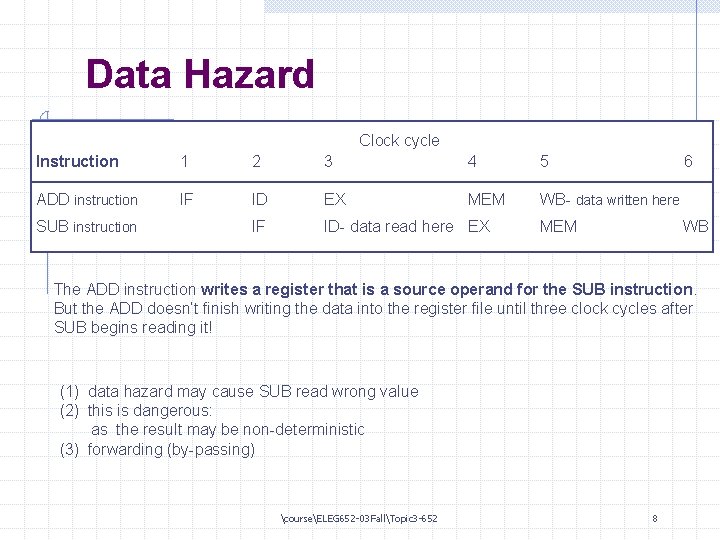

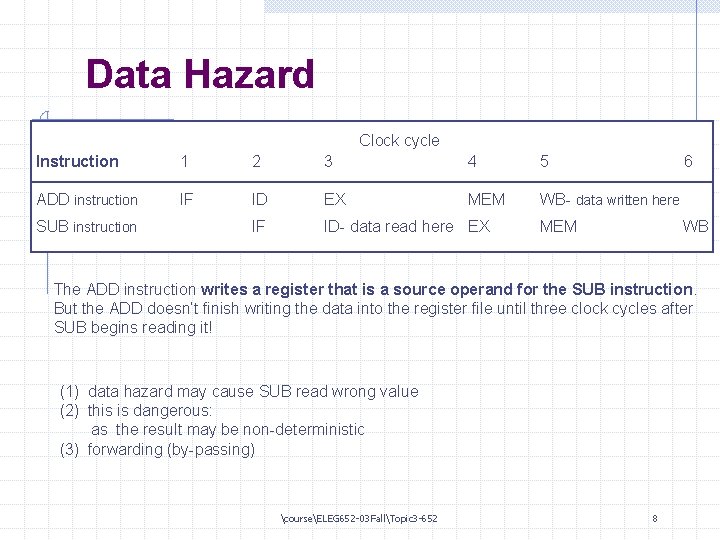

Data Hazard

| Instruction | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| ADD instruction | IF | ID | EX | MEM | WB (data written here) | |
| SUB instruction | | IF | ID (data read here) | EX | MEM | WB |

Columns are clock cycles.

The ADD instruction writes a register that is a source operand for the SUB instruction. But the ADD doesn’t finish writing the data into the register file until three clock cycles after SUB begins reading it!

1. A data hazard may cause SUB to read the wrong value.
2. This is dangerous, as the result may be non-deterministic.
3. Remedy: forwarding (bypassing).

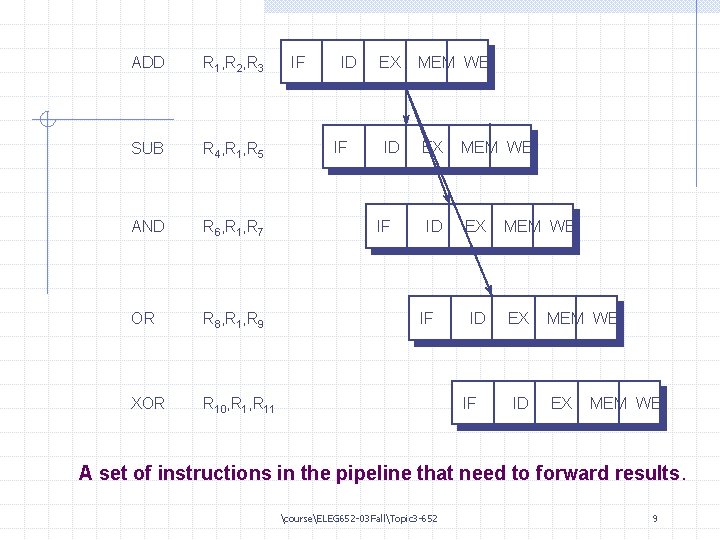

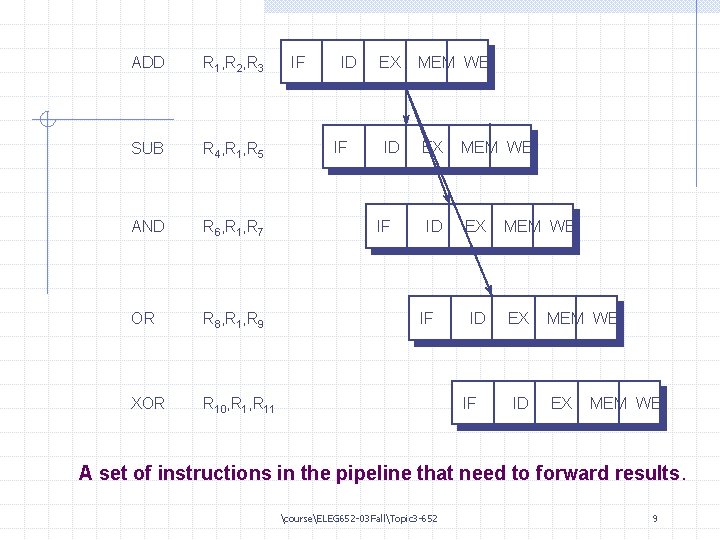

    ADD R1, R2, R3
    SUB R4, R1, R5
    AND R6, R1, R7
    OR  R8, R1, R9
    XOR R10, R1, R11

A set of instructions in the pipeline that need to forward results. In the pipeline diagram each instruction enters IF one cycle after its predecessor and moves through IF, ID, EX, MEM and WB.

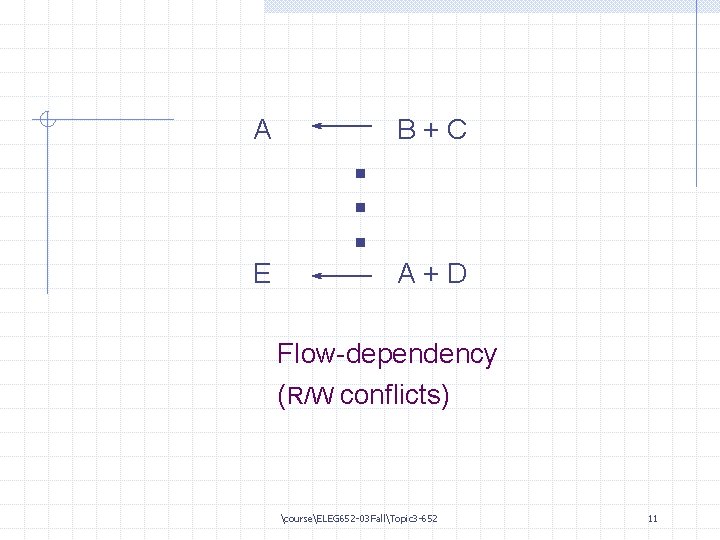

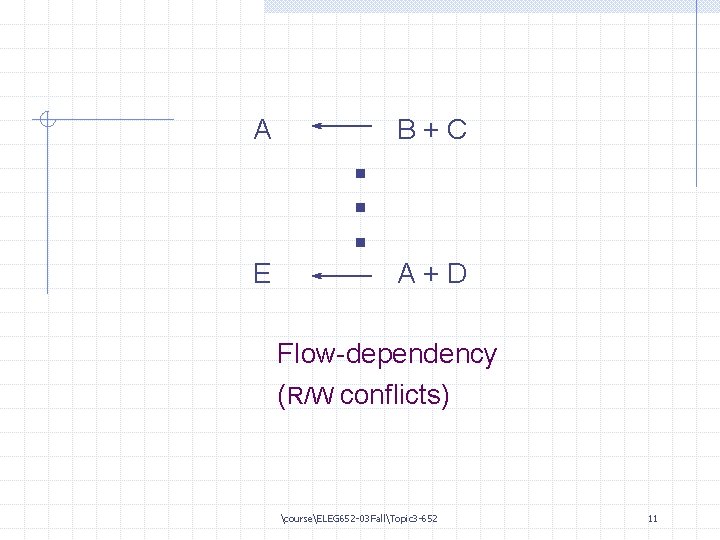

Flow dependency (R/W conflicts)

    A ← B + C
    ...
    E ← A + D

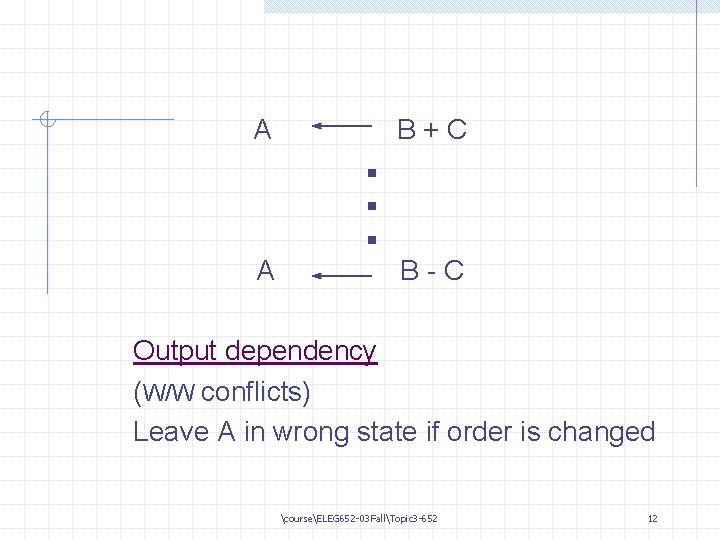

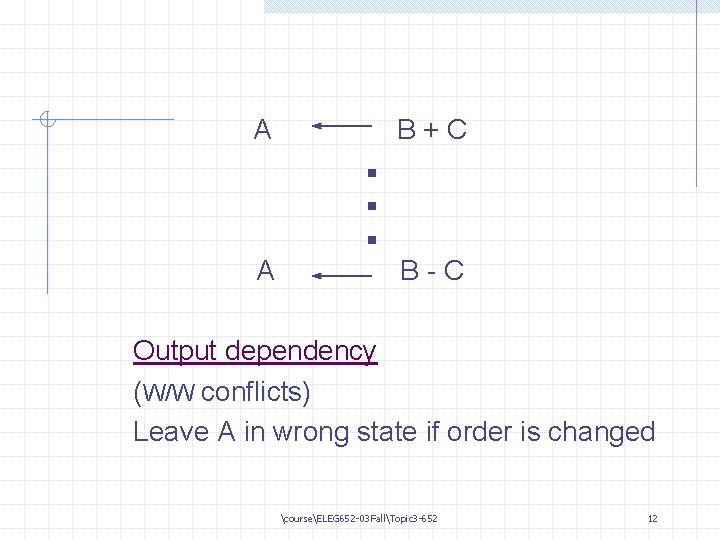

Output dependency (W/W conflicts)

    A ← B + C
    ...
    A ← B - C

Changing the order leaves A in the wrong state.

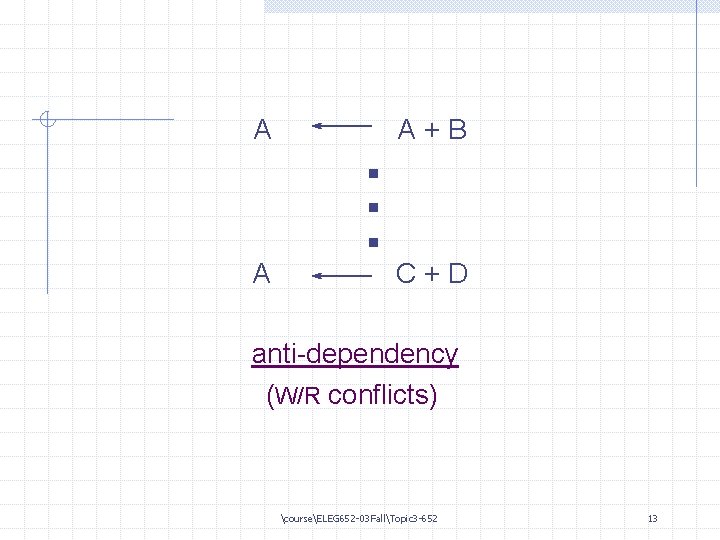

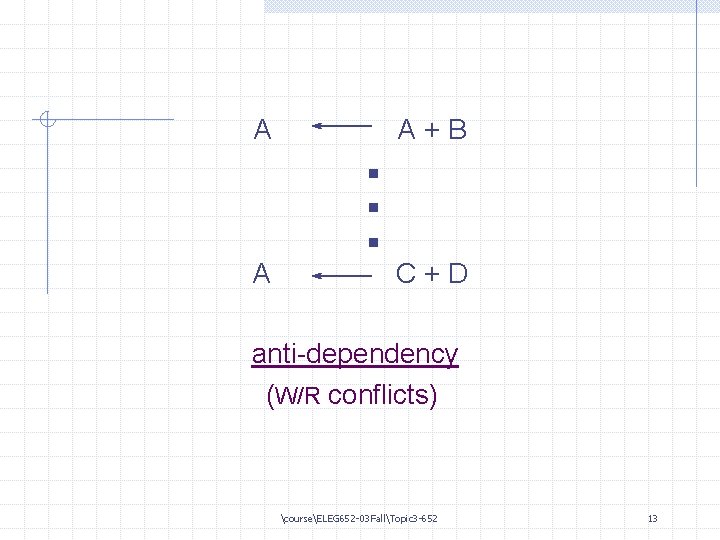

Anti-dependency (W/R conflicts)

    A ← A + B
    ...
    A ← C + D
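The three kinds of dependence above can be told apart mechanically from the registers or variables each statement reads and writes. The following is a minimal sketch, not part of the original slides: `Stmt` and `classify` are hypothetical names, and the third pair is taken here as `A <- A+B` followed by `A <- C+D`, which is an assumption about the slide's layout.

```python
from typing import NamedTuple


class Stmt(NamedTuple):
    """One assignment `dest <- f(srcs)`. Hypothetical helper type."""
    dest: str
    srcs: frozenset


def classify(first: Stmt, second: Stmt) -> set:
    """Return the dependences of `second` on the earlier statement `first`."""
    deps = set()
    if first.dest in second.srcs:
        deps.add("flow")    # second reads what first wrote
    if first.dest == second.dest:
        deps.add("output")  # both write the same location
    if second.dest in first.srcs:
        deps.add("anti")    # second overwrites what first read
    return deps


# A <- B+C ; E <- A+D
flow = classify(Stmt("A", frozenset("BC")), Stmt("E", frozenset("AD")))
# A <- B+C ; A <- B-C
output = classify(Stmt("A", frozenset("BC")), Stmt("A", frozenset("BC")))
# A <- A+B ; A <- C+D (assumed reading of the third slide)
anti = classify(Stmt("A", frozenset("AB")), Stmt("A", frozenset("CD")))

print(flow, output, anti)
```

Under the assumed reading, the third pair carries an output dependence as well as the anti-dependence, because both statements write A.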

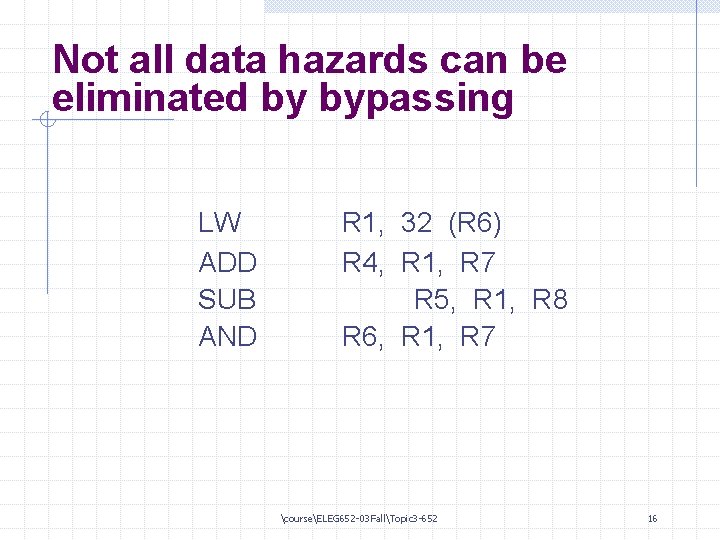

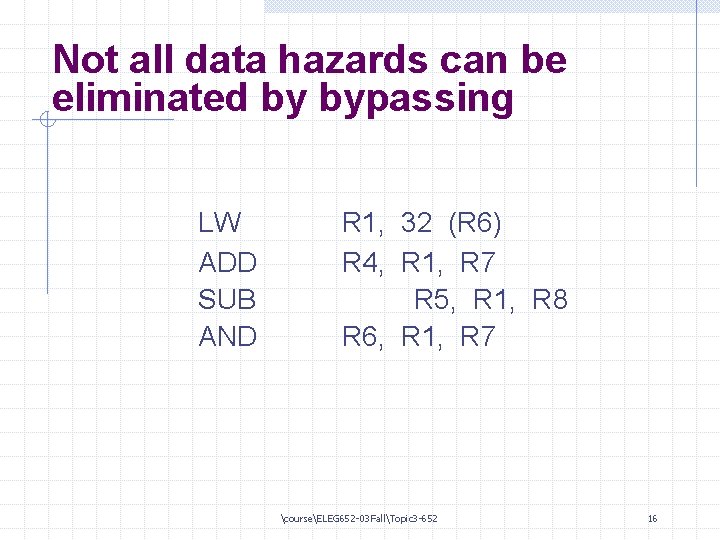

Not all data hazards can be eliminated by bypassing

    LW  R1, 32(R6)
    ADD R4, R1, R7
    SUB R5, R1, R8
    AND R6, R1, R7

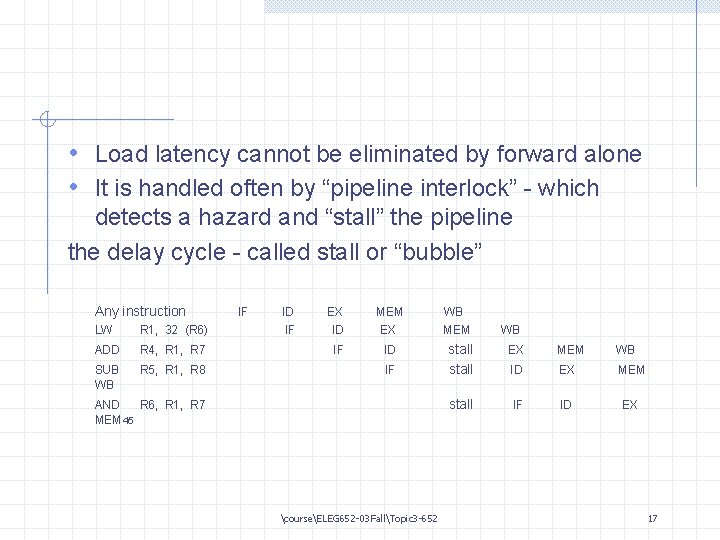

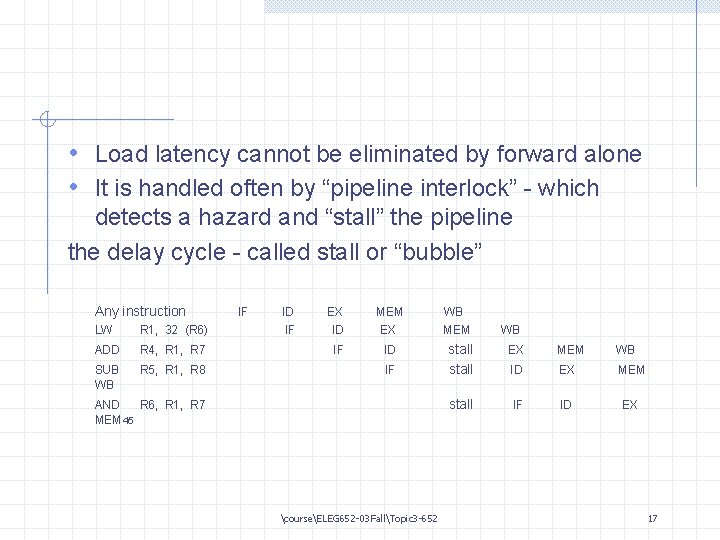

- Load latency cannot be eliminated by forwarding alone.
- It is often handled by a “pipeline interlock”, which detects a hazard and stalls the pipeline.
- The delay cycle is called a stall or “bubble”.

| Instruction | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|
| Any instruction | IF | ID | EX | MEM | WB | | | | |
| LW R1, 32(R6) | | IF | ID | EX | MEM | WB | | | |
| ADD R4, R1, R7 | | | IF | ID | stall | EX | MEM | WB | |
| SUB R5, R1, R8 | | | | IF | stall | ID | EX | MEM | WB |
| AND R6, R1, R7 | | | | | stall | IF | ID | EX | MEM |
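As a rough illustration of what the interlock has to detect, the sketch below counts the bubbles for a straight-line sequence. It assumes a 5-stage pipeline with full forwarding in which the only remaining hazard is a load immediately followed by a consumer of the loaded register; the tuple encoding and the name `count_load_stalls` are illustrative choices, not part of the slides.

```python
def count_load_stalls(program):
    """Count the bubbles a load interlock would insert.

    `program` is a list of (opcode, dest, sources) tuples in issue order.
    Assumption: with forwarding, only a load directly followed by an
    instruction that reads the loaded register costs one stall cycle.
    """
    stalls = 0
    for prev, curr in zip(program, program[1:]):
        opcode, dest, _ = prev
        if opcode == "LW" and dest in curr[2]:
            stalls += 1
    return stalls


program = [
    ("LW",  "R1", ("R6",)),
    ("ADD", "R4", ("R1", "R7")),
    ("SUB", "R5", ("R1", "R8")),
    ("AND", "R6", ("R1", "R7")),
]
print(count_load_stalls(program))  # 1: only ADD sits directly behind the load
```

SUB and AND also read R1, but by the time they reach EX the loaded value is already available through the bypass, so only one bubble is needed.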

“Issue”: an instruction issues when it passes the ID stage; instructions past that point are “issued instructions”. DLX only issues an instruction when there is no hazard. Detecting the interlock early in the pipeline has the advantage that the hardware never needs to suspend an instruction and undo its state changes.

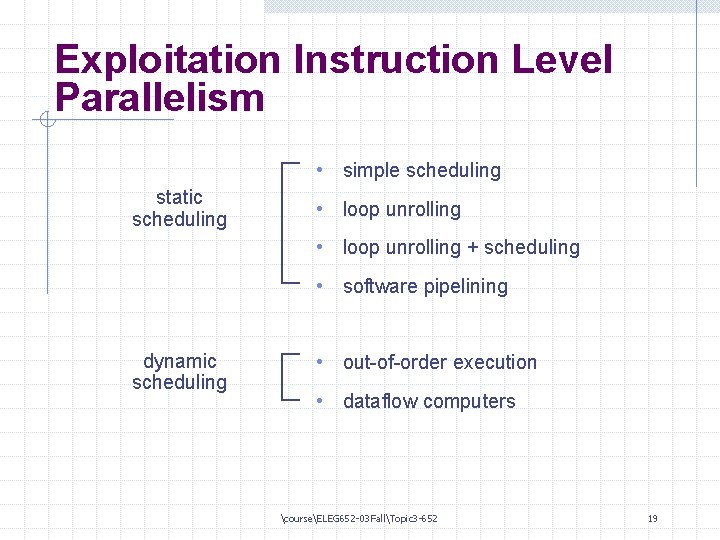

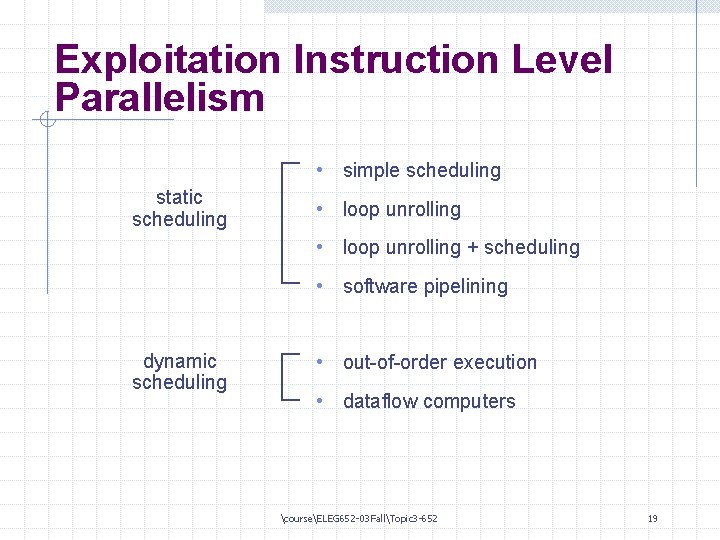

Exploitation of Instruction-Level Parallelism

- Static scheduling
  - simple scheduling
  - loop unrolling + scheduling
  - software pipelining
- Dynamic scheduling
  - out-of-order execution
  - dataflow computers

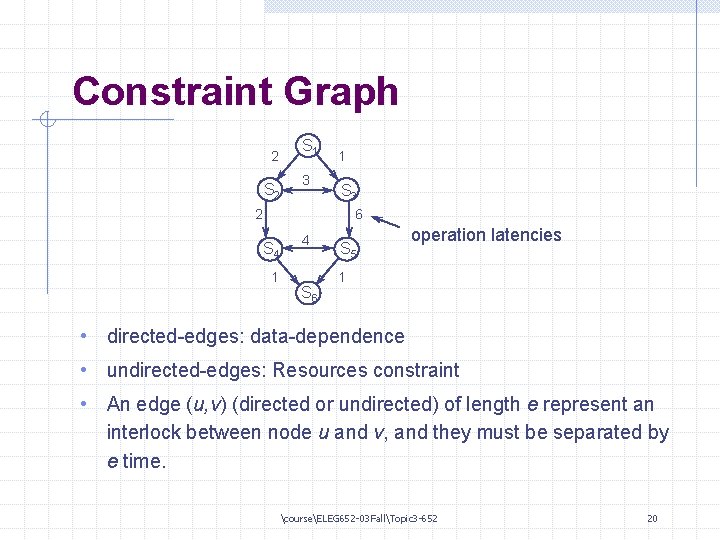

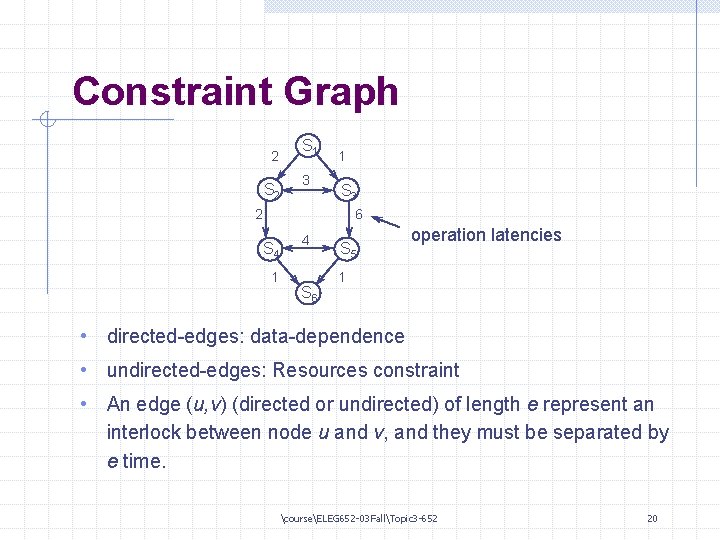

Constraint Graph

Figure: a constraint graph whose nodes are operations (S1, S3, S4, S5 and S6 are legible) and whose edges are labeled with operation latencies.

- Directed edges: data dependence
- Undirected edges: resource constraints
- An edge (u, v) (directed or undirected) of length e represents an interlock between nodes u and v: they must be separated by e time units.

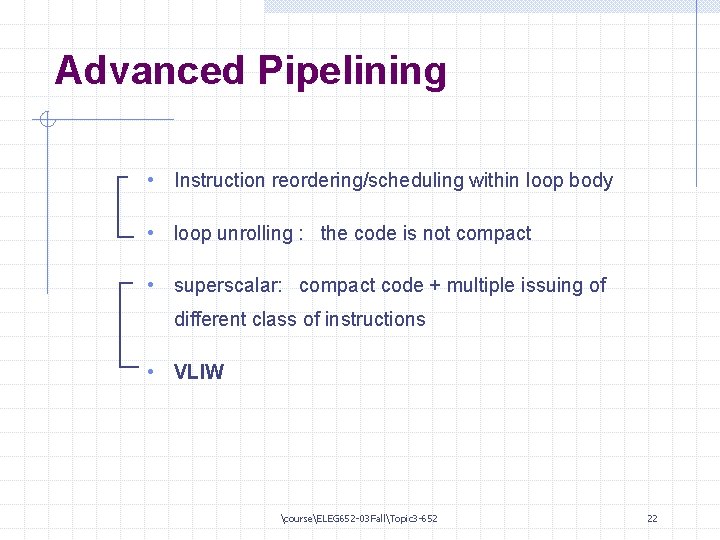

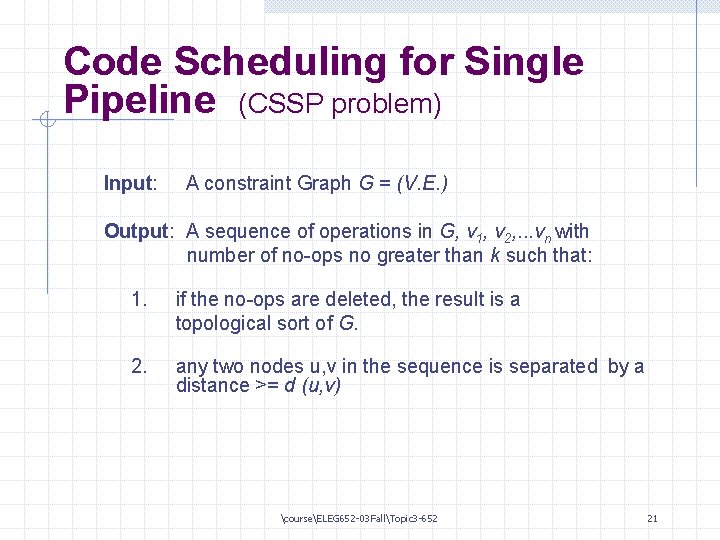

Code Scheduling for Single Pipeline (CSSP problem)

Input: a constraint graph G = (V, E).

Output: a sequence of the operations in G, v1, v2, ..., vn, with no more than k no-ops, such that:

1. If the no-ops are deleted, the result is a topological sort of G.
2. Any two nodes u, v in the sequence are separated by a distance >= d(u, v).
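The two conditions of the CSSP problem can be checked directly for a candidate sequence. The sketch below is one possible checker under stated assumptions: distance is measured as the difference in slot positions, every edge length is at least 1, and the small graph in the usage lines is a made-up example, not the graph from the slides. The names `NOP` and `is_valid_schedule` are hypothetical.

```python
NOP = None  # placeholder for a no-op slot


def is_valid_schedule(nodes, directed, undirected, sequence, k):
    """Check a candidate CSSP solution.

    directed / undirected map an edge (u, v) to its required distance d(u, v).
    sequence is a list of node names with NOP entries for no-ops.
    """
    if sequence.count(NOP) > k:
        return False
    ops = [op for op in sequence if op is not NOP]
    if sorted(ops) != sorted(nodes):      # every operation exactly once
        return False
    slot = {op: i for i, op in enumerate(sequence) if op is not NOP}
    for (u, v), d in directed.items():
        # u must precede v (topological order) and be far enough ahead
        if slot[v] <= slot[u] or slot[v] - slot[u] < d:
            return False
    for (u, v), d in undirected.items():
        # resource conflict: order is free, separation is not
        if abs(slot[u] - slot[v]) < d:
            return False
    return True


# Hypothetical graph: S1 feeds S2 with distance 2 and S3 with distance 1.
nodes = ["S1", "S2", "S3"]
directed = {("S1", "S2"): 2, ("S1", "S3"): 1}
undirected = {}

print(is_valid_schedule(nodes, directed, undirected, ["S1", "S3", "S2"], k=0))
print(is_valid_schedule(nodes, directed, undirected, ["S1", "S2", "S3"], k=0))
print(is_valid_schedule(nodes, directed, undirected, ["S1", NOP, "S2", "S3"], k=1))
```

The first sequence hides the latency of S1 behind S3, so it needs no no-ops; the second violates the distance on (S1, S2); the third fixes that at the cost of one no-op.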

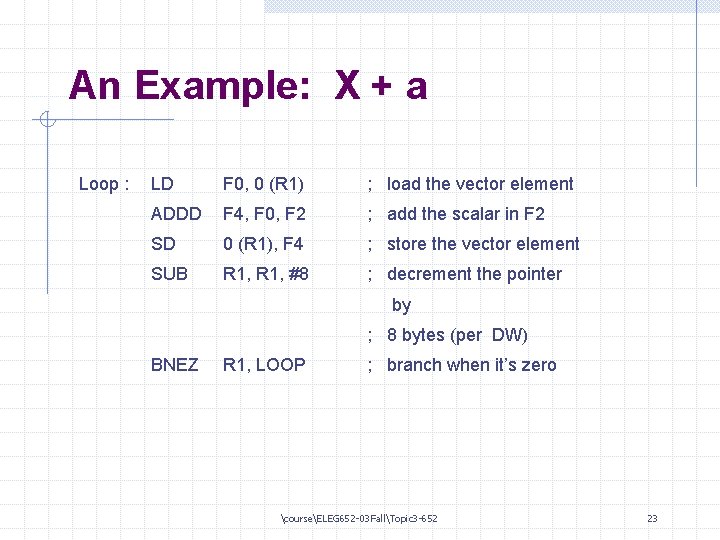

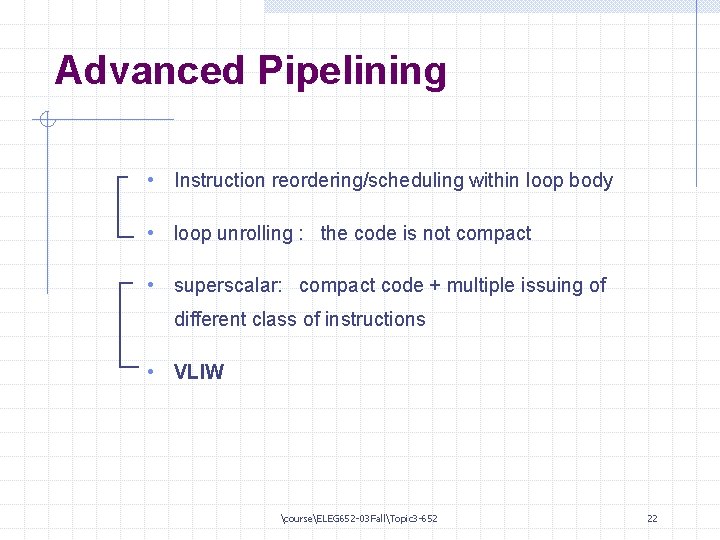

Advanced Pipelining

- Instruction reordering/scheduling within the loop body
- Loop unrolling: the code is not compact
- Superscalar: compact code + multiple issue of different classes of instructions
- VLIW

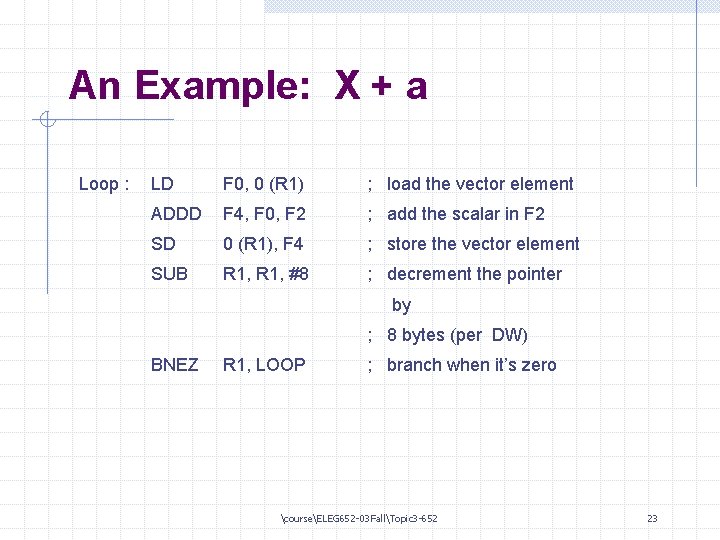

An Example: X + a

    Loop: LD   F0, 0(R1)     ; load the vector element
          ADDD F4, F0, F2    ; add the scalar in F2
          SD   0(R1), F4     ; store the vector element
          SUB  R1, #8        ; decrement the pointer by 8 bytes (per DW)
          BNEZ R1, LOOP      ; branch when R1 is not zero

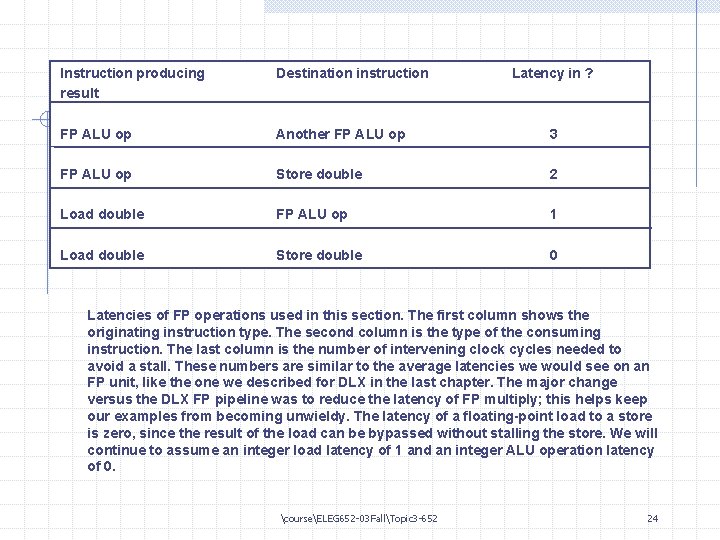

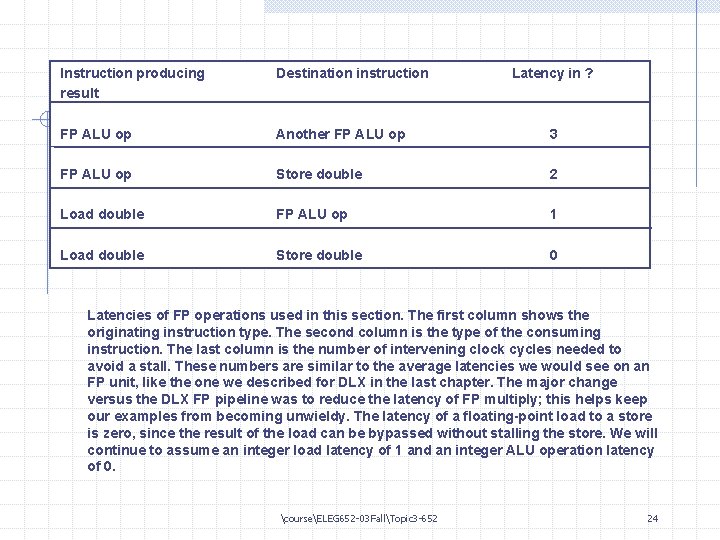

| Instruction producing result | Destination instruction | Latency in clock cycles |
|---|---|---|
| FP ALU op | Another FP ALU op | 3 |
| FP ALU op | Store double | 2 |
| Load double | FP ALU op | 1 |
| Load double | Store double | 0 |

Latencies of FP operations used in this section. The first column shows the originating instruction type. The second column is the type of the consuming instruction. The last column is the number of intervening clock cycles needed to avoid a stall. These numbers are similar to the average latencies we would see on an FP unit, like the one we described for DLX in the last chapter. The major change versus the DLX FP pipeline was to reduce the latency of FP multiply; this helps keep our examples from becoming unwieldy. The latency of a floating-point load to a store is zero, since the result of the load can be bypassed without stalling the store. We will continue to assume an integer load latency of 1 and an integer ALU operation latency of 0.

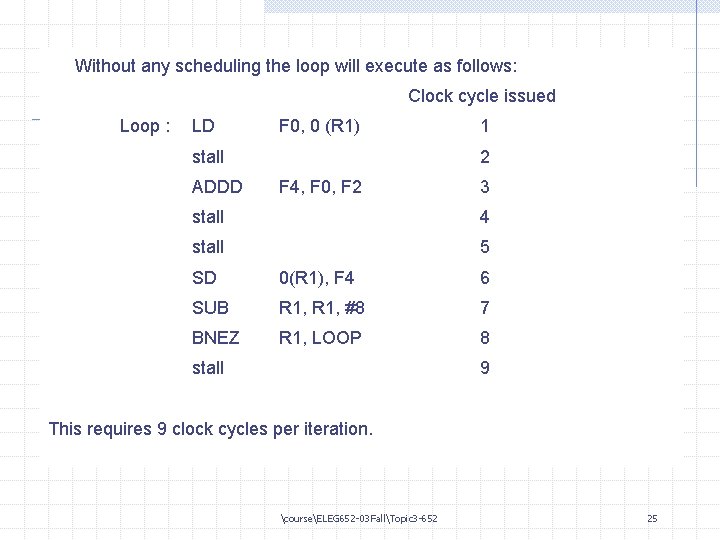

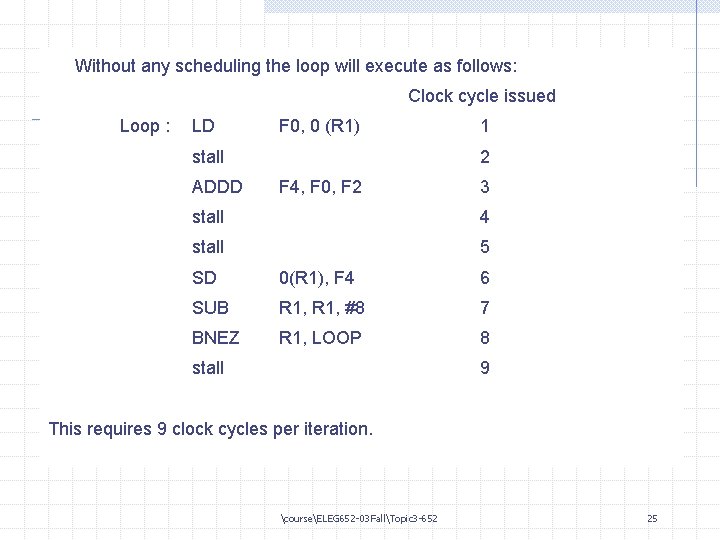

Without any scheduling the loop will execute as follows:

| Instruction | Clock cycle issued |
|---|---|
| Loop: LD F0, 0(R1) | 1 |
| stall | 2 |
| ADDD F4, F0, F2 | 3 |
| stall | 4 |
| stall | 5 |
| SD 0(R1), F4 | 6 |
| SUB R1, #8 | 7 |
| BNEZ R1, LOOP | 8 |
| stall | 9 |

This requires 9 clock cycles per iteration.

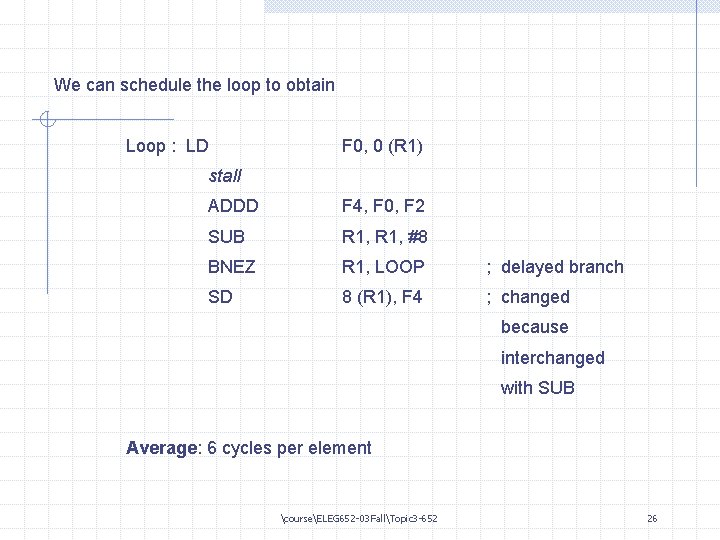

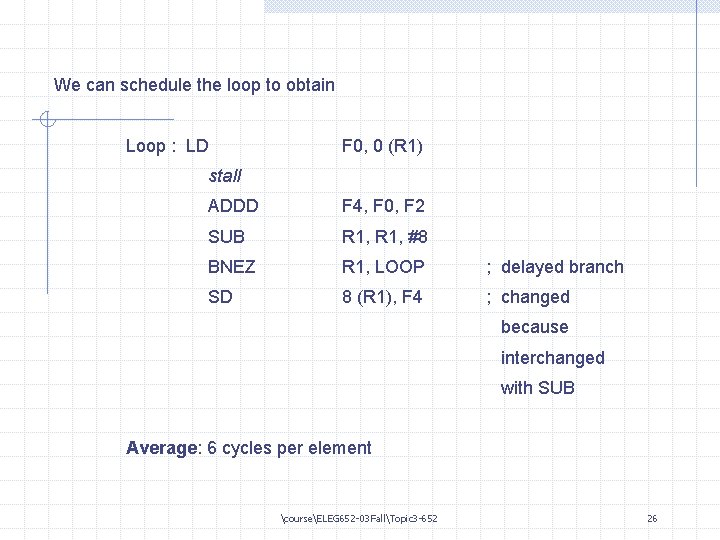

We can schedule the loop to obtain

    Loop: LD   F0, 0(R1)
          stall
          ADDD F4, F0, F2
          SUB  R1, #8
          BNEZ R1, LOOP      ; delayed branch
          SD   8(R1), F4     ; changed because interchanged with SUB

Average: 6 cycles per element
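The 9-cycle and 6-cycle figures can be reproduced with a small in-order issue model built on the latency table. This is only a sketch under stated assumptions: one instruction issues per cycle, a consumer waits until the tabulated number of intervening cycles has passed, pairs not in the table (integer ops) have latency 0, and a branch that ends the body leaves its delay slot empty and costs one extra stall. The tuple encoding and the name `cycles_per_iteration` are hypothetical.

```python
# (producer kind, consumer kind) -> intervening cycles needed to avoid a stall
LATENCY = {
    ("fp_alu", "fp_alu"): 3,
    ("fp_alu", "store"): 2,
    ("load", "fp_alu"): 1,
    ("load", "store"): 0,
}


def cycles_per_iteration(body):
    """body: list of (kind, dest, sources). Returns cycles including stalls."""
    last_write = {}  # register -> (issue cycle, kind) of its latest producer
    cycle = 0
    for kind, dest, sources in body:
        issue = cycle + 1
        for reg in sources:
            if reg in last_write:
                produced_at, producer_kind = last_write[reg]
                gap = LATENCY.get((producer_kind, kind), 0)
                issue = max(issue, produced_at + gap + 1)
        cycle = issue
        if dest is not None:
            last_write[dest] = (cycle, kind)
    if body and body[-1][0] == "branch":
        cycle += 1  # assumed: unfilled branch delay slot costs one stall
    return cycle


LD = ("load", "F0", ("R1",))
ADDD = ("fp_alu", "F4", ("F0", "F2"))
SD = ("store", None, ("F4", "R1"))
SUB = ("int_alu", "R1", ("R1",))
BNEZ = ("branch", None, ("R1",))

unscheduled = [LD, ADDD, SD, SUB, BNEZ]
scheduled = [LD, ADDD, SUB, BNEZ, SD]  # SD fills the branch delay slot

print(cycles_per_iteration(unscheduled))  # 9
print(cycles_per_iteration(scheduled))    # 6
```

In the scheduled order the SUB and BNEZ occupy the two cycles the SD would otherwise spend waiting on the ADDD, and the SD itself fills the delay slot.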

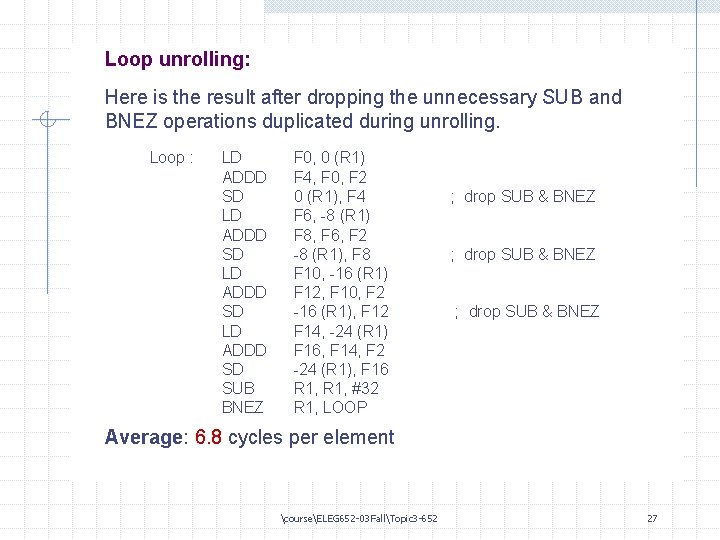

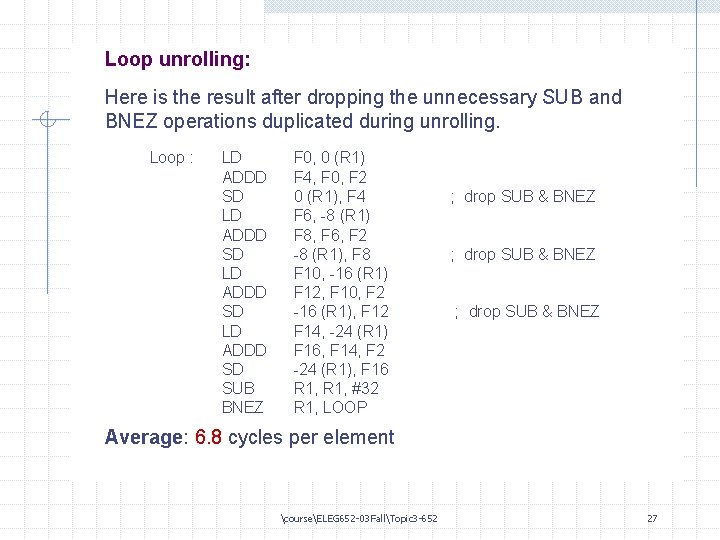

Loop unrolling: Here is the result after dropping the unnecessary SUB and BNEZ operations duplicated during unrolling.

    Loop: LD   F0, 0(R1)
          ADDD F4, F0, F2
          SD   0(R1), F4       ; drop SUB & BNEZ
          LD   F6, -8(R1)
          ADDD F8, F6, F2
          SD   -8(R1), F8      ; drop SUB & BNEZ
          LD   F10, -16(R1)
          ADDD F12, F10, F2
          SD   -16(R1), F12    ; drop SUB & BNEZ
          LD   F14, -24(R1)
          ADDD F16, F14, F2
          SD   -24(R1), F16
          SUB  R1, #32
          BNEZ R1, LOOP

Average: 6.8 cycles per element
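The unrolled body follows a regular pattern: each copy gets fresh FP registers, its offset moves down by one element, and a single SUB/BNEZ pair closes the loop. The generator below is a sketch of that pattern only; the function name `unroll`, its parameters, and the register allocation rule (even-numbered registers, skipping the one that holds the scalar) are assumptions chosen to match the listing above, not a general unrolling algorithm.

```python
def unroll(factor, step=8, scalar_reg=2):
    """Emit the unrolled X + a loop body as a list of assembly lines."""
    # Even-numbered FP registers hold doubles; skip the scalar's register.
    free = (r for r in range(0, 64, 2) if r != scalar_reg)
    lines = []
    for i in range(factor):
        src, dst = next(free), next(free)
        off = -step * i  # each copy addresses the next lower element
        lines.append(f"LD   F{src}, {off}(R1)")
        lines.append(f"ADDD F{dst}, F{src}, F{scalar_reg}")
        lines.append(f"SD   {off}(R1), F{dst}")
    # One decrement and one branch for the whole unrolled body.
    lines.append(f"SUB  R1, #{step * factor}")
    lines.append("BNEZ R1, LOOP")
    return lines


for line in unroll(4):
    print(line)
```

With `factor=4` this produces 12 load/add/store lines plus the closing SUB and BNEZ, 14 instructions in total.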

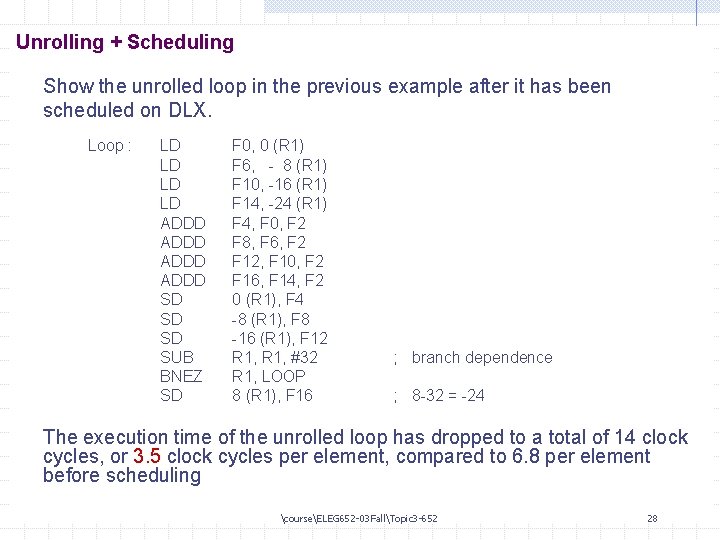

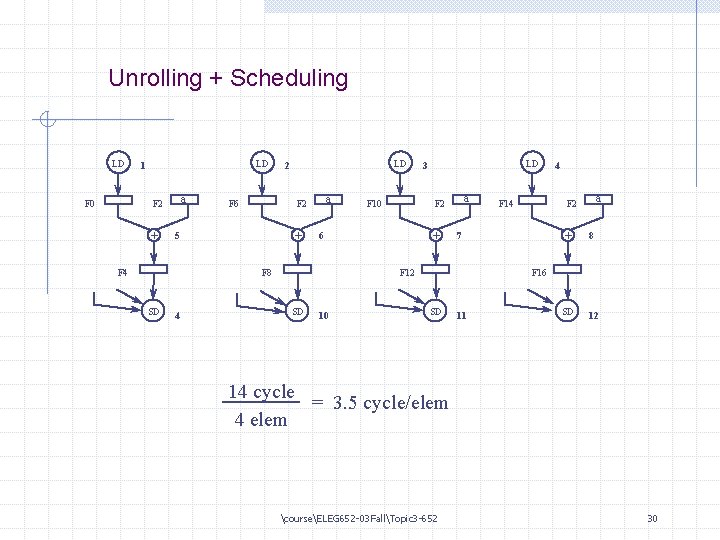

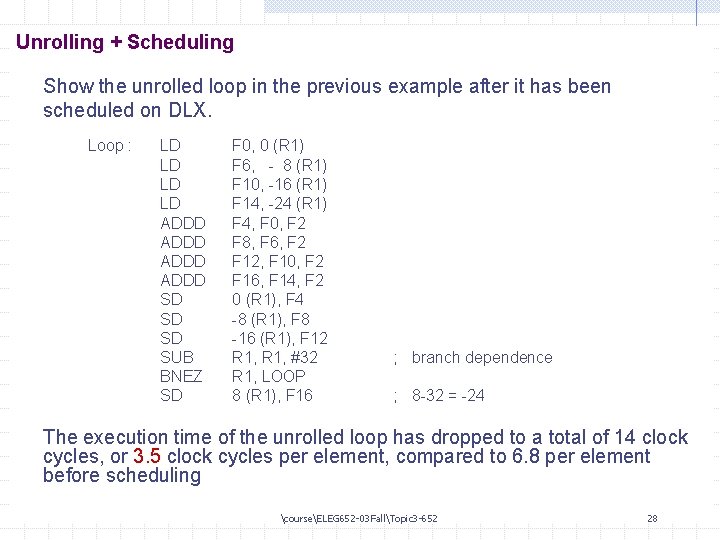

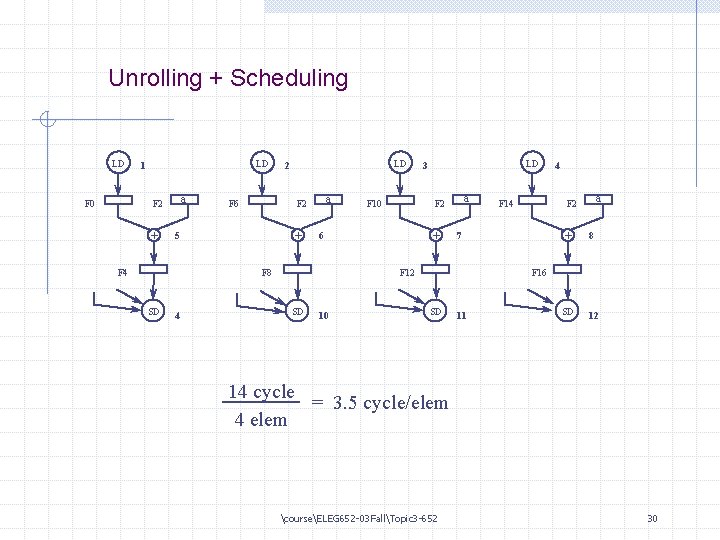

Unrolling + Scheduling

Show the unrolled loop in the previous example after it has been scheduled on DLX.

    Loop: LD   F0, 0(R1)
          LD   F6, -8(R1)
          LD   F10, -16(R1)
          LD   F14, -24(R1)
          ADDD F4, F0, F2
          ADDD F8, F6, F2
          ADDD F12, F10, F2
          ADDD F16, F14, F2
          SD   0(R1), F4
          SD   -8(R1), F8
          SD   -16(R1), F12
          SUB  R1, #32         ; branch dependence
          BNEZ R1, LOOP
          SD   8(R1), F16      ; 8 - 32 = -24

The execution time of the unrolled loop has dropped to a total of 14 clock cycles, or 3.5 clock cycles per element, compared to 6.8 per element before scheduling.

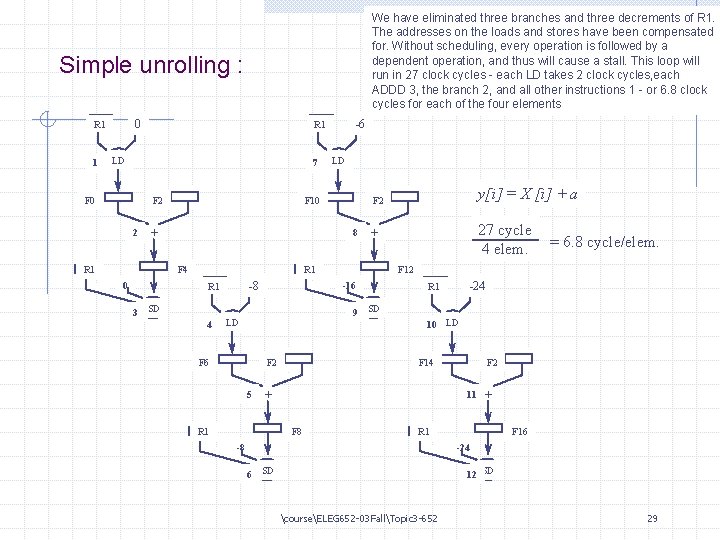

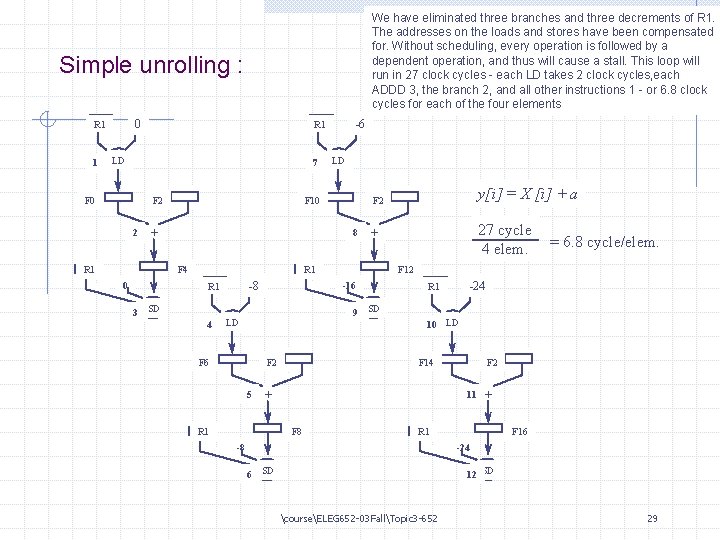

We have eliminated three branches and three decrements of R1. The addresses on the loads and stores have been compensated for. Without scheduling, every operation is followed by a dependent operation, and thus will cause a stall. This loop will run in 27 clock cycles (each LD takes 2 clock cycles, each ADDD 3, the branch 2, and all other instructions 1), or 6.8 clock cycles for each of the four elements.

Figure: simple unrolling of y[i] = X[i] + a. Four LD, +, SD chains, one per element, at offsets 0, -8, -16 and -24 from R1, with operations numbered 1 to 12 in program order. 27 cycles / 4 elements = 6.8 cycles per element.

Unrolling + Scheduling

Figure: the same four LD, +, SD chains after scheduling, with the four loads issued first, then the four additions, then the stores. 14 cycles / 4 elements = 3.5 cycles per element.
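As a check on the cycle counts quoted for the unrolled loop, the arithmetic can be written out. The per-instruction costs are the ones stated in the text (LD 2, ADDD 3, branch 2, everything else 1); the speedup line is a derived figure, not one given on the slides.

```latex
\begin{align*}
T_{\text{unrolled}}
  &= \underbrace{4 \cdot 2}_{\text{LD}}
   + \underbrace{4 \cdot 3}_{\text{ADDD}}
   + \underbrace{2}_{\text{BNEZ}}
   + \underbrace{(4 + 1) \cdot 1}_{\text{SD, SUB}}
   = 27 \text{ cycles},
  & \frac{27}{4} &= 6.75 \approx 6.8 \text{ cycles/element} \\
T_{\text{scheduled}}
  &= 14 \text{ instructions} \times 1 \text{ cycle}
   = 14 \text{ cycles},
  & \frac{14}{4} &= 3.5 \text{ cycles/element} \\
\text{speedup}
  &= \frac{27}{14} \approx 1.93
\end{align*}
```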