Experiences Deploying Parallel Applications on a Large-scale Grid

- Slides: 23

Experiences Deploying Parallel Applications on a Large-scale Grid

Rob van Nieuwpoort, Jason Maassen, Andrei Agapi, Ana-Maria Oprescu, Thilo Kielmann
Vrije Universiteit, Amsterdam
kielmann@cs.vu.nl

European Research Network on Foundations, Software Infrastructures and Applications for large scale distributed, GRID and Peer-to-Peer Technologies

H.M. Beatrix, Queen of the Netherlands

The N-Queens Contest

- Challenge: find the most board solutions within 1 hour
- Testbed:
  - Grid 5000, DAS-2, some smaller clusters
  - Globus, NorduGrid, LCG, ???
  - In fact, not much precise information was available in advance...
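To make the contest workload concrete, here is a minimal sequential sketch of counting N-Queens solutions with bitmask backtracking. It is an illustration only, not the contest code; the class and method names (`NQueensCount`, `count`, `place`) are hypothetical. Each recursive call places one queen per row, which is the kind of recursion a divide-and-conquer system can split into parallel jobs.

```java
// Illustrative sketch: count all N-Queens solutions with bitmask backtracking.
// Names are hypothetical and not taken from the contest entry.
final class NQueensCount {

    /** Returns the number of ways to place n non-attacking queens on an n x n board. */
    static long count(int n) {
        return place(n, 0, 0, 0, 0);
    }

    // cols, diag1 and diag2 are bitmasks of the columns attacked in the current row.
    private static long place(int n, int row, int cols, int diag1, int diag2) {
        if (row == n) {
            return 1; // all rows filled: one complete solution
        }
        long solutions = 0;
        int free = ~(cols | diag1 | diag2) & ((1 << n) - 1);
        while (free != 0) {
            int bit = free & -free; // lowest free column
            free -= bit;
            // Each recursive call is an independent subproblem.
            solutions += place(n, row + 1, cols | bit, (diag1 | bit) << 1, (diag2 | bit) >>> 1);
        }
        return solutions;
    }

    public static void main(String[] args) {
        System.out.println(count(8)); // prints 92
    }
}
```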

Computing in an Unknown Grid?

- Heterogeneous machines (architectures, compilers, etc.)
  - Use Java: “write once, run anywhere”
  - Use Ibis!
- Heterogeneous machines (fast / slow, small / big clusters)
  - Use automatic load balancing (divide-and-conquer)
  - Use Satin!
- Heterogeneous middleware (job submission

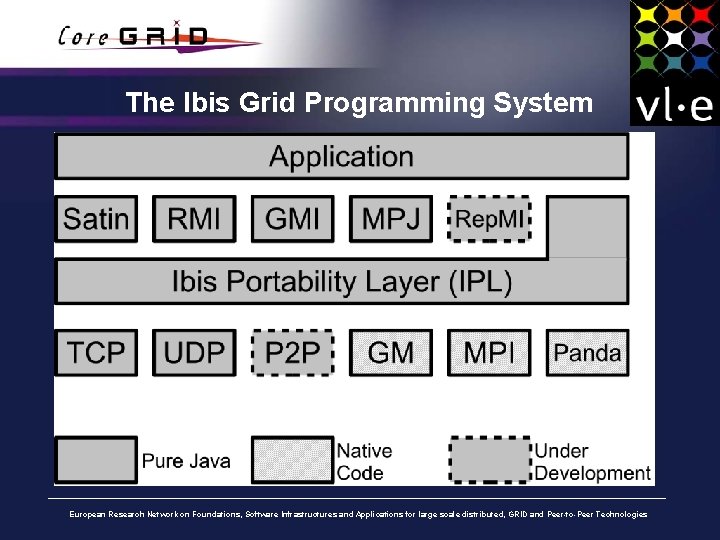

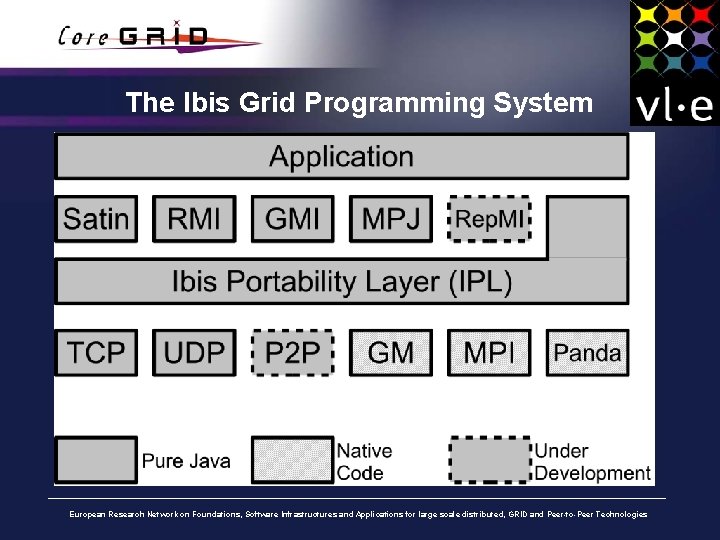

The Ibis Grid Programming System

The Ibis System

- Java centric: “write once, run anywhere”
- Efficient communication (pure Java or native)
- Parallel programming models:
  - RMI (remote method invocation)
  - GMI (group method invocation)
    - Collective communication (MPI-like) and more
  - RepMI (replicated method invocation)
    - Strong consistency
  - Satin (divide and conquer)
  - MPJ (Java binding for MPI)

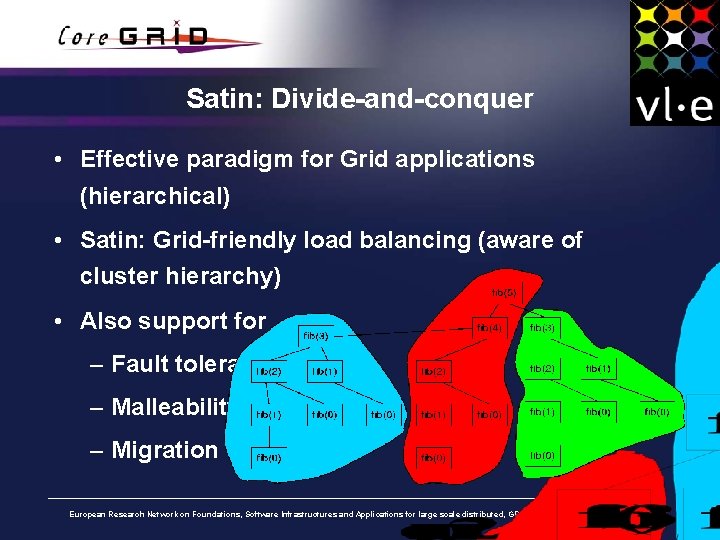

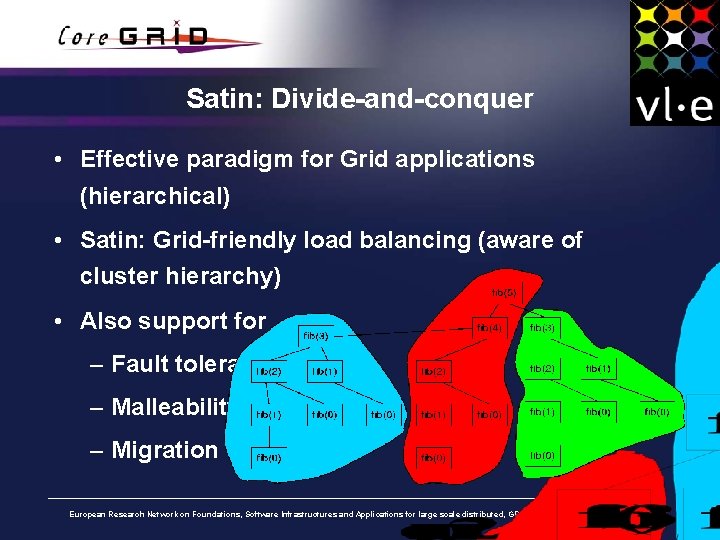

Satin: Divide-and-conquer

- Effective paradigm for Grid applications (hierarchical)
- Satin: Grid-friendly load balancing (aware of cluster hierarchy)
- Also support for
  - Fault tolerance
  - Malleability
  - Migration

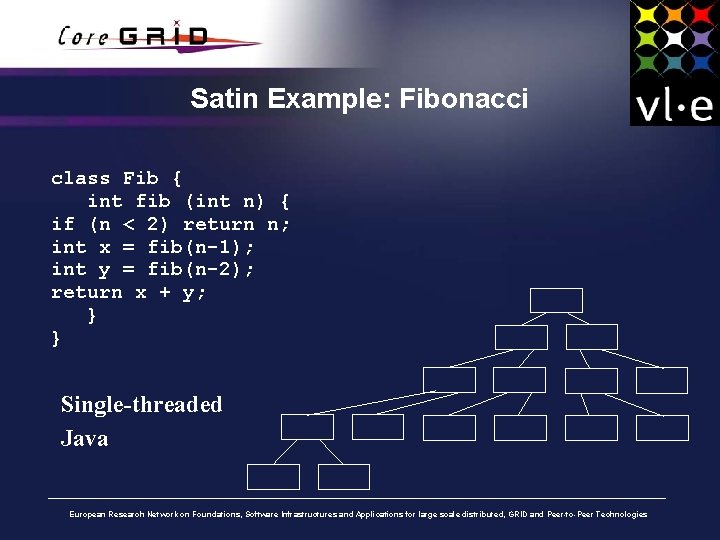

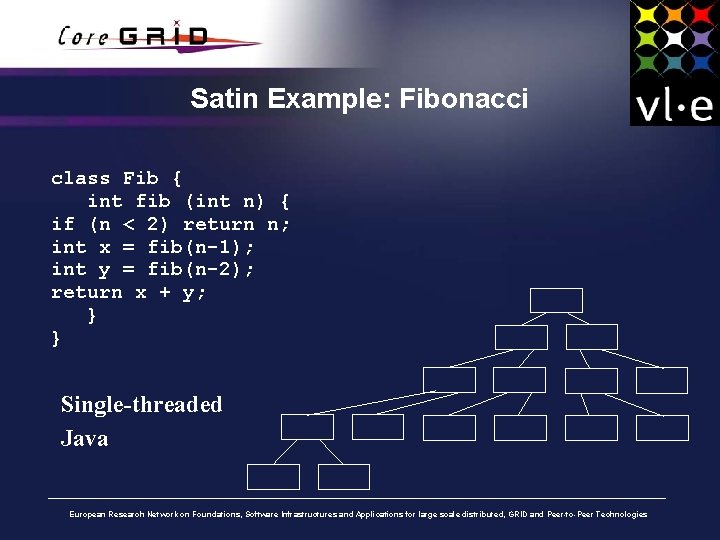

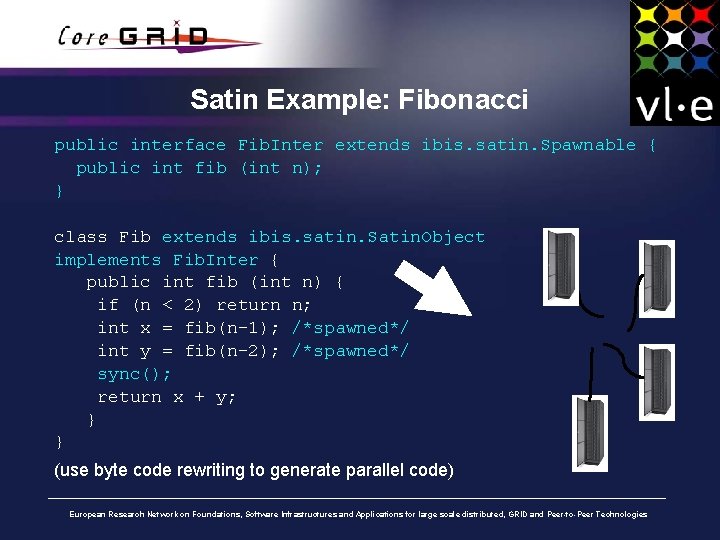

Satin Example: Fibonacci

```java
class Fib {
    int fib(int n) {
        if (n < 2) return n;
        int x = fib(n - 1);
        int y = fib(n - 2);
        return x + y;
    }
}
```

Single-threaded Java

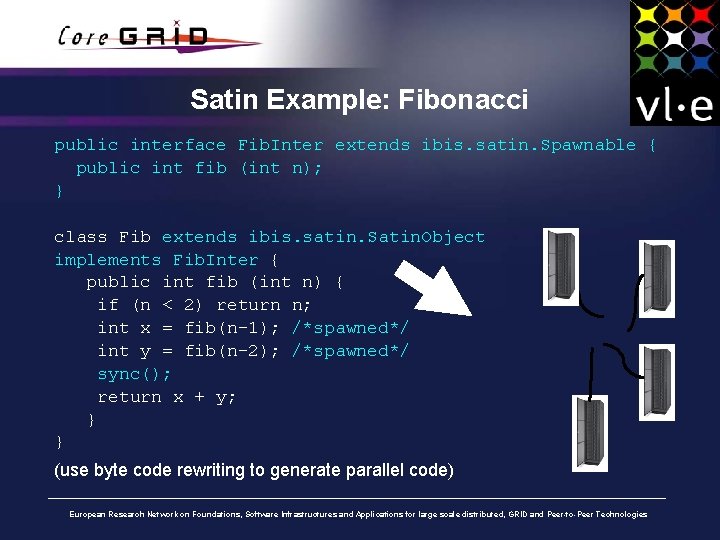

Satin Example: Fibonacci

```java
public interface FibInter extends ibis.satin.Spawnable {
    public int fib(int n);
}

class Fib extends ibis.satin.SatinObject implements FibInter {
    public int fib(int n) {
        if (n < 2) return n;
        int x = fib(n - 1); /* spawned */
        int y = fib(n - 2); /* spawned */
        sync();
        return x + y;
    }
}
```

(Byte code rewriting is used to generate the parallel code.)
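The Satin version above needs the Ibis runtime and its byte code rewriter to run. For readers without Ibis, the same spawn/sync pattern can be approximated on a single JVM with the standard `java.util.concurrent` fork/join framework. This is an analogy, not Satin itself: `fork()` plays the role of a spawned call and `join()` the role of `sync()`. The class name `ForkJoinFib` is made up for this sketch.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Single-JVM analogy to the Satin example, using the JDK fork/join framework.
// This is NOT the Satin API; it only mirrors the spawn/sync structure.
final class ForkJoinFib extends RecursiveTask<Integer> {
    private final int n;

    ForkJoinFib(int n) {
        this.n = n;
    }

    @Override
    protected Integer compute() {
        if (n < 2) {
            return n;
        }
        ForkJoinFib x = new ForkJoinFib(n - 1);
        ForkJoinFib y = new ForkJoinFib(n - 2);
        x.fork();                    // comparable to a spawned call: may be stolen by an idle worker
        int yValue = y.compute();    // evaluate the second branch in the current thread
        return x.join() + yValue;    // comparable to sync(): wait for the spawned result
    }

    static int fib(int n) {
        return ForkJoinPool.commonPool().invoke(new ForkJoinFib(n));
    }

    public static void main(String[] args) {
        System.out.println(fib(20)); // prints 6765
    }
}
```

A real application would stop spawning below some problem size, since tasks this small cost more to schedule than to compute.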

Satin: Grid-friendly load balancing (aware of cluster hierarchy)

- Random Stealing (RS)
  - Provably optimal on a single cluster (Cilk)
  - Problems on multiple clusters:
    - With C clusters, a fraction of (C-1)/C of all steal attempts goes over the WAN
    - Synchronous protocol
- Satin: Cluster-aware Random Stealing (CRS)
  - When idle:
    - Send an asynchronous steal request to a random node in a different cluster
    - In the meantime, steal locally (synchronously)
    - Only one wide-area steal request at a time
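The victim-selection rules of CRS can be sketched as follows. This is a simplified illustration of the policy described on the slide, not Satin's implementation: the class `CrsVictimSelector`, its `Node` type, and the method names are all hypothetical, and the actual sending of steal requests is left out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

// Hypothetical sketch of CRS victim selection; message transport is omitted.
final class CrsVictimSelector {

    static final class Node {
        final int id;
        final int cluster;

        Node(int id, int cluster) {
            this.id = id;
            this.cluster = cluster;
        }
    }

    private final List<Node> localPeers = new ArrayList<>();
    private final List<Node> remotePeers = new ArrayList<>();
    private final Random random;
    private boolean wideAreaStealPending = false;

    CrsVictimSelector(Node self, List<Node> allNodes, Random random) {
        this.random = random;
        for (Node node : allNodes) {
            if (node.id == self.id) {
                continue; // never steal from ourselves
            }
            (node.cluster == self.cluster ? localPeers : remotePeers).add(node);
        }
    }

    /**
     * Picks a random node in another cluster for an asynchronous steal request.
     * Returns null if a wide-area request is already outstanding or no remote node exists.
     */
    synchronized Node nextWideAreaVictim() {
        if (wideAreaStealPending || remotePeers.isEmpty()) {
            return null;
        }
        wideAreaStealPending = true;
        return remotePeers.get(random.nextInt(remotePeers.size()));
    }

    /** Picks a random node in the same cluster for a synchronous local steal, or null if alone. */
    synchronized Node nextLocalVictim() {
        return localPeers.isEmpty() ? null : localPeers.get(random.nextInt(localPeers.size()));
    }

    /** Called when the reply to the outstanding wide-area request arrives (with or without work). */
    synchronized void wideAreaReplyReceived() {
        wideAreaStealPending = false;
    }
}
```

An idle node would call `nextWideAreaVictim()` once, then keep calling `nextLocalVictim()` until either steal produces work.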

Summary: Ibis

- Java: “write once, run anywhere”
- Ibis provides efficient communication among parallel processes
- Satin provides highly efficient load balancing among the nodes of multiple clusters
- But how do we deploy our Ibis / Satin application?

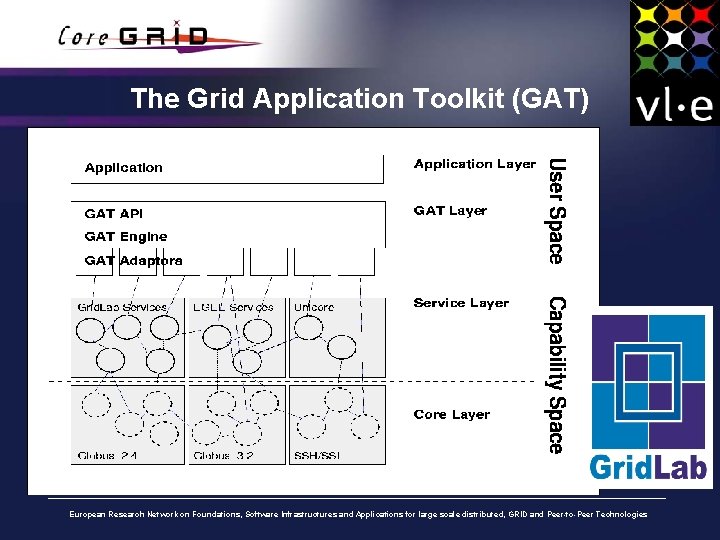

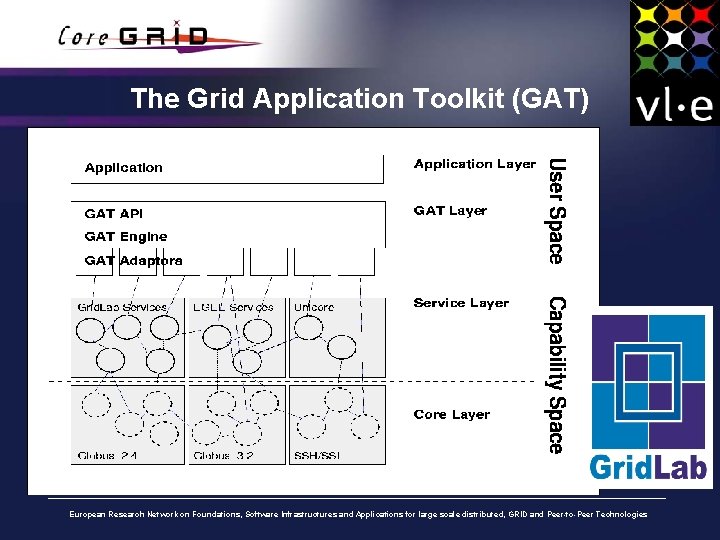

The Grid Application Toolkit (GAT)

The Grid Application Toolkit (GAT)

- Simple and uniform API to various Grid middleware:
  - Globus 2, 3, 4, ssh, Unicore, ...
- Job submission, remote file access, job monitoring and steering
- Implementations:
  - C, with wrappers for C++ and Python
  - Java

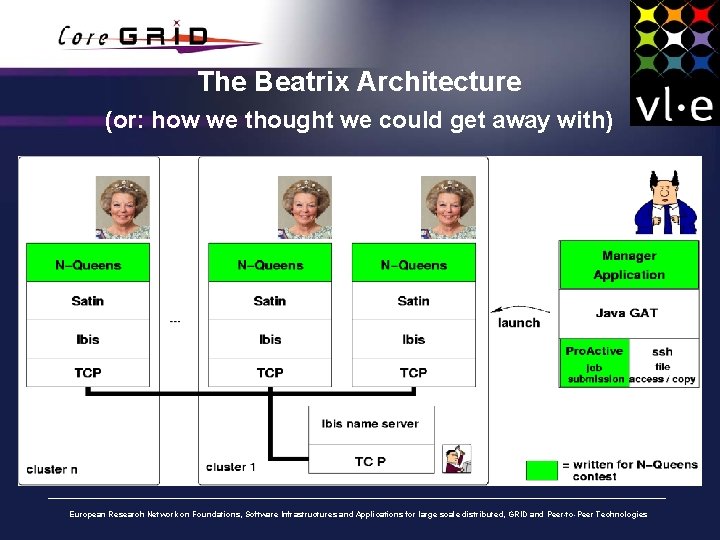

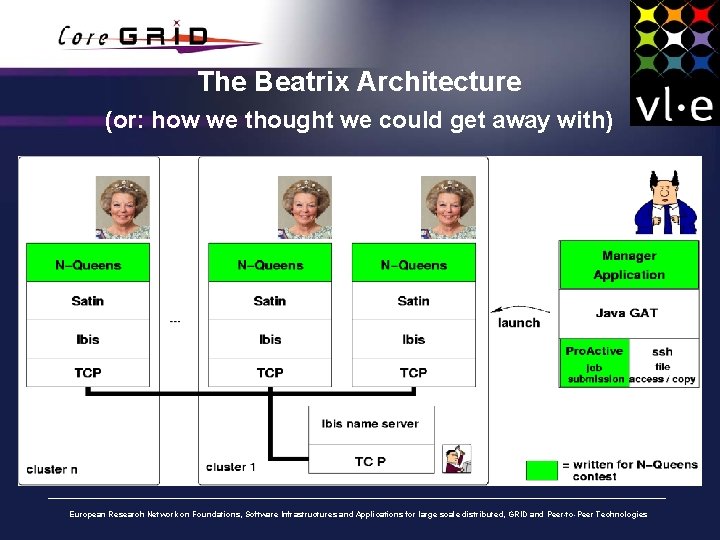

The Beatrix Architecture (or: what we thought we could get away with)

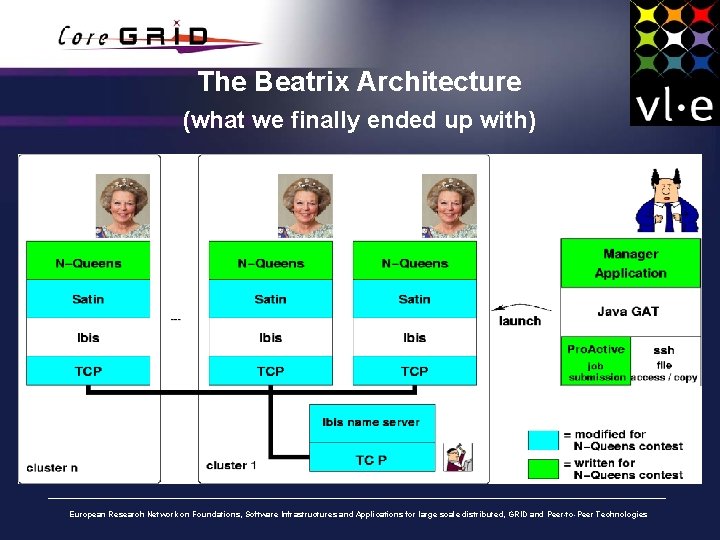

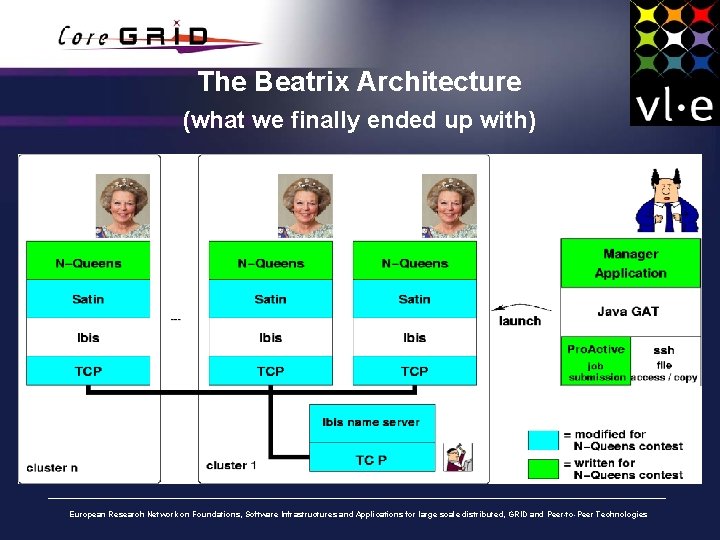

The Beatrix Architecture (what we finally ended up with)

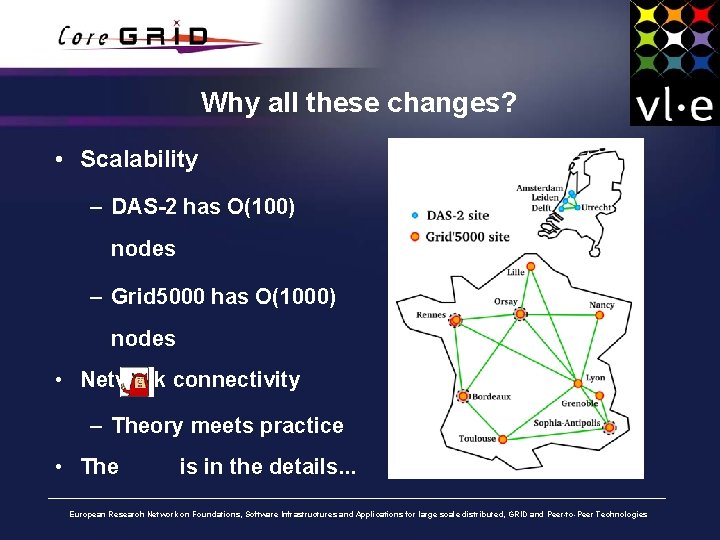

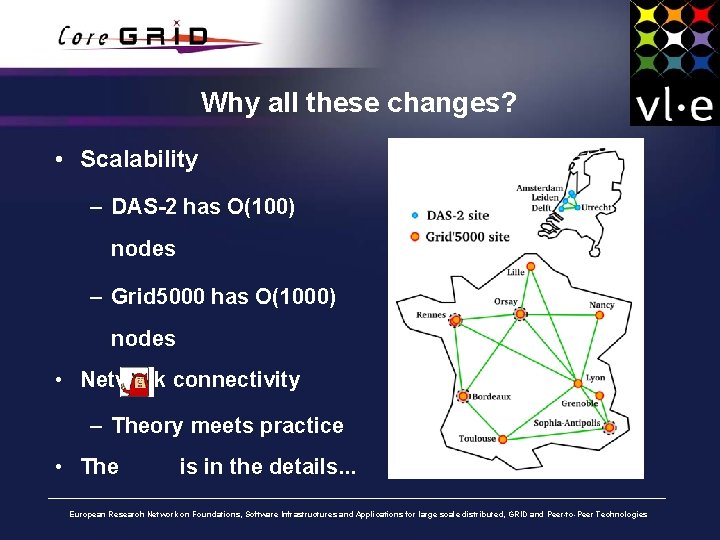

Why all these changes?

- Scalability
  - DAS-2 has O(100) nodes
  - Grid 5000 has O(1000) nodes
- Network connectivity
  - Theory meets practice
- The devil is in the details...

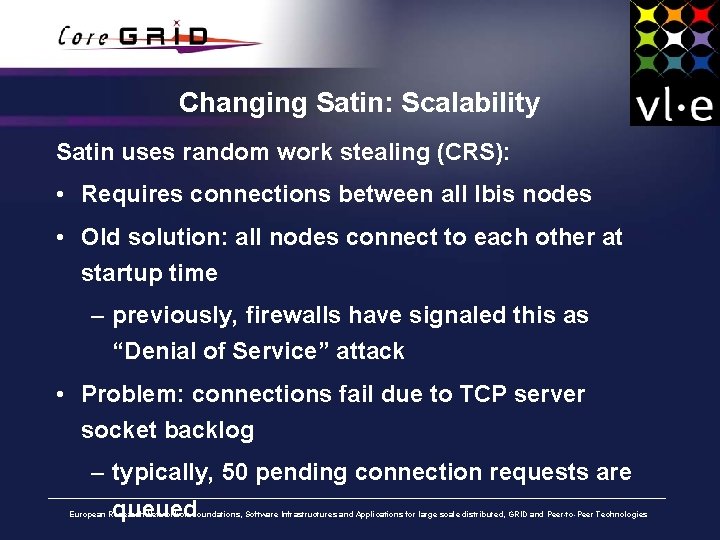

Changing Satin: Scalability

Satin uses random work stealing (CRS):

- Requires connections between all Ibis nodes
- Old solution: all nodes connect to each other at startup time
  - Firewalls have previously flagged this as a “Denial of Service” attack
- Problem: connections fail due to the TCP server socket backlog
  - Typically, only 50 pending connection requests are queued
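The backlog limit mentioned above is visible in the standard Java socket API: `new ServerSocket(port)` uses a default backlog of 50, while the three-argument constructor takes an explicit value (which the operating system may still cap). The sketch below shows an explicit backlog together with a client-side retry. It illustrates the problem and two generic mitigations; it does not claim to be the change that was made in Satin, and the names `BacklogSketch`, `listen`, `connectWithRetry`, and `demo` are hypothetical.

```java
import java.io.IOException;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.net.Socket;

// Hypothetical sketch: explicit accept backlog plus client-side retry with backoff.
final class BacklogSketch {

    /** Opens a loopback server socket on an ephemeral port with the requested backlog. */
    static ServerSocket listen(int backlog) throws IOException {
        // The OS treats the backlog as a hint and may silently reduce it.
        return new ServerSocket(0, backlog, InetAddress.getLoopbackAddress());
    }

    /** Tries to connect several times, doubling the pause between attempts. */
    static Socket connectWithRetry(InetSocketAddress target, int attempts, int timeoutMillis)
            throws IOException, InterruptedException {
        IOException last = new IOException("no connection attempts were made");
        long backoffMillis = 50;
        for (int i = 0; i < attempts; i++) {
            Socket socket = new Socket();
            try {
                socket.connect(target, timeoutMillis);
                return socket;
            } catch (IOException e) {
                socket.close();
                last = e;
                if (i + 1 < attempts) {
                    Thread.sleep(backoffMillis);
                    backoffMillis *= 2;
                }
            }
        }
        throw last;
    }

    /** Small self-check on the loopback interface; returns true if the connection succeeded. */
    static boolean demo() {
        try (ServerSocket server = listen(512)) {
            InetSocketAddress target =
                    new InetSocketAddress(server.getInetAddress(), server.getLocalPort());
            try (Socket socket = connectWithRetry(target, 3, 1000)) {
                return socket.isConnected();
            }
        } catch (IOException | InterruptedException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```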

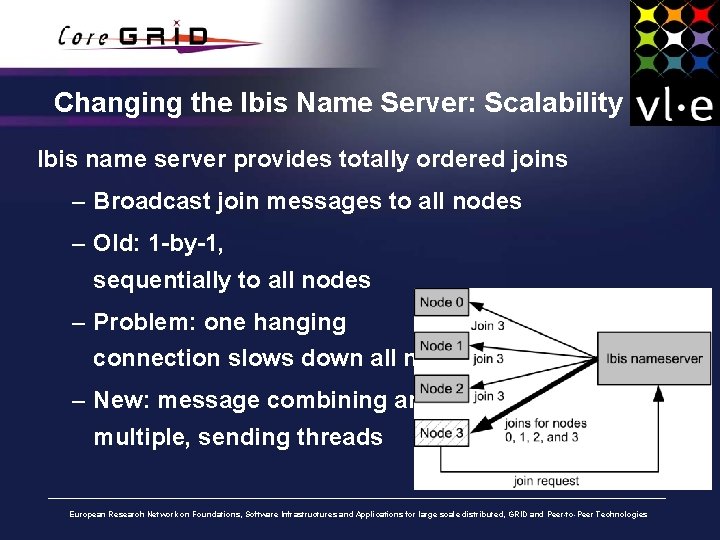

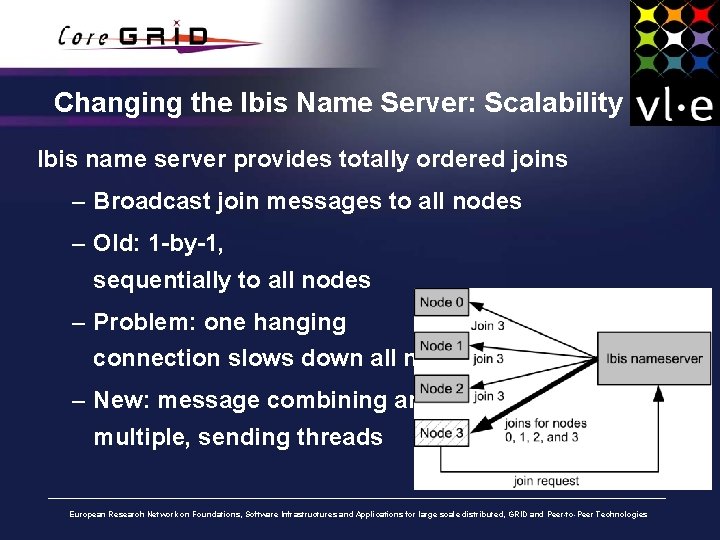

Changing the Ibis Name Server: Scalability

The Ibis name server provides totally ordered joins:

- Broadcast join messages to all nodes
- Old: one-by-one, sequentially to all nodes
- Problem: one hanging connection slows down all nodes
- New: message combining and multiple sending threads
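The combination of message combining and multiple sending threads might look roughly like the sketch below. It is an assumption-laden illustration, not the Ibis name server code: `JoinBroadcaster`, `Batch`, and the `transport` callback are hypothetical. Pending joins are merged into one numbered batch, and each destination is served by a pool thread, so a hanging connection blocks one worker rather than the whole broadcast. The sequence number lets receivers apply batches in order, which this sketch assumes is how total ordering would be preserved.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;
import java.util.function.BiConsumer;

// Hypothetical sketch of join-message combining with a pool of sender threads.
final class JoinBroadcaster {

    /** One combined message: several joins in arrival order, plus a batch sequence number. */
    static final class Batch {
        final long seq;
        final List<String> joins;

        Batch(long seq, List<String> joins) {
            this.seq = seq;
            this.joins = joins;
        }
    }

    private final List<String> pendingJoins = new ArrayList<>();
    private final ExecutorService senders;
    private final BiConsumer<String, Batch> transport; // (destination, batch); stands in for the network
    private long nextSeq = 0;

    JoinBroadcaster(int senderThreads, BiConsumer<String, Batch> transport) {
        this.senders = Executors.newFixedThreadPool(senderThreads);
        this.transport = transport;
    }

    /** Records a join; nothing is sent until flush() is called. */
    synchronized void nodeJoined(String nodeId) {
        pendingJoins.add(nodeId);
    }

    /** Combines all pending joins into one batch and sends it to every member in parallel. */
    List<Future<?>> flush(List<String> members) {
        final Batch batch;
        synchronized (this) {
            if (pendingJoins.isEmpty()) {
                return new ArrayList<>();
            }
            batch = new Batch(nextSeq++, new ArrayList<>(pendingJoins));
            pendingJoins.clear();
        }
        List<Future<?>> results = new ArrayList<>();
        for (String member : members) {
            // A slow or hanging destination only occupies one sender thread.
            results.add(senders.submit(() -> transport.accept(member, batch)));
        }
        return results;
    }

    void shutdown() {
        senders.shutdown();
    }

    /** Self-check with an in-memory transport; returns true if every member got the combined batch. */
    static boolean demo() {
        Map<String, Batch> received = new ConcurrentHashMap<>();
        JoinBroadcaster broadcaster = new JoinBroadcaster(4, received::put);
        try {
            broadcaster.nodeJoined("a");
            broadcaster.nodeJoined("b");
            broadcaster.nodeJoined("c");
            List<String> members = List.of("n1", "n2", "n3");
            for (Future<?> f : broadcaster.flush(members)) {
                f.get(5, TimeUnit.SECONDS);
            }
            for (String member : members) {
                Batch b = received.get(member);
                if (b == null || b.seq != 0 || !b.joins.equals(List.of("a", "b", "c"))) {
                    return false;
                }
            }
            return broadcaster.flush(members).isEmpty(); // nothing left to send
        } catch (Exception e) {
            return false;
        } finally {
            broadcaster.shutdown();
        }
    }
}
```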

Changing the TCP Driver: Connectivity

Nodes advertise their IP address (to connect to) to the name server:

- Grid 5000 nodes have up to 5 IP addresses: which one?
  - Global and local (RFC 1918), like 10.x.y.z
  - Address registered in DNS
  - Some are routed, some are not
- Problem: incomplete and inconsistent information
- Solution: manual configuration, TCP-Ibis tries out multiple addresses
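Trying out multiple advertised addresses can be sketched with plain `java.net` sockets as below. This shows the general idea only and is not the TCP-Ibis driver; `MultiAddressConnect`, `connectToAny`, and `demo` are hypothetical names, and the candidate order is assumed to come from configuration.

```java
import java.io.IOException;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.util.List;

// Hypothetical sketch: try each advertised address in turn until one connects.
final class MultiAddressConnect {

    /** Returns a socket connected to the first reachable candidate, or throws if none works. */
    static Socket connectToAny(List<InetSocketAddress> candidates, int timeoutMillis)
            throws IOException {
        IOException failure = new IOException("no candidate address was reachable");
        for (InetSocketAddress candidate : candidates) {
            Socket socket = new Socket();
            try {
                // The timeout matters: unrouted addresses often hang instead of failing fast.
                socket.connect(candidate, timeoutMillis);
                return socket;
            } catch (IOException e) {
                failure.addSuppressed(e); // keep the reason for later diagnosis
                socket.close();
            }
        }
        throw failure;
    }

    /** Self-check on loopback: the first candidate is a closed port, the second one is live. */
    static boolean demo() {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        try (ServerSocket server = new ServerSocket(0, 50, loopback)) {
            int closedPort;
            try (ServerSocket temporary = new ServerSocket(0, 50, loopback)) {
                closedPort = temporary.getLocalPort();
            } // closing frees the port, so connecting to it should now fail
            List<InetSocketAddress> candidates = List.of(
                    new InetSocketAddress(loopback, closedPort),
                    new InetSocketAddress(loopback, server.getLocalPort()));
            try (Socket socket = connectToAny(candidates, 1000)) {
                return socket.getPort() == server.getLocalPort();
            }
        } catch (IOException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```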

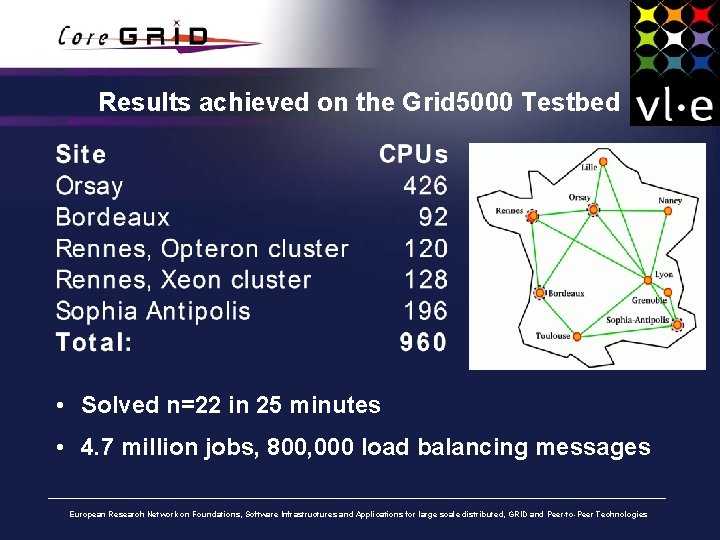

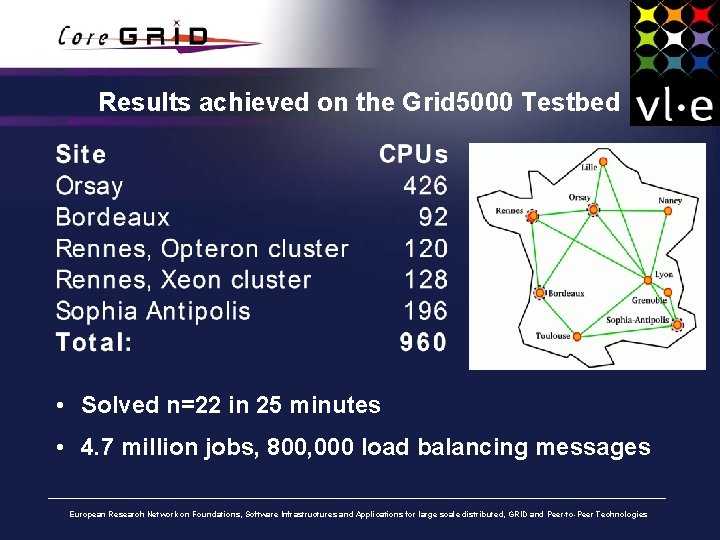

Results achieved on the Grid 5000 Testbed

- Solved n=22 in 25 minutes
- 4.7 million jobs, 800,000 load balancing messages

More Results...

Conclusions

- We used a mix of software:
  - Ibis with Satin
  - Java GAT with ProActive and ssh adaptors
- Scaling from 100 to 1000 processors required some redesign of Ibis and Satin
- Connectivity in Grid 5000 is non-trivial (we still cannot connect Grid 5000 with DAS-2)
- Ibis / Satin / GAT / ProActive has shown to be a viable and