Exercise 3: Towards a solution

Paul Burton (Paul.Burton@ecmwf.int)

© ECMWF, October 3, 2020

General Guidance
- Break the problem into manageable pieces
  - It is already nicely broken down into neat subroutines!
- Look at the data structures
  - How are you going to split them between processors?
- Don't cheat
  - Try to work it out for yourself before you look through the rest of these slides!

EUROPEAN CENTRE FOR MEDIUM-RANGE WEATHER FORECASTS

Parallel Initialisation
- Need to find out from MPI:
  - How many processors? (NTasks)
    - `CALL MPI_COMM_SIZE(MPI_COMM_WORLD, NTasks, ierror)`
  - What is my ID/rank? (MyTask)
    - `CALL MPI_COMM_RANK(MPI_COMM_WORLD, MyTask, ierror)`
  - Who are my neighbours?
    - `MyNeighbourLeft = MyTask-1`
    - `MyNeighbourRight = MyTask+1`
  - Don't forget the wrap-around: it's a bit different for MyTask=0 (whose left neighbour is NTasks-1) and for MyTask=NTasks-1 (whose right neighbour is 0)
  - Calculate NPointsPerTask
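The wrap-around rule can be captured in a few lines. What follows is a minimal sketch written as a stand-alone Fortran module so that it can be checked without MPI. The module and routine names (`decomposition_sketch`, `find_neighbours`, `points_per_task`) are hypothetical and are not the exercise's `Parallel_Info_Mod`. The sketch also assumes that npoints divides evenly by NTasks. `my_task` and `ntasks` would come from the `MPI_COMM_RANK` and `MPI_COMM_SIZE` calls above.

```fortran
! Hypothetical helper module (NOT the exercise's Parallel_Info_Mod).
module decomposition_sketch
  implicit none
  private
  public :: find_neighbours, points_per_task

contains

  ! Periodic (wrap-around) neighbours in a 1-D ring of ntasks tasks.
  ! MODULO takes the sign of the divisor, so MODULO(-1, ntasks) = ntasks-1
  ! and MODULO(ntasks, ntasks) = 0: both ends of the ring are handled.
  subroutine find_neighbours(my_task, ntasks, left, right)
    integer, intent(in)  :: my_task, ntasks
    integer, intent(out) :: left, right
    left  = modulo(my_task - 1, ntasks)
    right = modulo(my_task + 1, ntasks)
  end subroutine find_neighbours

  ! Points per task. ASSUMPTION: npoints divides evenly by ntasks,
  ! so every task gets the same count (keeps MPI_SCATTER/MPI_GATHER simple).
  function points_per_task(npoints, ntasks) result(n)
    integer, intent(in) :: npoints, ntasks
    integer :: n
    if (mod(npoints, ntasks) /= 0) then
      error stop "npoints must be divisible by ntasks"
    end if
    n = npoints / ntasks
  end function points_per_task

end module decomposition_sketch
```

For example, `CALL find_neighbours(MyTask, NTasks, MyNeighbourLeft, MyNeighbourRight)` would follow the two MPI calls. Using `MODULO` instead of separate `IF` tests for the first and last task keeps the wrap-around in a single expression.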

Model_Driver
- No longer works with npoints (the total number of points)
  - Use NPointsPerTask (from Parallel_Info_Mod) instead

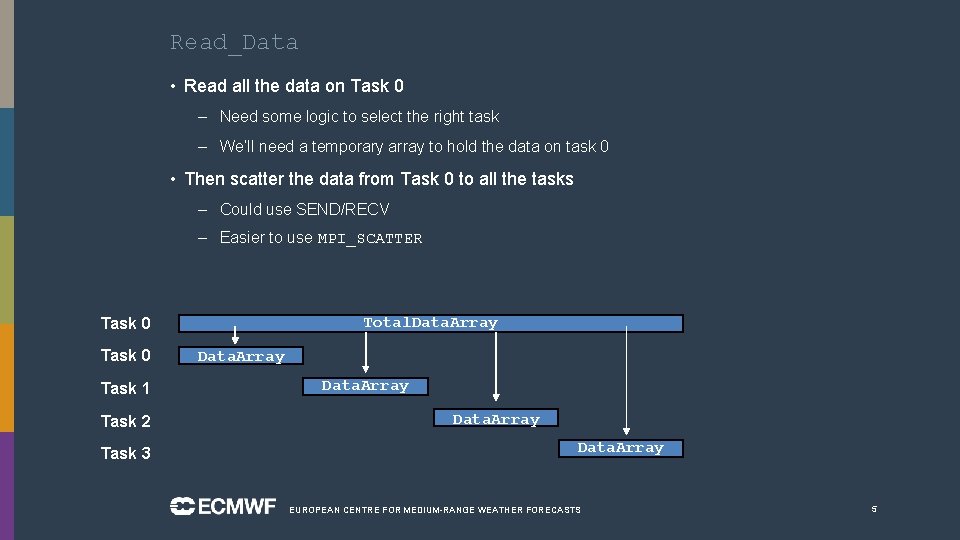

Read_Data
- Read all the data on Task 0
  - Need some logic so that only Task 0 does the reading (e.g. `IF (MyTask == 0) THEN`)
  - We'll need a temporary array to hold the data on Task 0
- Then scatter the data from Task 0 to all the tasks
  - Could use SEND/RECV
  - Easier to use MPI_SCATTER

Diagram: TotalDataArray on Task 0 is split into one DataArray per task (Tasks 0, 1, 2, 3).
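Here is a minimal sketch of the scatter step. It assumes TotalDataArray has already been read on Task 0; the slides do not show the file format, so the reading code is left out. It also assumes that every task receives the same number of points and that the data are double precision (hence `MPI_DOUBLE_PRECISION`). The module, routine and argument names are hypothetical.

```fortran
! Hypothetical module; assumes equal-sized, double-precision pieces.
module read_data_sketch
  use mpi
  implicit none
  private
  public :: scatter_data
  integer, parameter, public :: dp = kind(1.0d0)

contains

  ! On entry, total_data holds all the points on task 0 (it is ignored on
  ! the other tasks). On exit, data_array holds this task's
  ! npoints_per_task consecutive points, in task order.
  subroutine scatter_data(total_data, data_array, npoints_per_task)
    integer,  intent(in)  :: npoints_per_task
    real(dp), intent(in)  :: total_data(*)
    real(dp), intent(out) :: data_array(npoints_per_task)
    integer :: ierror

    ! Root is task 0; each task receives npoints_per_task values.
    call MPI_SCATTER(total_data, npoints_per_task, MPI_DOUBLE_PRECISION, &
                     data_array, npoints_per_task, MPI_DOUBLE_PRECISION, &
                     0, MPI_COMM_WORLD, ierror)
  end subroutine scatter_data

end module read_data_sketch
```

The send buffer only matters on Task 0. Fortran still needs an actual argument on the other tasks, though, so a caller might allocate a one-element dummy array there.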

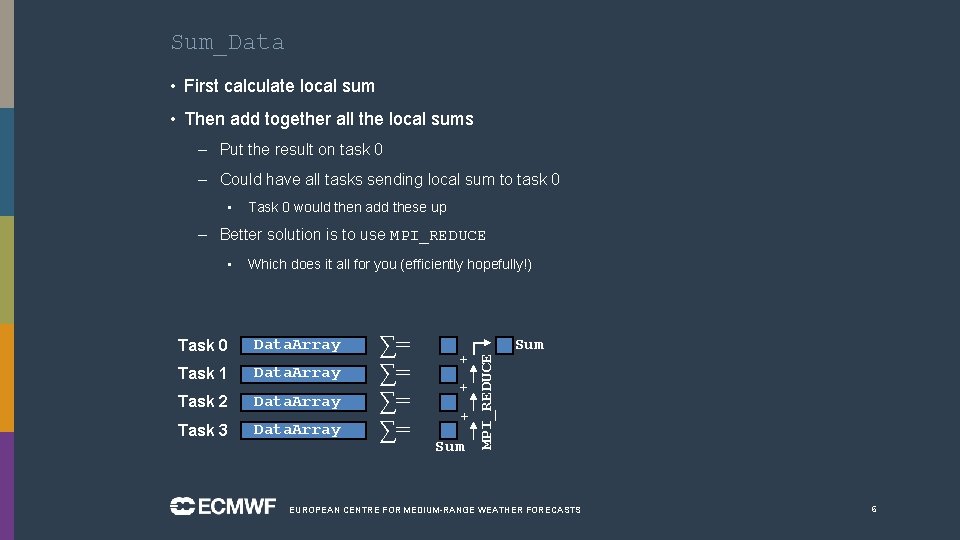

Sum_Data
- First calculate the local sum
- Then add together all the local sums
  - Put the result on Task 0
  - Could have all tasks send their local sum to Task 0
    - Task 0 would then add these up
  - A better solution is to use MPI_REDUCE
    - It does it all for you (efficiently, hopefully!)

Diagram: each task sums (∑) its own DataArray (Tasks 0, 1, 2, 3); MPI_REDUCE adds (+) the local sums into Sum on Task 0.
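The two steps (local sum, then reduction onto Task 0) might look like the following minimal sketch. It assumes double-precision data, and the module and routine names are hypothetical.

```fortran
! Hypothetical module; assumes double-precision data.
module sum_data_sketch
  use mpi
  implicit none
  private
  public :: sum_data
  integer, parameter, public :: dp = kind(1.0d0)

contains

  ! total_sum receives the sum over ALL tasks' data, but only on task 0
  ! (the root of MPI_REDUCE); on other tasks it is left as 0.
  subroutine sum_data(data_array, npoints_per_task, total_sum)
    integer,  intent(in)  :: npoints_per_task
    real(dp), intent(in)  :: data_array(npoints_per_task)
    real(dp), intent(out) :: total_sum
    real(dp) :: local_sum
    integer  :: ierror

    ! Step 1: sum this task's own points.
    local_sum = sum(data_array)
    total_sum = 0.0_dp

    ! Step 2: add the local sums from every task together onto task 0.
    call MPI_REDUCE(local_sum, total_sum, 1, MPI_DOUBLE_PRECISION, &
                    MPI_SUM, 0, MPI_COMM_WORLD, ierror)
  end subroutine sum_data

end module sum_data_sketch
```

If every task needed the result rather than just Task 0, `MPI_ALLREDUCE` (which has no root argument) would be the natural alternative.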

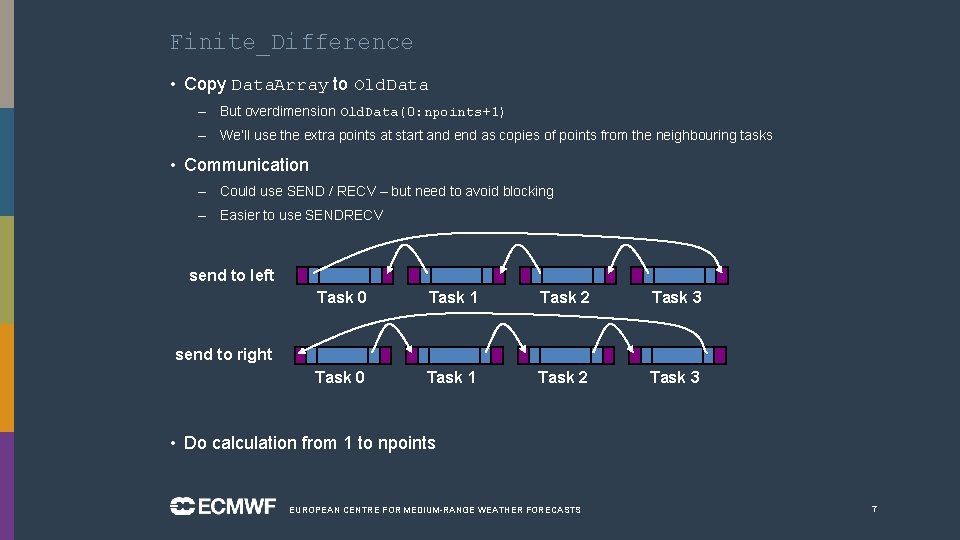

Finite_Difference
- Copy DataArray to OldData
  - But overdimension it: OldData(0:npoints+1), where npoints is now the local number of points on this task
  - We'll use the extra points at the start and end as copies of points from the neighbouring tasks
- Communication
  - Could use SEND/RECV, but need to take care that blocking calls don't deadlock
  - Easier to use MPI_SENDRECV
- Do the calculation from 1 to npoints

Diagram: each of Tasks 0 to 3 sends its edge points to its left and right neighbours.
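Below is a minimal sketch of the halo exchange with `MPI_SENDRECV`. It assumes one halo point at each end (as OldData(0:npoints+1) suggests), double-precision data, and the wrap-around neighbours from the initialisation step. The names are hypothetical. The finite-difference update itself is left out because the slides do not give its formula; after the exchange, the loop from 1 to npoints can read OldData(i-1) and OldData(i+1) without special cases at the ends.

```fortran
! Hypothetical module; assumes one halo point per side, double precision.
module finite_difference_sketch
  use mpi
  implicit none
  private
  public :: exchange_halos
  integer, parameter, public :: dp = kind(1.0d0)

contains

  ! old_data(1:npoints) holds this task's points. On exit the halo points
  ! old_data(0) and old_data(npoints+1) hold copies of the left
  ! neighbour's last point and the right neighbour's first point.
  subroutine exchange_halos(old_data, npoints, left, right)
    integer,  intent(in)    :: npoints, left, right
    real(dp), intent(inout) :: old_data(0:npoints+1)
    integer :: status(MPI_STATUS_SIZE), ierror

    ! Send my first point to the left; receive the right neighbour's
    ! first point into my upper halo.
    call MPI_SENDRECV(old_data(1), 1, MPI_DOUBLE_PRECISION, left, 1, &
                      old_data(npoints+1), 1, MPI_DOUBLE_PRECISION, right, 1, &
                      MPI_COMM_WORLD, status, ierror)

    ! Send my last point to the right; receive the left neighbour's
    ! last point into my lower halo.
    call MPI_SENDRECV(old_data(npoints), 1, MPI_DOUBLE_PRECISION, right, 2, &
                      old_data(0), 1, MPI_DOUBLE_PRECISION, left, 2, &
                      MPI_COMM_WORLD, status, ierror)
  end subroutine exchange_halos

end module finite_difference_sketch
```

Each call pairs a send with a receive, so the MPI library can order the transfers itself. That is what avoids the deadlock that can happen when every task in the ring posts a blocking `MPI_SEND` before its `MPI_RECV`.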

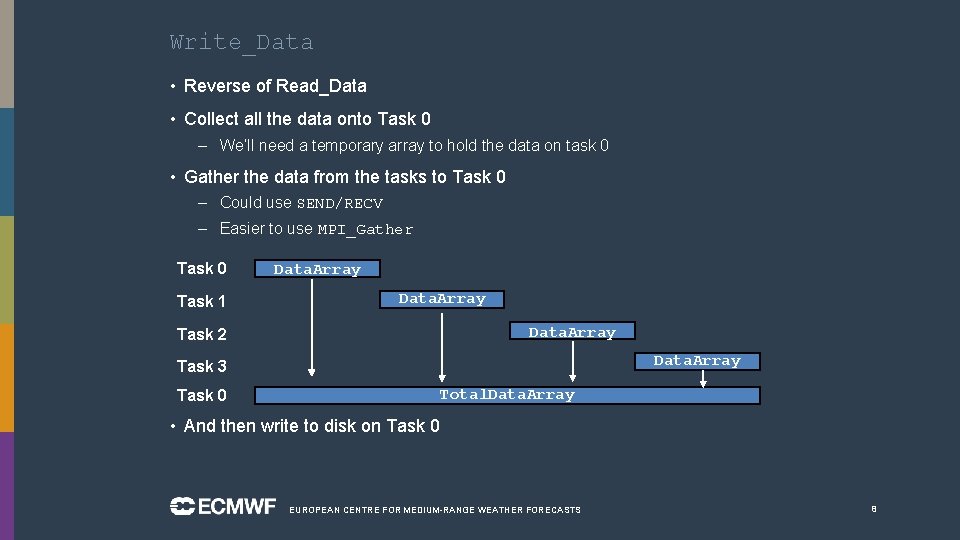

Write_Data
- The reverse of Read_Data
- Collect all the data onto Task 0
  - We'll need a temporary array to hold the data on Task 0
- Gather the data from all the tasks to Task 0
  - Could use SEND/RECV
  - Easier to use MPI_GATHER
- Then write to disk on Task 0

Diagram: the DataArray on each of Tasks 0 to 3 is collected into TotalDataArray on Task 0.
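This minimal sketch shows the gather step, which mirrors the scatter in Read_Data. As before, it assumes equal, double-precision pieces on every task, and the names are hypothetical. Writing to disk on Task 0 is left out because the slides do not give the output format.

```fortran
! Hypothetical module; assumes equal-sized, double-precision pieces.
module write_data_sketch
  use mpi
  implicit none
  private
  public :: gather_data
  integer, parameter, public :: dp = kind(1.0d0)

contains

  ! Collects every task's data_array into total_data on task 0, in task
  ! order. On task 0, total_data must hold npoints_per_task*NTasks values;
  ! the receive buffer is only significant on the root.
  subroutine gather_data(data_array, total_data, npoints_per_task)
    integer,  intent(in)    :: npoints_per_task
    real(dp), intent(in)    :: data_array(npoints_per_task)
    real(dp), intent(inout) :: total_data(*)
    integer :: ierror

    ! The receive count is the number of values from EACH task,
    ! not the total.
    call MPI_GATHER(data_array, npoints_per_task, MPI_DOUBLE_PRECISION, &
                    total_data, npoints_per_task, MPI_DOUBLE_PRECISION, &
                    0, MPI_COMM_WORLD, ierror)
  end subroutine gather_data

end module write_data_sketch
```

A common slip is passing the total number of points as the receive count. MPI counts per sending task, so the value matches the send count here.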

- Slides: 8