
Examining Rubric Design and Inter-rater Reliability: A Fun Grading Project

Presented at the Third Annual Association for the Assessment of Learning in Higher Education (AALHE) Conference, Lexington, Kentucky, June 3, 2013

Dr. Yan Zhang Cooksey
University of Maryland University College

Outline of Today’s Presentation
• Background and purposes of the full-day grading project
• Procedural methods of the project
• Results and decisions informed by the assessment findings
• Lessons learned through the process

Purposes of the Full-day Grading Project
• To simplify the current assessment process
• To validate the newly developed common rubric measuring four core student learning areas (written communication, critical thinking, technology fluency, and information literacy)

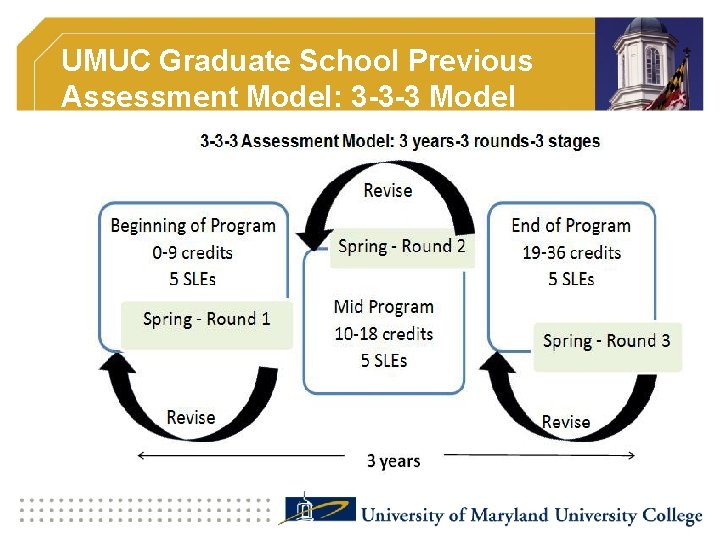

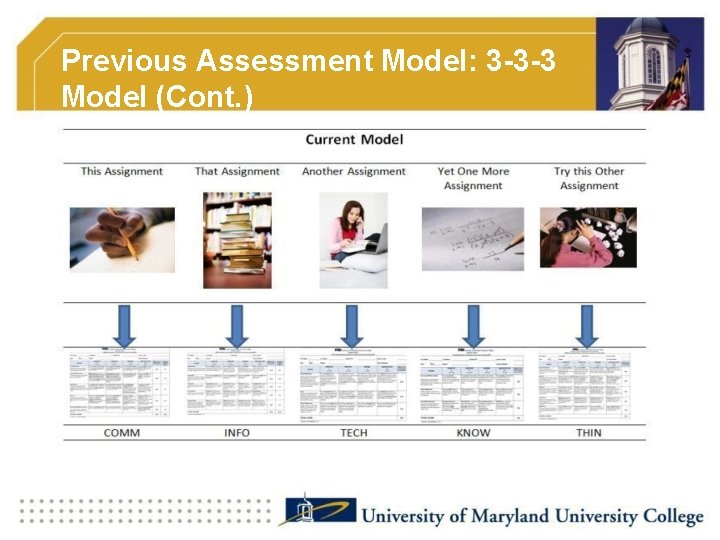

UMUC Graduate School Previous Assessment Model: 3-3-3 Model

Previous Assessment Model: 3-3-3 Model (Cont.)

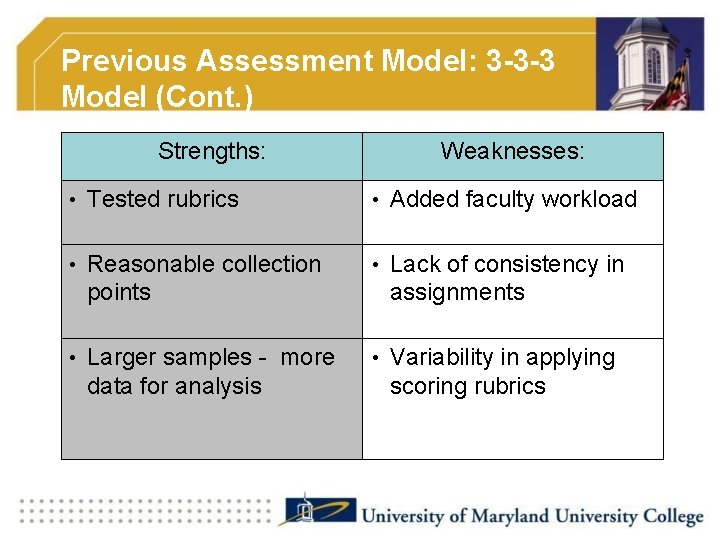

Previous Assessment Model: 3-3-3 Model (Cont.)

Strengths:
• Tested rubrics
• Reasonable collection points
• Larger samples - more data for analysis

Weaknesses:
• Added faculty workload
• Lack of consistency in assignments
• Variability in applying scoring rubrics

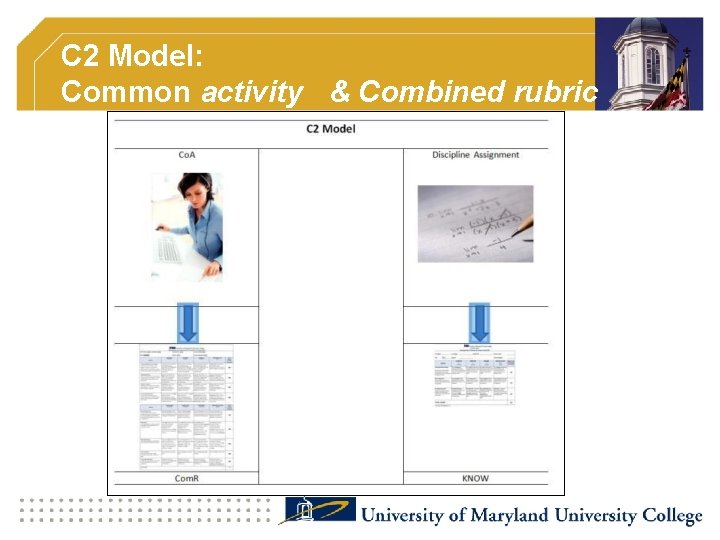

C2 Model: Common activity & Combined rubric

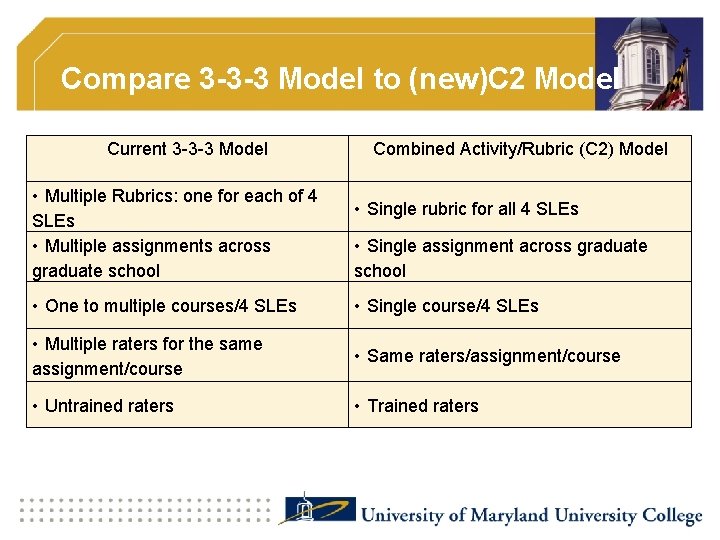

Compare 3-3-3 Model to (New) C2 Model

Current 3-3-3 Model
• Multiple rubrics: one for each of 4 SLEs
• Multiple assignments across graduate school
• One to multiple courses/4 SLEs
• Multiple raters for the same assignment/course
• Untrained raters

Combined Activity/Rubric (C2) Model
• Single rubric for all 4 SLEs
• Single assignment across graduate school
• Single course/4 SLEs
• Same raters/assignment/course
• Trained raters


Procedural Methods of the Grading Project
• Data source
• Rubric
• Experimental design for data collection
• Inter-rater reliability

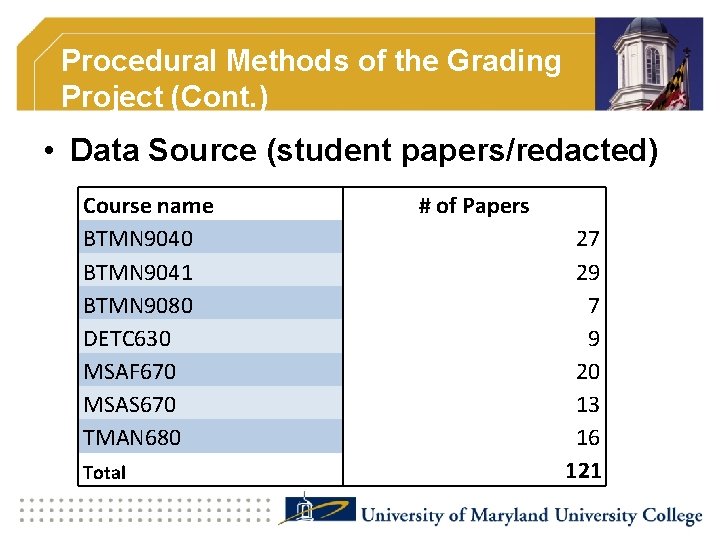

Procedural Methods of the Grading Project (Cont.)
• Data source (student papers/redacted)

| Course name | # of Papers |
|---|---|
| BTMN 9040 | 27 |
| BTMN 9041 | 29 |
| BTMN 9080 | 7 |
| DETC 630 | 9 |
| MSAF 670 | 20 |
| MSAS 670 | 13 |
| TMAN 680 | 16 |
| Total | 121 |

Procedural Methods of the Grading Project (Cont.)
• Common assignment
• Rubric (rubric design and refinement)
• 18 raters (faculty members)

Procedural Methods of the Grading Project (Cont.)
• Experimental design for data collection
  § Randomized trial (Groups A and B)
  § Raters’ norming and training
  § Grading instruction

Procedural Methods of the Grading Project (Cont.)
• Inter-rater reliability (literature)
  § Stemler (2004): in any situation that involves judges (raters), the degree of inter-rater reliability is worth investigating, because it has significant implications for the validity of the subsequent study results.
  § Intraclass Correlation Coefficients (ICC) were used in this study.

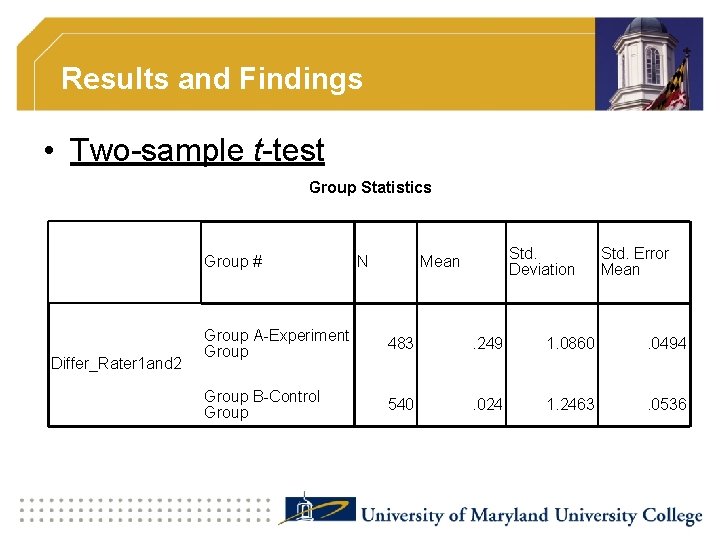

Results and Findings
• Two-sample t-test

Group Statistics (Differ_Rater 1 and 2)

| Group # | N | Mean | Std. Deviation | Std. Error Mean |
|---|---|---|---|---|
| Group A-Experiment Group | 483 | .249 | 1.0860 | .0494 |
| Group B-Control Group | 540 | .024 | 1.2463 | .0536 |

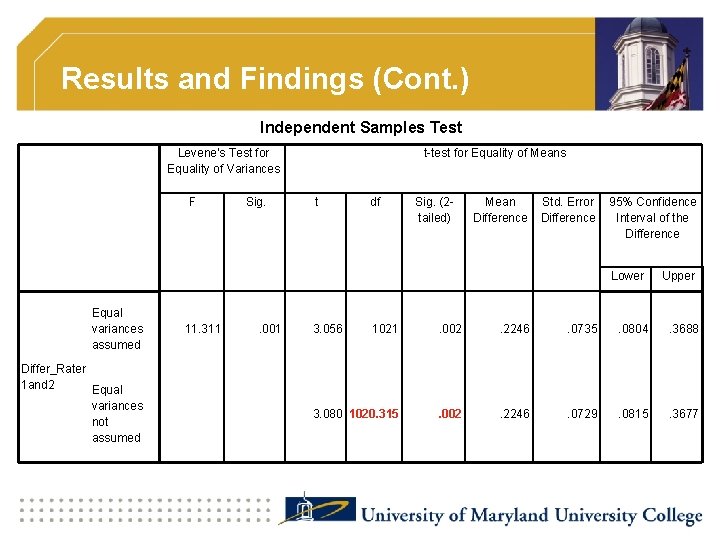

Results and Findings (Cont.)

Independent Samples Test (Differ_Rater 1 and 2)

Levene's Test for Equality of Variances: F = 11.311, Sig. = .001

t-test for Equality of Means

| | t | df | Sig. (2-tailed) | Mean Difference | Std. Error Difference | 95% CI of the Difference, Lower | 95% CI of the Difference, Upper |
|---|---|---|---|---|---|---|---|
| Equal variances assumed | 3.056 | 1021 | .002 | .2246 | .0735 | .0804 | .3688 |
| Equal variances not assumed | 3.080 | 1020.315 | .002 | .2246 | .0729 | .0815 | .3677 |
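As a rough cross-check, the t statistics, standard errors, and degrees of freedom in the Independent Samples Test can be approximately reproduced from the Group Statistics alone. The sketch below is not the SPSS procedure used in the project. It assumes the group means are .249 and .024 (as read from the flattened table) and uses only the Python standard library, so p-values are omitted. The function name `two_sample_t` is hypothetical.

```python
import math

def two_sample_t(mean1, sd1, n1, mean2, sd2, n2):
    """Return (t, df, standard error) for pooled and Welch two-sample t-tests.

    Inputs are summary statistics only; no raw scores are needed.
    """
    diff = mean1 - mean2

    # Pooled (equal variances assumed) standard error and degrees of freedom.
    df_pooled = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df_pooled
    se_pooled = math.sqrt(pooled_var * (1 / n1 + 1 / n2))

    # Welch (equal variances not assumed) standard error and
    # Welch-Satterthwaite degrees of freedom.
    v1, v2 = sd1**2 / n1, sd2**2 / n2
    se_welch = math.sqrt(v1 + v2)
    df_welch = (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))

    return {
        "pooled": (diff / se_pooled, df_pooled, se_pooled),
        "welch": (diff / se_welch, df_welch, se_welch),
    }

# Summary statistics as read from the Group Statistics slide (assumed values).
result = two_sample_t(0.249, 1.0860, 483, 0.024, 1.2463, 540)
for name, (t, df, se) in result.items():
    print(f"{name}: t = {t:.3f}, df = {df:.3f}, SE = {se:.4f}")
```

Because the slide rounds the means to three decimals, the recomputed t values differ slightly from the reported 3.056 and 3.080, while the standard errors and degrees of freedom agree closely. Levene's test gave Sig. = .001, so the "equal variances not assumed" row is the one usually read.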

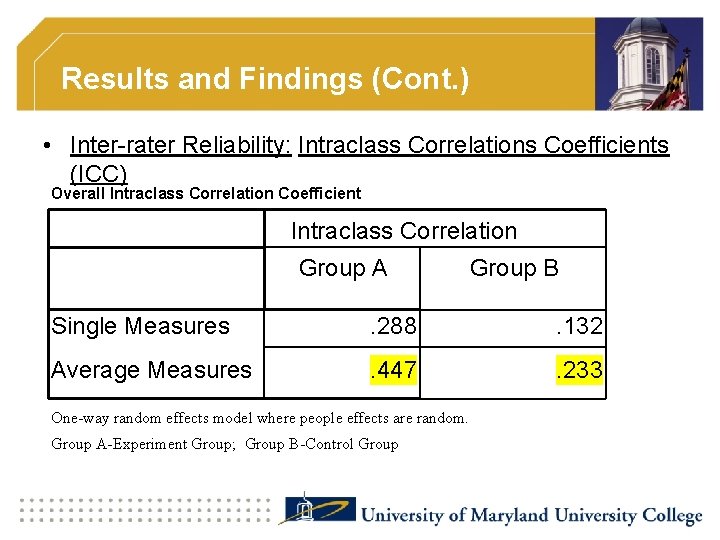

Results and Findings (Cont.)
• Inter-rater Reliability: Intraclass Correlation Coefficients (ICC)

Overall Intraclass Correlation Coefficient

| Intraclass Correlation | Group A | Group B |
|---|---|---|
| Single Measures | .288 | .132 |
| Average Measures | .447 | .233 |

One-way random effects model where people effects are random.
Group A-Experiment Group; Group B-Control Group
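For readers unfamiliar with the one-way random effects ICC, a minimal sketch of the calculation follows. It is an illustration, not the SPSS routine behind the slide. The toy scores are invented, and `icc_one_way` and `spearman_brown` are hypothetical helper names. The Spearman-Brown step assumes two raters per paper. The slides do not state this outright, but the variable name "Differ_Rater 1 and 2" suggests it and the reported values are consistent with it.

```python
from statistics import mean

def icc_one_way(ratings):
    """One-way random effects ICC for a table of scores.

    ratings: one row per paper, each row holding k scores (one per rater).
    Returns (single measures ICC, average measures ICC).
    """
    n = len(ratings)
    k = len(ratings[0])
    grand_mean = mean(score for row in ratings for score in row)
    row_means = [mean(row) for row in ratings]

    # Mean squares between papers and within papers (one-way ANOVA).
    ms_between = k * sum((m - grand_mean) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (score - m) ** 2 for row, m in zip(ratings, row_means) for score in row
    ) / (n * (k - 1))

    single = (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)
    average = (ms_between - ms_within) / ms_between
    return single, average

def spearman_brown(single, k):
    """Step a single-rater ICC up to the reliability of the mean of k raters."""
    return k * single / (1 + (k - 1) * single)

# Invented toy data: 5 papers, each scored by 2 raters.
toy_scores = [[3, 4], [2, 2], [4, 3], [1, 2], [3, 3]]
single, average = icc_one_way(toy_scores)
print(round(single, 3), round(average, 3))

# Reported single measures stepped up with k = 2 (assumed).
print(round(spearman_brown(0.288, 2), 3))  # 0.447, Group A
print(round(spearman_brown(0.132, 2), 3))  # 0.233, Group B
```

The stepped-up values match the reported Average Measures row, which supports reading the table as two raters per paper.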

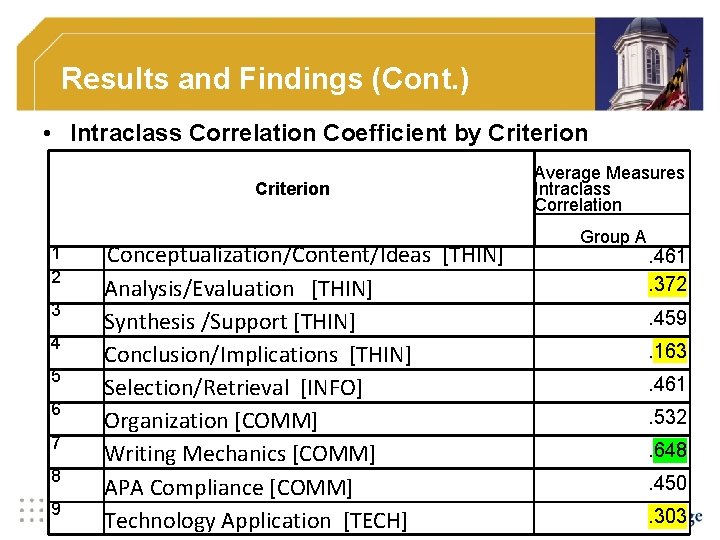

Results and Findings (Cont.)
• Intraclass Correlation Coefficient by Criterion

| # | Criterion | Average Measures Intraclass Correlation (Group A) |
|---|---|---|
| 1 | Conceptualization/Content/Ideas [THIN] | .461 |
| 2 | Analysis/Evaluation [THIN] | .372 |
| 3 | Synthesis/Support [THIN] | .459 |
| 4 | Conclusion/Implications [THIN] | .163 |
| 5 | Selection/Retrieval [INFO] | .461 |
| 6 | Organization [COMM] | .532 |
| 7 | Writing Mechanics [COMM] | .648 |
| 8 | APA Compliance [COMM] | .450 |
| 9 | Technology Application [TECH] | .303 |

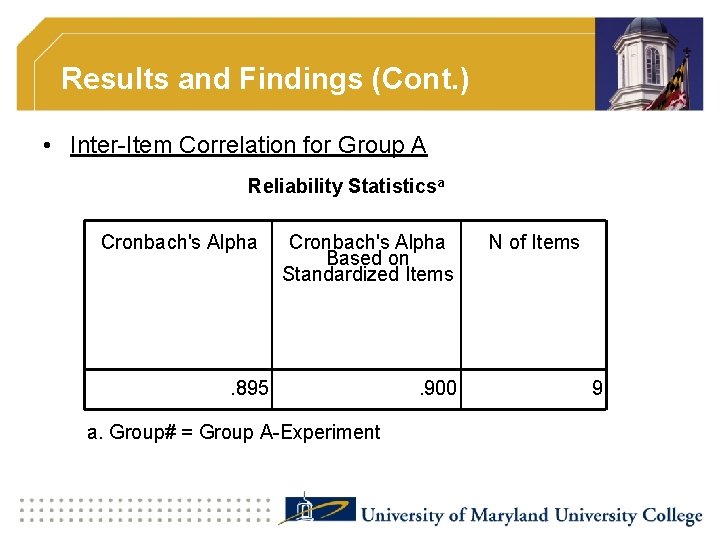

Results and Findings (Cont.)
• Inter-Item Correlation for Group A

Reliability Statistics (a)

| Cronbach's Alpha | Cronbach's Alpha Based on Standardized Items | N of Items |
|---|---|---|
| .895 | .900 | 9 |

a. Group# = Group A-Experiment

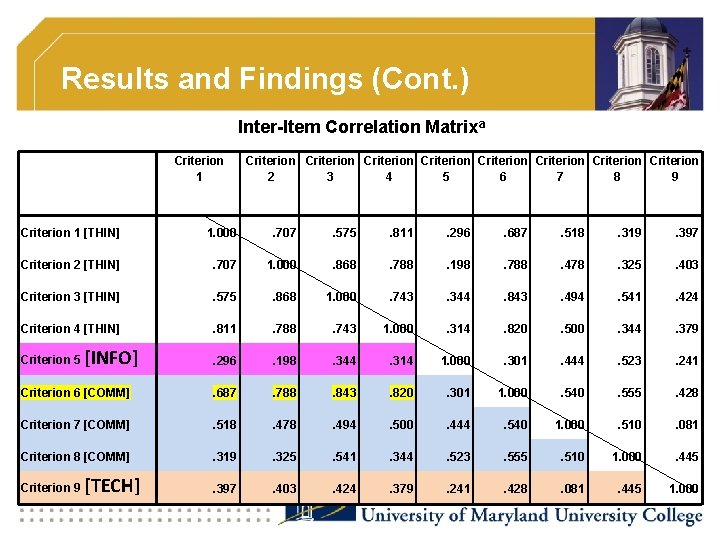

Results and Findings (Cont.)

Inter-Item Correlation Matrix (Group A)

| | Criterion 1 | Criterion 2 | Criterion 3 | Criterion 4 | Criterion 5 | Criterion 6 | Criterion 7 | Criterion 8 | Criterion 9 |
|---|---|---|---|---|---|---|---|---|---|
| Criterion 1 [THIN] | 1.000 | .707 | .575 | .811 | .296 | .687 | .518 | .319 | .397 |
| Criterion 2 [THIN] | .707 | 1.000 | .868 | .788 | .198 | .788 | .478 | .325 | .403 |
| Criterion 3 [THIN] | .575 | .868 | 1.000 | .743 | .344 | .843 | .494 | .541 | .424 |
| Criterion 4 [THIN] | .811 | .788 | .743 | 1.000 | .314 | .820 | .500 | .344 | .379 |
| Criterion 5 [INFO] | .296 | .198 | .344 | .314 | 1.000 | .301 | .444 | .523 | .241 |
| Criterion 6 [COMM] | .687 | .788 | .843 | .820 | .301 | 1.000 | .540 | .555 | .428 |
| Criterion 7 [COMM] | .518 | .478 | .494 | .500 | .444 | .540 | 1.000 | .510 | .081 |
| Criterion 8 [COMM] | .319 | .325 | .541 | .344 | .523 | .555 | .510 | 1.000 | .445 |
| Criterion 9 [TECH] | .397 | .403 | .424 | .379 | .241 | .428 | .081 | .445 | 1.000 |
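The inter-item correlations above are enough to recompute Cronbach's alpha based on standardized items, which depends only on the number of items and the mean off-diagonal correlation. The sketch below is an illustrative check rather than the SPSS output itself. `standardized_alpha` is a hypothetical helper name, and the correlations are typed in from the matrix on this slide.

```python
# Entries above the diagonal of the Group A inter-item correlation matrix,
# one list per criterion (Criterion 1 vs 2..9, Criterion 2 vs 3..9, and so on).
upper_triangle = [
    [.707, .575, .811, .296, .687, .518, .319, .397],
    [.868, .788, .198, .788, .478, .325, .403],
    [.743, .344, .843, .494, .541, .424],
    [.314, .820, .500, .344, .379],
    [.301, .444, .523, .241],
    [.540, .555, .428],
    [.510, .081],
    [.445],
]

def standardized_alpha(upper_rows):
    """Cronbach's alpha based on standardized items.

    alpha = k * r_bar / (1 + (k - 1) * r_bar), where r_bar is the mean of
    the k * (k - 1) / 2 distinct inter-item correlations.
    """
    k = len(upper_rows) + 1
    correlations = [r for row in upper_rows for r in row]
    if len(correlations) != k * (k - 1) // 2:
        raise ValueError("upper triangle has the wrong number of entries")
    r_bar = sum(correlations) / len(correlations)
    return k * r_bar / (1 + (k - 1) * r_bar)

alpha = standardized_alpha(upper_triangle)
print(f"{alpha:.3f}")  # 0.900
```

The result agrees with the .900 figure on the Reliability Statistics slide. The mean inter-item correlation is about .50, with Criterion 5 [INFO] and Criterion 9 [TECH] correlating least with the other criteria.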

Lessons Learned through the Process
• Get faculty excited about assessment!
• Strategies to improve inter-rater agreement
  § More training
  § Clear rubric criteria
  § Map assignment instructions to rubric criteria
• Make decisions based on the assessment results
  § Further refined the rubric and common assessment activity

Resources
• McGraw, K. O., & Wong, S. P. (1996). Forming inferences about some intraclass correlation coefficients. Psychological Methods, 1(1), 30-46 (Correction, 1(1), 390).
• Nunnally, J. (1978). Psychometric theory (2nd ed.). New York: McGraw-Hill.
• Stemler, S. E. (2004). A comparison of consensus, consistency, and measurement approaches to estimating interrater reliability. Practical Assessment, Research & Evaluation, 9(4). Retrieved from http://pareonline.net/getvn.asp?v=9&n=4.
• Shrout, P. E., & Fleiss, J. L. (1979). Intraclass correlations: Uses in assessing rater reliability. Psychological Bulletin, 86(2), 420-428. Retrieved from http://www.hongik.edu/~ym480/Shrout-Fleiss.ICC.pdf.

Stay Connected…
• Dr. Yan Zhang Cooksey
  Director for Outcomes Assessment
  The Graduate School, University of Maryland University College
  Email: yan.cooksey@umuc.edu
  Homepage: http://assessmentmatters.weebly.com
