Exact and approximate inference in probabilistic graphical models

Kevin Murphy, MIT CSAIL / UBC CS/Stats, www.ai.mit.edu/~murphyk/AAAI04, AAAI 2004 tutorial (SP2)

Recommended reading
• Cowell, Dawid, Lauritzen, Spiegelhalter, “Probabilistic Networks and Expert Systems”, 1999
• Jensen, “Bayesian Networks and Decision Graphs”, 2001
• Jordan, “Probabilistic graphical models” (due 2005)
• Koller & Friedman, “Bayes nets and beyond” (due 2005)
• Jordan (ed.), “Learning in graphical models”

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic

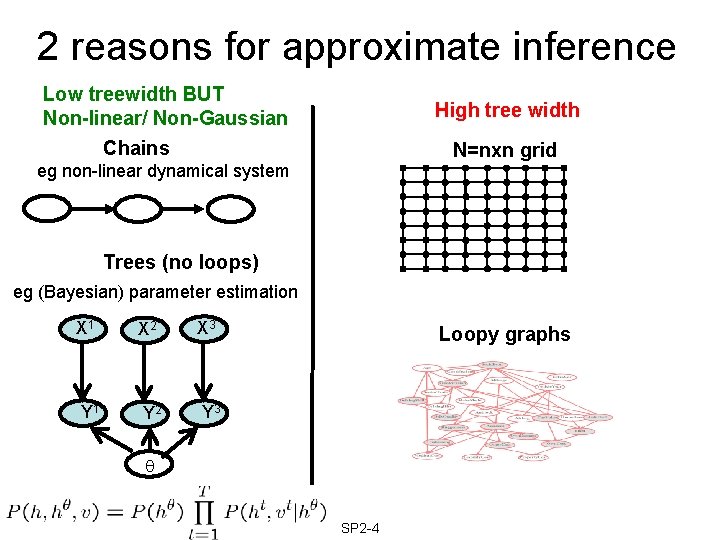

Two reasons for approximate inference
• High treewidth: loopy graphs, e.g., an N = n×n grid.
• Low treewidth (chains; trees with no loops) BUT non-linear/non-Gaussian, e.g., a non-linear dynamical system, or (Bayesian) parameter estimation.
(Figure: a chain X1 → X2 → X3 with observations Y1, Y2, Y3.)

Complexity of approximate inference
• Approximating P(Xq|Xe) to within a constant factor for all discrete BNs is NP-hard (Dagum 93).
  – In practice, many models exhibit “weak coupling”, so we may safely ignore certain dependencies.
• Computing P(Xq|Xe) for all polytrees with discrete and Gaussian nodes is NP-hard (Lerner 01).
  – In practice, some of the modes of the posterior will have negligible mass.

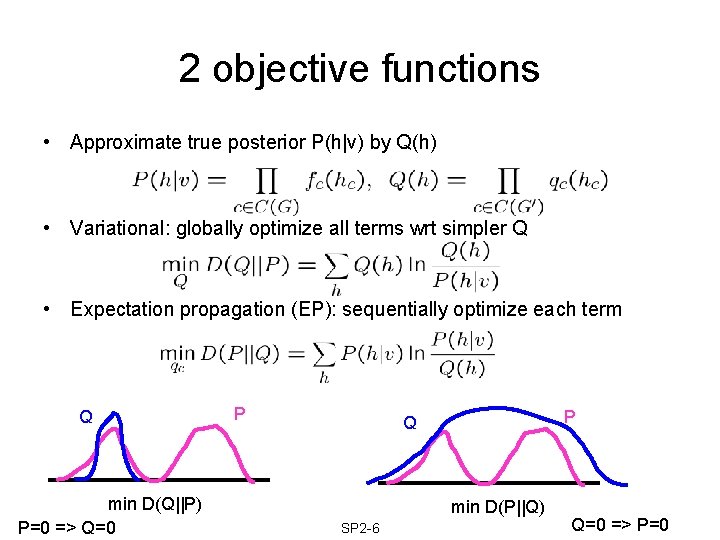

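Two objective functions
• Approximate the true posterior P(h|v) by Q(h).
• Variational: globally optimize all terms w.r.t. a simpler Q by minimizing D(Q||P). Where P = 0 we must have Q = 0, so Q tends to lock onto one mode of P.
• Expectation propagation (EP): sequentially optimize each term by (locally) minimizing D(P||Q). Where Q = 0 we must have P = 0, so Q tends to cover all of P's mass.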
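The asymmetry between the two objectives can be seen numerically. This is a minimal sketch, assuming a bimodal P (an equal mixture of N(−3, 1) and N(3, 1)) discretized on a grid, and a restricted family Q = N(μ, 1) in which only the mean is free; the grid, the mixture and the family are illustrative choices, not taken from the tutorial.

```python
import numpy as np

x = np.linspace(-10.0, 10.0, 2001)   # grid on which P and Q are discretized

def normal_pdf(x, mu, sigma):
    return np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))

def normalize(p):
    return p / p.sum()

def kl(p, q):
    """D(p||q) = sum_x p(x) log(p(x) / q(x)) for strictly positive discrete p, q."""
    return float(np.sum(p * np.log(p / q)))

# Bimodal target P: equal mixture of N(-3, 1) and N(3, 1).
P = normalize(0.5 * normal_pdf(x, -3.0, 1.0) + 0.5 * normal_pdf(x, 3.0, 1.0))

# Restricted family: Q = N(mu, 1), only the mean is free; search mu on a grid.
mus = np.linspace(-5.0, 5.0, 201)
Qs = [normalize(normal_pdf(x, mu, 1.0)) for mu in mus]

mu_exclusive = mus[np.argmin([kl(Q, P) for Q in Qs])]   # variational: min D(Q||P)
mu_inclusive = mus[np.argmin([kl(P, Q) for Q in Qs])]   # EP-style: min D(P||Q)
print(mu_exclusive, mu_inclusive)
```

Minimizing D(Q||P) settles on one of the two modes (|μ| close to 3), while minimizing D(P||Q) puts μ at the overall mean (close to 0), between the modes, where P itself has very little mass.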

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
    • Variational
    • Loopy belief propagation
    • Expectation propagation
    • Graph cuts
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic

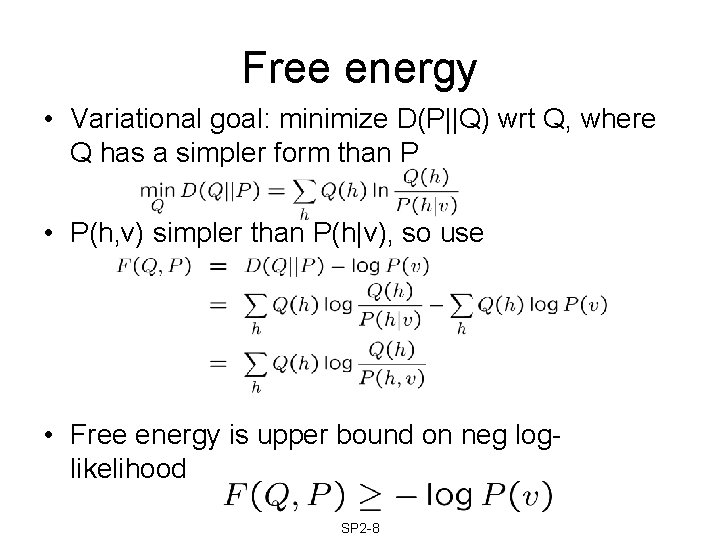

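Free energy
• Variational goal: minimize D(Q||P) w.r.t. Q, where Q has a simpler form than P.
• P(h, v) is simpler than P(h|v) (it does not require the normalizer P(v)), so use
  $F(Q, P) = \sum_h Q(h) \log \frac{Q(h)}{P(h, v)} = D\big(Q(h) \,\|\, P(h|v)\big) - \log P(v)$
• Since D ≥ 0, the free energy is an upper bound on the negative log-likelihood: $F(Q, P) \ge -\log P(v)$, with equality iff Q(h) = P(h|v).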

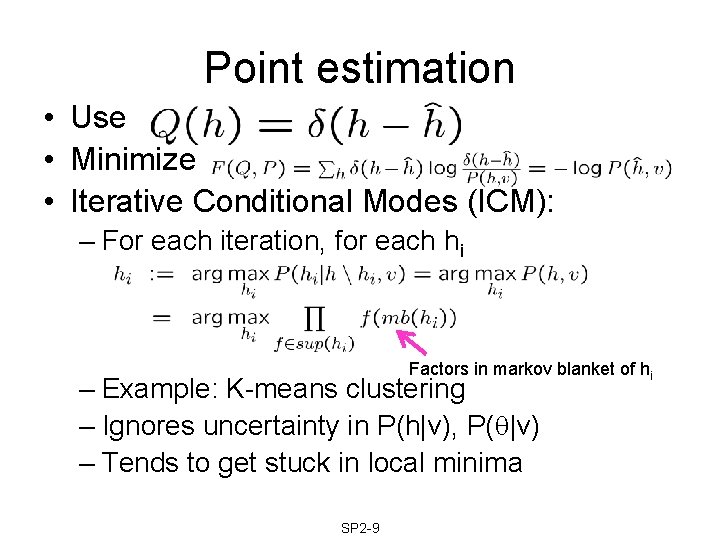

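Point estimation
• Use a point-mass approximation $Q(h) = \delta(h - \hat h)$.
• Minimize $-\log P(\hat h, v)$ w.r.t. $\hat h$ (a MAP estimate).
• Iterated Conditional Modes (ICM):
  – On each iteration, for each $h_i$, set $h_i$ to the value that maximizes the product of the factors in the Markov blanket of $h_i$.
  – Example: K-means clustering.
  – Ignores uncertainty in P(h|v) and P(θ|v).
  – Tends to get stuck in local minima.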
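ICM can be sketched on a binary image-denoising energy. This is an illustrative choice, not the tutorial's example: E(x) = λ·#{i : x_i ≠ y_i} + β·#{neighbouring pairs with x_i ≠ x_j} on a 4-connected grid, with λ = 1 and β = 1.5 picked by hand. Each update looks only at the Markov blanket of x_i (its observation y_i and its four neighbours), and a pixel moves only when that strictly lowers the energy, so the loop must stop at a local minimum.

```python
import numpy as np

LAM, BETA = 1.0, 1.5  # illustrative data and smoothness weights (assumed)

def energy(x, y, lam=LAM, beta=BETA):
    """Data term plus Potts smoothness term on a 4-connected grid."""
    data = lam * np.sum(x != y)
    smooth = beta * (np.sum(x[1:, :] != x[:-1, :]) + np.sum(x[:, 1:] != x[:, :-1]))
    return float(data + smooth)

def icm(y, lam=LAM, beta=BETA, max_sweeps=100):
    """Iterated conditional modes: greedy coordinate-wise minimization of energy()."""
    x = y.copy()
    H, W = x.shape
    for _ in range(max_sweeps):
        changed = False
        for i in range(H):
            for j in range(W):
                nbrs = [x[a, b] for a, b in ((i - 1, j), (i + 1, j), (i, j - 1), (i, j + 1))
                        if 0 <= a < H and 0 <= b < W]
                # Local energy of each label: only the Markov blanket of x[i, j] matters.
                cost = [lam * (k != y[i, j]) + beta * sum(k != n for n in nbrs) for k in (0, 1)]
                cur = x[i, j]
                if cost[1 - cur] < cost[cur]:   # move only on a strict decrease
                    x[i, j] = 1 - cur
                    changed = True
        if not changed:   # no single-site change helps: a local minimum
            break
    return x

rng = np.random.default_rng(0)
clean = np.zeros((32, 32), dtype=int)
clean[8:24, 8:24] = 1
noisy = np.where(rng.random(clean.shape) < 0.1, 1 - clean, clean)   # flip 10% of pixels
denoised = icm(noisy)
print("energy:", energy(noisy, noisy), "->", energy(denoised, noisy))
print("pixel error:", (noisy != clean).mean(), "->", (denoised != clean).mean())
```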

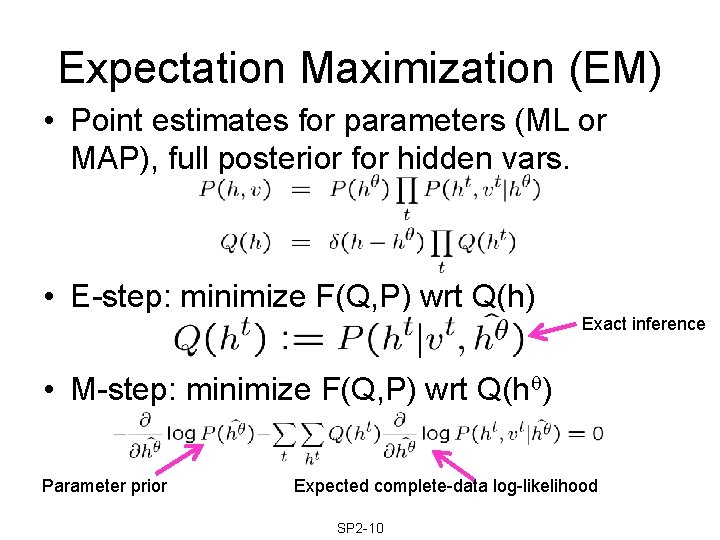

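Expectation Maximization (EM)
• Point estimates for parameters (ML or MAP), full posterior for hidden variables.
• E-step: minimize F(Q, P) w.r.t. Q(h); this requires exact inference, giving Q(h) = P(h|v, θ).
• M-step: minimize F(Q, P) w.r.t. $Q(\theta) = \delta(\theta - \hat\theta)$, i.e., maximize the expected complete-data log-likelihood plus the log parameter prior:
  $\hat\theta = \arg\max_\theta \; \mathbb{E}_{Q(h)}[\log P(h, v \mid \theta)] + \log P(\theta)$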
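A minimal EM sketch, assuming a one-dimensional mixture of K Gaussians with ML estimation (no parameter prior, so the log P(θ) term is dropped) and synthetic data; the model and data are illustrative. The E-step is exact inference of the responsibilities, the M-step maximizes the expected complete-data log-likelihood in closed form, and the recorded log-likelihood should never decrease.

```python
import numpy as np

def em_gmm_1d(x, K=2, iters=200, seed=0):
    """ML EM for a 1-D Gaussian mixture; returns params and the log-likelihood trace."""
    rng = np.random.default_rng(seed)
    pi = np.full(K, 1.0 / K)
    mu = rng.choice(x, size=K, replace=False)
    var = np.full(K, x.var())
    loglik = []
    for _ in range(iters):
        # E-step (exact inference): responsibilities Q(h_n = k) = P(h_n = k | x_n, theta)
        logp = np.log(pi) - 0.5 * np.log(2 * np.pi * var) - 0.5 * (x[:, None] - mu) ** 2 / var
        top = logp.max(axis=1, keepdims=True)
        log_px = top + np.log(np.exp(logp - top).sum(axis=1, keepdims=True))  # log p(x_n | theta)
        r = np.exp(logp - log_px)
        loglik.append(float(log_px.sum()))
        # M-step: maximize the expected complete-data log-likelihood (no parameter prior)
        Nk = r.sum(axis=0)
        pi = Nk / len(x)
        mu = (r * x[:, None]).sum(axis=0) / Nk
        var = (r * (x[:, None] - mu) ** 2).sum(axis=0) / Nk
    return pi, mu, var, loglik

rng = np.random.default_rng(1)
x = np.concatenate([rng.normal(-2.0, 0.5, 300), rng.normal(3.0, 1.0, 700)])
pi, mu, var, loglik = em_gmm_1d(x)
print(np.round(np.sort(mu), 2), loglik[0], loglik[-1])
```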

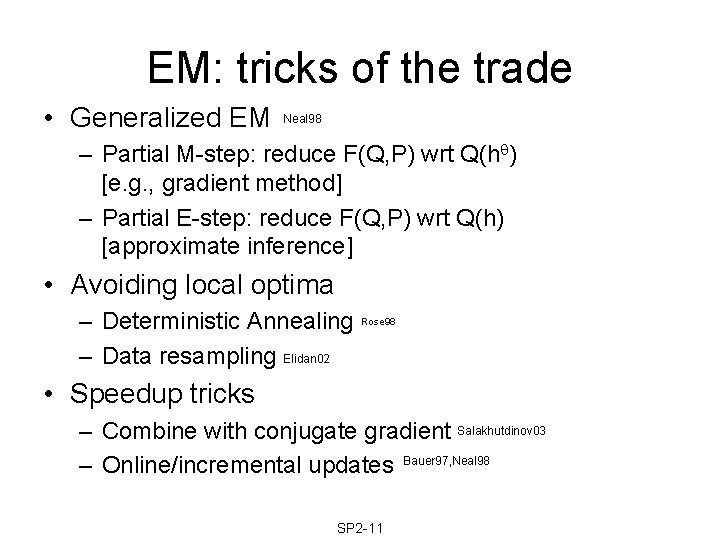

EM: tricks of the trade
• Generalized EM (Neal 98)
  – Partial M-step: reduce F(Q, P) w.r.t. Q(θ) [e.g., gradient method]
  – Partial E-step: reduce F(Q, P) w.r.t. Q(h) [approximate inference]
• Avoiding local optima
  – Deterministic annealing (Rose 98)
  – Data resampling (Elidan 02)
• Speedup tricks
  – Combine with conjugate gradient (Salakhutdinov 03)
  – Online/incremental updates (Bauer 97, Neal 98)

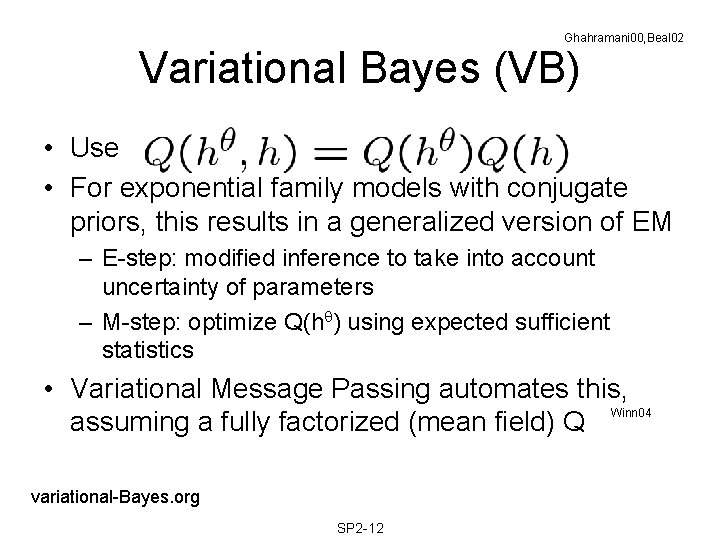

Variational Bayes (VB) (Ghahramani 00, Beal 02)
• Use a factored approximation $Q(h, \theta) = Q(h)\,Q(\theta)$.
• For exponential family models with conjugate priors, this results in a generalized version of EM:
  – E-step: modified inference that takes into account the uncertainty of the parameters
  – M-step: optimize Q(θ) using expected sufficient statistics
• Variational Message Passing (Winn 04) automates this, assuming a fully factorized (mean field) Q. See variational-bayes.org.

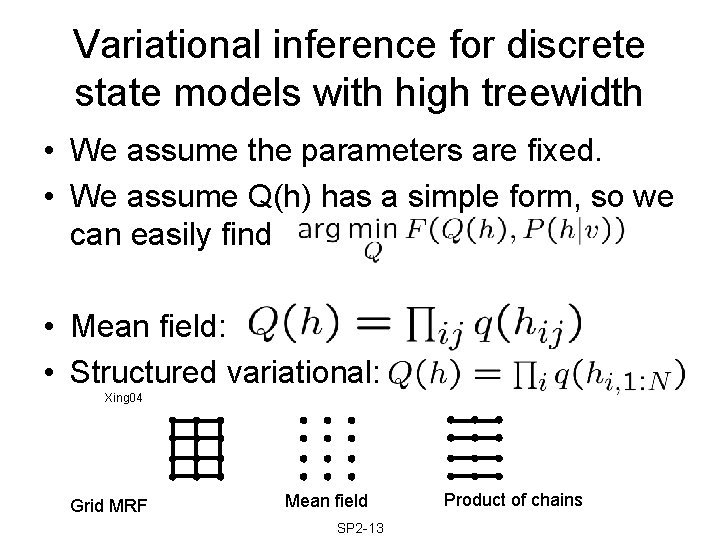

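Variational inference for discrete-state models with high treewidth
• We assume the parameters are fixed.
• We assume Q(h) has a simple form, so we can easily compute the expectations needed to evaluate F(Q, P).
• Mean field: $Q(h) = \prod_i Q_i(h_i)$
• Structured variational (Xing 04): Q is a product of tractable substructures, e.g., a product of chains for a grid MRF.
(Figure: grid MRF; mean field approximation; product-of-chains approximation.)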

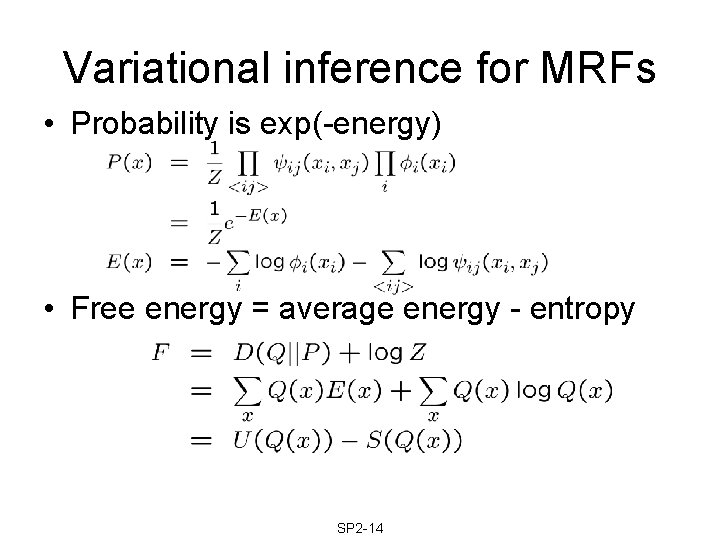

Variational inference for MRFs
• Probability is exp(−energy): $P(x) = \frac{1}{Z}\exp(-E(x))$
• Free energy = average energy − entropy: $F(Q) = \sum_x Q(x)E(x) - H(Q)$, where $H(Q) = -\sum_x Q(x)\log Q(x)$

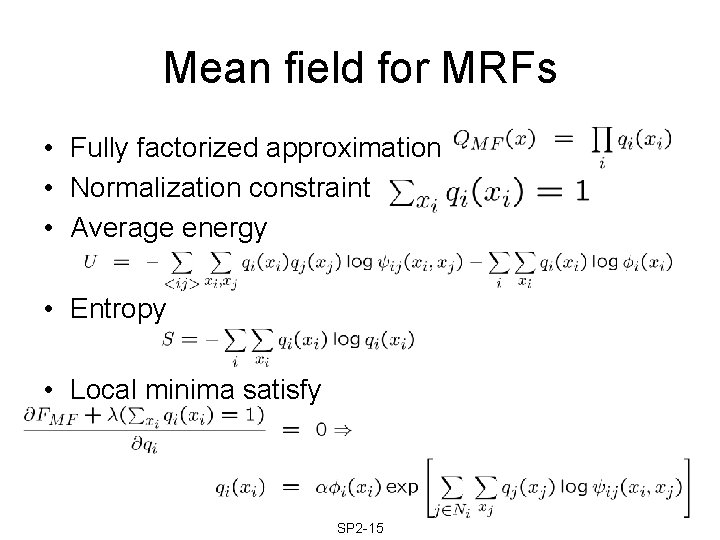

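Mean field for MRFs (energy $E(x) = -\sum_{(ij)} \log\psi_{ij}(x_i, x_j) - \sum_i \log\phi_i(x_i)$)
• Fully factorized approximation: $Q(x) = \prod_i b_i(x_i)$
• Normalization constraint: $\sum_{x_i} b_i(x_i) = 1$
• Average energy: $\langle E \rangle = -\sum_{(ij)} \sum_{x_i, x_j} b_i(x_i)\, b_j(x_j) \log \psi_{ij}(x_i, x_j) - \sum_i \sum_{x_i} b_i(x_i) \log \phi_i(x_i)$
• Entropy: $H = -\sum_i \sum_{x_i} b_i(x_i) \log b_i(x_i)$
• Local minima satisfy $b_i(x_i) \propto \phi_i(x_i) \exp\Big(\sum_{j \in N(i)} \sum_{x_j} b_j(x_j) \log \psi_{ij}(x_i, x_j)\Big)$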
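A small mean-field sketch, assuming an Ising model (a binary pairwise MRF) with spins s_i ∈ {−1, +1}, P(s) ∝ exp(J Σ_edges s_i s_j + Σ_i h_i s_i) on a 3×3 grid; J, h and the grid are illustrative. For this model the local-minimum condition reduces to m_i = tanh(h_i + J Σ_{j∈N(i)} m_j), with m_i = E_Q[s_i]. Brute-force enumeration of all 2^9 states gives exact means for comparison: with J = 0 mean field is exact, and its error grows with the coupling.

```python
import itertools
import numpy as np

def grid_edges(H, W):
    """4-connected grid edges over nodes numbered row-major."""
    edges = []
    for r in range(H):
        for c in range(W):
            if r + 1 < H:
                edges.append((r * W + c, (r + 1) * W + c))
            if c + 1 < W:
                edges.append((r * W + c, r * W + c + 1))
    return edges

def mean_field_ising(h, edges, J, sweeps=500):
    """Sequential mean-field updates m_i = tanh(h_i + J * sum_{j in N(i)} m_j)."""
    nbrs = [[] for _ in h]
    for i, j in edges:
        nbrs[i].append(j)
        nbrs[j].append(i)
    m = np.zeros(len(h))
    for _ in range(sweeps):
        for i in range(len(h)):   # one coordinate at a time, so F never increases
            m[i] = np.tanh(h[i] + J * sum(m[j] for j in nbrs[i]))
    return m

def exact_ising_means(h, edges, J):
    """Brute-force E[s_i] by enumerating all 2^n spin configurations."""
    S = np.array(list(itertools.product([-1, 1], repeat=len(h))))
    logw = S @ h + J * sum(S[:, i] * S[:, j] for i, j in edges)
    w = np.exp(logw - logw.max())
    return (w[:, None] * S).sum(axis=0) / w.sum()

rng = np.random.default_rng(0)
h = rng.normal(0.0, 0.5, 9)
edges = grid_edges(3, 3)
for J in (0.0, 0.1, 0.3):
    err = np.abs(mean_field_ising(h, edges, J) - exact_ising_means(h, edges, J)).max()
    print(f"J = {J}: max |m_MF - m_exact| = {err:.4f}")
```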

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
    • Variational
    • Loopy belief propagation
    • Expectation propagation
    • Graph cuts
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic

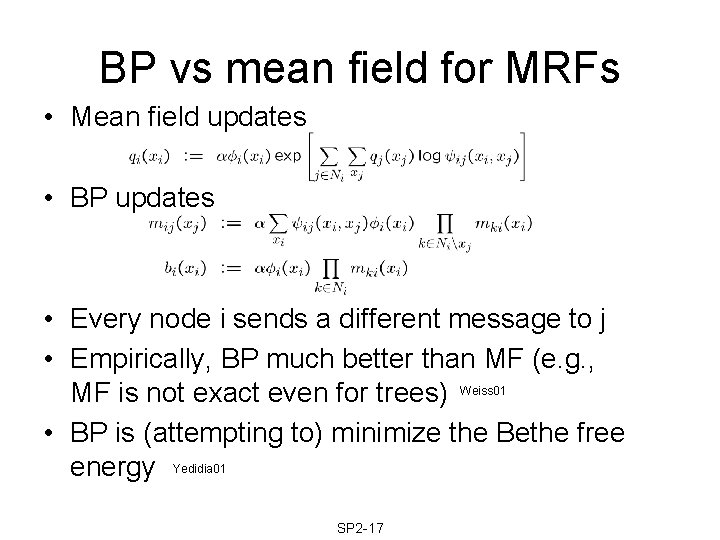

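BP vs mean field for MRFs (node potentials φ_i, edge potentials ψ_ij)
• Mean field updates: $b_i(x_i) \propto \phi_i(x_i) \prod_{j \in N(i)} \exp\Big(\sum_{x_j} b_j(x_j) \log \psi_{ij}(x_i, x_j)\Big)$
• BP updates: $m_{ij}(x_j) \propto \sum_{x_i} \phi_i(x_i)\, \psi_{ij}(x_i, x_j) \prod_{k \in N(i) \setminus j} m_{ki}(x_i)$, with beliefs $b_i(x_i) \propto \phi_i(x_i) \prod_{k \in N(i)} m_{ki}(x_i)$
• In BP, every node i sends a different message to each neighbour j (leaving out what j told i); in mean field, node i sends the same belief b_i to all its neighbours.
• Empirically, BP is much better than MF (e.g., MF is not exact even for trees, whereas BP is) (Weiss 01).
• BP is (attempting to) minimize the Bethe free energy (Yedidia 01).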
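The BP update can be sketched as a sum-product implementation for a discrete pairwise MRF with a parallel ("flooding") schedule. This is an illustrative version: the potentials are random, messages are normalized for numerical stability, and the helper names are hypothetical. On a chain (a tree) the beliefs match brute-force marginals; adding one edge to close a cycle makes BP approximate.

```python
import itertools
import numpy as np

def loopy_bp(phi, psi, edges, iters=100, tol=1e-10):
    """Sum-product BP on a pairwise MRF with a parallel (flooding) schedule.
    phi[i]: node potential vector; psi[(i, j)]: edge potential matrix indexed [x_i, x_j]."""
    nbrs = {i: [] for i in range(len(phi))}
    for i, j in edges:
        nbrs[i].append(j)
        nbrs[j].append(i)

    def pot(i, j):  # edge potential oriented as [x_i, x_j]
        return psi[(i, j)] if (i, j) in psi else psi[(j, i)].T

    msgs = {(i, j): np.ones(len(phi[j])) / len(phi[j]) for i in nbrs for j in nbrs[i]}
    for _ in range(iters):
        new = {}
        for i, j in msgs:
            # m_ij(x_j) propto sum_{x_i} phi_i(x_i) psi_ij(x_i, x_j) prod_{k in N(i)\j} m_ki(x_i)
            prod = phi[i].copy()
            for k in nbrs[i]:
                if k != j:
                    prod = prod * msgs[(k, i)]
            m = pot(i, j).T @ prod
            new[(i, j)] = m / m.sum()
        delta = max(np.abs(new[e] - msgs[e]).max() for e in msgs)
        msgs = new
        if delta < tol:
            break
    beliefs = []
    for i in range(len(phi)):
        b = phi[i].copy()
        for k in nbrs[i]:
            b = b * msgs[(k, i)]
        beliefs.append(b / b.sum())
    return beliefs

def exact_marginals(phi, psi, edges):
    """Brute-force node marginals by enumerating every joint state."""
    marg = [np.zeros(len(p)) for p in phi]
    for x in itertools.product(*[range(len(p)) for p in phi]):
        w = np.prod([phi[i][x[i]] for i in range(len(phi))])
        for i, j in edges:
            w *= psi[(i, j)][x[i], x[j]]
        for i in range(len(phi)):
            marg[i][x[i]] += w
    return [m / m.sum() for m in marg]

rng = np.random.default_rng(0)
phi = [rng.uniform(0.5, 2.0, 2) for _ in range(5)]
chain = [(0, 1), (1, 2), (2, 3), (3, 4)]
loop = chain + [(4, 0)]
psi = {e: rng.uniform(0.5, 2.0, (2, 2)) for e in loop}
bp_chain, ex_chain = loopy_bp(phi, psi, chain), exact_marginals(phi, psi, chain)
bp_loop, ex_loop = loopy_bp(phi, psi, loop), exact_marginals(phi, psi, loop)
print(max(np.abs(b - e).max() for b, e in zip(bp_chain, ex_chain)))  # tree: exact up to rounding
print(max(np.abs(b - e).max() for b, e in zip(bp_loop, ex_loop)))    # cycle: approximate
```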

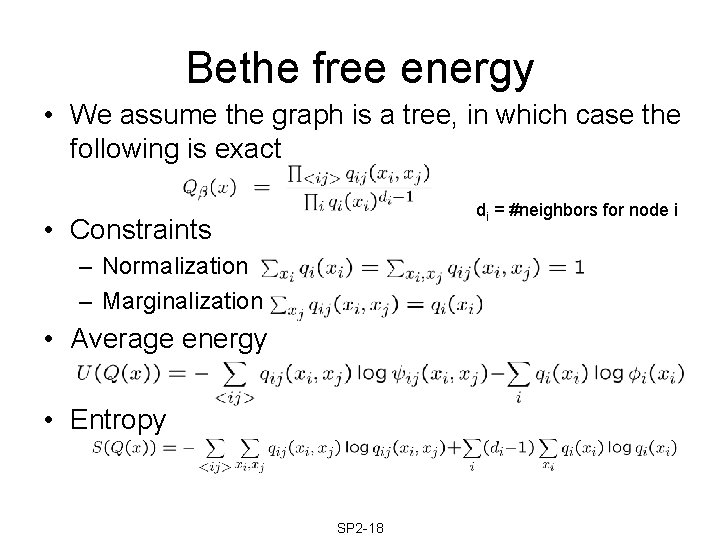

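Bethe free energy (node potentials φ_i, edge potentials ψ_ij)
• We assume the graph is a tree, in which case the following factorization is exact:
  $P(x) = \frac{\prod_{(ij)} b_{ij}(x_i, x_j)}{\prod_i b_i(x_i)^{d_i - 1}}$, where d_i = #neighbours of node i
• Constraints
  – Normalization: $\sum_{x_i} b_i(x_i) = 1$, $\sum_{x_i, x_j} b_{ij}(x_i, x_j) = 1$
  – Marginalization: $\sum_{x_j} b_{ij}(x_i, x_j) = b_i(x_i)$
• Average energy: $\langle E \rangle = -\sum_{(ij)} \sum_{x_i, x_j} b_{ij}(x_i, x_j) \log \psi_{ij}(x_i, x_j) - \sum_i \sum_{x_i} b_i(x_i) \log \phi_i(x_i)$
• Entropy: $H_{\text{Bethe}} = -\sum_{(ij)} \sum_{x_i, x_j} b_{ij}(x_i, x_j) \log b_{ij}(x_i, x_j) + \sum_i (d_i - 1) \sum_{x_i} b_i(x_i) \log b_i(x_i)$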


BP minimizes Bethe free energy (Yedidia 01)
• Theorem [Yedidia, Freeman, Weiss]: fixed points of BP are stationary points of the Bethe free energy.
• BP may not converge; other algorithms can minimize F_Bethe directly, but are slower.
• If BP does not converge, it often means F_Bethe is a poor approximation.

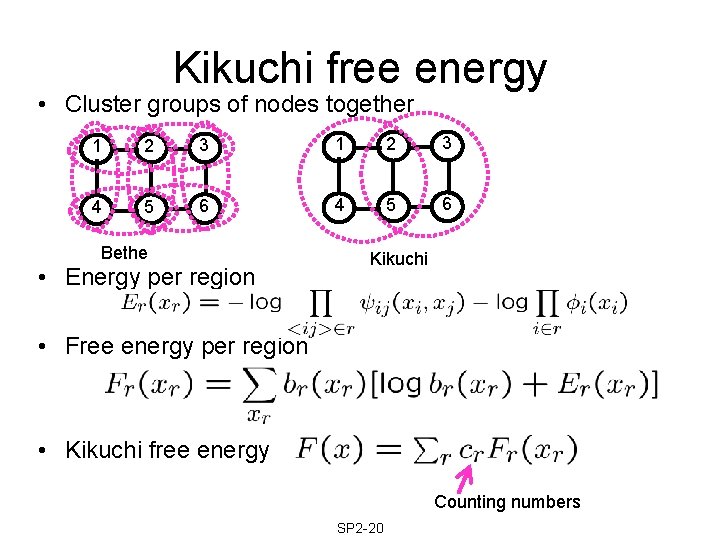

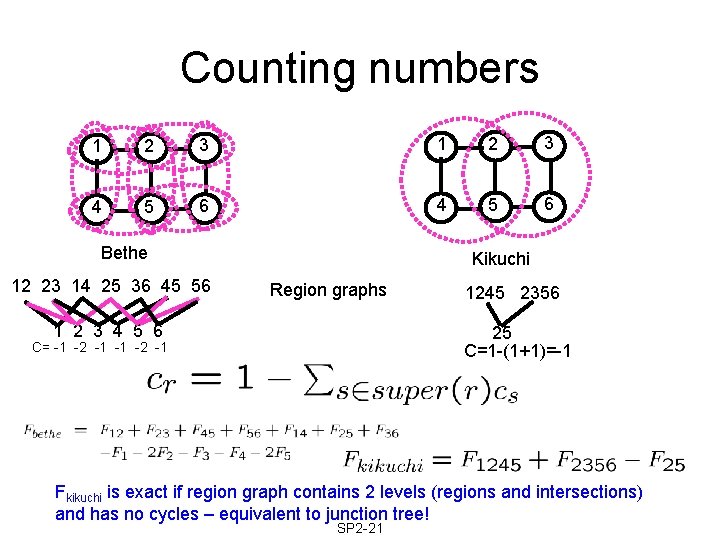

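Kikuchi free energy
• Cluster groups of nodes together into regions.
(Figure: a 2×3 grid with nodes 1–6, using Bethe regions vs. Kikuchi regions.)
• Energy per region: $E_r(x_r) = -\sum_{a \subseteq r} \log \psi_a(x_a)$, summing over the potentials whose variables all lie in region r
• Free energy per region: $F_r(b_r) = \sum_{x_r} b_r(x_r) E_r(x_r) + \sum_{x_r} b_r(x_r) \log b_r(x_r)$
• Kikuchi free energy: $F_{\text{Kikuchi}} = \sum_r c_r F_r(b_r)$, where the c_r are the counting numbers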

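Counting numbers (2×3 grid with nodes 1 2 3 / 4 5 6)
• Bethe: the regions are the edges 12, 23, 14, 25, 36, 45, 56 (c = 1) and the single nodes, with c_i = 1 − d_i (e.g., c = −1, −2, −1 for nodes 1, 2, 3).
• Kikuchi (region graph): regions 1245 and 2356 (c = 1) and their intersection 25, with c = 1 − (1 + 1) = −1.
• In general, $c_r = 1 - \sum_{s \supset r} c_s$, summing over all regions s that strictly contain r.
• F_Kikuchi is exact if the region graph contains 2 levels (regions and intersections) and has no cycles; this is equivalent to the junction tree!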
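The counting-number rule c_r = 1 − Σ_{s ⊋ r} c_s can be checked on the grids used in these slides. This is a small sketch (the function name is hypothetical) that processes regions from largest to smallest so every strict superset is numbered first; it reproduces the Bethe values 1 − d_i, the Kikuchi value −1 for region 25, and, for the 3×3 generalized-BP example, +1 for the innermost region 5.

```python
def counting_numbers(regions):
    """c_r = 1 - sum of c_s over all regions s that strictly contain r."""
    regions = [frozenset(r) for r in regions]
    c = {}
    # Largest regions first, so every strict superset is numbered before r.
    for r in sorted(regions, key=len, reverse=True):
        c[r] = 1 - sum(c[s] for s in regions if r < s)
    return c

# Bethe regions for the 2x3 grid 1 2 3 / 4 5 6: edges plus single nodes.
bethe = counting_numbers([{1, 2}, {2, 3}, {1, 4}, {2, 5}, {3, 6}, {4, 5}, {5, 6},
                          {1}, {2}, {3}, {4}, {5}, {6}])
# Kikuchi regions for the same grid, and the 3x3 example used for generalized BP.
kikuchi = counting_numbers([{1, 2, 4, 5}, {2, 3, 5, 6}, {2, 5}])
gbp = counting_numbers([{1, 2, 4, 5}, {2, 3, 5, 6}, {4, 5, 7, 8}, {5, 6, 8, 9},
                        {2, 5}, {4, 5}, {5, 6}, {5, 8}, {5}])
print([bethe[frozenset({i})] for i in range(1, 7)])   # [-1, -2, -1, -1, -2, -1]
print(kikuchi[frozenset({2, 5})], gbp[frozenset({2, 5})], gbp[frozenset({5})])   # -1 -1 1
```

A useful sanity check is that, for every variable, the counting numbers of the regions containing it sum to 1, so each variable is counted exactly once overall.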

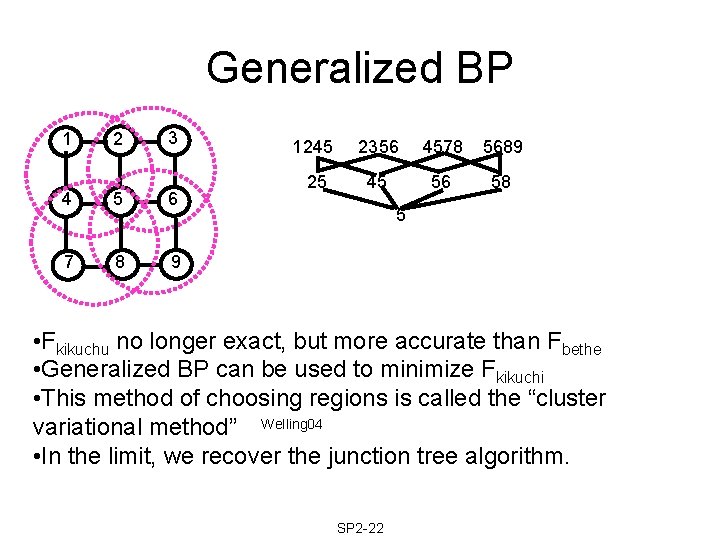

Generalized BP
(Figure: a 3×3 grid with nodes 1–9; regions 1245, 2356, 4578, 5689; intersections 25, 45, 56, 58; and their intersection 5.)
• F_Kikuchi is no longer exact, but it is more accurate than F_Bethe.
• Generalized BP can be used to minimize F_Kikuchi.
• This method of choosing regions is called the “cluster variational method” (Welling 04).
• In the limit, we recover the junction tree algorithm.

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
    • Variational
    • Loopy belief propagation
    • Expectation propagation
    • Graph cuts
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic

Expectation Propagation (EP) (Minka 01)
• EP = iterated assumed density filtering (ADF)
• ADF = recursive Bayesian estimation interleaved with a projection step
• Examples of ADF:
  – Extended Kalman filtering
  – Moment matching (weak marginalization)
  – Boyen-Koller algorithm
  – Some online learning algorithms

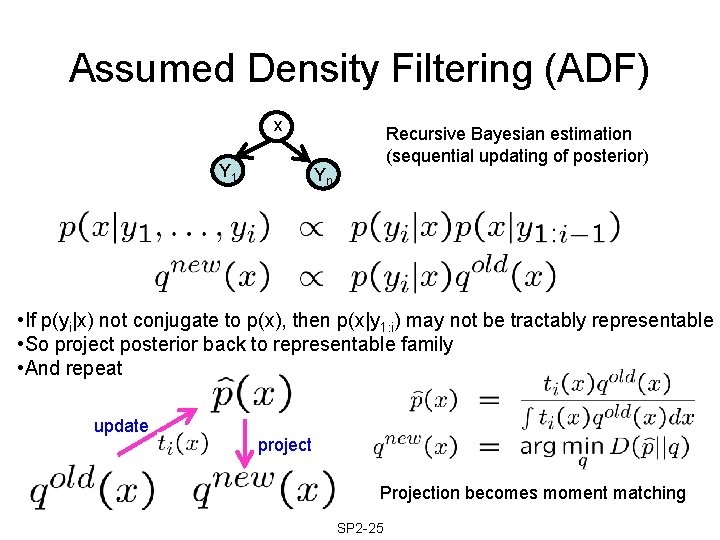

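Assumed Density Filtering (ADF)
• Recursive Bayesian estimation: sequentially update the posterior over x as observations y_1, ..., y_n arrive.
(Figure: a parent x with children Y1, ..., Yn.)
• If p(y_i|x) is not conjugate to p(x), then p(x|y_{1:i}) may not be tractably representable.
• So project the posterior back to the representable family, and repeat: update, then project.
• When the representable family is an exponential family, the projection (minimizing D(p||q)) becomes moment matching.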
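The projection step can be sketched for the common case where the updated posterior is a one-dimensional Gaussian mixture and the representable family is a single Gaussian (weak marginalization). Matching the first two moments is the minimizer of D(p||q) over Gaussians q; the mixture below is an illustrative example.

```python
import numpy as np

def project_to_gaussian(weights, means, variances):
    """Moment-match a 1-D Gaussian mixture with a single Gaussian (weak marginalization)."""
    w = np.asarray(weights, float)
    w = w / w.sum()
    mu = np.asarray(means, float)
    var = np.asarray(variances, float)
    mean = float(np.sum(w * mu))
    # Law of total variance: average within-component variance + spread of the means.
    v = float(np.sum(w * (var + (mu - mean) ** 2)))
    return mean, v

m, v = project_to_gaussian([0.3, 0.7], [-1.0, 2.0], [0.5, 1.0])
print(m, v)   # approximately 1.1 and 2.74
```

In ADF this projection is applied after every update, so the posterior stays a single Gaussian no matter how many mixture-like terms have been absorbed.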

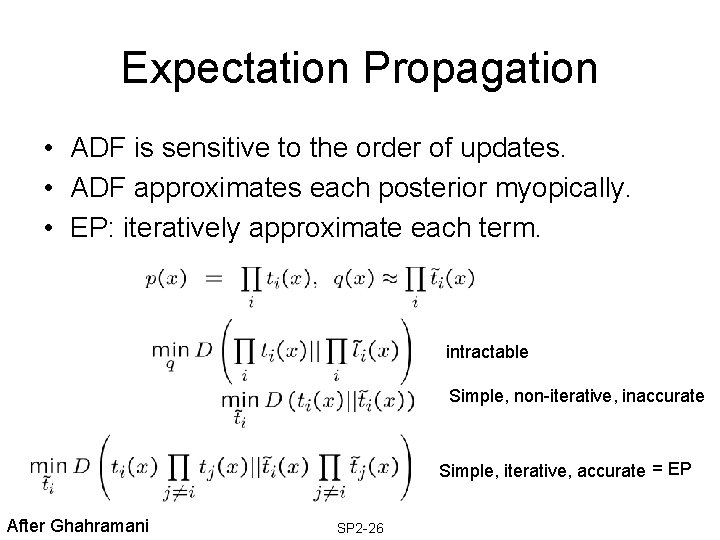

Expectation Propagation
• ADF is sensitive to the order of updates.
• ADF approximates each posterior myopically.
• EP: iteratively approximate each term.
(Figure, after Ghahramani: exact posterior, intractable; ADF, simple, non-iterative, inaccurate; EP, simple, iterative, accurate.)

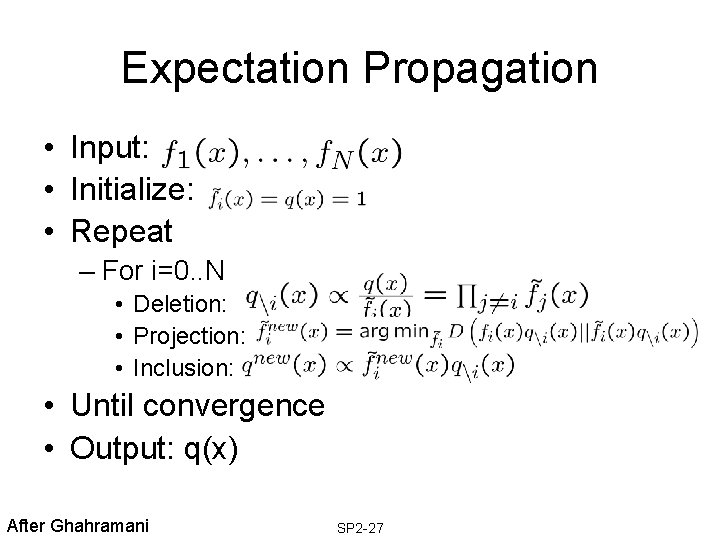

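Expectation Propagation (after Ghahramani)
• Input: the terms $t_0(x), \ldots, t_N(x)$ of $p(x) \propto \prod_{i=0}^{N} t_i(x)$
• Initialize: approximate terms $\tilde t_i(x)$ in the chosen family, and $q(x) \propto \prod_i \tilde t_i(x)$
• Repeat
  – For i = 0, ..., N
    • Deletion: $q^{\setminus i}(x) \propto q(x) / \tilde t_i(x)$
    • Projection: $q^{\text{new}}(x) = \arg\min_{q'} D\big(q^{\setminus i}(x)\, t_i(x) \,\|\, q'(x)\big)$
    • Inclusion: $\tilde t_i(x) \propto q^{\text{new}}(x) / q^{\setminus i}(x)$, and set $q(x) := q^{\text{new}}(x)$
• Until convergence
• Output: q(x)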
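The loop above can be instantiated on the one-dimensional clutter problem from Minka 01: x ~ N(0, prior_var), and each y_i ~ (1 − w) N(x, 1) + w N(0, a). The values of w, a and prior_var are assumptions in the spirit of that example. The sketch keeps Gaussian sites in natural parameters, so deletion and inclusion are subtractions and additions, and the projection uses the closed-form mean and variance of the tilted distribution. Skipping a site whose cavity is not a proper Gaussian is one common safeguard, not the only option.

```python
import numpy as np

def normal_pdf(y, m, v):
    return np.exp(-0.5 * (y - m) ** 2 / v) / np.sqrt(2 * np.pi * v)

def ep_clutter(y, w=0.5, a=10.0, prior_var=100.0, sweeps=20):
    """EP for the 1-D clutter problem; returns the mean and variance of q(x)."""
    n = len(y)
    tau = np.zeros(n)             # site precisions
    nu = np.zeros(n)              # site precision * mean
    Tau, Nu = 1.0 / prior_var, 0.0   # natural parameters of q = prior * prod of sites
    for _ in range(sweeps):
        for i in range(n):
            # Deletion: cavity q^{\i}(x) = N(m, v)
            tau_c, nu_c = Tau - tau[i], Nu - nu[i]
            if tau_c <= 0:
                continue          # skip when the cavity is not a proper Gaussian
            v, m = 1.0 / tau_c, nu_c / tau_c
            # Projection: mean and variance of cavity(x) * t_i(x)
            z_in = (1 - w) * normal_pdf(y[i], m, v + 1)
            r = z_in / (z_in + w * normal_pdf(y[i], 0.0, a))   # P(y_i is not clutter)
            m_new = m + v * r * (y[i] - m) / (v + 1)
            v_new = (v - r * v ** 2 / (v + 1)
                     + r * (1 - r) * v ** 2 * (y[i] - m) ** 2 / (v + 1) ** 2)
            # Inclusion: new site = q_new / cavity, i.e. subtract natural parameters
            tau[i] = 1.0 / v_new - tau_c
            nu[i] = m_new / v_new - nu_c
            Tau, Nu = tau_c + tau[i], nu_c + nu[i]
    return Nu / Tau, 1.0 / Tau

rng = np.random.default_rng(0)
true_x, n, w = 2.0, 30, 0.3
is_clutter = rng.random(n) < w
y = np.where(is_clutter, rng.normal(0.0, np.sqrt(10.0), n), rng.normal(true_x, 1.0, n))
mean, var = ep_clutter(y, w=w)
print(mean, var)
```

With w = 0 every term is Gaussian, and one sweep already yields the exact conjugate posterior; that limit is a convenient correctness check.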

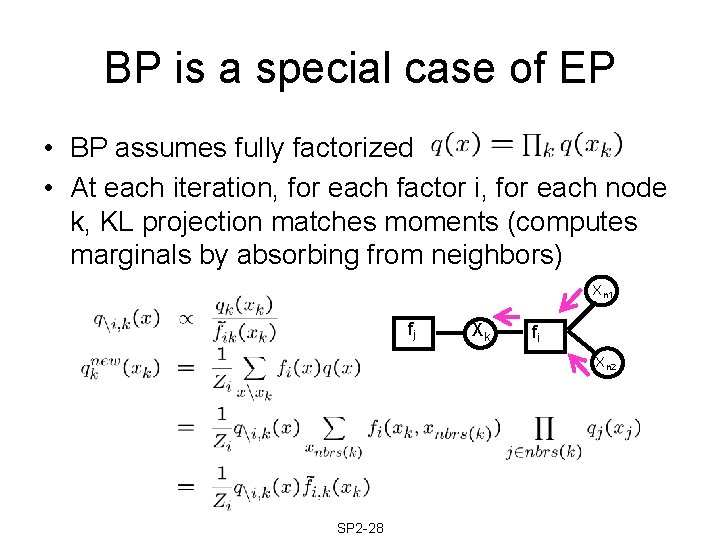

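BP is a special case of EP
• BP assumes a fully factorized $q(x) = \prod_k q_k(x_k)$.
• At each iteration, for each factor i and each node k, the KL projection matches moments, i.e., computes marginals by absorbing messages from neighbours.
(Figure: factor f_i connected to X_k and to X_n1, X_n2; factor f_j also connected to X_k.)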

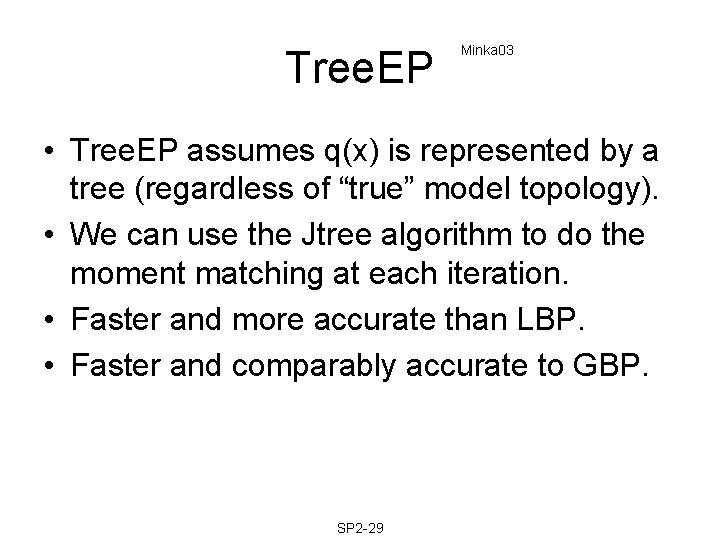

TreeEP (Minka 03)
• TreeEP assumes q(x) is represented by a tree (regardless of the “true” model topology).
• We can use the junction tree algorithm to do the moment matching at each iteration.
• Faster and more accurate than LBP.
• Faster than, and comparably accurate to, GBP.

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
    • Variational
    • Loopy belief propagation
    • Expectation propagation
    • Graph cuts
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic

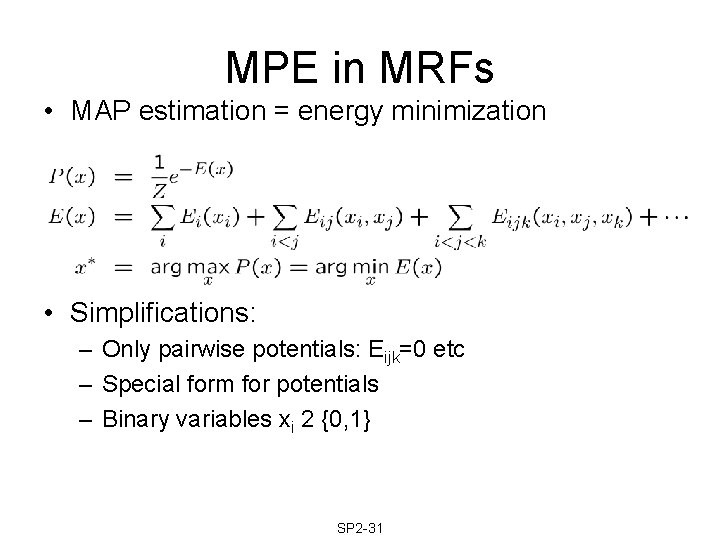

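MPE in MRFs
• MAP estimation = energy minimization: $\hat x = \arg\max_x P(x) = \arg\min_x E(x)$, with $E(x) = \sum_i E_i(x_i) + \sum_{ij} E_{ij}(x_i, x_j) + \sum_{ijk} E_{ijk}(x_i, x_j, x_k) + \cdots$
• Simplifications:
  – Only pairwise potentials: E_ijk = 0, etc.
  – Special form for potentials
  – Binary variables x_i ∈ {0, 1}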

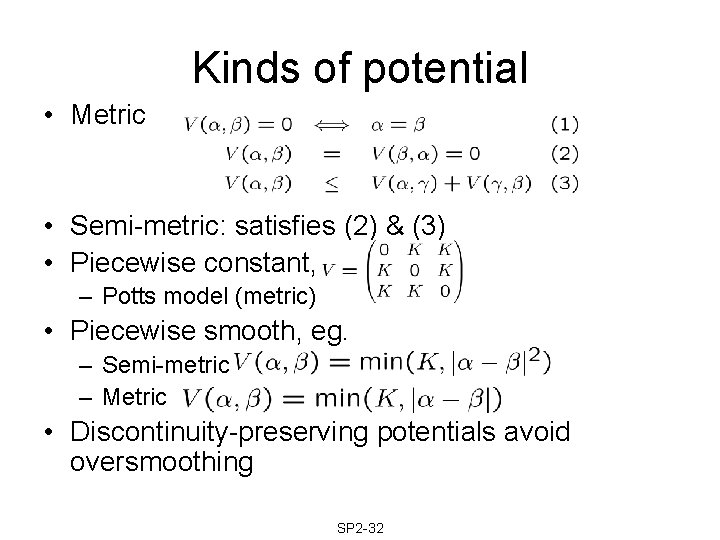

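Kinds of potential
• Metric: V satisfies (1) the triangle inequality V(α, β) ≤ V(α, γ) + V(γ, β); (2) V(α, β) = 0 iff α = β; (3) V(α, β) = V(β, α) ≥ 0
• Semi-metric: satisfies (2) & (3)
• Piecewise constant, e.g.:
  – Potts model V(α, β) = K·[α ≠ β] (metric)
• Piecewise smooth, e.g.:
  – Truncated quadratic V(α, β) = min(K, |α − β|²) (semi-metric)
  – Truncated absolute distance V(α, β) = min(K, |α − β|) (metric)
• Discontinuity-preserving potentials avoid oversmoothing.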

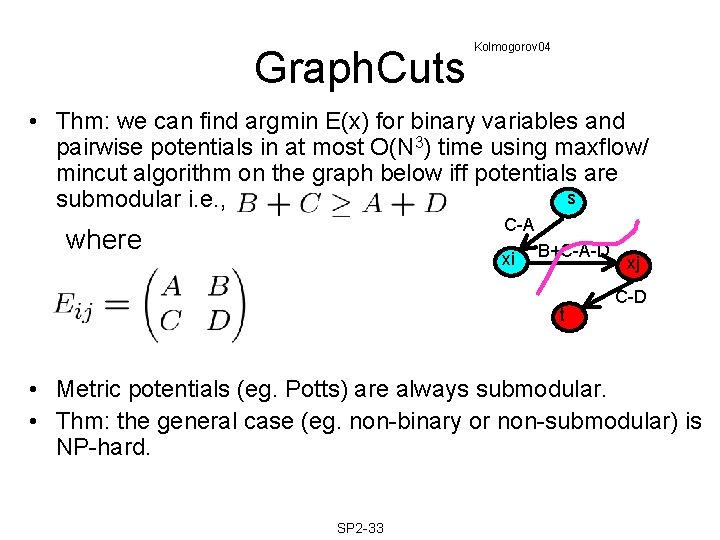

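GraphCuts (Kolmogorov 04)
• Theorem: we can find argmin E(x) for binary variables and pairwise potentials in at most O(N³) time using a max-flow/min-cut algorithm on the graph below iff the potentials are submodular, i.e., $A + D \le B + C$, where A = E_ij(0,0), B = E_ij(0,1), C = E_ij(1,0), D = E_ij(1,1).
(Figure: s–t graph for one pairwise term, with weight C − A on x_i, C − D on x_j, and B + C − A − D on the edge between x_i and x_j.)
• Metric potentials (e.g., Potts) are always submodular.
• Theorem: the general case (e.g., non-binary or non-submodular) is NP-hard.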
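A minimal sketch of the binary construction, using the decomposition E_ij(x_i, x_j) = A + (C − A)x_i + (D − C)x_j + (B + C − A − D)(1 − x_i)x_j, whose last coefficient is non-negative exactly when the term is submodular. Label 0 means "source side" and label 1 "sink side". For simplicity it swaps in a plain Edmonds-Karp max-flow (not the specialized solvers used in vision), and it checks the result against brute force on a tiny random submodular energy; the energies and helper names are hypothetical.

```python
import itertools
from collections import deque

import numpy as np

def max_flow_min_cut(cap, s, t):
    """Edmonds-Karp on a dense capacity matrix; returns (flow, source side of a min cut)."""
    n = len(cap)
    res = [row[:] for row in cap]            # residual capacities
    flow = 0.0
    while True:
        parent = [-1] * n
        parent[s] = s
        queue = deque([s])
        while queue and parent[t] == -1:     # BFS for a shortest augmenting path
            u = queue.popleft()
            for v in range(n):
                if parent[v] == -1 and res[u][v] > 1e-12:
                    parent[v] = u
                    queue.append(v)
        if parent[t] == -1:                  # no path left: reachable set = source side
            return flow, {v for v in range(n) if parent[v] != -1}
        bottleneck, v = float("inf"), t
        while v != s:
            bottleneck = min(bottleneck, res[parent[v]][v])
            v = parent[v]
        v = t
        while v != s:                        # push flow along the path
            u = parent[v]
            res[u][v] -= bottleneck
            res[v][u] += bottleneck
            v = u
        flow += bottleneck

def minimize_binary_energy(n, unary, pairwise):
    """unary[i] = (E_i(0), E_i(1)); pairwise[(i, j)] = [[A, B], [C, D]] (submodular)."""
    s, t = n, n + 1
    cap = [[0.0] * (n + 2) for _ in range(n + 2)]
    const = sum(u0 for u0, _ in unary)
    lin = [u1 - u0 for u0, u1 in unary]      # E_i(x) = E_i(0) + (E_i(1) - E_i(0)) x
    for (i, j), ((A, B), (C, D)) in pairwise.items():
        if B + C - A - D < 0:
            raise ValueError(f"term ({i}, {j}) is not submodular")
        const += A
        lin[i] += C - A
        lin[j] += D - C
        cap[i][j] += B + C - A - D           # cut exactly when x_i = 0 and x_j = 1
    for i, a in enumerate(lin):
        if a >= 0:
            cap[s][i] += a                   # s->i is cut when x_i = 1: cost a
        else:
            const += a                       # a*x_i = a + (-a)(1 - x_i)
            cap[i][t] -= a                   # i->t is cut when x_i = 0: cost -a
    cut, source_side = max_flow_min_cut(cap, s, t)
    return [0 if i in source_side else 1 for i in range(n)], const + cut

rng = np.random.default_rng(0)
H, W = 2, 3
n = H * W
unary = [tuple(rng.uniform(0, 2, 2)) for _ in range(n)]
pairwise = {}
for r in range(H):
    for c in range(W):
        for dr, dc in ((1, 0), (0, 1)):
            if r + dr < H and c + dc < W:
                A, B, C, D = rng.uniform(0, 2, 4)
                B += max(0.0, A + D - B - C) + 0.1   # force A + D < B + C
                pairwise[(r * W + c, (r + dr) * W + c + dc)] = [[A, B], [C, D]]

def energy(x):
    return (sum(unary[i][x[i]] for i in range(n))
            + sum(P[x[i]][x[j]] for (i, j), P in pairwise.items()))

x_cut, e_cut = minimize_binary_energy(n, unary, pairwise)
e_brute = min(energy(x) for x in itertools.product((0, 1), repeat=n))
print(x_cut, e_cut, e_brute)
```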

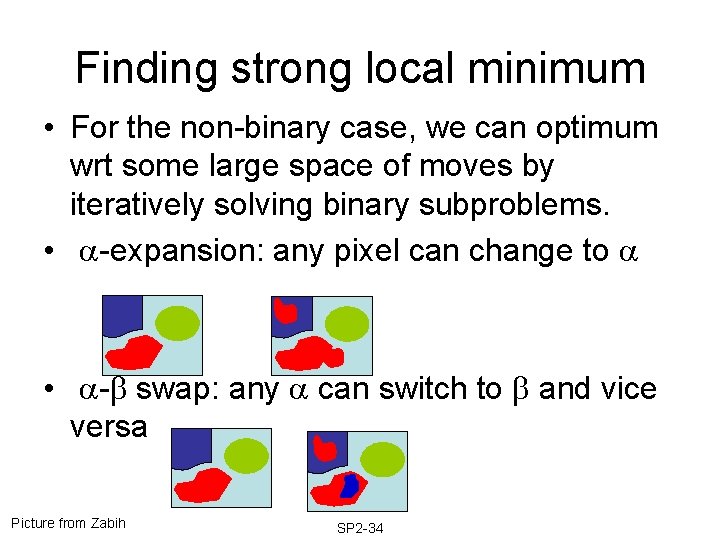

Finding strong local minimum
• For the non-binary case, we can find the optimum w.r.t. some large space of moves by iteratively solving binary subproblems.
• α-expansion: any pixel can change to label α.
• α-β swap: any pixel labelled α can switch to β and vice versa.
(Picture from Zabih.)

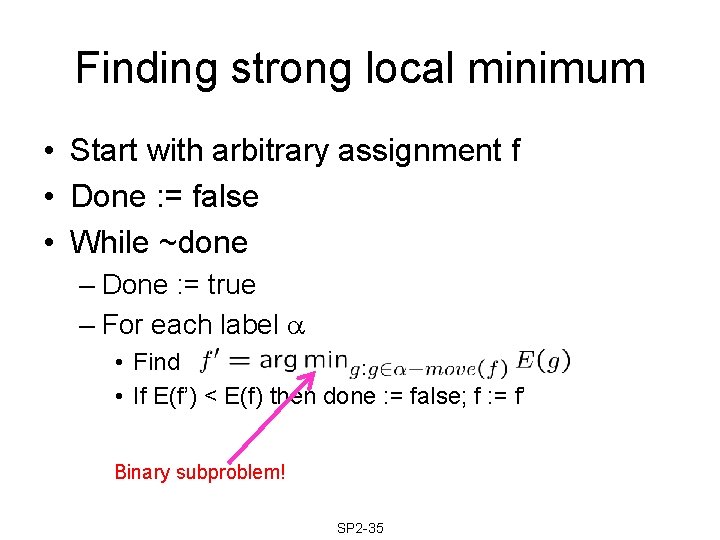

Finding strong local minimum
• Start with an arbitrary labelling f
• done := false
• While not done
  – done := true
  – For each label α
    • Find f' = argmin E(f') over all labellings within one α-expansion of f (a binary subproblem!)
    • If E(f') < E(f) then done := false; f := f'

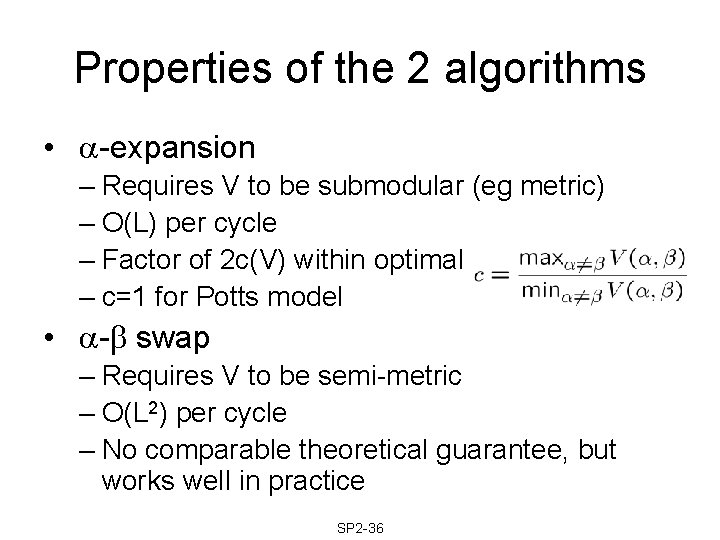

Properties of the 2 algorithms
• α-expansion
  – Requires V to be submodular (e.g., metric)
  – O(L) per cycle
  – Within a factor of 2c(V) of the optimum, where $c(V) = \max_{\alpha \ne \beta} V(\alpha, \beta) / \min_{\alpha \ne \beta} V(\alpha, \beta)$; c = 1 for the Potts model
• α-β swap
  – Requires V to be semi-metric
  – O(L²) per cycle
  – No comparable theoretical guarantee, but works well in practice

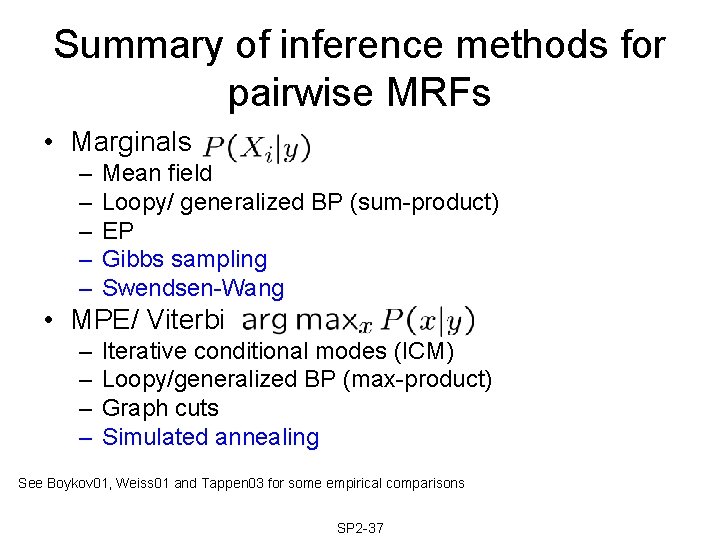

Summary of inference methods for pairwise MRFs
• Marginals
  – Mean field
  – Loopy/generalized BP (sum-product)
  – EP
  – Gibbs sampling
  – Swendsen-Wang
• MPE/Viterbi
  – Iterated conditional modes (ICM)
  – Loopy/generalized BP (max-product)
  – Graph cuts
  – Simulated annealing
See Boykov 01, Weiss 01 and Tappen 03 for some empirical comparisons.

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic

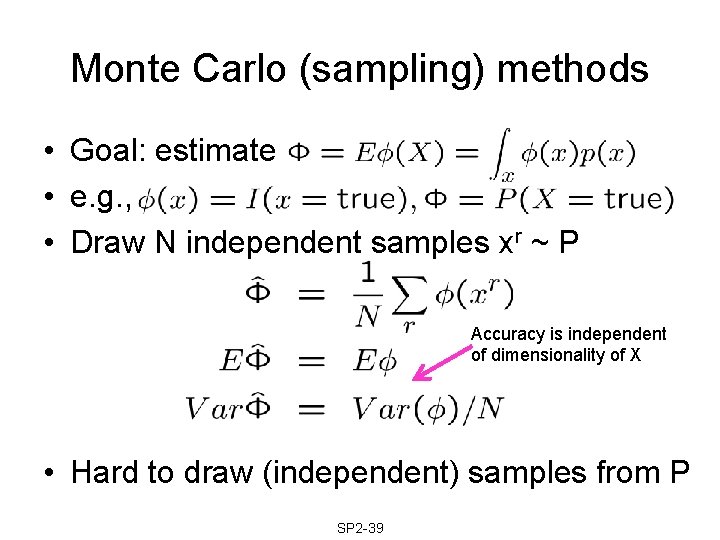

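Monte Carlo (sampling) methods
• Goal: estimate $\mathbb{E}_P[f(X)] = \sum_x P(x) f(x)$, e.g., a posterior marginal, by taking f to be an indicator function.
• Draw N independent samples $x^r \sim P$ and use $\hat f = \frac{1}{N} \sum_{r=1}^{N} f(x^r)$. The error is O(1/√N) regardless of the dimensionality of X.
• But it is hard to draw (independent) samples from P.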

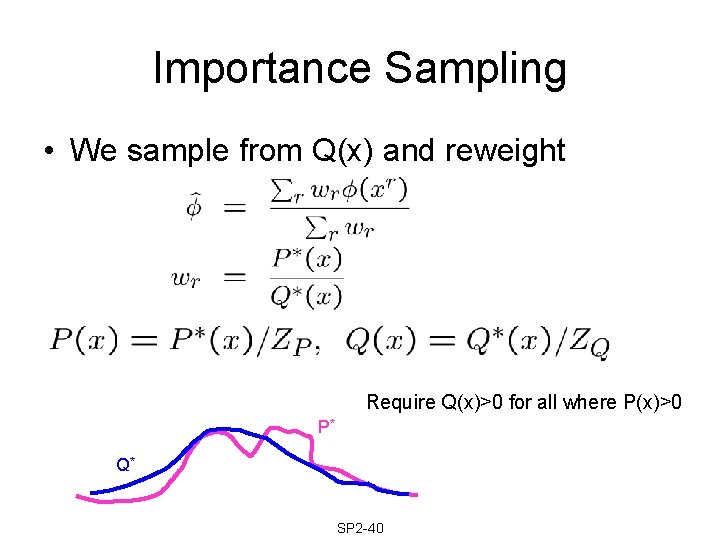

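Importance Sampling
• We sample from Q(x) and reweight: with $x^r \sim Q$ and $w_r = P^*(x^r) / Q^*(x^r)$, use $\mathbb{E}_P[f] \approx \sum_r w_r f(x^r) / \sum_r w_r$, where P* and Q* are unnormalized versions of P and Q.
• Requires Q(x) > 0 wherever P(x) > 0.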
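A minimal self-normalized importance sampler, assuming an illustrative unnormalized target P*(x) = exp(−x⁴/4) and a Gaussian proposal Q = N(0, 1.5²) whose tails are heavier than the target's, so the weights stay bounded. Because the weights are normalized, neither normalizing constant is needed. The effective sample size 1/Σ w_r² is reported as a rough diagnostic of weight degeneracy.

```python
import numpy as np

def self_normalized_is(f, log_p_star, log_q_star, sample_q, n, rng):
    """Estimate E_P[f] with x^r ~ Q and weights w_r propto P*(x^r) / Q*(x^r)."""
    x = sample_q(n, rng)
    logw = log_p_star(x) - log_q_star(x)
    w = np.exp(logw - logw.max())    # subtract the max for numerical stability
    w /= w.sum()                     # self-normalization: unknown constants cancel
    ess = 1.0 / np.sum(w ** 2)       # effective sample size
    return float(np.sum(w * f(x))), float(ess)

# Illustrative target P*(x) = exp(-x^4 / 4) (unnormalized); proposal Q = N(0, 1.5^2).
log_p_star = lambda x: -x ** 4 / 4
log_q_star = lambda x: -0.5 * (x / 1.5) ** 2
sample_q = lambda n, rng: rng.normal(0.0, 1.5, n)

est, ess = self_normalized_is(lambda x: x ** 2, log_p_star, log_q_star, sample_q,
                              100_000, np.random.default_rng(0))

grid = np.linspace(-6, 6, 20001)
p = np.exp(-grid ** 4 / 4)
exact = float(np.sum(grid ** 2 * p) / np.sum(p))   # quadrature reference for E[x^2]
print(est, exact, ess)
```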

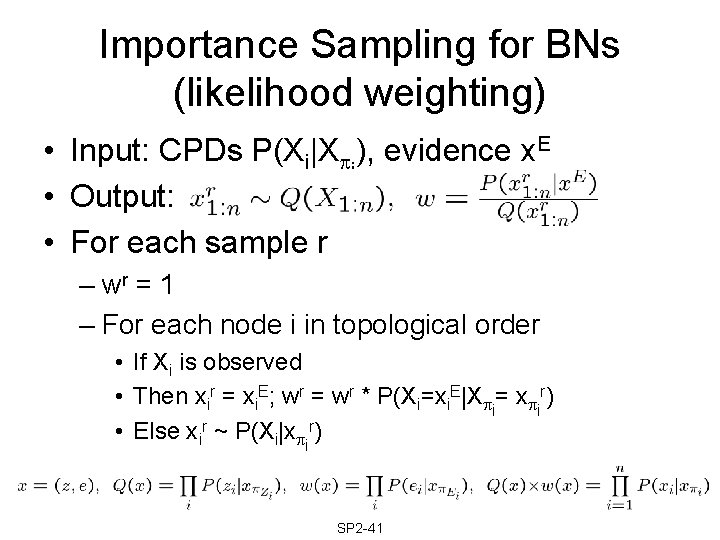

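Importance Sampling for BNs (likelihood weighting)
• Input: CPDs P(X_i|X_{π_i}), where π_i denotes the parents of X_i; evidence x_E
• Output: $P(X_q = x_q \mid x_E) \approx \sum_r w_r\, I(x_q^r = x_q) / \sum_r w_r$
• For each sample r
  – w_r = 1
  – For each node i in topological order
    • If X_i is observed
      • Then x_i^r = x_i^E; w_r = w_r · P(X_i = x_i^E | X_{π_i} = x_{π_i}^r)
    • Else x_i^r ∼ P(X_i | x_{π_i}^r)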
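Likelihood weighting can be sketched on the classic Cloudy/Sprinkler/Rain/WetGrass network. The CPT values below are illustrative assumptions (textbook-style numbers, not part of this tutorial). Here the evidence W = 1 is downstream of everything else, so hidden nodes are sampled from their CPDs and each sample is weighted by P(W = 1 | S, R). Exact enumeration of all 16 joint states provides the reference answer.

```python
import itertools
import numpy as np

# Sprinkler network C -> {S, R} -> W with illustrative (assumed) CPT values.
P_C = 0.5
P_S = {0: 0.5, 1: 0.1}                                        # P(S=1 | C)
P_R = {0: 0.2, 1: 0.8}                                        # P(R=1 | C)
P_W = {(0, 0): 0.0, (1, 0): 0.9, (0, 1): 0.9, (1, 1): 0.99}   # P(W=1 | S, R)

# Nodes in topological order, each with a function giving P(node=1 | parents).
NODES = [("C", lambda x: P_C),
         ("S", lambda x: P_S[x["C"]]),
         ("R", lambda x: P_R[x["C"]]),
         ("W", lambda x: P_W[(x["S"], x["R"])])]

def bern(p1, v):
    return p1 if v == 1 else 1.0 - p1

def likelihood_weighting(evidence, query, n, rng):
    num = den = 0.0
    for _ in range(n):
        x, w = {}, 1.0
        for name, p1_given_parents in NODES:
            p1 = p1_given_parents(x)
            if name in evidence:            # observed: clamp, multiply in the likelihood
                x[name] = evidence[name]
                w *= bern(p1, x[name])
            else:                           # hidden: sample from the CPD given sampled parents
                x[name] = int(rng.random() < p1)
        den += w
        num += w * x[query]
    return num / den

def exact_query(evidence, query):
    num = den = 0.0
    for vals in itertools.product((0, 1), repeat=len(NODES)):
        x = dict(zip([name for name, _ in NODES], vals))
        if any(x[k] != v for k, v in evidence.items()):
            continue
        p = np.prod([bern(f(x), x[name]) for name, f in NODES])
        den += p
        num += p * x[query]
    return num / den

lw = likelihood_weighting({"W": 1}, "R", 50_000, np.random.default_rng(0))
print(lw, exact_query({"W": 1}, "R"))      # estimate vs. exact P(R=1 | W=1)
```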

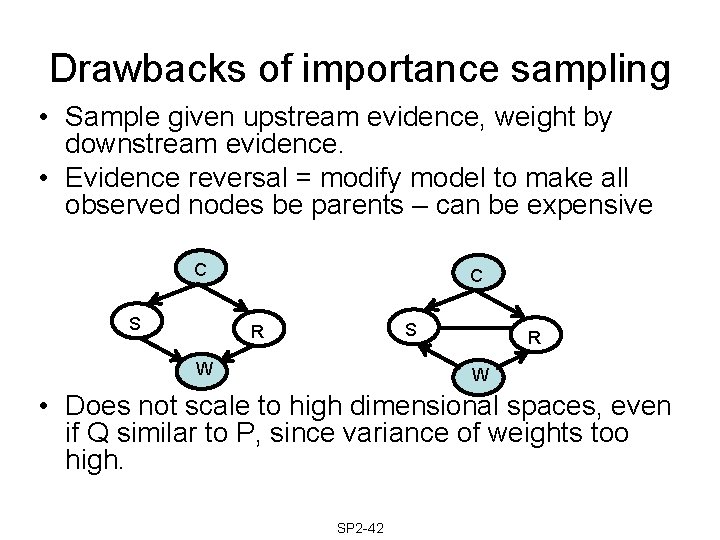

Drawbacks of importance sampling
• Samples are drawn given upstream evidence and weighted by downstream evidence.
• Evidence reversal = modify the model so that all observed nodes are parents; this can be expensive.
(Figure: sprinkler network with nodes C, S, R, W.)
• Does not scale to high-dimensional spaces, even if Q is similar to P, since the variance of the weights is too high.

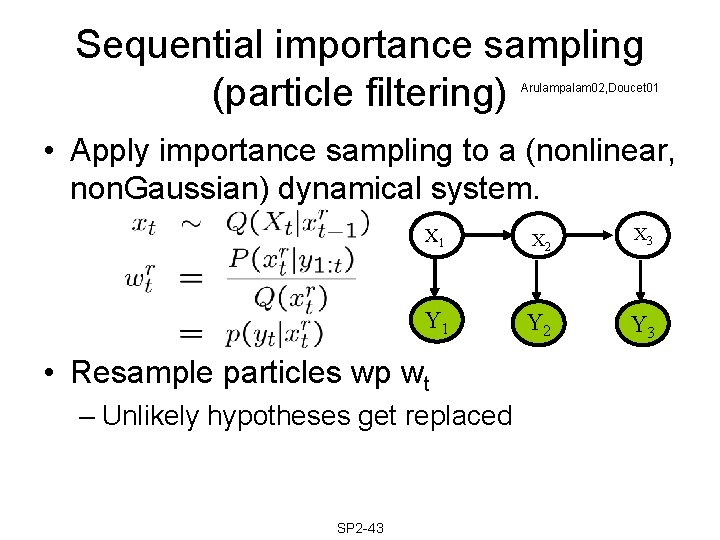

Sequential importance sampling (particle filtering) (Arulampalam 02, Doucet 01)
• Apply importance sampling to a (non-linear, non-Gaussian) dynamical system.
(Figure: a chain X1 → X2 → X3 with observations Y1, Y2, Y3.)
• Resample particles with probability proportional to w_t.
  – Unlikely hypotheses get replaced.
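A bootstrap particle filter (proposal = the dynamics, weights = the likelihood, multinomial resampling at every step) can be sketched as follows. To have an exact answer to compare against, the sketch uses an assumed linear-Gaussian model, x_t = 0.9 x_{t−1} + N(0, 1) and y_t = x_t + N(0, 1), for which the Kalman filter gives the true filtering means. The particle filter itself never uses linearity or Gaussianity.

```python
import numpy as np

A, Q, R = 0.9, 1.0, 1.0   # x_t = A x_{t-1} + N(0, Q),  y_t = x_t + N(0, R); x_1 ~ N(0, 1)

def bootstrap_pf(y, n_particles, rng):
    x = rng.normal(0.0, 1.0, n_particles)        # particles from the prior on x_1
    means = []
    for t, yt in enumerate(y):
        if t > 0:                                # propose from the dynamics
            x = A * x + rng.normal(0.0, np.sqrt(Q), n_particles)
        logw = -0.5 * (yt - x) ** 2 / R          # weight by the likelihood p(y_t | x_t)
        w = np.exp(logw - logw.max())
        w /= w.sum()
        means.append(float(np.sum(w * x)))       # estimate of E[x_t | y_1:t]
        x = x[rng.choice(n_particles, size=n_particles, p=w)]   # resample with prob. w
    return np.array(means)

def kalman_means(y):
    m, P = 0.0, 1.0
    means = []
    for t, yt in enumerate(y):
        if t > 0:
            m, P = A * m, A * A * P + Q          # predict
        K = P / (P + R)                          # update
        m, P = m + K * (yt - m), (1 - K) * P
        means.append(m)
    return np.array(means)

rng = np.random.default_rng(1)
x_true, ys = rng.normal(0.0, 1.0), []
for t in range(50):
    if t > 0:
        x_true = A * x_true + rng.normal(0.0, np.sqrt(Q))
    ys.append(x_true + rng.normal(0.0, np.sqrt(R)))
pf = bootstrap_pf(ys, 5000, np.random.default_rng(2))
kf = kalman_means(ys)
print(np.abs(pf - kf).max())
```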

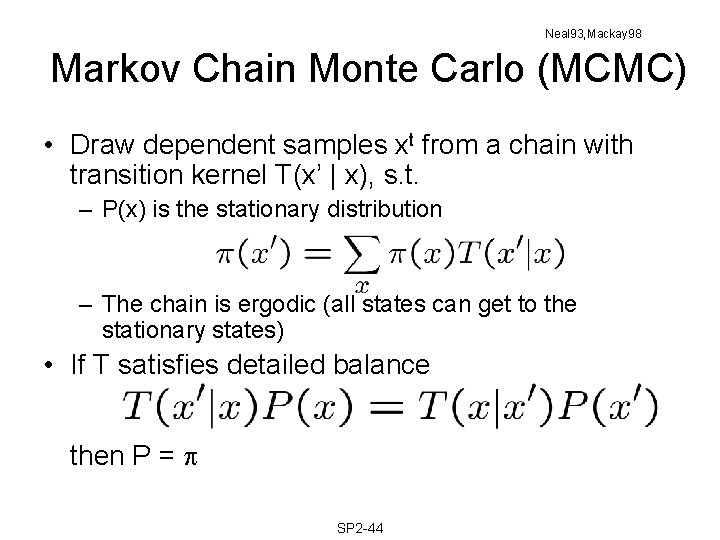

Markov Chain Monte Carlo (MCMC) (Neal 93, Mackay 98)
• Draw dependent samples x_t from a chain with transition kernel T(x'|x), such that
  – P(x) is the stationary distribution
  – The chain is ergodic (all states can get to the stationary states)
• If T satisfies detailed balance, $P(x)\,T(x'|x) = P(x')\,T(x|x')$, then P is the stationary distribution: $P(x') = \sum_x P(x)\,T(x'|x)$.

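Metropolis-Hastings
• Propose x' ∼ Q(x'|x_{t−1}).
• Accept the new state (x_t = x') with probability $\alpha = \min\Big(1, \frac{P(x')\, Q(x_{t-1} \mid x')}{P(x_{t-1})\, Q(x' \mid x_{t-1})}\Big)$; otherwise x_t = x_{t−1}.
• Satisfies detailed balance.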
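A random-walk Metropolis sketch, assuming a symmetric Gaussian proposal (so the Q ratio in the acceptance probability cancels) and an illustrative unnormalized target N(2, 1). Only ratios of P* are used, so the normalizing constant is never needed. The step size and burn-in length are arbitrary choices.

```python
import numpy as np

def random_walk_metropolis(log_p_star, x0, n_steps, step, rng):
    """Metropolis-Hastings with a symmetric Gaussian random-walk proposal."""
    x, lp = x0, log_p_star(x0)
    samples = np.empty(n_steps)
    accepted = 0
    for t in range(n_steps):
        prop = x + step * rng.normal()            # x' ~ Q(x' | x_{t-1}) = N(x_{t-1}, step^2)
        lp_prop = log_p_star(prop)
        # Q is symmetric, so the Hastings ratio Q(x|x') / Q(x'|x) cancels.
        if np.log(rng.random()) < lp_prop - lp:   # accept w.p. min(1, P*(x') / P*(x))
            x, lp = prop, lp_prop
            accepted += 1
        samples[t] = x                            # on rejection, x_t = x_{t-1}
    return samples, accepted / n_steps

# Illustrative target: unnormalized N(2, 1).
samples, acc = random_walk_metropolis(lambda x: -0.5 * (x - 2.0) ** 2, 0.0, 50_000, 1.0,
                                      np.random.default_rng(0))
burned = samples[1_000:]                          # discard burn-in
print(acc, burned.mean(), burned.var())
```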

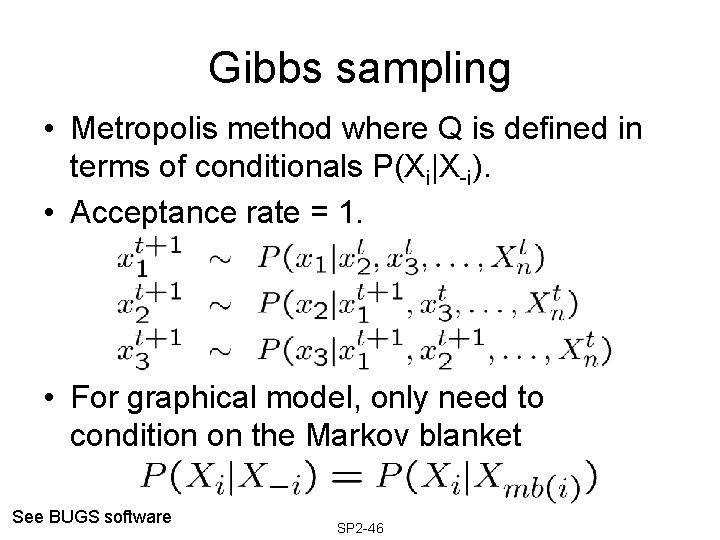

Gibbs sampling
• Metropolis method where Q is defined in terms of the full conditionals P(X_i|X_{−i}).
• Acceptance rate = 1.
• For a graphical model, we only need to condition on the Markov blanket. See the BUGS software.
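Single-site Gibbs sampling can be sketched on a small Ising model, P(s) ∝ exp(J Σ_edges s_i s_j + Σ_i h_i s_i) with s_i ∈ {−1, +1}. The 3×3 grid, J and h are illustrative assumptions. Each full conditional depends only on the neighbours of s_i (its Markov blanket), P(s_i = +1 | s_−i) = 1 / (1 + exp(−2(h_i + J Σ_{j∈N(i)} s_j))), and every draw is accepted. Brute-force enumeration gives the exact means for comparison.

```python
import itertools
import numpy as np

def gibbs_ising(h, nbrs, J, sweeps, rng, burn=500):
    """Single-site Gibbs sampling; returns Monte Carlo estimates of E[s_i]."""
    n = len(h)
    s = rng.choice([-1, 1], size=n)
    total = np.zeros(n)
    for sweep in range(burn + sweeps):
        for i in range(n):
            # The full conditional depends only on the Markov blanket (neighbours of i).
            field = h[i] + J * sum(s[j] for j in nbrs[i])
            p_plus = 1.0 / (1.0 + np.exp(-2.0 * field))   # P(s_i = +1 | s_-i)
            s[i] = 1 if rng.random() < p_plus else -1      # always accepted
        if sweep >= burn:
            total += s
    return total / sweeps

H = W = 3
edges = ([(r * W + c, r * W + c + 1) for r in range(H) for c in range(W - 1)]
         + [(r * W + c, (r + 1) * W + c) for r in range(H - 1) for c in range(W)])
nbrs = [[] for _ in range(H * W)]
for i, j in edges:
    nbrs[i].append(j)
    nbrs[j].append(i)

rng = np.random.default_rng(0)
h, J = rng.normal(0.0, 0.5, H * W), 0.3
m_gibbs = gibbs_ising(h, nbrs, J, 30_000, rng)

S = np.array(list(itertools.product([-1, 1], repeat=H * W)))   # all 2^9 states
logw = S @ h + J * sum(S[:, i] * S[:, j] for i, j in edges)
wts = np.exp(logw - logw.max())
m_exact = (wts[:, None] * S).sum(axis=0) / wts.sum()
print(np.abs(m_gibbs - m_exact).max())
```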

Difficulties with MCMC
• May take a long time to “mix” (converge to the stationary distribution).
• Hard to know when the chain has mixed.
• Simple proposals exhibit random-walk behaviour. Remedies include:
  – Hybrid Monte Carlo (use gradient information)
  – Swendsen-Wang (large moves for the Ising model)
  – Heuristic proposals

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic

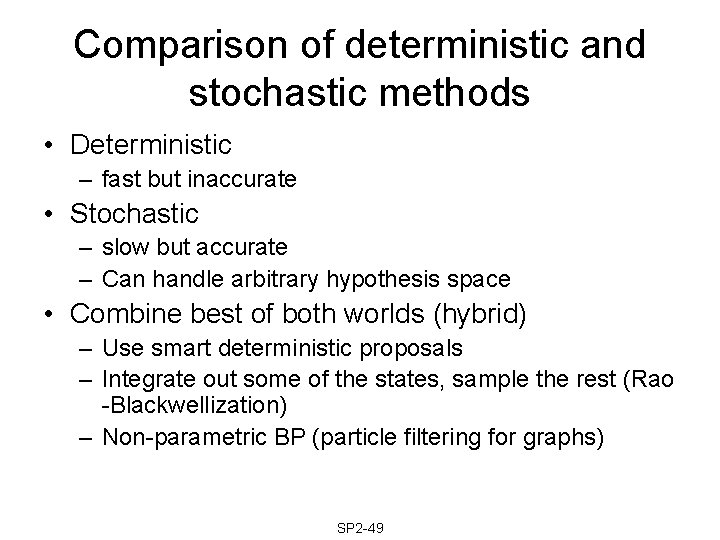

Comparison of deterministic and stochastic methods
• Deterministic: fast but inaccurate
• Stochastic: slow but accurate
  – Can handle an arbitrary hypothesis space
• Combine the best of both worlds (hybrid)
  – Use smart deterministic proposals
  – Integrate out some of the states, sample the rest (Rao-Blackwellization)
  – Non-parametric BP (particle filtering for graphs)

Examples of deterministic proposals
• State estimation: unscented particle filter (Merwe 00)
• Machine learning: variational MCMC (de Freitas 01)
• Computer vision: data-driven MCMC (Tu 02)

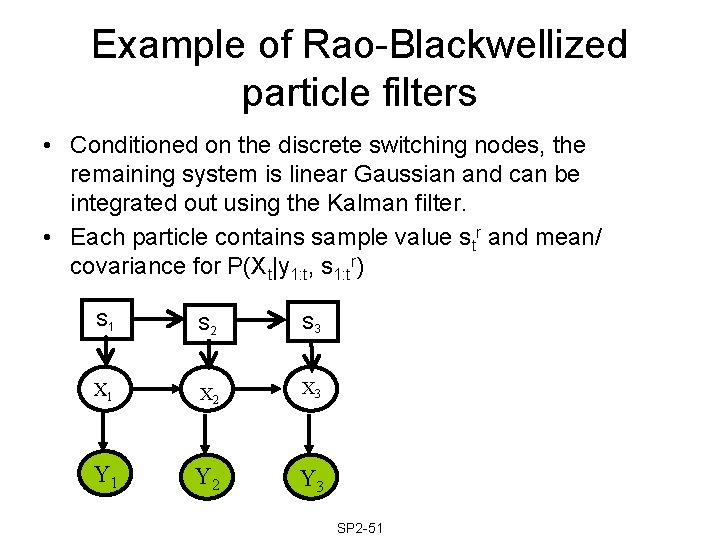

Example of Rao-Blackwellized particle filters
• Conditioned on the discrete switching nodes, the remaining system is linear-Gaussian and can be integrated out using the Kalman filter.
• Each particle contains a sampled value $s_t^r$ and the mean/covariance of $P(X_t \mid y_{1:t}, s_{1:t}^r)$.
(Figure: switching state-space model with nodes S1, S2, S3; X1, X2, X3; Y1, Y2, Y3.)

Outline
• Introduction
• Exact inference
• Approximate inference
  – Deterministic
  – Stochastic (sampling)
  – Hybrid deterministic/stochastic
• Summary

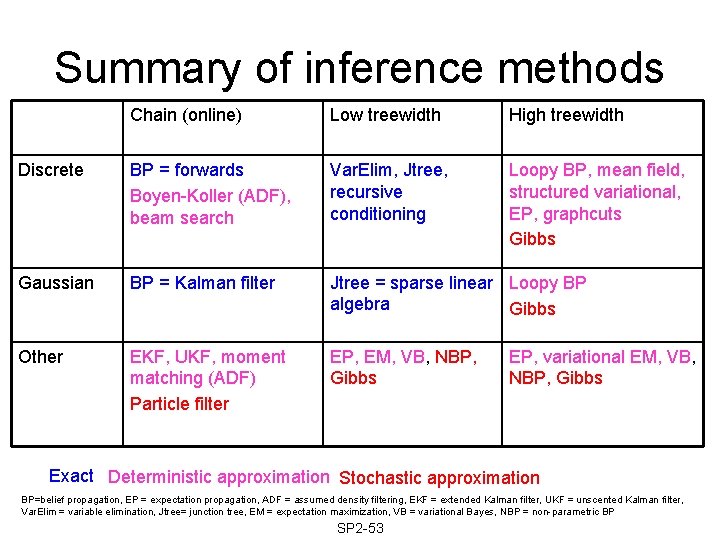

Summary of inference methods

| | Chain (online) | Low treewidth | High treewidth |
|---|---|---|---|
| Discrete | BP = forwards; Boyen-Koller (ADF), beam search | Var. Elim, Jtree, recursive conditioning | Loopy BP, mean field, structured variational, EP, graph cuts; Gibbs |
| Gaussian | BP = Kalman filter | Jtree = sparse linear algebra | Loopy BP; Gibbs |
| Other | EKF, UKF, moment matching (ADF); particle filter | EP, EM, VB, NBP; Gibbs | EP, variational EM, VB, NBP; Gibbs |

Methods are of three kinds: exact, deterministic approximation, and stochastic approximation.

BP = belief propagation, EP = expectation propagation, ADF = assumed density filtering, EKF = extended Kalman filter, UKF = unscented Kalman filter, Var. Elim = variable elimination, Jtree = junction tree, EM = expectation maximization, VB = variational Bayes, NBP = non-parametric BP
